f3d8ca6d14850c3264f75af4eece0d9a.ppt

- Количество слайдов: 55

Open only for Humans; Droids and Robots should go for CSE 462 next door ; -)

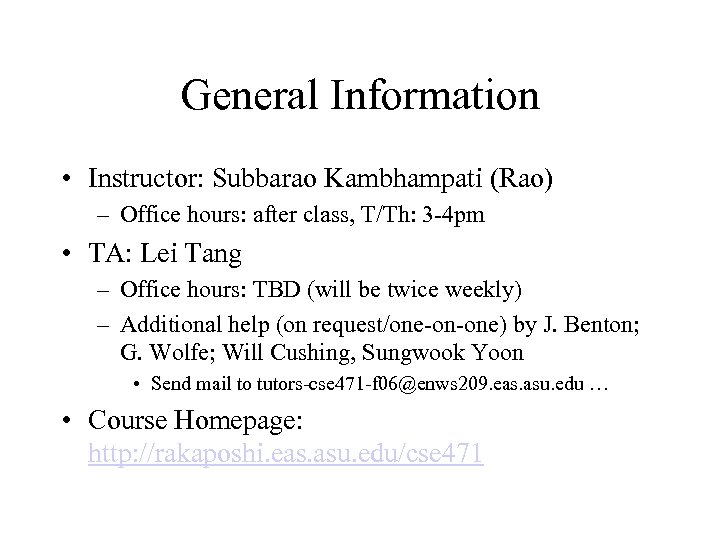

General Information • Instructor: Subbarao Kambhampati (Rao) – Office hours: after class, T/Th: 3 -4 pm • TA: Lei Tang – Office hours: TBD (will be twice weekly) – Additional help (on request/one-on-one) by J. Benton; G. Wolfe; Will Cushing, Sungwook Yoon • Send mail to tutors-cse 471 -f 06@enws 209. eas. asu. edu … • Course Homepage: http: //rakaposhi. eas. asu. edu/cse 471

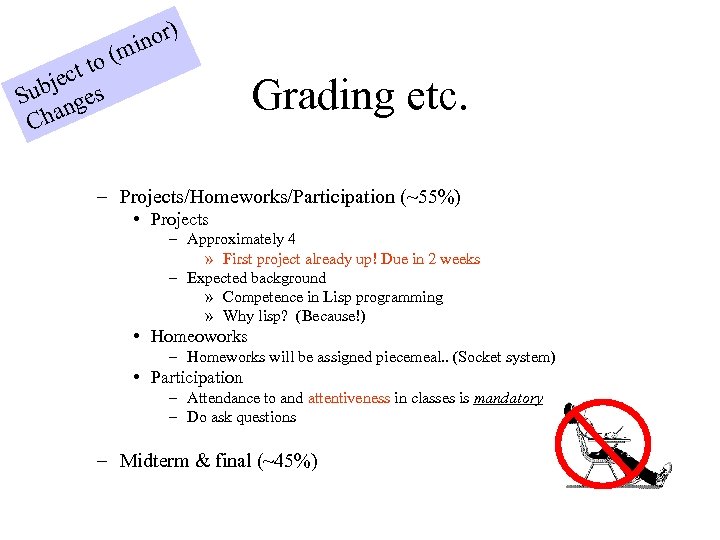

) nor mi to ( ject s Sub nge Cha Grading etc. – Projects/Homeworks/Participation (~55%) • Projects – Approximately 4 » First project already up! Due in 2 weeks – Expected background » Competence in Lisp programming » Why lisp? (Because!) • Homeoworks – Homeworks will be assigned piecemeal. . (Socket system) • Participation – Attendance to and attentiveness in classes is mandatory – Do ask questions – Midterm & final (~45%)

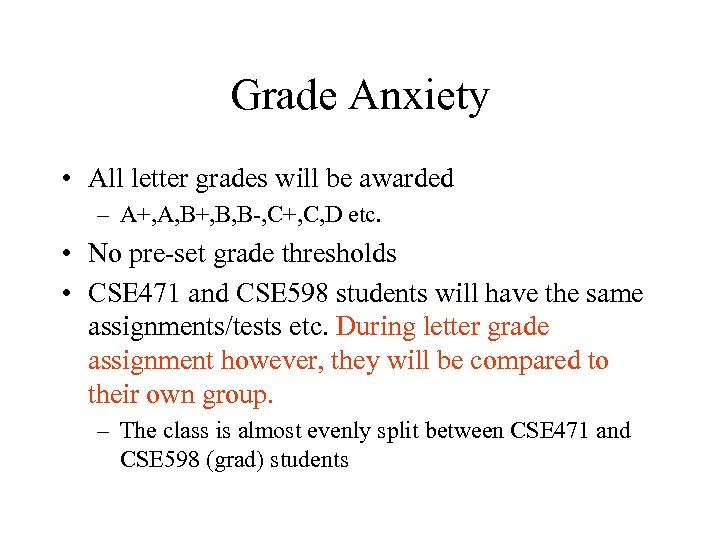

Grade Anxiety • All letter grades will be awarded – A+, A, B+, B, B-, C+, C, D etc. • No pre-set grade thresholds • CSE 471 and CSE 598 students will have the same assignments/tests etc. During letter grade assignment however, they will be compared to their own group. – The class is almost evenly split between CSE 471 and CSE 598 (grad) students

Honor Code • Unless explicitly stated otherwise, all assignments are: – Strictly individual effort – You are forbidden from trawling the web for answers/code etc • Any infraction will be dealt with in severest terms allowed.

Life with a homepage. . • I will not be giving any handouts – All class related material will be accessible from the web-page • Home works may be specified incrementally – (one problem at a time) – The slides used in the lecture will be available on the class page • I reserve the right to modify slides right up to the time of the class • When printing slides avoid printing the hidden slides

1946: ENIAC heralds the dawn of Computing

1950: Turing asks the question…. I propose to consider the question: “Can machines think? ” --Alan Turing, 1950

1956: A new field is born G G We propose that a 2 month, 10 man study of artificial intelligence be carried out during the summer of 1956 at Dartmouth College in Hanover, New Hampshire. - Dartmouth AI Project Proposal; J. Mc. Carthy et al. ; Aug. 31, 1955.

![1996: EQP proves that Robbin’s Algebras are all boolean [An Argonne lab program] has 1996: EQP proves that Robbin’s Algebras are all boolean [An Argonne lab program] has](https://present5.com/presentation/f3d8ca6d14850c3264f75af4eece0d9a/image-12.jpg)

1996: EQP proves that Robbin’s Algebras are all boolean [An Argonne lab program] has come up with a major mathematical proof that would have been called creative if a human had thought of it. -New York Times, December, 1996

1997: HAL 9000 becomes operational in fictional Urbana, Illinois …by now, every intelligent person knew that H-A-L is derived from Heuristic ALgorithmic -Dr. Chandra, 2010: Odyssey Two

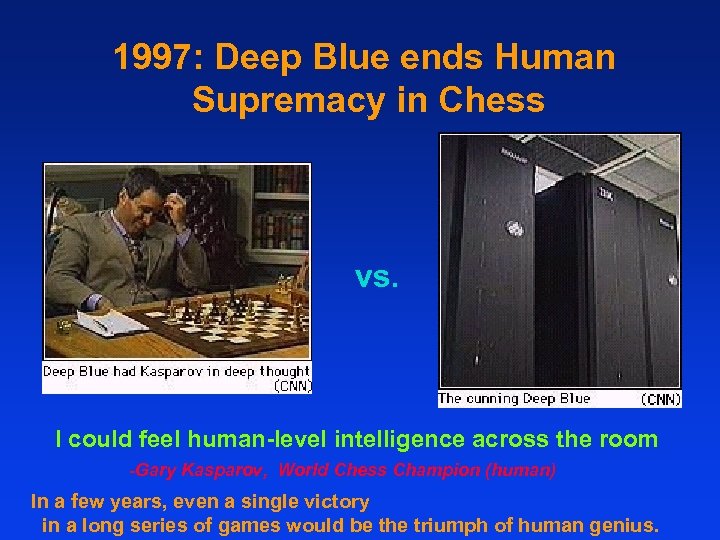

1997: Deep Blue ends Human Supremacy in Chess vs. I could feel human-level intelligence across the room -Gary Kasparov, World Chess Champion (human) In a few years, even a single victory in a long series of games would be the triumph of human genius.

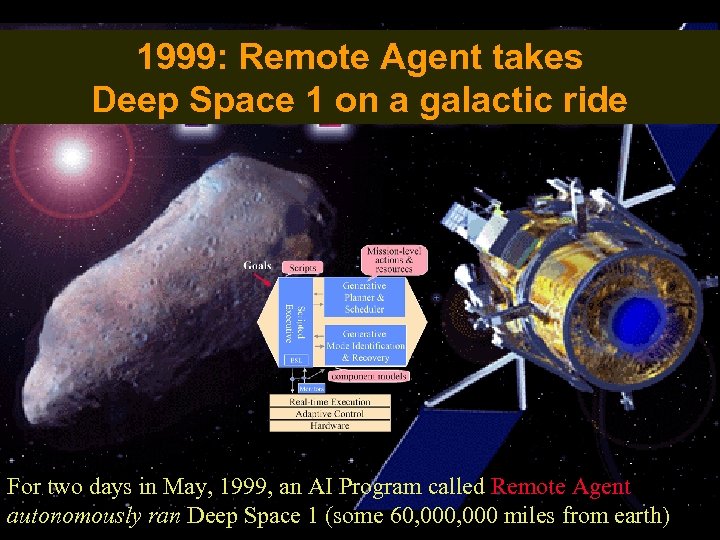

1999: Remote Agent takes Deep Space 1 on a galactic ride For two days in May, 1999, an AI Program called Remote Agent autonomously ran Deep Space 1 (some 60, 000 miles from earth)

2002: Computers start passing Advanced Placement Tests … a project funded by (Microsoft Co-founder) Paul Allen attempts to design a “Digital Aristotle”. Its first results involve programs that can pass High School Advanced Placement Exam in Chemistry…

2005: Cars Drive Themselves G Stanley and three other cars drive themselves over a 132 mile mountain road

2005: Robots play soccer (without headbutting!) G 2005 Robot Soccer: Humanoid league

2006: AI Celebrates its Golden Jubilee…

2006: AI Celebrates its Golden Jubilee… …and invites you along. . Welcome! To CSE 471/598 th Anniversary Edition 50 (tons of bonus material)

Course Overview • • What is AI – Intelligent Agents Search (Problem Solving Agents) – Single agent search [Project 1] • Markov Decision Processes • • • Constraint Satisfaction Problems – Adversarial (multi-agent) search Logical Reasoning [Project 2] Reasoning with uncertainity Planning [Project 3] Learning [Project 4]

What if we are writing intelligent agents that interact with humans? The COG project Mechanical flight became possible only when people decided to stop emulating birds…

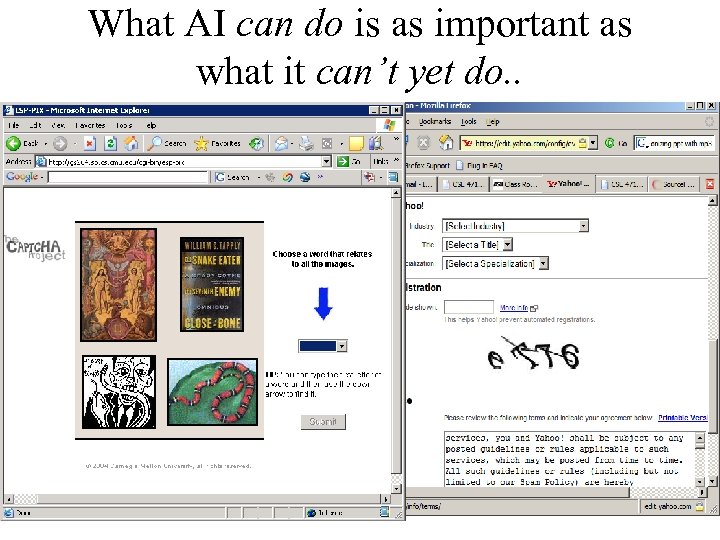

What AI can do is as important as what it can’t yet do. . • Captcha project

Arms race to defeat Captchas… (using unwitting masses) • Start opening an email account at Yahoo. . • Clip the captcha test • Show it to a human trying to get into another site – Usually a site that has pretty pictures of the persons of apposite* sex • Transfer their answer to the Yahoo Note: Apposite—not opposite. This course is nothing if not open minded

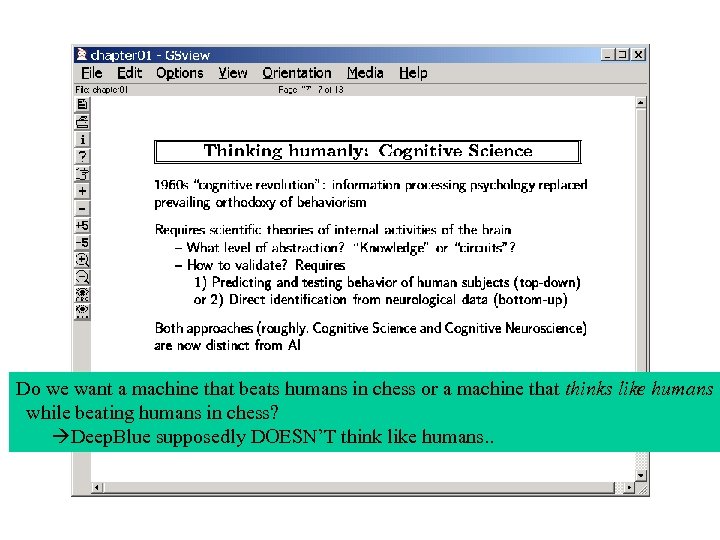

Do we want a machine that beats humans in chess or a machine that thinks like humans while beating humans in chess? Deep. Blue supposedly DOESN’T think like humans. .

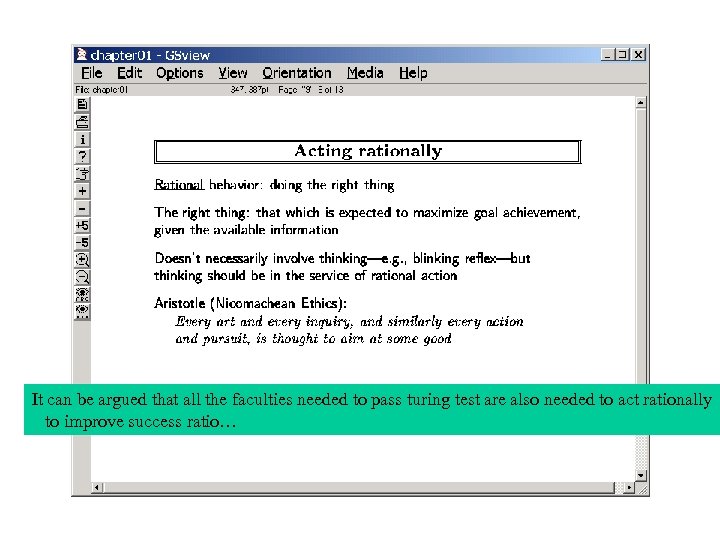

It can be argued that all the faculties needed to pass turing test are also needed to act rationally to improve success ratio…

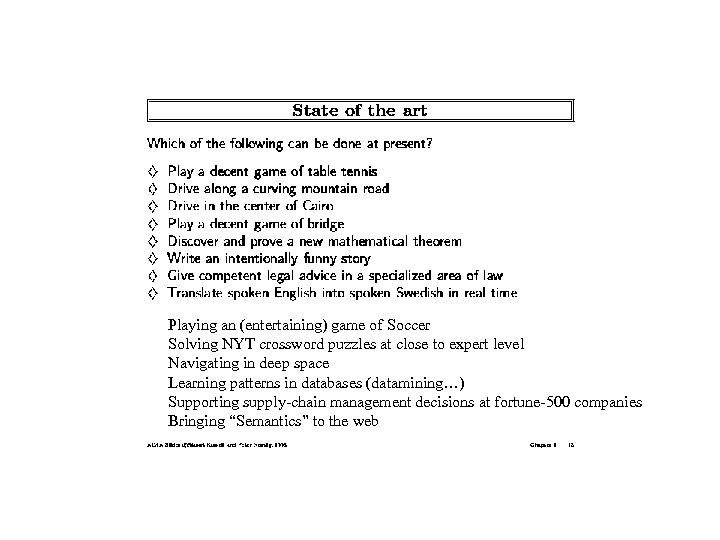

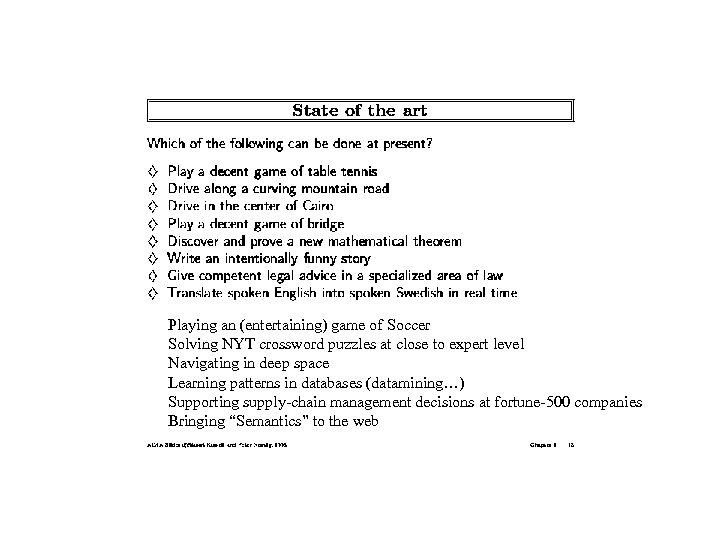

Playing an (entertaining) game of Soccer Solving NYT crossword puzzles at close to expert level Navigating in deep space Learning patterns in databases (datamining…) Supporting supply-chain management decisions at fortune-500 companies Bringing “Semantics” to the web

Playing an (entertaining) game of Soccer Solving NYT crossword puzzles at close to expert level Navigating in deep space Learning patterns in databases (datamining…) Supporting supply-chain management decisions at fortune-500 companies Bringing “Semantics” to the web

Pluto in mourning. . 8/24 Grigory Perelman Architectures for Intelligent Agents Wherein we discuss why do we need representation, reasoning and learning

TA office hours etc. • TA: Lei Tang • Office hours: – Regular: M/W 3: 30— 4: 30 BYENG 214 • (Exception Tomorrow: Friday: 10 -12; BY 561 AC)

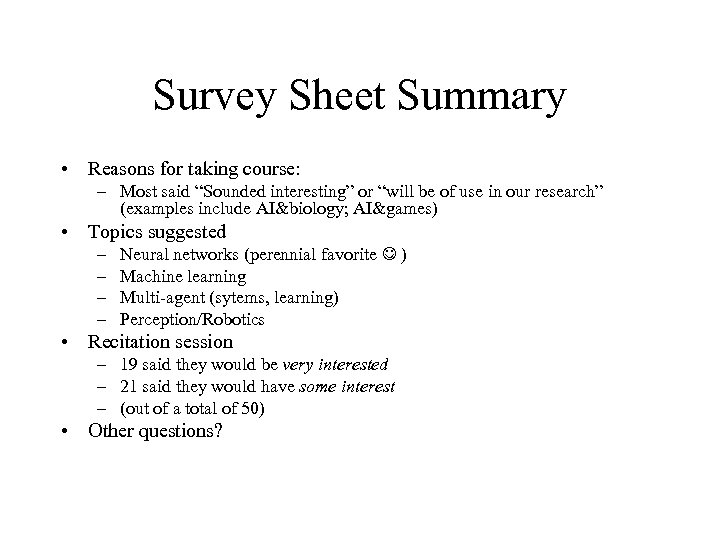

Survey Sheet Summary • Reasons for taking course: – Most said “Sounded interesting” or “will be of use in our research” (examples include AI&biology; AI&games) • Topics suggested – – Neural networks (perennial favorite ) Machine learning Multi-agent (sytems, learning) Perception/Robotics • Recitation session – 19 said they would be very interested – 21 said they would have some interest – (out of a total of 50) • Other questions?

Course Overview • • What is AI – Intelligent Agents Search (Problem Solving Agents) – Single agent search [Project 1] • Markov Decision Processes • • • Constraint Satisfaction Problems – Adversarial (multi-agent) search Logical Reasoning [Project 2] Reasoning with uncertainity Planning [Project 3] Learning [Project 4] We will explain the role of these topics in the context of designing intelligent agents

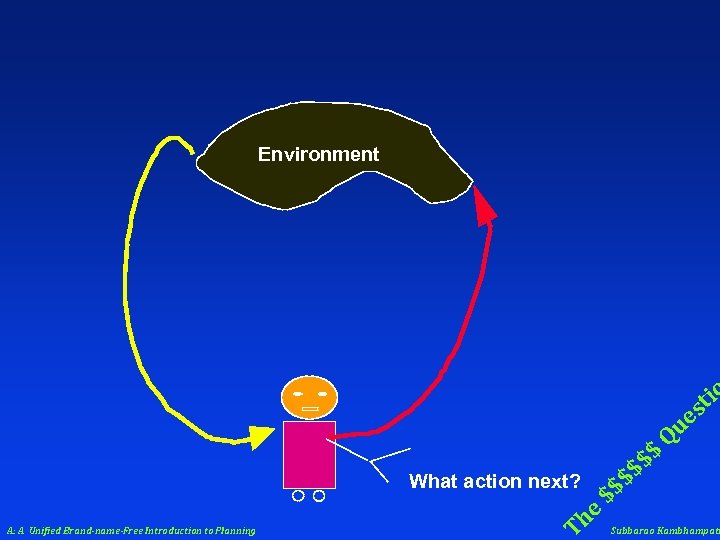

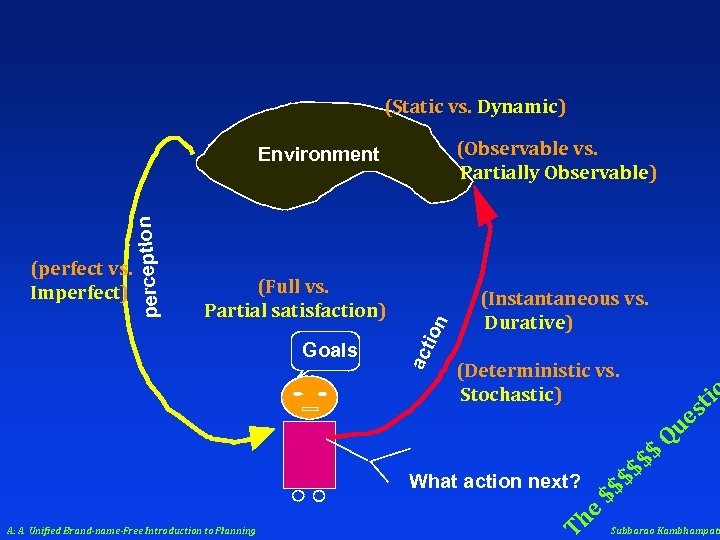

Environment tio s What action next? A: A Unified Brand-name-Free Introduction to Planning T he $$ $$ $ ue Q $ Subbarao Kambhampati

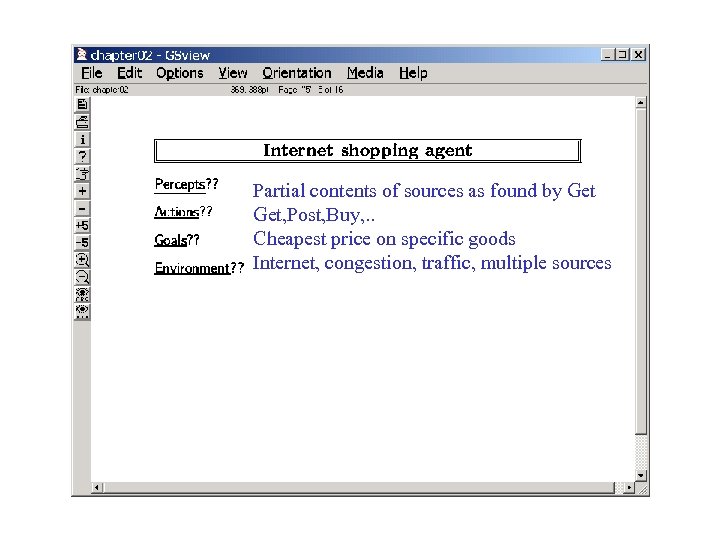

Partial contents of sources as found by Get, Post, Buy, . . Cheapest price on specific goods Internet, congestion, traffic, multiple sources

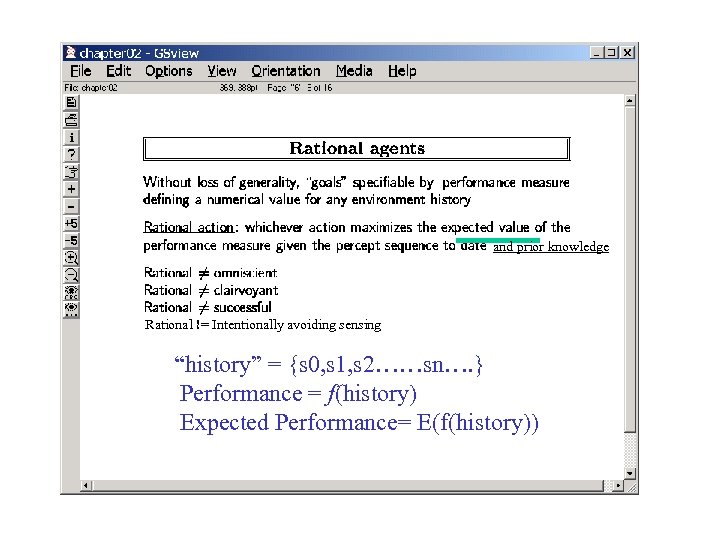

and prior knowledge Rational != Intentionally avoiding sensing “history” = {s 0, s 1, s 2……sn…. } Performance = f(history) Expected Performance= E(f(history))

(Static vs. Dynamic) (Observable vs. Partially Observable) Goals on (Full vs. Partial satisfaction) ac ti (perfect vs. Imperfect) perception Environment (Instantaneous vs. Durative) (Deterministic vs. Stochastic) What action next? A: A Unified Brand-name-Free Introduction to Planning T he $$ $$ $ tio s ue Q $ Subbarao Kambhampati

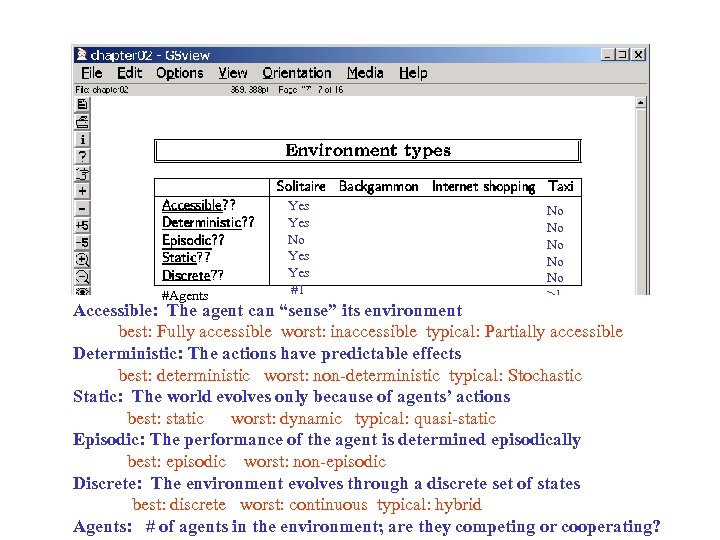

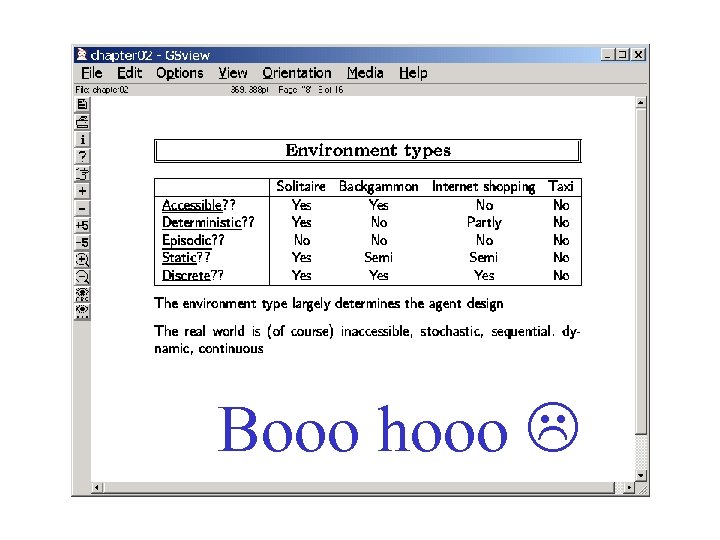

#Agents Yes No Yes #1 No No No >1 Accessible: The agent can “sense” its environment best: Fully accessible worst: inaccessible typical: Partially accessible Deterministic: The actions have predictable effects best: deterministic worst: non-deterministic typical: Stochastic Static: The world evolves only because of agents’ actions best: static worst: dynamic typical: quasi-static Episodic: The performance of the agent is determined episodically best: episodic worst: non-episodic Discrete: The environment evolves through a discrete set of states best: discrete worst: continuous typical: hybrid Agents: # of agents in the environment; are they competing or cooperating?

Booo hooo

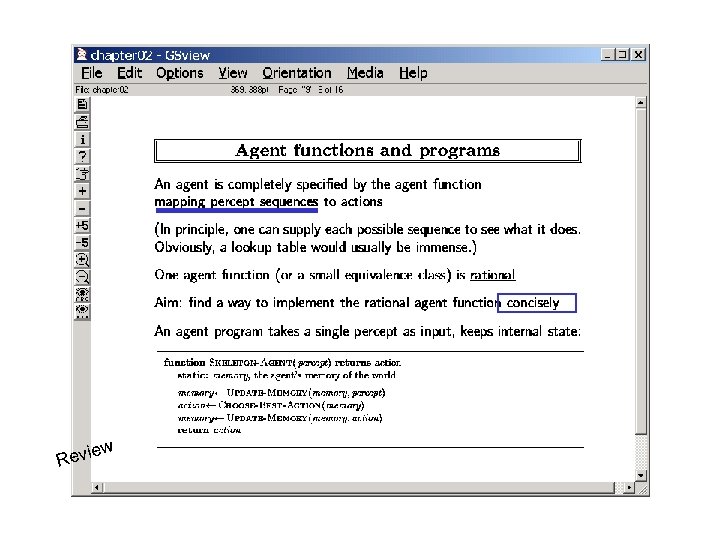

ew Revi

(Model-based reflex agents)

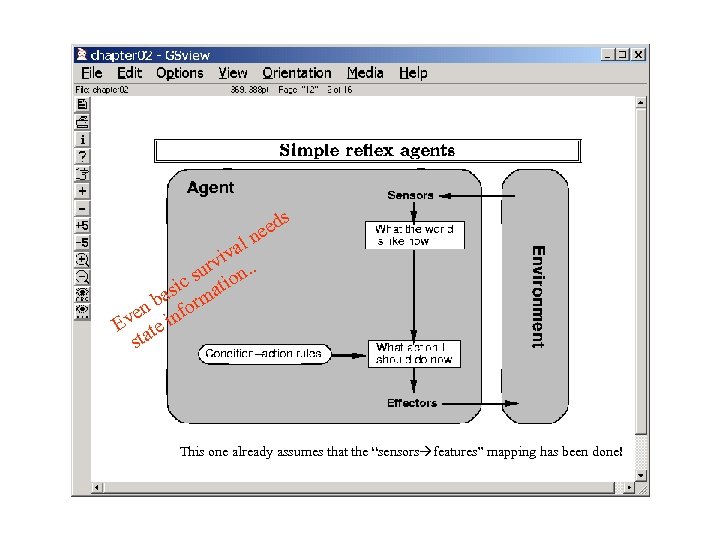

s eed n al v rvi n. . u c s atio si ba orm n ve e inf E t sta This one already assumes that the “sensors features” mapping has been done!

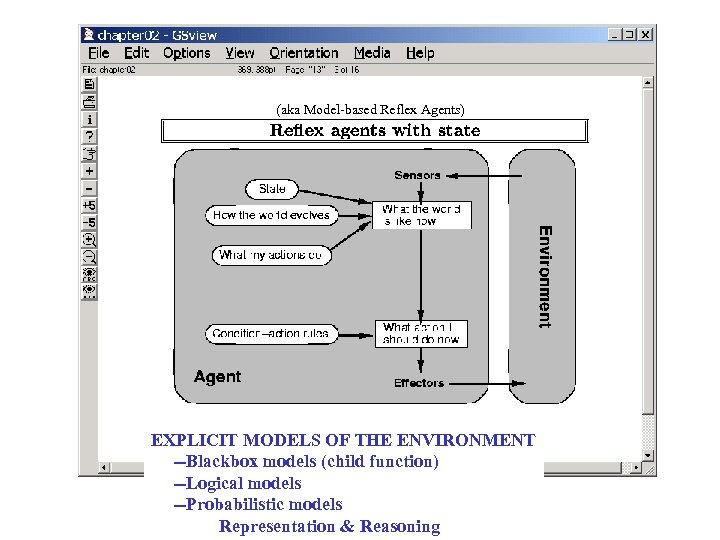

(aka Model-based Reflex Agents) EXPLICIT MODELS OF THE ENVIRONMENT --Blackbox models (child function) --Logical models --Probabilistic models Representation & Reasoning

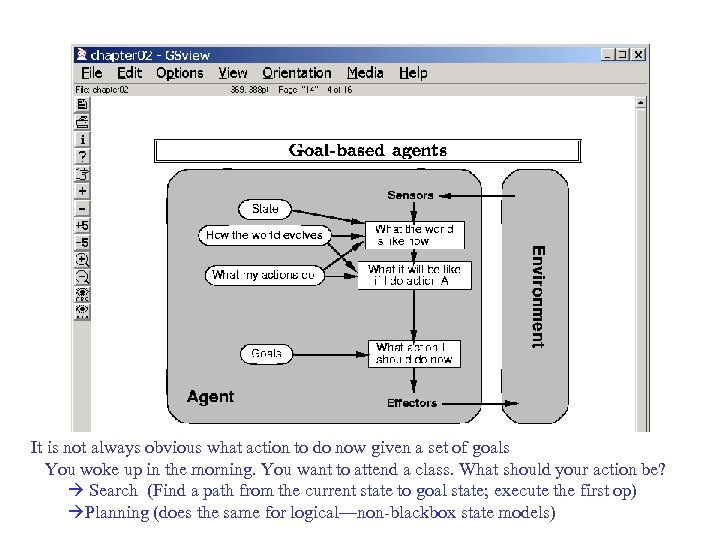

It is not always obvious what action to do now given a set of goals You woke up in the morning. You want to attend a class. What should your action be? Search (Find a path from the current state to goal state; execute the first op) Planning (does the same for logical—non-blackbox state models)

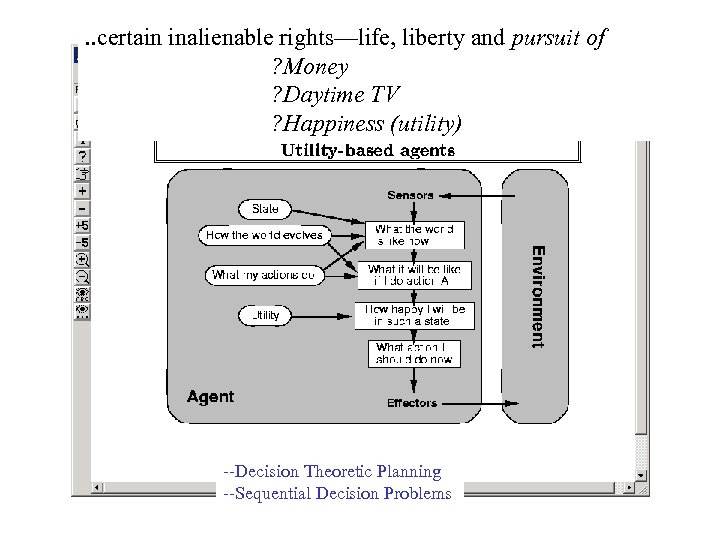

. . certain inalienable rights—life, liberty and pursuit of ? Money ? Daytime TV ? Happiness (utility) --Decision Theoretic Planning --Sequential Decision Problems

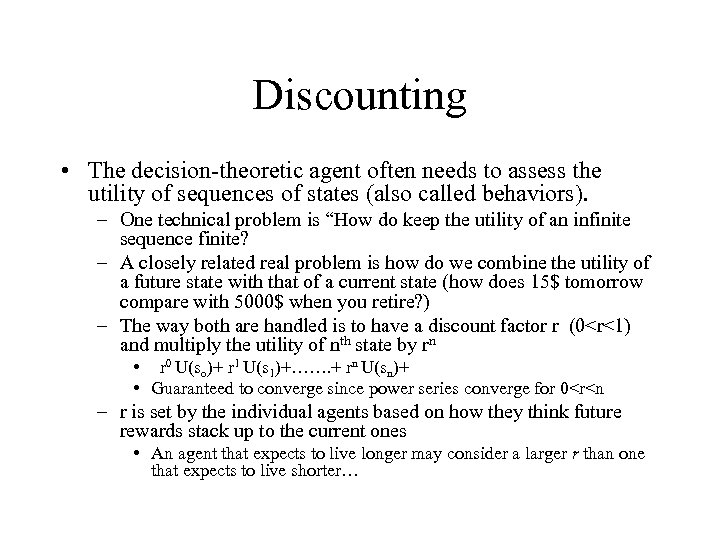

Discounting • The decision-theoretic agent often needs to assess the utility of sequences of states (also called behaviors). – One technical problem is “How do keep the utility of an infinite sequence finite? – A closely related real problem is how do we combine the utility of a future state with that of a current state (how does 15$ tomorrow compare with 5000$ when you retire? ) – The way both are handled is to have a discount factor r (0<r<1) and multiply the utility of nth state by rn • r 0 U(so)+ r 1 U(s 1)+……. + rn U(sn)+ • Guaranteed to converge since power series converge for 0<r<n – r is set by the individual agents based on how they think future rewards stack up to the current ones • An agent that expects to live longer may consider a larger r than one that expects to live shorter…

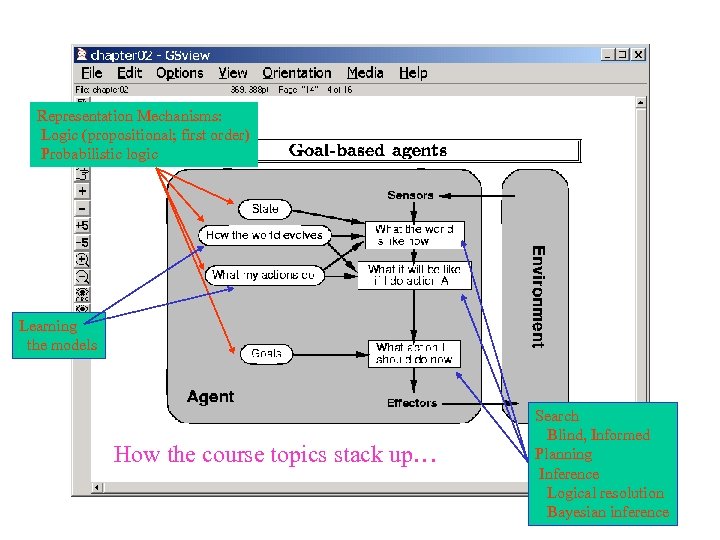

Representation Mechanisms: Logic (propositional; first order) Probabilistic logic Learning the models How the course topics stack up… Search Blind, Informed Planning Inference Logical resolution Bayesian inference

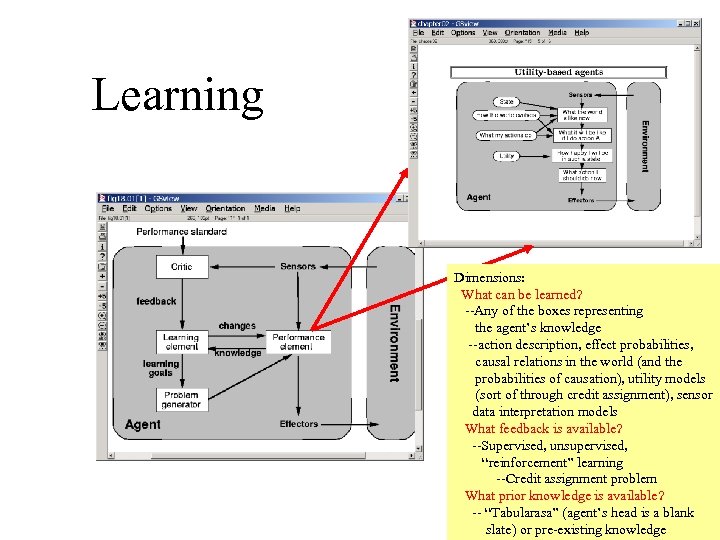

Learning Dimensions: What can be learned? --Any of the boxes representing the agent’s knowledge --action description, effect probabilities, causal relations in the world (and the probabilities of causation), utility models (sort of through credit assignment), sensor data interpretation models What feedback is available? --Supervised, unsupervised, “reinforcement” learning --Credit assignment problem What prior knowledge is available? -- “Tabularasa” (agent’s head is a blank slate) or pre-existing knowledge

f3d8ca6d14850c3264f75af4eece0d9a.ppt