d0197faa6158bdf87de6299d0c9cfaee.ppt

- Number of slides: 21

Online Summary

Jean-Sebastien Graulich, Geneva

- The heplnw17 case
- DAQ
- CAM
- Online Reconstruction
- Data Base
- Data Storage
- Software also discussed in the same session, not reported here

CM26 March 2010 Jean-Sebastien Graulich Slide 1

Online Activities

- Main issue: breakdown of heplnw17
- Not discussed in the Online session
- Collaboration Forum revealed
  - Lack of robustness, single point of failure
  - Original misunderstanding: private network <-> protected subnet
  - Need formally agreed support (from PPD or ISIS?)
- Consequence
  - 3 months of relative chaos (bad)
  - Start working on a general computing and network requirement document (good)

DAQ achievements

- DAQ system upgrade is ready
- Luminosity monitors integrated
- Trigger system cabling optimized
- DAQ and Trigger System consolidation
- Cabling documentation in progress
- Progress in EMR front-end electronics

Event Building

- The synchronization problem between the two crates persists
  - We suspect the PCI/VME interface
  - It could not be replaced because
    - all the spares are used for the mirror DAQ system
    - Massive failure of boards: 4 out of 10 boards had to be sent for repair
- In the meantime
  - Online monitoring histograms allow the problem to be spotted
  - A VME and PC power cycle solves it temporarily
  - Shifter's attention is required
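As an illustration of the kind of check an online monitoring histogram can support, here is a minimal Python sketch that compares per-spill trigger counts read from the two crates. The per-spill counters, the dictionary layout and the function name `sync_mismatches` are assumptions made for this example; the slides do not describe how the actual histogram is filled.

```python
from typing import Dict, List


def sync_mismatches(crate_a: Dict[int, int], crate_b: Dict[int, int]) -> List[int]:
    """Return the spill numbers where the two crates disagree.

    Each argument maps a spill number to the number of triggers that crate
    recorded in that spill (hypothetical layout). A spill seen by only one
    crate also counts as a mismatch.
    """
    mismatched = []
    for spill in sorted(set(crate_a) | set(crate_b)):
        if crate_a.get(spill) != crate_b.get(spill):
            mismatched.append(spill)
    return mismatched
```

A non-empty result would be the cue for the shifter to consider the power cycle mentioned above.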

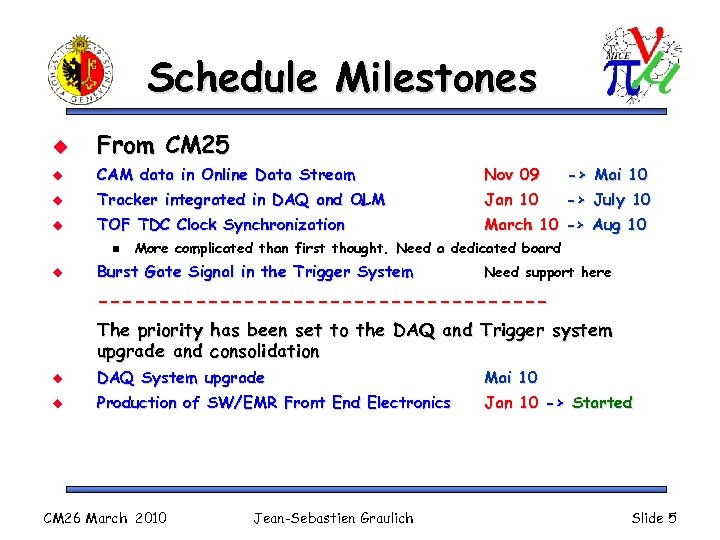

Schedule Milestones

- From CM25
- CAM data in Online Data Stream: Nov 09 -> May 10
- Tracker integrated in DAQ and OLM: Jan 10 -> July 10
- TOF TDC Clock Synchronization: March 10 -> Aug 10
  - More complicated than first thought. Need a dedicated board
- Burst Gate Signal in the Trigger System: need support here

The priority has been set to the DAQ and Trigger system upgrade and consolidation

- DAQ System upgrade: May 10
- Production of SW/EMR Front End Electronics: Jan 10 -> Started

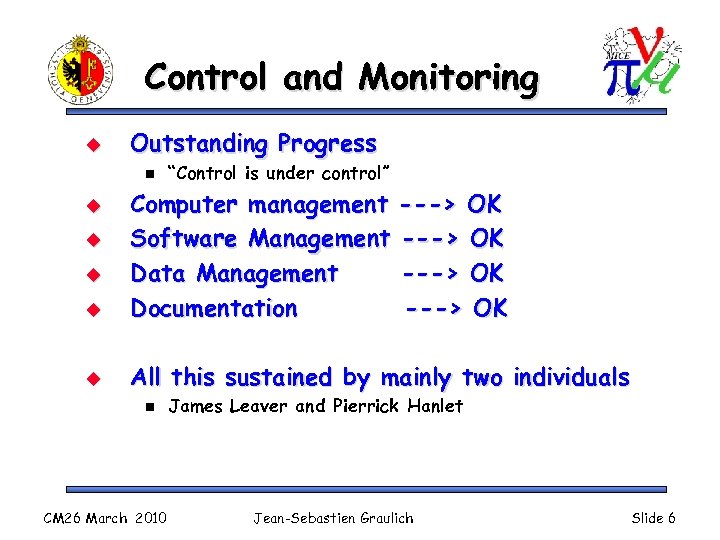

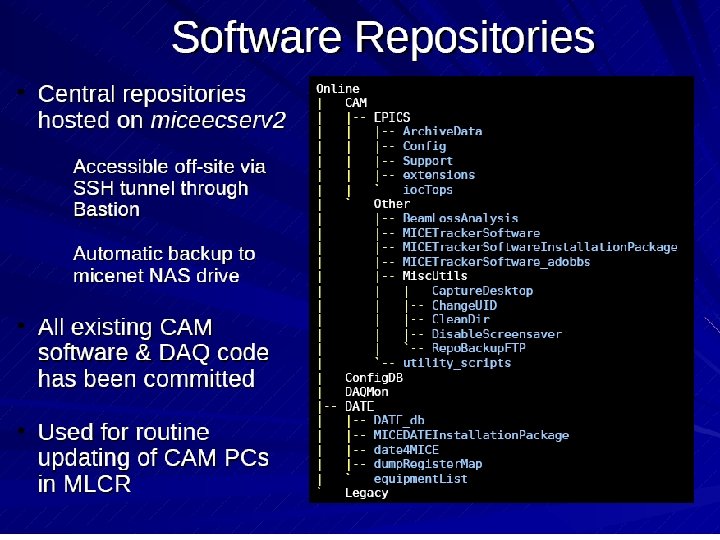

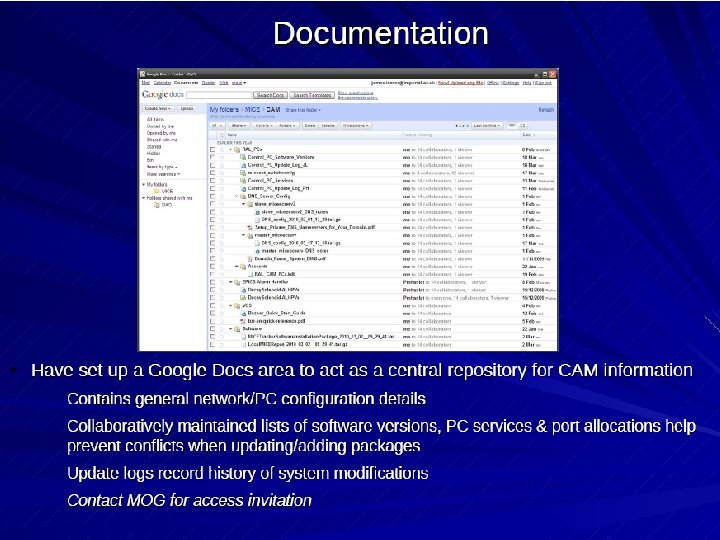

Control and Monitoring

- Outstanding progress
  - "Control is under control"
- Computer management ---> OK
- Software management ---> OK
- Data management ---> OK
- Documentation ---> OK
- All this sustained by mainly two individuals
  - James Leaver and Pierrick Hanlet

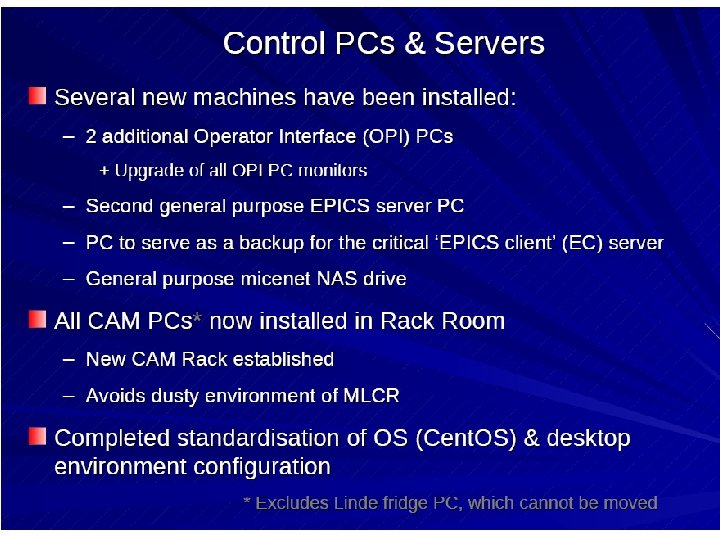

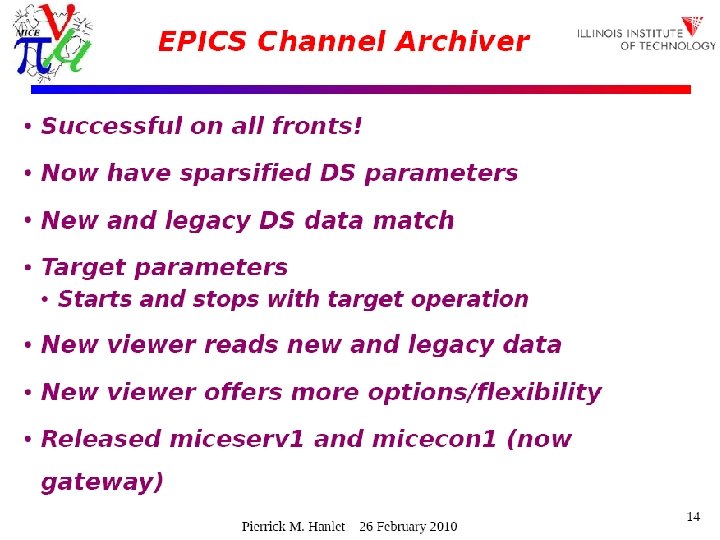

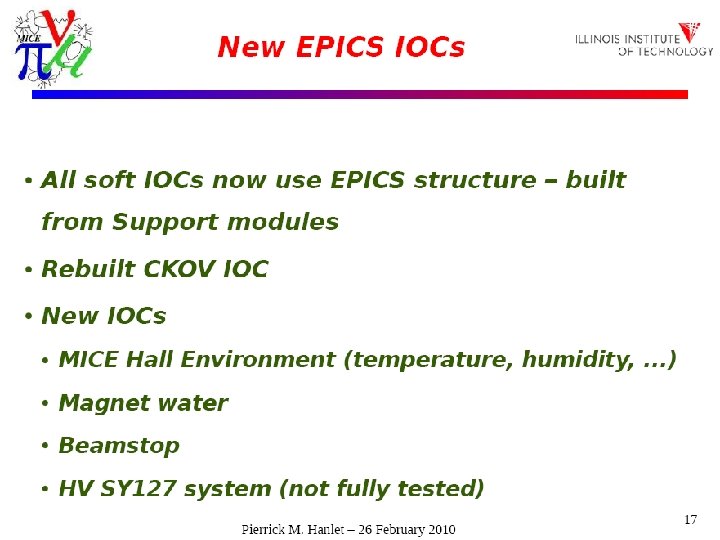

CAM

- Decay solenoid included in the alarm handler
- Linde control panel mirrored into EPICS
  - Very useful for expert remote monitoring
- Next:
  - remote gateway and remote archive viewer
  - new IOCs for new equipment
- The "Long, hard road to ramp up CaM infrastructure and knowledge base" has led us to a point where we no longer foresee difficult hurdles to regularly adding new IOCs, monitoring, alarm handling, and archiving…

Data base

- What it does
  - Record automatically the magnet settings, 'ISIS settings', target information and DAQ information
    - Superset of what is currently entered manually into the run configuration spreadsheet on the MICO page
  - Allow retrieving these settings at the start of the run
  - Also allow saving settings not attached to a run
    - E.g. Pion at 300 MeV/c
- Store Configuration = Set values != read values
- Document hardware status
  - Geometry <-> G4MICE
  - Cabling
  - Alarm Handler settings, etc.
- EPICS client developed by James Leaver for this
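The distinction between set values and read values, and between settings attached to a run and settings saved under a name, can be sketched in a few lines of Python. This is only an in-memory illustration under stated assumptions: the class names, method names, the channel name `magnet_a` and all numbers are hypothetical placeholders, not the schema or API of the actual database.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Configuration:
    # Values requested by the operator; these are what gets restored.
    set_values: Dict[str, float]
    # Values measured on the hardware; they may differ from the set values.
    read_values: Dict[str, float] = field(default_factory=dict)


class ConfigStore:
    """Hypothetical in-memory stand-in for the configuration database."""

    def __init__(self) -> None:
        self._by_run: Dict[int, Configuration] = {}
        self._by_tag: Dict[str, Configuration] = {}

    def record_run(self, run_number: int, config: Configuration) -> None:
        """Attach a configuration to a run (recorded automatically in the real system)."""
        self._by_run[run_number] = config

    def save_tagged(self, tag: str, config: Configuration) -> None:
        """Save a configuration that is not attached to any run."""
        self._by_tag[tag] = config

    def for_run(self, run_number: int) -> Optional[Configuration]:
        return self._by_run.get(run_number)

    def for_tag(self, tag: str) -> Optional[Configuration]:
        return self._by_tag.get(tag)


store = ConfigStore()
# Placeholder channel name and numbers, not real MICE settings.
store.save_tagged("Pion at 300 MeV/c", Configuration(set_values={"magnet_a": 1.0}))
store.record_run(
    1,
    Configuration(set_values={"magnet_a": 1.0}, read_values={"magnet_a": 0.98}),
)
```

Keeping the two dictionaries separate means a named configuration can be restored from its set values alone, while the read values remain available as a record of what the hardware actually reported.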

Data base status

- Progress was suspended in January due to failure of heplnw17
- Local copy of DB system under development in Glasgow, progress resumed
- The main server functionality requested has now been implemented
- Proper migration to Rutherford Lab scheduled (except cabling)
  - the bulk of outstanding work
- See David Forrest's talk for details

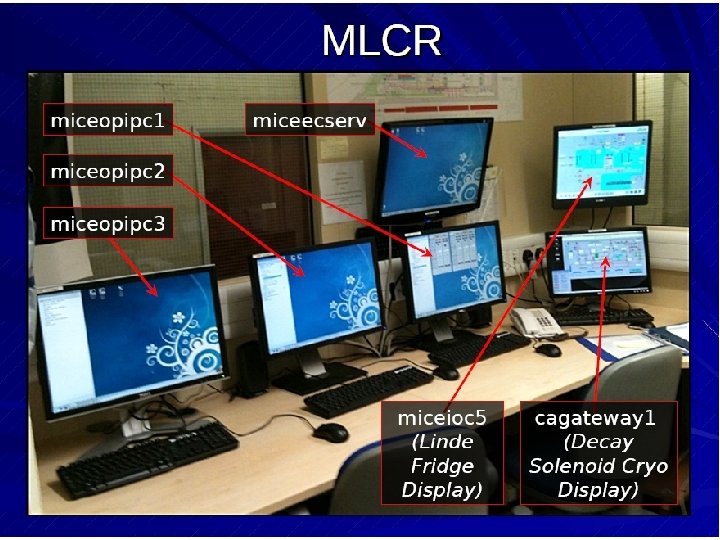

Data Storage

- The only formally-agreed route for access to data (DAQ output) is via the Grid.
- The Grid Transfer Box (miceacq05) is located in the MLCR. It will eventually run an autonomous agent that reads the data from the RAID system in the MLCR and uploads it to the Grid, in particular the CASTOR tape system at RAL.
- In the meantime data IS being uploaded to the Grid, but on a manual, next-day timescale.

Data Access

- Henry Nebrensky presented a tutorial on how to access the data using the Grid
- Open issues
  - Permanent storage is on tape at RAL
    - Long access time (robot loading the tape)
    - We should foresee a place where actively used data is stored on disk
  - Files on tape must be at least 200 MB…
  - Eventually, someone on duty (MOM or shifter) will need to have a Grid certificate
    - Once again we have a single (human) point of failure here
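One possible way to respect the 200 MB minimum file size on tape is to bundle consecutive DAQ output files until a bundle reaches the threshold. The Python sketch below shows only that grouping logic. It is an assumption made for illustration, not the method used by the transfer agent; the function name, the input layout and the choice of decimal megabytes are all hypothetical.

```python
from typing import List, Tuple

# Assumes "200 MB" means decimal megabytes; adjust if the limit is defined otherwise.
MIN_TAPE_BYTES = 200 * 1000 * 1000


def group_for_tape(
    files: List[Tuple[str, int]], min_bytes: int = MIN_TAPE_BYTES
) -> Tuple[List[List[str]], List[str]]:
    """Group (name, size_in_bytes) pairs, in order, into bundles of at least min_bytes.

    Returns the completed bundles and the leftover files that do not yet
    add up to a full bundle and should wait for more data.
    """
    bundles: List[List[str]] = []
    current: List[str] = []
    total = 0
    for name, size in files:
        current.append(name)
        total += size
        if total >= min_bytes:
            bundles.append(current)
            current, total = [], 0
    return bundles, current
```

The leftover list is returned rather than uploaded so that small files are held back until enough data has accumulated.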

General Comment

- The MOG still suffers from loose leadership
- Compensated by the enthusiasm and commitment of the individuals inside the group
- Most members are on short-term contracts
  - Linda and Pierrick depend on an NSF grant
  - David has to write his Ph.D.
  - James will leave in January 2011
  - I'll leave in June 2011
