- Number of slides: 39

Online Prediction of the Running Time of Tasks
Peter A. Dinda
Department of Computer Science, Northwestern University
http://www.cs.northwestern.edu/~pdinda

Overview
• Predict the running time of a task
  – Application supplies the task size (currently 0.1 to 10 seconds)
  – Task is compute-bound (current limitation)
• Prediction is a confidence interval
  – Expresses prediction error
  – Enables statistically valid decision-making in the scheduler
• Based on host load prediction
  – Homogeneous Digital Unix hosts (current limitation)
    » The system is portable to many operating systems
Everything in this talk is publicly available.

Outline
• Running time advisor
• Host load results
• Computing confidence intervals
• Performance evaluation
• Related work
• Conclusions

A Universal Challenge in High Performance Distributed Applications
Highly variable resource availability
• Shared resources
• No reservations
• No globally respected priorities
• Competition from other users: the “background workload”
Running time can vary drastically
Adaptation
• Example goal: soft real-time for interactivity
• Example mechanism: server selection
• Performance queries

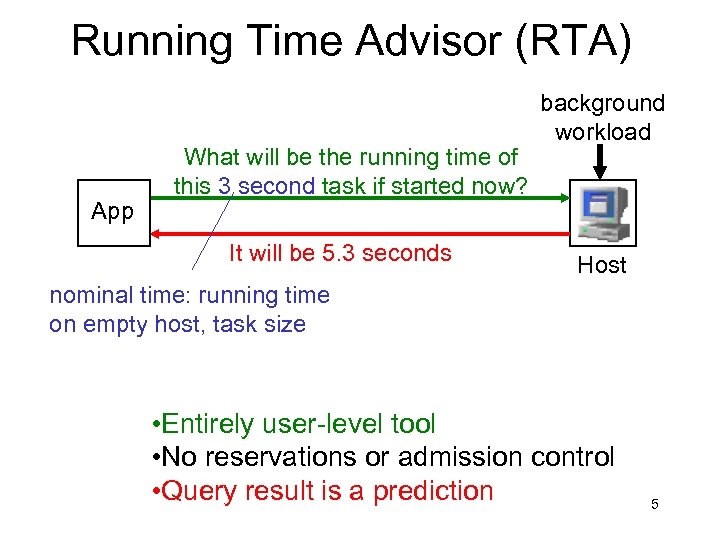

Running Time Advisor (RTA)
App: “What will be the running time of this 3-second task if started now?”
RTA: “It will be 5.3 seconds.”
(The host is shared with a background workload.)
Nominal time: the task’s running time on an empty host, i.e., its size
• Entirely user-level tool
• No reservations or admission control
• Query result is a prediction

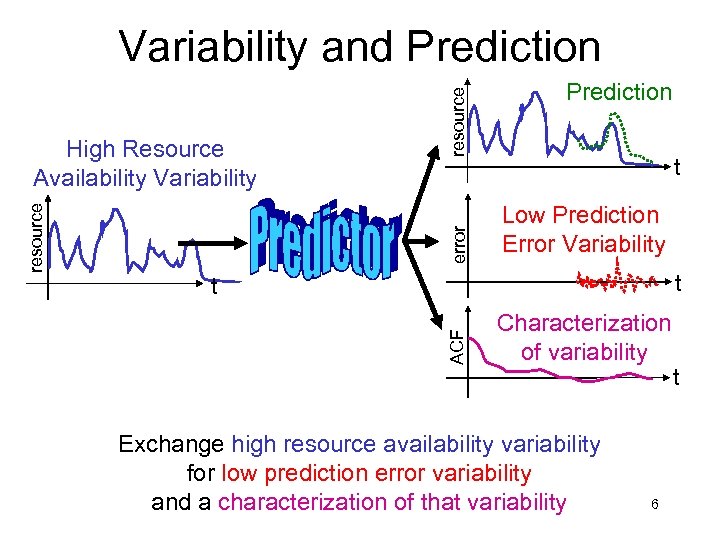

Variability and Prediction
[Figure panels: resource vs. t, “High Resource Availability Variability”; Prediction; error vs. t, “Low Prediction Error Variability”; ACF, “Characterization of variability”]
Exchange high resource availability variability for low prediction error variability and a characterization of that variability.

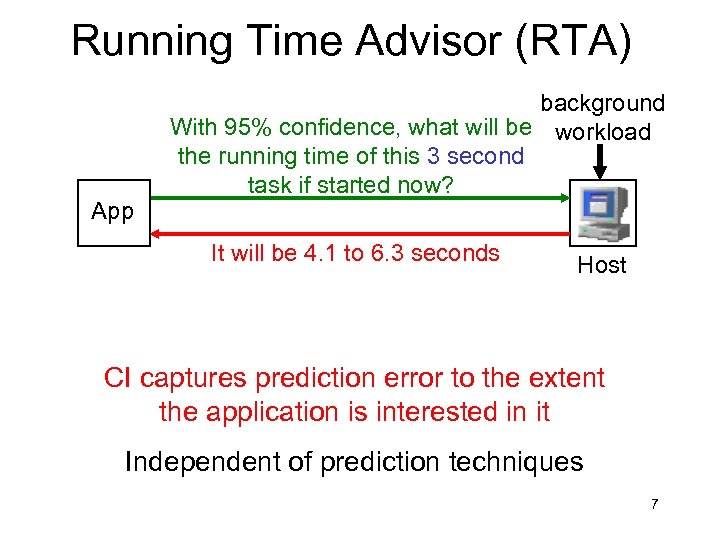

Running Time Advisor (RTA)
App: “With 95% confidence, what will be the running time of this 3-second task if started now?”
RTA: “It will be 4.1 to 6.3 seconds.”
(The host is shared with a background workload.)
• The confidence interval (CI) captures prediction error to the extent the application is interested in it
• Independent of prediction techniques

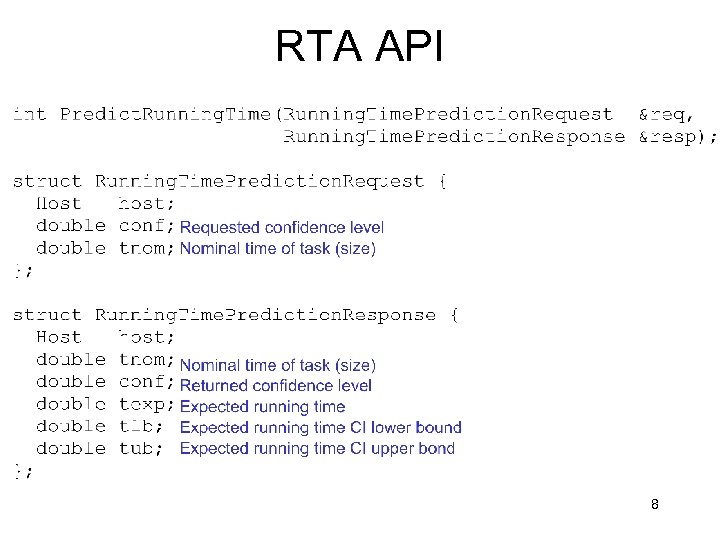

RTA API

Outline
• Running time advisor
• Host load results
• Computing confidence intervals
• Performance evaluation
• Related work
• Conclusions

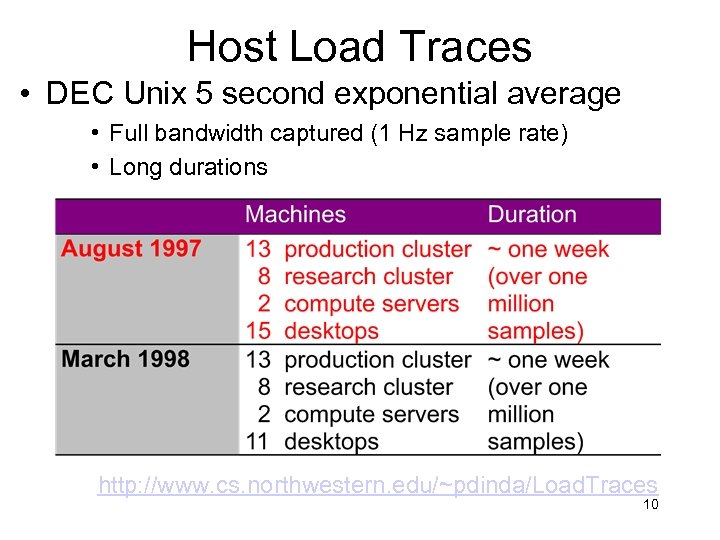

Host Load Traces
• DEC Unix 5-second exponential average
• Full bandwidth captured (1 Hz sample rate)
• Long durations
http://www.cs.northwestern.edu/~pdinda/LoadTraces
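
As a rough illustration of what a “5-second exponential average” sampled at 1 Hz means, here is a minimal sketch that smooths a series of run-queue lengths with decay factor exp(-1/5) per one-second sample. The function name and the assumption that the input is an instantaneous run-queue length sampled once per second are ours; the Digital Unix kernel's own computation may differ in update interval and fixed-point arithmetic.

```python
import math
from typing import List, Sequence


def exponential_load_average(run_queue: Sequence[float],
                             period_s: float = 5.0,
                             sample_dt_s: float = 1.0) -> List[float]:
    """Exponentially smooth instantaneous run-queue lengths (hypothetical helper).

    Each new sample is blended in with weight (1 - decay), where
    decay = exp(-dt / period), so older samples fade with a time
    constant of `period_s` seconds.
    """
    decay = math.exp(-sample_dt_s / period_s)
    avg = 0.0
    out: List[float] = []
    for n in run_queue:
        avg = avg * decay + n * (1.0 - decay)
        out.append(avg)
    return out
```

A step from 0 to 1 runnable process reaches about 63% (1 - e^-1) of the step after five one-second samples, which is what a 5-second time constant means.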

Host Load Properties
• Self-similarity: long-range dependence
• Epochal behavior: non-stationarity
• Complex correlation structure
[LCR ’98, Scientific Programming 3:4, 1999]

Host Load Prediction
• Fully randomized study on traces
• MEAN, LAST, AR, MA, ARIMA, ARFIMA models
• AR(16) models most appropriate
• Covariance matrix for prediction errors
• Low overhead: <1% CPU
[HPDC ’99, Cluster Computing 3:4, 2000]
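
To make concrete what fitting and using an AR(p) model involves, here is a minimal pure-Python sketch that estimates coefficients from the Yule-Walker equations (solved by Levinson-Durbin recursion) and iterates the model forward for multi-step predictions. This is a generic textbook approach chosen for illustration; it is an assumption on our part, not the RPS implementation, and it omits the prediction-error covariance matrix mentioned above. All function names are hypothetical.

```python
import random
from typing import List, Sequence, Tuple


def autocovariance(x: Sequence[float], max_lag: int) -> List[float]:
    """Biased sample autocovariance r[0..max_lag] of the mean-removed series."""
    n = len(x)
    mu = sum(x) / n
    d = [v - mu for v in x]
    return [sum(d[i] * d[i + k] for i in range(n - k)) / n
            for k in range(max_lag + 1)]


def levinson_durbin(r: Sequence[float], p: int) -> List[float]:
    """Solve the Yule-Walker equations for AR(p) coefficients phi_1..phi_p."""
    if r[0] == 0.0:              # constant series: nothing to model
        return [0.0] * p
    phi = [0.0] * (p + 1)        # phi[0] is unused
    err = r[0]                   # innovation variance of the current order
    for k in range(1, p + 1):
        acc = r[k] - sum(phi[j] * r[k - j] for j in range(1, k))
        kappa = acc / err        # reflection coefficient for order k
        new = phi[:]
        new[k] = kappa
        for j in range(1, k):
            new[j] = phi[j] - kappa * phi[k - j]
        phi = new
        err *= 1.0 - kappa * kappa
    return phi[1:]


def fit_ar(x: Sequence[float], p: int) -> Tuple[float, List[float]]:
    """Return (mean, coefficients) of an AR(p) model fitted to x."""
    if len(x) <= p:
        raise ValueError("need more samples than the model order")
    return sum(x) / len(x), levinson_durbin(autocovariance(x, p), p)


def predict_ar(x: Sequence[float], mu: float, phi: Sequence[float],
               horizon: int) -> List[float]:
    """Iterate the AR model forward to predict steps 1..horizon ahead."""
    hist = [v - mu for v in x]
    out: List[float] = []
    for _ in range(horizon):
        nxt = sum(c * hist[-1 - i] for i, c in enumerate(phi))
        hist.append(nxt)         # feed each prediction back in
        out.append(nxt + mu)
    return out


if __name__ == "__main__":
    rng = random.Random(1)
    series = [0.0]
    for _ in range(2000):
        series.append(0.9 * series[-1] + rng.gauss(0.0, 1.0))
    mu, phi = fit_ar(series, 16)   # AR(16), the order named on the slide
    print(predict_ar(series, mu, phi, 5))
```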

RPS Toolkit
• Extensible toolkit for implementing resource signal prediction systems
• Easy “buy-in” for users
  – C++ and sockets (no threads)
  – Prebuilt prediction components
  – Libraries (sensors, time series, communication)
• Users have bought in
  – Incorporated in CMU Remos, BBN QuO
[CMU-CS-99-138]
http://www.cs.northwestern.edu/~RPS

Outline
• Running time advisor
• Host load results
• Computing confidence intervals
• Performance evaluation
• Related work
• Conclusions

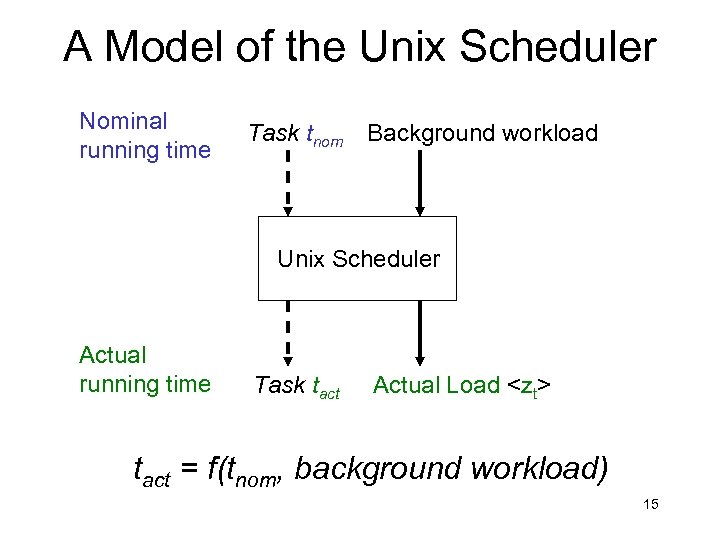

A Model of the Unix Scheduler
[Diagram: a task with nominal running time tnom and the background workload (actual load) enter the Unix scheduler; the task leaves with actual running time tact]

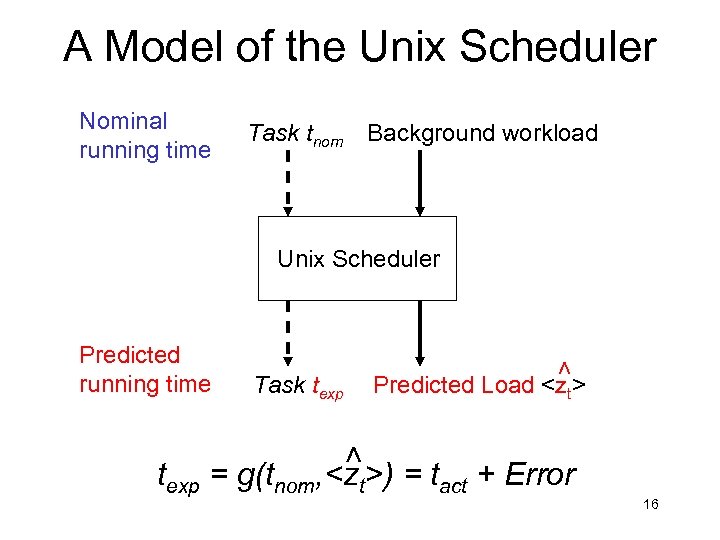

A Model of the Unix Scheduler
[Diagram: the same model with the background workload given by predicted load; the task with nominal running time tnom yields an expected running time texp]

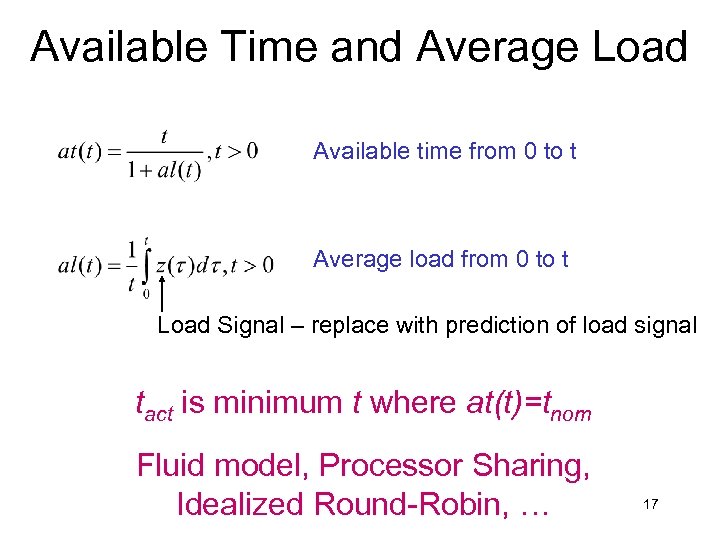

Available Time and Average Load
• Available time from 0 to t, at(t)
• Average load from 0 to t
• Load signal: replace it with a prediction of the load signal
• tact is the minimum t where at(t) = tnom
• Underlying model: fluid model, processor sharing, idealized round-robin, …
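
The slide's equations are images and are not recoverable from the text. Under the fluid (processor-sharing) model named above, and assuming that a task competing with load z(τ) receives a 1/(1 + z(τ)) share of the CPU, the available time and the actual running time can be sketched as follows; this is a reconstruction of the idea, not necessarily the author's exact notation.

```latex
\mathit{at}(t) = \int_{0}^{t} \frac{d\tau}{1 + z(\tau)},
\qquad
t_{act} = \min \{\, t \ge 0 : \mathit{at}(t) = t_{nom} \,\}

z(\tau) \equiv z \;\Rightarrow\; \mathit{at}(t) = \frac{t}{1 + z},
\qquad t_{act} = (1 + z)\, t_{nom}
```

For example, with a steady background load of z = 1, a task with tnom = 3 s would take tact = 6 s under this model.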

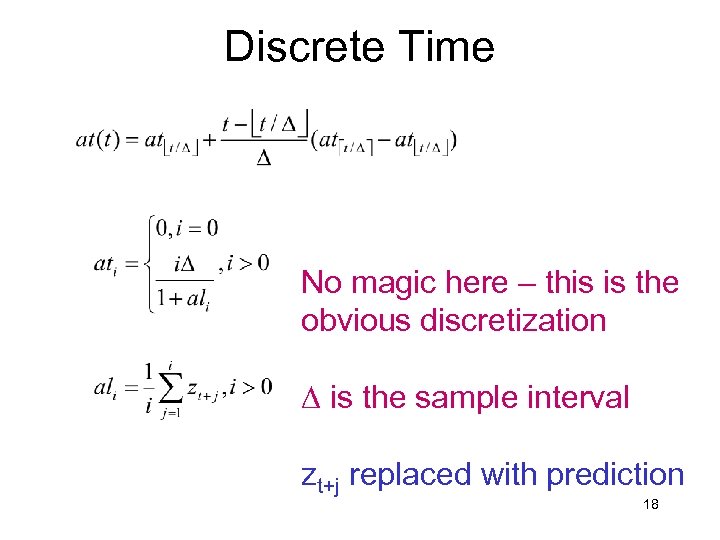

Discrete Time
• No magic here: this is the obvious discretization
• Δ is the sample interval
• z_{t+j} is replaced with its prediction
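
A minimal Python sketch of the discretized computation, assuming the processor-sharing rate 1/(1 + z) per sample interval Δ (called `delta` here) and a load that is constant within each interval. `running_time` is a hypothetical helper, not part of the RTA; passing predicted loads instead of measured ones yields texp rather than tact.

```python
from typing import Sequence


def running_time(t_nom: float, load: Sequence[float], delta: float = 1.0) -> float:
    """Smallest t such that the available time at(t) reaches t_nom.

    Assumes that while the background load is z the task receives a
    1 / (1 + z) share of the CPU, and that z is constant within each
    sample interval of length delta.
    """
    available = 0.0   # at(t) accumulated so far
    t = 0.0
    for z in load:
        share = 1.0 / (1.0 + z)
        gained = share * delta
        if available + gained >= t_nom:
            # The task finishes partway through this interval.
            return t + (t_nom - available) / share
        available += gained
        t += delta
    raise ValueError("load signal ends before the task would finish")
```

For example, `running_time(3.0, [1.0] * 10)` is 6.0: a 3-second task sharing the CPU with a steady load of 1 takes twice as long.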

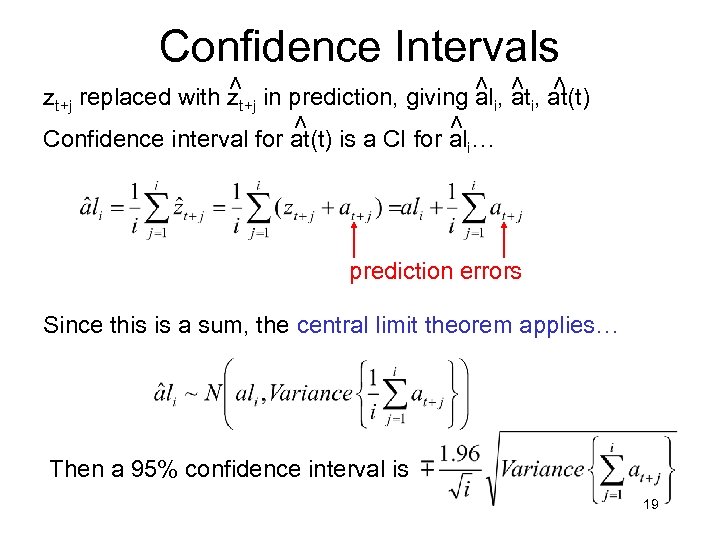

Confidence Intervals
• z_{t+j} is replaced with the prediction ẑ_{t+j}, giving predicted values of al_i and at(t)
• A confidence interval for at(t) follows from a CI for al_i, whose error is a sum of load prediction errors
• Since this is a sum, the central limit theorem applies
• A 95% confidence interval then follows from the normal approximation
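
A generic form of the central-limit-theorem argument, written for a sum of n predicted load samples with prediction errors a_{t+j} = z_{t+j} - ẑ_{t+j}. The slide's own formula for al_i is not recoverable from the text, so this is a sketch under that assumption; any constant scaling of the sum (for example by Δ or 1/n) scales the interval the same way.

```latex
a_{t+j} = z_{t+j} - \hat{z}_{t+j},
\qquad
\sum_{j=1}^{n} z_{t+j} - \sum_{j=1}^{n} \hat{z}_{t+j} = \sum_{j=1}^{n} a_{t+j}

\operatorname{Var}\Big(\sum_{j=1}^{n} a_{t+j}\Big)
  = \sum_{i=1}^{n} \sum_{j=1}^{n} \operatorname{Cov}(a_{t+i}, a_{t+j})

\text{95\% CI:}\quad
\sum_{j=1}^{n} \hat{z}_{t+j} \;\pm\; 1.96
\sqrt{\operatorname{Var}\Big(\sum_{j=1}^{n} a_{t+j}\Big)}
```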

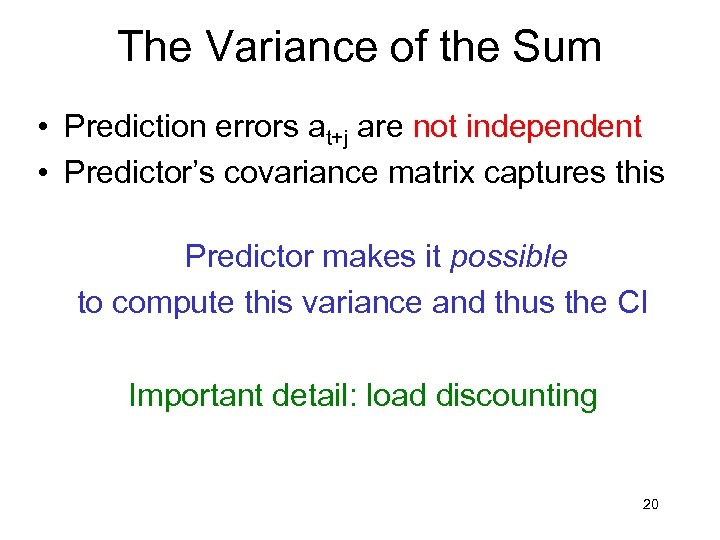

The Variance of the Sum
• Prediction errors a_{t+j} are not independent
• The predictor’s covariance matrix captures this
The predictor therefore makes it possible to compute this variance, and thus the CI.
Important detail: load discounting
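
A minimal sketch of why the covariance matrix matters: the variance of a sum of correlated errors is the sum of every entry of their covariance matrix, not just the diagonal. The helper names and the normal-approximation interval below are illustrative assumptions, not the RTA's code; the matrix is assumed to be the predictor's covariance of the errors a_{t+1}..a_{t+n}.

```python
from statistics import NormalDist
from typing import Sequence, Tuple


def variance_of_sum(cov: Sequence[Sequence[float]]) -> float:
    """Var(a_1 + ... + a_n) = sum over all i, j of Cov(a_i, a_j).

    Off-diagonal terms matter because the prediction errors are
    correlated; summing only the diagonal would assume independence.
    """
    return sum(sum(row) for row in cov)


def ci_for_sum(predictions: Sequence[float],
               cov: Sequence[Sequence[float]],
               confidence: float = 0.95) -> Tuple[float, float]:
    """Normal-approximation (central limit theorem) CI for a sum of predictions."""
    center = sum(predictions)
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)   # about 1.96 for 95%
    half_width = z * variance_of_sum(cov) ** 0.5
    return center - half_width, center + half_width
```

With positively correlated errors, for example a covariance matrix of [[1.0, 0.8], [0.8, 1.0]] instead of the identity, the interval around the same predictions is wider, which is the effect an independence assumption would miss.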

Outline
• Running time advisor
• Host load results
• Computing confidence intervals
• Performance evaluation
• Related work
• Conclusions

Experimental Setup
• Environment
  – AlphaStation 255s, Digital Unix 4.0
  – Workload: host load trace playback [LCR 2000]
  – Prediction system on each host
    • AR(16), MEAN, LAST
• Tasks
  – Nominal time ~ U(0.1, 10) seconds
  – Interarrival time ~ U(5, 15) seconds
  – 95% confidence level
• Methodology
  – Predict CIs
  – Run task and measure
http://www.cs.northwestern.edu/~pdinda/LoadTraces/playload
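
The task workload described above can be generated with a few lines of Python. This sketch only draws arrival times and nominal times from the stated uniform distributions; the function name and the seeded generator are illustrative assumptions, and the rest of the experiment (host load playback, running and timing each task) is not modeled.

```python
import random
from typing import List, Tuple


def make_testcases(n: int, seed: int = 0) -> List[Tuple[float, float]]:
    """Return (arrival_time_s, nominal_time_s) pairs for n test tasks.

    Nominal times ~ U(0.1, 10) s and interarrival times ~ U(5, 15) s,
    matching the distributions listed on the slide.
    """
    rng = random.Random(seed)
    t = 0.0
    cases: List[Tuple[float, float]] = []
    for _ in range(n):
        t += rng.uniform(5.0, 15.0)          # wait before the next task
        cases.append((t, rng.uniform(0.1, 10.0)))
    return cases
```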

Metrics
• Coverage
  – Fraction of testcases within the confidence interval
  – Ideally should equal the target 95%
• Span
  – Average length of the confidence interval
  – Ideally as short as possible
• R² between texp and tact
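
The three metrics are straightforward to compute once each testcase has a predicted CI, an expected time texp, and a measured time tact. A sketch, assuming that “R²” means the squared Pearson correlation between texp and tact (the slide does not state which definition is used); the function names are hypothetical.

```python
from typing import Sequence, Tuple

Interval = Tuple[float, float]


def coverage(cis: Sequence[Interval], actual: Sequence[float]) -> float:
    """Fraction of testcases whose measured running time falls inside its CI."""
    hits = sum(1 for (lo, hi), t in zip(cis, actual) if lo <= t <= hi)
    return hits / len(actual)


def span(cis: Sequence[Interval]) -> float:
    """Average CI length."""
    return sum(hi - lo for lo, hi in cis) / len(cis)


def r_squared(t_exp: Sequence[float], t_act: Sequence[float]) -> float:
    """Squared Pearson correlation between expected and actual running times."""
    n = len(t_exp)
    mx, my = sum(t_exp) / n, sum(t_act) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(t_exp, t_act))
    sxx = sum((x - mx) ** 2 for x in t_exp)
    syy = sum((y - my) ** 2 for y in t_act)
    return sxy * sxy / (sxx * syy)
```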

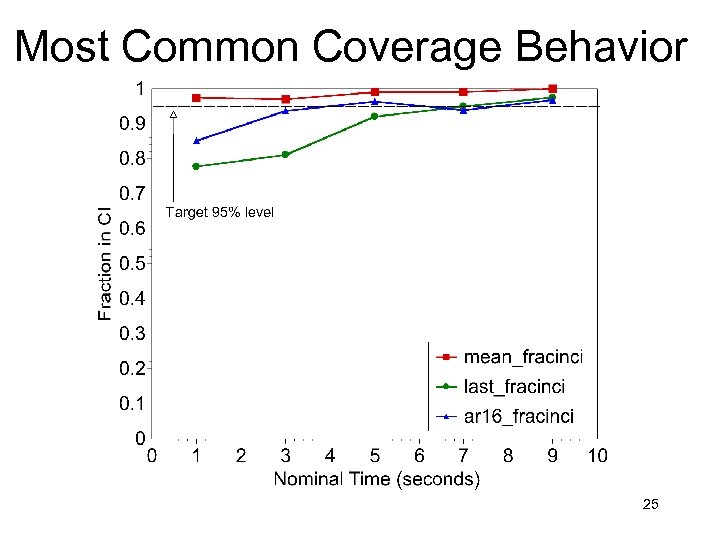

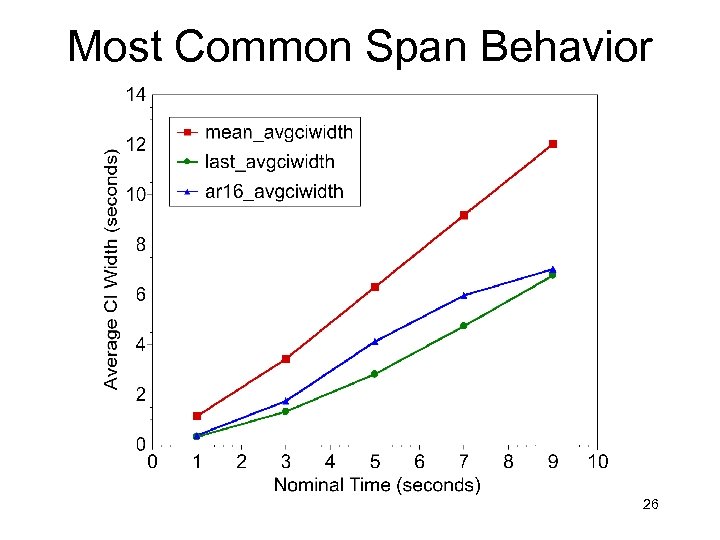

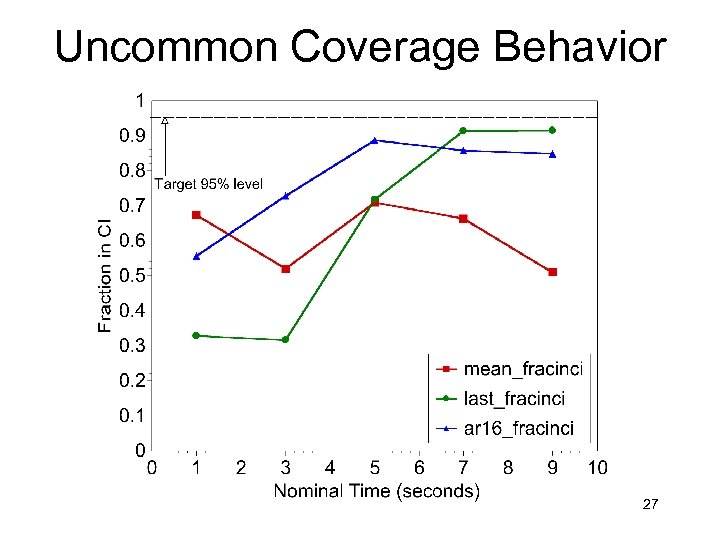

General Picture of Results
• Five classes of behavior; I’ll show you two
• RTA works
  – Coverage near 95% is possible in most cases
• Predictor quality matters
  – Better predictors lead to smaller spans on lightly loaded hosts and to correct coverage on heavily loaded hosts
  – AR(16) >= LAST >= MEAN
• Performance is slightly dependent on nominal time

Most Common Coverage Behavior

Most Common Span Behavior

Uncommon Coverage Behavior

Uncommon Span Behavior

Related Work
• Distributed interactive applications
  – QuakeViz/Dv, Aeschlimann [PDPTA ’99]
• Quality of service
  – QuO, Zinky, Bakken, Schantz [TPOS, April ’97]
  – QRAM, Rajkumar, et al. [RTSS ’97]
• Distributed soft real-time systems
  – Lawrence, Jensen [assorted]
• Workload studies for load balancing
  – Mutka, et al. [Perf. Eval. ’91]
  – Harchol-Balter, et al. [SIGMETRICS ’96]
• Resource signal measurement systems
  – Remos [HPDC ’98]
  – Network Weather Service [HPDC ’97, HPDC ’99]
• Host load prediction
  – Wolski, et al. [HPDC ’99] (NWS)
  – Samadani, et al. [PODC ’95]
  – Hailperin [’93]
• Application-level scheduling
  – Berman, et al. [HPDC ’96]
  – Stochastic Scheduling, Schopf [Supercomputing ’99]

Conclusions
• Predict the running time of a compute-bound task
• Based on host load prediction
• Prediction is a confidence interval
• Confidence interval algorithm
  – Covariance matrix
  – Load discounting
• Effective for the domain
  – Digital Unix, 0.1 to 10 second tasks, 5 to 15 second interarrival times
• Extensions in progress

For More Information
• All software and traces are available
  – RPS + RTA + RTSA: http://www.cs.northwestern.edu/~RPS
  – Load traces and playback: http://www.cs.northwestern.edu/~pdinda/LoadTraces
• Prescience Lab
  – Peter Dinda, Jason Skicewicz, Dong Lu
  – http://www.cs.northwestern.edu/~plab

Outline
• Running time advisor
• Host load results
• Computing confidence intervals
• Performance evaluation
• Related work
• Conclusions

A Universal Problem
Which host should the application send the task to so that its running time is appropriate?
Example: real-time
• Task with known resource requirements
• What will the running time be if I...?

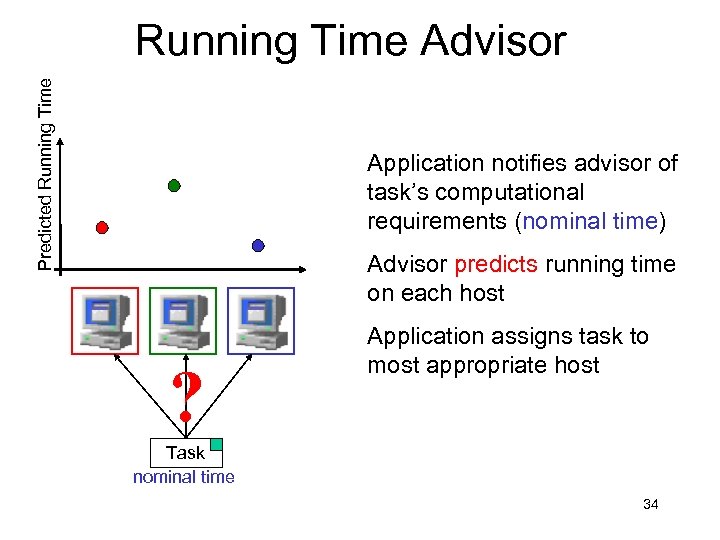

Running Time Advisor
• Application notifies the advisor of the task’s computational requirements (nominal time)
• Advisor predicts the running time on each host
• Application assigns the task to the most appropriate host

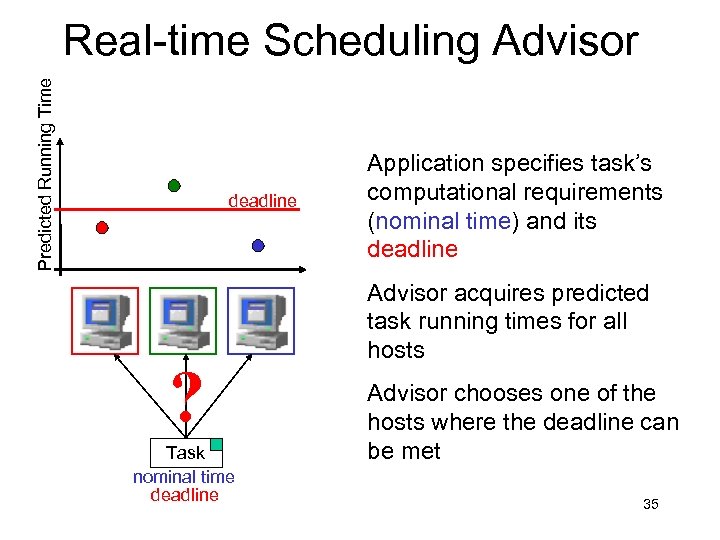

Real-time Scheduling Advisor
• Application specifies the task’s computational requirements (nominal time) and its deadline
• Advisor acquires predicted task running times for all hosts
• Advisor chooses one of the hosts where the deadline can be met

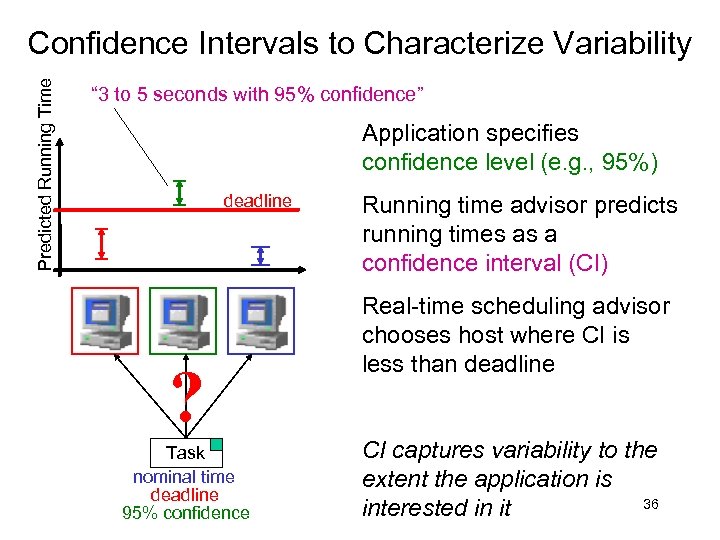

Confidence Intervals to Characterize Variability
“3 to 5 seconds with 95% confidence”
• Application specifies the confidence level (e.g., 95%)
• Running time advisor predicts running times as a confidence interval (CI)
• Real-time scheduling advisor chooses a host where the entire CI lies below the deadline
• The CI captures variability to the extent the application is interested in it
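
The host-selection rule can be sketched as a filter over per-host CIs. This assumes each host's prediction is a (low, high) pair in seconds and that “CI below the deadline” means the upper end is less than the deadline; choosing uniformly at random among feasible hosts is a placeholder policy of ours, not necessarily what the real-time scheduling advisor does, and `choose_host` is a hypothetical name.

```python
import random
from typing import Dict, Optional, Tuple


def choose_host(cis: Dict[str, Tuple[float, float]], deadline: float,
                rng: Optional[random.Random] = None) -> Optional[str]:
    """Pick a host whose entire running-time CI is below the deadline."""
    feasible = sorted(h for h, (_lo, hi) in cis.items() if hi < deadline)
    if not feasible:
        return None              # no host meets the deadline at this confidence
    return (rng or random.Random()).choice(feasible)
```

For instance, with CIs {"a": (4.1, 6.3), "b": (2.0, 3.0), "c": (3.0, 5.0)} and a 5-second deadline, only host "b" qualifies; returning None lets the application decide how to react when no host can meet the deadline.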

Prototype System
[Diagram with one component labeled “This Paper”]

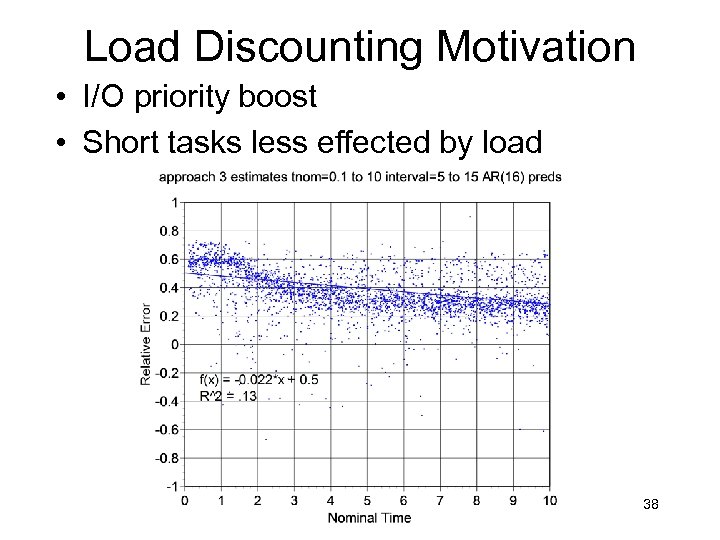

Load Discounting Motivation
• I/O priority boost
• Short tasks are less affected by load

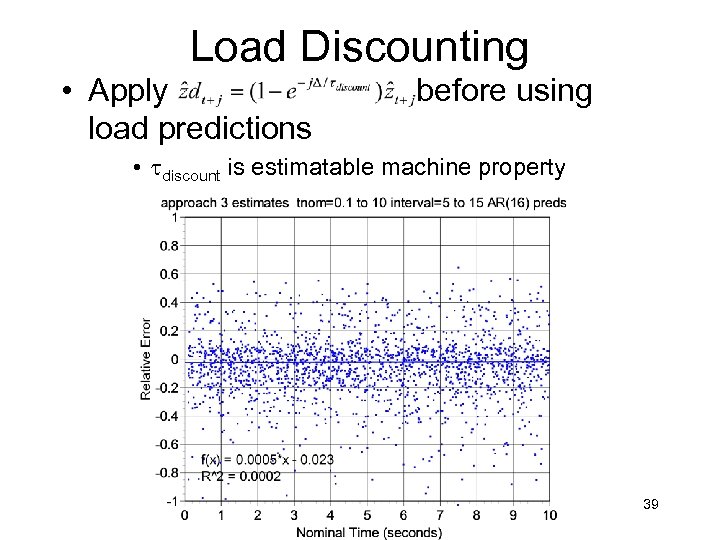

Load Discounting
• Apply discounting to load predictions before using them
• tdiscount is an estimatable machine property
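
The slides do not give the discounting formula. Purely as a hypothetical illustration consistent with the motivation above (short tasks are less affected by load), one could scale each predicted load by a factor that grows from 0 toward 1 as tnom increases relative to the machine constant tdiscount. The exponential form and the name `discount_loads` are assumptions, not the author's method.

```python
import math
from typing import List, Sequence


def discount_loads(predicted: Sequence[float], t_nom: float,
                   t_discount: float) -> List[float]:
    """Scale predicted loads down for short tasks (hypothetical discount form).

    factor = 1 - exp(-t_nom / t_discount): close to 0 for tasks much shorter
    than t_discount, approaching 1 for tasks much longer than it.
    """
    if t_discount <= 0:
        raise ValueError("t_discount must be positive")
    factor = 1.0 - math.exp(-t_nom / t_discount)
    return [z * factor for z in predicted]
```

The discounted loads would then stand in for the raw predictions when computing available time; how tdiscount is estimated for a given machine is left open here.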
