- Number of slides: 31

Online Detection of Utility Cloud Anomalies Using Metric Distributions
Authors: Chengwei Wang, Vanish Talwar*, Karsten Schwan, Parthasarathy Ranganathan*
Conference: IEEE 2010 Network Operations and Management Symposium (NOMS)
Advisor: Yuh-Jye Lee
Reporter: Yi-Hsiang Yang
Email: M9915016@mail.ntust.edu.tw
2011/06/09

Outline
• Introduction
• Problem Description
• EbAT Overview
• Entropy Time Series
• Evaluation With Distributed Online Service
• Discussion: Using Hadoop Applications
• Conclusions And Future Work

Introduction
• The online detection of anomalies
• Detection must operate automatically
• No need for prior knowledge about normal or anomalous behaviors
• Apply to multiple levels of abstraction and subsystems and in large-scale systems

Introduction
• EbAT: Entropy-based Anomaly Testing
• EbAT analyzes metric distributions rather than individual metric thresholds
• Uses entropy as a measurement

Introduction
• Use online tools to identify anomalies in entropy time series in general
  • Spike detection (visually or using time series analysis)
  • Signal processing
  • Subspace method
• Detect anomalies that are
  • Not well understood (i.e., no prior models)
  • Not previously experienced

Contributions
• A novel metric distribution-based method for anomaly detection using entropy
• A hierarchical aggregation of entropy time series via multiple analytical methods
• An evaluation with two typical utility cloud scenarios
  • Outperforms threshold-based methods by 57.4% on average in F1 score
  • Does better by 59.3% on average in false alarm rate compared with a 'near-optimum' threshold-based method

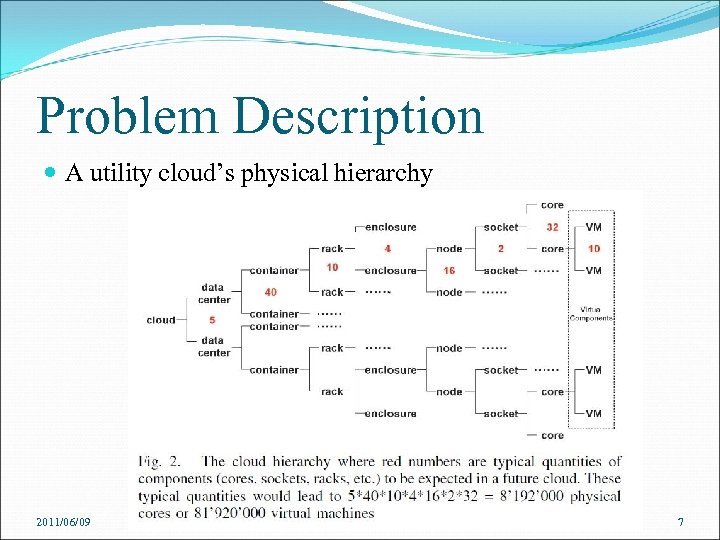

Problem Description
• A utility cloud's physical hierarchy

Problem Description - Utility Clouds
• Exascale
  • 10M physical cores, with up to 10 virtual machines per node (or per core)
• Dynamism
  • Utility clouds serve as a general computing facility
  • Heterogeneous applications included
  • Applications tend to have different workload patterns
  • Online management of virtual machines makes a utility cloud more dynamic

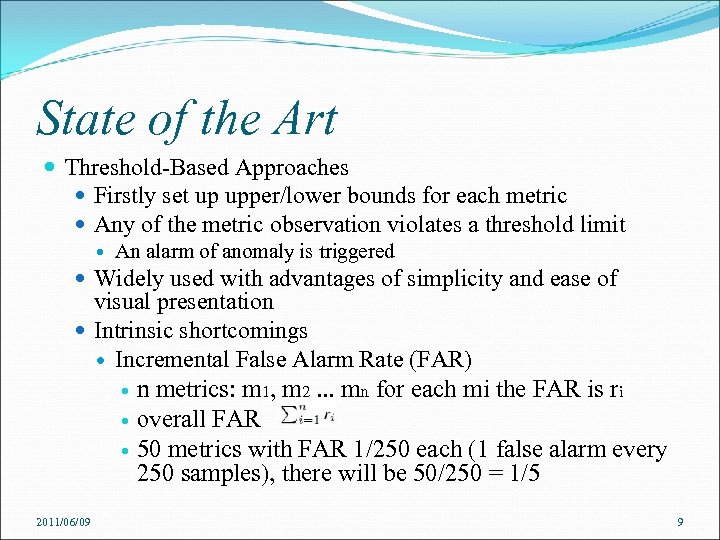

State of the Art
• Threshold-Based Approaches
  • First, set upper/lower bounds for each metric
  • When any metric observation violates a threshold limit, an anomaly alarm is triggered
  • Widely used, with the advantages of simplicity and ease of visual presentation
• Intrinsic shortcomings
  • Incremental False Alarm Rate (FAR)
    • n metrics: m1, m2, ..., mn; for each mi the FAR is ri
    • The overall FAR adds up across the metrics
    • With 50 metrics with FAR 1/250 each (1 false alarm every 250 samples), the overall FAR is 50/250 = 1/5
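
The false alarm arithmetic above can be checked with a few lines of Python. This is only a sketch: the summed rate reproduces the slide's 50/250 figure, while the independence-based figure is an added assumption, not something stated on the slide.

```python
# Per-metric false alarm rates; the slide's example uses 50 metrics at 1/250 each.
rates = [1 / 250] * 50

# Summed rate, matching the slide's 50/250 = 1/5 figure. It is an upper bound
# on the probability that at least one metric raises a false alarm per sample.
far_sum = sum(rates)

# Assumption (not from the slides): if false alarms were independent across
# metrics, the probability of at least one false alarm per sample would be
# one minus the probability that no metric raises one.
no_alarm = 1.0
for r in rates:
    no_alarm *= 1 - r
far_independent = 1 - no_alarm

print(f"summed FAR:      {far_sum:.4f}")
print(f"independent FAR: {far_independent:.4f}")
```

Either way, the rate grows quickly with the number of monitored metrics, which is the shortcoming the slide points at.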

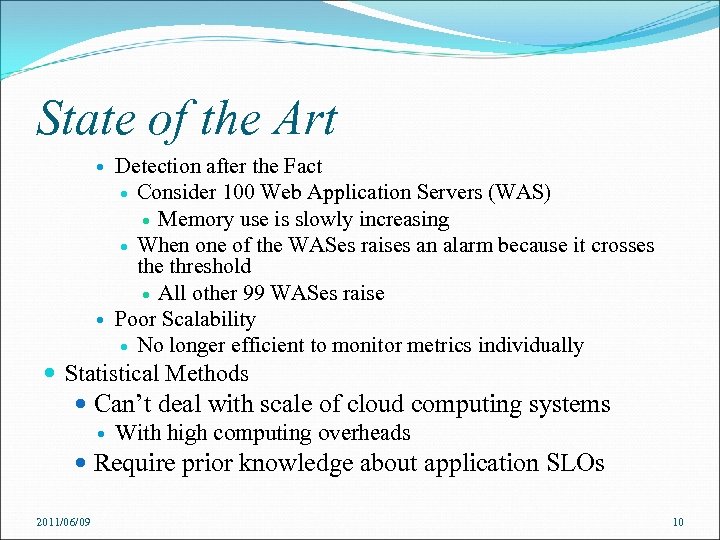

State of the Art
• Detection after the Fact
  • Consider 100 Web Application Servers (WAS) whose memory use is slowly increasing
  • When one of the WASes raises an alarm because it crosses the threshold, all other 99 WASes raise alarms as well
• Poor Scalability
  • No longer efficient to monitor metrics individually
• Statistical Methods
  • Can't deal with the scale of cloud computing systems
  • Have high computing overheads
  • Require prior knowledge about application SLOs

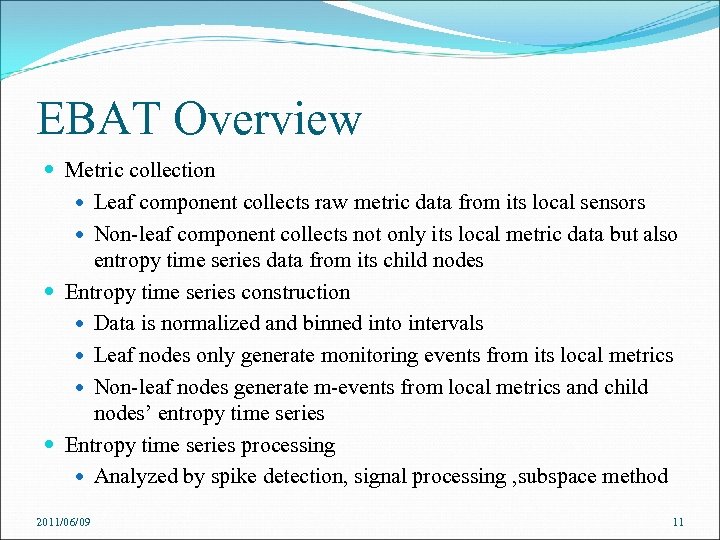

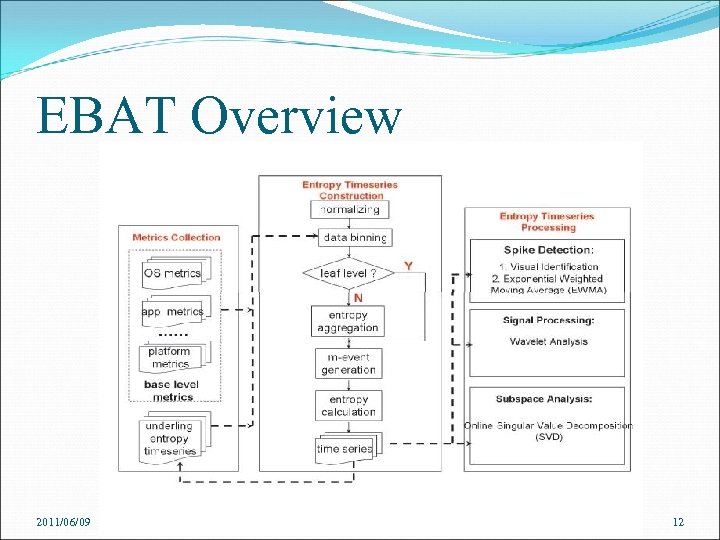

EbAT Overview
• Metric collection
  • A leaf component collects raw metric data from its local sensors
  • A non-leaf component collects not only its local metric data but also entropy time series data from its child nodes
• Entropy time series construction
  • Data is normalized and binned into intervals
  • Leaf nodes generate monitoring events (m-events) only from their local metrics
  • Non-leaf nodes generate m-events from local metrics and child nodes' entropy time series
• Entropy time series processing
  • Analyzed by spike detection, signal processing, or the subspace method
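
The processing step can be illustrated with a very simple spike detector over an entropy time series. This is a hypothetical stand-in, not the analysis used in the paper: the function name `detect_spikes`, the trailing-window rule, and the parameters `window`, `k`, and `min_sigma` are all assumptions made for illustration.

```python
from statistics import mean, pstdev

def detect_spikes(series, window=5, k=3.0, min_sigma=1e-9):
    """Return indices whose value is more than k standard deviations
    away from the mean of the preceding `window` values."""
    spikes = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        mu = mean(history)
        # Floor sigma so a perfectly flat history does not divide the scale to zero.
        sigma = max(pstdev(history), min_sigma)
        if abs(series[i] - mu) > k * sigma:
            spikes.append(i)
    return spikes

# Made-up entropy values with one obvious jump at index 6.
entropy_series = [1.0, 1.1, 0.9, 1.0, 1.05, 1.0, 2.5, 1.0, 0.95, 1.1]
spike_indices = detect_spikes(entropy_series)
print(spike_indices)
```

Because the rule only looks at recent history, it needs no model of normal behavior, which is in the spirit of the slides, although the actual tools named there may work quite differently.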

EbAT Overview

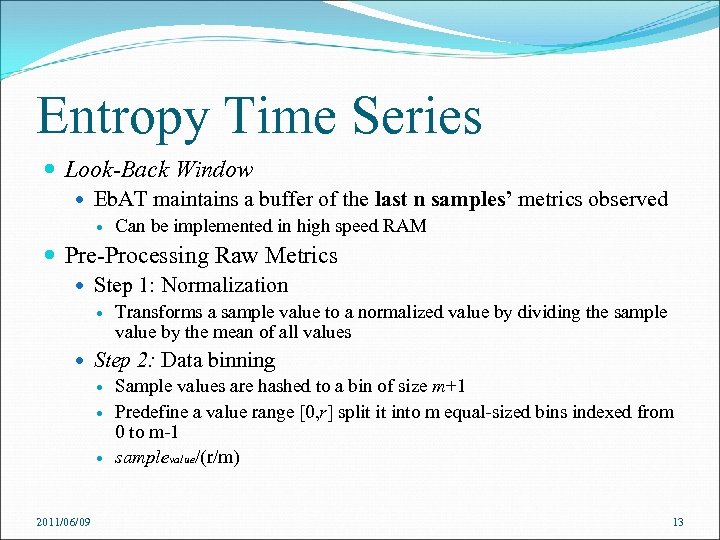

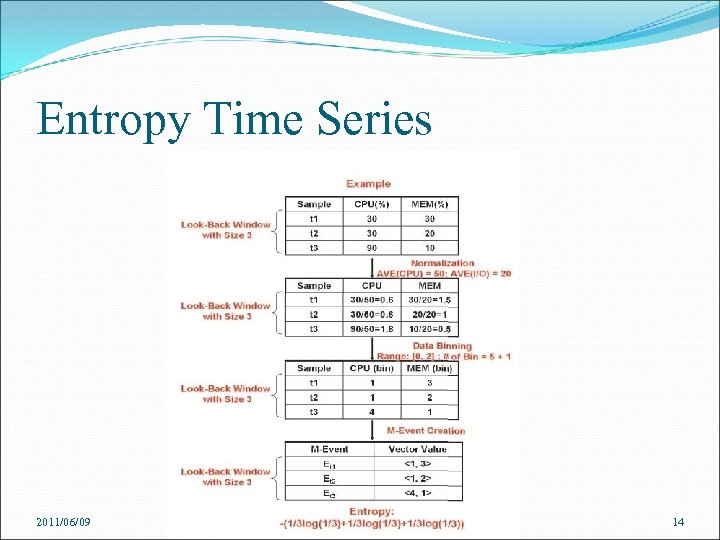

Entropy Time Series
• Look-Back Window
  • EbAT maintains a buffer of the metrics observed in the last n samples
  • Can be implemented in high-speed RAM
• Pre-Processing Raw Metrics
  • Step 1: Normalization
    • Transforms a sample value into a normalized value by dividing it by the mean of all values
  • Step 2: Data binning
    • Sample values are hashed to one of m+1 bins
    • Predefine a value range [0, r] and split it into m equal-sized bins indexed from 0 to m-1
    • The bin index is computed from samplevalue/(r/m)
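
The look-back window and the two pre-processing steps can be sketched as follows. Several details are assumptions rather than facts from the slides: the mean is taken over the current window, the quotient is truncated to an integer index, and the extra bin with index m is used for values above r. The function name `bin_indices` is hypothetical.

```python
from collections import deque

def bin_indices(window, r, m):
    """Normalize each sample by the window mean, then map it to a bin.

    Bins 0..m-1 split [0, r] evenly; bin m is assumed to hold values above r.
    """
    mean = sum(window) / len(window)
    width = r / m
    indices = []
    for value in window:
        normalized = value / mean if mean else 0.0
        indices.append(min(int(normalized / width), m))
    return indices

# Look-back window holding only the last n = 5 samples.
window = deque(maxlen=5)
for sample in [10, 20, 40, 60, 80, 300]:
    window.append(sample)  # the oldest sample (10) is dropped automatically

bins = bin_indices(window, r=2.0, m=4)
print(list(window), bins)
```

A bounded `deque` keeps memory use constant per metric, which fits the slide's remark that the buffer can live in RAM.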

Entropy Time Series

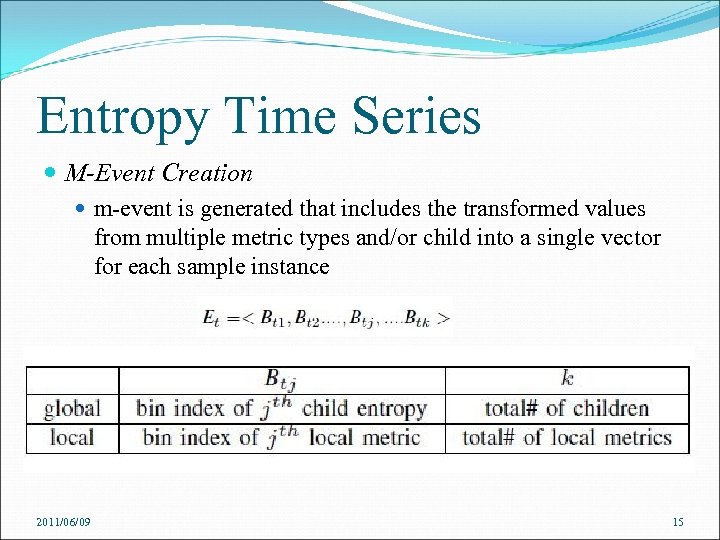

Entropy Time Series
• M-Event Creation
  • For each sample instance, an m-event is generated that combines the transformed values from multiple metric types and/or child nodes into a single vector

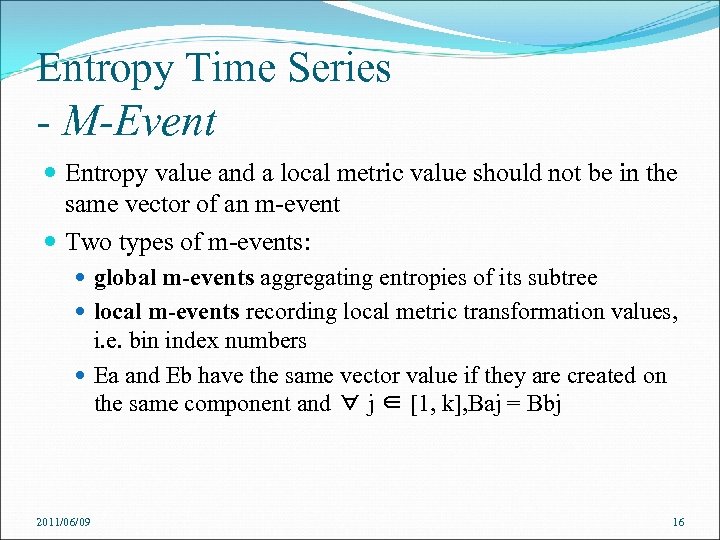

Entropy Time Series - M-Event
• An entropy value and a local metric value should not be in the same vector of an m-event
• Two types of m-events:
  • global m-events aggregating entropies of its subtree
  • local m-events recording local metric transformation values, i.e., bin index numbers
• $E_a$ and $E_b$ have the same vector value if they are created on the same component and $\forall j \in [1, k],\ B_{aj} = B_{bj}$
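
The equality rule for m-events maps naturally onto tuples, as in this sketch. The representation (a component name plus a tuple of bin indices), the helper `make_m_event`, and the names `vm1` and `vm2` are hypothetical choices for illustration.

```python
from collections import Counter

def make_m_event(component, bin_indices):
    """Build a local m-event: the creating component plus one bin index per metric."""
    return (component, tuple(bin_indices))

events = [
    make_m_event("vm1", [2, 0]),  # e.g. first metric in bin 2, second in bin 0
    make_m_event("vm1", [2, 0]),  # same component, same vector: equal to the first
    make_m_event("vm1", [3, 1]),  # same component, different vector
    make_m_event("vm2", [2, 0]),  # same vector, different component: not equal
]

# Counting equal m-events gives the distribution that entropy is computed over.
counts = Counter(events)
print(counts)
```

Tuples compare element by element, so two events are equal exactly when the component and every bin index match.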

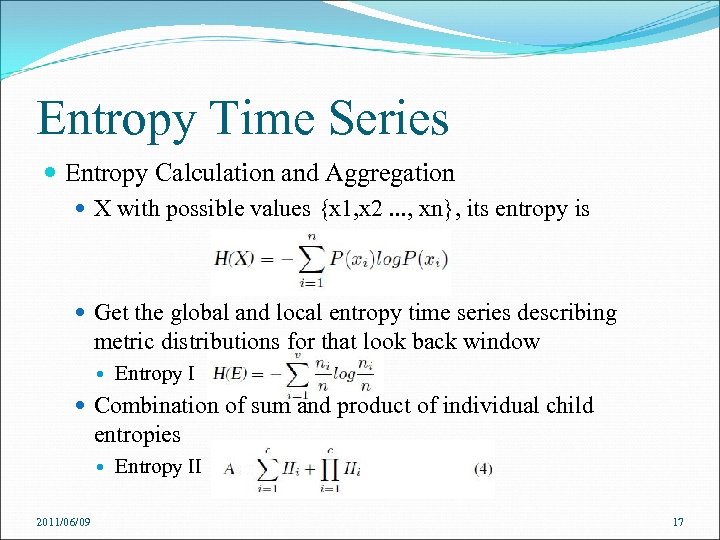

Entropy Time Series
• Entropy Calculation and Aggregation
  • For a random variable X with possible values {x1, x2, ..., xn}, its entropy is $H(X) = -\sum_{i=1}^{n} P(x_i) \log P(x_i)$
  • This gives the global and local entropy time series describing metric distributions for that look-back window
  • Two aggregation variants are labeled Entropy I and Entropy II; one is a combination of sum and product of individual child entropies
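
A minimal sketch of the entropy calculation over one look-back window, treating each distinct m-event as one possible value of X. The base-2 logarithm is an assumption, and `aggregate_sum_product` is only one plausible reading of "combination of sum and product" (sum plus product); the slide's exact formula is not recoverable from the text.

```python
import math
from collections import Counter

def entropy(m_events):
    """Shannon entropy (base 2, an assumption) of the m-events in one window."""
    counts = Counter(m_events)
    total = len(m_events)
    # p * log2(1/p) is the same as -p * log2(p), written to avoid negative zero.
    return sum((c / total) * math.log2(total / c) for c in counts.values())

def aggregate_sum_product(child_entropies):
    """Assumed aggregation form: sum of child entropies plus their product."""
    return sum(child_entropies) + math.prod(child_entropies)

uniform_window = [(0, 1), (0, 1), (2, 3), (2, 3)]  # two m-events, equally likely
constant_window = [(0, 1)] * 4                      # a single repeated m-event

print(entropy(uniform_window))
print(entropy(constant_window))
print(aggregate_sum_product([1.0, 2.0]))
```

Sliding the window forward one sample at a time and recomputing `entropy` yields the entropy time series that the later analysis steps consume.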

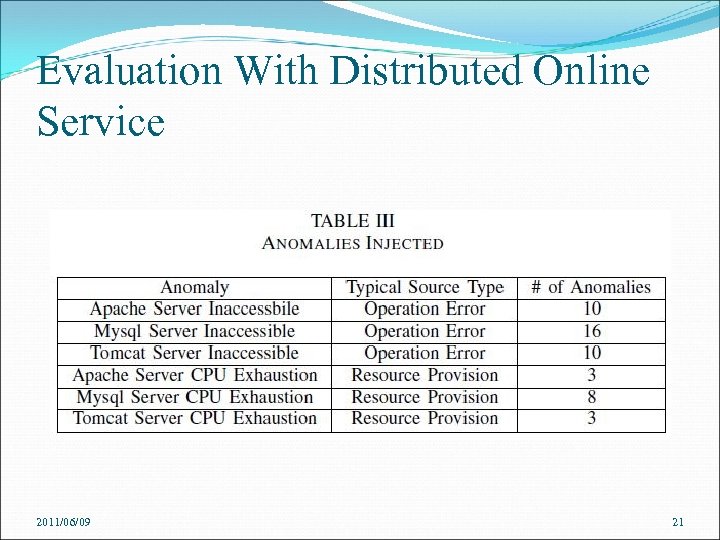

Evaluation With Distributed Online Service
• RUBiS benchmark deployed as a set of virtual machines
• Goal: detect synthetic anomalies injected into the RUBiS services
• Effectiveness is evaluated using precision, recall, and F1 score
• EbAT outperforms threshold-based methods
  • 18.9% average increase in F1 score
  • 50% better on average in false alarm rate compared with the 'near-optimum' threshold-based method
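
For reference, the three effectiveness measures can be computed from detection counts as sketched below. The counts in the usage example are hypothetical and are not results from the paper.

```python
def precision_recall_f1(true_positives, false_positives, false_negatives):
    """Compute precision, recall, and F1 from raw detection counts."""
    detected = true_positives + false_positives
    actual = true_positives + false_negatives
    precision = true_positives / detected if detected else 0.0
    recall = true_positives / actual if actual else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Hypothetical counts: 40 anomalies caught, 10 false alarms, 10 anomalies missed.
precision, recall, f1 = precision_recall_f1(40, 10, 10)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

F1 is the harmonic mean of precision and recall, so a detector cannot score well by raising very few alarms or by raising them constantly.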

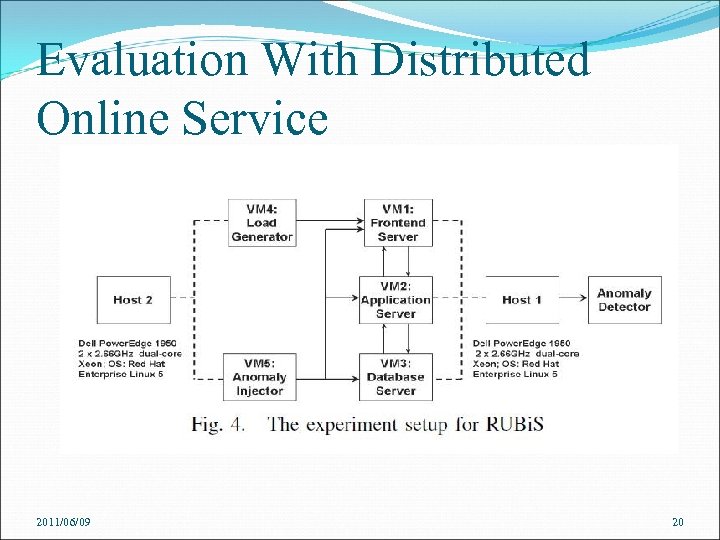

Evaluation With Distributed Online Service
• Experiment Setup
  • The testbed uses 5 virtual machines (VM1 to VM5) on the Xen platform, hosted on two Dell PowerEdge 1950 servers (Host1 and Host2)
  • VM1, VM2, and VM3 are created on Host1
  • A load generator and an anomaly injector run on two virtual machines, VM4 and VM5, on Host2
  • The generator creates 10 hours' worth of service request load for Host1
  • The anomaly injector injects 50 anomalies into the RUBiS online service on Host1

Evaluation With Distributed Online Service

Evaluation With Distributed Online Service

Evaluation With Distributed Online Service
• Baseline Methods: Threshold-Based Detection
  • Observes CPU utilization with a lower-bound and an upper-bound threshold
  • Two separate settings of the thresholds
    • A near-optimum threshold value set by an 'oracle'-based method
    • A statically set threshold value that is not optimum

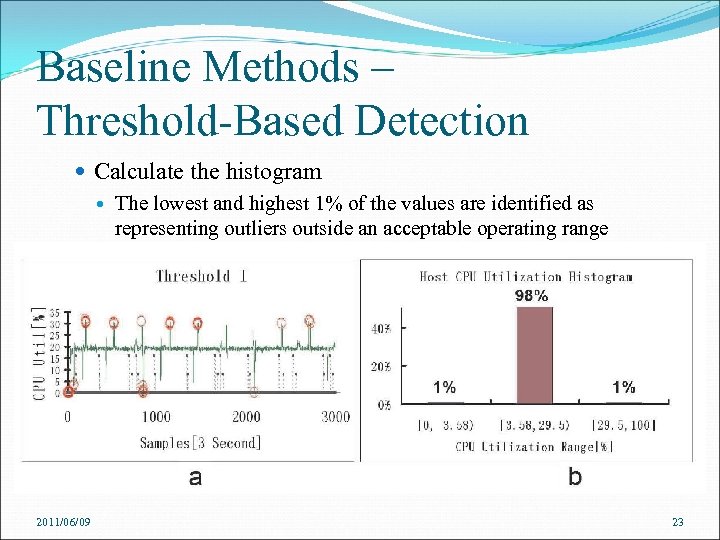

Baseline Methods: Threshold-Based Detection
• Calculate the histogram
• The lowest and highest 1% of the values are identified as outliers outside an acceptable operating range
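
One way to derive such bounds is to sort the observed values and cut off 1% at each end, as in this sketch. Sorting instead of building an explicit histogram, the function names, and the synthetic data are assumptions for illustration only.

```python
def tail_thresholds(samples, tail_percent=1):
    """Return (lower, upper) bounds that leave tail_percent of samples on each side."""
    ordered = sorted(samples)
    k = len(ordered) * tail_percent // 100  # number of samples in each tail
    return ordered[k], ordered[-k - 1]

def is_outlier(value, lower, upper):
    """A value is an outlier when it falls outside the acceptable range."""
    return value < lower or value > upper

# Synthetic observations standing in for a metric such as CPU utilization.
samples = list(range(1000))
lower, upper = tail_thresholds(samples)
outliers = [v for v in samples if is_outlier(v, lower, upper)]
print(lower, upper, len(outliers))
```

With 1000 samples this marks the 10 smallest and 10 largest values, that is 2% of the data in total, as outside the acceptable range.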

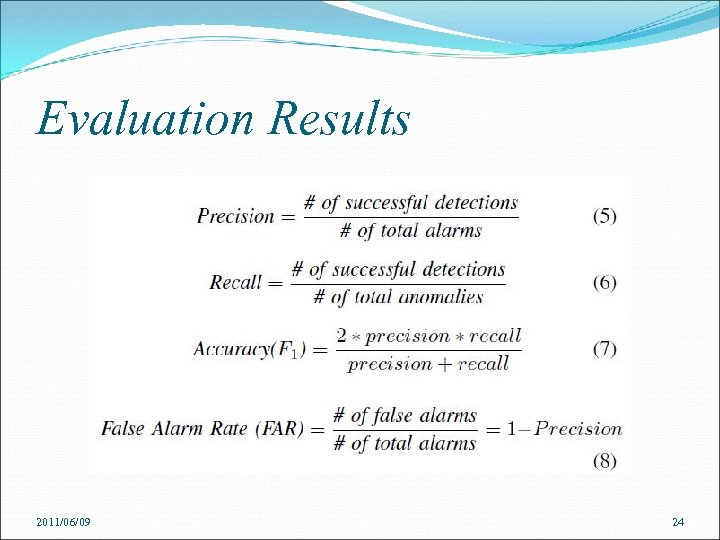

Evaluation Results

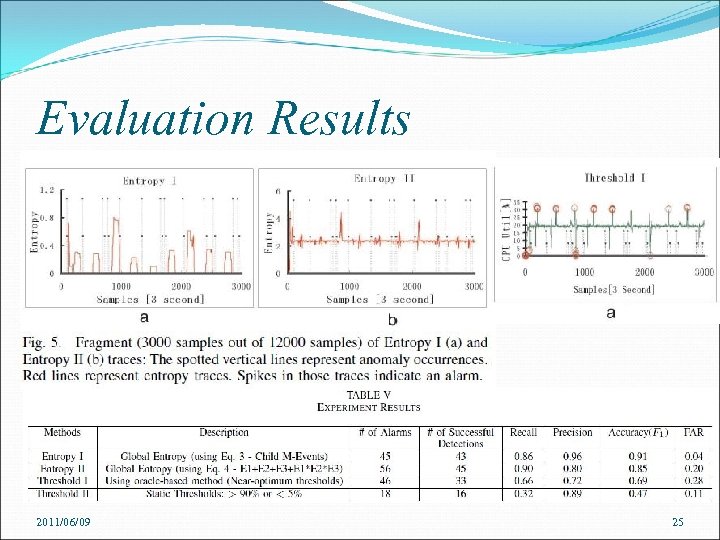

Evaluation Results

Evaluation Results
• EbAT methods outperform threshold-based methods in accuracy and in almost all precision and recall measurements
• Threshold II detects only 16 of the 50 anomalies
• The comparison between Entropy I and Threshold I shows that EbAT's metric distribution-based detection, which aggregates metrics across multiple vertical levels, has advantages over looking solely at the host level

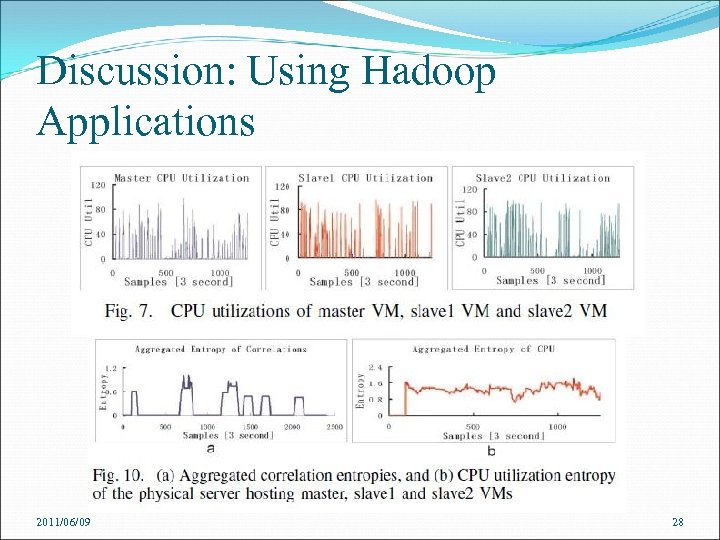

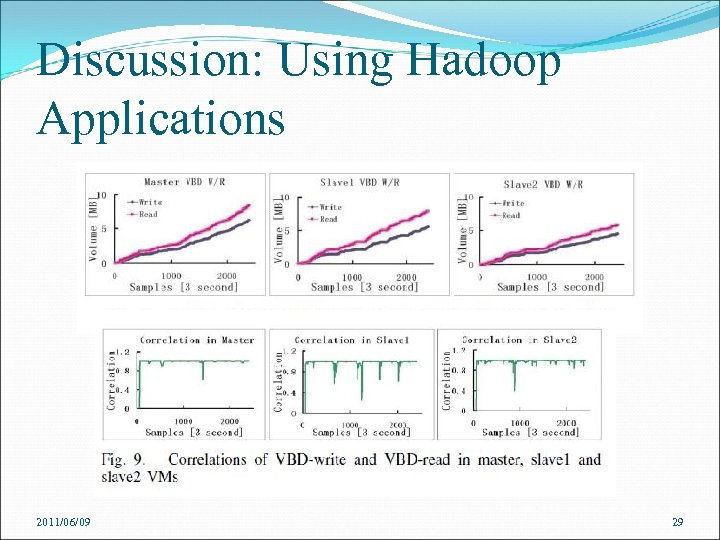

Discussion: Using Hadoop Applications
• Using EbAT to monitor complex, large-scale cloud applications
• Deploy three virtual machines named master, slave1, and slave2
• 2 hours with 6 anomalies caused by application-level task failures
• EbAT observes CPU utilization and the number of VBDwrites and calculates their entropy time series

Discussion: Using Hadoop Applications

Discussion: Using Hadoop Applications

Conclusions And Future Work
• EbAT is an automated online detection framework for anomaly identification and tracking in data center systems
• Does not require human intervention or use predefined anomaly models/rules
• Future work concerning EbAT includes
  • Zoom-in detection to focus on possible areas of causes
  • Extending and evaluating the methods for cross-stack (multiple) metrics
  • Evaluating scalability with large volumes of data and numbers of machines
  • Continued evaluation with representative cloud workloads such as Hadoop

Thanks for listening! Q&A
