- Number of slides: 27

On Missing Data Prediction using Sparse Signal Models: A Comparison of Atomic Decompositions with Iterated Denoising
Onur G. Guleryuz, DoCoMo USA Labs, San Jose, CA 95110, guleryuz@docomolabs-usa.com (google: onur guleryuz)
(Please view in full-screen mode. The presentation tries to squeeze in too much; please feel free to email me any questions you may have.)

Overview
• Problem statement: prediction of missing data.
• Formulation as a sparse linear expansion over an overcomplete basis.
• AD (norm-regularized) and ID formulations.
• Short simulation results (ℓ1 regularized).
• Why ID is better than AD.
• Adaptive predictors on general data: all methods are mathematically the same. The key issues are basis selection and using what you have effectively.
Mini FAQ:
1. Is ID the same as […]? No.
2. Is ID the same as […], except implemented iteratively? No.
3. Are predictors that yield the sparsest set of expansion coefficients the best? No, predictors that yield the smallest mse are the best.
4. On images, look for performance over large missing chunks (with edges).
Some results are from Ivan W. Selesnick, Richard Van Slyke, and Onur G. Guleryuz, "Pixel Recovery via ℓ1 Minimization in the Wavelet Domain," Proc. IEEE Int'l Conf. on Image Proc. (ICIP 2004), Singapore, Oct. 2004.
Pretty ID pictures: Onur G. Guleryuz, "Nonlinear Approximation Based Image Recovery Using Adaptive Sparse Reconstructions and Iterated Denoising: Part II - Adaptive Algorithms," IEEE Tr. on IP, to appear. (Some software available at my webpage.)

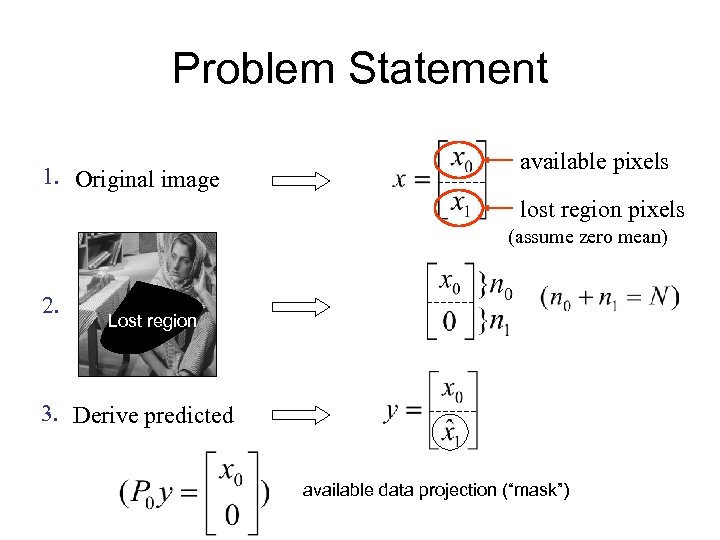

Problem Statement
1. Original image x: available pixels and lost-region pixels (assume zero mean).
2. Lost region.
3. Derive the prediction y of the original x, using the available data projection (“mask”).
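
A minimal sketch of the masking step, assuming the available-data projection is a diagonal 0/1 matrix. The names `available_projection` and `compose_estimate`, the symbol `P`, and the toy values are illustrative assumptions, not taken from the slides.

```python
import numpy as np

def available_projection(n, lost_idx):
    """Diagonal 0/1 'mask' matrix P: keeps available pixels, zeros lost ones."""
    keep = np.ones(n)
    keep[list(lost_idx)] = 0.0
    return np.diag(keep)

def compose_estimate(x, x_pred, P):
    """y = P x + (I - P) x_pred: trust available pixels, predict the rest."""
    I = np.eye(P.shape[0])
    return P @ x + (I - P) @ x_pred

x = np.array([1.0, -2.0, 0.5, 3.0])        # toy signal; entries 1 and 2 are "lost"
P = available_projection(4, lost_idx=[1, 2])
x_pred = np.zeros(4)                        # trivial predictor: fill with the assumed mean (0)
y = compose_estimate(x, x_pred, P)
```

Any predictor discussed in the talk only changes `x_pred`; the available pixels in `y` are always copied from `x`.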

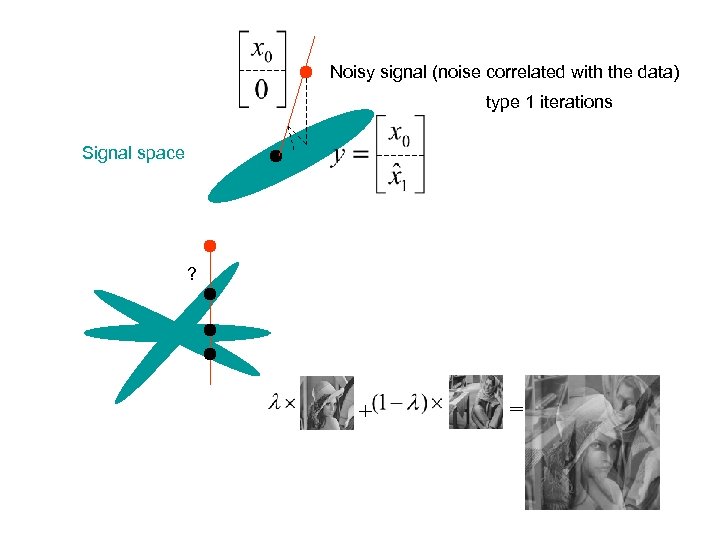

[Figure: signal-space diagram. Labels: noisy signal (noise correlated with the data); type 1 iterations.]

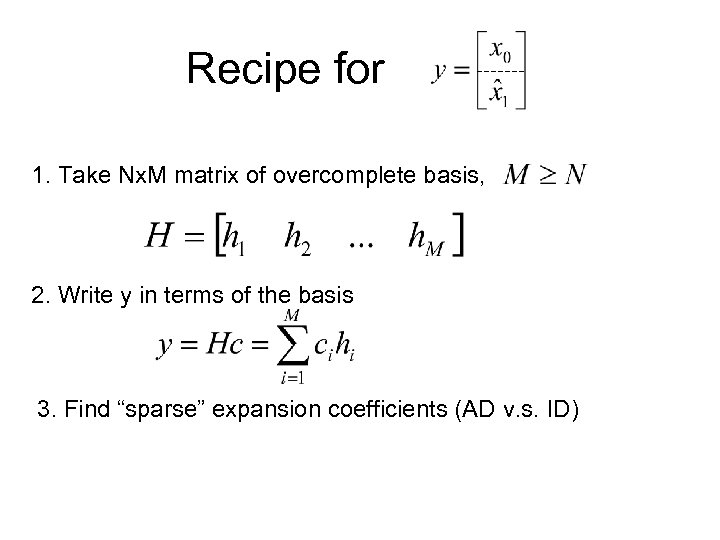

Recipe
1. Take an N×M matrix H of overcomplete basis.
2. Write y in terms of the basis.
3. Find “sparse” expansion coefficients (AD vs. ID).
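
The first two steps of the recipe can be sketched as follows. This is an illustration under assumptions: the dictionary here is a spike basis concatenated with an orthonormal DCT (so M = 2N), which is not the dictionary used in the talk, and `dct_matrix` is a helper written for this example.

```python
import numpy as np

def dct_matrix(n):
    """Orthonormal DCT-II basis; column k is the k-th basis vector."""
    k = np.arange(n)[:, None]          # frequency index
    t = np.arange(n)[None, :]          # sample index
    C = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * t + 1) * k / (2 * n))
    C[0, :] /= np.sqrt(2.0)            # rescale the DC row for orthonormality
    return C.T

N = 8
H = np.hstack([np.eye(N), dct_matrix(N)])   # N x M overcomplete dictionary, M = 2N

# Step 2: write y in terms of the basis, y = H c, with only two active atoms.
c = np.zeros(2 * N)
c[3] = 1.0          # one spike atom
c[N + 2] = 2.0      # one DCT atom
y = H @ c
```

Because M > N, many different `c` give the same `y`; step 3 is about which of them an algorithm (AD or ID) ends up choosing.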

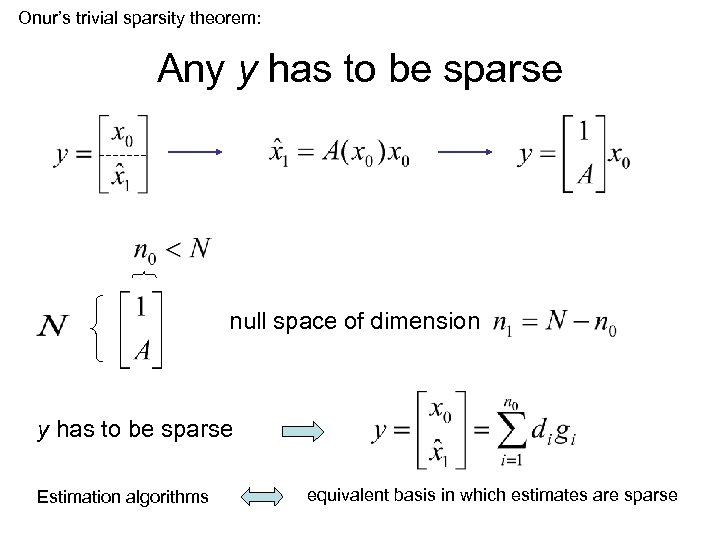

Onur’s trivial sparsity theorem: any y has to be sparse.
• […] has a null space of dimension […], so y has to be sparse.
• Estimation algorithms ⇔ equivalent basis in which the estimates are sparse.

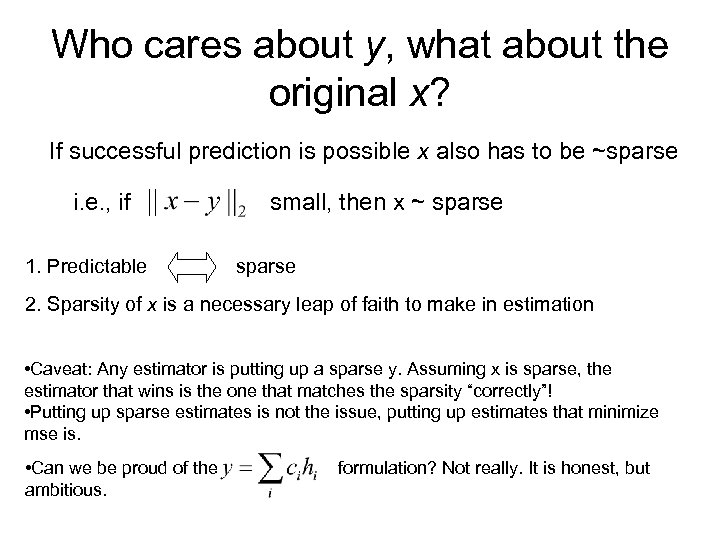

Who cares about y, what about the original x?
If successful prediction is possible, x also has to be ~sparse, i.e., if
1. Predictable: […] small, then x ~ sparse.
2. Sparsity of x is a necessary leap of faith to make in estimation.
• Caveat: any estimator is putting up a sparse y. Assuming x is sparse, the estimator that wins is the one that matches the sparsity “correctly”!
• Putting up sparse estimates is not the issue; putting up estimates that minimize mse is.
• Can we be proud of the ambitious […] formulation? Not really. It is honest, but …

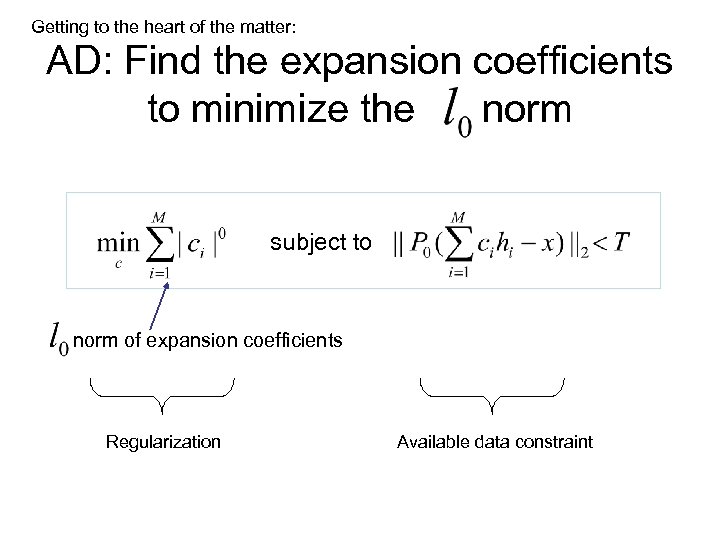

Getting to the heart of the matter:
AD: find the expansion coefficients that minimize the norm of the expansion coefficients (regularization) subject to the available data constraint.
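
One way to write the AD problem described here, as a sketch under assumed notation: H is the N×M overcomplete basis, c the expansion coefficients, P the available-data projection (“mask”), x the original signal, and y the estimate. The exponent p is an assumption, since the slide's own symbol did not survive extraction; the simulations in this talk use the ℓ1 case (p = 1).

```latex
\hat{c} \;=\; \arg\min_{c}\; \|c\|_{p}
\quad \text{subject to} \quad
P\,H\,c \;=\; P\,x,
\qquad
y \;=\; H\,\hat{c}
```

The objective is the regularization term and the equality is the available data constraint: the estimate must agree with x wherever pixels are available.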

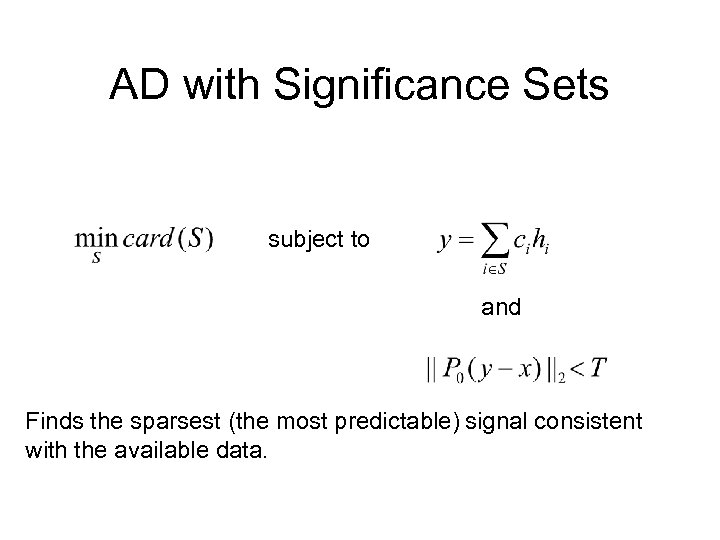

AD with Significance Sets
[…] subject to […] and […]
Finds the sparsest (the most predictable) signal consistent with the available data.

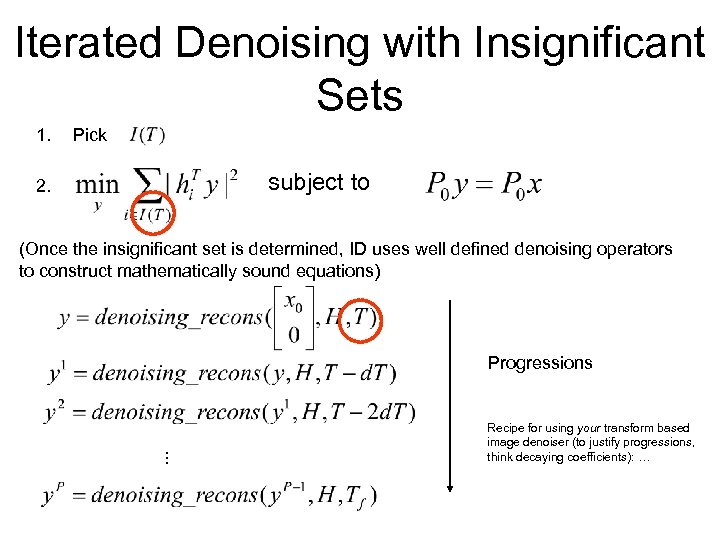

Iterated Denoising with Insignificant Sets
1. Pick […] subject to […]
2. […] (Once the insignificant set is determined, ID uses well-defined denoising operators to construct mathematically sound equations.)
...
Progressions
Recipe for using your transform-based image denoiser (to justify progressions, think decaying coefficients): …
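
A toy sketch of iterated denoising with a threshold progression. It is a simplified stand-in under stated assumptions, not the algorithm from the talk: it uses a single orthonormal DCT instead of an overcomplete transform, plain hard thresholding as the denoiser, no layering, and a hand-picked 1-D test signal. The function names and all parameters are hypothetical.

```python
import numpy as np

def dct_matrix(n):
    """Orthonormal DCT-II basis; column k is the k-th basis vector."""
    k = np.arange(n)[:, None]
    t = np.arange(n)[None, :]
    C = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * t + 1) * k / (2 * n))
    C[0, :] /= np.sqrt(2.0)
    return C.T

def iterated_denoising(x, available, H, thresholds, inner_iters=10):
    """Alternate hard-threshold denoising with re-imposing the available samples.

    `thresholds` is the progression: a decreasing list of hard thresholds T.
    Entries of `x` outside `available` are never read into the estimate.
    """
    y = np.where(available, x, 0.0)            # start lost samples at the mean (0)
    for T in thresholds:
        for _ in range(inner_iters):
            c = H.T @ y                        # analysis (H is orthonormal here)
            c[np.abs(c) < T] = 0.0             # zero the "insignificant" set
            y = np.where(available, x, H @ c)  # keep available data, update lost part
    return y

n = 32
H = dct_matrix(n)
x = 4.0 * H[:, 2] + 1.0 * H[:, 9]              # signal that is 2-sparse in the DCT
available = np.ones(n, dtype=bool)
available[12:16] = False                       # a chunk of four lost samples

y_prog = iterated_denoising(x, available, H, thresholds=[2.0, 0.5, 0.125])
y_fixed = iterated_denoising(x, available, H, thresholds=[2.0], inner_iters=30)

err_zero = np.linalg.norm(np.where(available, x, 0.0) - x)   # zero-fill baseline
err_fixed = np.linalg.norm(y_fixed - x)                      # single large threshold
err_prog = np.linalg.norm(y_prog - x)                        # with progression
```

In this toy setup the single large threshold only ever recovers the dominant atom, while lowering the threshold in stages lets the weaker atom enter once the estimate is clean enough, which is the "think decaying coefficients" intuition.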

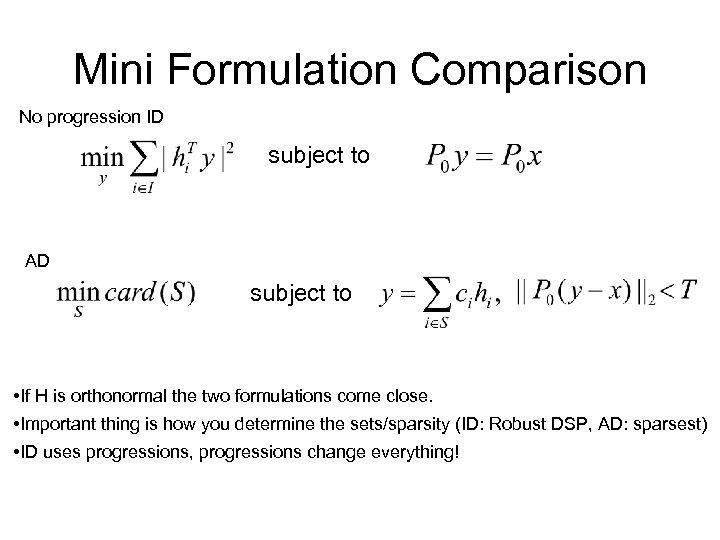

Mini Formulation Comparison
No-progression ID: […] subject to […]
AD: […] subject to […]
• If H is orthonormal, the two formulations come close.
• The important thing is how you determine the sets/sparsity (ID: robust DSP, AD: sparsest).
• ID uses progressions, and progressions change everything!

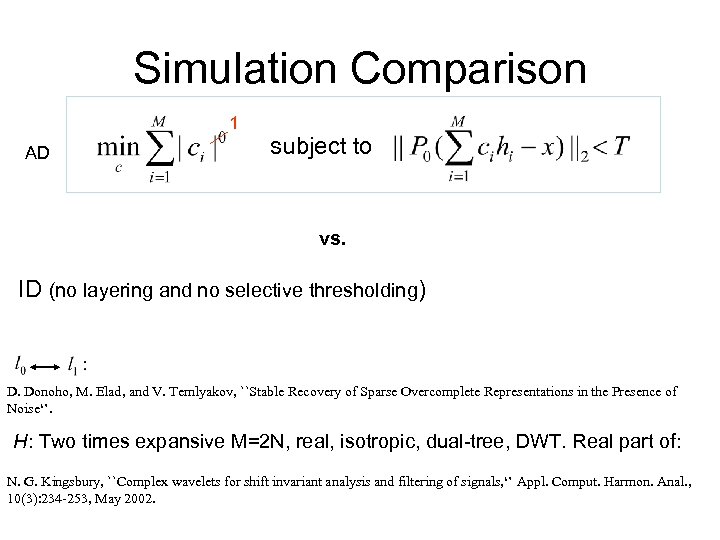

Simulation Comparison
ℓ1 AD ([…] subject to […]) vs. ID (no layering and no selective thresholding).
D. Donoho, M. Elad, and V. Temlyakov, "Stable Recovery of Sparse Overcomplete Representations in the Presence of Noise".
H: two times expansive (M = 2N), real, isotropic, dual-tree DWT. Real part of: N. G. Kingsbury, "Complex wavelets for shift invariant analysis and filtering of signals," Appl. Comput. Harmon. Anal., 10(3):234-253, May 2002.

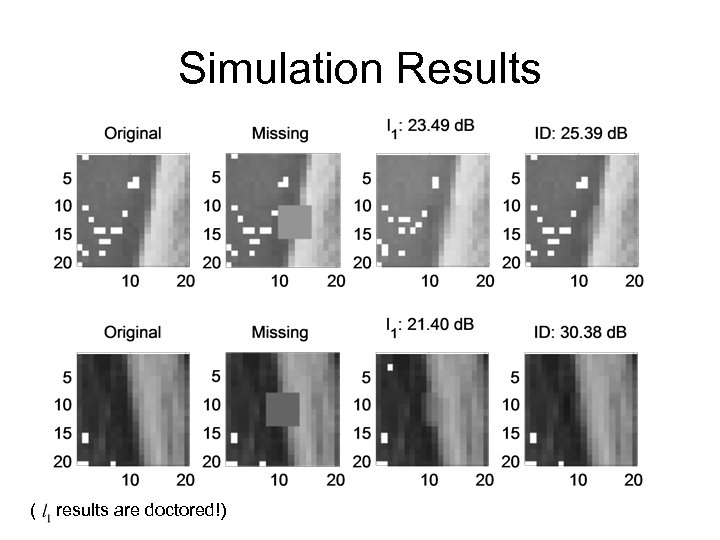

Simulation Results ([…] results are doctored!)

What is wrong with AD? Problems in […]? Yes and no.
• I will argue that even if we used an “[…] solver”, ID will in general prevail.
• Specific issues with […].
• How to fix the problems with […]-based AD.
• How to do better.
So let’s assume we can solve the […] problem...

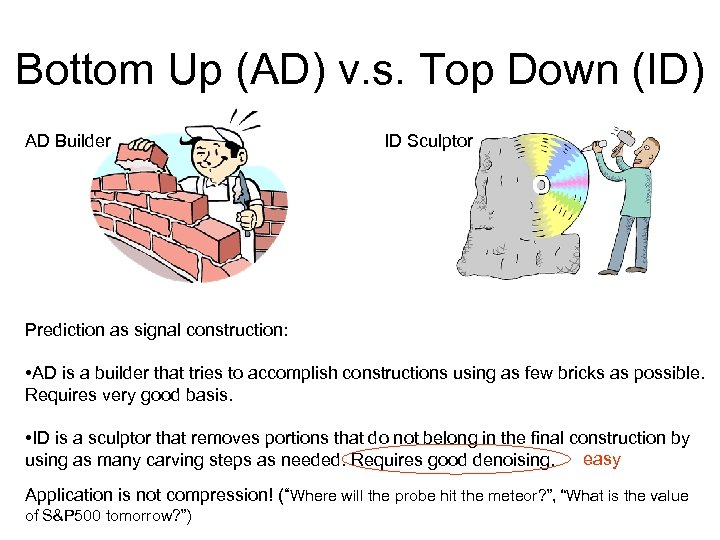

Bottom Up (AD) vs. Top Down (ID)
AD: Builder. ID: Sculptor.
Prediction as signal construction:
• AD is a builder that tries to accomplish constructions using as few bricks as possible. Requires a very good basis.
• ID is a sculptor that removes portions that do not belong in the final construction, using as many easy carving steps as needed. Requires good denoising.
The application is not compression! (“Where will the probe hit the meteor?”, “What is the value of the S&P 500 tomorrow?”)

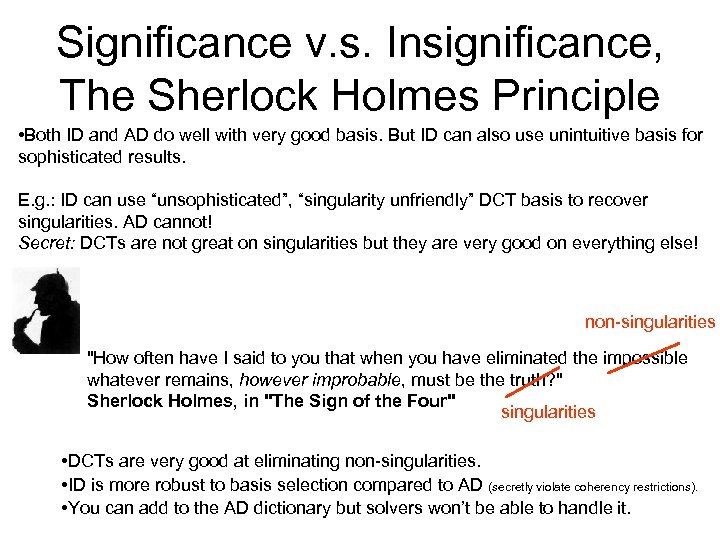

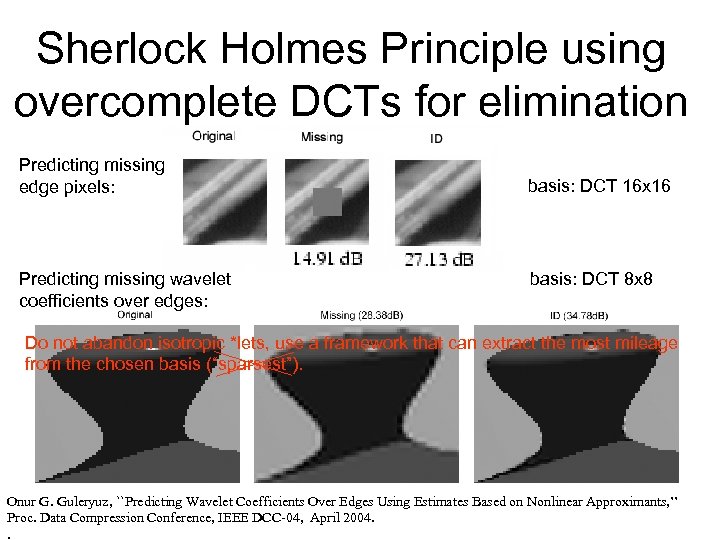

Significance vs. Insignificance, The Sherlock Holmes Principle
• Both ID and AD do well with a very good basis. But ID can also use an unintuitive basis for sophisticated results. E.g., ID can use the “unsophisticated”, “singularity unfriendly” DCT basis to recover singularities. AD cannot!
Secret: DCTs are not great on singularities, but they are very good on everything else!
[Figure labels: non-singularities, singularities.]
"How often have I said to you that when you have eliminated the impossible, whatever remains, however improbable, must be the truth?" Sherlock Holmes, in "The Sign of the Four"
• DCTs are very good at eliminating non-singularities.
• ID is more robust to basis selection compared to AD (it can secretly violate coherency restrictions).
• You can add to the AD dictionary, but solvers won’t be able to handle it.

Sherlock Holmes Principle using overcomplete DCTs for elimination
• Predicting missing edge pixels (basis: DCT 16×16).
• Predicting missing wavelet coefficients over edges (basis: DCT 8×8).
Do not abandon isotropic *lets; use a framework that can extract the most mileage from the chosen basis (“sparsest”).
Onur G. Guleryuz, "Predicting Wavelet Coefficients Over Edges Using Estimates Based on Nonlinear Approximants," Proc. Data Compression Conference, IEEE DCC-04, April 2004.

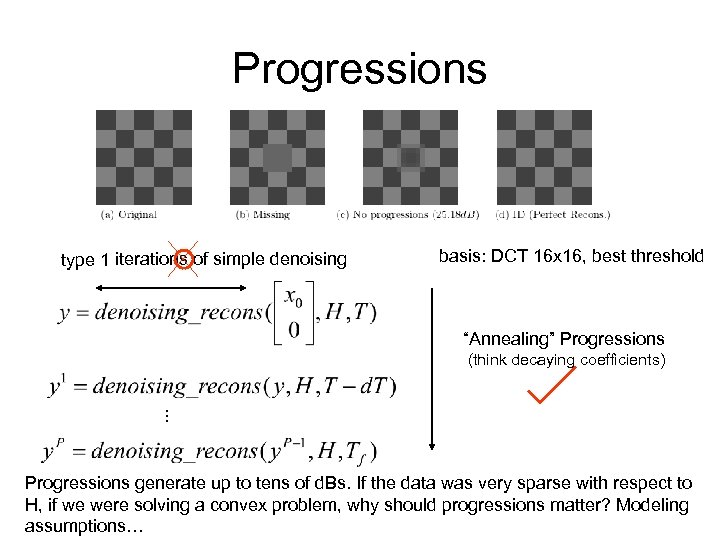

Progressions
[Figure: type 1 iterations of simple denoising; basis: DCT 16×16, best threshold.]
“Annealing” progressions ... (think decaying coefficients)
Progressions generate up to tens of dBs.
If the data was very sparse with respect to H, and if we were solving a convex problem, why should progressions matter? Modeling assumptions…

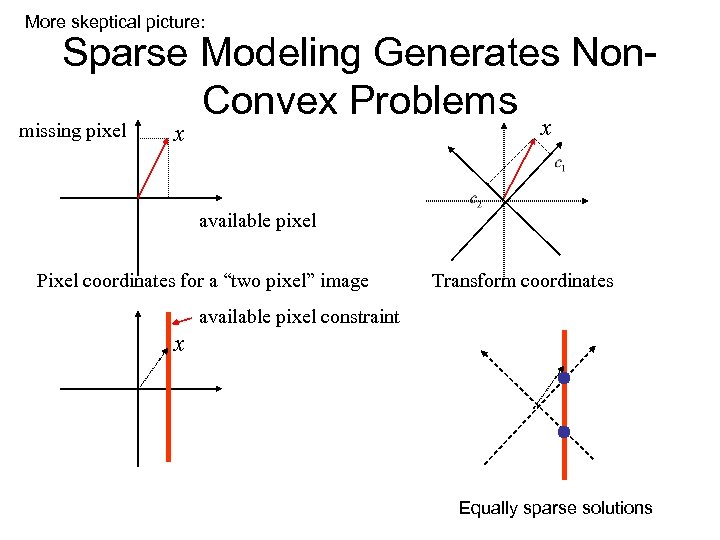

More skeptical picture: Sparse Modeling Generates Non-Convex Problems
[Figure: a “two pixel” image shown in pixel coordinates (available pixel, missing pixel) and in transform coordinates, with the available pixel constraint and the equally sparse solutions marked.]
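
The “two pixel” picture can be reproduced numerically. This is a sketch under an assumption: the transform is taken to be the 2×2 orthonormal sum/difference (Haar-like) basis, since the slide's actual transform is not recoverable from the text. The helper `num_nonzero_coeffs` is illustrative.

```python
import numpy as np

# Assumed orthonormal transform for a two-pixel image (columns are basis vectors).
H = np.array([[1.0, 1.0],
              [1.0, -1.0]]) / np.sqrt(2.0)

def num_nonzero_coeffs(x, tol=1e-12):
    """Number of transform coefficients of x that are not (numerically) zero."""
    return int(np.sum(np.abs(H.T @ x) > tol))

a = 1.0  # value of the available pixel; the second pixel is missing
candidates = {
    "x2 = +a": np.array([a, a]),
    "x2 = -a": np.array([a, -a]),
    "midpoint x2 = 0": np.array([a, 0.0]),
}
sparsity = {name: num_nonzero_coeffs(x) for name, x in candidates.items()}
```

Both fills `x2 = +a` and `x2 = -a` satisfy the available pixel constraint with a single nonzero coefficient, but their midpoint needs two, so the set of sparsest consistent solutions is not convex.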

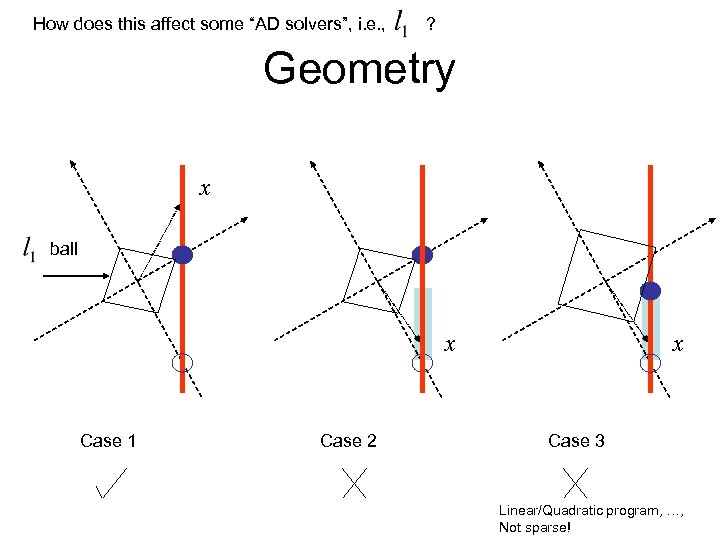

How does this affect some “AD solvers”, i.e., […]?
[Figure: geometry of the […] ball against the constraint, for Case 1, Case 2, and Case 3.]
Linear/quadratic program, …, not sparse!

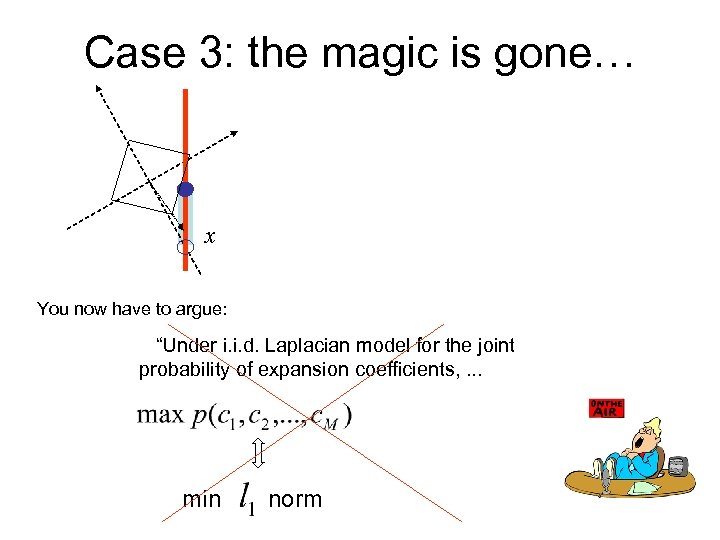

Case 3: the magic is gone…
You now have to argue: “Under an i.i.d. Laplacian model for the joint probability of expansion coefficients, ...”
[Figure label: min […] norm.]

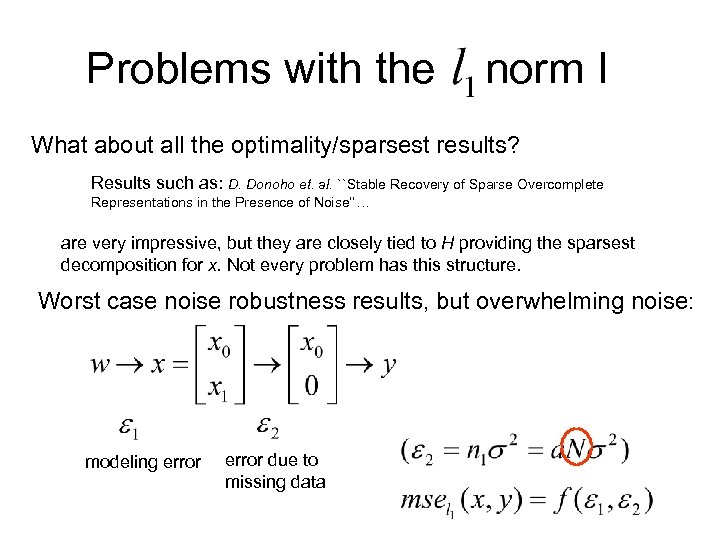

Problems with the […] norm I
What about all the optimality/sparsest results? Results such as D. Donoho et al., "Stable Recovery of Sparse Overcomplete Representations in the Presence of Noise", are very impressive, but they are closely tied to H providing the sparsest decomposition for x. Not every problem has this structure.
Worst-case noise robustness results, but overwhelming noise: modeling error due to missing data.

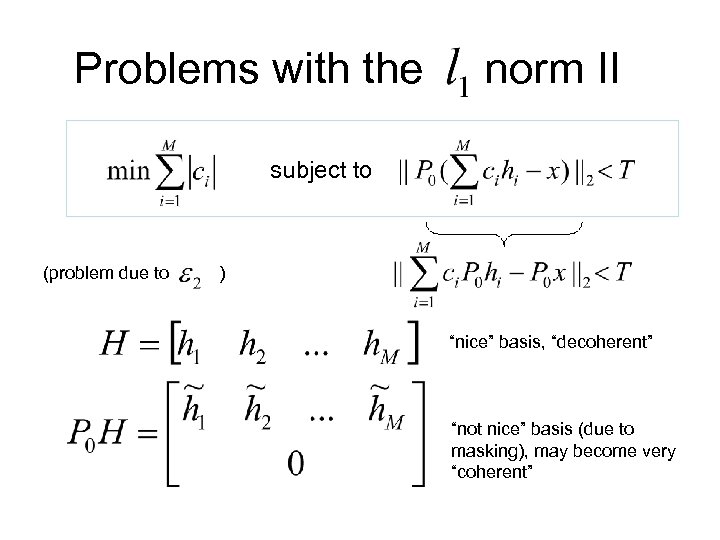

Problems with the […] norm II
[…] subject to […] (problem due to […])
• “Nice” basis: “decoherent”.
• “Not nice” basis (due to masking): may become very “coherent”.

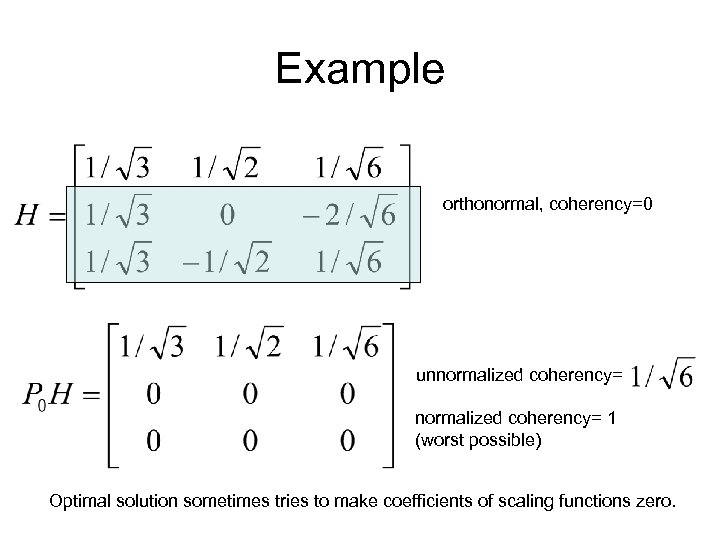

Example
• Orthonormal: coherency = 0.
• Unnormalized: coherency = 1 (worst possible).
The optimal solution sometimes tries to make the coefficients of scaling functions zero.
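
A small numerical illustration of how masking can turn a “nice” basis into a coherent one. It rests on assumptions: coherency is taken to be the usual mutual coherence (largest absolute inner product between distinct normalized columns), and the 2×2 basis and mask are toy choices rather than the matrices on the slide.

```python
import numpy as np

def mutual_coherence(A, eps=1e-12):
    """Largest |<a_i, a_j>| over distinct columns, after normalizing each column."""
    norms = np.linalg.norm(A, axis=0)
    keep = norms > eps                     # drop columns wiped out by the mask
    A = A[:, keep] / norms[keep]
    G = np.abs(A.T @ A)                    # Gram matrix of the normalized columns
    np.fill_diagonal(G, 0.0)               # ignore self inner products
    return float(G.max())

H = np.array([[1.0, 1.0],
              [1.0, -1.0]]) / np.sqrt(2.0)   # orthonormal basis
P = np.diag([1.0, 0.0])                       # mask: the second pixel is missing

coh_full = mutual_coherence(H)        # orthonormal columns
coh_masked = mutual_coherence(P @ H)  # masked columns become parallel
```

After masking, both columns collapse onto the same direction, so they can no longer be told apart from the available data alone.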

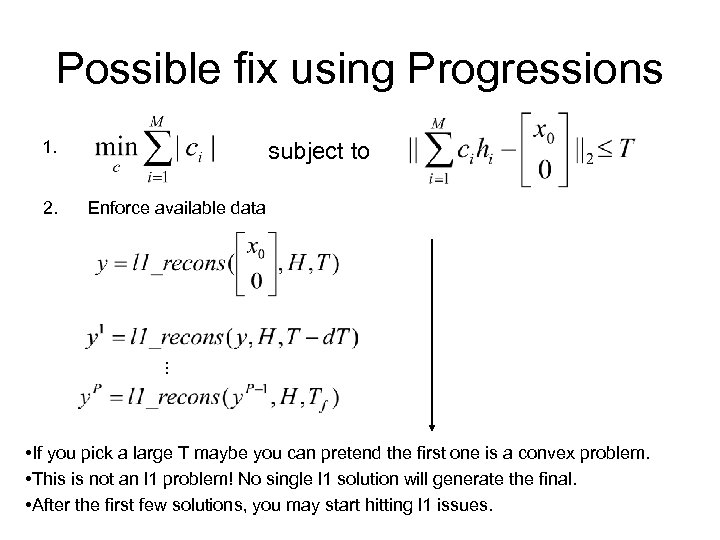

Possible fix using Progressions
1. Enforce available data ...
2. […] subject to […]
• If you pick a large T, maybe you can pretend the first one is a convex problem.
• This is not an ℓ1 problem! No single ℓ1 solution will generate the final […].
• After the first few solutions, you may start hitting ℓ1 issues.

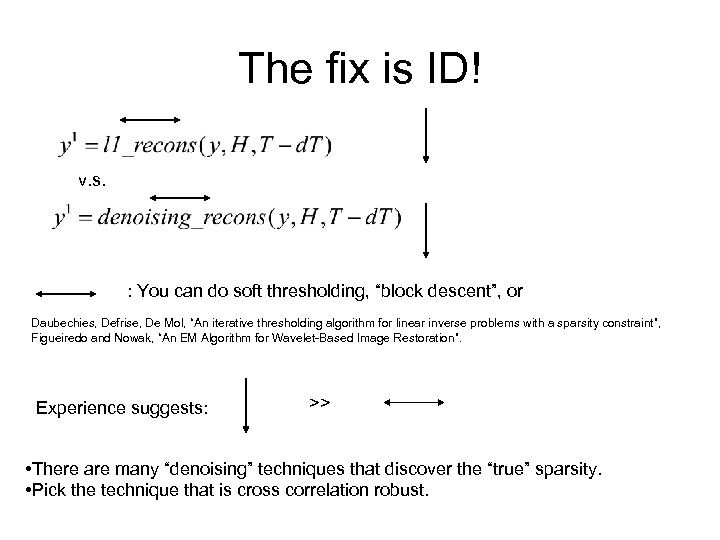

The fix is ID!
[…] vs. […]: you can do soft thresholding, “block descent”, or Daubechies, Defrise, De Mol, “An iterative thresholding algorithm for linear inverse problems with a sparsity constraint”; Figueiredo and Nowak, “An EM Algorithm for Wavelet-Based Image Restoration”.
Experience suggests: […] >> […]
• There are many “denoising” techniques that discover the “true” sparsity.
• Pick the technique that is cross-correlation robust.
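
For reference, the two standard thresholding rules mentioned in this talk can be written as below. This is a generic sketch of the textbook operators, not code from the cited papers; the function names and sample values are illustrative.

```python
import numpy as np

def hard_threshold(c, T):
    """Keep coefficients with |c| > T unchanged; set the rest to zero."""
    return np.where(np.abs(c) > T, c, 0.0)

def soft_threshold(c, T):
    """Shrink every coefficient toward zero by T; small ones become zero."""
    return np.sign(c) * np.maximum(np.abs(c) - T, 0.0)

c = np.array([3.0, -0.5, 1.2, -2.0])
T = 1.0
c_hard = hard_threshold(c, T)   # surviving coefficients keep their values
c_soft = soft_threshold(c, T)   # surviving coefficients are reduced in magnitude by T
```

Both rules zero the same small coefficients; they differ in that soft thresholding also biases the large coefficients it keeps.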

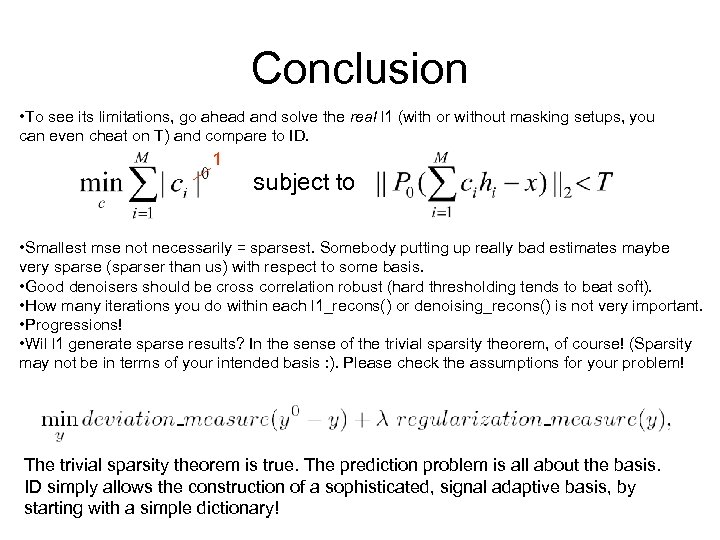

Conclusion
• To see its limitations, go ahead and solve the real ℓ1 ([…] subject to […]; with or without masking setups, you can even cheat on T) and compare to ID.
• Smallest mse is not necessarily the sparsest. Somebody putting up really bad estimates may be very sparse (sparser than us) with respect to some basis.
• Good denoisers should be cross-correlation robust (hard thresholding tends to beat soft).
• How many iterations you do within each l1_recons() or denoising_recons() is not very important.
• Progressions!
• Will ℓ1 generate sparse results? In the sense of the trivial sparsity theorem, of course! (Sparsity may not be in terms of your intended basis :).
Please check the assumptions for your problem!
The trivial sparsity theorem is true. The prediction problem is all about the basis. ID simply allows the construction of a sophisticated, signal-adaptive basis by starting with a simple dictionary!
