da19c012866461257f0c8980a5aa7815.ppt

- Number of slides: 79

Nearest Neighbor and Locality-Sensitive Hashing Yaniv Masler IDC - 16.03.08 Tell me who your neighbors are, and I'll know who you are

Lecture Outline • Variants of NN • Motivation • Algorithms: – Linear scan – Quad-trees – kd-trees – Locality Sensitive Hashing – R-tree (and its variants) – VA-file • Examples – Colorization by Example – Medical Pattern recognition – Hand written digit recognition

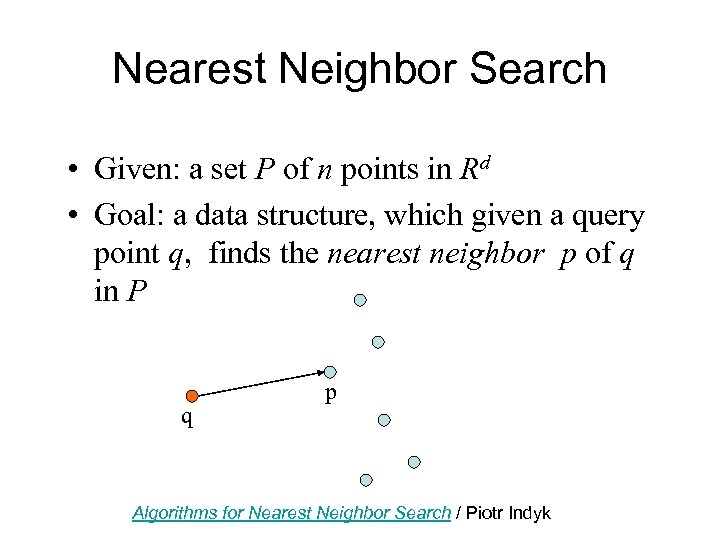

Nearest Neighbor Search • Given: a set P of n points in R^d • Goal: a data structure which, given a query point q, finds the nearest neighbor p of q in P Algorithms for Nearest Neighbor Search / Piotr Indyk

Nearest Neighbor Search Problem: what's the nearest restaurant to my hotel?

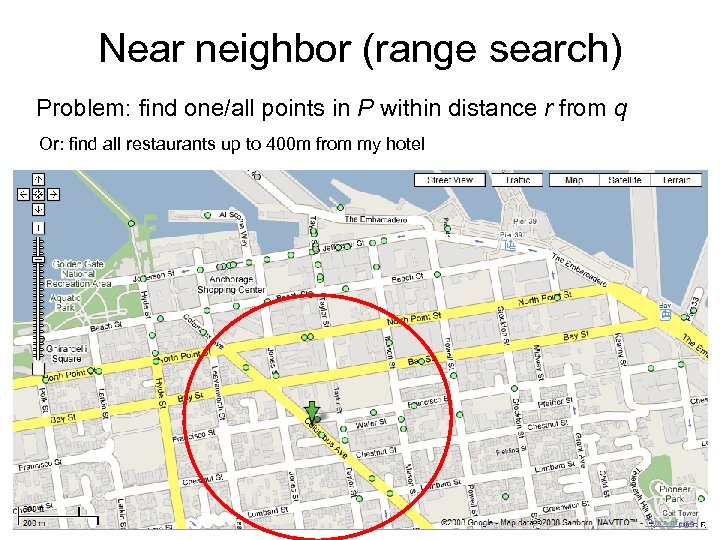

Near neighbor (range search) Problem: find one/all points in P within distance r from q Or: find all restaurants up to 400 m from my hotel

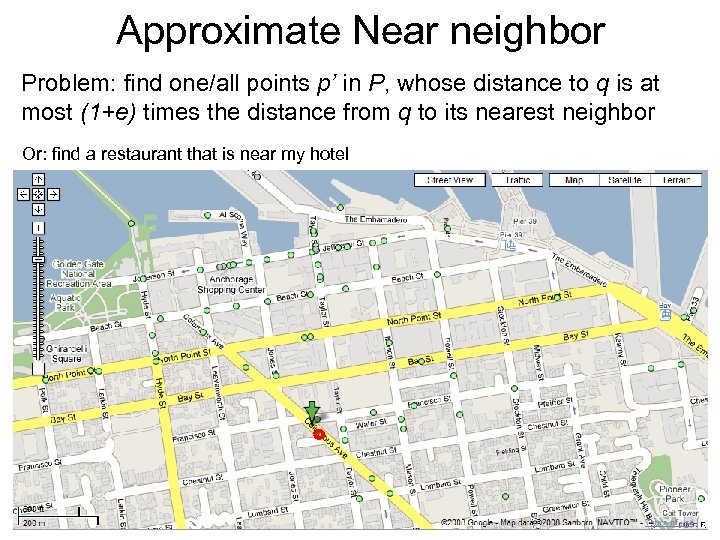

Approximate Near neighbor Problem: find one/all points p’ in P, whose distance to q is at most (1+ε) times the distance from q to its nearest neighbor Or: find a restaurant that is near my hotel

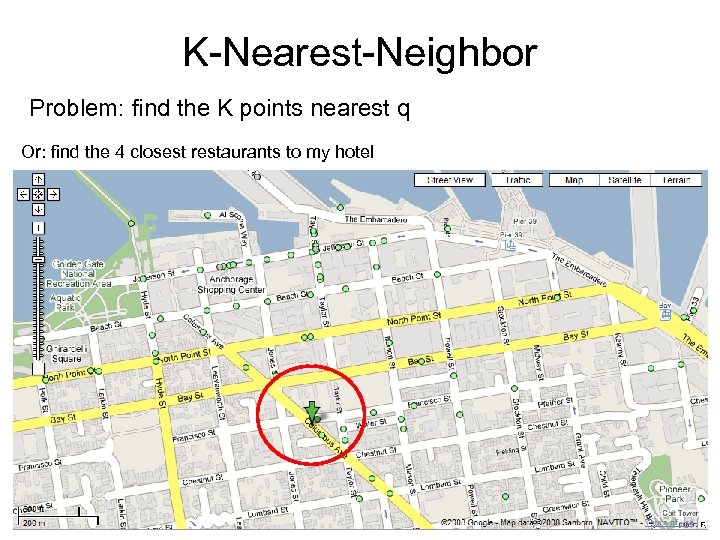

K-Nearest-Neighbor Problem: find the K points nearest q Or: find the 4 closest restaurants to my hotel

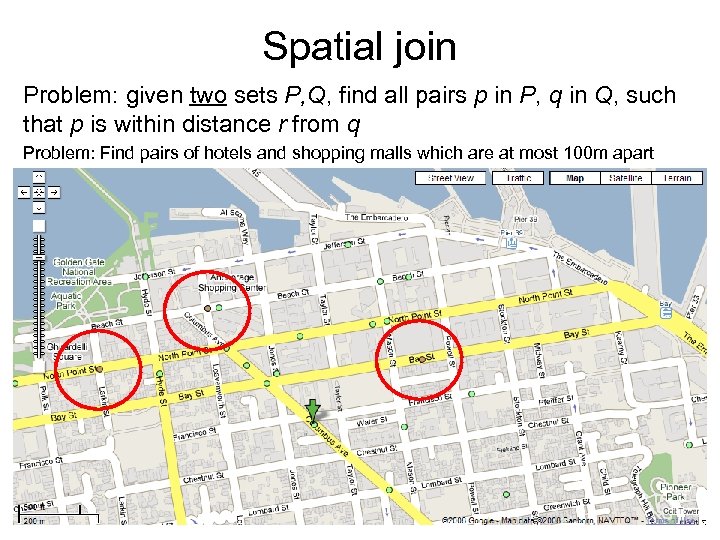

Spatial join Problem: given two sets P, Q, find all pairs p in P, q in Q, such that p is within distance r from q Problem: Find pairs of hotels and shopping malls which are at most 100 m apart

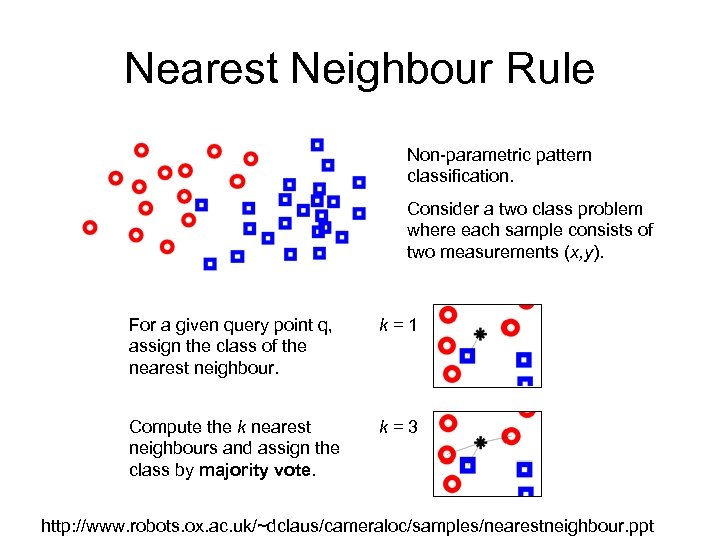

Nearest Neighbour Rule Non-parametric pattern classification. Consider a two class problem where each sample consists of two measurements (x, y). • k=1: For a given query point q, assign the class of the nearest neighbour. • k=3: Compute the k nearest neighbours and assign the class by majority vote. http://www.robots.ox.ac.uk/~dclaus/cameraloc/samples/nearestneighbour.ppt

Motivation The nearest neighbor search problem arises in numerous fields of application, including: • Pattern recognition • Statistical classification • Computer vision • Databases • Coding theory • Data compression • Internet marketing • DNA sequencing • Spell checking • Plagiarism detection • Copyright violation detection • and many more

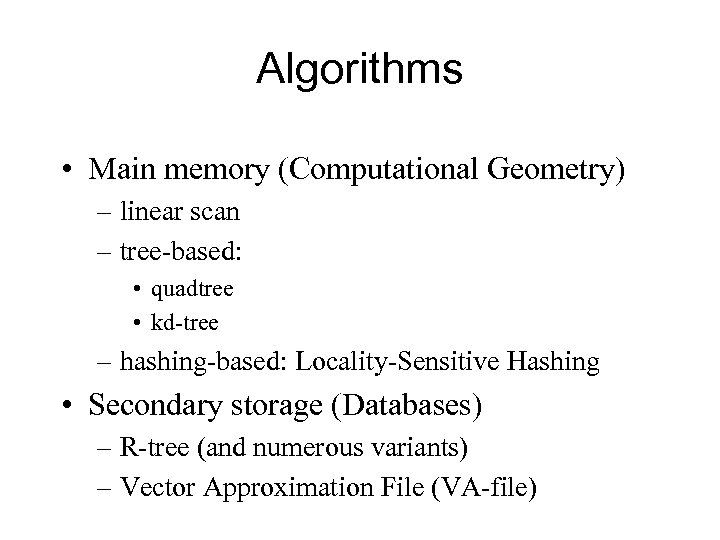

Algorithms • Main memory (Computational Geometry) – linear scan – tree-based: • quadtree • kd-tree – hashing-based: Locality-Sensitive Hashing • Secondary storage (Databases) – R-tree (and numerous variants) – Vector Approximation File (VA-file)

Linear scan (Naïve approach) • The simplest solution to the NNS problem • Compute the distance from the query point to every other point in the database, keeping track of the "best so far". • This algorithm works for small databases but quickly becomes intractable as either the size or the dimensionality of the problem becomes large. • Running time is O(Nd).
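The linear scan described on this slide can be sketched in a few lines of Python. This is a minimal illustration, assuming Euclidean distance and points stored as tuples; the function name `linear_scan_nn` is chosen here for illustration and does not come from the lecture.

```python
import math

def linear_scan_nn(points, q):
    """Return (nearest_point, distance) by checking every point: O(N*d) time."""
    best, best_dist = None, math.inf
    for p in points:
        dist = math.dist(p, q)      # Euclidean distance, O(d) per point
        if dist < best_dist:        # keep track of the "best so far"
            best, best_dist = p, dist
    return best, best_dist

print(linear_scan_nn([(0, 0), (3, 4), (1, 1)], (1, 2)))
```

Every query touches all N points, which is why the cost grows with both the database size and the dimension.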

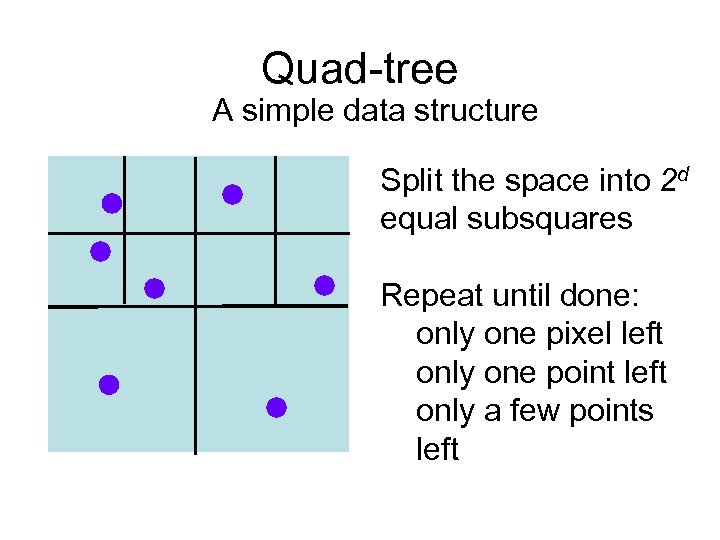

Quad-tree A simple data structure • Split the space into 2^d equal subsquares • Repeat until done: – only one pixel left – only one point left – only a few points left

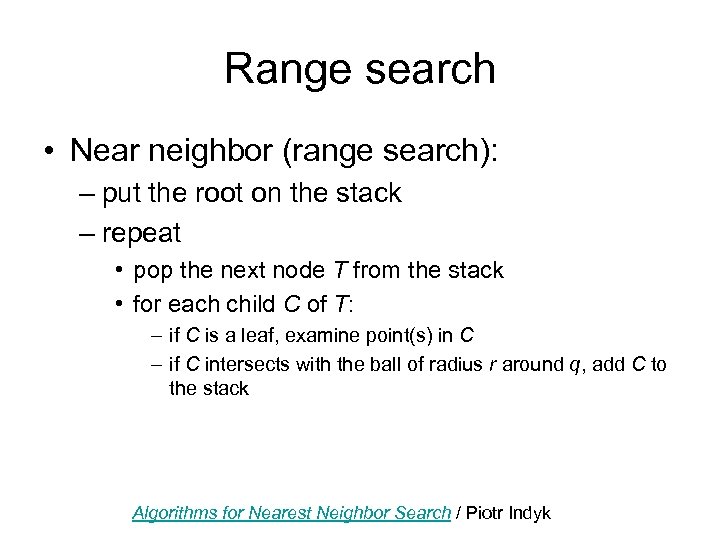

Range search • Near neighbor (range search): – put the root on the stack – repeat • pop the next node T from the stack • for each child C of T: – if C is a leaf, examine point(s) in C – if C intersects with the ball of radius r around q, add C to the stack Algorithms for Nearest Neighbor Search / Piotr Indyk
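The stack-based range search above can be sketched for a 2-D quad-tree as follows. This is one possible reading of the pseudocode, not the lecture's implementation: the names `QuadNode`, `build` and `range_search`, the leaf capacity of 2 and the depth limit are all assumptions, and every point is assumed to lie inside the half-open root square.

```python
import math

class QuadNode:
    def __init__(self, box):
        self.box = box          # (x0, y0, x1, y1), half-open on the upper sides
        self.points = []        # filled only for leaves
        self.children = None    # four QuadNode objects once the node is split

def build(points, box, capacity=2, depth=0, max_depth=16):
    node = QuadNode(box)
    if len(points) <= capacity or depth == max_depth:
        node.points = list(points)          # "only a few points left": stop
        return node
    x0, y0, x1, y1 = box
    mx, my = (x0 + x1) / 2, (y0 + y1) / 2   # split in the middle
    quads = [(x0, y0, mx, my), (mx, y0, x1, my), (x0, my, mx, y1), (mx, my, x1, y1)]
    node.children = [
        build([p for p in points if a <= p[0] < c and b <= p[1] < d],
              (a, b, c, d), capacity, depth + 1, max_depth)
        for (a, b, c, d) in quads
    ]
    return node

def intersects_ball(box, q, r):
    """True if the square comes within distance r of q."""
    x0, y0, x1, y1 = box
    dx = max(x0 - q[0], 0, q[0] - x1)
    dy = max(y0 - q[1], 0, q[1] - y1)
    return dx * dx + dy * dy <= r * r

def range_search(root, q, r):
    found, stack = [], [root]               # put the root on the stack
    while stack:
        node = stack.pop()
        if node.children is None:           # leaf: examine its points
            found += [p for p in node.points if math.dist(p, q) <= r]
        else:                               # push only children that meet the ball
            stack += [c for c in node.children if intersects_ball(c.box, q, r)]
    return found
```

For example, `range_search(build(pts, (0, 0, 16, 16)), (2, 2), 1.5)` returns the points of `pts` within distance 1.5 of (2, 2), in no particular order.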

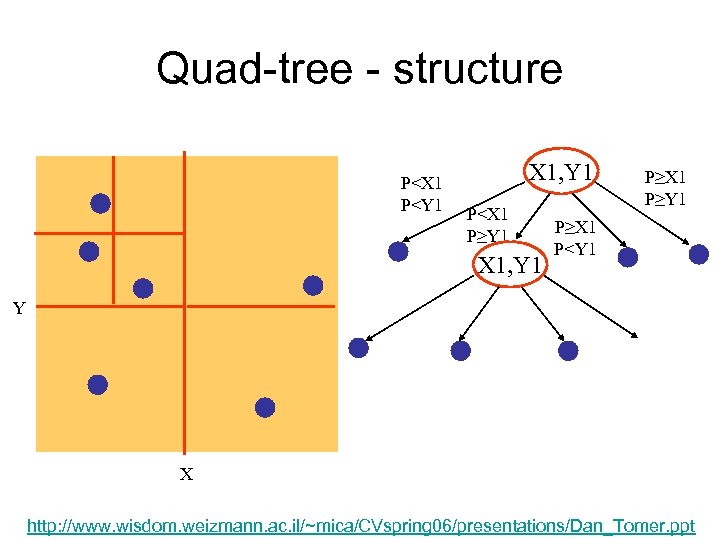

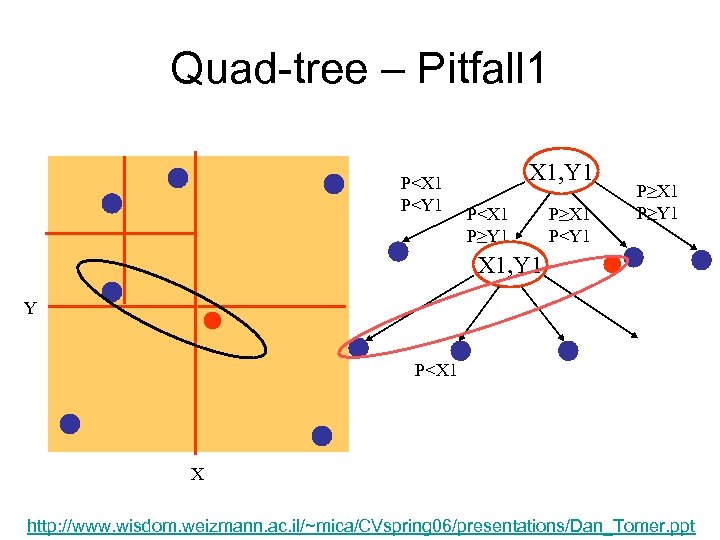

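Quad-tree - structure [Figure: the X-Y plane is split at the point (X1, Y1) into four quadrants, and the tree node (X1, Y1) has one child per quadrant: P<X1, P<Y1; P<X1, P≥Y1; P≥X1, P≥Y1; P≥X1, P<Y1] http://www.wisdom.weizmann.ac.il/~mica/CVspring06/presentations/Dan_Tomer.ppt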

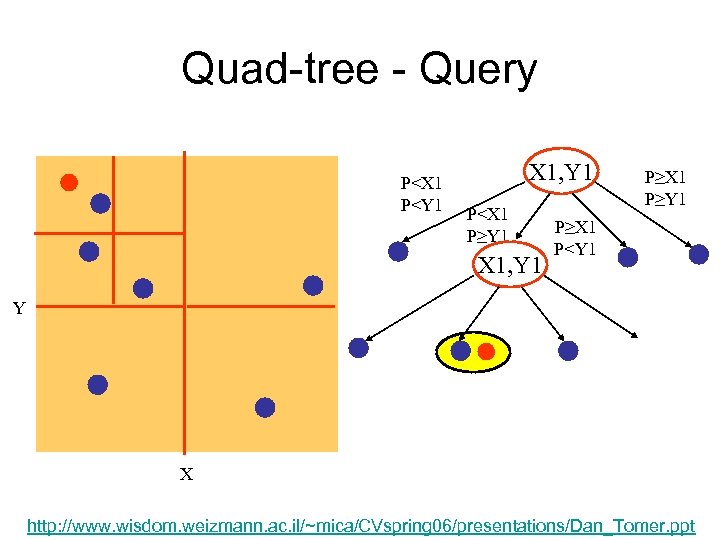

Quad-tree - Query [Figure: a query on the same tree, with node (X1, Y1) and the four quadrants P<X1, P<Y1; P<X1, P≥Y1; P≥X1, P≥Y1; P≥X1, P<Y1] http://www.wisdom.weizmann.ac.il/~mica/CVspring06/presentations/Dan_Tomer.ppt

Quad-tree • Simple data structure • What's the downside ?

Quad-tree – Pitfall 1 [Figure: the quadrants P<X1, P<Y1; P<X1, P≥Y1; P≥X1, P<Y1; P≥X1, P≥Y1 around node (X1, Y1), with a further split on P<X1] http://www.wisdom.weizmann.ac.il/~mica/CVspring06/presentations/Dan_Tomer.ppt

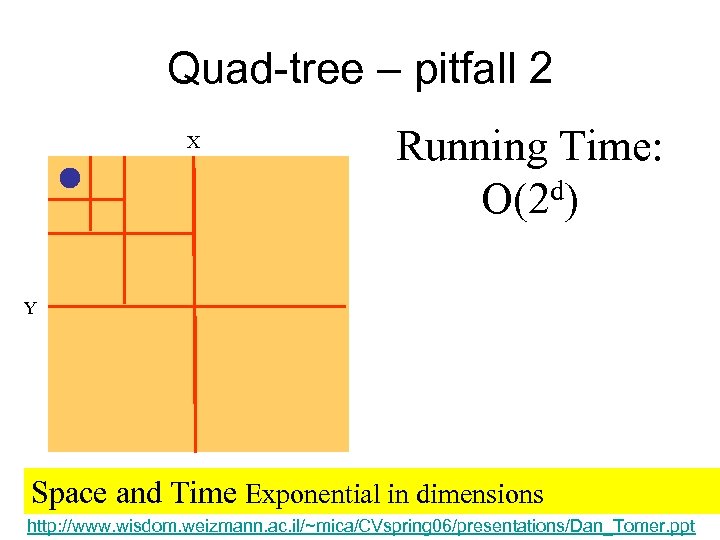

Quad-tree – pitfall 2 Running time: O(2^d) Space and time exponential in dimensions [Figure: axes X and Y] http://www.wisdom.weizmann.ac.il/~mica/CVspring06/presentations/Dan_Tomer.ppt

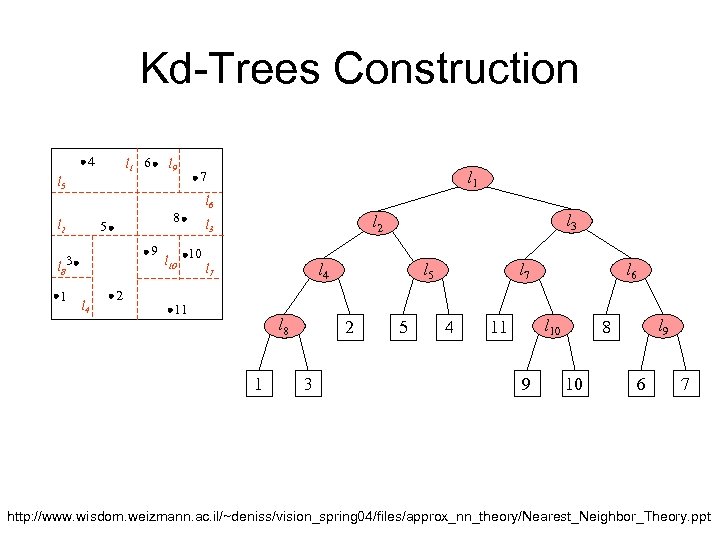

Kd-trees [Bentley’ 75] • Main ideas: – only one-dimensional splits – instead of splitting in the middle, choose the split “carefully” (many variations) – near(est) neighbor queries: as for quad-trees Algorithms for Nearest Neighbor Search / Piotr Indyk
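A minimal sketch of these ideas, assuming the common variation that cycles through the coordinates and splits at the median point. The dictionary-based node layout and the names `build_kdtree` and `nearest` are illustrative choices, not taken from the lecture.

```python
import math

def build_kdtree(points, depth=0):
    """One-dimensional split per level, placed at the median instead of the middle."""
    if not points:
        return None
    axis = depth % len(points[0])               # cycle through the coordinates
    points = sorted(points, key=lambda p: p[axis])
    mid = len(points) // 2
    return {
        "point": points[mid],
        "axis": axis,
        "left": build_kdtree(points[:mid], depth + 1),
        "right": build_kdtree(points[mid + 1:], depth + 1),
    }

def nearest(node, q, best=None):
    """Return (point, distance) of the nearest neighbor of q in the subtree."""
    if node is None:
        return best
    d = math.dist(node["point"], q)
    if best is None or d < best[1]:
        best = (node["point"], d)
    diff = q[node["axis"]] - node["point"][node["axis"]]
    near, far = (node["left"], node["right"]) if diff < 0 else (node["right"], node["left"])
    best = nearest(near, q, best)               # descend towards q first
    if abs(diff) < best[1]:                     # the ball around q crosses the split
        best = nearest(far, q, best)
    return best

tree = build_kdtree([(2, 3), (5, 4), (9, 6), (4, 7), (8, 1), (7, 2)])
print(nearest(tree, (9, 2))[0])  # prints (8, 1)
```

The far subtree is visited only when the splitting line is closer to q than the best point found so far, which is where the savings over a linear scan come from.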

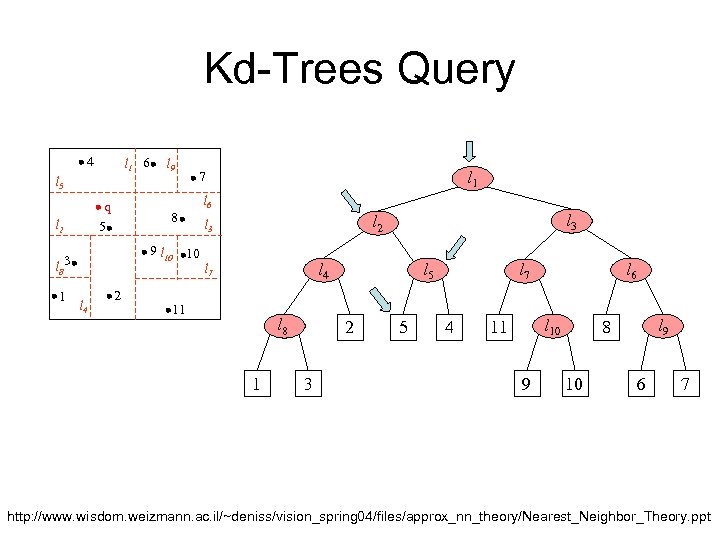

Kd-Trees Construction [Figure: points 1-11 in the plane partitioned by the splitting lines l1-l10, shown next to the corresponding kd-tree] http://www.wisdom.weizmann.ac.il/~deniss/vision_spring04/files/approx_nn_theory/Nearest_Neighbor_Theory.ppt

Kd-Trees Query [Figure: the same partition of points 1-11 by the splitting lines l1-l10 and its kd-tree, with a query point q] http://www.wisdom.weizmann.ac.il/~deniss/vision_spring04/files/approx_nn_theory/Nearest_Neighbor_Theory.ppt

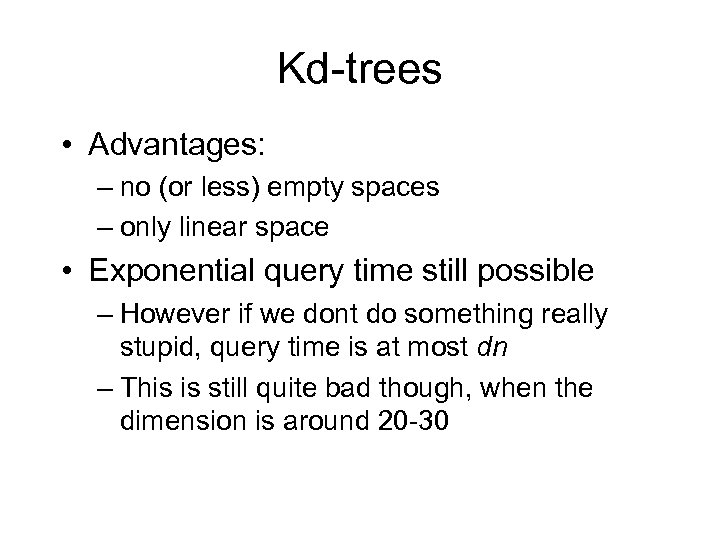

Kd-trees • Advantages: – no (or less) empty spaces – only linear space • Exponential query time still possible – However, if we don't do something really stupid, query time is at most dn – This is still quite bad though, when the dimension is around 20-30

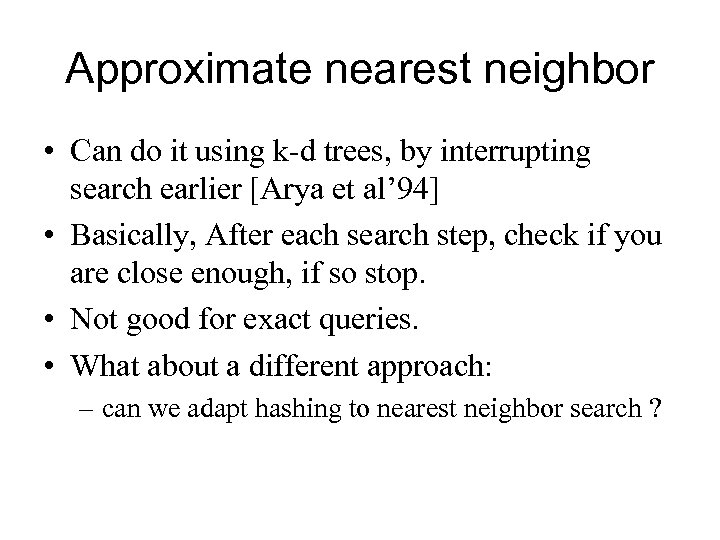

Approximate nearest neighbor • Can do it using k-d trees, by interrupting the search earlier [Arya et al. ’94] • Basically, after each search step, check whether you are close enough; if so, stop. • Not good for exact queries. • What about a different approach: – can we adapt hashing to nearest neighbor search?

Locality-Sensitive Hashing [Indyk-Motwani’ 98] Key Idea • Preprocessing : – Hash the data-point using several LSH functions so that probability of collision is higher for closer objects • Querying : – Hash query point and retrieve elements in the buckets containing the query point

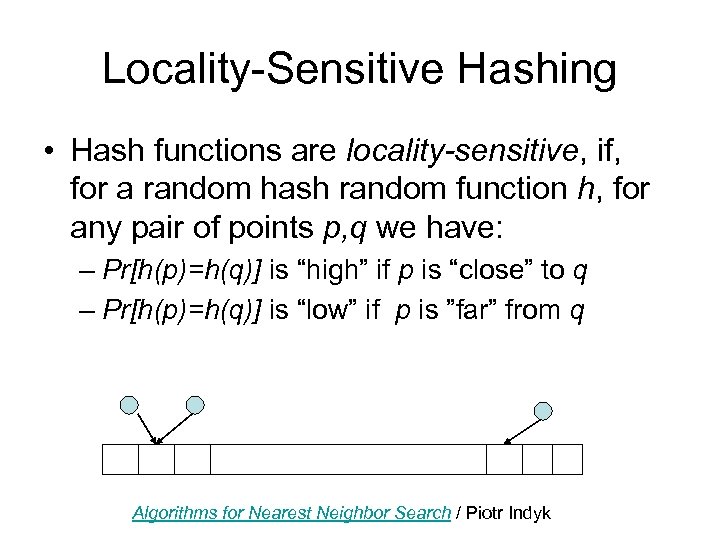

Locality-Sensitive Hashing • Hash functions are locality-sensitive if, for a random hash function h and for any pair of points p, q, we have: – Pr[h(p)=h(q)] is “high” if p is “close” to q – Pr[h(p)=h(q)] is “low” if p is “far” from q Algorithms for Nearest Neighbor Search / Piotr Indyk

Do such functions exist? • Consider the hypercube, i.e., – points from {0,1}^d – Hamming distance D(p, q) = number of positions on which p and q differ • Define hash function h by choosing a set S of k random coordinates, and setting h(p) = projection of p on S [Photo: Richard Hamming] Algorithms for Nearest Neighbor Search / Piotr Indyk

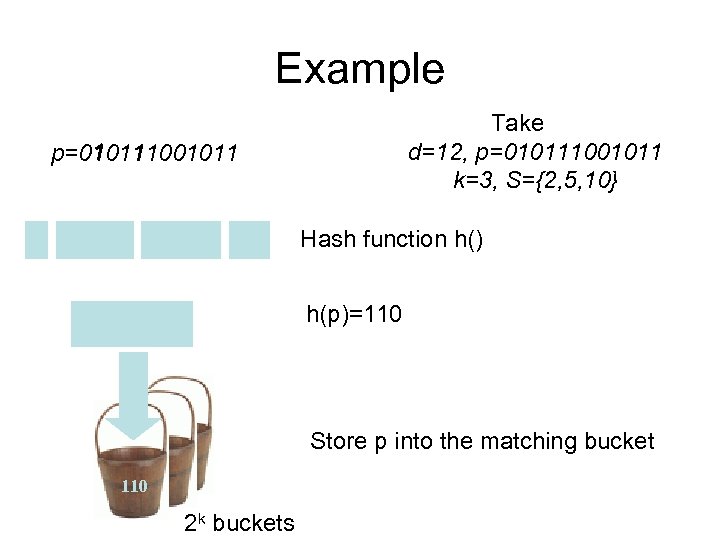

Example Take d=12, p=010111001011, k=3, S={2, 5, 10}. The hash function h() reads the bits of p at positions 2, 5 and 10, which are 1, 1 and 0, so h(p)=110. Store p into the matching bucket 110, one of 2^k buckets.
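The example can be reproduced with a short sketch. It assumes points are stored as bit strings and that the coordinates in S are 1-indexed, as on the slide; the function name `bit_sampling_hash` is hypothetical.

```python
def bit_sampling_hash(p, S):
    """Project the bit string p onto the 1-indexed coordinates listed in S."""
    return "".join(p[i - 1] for i in S)

p = "010111001011"
S = [2, 5, 10]
print(bit_sampling_hash(p, S))  # prints 110
```

With k sampled coordinates there are at most 2^k distinct hash values, which is the number of buckets mentioned on the slide.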

h’s are locality-sensitive • Pr[h(p)=h(q)] = (1 - D(p, q)/d)^k • We can vary the probability by changing k [Figure: Pr plotted against distance, for k=1 and for k=2] Algorithms for Nearest Neighbor Search / Piotr Indyk
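To see how k shapes the curve, the collision probability can be tabulated directly. This sketch assumes the k coordinates are drawn independently and uniformly (with replacement), which is the setting in which the formula is exact: a single random coordinate agrees with probability 1 - D(p, q)/d. The function name is illustrative.

```python
def collision_probability(dist, d, k):
    """Pr[h(p) = h(q)] for Hamming distance `dist` in {0,1}^d with k sampled coordinates."""
    return (1 - dist / d) ** k

# One row per k; columns are Hamming distances 0, 3, 6 and 12 in dimension d=12.
for k in (1, 2, 8):
    print(k, [round(collision_probability(D, 12, k), 3) for D in (0, 3, 6, 12)])
```

For k=1 the probability falls linearly with distance; larger k makes it drop faster, so far points collide much less often.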

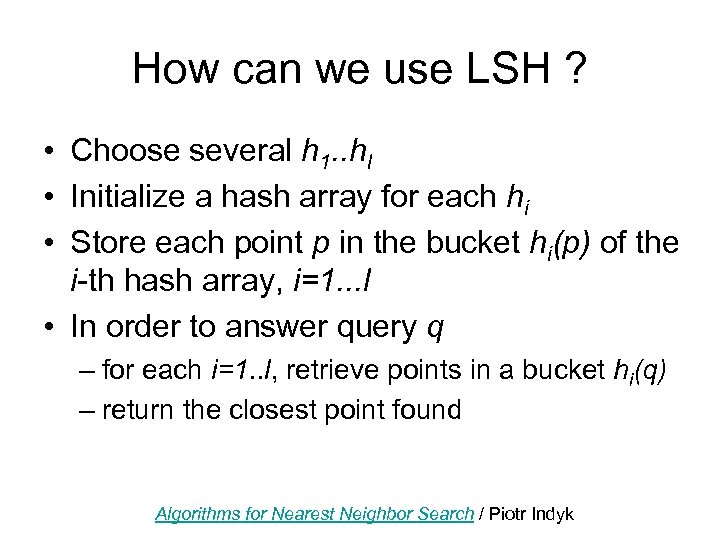

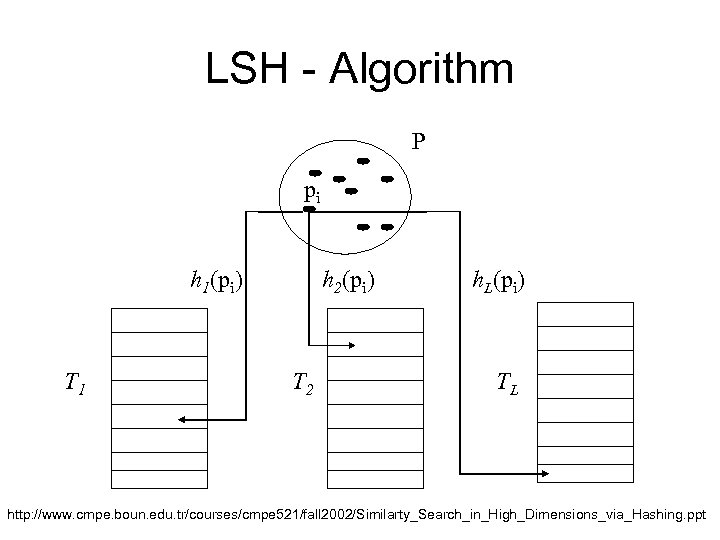

How can we use LSH? • Choose several h_1..h_l • Initialize a hash array for each h_i • Store each point p in the bucket h_i(p) of the i-th hash array, i=1...l • In order to answer query q – for each i=1..l, retrieve points in a bucket h_i(q) – return the closest point found Algorithms for Nearest Neighbor Search / Piotr Indyk
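The steps on this slide can be sketched as a small index over bit strings. The class name `HammingLSH`, the fixed random seed and the use of Python dictionaries as the hash arrays are assumptions made for illustration; coordinates are 0-indexed here.

```python
import random
from collections import defaultdict

def hamming(p, q):
    """Number of positions on which the bit strings p and q differ."""
    return sum(a != b for a, b in zip(p, q))

class HammingLSH:
    def __init__(self, d, k, l, seed=0):
        rng = random.Random(seed)
        # l hash functions, each defined by k random coordinates
        self.coords = [[rng.randrange(d) for _ in range(k)] for _ in range(l)]
        self.tables = [defaultdict(list) for _ in range(l)]   # one hash array per h_i

    def _key(self, i, p):
        return "".join(p[j] for j in self.coords[i])

    def insert(self, p):
        for i, table in enumerate(self.tables):
            table[self._key(i, p)].append(p)                  # bucket h_i(p)

    def query(self, q):
        candidates = set()
        for i, table in enumerate(self.tables):
            candidates.update(table.get(self._key(i, q), [])) # bucket h_i(q)
        # closest point among the retrieved candidates, or None if all buckets are empty
        return min(candidates, key=lambda p: hamming(p, q), default=None)

index = HammingLSH(d=12, k=3, l=4)
for point in ["010111001011", "010111001111", "101000110100"]:
    index.insert(point)
print(index.query("010111001011"))  # prints 010111001011
```

A stored point is always found when it is queried exactly, because it lands in the same bucket in every table. For other queries the answer is only probably close, and `None` is possible when no bucket matches.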

LSH - Algorithm [Figure: each point p_i in P is hashed by h_1(p_i), h_2(p_i), ..., h_L(p_i) into the tables T_1, T_2, ..., T_L] http://www.cmpe.boun.edu.tr/courses/cmpe521/fall2002/Similarty_Search_in_High_Dimensions_via_Hashing.ppt

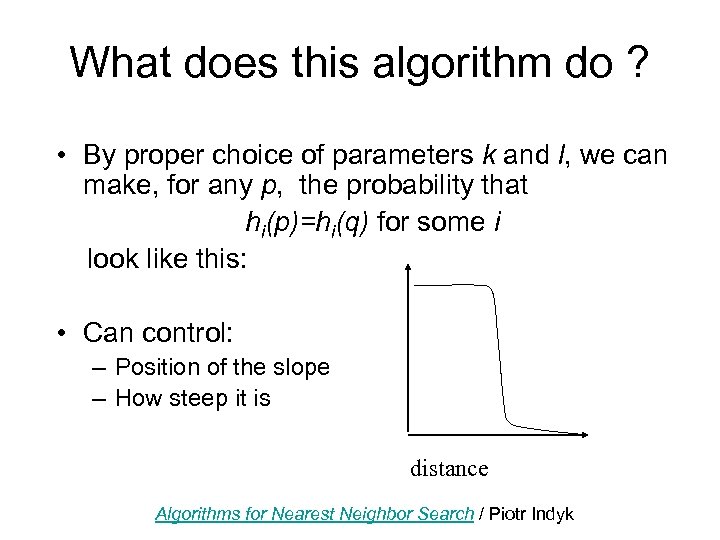

What does this algorithm do? • By proper choice of parameters k and l, we can make, for any p, the probability that h_i(p)=h_i(q) for some i look like this: [Figure: collision probability plotted against distance] • Can control: – Position of the slope – How steep it is Algorithms for Nearest Neighbor Search / Piotr Indyk
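The shape of that curve can be computed under the assumption that the l hash functions are independent and each samples k coordinates uniformly with replacement. A single function collides with probability (1 - D/d)^k, so at least one of l collides with probability 1 - (1 - (1 - D/d)^k)^l. The function name `hit_probability` is illustrative.

```python
def hit_probability(dist, d, k, l):
    """Probability that h_i(p) = h_i(q) for at least one of l independent hash functions."""
    p1 = (1 - dist / d) ** k        # collision probability of a single h_i
    return 1 - (1 - p1) ** l

# Rows: (k, l) settings; columns: Hamming distances 0, 8, 16, ..., 64 in dimension d=64.
for k, l in [(4, 1), (16, 1), (16, 20)]:
    print((k, l), [round(hit_probability(D, 64, k, l), 2) for D in range(0, 65, 8)])
```

Raising k pulls the drop towards smaller distances, and raising l pushes it back out, so the two together set where the slope sits and how sharp it is.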

The LSH algorithm • Therefore, we can solve (approximately) the near neighbor problem with given parameter r • Worst-case analysis guarantees dn^(1/(1+ε)) query time • Practical evaluation indicates much better behavior [GIM ’99, HGI ’00, Buh ’00, BT ’00] • Drawbacks: – works best for Hamming distance (although can be generalized to Euclidean space) – requires radius r to be fixed in advance Algorithms for Nearest Neighbor Search / Piotr Indyk

Secondary storage • As mentioned on the Motivation slide, there are many uses for NN. • Some involve large datasets that need secondary storage.

Secondary storage • Grouping the data is crucial • Different approach required: – in main memory, any reduction in the number of inspected points was good – on disk, this is not the case !

Disk-based algorithms • R-tree [Guttman ’84] – starting point for many variations – over 600 citations! (according to CiteSeer) – “optimistic” approach: try to answer queries in logarithmic time • Vector Approximation File [WSB ’98] – “pessimistic” approach: if we need to scan the whole data set, we had better do it fast • LSH also works on disk Algorithms for Nearest Neighbor Search / Piotr Indyk

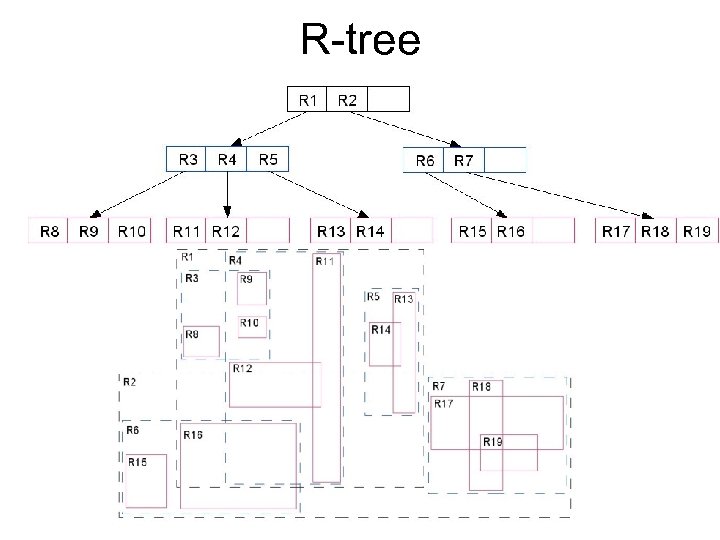

R-tree • “Bottom-up” approach (k-d-tree was “top-down”): – Start with a set of points/rectangles – Partition the set into groups of small cardinality – For each group, find minimum rectangle containing objects from this group – Repeat Algorithms for Nearest Neighbor Search / Piotr Indyk
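The bottom-up recipe can be sketched as a simple packing loop. This is a deliberately crude illustration, not Guttman's insertion and node-splitting algorithm: groups are formed by sorting on the left edge, and the names `mbr` and `build_rtree` and the default group size of 3 are assumptions. The input is assumed to be a non-empty list of 2-D points.

```python
def mbr(boxes):
    """Minimum bounding rectangle of boxes given as (x0, y0, x1, y1)."""
    return (min(b[0] for b in boxes), min(b[1] for b in boxes),
            max(b[2] for b in boxes), max(b[3] for b in boxes))

def build_rtree(points, group_size=3):
    # A node is (box, children); a data point is a degenerate box with no children.
    level = [((x, y, x, y), None) for x, y in points]
    while len(level) > 1:                               # repeat until one root remains
        level.sort(key=lambda node: node[0][0])         # crude grouping: by left edge
        groups = [level[i:i + group_size] for i in range(0, len(level), group_size)]
        level = [(mbr([box for box, _ in g]), g) for g in groups]
    return level[0]

root = build_rtree([(0, 0), (1, 5), (4, 2), (9, 9), (7, 3), (2, 8), (6, 6)])
print(root[0])  # prints (0, 0, 9, 9)
```

Each level groups the rectangles of the level below, so the root rectangle covers the whole data set and a search can skip any subtree whose rectangle misses the query region.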

R-tree

R-tree • Advantages: – Supports near(est) neighbor search (similarly to before) – Works for points and rectangles – Avoids empty spaces – Many variants: X-tree, SS-tree, SR-tree etc. – Works well for low dimensions • Not so great for high dimensions Algorithms for Nearest Neighbor Search / Piotr Indyk

VA-file [Weber, Schek, Blott ’98] • Approach: – In high-dimensional spaces, all tree-based indexing structures examine a large fraction of leaves – If we need to visit so many nodes anyway, it is better to scan the whole data set and avoid performing seeks altogether – 1 seek = transfer of a few hundred KB Algorithms for Nearest Neighbor Search / Piotr Indyk

VA-file • Natural question: how to speed-up linear scan ? • Answer: use approximation – Use only i bits per dimension (and speed-up the scan by a factor of 32/i) – Identify all points which could be returned as an answer – Verify the points using original data set Algorithms for Nearest Neighbor Search / Piotr Indyk

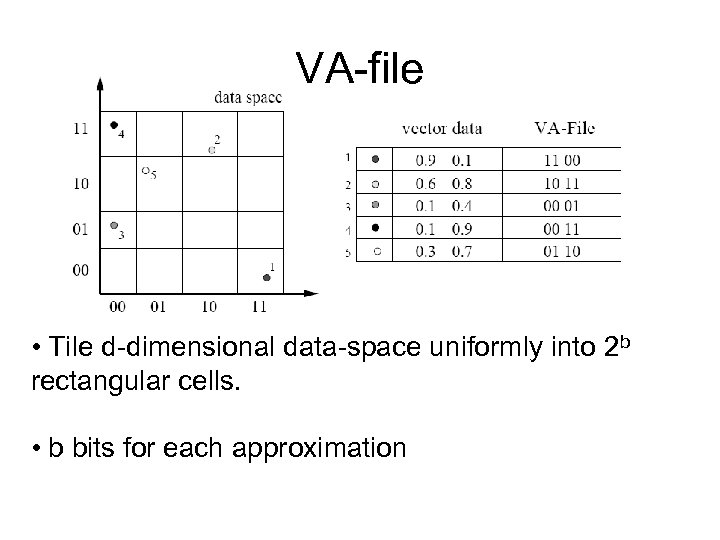

VA-file • Tile d-dimensional data-space uniformly into 2^b rectangular cells. • b bits for each approximation
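A rough sketch of the two-phase scan follows. It assumes coordinates in [0, 1] and the same number of bits in every dimension, so that b equals `bits` times d. The function names and the bound-based filter are one way to "identify all points which could be returned as an answer", not necessarily the exact procedure of the original paper.

```python
import math

def quantize(p, bits):
    """Cell index per dimension for coordinates in [0, 1]."""
    cells = 1 << bits
    return tuple(min(int(x * cells), cells - 1) for x in p)

def bounds(cell, q, bits):
    """Lower and upper bound on the distance from q to any point inside the cell."""
    width = 1 / (1 << bits)
    lo2 = hi2 = 0.0
    for c, x in zip(cell, q):
        a, b = c * width, (c + 1) * width       # extent of the cell in this dimension
        lo2 += max(a - x, 0.0, x - b) ** 2
        hi2 += max(x - a, b - x) ** 2
    return math.sqrt(lo2), math.sqrt(hi2)

def va_nearest(points, q, bits=2):
    approx = [quantize(p, bits) for p in points]    # the compact approximation file
    bnds = [bounds(c, q, bits) for c in approx]     # phase 1: scan approximations only
    best_upper = min(hi for _, hi in bnds)
    candidates = [p for p, (lo, _) in zip(points, bnds) if lo <= best_upper]
    return min(candidates, key=lambda p: math.dist(p, q))  # phase 2: verify on full data

pts = [(0.1, 0.1), (0.9, 0.8), (0.4, 0.6), (0.55, 0.45)]
print(va_nearest(pts, (0.5, 0.5)))  # prints (0.55, 0.45)
```

The true nearest neighbor always survives the filter, since its lower bound cannot exceed the smallest upper bound, so only the surviving candidates need to be read at full precision.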

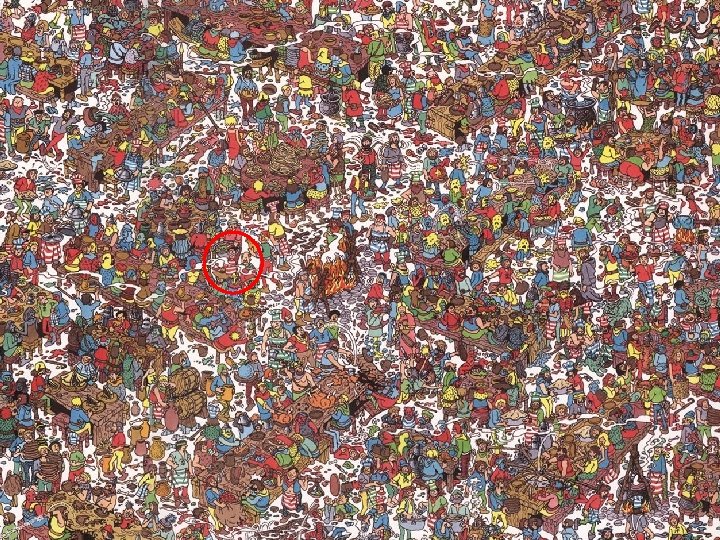

Where’s Waldo ?

Colorization by example R. Irony, D. Cohen-Or, D. Lischinski

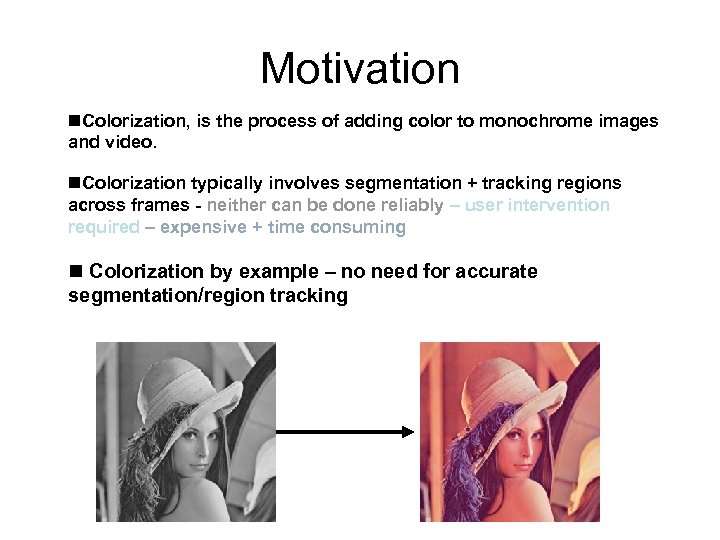

Motivation • Colorization is the process of adding color to monochrome images and video. • Colorization typically involves segmentation + tracking regions across frames – neither can be done reliably – user intervention required – expensive + time consuming • Colorization by example – no need for accurate segmentation/region tracking

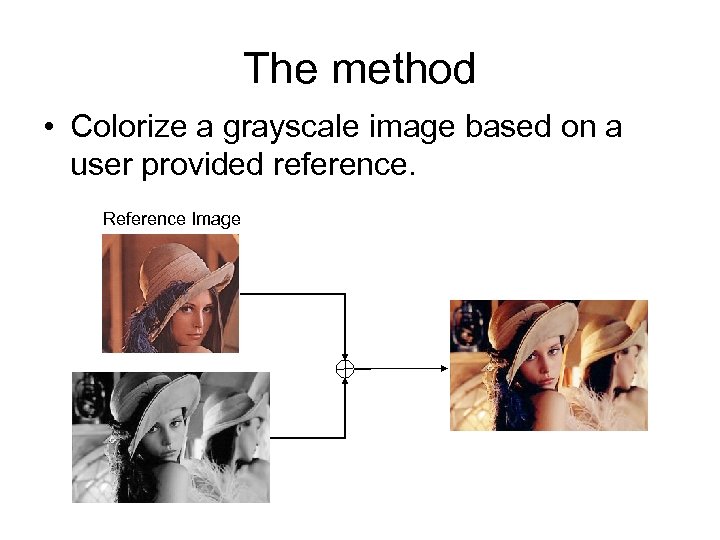

The method • Colorize a grayscale image based on a user provided reference. Reference Image

Naïve Method Transferring color to grayscale images [Welsh, Ashikhmin, Mueller 2002] • Find a good match between a pixel and its neighborhood in a grayscale image and in a reference image.

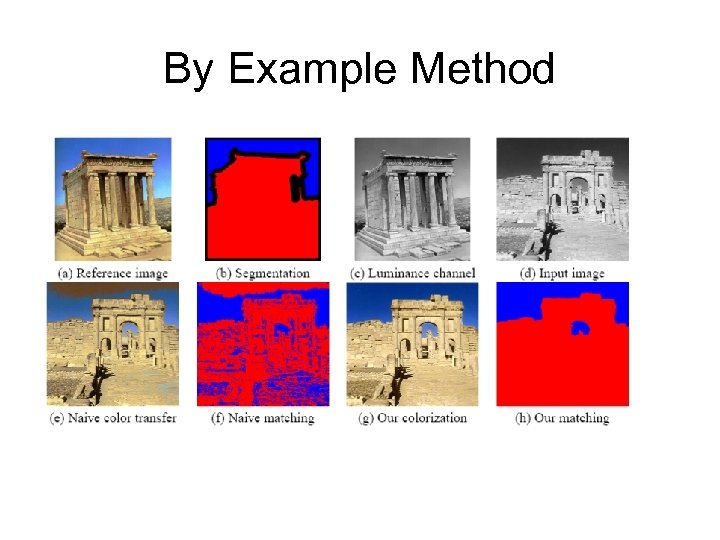

By Example Method

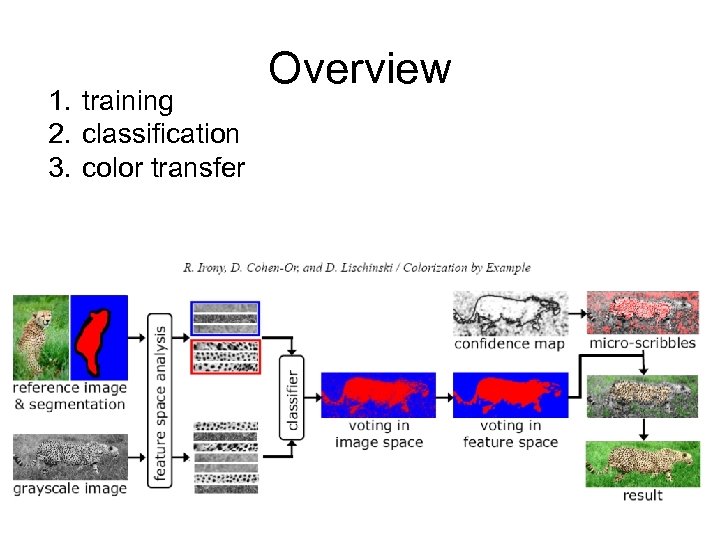

Overview 1. training 2. classification 3. color transfer

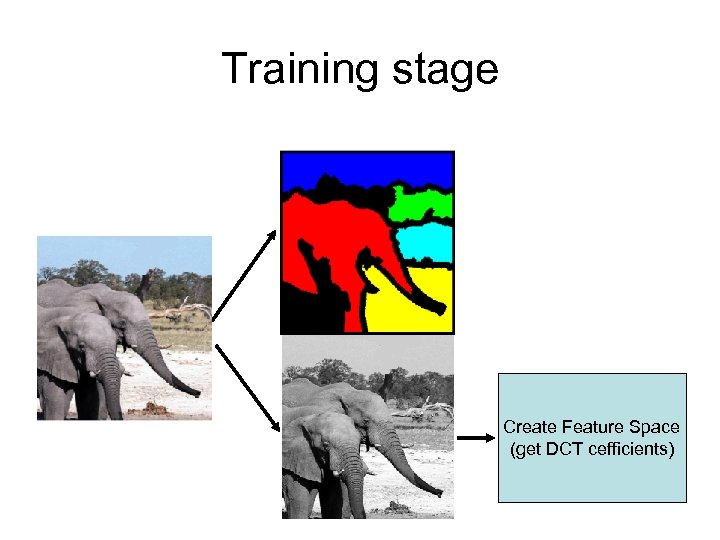

Training stage Input: 1. The luminance channel of the reference image 2. The accompanying partial segmentation Construct a low dimensional feature space in which it is easy to discriminate between pixels belonging to differently labeled regions, based on a small (grayscale) neighborhood around each pixel.

Training stage Create Feature Space (get DCT coefficients)

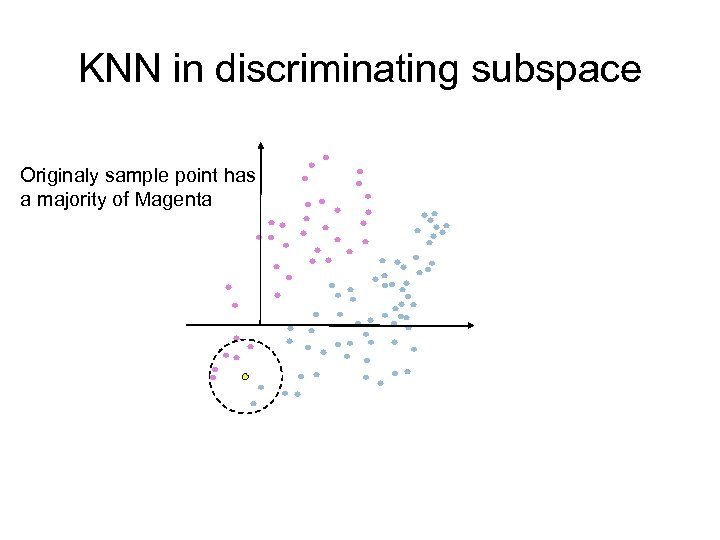

Classification stage For each grayscale image pixel, determine which region should be used as a color reference for this pixel. One way: K-Nearest-Neighbor Rule Better way: KNN in discriminating subspace.

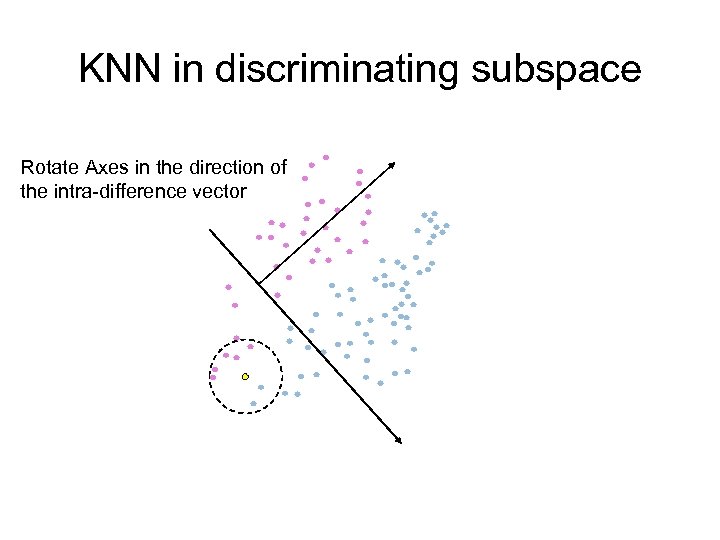

KNN in discriminating subspace Originally, the sample point has a majority of magenta neighbors

KNN in discriminating subspace Rotate Axes in the direction of the intra-difference vector

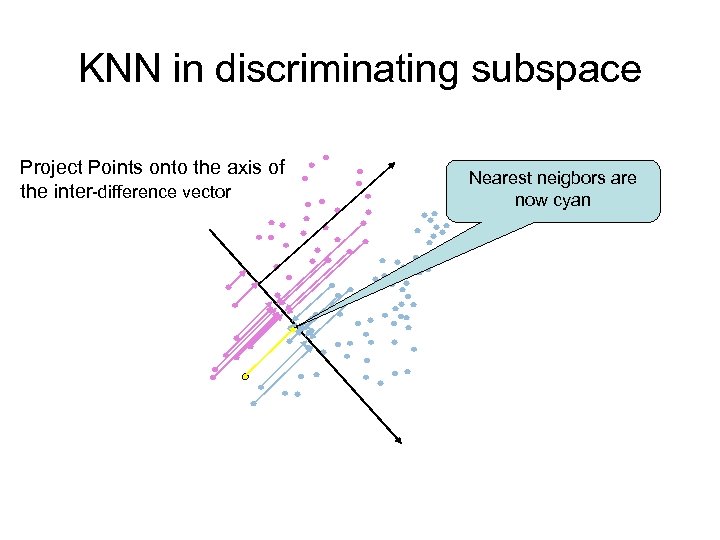

KNN in discriminating subspace Project points onto the axis of the inter-difference vector. Nearest neighbors are now cyan

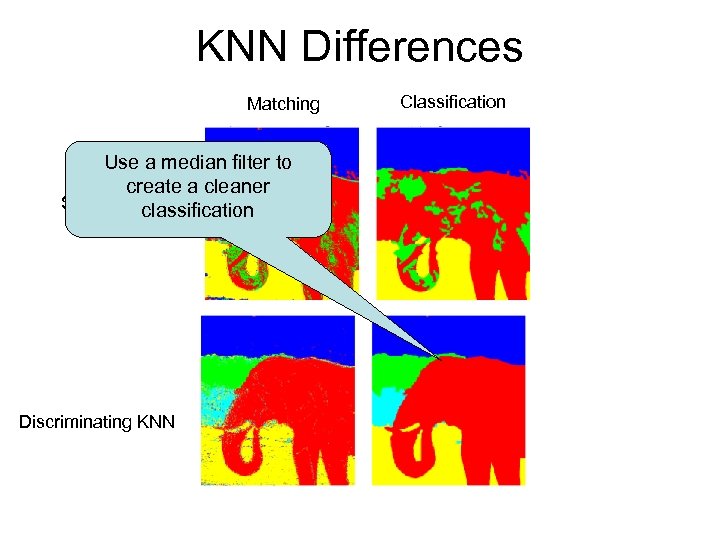

KNN Differences Matching Use a median filter to create a cleaner Simple KNN classification Discriminating KNN Classification

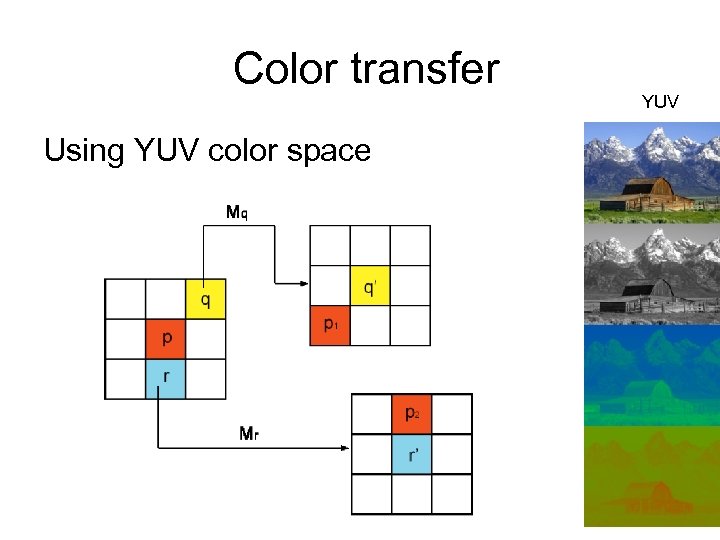

Color transfer Using YUV color space YUV

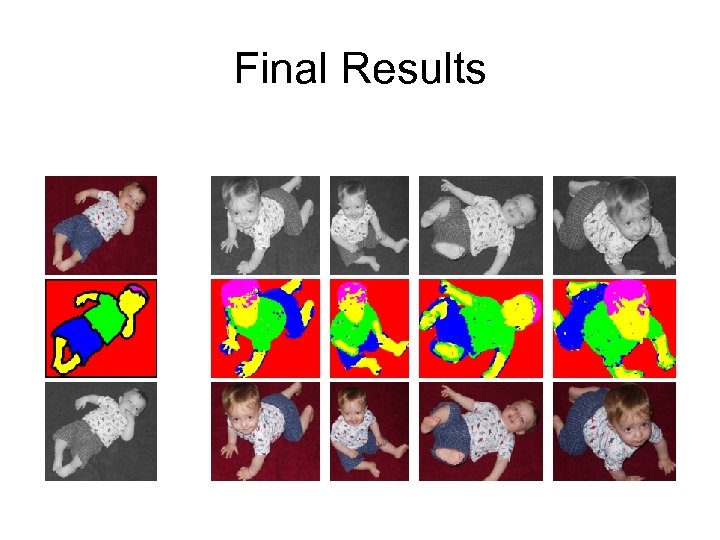

Final Results

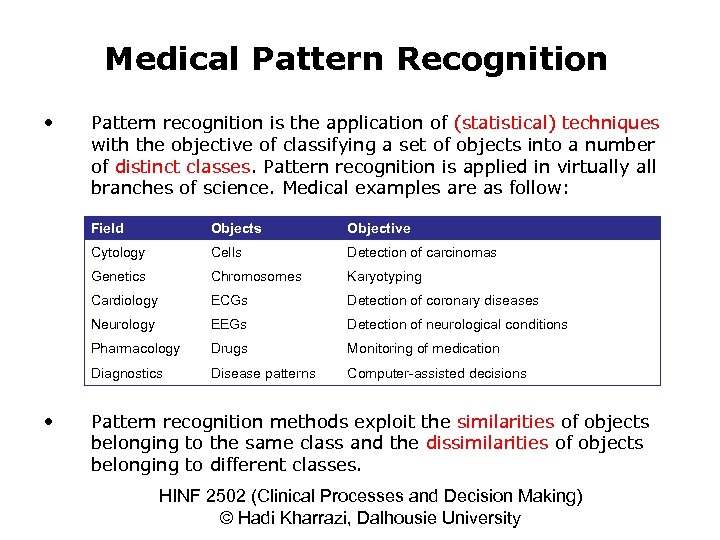

Medical Pattern Recognition • Pattern recognition is the application of (statistical) techniques with the objective of classifying a set of objects into a number of distinct classes. Pattern recognition is applied in virtually all branches of science. Medical examples are as follows:

| Field | Objects | Objective |
| --- | --- | --- |
| Cytology | Cells | Detection of carcinomas |
| Genetics | Chromosomes | Karyotyping |
| Cardiology | ECGs | Detection of coronary diseases |
| Neurology | EEGs | Detection of neurological conditions |
| Pharmacology | Drugs | Monitoring of medication |
| Diagnostics | Disease patterns | Computer-assisted decisions |

• Pattern recognition methods exploit the similarities of objects belonging to the same class and the dissimilarities of objects belonging to different classes. HINF 2502 (Clinical Processes and Decision Making) © Hadi Kharrazi, Dalhousie University

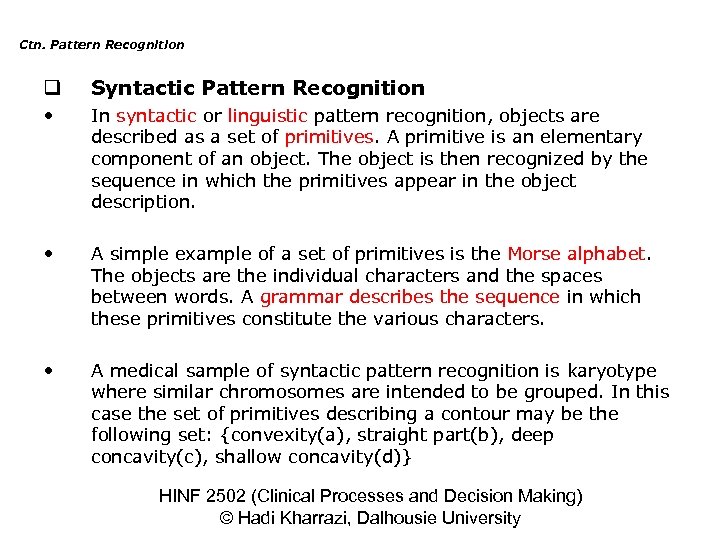

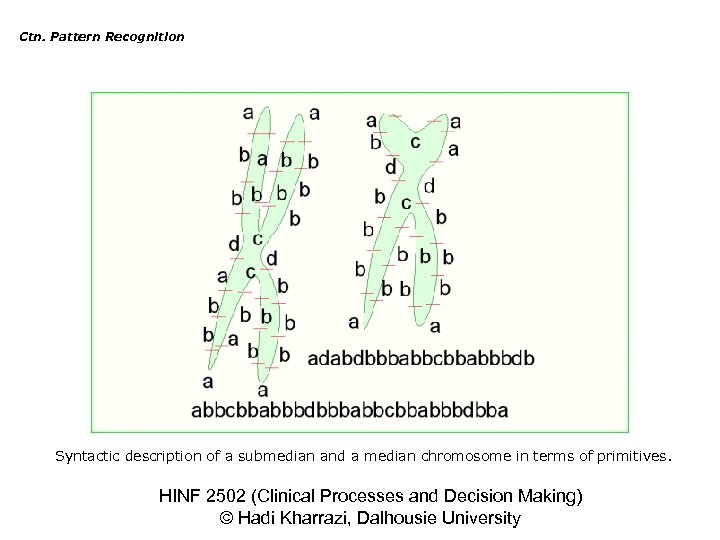

Ctn. Pattern Recognition Syntactic Pattern Recognition • In syntactic or linguistic pattern recognition, objects are described as a set of primitives. A primitive is an elementary component of an object. The object is then recognized by the sequence in which the primitives appear in the object description. • A simple example of a set of primitives is the Morse alphabet. The objects are the individual characters and the spaces between words. A grammar describes the sequence in which these primitives constitute the various characters. • A medical example of syntactic pattern recognition is karyotyping, where similar chromosomes are to be grouped. In this case the set of primitives describing a contour may be the following set: {convexity (a), straight part (b), deep concavity (c), shallow concavity (d)} HINF 2502 (Clinical Processes and Decision Making) © Hadi Kharrazi, Dalhousie University

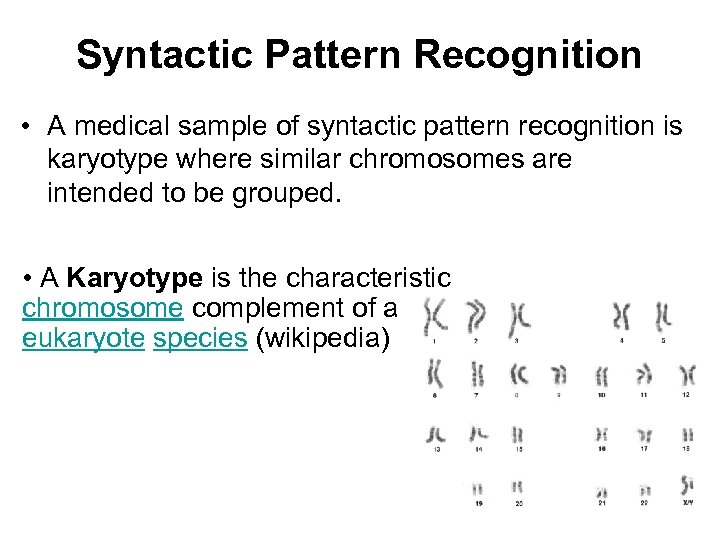

Syntactic Pattern Recognition • A medical example of syntactic pattern recognition is karyotyping, where similar chromosomes are to be grouped. • A karyotype is the characteristic chromosome complement of a eukaryote species (Wikipedia)

Ctn. Pattern Recognition Syntactic description of a submedian and a median chromosome in terms of primitives. HINF 2502 (Clinical Processes and Decision Making) © Hadi Kharrazi, Dalhousie University

Ctn. Pattern Recognition Statistical Pattern Recognition • In statistical pattern recognition, objects are described by numerical features. This method is categorized into supervised and unsupervised techniques. • In supervised techniques the number of distinct classes is known and a set of example objects is available. These objects are labeled with their class membership. The problem is to assign a new unclassified object to one of the classes. • In unsupervised techniques (such as clustering) a collection of observations is given and the problem is to establish whether these observations naturally divide into two or more different classes. HINF 2502 (Clinical Processes and Decision Making) © Hadi Kharrazi, Dalhousie University

Ctn. Pattern Recognition Supervised Pattern Recognition • In supervised pattern recognition, class recognition is based on the differences of the statistical distributions of the features between the various classes. The development of supervised classification rules normally proceeds in two steps: • Learning phase: In this step the classification rule is designed on the basis of class properties as derived from a collection of class-labeled objects called the design (training) set. • Validation phase: In this step another collection of class-labeled objects, called the test set, is classified using the rule from the learning phase. Thus, the proportion of correct classifications obtained by the rule can be calculated. HINF 2502 (Clinical Processes and Decision Making) © Hadi Kharrazi, Dalhousie University

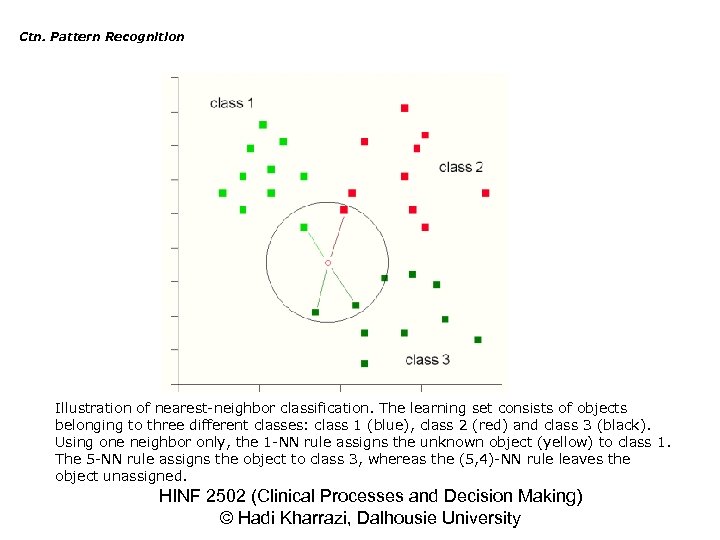

Ctn. Pattern Recognition • 1-Nearest-Neighbor Rule: In the simplest form, to classify an unknown object the nearest object from the learning set is identified. The unknown object is then assigned to the class to which its nearest neighbor belongs. • q-Nearest-Neighbor Rule: Rather than deciding on class membership on the basis of a single nearest neighbor, a quorum of q nearest neighbors is inspected. The class membership of the unknown object is then established on the basis of the majority of the class memberships of these q nearest neighbors. • The problem with NN rules is that they are justifiable only with large learning sets and this increases the computational time. HINF 2502 (Clinical Processes and Decision Making) © Hadi Kharrazi, Dalhousie University

Ctn. Pattern Recognition Illustration of nearest-neighbor classification. The learning set consists of objects belonging to three different classes: class 1 (blue), class 2 (red) and class 3 (black). Using one neighbor only, the 1-NN rule assigns the unknown object (yellow) to class 1. The 5-NN rule assigns the object to class 3, whereas the (5, 4)-NN rule leaves the object unassigned. HINF 2502 (Clinical Processes and Decision Making) © Hadi Kharrazi, Dalhousie University
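The three rules in this illustration can be sketched with one function. It assumes that the (5, 4)-NN rule means "assign a class only if at least 4 of the 5 nearest neighbors agree", which is an interpretation of the slide rather than something it states. The function name `nn_rule` and the toy learning set are made up for illustration.

```python
import math
from collections import Counter

def nn_rule(training, x, q=1, min_votes=1):
    """training: list of (feature_vector, label). Returns a label, or None if unassigned."""
    nearest = sorted(training, key=lambda s: math.dist(s[0], x))[:q]
    label, votes = Counter(lbl for _, lbl in nearest).most_common(1)[0]
    return label if votes >= min_votes else None

# Toy learning set: the closest object is class 1, but class 3 dominates the 5 nearest.
training = [((0.1, 0), 1), ((1, 0), 3), ((0, 1.1), 3), ((1.2, 0), 3), ((0, 1.3), 2), ((5, 5), 1)]
x = (0, 0)
print(nn_rule(training, x, q=1))               # prints 1
print(nn_rule(training, x, q=5))               # prints 3
print(nn_rule(training, x, q=5, min_votes=4))  # prints None
```

Sorting the whole learning set for every query is the expensive part, which is the cost the previous slide warns about.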

Back To Computer Science How do we use Nearest Neighbor for OCR? Classification techniques for Hand-Written Digit Recognition Venkat Raghavan N. S., Saneej B. C., and Karteek Popuri Department of Chemical and Materials Engineering University of Alberta, Canada.

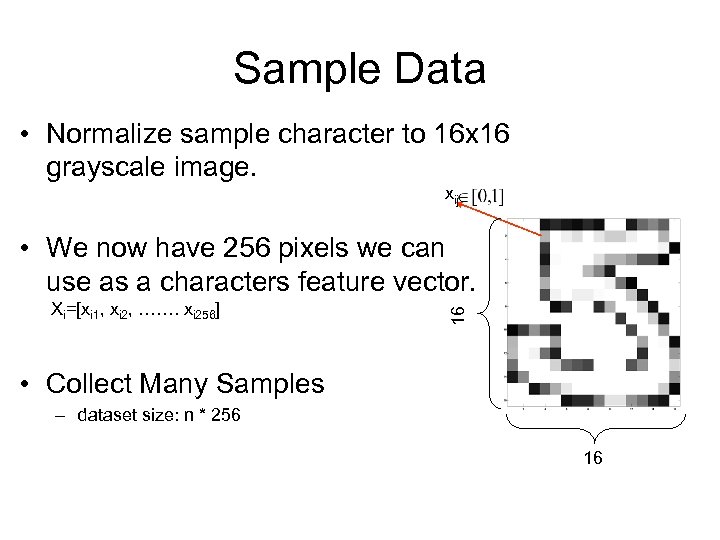

Sample Data • Normalize sample character to 16 x 16 grayscale image: X_i = [x_i1, x_i2, ..., x_i256] • We now have 256 pixels we can use as a character's feature vector. • Collect many samples – dataset size: n * 256 [Figure: 16 x 16 pixel grid with pixel x_ij]

Let's reduce dimensions. How? PCA: Principal Component Analysis (we skipped this lecture)

Principal Components Analysis The Basic Principle: PCA transforms a set of correlated variables into a smaller set of uncorrelated variables called principal components. The Objective: Discovering the “true dimension” of the data. It may be that p-dimensional data can be represented in q < p dimensions without losing much information. Samples can be found at: http://www.cs.mcgill.ca/~sqrt/dimreduction.html

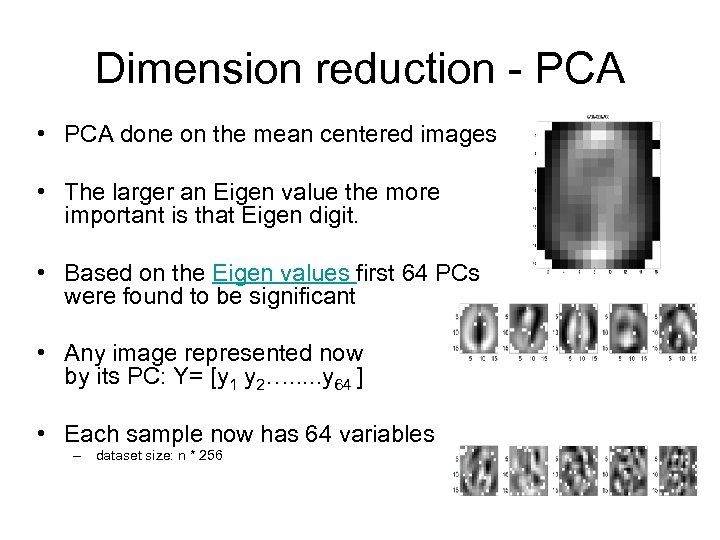

Dimension reduction - PCA • PCA done on the mean-centered images • The larger an eigenvalue, the more important that eigen-digit is. • Based on the eigenvalues, the first 64 PCs were found to be significant • Any image is now represented by its PCs: Y = [y_1, y_2, ..., y_64] • Each sample now has 64 variables – dataset size: n * 64 [Figure: average digit]

Interpreting the PCs as Image Features • Basically, the Eigen vectors are the rotation of the original axes to more meaningful directions. • The PCs are the projection of the data onto each of these new axes. • This is similar to what we did in ‘Colorization by Example’
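As a small illustration of "rotate the axes, then project", the sketch below finds only the first principal component, using power iteration on the covariance matrix in plain Python. This is a simplification chosen to keep the example dependency-free: the digit experiment keeps 64 components, and a real implementation would normally use a linear algebra library. The function name and the toy data are assumptions.

```python
import math

def first_principal_component(samples, iterations=100):
    """Return (mean, direction, scores) for the first principal component."""
    n, d = len(samples), len(samples[0])
    mean = [sum(s[j] for s in samples) / n for j in range(d)]
    centered = [[s[j] - mean[j] for j in range(d)] for s in samples]   # mean-centering
    # Sample covariance matrix (d x d)
    cov = [[sum(c[a] * c[b] for c in centered) / (n - 1) for b in range(d)]
           for a in range(d)]
    v = [1.0 / math.sqrt(d)] * d            # start vector; assumed not orthogonal to the answer
    for _ in range(iterations):             # power iteration converges to the top eigenvector
        w = [sum(cov[a][b] * v[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0:
            break
        v = [x / norm for x in w]
    scores = [sum(c[j] * v[j] for j in range(d)) for c in centered]    # projection onto new axis
    return mean, v, scores

mean, direction, scores = first_principal_component([(0, 0), (1, 2), (2, 4), (3, 6)])
print([round(x, 3) for x in direction])  # prints [0.447, 0.894]
```

The toy points lie on the line y = 2x, so the new axis points along (1, 2) and a single score per sample captures all of the variation.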

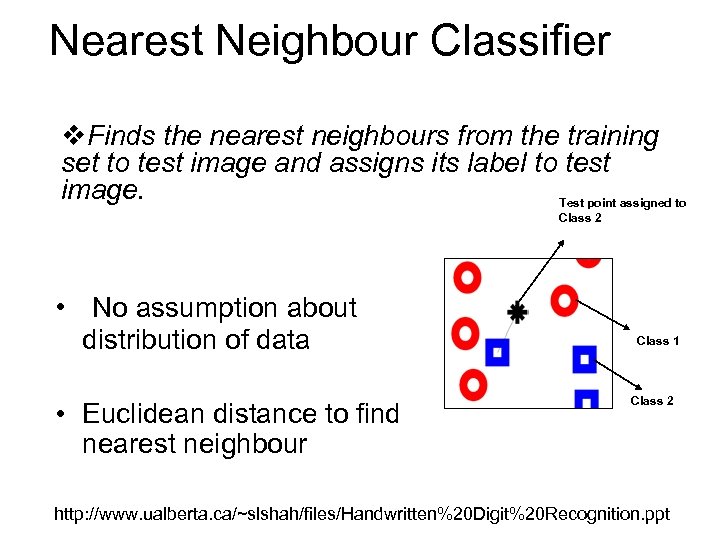

Nearest Neighbour Classifier • Finds the nearest neighbours from the training set to the test image and assigns its label to the test image. • No assumption about distribution of data • Euclidean distance to find nearest neighbour [Figure: test point between Class 1 and Class 2, assigned to Class 2] http://www.ualberta.ca/~slshah/files/Handwritten%20Digit%20Recognition.ppt

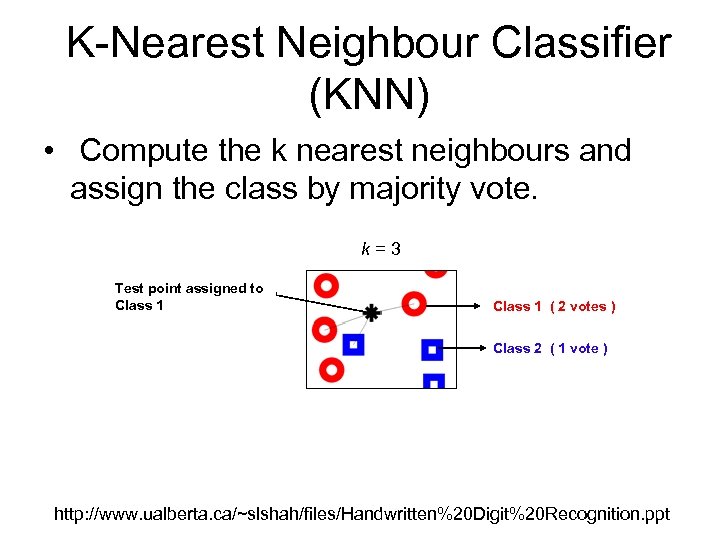

K-Nearest Neighbour Classifier (KNN) • Compute the k nearest neighbours and assign the class by majority vote. [Figure: k=3; test point assigned to Class 1 (2 votes) over Class 2 (1 vote)] http://www.ualberta.ca/~slshah/files/Handwritten%20Digit%20Recognition.ppt

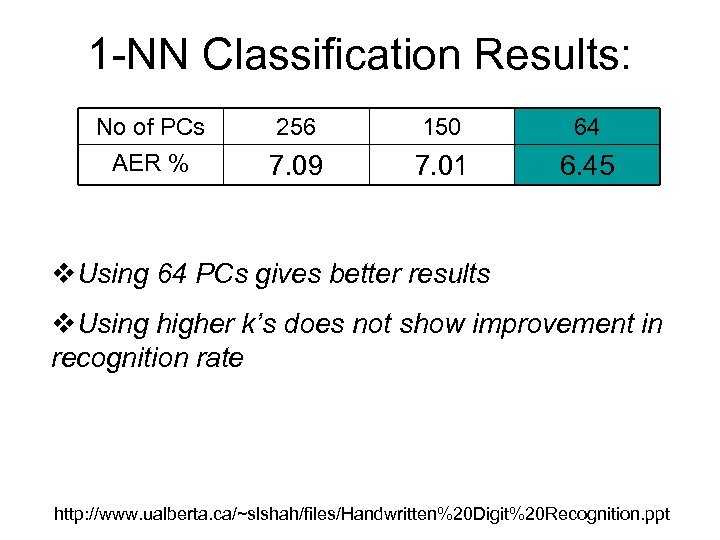

1-NN Classification Results:

| No of PCs | AER % |
| --- | --- |
| 256 | 7.09 |
| 150 | 7.01 |
| 64 | 6.45 |

• Using 64 PCs gives better results • Using higher k’s does not show improvement in recognition rate http://www.ualberta.ca/~slshah/files/Handwritten%20Digit%20Recognition.ppt

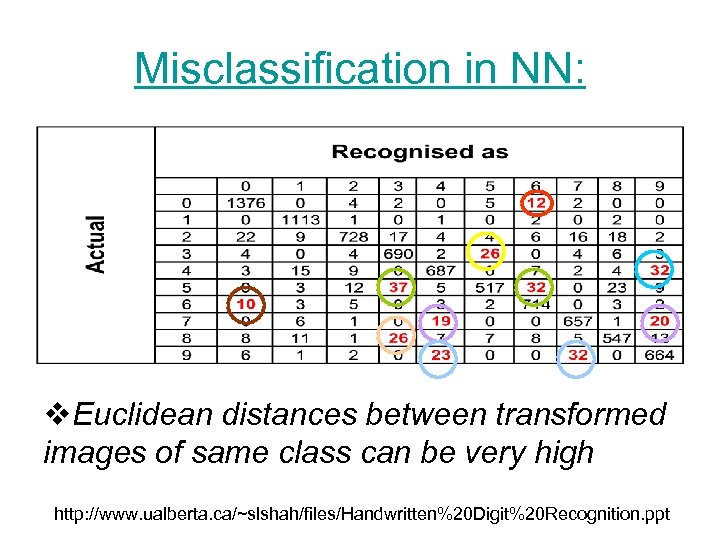

Misclassification in NN: • Euclidean distances between transformed images of same class can be very high http://www.ualberta.ca/~slshah/files/Handwritten%20Digit%20Recognition.ppt

Issues in NN: • Expensive: To determine the nearest neighbour of a test image, must compute the distance to all N training examples • Storage Requirements: Must store all training data http://www.ualberta.ca/~slshah/files/Handwritten%20Digit%20Recognition.ppt
