ce7a82ae90cd018c23ac05310ef3f2bb.ppt

- Number of slides: 19

Natural Language Processing
- What’s the problem?
  - Input?
  - Output?

Example Applications
- Enables great user interfaces!
- Spelling and grammar checkers.
- http://www.askjeeves.com/
- Document understanding on the WWW.
- Spoken language control systems: banking, shopping.
- Classification systems for messages, articles.
- Machine translation tools.

NLP Problem Areas
- Phonology and phonetics: structure of sounds.
- Morphology: structure of words.
- Syntactic interpretation (parsing): create a parse tree of a sentence.
- Semantic interpretation: translate a sentence into the representation language.
- Pragmatic interpretation: take the current situation into account.
- Disambiguation: there may be several interpretations. Choose the most probable.

Some Difficult Examples
- From the newspapers:
  - Squad helps dog bite victim.
  - Helicopter powered by human flies.
  - Levy won’t hurt the poor.
  - Once-sagging cloth diaper industry saved by full dumps.
- Ambiguities:
  - Lexical: meanings of ‘hot’, ‘back’.
  - Syntactic: I heard the music in my room.
  - Referential: The cat ate the mouse. It was ugly.

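Parsing
- Context-free grammars:
  - EXPR -> NUMBER
  - EXPR -> VARIABLE
  - EXPR -> (EXPR + EXPR)
  - EXPR -> (EXPR * EXPR)
- ((2 + X) * (17 + Y)) is in the grammar: every sum or product carries its own pair of parentheses.
- (2 + (X)) is not: no rule wraps a single expression in parentheses.
- Why do we call them context-free?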
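The expression grammar on this slide is small enough to check by hand, but a recognizer makes the membership question concrete. The following is a minimal sketch, assuming the parentheses in the rules are literal terminals, that NUMBER is a run of digits, and that VARIABLE is an identifier; the names `tokenize`, `parse_expr`, and `in_grammar` are illustrative and not from the slides.

```python
import re

# One token: a NUMBER, a VARIABLE, or one of the terminals ( ) + *
TOKEN = re.compile(r"\s*(\d+|[A-Za-z]\w*|[()+*])")


def tokenize(text):
    """Split text into tokens; raise ValueError on an unknown character."""
    text = text.rstrip()
    tokens, pos = [], 0
    while pos < len(text):
        match = TOKEN.match(text, pos)
        if match is None:
            raise ValueError(f"unexpected character at position {pos}")
        tokens.append(match.group(1))
        pos = match.end()
    return tokens


def parse_expr(tokens, i):
    """Return the index just past one EXPR starting at i, or None."""
    if i >= len(tokens):
        return None
    tok = tokens[i]
    if tok.isdigit() or tok[0].isalpha():      # EXPR -> NUMBER | VARIABLE
        return i + 1
    if tok == "(":                             # EXPR -> ( EXPR op EXPR )
        j = parse_expr(tokens, i + 1)
        if j is None or j >= len(tokens) or tokens[j] not in ("+", "*"):
            return None
        k = parse_expr(tokens, j + 1)
        if k is None or k >= len(tokens) or tokens[k] != ")":
            return None
        return k + 1
    return None


def in_grammar(text):
    """True if the whole string is derivable from EXPR."""
    try:
        tokens = tokenize(text)
    except ValueError:
        return False
    return parse_expr(tokens, 0) == len(tokens)
```

Under these assumptions `in_grammar("((2 + X) * (17 + Y))")` holds, while `in_grammar("(2 + (X))")` does not, because no rule derives a parenthesized single expression. Each rule rewrites EXPR the same way regardless of what surrounds it, which is what `parse_expr` relies on when it recurses without looking at neighboring tokens.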

Using CFGs for Parsing
- Can natural language syntax be captured using a context-free grammar?
  - Yes, no, sort of, for the most part, maybe.
- Words:
  - Nouns, adjectives, verbs, adverbs.
  - Determiners: the, a, this, that
  - Quantifiers: all, some, none
  - Prepositions: in, onto, by, through
  - Connectives: and, or, but, while.
- Words combine into phrases: NP (noun phrase), VP (verb phrase)

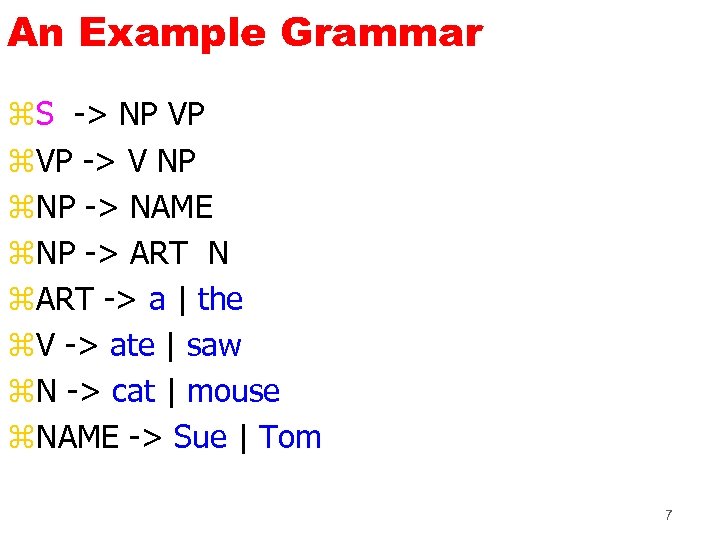

An Example Grammar
- S -> NP VP
- VP -> V NP
- NP -> NAME
- NP -> ART N
- ART -> a | the
- V -> ate | saw
- N -> cat | mouse
- NAME -> Sue | Tom

Example Parse
- The mouse saw Sue.
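To make the parse concrete, here is a minimal top-down parser for the example grammar (S -> NP VP, VP -> V NP, NP -> NAME | ART N, plus the four lexical rules). It is a sketch, not a general parsing algorithm: it assumes the grammar has no left recursion, matches words case-insensitively, and strips a final period. The function names and the tuple-based tree format are illustrative choices.

```python
GRAMMAR = {
    "S": [["NP", "VP"]],
    "VP": [["V", "NP"]],
    "NP": [["NAME"], ["ART", "N"]],
}

LEXICON = {
    "ART": {"a", "the"},
    "V": {"ate", "saw"},
    "N": {"cat", "mouse"},
    "NAME": {"sue", "tom"},
}


def parse_sequence(symbols, words, start):
    """Yield (children, end) for each way the symbols cover words[start:end]."""
    if not symbols:
        yield [], start
        return
    for tree, mid in parse(symbols[0], words, start):
        for rest, end in parse_sequence(symbols[1:], words, mid):
            yield [tree] + rest, end


def parse(symbol, words, start):
    """Yield (tree, end) for each way `symbol` covers words[start:end]."""
    if symbol in LEXICON:  # word category: consume exactly one word
        if start < len(words) and words[start].lower() in LEXICON[symbol]:
            yield (symbol, words[start]), start + 1
        return
    for rhs in GRAMMAR[symbol]:  # phrase: try each rule in turn
        for children, end in parse_sequence(rhs, words, start):
            yield (symbol, *children), end


def parse_sentence(sentence):
    """Return the first parse tree that covers the whole sentence, or None."""
    words = sentence.rstrip(".").split()
    for tree, end in parse("S", words, 0):
        if end == len(words):
            return tree
    return None
```

Calling `parse_sentence("The mouse saw Sue.")` returns a nested tuple with S at the root, an NP built from ART and N, and a VP built from V and an NP containing the NAME. A string such as "The Sue saw." yields `None`, since no NP rule accepts an article followed by a name.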

Try at Home
- The Sue saw.

Also works...
- The student like exam
- I is a man
- A girls like pizza
- Sue sighed the pizza.
- The basic word categories are not capturing everything…

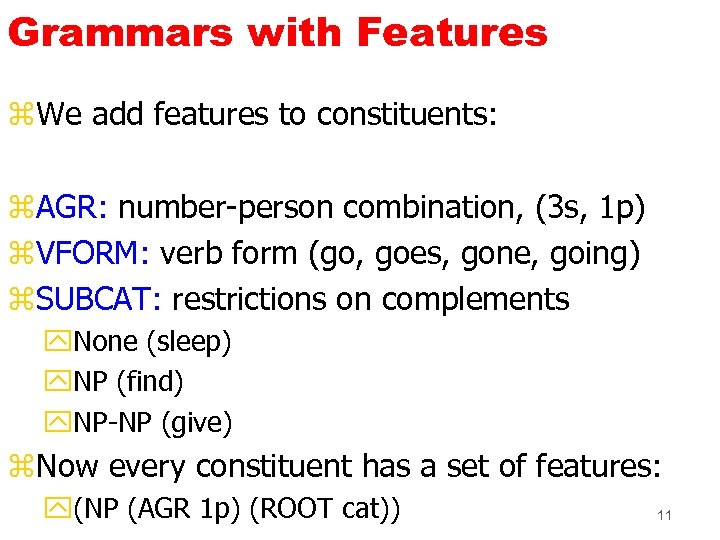

Grammars with Features
- We add features to constituents:
- AGR: number-person combination (3s, 1p)
- VFORM: verb form (go, goes, gone, going)
- SUBCAT: restrictions on complements
  - None (sleep)
  - NP (find)
  - NP-NP (give)
- Now every constituent has a set of features:
  - (NP (AGR 1p) (ROOT cat))

Grammar Rules with Features
- (S (AGR ?a)) -> (NP (AGR ?a)) (VP (AGR ?a))
- (VP (AGR ?a) (VFORM ?vf)) -> (V (AGR ?a) (VFORM ?vf) (SUBCAT none))
- dog: (N (AGR 3s) (ROOT dog))
- dogs: (N (AGR 3p) (ROOT dog))
- barks: (V (AGR 3s) (VFORM pres) (SUBCAT none) (ROOT bark))
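The effect of the shared variable ?a in the S rule can be sketched in a few lines: the variable is bound by the first constituent and must match the second. The code below is an illustration under stated assumptions, not the book's algorithm. Feature structures are flat Python dicts, a bare noun stands in for the NP (the slide gives no NP rule with features), and the helper names `unify` and `sentence_ok` are hypothetical.

```python
# Lexical entries from the slide, written as flat feature dictionaries.
LEXICON = {
    "dog":   {"CAT": "N", "AGR": "3s", "ROOT": "dog"},
    "dogs":  {"CAT": "N", "AGR": "3p", "ROOT": "dog"},
    "barks": {"CAT": "V", "AGR": "3s", "VFORM": "pres",
              "SUBCAT": "none", "ROOT": "bark"},
}

# (S (AGR ?a)) -> (NP (AGR ?a)) (VP (AGR ?a)): both parts share ?a.
S_RULE = ({"AGR": "?a"}, {"AGR": "?a"})


def unify(pattern, features, bindings):
    """Match a rule pattern against a constituent's features.

    Values starting with '?' are variables. Returns the extended
    bindings on success, or None if a feature is missing or clashes.
    """
    new_bindings = dict(bindings)
    for name, wanted in pattern.items():
        actual = features.get(name)
        if actual is None:
            return None
        if wanted.startswith("?"):
            # Bind the variable on first use; later uses must agree.
            if new_bindings.setdefault(wanted, actual) != actual:
                return None
        elif wanted != actual:
            return None
    return new_bindings


def sentence_ok(noun, verb):
    """Check noun + intransitive verb against the S rule (simplified)."""
    np = LEXICON[noun]   # assumption: a bare noun acts as the NP
    vp = LEXICON[verb]   # VP -> V passes AGR and VFORM up unchanged
    if vp.get("SUBCAT") != "none":
        return False
    bindings = unify(S_RULE[0], np, {})
    return bindings is not None and unify(S_RULE[1], vp, bindings) is not None
```

With these entries `sentence_ok("dog", "barks")` succeeds because both constituents carry AGR 3s, while `sentence_ok("dogs", "barks")` fails: ?a is bound to 3p by the noun and cannot match the verb's 3s.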

Semantic Interpretation
- Our goal: to translate sentences into a logical form.
- But: sentences convey more than true/false:
  - It will rain in Seattle tomorrow.
  - Will it rain in Seattle tomorrow?
- A sentence can be analyzed by:
  - propositional content, and
  - speech act: tell, ask, request, deny, suggest

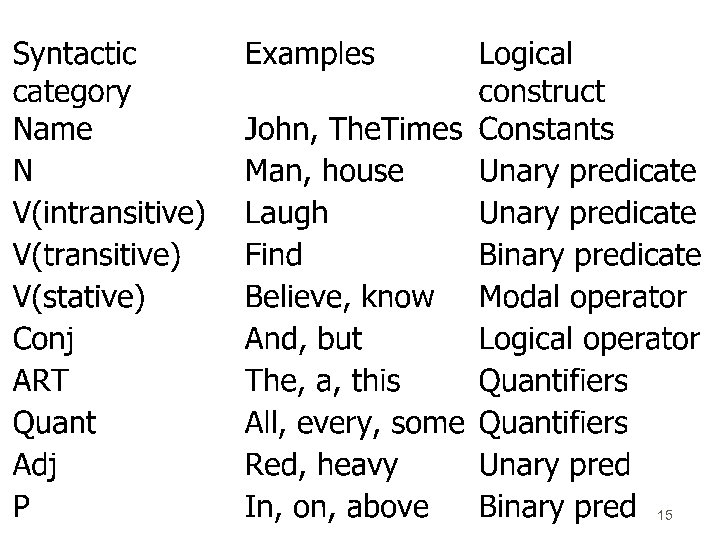

Propositional Content
- We develop a logic-like language for representing propositional content:
  - Word-sense ambiguity
  - Scope ambiguity
- Proper names --> objects (John, Alon)
- Nouns --> unary predicates (woman, house)
- Verbs -->
  - transitive: binary predicates (find, go)
  - intransitive: unary predicates (laugh, cry)
- Quantifiers: most, some


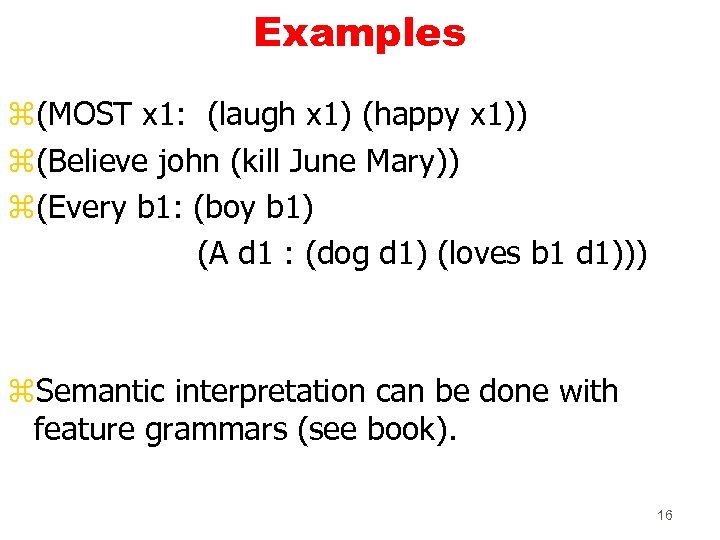

Examples
- (MOST x1: (laugh x1) (happy x1))
- (Believe john (kill June Mary))
- (Every b1: (boy b1) (A d1: (dog d1) (loves b1 d1)))
- Semantic interpretation can be done with feature grammars (see book).
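Quantified forms of the shape (Q x: (restriction x) (body x)) can be evaluated against a small model to see what they claim. The sketch below is illustrative only: the individuals and facts are invented, MOST is read as "more than half of the restricted set" (an assumption, since the slides do not define it), and the function names are hypothetical.

```python
def every(domain, restriction, body):
    """(Every x: restriction body): body holds for all restricted x."""
    return all(body(x) for x in domain if restriction(x))


def a(domain, restriction, body):
    """(A x: restriction body): body holds for at least one restricted x."""
    return any(body(x) for x in domain if restriction(x))


def most(domain, restriction, body):
    """(MOST x: restriction body), read here as more than half."""
    restricted = [x for x in domain if restriction(x)]
    return 2 * sum(1 for x in restricted if body(x)) > len(restricted)


# A made-up model: two boys, two dogs, and who loves whom.
DOMAIN = {"tom", "al", "rex", "fido"}
BOY = {"tom", "al"}
DOG = {"rex", "fido"}
LOVES = {("tom", "rex"), ("al", "fido")}
LAUGH = {"tom", "al", "rex"}
HAPPY = {"tom", "rex"}


def every_boy_loves_a_dog(loves):
    # (Every b1: (boy b1) (A d1: (dog d1) (loves b1 d1)))
    return every(
        DOMAIN,
        lambda b: b in BOY,
        lambda b: a(DOMAIN, lambda d: d in DOG, lambda d: (b, d) in loves),
    )


def most_laughers_are_happy():
    # (MOST x1: (laugh x1) (happy x1))
    return most(DOMAIN, lambda x: x in LAUGH, lambda x: x in HAPPY)
```

In this model `every_boy_loves_a_dog(LOVES)` is true because each boy loves some dog, possibly a different one per boy. That is the reading in which Every takes scope over A; swapping the nesting would require a single dog loved by all boys, which is the scope ambiguity mentioned earlier.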

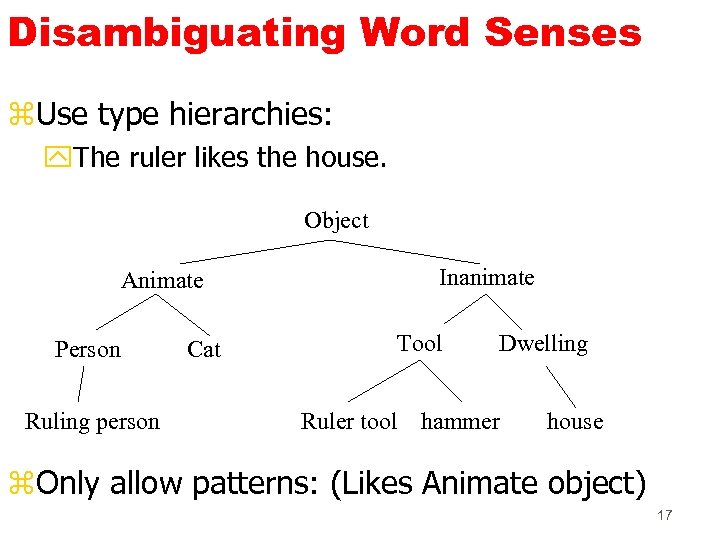

Disambiguating Word Senses
- Use type hierarchies:
  - The ruler likes the house.
- Type hierarchy:
  - Object
    - Animate
      - Person
        - Ruling person
      - Cat
    - Inanimate
      - Tool
        - Ruler tool
        - Hammer
      - Dwelling
        - House
- Only allow patterns: (Likes Animate Object)
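The filtering step can be sketched as a lookup in the type hierarchy: a word sense survives only if its type is a descendant of the type the verb pattern requires. The code below is a minimal illustration. The parent links follow one plausible reading of the slide's hierarchy diagram, and the dictionaries and the names `is_a` and `disambiguate` are assumptions made for this example.

```python
# Child -> parent links (one reading of the slide's type hierarchy).
PARENT = {
    "Animate": "Object",
    "Inanimate": "Object",
    "Person": "Animate",
    "Cat": "Animate",
    "Ruling person": "Person",
    "Tool": "Inanimate",
    "Dwelling": "Inanimate",
    "Ruler tool": "Tool",
    "Hammer": "Tool",
    "House": "Dwelling",
}

# Candidate senses for each word, named by their type in the hierarchy.
SENSES = {
    "ruler": ["Ruling person", "Ruler tool"],
    "house": ["House"],
}

# Allowed argument types per verb: (Likes Animate Object).
PATTERNS = {"likes": ("Animate", "Object")}


def is_a(type_name, ancestor):
    """True if type_name equals ancestor or lies below it in the hierarchy."""
    while type_name is not None:
        if type_name == ancestor:
            return True
        type_name = PARENT.get(type_name)
    return False


def disambiguate(subject, verb, obj):
    """Return the (subject sense, object sense) pairs the pattern allows."""
    subject_type, object_type = PATTERNS[verb]
    return [
        (s, o)
        for s in SENSES[subject] if is_a(s, subject_type)
        for o in SENSES[obj] if is_a(o, object_type)
    ]
```

For "The ruler likes the house", `disambiguate("ruler", "likes", "house")` keeps only the pair with the ruling-person sense, because the measuring tool is Inanimate and fails the Animate requirement on the subject.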

Speech Acts
- What do you mean when you say:
  - Do you know the time?

| Context | Speaker knows time | Speaker doesn’t know time |
|---|---|---|
| Speaker believes hearer knows time | request | request |
| Speaker believes hearer doesn’t know | offer | wasting time |
| Speaker doesn’t know if hearer knows time | yes-no question or conditional offer | yes-no question or request |

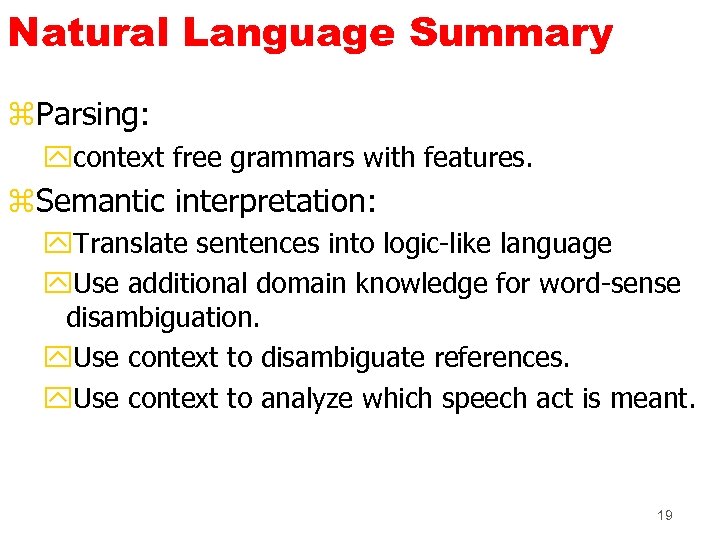

Natural Language Summary
- Parsing:
  - Context-free grammars with features.
- Semantic interpretation:
  - Translate sentences into a logic-like language.
  - Use additional domain knowledge for word-sense disambiguation.
  - Use context to disambiguate references.
  - Use context to analyze which speech act is meant.
