6cb19247613c7ea71cd65e68388abcce.ppt

- Number of slides: 39

Natural Language Processing in Augmentative and Alternative Communication
Yael Netzer

Outline
• Natural Language Processing
• Augmentative and Alternative Communication
• My work: generation of messages
  – What does the process look like?
  – What is needed?
• NLP in AAC
  – Word prediction
  – Message generation
  – IR methods

Natural Language Processing
Applications and models that use linguistic knowledge, or that provide it (POS taggers, parsers, etc.)
• Language applications:
  – Machine translation
  – Text summarization
  – Information retrieval/extraction
  – Human-computer interfaces

Alternative and Augmentative Communication
• AAC users
  – Congenital diseases, e.g. cerebral palsy
  – Progressive diseases, e.g. ALS (amyotrophic lateral sclerosis, Lou Gehrig's disease)
  – Trauma, e.g. head injury
• Cognitive disabilities vs. physical disabilities (each requires different methods and assumptions).
• Slow rate of conversation
  – Speech rate: 150-200 wpm; skilled typist: 60 wpm
  – Speech prosthesis users: 10-15 wpm
• Each ‘keystroke’ may consume a lot of energy.
• Trade-off between conversation rate and cohesion of utterances.

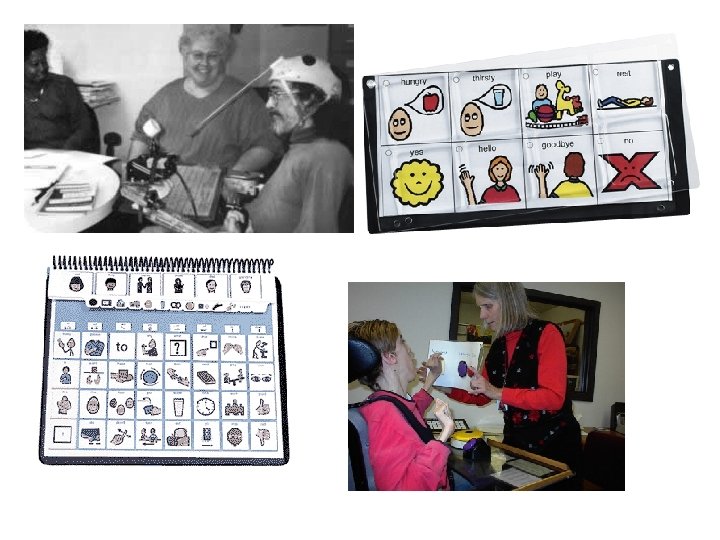

AAC Techniques
• Simple pointing on boards and letter charts
• Portable keyboard devices
• Computer-based systems using single-switch access for severely impaired subjects.
• Symbols or letters
  – Various symbol systems (Blissymbolics) and sets (PCS).
• Pre-stored phrases accessible via grid or iconic buttons.

AAC and NLP
• Common issues:
  – Text generation
  – Speech recognition
  – Text-to-speech synthesis
  – Information retrieval
• 3 workshops, 1 special issue of a journal (Natural Language Engineering).

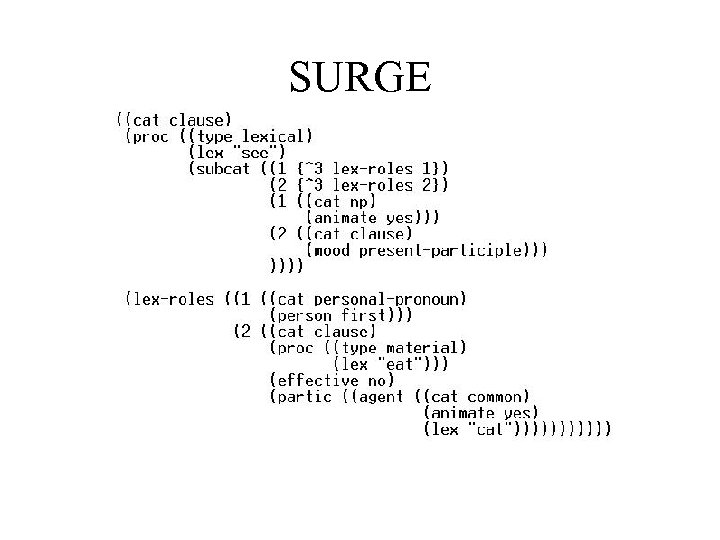

Our framework
• Natural language generation
  – Content planning
  – Surface realization
    • Lexical choice
    • Syntactic realization
    • Morphological processing
• FUF/SURGE, HUGG
• Lexicon (Jing et al.)

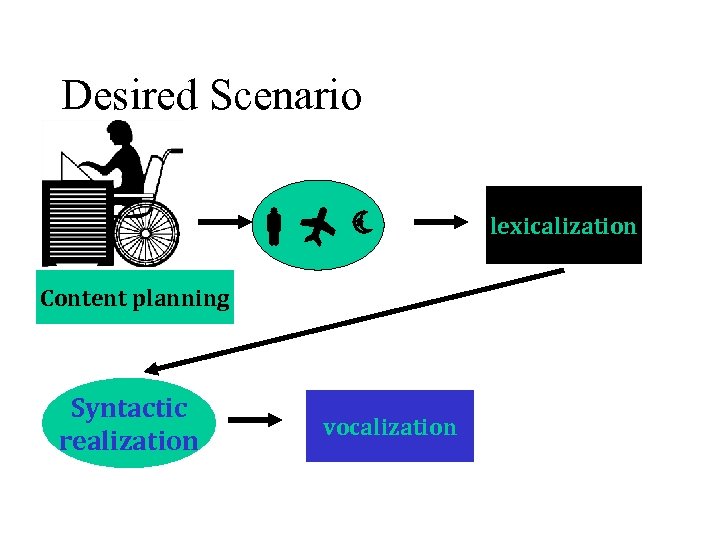

Desired Scenario
(Diagram labels: content planning, syntactic realization, vocalization, lexicalization)

Examples
• ME / TO SEE / CAT / TO EAT
  – I saw the cat eating.
• CAT / TO EAT / TO SEE / ME
  – The cat ate and I saw it.
  – The cat that ate saw me.

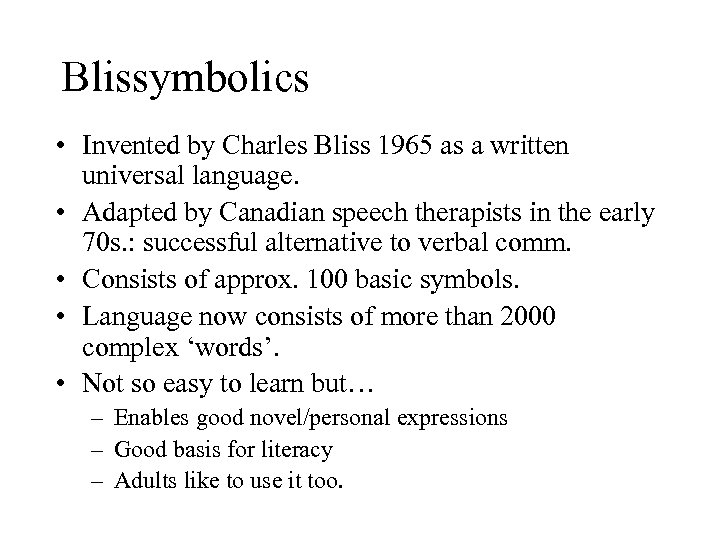

Blissymbolics
• Invented by Charles Bliss (1965) as a written universal language.
• Adapted by Canadian speech therapists in the early 1970s: a successful alternative to verbal communication.
• Consists of approx. 100 basic symbols.
• The language now consists of more than 2000 complex ‘words’.
• Not so easy to learn, but…
  – Enables good novel/personal expressions
  – Good basis for literacy
  – Adults like to use it too.

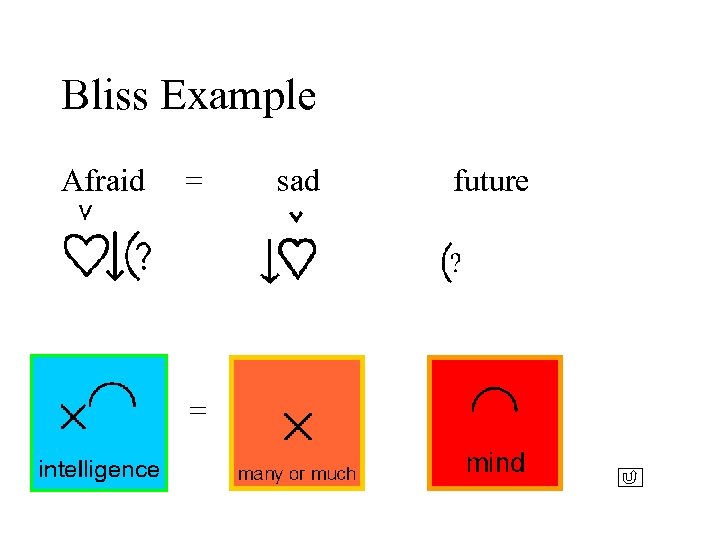

Bliss Example
Afraid = sad + future

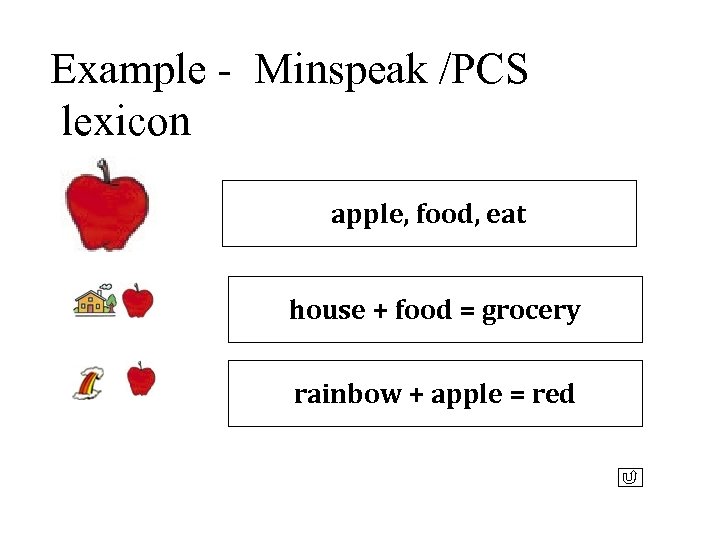

Example - Minspeak/PCS lexicon
apple, food, eat
house + food = grocery
rainbow + apple = red
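
A lexicon of this kind can be sketched as a lookup table from icon sequences to candidate words. This is only an illustration, not Minspeak's actual encoding: the names `ICON_LEXICON` and `decode` are hypothetical, and the table assumes that the "food" in "house + food" is the apple icon read in its food sense.

```python
# Hypothetical icon-sequence lexicon; the entries mirror the slide's examples.
# Assumption: "house + food" is entered as the house icon followed by the
# apple icon, with the apple icon read in its "food" sense.
ICON_LEXICON = {
    ("apple",): ["apple", "food", "eat"],
    ("house", "apple"): ["grocery"],
    ("rainbow", "apple"): ["red"],
}


def decode(icons):
    """Return the candidate words for an icon sequence, or [] if unknown."""
    return ICON_LEXICON.get(tuple(icons), [])


print(decode(["apple"]))
print(decode(["rainbow", "apple"]))
print(decode(["house"]))
```

A single icon stays ambiguous (several candidate words), while a two-icon sequence narrows the meaning to one word.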

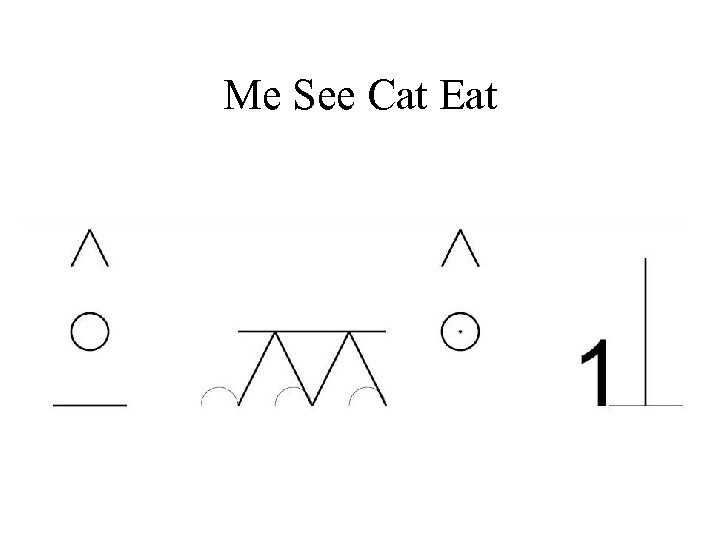

Me See Cat Eat

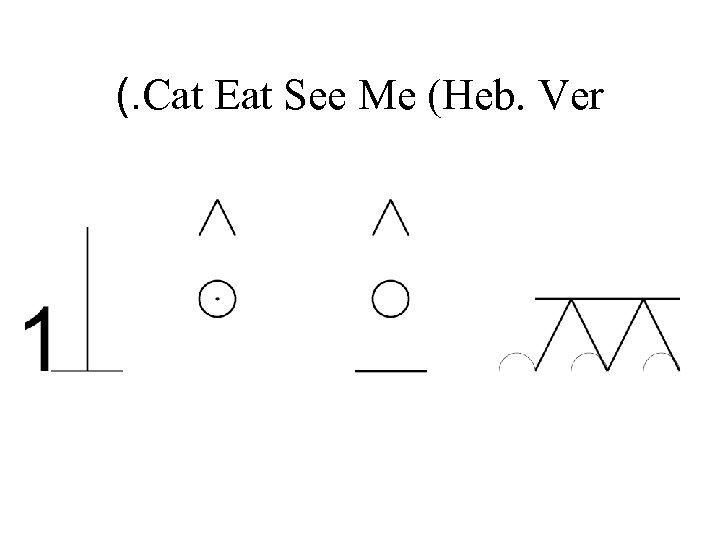

Cat Eat See Me (Heb. Ver.)

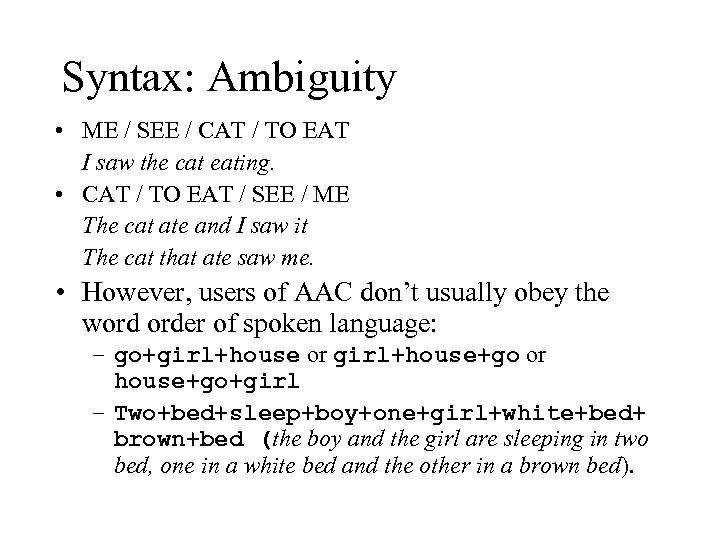

Syntax: Ambiguity
• ME / SEE / CAT / TO EAT
  – I saw the cat eating.
• CAT / TO EAT / SEE / ME
  – The cat ate and I saw it.
  – The cat that ate saw me.
• However, AAC users don't usually follow the word order of spoken language:
  – go+girl+house or girl+house+go or house+go+girl
  – Two+bed+sleep+boy+one+girl+white+bed+brown+bed (the boy and the girl are sleeping in two beds, one in a white bed and the other in a brown bed).

Pragmatics
• Where the situation is taking place
• Who the hearer is
  – Good morning vs. Hi
  – Open the window vs. Can you please open the window?
• Gestures (facial, body)

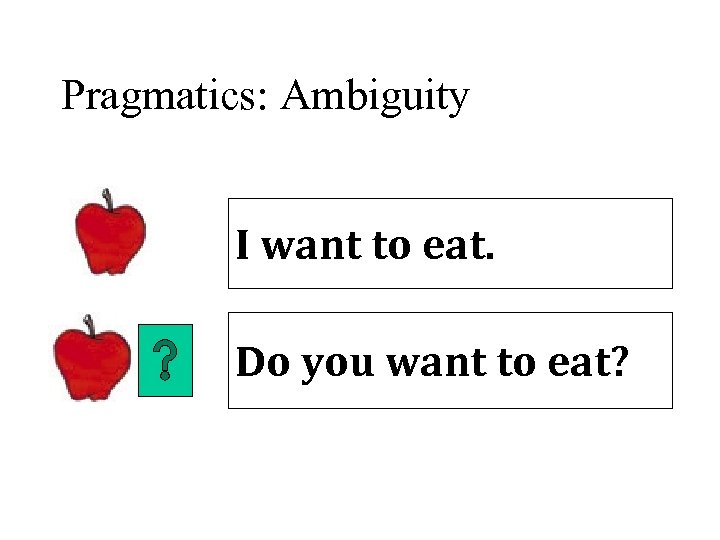

Pragmatics: Ambiguity
I want to eat. / Do you want to eat?

Contextual Resources
• The context of what is being said: what was already said before, referential expressions.
• In a restaurant you can talk about “the menu”.
• In front of a computer, “the menu” is a set of commands.

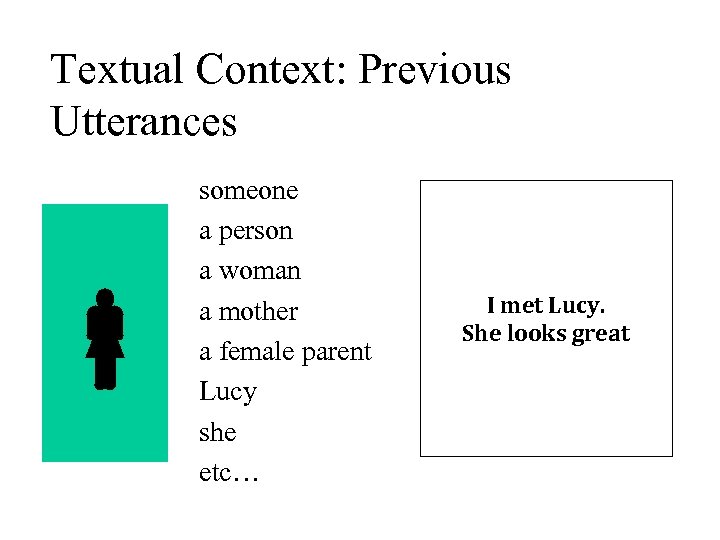

Textual Context: Previous Utterances
someone, a person, a woman, a mother, a female parent, Lucy, she, etc.
I met Lucy. She looks great.
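
The "I met Lucy. She looks great." example can be read as a rule that depends on the previous utterances: use the name on first mention and a pronoun afterwards. The snippet below is a minimal sketch of that single rule under that assumption; the function `refer` and the entity record are hypothetical, and a real system would choose among many more forms (a person, a woman, a mother, ...).

```python
def refer(entity, mentioned):
    """Return the name on first mention and the pronoun on later mentions."""
    if entity["name"] in mentioned:
        return entity["pronoun"]
    mentioned.add(entity["name"])
    return entity["name"]


lucy = {"name": "Lucy", "pronoun": "she"}  # illustrative entity record
mentioned = set()  # names already introduced in the conversation

first = f"I met {refer(lucy, mentioned)}."
second = f"{refer(lucy, mentioned).capitalize()} looks great."
print(first, second)
```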

Generating from Symbols: Issues
• Syntactic ambiguity
• Contextual ambiguity
• No strict rules for the use of symbols
  – Individual codes, conventions, abbreviations.
• Textual: how one word affects the choice of another, word ordering, fluency.
• Practical: enhancing communication rate without limiting expressive ability
  – (efficient keyboard setup, word prediction, structure prediction).

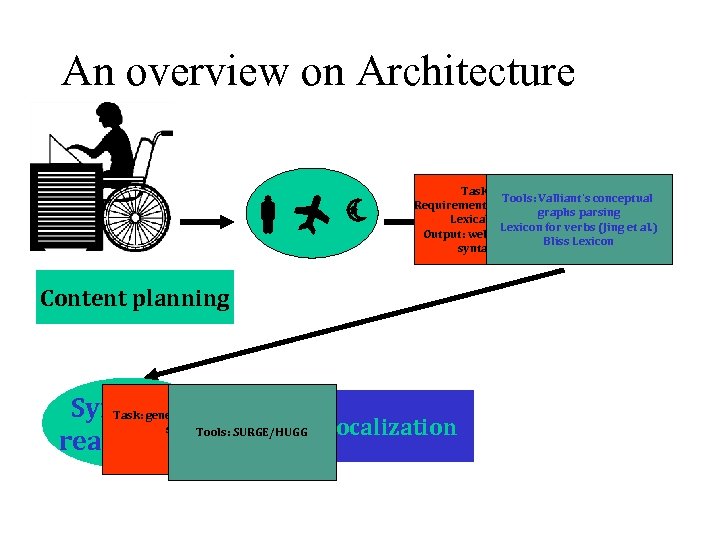

An Overview of the Architecture
• Parsing
  – Tools: Vaillant's conceptual graphs parsing
  – Requirements: world knowledge, lexical information
  – Lexicon for verbs (Jing et al.), Bliss lexicon
  – Output: well-formed input for the syntactic realizer
• Generating well-formed sentences
  – Tools: SURGE/HUGG
• Diagram labels: content planning, lexicalization, syntactic realization, vocalization

Lexicon
• Mapping concepts (symbols) to words
• Compositional vs. non-compositional
• Organization of symbols for efficient retrieval
  – (POS, semantic connections)
• Available lexical knowledge
  – Syntactic structure, irregularities, etc.

Methodology
Test the interaction of different aspects
  – Word/symbol/structure prediction
With more specific questions:
  – Concepts to words
  – Referential expression generation
  – Pragmatic considerations

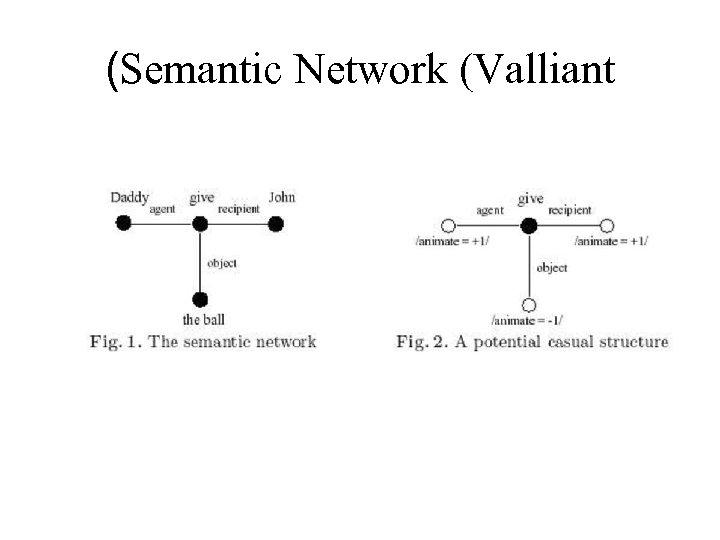

Semantic Network (Vaillant)

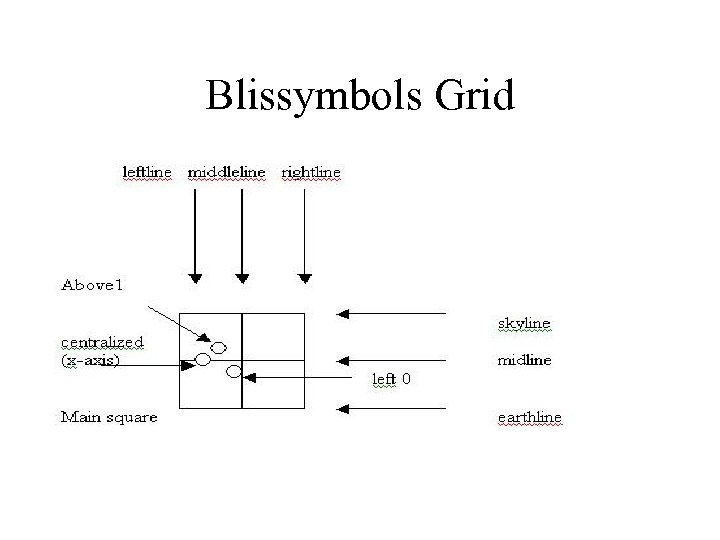

Blissymbols Grid

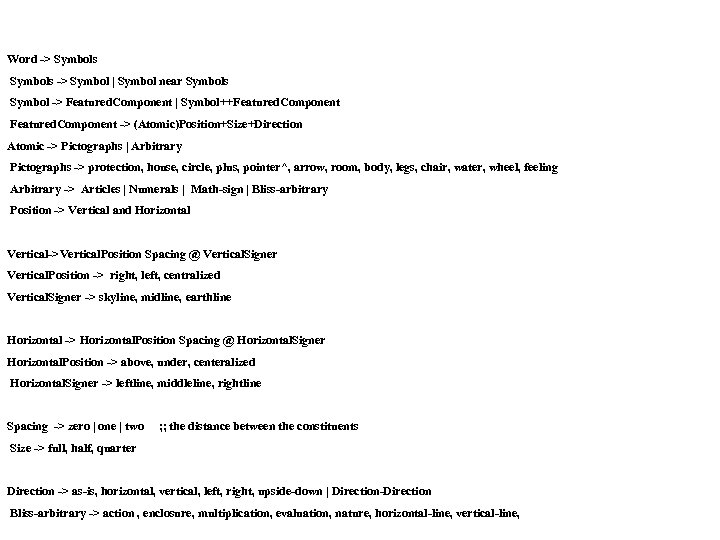

Word -> Symbols
Symbols -> Symbol | Symbol near Symbols
Symbol -> Featured.Component | Symbol ++ Featured.Component
Featured.Component -> (Atomic) Position + Size + Direction
Atomic -> Pictographs | Arbitrary
Pictographs -> protection, house, circle, plus, pointer^, arrow, room, body, legs, chair, water, wheel, feeling
Arbitrary -> Articles | Numerals | Math-sign | Bliss-arbitrary
Position -> Vertical and Horizontal
Vertical -> Vertical.Position Spacing @ Vertical.Signer
Vertical.Position -> right, left, centralized
Vertical.Signer -> skyline, midline, earthline
Horizontal -> Horizontal.Position Spacing @ Horizontal.Signer
Horizontal.Position -> above, under, centralized
Horizontal.Signer -> leftline, middleline, rightline
Spacing -> zero | one | two ;; the distance between the constituents
Size -> full, half, quarter
Direction -> as-is, horizontal, vertical, left, right, upside-down | Direction-Direction
Bliss-arbitrary -> action, enclosure, multiplication, evaluation, nature, horizontal-line, vertical-line,

SURGE

Work left to do:
• Integration
• Evaluation
  – Symbols-to-utterances corpus
  – Keystroke savings

Previous work on NLP in AAC
• Word prediction
• Message generation
• Text simplification

Word Prediction
• Simple non-linguistic methods: possibly up to 50% keystroke savings.
• Improvement is still required:
• Including syntactic/semantic knowledge in the prediction process, using machine learning methods based on corpus analysis.
• Methods:
  – Frequency-based models (bi-/tri-grams)
  – Grammatical and conceptual modeling to predict well-formed utterances, such as the use of POS tags.
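
A frequency-based bigram predictor and a keystroke-savings measure can be sketched as follows. This is a minimal illustration under stated assumptions, not any of the systems cited in the talk: the function names, the tiny training corpus, and the cost model (one keystroke per typed letter, plus one keystroke either to select a prediction or to type the space) are all hypothetical.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count how often each word follows the previous one ("<s>" = start)."""
    model = defaultdict(Counter)
    for sentence in sentences:
        words = ["<s>"] + sentence.lower().split()
        for prev, word in zip(words, words[1:]):
            model[prev][word] += 1
    return model


def predict(model, prev, prefix="", k=3):
    """Return up to k followers of prev that start with prefix, most frequent first."""
    followers = model.get(prev, Counter())
    candidates = [(w, c) for w, c in followers.items() if w.startswith(prefix)]
    candidates.sort(key=lambda wc: (-wc[1], wc[0]))  # frequency, then alphabetical
    return [w for w, _ in candidates[:k]]


def keystroke_savings(model, sentence, k=3):
    """Fraction of keystrokes saved under the assumed cost model."""
    baseline = used = 0
    prev = "<s>"
    for word in sentence.lower().split():
        baseline += len(word) + 1  # every letter plus the space
        typed = 0
        # Type letters until the word shows up in the prediction list.
        while typed < len(word) and word not in predict(model, prev, word[:typed], k):
            typed += 1
        used += typed + 1  # typed letters plus one selection (or the space)
        prev = word
    return 1 - used / baseline


corpus = ["i want to eat", "i want to sleep", "do you want to eat"]
model = train_bigrams(corpus)
print(predict(model, "to"))
print(round(keystroke_savings(model, "i want to eat"), 2))
```

Savings measured on a sentence taken from the training corpus, as here, are optimistic; a held-out corpus of real user utterances would give a more honest figure.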

Word Prediction
• KOMBE project: hand-written syntactic rules.
• Carlberger
• Different languages?

Message Generation
• Language generation from reduced input
  – Telegraphic text [Cushler, Badman, Demasco and McCoy]
    • think red hammer break John => I think that the red hammer was broken by John.
  – Cogeneration [Copestake]
    • Construction of full sentences from templates.
  – PVI
    • Main assumption: the order of word choice implies topicalization and should be considered.

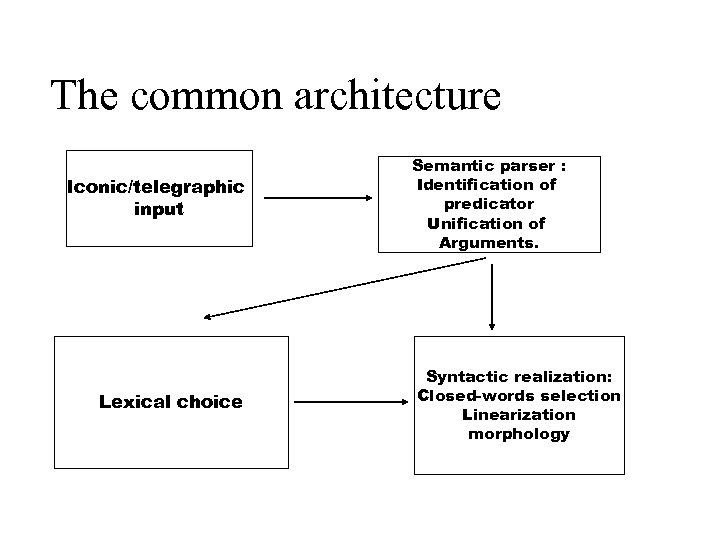

The common architecture
Iconic/telegraphic input
Lexical choice
Semantic parser: identification of the predicator, unification of arguments.
Syntactic realization: closed-class word selection, linearization, morphology.
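
The stages above can be sketched on the go+girl+house example from the ambiguity slide. This is a toy illustration under stated assumptions, not the FUF/SURGE pipeline: the lexicon, the role names, the `parse` and `realize` functions, and the choice of present tense are all hypothetical.

```python
# Hypothetical toy lexicon: each symbol is either a predicate with role slots
# or an entity tagged with the role it can fill.
LEXICON = {
    "go": {"kind": "predicate", "roles": ("agent", "destination")},
    "girl": {"kind": "entity", "role": "agent"},
    "house": {"kind": "entity", "role": "destination"},
}
THIRD_PERSON = {"go": "goes"}  # toy morphology table


def parse(symbols):
    """Identify the predicate and attach the other symbols to its roles."""
    predicates = [s for s in symbols if LEXICON[s]["kind"] == "predicate"]
    if len(predicates) != 1:
        raise ValueError("expected exactly one predicate")
    predicate = predicates[0]
    frame = {"predicate": predicate}
    for symbol in symbols:
        entry = LEXICON[symbol]
        if entry["kind"] == "entity" and entry["role"] in LEXICON[predicate]["roles"]:
            frame[entry["role"]] = symbol
    return frame


def realize(frame):
    """Add closed-class words, fix the word order and inflect the verb."""
    verb = THIRD_PERSON[frame["predicate"]]
    return f"The {frame['agent']} {verb} to the {frame['destination']}."


for order in (["go", "girl", "house"], ["girl", "house", "go"], ["house", "go", "girl"]):
    print(realize(parse(order)))
```

Because roles are assigned from lexical information rather than from position, all three input orders yield the same sentence. That only works when each entity fits a single role; inputs such as ME / SEE / CAT stay ambiguous under this scheme.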

Cogeneration approach
• Situation-based approach.
• A set of pre-defined templates:
  Topic of discussion: <>
  Participants: <>
  Time of discussion: <> (optional)
  You know

PVI
• Paradigmatic dimension: icons organized in taxemes, further grouped into semantic domains.
• Syntagmatic dimension: build a casual structure of predicative concepts.
• Meaning of an icon: the features that distinguish it from the other icons.
• Semantic analysis: reconstructing the meaning of the icon sequence by building a semantic network.
• Lexical choice: assuming there is no bijective mapping between icons and words.
• Generation

Message Selection Systems
• Discourse structure
• Talk:About (Univ. of Dundee)
  – A user uses pre-stored sentences.
  – The sentences are indexed using rhetorical structure assumptions.

Language Simplification and Language Understanding
• PSET project [Carroll et al.]
• Intended for aphasic readers with lexical or syntactic impairments.
• Syntactic simplification:
  – Passive to active
• Lexical simplification: look up synonyms, use the most frequent.
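
The lexical simplification step (look up synonyms, use the most frequent) can be sketched as below. This is not PSET's implementation: the synonym sets and frequency counts are invented for illustration, and a real system would take them from a thesaurus and from corpus counts.

```python
# Hypothetical synonym sets and corpus frequencies, for illustration only.
SYNONYMS = {
    "purchase": ["purchase", "buy", "acquire"],
    "commence": ["commence", "begin", "start"],
}
FREQUENCY = {"purchase": 300, "buy": 900, "acquire": 120,
             "commence": 40, "begin": 700, "start": 950}


def simplify_word(word):
    """Replace a word by its most frequent synonym; unknown words are kept."""
    candidates = SYNONYMS.get(word, [word])
    return max(candidates, key=lambda w: FREQUENCY.get(w, 0))


def simplify(text):
    """Apply word-by-word lexical simplification to whitespace-separated text."""
    return " ".join(simplify_word(w) for w in text.split())


print(simplify("they purchase food"))
```

Word sense is ignored here; picking the most frequent synonym without disambiguation can change the meaning, so this only sketches the lookup-and-rank idea.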

To sum up…
• NLP can be naturally and effectively integrated into AAC systems.
• Relaxations: user feedback is available on the spot.
• Data collection IS an issue here.
• The aim: more flexible, expressive tools with an enhanced communication rate.
• Possibly, combined approaches.
