fabbaad795ba104ce1953d06ae897c21.ppt

- Number of slides: 55

Natural Language Processing (1)

Zhao Hai 赵海, Department of Computer Science and Engineering, Shanghai Jiao Tong University, zhaohai@cs.sjtu.edu.cn

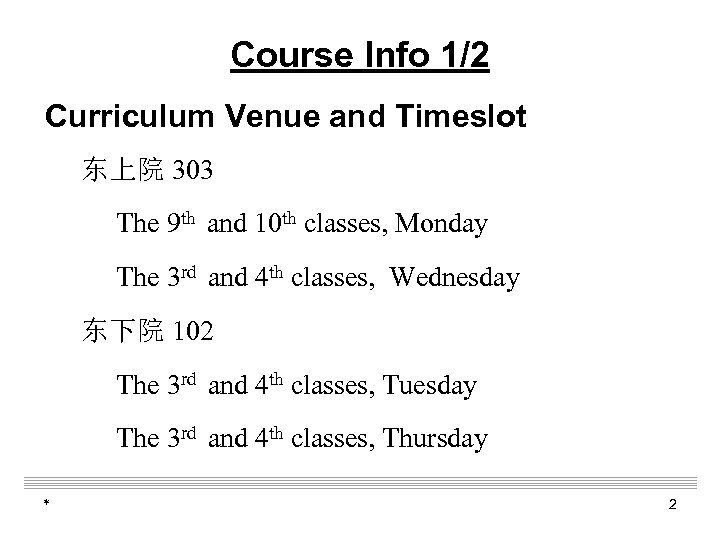

Course Info 1/2: Curriculum Venue and Timeslot

- 东上院 (Dongshangyuan) 303: the 9th and 10th classes on Monday; the 3rd and 4th classes on Wednesday
- 东下院 (Dongxiayuan) 102: the 3rd and 4th classes on Tuesday; the 3rd and 4th classes on Thursday

Course Info 2/2: Website and Contact Email

- http://bcmi.sjtu.edu.cn/~zhaohai/nlp4u2017
- TA: TBD

Outline

- Course Goals
- Course Schedule
- Course Requirements
- Overview

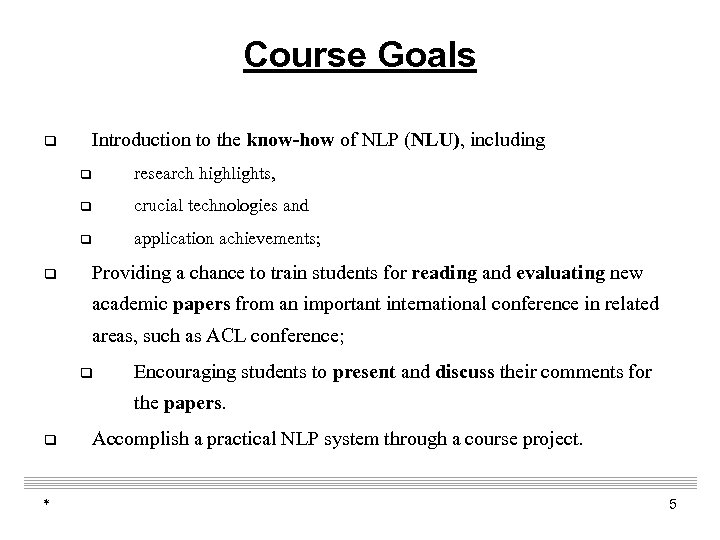

Course Goals

- Introducing the know-how of NLP (NLU), including:
  - crucial technologies,
  - research highlights, and
  - application achievements;
- Giving students practice in reading and evaluating new papers from important international conferences in the area, such as ACL;
- Encouraging students to present and discuss their comments on the papers;
- Building a practical NLP system through a course project.

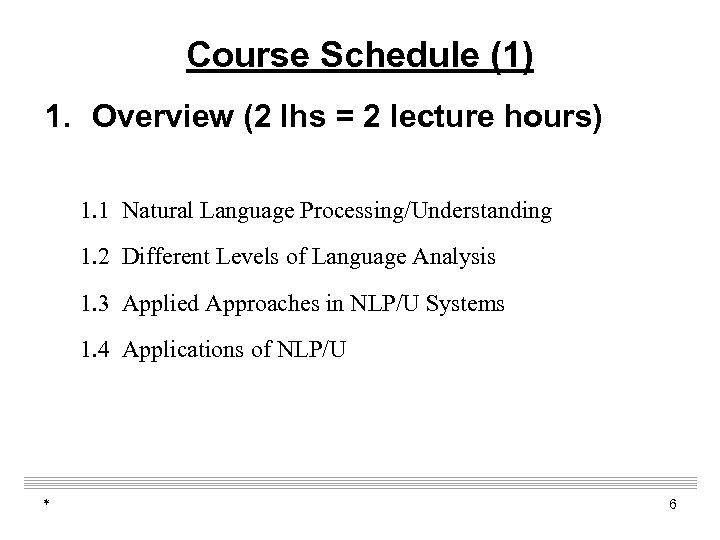

Course Schedule (1)

1. Overview (2 lhs; lh = lecture hour)
   - 1.1 Natural Language Processing/Understanding
   - 1.2 Different Levels of Language Analysis
   - 1.3 Applied Approaches in NLP/U Systems
   - 1.4 Applications of NLP/U

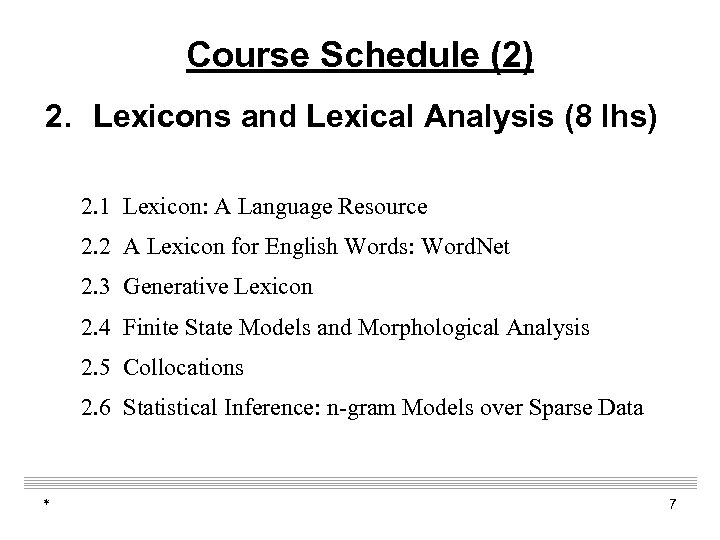

Course Schedule (2)

2. Lexicons and Lexical Analysis (8 lhs)
   - 2.1 Lexicon: A Language Resource
   - 2.2 A Lexicon for English Words: WordNet
   - 2.3 Generative Lexicon
   - 2.4 Finite State Models and Morphological Analysis
   - 2.5 Collocations
   - 2.6 Statistical Inference: n-gram Models over Sparse Data

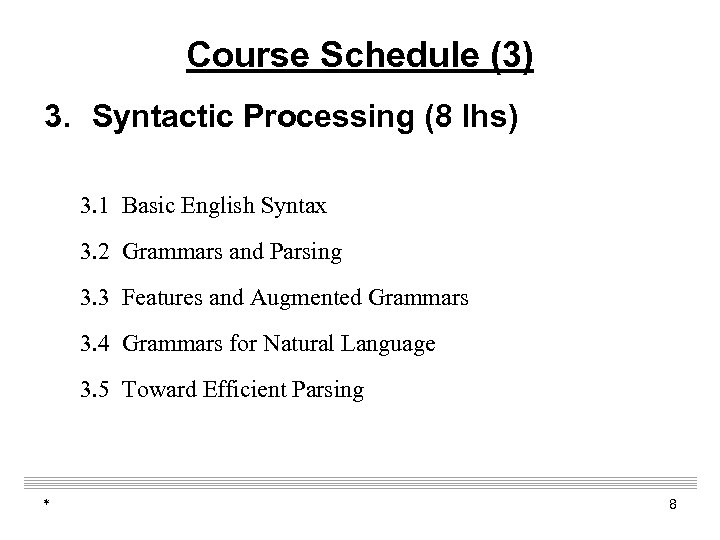

Course Schedule (3)

3. Syntactic Processing (8 lhs)
   - 3.1 Basic English Syntax
   - 3.2 Grammars and Parsing
   - 3.3 Features and Augmented Grammars
   - 3.4 Grammars for Natural Language
   - 3.5 Toward Efficient Parsing

Course Schedule (4)

4. Learning Approaches for Natural Language Processing (8 lhs)
   - 4.0 Neural networks and embeddings
   - 4.1 Main machine learning approaches: maximum entropy, k-nearest neighbor, support vector machines
   - 4.2 Sequence labeling: HMMs, maximum entropy Markov models, and CRFs
   - 4.3 A case study: training a part-of-speech tagger from a labeled corpus

Course Schedule (5)

5. Human Language Introduction (2-3 lhs)
   - 5.1 World language families, the difference and …
   - 5.2 Chinese dialects
6. Student Workshop (4 lhs)
   - 6.1 How to Prepare for the Paper Reading
   - 6.2 ACL/EMNLP Paper Reading Groups
   - 6.3 Presentation and Discussion

Course Requirements (1)

1. Texts and references
   - James Allen. Natural Language Understanding (2nd ed.). The Benjamin/Cummings Publishing Company, Inc., 1995.
   - Christopher D. Manning and Hinrich Schütze. Foundations of Statistical Natural Language Processing. The MIT Press, 1999.

Course Requirements (2)

2. Online literature
   - ACL Anthology: http://www.aclweb.org/anthology/
   - Other related references

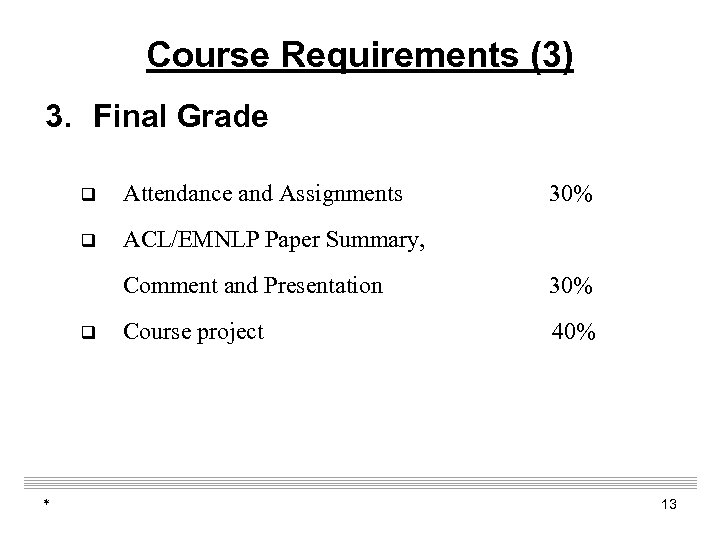

Course Requirements (3)

3. Final grade
   - Attendance and assignments: 30%
   - ACL/EMNLP paper summary, comments, and presentation: 30%
   - Course project: 40%
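The grade weighting can be written as one formula. This sketch assumes each component is scored on a 0–100 scale (the slide gives only the weights); A, P and C are illustrative symbols for attendance/assignments, the paper presentation, and the course project, and the sample scores are hypothetical.

```latex
\text{Final} = 0.3\,A + 0.3\,P + 0.4\,C,
\qquad \text{e.g. } 0.3(90) + 0.3(80) + 0.4(85) = 27 + 24 + 34 = 85
```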

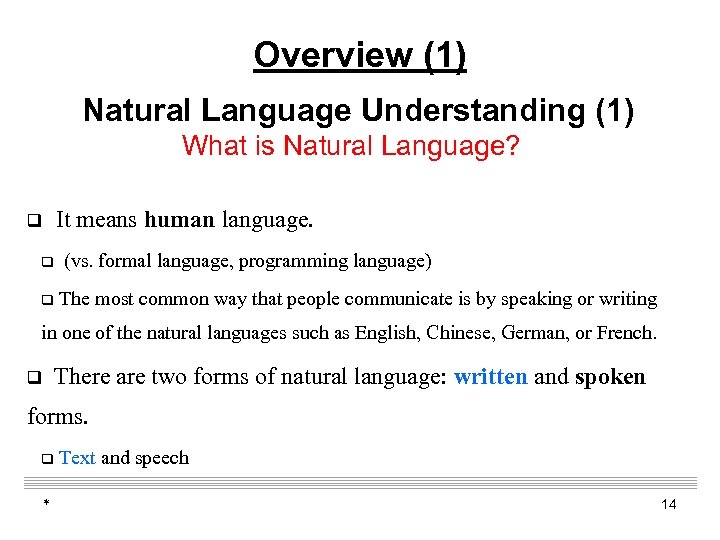

Overview (1) Natural Language Understanding (1): What Is Natural Language?

- Natural language means human language, as opposed to formal languages such as programming languages.
- The most common way that people communicate is by speaking or writing in one of the natural languages, such as English, Chinese, German, or French.
- Natural language has two forms, written and spoken: text and speech.

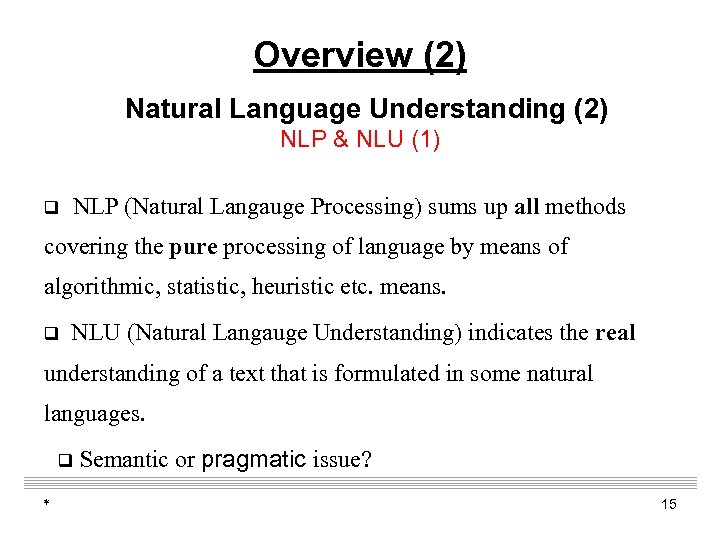

Overview (2) Natural Language Understanding (2): NLP & NLU (1)

- NLP (Natural Language Processing) covers all methods for the pure processing of language by algorithmic, statistical, heuristic, or other means.
- NLU (Natural Language Understanding) denotes the real understanding of a text written in some natural language.
- Is this a semantic or a pragmatic issue?

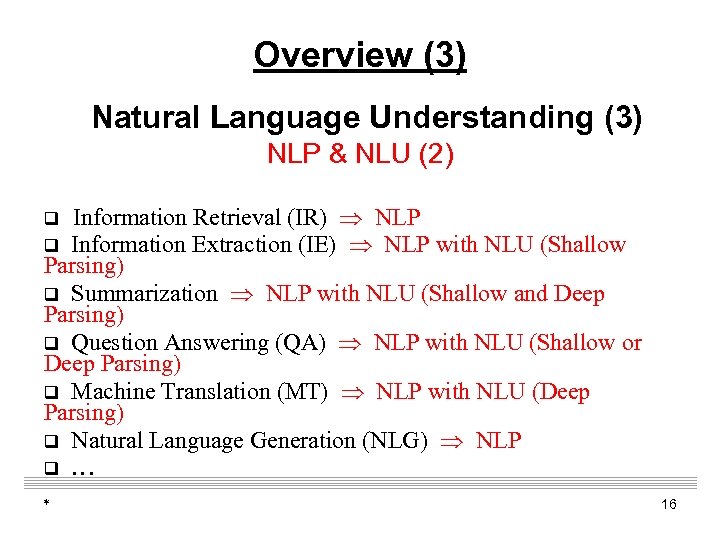

Overview (3) Natural Language Understanding (3): NLP & NLU (2)

- Information Retrieval (IR): NLP
- Information Extraction (IE): NLP with NLU (shallow parsing)
- Summarization: NLP with NLU (shallow and deep parsing)
- Question Answering (QA): NLP with NLU (shallow or deep parsing)
- Machine Translation (MT): NLP with NLU (deep parsing)
- Natural Language Generation (NLG): NLP
- …

Overview (4) Natural Language Understanding (4): Computational Linguistics (1)

- Research in computational linguistics, the use of computers in the study of language, started soon after computers became available in the 1940s.
- This discipline, together with AI and other fields, has driven progress in NLU.
- Computational linguistics is linguistics that also focuses on computational issues.

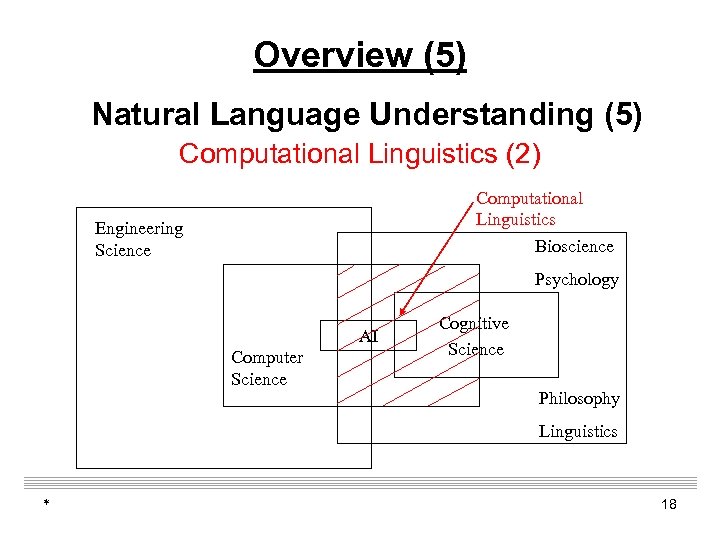

Overview (5) Natural Language Understanding (5): Computational Linguistics (2)

[Diagram: computational linguistics and its related disciplines: engineering science, bioscience, psychology, AI, computer science, cognitive science, philosophy, and linguistics.]

Overview (6) Natural Language Understanding (6): Why Is NLU a Difficult Task? (1)

- Complexity of the target representation into which the matching is being done:
  - In fact, understanding natural language means transforming it from one representation into another.
  - Extracting meaningful information from the source representation often requires additional knowledge.

Overview (7) Natural Language Understanding (7): Why Is NLU a Difficult Task? (2)

- Type of mapping:
  - Mappings can be one-to-one, many-to-one, one-to-many, or many-to-many.
  - One-to-many mappings require a great deal of domain knowledge beyond the input to choose correctly among the target representations.
  - For example (one-to-many): a) a tall giraffe vs. b) tall the truth

Overview (8) Natural Language Understanding (8): Why Is NLU a Difficult Task? (3)

- Level of interaction of the components of the source representation:
  - In many natural language sentences, changing a single word can alter the interpretation of the entire structure.
  - As the number of interactions increases, so does the complexity of the mapping.

Overview (9) Natural Language Understanding (9): Why Is NLU a Difficult Task? (4)

- Modifier attachment problem: the sentence “Give me all the employees in a division making more than $50,000” doesn't make it clear whether the speaker wants all employees making more than $50,000, or only those in divisions making more than $50,000.
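To make the two readings concrete, the sketch below evaluates both attachments of “making more than $50,000” against a small, entirely hypothetical employee table; the names, divisions, salaries and division incomes are invented for illustration. The point is only that the two parses return different answers from the same data.

```python
# Hypothetical data: (name, division, salary) and each division's income.
EMPLOYEES = [
    ("Ann", "aircraft", 62_000),
    ("Bob", "aircraft", 41_000),
    ("Cho", "automobile", 55_000),
    ("Dan", "automobile", 38_000),
]
DIVISION_INCOME = {"aircraft": 120_000, "automobile": 45_000}


def employees_making_over(limit):
    """Reading 1: 'making more than ...' modifies 'the employees'."""
    return sorted(name for name, _, salary in EMPLOYEES if salary > limit)


def employees_in_divisions_making_over(limit):
    """Reading 2: 'making more than ...' modifies 'a division'."""
    return sorted(name for name, division, _ in EMPLOYEES
                  if DIVISION_INCOME[division] > limit)
```

With a limit of 50,000, the first reading returns Ann and Cho, while the second returns Ann and Bob.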

Overview (10) Natural Language Understanding (10): Why Is NLU a Difficult Task? (5)

- Quantifier scoping problem: in logic, words such as “the”, “each”, or “what” express universal (∀) or existential (∃) quantification, and a sentence containing them can have several readings.
- Elliptical utterances: the interpretation of a query may depend on previous queries and their interpretations, e.g. asking “Who is the manager of the automobile division?” and then saying just “of aircraft?”
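A classic illustration of scope ambiguity (not from the slide) is “Every student read a book”, which has a ∀∃ reading (each student read some book, possibly different ones) and an ∃∀ reading (one book was read by every student). The sketch below checks both readings in a hypothetical finite model in which two students read different books, so only the first reading comes out true.

```python
def forall_exists(students, books, read):
    """∀x. student(x) → ∃y. book(y) ∧ read(x, y): everyone read some book."""
    return all(any((s, b) in read for b in books) for s in students)


def exists_forall(students, books, read):
    """∃y. book(y) ∧ ∀x. student(x) → read(x, y): one book was read by everyone."""
    return any(all((s, b) in read for s in students) for b in books)


# Hypothetical model: each student read a different book.
STUDENTS = {"ann", "bob"}
BOOKS = {"dune", "emma"}
READ = {("ann", "dune"), ("bob", "emma")}
```

Swapping the order of `all` and `any` is exactly the swap of quantifier scope.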

Overview (12) Natural Language Understanding (12): Machine Translation (1)

- In 1949, Warren Weaver proposed that computers might be useful for “the solution of world-wide translation problems”.
- However, even after more than 50 years of effort, current systems still produce output of limited quality: good enough for assimilation of foreign-language documents, but not for producing publishable material.

Overview (12) Natural Language Understanding (13): Machine Translation (2)

- ALPAC report (the committee was formed in 1964; its report appeared in 1966)
  - Committee chaired by John R. Pierce
  - ALPAC: Automatic Language Processing Advisory Committee
  - http://www.hutchinsweb.me.uk/MTNI-14-1996.pdf
- IBM Models
  - P. Brown, John Cocke, S. Della Pietra, V. Della Pietra, Frederick Jelinek, Robert L. Mercer, P. Roossin (1988). "A statistical approach to language translation". COLING'88, 1: 71–76.
  - P. Brown, John Cocke, S. Della Pietra, V. Della Pietra, Frederick Jelinek, John D. Lafferty, Robert L. Mercer, P. Roossin (1990). "A statistical approach to machine translation". Computational Linguistics, 16(2): 79–85. MIT Press.
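The IBM models learn word-translation probabilities from sentence-aligned bilingual text. As an illustration, here is a minimal sketch of the simplest of them, IBM Model 1, trained with expectation-maximization; the three-sentence German-English corpus is a hypothetical toy example, and the distortion and fertility modeling of the higher IBM models is left out.

```python
from collections import defaultdict


def ibm_model1(pairs, iterations=10):
    """Estimate IBM Model 1 translation probabilities t[(f, e)] = t(f | e) with EM.

    pairs: list of (foreign_tokens, english_tokens). A NULL token is prepended to
    every English sentence so a foreign word can also align to "nothing".
    """
    f_vocab = {f for fs, _ in pairs for f in fs}
    uniform = 1.0 / len(f_vocab)
    t = defaultdict(lambda: uniform)  # start from uniform t(f | e)
    for _ in range(iterations):
        count = defaultdict(float)  # expected count of (f, e) alignments
        total = defaultdict(float)  # expected count of alignments to e
        for fs, es in pairs:
            es_null = ["NULL"] + list(es)
            for f in fs:
                # E-step: share f's alignment mass among the words of its sentence pair.
                z = sum(t[(f, e)] for e in es_null)
                for e in es_null:
                    p = t[(f, e)] / z
                    count[(f, e)] += p
                    total[e] += p
        # M-step: normalise expected counts so that sum over f of t(f | e) is 1.
        t = defaultdict(float, {(f, e): c / total[e] for (f, e), c in count.items()})
    return t


# Hypothetical toy corpus.
PAIRS = [
    ("das Haus".split(), "the house".split()),
    ("das Buch".split(), "the book".split()),
    ("ein Buch".split(), "a book".split()),
]
```

Because “das” co-occurs with “the” twice, EM shifts probability mass toward t(das | the), which in turn leaves “Haus” to explain “house”.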

Overview (12) Natural Language Understanding (13): Machine Translation (2)

- MERT
  - “Minimum Error Rate Training in Statistical Machine Translation”, Franz Josef Och, ACL, 200
  - http://www.aclweb.org/anthology/P/P09-1019.pdf
- BLEU
  - Papineni, K.; Roukos, S.; Ward, T.; Zhu, W. J. (2002). BLEU: a method for automatic evaluation of machine translation. ACL-2002, pp. 311–318.
- NMT
  - 2014, arXiv: Neural Turing Machines (Google DeepMind)
  - 2015, ICLR: Neural Machine Translation by Jointly Learning to Align and Translate
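BLEU combines clipped (“modified”) n-gram precisions for n = 1 to 4 through a geometric mean and multiplies the result by a brevity penalty, BP = exp(1 − r/c) when the candidate length c does not exceed the reference length r (BP = 1 otherwise). The sketch below computes an unsmoothed, sentence-level version; the original metric is computed over a whole test corpus, so treat this as an approximation for illustration only.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams of a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu(candidate, references, max_n=4):
    """Unsmoothed BLEU of one tokenized candidate against tokenized references."""
    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        cand = ngrams(candidate, n)
        # Clip each candidate n-gram count by its maximum count in any single reference.
        max_ref = Counter()
        for ref in references:
            for gram, c in ngrams(ref, n).items():
                max_ref[gram] = max(max_ref[gram], c)
        clipped = sum(min(c, max_ref[gram]) for gram, c in cand.items())
        total = sum(cand.values())
        if clipped == 0 or total == 0:
            return 0.0  # a zero precision makes the geometric mean zero
        log_precision_sum += math.log(clipped / total) / max_n  # uniform weights
    c = len(candidate)
    # Effective reference length: the one closest to c (shorter wins ties).
    r = min((abs(len(ref) - c), len(ref)) for ref in references)[1]
    bp = 1.0 if c > r else math.exp(1 - r / c)
    return bp * math.exp(log_precision_sum)
```

For the degenerate candidate “the the the the the the the” against “the cat is on the mat”, the clipped unigram precision is 2/7 rather than 7/7, which is why BLEU clips counts.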

Overview (13) Natural Language Understanding (14): Machine Translation (3)

Through practice, researchers have realized that translating human language is a complex cognitive ability involving several kinds of knowledge:

- the structure of sentences;
- the meaning of words;
- a model of the listener (user model);
- the rules of conversation (dialogue translation);
- an extensive shared body of general information about the world.

Overview (14) Natural Language Understanding (15): Machine Translation (4)

- Some forms of translation for information access are already available on the web at no cost, e.g.:
  - http://translate.google.com/?hl=zh-CN&tab=wT#auto|en|
  - http://fanyi.baidu.com/
- The increasing demand for these services will push providers to improve their quality.
- Translation providers will find ways to expand vocabularies and improve translation quality semi-automatically from terminological resources, bilingual corpora, and similar sources.

Overview (16) Natural Language Understanding (16): Investigation Goals

AI researchers in natural language processing expected their work to lead both to:

- the development of practical, useful language understanding systems, and
- a better understanding of language and the nature of intelligence.

Overview (17) Different Levels of Language Analysis (1): Six Analysis Levels for Written Texts

- Morphological Analysis (Lexical Analysis)
- Syntactic Analysis (Deep & Shallow Parsing)
- Semantic Analysis
- Pragmatic Analysis
- Discourse Analysis (Text Analysis)
- World Knowledge Analysis (is it possible?)

Overview (18) Different Levels of Language Analysis (2): Morphological Analysis (1)

- Morphological analysis identifies the word stem in a full word form (and sometimes also the syntactic category of the stem).
- For example, the word friendly is composed of the noun stem friend and the suffix -ly, which turns a noun into an adjective.
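The stem-plus-suffix analysis of friendly can be sketched as suffix stripping checked against a stem lexicon. This is a minimal sketch: the three-entry stem lexicon and the suffix table are hypothetical, and real analyzers use finite-state models with spelling rules (e.g. happy + -ness giving happiness), which this sketch ignores.

```python
# Hypothetical suffix table: suffix -> (category of the stem, category of the result).
SUFFIXES = {
    "ly": ("noun", "adjective"),    # friend + -ly -> friendly
    "ness": ("adjective", "noun"),  # kind + -ness -> kindness
    "er": ("verb", "noun"),         # teach + -er -> teacher
}

# Hypothetical stem lexicon: stem -> syntactic category.
STEMS = {"friend": "noun", "kind": "adjective", "teach": "verb"}


def analyze(word):
    """Return every (stem, suffix, category) analysis of word; [] if none is found."""
    analyses = []
    if word in STEMS:
        analyses.append((word, None, STEMS[word]))  # the word is itself a stem
    for suffix, (stem_cat, result_cat) in SUFFIXES.items():
        if word.endswith(suffix):
            stem = word[: -len(suffix)]
            # Accept the split only if the stem is known and has the right category.
            if STEMS.get(stem) == stem_cat:
                analyses.append((stem, suffix, result_cat))
    return analyses
```

Checking the stem's category is what stops the sketch from splitting unknown words such as quickly.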

Overview (19) Different Levels of Language Analysis (3): Morphological Analysis (2)

- Most systems that analyze natural language text start by segmenting the text into meaningful tokens.
- In general, this procedure includes tokenization (segmentation), normalization (stemming), POS (part-of-speech) tagging, and named entity/phrase identification.
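The first two steps of this pipeline, tokenization and normalization, can be sketched in a few lines. The regular expression and the suffix-stripping rules are simplifying assumptions rather than a standard: a real system would use a proper stemmer (e.g. Porter's), and POS tagging and named entity identification are left out.

```python
import re

# Words, clitics such as 's, numbers (with optional decimals), or single punctuation marks.
TOKEN_RE = re.compile(r"[A-Za-z]+|'[a-z]+|\d+(?:\.\d+)?|[^\sA-Za-z\d]")


def tokenize(text):
    """Split text into word, clitic, number and punctuation tokens."""
    return TOKEN_RE.findall(text)


def normalize(token):
    """Lowercase a token and strip one common inflectional suffix (naive stemming)."""
    t = token.lower()
    for suffix in ("ing", "ed", "s"):
        # Require a stem of at least three characters so 'is' or 'red' are left alone.
        if t.endswith(suffix) and len(t) - len(suffix) >= 3:
            return t[: -len(suffix)]
    return t
```

For “The dogs walked 3.5 miles!” the tokens are The, dogs, walked, 3.5, miles and !, and normalization yields the, dog, walk, 3.5, mile and !.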

Overview (20) Different Levels of Language Analysis (4): Syntactic Analysis (1)

- Its goal is to break given textual units, e.g. sentences, down into smaller constituents, to assign categorical labels to them, and to identify the grammatical relations between the various parts.
- In most parsers, the grammar is separated from the processing components.
- The grammar consists of a lexicon and rules that syntactically and semantically combine words and phrases into larger phrases and sentences.
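Keeping the lexicon and rules as data, separate from the parsing procedure, lets the same parser run with any grammar. A minimal sketch, assuming a hypothetical toy grammar in Chomsky normal form and using the CKY algorithm as the processing component (one standard choice, not necessarily the course's): it accepts “Green frogs have large noses” and also “Green ideas have large noses”, since the grammar checks only syntax, and rejects “Large have green ideas nose”.

```python
from itertools import product

# Lexicon: word -> set of lexical categories (hypothetical toy grammar).
LEXICON = {
    "green": {"Adj"}, "large": {"Adj"},
    "frogs": {"N"}, "ideas": {"N"}, "noses": {"N"}, "nose": {"N"},
    "have": {"V"},
}

# Binary rules in Chomsky normal form: (left child, right child) -> parent categories.
BINARY_RULES = {
    ("NP", "VP"): {"S"},
    ("Adj", "N"): {"NP"},
    ("V", "NP"): {"VP"},
}


def cky_recognize(words, start="S"):
    """Return True if the grammar derives the word sequence from the start symbol."""
    n = len(words)
    # chart[i][j] holds the categories that can span words[i:j].
    chart = [[set() for _ in range(n + 1)] for _ in range(n + 1)]
    for i, w in enumerate(words):
        chart[i][i + 1] = set(LEXICON.get(w.lower(), set()))
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            j = i + length
            for k in range(i + 1, j):  # every split point of the span
                for left, right in product(chart[i][k], chart[k][j]):
                    chart[i][j] |= BINARY_RULES.get((left, right), set())
    return start in chart[0][n]
```

Changing the language covered means editing `LEXICON` and `BINARY_RULES` only; `cky_recognize` stays the same.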

Overview (21) Different Levels of Language Analysis (5): Syntactic Analysis (2)

- The output of a shallow parser is less complete than that of a deep (full) parser: it is not a full phrase-structure tree.
- A shallow parser may identify some phrasal constituents, such as noun phrases, without indicating their internal structure or their function in the sentence.
- Shallow parsing has the advantages of efficiency and robustness.
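A shallow parser of this kind can be as simple as a finite-state scan over part-of-speech tags. The sketch assumes its input has already been tagged with Penn Treebank tags. It uses the pattern DT? JJ* NN+ (optional determiner, any adjectives, one or more nouns) as a hypothetical definition of a noun-phrase chunk, and it returns flat chunks with no internal structure.

```python
NOUN_TAGS = {"NN", "NNS", "NNP", "NNPS"}


def np_chunks(tagged):
    """Return flat noun-phrase chunks matching the tag pattern DT? JJ* NN+.

    tagged: list of (word, Penn Treebank tag) pairs. Chunks carry no internal
    structure or grammatical function, which is what makes the parse shallow.
    """
    chunks, i, n = [], 0, len(tagged)
    while i < n:
        j = i
        if tagged[j][1] == "DT":  # optional determiner
            j += 1
        while j < n and tagged[j][1] == "JJ":  # any number of adjectives
            j += 1
        k = j
        while k < n and tagged[k][1] in NOUN_TAGS:  # one or more nouns
            k += 1
        if k > j:
            chunks.append([word for word, _ in tagged[i:k]])
            i = k
        else:
            i += 1  # no noun here: move on by one token
    return chunks
```

The scan is a single left-to-right pass that never backtracks past a committed chunk, one source of the efficiency and robustness mentioned above.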

Overview (23) Different Levels of Language Analysis (7): Semantic Analysis (1)

- The goal of semantic analysis is to assign meanings to complete utterances, covering both the meanings of words and how those meanings combine; this meaning is context-independent.

Overview (24) Different Levels of Language Analysis (8): Semantic Analysis (2)

- The task of semantic analysis can be divided into several subtasks, depending on the linguistic level at which it takes place.
- The most important subtasks are:
  - the semantic tagging of ambiguous words and phrases, and
  - the resolution of referring expressions.
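For the first subtask, semantic tagging of ambiguous words, one well-known baseline (not named on the slide) is the simplified Lesk algorithm: choose the sense whose dictionary gloss shares the most content words with the sentence. The two-sense inventory for bank, its glosses and the stopword list below are hypothetical, written for this example.

```python
import re

# Hypothetical sense inventory: word -> {sense id: gloss}.
SENSES = {
    "bank": {
        "bank/finance": "an institution that accepts deposits of money and makes loans",
        "bank/river": "the sloping land beside a river or lake",
    }
}

STOPWORDS = {"a", "an", "the", "of", "and", "or", "to", "in", "on", "at",
             "i", "she", "he", "it", "that", "for"}


def content_words(text):
    """Lowercased words of text, minus a small stopword list."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def simplified_lesk(word, sentence):
    """Return the sense of word whose gloss overlaps most with the sentence context."""
    context = content_words(sentence) - {word}
    best_sense, best_overlap = None, -1
    for sense, gloss in SENSES[word].items():
        overlap = len(context & content_words(gloss))
        if overlap > best_overlap:  # ties keep the first-listed sense
            best_sense, best_overlap = sense, overlap
    return best_sense
```

Exact word matching means “deposit” does not match “deposits”; stemming the words first (as in the morphological step) would make the overlap more forgiving.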

Overview (25) Different Levels of Language Analysis (9): Pragmatic Analysis

- Pragmatic analysis describes the relationships between the symbols of texts (or talks) and their producers and users.
- Here, producers and users means writers/readers and speakers/hearers.
- In other words, the context of situation has a significant impact on the interpretation of a discourse.

Overview (26) Different Levels of Language Analysis (10): Discourse Analysis

- Extracting the knowledge contained in texts requires more than resolving local semantic ambiguities.
- Discourse analysis needs to consider the global argumentative structure of texts; it also analyzes the relationships between sentences in a text.
- This analysis is especially important for interpreting pronouns and temporal constituents.

Overview (27) Different Levels of Language Analysis (11): World Knowledge Analysis

- It analyzes and infers the general world knowledge that every language user must have, e.g. the other users’ beliefs and goals in a conversation.

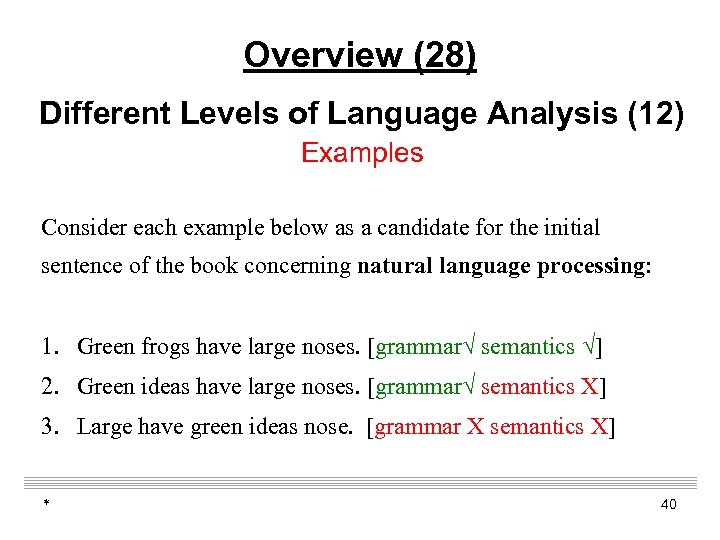

Overview (28) Different Levels of Language Analysis (12): Examples

Consider each example below as a candidate for the first sentence of a book on natural language processing:

1. Green frogs have large noses. [grammar √, semantics √]
2. Green ideas have large noses. [grammar √, semantics X]
3. Large have green ideas nose. [grammar X, semantics X]

Overview (29) Applied Approaches in NLU Systems (1): Historical Categories

A classification borrowed from Winograd (1972) groups NLU approaches according to how they represent and use knowledge of their subject matter. On this basis, they can be divided into four historical categories.

Overview (30) Applied Approaches in NLU Systems (2): Historical Categories

- The earliest approach, with limited results in specific, constrained domains (BASEBALL, SAD-SAM, STUDENT and ELIZA);
- Text-based approach (PROTOSYNTHEX-I and Semantic Memory);
- Limited logic-based approach (SIR, TLC, DEACON and CONVERSE);
- Knowledge-based approach (LUNAR, SHRDLU, MARGIE, SAM and LIFER).


Overview (31) Applied Approaches in NLU Systems (3): BASEBALL [Bert Green, 1963]

An information retrieval program with a large database of facts about all American League games over a given year. It accepted input questions from the user, limited to one clause with no logical connectives.


Overview (32) Applied Approaches in NLU Systems (4): SAD-SAM [Lindsay, 1963]

- Syntactic Appraiser and Diagrammer / Semantic Analyzing Machine, programmed by Robert Lindsay in 1963 at CMU.
- It uses a basic English vocabulary (1,700 words) and follows a context-free grammar.
- It parses input from left to right, builds derivation trees, and passes them to SAM, which extracts the semantically relevant information to build family trees and find answers to questions.


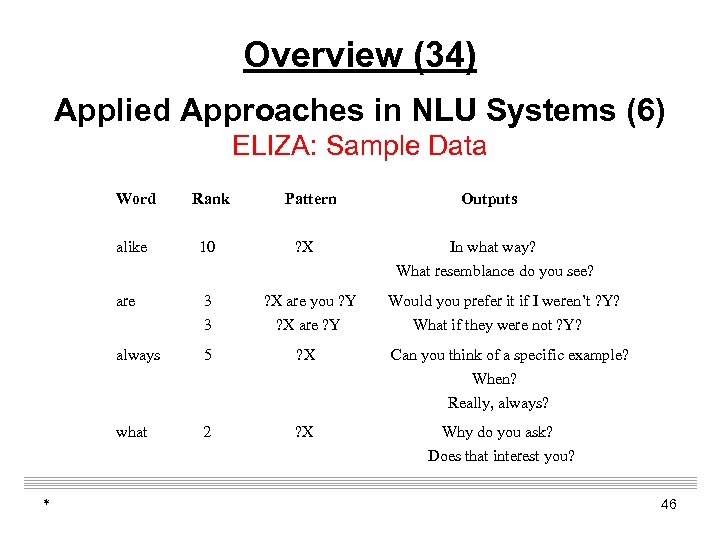

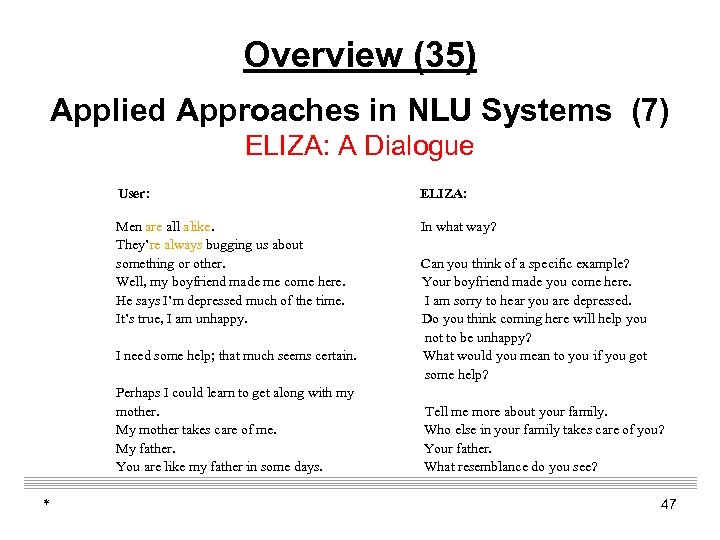

Overview (33) Applied Approaches in NLU Systems (5): ELIZA [Weizenbaum, 1966]

- ELIZA was built at MIT in 1966 and was the most famous pattern-matching natural language system. The system assumes the role of a Rogerian, or “nondirective”, therapist in its dialog with the user.
- It operated by matching the left sides of its rules against the user’s last sentence and using the appropriate right side to generate a response. Rules were indexed by keywords, so only a few had to be matched against a particular sentence. Some rules had no left side, so they could apply anywhere.

Overview (34) Applied Approaches in NLU Systems (6): ELIZA: Sample Data

| Word | Rank | Pattern | Outputs |
|---|---|---|---|
| alike | 10 | ?X | In what way? / What resemblance do you see? |
| are | 3 | ?X are you ?Y | Would you prefer it if I weren’t ?Y? |
| are | 3 | ?X are ?Y | What if they were not ?Y? |
| always | 5 | ?X | Can you think of a specific example? / When? / Really, always? |
| what | 2 | ?X | Why do you ask? / Does that interest you? |
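The keyword-indexed, ranked rules in the ELIZA sample data can be sketched as follows. This is a minimal reconstruction, not Weizenbaum's program: patterns are compiled to regular expressions with ?X/?Y as capture groups, rules whose keyword occurs in the sentence are tried in descending rank, and a rule's outputs are cycled on repeated use. The fallback reply “Please go on.” is a hypothetical stand-in for ELIZA's left-side-free rules, and pronoun reversal (my → your) is omitted.

```python
import re

# Rules transcribed from the sample-data table: (keyword, rank, pattern, outputs).
ELIZA_RULES = [
    ("alike", 10, "?X", ["In what way?", "What resemblance do you see?"]),
    ("are", 3, "?X are you ?Y", ["Would you prefer it if I weren't ?Y?"]),
    ("are", 3, "?X are ?Y", ["What if they were not ?Y?"]),
    ("always", 5, "?X", ["Can you think of a specific example?", "When?", "Really, always?"]),
    ("what", 2, "?X", ["Why do you ask?", "Does that interest you?"]),
]


def pattern_to_regex(pattern):
    """Compile '?X are you ?Y' into a regex with one named group per ?variable."""
    parts = []
    for tok in pattern.split():
        if tok.startswith("?"):
            parts.append(f"(?P<{tok[1:]}>.*?)")  # variable: any text
        else:
            parts.append(r"\b" + re.escape(tok) + r"\b")  # literal word
    return re.compile(r"^\s*" + r"\s*".join(parts) + r"\s*$", re.IGNORECASE)


class Eliza:
    def __init__(self, rules=ELIZA_RULES):
        self.rules = [(kw, rank, pattern_to_regex(p), outs) for kw, rank, p, outs in rules]
        self.uses = {}  # rule index -> times fired, used to cycle through outputs

    def respond(self, sentence):
        text = sentence.strip().rstrip(".!?")
        words = set(re.findall(r"[a-z']+", text.lower()))
        # Only rules whose keyword occurs in the sentence are tried, highest rank first.
        candidates = sorted(
            (i for i, rule in enumerate(self.rules) if rule[0] in words),
            key=lambda i: -self.rules[i][1],
        )
        for i in candidates:
            _, _, regex, outputs = self.rules[i]
            match = regex.match(text)
            if match is None:
                continue
            n = self.uses.get(i, 0)
            self.uses[i] = n + 1
            reply = outputs[n % len(outputs)]
            # Substitute the matched text for ?X, ?Y, ... in the chosen output.
            for name, value in match.groupdict().items():
                reply = reply.replace("?" + name, value)
            return reply
        return "Please go on."  # hypothetical default when no keyword rule applies
```

For example, `Eliza().respond("Men are all alike.")` picks the rank-10 alike rule over the rank-3 are rules and answers “In what way?”.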

Overview (35) Applied Approaches in NLU Systems (7): ELIZA: A Dialogue

| User | ELIZA |
|---|---|
| Men are all alike. | In what way? |
| They’re always bugging us about something or other. | Can you think of a specific example? |
| Well, my boyfriend made me come here. | Your boyfriend made you come here. |
| He says I’m depressed much of the time. | I am sorry to hear you are depressed. |
| It’s true, I am unhappy. | Do you think coming here will help you not to be unhappy? |
| I need some help; that much seems certain. | What would it mean to you if you got some help? |
| Perhaps I could learn to get along with my mother. | Tell me more about your family. |
| My mother takes care of me. | Who else in your family takes care of you? |
| My father. | Your father. |
| You are like my father in some ways. | What resemblance do you see? |


Overview (36) Applied Approaches in NLU Systems (8): SIR [Bertram Raphael, 1968]

SIR (Semantic Information Retrieval) was a prototype “understanding” machine: it could accumulate facts and then make deductions about them in order to answer questions.


Overview (37) Applied Approaches in NLU Systems (9): LUNAR [William Woods, 1973] (1)

- LUNAR answered questions about the rock samples brought back from the moon, using two databases: the chemical analyses and the literature references.
- Specifically, it helped geologists access, compare, and evaluate chemical analysis data on moon rock and soil composition obtained from the Apollo 11 mission.


Overview (38) Applied Approaches in NLU Systems (10): LUNAR [William Woods, 1973] (2)

- It operated by translating a question entered in English into an expression in a formal query language.
- The translation was done with an ATN (augmented transition network) parser coupled with a rule-driven semantic interpretation procedure.

Overview (39) Applications of NLU (1): Text-Based Applications

- Finding appropriate documents on certain topics from a database of texts;
- Extracting information from messages or articles on certain topics;
- Translating documents from one language to another;
- Summarizing texts for certain purposes.
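The first application, finding documents on a topic, is classically handled with a vector-space model. A minimal sketch, assuming TF-IDF weighting with idf = log(N/df) and cosine similarity (one common choice among several variants); the example documents in the usage note are made up.

```python
import math
import re
from collections import Counter


def words_of(text):
    """Lowercased alphabetic tokens of a text."""
    return re.findall(r"[a-z]+", text.lower())


def tfidf_rank(query, docs):
    """Return document indices sorted by cosine similarity of TF-IDF vectors to the query."""
    doc_tokens = [words_of(d) for d in docs]
    n = len(docs)
    df = Counter(term for toks in doc_tokens for term in set(toks))  # document frequency
    idf = {term: math.log(n / df_t) for term, df_t in df.items()}

    def vector(tokens):
        tf = Counter(tokens)
        return {term: count * idf.get(term, 0.0) for term, count in tf.items()}

    def cosine(u, v):
        dot = sum(w * v.get(term, 0.0) for term, w in u.items())
        norm = math.sqrt(sum(w * w for w in u.values())) * math.sqrt(sum(w * w for w in v.values()))
        return dot / norm if norm else 0.0

    q = vector(words_of(query))
    scores = [cosine(q, vector(toks)) for toks in doc_tokens]
    return sorted(range(n), key=lambda i: scores[i], reverse=True)
```

With the documents “statistical machine translation with phrase tables”, “a parser for english syntax” and “neural machine translation with attention”, the query “neural translation” ranks the third document first: “neural” is rare across the collection and so carries a high idf weight.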

Overview (40) Applications of NLU (2): Dialogue-Based Applications

- Question-answering systems, where natural language is used to query a database;
- Automated customer service over the telephone;
- Tutoring systems, where the machine interacts with a student;
- General cooperative problem-solving systems.

Overview (41) CL Research Topics (1): Call for Papers from ACL-2010 (1)

- Discourse, dialogue, and pragmatics
- Grammar engineering
- Information extraction
- Information retrieval
- Knowledge acquisition
- Large scale language processing
- Language generation
- Language processing in domains such as bioinformatics, legal, medical, etc.
- Language resources, evaluation methods and metrics, science of annotation
- Lexical/ontological/formal semantics
- Machine translation
- Mathematical linguistics, grammatical formalisms
- Mining from textual and spoken language data

Overview (42) CL Research Topics (2): Call for Papers from ACL-2010 (2)

- Multilingual language processing
- Multimodal language processing (including speech, gestures, and other communication media)
- NLP applications and systems
- NLP on noisy unstructured text, such as emails, blogs, SMS
- Phonology/morphology, tagging and chunking, word segmentation
- Psycholinguistics
- Question answering
- Semantic role labeling
- Sentiment analysis and opinion mining
- Spoken language processing
- Statistical and machine learning methods
- Summarization
- Syntax, parsing, grammar induction
- Text mining
- Textual entailment and paraphrasing
- Topic and text classification
- Word sense disambiguation

Overview (43) CL Research Topics (3): Accepted Regular Paper Statistics for JSCL-2005 (the former name of CCL)

- lexical, syntactic, semantic and discourse analysis: 24 papers, 29.3%;
- resource building and related techniques: 12 papers, 14.6%;
- machine translation techniques, systems and evaluation: 8 papers, 9.7%;
- intelligent retrieval: 30 papers, 36.6%;
- others: 8 papers, 9.7%.
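The percentages follow from the counts: 82 accepted papers in total, with each share equal to count / 82. A quick check (the short area labels abbreviate the slide's) reproduces the listed figures. The one difference is that 8/82 ≈ 9.76% rounds to 9.8%, where the slide shows the truncated 9.7%.

```python
# Accepted regular papers at JSCL-2005 by area (counts copied from the slide).
COUNTS = {
    "analysis": 24,
    "resources": 12,
    "machine translation": 8,
    "intelligent retrieval": 30,
    "others": 8,
}


def shares(counts):
    """Return the total count and each area's share in percent, rounded to one decimal."""
    total = sum(counts.values())
    return total, {area: round(100 * c / total, 1) for area, c in counts.items()}
```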
