
- Number of slides: 108

Named Entity Recognition
http://gate.ac.uk/ http://nlp.shef.ac.uk/
Hamish Cunningham, Kalina Bontcheva
RANLP, Borovets, Bulgaria, 8th September 2003

Structure of the Tutorial
• task definition
• applications
• corpora, annotation
• evaluation and testing
• how to
  – preprocessing
  – approaches to NE
  – baseline
  – rule-based approaches
  – learning-based approaches
• multilinguality
• future challenges

Information Extraction
• Information Extraction (IE) pulls facts and structured information from the content of large text collections.
• IR - IE - NLU
• MUC: Message Understanding Conferences
• ACE: Automatic Content Extraction

MUC-7 tasks
• NE: Named Entity recognition and typing
• CO: co-reference resolution
• TE: Template Elements
• TR: Template Relations
• ST: Scenario Templates

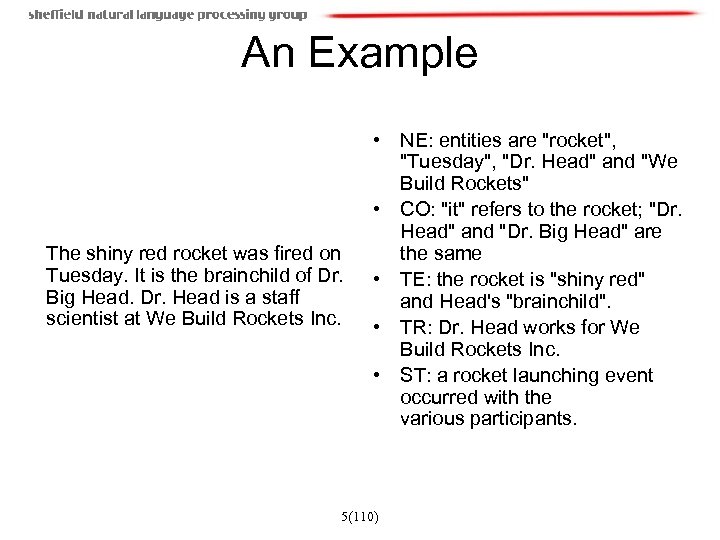

An Example
The shiny red rocket was fired on Tuesday. It is the brainchild of Dr. Big Head. Dr. Head is a staff scientist at We Build Rockets Inc.
• NE: entities are "rocket", "Tuesday", "Dr. Head" and "We Build Rockets"
• CO: "it" refers to the rocket; "Dr. Head" and "Dr. Big Head" are the same
• TE: the rocket is "shiny red" and Head's "brainchild".
• TR: Dr. Head works for We Build Rockets Inc.
• ST: a rocket launching event occurred with the various participants.

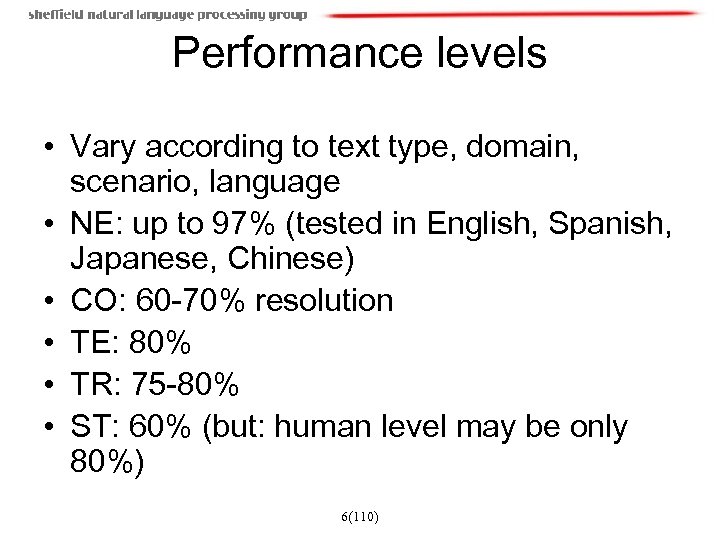

Performance levels
• Vary according to text type, domain, scenario, language
• NE: up to 97% (tested in English, Spanish, Japanese, Chinese)
• CO: 60-70% resolution
• TE: 80%
• TR: 75-80%
• ST: 60% (but: human level may be only 80%)

What are Named Entities?
• NER involves identification of proper names in texts, and classification into a set of predefined categories of interest
• Person names
• Organizations (companies, government organisations, committees, etc.)
• Locations (cities, countries, rivers, etc.)
• Date and time expressions

What are Named Entities (2)
• Other common types: measures (percent, money, weight etc.), email addresses, Web addresses, street addresses, etc.
• Some domain-specific entities: names of drugs, medical conditions, names of ships, bibliographic references etc.
• MUC-7 entity definition guidelines [Chinchor 97] http://www.itl.nist.gov/iaui/894.02/related_projects/muc/proceedings/ne_task.html

What are NOT NEs (MUC-7)
• Artefacts – Wall Street Journal
• Common nouns referring to named entities – the company, the committee
• Names of groups of people and things named after people – the Tories, the Nobel prize
• Adjectives derived from names – Bulgarian, Chinese
• Numbers which are not times, dates, percentages, and money amounts

Basic Problems in NE
• Variation of NEs – e.g. John Smith, Mr Smith, John.
• Ambiguity of NE types:
  – John Smith (company vs. person)
  – May (person vs. month)
  – Washington (person vs. location)
  – 1945 (date vs. time)
• Ambiguity with common words, e.g. "may"

More complex problems in NE
• Issues of style, structure, domain, genre etc.
• Punctuation, spelling, spacing, formatting, ... all have an impact:

    Dept. of Computing and Maths
    Manchester Metropolitan University
    Manchester
    United Kingdom

    ➢ Tell me more about Leonardo
    ➢ Da Vinci


Applications
• Can help summarisation, ASR and MT
• Intelligent document access
  – Browse document collections by the entities that occur in them
  – Formulate more complex queries than IR can answer
  – Example application domains:
    • News
    • Scientific articles, e.g. MEDLINE abstracts

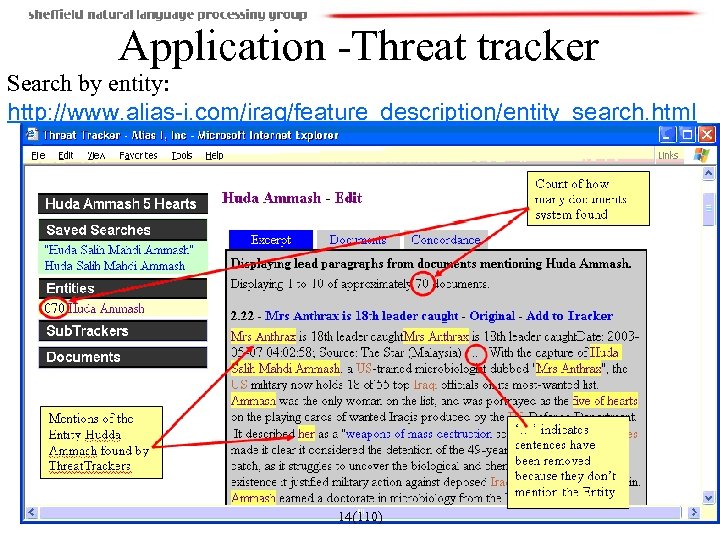

Application - Threat tracker
Search by entity: http://www.alias-i.com/iraq/feature_description/entity_search.html

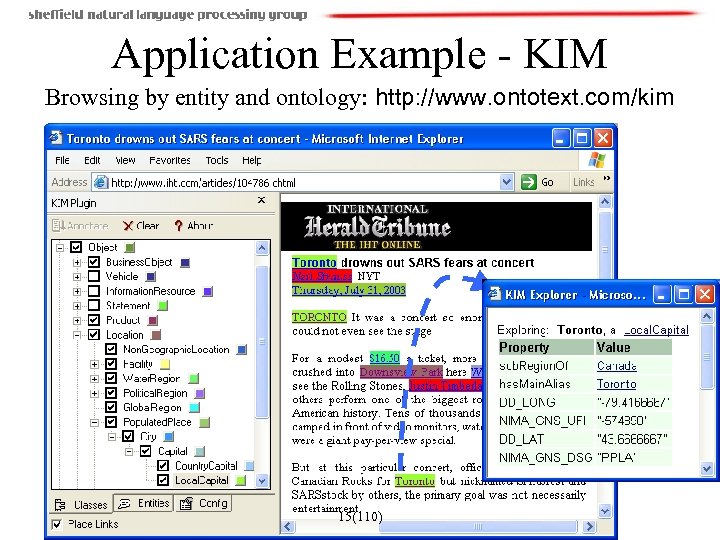

Application Example - KIM
Browsing by entity and ontology: http://www.ontotext.com/kim


Some NE Annotated Corpora
• MUC-6 and MUC-7 corpora – English
• CoNLL shared task corpora
  – http://cnts.uia.ac.be/conll2003/ner/ – NEs in English and German
  – http://cnts.uia.ac.be/conll2002/ner/ – NEs in Spanish and Dutch
• TIDES surprise language exercise (NEs in Cebuano and Hindi)
• ACE – English – http://www.ldc.upenn.edu/Projects/ACE/

The MUC-7 corpus
• 100 documents in SGML
• News domain
• 1880 Organizations (46%)
• 1324 Locations (32%)
• 887 Persons (22%)
• Inter-annotator agreement very high (~97%)
• http://www.itl.nist.gov/iaui/894.02/related_projects/muc/proceedings/muc_7_proceedings/marsh_slides.pdf

CAPE CANAVERAL,

Endeavour, with an international crew of six, was set to blast off from the

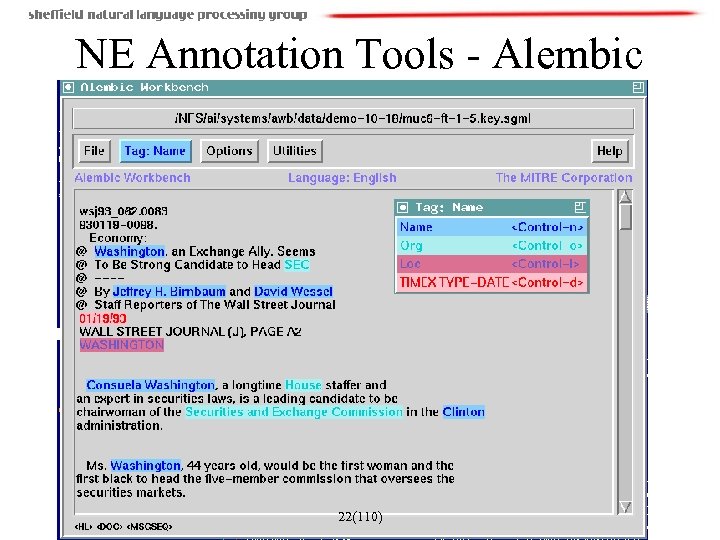

NE Annotation Tools - Alembic

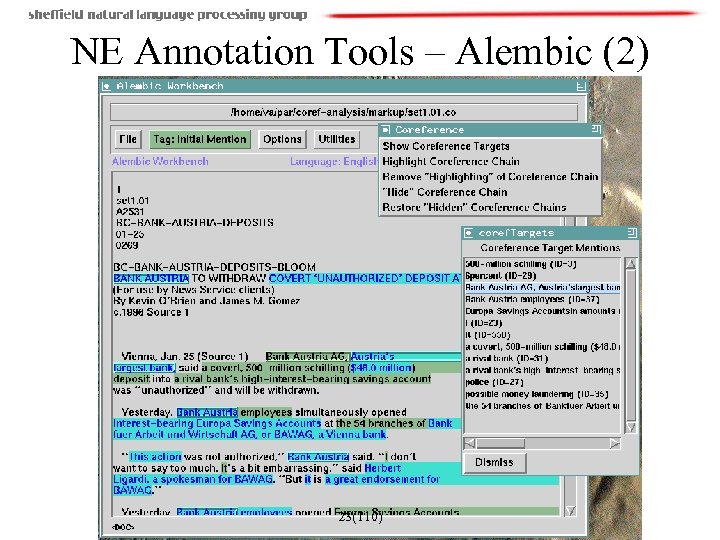

NE Annotation Tools - Alembic (2)

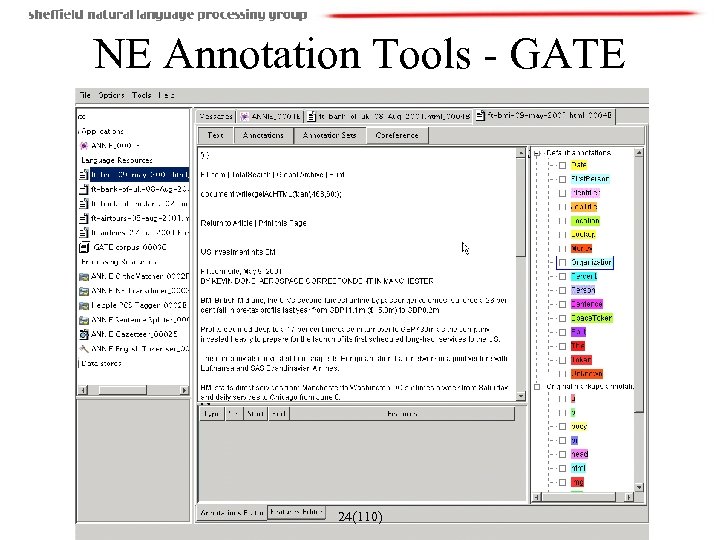

NE Annotation Tools - GATE

NE Annotation Tools: SProUT: http://sprout.dfki.de/ (added by TD)
• SProUT (Shallow Processing with Unification and Typed Feature Structures) is a platform for the development of multilingual shallow text processing and information extraction systems. See http://sprout.dfki.de/
• It consists of several reusable Unicode-capable online linguistic processing components for basic linguistic operations ranging from tokenization to coreference matching.
• Since typed feature structures (TFS) are used as a uniform data structure for representing the input and output of each of these processing resources, they can be flexibly combined into a pipeline that produces several streams of linguistically annotated structures, which serve as input for the shallow grammar interpreter applied at the next stage.
• The grammar formalism in SProUT, called XTDL, is a blend of very efficient finite-state techniques and unification-based formalisms, which are known to guarantee transparency and expressiveness. A grammar in SProUT consists of pattern/action rules, where the LHS of a rule is a regular expression over TFSs with functional operators and coreferences, representing the recognition pattern, and the RHS of a rule is a TFS specification of the output structure.
• SProUT comes with an integrated grammar development and testing environment.
• Currently, the platform provides linguistic processing resources for several languages including, among others, English, German, French, Italian, Dutch, Spanish, Polish, Czech, Chinese, and Japanese.

On-Line IE Tools: Open Calais (added by TD)
• http://www.opencalais.com/about

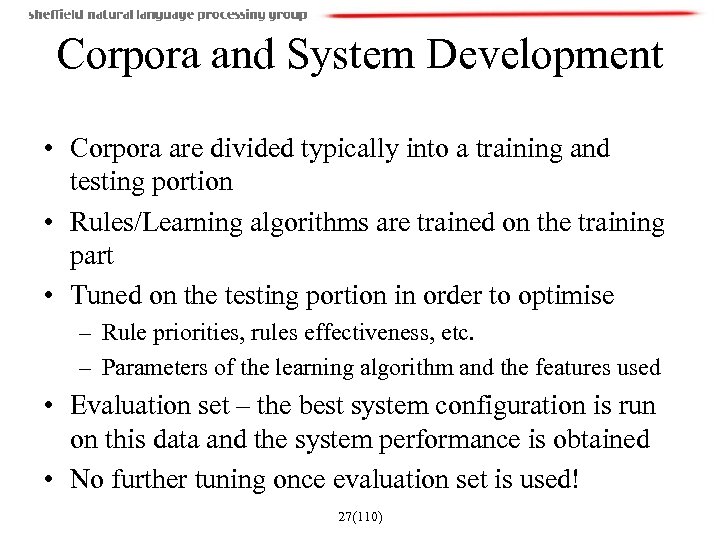

Corpora and System Development
• Corpora are typically divided into a training and a testing portion
• Rules/learning algorithms are trained on the training part
• Tuned on the testing portion in order to optimise
  – Rule priorities, rule effectiveness, etc.
  – Parameters of the learning algorithm and the features used
• Evaluation set – the best system configuration is run on this data and the system performance is obtained
• No further tuning once the evaluation set is used!
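A minimal Python sketch of the three-way split described on this slide. The function name `split_corpus`, the 60/20/20 proportions and the fixed seed are illustrative assumptions, not values from the tutorial.

```python
import random


def split_corpus(doc_ids, train=0.6, tune=0.2, seed=0):
    """Split document ids into (training, tuning, evaluation) portions.

    The proportions and the seed are assumptions chosen for illustration.
    """
    ids = sorted(doc_ids)
    # A fixed seed makes the split reproducible across experiments.
    random.Random(seed).shuffle(ids)
    n_train = round(len(ids) * train)
    n_tune = round(len(ids) * tune)
    training = ids[:n_train]
    tuning = ids[n_train:n_train + n_tune]
    evaluation = ids[n_train + n_tune:]  # touched once, after all tuning is done
    return training, tuning, evaluation


training, tuning, evaluation = split_corpus(range(100))
print(len(training), len(tuning), len(evaluation))
```

Keeping the split deterministic helps ensure the evaluation portion stays the same between runs, so it is not accidentally used for tuning.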


Performance Evaluation
• Evaluation metric – mathematically defines how to measure the system's performance against a human-annotated gold standard
• Scoring program – implements the metric and provides performance measures
  – For each document and over the entire corpus
  – For each type of NE

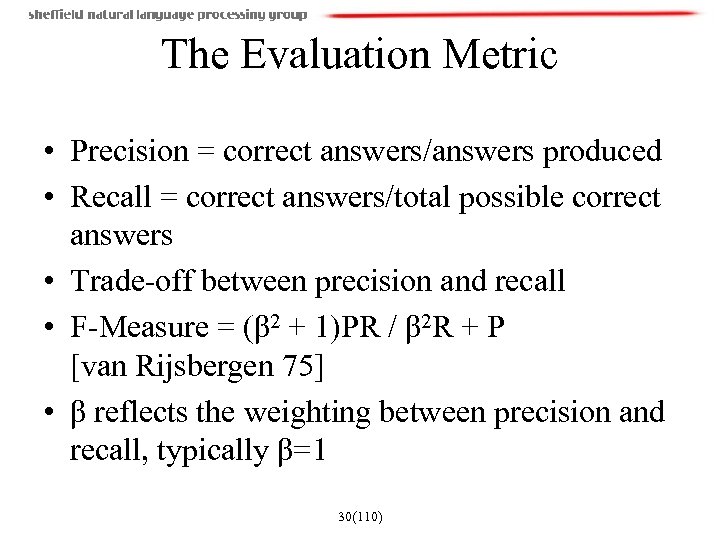

The Evaluation Metric
• Precision = correct answers / answers produced
• Recall = correct answers / total possible correct answers
• Trade-off between precision and recall
• F-Measure = (β² + 1)PR / (β²R + P) [van Rijsbergen 75]
• β reflects the weighting between precision and recall, typically β = 1
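The definitions above can be sketched in a few lines of Python. The function name and argument names are illustrative assumptions; the F-measure follows the formula exactly as written on the slide, and with β = 1 it reduces to the harmonic mean of precision and recall.

```python
def precision_recall_f(correct, produced, possible, beta=1.0):
    """Return (precision, recall, F) from raw answer counts.

    correct  -- number of correct answers the system produced
    produced -- total number of answers the system produced
    possible -- total number of correct answers in the gold standard
    """
    precision = correct / produced if produced else 0.0
    recall = correct / possible if possible else 0.0
    # F as written on the slide: (beta^2 + 1) P R / (beta^2 R + P)
    denominator = beta ** 2 * recall + precision
    f = (beta ** 2 + 1) * precision * recall / denominator if denominator else 0.0
    return precision, recall, f


# Example counts: 9 correct answers out of 12 produced, 11 possible.
p, r, f = precision_recall_f(correct=9, produced=12, possible=11)
print(f"P={p:.2f} R={r:.2f} F={f:.2f}")
```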

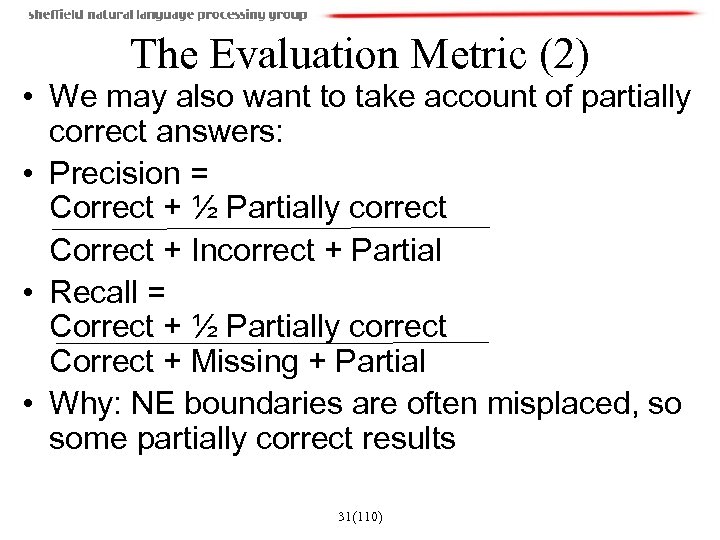

The Evaluation Metric (2)
• We may also want to take account of partially correct answers:
• Precision = (Correct + ½ Partially correct) / (Correct + Incorrect + Partial)
• Recall = (Correct + ½ Partially correct) / (Correct + Missing + Partial)
• Why: NE boundaries are often misplaced, so some results are only partially correct

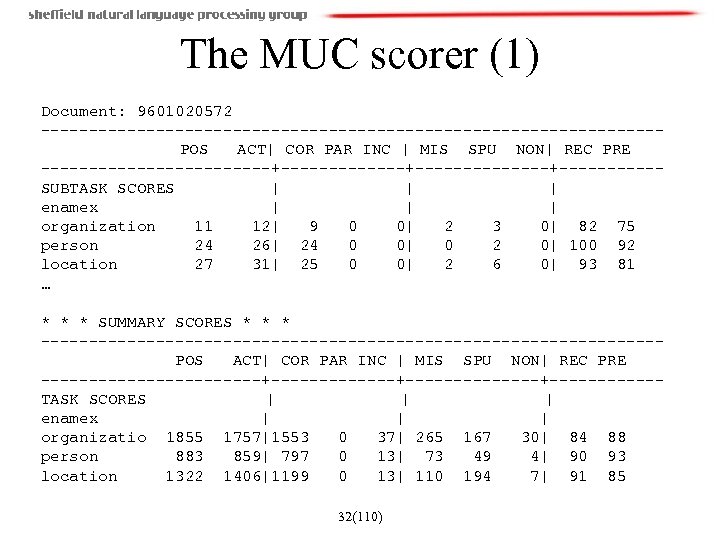

The MUC scorer (1)

Document: 9601020572, SUBTASK SCORES (enamex)

| | POS | ACT | COR | PAR | INC | MIS | SPU | NON | REC | PRE |
|---|---|---|---|---|---|---|---|---|---|---|
| organization | 11 | 12 | 9 | 0 | 0 | 2 | 3 | 0 | 82 | 75 |
| person | 24 | 26 | 24 | 0 | 0 | 0 | 2 | 0 | 100 | 92 |
| location | 27 | 31 | 25 | 0 | 0 | 2 | 6 | 0 | 93 | 81 |

…

SUMMARY SCORES, TASK SCORES (enamex)

| | POS | ACT | COR | PAR | INC | MIS | SPU | NON | REC | PRE |
|---|---|---|---|---|---|---|---|---|---|---|
| organization | 1855 | 1757 | 1553 | 0 | 37 | 265 | 167 | 30 | 84 | 88 |
| person | 883 | 859 | 797 | 0 | 13 | 73 | 49 | 4 | 90 | 93 |
| location | 1322 | 1406 | 1199 | 0 | 13 | 110 | 194 | 7 | 91 | 85 |
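The REC and PRE columns can be recomputed from the count columns. The sketch below is not the MUC scorer itself; it assumes POS = COR + PAR + INC + MIS and ACT = COR + PAR + INC + SPU, which is consistent with the numbers in the summary table, and gives half credit to partial matches. The class name `MucCounts` is an illustrative assumption.

```python
from dataclasses import dataclass


@dataclass
class MucCounts:
    cor: int  # correct
    par: int  # partially correct
    inc: int  # incorrect
    mis: int  # missing
    spu: int  # spurious

    @property
    def possible(self):
        # Assumed definition of the POS column.
        return self.cor + self.par + self.inc + self.mis

    @property
    def actual(self):
        # Assumed definition of the ACT column.
        return self.cor + self.par + self.inc + self.spu

    def recall(self):
        return (self.cor + 0.5 * self.par) / self.possible if self.possible else 0.0

    def precision(self):
        return (self.cor + 0.5 * self.par) / self.actual if self.actual else 0.0


# Counts copied from the summary scores on the slide.
summary = {
    "organization": MucCounts(cor=1553, par=0, inc=37, mis=265, spu=167),
    "person": MucCounts(cor=797, par=0, inc=13, mis=73, spu=49),
    "location": MucCounts(cor=1199, par=0, inc=13, mis=110, spu=194),
}

for name, counts in summary.items():
    print(name, counts.possible, counts.actual,
          round(100 * counts.recall()), round(100 * counts.precision()))
```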

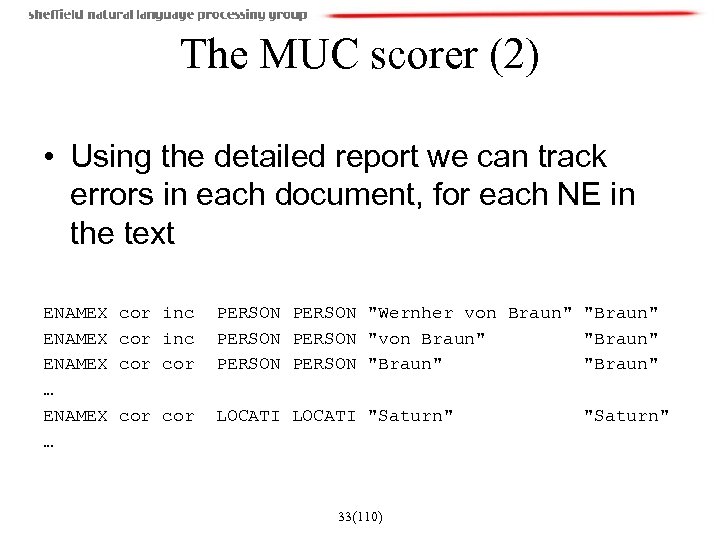

The MUC scorer (2)
• Using the detailed report we can track errors in each document, for each NE in the text
[Detailed report excerpt (ENAMEX): entries marked cor or inc for PERSON "Wernher von Braun" / "Braun", PERSON "Braun", and LOCATI "Saturn" / "Saturn"]

Regression Testing
• Need to track the system's performance over time
• When a change is made to the system, we want to know what the implications are over the entire corpus
• Why: because an improvement in one case can lead to problems in others
• GATE offers an automated tool to help with the NE development task over time
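A small Python sketch of the idea, independent of GATE's own tool: compare per-type scores from two runs over the same corpus and report the types that got worse. The function name, the score dictionaries and the tolerance parameter are all hypothetical.

```python
def find_regressions(before, after, tolerance=0.0):
    """Return {ne_type: (old_score, new_score)} for every type whose score dropped.

    `before` and `after` map NE type names to a score (e.g. F-measure)
    computed over the whole corpus by the same scoring program.
    """
    dropped = {}
    for ne_type, old_score in before.items():
        new_score = after.get(ne_type, 0.0)
        if new_score < old_score - tolerance:
            dropped[ne_type] = (old_score, new_score)
    return dropped


# Hypothetical scores before and after a rule change.
before = {"person": 0.93, "organization": 0.88, "location": 0.85}
after = {"person": 0.95, "organization": 0.85, "location": 0.85}
print(find_regressions(before, after))
```

Here the change improved person names but hurt organizations, which is exactly the situation the slide warns about.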


Pre-processing for NE Recognition
• Format detection
• Word segmentation (for languages like Chinese)
• Tokenisation
• Sentence splitting
• POS tagging
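To make two of these steps concrete, here is a deliberately naive Python sketch of tokenisation and sentence splitting using regular expressions. Both function names and both patterns are assumptions for illustration; real systems use far more careful components.

```python
import re

# A token is a run of word characters or a single punctuation mark.
TOKEN_RE = re.compile(r"\w+|[^\w\s]")


def tokenise(text):
    return TOKEN_RE.findall(text)


def split_sentences(text):
    # Naive rule: split after ., ! or ? when followed by whitespace and a capital.
    return re.split(r"(?<=[.!?])\s+(?=[A-Z])", text.strip())


print(tokenise("It was fired on Tuesday."))
for sentence in split_sentences(
    "The rocket was fired on Tuesday. It is the brainchild of Dr. Big Head."
):
    print(repr(sentence))
```

The naive splitter breaks the second sentence after "Dr.", which shows why abbreviations and titles need special handling before NE recognition.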

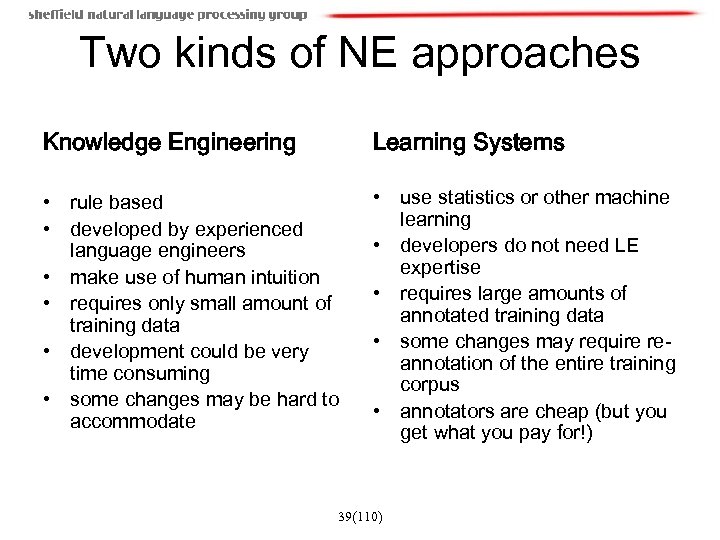

Two kinds of NE approaches
Knowledge Engineering
• rule based
• developed by experienced language engineers
• make use of human intuition
• requires only small amount of training data
• development could be very time consuming
• some changes may be hard to accommodate
Learning Systems
• use statistics or other machine learning
• developers do not need LE expertise
• requires large amounts of annotated training data
• some changes may require re-annotation of the entire training corpus
• annotators are cheap (but you get what you pay for!)

Baseline: list lookup approach
• System that recognises only entities stored in its lists (gazetteers).
• Advantages – simple, fast, language independent, easy to retarget (just create lists)
• Disadvantages – impossible to enumerate all names, collection and maintenance of lists, cannot deal with name variants, cannot resolve ambiguity
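A minimal Python sketch of such a list-lookup baseline. The gazetteer contents and the function name `list_lookup` are made up for illustration; matching is exact and case-sensitive, which also exposes the disadvantages listed above.

```python
import re

# Tiny hypothetical gazetteer: NE type -> known names.
GAZETTEER = {
    "location": {"Sheffield", "Bulgaria", "United Kingdom"},
    "organization": {"We Build Rockets Inc."},
}


def list_lookup(text, gazetteer):
    """Return sorted (start, end, type, string) tuples for every listed name found."""
    found = []
    for ne_type, names in gazetteer.items():
        for name in names:
            # Require that the name is not glued to other word characters.
            pattern = r"(?<!\w)" + re.escape(name) + r"(?!\w)"
            for match in re.finditer(pattern, text):
                found.append((match.start(), match.end(), ne_type, match.group()))
    return sorted(found)


text = "Dr. Head is a staff scientist at We Build Rockets Inc. in Sheffield."
for start, end, ne_type, string in list_lookup(text, GAZETTEER):
    print(start, end, ne_type, string)
```

"Dr. Head" is not found because it is not in any list, and a variant such as "We Build Rockets" without "Inc." would be missed as well.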

Creating Gazetteer Lists
• Online phone directories and yellow pages for person and organisation names (e.g. [Paskaleva 02])
• Locations lists
  – US GEOnet Names Server (GNS) data – 3.9 million locations with 5.37 million names (e.g. [Manov 03])
  – UN site: http://unstats.un.org/unsd/citydata
  – Global Discovery database from Europa Technologies Ltd, UK (e.g. [Ignat 03])
• Automatic collection from annotated training data


Shallow Parsing Approach (internal structure)
• Internal evidence – names often have internal structure. These components can be either stored or guessed, e.g. location:
  – CapWord + {City, Forest, Center, River}, e.g. Sherwood Forest
  – CapWord + {Street, Boulevard, Avenue, Crescent, Road}, e.g. Portobello Street
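The two internal-structure patterns above can be approximated with a regular expression. This is a rough Python sketch: the definition of CapWord as one capital letter followed by lowercase letters, and all names in the code, are simplifying assumptions.

```python
import re

LOCATION_HEADS = ["City", "Forest", "Center", "River"]
ADDRESS_HEADS = ["Street", "Boulevard", "Avenue", "Crescent", "Road"]

# Assumed definition of CapWord: a capital letter followed by lowercase letters.
CAP_WORD = r"[A-Z][a-z]+"


def internal_pattern(heads):
    """Build a regex for: CapWord + one of the given head words."""
    alternatives = "|".join(re.escape(head) for head in heads)
    return re.compile(r"\b" + CAP_WORD + r"\s+(?:" + alternatives + r")\b")


LOCATION_RE = internal_pattern(LOCATION_HEADS + ADDRESS_HEADS)


def find_locations(text):
    return [match.group() for match in LOCATION_RE.finditer(text)]


print(find_locations("We walked from Sherwood Forest to Portobello Street."))
```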

Problems with the shallow parsing approach
• Ambiguously capitalised words (first word in sentence): [All American Bank] vs. All [State Police]
• Semantic ambiguity: "John F. Kennedy" = airport (location); "Philip Morris" = organisation
• Structural ambiguity: [Cable and Wireless] vs. [Microsoft] and [Dell]; [Center for Computational Linguistics] vs. message from [City Hospital] for [John Smith]

Shallow Parsing Approach with Context
• Use of context-based patterns is helpful in ambiguous cases
• "David Walton" and "Goldman Sachs" are indistinguishable
• But with the phrase "David Walton of Goldman Sachs" and the Person entity "David Walton" recognised, we can use the pattern "[Person] of [Organization]" to identify "Goldman Sachs" correctly.
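A sketch of how the "[Person] of [Organization]" pattern could be applied once person names are known. The function name and the approximation of an organisation name as a run of capitalised words are assumptions made for this Python example.

```python
import re

# Assumption: an organisation name is a run of capitalised words.
CAP_SEQUENCE = r"[A-Z]\w*(?:\s+[A-Z]\w*)*"


def orgs_from_person_context(text, persons):
    """Apply '[Person] of [Organization]' using already recognised person names."""
    organizations = []
    for person in persons:
        pattern = re.escape(person) + r"\s+of\s+(" + CAP_SEQUENCE + r")"
        for match in re.finditer(pattern, text):
            organizations.append(match.group(1))
    return organizations


text = "David Walton of Goldman Sachs said rates would rise."
print(orgs_from_person_context(text, ["David Walton"]))
```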

Identification of Contextual Information
• Use KWIC index and concordancer to find windows of context around entities
• Search for repeated contextual patterns of either strings, other entities, or both
• Manually post-edit list of patterns, and incorporate useful patterns into new rules
• Repeat with new entities
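The first step, collecting context windows around entities, can be sketched as a tiny keyword-in-context (KWIC) function in Python. The token-offset span format and the function name `kwic` are assumptions for illustration.

```python
def kwic(tokens, entity_spans, window=3):
    """Return (left context, entity type, right context) for each entity.

    `entity_spans` holds (start, end, ne_type) tuples with token offsets,
    where `end` is exclusive.
    """
    rows = []
    for start, end, ne_type in entity_spans:
        left = tokens[max(0, start - window):start]
        right = tokens[end:end + window]
        rows.append((" ".join(left), "[" + ne_type + "]", " ".join(right)))
    return rows


tokens = "David Walton joined Goldman Sachs as chief economist".split()
spans = [(0, 2, "PERSON"), (3, 5, "ORGANIZATION")]
for left, entity, right in kwic(tokens, spans, window=1):
    print(f"{left:>10} {entity} {right}")
```

Counting how often the same left or right context recurs across a corpus then gives the candidate patterns to post-edit by hand.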

Examples of context patterns
• [PERSON] earns [MONEY]
• [PERSON] joined [ORGANIZATION]
• [PERSON] left [ORGANIZATION]
• [PERSON] joined [ORGANIZATION] as [JOBTITLE]
• [ORGANIZATION]'s [JOBTITLE] [PERSON]
• [ORGANIZATION] [JOBTITLE] [PERSON]
• the [ORGANIZATION] [JOBTITLE]
• part of the [ORGANIZATION]
• [ORGANIZATION] headquarters in [LOCATION]
• price of [ORGANIZATION]
• sale of [ORGANIZATION]
• investors in [ORGANIZATION]
• [ORGANIZATION] is worth [MONEY]
• [JOBTITLE] [PERSON]
• [PERSON], [JOBTITLE]

Caveats
• Patterns are only indicators based on likelihood
• Can set priorities based on frequency thresholds
• Need training data for each domain
• More semantic information would be useful (e.g. to cluster groups of verbs)

Rule-based Example: FACILE
• FACILE - used in MUC-7 [Black et al 98]
• Uses Inxight's LinguistiX tools for tagging and morphological analysis
• Database for external information, role similar to a gazetteer
• Linguistic info per token, encoded as feature vector:
  – Text offsets
  – Orthographic pattern (first/all capitals, mixed, lowercase)
  – Token and its normalised form
  – Syntax – category and features
  – Semantics – from database or morphological analysis
  – Morphological analyses
• Example:

    (1192 1196 10 T C "Mrs." "mrs." (PROP TITLE) (^PER_CIV_F) (("Mrs." "Title" "Abbr")) NIL)

  PER_CIV_F – female civilian (from database)

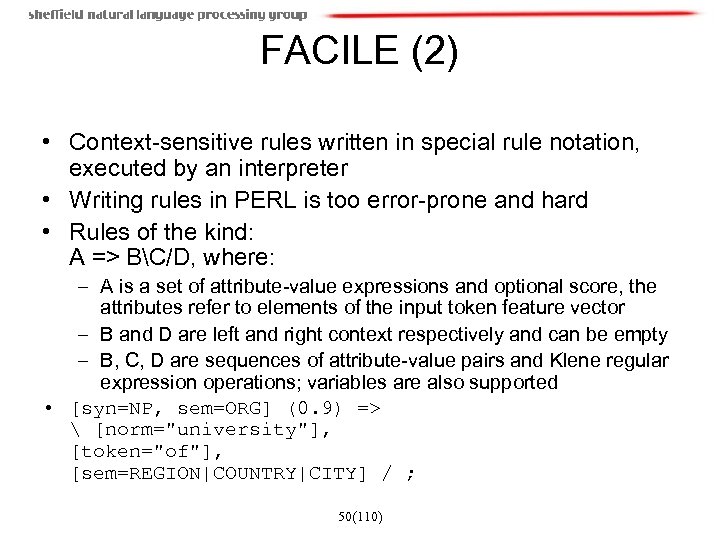

FACILE (2)
• Context-sensitive rules written in special rule notation, executed by an interpreter
• Writing rules in PERL is too error-prone and hard
• Rules of the kind: A => B \ C / D, where:
  – A is a set of attribute-value expressions and optional score, the attributes refer to elements of the input token feature vector
  – B and D are left and right context respectively and can be empty
  – B, C, D are sequences of attribute-value pairs and Kleene regular expression operations; variables are also supported
• Example:

    [syn=NP, sem=ORG] (0.9) => [norm="university"], [token="of"], [sem=REGION|COUNTRY|CITY] / ;

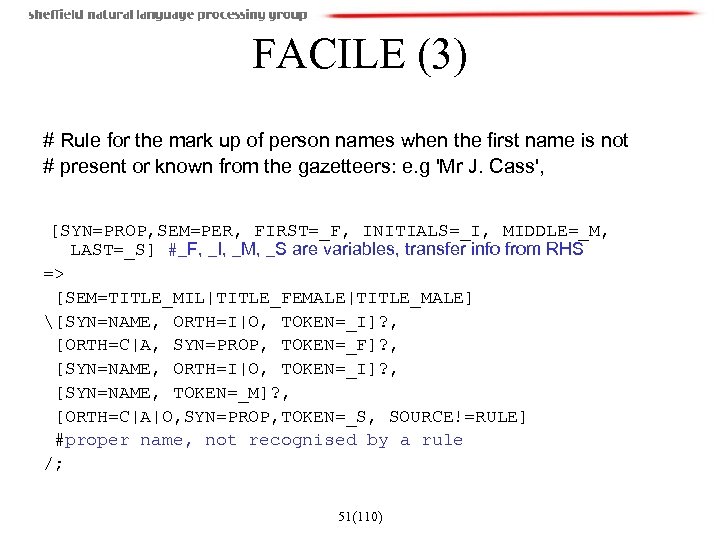

FACILE (3)

    # Rule for the mark up of person names when the first name is not
    # present or known from the gazetteers: e.g. 'Mr J. Cass',
    [SYN=PROP, SEM=PER, FIRST=_F, INITIALS=_I, MIDDLE=_M, LAST=_S]
    #_F, _I, _M, _S are variables, transfer info from RHS
    => [SEM=TITLE_MIL|TITLE_FEMALE|TITLE_MALE]
       [SYN=NAME, ORTH=I|O, TOKEN=_I]?,
       [ORTH=C|A, SYN=PROP, TOKEN=_F]?,
       [SYN=NAME, ORTH=I|O, TOKEN=_I]?,
       [SYN=NAME, TOKEN=_M]?,
       [ORTH=C|A|O, SYN=PROP, TOKEN=_S, SOURCE!=RULE] #proper name, not recognised by a rule
    /;

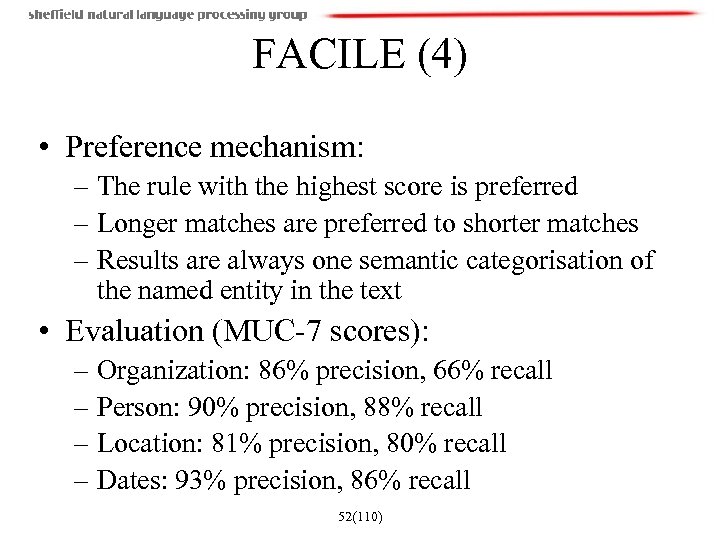

FACILE (4)
• Preference mechanism:
  – The rule with the highest score is preferred
  – Longer matches are preferred to shorter matches
  – Results are always one semantic categorisation of the named entity in the text
• Evaluation (MUC-7 scores):
  – Organization: 86% precision, 66% recall
  – Person: 90% precision, 88% recall
  – Location: 81% precision, 80% recall
  – Dates: 93% precision, 86% recall
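The preference mechanism can be illustrated with a small sketch. This is not FACILE code: the `Match` record and `pick_preferred` are hypothetical names, and the sketch assumes that score is compared first and span length only breaks ties, which the slide does not spell out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Match:
    start: int      # index of the first token covered
    end: int        # index one past the last token covered
    category: str   # semantic category proposed by the rule
    score: float    # rule score, e.g. 0.9


def pick_preferred(candidates):
    """Return the single preferred match among competing candidates."""
    # Assumed ordering: highest score wins, longer span breaks ties.
    return max(candidates, key=lambda m: (m.score, m.end - m.start))
```

Because exactly one candidate is returned, each text span ends up with a single semantic categorisation.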

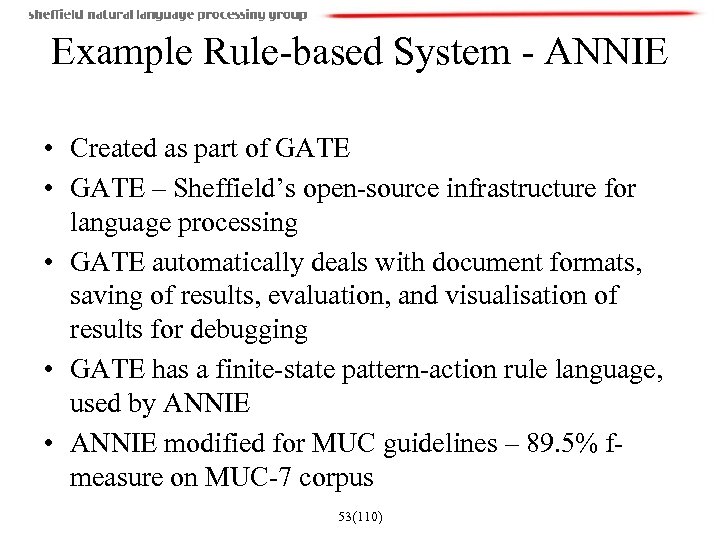

Example Rule-based System - ANNIE
• Created as part of GATE
• GATE – Sheffield’s open-source infrastructure for language processing
• GATE automatically deals with document formats, saving of results, evaluation, and visualisation of results for debugging
• GATE has a finite-state pattern-action rule language, used by ANNIE
• ANNIE modified for MUC guidelines – 89.5% f-measure on MUC-7 corpus

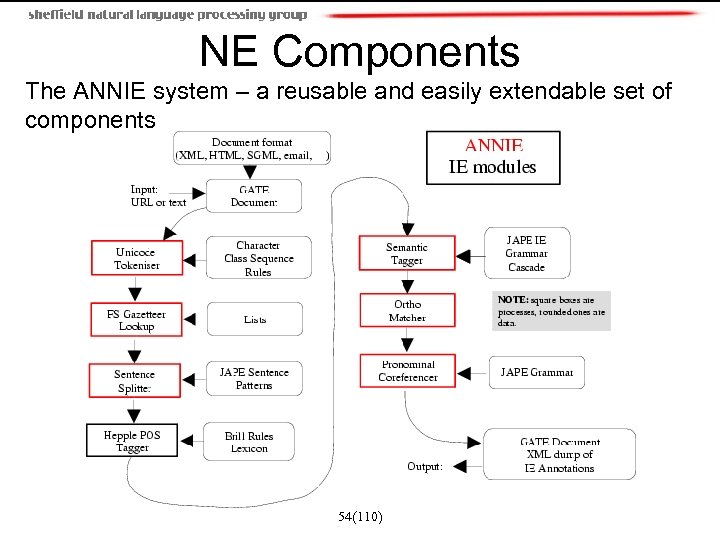

NE Components
The ANNIE system – a reusable and easily extendable set of components

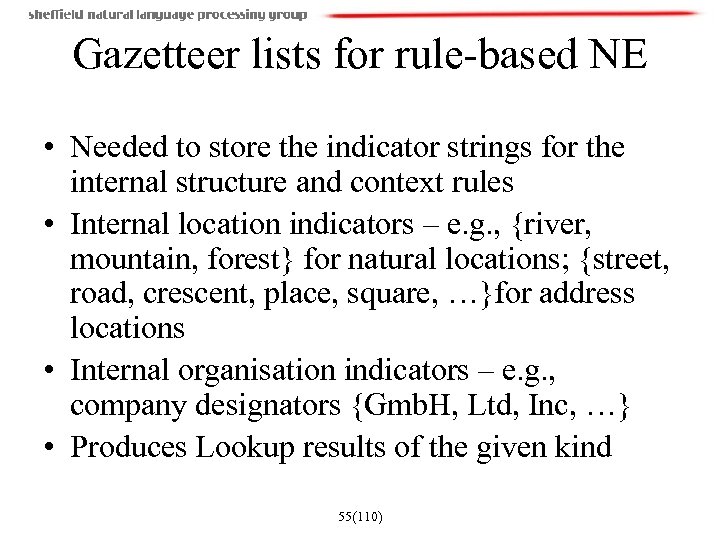

Gazetteer lists for rule-based NE
• Needed to store the indicator strings for the internal structure and context rules
• Internal location indicators – e.g., {river, mountain, forest} for natural locations; {street, road, crescent, place, square, …} for address locations
• Internal organisation indicators – e.g., company designators {GmbH, Ltd, Inc, …}
• Produces Lookup results of the given kind
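A minimal sketch of list lookup, assuming a single in-memory dictionary rather than GATE's gazetteer files. The `GAZETTEER` contents, the kind names and the `lookup` function are illustrative only.

```python
# Hypothetical miniature gazetteer: indicator string -> kind of Lookup result.
GAZETTEER = {
    "river": "location_natural",
    "mountain": "location_natural",
    "street": "location_address",
    "road": "location_address",
    "ltd": "company_designator",
    "inc": "company_designator",
    "gmbh": "company_designator",
}


def lookup(tokens):
    """Return (token_index, token, kind) for every token found in the lists."""
    results = []
    for i, tok in enumerate(tokens):
        # Case-insensitive match, ignoring a trailing full stop ("Inc.").
        kind = GAZETTEER.get(tok.lower().rstrip("."))
        if kind is not None:
            results.append((i, tok, kind))
    return results
```

The lookup results carry no entity decision by themselves; they are indicators for the grammar rules that run afterwards.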

The Named Entity Grammars
• Phases run sequentially and constitute a cascade of FSTs over the pre-processing results
• Hand-coded rules applied to annotations to identify NEs
• Annotations from format analysis, tokeniser, sentence splitter, POS tagger, and gazetteer modules
• Use of contextual information
• Finds person names, locations, organisations, dates, addresses

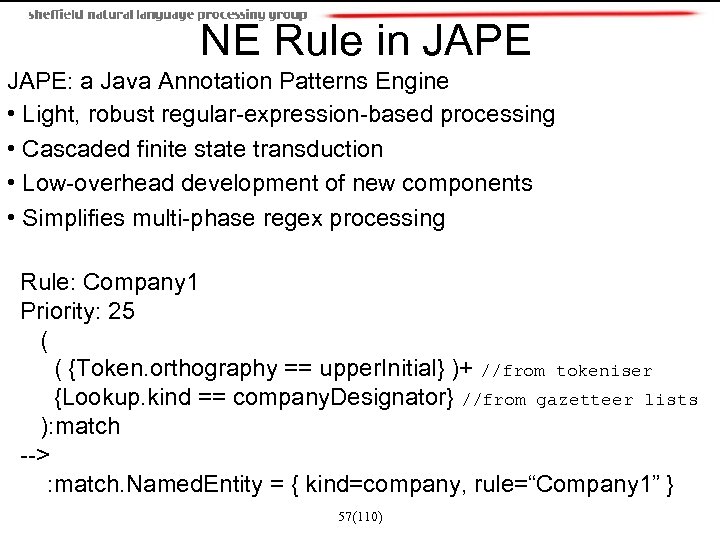

NE Rule in JAPE: a Java Annotation Patterns Engine
• Light, robust regular-expression-based processing
• Cascaded finite state transduction
• Low-overhead development of new components
• Simplifies multi-phase regex processing

    Rule: Company1
    Priority: 25
    (
      ( {Token.orthography == upperInitial} )+   //from tokeniser
      {Lookup.kind == companyDesignator}         //from gazetteer lists
    ):match
    -->
    :match.NamedEntity = { kind=company, rule="Company1" }
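What the Company1 pattern matches can be approximated outside JAPE. The following is a rough Python analogue, not the JAPE engine: it works on plain token strings instead of annotations, and `COMPANY_DESIGNATORS`, `is_upper_initial` and `find_companies` are hypothetical names.

```python
COMPANY_DESIGNATORS = {"Ltd", "Inc", "GmbH"}  # stand-in for the gazetteer list


def is_upper_initial(token):
    # Rough analogue of Token.orthography == upperInitial.
    return token[:1].isupper() and token[1:] == token[1:].lower()


def find_companies(tokens):
    """Return (start, end) spans: one or more upperInitial tokens, then a designator."""
    spans = []
    i = 0
    while i < len(tokens):
        j = i
        # Consume the run of capitalised tokens that are not designators themselves.
        while (j < len(tokens) and is_upper_initial(tokens[j])
               and tokens[j] not in COMPANY_DESIGNATORS):
            j += 1
        if j > i and j < len(tokens) and tokens[j] in COMPANY_DESIGNATORS:
            spans.append((i, j + 1))   # end index is exclusive
            i = j + 1
        else:
            i += 1
    return spans
```

Each returned span corresponds to what the rule binds to `:match` before creating the NamedEntity annotation.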

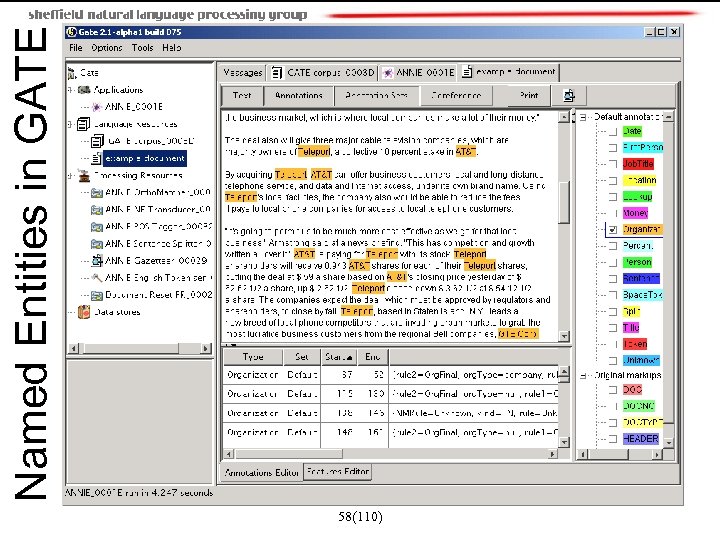

Named Entities in GATE

Using co-reference to classify ambiguous NEs
• Orthographic co-reference module that matches proper names in a document
• Improves NE results by assigning entity type to previously unclassified names, based on relations with classified NEs
• May not reclassify already classified entities
• Classification of unknown entities very useful for surnames which match a full name, or abbreviations, e.g. [Bonfield] will match [Sir Peter Bonfield]; [International Business Machines Ltd.] will match [IBM]
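The two matching cases mentioned above (surname against full name, abbreviation against full name) can be sketched as follows. This is a simplified illustration, not the GATE module: the function names and the small designator set are assumptions.

```python
def acronym(name):
    """Initials of the capitalised words, skipping company designators."""
    words = [w.strip(".") for w in name.split()]
    words = [w for w in words if w not in {"Ltd", "Inc", "GmbH"}]
    return "".join(w[0] for w in words if w[:1].isupper())


def orthomatch(unknown, known):
    """True if `unknown` looks like a shorter form of the classified name `known`."""
    if unknown == known.split()[-1]:       # surname vs. full name
        return True
    return unknown == acronym(known)       # abbreviation vs. full name


def classify_unknowns(unknowns, classified):
    """Copy the type of a matching classified NE onto each unknown name."""
    result = {}
    for name in unknowns:
        for known, ne_type in classified.items():
            if orthomatch(name, known):
                result[name] = ne_type
                break
    return result
```

Only names in `unknowns` receive a type, which mirrors the constraint that already classified entities are not reclassified.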

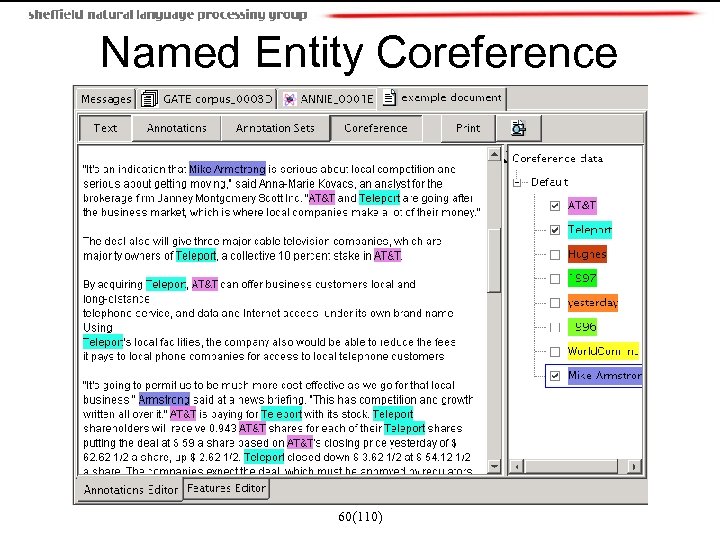

Named Entity Coreference

DEMO


Machine Learning Approaches
• ML approaches frequently break down the NE task in two parts:
  – Recognising the entity boundaries
  – Classifying the entities in the NE categories
• Some work is only on one task or the other
• Tokens in text are often coded with the IOB scheme
  – O – outside, B-XXX – first word in NE, I-XXX – all other words in NE
  – Easy to convert to/from inline MUC-style markup
  – Argentina B-LOC, played O, with O, Del B-PER, Bosque I-PER
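The conversion from IOB tags back to entity spans can be sketched in a few lines. `iob_to_spans` is an illustrative helper, and treating a stray I-XXX tag as the start of a new entity is an assumption of this sketch.

```python
def iob_to_spans(tagged):
    """Convert [(token, tag), ...] into [(entity_text, category), ...]."""
    spans, current, category = [], [], None
    for token, tag in tagged:
        starts_new = tag.startswith("B-") or (
            tag.startswith("I-") and tag[2:] != category)
        if starts_new:
            if current:
                spans.append((" ".join(current), category))
            current, category = [token], tag[2:]
        elif tag.startswith("I-"):
            current.append(token)          # continue the open entity
        else:                              # "O": close any open entity
            if current:
                spans.append((" ".join(current), category))
            current, category = [], None
    if current:
        spans.append((" ".join(current), category))
    return spans
```

Applied to the slide's example, the five tagged tokens yield two entities: a location and a two-token person name.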

IdentiFinder [Bikel et al 99]
• Based on Hidden Markov Models
• Features
  – Capitalisation
  – Numeric symbols
  – Punctuation marks
  – Position in the sentence
  – 14 features in total, combining above info, e.g., containsDigitAndDash (09-96), containsDigitAndComma (23,000.00)
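A sketch of how such combined word features might be computed. Only `containsDigitAndDash` and `containsDigitAndComma` come from the slide; the other feature names, their order of precedence and the function itself are assumptions for illustration, and the subset is far from the full set of 14.

```python
def word_feature(token, first_in_sentence=False):
    """Return one coarse word-feature name for a token (illustrative subset)."""
    has_digit = any(c.isdigit() for c in token)
    if has_digit and "-" in token:
        return "containsDigitAndDash"      # e.g. 09-96
    if has_digit and "," in token:
        return "containsDigitAndComma"     # e.g. 23,000.00
    if token.isdigit():
        return "otherNumber"               # assumed name
    if token[:1].isupper():
        # Capitalisation is less informative at the start of a sentence.
        return "firstWord" if first_in_sentence else "initCap"
    if token.islower():
        return "lowerCase"                 # assumed name
    return "other"
```

Each token gets exactly one feature value, so the feature can be paired with the word as a single observation in a sequence model.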

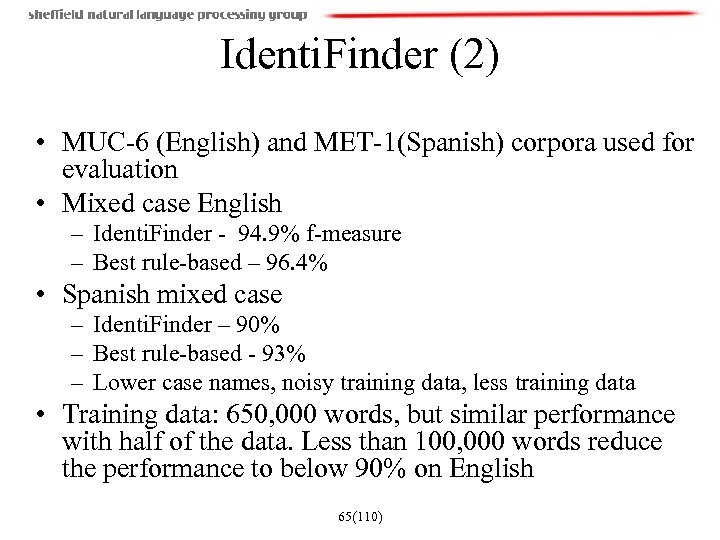

IdentiFinder (2)
• MUC-6 (English) and MET-1 (Spanish) corpora used for evaluation
• Mixed case English
  – IdentiFinder – 94.9% f-measure
  – Best rule-based – 96.4%
• Spanish mixed case
  – IdentiFinder – 90%
  – Best rule-based – 93%
  – Lower case names, noisy training data, less training data
• Training data: 650,000 words, but similar performance with half of the data. Fewer than 100,000 words reduce the performance to below 90% on English

MENE [Borthwick et al 98]
• Combining rule-based and ML NE to achieve better performance
• Tokens tagged as: XXX_start, XXX_continue, XXX_end, XXX_unique, other (non-NE), where XXX is an NE category
• Uses Maximum Entropy
  – One only needs to find the best features for the problem
  – ME estimation routine finds the best relative weights for the features
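The token tagging scheme can be made concrete with a short sketch that turns entity spans into per-token tags. `mene_tags` and the span representation (start, exclusive end, category) are assumptions of this example, not part of MENE.

```python
def mene_tags(tokens, entities):
    """entities: list of (start, end, category) with end exclusive."""
    tags = ["other"] * len(tokens)
    for start, end, cat in entities:
        if end - start == 1:
            tags[start] = f"{cat}_unique"          # single-token entity
        else:
            tags[start] = f"{cat}_start"
            for i in range(start + 1, end - 1):
                tags[i] = f"{cat}_continue"
            tags[end - 1] = f"{cat}_end"
    return tags
```

Compared with IOB coding, this scheme marks the last token of an entity explicitly and separates single-token entities from multi-token ones.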

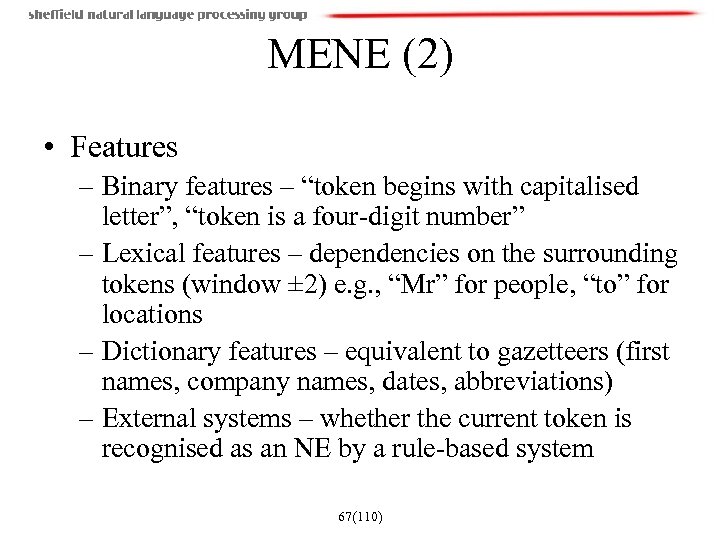

MENE (2)
• Features
  – Binary features – “token begins with capitalised letter”, “token is a four-digit number”
  – Lexical features – dependencies on the surrounding tokens (window ±2), e.g., “Mr” for people, “to” for locations
  – Dictionary features – equivalent to gazetteers (first names, company names, dates, abbreviations)
  – External systems – whether the current token is recognised as an NE by a rule-based system
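A hedged sketch of the first three feature families for one token. The feature names, the tiny `FIRST_NAMES` list and `token_features` are hypothetical; the external-system features are omitted because they depend on another tagger's output.

```python
FIRST_NAMES = {"peter", "kalina"}  # hypothetical dictionary list


def token_features(tokens, i):
    """Binary, lexical (window of 2 either side) and dictionary features for tokens[i]."""
    tok = tokens[i]
    feats = {
        "init_cap": tok[:1].isupper(),
        "four_digit": tok.isdigit() and len(tok) == 4,
        "in_first_names": tok.lower() in FIRST_NAMES,
    }
    for offset in (-2, -1, 1, 2):
        j = i + offset
        if 0 <= j < len(tokens):
            # One lexical feature per neighbouring word and relative position.
            feats[f"word[{offset:+d}]={tokens[j].lower()}"] = True
    return feats
```

A maximum entropy trainer would then assign a weight to each such feature for each possible tag.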

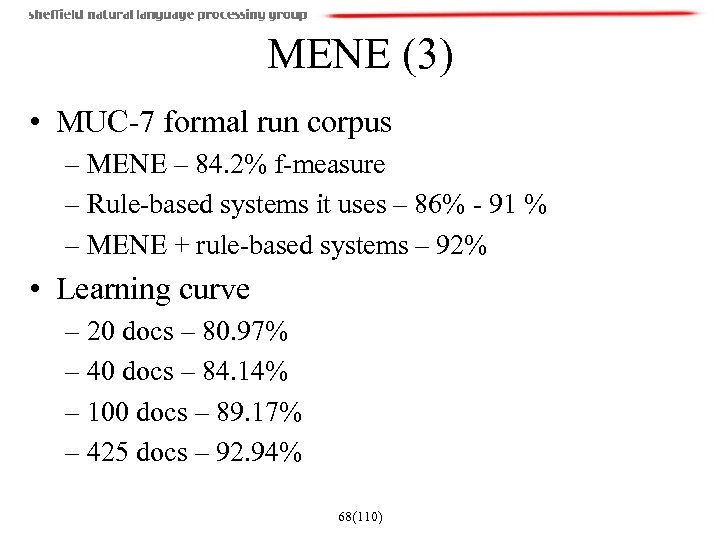

MENE (3)
• MUC-7 formal run corpus
  – MENE – 84.2% f-measure
  – Rule-based systems it uses – 86%-91%
  – MENE + rule-based systems – 92%
• Learning curve
  – 20 docs – 80.97%
  – 40 docs – 84.14%
  – 100 docs – 89.17%
  – 425 docs – 92.94%

NE Recognition without Gazetteers [Mikheev et al 99]
• How big should gazetteer lists be?
• Experiment with simple list lookup approach on MUC-7 corpus
• Learned lists – MUC-7 training corpus
  – 1228 person names
  – 809 organisations
  – 770 locations
• Common lists (from the Web)
  – 5000 locations
  – 33,000 organisations
  – 27,000 person names

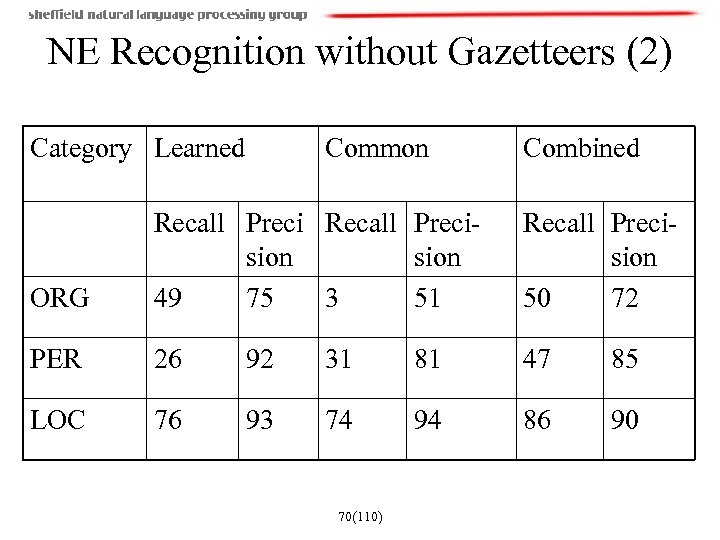

NE Recognition without Gazetteers (2)

| Category | Learned lists (recall / precision, %) | Common lists (recall / precision, %) | Combined lists (recall / precision, %) |
|---|---|---|---|
| ORG | 49 / 75 | 3 / 51 | 50 / 72 |
| PER | 26 / 92 | 31 / 81 | 47 / 85 |
| LOC | 76 / 93 | 74 / 94 | 86 / 90 |
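Precision/recall pairs like these are often folded into a single f-measure, as in the other results in this tutorial. A minimal sketch, assuming the usual weighted harmonic mean with β = 1 by default (the slides do not state the weighting); `f_measure` is an illustrative name.

```python
def f_measure(precision, recall, beta=1.0):
    """Weighted harmonic mean of precision and recall (same units as the inputs)."""
    if precision == 0 and recall == 0:
        return 0.0
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Combined lists, ORG row: precision 72, recall 50.
org_f = f_measure(72, 50)   # roughly 59.0
```

The harmonic mean punishes imbalance: high precision cannot compensate for the low recall of pure list lookup.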

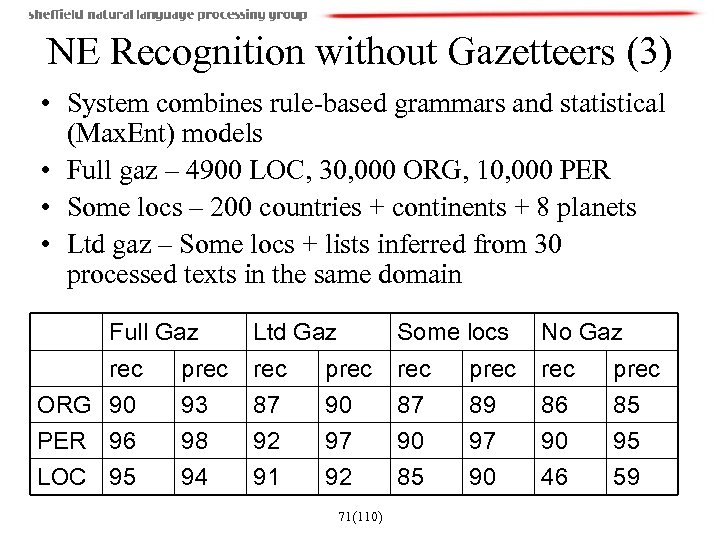

NE Recognition without Gazetteers (3)
• System combines rule-based grammars and statistical (MaxEnt) models
• Full gaz – 4900 LOC, 30,000 ORG, 10,000 PER
• Some locs – 200 countries + continents + 8 planets
• Ltd gaz – Some locs + lists inferred from 30 processed texts in the same domain

| | Full gaz (rec / prec) | Ltd gaz (rec / prec) | Some locs (rec / prec) | No gaz (rec / prec) |
|---|---|---|---|---|
| ORG | 90 / 93 | 87 / 90 | 87 / 89 | 86 / 85 |
| PER | 96 / 98 | 92 / 97 | 90 / 97 | 90 / 95 |
| LOC | 95 / 94 | 91 / 92 | 85 / 90 | 46 / 59 |

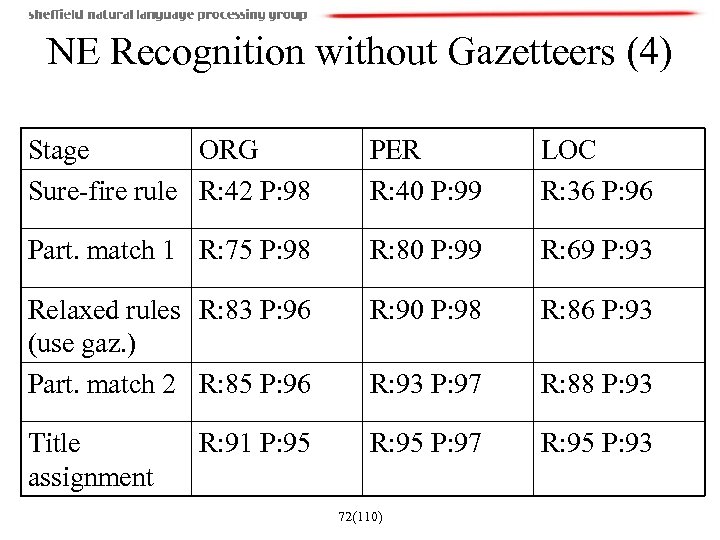

NE Recognition without Gazetteers (4)

| Stage | ORG | PER | LOC |
|---|---|---|---|
| Sure-fire rule | R: 42 P: 98 | R: 40 P: 99 | R: 36 P: 96 |
| Part. match 1 | R: 75 P: 98 | R: 80 P: 99 | R: 69 P: 93 |
| Relaxed rules (use gaz.) | R: 83 P: 96 | R: 90 P: 98 | R: 86 P: 93 |
| Part. match 2 | R: 85 P: 96 | R: 93 P: 97 | R: 88 P: 93 |
| Title assignment | R: 91 P: 95 | R: 95 P: 97 | R: 95 P: 93 |

Fine-grained Classification of NEs [Fleischman 02]
• Finer-grained categorisation needed for applications like question answering
• Person classification into 8 sub-categories – athlete, politician/government, clergy, businessperson, entertainer/artist, lawyer, doctor/scientist, police
• Approach using local context and global semantic information such as WordNet
• Used a decision list classifier and IdentiFinder to automatically construct a training set from untagged data
• Held-out set of 1300 instances hand annotated

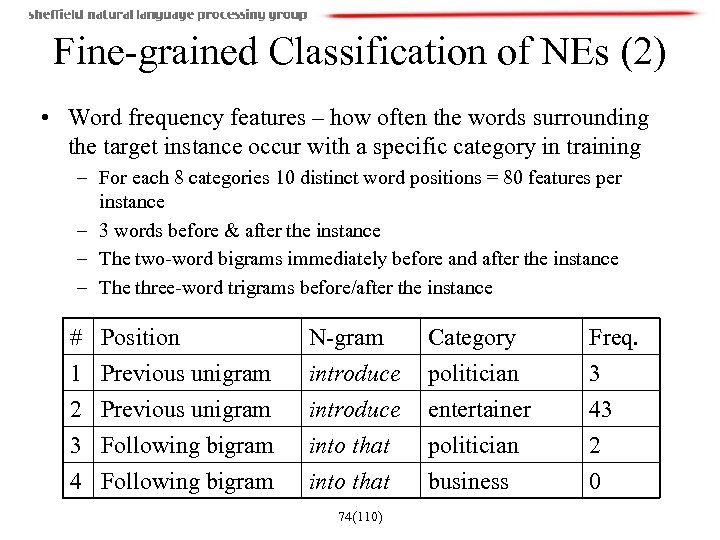

Fine-grained Classification of NEs (2)
• Word frequency features – how often the words surrounding the target instance occur with a specific category in training
  – For each of the 8 categories, 10 distinct word positions = 80 features per instance
  – 3 words before & after the instance
  – The two-word bigrams immediately before and after the instance
  – The three-word trigrams before/after the instance

| # | Position | N-gram | Category | Freq. |
|---|---|---|---|---|
| 1 | Previous unigram | introduce | politician | 3 |
| 2 | Previous unigram | introduce | entertainer | 43 |
| 3 | Following bigram | into that | politician | 2 |
| 4 | Following bigram | into that | business | 0 |
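The arithmetic behind "80 features per instance" can be checked with a small sketch: six unigram positions, two bigrams and two trigrams give 10 positions, each looked up for 8 categories. The position names, the `counts` table layout and both functions are assumptions for illustration, not the authors' implementation.

```python
def context_ngrams(tokens, start, end):
    """Return the 10 context n-grams around the instance tokens[start:end]."""
    before = tokens[max(0, start - 3):start]
    after = tokens[end:end + 3]
    grams = {}
    for k in (1, 2, 3):   # k-th word before / after the instance
        grams[f"prev_unigram_{k}"] = before[-k] if len(before) >= k else None
        grams[f"next_unigram_{k}"] = after[k - 1] if len(after) >= k else None
    grams["prev_bigram"] = " ".join(before[-2:]) if len(before) >= 2 else None
    grams["next_bigram"] = " ".join(after[:2]) if len(after) >= 2 else None
    grams["prev_trigram"] = " ".join(before) if len(before) == 3 else None
    grams["next_trigram"] = " ".join(after) if len(after) == 3 else None
    return grams


def frequency_features(grams, counts, categories):
    """counts[(position, ngram, category)] -> training frequency (0 if unseen)."""
    return {(pos, cat): counts.get((pos, ng, cat), 0)
            for pos, ng in grams.items() for cat in categories}
```

With 8 categories the second function always returns 80 values, one per position and category pair.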

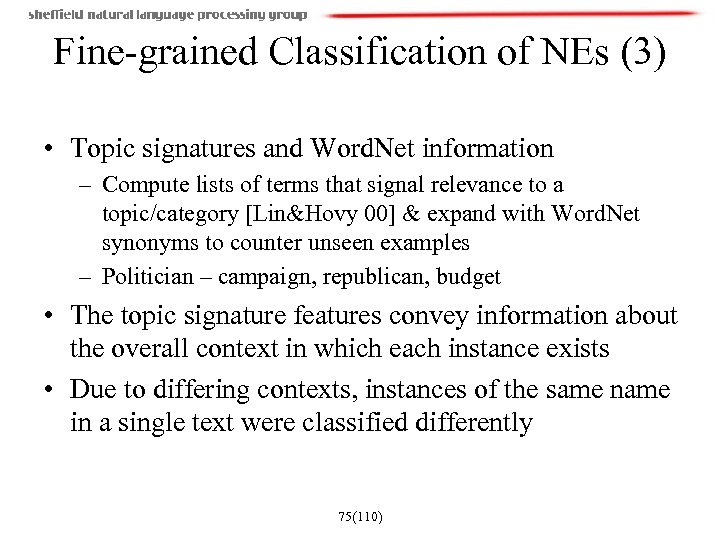

Fine-grained Classification of NEs (3)
• Topic signatures and WordNet information
  – Compute lists of terms that signal relevance to a topic/category [Lin&Hovy 00] & expand with WordNet synonyms to counter unseen examples
  – Politician – campaign, republican, budget
• The topic signature features convey information about the overall context in which each instance exists
• Due to differing contexts, instances of the same name in a single text were classified differently

Fine-grained Classification of NEs (4)
• MemRun chooses the prevailing sub-category for a name based on its most frequent classification
• An orthomatching-like algorithm is developed to match George Bush and George W. Bush
• Experiments with k-NN, Naïve Bayes, SVMs, Neural Networks and C4.5 show that C4.5 is best
• Experiments with different feature configurations – 70.4% with all features discussed here
• Future work: treating finer-grained classification as a WSD task (categories are different senses of a person)
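The MemRun idea, as described on the slide, amounts to a majority vote over all instances of the same name in a text. A minimal sketch under that reading; `mem_run` and its input format are assumptions, and name variants are taken to be already unified by the matching step.

```python
from collections import Counter, defaultdict


def mem_run(predictions):
    """predictions: list of (name, sub_category) for the instances in one text."""
    by_name = defaultdict(list)
    for name, cat in predictions:
        by_name[name].append(cat)
    # Most frequent classification per name; ties go to the label seen first.
    prevailing = {n: Counter(c).most_common(1)[0][0] for n, c in by_name.items()}
    return [(name, prevailing[name]) for name, _ in predictions]
```

After this pass every instance of a name in the text carries the same sub-category.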


Multilingual Named Entity Recognition
• Recent experiments are aimed at NE recognition in multiple languages
• TIDES surprise language evaluation exercise measures how quickly researchers can develop NLP components in a new language
• CoNLL’02, CoNLL’03 focus on language-independent NE recognition

Analysis of the NE Task in Multiple Languages [Palmer&Day 97]

| Language | NE | Time/Date | Numeric exprs. | Org/Per/Loc |
|---|---|---|---|---|
| Chinese | 4454 | 17.2% | 1.8% | 80.9% |
| English | 2242 | 10.7% | 9.5% | 79.8% |
| French | 2321 | 18.6% | 3% | 78.4% |
| Japanese | 2146 | 26.4% | 4% | 69.6% |
| Portuguese | 3839 | 17.7% | 12.1% | 70.3% |
| Spanish | 3579 | 24.6% | 3% | 72.5% |

Analysis of Multilingual NE (2)
• Numerical and time expressions are very easy to capture using rules
• Together they constitute about 20-30% of all NEs
• All numerical expressions in the 6 languages required only 5 patterns
• Time expressions similarly require only a few rules (fewer than 30 per language)
• Many of these rules are reusable across the languages

Analysis of Multilingual NE (3)
• The authors suggest a method for calculating the lower bound for system performance given a corpus in the target language
• Conclusion: much of the NE task can be achieved by simple string analysis and common phrasal contexts
• Zipf’s law: the prevalence of frequent phenomena allows high scores to be achieved directly from the training data
• Chinese, Japanese, and Portuguese corpora had a lower bound above 70%
• Substantial further advances require language specificity

What is needed for multilingual NE
• Extensive support for non-Latin scripts and text encodings, including conversion utilities
  – Automatic recognition of encoding [Ignat et al 03]
  – Occupied up to 2/3 of the TIDES Hindi effort
• Bi-lingual dictionaries
• Annotated corpus for evaluation
• Internet resources for gazetteer list collection (e.g., phone books, yellow pages, bi-lingual pages)

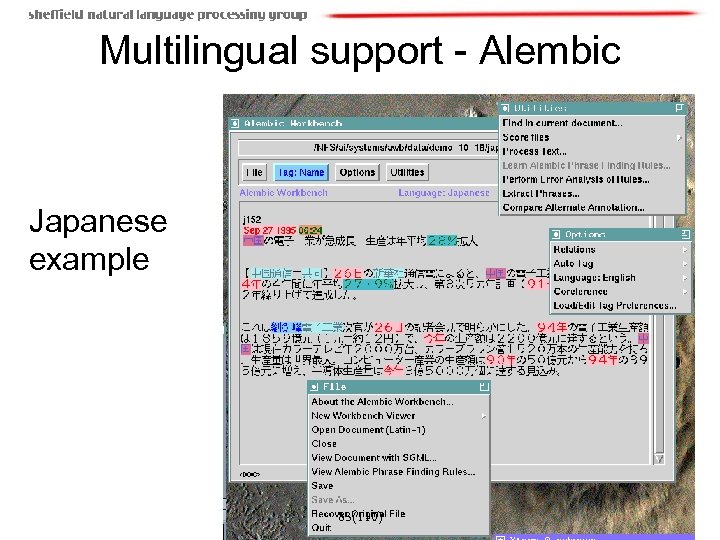

Multilingual support - Alembic
Japanese example

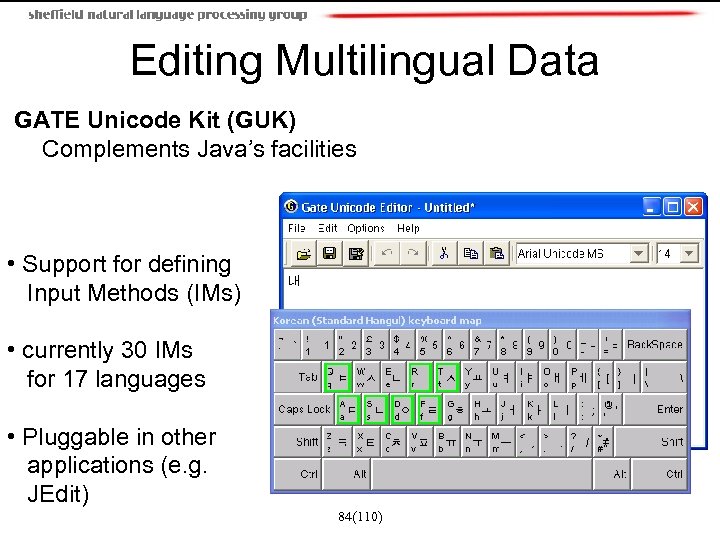

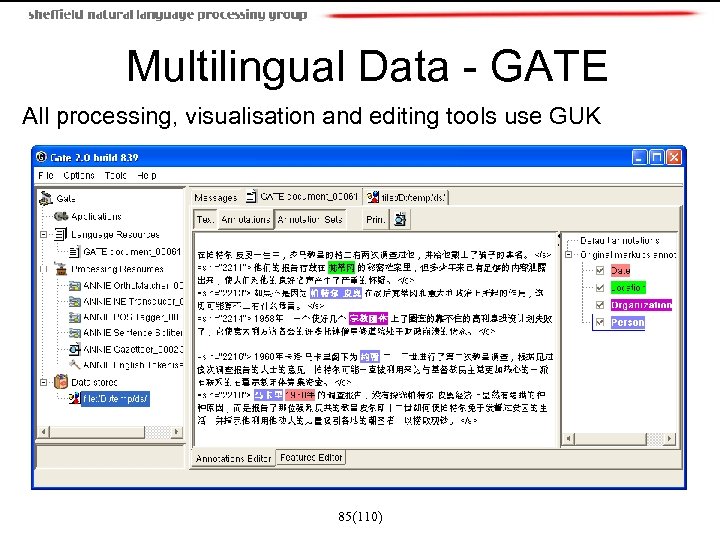

Editing Multilingual Data
GATE Unicode Kit (GUK): complements Java’s facilities
• Support for defining Input Methods (IMs)
• Currently 30 IMs for 17 languages
• Pluggable in other applications (e.g. JEdit)

Multilingual Data - GATE
All processing, visualisation and editing tools use GUK

Gazetteer-based Approach to Multilingual NE [Ignat et al 03]
• Deals with locations only
• Even more ambiguity than in one language:
  – Multiple places that share the same name, such as the fourteen cities and villages in the world called ‘Paris’
  – Place names that are also words in one or more languages, such as ‘And’ (Iran), ‘Split’ (Croatia)
  – Places have varying names in different languages (Italian ‘Venezia’ vs. English ‘Venice’, German ‘Venedig’, French ‘Venise’)

Gazetteer-based multilingual NE (2)
• Disambiguation module applies heuristics based on location size and country mentions (prefer the locations from the country mentioned most)
• Performance evaluation:
  – 853 locations from 80 English texts
  – 96.8% precision
  – 96.5% recall
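The two heuristics can be combined in a small sketch. How the original module weighs them is not stated on the slide, so the ordering here (country mentions first, size as tie-breaker) is an assumption, as are the `disambiguate` function, the record fields and the population figures used in any test data.

```python
from collections import Counter


def disambiguate(candidates, country_mentions):
    """Pick one gazetteer entry for an ambiguous place name.

    candidates: list of dicts with 'name', 'country', 'population'.
    country_mentions: country names mentioned in the text (with repeats).
    """
    mentions = Counter(country_mentions)
    # Prefer the country mentioned most; fall back on the larger place.
    return max(candidates,
               key=lambda c: (mentions[c["country"]], c["population"]))
```

With no country mentioned at all, the size heuristic alone decides, so the largest place with that name wins.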

Machine Learning for Multilingual NE
• CoNLL 2002 and 2003 shared tasks were NE in Spanish, Dutch, English, and German
• The most popular ML techniques used:
  – Maximum Entropy (5 systems)
  – Hidden Markov Models (4 systems)
  – Connectionist methods (4 systems)
• Combining ML methods has been shown to boost results

ML for NE at CoNLL (2)
• The choice of features is at least as important as the choice of ML algorithm
  – Lexical features (words)
  – Part-of-speech
  – Orthographic information
  – Affixes
  – Gazetteers
• External, unmarked data is useful to derive gazetteers and for extracting training instances

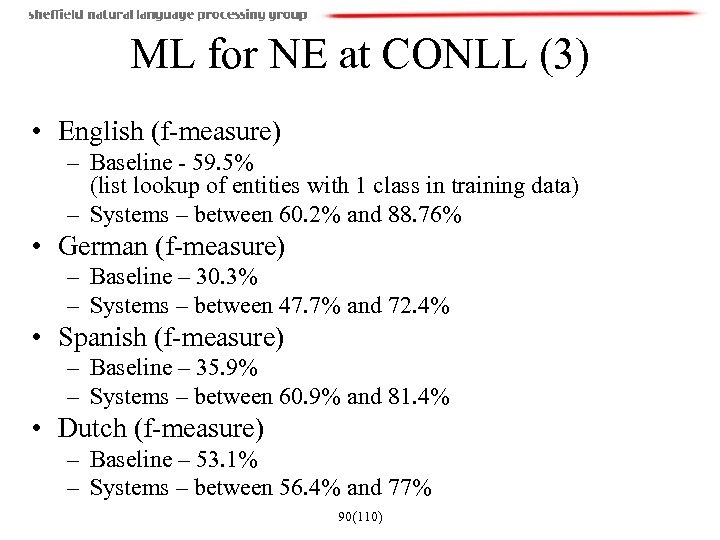

ML for NE at CoNLL (3)
• English (f-measure)
  – Baseline – 59.5% (list lookup of entities with 1 class in training data)
  – Systems – between 60.2% and 88.76%
• German (f-measure)
  – Baseline – 30.3%
  – Systems – between 47.7% and 72.4%
• Spanish (f-measure)
  – Baseline – 35.9%
  – Systems – between 60.9% and 81.4%
• Dutch (f-measure)
  – Baseline – 53.1%
  – Systems – between 56.4% and 77%
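The baseline described above (list lookup of entities that have exactly one class in the training data) can be sketched as follows. The greedy longest-match strategy, the `max_len` cut-off and both function names are assumptions of this illustration rather than the shared task's reference code.

```python
from collections import defaultdict


def build_unique_list(training_entities):
    """Keep only entity strings that occur with exactly one class in training."""
    classes = defaultdict(set)
    for text, cls in training_entities:
        classes[text].add(cls)
    return {text: next(iter(c)) for text, c in classes.items() if len(c) == 1}


def baseline_tag(tokens, unique_list, max_len=5):
    """Greedy longest-match lookup; returns (start, end, class), end exclusive."""
    spans, i = [], 0
    while i < len(tokens):
        for n in range(min(max_len, len(tokens) - i), 0, -1):
            cls = unique_list.get(" ".join(tokens[i:i + n]))
            if cls:
                spans.append((i, i + n, cls))
                i += n
                break
        else:
            i += 1   # no entry starts at this token
    return spans
```

Ambiguous strings are dropped from the list entirely, which keeps precision up at the cost of recall.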

TIDES surprise language exercise
• Collaborative effort between a number of sites to develop resources and tools for various LE tasks on a surprise language
• Tasks: IE (including NE), machine translation, summarisation, cross-language IR
• Dry-run lasted 10 days on the Cebuano language from the Philippines
• Surprise language was Hindi, announced at the start of June 2003; duration 1 month

Language categorisation
• LDC – survey of 300 largest languages (by population) to establish what resources are available
• http://www.ldc.upenn.edu/Projects/TIDES/language-summary-table.html
• Classification dimensions:
  – Dictionaries, news texts, parallel texts, e.g., Bible
  – Script, orthography, words separated by spaces

The Surprise Languages
• Cebuano:
  – Latin script and words are spaced, but
  – Few resources and little work, so
  – Medium difficulty
• Hindi:
  – Non-Latin script, different encodings used, words are spaced, no capitalisation
  – Many resources available
  – Medium difficulty

Named Entity Recognition for TIDES
• Information on other systems and results from TIDES is still unavailable to non-TIDES participants
• Will be made available by the end of 2003 in a special issue of ACM Transactions on Asian Language Information Processing (TALIP), "Rapid Development of Language Capabilities: The Surprise Languages"
• The Sheffield approach is presented below, because it is not subject to these restrictions

Dictionary-based Adaptation of an English POS tagger
• Substituted a Hindi/Cebuano lexicon for the English one in a Brill-like tagger
• Hindi/Cebuano lexicon derived from a bi-lingual dictionary
• Used an empty ruleset since no training data was available
• Used default heuristics (e.g. return NNP for capitalised words)
• Very experimental, but reasonable results
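With an empty ruleset, a Brill-like tagger reduces to lexicon lookup plus default heuristics. A minimal sketch of that reduced behaviour; the `tag` function is illustrative, only the NNP heuristic comes from the slide, and the CD and NN defaults are assumptions.

```python
def tag(tokens, lexicon):
    """lexicon: lower-cased word -> tag, e.g. derived from a bilingual dictionary."""
    tags = []
    for tok in tokens:
        if tok.lower() in lexicon:
            tags.append(lexicon[tok.lower()])   # known word: dictionary tag
        elif tok[:1].isupper():
            tags.append("NNP")                  # default heuristic from the slide
        elif tok.isdigit():
            tags.append("CD")                   # assumed extra default
        else:
            tags.append("NN")                   # assumed fallback for unknown words
    return tags
```

Swapping languages then only requires replacing the `lexicon` argument, which is what makes the approach fast to set up.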

Evaluation of the Tagger
• No formal evaluation was possible
• Estimated accuracy of around 67% on Hindi – evaluated by a native speaker on 1000 words
• Created in 2 person-days
• Results and a tagging service made available to other researchers in TIDES
• Important pre-requisite for NE recognition

NE grammars • Most English JAPE rules based on POS tags and gazetteer lookup • Grammars can be reused for languages with similar word order, orthography etc. • No time to make detailed study of Cebuano, but very similar in structure to English • Most of the rules left as for English, but some adjustments to handle especially dates • Used both English and Cebuano grammars and gazetteers, because NEs appear in both languages 97(110)

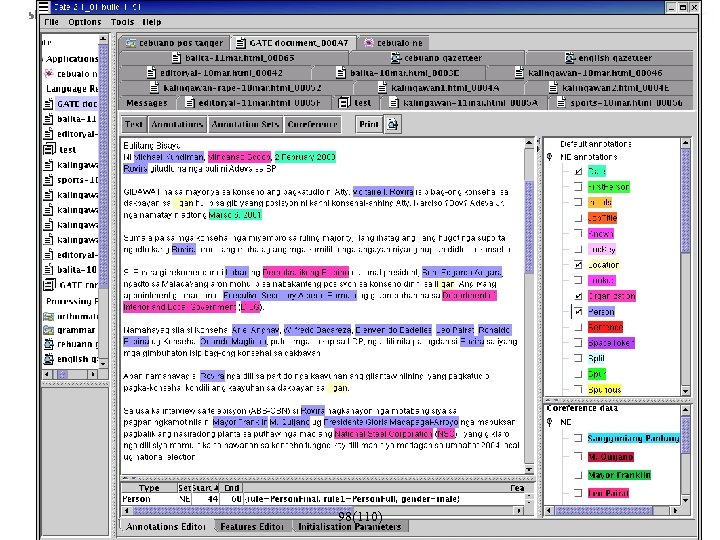

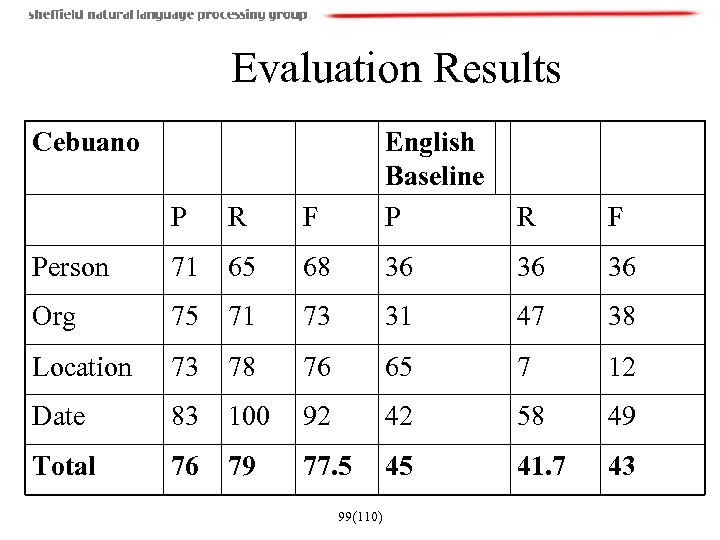

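Evaluation Results

| Entity | Cebuano P | Cebuano R | Cebuano F | English Baseline P | English Baseline R | English Baseline F |
|---|---|---|---|---|---|---|
| Person | 71 | 65 | 68 | 36 | 36 | 36 |
| Org | 75 | 71 | 73 | 31 | 47 | 38 |
| Location | 73 | 78 | 76 | 65 | 7 | 12 |
| Date | 83 | 100 | 92 | 42 | 58 | 49 |
| Total | 76 | 79 | 77.5 | 45 | 41.7 | 43 |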

Structure of the Tutorial
• task definition
• applications
• corpora, annotation
• evaluation and testing
• how to
  – preprocessing
  – approaches to NE
  – baseline
  – rule-based approaches
  – learning-based approaches
• multilinguality
• future challenges

Future challenges
• Towards semantic tagging of entities
• New evaluation metrics for semantic entity recognition
• Expanding the set of entities recognised
  – e.g. vehicles, weapons, substances (food, drug)
• Finer-grained hierarchies, e.g. types of Organizations (government, commercial, educational, etc.), Locations (regions, countries, cities, water, etc.)

Future challenges (2)
• Standardisation of the annotation formats
  – [Ide & Romary 02]: RDF-based annotation standards
  – [Collier et al 02]: multi-lingual named entity annotation guidelines
  – Aimed at defining how to annotate in order to make corpora more reusable and lower the overhead of writing format conversion tools
• MUC used inline markup
• TIDES and ACE used stand-off markup, but two different kinds (XML vs one word per line)
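
The difference between inline and stand-off markup, and the conversion overhead mentioned above, can be illustrated with a short sketch. It assumes MUC-style inline tags of the form `<ENAMEX TYPE="...">...</ENAMEX>` without nesting. The stand-off representation used here, a list of `(start, end, type)` character offsets into the plain text, is a simplification chosen for the example and is not the TIDES or ACE format.

```python
import re
from typing import List, Tuple

Annotation = Tuple[int, int, str]  # (start offset, end offset, entity type)

# Matches non-nested MUC-style elements such as <ENAMEX TYPE="PERSON">...</ENAMEX>.
TAG = re.compile(r'<(ENAMEX|TIMEX|NUMEX) TYPE="([^"]+)">(.*?)</\1>', re.S)


def inline_to_standoff(marked: str) -> Tuple[str, List[Annotation]]:
    """Strip inline tags and return the plain text plus stand-off annotations."""
    pieces: List[str] = []
    annotations: List[Annotation] = []
    pos = 0     # read position in the marked-up input
    offset = 0  # length of the plain text produced so far
    for match in TAG.finditer(marked):
        before = marked[pos:match.start()]
        pieces.append(before)
        offset += len(before)
        text = match.group(3)
        annotations.append((offset, offset + len(text), match.group(2)))
        pieces.append(text)
        offset += len(text)
        pos = match.end()
    pieces.append(marked[pos:])
    return "".join(pieces), annotations


if __name__ == "__main__":
    marked = ('It is the brainchild of <ENAMEX TYPE="PERSON">Dr. Big Head'
              '</ENAMEX>, a scientist at <ENAMEX TYPE="ORGANIZATION">'
              'We Build Rockets Inc.</ENAMEX>')
    plain, anns = inline_to_standoff(marked)
    for start, end, kind in anns:
        print(kind, repr(plain[start:end]))
```

With stand-off annotations the source text stays untouched and several annotation layers can point into it, at the cost of keeping the offsets in sync with the text.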

Towards Semantic Tagging of Entities
• The MUC NE task tagged selected segments of text whenever that text represents the name of an entity.
• In ACE (Automatic Content Extraction), these names are viewed as mentions of the underlying entities. The main task is to detect (or infer) the mentions in the text of the entities themselves.
• ACE focuses on domain- and genre-independent approaches
• The ACE corpus contains newswire, broadcast news (ASR output and cleaned), and newspaper reports (OCR output and cleaned)

ACE Entities
• Dealing with
  – Proper names, e.g. England, Mr. Smith, IBM
  – Pronouns, e.g. he, she, it
  – Nominal mentions, e.g. the company, the spokesman
• Identify which mentions in the text refer to which entities, e.g.
  – Tony Blair, Mr. Blair, he, the prime minister, he
  – Gordon Brown, he, Mr. Brown, the chancellor
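
One way to picture the entity/mention distinction is as a small data structure in which each entity owns the list of its mentions. The class names, the `kind` labels and the helper method below are assumptions for illustration and do not reproduce the ACE annotation schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Mention:
    text: str
    kind: str  # one of "name", "pronoun", "nominal" (labels assumed here)


@dataclass
class Entity:
    entity_type: str
    mentions: List[Mention] = field(default_factory=list)

    def mentions_of_kind(self, kind: str) -> List[str]:
        """Return the surface strings of all mentions of the given kind."""
        return [m.text for m in self.mentions if m.kind == kind]


# The first example chain from the slide, grouped under one entity.
blair = Entity("Person", [
    Mention("Tony Blair", "name"),
    Mention("Mr. Blair", "name"),
    Mention("he", "pronoun"),
    Mention("the prime minister", "nominal"),
    Mention("he", "pronoun"),
])

if __name__ == "__main__":
    print(blair.mentions_of_kind("name"))
    print(blair.mentions_of_kind("nominal"))
```

A MUC-style NE tagger would only output the two "name" mentions. Linking the pronouns and the nominal to the same entity is the additional work the ACE task asks for.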

ACE Entities (2)
• Some entities can have different roles, i.e. behave as Organizations, Locations, or Persons
  – GPEs (geo-political entities)
• New York [GPE – role: Person], flush with Wall Street money, has a lot of loose change jangling in its pockets.
• All three New York [GPE – role: Location] regional commuter train systems were found to be punctual more than 90 percent of the time.

Further information on ACE
• ACE is a closed-evaluation initiative, which does not allow the publication of results
• Further information on guidelines and corpora is available at: http://www.ldc.upenn.edu/Projects/ACE/
• ACE also includes other IE tasks; for further details see Doug Appelt’s presentation: http://www.clsp.jhu.edu/ws03/groups/sparse/presentations/doug.ppt

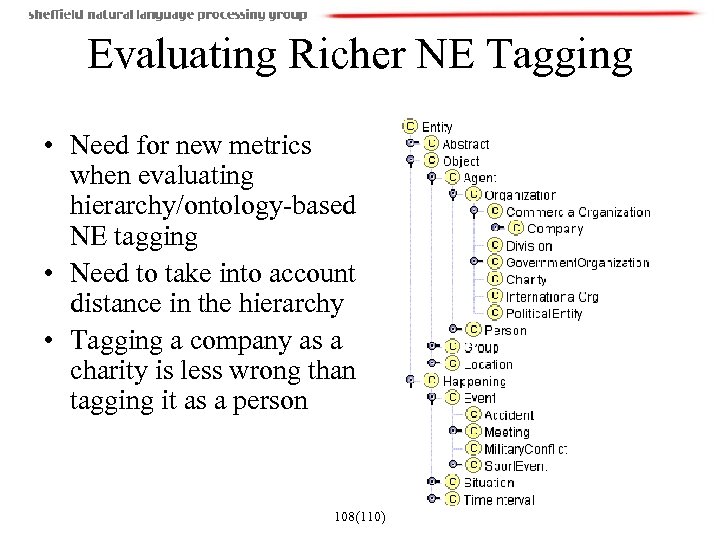

Evaluating Richer NE Tagging
• Need for new metrics when evaluating hierarchy/ontology-based NE tagging
• Need to take into account distance in the hierarchy
• Tagging a company as a charity is less wrong than tagging it as a person
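
A distance-aware score of the kind called for here could look like the sketch below. The type hierarchy, the `tree_distance` function and the linear `partial_credit` formula are all assumptions invented for illustration; the slides do not define a specific metric.

```python
from typing import Dict, List

# Hypothetical type hierarchy, stored as child -> parent.
PARENT: Dict[str, str] = {
    "Person": "Entity",
    "Organization": "Entity",
    "Location": "Entity",
    "Company": "Organization",
    "Charity": "Organization",
    "City": "Location",
}


def path_to_root(node: str, parent: Dict[str, str] = PARENT) -> List[str]:
    """Return the list of nodes from `node` up to the root, inclusive."""
    path = [node]
    while node in parent:
        node = parent[node]
        path.append(node)
    return path


def tree_distance(a: str, b: str, parent: Dict[str, str] = PARENT) -> int:
    """Number of edges between two types via their lowest common ancestor."""
    steps_from_b = {n: i for i, n in enumerate(path_to_root(b, parent))}
    for steps_from_a, n in enumerate(path_to_root(a, parent)):
        if n in steps_from_b:
            return steps_from_a + steps_from_b[n]
    raise ValueError(f"{a!r} and {b!r} share no ancestor")


def partial_credit(gold: str, predicted: str, max_distance: int = 4) -> float:
    """Assumed scoring: 1.0 for an exact match, decreasing linearly with distance."""
    return max(0.0, 1.0 - tree_distance(gold, predicted) / max_distance)


if __name__ == "__main__":
    print(partial_credit("Company", "Company"))
    print(partial_credit("Company", "Charity"))
    print(partial_credit("Company", "Person"))
```

Under these assumptions a company tagged as a charity keeps more credit than one tagged as a person, since the two organisation subtypes share a closer ancestor.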

Further Reading
• Aberdeen J., Day D., Hirschman L., Robinson P. and Vilain M. MITRE: Description of the Alembic System Used for MUC-6. MUC-6 proceedings, pages 141-155. Columbia, Maryland. 1995.
• Black W. J., Rinaldi F., Mowatt D. Facile: Description of the NE System Used For MUC-7. Proceedings of 7th Message Understanding Conference, Fairfax, VA, 29 April - 1 May, 1998.
• Borthwick A. A Maximum Entropy Approach to Named Entity Recognition. Ph.D. Dissertation. 1999.
• Bikel D., Schwartz R., Weischedel R. An algorithm that learns what’s in a name. Machine Learning 34, pp. 211-231, 1999.
• Carreras X., Màrquez L., Padró L. Named Entity Extraction using AdaBoost. The 6th Conference on Natural Language Learning. 2002.
• Chang J. S., Chen S. D., Zheng Y., Liu X. Z., and Ke S. J. Large-corpus-based methods for Chinese personal name recognition. Journal of Chinese Information Processing, 6(3): 7-15, 1992.
• Chen H. H., Ding Y. W., Tsai S. C. and Bian G. W. Description of the NTU System Used for MET2. Proceedings of 7th Message Understanding Conference, Fairfax, VA, 29 April - 1 May, 1998.
• Chinchor N. MUC-7 Named Entity Task Definition Version 3.5. Available from ftp.muc.saic.com/pub/MUC7-guidelines, 1997.

Further reading (2)
• Collins M., Singer Y. Unsupervised models for named entity classification. In Proceedings of the Joint SIGDAT Conference on Empirical Methods in Natural Language Processing and Very Large Corpora, 1999.
• Collins M. Ranking Algorithms for Named-Entity Extraction: Boosting and the Voted Perceptron. Proceedings of the 40th Annual Meeting of the ACL, Philadelphia, pp. 489-496, July 2002.
• Gotoh Y., Renals S. Information extraction from broadcast news. Philosophical Transactions of the Royal Society of London, Series A: Mathematical, Physical and Engineering Sciences, 2000.
• Grishman R. The NYU System for MUC-6 or Where's the Syntax? Proceedings of the MUC-6 workshop, Washington. November 1995.
• [Ign 03a] Ignat C., Pouliquen B., Ribeiro A. and Steinberger R. Extending an Information Extraction Tool Set to Eastern-European Languages. Proceedings of Workshop on Information Extraction for Slavonic and other Central and Eastern European Languages (IESL'03). 2003.
• Krupka G. R., Hausman K. IsoQuest Inc.: Description of the NetOwl™ Extractor System as Used for MUC-7. Proceedings of 7th Message Understanding Conference, Fairfax, VA, 29 April - 1 May, 1998.
• McDonald D. Internal and External Evidence in the Identification and Semantic Categorization of Proper Names. In B. Boguraev and J. Pustejovsky, editors: Corpus Processing for Lexical Acquisition. Pages 21-39. MIT Press. Cambridge, MA. 1996.
• Mikheev A., Grover C. and Moens M. Description of the LTG System Used for MUC-7. Proceedings of 7th Message Understanding Conference, Fairfax, VA, 29 April - 1 May, 1998.
• Miller S., Crystal M., et al. BBN: Description of the SIFT System as Used for MUC-7. Proceedings of 7th Message Understanding Conference, Fairfax, VA, 29 April - 1 May, 1998.

Further reading (3)
• Palmer D., Day D. S. A Statistical Profile of the Named Entity Task. Proceedings of the Fifth Conference on Applied Natural Language Processing, Washington, D.C., March 31 - April 3, 1997.
• Sekine S., Grishman R. and Shinnou H. A decision tree method for finding and classifying names in Japanese texts. Proceedings of the Sixth Workshop on Very Large Corpora, Montreal, Canada, 1998.
• Sun J., Gao J. F., Zhang L., Zhou M., Huang C. N. Chinese Named Entity Identification Using Class-based Language Model. In Proceedings of the 19th International Conference on Computational Linguistics (COLING 2002), pp. 967-973, 2002.
• Takeuchi K., Collier N. Use of Support Vector Machines in Extended Named Entity Recognition. The 6th Conference on Natural Language Learning. 2002.
• Maynard D., Bontcheva K. and Cunningham H. Towards a semantic extraction of named entities. Recent Advances in Natural Language Processing, Bulgaria, 2003.
• Wood M. M., Lydon S. J., Tablan V., Maynard D. and Cunningham H. Using parallel texts to improve recall in IE. Recent Advances in Natural Language Processing, Bulgaria, 2003.
• Maynard D., Tablan V. and Cunningham H. NE recognition without training data on a language you don't speak. ACL Workshop on Multilingual and Mixed-language Named Entity Recognition: Combining Statistical and Symbolic Models, Sapporo, Japan, 2003.