9963437b30cf72c3fd6a6c0bec72e3f9.ppt

- Количество слайдов: 51

Munin, Clouds and Treadmarks Distributed Shared Memory course Taken from a presentation of: Maya Maimon (University of Haifa, Israel). 1

Introduction There are two fundamental models for parallel programming: Shared memory model Direct extension of the conventional uniprocessor model wherein each processor is provided with the abstraction that there is but a single memory in the machine. A update to shared data therefore becomes visible to all the processors in the system. Distributed memory model. each processor has a private memory to which no other processor has direct access. communication by explicit message passing. 2

The Goal Distributed memory machines are easier to build. The shared memory programming model is, however, more attractive since the message passing paradigm requires them to explicitly partition data and manage communication. Using a programming model that supports a global address space, an applications programmer can focus on algorithmic development rather than on managing partitioned data sets and communicating values. A distributed shared memory (DSM) system provides a shared memory programming model on distributed memory machine. 3

Distributed Shared Memory The system consists of the same hardware as distributed memory machine, with the addition of a software layer that provides the abstraction of a single shared memory. In practice, each memory remains physically independent, and all communication takes place through explicit message passing performed by the DSM software layer. DSM systems combine the best features of the two methods. They support the convenient shared memory programming model on distributed memory hardware, which is more scalable and less expensive to build. 4

Software Distributed Shared Memory Node 1 Node 2 Node n Mem Mem network distributed shared memory page based, permissions, … single system image, shared virtual address space, … 5

The challenge Performance What is the source of the overhead in DSM? The large amount of communication that is required to maintain consistency. In another words, the maintenance of the shared memory abstraction. 6

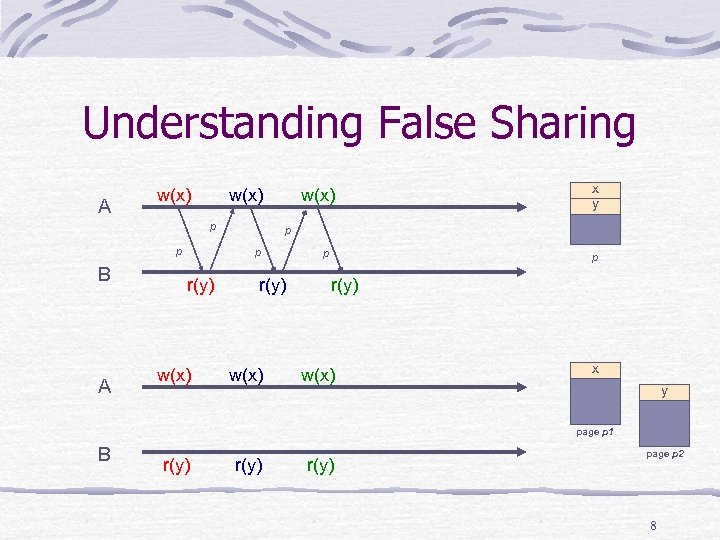

False Sharing False sharing is a situation in which two or more processes access different variables within a page and at least one of the accesses is a write. If only one process is allowed to write to a page at a time, false sharing leads to unnecessary communication, called the “ping pong” effect. 7

Understanding False Sharing A w(x) p p B A w(x) p p r(y) w(x) x y r(y) w(x) p p r(y) w(x) x y page p 1 B r(y) page p 2 8

Techniques for reducing the amount of communication 1) Software release consistency 2) Multiple consistency protocol 3) Write shared protocol 4) an update with timeout mechanism These techniques have been incorporated in the Munin DSM system. 9

The need for Relaxed Consistency Schemes In any implementation of Sequential Consistency there should be some global control mechanism. If processor write to shared data , all the other should know about it , before it can write again. Either of writes or reads require memory synchronization operations. In most implementation writes require some kind of memory synchronization: A w(x) w(y) w(x) B 10

1) Relaxed Consistency Schemes The Relaxed Consistency Schemes are designed to allow less memory synchronization operations. Writes can be delayed, aggregated, eliminated. This results in less communication and therefore higher performance. barrier A w(x) w(y) w(x) B 11

The idea Release consistency exploits the fact that programmers use synchronization to separate accesses to shared variables by different threads. The system then only needs to guarantee that memory is consistent at (select) synchronization points. 12

False Sharing in Relaxed Consistency Schemes False sharing has much smaller overhead in relaxed consistency models. The overhead induced by false sharing can be further reduced by the usage of multiplewriter protocols. Multiple writer protocols allow multiple processes to simultaneously modify their local copy of a shared page. The modifications are merged at certain points of execution. 13

![Release Consistency [Gharachorloo et al. 1990, DASH]* Introduces a special type of variables, called Release Consistency [Gharachorloo et al. 1990, DASH]* Introduces a special type of variables, called](https://present5.com/presentation/9963437b30cf72c3fd6a6c0bec72e3f9/image-14.jpg)

Release Consistency [Gharachorloo et al. 1990, DASH]* Introduces a special type of variables, called synchronization variables or locks. Locks cannot be read or written to. They can be acquired and released. For a lock L those operations are denoted by acquire(L) and release(L) respectively We will say that a process that acquired a lock L but has not released it, holds the lock L. No more than one process can hold a lock L. One process holds the lock while others wait. (*) K. Gharachorloo, D. Lenoski, J. Laudon, P. Gibbons, A. Gupta, and J. L. Hennessy. Memory consistency and event ordering in scalable shared-memory multiprocessors. In Proceedings of the 17 th Annual International Symposium on Computer Architecture, pages 15 --26. IEEE, May 1990. 14

Using Release and Acquire to define execution flow synchronization primitives Let a set of processes release tokens by reaching the operation release in their program order. Let another set (possibly with overlap) acquire those tokens by performing acquire operation, where acquire can proceed only when all tokens have already arrived from all releasing processes. 2 -way synchronization = lock unlock, 1 release, 1 acquire n-way synchronization = barrier, n releases, n acquires PARC’s synch = k-way synchronization 15

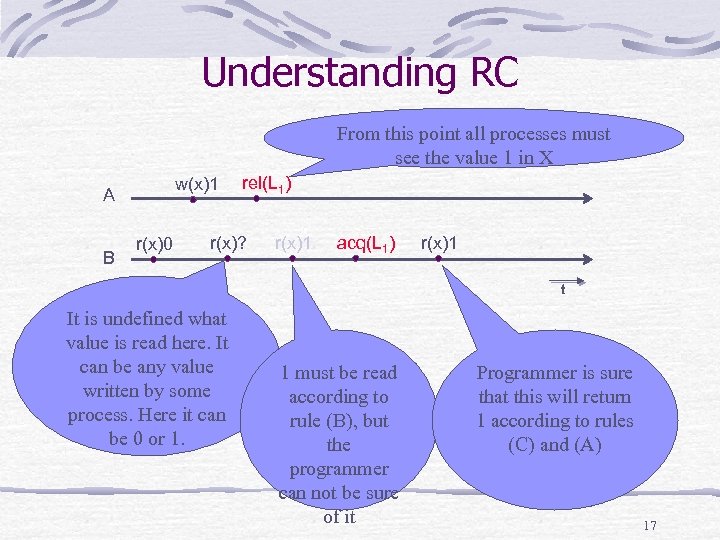

Formal Definition of Release Consistency Conditions for Release Consistency: (A) Before a read or write access is allowed to perform with respect to any other processor, all previous acquire accesses must be performed, and (B) Before a release access is allowed to perform with respect to any other processor, all previous read or write accesses must be performed, and (C) acquire and release accesses are sequentially consistent. 16

Understanding RC From this point all processes must see the value 1 in X w(x)1 A B r(x)0 rel(L 1) r(x)? r(x)1 acq(L 1) r(x)1 t It is undefined what value is read here. It can be any value written by some process. Here it can be 0 or 1. 1 must be read according to rule (B), but the programmer can not be sure of it Programmer is sure that this will return 1 according to rules (C) and (A) 17

Acquire and Release release serves as a memory-synch operation, or a flush of the local modifications to the attention of all other processes. According to the definition, the acquire and release operations are not only used for synchronization of execution, but also for synchronization of memory, i. e. for propagation of writes from/to other processes. This allows to overlap the two expensive kinds of synchronization. This turns out also simpler on the programmer from semantic point of view. 18

Acquire and Release (cont. ) A release followed by an acquire of the same lock guarantees to the programmer that all writes previous to the release will be seen by all reads following the acquire. The idea is to let the programmer decide which blocks of operations need be synchronized, and put them between matching pair of acquire-release operations. In the absence of release/acquire pairs, there is no assurance that modifications will ever propagate between processes. 19

Implementing RC The first implementation was proposed by the inventors of RC and is called DASH combats memory latency by pipelining writes to shared memory. P 2 w(y) w(z) x P 1 w(x) y stalled z ack rel(L) ack • The processor is stalled only when executing a release, at which time it must wait for all its previous writes to perform. 20

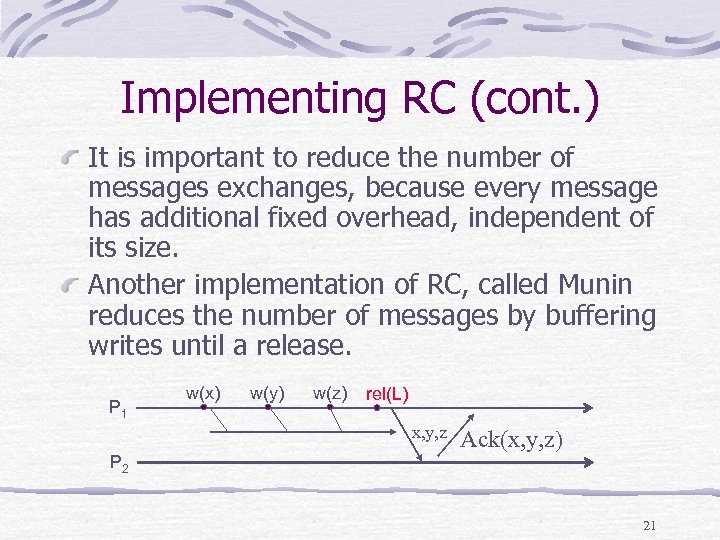

Implementing RC (cont. ) It is important to reduce the number of messages exchanges, because every message has additional fixed overhead, independent of its size. Another implementation of RC, called Munin reduces the number of messages by buffering writes until a release. P 1 w(x) w(y) w(z) rel(L) x, y, z P 2 Ack(x, y, z) 21

![Eager Release Consistency [Carter et al. 1991, Munin]* Implementation of Release Consistency (not a Eager Release Consistency [Carter et al. 1991, Munin]* Implementation of Release Consistency (not a](https://present5.com/presentation/9963437b30cf72c3fd6a6c0bec72e3f9/image-22.jpg)

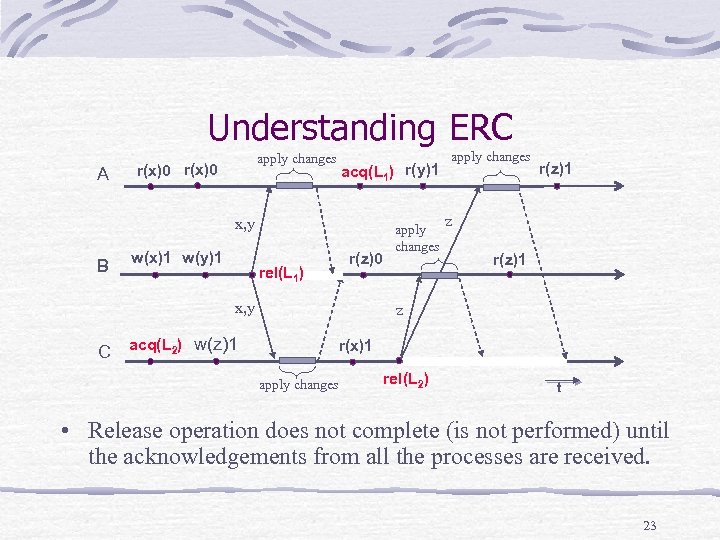

Eager Release Consistency [Carter et al. 1991, Munin]* Implementation of Release Consistency (not a new memory model). Postpone sending modifications to the next release. Upon a release send all accumulated modifications to all caching processes. No memory synchronization operations on an acquire. Upon a miss (no local caching of the variable) get latest modification from latest modifier (need some more control to store its identity, no big deal). (*) John B. Carter, John K. Bennett, and Willy Zwaenepoel. Implementation and Performance of MUNIN. In Proceedings of the 13 th ACM Symposium on Operating Systems Principles, pages 152 --164, October 1991. 22

Understanding ERC A apply changes r(x)0 acq(L 1) r(y)1 x, y B w(x)1 w(y)1 r(z)0 rel(L 1) x, y C acq(L 2) w(z)1 apply changes r(z)1 z r(x)1 apply changes rel(L 2) t • Release operation does not complete (is not performed) until the acknowledgements from all the processes are received. 23

Release Vs. Sequential consistency Ordinary reads and writes can be buffered or pipelined between synchronization points. 2. Ordinary reads and writes following a release do not have to be delayed for the release to complete. 3. An acquire access does not have to delay for previous ordinary reads and writes to complete. 1. 24

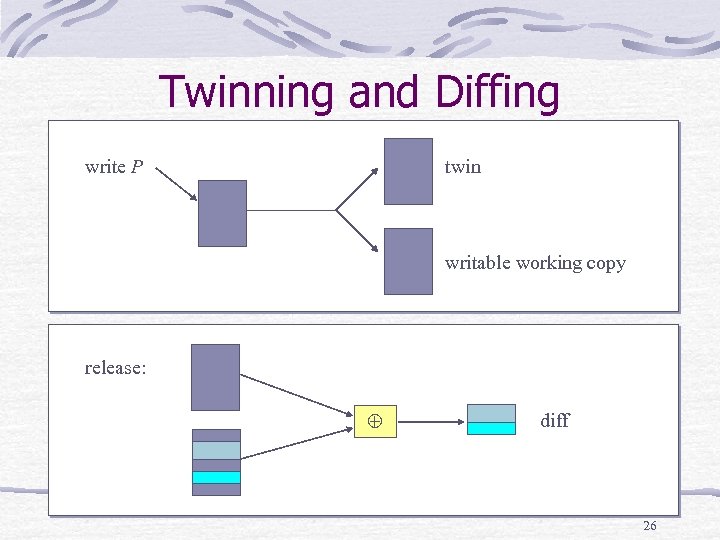

2) Write Shared Protocol (Supporting Multiple Writers in ERC) Modifications are detected by twinning. When writing to unmodified page, its twin is created. When releasing, the final copy of a page is compared to its twin. The resulting difference is called a diff. Twinning and diffing not only allow multiple writers, but also reduce communication. Sending a diff is cheaper than sending an entire page. 25

Twinning and Diffing write P twin writable working copy release: diff 26

3)Multiple Consistency Protocol The use of a single protocol for all shared data leads to a situation where some programs can be handled effectively by a given DSM system, while others cannot. Types of access patterns that should be supported: 1. 2. 3. 4. 5. Conventional shared Read only Migratory Write shared synchronization 27

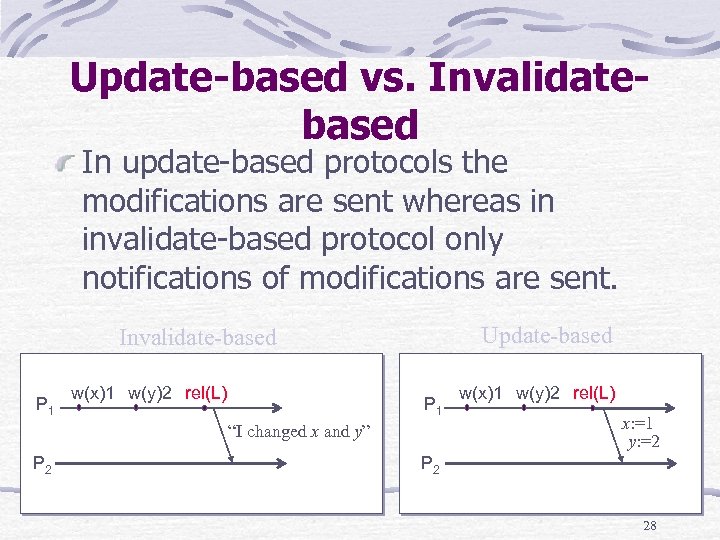

Update-based vs. Invalidatebased In update based protocols the modifications are sent whereas in invalidate based protocol only notifications of modifications are sent. Update-based Invalidate-based P 1 w(x)1 w(y)2 rel(L) P 1 “I changed x and y” P 2 w(x)1 w(y)2 rel(L) x: =1 y: =2 P 2 28

Update-Based vs. Invalidate. Based (cont. ) Invalidations are smaller than the updates. The bigger the coherency unit the bigger is the difference. In invalidation based schemes there can be significant overhead due to access misses. P 1 P 2 w(x)1 w(y)2 rel(L) inv(x) inv(y) acq(L) get(x) r(x) x=1 get(y) y=2 r(y) 29

4)update with timeout mechanism The problem: Updates to a data item are propagated to all of its replicas, including those that are no longer being used. In DSM systems, this problem becomes even more severe, because the main memories of the nodes in which the replicas are kept are very large and it takes a long time before a page gets replaced, if at all. Solution: invalid the old copies. 30

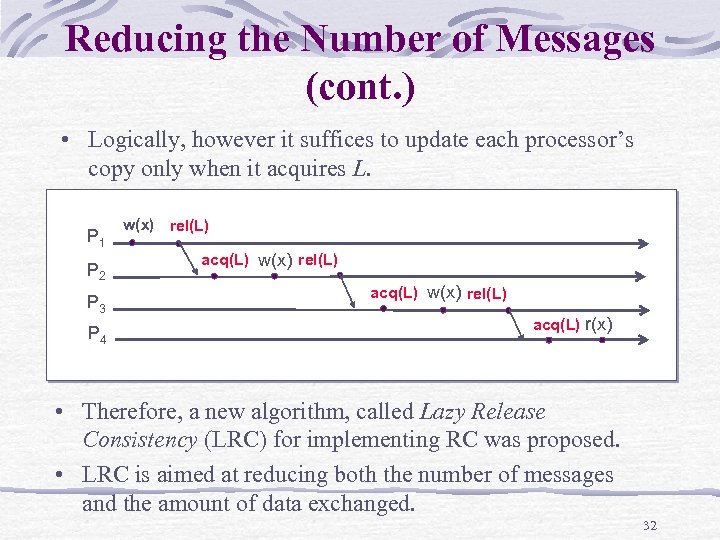

Reducing the Number of Messages In DASH and Munin systems all processes (or all processes that cache the page) see the updates of a process. Consider the following example of execution in Munin: P 1 P 2 P 3 P 4 w(x) rel(L) acq(L) r(x) • There are many unneeded messages. In DASH even more. • This problem exists in invalidation-based schemes as well. 31

Reducing the Number of Messages (cont. ) • Logically, however it suffices to update each processor’s copy only when it acquires L. P 1 P 2 P 3 P 4 w(x) rel(L) acq(L) r(x) • Therefore, a new algorithm, called Lazy Release Consistency (LRC) for implementing RC was proposed. • LRC is aimed at reducing both the number of messages and the amount of data exchanged. 32

![Lazy Release Consistency [Keleher et al. , Treadmarks 1992]* • The idea is to Lazy Release Consistency [Keleher et al. , Treadmarks 1992]* • The idea is to](https://present5.com/presentation/9963437b30cf72c3fd6a6c0bec72e3f9/image-33.jpg)

Lazy Release Consistency [Keleher et al. , Treadmarks 1992]* • The idea is to postpone sending of modifications until a remote processor actually needs them. • Invalidate-based protocol • The BIG advantage: no need to get modifications that are irrelevant, because they are already masked by newer ones. • NOTE: implements a slightly more relaxed memory model than RC! (*) P. Keleher, A. L. Cox, S. Dwarkadas, and W. Zwaenopol. Treadmarks: Distributed shared memory on standard workstations and operating systems. In Proceedings of the 1994 Winter Usenix Conference, pages 115 --132, Jan. 1994. 33

Lazy release consistency Enforces consistency at the time of an acquire. Causes fewer messages to be sent. At the time of a lock release, Munin sends messages to all processors who cache data modified by the releasing processor. In contrast, in lazy release consistency, consistency messages only travel between the last releaser and the new acquirer. But : Somewhat more complicated After a release, Munin can forget about all modifications the releasing processor made prior to the release. 34

Multiple writer protocol in Treadmarks The problem with a single writer protocol. False sharing can cause single writer protocols to perform even worse. multiple writer protocols allow multiple writers to simultaneously modify the same page, with consistency traffic deferred until a later time, in particular until synchronization occurs. Using diff. 35

Multiple writer protocol in Treadmarks The primary benefit of using diffs is they can be used to implement multiple writerprotocols, but they can also significantly reduce overall bandwidth requirements because diffs are typically much smaller than a page. 36

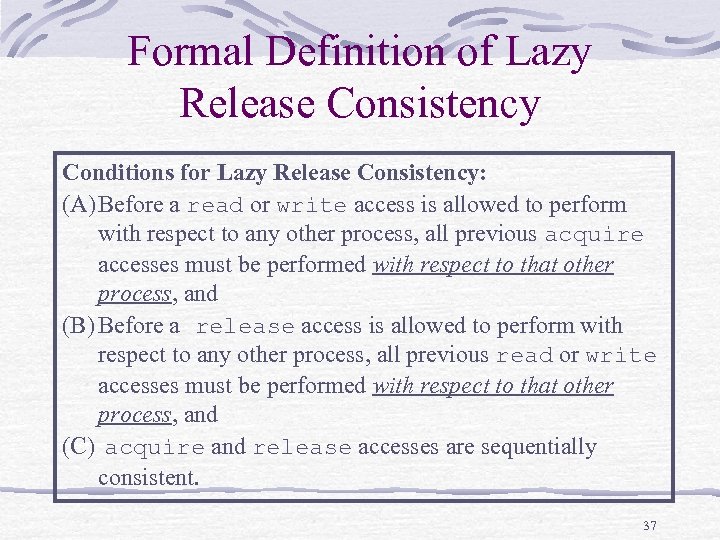

Formal Definition of Lazy Release Consistency Conditions for Lazy Release Consistency: (A) Before a read or write access is allowed to perform with respect to any other process, all previous acquire accesses must be performed with respect to that other process, and (B) Before a release access is allowed to perform with respect to any other process, all previous read or write accesses must be performed with respect to that other process, and (C) acquire and release accesses are sequentially consistent. 37

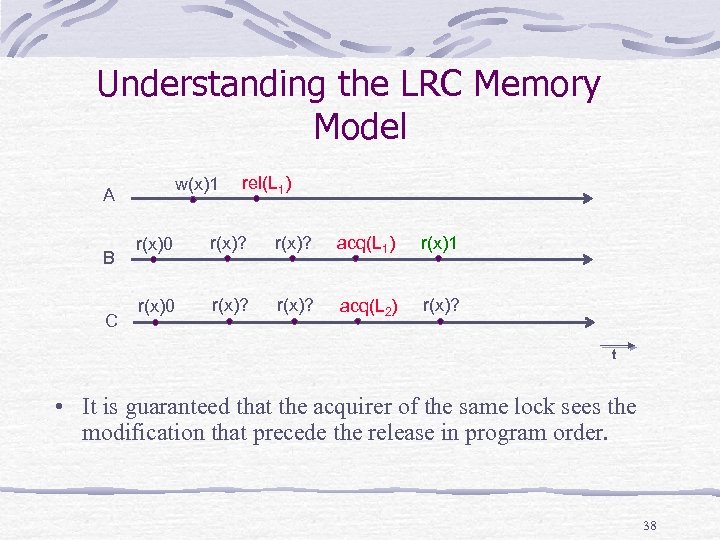

Understanding the LRC Memory Model w(x)1 A B C rel(L 1) r(x)0 r(x)? acq(L 1) r(x)1 r(x)0 r(x)? acq(L 2) r(x)? t • It is guaranteed that the acquirer of the same lock sees the modification that precede the release in program order. 38

CLOUDS Clouds is a distributed operating system that integrates a set of loosely coupled machines into a conceptually centralized system. The system is composed of: Compute servers Data servers User workstations Compute server and data server are logical designations 39

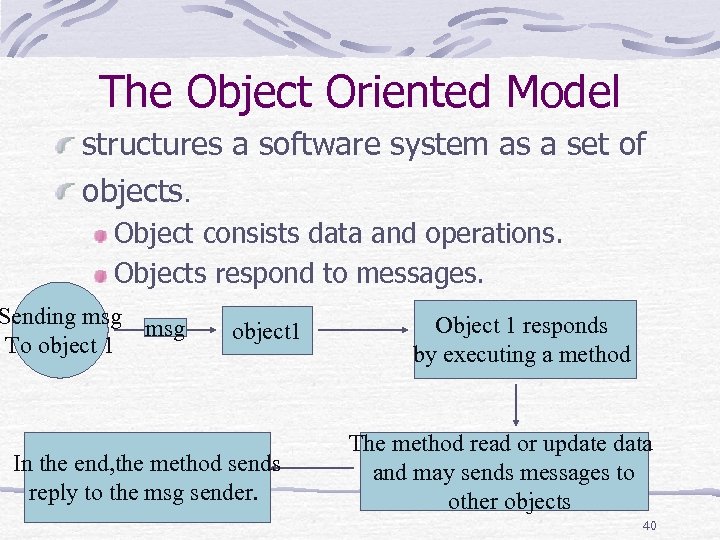

The Object Oriented Model structures a software system as a set of objects. Object consists data and operations. Objects respond to messages. Sending msg To object 1 In the end, the method sends reply to the msg sender. Object 1 responds by executing a method The method read or update data and may sends messages to other objects 40

The Object Thread Model Clouds has a similar structure as object thread, implemented at the operating system level. Clouds objects are large grained encapsulations of code and data which model distinct, persistent virtual address spaces. The contents of an object is an instance of a class is a compiled program module. Unlike virtual address spaces in conventional operating systems, the contents of a Clouds object are long lived. That is, a Clouds object exists forever and survives system crashes and shutdowns (like a file) until explicitly deleted. 41

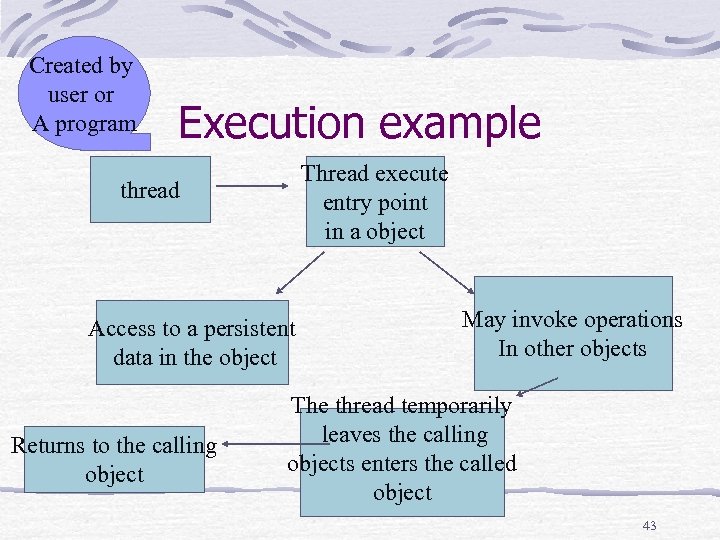

Execution A thread is a logical path of execution that executes code in objects, traversing objects as it executes. Unlike processes in a conventional operating system, a Clouds thread is not bound to a single address space, since each object represents a distinct address space. 42

Created by user or A program Execution example Thread execute entry point in a object thread Access to a persistent data in the object Returns to the calling object May invoke operations In other objects The thread temporarily leaves the calling objects enters the called object 43

Clouds… Clouds uses objects as the abstraction of storage and threads as the abstraction of computation. Clouds is a general purpose operating system. It is intended to support all types of languages and applications, distributed or not. All applications can view the system as a monolith, but distributed applications may choose to view the system as loosely coupled compute and data servers. Each compute facility in Clouds has access to all resources in the system. 44

Distributed Computations A novel feature of Clouds that makes distributed programming simpler is the automatic migration of objects via Clouds object invocation and distributed shared memory (DSM) mechanisms. 45

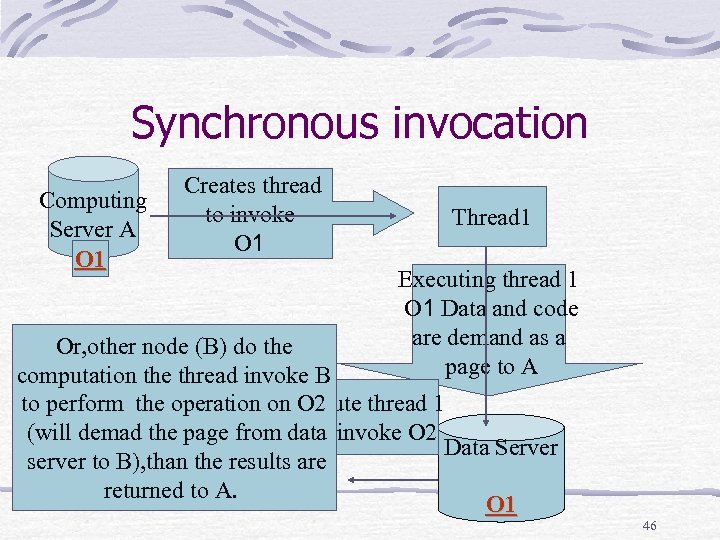

Synchronous invocation Computing Server A O 1 Creates thread to invoke O 1 Thread 1 Executing thread 1 O 1 Data and code are demand as a page to A Or, other node (B) do the If the system chose to invoke computation the thread invoke B on A , the page of O 2 Ecexecute thread 1 to perform the operationare O 2 on on other node and are fromin O 1 invoke O 2 (will demad the page demand data Data Server to node A. results are server to B), than the returned to A. O 1 46

Synchronous invocation This invocation mechanism is called synchronous, because execution in the current environment of the invoking thread waits until the invoked operation completes and results are returned. 47

Asynchronous invocation When thread t 1 executing in object 1 asynchronously invokes an operation of object 2 , a new thread t 2 is created which executes the invoked operation. Asynchronous invocation returns a variable p which can be used as a place holder for object return value of any type. Thread t 1 can continue with execution in object 1 At a later time, thread t 1 can wait for the completion of the operation being executed by t 2 by executing a claim call on p. When claim p returns, t 2 has completed and the results returned by the invoked operation are available to t 1 through p. 48

More details… The differences from RPC Objects migration In Clouds , a DSM coherence protocol is used to ensure that the data in an object is seen by concurrent threads in a consistent fashion even if they are executing on different compute servers. Since several threads can simultaneously execute in an object, it is necessary to coordinate access to the object data. This is handled by Clouds object programmers using Clouds system synchronization mechanisms such as semaphores and locks. Through these mechanisms, threads may synchronize their actions regardless of where they execute. 49

advantages An application programmer does not have to be aware of distribution. A distributed program can be written as a centralized program where processes communicate and synchronize using shared memory. The degree of distribution or concurrency does not have to be decided at the time the program is written. Clouds treats computation and storage orthogonally. Second, Clouds uses a single level persistent store for all objects. 50

Summary Munin-reduced communication mainly by release consistency. Treadmarkslazy release consistency Clouds distributed computation and data. 51

9963437b30cf72c3fd6a6c0bec72e3f9.ppt