
- Number of slides: 15

Multiperspective Perceptron Predictor
Daniel A. Jiménez
Department of Computer Science & Engineering
Texas A&M University


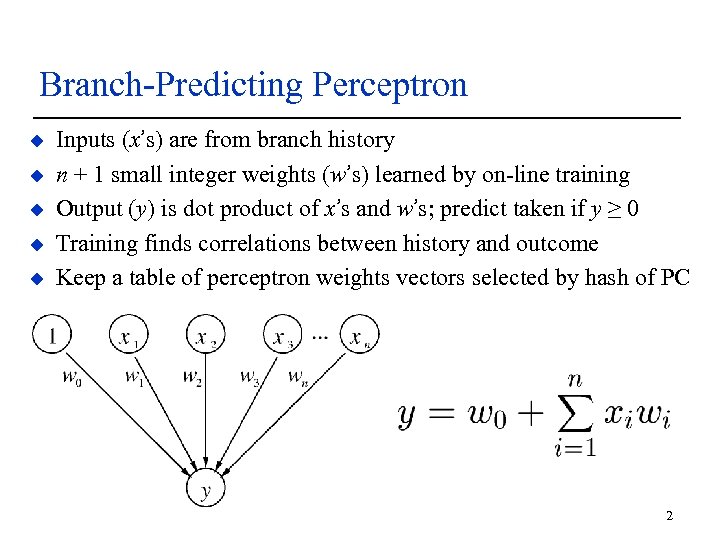

Branch-Predicting Perceptron

- Inputs (x’s) are from branch history
- n + 1 small integer weights (w’s) learned by on-line training
- Output (y) is the dot product of x’s and w’s; predict taken if y ≥ 0
- Training finds correlations between history and outcome
- Keep a table of perceptron weight vectors selected by hash of PC
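The prediction and training steps on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the author's implementation: the class name, the modulo hash of the PC, the weight saturation limit, and the default training threshold `theta` are all assumptions that do not appear in the slides.

```python
class PerceptronPredictor:
    """Table of perceptron weight vectors, selected by a hash of the PC."""

    def __init__(self, num_entries=64, history_length=8, theta=None, weight_max=127):
        self.n = history_length
        # n + 1 small integer weights per entry; index 0 is the bias weight.
        self.table = [[0] * (self.n + 1) for _ in range(num_entries)]
        # Global history as bipolar inputs: +1 = taken, -1 = not taken.
        self.history = [-1] * self.n
        # Training threshold (assumption: a heuristic default, not from the slides).
        self.theta = int(1.93 * self.n + 14) if theta is None else theta
        self.weight_max = weight_max

    def _weights(self, pc):
        # Hypothetical hash: plain modulo of the PC.
        return self.table[pc % len(self.table)]

    def output(self, pc):
        # y = bias weight + dot product of weights and history inputs.
        w = self._weights(pc)
        return w[0] + sum(wi * xi for wi, xi in zip(w[1:], self.history))

    def predict(self, pc):
        return self.output(pc) >= 0  # predict taken if y >= 0

    def update(self, pc, taken):
        y = self.output(pc)
        t = 1 if taken else -1
        # Train on a misprediction or when the output magnitude is small.
        if (y >= 0) != taken or abs(y) <= self.theta:
            w = self._weights(pc)
            inputs = [1] + self.history  # the bias input is always 1
            for i, xi in enumerate(inputs):
                w[i] = max(-self.weight_max, min(self.weight_max, w[i] + t * xi))
        # Shift the new outcome into the history.
        self.history = [t] + self.history[:-1]
```

A weight grows when its history bit agrees with the outcome and shrinks when it disagrees, which is how training picks up correlations between history and outcome.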


Neural Prediction in Current Processors

- We introduced the perceptron predictor [Jiménez & Lin 2001]
  - I and others improved it considerably through 2011
- Today, Oracle SPARC T4 contains S3 core with
  - “perceptron branch prediction”
  - “branch prediction using a simple neural net algorithm”
  - Their IEEE Micro paper cites our HPCA 2001 paper
  - You can buy one today
- Today, AMD “Bobcat,” “Jaguar” and probably other cores
  - Have a “neural net logic branch predictor”
  - You can buy one today


Hashed Perceptron

- Introduced by Tarjan and Skadron 2005
- Breaks the 1-1 correspondence between history bits and weights
- Basic idea:
  - Hash segments of branch history into different tables
  - Sum weights selected by hash functions, apply threshold to predict
  - Update the weights using perceptron learning
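The basic idea above can be sketched as follows. The segment boundaries, table size, hash function, threshold, and weight range are illustrative assumptions chosen for this sketch; they are not taken from the slides or from Tarjan and Skadron's paper.

```python
class HashedPerceptron:
    def __init__(self, segments=((0, 4), (4, 8), (8, 16)), table_bits=8,
                 theta=10, weight_max=31):
        self.segments = segments          # (start, end) bit ranges of the history
        self.size = 1 << table_bits
        self.tables = [[0] * self.size for _ in segments]  # one table per segment
        self.history = 0                  # bit 0 = most recent outcome
        self.history_bits = max(end for _, end in segments)
        self.theta = theta
        self.weight_max = weight_max

    def _indices(self, pc):
        indices = []
        for start, end in self.segments:
            segment = (self.history >> start) & ((1 << (end - start)) - 1)
            # Hypothetical hash: multiplicative mix of the segment, XORed with the PC.
            indices.append(((segment * 0x9E3779B1) ^ pc) % self.size)
        return indices

    def _sum(self, pc):
        # One weight per table, selected by that table's hash.
        return sum(t[i] for t, i in zip(self.tables, self._indices(pc)))

    def predict(self, pc):
        return self._sum(pc) >= 0

    def update(self, pc, taken):
        y = self._sum(pc)
        # Perceptron learning: train on a misprediction or a low-magnitude sum.
        if (y >= 0) != taken or abs(y) <= self.theta:
            step = 1 if taken else -1
            for table, i in zip(self.tables, self._indices(pc)):
                table[i] = max(-self.weight_max, min(self.weight_max, table[i] + step))
        mask = (1 << self.history_bits) - 1
        self.history = ((self.history << 1) | int(taken)) & mask
```

Because each weight is selected by a hash of a whole history segment, a single weight can stand for a pattern of several outcomes rather than for one history bit.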


Multiperspective Idea

- Rather than just global/local history, use many features
- Multiple perspectives on branch history
- Multiperspective Perceptron Predictor
  - Hashed Perceptron
    - Sum weights indexed by hashes of features
    - Update weights using perceptron training
    - Contribution is a wide range of features


Traditional Features

- GHIST(a, b) – hash of the a-th through b-th most recent branch outcomes
- PATH(a, b) – hash of the a most recent PCs, shifted by b
- LOCAL – 11-bit local history
  - I DO ADVOCATE FOR LOCAL HISTORY IN REAL BRANCH PREDICTORS!
- GHISTPATH – combination of GHIST and PATH
- SGHISTPATH – alternate formulation allowing range
- BIAS – bias of the branch to be taken regardless of history


Novel Features

- IMLI – from Seznec’s innermost loop iteration counter work:
  - When a backward branch is taken, count up
  - When a backward branch is not taken, reset counter
- I propose an alternate IMLI
  - When a forward branch is not taken, count up
  - When a forward branch is taken, reset counter
  - This represents loops where the decision to continue is at the top
  - Typical in code compiled for size or by JIT compilers
  - Forward IMLI works better than backward IMLI on these traces
  - I use both forward and backward in the predictor
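The two counters described on this slide can be sketched as below. The class name, the saturation limit `max_count`, and the test `target < pc` for "backward" are assumptions made for illustration.

```python
class ImliCounters:
    """Backward and forward innermost-loop iteration counters (sketch)."""

    def __init__(self, max_count=1023):
        self.backward = 0
        self.forward = 0
        self.max_count = max_count  # assumed saturation limit

    def update(self, pc, target, taken):
        if target < pc:
            # Backward branch: loop-closing branch at the bottom of the loop.
            if taken:
                self.backward = min(self.backward + 1, self.max_count)
            else:
                self.backward = 0
        else:
            # Forward branch: loop-exit test at the top of the loop.
            if not taken:
                self.forward = min(self.forward + 1, self.max_count)
            else:
                self.forward = 0
```

Either counter value can then be hashed with the PC to index a weight table, like any other feature.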


Novel Features cont.

- MODHIST – modulo history
  - Branch histories become misaligned when some branches are skipped
  - MODHIST records only branches where PC ≡ 0 (mod n) for some n
  - Hopefully branches responsible for misalignment will not be recorded
  - Try many values of n to come up with a good MODHIST feature
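A minimal sketch of a modulo history register follows. The class name and the `length` parameter are hypothetical, and applying the modulus to the raw PC is an assumption; a real predictor might first drop the low-order alignment bits of the PC.

```python
class ModHist:
    """History of outcomes for branches whose PC is congruent to 0 mod n."""

    def __init__(self, modulus, length):
        self.modulus = modulus
        self.mask = (1 << length) - 1
        self.history = 0  # bit 0 = most recently recorded outcome

    def update(self, pc, taken):
        # Branches with PC not congruent to 0 (mod n) are simply not recorded.
        if pc % self.modulus == 0:
            self.history = ((self.history << 1) | int(taken)) & self.mask
```

In this sketch, `ModHist(modulus=4, length=8)` records the branches at PCs 8 and 12 but ignores the one at PC 9.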


Novel Features cont.

- MODPATH – same idea with path of branch PCs
- GHISTMODPATH – combine two previous ideas
- RECENCY
  - Keep a recency stack of n branch PCs managed with LRU replacement
  - Hash the stack to get the feature
- RECENCYPOS
  - Position (0..n-1) of current branch in recency stack, or n if no match
  - Works surprisingly well
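The recency stack behind RECENCY and RECENCYPOS can be sketched as below. The class and method names and the particular stack hash are assumptions for illustration only.

```python
class RecencyStack:
    """LRU-managed stack of the n most recently seen branch PCs (sketch)."""

    def __init__(self, depth):
        self.depth = depth
        self.stack = []  # index 0 = most recently used

    def position(self):
        raise NotImplementedError  # placeholder replaced below

    def position(self, pc):
        # RECENCYPOS: 0..n-1 if the PC is in the stack, n if there is no match.
        try:
            return self.stack.index(pc)
        except ValueError:
            return self.depth

    def feature_hash(self):
        # RECENCY: hypothetical hash over the stack contents, order-sensitive.
        h = 0
        for pc in self.stack:
            h = (h * 31 + pc) & 0xFFFFFFFF
        return h

    def update(self, pc):
        if pc in self.stack:
            self.stack.remove(pc)      # hit: move to the top
        elif len(self.stack) == self.depth:
            self.stack.pop()           # miss on a full stack: evict the LRU entry
        self.stack.insert(0, pc)
```

With a depth of 3, touching PCs A, B, C leaves A at position 2, and a PC that was never seen reports position 3.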


Novel Features cont.

- BLURRYPATH
  - Shift higher-order bits of branch PC into an array
  - Only record the bits if they don’t match the current bits
  - Parameters are depth of array, number of bits to truncate
  - Indicates region a branch came from rather than the precise location
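One reading of the BLURRYPATH update is sketched below: the PC is truncated to a region number, and the region is shifted into the array only when it differs from the most recently recorded one. The class name and the interpretation of "current bits" as the newest array entry are assumptions.

```python
class BlurryPath:
    """Path history of code regions rather than exact branch PCs (sketch)."""

    def __init__(self, depth, shift):
        self.regions = [0] * depth  # index 0 = most recently recorded region
        self.shift = shift          # number of low-order PC bits to truncate

    def update(self, pc):
        region = pc >> self.shift
        # Record the region only when it differs from the newest entry.
        if region != self.regions[0]:
            self.regions = [region] + self.regions[:-1]
```

With `shift=8`, the branches at 0x1234 and 0x12FF fall in the same region and produce a single entry.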


Novel Features cont.

- ACYCLIC
  - Current PC indexes a small array, recording the branch outcome there
  - The array always has the latest outcome for a given bin of branches
  - Acyclic – loop or repetition behavior is not recorded
  - Parameter is number of bits in the array
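A minimal sketch of the acyclic history follows. The class name, the modulo binning of the PC, and the packing of the array into an integer are assumptions for illustration.

```python
class AcyclicHistory:
    """Array of latest outcomes, one bit per bin of branch PCs (sketch)."""

    def __init__(self, size):
        self.bits = [False] * size

    def update(self, pc, taken):
        # Overwrite the bin for this PC; repeated executions leave one bit.
        self.bits[pc % len(self.bits)] = taken

    def value(self):
        # Pack the array into an integer so it can be hashed like other features.
        v = 0
        for b in self.bits:
            v = (v << 1) | int(b)
        return v
```

A loop branch executed many times still occupies a single bit, which is why repetition does not show up in this history.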


Putting it Together

- Each feature computed, hashed, and XORed with current PC
- Resulting index selects weight from a table
- Weights are summed, thresholded to make prediction
- Weights are updated with perceptron learning
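The indexing and summation steps can be sketched as two small functions. The function names and the multiplicative hash are hypothetical; the slides do not specify which hash is used.

```python
def table_index(feature_value, pc, table_bits):
    """Hash a feature value and XOR it with the PC to index a weight table."""
    mask = (1 << table_bits) - 1
    hashed = (feature_value * 0x9E3779B1) >> 7  # hypothetical multiplicative hash
    return (hashed ^ pc) & mask


def predict(tables, feature_values, pc, table_bits):
    """Sum one weight per feature table; predict taken if the sum is >= 0."""
    total = sum(
        table[table_index(value, pc, table_bits)]
        for table, value in zip(tables, feature_values)
    )
    return total >= 0, total
```

Each entry of `feature_values` would come from one feature (a GHIST segment, an IMLI counter, RECENCYPOS, and so on), with one table per feature.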


Optimizations

- Filter always/never taken branches
- Apply sigmoidal transfer function to weights before summing
- Coefficients for features to emphasize relative accuracy
- Bit width optimization for tables
- Shared magnitudes – two signs share one magnitude
- Alternate prediction on low confidence (see paper)
- Adaptive threshold training
- Hashing some tables together with IMLI and RECENCYPOS
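As an illustration of the first optimization only, a filter for always/never taken branches might look like the sketch below. The slides do not describe the mechanism, so the two-bit-per-entry design, the class name, and the modulo indexing are all assumptions.

```python
class DirectionFilter:
    """Tracks which directions each branch bin has been seen to take (sketch)."""

    def __init__(self, bits=10):
        self.size = 1 << bits
        self.seen_taken = [False] * self.size
        self.seen_not_taken = [False] * self.size

    def lookup(self, pc):
        """Return True/False for a filtered branch, or None to use the main predictor."""
        i = pc % self.size
        taken, not_taken = self.seen_taken[i], self.seen_not_taken[i]
        if taken and not not_taken:
            return True    # so far always taken
        if not_taken and not taken:
            return False   # so far never taken
        return None        # unseen, or seen going both ways

    def update(self, pc, taken):
        i = pc % self.size
        if taken:
            self.seen_taken[i] = True
        else:
            self.seen_not_taken[i] = True
```

Branches answered by the filter would not need to consult or train the weight tables, leaving that capacity for branches that actually change direction.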


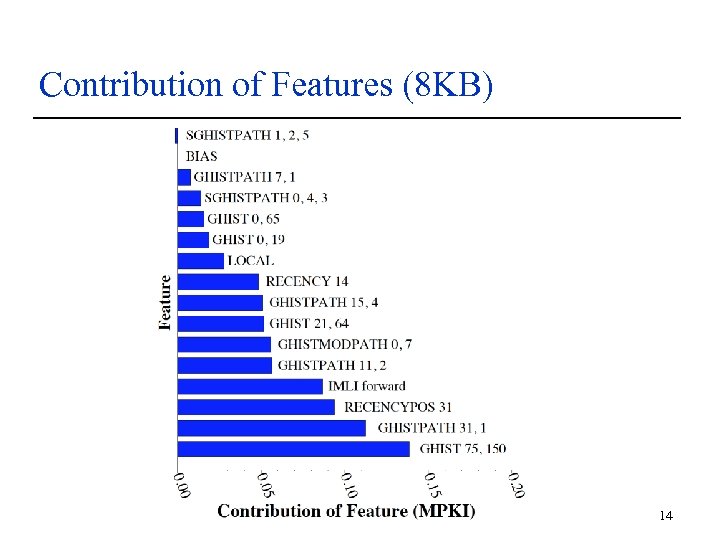

Contribution of Features (8 KB)


Submit to HPCA 2017!
http://hpca2017.org
Note: Deadline is August 1, 2016!
