
- Number of slides: 50

Mohammed and the Mountain
Eric Jul
DIKU, Department of Computer Science, University of Copenhagen

Mohammed and Mount Safa
IF THE MOUNTAIN WILL NOT COME TO MOHAMMED, MOHAMMED WILL GO TO THE MOUNTAIN
“If one cannot get one's own way, one must adjust to the inevitable.”
The legend goes that when the founder of Islam was asked to give proofs of his teaching, he ordered Mount Safa to come to him. When the mountain did not comply, Mohammed raised his hands toward heaven and said, “God is merciful. Had it obeyed my words, it would have fallen on us to our destruction. I will therefore go to the mountain and thank God that he has had mercy on a stiff-necked generation.”

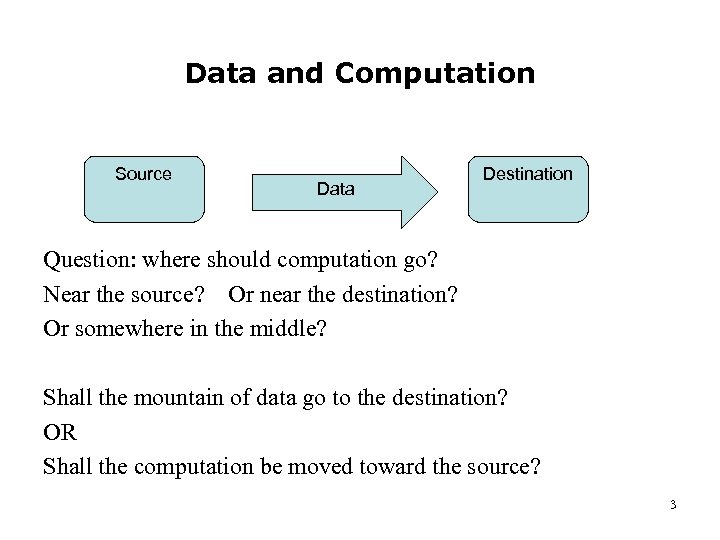

Data and Computation
(Diagram labels: Source, Data, Destination)
Question: where should computation go? Near the source? Or near the destination? Or somewhere in the middle?
Shall the mountain of data go to the destination? OR shall the computation be moved toward the source?

Problem Statement
At which points should the data be processed? And how much? (Just filtering? Content-based?)
Close to destination:
• may be network-inefficient
Close to source:
• more complicated
• may require non-trivial cooperation in network

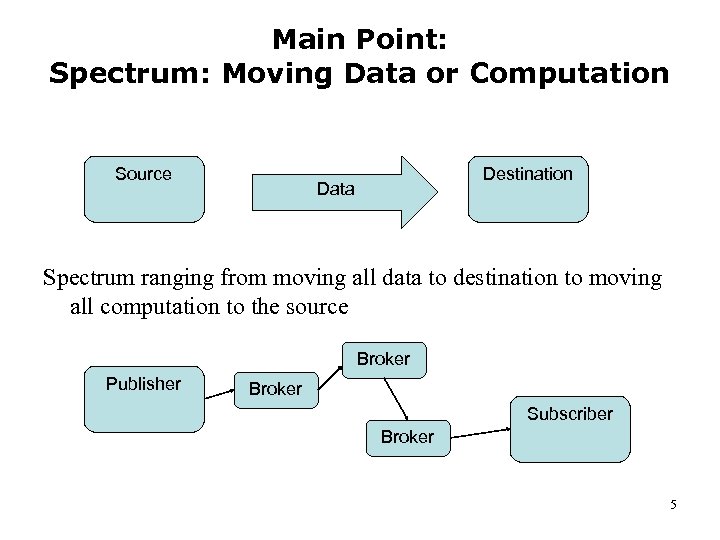

Main Point: Spectrum: Moving Data or Computation
(Diagram labels: Source, Destination, Data)
Spectrum ranging from moving all data to the destination to moving all computation to the source
(Diagram labels: Broker, Publisher, Broker, Subscriber, Broker)

Overview
• Mobility of Data and Computation
• Grid Computing Example
• Mobile Objects in Emerald
• Group Communication in Emerald
• Mobile Grid Applications – Evil Man
• Advice from experiences with dozens of Ph.D. projects

Mobility of Data and Computation
What is computation? Pub-sub systems: just filtering?
Flooding vs. Match-first
Flooding: filter at destination – simple, but network-inefficient
Match-first: network-efficient
Moving work closer to the source so as to reduce the data transferred.

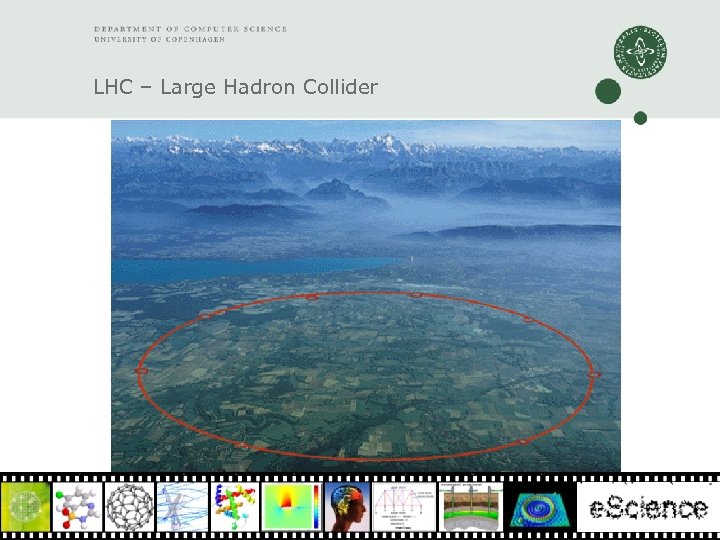
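The contrast between flooding and match-first can be made concrete with a small sketch. The `Event` type, the topic names, and the byte counts below are invented for illustration and are not taken from the talk. The sketch only counts the bytes that would cross the network under each strategy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    topic: str        # hypothetical subscription key
    size_bytes: int   # hypothetical payload size


def flooding(events, wanted_topic):
    """Ship every event across the network and filter at the destination."""
    bytes_on_wire = sum(e.size_bytes for e in events)
    delivered = [e for e in events if e.topic == wanted_topic]
    return delivered, bytes_on_wire


def match_first(events, wanted_topic):
    """Filter near the source and ship only the matching events."""
    delivered = [e for e in events if e.topic == wanted_topic]
    bytes_on_wire = sum(e.size_bytes for e in delivered)
    return delivered, bytes_on_wire


events = [Event("atlas", 1000), Event("cms", 4000), Event("atlas", 500)]
flood_result, flood_bytes = flooding(events, "atlas")
match_result, match_bytes = match_first(events, "atlas")
print(flood_bytes, match_bytes)
```

Both strategies deliver the same events to the subscriber. They differ only in where the filter runs and therefore in how much data is moved.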

Motivating Example with huge data sets
The Large Hadron Collider at CERN
ATLAS project

LHC – Large Hadron Collider


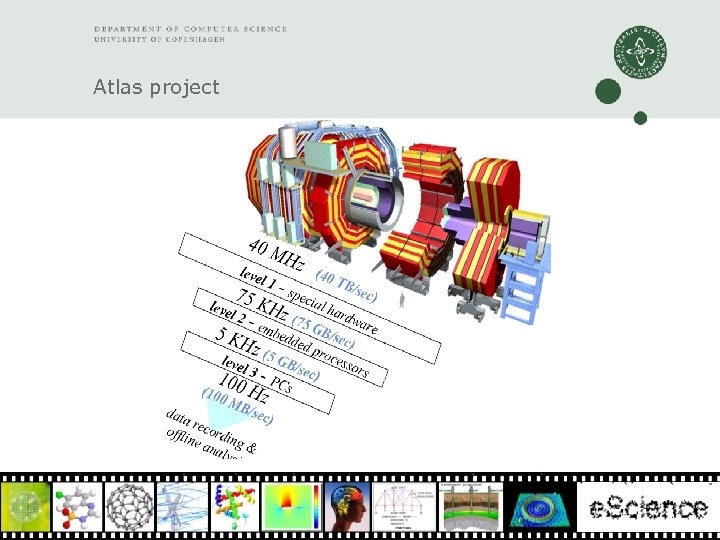

ATLAS project

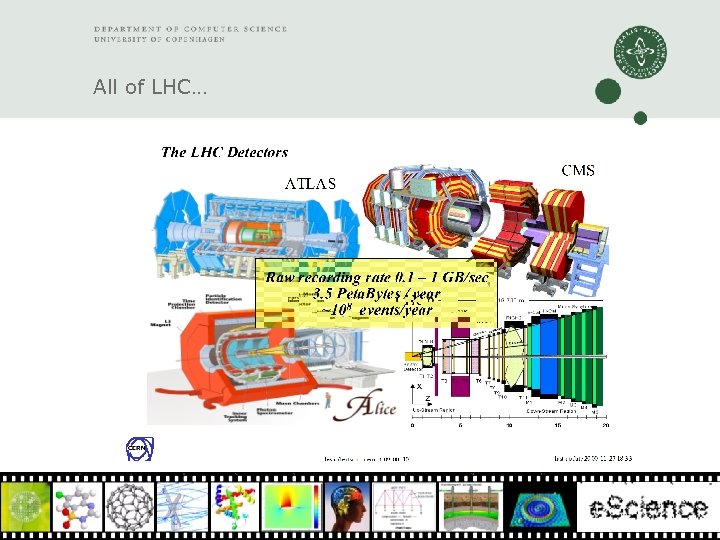

All of LHC…


Problem: Huge amounts of data
The problem is the large amount of data (petabytes!) and the geographical distribution of the scientists wanting to look at or compute upon the data

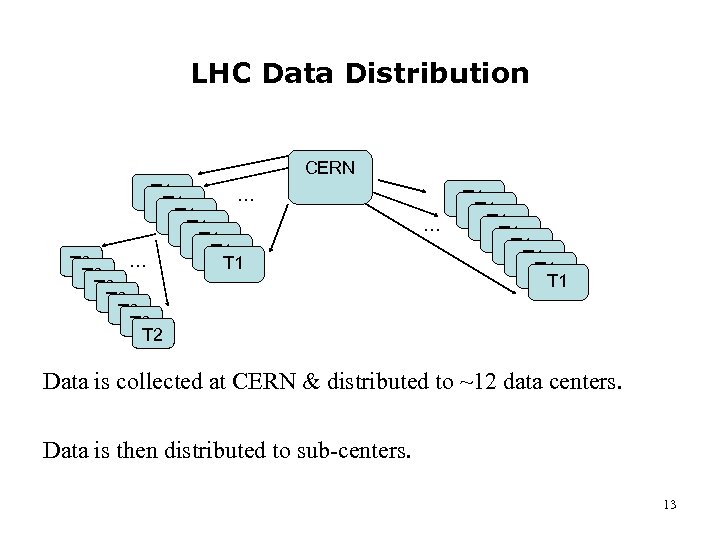
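A back-of-the-envelope calculation shows why petabytes are a "mountain". The link speed below (10 Gbit/s, fully utilized, no protocol overhead) is an assumption chosen for illustration, not a figure from the talk.

```python
def transfer_days(size_bytes: float, link_bits_per_second: float) -> float:
    """Ideal time, in days, to push size_bytes over a single link."""
    seconds = size_bytes * 8 / link_bits_per_second
    return seconds / 86_400  # seconds per day


PETABYTE = 10**15        # decimal petabyte, in bytes
TEN_GBIT = 10 * 10**9    # assumed link speed, in bits per second

days = transfer_days(PETABYTE, TEN_GBIT)
print(f"{days:.2f} days per petabyte")
```

Under these assumptions a single petabyte occupies the link for more than nine days. This is the motivation for asking whether the computation should travel instead.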

LHC Data Distribution
(Diagram: CERN at the top, feeding a row of T1 centers, which in turn feed T2 centers)
Data is collected at CERN and distributed to ~12 data centers. Data is then distributed to sub-centers.

Programs and Data Meet Up
Scientists can access the data and perform computation by submitting jobs to the sub-centers, e.g., via a Grid middleware
So both computation and data are moved – and meet at Grid centers

Techniques to Consider
There are a number of general ideas/schemes to consider:
• Compressing Data
• Decoupling data and metadata, e.g., video & video info
• Content Routing Protocol (Pascal’s talk)
• Caching
• Multicast
• Providing Mobile Objects and Mobile Computation

Mobile Objects
Problem: efficient placement of data and computation in a distributed system
A solution: an Object-Oriented Language with:
– objects
– mobility of objects
– transparent remote calls
– group communication for pub-sub

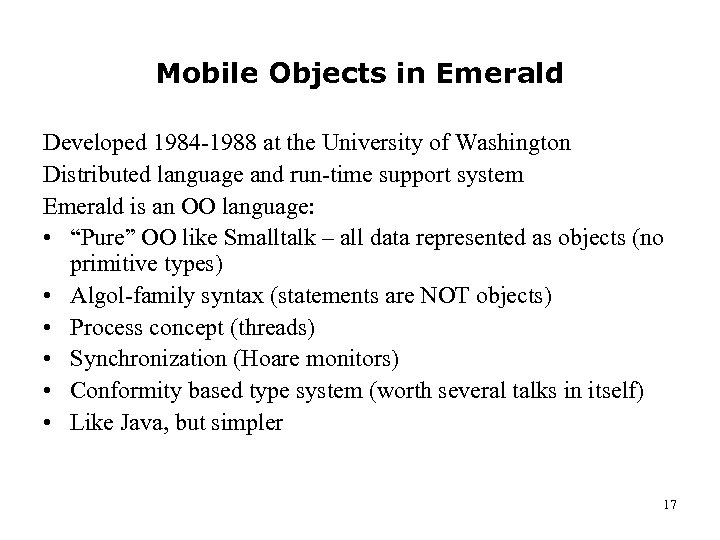

Mobile Objects in Emerald
Developed 1984-1988 at the University of Washington
Distributed language and run-time support system
Emerald is an OO language:
• “Pure” OO like Smalltalk – all data represented as objects (no primitive types)
• Algol-family syntax (statements are NOT objects)
• Process concept (threads)
• Synchronization (Hoare monitors)
• Conformity-based type system (worth several talks in itself)
• Like Java, but simpler

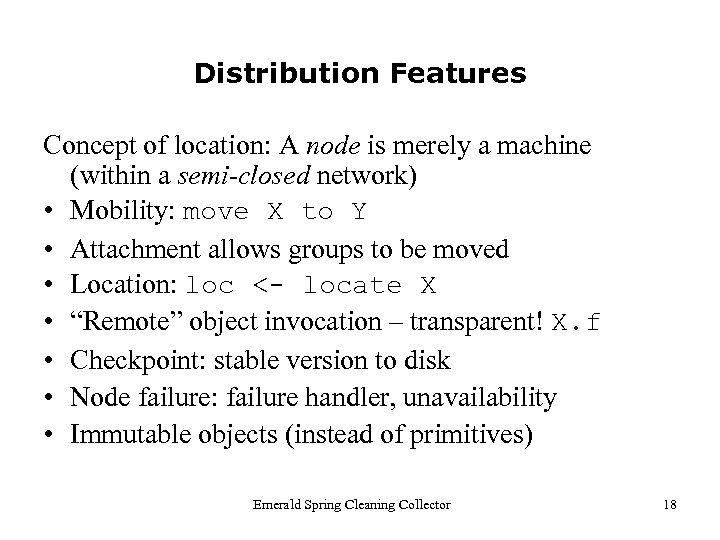

Distribution Features
Concept of location: A node is merely a machine (within a semi-closed network)
• Mobility: move X to Y
• Attachment allows groups to be moved
• Location: loc <- locate X
• “Remote” object invocation – transparent! X.f
• Checkpoint: stable version to disk
• Node failure: failure handler, unavailability
• Immutable objects (instead of primitives)
Emerald Spring Cleaning Collector

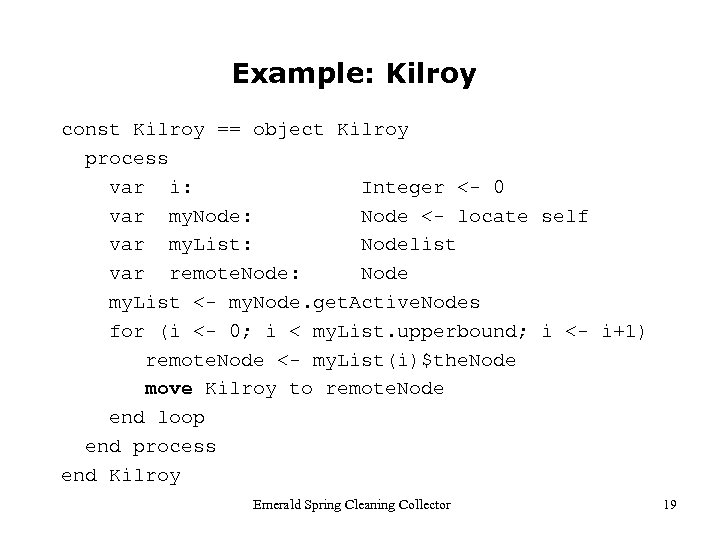

Example: Kilroy

    const Kilroy == object Kilroy
      process
        var i: Integer <- 0
        var myNode: Node <- locate self
        var myList: Nodelist
        var remoteNode: Node
        myList <- myNode.getActiveNodes
        for (i <- 0; i < myList.upperbound; i <- i+1)
          remoteNode <- myList(i)$theNode
          move Kilroy to remoteNode
        end loop
      end process
    end Kilroy

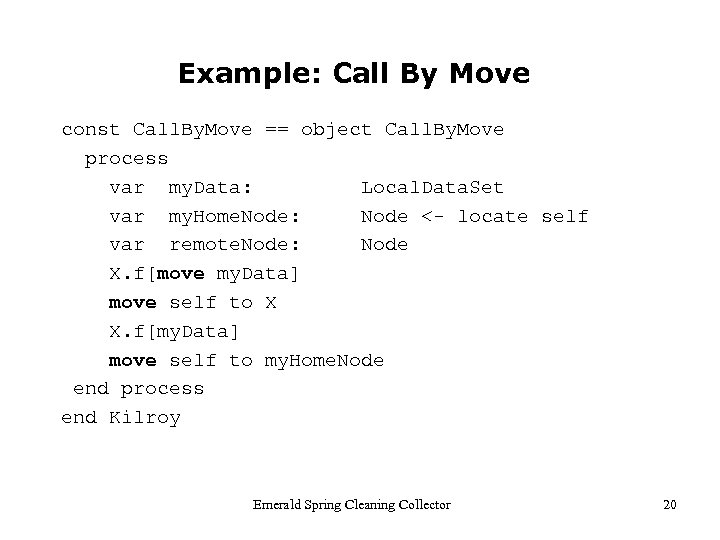
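For readers who do not know Emerald, the Kilroy idea (an object that asks for the active nodes and then moves itself to each one in turn) can be imitated in a few lines of Python. This is a single-process simulation under stated assumptions: `Node`, `MobileObject`, and `kilroy_tour` are hypothetical names, and "moving" only updates bookkeeping rather than shipping state over a network.

```python
class Node:
    """A machine in the simulated network."""

    def __init__(self, name):
        self.name = name
        self.residents = set()


class MobileObject:
    """An object that records where it currently lives."""

    def __init__(self, name, home):
        self.name = name
        self.location = None
        self.visited = []
        self.move_to(home)

    def move_to(self, node):
        # Moving to the node we are already on is a no-op.
        if node is self.location:
            return
        if self.location is not None:
            self.location.residents.discard(self)
        node.residents.add(self)
        self.location = node
        self.visited.append(node.name)


def kilroy_tour(obj, active_nodes):
    """Move obj to every active node in turn and return its trail."""
    for node in active_nodes:
        obj.move_to(node)
    return obj.visited


nodes = [Node("a"), Node("b"), Node("c")]
kilroy = MobileObject("Kilroy", home=nodes[0])
trail = kilroy_tour(kilroy, nodes)
print(trail, kilroy.location.name)
```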

Example: Call By Move

    const CallByMove == object CallByMove
      process
        var myData: LocalDataSet
        var myHomeNode: Node <- locate self
        var remoteNode: Node
        X.f[move myData]
        move self to X
        X.f[myData]
        move self to myHomeNode
      end process
    end CallByMove

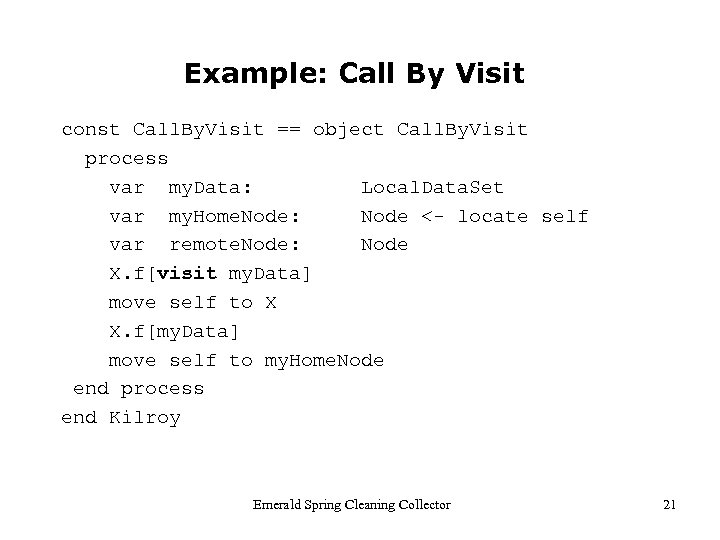

Example: Call By Visit

    const CallByVisit == object CallByVisit
      process
        var myData: LocalDataSet
        var myHomeNode: Node <- locate self
        var remoteNode: Node
        X.f[visit myData]
        move self to X
        X.f[myData]
        move self to myHomeNode
      end process
    end CallByVisit
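The difference between the two parameter-passing modes is where the argument ends up. With call-by-move the argument object is relocated to the callee's node and stays there. With call-by-visit it is relocated for the duration of the call and then returned. The Python sketch below models only that bookkeeping. `Obj`, `invoke`, and the node names are hypothetical, and no real distribution is involved.

```python
class Obj:
    """An object tagged with the name of the node it lives on."""

    def __init__(self, name, node):
        self.name = name
        self.node = node


def invoke(target, arg, mode="reference"):
    """Simulate target.f[arg]; return the node arg is on during the call."""
    home = arg.node
    if mode in ("move", "visit"):
        arg.node = target.node      # co-locate the argument with the callee
    during_call = arg.node
    # The body of f would run here, on target.node.
    if mode == "visit":
        arg.node = home             # a visiting argument returns afterwards
    return during_call


x = Obj("X", node="remote")

moved = Obj("myData", node="home")
during_move = invoke(x, moved, mode="move")

visitor = Obj("myData", node="home")
during_visit = invoke(x, visitor, mode="visit")

print(during_move, moved.node, during_visit, visitor.node)
```

In both modes the callee sees a local argument while it runs, which is the point of moving the data to the computation for the length of one call.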

Group Communication
Added group communication to Emerald
Introduce a group concept and a group multicast call
Calling a function on a group object calls all the group members

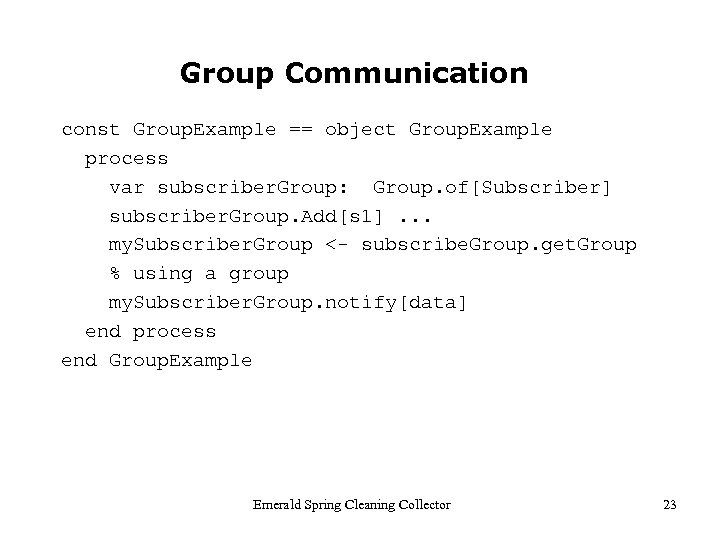

Group Communication

    const GroupExample == object GroupExample
      process
        var subscriberGroup: Group.of[Subscriber]
        subscriberGroup.Add[s1]
        ...
        mySubscriberGroup <- subscribeGroup.getGroup
        % using a group
        mySubscriberGroup.notify[data]
      end process
    end GroupExample
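The group call described above (invoking an operation on the group invokes it on every member) can be sketched in Python with attribute forwarding. The `Group` and `Subscriber` classes here are illustrative stand-ins, not the Emerald implementation, and the calls are plain local method calls.

```python
class Subscriber:
    def __init__(self, name):
        self.name = name
        self.inbox = []

    def notify(self, data):
        self.inbox.append(data)
        return self.name


class Group:
    """Forwards any operation invoked on the group to all of its members."""

    def __init__(self):
        self._members = []

    def add(self, member):
        self._members.append(member)

    def __getattr__(self, operation):
        # Only reached for names not found normally, i.e. member operations.
        if operation.startswith("_"):
            raise AttributeError(operation)

        def multicast(*args):
            return [getattr(m, operation)(*args) for m in self._members]

        return multicast


s1, s2 = Subscriber("s1"), Subscriber("s2")
group = Group()
group.add(s1)
group.add(s2)
replies = group.notify("event-1")
print(replies)
```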

New Problem Area
Switching to an entirely new problem area
Problem: moving applications to Grid computing sites
Trust code? Trust Operating System?
Proposed solution – named Evil Man
Move entire application including OS into a Grid Cluster

OS Migration
Desire: move entire running applications
OS solution: pick up and move the ENTIRE OS
First, use a Virtual Machine Monitor (Xen, VMware) to separate the OS from the hardware
Second, put the migration code inside the OS – it has all the needed functionality already – and get a self-migrating OS

Laundromat Computing with Evil Man is based on:
• Using virtual machines as containers for untrusted code
• Using live VM migration to make execution independent of location
• Using micro-payments for pay-as-you-go computing
Evil Man uses Virtual Machine Migration to move actively executing applications into a Grid Cluster – e.g., to number-crunch data from the ATLAS project at CERN

Evil Man
Prototype cluster management system developed at the Danish Center for Grid Computing at the University of Copenhagen
One great Ph.D. student deserves most of the credit for the work: Jacob Gorm Hansen
2008 EuroSys Roger Needham award for Best Systems Ph.D.

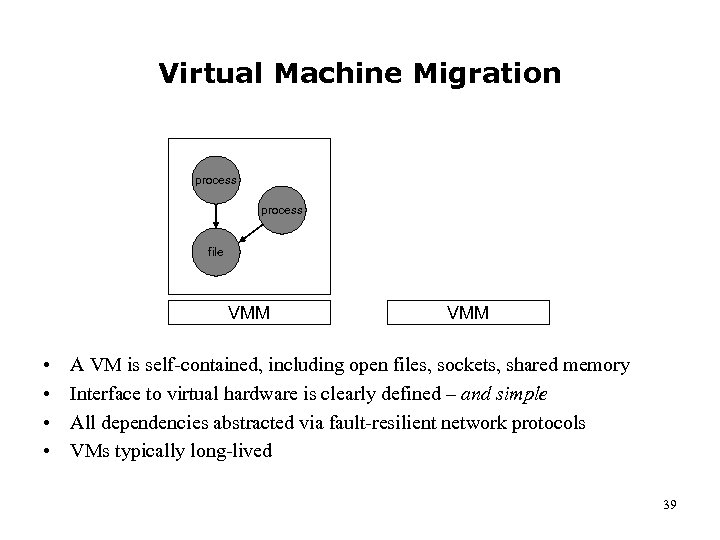

Virtual Machine Migration
(Diagram labels: process, file, VMM, VMM)
• A VM is self-contained, including open files, sockets, shared memory
• Interface to virtual hardware is clearly defined – and simple
• All dependencies abstracted via fault-resilient network protocols
• VMs typically long-lived

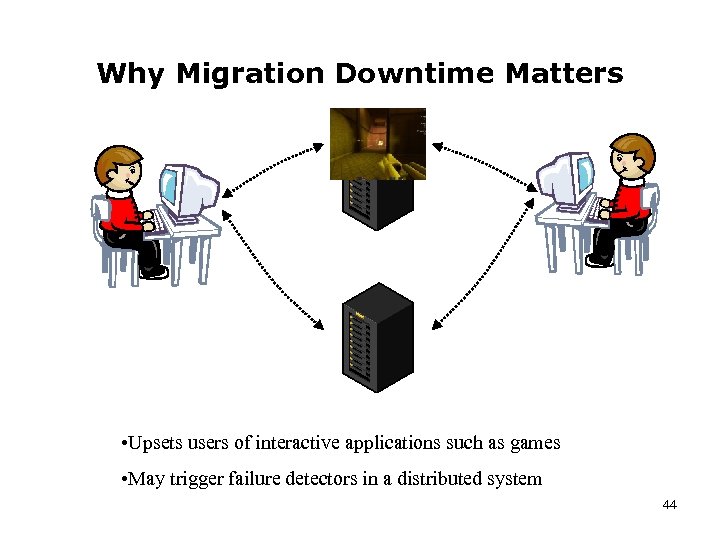

Why Migration Downtime Matters
• Upsets users of interactive applications such as games
• May trigger failure detectors in a distributed system

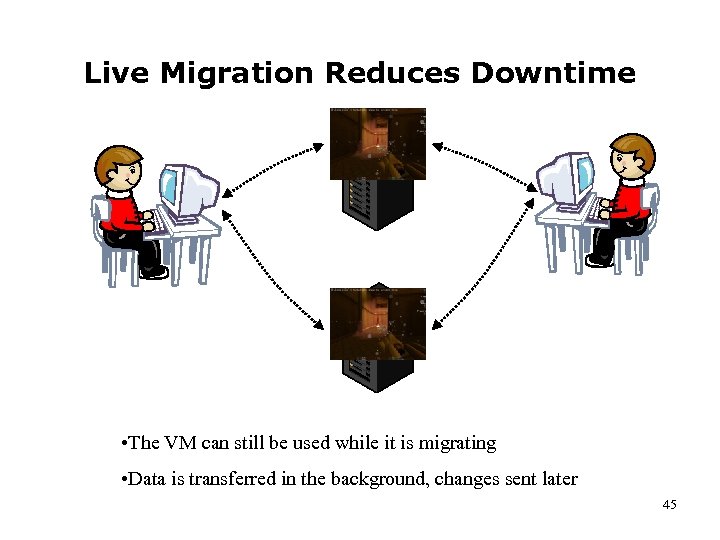

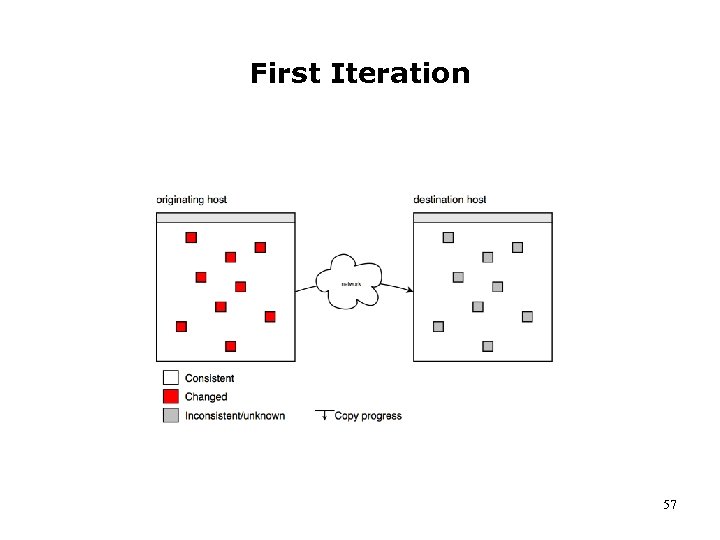

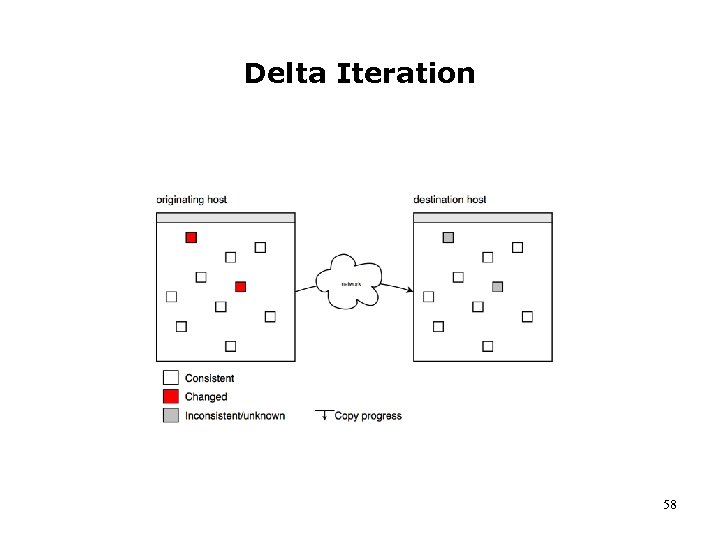

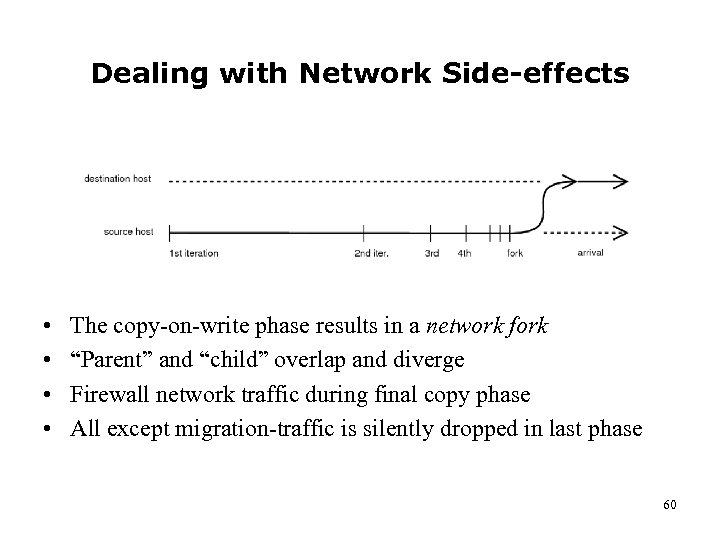

Live Migration Reduces Downtime
• The VM can still be used while it is migrating
• Data is transferred in the background, changes sent later

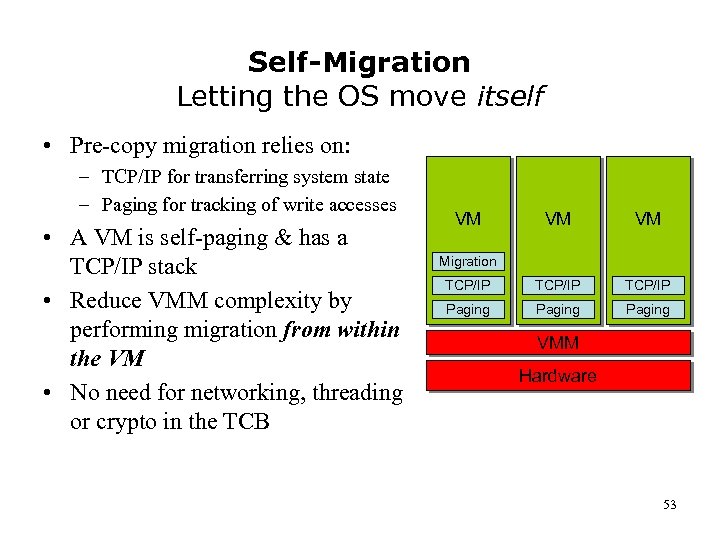

Self-Migration
Letting the OS move itself
• Pre-copy migration relies on:
  – TCP/IP for transferring system state
  – Paging for tracking of write accesses
• A VM is self-paging & has a TCP/IP stack
• Reduce VMM complexity by performing migration from within the VM
• No need for networking, threading or crypto in the TCB
(Diagram labels: VM, VM, VM, TCP/IP, Paging, Migration, VMM, Hardware)

An Inspiring Example of Self-Migration
von Münchhausen in the swamp

First Iteration

Delta Iteration

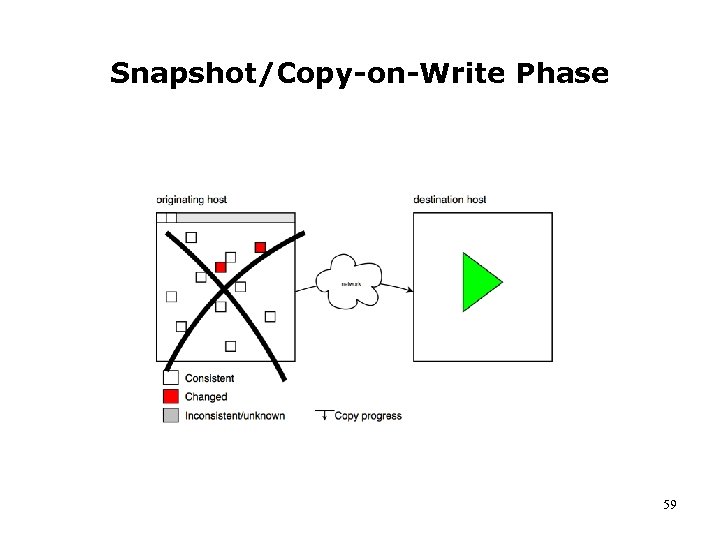

Snapshot/Copy-on-Write Phase

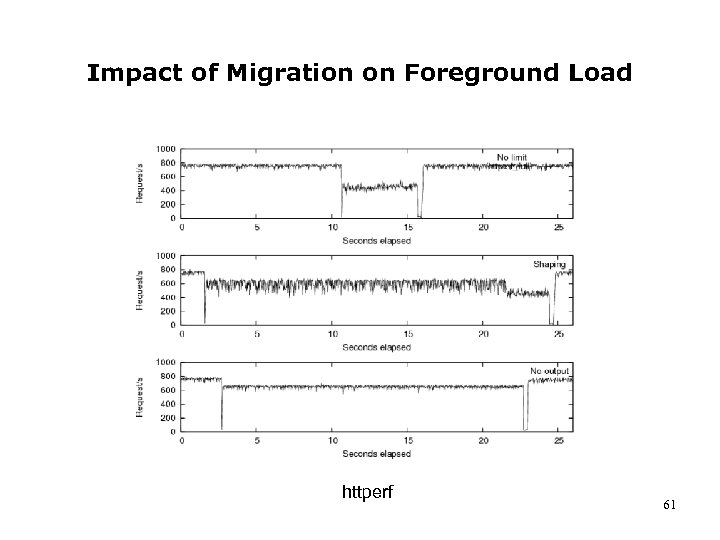
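The three phases named on the preceding slides (first iteration, delta iterations, final snapshot) follow the general pre-copy pattern, which the sketch below simulates on a dictionary of pages. It is a simplified model under stated assumptions: the function name, the `stop_threshold` and `max_rounds` parameters, and the scripted write pattern are all hypothetical, and the final phase is modelled as a plain copy from frozen state rather than real copy-on-write.

```python
def precopy_migrate(source_pages, writes_per_round, max_rounds=5, stop_threshold=2):
    """Simulate pre-copy migration of a page table.

    source_pages: dict page number -> content, mutated as the VM "runs".
    writes_per_round: list of dicts; the writes the VM makes during each round.
    Returns (destination pages, copy rounds performed, pages sent in total).
    """
    dest = {}
    dirty = set(source_pages)   # first iteration: every page must be sent
    writes = iter(writes_per_round)
    rounds = 0
    sent = 0
    while True:
        to_send, dirty = dirty, set()
        for page in to_send:
            dest[page] = source_pages[page]
            sent += 1
        rounds += 1
        # The VM keeps running, so pages written meanwhile become dirty again.
        for page, value in next(writes, {}).items():
            source_pages[page] = value
            dirty.add(page)
        if len(dirty) <= stop_threshold or rounds >= max_rounds:
            break
    # Final phase: state is frozen and the remaining dirty pages are sent.
    for page in dirty:
        dest[page] = source_pages[page]
        sent += 1
    return dest, rounds, sent


pages = {i: f"v0-{i}" for i in range(8)}
writes = [
    {0: "a", 1: "b", 2: "c", 3: "d"},   # during the first iteration
    {0: "e", 1: "f", 2: "g"},           # during the first delta iteration
    {0: "h"},                           # during the second delta iteration
]
dest, rounds, sent = precopy_migrate(pages, writes)
print(rounds, sent, dest == pages)
```

Each delta round resends only what was written during the previous round. The amount of state left for the final, disruptive phase therefore shrinks as long as the VM dirties pages more slowly than they can be sent.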

Dealing with Network Side-effects
• The copy-on-write phase results in a network fork
• “Parent” and “child” overlap and diverge
• Firewall network traffic during final copy phase
• All except migration traffic is silently dropped in the last phase

Impact of Migration on Foreground Load
httperf

Laundromat Computing

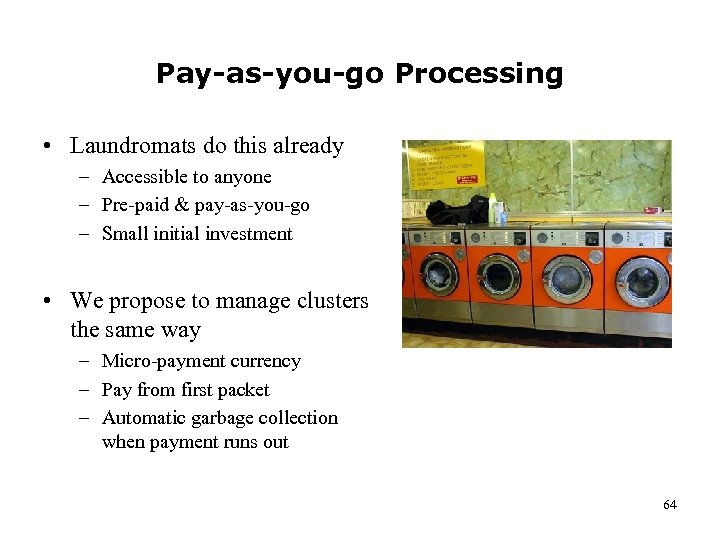

Pay-as-you-go Processing
• Laundromats do this already
  – Accessible to anyone
  – Pre-paid & pay-as-you-go
  – Small initial investment
• We propose to manage clusters the same way
  – Micro-payment currency
  – Pay from first packet
  – Automatic garbage collection when payment runs out

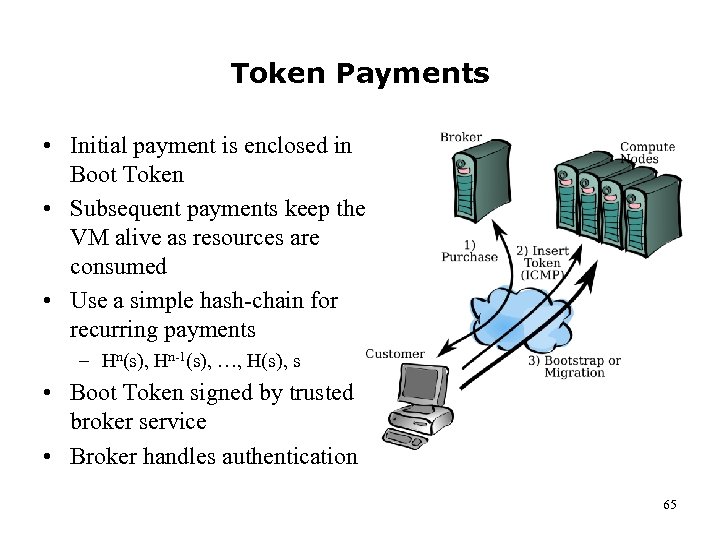

Token Payments
• Initial payment is enclosed in Boot Token
• Subsequent payments keep the VM alive as resources are consumed
• Use a simple hash-chain for recurring payments
  – H^n(s), H^(n-1)(s), …, H(s), s
• Boot Token signed by trusted broker service
• Broker handles authentication

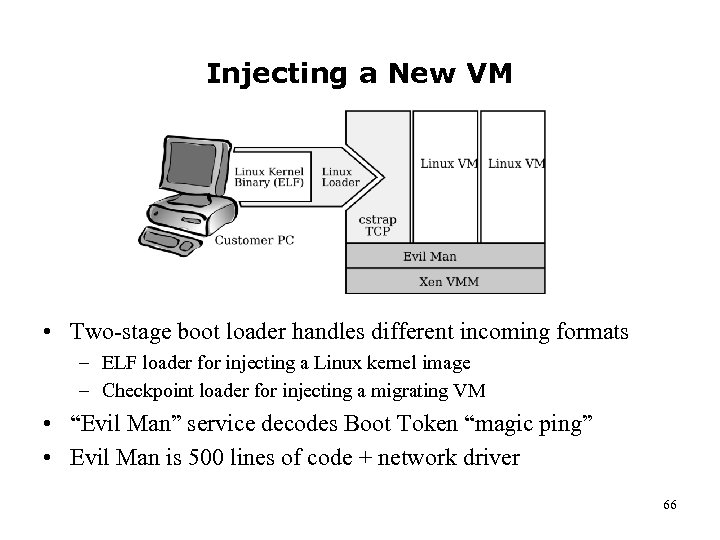
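The hash-chain scheme in the list above can be sketched as follows. The payer builds the chain H^n(s), …, H(s), s from a secret s and hands over the anchor H^n(s) first. Each later payment reveals the next preimage, which the verifier checks with one hash. SHA-256, the class name `PaymentVerifier`, and the seed value are assumptions made for this sketch; the talk does not say which hash function or interfaces Evil Man uses.

```python
import hashlib


def H(data: bytes) -> bytes:
    """One application of the hash function (SHA-256 assumed here)."""
    return hashlib.sha256(data).digest()


def make_chain(seed: bytes, n: int) -> list[bytes]:
    """Return [H^n(s), H^(n-1)(s), ..., H(s), s] for the secret seed s."""
    chain = [seed]
    for _ in range(n):
        chain.append(H(chain[-1]))
    chain.reverse()
    return chain


class PaymentVerifier:
    """Accepts tokens that hash to the most recently accepted value."""

    def __init__(self, anchor: bytes):
        self.last = anchor
        self.accepted = 0

    def accept(self, token: bytes) -> bool:
        if H(token) == self.last:
            self.last = token
            self.accepted += 1
            return True
        return False


chain = make_chain(b"payer-secret", 3)
verifier = PaymentVerifier(anchor=chain[0])   # anchor would travel in the boot token
first_ok = verifier.accept(chain[1])          # first recurring payment
skipped = verifier.accept(chain[3])           # out of order, so it is rejected
print(first_ok, skipped, verifier.accepted)
```

The verifier stores a single hash value and does one hash per payment, so the check is cheap enough to sit in a very small trusted service.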

Injecting a New VM
• Two-stage boot loader handles different incoming formats
  – ELF loader for injecting a Linux kernel image
  – Checkpoint loader for injecting a migrating VM
• “Evil Man” service decodes Boot Token “magic ping”
• Evil Man is 500 lines of code + network driver

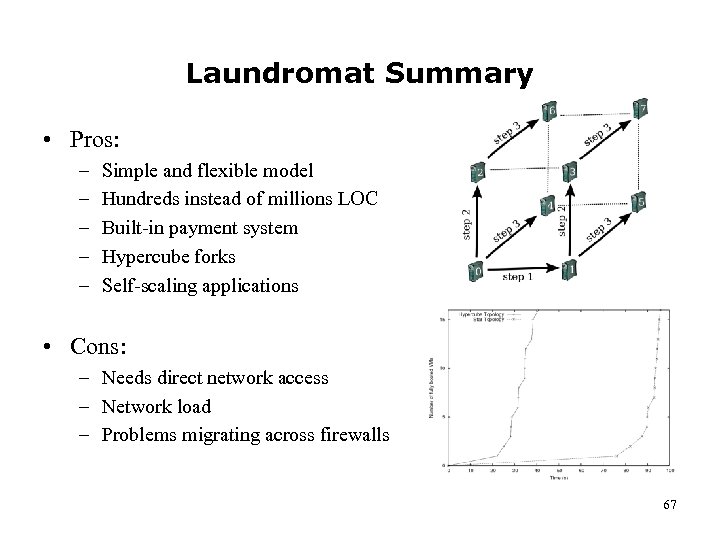

Laundromat Summary
• Pros:
  – Simple and flexible model
  – Hundreds instead of millions of LOC
  – Built-in payment system
  – Hypercube forks
  – Self-scaling applications
• Cons:
  – Needs direct network access
  – Network load
  – Problems migrating across firewalls

Yet another switch
Some advice from my experience with a score of Ph.D. projects

Things to think about
• State your problem
• Spread out initially, then focus
• Stay close to your focus

State your problem
• Be able to answer the question: “What is the problem you are trying to solve?” – don’t end up with a “solution in search of a problem”!
• State your problem
• Often good with a motivating example
• Often good with a narrow, less general example that can also be used as a milestone – even a killer app

Spread out initially, then focus
• Spread out, then narrow & focus (diagram)
• Establish a dissertation title when you start to focus
• Remember that your thesis focus is a SUBSET of all you have done – your dissertation is NOT a logbook of your work – nor a description of “see what I have done” (diagram)

Stay close to your focus
• Write a draft of your conclusion early – use it for focus
• Concentrate on your main contribution (diagram)
• Try for a good, narrow example that is implementable and can be a good demo and an excellent milestone

Walk away with…
• Spectrum: moving data to the computation site or moving computation to the data site
• Spectrum underlies many decisions concerning the architecture of pub-sub systems
• Moving data is well known; moving computation is trickier
• Don’t have a solution in search of a problem
• State the problem clearly – and state which part of it you will solve – use examples/killer apps
• As you go, narrow your focus
• Shoot for a good contribution

Questions?

The End
Thank you for subscribing