- Number of slides: 40

Measuring Adversaries
Vern Paxson
International Computer Science Institute / Lawrence Berkeley National Laboratory
vern@icir.org
June 15, 2004

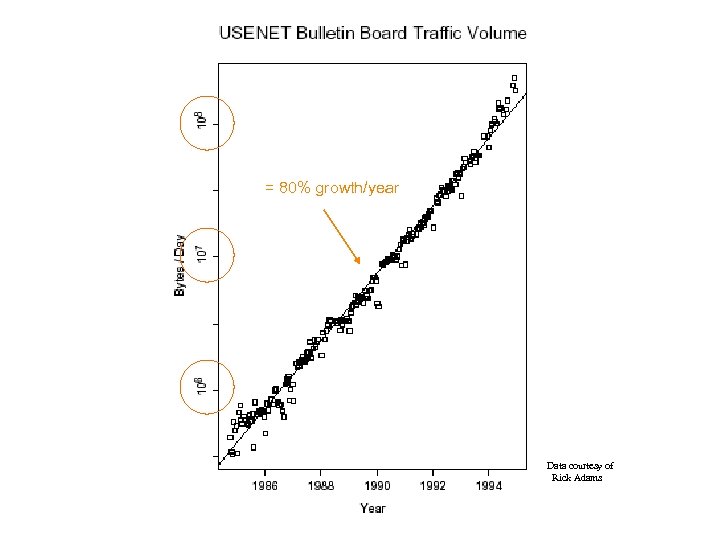

= 80% growth/year Data courtesy of Rick Adams

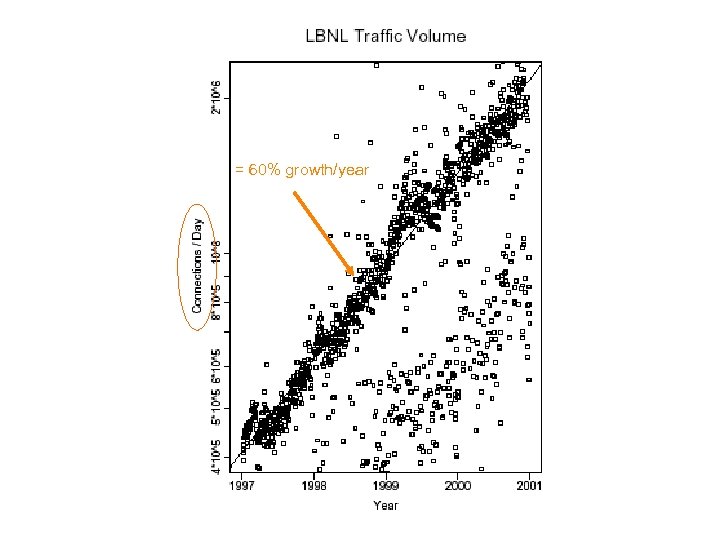

= 60% growth/year

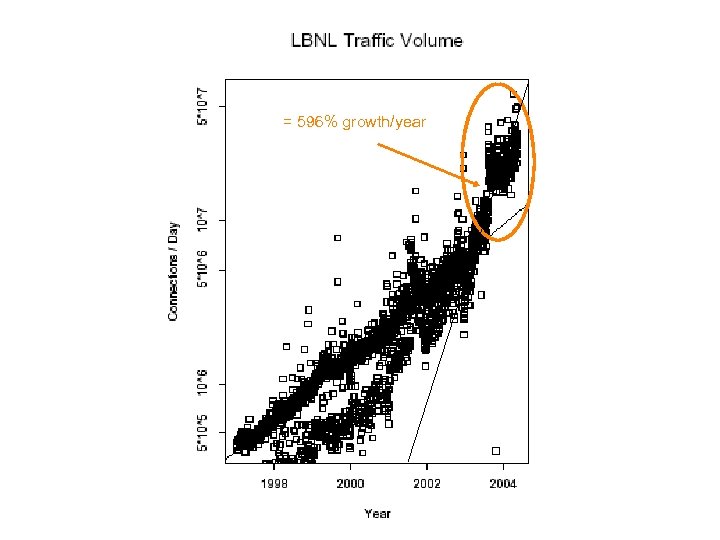

= 596% growth/year

The Point of the Talk
• Measuring adversaries is fun:
  – Increasingly of pressing interest
  – Involves misbehavior and sneakiness
  – Includes true Internet-scale phenomena
  – Under-characterized
  – The rules change

The Point of the Talk, con’t
• Measuring adversaries is challenging:
  – Spans very wide range of layers, semantics, scope
  – New notions of “active” and “passive” measurement
  – Extra-thorny dataset problems
  – Very rapid evolution: arms race

Adversaries & Evasion
• Consider passive measurement: scanning traffic for a particular string (“USER root”)
• Easiest: scan for the text in each packet
  – No good: text might be split across multiple packets
• Okay, remember text from previous packet
  – No good: out-of-order delivery
• Okay, fully reassemble byte stream
  – Costs state …
  – … and still evadable
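
The progression on this slide can be illustrated with a small sketch. It is not taken from the talk: the function names and the `(sequence number, payload)` segment format are assumptions made for illustration, and the reassembler keeps the first copy of any overlapping byte, which is only one of several policies real end hosts follow. Holes in the stream are ignored for brevity.

```python
def per_packet_match(segments, signature):
    """Naive check: does any single segment contain the whole signature?"""
    return any(signature in payload for _, payload in segments)


def reassembled_match(segments, signature):
    """Rebuild the byte stream by sequence number, then search it.

    Overlapping bytes are resolved "first copy wins" (an assumed policy).
    Missing ranges are not detected; bytes are simply joined in order.
    """
    stream = {}
    for seq, payload in segments:
        for offset, byte in enumerate(payload):
            stream.setdefault(seq + offset, byte)
    data = bytes(stream[i] for i in sorted(stream))
    return signature in data


# "USER root" split across two segments that arrive out of order.
segments = [(5, b"root\r\n"), (0, b"USER ")]
signature = b"USER root"
print(per_packet_match(segments, signature))   # False
print(reassembled_match(segments, signature))  # True
```

The dictionary holding one entry per byte is the "costs state" bullet in miniature, and the fixed overlap policy is where the "still evadable" bullet comes from: a monitor that guesses a different policy than the end host sees a different stream.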

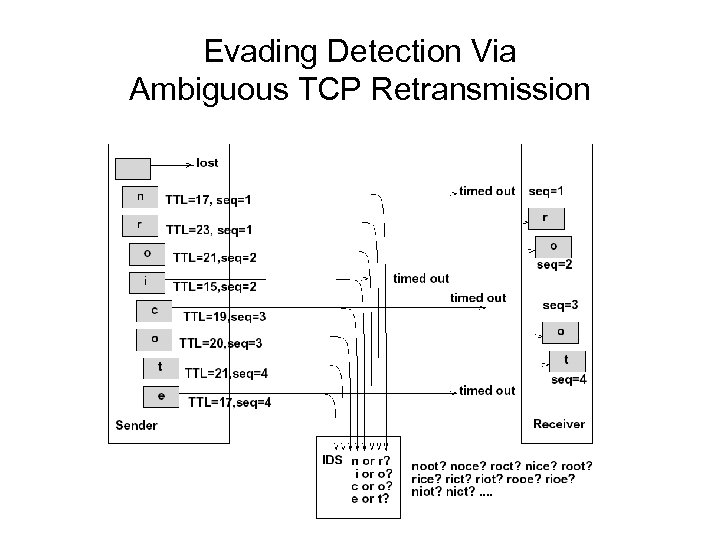

Evading Detection Via Ambiguous TCP Retransmission

The Problem of Evasion
• Fundamental problem passively measuring traffic on a link: Network traffic is inherently ambiguous
• Generally not a significant issue for traffic characterization …
• … But is in the presence of an adversary: Attackers can craft traffic to confuse/fool monitor

The Problem of “Crud”
• There are many such ambiguities attackers can leverage
• A type of measurement vantage-point problem
• Unfortunately, these occur in benign traffic, too:
  – Legitimate tiny fragments, overlapping fragments
  – Receivers that acknowledge data they did not receive
  – Senders that retransmit different data than originally sent
• In a diverse traffic stream, you will see these:
  – What is the intent?

Countering Evasion-by-Ambiguity
• Involve end-host: have it tell you what it saw
• Probe end-host in advance to resolve vantage-point ambiguities (“active mapping”)
  – E.g., how many hops to it?
  – E.g., how does it resolve ambiguous retransmissions?
• Change the rules - Perturb
  – Introduce a network element that “normalizes” the traffic passing through it to eliminate ambiguities
    • E.g., regenerate low TTLs (dicey!)
    • E.g., reassemble streams & remove inconsistent retransmissions
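
As a rough illustration of the last bullet, the sketch below shows one way a normalizing element might remove inconsistent retransmissions. The class name `RetransmissionChecker` and its interface are hypothetical, and "rewrite to the first-seen bytes" is an assumed policy, not necessarily the one used by any real normalizer.

```python
class RetransmissionChecker:
    """Remember the first value seen for each sequence number; flag conflicts."""

    def __init__(self):
        self.seen = {}  # sequence number -> byte value first observed

    def accept(self, seq, payload):
        """Return (payload to forward, whether a conflicting copy was seen).

        Bytes already seen are rewritten to their first-seen value, so the
        monitor and the end host are guaranteed to see the same stream.
        """
        forwarded = bytearray()
        conflict = False
        for offset, byte in enumerate(payload):
            first = self.seen.setdefault(seq + offset, byte)
            if first != byte:
                conflict = True
            forwarded.append(first)
        return bytes(forwarded), conflict


checker = RetransmissionChecker()
print(checker.accept(0, b"USER nice"))  # (b'USER nice', False)
print(checker.accept(5, b"root"))       # (b'nice', True)
```

The per-byte table is deliberately simplistic; a real element would bound this state, which is exactly the resource an adversary would then try to exhaust.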

Adversaries & Identity
• Usual notions of identifying services by port numbers and users by IP addresses become untrustworthy
• E.g., backdoors installed by attackers on nonstandard ports to facilitate return / control
• E.g., P2P traffic tunneled over HTTP
• General measurement problem: inferring structure

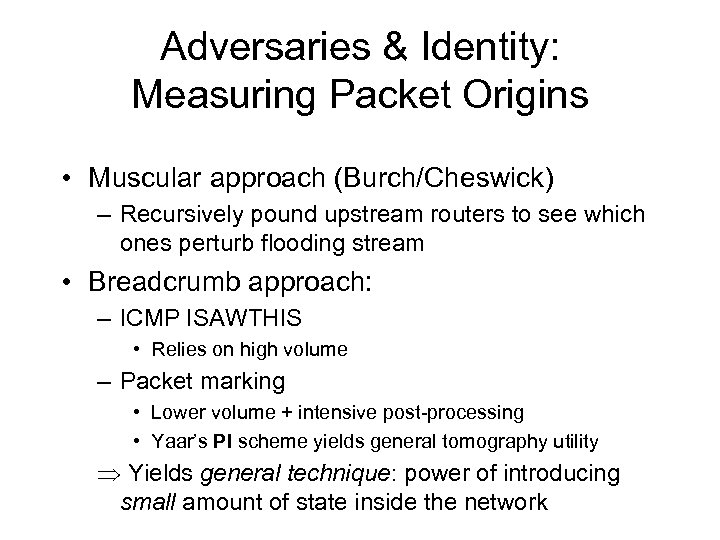

Adversaries & Identity: Measuring Packet Origins
• Muscular approach (Burch/Cheswick)
  – Recursively pound upstream routers to see which ones perturb flooding stream
• Breadcrumb approach:
  – ICMP ISAWTHIS
    • Relies on high volume
  – Packet marking
    • Lower volume + intensive post-processing
    • Yaar’s PI scheme yields general tomography utility
Yields general technique: power of introducing small amount of state inside the network

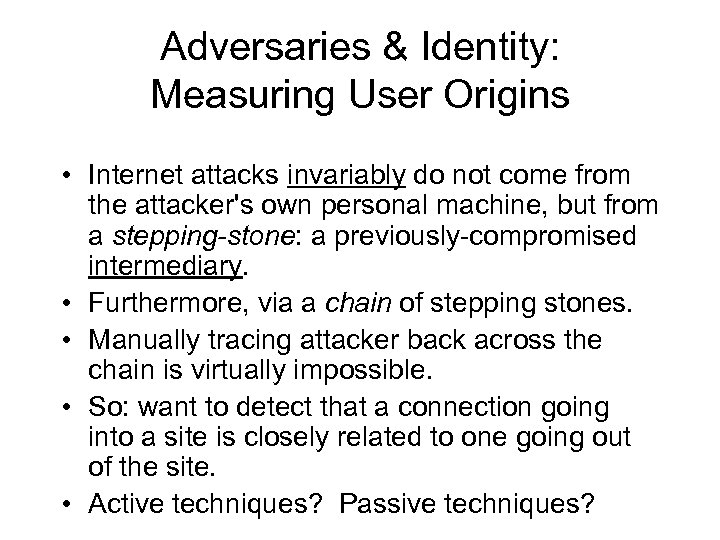

Adversaries & Identity: Measuring User Origins
• Internet attacks invariably do not come from the attacker's own personal machine, but from a stepping-stone: a previously-compromised intermediary.
• Furthermore, via a chain of stepping stones.
• Manually tracing attacker back across the chain is virtually impossible.
• So: want to detect that a connection going into a site is closely related to one going out of the site.
• Active techniques? Passive techniques?

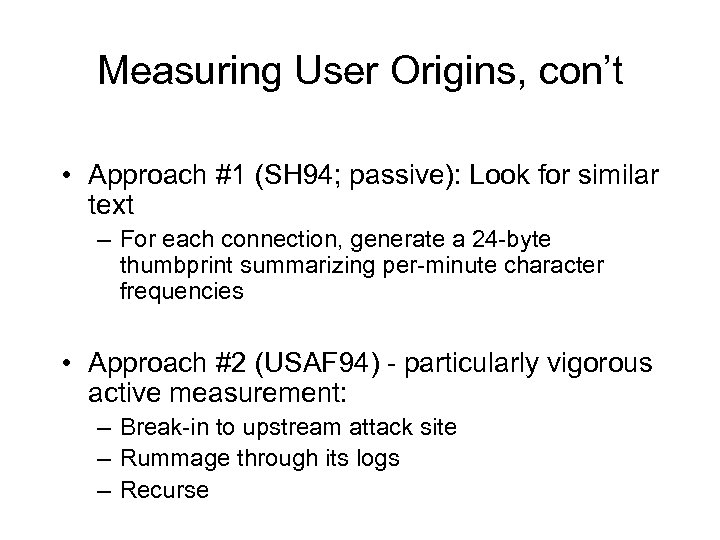

Measuring User Origins, con’t
• Approach #1 (SH94; passive): Look for similar text
  – For each connection, generate a 24-byte thumbprint summarizing per-minute character frequencies
• Approach #2 (USAF94) - particularly vigorous active measurement:
  – Break in to upstream attack site
  – Rummage through its logs
  – Recurse

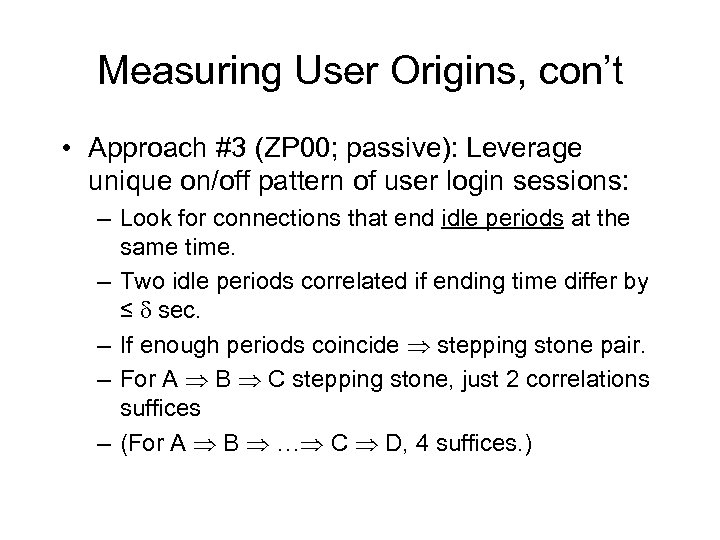

Measuring User Origins, con’t
• Approach #3 (ZP00; passive): Leverage unique on/off pattern of user login sessions:
  – Look for connections that end idle periods at the same time.
  – Two idle periods correlated if ending times differ by ≤ δ sec.
  – If enough periods coincide ⇒ stepping stone pair.
  – For A → B → C stepping stone, just 2 correlations suffice
  – (For A → B → … → C → D, 4 suffice.)
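
The timing idea can be sketched in a few lines. This is a simplified illustration rather than the published algorithm: the function names are invented here, and the idle threshold, the tolerance `delta`, and the required number of coincidences are placeholder values chosen only so the example runs.

```python
def idle_period_ends(packet_times, idle_threshold):
    """Return the times at which a connection resumes after an idle gap."""
    ends = []
    for prev, cur in zip(packet_times, packet_times[1:]):
        if cur - prev >= idle_threshold:
            ends.append(cur)
    return ends


def coincidences(ends_a, ends_b, delta):
    """Count idle-period ends in A matched by an end in B within delta seconds."""
    return sum(1 for a in ends_a if any(abs(a - b) <= delta for b in ends_b))


def looks_like_stepping_stone(times_a, times_b, idle_threshold=0.5,
                              delta=0.08, min_coincidences=2):
    """Flag a connection pair whose idle periods end together often enough."""
    ends_a = idle_period_ends(times_a, idle_threshold)
    ends_b = idle_period_ends(times_b, idle_threshold)
    return coincidences(ends_a, ends_b, delta) >= min_coincidences


inbound = [0.0, 0.1, 2.0, 2.1, 5.0]        # packet timestamps in seconds
relayed = [0.03, 0.12, 2.04, 2.15, 5.03]   # same keystrokes, slightly delayed
unrelated = [0.0, 1.0, 1.1, 3.5, 3.6]
print(looks_like_stepping_stone(inbound, relayed))    # True
print(looks_like_stepping_stone(inbound, unrelated))  # False
```

Only packet timestamps are used, never payloads, which is why an approach of this kind is unaffected by encryption.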

Measuring User Origins, con’t
• Works very well, even for encrypted traffic
• But: easy to evade, if attacker cognizant of algorithm
  – C’est la arms race
• And: also turns out there are frequent legit stepping stones
• Untried active approach: imprint traffic with low-frequency timing signature unique to each site (“breadcrumb”). Deconvolve recorded traffic to extract.

Global-scale Adversaries: Worms
• Worm = Self-replicating/self-propagating code
• Spreads across a network by exploiting flaws in open services, or fooling humans (viruses)
• Not new --- Morris Worm, Nov. 1988
  – 6-10% of all Internet hosts infected
• Many more small ones since …
… but came into its own July, 2001

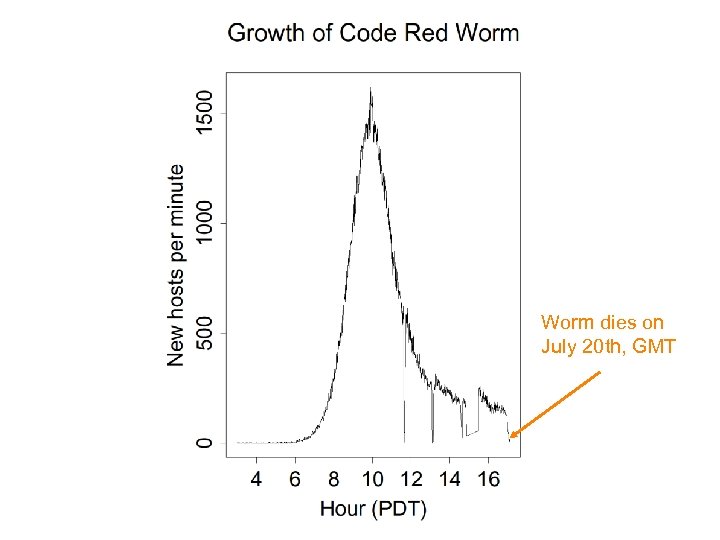

Code Red
• Initial version released July 13, 2001.
• Exploited known bug in Microsoft IIS Web servers.
• 1st through 20th of each month: spread. 20th through end of each month: attack.
• Spread: via random scanning of 32-bit IP address space.
• But: failure to seed random number generator ⇒ linear growth
Reverse engineering enables forensics
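
A toy experiment makes the seeding point concrete. It uses Python's `random.Random` as a stand-in generator, which is an assumption for illustration and has nothing to do with the worm's actual code; the population size and probe counts are arbitrary.

```python
import random


def scan_targets(seed, count):
    """Return the first `count` 32-bit addresses a scanner with this seed probes."""
    rng = random.Random(seed)
    return [rng.getrandbits(32) for _ in range(count)]


def distinct_addresses(seeds, probes_each):
    """Count distinct addresses probed by a population of worm instances."""
    probed = set()
    for seed in seeds:
        probed.update(scan_targets(seed, probes_each))
    return len(probed)


instances, probes_each = 50, 200
# Every instance starts from the same constant seed: all walk one identical list.
same_seed = distinct_addresses([0] * instances, probes_each)
# Each instance has its own seed: the population covers far more addresses.
own_seeds = distinct_addresses(range(1, instances + 1), probes_each)
print(same_seed, own_seeds)
```

With a shared seed, a newly infected host re-probes addresses that earlier instances already tried, so infections do not compound. With independent seeds, each new instance adds fresh coverage, which is what lets the spread accelerate.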

Code Red, con’t
• Revision released July 19, 2001.
• Payload: flooding attack on www.whitehouse.gov.
• Bug led to it dying for date ≥ 20th of the month.
• But: this time random number generator correctly seeded. Bingo!

Worm dies on July 20th, GMT

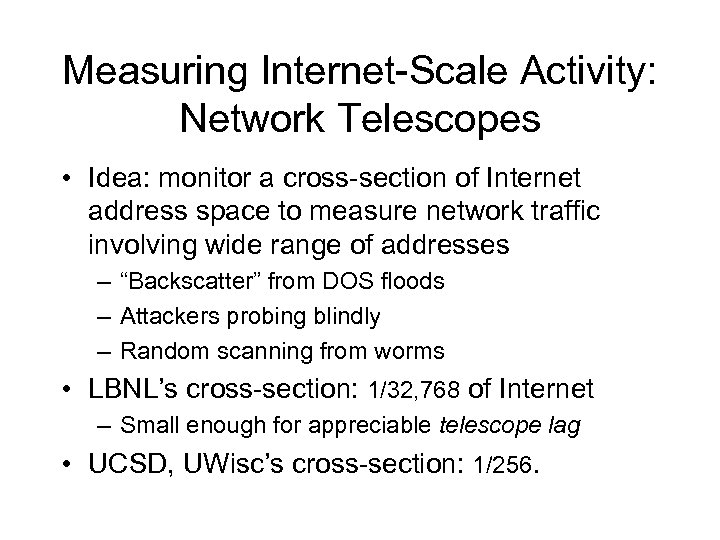

Measuring Internet-Scale Activity: Network Telescopes
• Idea: monitor a cross-section of Internet address space to measure network traffic involving wide range of addresses
  – “Backscatter” from DoS floods
  – Attackers probing blindly
  – Random scanning from worms
• LBNL’s cross-section: 1/32,768 of Internet
  – Small enough for appreciable telescope lag
• UCSD, UWisc’s cross-section: 1/256.
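
The "telescope lag" remark follows from simple probability. Assuming a source picks destination addresses uniformly at random (an idealization), a telescope covering a fraction f of the address space sees at least one of n probes with probability 1 - (1 - f)^n. The helper names below are made up for this sketch.

```python
import math


def detection_probability(fraction, probes):
    """Chance that at least one of `probes` uniform random probes hits the telescope."""
    return 1.0 - (1.0 - fraction) ** probes


def probes_for_confidence(fraction, confidence):
    """Smallest number of probes giving at least `confidence` chance of a hit."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - fraction))


small = 1 / 32768   # LBNL-sized cross-section from the slide
large = 1 / 256     # UCSD / UWisc-sized cross-section from the slide
for name, fraction in (("1/32,768", small), ("1/256", large)):
    needed = probes_for_confidence(fraction, 0.5)
    print(f"{name}: about {needed} probes for an even chance of being seen")
```

The smaller telescope needs on the order of a hundred times more probes from a given source before it is likely to notice that source, so it registers a newly infected host correspondingly later.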

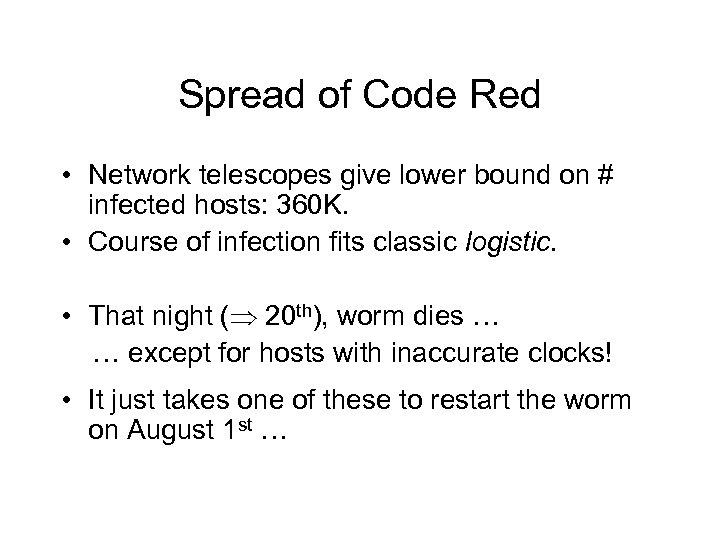

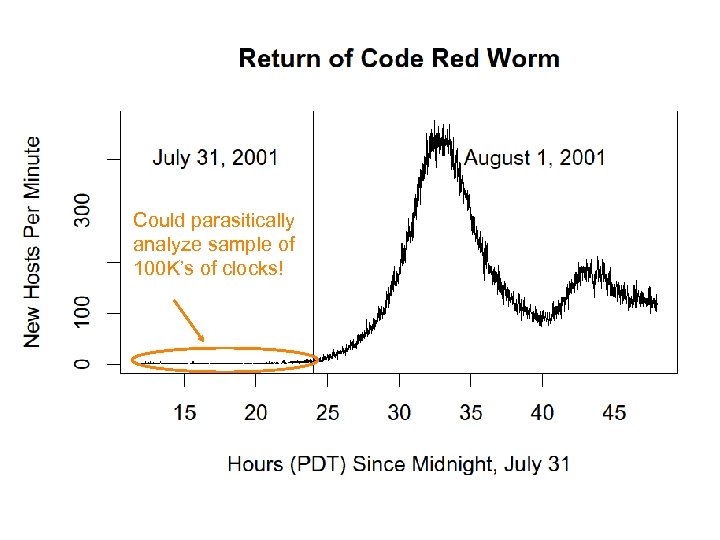

Spread of Code Red
• Network telescopes give lower bound on # infected hosts: 360K.
• Course of infection fits classic logistic.
• That night (20th), worm dies …
… except for hosts with inaccurate clocks!
• It just takes one of these to restart the worm on August 1st …
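
For readers unfamiliar with the logistic curve mentioned above, here is a minimal sketch. It assumes the usual simple epidemic model, da/dt = K·a·(1 − a), where a is the fraction of vulnerable hosts infected; the rate and starting fraction in the example are illustrative placeholders, not values fitted to the Code Red data.

```python
import math


def infected_fraction(t, rate, midpoint):
    """Closed-form logistic: fraction infected at time t, 0.5 at t == midpoint."""
    return 1.0 / (1.0 + math.exp(-rate * (t - midpoint)))


def simulate(rate, initial_fraction, hours, steps_per_hour=1000):
    """Euler integration of da/dt = rate * a * (1 - a)."""
    a = initial_fraction
    dt = 1.0 / steps_per_hour
    for _ in range(hours * steps_per_hour):
        a += rate * a * (1.0 - a) * dt
    return a


rate, a0 = 1.8, 0.001                       # hypothetical parameters
midpoint = math.log((1.0 - a0) / a0) / rate  # time at which half are infected
for hour in (2, 4, 6, 8):
    closed = infected_fraction(hour, rate, midpoint)
    stepped = simulate(rate, a0, hour)
    print(f"hour {hour}: closed form {closed:.3f}, simulated {stepped:.3f}")
```

Early on, growth is close to exponential because almost every probe that hits a vulnerable host finds it uninfected; the curve flattens once most vulnerable hosts are already infected.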

Could parasitically analyze sample of 100K’s of clocks!

The Worms Keep Coming
• Code Red 2:
  – August 4th, 2001
  – Localized scanning: prefers nearby addresses
  – Payload: root backdoor
  – Programmed to die Oct 1, 2001.
• Nimda:
  – September 18, 2001
  – Multi-mode spreading, including via Code Red 2 backdoors!

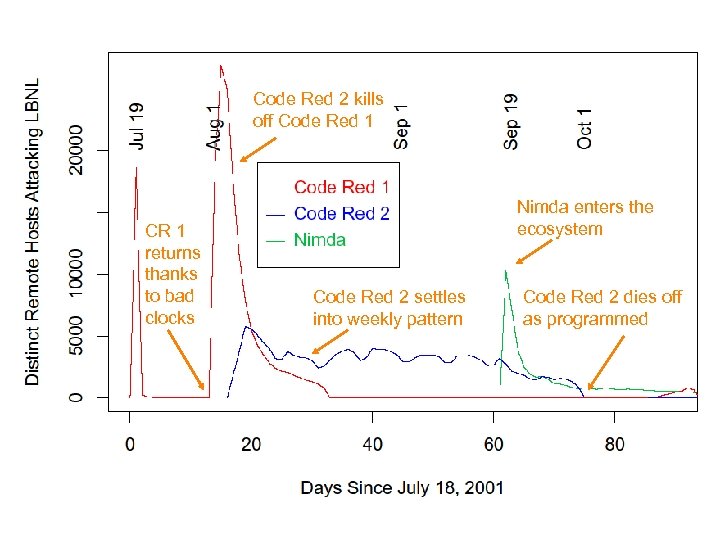

Code Red 2 kills off Code Red 1
Code Red 1 returns thanks to bad clocks
Nimda enters the ecosystem
Code Red 2 settles into weekly pattern
Code Red 2 dies off as programmed

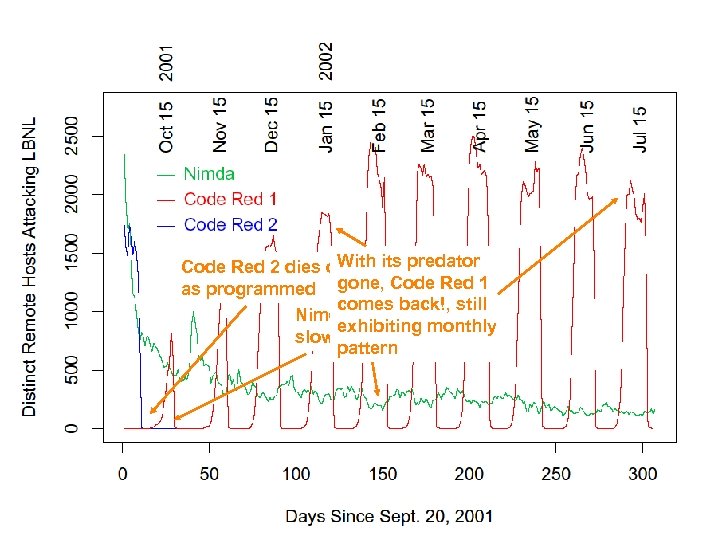

Code Red 2 dies off as programmed
With its predator gone, Code Red 1 comes back!, still exhibiting monthly pattern
Nimda hums along, slowly cleaned up

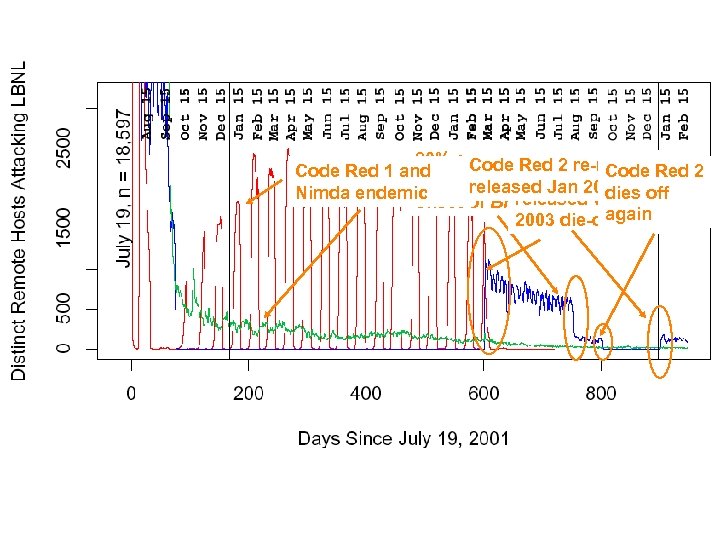

80% of Code Red 2 cleaned up due to onset of Blaster
Code Red 2 re-released with Oct. 2003 die-off
Code Red 1 and Nimda endemic
Code Red 2 re-re-released Jan 2004
Code Red 2 dies off again

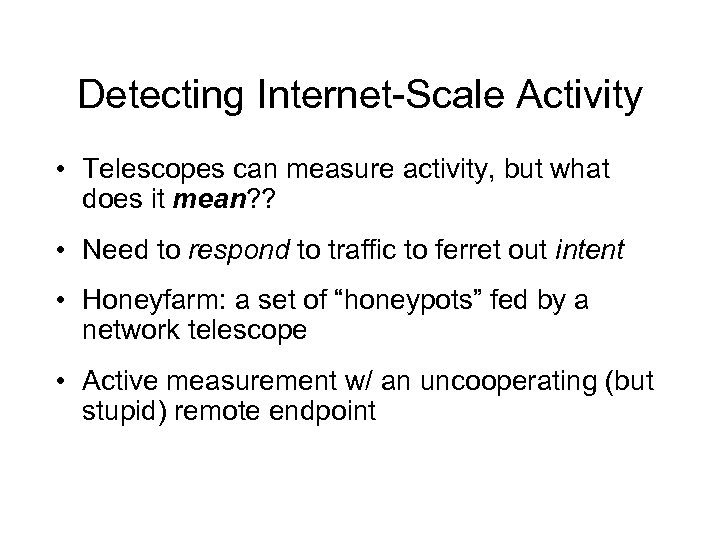

Detecting Internet-Scale Activity
• Telescopes can measure activity, but what does it mean??
• Need to respond to traffic to ferret out intent
• Honeyfarm: a set of “honeypots” fed by a network telescope
• Active measurement w/ an uncooperating (but stupid) remote endpoint

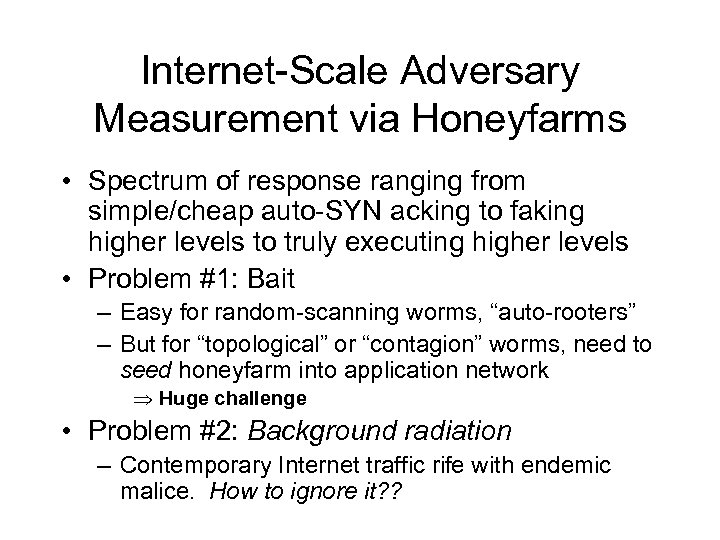

Internet-Scale Adversary Measurement via Honeyfarms
• Spectrum of response ranging from simple/cheap auto-SYN acking to faking higher levels to truly executing higher levels
• Problem #1: Bait
  – Easy for random-scanning worms, “auto-rooters”
  – But for “topological” or “contagion” worms, need to seed honeyfarm into application network
    Huge challenge
• Problem #2: Background radiation
  – Contemporary Internet traffic rife with endemic malice. How to ignore it??

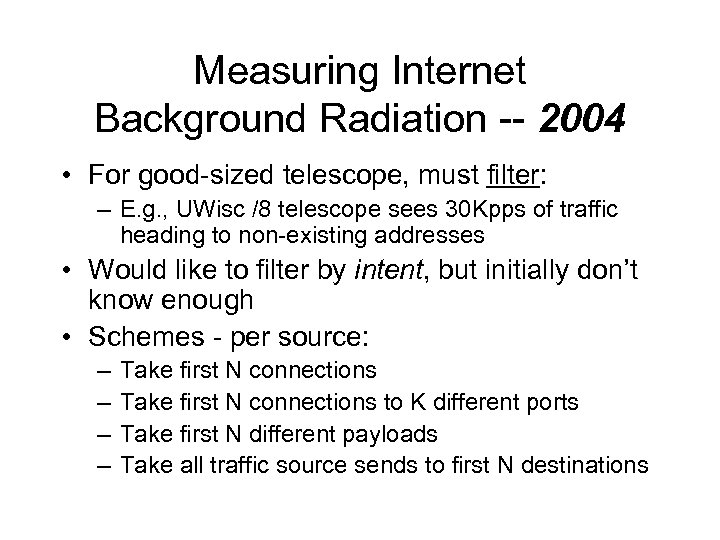

Measuring Internet Background Radiation -- 2004
• For good-sized telescope, must filter:
  – E.g., UWisc /8 telescope sees 30 Kpps of traffic heading to non-existing addresses
• Would like to filter by intent, but initially don’t know enough
• Schemes - per source:
  – Take first N connections to K different ports
  – Take first N different payloads
  – Take all traffic source sends to first N destinations
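
The third per-source scheme is easy to express in code. The class below is a hypothetical sketch of the idea only; the name `FirstNDestinationsFilter` and its interface are invented here and say nothing about how the actual telescope filters were implemented.

```python
from collections import defaultdict


class FirstNDestinationsFilter:
    """Per source, keep all traffic sent to the first N distinct destinations."""

    def __init__(self, n):
        self.n = n
        self.admitted = defaultdict(set)  # source -> destinations admitted so far

    def admit(self, src, dst):
        """Return True if a packet from src to dst should be kept."""
        destinations = self.admitted[src]
        if dst in destinations:
            return True            # already-admitted destination: keep everything
        if len(destinations) < self.n:
            destinations.add(dst)  # still under the per-source budget
            return True
        return False               # budget used up: drop traffic to new destinations


packet_filter = FirstNDestinationsFilter(n=2)
packets = [("s1", "a"), ("s1", "b"), ("s1", "c"), ("s1", "a"), ("s2", "c")]
print([packet_filter.admit(src, dst) for src, dst in packets])
# [True, True, False, True, True]
```

Keeping whole conversations with a few destinations preserves enough context to study what a source is trying to do, while capping the load a single prolific scanner can impose.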

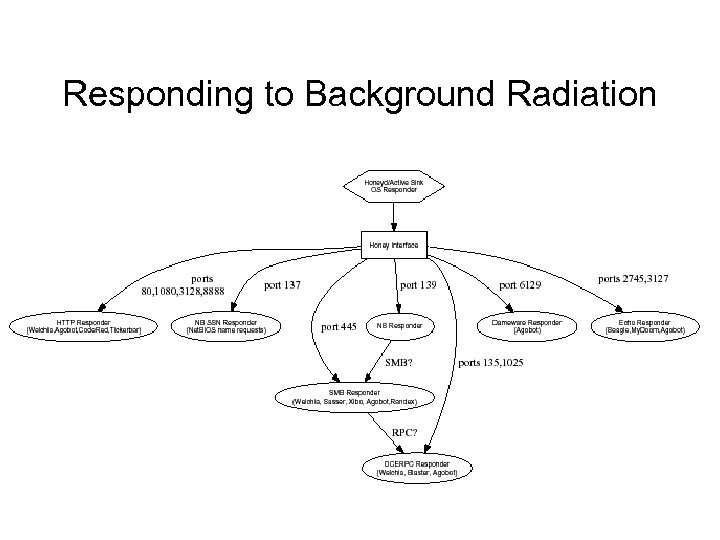

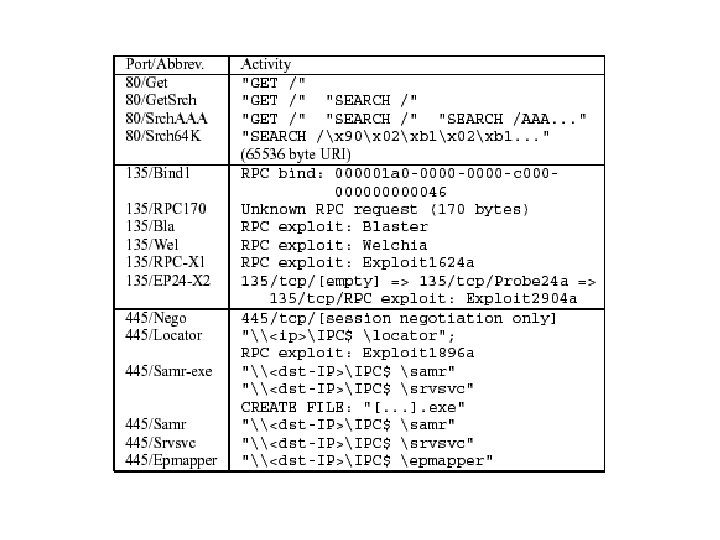

Responding to Background Radiation

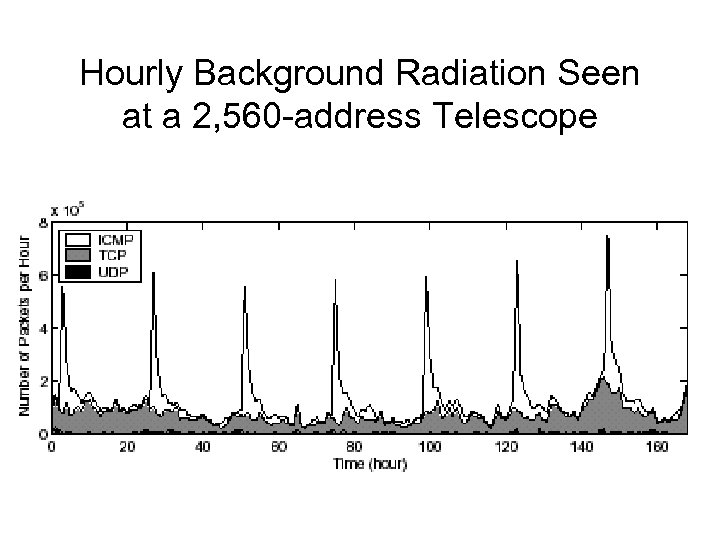

Hourly Background Radiation Seen at a 2,560-address Telescope

Measuring Internet-scale Adversaries: Summary
• New tools & forms of measurement:
  – Telescopes, honeypots, filtering
• New needs to automate measurement:
  – Worm defense must be faster-than-human
• The lay of the land has changed:
  – Endemic worms, malicious scanning
  – Majority of Internet connection (attempts) are hostile (80+% at LBNL)
• Increasing requirement for application-level analysis

The Huge Dataset Headache
• Adversary measurement particularly requires packet contents
  – Much analysis is application-layer
• Huge privacy/legal/policy/commercial hurdles
• Major challenge: anonymization/agents technologies
  – E.g. [PP03] “semantic trace transformation”
  – Use intrusion detection system’s application analyzers to anonymize trace at semantic level (e.g., filenames vs. users vs. commands)
  – Note: general measurement increasingly benefits from such application analyzers, too

Attacks on Passive Monitoring
• State-flooding:
  – E.g. if tracking connections, each new SYN requires state; each undelivered TCP segment requires state
• Analysis flooding:
  – E.g. stick, snot, trichinosis
• But surely just peering at the adversary we’re ourselves safe from direct attack?

Attacks on Passive Monitoring
• Exploits for bugs in passive analyzers!
• Suppose protocol analyzer has an error parsing unusual type of packet
  – E.g., tcpdump and malformed options
• Adversary crafts such a packet, overruns buffer, causes analyzer to execute arbitrary code
• E.g. Witty, BlackIce & packets sprayed to random UDP ports
  – 12,000 infectees in < 60 minutes!

Summary
• The lay of the land has changed
  – Ecosystem of endemic hostility
  – “Traffic characterization” of adversaries as ripe as characterizing regular Internet traffic was 10 years ago
  – People care
• Very challenging:
  – Arms race
  – Heavy on application analysis
  – Major dataset difficulties

Summary, con’t
• Revisit “passive” measurement:
  – evasion
  – telescopes/Internet scope
  – no longer isolated observer, but vulnerable
• Revisit “active” measurement
  – perturbing traffic to unmask hiding & evasion
  – engaging attacker to discover intent
• IMHO, this is “where the action is” …
• … And the fun!