4235ad2316f178ae4f81c7e0e4afe8af.ppt

- Number of slides: 33

Massively Distributed Computing and an NRPGM Project on Protein Structure and Function

Computational Biology Lab, Physics Dept & Life Science Dept, National Central University

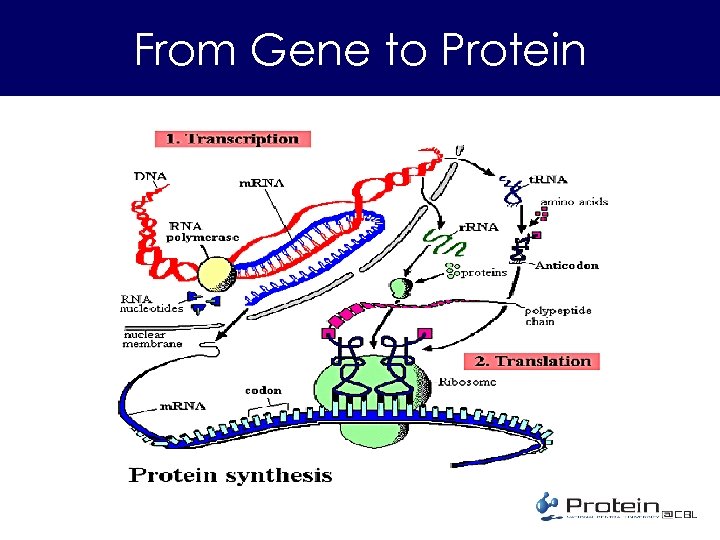

From Gene to Protein

About Protein

- Function
  - Storage, transport, messengers, regulation… everything that sustains life
  - Structure: shell, silk, spider silk, etc.
- Structure
  - String of amino acids with 3D structure
  - Homology and topology
- Importance
  - Science, health & medicine
  - Industry: enzymes, detergents, etc.
- An example: 3hvt.pdb

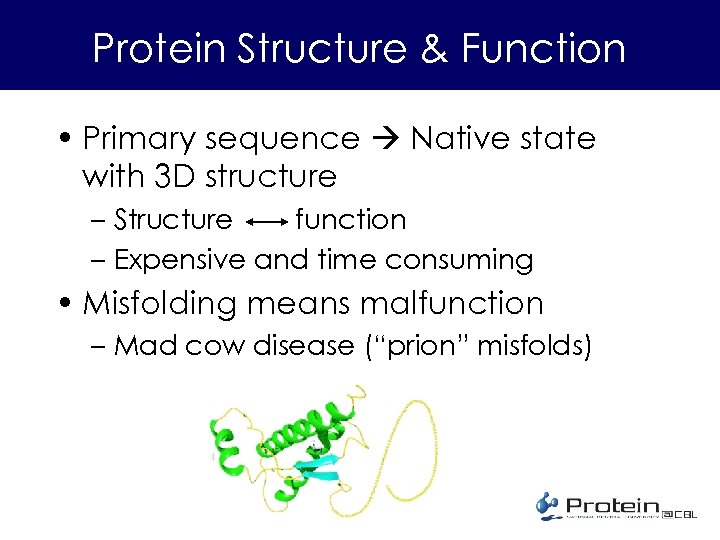

Protein Structure & Function

- Primary sequence → native state with 3D structure
  - Structure → function
  - Expensive and time-consuming
- Misfolding means malfunction
  - Mad cow disease ("prion" misfolds)

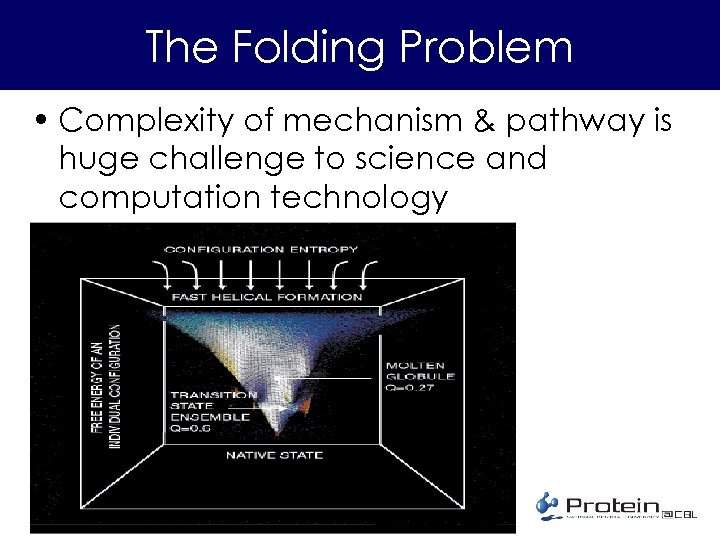

The Folding Problem

- Complexity of mechanism & pathway is a huge challenge to science and computing technology

Molecular Dynamics (MD)

- Molecule's behavior determined by
  - Ensemble statistics
  - Newtonian mechanics
- Experiment in silico
- All-atom with water
  - Huge number of particles
- Super-heavy-duty computation
- Software for macromolecular MD available
  - CHARMm, AMBER, GROMACS

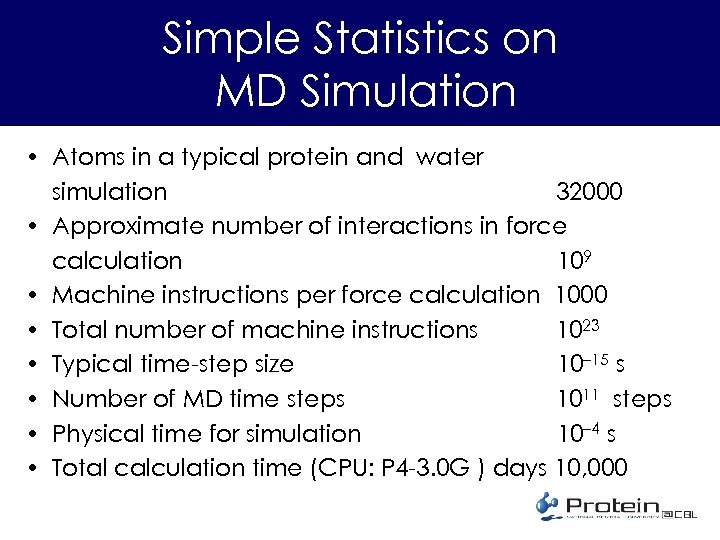

Simple Statistics on MD Simulation

| Quantity | Value |
| --- | --- |
| Atoms in a typical protein and water simulation | 32,000 |
| Approximate number of interactions in force calculation | $10^{9}$ |
| Machine instructions per force calculation | 1,000 |
| Total number of machine instructions | $10^{23}$ |
| Typical time-step size | $10^{-15}$ s |
| Number of MD time steps | $10^{11}$ |
| Physical time for simulation | $10^{-4}$ s |
| Total calculation time (CPU: P4 3.0 GHz) | 10,000 days |
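The orders of magnitude in these statistics can be cross-checked with a few lines of arithmetic. The sketch below uses only the figures quoted on the slide; the variable names are illustrative, and reading "machine instructions per force calculation" as a per-interaction cost is an assumption. It does not try to reproduce the quoted CPU time, which depends on machine details not given here.

```python
# Order-of-magnitude check of the MD statistics quoted on the slide.
# Assumption: the 1000 machine instructions apply to each interaction.
interactions_per_step = 10**9        # interactions in one force calculation
instructions_per_interaction = 10**3 # machine instructions per interaction
n_steps = 10**11                     # number of MD time steps
time_step_s = 1e-15                  # typical time-step size (1 fs)

# Total work: interactions x instructions x steps.
total_instructions = interactions_per_step * instructions_per_interaction * n_steps

# Simulated (physical) time: steps x time-step size.
physical_time_s = n_steps * time_step_s

print(f"total machine instructions ~ {total_instructions:.0e}")
print(f"physical time simulated    ~ {physical_time_s:.0e} s")
```

Both results agree with the slide: $10^{23}$ instructions and $10^{-4}$ s of simulated time.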

Protein Studies by Massively Distributed Computing

A Project in the National Research Program on Genomic Medicine

- Scientific
  - Protein folding, structure, function, protein-molecule interaction
  - Algorithm, force field
- Computing
  - Massive distributive computing
- Education
  - Everyone and anyone with a PC can take part
- Industry
  - Collaborative development

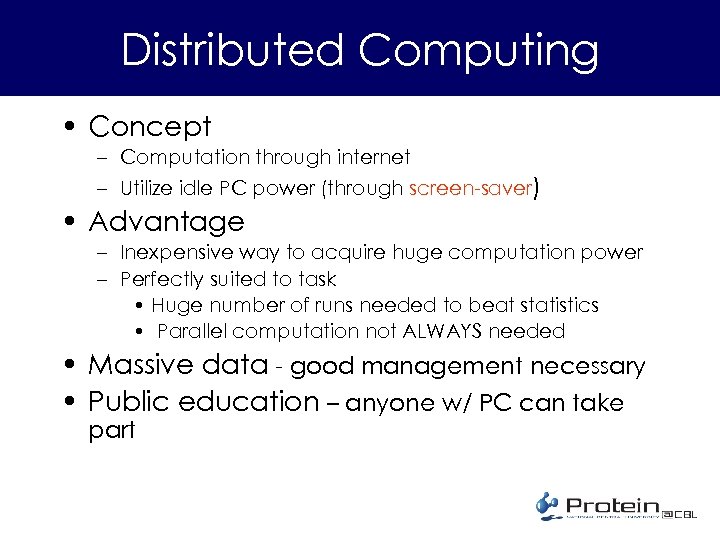

Distributed Computing

- Concept
  - Computation through the internet
  - Utilize idle PC power (through screen saver)
- Advantage
  - Inexpensive way to acquire huge computation power
  - Perfectly suited to task
    - Huge number of runs needed to beat statistics
    - Parallel computation not ALWAYS needed
    - Massive data: good management necessary
    - Public education: anyone with a PC can take part

Hardware Strategies

- Parallel computation (not our approach)
  - PC cluster
  - IBM Blue Gene, $10^{6}$ CPUs
- Massive distributive computing
  - Grid computing (formal and in the future)
  - Server to individual client (available now and inexpensive)
    - Examples: SETI@home, folding@home, genome@home
    - Our project: protein@CBL

Software Components

- Dynamics of macromolecules
  - Molecular dynamics, all-atom or mean-field solvent
  - Computer codes
    - GROMACS (for distributive computing; freeware)
    - AMBER and others (for in-house computing; licensed)
- Distributed computing
  - COSM: a stable, reliable, and secure system for large-scale distributed processing (freeware)

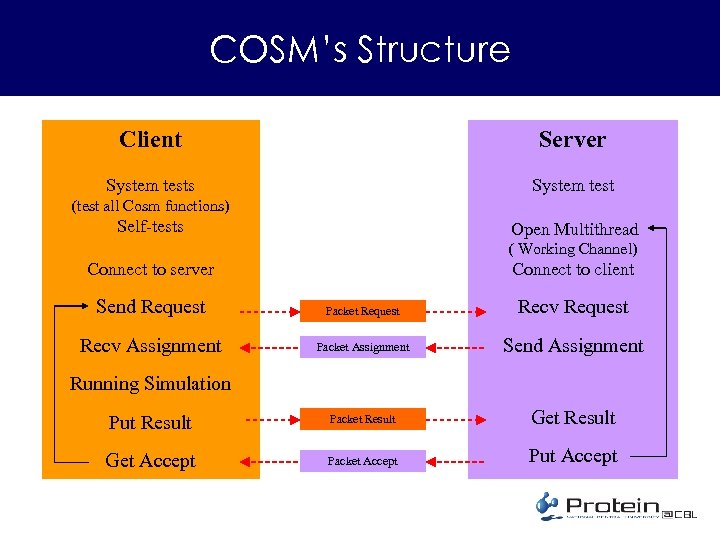

COSM's Structure

[Diagram: client and server flow]

- System tests: server runs a system test (tests all Cosm functions); client runs self-tests
- Server: open multithread (working channel) → connect to client → send Assignment → put Accept
- Client: connect to server → send Request → receive Assignment → running simulation → put Result → get Accept
- Packets exchanged: Request, Assignment, Result, Accept
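The Request / Assignment / Result / Accept exchange in the diagram can be illustrated with a small in-memory sketch. This is not the Cosm API: the classes `Server`, `Assignment`, `Result` and the function `run_simulation` are hypothetical names invented here, networking is replaced by direct method calls, and the "simulation" is a placeholder.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Assignment:
    job_id: int
    temperature_k: float


@dataclass
class Result:
    job_id: int
    energy: float


class Server:
    """Hypothetical stand-in for the server side of the exchange."""

    def __init__(self, temperatures):
        self.pending = deque(Assignment(i, t) for i, t in enumerate(temperatures))
        self.results = {}

    def handle_request(self):
        # Answer a Request packet with an Assignment, or None if no work is left.
        return self.pending.popleft() if self.pending else None

    def handle_result(self, result):
        # Store a Result packet and answer with an Accept flag.
        self.results[result.job_id] = result
        return True


def run_simulation(assignment):
    # Placeholder for the MD run; the returned energy is a dummy value.
    return Result(assignment.job_id, energy=-assignment.temperature_k)


def client_cycle(server):
    """One client pass: Request -> Assignment -> simulation -> Result -> Accept."""
    assignment = server.handle_request()
    if assignment is None:
        return False
    result = run_simulation(assignment)
    return server.handle_result(result)
```

Calling `client_cycle(server)` repeatedly drains the server's job queue; it returns `False` once no assignment is left.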

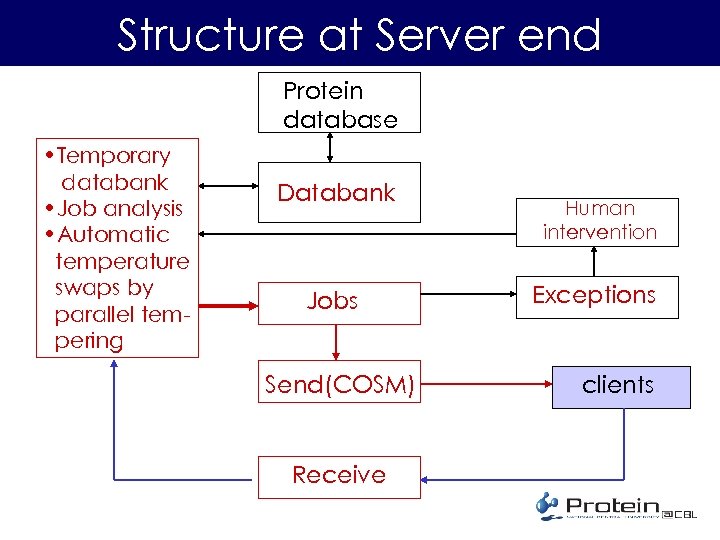

Structure at Server end

[Diagram labels: Protein database, Databank, Jobs, Send (COSM), Receive, Human intervention, Exceptions, clients]

- Temporary databank
- Job analysis
- Automatic temperature swaps by parallel tempering

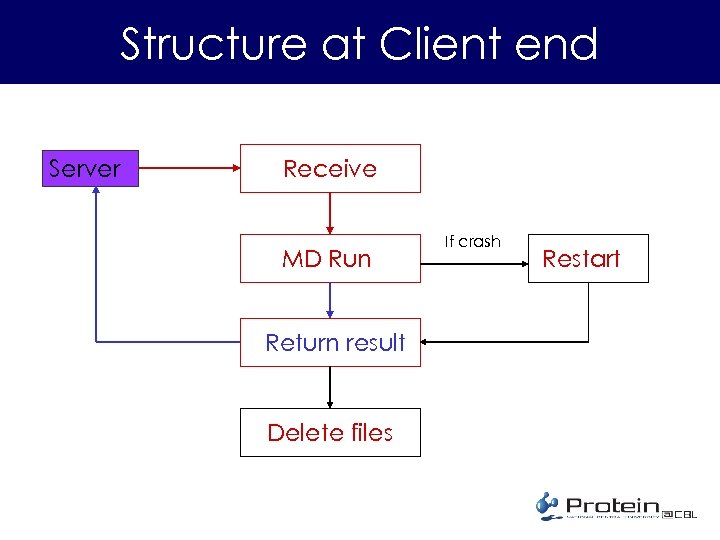

Structure at Client end

[Diagram: Server → Receive → MD run → Return result → Delete files; if crash → Restart]
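The client-side cycle (receive, run MD, return result, delete files, restart on crash) might look roughly like the sketch below. It is an illustration under assumptions, not the project's client code: `run_job_with_restart` and its `max_restarts` parameter are hypothetical, a crash is modeled as a `RuntimeError`, and the MD run is any callable that takes a working directory.

```python
import shutil
import tempfile
from pathlib import Path


def run_job_with_restart(run_md, max_restarts=3):
    """Run run_md(workdir); on a crash, clean up and restart.

    Returns (result, attempts). The working files are deleted after every
    attempt, whether it succeeded or crashed.
    """
    for attempt in range(1, max_restarts + 1):
        workdir = Path(tempfile.mkdtemp(prefix="md_job_"))
        try:
            return run_md(workdir), attempt
        except RuntimeError:
            # Assumed crash signal: fall through to the next attempt.
            continue
        finally:
            # "Delete files" step: always remove the scratch directory.
            shutil.rmtree(workdir, ignore_errors=True)
    raise RuntimeError("job failed after all restarts")
```

The `finally` clause keeps the client's disk clean even when a run crashes, which matters when the client is a volunteer's PC.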

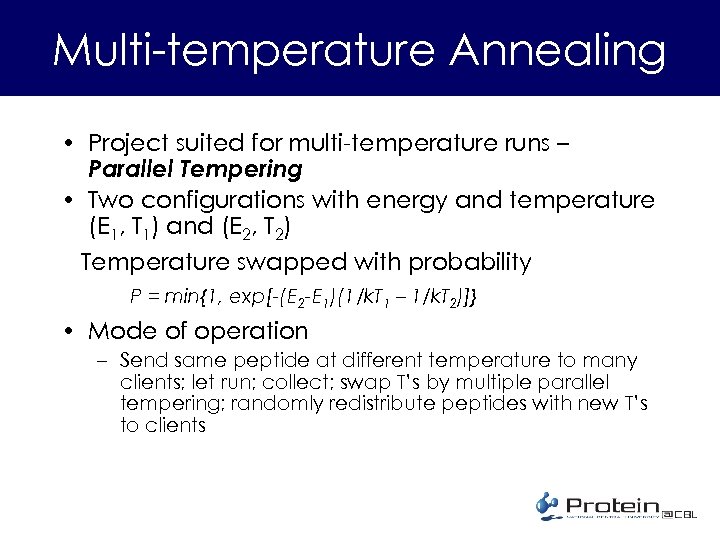

Multi-temperature Annealing

- Project suited for multi-temperature runs
  - Parallel tempering
    - Two configurations with energy and temperature $(E_1, T_1)$ and $(E_2, T_2)$
    - Temperatures swapped with probability $P = \min\{1, \exp[-(E_2 - E_1)(1/kT_1 - 1/kT_2)]\}$
- Mode of operation
  - Send the same peptide at different temperatures to many clients; let them run; collect results; swap temperatures by multiple parallel tempering; randomly redistribute peptides with new temperatures to clients
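The swap rule above translates directly into code. The following is a minimal sketch, not the project's implementation: the function names are illustrative, and the choice of kJ/(mol·K) for the Boltzmann constant assumes energies in kJ/mol, the unit GROMACS reports.

```python
import math
import random

# Boltzmann constant in kJ/(mol K); assumes energies are given in kJ/mol.
K_B = 0.0083144626


def swap_probability(e1, t1, e2, t2, k=K_B):
    """P = min{1, exp[-(E2 - E1)(1/kT1 - 1/kT2)]}."""
    exponent = -(e2 - e1) * (1.0 / (k * t1) - 1.0 / (k * t2))
    if exponent >= 0:
        return 1.0  # also avoids overflow in exp for large positive exponents
    return math.exp(exponent)


def maybe_swap(e1, t1, e2, t2, rng=random):
    """Return the (possibly swapped) temperatures of configurations 1 and 2."""
    if rng.random() < swap_probability(e1, t1, e2, t2):
        return t2, t1
    return t1, t2
```

With $T_1 < T_2$, a swap is always accepted when the colder configuration has the higher energy ($E_1 > E_2$), and is accepted with probability below 1 otherwise.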

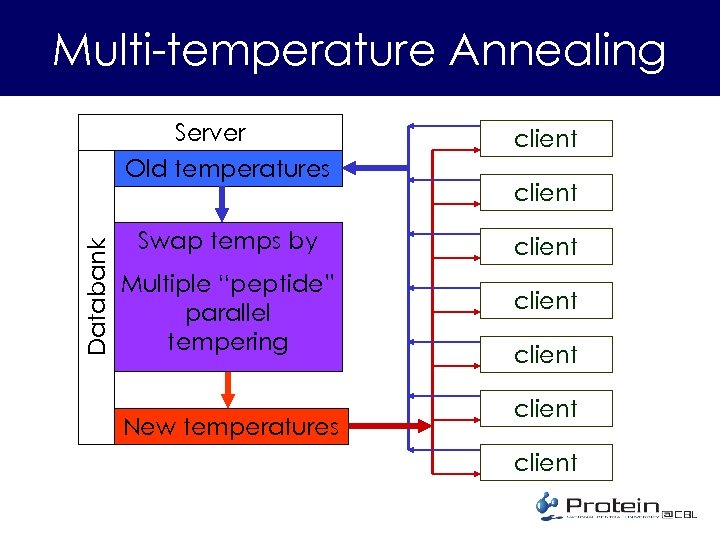

Multi-temperature Annealing

[Diagram labels: Databank, Server, old temperatures → clients, swap temps by multiple "peptide" parallel tempering, new temperatures → clients]
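One server-side swap round over many peptides could be sketched as follows. The slides do not say how peptides are paired, so pairing neighbors in temperature (with an `offset` to alternate between even and odd pairs) is an assumption, as are the function name `tempering_round` and the kJ/(mol·K) Boltzmann constant.

```python
import math
import random

K_B = 0.0083144626  # kJ/(mol K); assumes energies in kJ/mol


def tempering_round(energies, temps, k=K_B, rng=random, offset=0):
    """Attempt swaps between temperature neighbors; return the new temperatures.

    energies[i] and temps[i] belong to peptide i as returned by its client.
    Pairs are non-overlapping: (offset, offset+1), (offset+2, offset+3), ...
    in order of increasing temperature.
    """
    new_temps = list(temps)
    order = sorted(range(len(temps)), key=lambda i: temps[i])
    for pos in range(offset, len(order) - 1, 2):
        i, j = order[pos], order[pos + 1]
        exponent = -(energies[j] - energies[i]) * (
            1.0 / (k * temps[i]) - 1.0 / (k * temps[j])
        )
        # Accept with probability min{1, exp(exponent)}.
        if exponent >= 0 or rng.random() < math.exp(exponent):
            new_temps[i], new_temps[j] = temps[j], temps[i]
    return new_temps
```

The set of temperatures is conserved by construction; only their assignment to peptides changes before the peptides are redistributed to clients.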

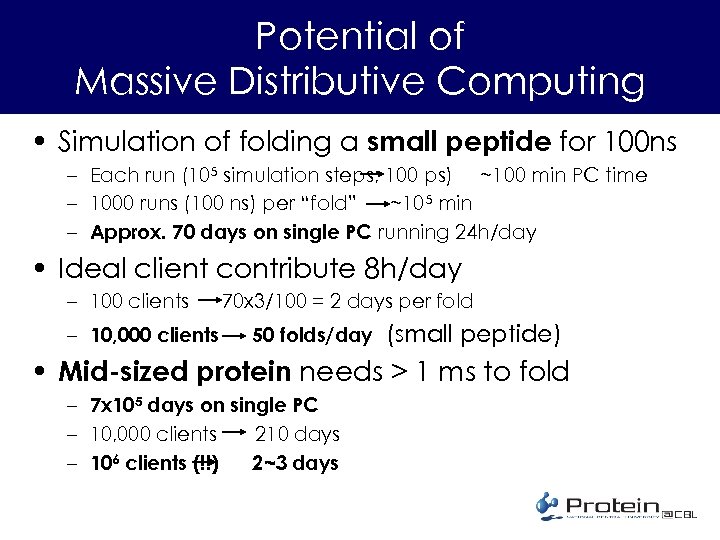

Potential of Massive Distributive Computing

- Simulation of folding a small peptide for 100 ns
  - Each run ($10^{5}$ simulation steps; 100 ps): ~100 min PC time
  - 1000 runs (100 ns) per "fold": ~$10^{5}$ min
  - Approx. 70 days on a single PC running 24 h/day
- Ideal client contributes 8 h/day
  - 100 clients: 70 × 3/100 ≈ 2 days per fold
  - 10,000 clients: 50 folds/day (small peptide)
- Mid-sized protein needs > 1 ms to fold
  - $7 \times 10^{5}$ days on a single PC
  - 10,000 clients: 210 days
  - $10^{6}$ clients (!!): 2~3 days
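The estimates on this slide follow from two inputs: a 100 ps run costs about 100 minutes of PC time, and an ideal client contributes 8 hours per day. The sketch below redoes the arithmetic; the function and constant names are illustrative, and perfect scaling across clients is assumed, as on the slide.

```python
RUN_LENGTH_PS = 100   # simulated time covered by one run
RUN_COST_MIN = 100    # PC minutes needed for one run


def single_pc_days(sim_time_ps):
    """Days needed on one PC running 24 h/day."""
    runs = sim_time_ps / RUN_LENGTH_PS
    return runs * RUN_COST_MIN / (60 * 24)


def wall_days(sim_time_ps, n_clients, hours_per_day=8.0):
    """Days needed with n_clients, each contributing hours_per_day."""
    return single_pc_days(sim_time_ps) * (24.0 / hours_per_day) / n_clients


if __name__ == "__main__":
    small_peptide_ps = 100_000       # 100 ns
    mid_protein_ps = 1_000_000_000   # 1 ms

    print(f"single PC, 100 ns: {single_pc_days(small_peptide_ps):.1f} days")
    print(f"100 clients:       {wall_days(small_peptide_ps, 100):.2f} days per fold")
    print(f"10,000 clients:    {1 / wall_days(small_peptide_ps, 10_000):.0f} folds/day")
    print(f"1 ms, 10,000 clients: {wall_days(mid_protein_ps, 10_000):.0f} days")
    print(f"1 ms, 10^6 clients:   {wall_days(mid_protein_ps, 10**6):.1f} days")
```

The slide rounds the single-PC figure up to 70 days, which is why it quotes 210 days and 50 folds/day where the unrounded arithmetic gives about 208 days and 48 folds/day.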

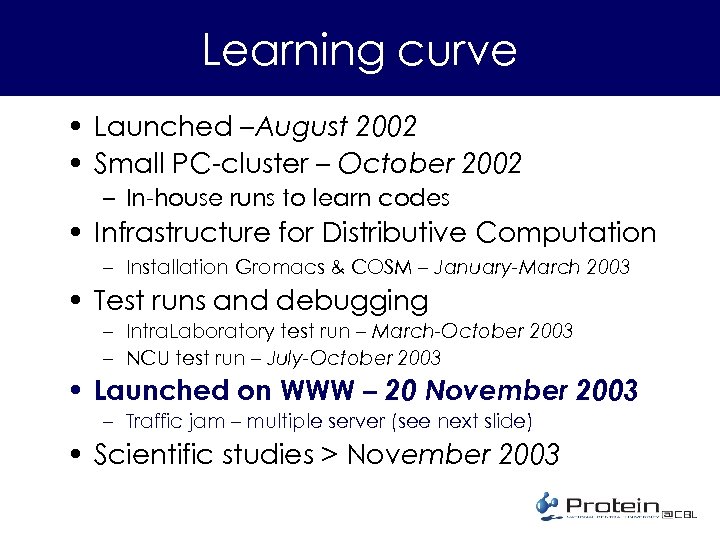

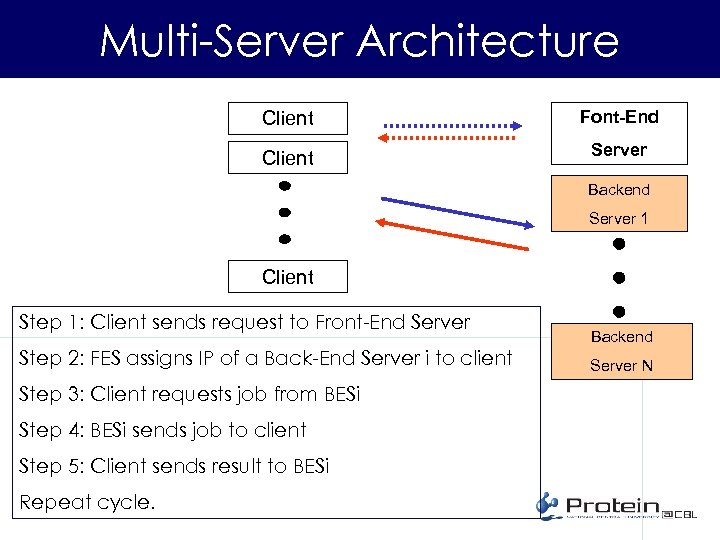

Learning curve

- Launched: August 2002
- Small PC cluster: October 2002
  - In-house runs to learn codes
- Infrastructure for distributive computation
  - Installation of GROMACS & COSM: January-March 2003
- Test runs and debugging
  - Intra-laboratory test run: March-October 2003
  - NCU test run: July-October 2003
- Launched on WWW: 20 November 2003
  - Traffic jam: multiple servers (see next slide)
- Scientific studies: from November 2003

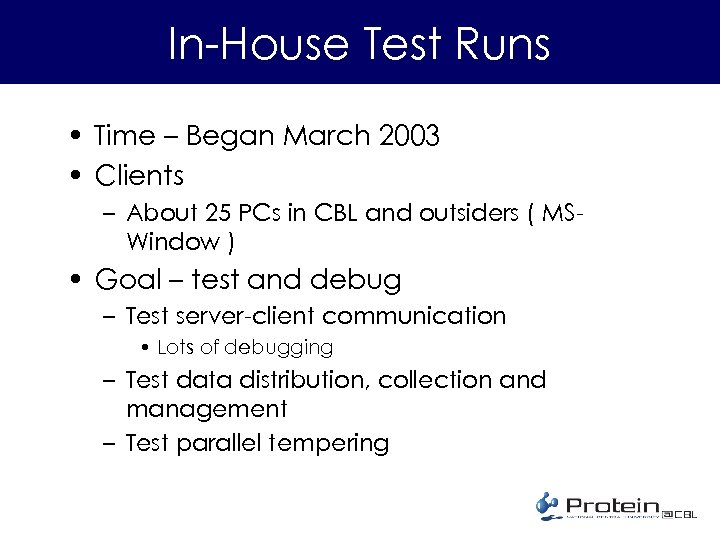

In-House Test Runs

- Time
  - Began March 2003
- Clients
  - About 25 PCs in CBL and outsiders (MS Windows)
- Goal: test and debug
  - Test server-client communication
    - Lots of debugging
  - Test data distribution, collection and management
  - Test parallel tempering

Multi-Server Architecture

[Diagram: clients, Front-End Server, Back-End Server 1 … Back-End Server N]

- Step 1: Client sends request to Front-End Server (FES)
- Step 2: FES assigns IP of a Back-End Server i (BESi) to client
- Step 3: Client requests job from BESi
- Step 4: BESi sends job to client
- Step 5: Client sends result to BESi
- Repeat cycle.
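The five-step cycle can be sketched with plain objects standing in for the servers. Everything named here is hypothetical: the classes, the addresses `"bes1"` and `"bes2"`, and above all the round-robin assignment policy, since the slides do not say how the front end chooses a back end. Network calls are replaced by direct method calls.

```python
from itertools import cycle


class BackEndServer:
    """Hypothetical back-end server holding a queue of jobs."""

    def __init__(self, address, jobs):
        self.address = address
        self.jobs = list(jobs)
        self.results = []

    def get_job(self):
        # Steps 3-4: hand out the next job, or None if the queue is empty.
        return self.jobs.pop(0) if self.jobs else None

    def put_result(self, result):
        # Step 5: store the result returned by a client.
        self.results.append(result)


class FrontEndServer:
    """Hypothetical front end; round-robin assignment is an assumption."""

    def __init__(self, backends):
        self._by_address = {b.address: b for b in backends}
        self._next = cycle(list(self._by_address))

    def assign(self):
        # Steps 1-2: answer a client request with a back-end address.
        return next(self._next)

    def lookup(self, address):
        # Stand-in for opening a network connection to that address.
        return self._by_address[address]


def client_cycle(front_end, work):
    """Run steps 1-5 once; return the back-end address used, or None if idle."""
    address = front_end.assign()
    backend = front_end.lookup(address)
    job = backend.get_job()
    if job is None:
        return None
    backend.put_result(work(job))
    return address
```

Because clients talk to the front end only to obtain an address, the heavy traffic (jobs and results) is spread over the back-end servers.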

Current status and Plans for immediate future

- Last beta version Pac v0.9
  - Released on July 15
  - To CBL lab members & physics dept
  - About 25 clients
- First alpha version Pac v1.0 released October 1 2003
- Current version Pac v1.2
  - Released for distributed computing on 20 November 2003
  - In search of clients
    - Portal in "Educities" http://www.educities.edu.tw/: ~2,500 downloads, ~500 real clients
    - PCs in university administrative units
    - City halls and county government offices
    - Talks and visits to universities and high schools

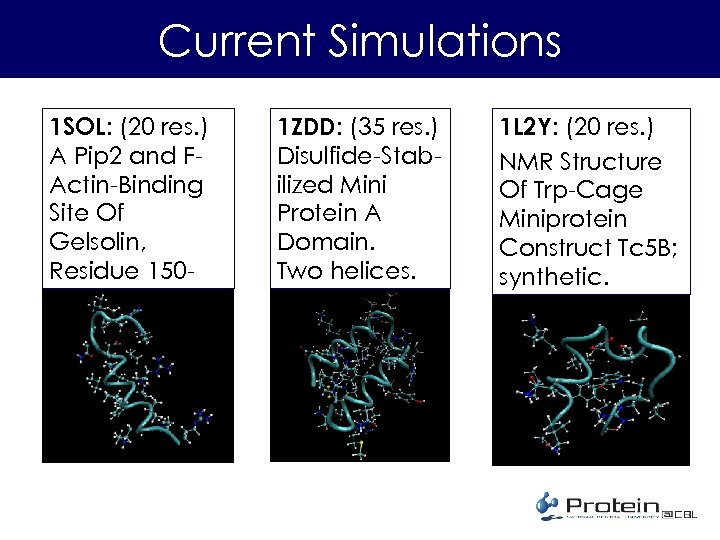

Current Simulations

- 1SOL (20 res.): A PIP2 and F-Actin-Binding Site of Gelsolin, Residues 150-169. One helix.
- 1ZDD (35 res.): Disulfide-Stabilized Mini Protein A Domain. Two helices.
- 1L2Y (20 res.): NMR Structure of Trp-Cage Miniprotein Construct TC5b; synthetic.

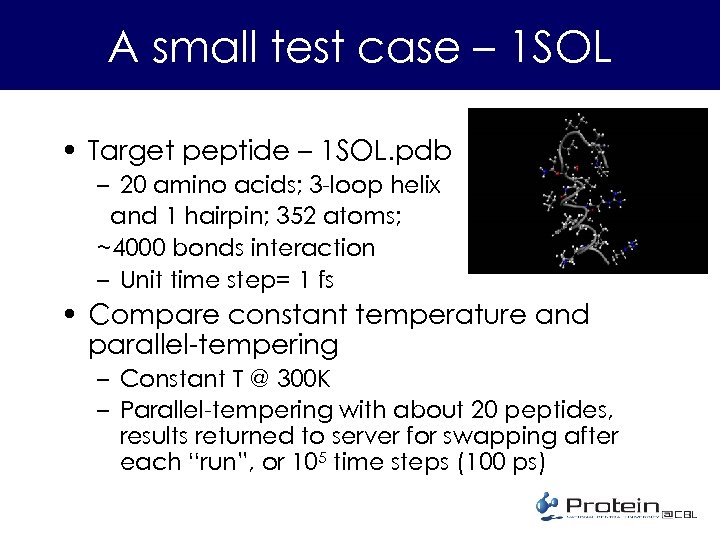

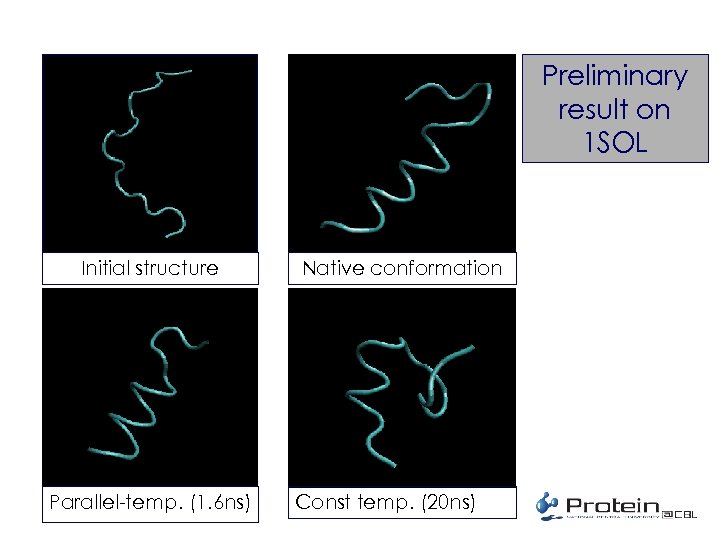

A small test case: 1SOL

- Target peptide
  - 1SOL.pdb
  - 20 amino acids; 3-loop helix and 1 hairpin; 352 atoms; ~4000 bond interactions
  - Unit time step = 1 fs
- Compare constant temperature and parallel tempering
  - Constant T @ 300 K
  - Parallel tempering with about 20 peptides; results returned to server for swapping after each "run", or $10^{5}$ time steps (100 ps)

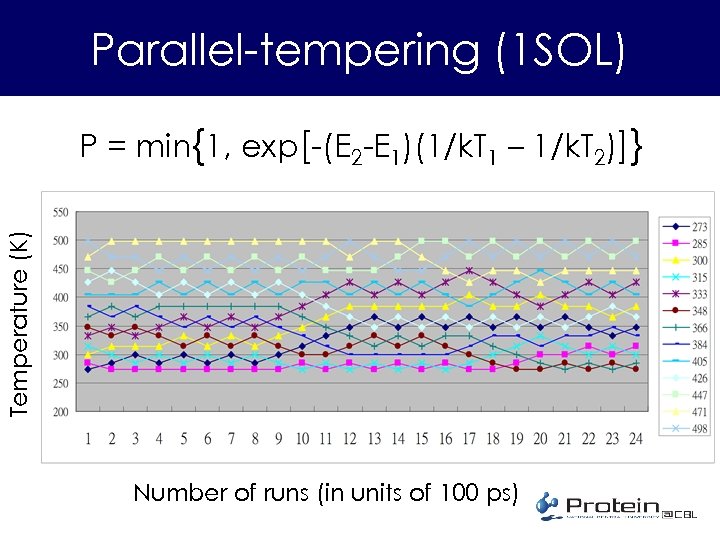

Parallel-tempering (1SOL)

[Plot: temperature (K) versus number of runs (in units of 100 ps); swap probability $P = \min\{1, \exp[-(E_2 - E_1)(1/kT_1 - 1/kT_2)]\}$]

Preliminary result on 1SOL

[Figure panels: initial structure; parallel tempering (1.6 ns); native conformation; constant temperature (20 ns)]

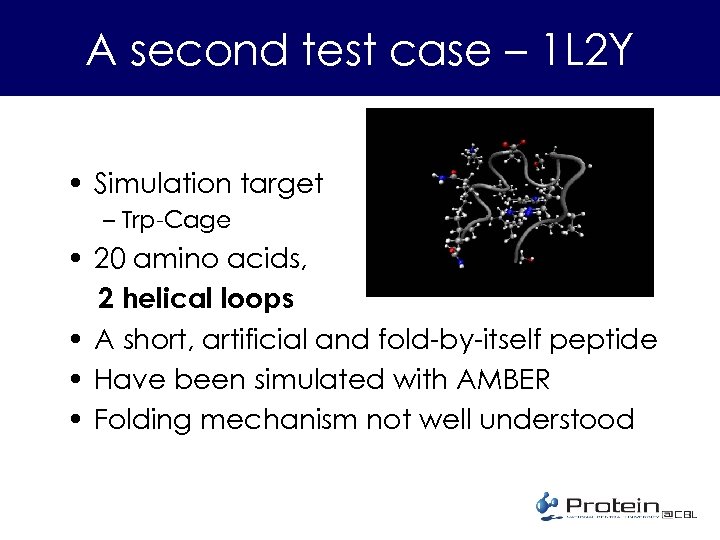

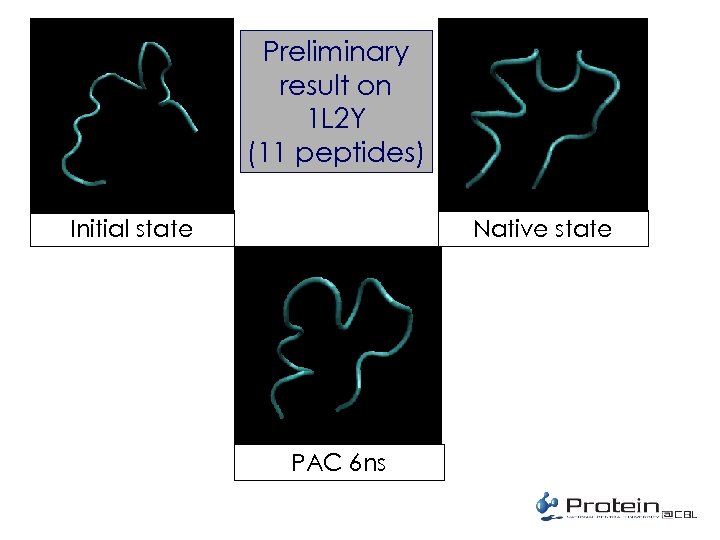

A second test case: 1L2Y

- Simulation target: Trp-Cage
  - 20 amino acids, 2 helical loops
  - A short, artificial peptide that folds by itself
  - Has been simulated with AMBER
  - Folding mechanism not well understood

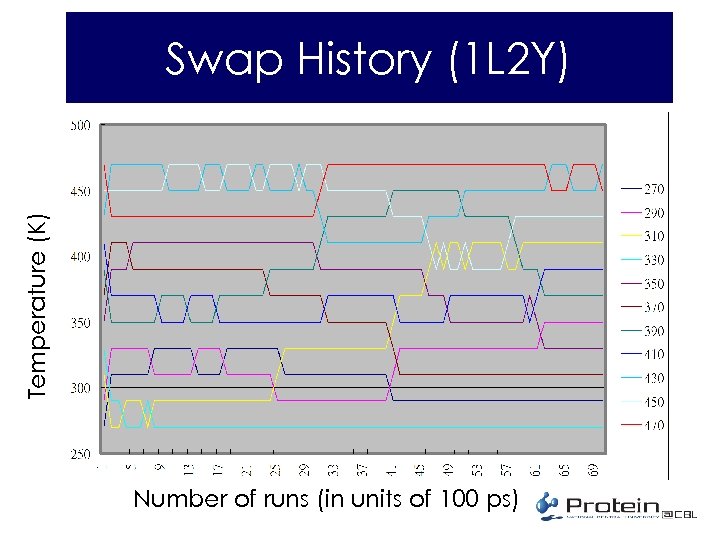

Swap History (1L2Y)

[Plot: temperature (K) versus number of runs (in units of 100 ps)]

Preliminary result on 1L2Y (11 peptides)

[Figure panels: native state; initial state; PAC, 6 ns]

Modifications needed

- Reduce size of water box
  - Save computation time
- Rewrite the energy function
  - Ignore the water-water interaction
- Increase cut-off radius
- Try different simulation algorithms for changing pressure and temperature
- Others…

Looking ahead

- Better understanding of annealing procedure
- Better understanding of energetics
- Expand client community
- Develop serious collaboration with biologists
  - Structural biologists, e.g., NMR people
  - Protein function people
  - Drug designers
- "…investigation of motions that have particular functional implications and to obtain information that is not accessible to experiment." Karplus and McCammon, Nature Struct. Biol. 2002

The Team

- Funded by NRPGM/NSC
- Computational Biology Laboratory, Physics Dept & Life Sciences Dept, National Central University
  - PI: Professor HC Lee (Phys & LS/NCU)
  - Co-PI: Professor Hsuen-Yi Chen (Phys/NCU)
  - Jia-Lin Lo (PhD student)
  - Jun-Ping Yiu (MSc Res. Assistant)
  - Chien-Hao Wei (MSc RA)
  - Engin Lee (MSc student)
  - PDF (TBA)

We are looking for collaborators, research associates, programmers, students, etc.

http://protein.ncu.edu.tw

Thank you for your attention
