56b384a42542592df55f1fd81b4208da.ppt

- Number of slides: 64

Linguistics 187 Week 4 Ambiguity and Robustness

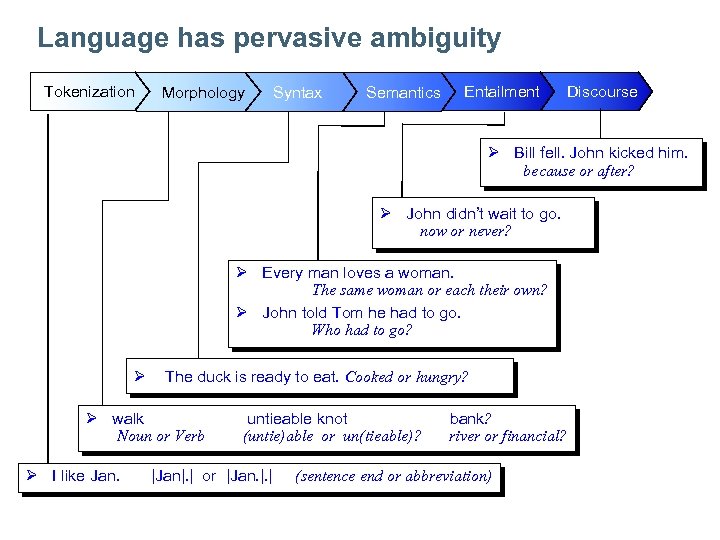

Language has pervasive ambiguity

Levels: Tokenization, Morphology, Syntax, Semantics, Entailment, Discourse

- Bill fell. John kicked him. (because or after?)
- John didn’t wait to go. (now or never?)
- Every man loves a woman. (The same woman, or each their own?)
- John told Tom he had to go. (Who had to go?)
- The duck is ready to eat. (Cooked or hungry?)
- walk (Noun or Verb?)
- untieable knot ((untie)able or un(tieable)?)
- bank (river or financial?)
- I like Jan. (|Jan|.| or |Jan.|.|: sentence end or abbreviation?)

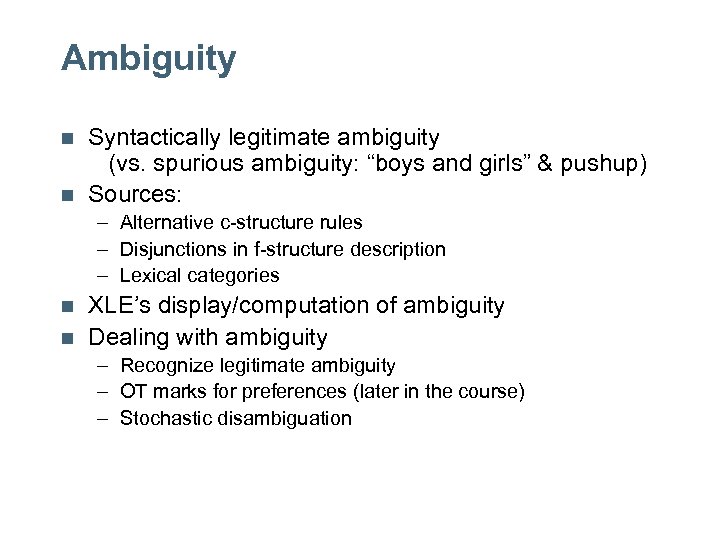

Ambiguity

- Syntactically legitimate ambiguity (vs. spurious ambiguity: “boys and girls” & pushup)
- Sources:
  - Alternative c-structure rules
  - Disjunctions in f-structure description
  - Lexical categories
- XLE’s display/computation of ambiguity
- Dealing with ambiguity
  - Recognize legitimate ambiguity
  - OT marks for preferences (later in the course)
  - Stochastic disambiguation

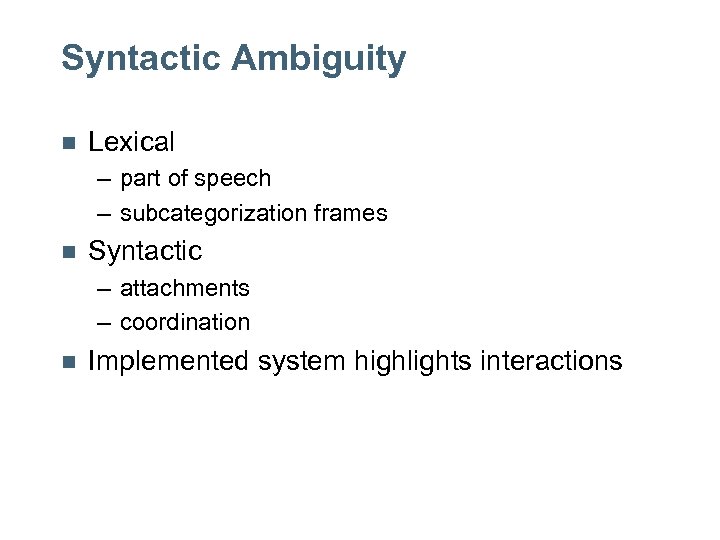

Syntactic Ambiguity

- Lexical
  - part of speech
  - subcategorization frames
- Syntactic
  - attachments
  - coordination
- Implemented system highlights interactions


Lexical Ambiguity: POS

- verb-noun
  - I saw her duck.
  - I saw [NP her duck].
  - I saw [NP her] [VP duck].
- noun-adjective
  - the [N/A mean] rule
  - that child is [A mean].
  - he calculated the [N mean].

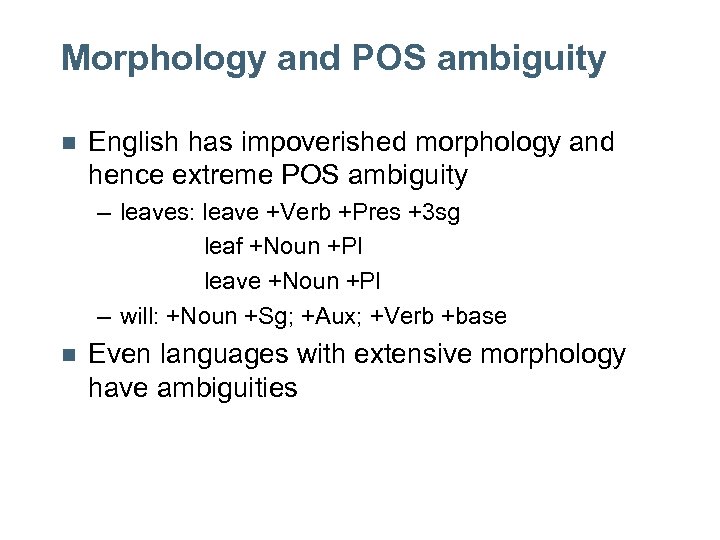

Morphology and POS ambiguity

- English has impoverished morphology and hence extreme POS ambiguity
  - leaves: leave +Verb +Pres +3sg; leaf +Noun +Pl; leave +Noun +Pl
  - will: +Noun +Sg; +Aux; +Verb +base
- Even languages with extensive morphology have ambiguities

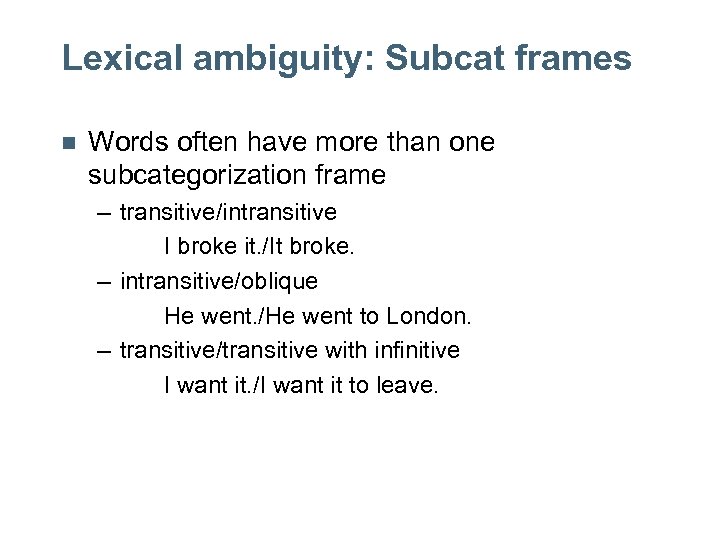

Lexical ambiguity: Subcat frames

- Words often have more than one subcategorization frame
  - transitive/intransitive: I broke it. / It broke.
  - intransitive/oblique: He went. / He went to London.
  - transitive/transitive with infinitive: I want it. / I want it to leave.

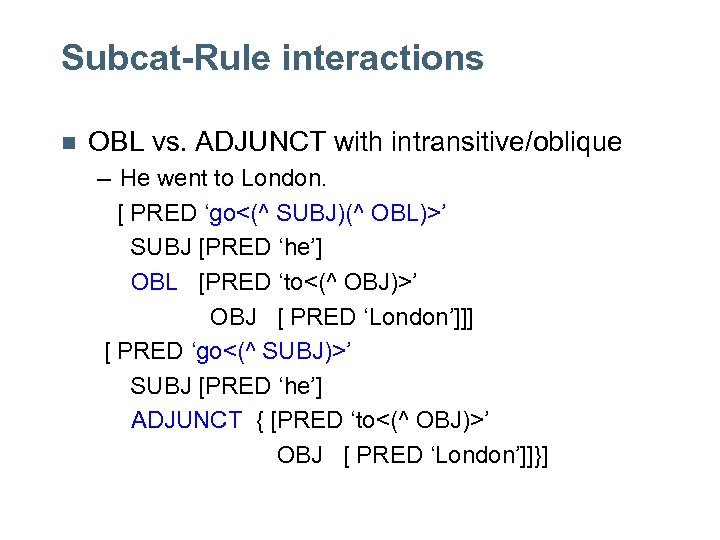

Subcat-Rule interactions

- OBL vs. ADJUNCT with intransitive/oblique
  - He went to London.
  - OBL analysis: [ PRED 'go<(^ SUBJ)(^ OBL)>' SUBJ [ PRED 'he' ] OBL [ PRED 'to<(^ OBJ)>' OBJ [ PRED 'London' ] ] ]
  - ADJUNCT analysis: [ PRED 'go<(^ SUBJ)>' SUBJ [ PRED 'he' ] ADJUNCT { [ PRED 'to<(^ OBJ)>' OBJ [ PRED 'London' ] ] } ]

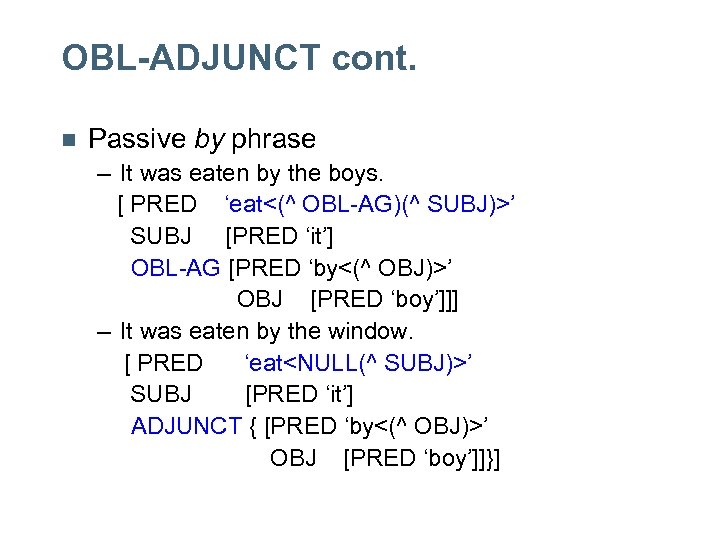

OBL-ADJUNCT cont.

- Passive by-phrase
  - It was eaten by the boys.
    [ PRED 'eat<(^ OBL-AG)(^ SUBJ)>' SUBJ [ PRED 'it' ] OBL-AG [ PRED 'by<(^ OBJ)>' OBJ [ PRED 'boy' ] ] ]
  - It was eaten by the window.
    [ PRED 'eat<NULL(^ SUBJ)>' SUBJ [ PRED 'it' ] ADJUNCT { [ PRED 'by<(^ OBJ)>' OBJ [ PRED 'window' ] ] } ]

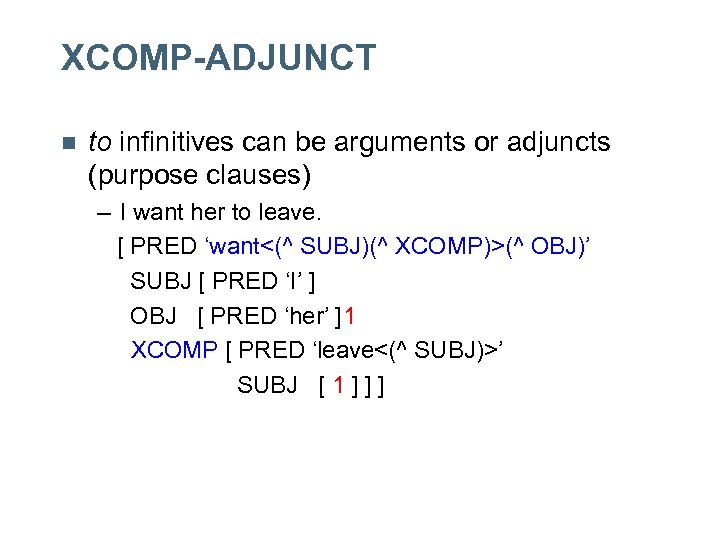

XCOMP-ADJUNCT

- to-infinitives can be arguments or adjuncts (purpose clauses)
  - I want her to leave.
    [ PRED 'want<(^ SUBJ)(^ XCOMP)>(^ OBJ)' SUBJ [ PRED 'I' ] OBJ [ PRED 'her' ]1 XCOMP [ PRED 'leave<(^ SUBJ)>' SUBJ [ 1 ] ] ]

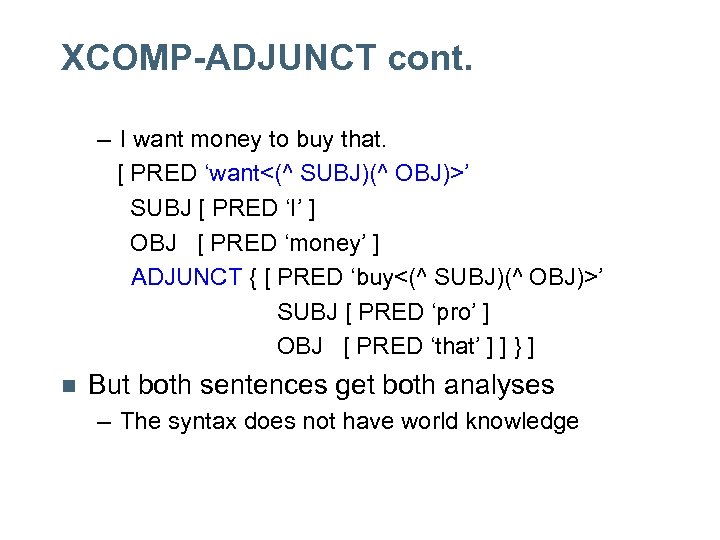

XCOMP-ADJUNCT cont.

- I want money to buy that.
  [ PRED 'want<(^ SUBJ)(^ OBJ)>' SUBJ [ PRED 'I' ] OBJ [ PRED 'money' ] ADJUNCT { [ PRED 'buy<(^ SUBJ)(^ OBJ)>' SUBJ [ PRED 'pro' ] OBJ [ PRED 'that' ] ] } ]
- But both sentences get both analyses
  - The syntax does not have world knowledge

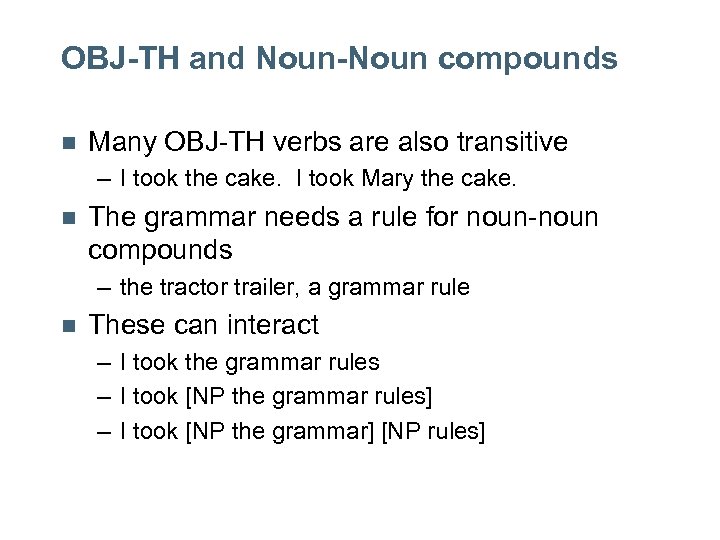

OBJ-TH and Noun-Noun compounds

- Many OBJ-TH verbs are also transitive
  - I took the cake. I took Mary the cake.
- The grammar needs a rule for noun-noun compounds
  - the tractor trailer, a grammar rule
- These can interact
  - I took the grammar rules
  - I took [NP the grammar rules]
  - I took [NP the grammar] [NP rules]

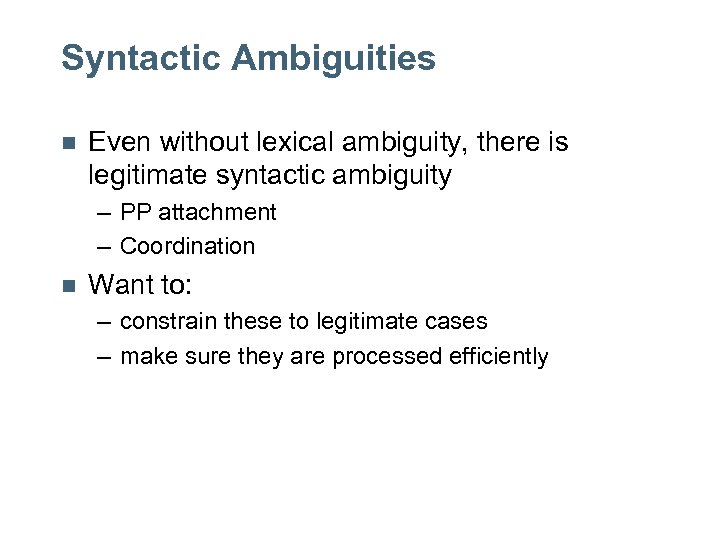

Syntactic Ambiguities

- Even without lexical ambiguity, there is legitimate syntactic ambiguity
  - PP attachment
  - Coordination
- Want to:
  - constrain these to legitimate cases
  - make sure they are processed efficiently

PP Attachment

- PP adjuncts can attach to VPs and NPs
- Strings of PPs in the VP are ambiguous
  - I see the girl with the telescope.
  - I see [the girl with the telescope].
  - I see [the girl] [with the telescope].
- This ambiguity is reflected in:
  - the c-structure (constituency)
  - the f-structure (ADJUNCT attachment)

PP attachment cont.

- This ambiguity multiplies with more PPs
  - I saw the girl with the telescope in the garden on the lawn
- The syntax has no way to determine the attachment, even if humans can.
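How fast the attachments multiply can be illustrated with a small counting sketch. It assumes (this is not stated on the slide) that each PP may attach to the verb or to any noun still open to its left, and that attachments may not cross. The function names `count_attachments` and `analyses_for` are made up for illustration.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def count_attachments(open_sites: int, pps_left: int) -> int:
    """Count non-crossing attachment patterns for the remaining PPs.

    open_sites: number of heads (verb or nouns) a PP may still attach to.
    pps_left:   number of PPs not yet attached.
    """
    if pps_left == 0:
        return 1
    total = 0
    # Attaching to the j-th open site (counted from the verb) closes every
    # site to its right; the PP's own noun then becomes a new open site.
    for j in range(1, open_sites + 1):
        total += count_attachments(j + 1, pps_left - 1)
    return total


def analyses_for(n_pps: int) -> int:
    # "V NP PP ... PP": the verb and the object noun are open at the start.
    return count_attachments(2, n_pps)


for n in range(1, 5):
    print(n, "PP(s):", analyses_for(n))
```

Under these assumptions the three-PP sentence has 14 attachment patterns, before any lexical ambiguity is counted.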

Ambiguity in coordination

- Vacuous ambiguity of non-branching trees
  - this can be avoided (pushup)
- Legitimate ambiguity
  - old men and women
    - old [N men and women]
    - [NP old men ] and [NP women ]
  - I turned and pushed the cart
    - I [V turned and pushed ] the cart
    - I [VP turned ] and [VP pushed the cart ]

Grammar Engineering and ambiguity

- Large-scale grammars will have lexical and syntactic ambiguities
- With real data they will interact, resulting in many parses
  - these parses are (syntactically) legitimate
  - they are not intuitive to humans (but more plausible words can make them better)
- XLE provides tools to manage ambiguity
  - grammar writer interfaces
  - computation

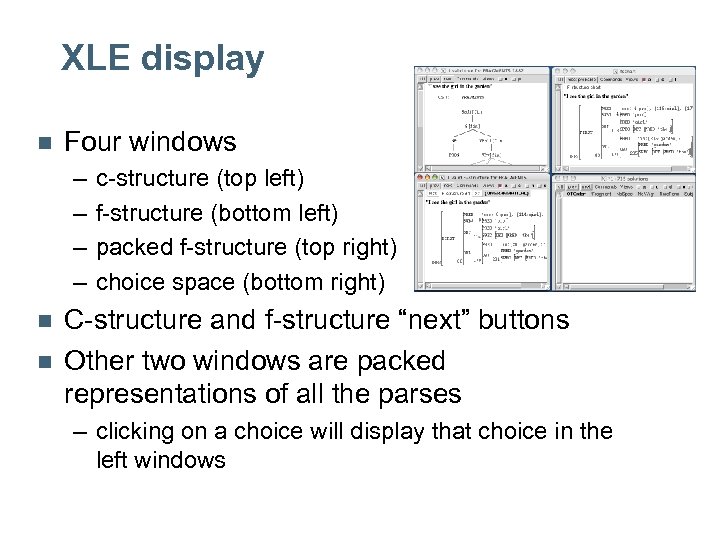

XLE display

- Four windows
  - c-structure (top left)
  - f-structure (bottom left)
  - packed f-structure (top right)
  - choice space (bottom right)
- C-structure and f-structure “next” buttons
- Other two windows are packed representations of all the parses
  - clicking on a choice will display that choice in the left windows

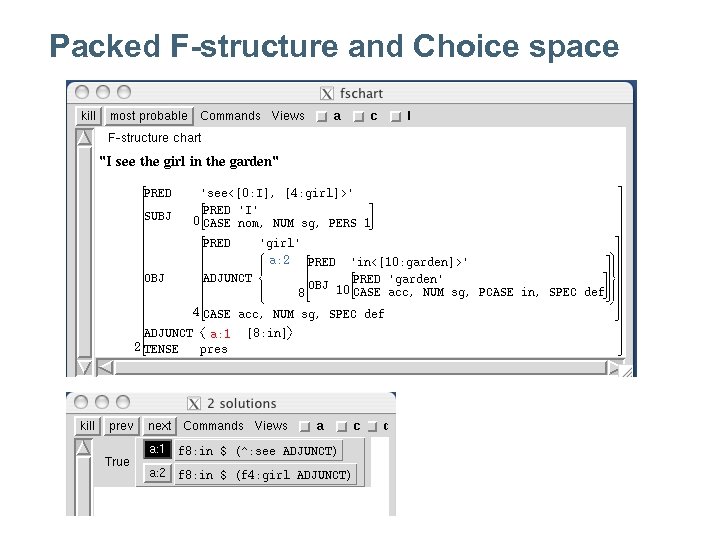

Example

- I see the girl in the garden
- PP attachment ambiguity
  - both ADJUNCTS
  - difference in ADJUNCT-TYPE

Packed F-structure and Choice space

Sorting through the analyses

- “Next” button on c-structure and then f-structure windows
  - impractical with many choices
  - independent vs. interacting ambiguities
  - hard to detect spurious ambiguity
- The packed representations show all the analyses at once
  - (in)dependence more visible
  - click on choice to view
  - spurious ambiguities appear as blank choices
    - but legitimate ambiguities may also do so

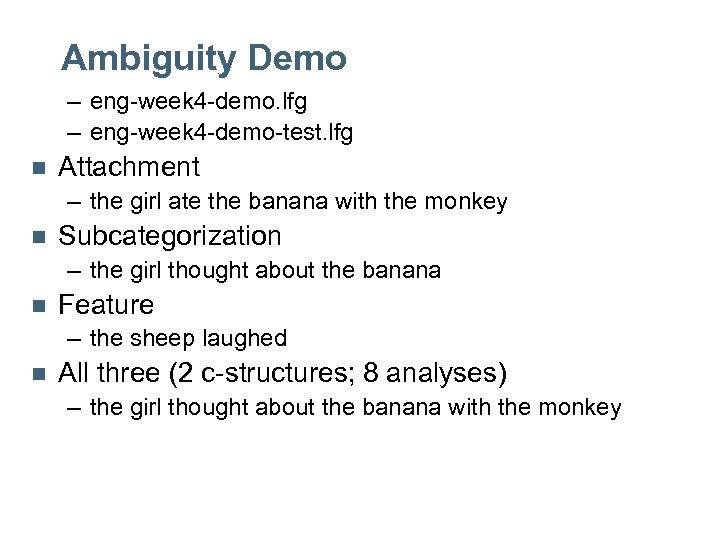

Ambiguity Demo

- Files: eng-week4-demo.lfg, eng-week4-demo-test.lfg
- Attachment
  - the girl ate the banana with the monkey
- Subcategorization
  - the girl thought about the banana
- Feature
  - the sheep laughed
- All three (2 c-structures; 8 analyses)
  - the girl thought about the banana with the monkey

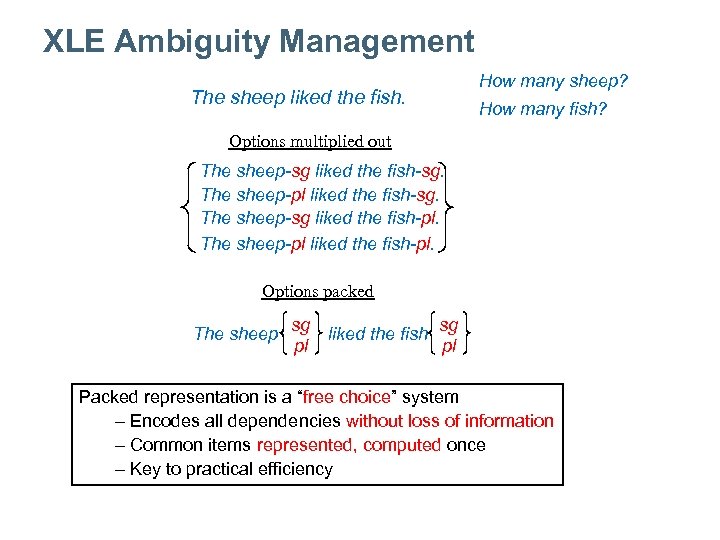

XLE Ambiguity Management

- The sheep liked the fish. How many sheep? How many fish?
- Options multiplied out
  - The sheep-sg liked the fish-sg.
  - The sheep-pl liked the fish-sg.
  - The sheep-sg liked the fish-pl.
  - The sheep-pl liked the fish-pl.
- Options packed
  - The sheep-{sg|pl} liked the fish-{sg|pl}.
- Packed representation is a “free choice” system
  - Encodes all dependencies without loss of information
  - Common items represented, computed once
  - Key to practical efficiency
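A minimal sketch of the free-choice idea, not XLE's actual data structure: each ambiguous word stores its options once, and full readings are produced only on demand. The dictionary layout and the name `multiply_out` are assumptions made for this illustration.

```python
from itertools import product

# Each ambiguous word carries its own local list of options.
packed = {
    "sheep": ["sg", "pl"],
    "fish": ["sg", "pl"],
}


def multiply_out(packed_choices):
    """Expand a free-choice packed representation into full readings."""
    words = list(packed_choices)
    for combo in product(*(packed_choices[w] for w in words)):
        yield dict(zip(words, combo))


readings = list(multiply_out(packed))
stored_options = sum(len(opts) for opts in packed.values())

for r in readings:
    print(f"The sheep-{r['sheep']} liked the fish-{r['fish']}.")
print(len(readings), "readings from", stored_options, "stored options")
```

With k independent two-way choices the packed form stores 2k options while the multiplied-out form has 2^k readings, which is why the choices must be genuinely free for this to be safe.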

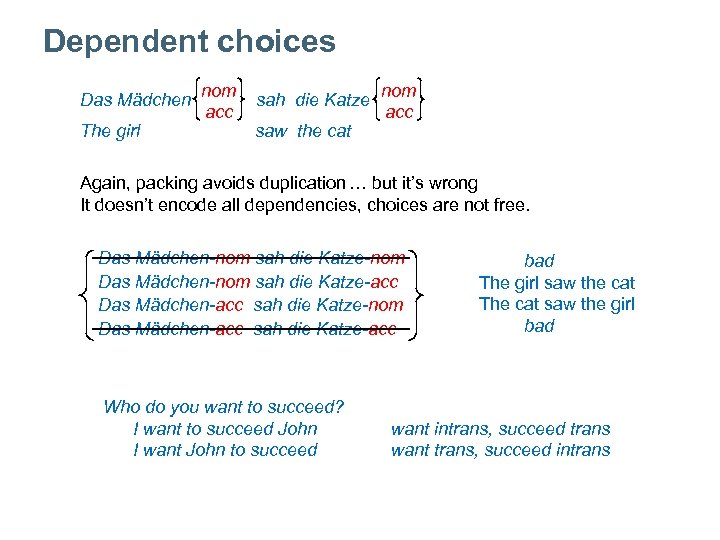

Dependent choices

- Das Mädchen-{nom|acc} sah die Katze-{nom|acc} (The girl saw the cat)
- Again, packing avoids duplication … but it’s wrong: it doesn’t encode all dependencies, and the choices are not free.
  - Das Mädchen-nom sah die Katze-nom: bad
  - Das Mädchen-nom sah die Katze-acc: The girl saw the cat
  - Das Mädchen-acc sah die Katze-nom: The cat saw the girl
  - Das Mädchen-acc sah die Katze-acc: bad
- Who do you want to succeed?
  - I want to succeed John (want intrans, succeed trans)
  - I want John to succeed (want trans, succeed intrans)

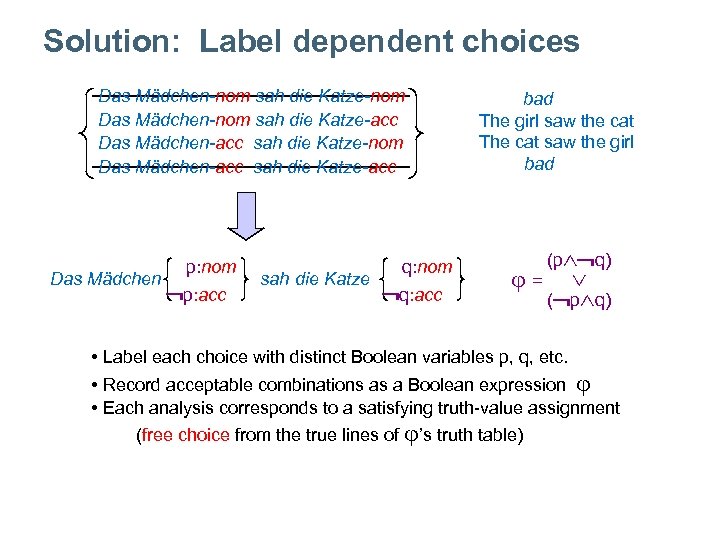

Solution: Label dependent choices

- Das Mädchen-{p: nom | ¬p: acc} sah die Katze-{q: nom | ¬q: acc}
  - Das Mädchen-nom sah die Katze-nom: bad
  - Das Mädchen-nom sah die Katze-acc: The girl saw the cat
  - Das Mädchen-acc sah die Katze-nom: The cat saw the girl
  - Das Mädchen-acc sah die Katze-acc: bad
- $\varphi = (p \land \lnot q) \lor (\lnot p \land q)$
- Label each choice with distinct Boolean variables p, q, etc.
- Record acceptable combinations as a Boolean expression $\varphi$
- Each analysis corresponds to a satisfying truth-value assignment (free choice from the true lines of $\varphi$’s truth table)
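The labeling scheme can be sketched by enumerating truth-value assignments. The sketch assumes that p means "Mädchen is nominative" and q means "Katze is nominative", and that the acceptable combinations are those where exactly one NP is nominative, which is what the good/bad labels on the four multiplied-out readings imply. The helper names `phi` and `case` are hypothetical.

```python
from itertools import product

# Choice labels: p = "Mädchen is nominative", q = "Katze is nominative".
# The negated label stands for the accusative option.


def phi(p: bool, q: bool) -> bool:
    """Acceptable combinations: exactly one of the two NPs is nominative."""
    return (p and not q) or (not p and q)


def case(is_nom: bool) -> str:
    return "nom" if is_nom else "acc"


# Each satisfying assignment of phi corresponds to one analysis.
analyses = [
    (case(p), case(q))
    for p, q in product([True, False], repeat=2)
    if phi(p, q)
]

for maedchen, katze in analyses:
    print(f"Das Mädchen-{maedchen} sah die Katze-{katze}")
```

Only two of the four assignments survive, so the packed structure can keep both case options on each noun without licensing the two bad readings.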

Ambiguity and Robustness

- Large-scale grammars are massively ambiguous
- Grammars parsing real text need to be robust
  - "loosening" rules to allow robustness increases ambiguity even more
- Need a way to control the ambiguity
  - version of Optimality Theory (OT)

Theoretical OT

- Grammar has a set of violable constraints
- Constraints are ranked by each language
  - This gives cross-linguistic variation
- Candidates (analyses) compete
  - John waited for Mary. vs. John waited for 3 hours.
- Constraint ranking determines winning candidate
- Issues for XLE
  - Candidates can be very ungrammatical
    - we have a grammar to produce grammatical analyses
    - even with robust, ungrammatical analyses, these are controlled
  - Generation, not parsing direction
    - we know what the string is already
    - for generation we have a very specified analysis

XLE OT

- Incorporate idea of ranking and (dis)preference
- Filter syntactic and lexical ambiguity
- Reconcile robustness and accuracy
- Allow parsing grammar to be used for generation

XLE OT Implementation

- OT marks in
  - grammar rules
  - templates
  - lexical entries
- CONFIG states
  - preference vs. dispreference
  - ranking
  - parsing vs. generation orders

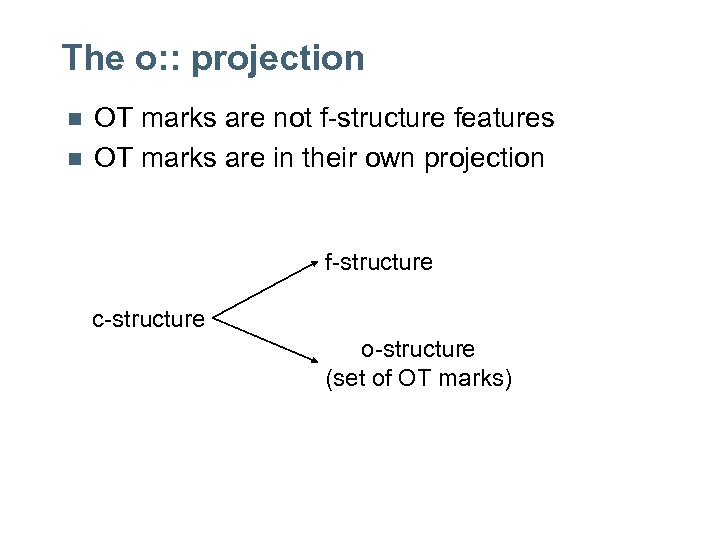

The o:: projection

- OT marks are not f-structure features
- OT marks are in their own projection
- [Diagram: c-structure, f-structure, o-structure (set of OT marks)]

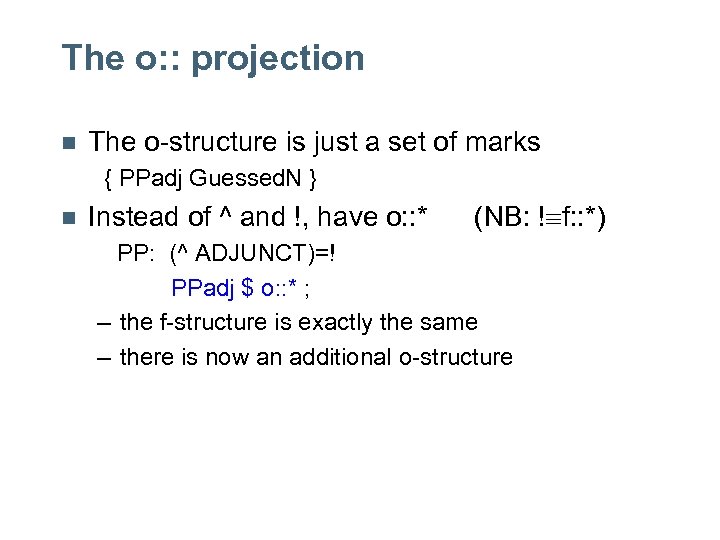

The o:: projection

- The o-structure is just a set of marks: { PPadj GuessedN }
- Instead of ^ and !, have o::* (NB: ! = f::*)
  - PP: (^ ADJUNCT)=! PPadj $ o::* ;
  - the f-structure is exactly the same
  - there is now an additional o-structure

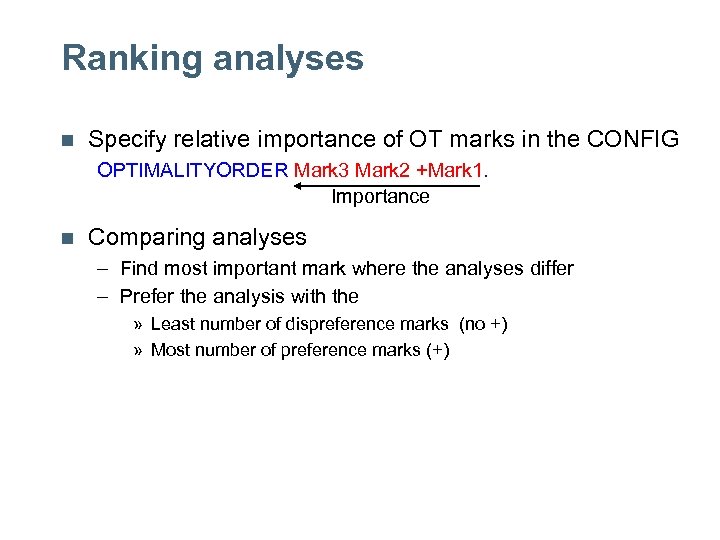

Ranking analyses

- Specify relative importance of OT marks in the CONFIG
  - OPTIMALITYORDER Mark3 Mark2 +Mark1.
  - (the slide annotates this order with an "Importance" arrow)
- Comparing analyses
  - Find the most important mark where the analyses differ
  - Prefer the analysis with the
    - fewest dispreference marks (no +)
    - most preference marks (+)

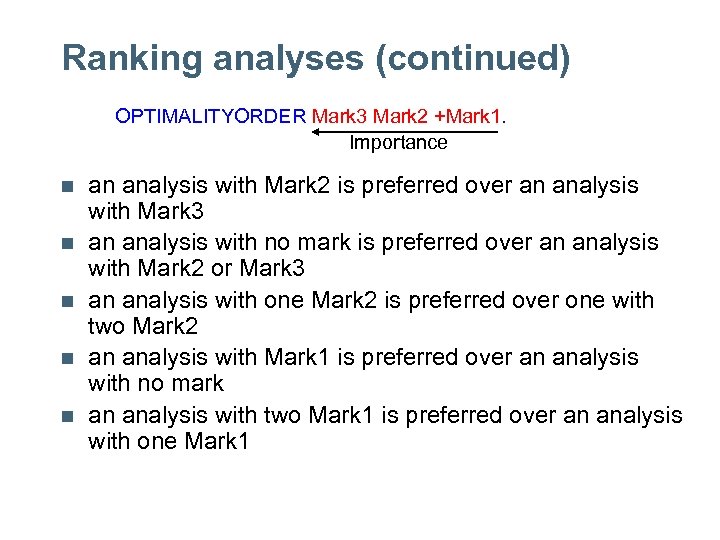

Ranking analyses (continued)

OPTIMALITYORDER Mark3 Mark2 +Mark1.

- an analysis with Mark2 is preferred over an analysis with Mark3
- an analysis with no mark is preferred over an analysis with Mark2 or Mark3
- an analysis with one Mark2 is preferred over one with two Mark2
- an analysis with Mark1 is preferred over an analysis with no mark
- an analysis with two Mark1 is preferred over an analysis with one Mark1
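The comparison procedure can be modeled as a lexicographic sort key. This is a toy model of the rules as stated on the slides, not XLE's implementation; it assumes that marks are listed from most to least important and that a leading "+" in the order declares a preference mark. The names `rank_key` and `preferred` are invented for the sketch.

```python
from collections import Counter

# Assumption: marks are listed from most to least important, and a
# leading "+" in the order declares a preference mark.
OPTIMALITY_ORDER = ["Mark3", "Mark2", "+Mark1"]


def rank_key(marks, order=OPTIMALITY_ORDER):
    """Build a sort key; the smallest key is the preferred analysis."""
    counts = Counter(marks)
    key = []
    for entry in order:
        if entry.startswith("+"):
            # Preference mark: more occurrences are better, so negate.
            key.append(-counts[entry[1:]])
        else:
            # Dispreference mark: fewer occurrences are better.
            key.append(counts[entry])
    return tuple(key)


def preferred(a, b):
    """Return the better of two analyses (each a list of OT marks)."""
    return min(a, b, key=rank_key)


print(preferred(["Mark2"], ["Mark3"]))
print(preferred([], ["Mark2"]))
print(preferred(["Mark2"], ["Mark2", "Mark2"]))
print(preferred(["Mark1"], []))
print(preferred(["Mark1", "Mark1"], ["Mark1"]))
```

Tuple comparison stops at the first position where two keys differ, which mirrors "find the most important mark where the analyses differ". Marks that are not in the order never enter the key, so they stay neutral.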

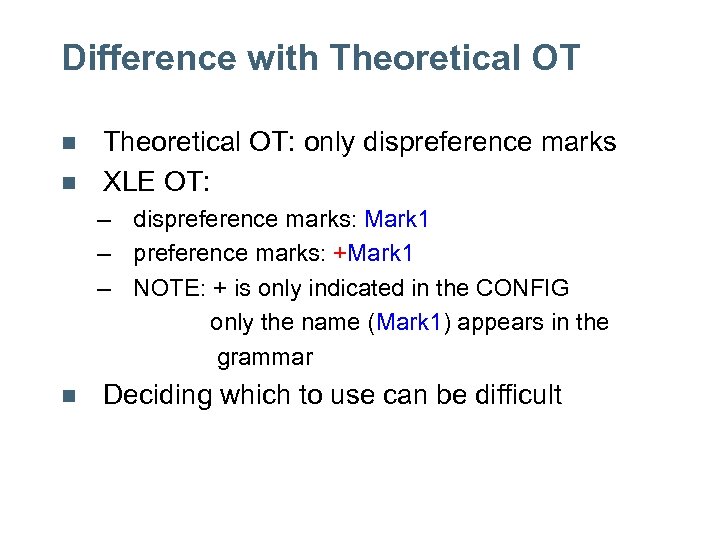

Difference with Theoretical OT

- Theoretical OT: only dispreference marks
- XLE OT:
  - dispreference marks: Mark1
  - preference marks: +Mark1
  - NOTE: + is only indicated in the CONFIG; only the name (Mark1) appears in the grammar
- Deciding which to use can be difficult

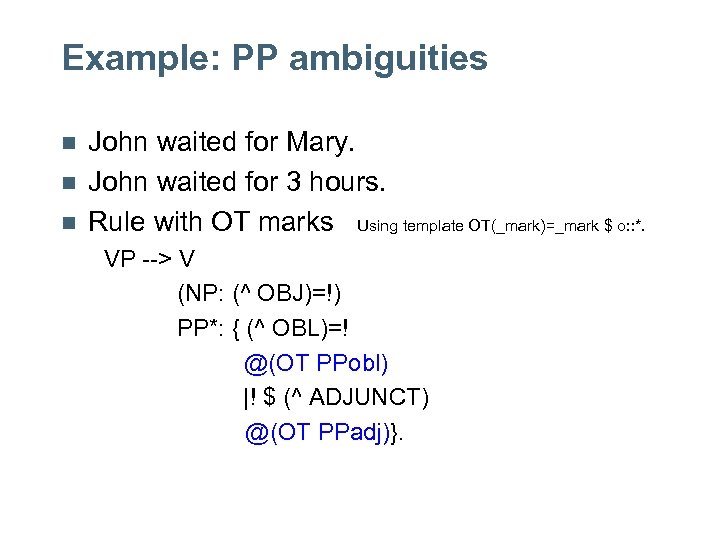

Example: PP ambiguities

- John waited for Mary.
- John waited for 3 hours.
- Rule with OT marks, using the template OT(_mark) = _mark $ o::*.
  - VP --> V (NP: (^ OBJ)=!) PP*: { (^ OBL)=! @(OT PPobl) | ! $ (^ ADJUNCT) @(OT PPadj) }.


Basic Structures

- John waited for Mary (ADJUNCT analysis)
  - f-str: [ PRED 'wait<SUBJ>' SUBJ [ PRED 'John' ] ADJ { [ PRED 'for<OBJ>' OBJ [ PRED 'Mary' ] ] } ]
  - o-str: { PPadj }
- John waited for Mary (OBL analysis)
  - f-str: [ PRED 'wait<SUBJ OBL>' SUBJ [ PRED 'John' ] OBL [ PRED 'for<OBJ>' OBJ [ PRED 'Mary' ] ] ]
  - o-str: { PPobl }

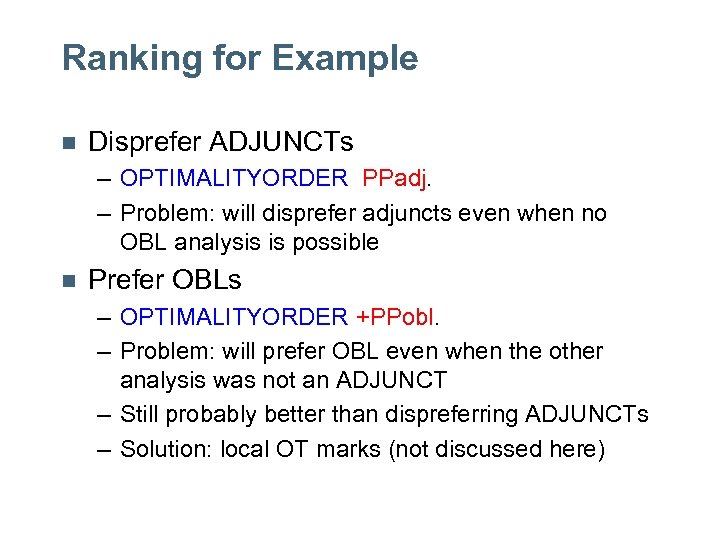

Ranking for Example

- Disprefer ADJUNCTs
  - OPTIMALITYORDER PPadj.
  - Problem: will disprefer adjuncts even when no OBL analysis is possible
- Prefer OBLs
  - OPTIMALITYORDER +PPobl.
  - Problem: will prefer OBL even when the other analysis was not an ADJUNCT
  - Still probably better than dispreferring ADJUNCTs
  - Solution: local OT marks (not discussed here)

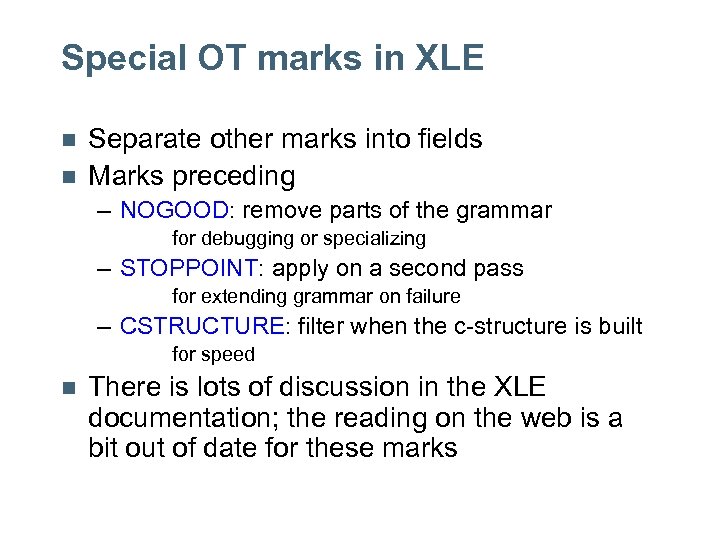

Special OT marks in XLE

- Separate other marks into fields
- Marks preceding
  - NOGOOD: remove parts of the grammar for debugging or specializing
  - STOPPOINT: apply on a second pass for extending grammar on failure
  - CSTRUCTURE: filter when the c-structure is built for speed
- There is lots of discussion in the XLE documentation; the reading on the web is a bit out of date for these marks

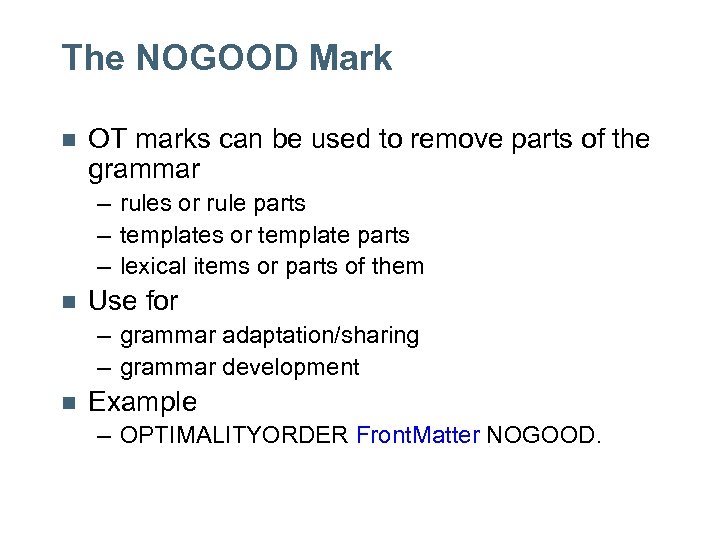

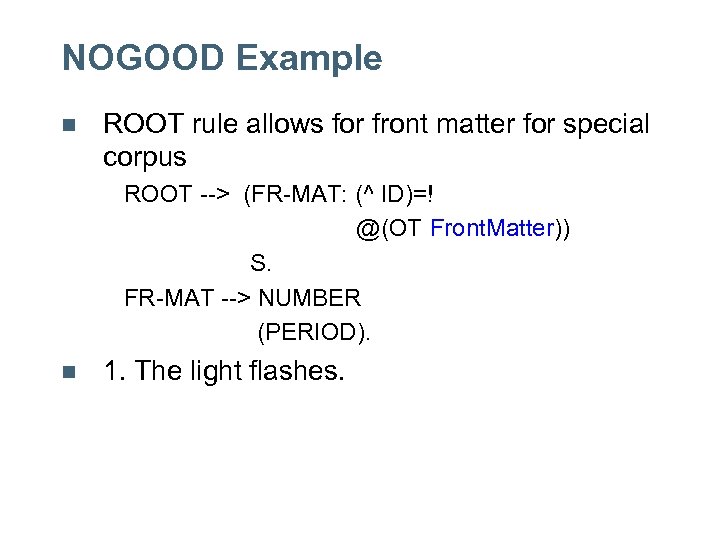

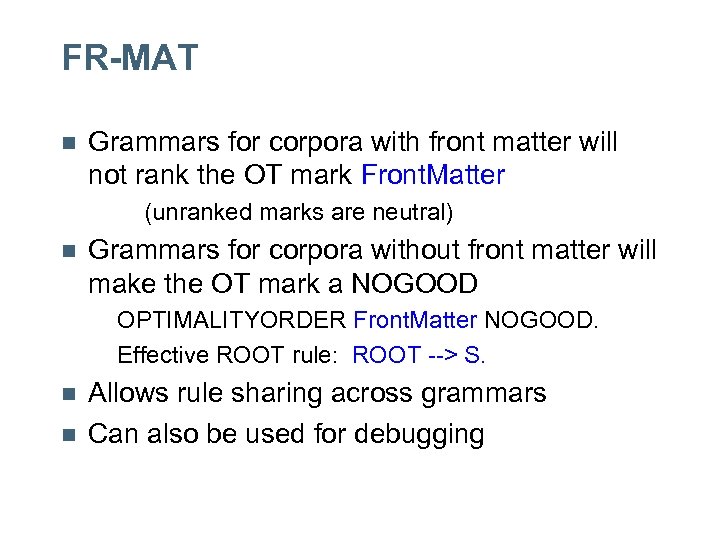

The NOGOOD Mark

- OT marks can be used to remove parts of the grammar
  - rules or rule parts
  - templates or template parts
  - lexical items or parts of them
- Use for
  - grammar adaptation/sharing
  - grammar development
- Example
  - OPTIMALITYORDER FrontMatter NOGOOD.

NOGOOD Example

- ROOT rule allows for front matter for special corpus
  - ROOT --> (FR-MAT: (^ ID)=! @(OT FrontMatter)) S.
  - FR-MAT --> NUMBER (PERIOD).
- Example: 1. The light flashes.

FR-MAT

- Grammars for corpora with front matter will not rank the OT mark FrontMatter (unranked marks are neutral)
- Grammars for corpora without front matter will make the OT mark a NOGOOD
  - OPTIMALITYORDER FrontMatter NOGOOD.
  - Effective ROOT rule: ROOT --> S.
- Allows rule sharing across grammars
- Can also be used for debugging

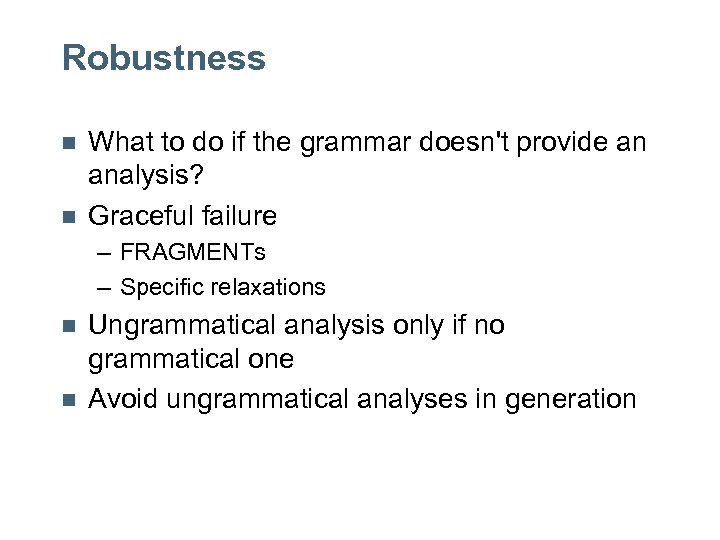

Robustness

- What to do if the grammar doesn't provide an analysis?
- Graceful failure
  - FRAGMENTs
  - Specific relaxations
- Ungrammatical analysis only if no grammatical one
- Avoid ungrammatical analyses in generation

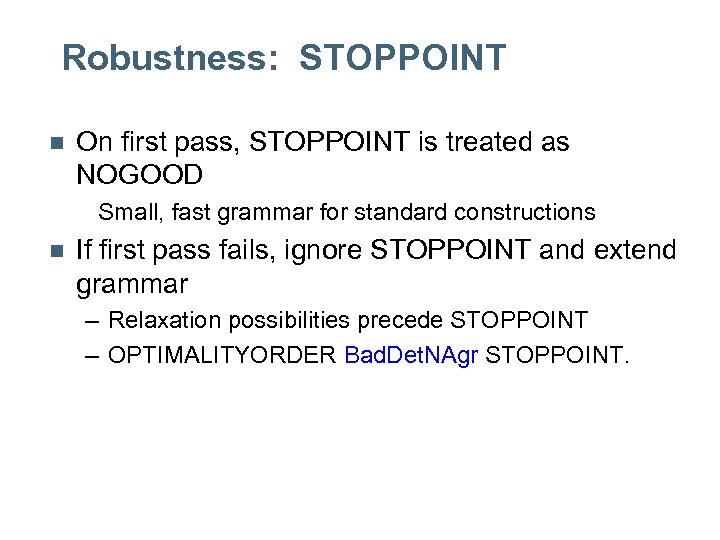

Robustness: STOPPOINT

- On first pass, STOPPOINT is treated as NOGOOD
  - Small, fast grammar for standard constructions
- If first pass fails, ignore STOPPOINT and extend grammar
  - Relaxation possibilities precede STOPPOINT
  - OPTIMALITYORDER BadDetNAgr STOPPOINT.

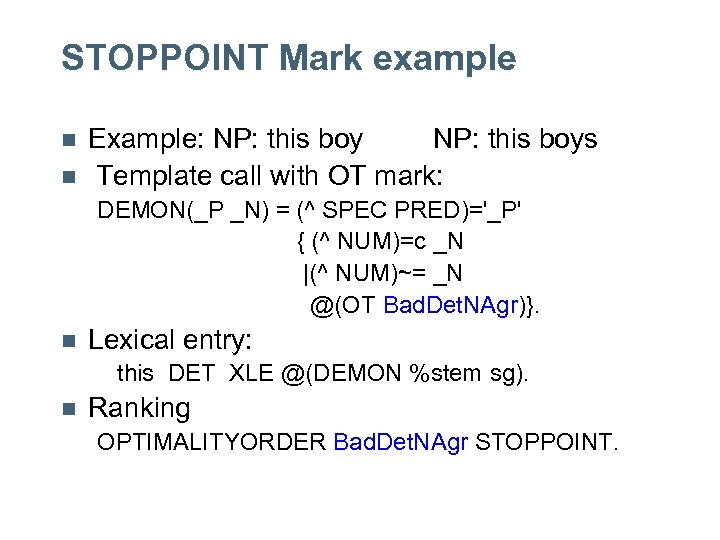

STOPPOINT Mark example

- Example: NP: this boys
- Template call with OT mark:
  - DEMON(_P _N) = (^ SPEC PRED)='_P' { (^ NUM)=c _N | (^ NUM)~= _N @(OT BadDetNAgr) }.
- Lexical entry:
  - this DET XLE @(DEMON %stem sg).
- Ranking
  - OPTIMALITYORDER BadDetNAgr STOPPOINT.

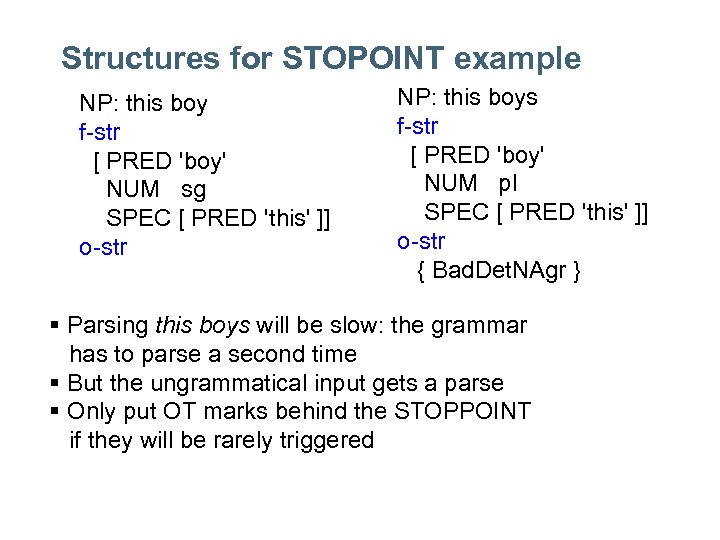

Structures for STOPPOINT example

- NP: this boy
  - f-str: [ PRED 'boy' NUM sg SPEC [ PRED 'this' ] ]
  - o-str: (empty)
- NP: this boys
  - f-str: [ PRED 'boy' NUM pl SPEC [ PRED 'this' ] ]
  - o-str: { BadDetNAgr }
- Parsing "this boys" will be slow: the grammar has to parse a second time
- But the ungrammatical input gets a parse
- Only put OT marks behind the STOPPOINT if they will be rarely triggered
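The two-pass behaviour can be sketched as a filter over candidate analyses. This is a toy model: real XLE re-parses with an extended grammar rather than filtering a precomputed list, and the representation of candidates as (label, marks) pairs and the name `two_pass` are assumptions of the sketch. The mark name BadDetNAgr follows the example on the slide.

```python
# Marks listed before STOPPOINT in the (toy) optimality order.
BEHIND_STOPPOINT = {"BadDetNAgr"}


def two_pass(candidates, behind_stoppoint=BEHIND_STOPPOINT):
    """Return (pass_number, analyses) for a list of (label, marks) pairs.

    Pass 1 treats every mark behind STOPPOINT as NOGOOD; pass 2 runs only
    if pass 1 yields nothing and then admits those marks.
    """
    first = [c for c in candidates if not set(c[1]) & behind_stoppoint]
    if first:
        return 1, first
    return 2, list(candidates)


this_boy = [("this boy, NUM sg", [])]
this_boys = [("this boys, NUM pl", ["BadDetNAgr"])]

print(two_pass(this_boy))
print(two_pass(this_boys))
```

The grammatical NP is answered on the first pass, while the agreement mismatch is only reached on the second, which is where the extra parsing cost comes from.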

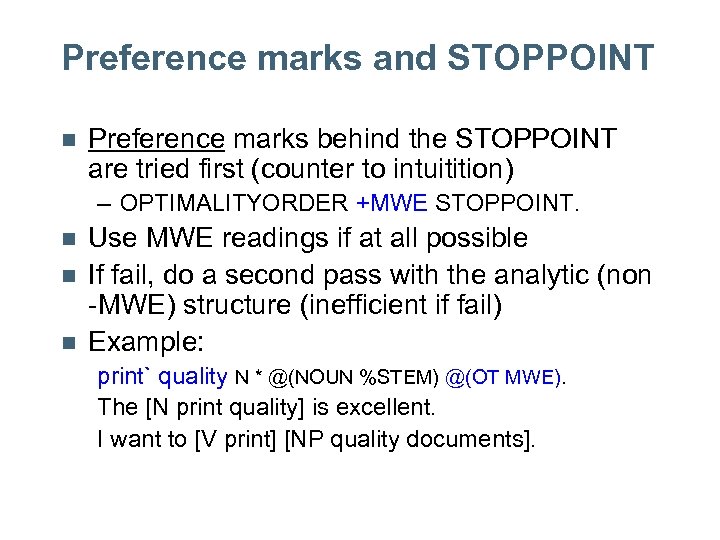

Preference marks and STOPPOINT

- Preference marks behind the STOPPOINT are tried first (counter to intuition)
  - OPTIMALITYORDER +MWE STOPPOINT.
- Use MWE readings if at all possible
- If fail, do a second pass with the analytic (non-MWE) structure (inefficient if fail)
- Example: print` quality N * @(NOUN %STEM) @(OT MWE).
  - The [N print quality] is excellent.
  - I want to [V print] [NP quality documents].

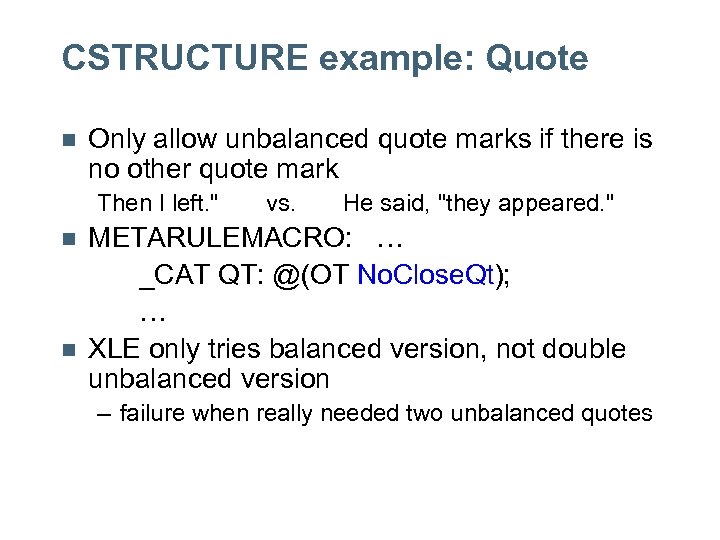

CSTRUCTURE Marks

- Apply marks before f-structure constraints are processed
  - OPTIMALITYORDER NoCloseQuote Guessed CSTRUCTURE.
- Improve performance by filtering early
- May lose some analyses
  - coverage/efficiency tradeoff

CSTRUCTURE example: Guessed

- Only use guessed form if another form is not found in the morphology/lexicon
  - OPTIMALITYORDER Guessed CSTRUCTURE.
- Trade-off: lose some parses, but much faster
  - The foobar is good. (no entry for foobar ==> parse with guessed N)
  - The audio is good. (audio: only A in morphology ==> no parse)
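The effect of filtering on Guessed at c-structure time can be sketched with a toy lookup. The lexicon contents, the category labels, and the function name `categories` are all assumptions made for illustration; XLE's morphology interface is not modeled.

```python
# Toy morphology/lexicon: surface form -> known categories.
LEXICON = {
    "the": ["D"],
    "audio": ["A"],
    "is": ["V"],
    "good": ["A"],
}


def categories(token, lexicon=LEXICON):
    """Return (category, marks) pairs for a token.

    The guessed noun reading is offered only when the lexicon has no
    entry at all, mimicking a Guessed mark ranked before CSTRUCTURE.
    """
    known = lexicon.get(token)
    if known:
        return [(cat, []) for cat in known]
    return [("N", ["Guessed"])]


print(categories("foobar"))
print(categories("audio"))
```

Because "audio" is found as an adjective, the guessed noun reading is never built, so a sentence that needs it as a noun gets no parse. That is the coverage cost paid for the speedup.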

CSTRUCTURE example: Quote

- Only allow unbalanced quote marks if there is no other quote mark
  - Then I left." vs. He said, "they appeared."
- METARULEMACRO: … _CAT QT: @(OT NoCloseQt); …
- XLE only tries balanced version, not double unbalanced version
  - failure when really needed two unbalanced quotes

Combining the OT marks

- All the types of OT marks can be used in one grammar
  - the ordering of NOGOOD, CSTRUCTURE, and STOPPOINT is important
- Example
  - OPTIMALITYORDER Verbmobil NOGOOD Guessed CSTRUCTURE +MWE Fragment STOPPOINT RareForm StrandedP +Obl.

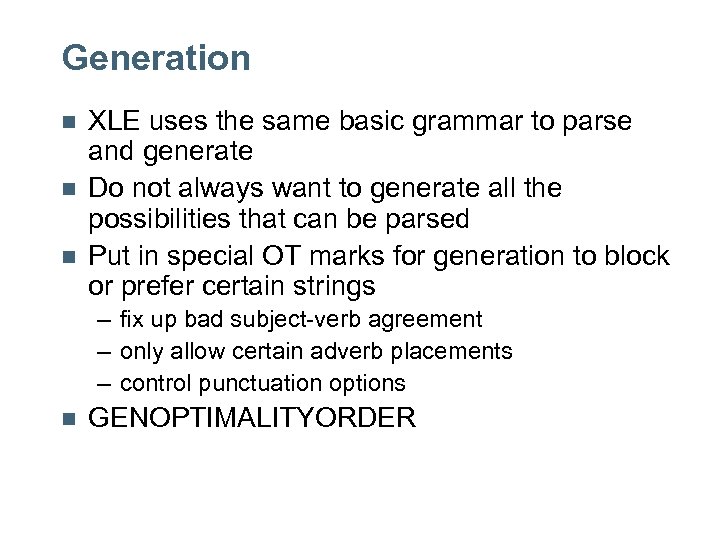

Other Features

- Grouping: have marks treated as being of equal importance
  - OPTIMALITYORDER (Paren Appositive) Adjunct.
- Ungrammatical markup: have XLE report analyses with this mark with a *
  - these are treated like any dispreference mark for determining the optimal analyses
  - OPTIMALITYORDER *NoDetAgr STOPPOINT.

Generation

- XLE uses the same basic grammar to parse and generate
- Do not always want to generate all the possibilities that can be parsed
- Put in special OT marks for generation to block or prefer certain strings
  - fix up bad subject-verb agreement
  - only allow certain adverb placements
  - control punctuation options
- GENOPTIMALITYORDER

OT Marks: Main points

- Ambiguity: broad coverage results in ambiguity
  - OT marks allow preferences
- Robustness: want fall back parses only when regular parses fail
  - OT marks allow multipass grammar
- XLE provides for complex orderings of OT marks
  - NOGOOD, CSTRUCTURE, STOPPOINT
  - preference, dispreference, ungrammatical
  - see the XLE documentation for details

FRAGMENT grammar

- What to do when the grammar does not get a parse
  - always want some type of output
  - want the output to be maximally useful
- Why might it fail:
  - construction not covered yet
  - "bad" input
  - took too long (XLE parsing parameters)

Grammar engineering approach

- First try to get a complete parse
- If fail, build up chunks that get complete parses (c-str and f-str)
- Have a fall back for things without even chunk parses
- Link these chunks and fall backs together in a single f-structure

Basic idea

- XLE has a REPARSECAT which it tries if there is no complete parse
- Grammar writer specifies what category the possible chunks are
- OT marks are used to
  - build the fewest chunks possible
  - disprefer using the fall back over the chunks
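The two preferences can be sketched as a small dynamic program over token positions. This is only an illustration of the idea, not how XLE computes fragments. It assumes that the set of parsable spans is already known and that the preferences are ranked lexicographically: fewest fragments first, then fewest token fall-backs. The input sentence and the name `best_fragments` are hypothetical.

```python
def best_fragments(tokens, parsable):
    """Cover `tokens` with chunks, minimising (fragments, token fall-backs).

    parsable: dict mapping (start, end) spans to a category such as "NP"
    or "S"; any single token may also be consumed as a TOKEN fall-back.
    """
    n = len(tokens)
    # best[i] = (cost, chunks) for the suffix tokens[i:]
    best = [None] * (n + 1)
    best[n] = ((0, 0), [])
    for i in range(n - 1, -1, -1):
        options = []
        for j in range(i + 1, n + 1):
            (frags, fallbacks), rest = best[j]
            if (i, j) in parsable:
                chunk = (parsable[(i, j)], tokens[i:j])
                options.append(((frags + 1, fallbacks), [chunk] + rest))
            if j == i + 1:
                # The fall-back counts as a fragment and as a fall-back.
                chunk = ("TOKEN", tokens[i:j])
                options.append(((frags + 1, fallbacks + 1), [chunk] + rest))
        best[i] = min(options, key=lambda o: o[0])
    return best[0][1]


tokens = ["of", "the", "dog", "appears"]  # hypothetical input
spans = {(1, 3): "NP", (1, 4): "S"}       # spans assumed to parse
print(best_fragments(tokens, spans))
```

Here the stray first word falls back to a TOKEN and the rest is covered by one S chunk, rather than an NP chunk plus another fall-back.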

Sample output

- Input: the dog appears.
- Split into:
  - "token" the
  - sentence "the dog appears"
  - ignore the period

C-structure

F-structure

How to get this

- Rule:
  - FRAGMENTS --> { NP: (^ FIRST)=! @(OT-MARK Fragment) | S: (^ FIRST)=! @(OT-MARK Fragment) | TOKEN: (^ FIRST)=! @(OT-MARK Fragment) } (FRAGMENTS: (^ REST)=!).
- Lexicon:
  - -token TOKEN * (^ TOKEN)=%stem @(OT-MARK Token).


Why First-Rest?

- FIRST-REST: [ FIRST [ PRED … ] REST [ FIRST [ PRED … ] REST … ] ]
  - Efficient
  - Encodes order
- Possible alternative: set { [ PRED … ] }
  - Not as efficient (copying)
  - Even less efficient if mark scope facts
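The order-preserving property of the FIRST/REST encoding can be shown with nested dictionaries standing in for f-structures. This is a sketch only: the dictionary representation and the function names `to_first_rest` and `from_first_rest` are assumptions, and the chunk contents are placeholders.

```python
def to_first_rest(chunks):
    """Nest a list of chunk f-structures as FIRST/REST attribute pairs."""
    structure = None
    for chunk in reversed(chunks):
        node = {"FIRST": chunk}
        if structure is not None:
            node["REST"] = structure
        structure = node
    return structure


def from_first_rest(structure):
    """Recover the chunks in their original left-to-right order."""
    chunks = []
    while structure is not None:
        chunks.append(structure["FIRST"])
        structure = structure.get("REST")
    return chunks


chunks = [{"TOKEN": "the"}, {"PRED": "appear<SUBJ>"}]
nested = to_first_rest(chunks)
print(nested)
print(from_first_rest(nested) == chunks)
```

A plain set would lose the left-to-right order of the chunks, whereas walking the REST path always returns them in string order.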

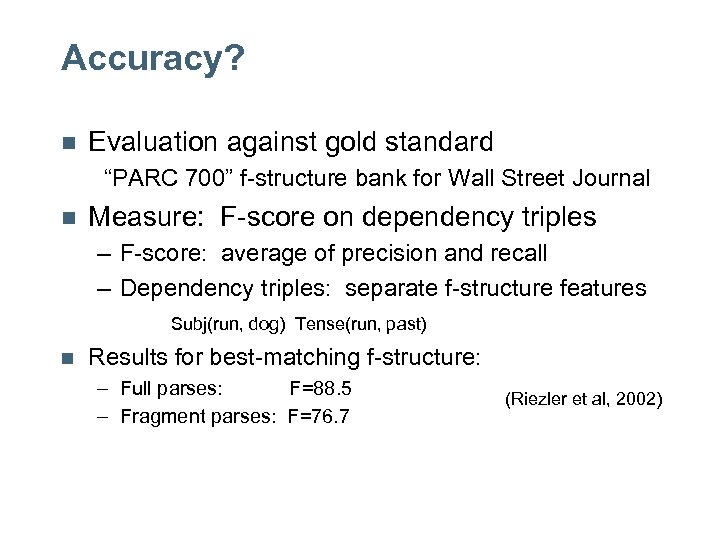

Accuracy?

- Evaluation against gold standard
  - “PARC 700” f-structure bank for Wall Street Journal
- Measure: F-score on dependency triples
  - F-score: harmonic mean of precision and recall
  - Dependency triples: separate f-structure features, e.g. Subj(run, dog), Tense(run, past)
- Results for best-matching f-structure (Riezler et al., 2002):
  - Full parses: F=88.5
  - Fragment parses: F=76.7
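A minimal sketch of the measure, assuming the usual balanced F-score (harmonic mean of precision and recall) over sets of triples. The triples below are toy data, not taken from the PARC 700, and the function name `f_score` is made up for the example.

```python
def f_score(gold, predicted):
    """Precision, recall and F-score over sets of dependency triples."""
    gold, predicted = set(gold), set(predicted)
    matched = len(gold & predicted)
    precision = matched / len(predicted) if predicted else 0.0
    recall = matched / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f = 2 * precision * recall / (precision + recall)
    return precision, recall, f


# Toy triples in (relation, head, dependent-or-value) form.
gold = {("subj", "run", "dog"), ("tense", "run", "past")}
predicted = {("subj", "run", "dog"), ("tense", "run", "pres")}
print(f_score(gold, predicted))
```

Scoring individual triples rather than whole f-structures gives partial credit, which is what makes fragment parses measurable at all.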

Fragments summary

- XLE has a chunking strategy for when the grammar does not provide a full analysis
- Each chunk gets full c-str and f-str
- The grammar writer defines the chunks based on what will be best for that grammar and application
- Quality
  - Fragments have reasonable but degraded f-scores
  - Usefulness in applications is being tested
