- Number of slides: 91

LEXICON AND LEXICAL SEMANTICS WORDNET 2004/05 ANLE 1

Beyond part of speech tagging: lexical information and NL applications
• NLE applications often need to know the MEANING of words at least, or (e.g., in the case of spoken dialogue systems) of whole utterances
• Many word-strings express apparently unrelated senses / meanings, even after their POS has been determined
  • Well-known examples: BANK, SCORE, RIGHT, SET, STOCK
  • Homonymy may affect the results of applications such as IR and machine translation
• The opposite case of different words with the same meaning (SYNONYMY) is also important
  • E.g., for IR systems (synonym expansion)
• HOMOGRAPHY may affect speech synthesis

What’s in a lexicon
• A lexicon is a repository of lexical knowledge
• We have already seen an example of the simplest form of lexicon: a list of words, specifying their POS tag (SYNTACTIC information only)
• But even for English – let alone languages with a more complex morphology – it makes sense to split WORD FORMS from LEXICAL ENTRIES or LEXEMES:
  LEXEME BANK
    POS: N
  WORD BANKS
    LEXEME: BANK
    SYN: NUM: PLUR
• And lexical knowledge also includes information about the MEANING of words
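
The split between word forms and lexemes can be sketched as a small data structure. This is only an illustration under stated assumptions: the class names, the `lookup` helper, and the `NUM: SING` feature on "bank" are hypothetical and do not appear on the slide; only the BANK / BANKS entries come from it.

```python
from dataclasses import dataclass, field


@dataclass
class Lexeme:
    """A lexical entry: carries the POS and, eventually, the senses."""
    name: str
    pos: str
    senses: list = field(default_factory=list)  # sense labels, left empty here


@dataclass
class WordForm:
    """A surface string that points at its lexeme and adds inflectional features."""
    form: str
    lexeme: Lexeme
    features: dict = field(default_factory=dict)


# Toy entries mirroring the BANK / BANKS example.
# The singular entry and its feature are an assumption added for contrast.
BANK = Lexeme(name="BANK", pos="N")
WORD_FORMS = {
    "bank": WordForm("bank", BANK, {"NUM": "SING"}),
    "banks": WordForm("banks", BANK, {"NUM": "PLUR"}),
}


def lookup(form):
    """Return (lexeme name, POS, features) for a known word form, else None."""
    entry = WORD_FORMS.get(form.lower())
    if entry is None:
        return None
    return entry.lexeme.name, entry.lexeme.pos, entry.features
```

With this layout, information shared by all forms (POS, senses) is stored once on the lexeme, and each word form only records what distinguishes it.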

Meaning …
• Characterizing the meaning of words is not easy
• Most of the methods considered in these lectures characterize the meaning of a word by stating its relations with other words
• This method, however, doesn’t say much about what the word ACTUALLY means (e.g., what you can do with a car)

Lexical resources for CL: MACHINE READABLE DICTIONARIES
• A traditional DICTIONARY is a database containing information about
  • the PRONUNCIATION of a certain word
  • its possible PARTS of SPEECH
  • its possible SENSES (or MEANINGS)
• In recent years, most dictionaries have appeared in Machine Readable form (MRD)
  • Oxford English Dictionary
  • Collins
  • Longman Dictionary of Contemporary English (LDOCE)

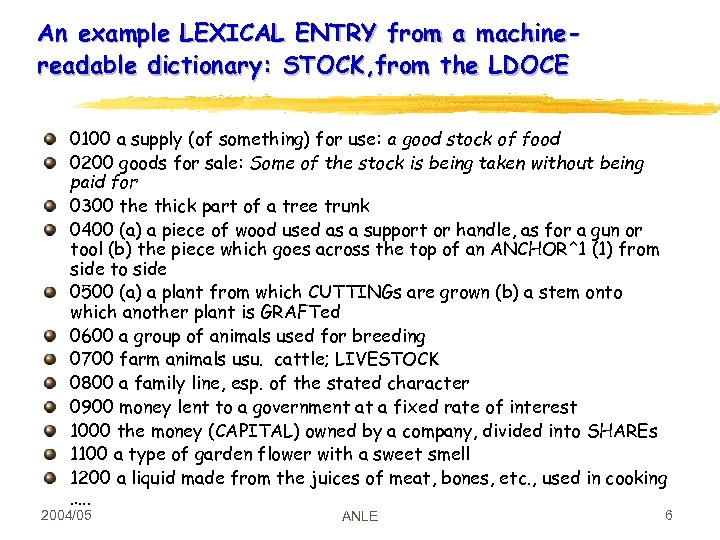

An example LEXICAL ENTRY from a machine-readable dictionary: STOCK, from the LDOCE
0100 a supply (of something) for use: a good stock of food
0200 goods for sale: Some of the stock is being taken without being paid for
0300 the thick part of a tree trunk
0400 (a) a piece of wood used as a support or handle, as for a gun or tool (b) the piece which goes across the top of an ANCHOR^1 (1) from side to side
0500 (a) a plant from which CUTTINGs are grown (b) a stem onto which another plant is GRAFTed
0600 a group of animals used for breeding
0700 farm animals usu. cattle; LIVESTOCK
0800 a family line, esp. of the stated character
0900 money lent to a government at a fixed rate of interest
1000 the money (CAPITAL) owned by a company, divided into SHAREs
1100 a type of garden flower with a sweet smell
1200 a liquid made from the juices of meat, bones, etc., used in cooking
…

Meaning in a dictionary: Homonymy
• Word-strings like STOCK are used to express apparently unrelated senses / meanings, even in contexts in which their part-of-speech has been determined
  • Other well-known examples: BANK, RIGHT, SET, SCALE
• An example of the problems homonymy may cause for IR systems
  • Search for 'West Bank' with Google

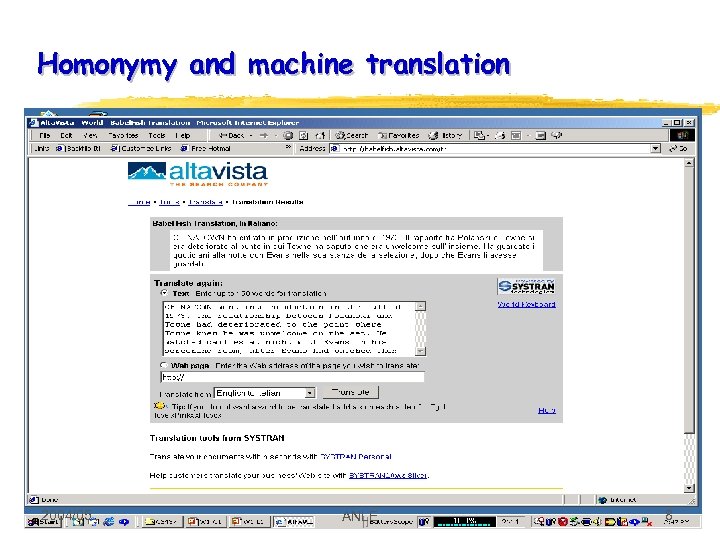

Homonymy and machine translation

Pronunciation: homography, homophony
• HOMOGRAPHS: BASS
  • The expert angler from Dora, Mo was fly-casting for BASS rather than the traditional trout.
  • The curtain rises to the sound of angry dogs baying and ominous BASS chords sounding.
• Problems caused by homography: text to speech
  • Rhetorical
  • AT&T text to speech
• Many spelling errors are caused by HOMOPHONES – distinct lexemes with a single pronunciation
  • its vs. it’s
  • weather vs. whether
  • Examples from the sport page of the Rabbit

Meaning in MRDs, 2: SYNONYMY
• Two words are SYNONYMS if they have the same meaning at least in some contexts
  • E.g., PRICE and FARE; CHEAP and INEXPENSIVE; LAPTOP and NOTEBOOK; HOME and HOUSE
  • I’m looking for a CHEAP FLIGHT / INEXPENSIVE FLIGHT
• From Roget’s thesaurus: OBLITERATION, erasure, cancellation, deletion
• But few words are truly synonymous in ALL contexts:
  • I wanna go HOME / ?? I wanna go HOUSE
  • The flight was CANCELLED / ?? OBLITERATED / ??? DELETED
• Knowing about synonyms may help in IR:
  • NOTEBOOK (get LAPTOPs as well)
  • CHEAP PRICE (get INEXPENSIVE FARE)
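
Synonym expansion for IR can be sketched in a few lines. This is a minimal illustration, not a description of any real IR system: the `SYNONYMS` table and both function names are hypothetical, and the word pairs are the ones listed on the slide.

```python
# Hypothetical synonym table; a real system would draw on a thesaurus.
SYNONYMS = {
    "notebook": {"laptop"},
    "laptop": {"notebook"},
    "cheap": {"inexpensive"},
    "inexpensive": {"cheap"},
    "price": {"fare"},
    "fare": {"price"},
}


def expand_query(query):
    """Return one set of alternatives per query term: the term plus its synonyms."""
    return [{term} | SYNONYMS.get(term, set()) for term in query.lower().split()]


def matches(document, query):
    """A document matches if every query term, or one of its synonyms, occurs in it."""
    words = set(document.lower().split())
    return all(alternatives & words for alternatives in expand_query(query))
```

Because few words are synonymous in all contexts, blind expansion of this kind can also retrieve irrelevant documents; the sketch ignores that problem.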

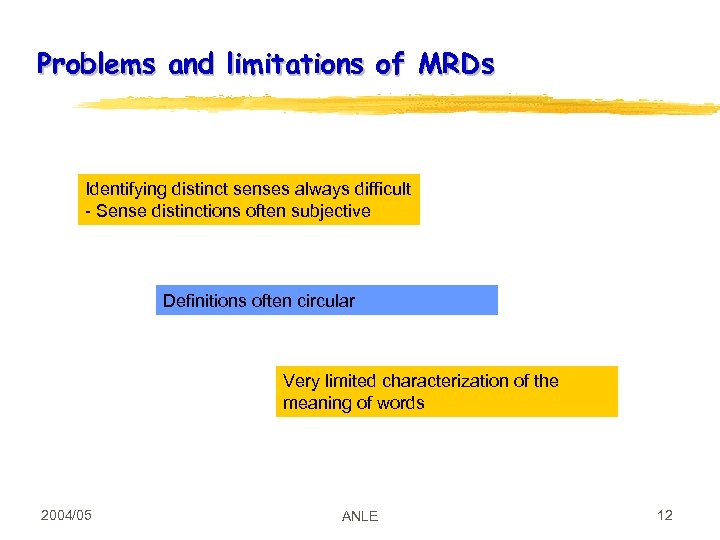

Problems and limitations of MRDs
• Identifying distinct senses is always difficult
  • Sense distinctions are often subjective
• Definitions are often circular
• Very limited characterization of the meaning of words

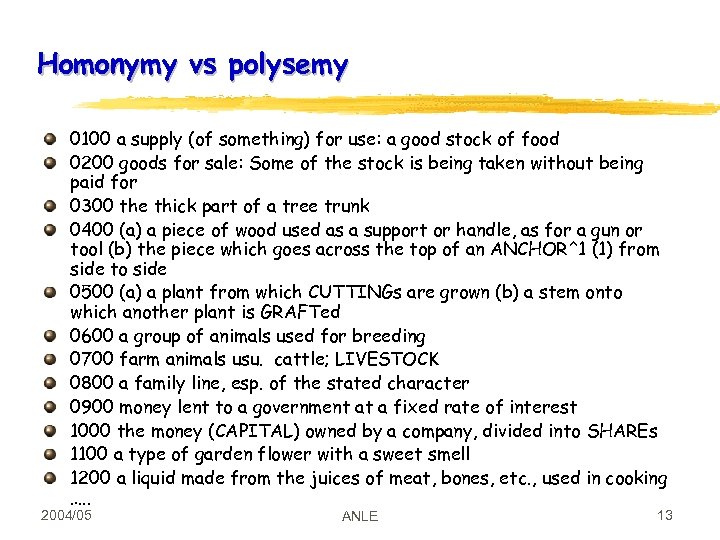

Homonymy vs polysemy
0100 a supply (of something) for use: a good stock of food
0200 goods for sale: Some of the stock is being taken without being paid for
0300 the thick part of a tree trunk
0400 (a) a piece of wood used as a support or handle, as for a gun or tool (b) the piece which goes across the top of an ANCHOR^1 (1) from side to side
0500 (a) a plant from which CUTTINGs are grown (b) a stem onto which another plant is GRAFTed
0600 a group of animals used for breeding
0700 farm animals usu. cattle; LIVESTOCK
0800 a family line, esp. of the stated character
0900 money lent to a government at a fixed rate of interest
1000 the money (CAPITAL) owned by a company, divided into SHAREs
1100 a type of garden flower with a sweet smell
1200 a liquid made from the juices of meat, bones, etc., used in cooking
…

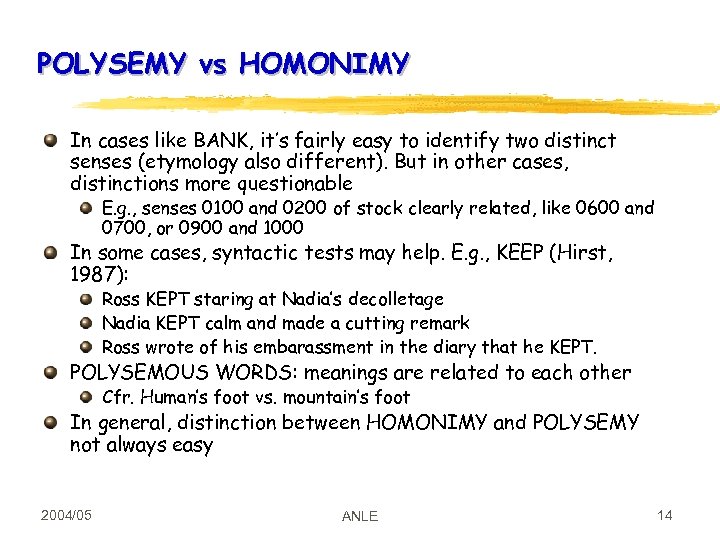

POLYSEMY vs HOMONYMY
• In cases like BANK, it’s fairly easy to identify two distinct senses (the etymology is also different). But in other cases, the distinctions are more questionable
  • E.g., senses 0100 and 0200 of stock are clearly related, like 0600 and 0700, or 0900 and 1000
• In some cases, syntactic tests may help. E.g., KEEP (Hirst, 1987):
  • Ross KEPT staring at Nadia’s decolletage
  • Nadia KEPT calm and made a cutting remark
  • Ross wrote of his embarrassment in the diary that he KEPT.
• POLYSEMOUS WORDS: meanings are related to each other
  • Cf. human’s foot vs. mountain’s foot
• In general, the distinction between HOMONYMY and POLYSEMY is not always easy

Other aspects of lexical meaning not captured by MRDs
• Other semantic relations:
  • HYPONYMY
  • ANTONYMY
• A lot of other information typically considered part of ENCYCLOPEDIAs:
  • Trees grow bark and twigs
  • Adult trees are much taller than human beings

Hyponymy and Hypernymy
• HYPONYMY is the relation between a subclass and a superclass:
  • CAR and VEHICLE
  • DOG and ANIMAL
  • BUNGALOW and HOUSE
• Generally speaking, a hyponymy relation holds between X and Y whenever it is possible to substitute Y for X:
  • That is a X -> That is a Y
  • E.g., That is a CAR -> That is a VEHICLE.
• HYPERNYMY is the opposite relation
• Knowledge about TAXONOMIES is useful to classify web pages
  • E.g., Semantic Web
  • Automatically (e.g., Udo Kruschwitz’s system)
• This information is not generally contained in MRDs
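
The substitution test can be pictured as walking up a taxonomy. The sketch below is a toy under stated assumptions: each word has a single direct hypernym, the dictionary and function names are hypothetical, and the "poodle" entry is added (it is not on the slide) only to show that the relation is transitive.

```python
# Toy taxonomy: each word maps to its direct hypernym (superclass).
HYPERNYM = {
    "car": "vehicle",
    "bungalow": "house",
    "dog": "animal",
    "poodle": "dog",  # extra entry, not from the slide, to show transitivity
}


def hypernym_chain(word):
    """Walk upward from `word`, collecting every ancestor in order."""
    chain = []
    while word in HYPERNYM:
        word = HYPERNYM[word]
        chain.append(word)
    return chain


def is_hyponym_of(x, y):
    """True if 'That is a X' licenses 'That is a Y' in this taxonomy."""
    return y in hypernym_chain(x)
```

Hypernymy is then simply the same check with the arguments swapped.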

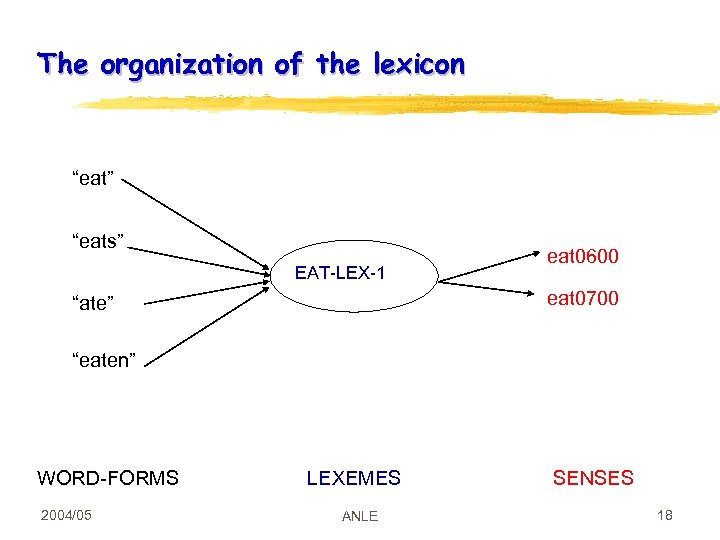

The organization of the lexicon
• WORD-FORMS: “eat”, “eats”, “ate”, “eaten”
• LEXEMES: EAT-LEX-1
• SENSES: eat0600, eat0700

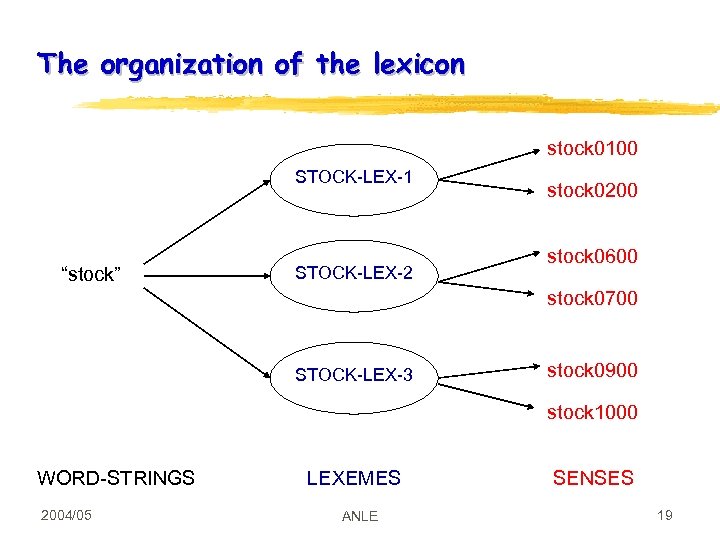

The organization of the lexicon
• WORD-STRINGS: “stock”
• LEXEMES and their SENSES:
  • STOCK-LEX-1: stock0100, stock0200
  • STOCK-LEX-2: stock0600, stock0700
  • STOCK-LEX-3: stock0900, stock1000

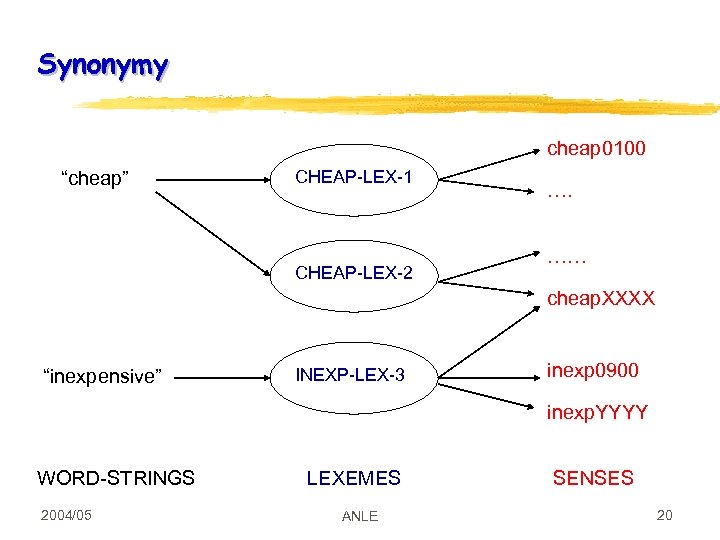

Synonymy
• WORD-STRINGS: “cheap”, “inexpensive”
• LEXEMES: CHEAP-LEX-1, CHEAP-LEX-2, …, INEXP-LEX-3
• SENSES: cheap0100, …, cheapXXXX, inexp0900, inexpYYYY

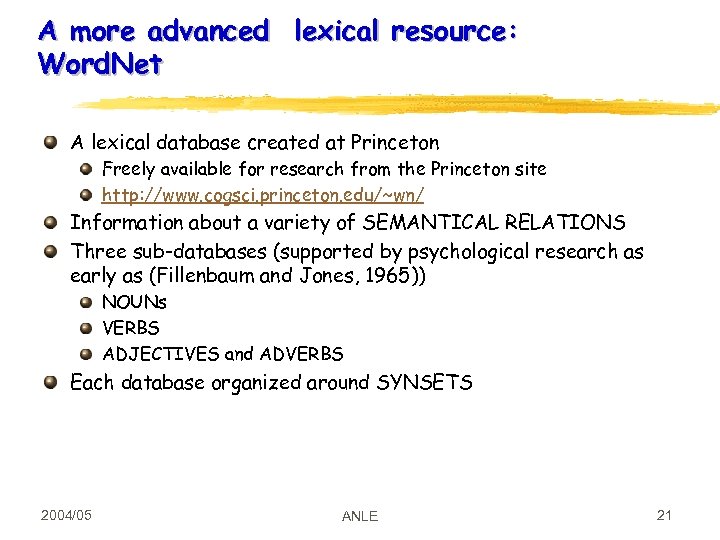

A more advanced lexical resource: WordNet
• A lexical database created at Princeton
• Freely available for research from the Princeton site: http://www.cogsci.princeton.edu/~wn/
• Information about a variety of SEMANTIC RELATIONS
• Three sub-databases (supported by psychological research as early as (Fillenbaum and Jones, 1965))
  • NOUNS
  • VERBS
  • ADJECTIVES and ADVERBS
• Each database is organized around SYNSETS

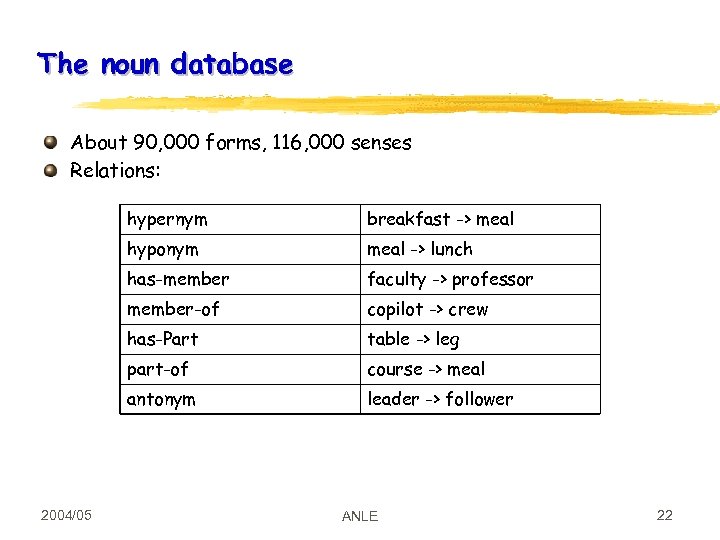

The noun database
• About 90,000 forms, 116,000 senses
• Relations:
  • hypernym: breakfast -> meal
  • hyponym: meal -> lunch
  • has-member: faculty -> professor
  • member-of: copilot -> crew
  • has-part: table -> leg
  • part-of: course -> meal
  • antonym: leader -> follower

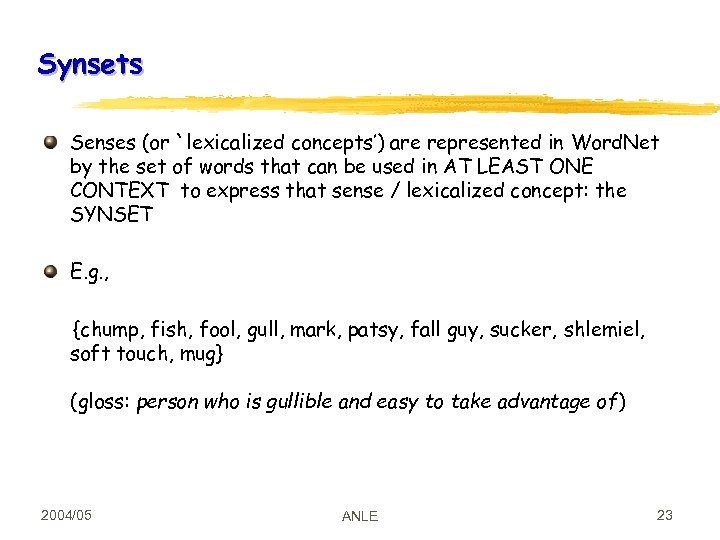

Synsets
• Senses (or ‘lexicalized concepts’) are represented in WordNet by the set of words that can be used in AT LEAST ONE CONTEXT to express that sense / lexicalized concept: the SYNSET
• E.g., {chump, fish, fool, gull, mark, patsy, fall guy, sucker, shlemiel, soft touch, mug} (gloss: person who is gullible and easy to take advantage of)

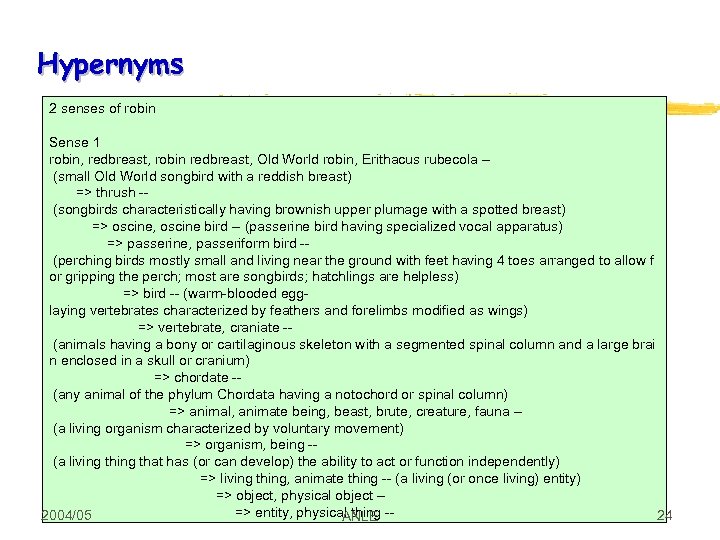

Hypernyms
2 senses of robin
Sense 1
robin, redbreast, robin redbreast, Old World robin, Erithacus rubecola -- (small Old World songbird with a reddish breast)
  => thrush -- (songbirds characteristically having brownish upper plumage with a spotted breast)
    => oscine, oscine bird -- (passerine bird having specialized vocal apparatus)
      => passerine, passeriform bird -- (perching birds mostly small and living near the ground with feet having 4 toes arranged to allow for gripping the perch; most are songbirds; hatchlings are helpless)
        => bird -- (warm-blooded egg-laying vertebrates characterized by feathers and forelimbs modified as wings)
          => vertebrate, craniate -- (animals having a bony or cartilaginous skeleton with a segmented spinal column and a large brain enclosed in a skull or cranium)
            => chordate -- (any animal of the phylum Chordata having a notochord or spinal column)
              => animal, animate being, beast, brute, creature, fauna -- (a living organism characterized by voluntary movement)
                => organism, being -- (a living thing that has (or can develop) the ability to act or function independently)
                  => living thing, animate thing -- (a living (or once living) entity)
                    => object, physical object
                      => entity, physical thing

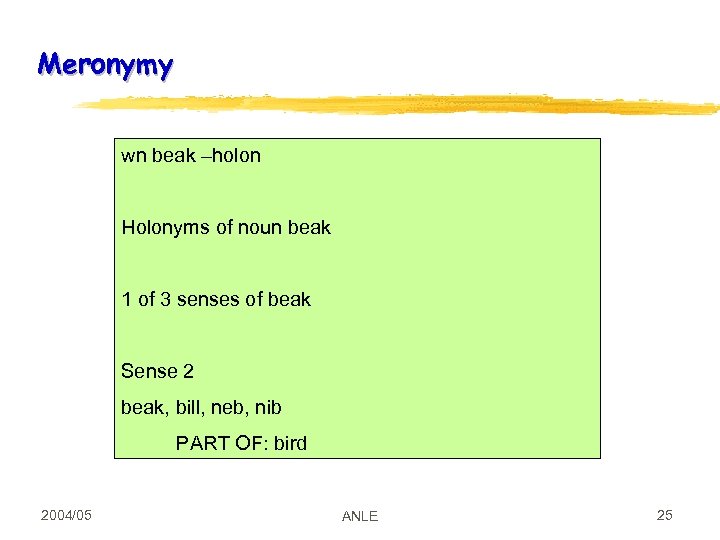

Meronymy
wn beak -holon
Holonyms of noun beak
1 of 3 senses of beak
Sense 2
beak, bill, neb, nib
  PART OF: bird

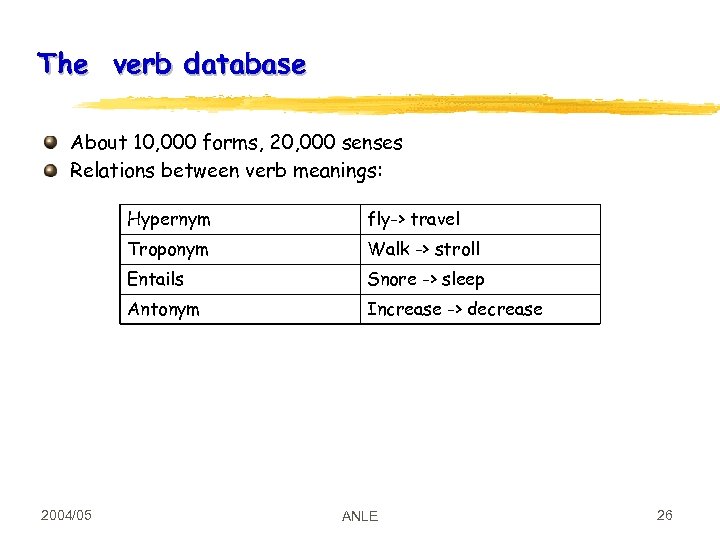

The verb database
• About 10,000 forms, 20,000 senses
• Relations between verb meanings:
  • hypernym: fly -> travel
  • troponym: walk -> stroll
  • entails: snore -> sleep
  • antonym: increase -> decrease

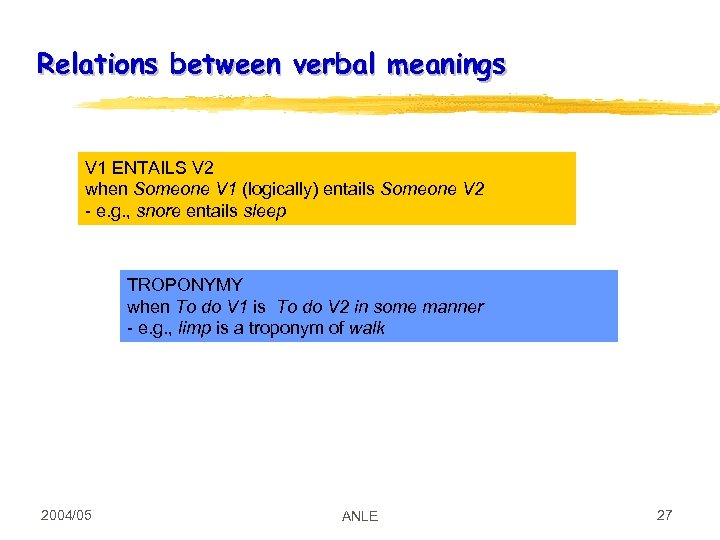

Relations between verbal meanings
• V1 ENTAILS V2 when “Someone V1” (logically) entails “Someone V2”
  • e.g., snore entails sleep
• TROPONYMY holds when “To do V1” is “To do V2 in some manner”
  • e.g., limp is a troponym of walk

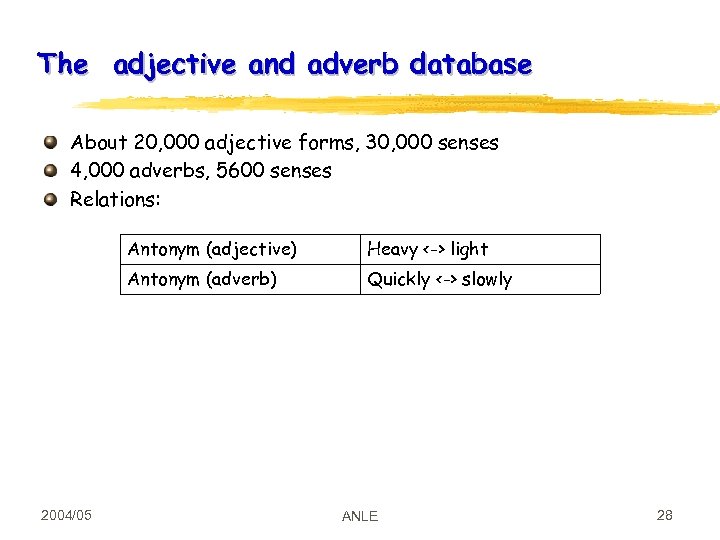

The adjective and adverb database
• About 20,000 adjective forms, 30,000 senses
• 4,000 adverbs, 5,600 senses
• Relations:
  • antonym (adjective): heavy <-> light
  • antonym (adverb): quickly <-> slowly

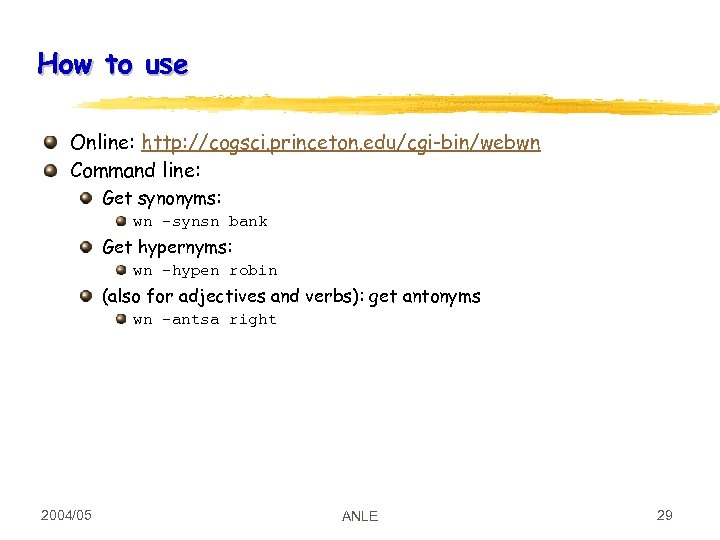

How to use
• Online: http://cogsci.princeton.edu/cgi-bin/webwn
• Command line:
  • Get synonyms: wn bank -synsn
  • Get hypernyms: wn robin -hypen (also for adjectives and verbs)
  • Get antonyms: wn right -antsa

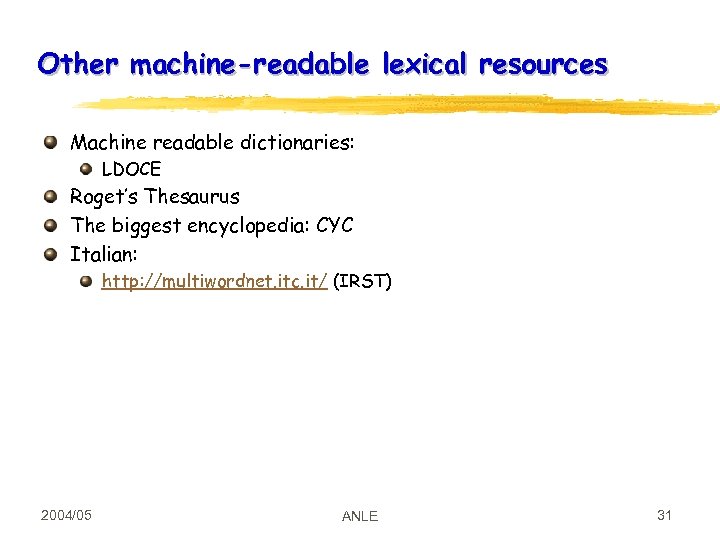

Other machine-readable lexical resources
• Machine readable dictionaries:
  • LDOCE
  • Roget’s Thesaurus
• The biggest encyclopedia: CYC
• Italian: http://multiwordnet.itc.it/ (IRST)

Readings
• Jurafsky and Martin, chapter 16
• WordNet online manuals
• C. Fellbaum (ed), WordNet: An Electronic Lexical Database, The MIT Press

WORD SENSE DISAMBIGUATION

Identifying the sense of a word in its context
• In most of NLE, this is viewed as a TAGGING task
  • In many respects, a similar task to that of POS tagging
  • This is only a simplification!
  • But there is less agreement on what the senses are, so the UPPER BOUND is lower
• Main methods we’ll discuss:
  • Frequency-based disambiguation
  • Selectional restrictions
  • Dictionary-based
  • Statistical methods: Naïve Bayes

Psychological research on word-sense disambiguation
• Lexical access: the COHORT model (Marslen-Wilson and Welsh, 1978)
  • All lexical entries are accessed in parallel
• Frequency effects: common words such as DOOR are responded to more quickly than uncommon words such as CASK (Forster and Chambers, 1973)
• Context effects: words are identified more quickly in context than out of context (Meyer and Schvaneveldt, 1971)
  • bread and butter

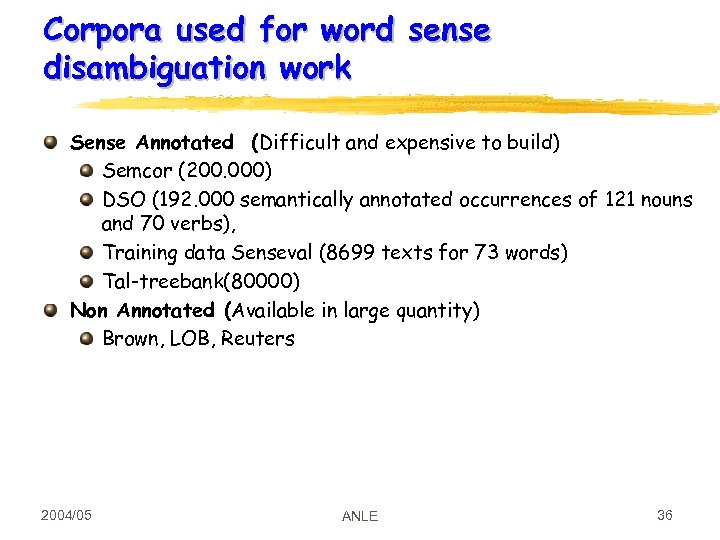

Corpora used for word sense disambiguation work
• Sense annotated (difficult and expensive to build)
  • Semcor (200,000)
  • DSO (192,000 semantically annotated occurrences of 121 nouns and 70 verbs)
  • Senseval training data (8,699 texts for 73 words)
  • Tal-treebank (80,000)
• Non-annotated (available in large quantity)
  • Brown, LOB, Reuters

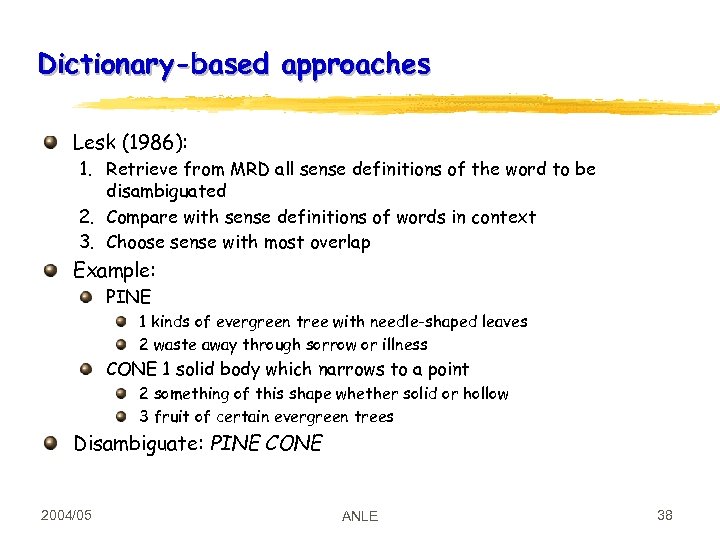

Dictionary-based approaches
Lesk (1986):
1. Retrieve from the MRD all sense definitions of the word to be disambiguated
2. Compare them with the sense definitions of the words in context
3. Choose the sense with the most overlap
Example:
PINE
  1 kinds of evergreen tree with needle-shaped leaves
  2 waste away through sorrow or illness
CONE
  1 solid body which narrows to a point
  2 something of this shape whether solid or hollow
  3 fruit of certain evergreen trees
Disambiguate: PINE CONE
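
The three steps can be sketched on the PINE CONE example. This is a simplified illustration, not Lesk's original implementation: the stopword list and function names are assumptions, overlap is counted on exact word matches with no stemming (so "tree" and "trees" do not match), and ties go to the first sense listed.

```python
# Small stopword list chosen by hand for this example (an assumption).
STOPWORDS = {"of", "with", "or", "a", "to", "which", "this", "through", "away", "the"}

# Sense definitions copied from the example in the text.
DEFINITIONS = {
    "pine": {
        1: "kinds of evergreen tree with needle-shaped leaves",
        2: "waste away through sorrow or illness",
    },
    "cone": {
        1: "solid body which narrows to a point",
        2: "something of this shape whether solid or hollow",
        3: "fruit of certain evergreen trees",
    },
}


def content_words(text):
    """Lower-case, split on whitespace, and drop stopwords."""
    return {w for w in text.lower().split() if w not in STOPWORDS}


def lesk(target, context_words):
    """Return (best sense, overlap per sense) for `target` given its context."""
    # Step 2: pool the definition words of every sense of every context word.
    context_vocab = set()
    for word in context_words:
        for definition in DEFINITIONS.get(word, {}).values():
            context_vocab |= content_words(definition)
    # Steps 1 and 3: score each sense of the target by its overlap with the pool.
    overlaps = {
        sense: len(content_words(definition) & context_vocab)
        for sense, definition in DEFINITIONS[target].items()
    }
    return max(overlaps, key=overlaps.get), overlaps
```

Here `lesk("pine", ["cone"])` selects sense 1 of PINE and `lesk("cone", ["pine"])` selects sense 3 of CONE, in both cases because of the single shared word "evergreen".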

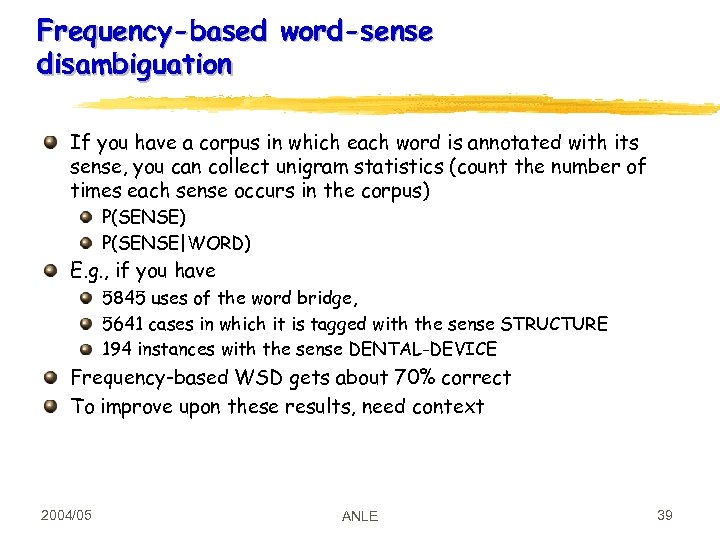

Frequency-based word-sense disambiguation
• If you have a corpus in which each word is annotated with its sense, you can collect unigram statistics (count the number of times each sense occurs in the corpus)
  • P(SENSE|WORD)
• E.g., if you have
  • 5845 uses of the word bridge,
  • 5641 cases in which it is tagged with the sense STRUCTURE
  • 194 instances with the sense DENTAL-DEVICE
• Frequency-based WSD gets about 70% correct
• To improve upon these results, we need context
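
The bridge example works out as follows. This is a minimal sketch: the variable and function names are hypothetical, and since the two listed senses add up to 5835 rather than 5845, the code keeps the stated total instead of summing the sense counts.

```python
# Counts for "bridge" as given in the text. The two listed senses account for
# 5835 of the 5845 uses; the senses of the remaining uses are not listed.
TOTAL_USES = {"bridge": 5845}
SENSE_COUNTS = {"bridge": {"STRUCTURE": 5641, "DENTAL-DEVICE": 194}}


def sense_probabilities(word):
    """Maximum-likelihood estimate of P(sense | word) from corpus counts."""
    total = TOTAL_USES[word]
    return {sense: count / total for sense, count in SENSE_COUNTS[word].items()}


def most_frequent_sense(word):
    """Frequency-based WSD: ignore the context, always pick the commonest sense."""
    probs = sense_probabilities(word)
    return max(probs, key=probs.get)
```

For bridge this gives P(STRUCTURE|bridge) = 5641/5845 ≈ 0.965 and P(DENTAL-DEVICE|bridge) = 194/5845 ≈ 0.033, so the method always answers STRUCTURE.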

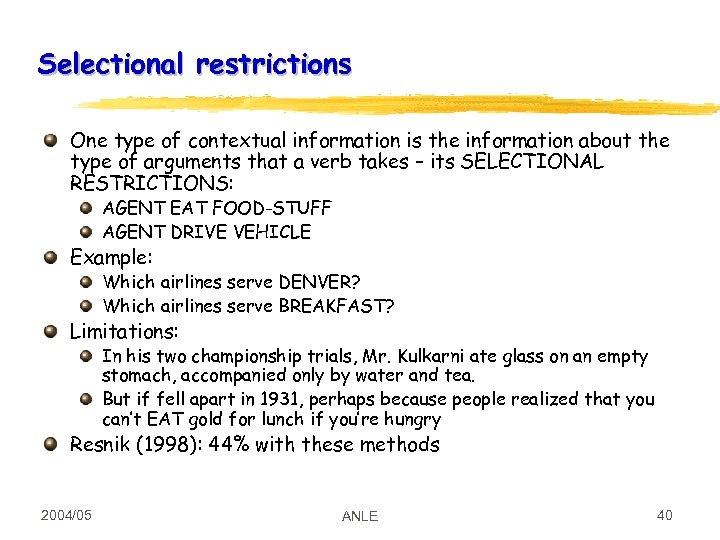

Selectional restrictions
• One type of contextual information is the information about the type of arguments that a verb takes – its SELECTIONAL RESTRICTIONS:
  • AGENT EAT FOOD-STUFF
  • AGENT DRIVE VEHICLE
• Example:
  • Which airlines serve DENVER?
  • Which airlines serve BREAKFAST?
• Limitations:
  • In his two championship trials, Mr. Kulkarni ate glass on an empty stomach, accompanied only by water and tea.
  • But it fell apart in 1931, perhaps because people realized that you can’t EAT gold for lunch if you’re hungry
• Resnik (1998): 44% with these methods
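
Selectional restrictions used as a hard filter can be sketched as below. Everything named here is hypothetical: the semantic classes, the sense labels for SERVE and EAT, and the function itself are made up for illustration and do not come from any real lexicon.

```python
# Hypothetical semantic classes for a few nouns.
SEMANTIC_CLASS = {
    "denver": "LOCATION",
    "breakfast": "FOOD-STUFF",
    "glass": "MATERIAL",
    "gold": "MATERIAL",
}

# Hypothetical sense inventory: verb -> {sense label: class required of the object}.
VERB_SENSES = {
    "serve": {"serve/provide-food": "FOOD-STUFF", "serve/fly-to": "LOCATION"},
    "eat": {"eat/ingest": "FOOD-STUFF"},
}


def disambiguate_verb(verb, direct_object):
    """Return the senses of `verb` whose restriction the object satisfies."""
    obj_class = SEMANTIC_CLASS.get(direct_object.lower())
    return [
        sense
        for sense, required in VERB_SENSES[verb].items()
        if required == obj_class
    ]
```

`disambiguate_verb("serve", "Denver")` and `disambiguate_verb("serve", "breakfast")` each leave exactly one sense, but `disambiguate_verb("eat", "glass")` returns an empty list, which is the limitation the examples above point to: a hard restriction rejects perfectly interpretable sentences.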

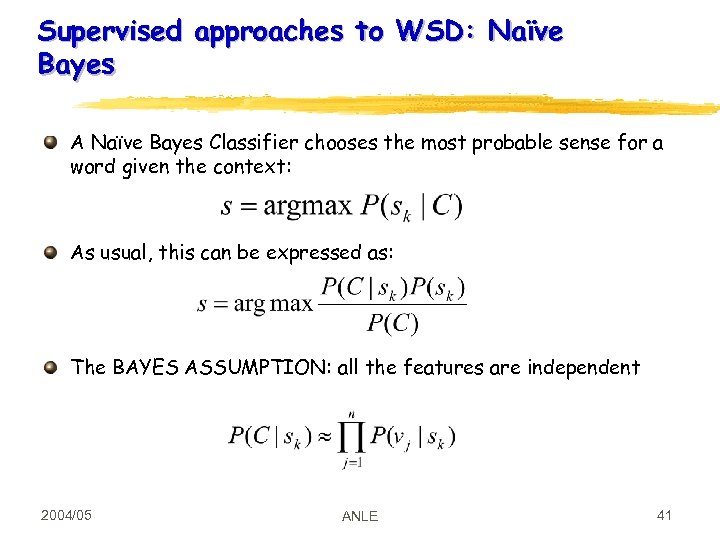

Supervised approaches to WSD: Naïve Bayes
• A Naïve Bayes Classifier chooses the most probable sense for a word given the context
• As usual, this can be expressed using Bayes’ rule
• The NAÏVE BAYES ASSUMPTION: all the features are independent (given the sense)
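
The formulas on this slide were images and did not survive extraction. A standard Naïve Bayes formulation, written with the symbols used in the algorithm slide of these notes (senses s_k, context words v_j, context window c), would read as follows; it is a reconstruction, not the slide's original typesetting.

```latex
\begin{align*}
s' &= \arg\max_{s_k} P(s_k \mid c) \\
   &= \arg\max_{s_k} \frac{P(c \mid s_k)\, P(s_k)}{P(c)}
    = \arg\max_{s_k} P(c \mid s_k)\, P(s_k) \\
P(c \mid s_k) &= \prod_{v_j \in c} P(v_j \mid s_k)
\end{align*}
```

The denominator P(c) can be dropped because it is the same for every sense, and the last line is the independence assumption applied to the words of the context.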

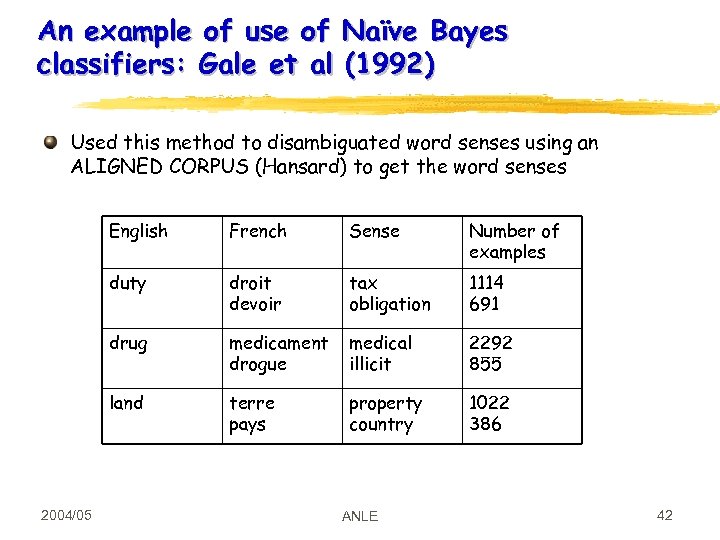

An example of use of Naïve Bayes classifiers: Gale et al (1992)
• Used this method to disambiguate word senses, using an ALIGNED CORPUS (Hansard) to get the word senses

| English | French | Sense | Number of examples |
|---|---|---|---|
| duty | droit | tax | 1114 |
| | devoir | obligation | 691 |
| drug | medicament | medical | 2292 |
| | drogue | illicit | 855 |
| land | terre | property | 1022 |
| | pays | country | 386 |

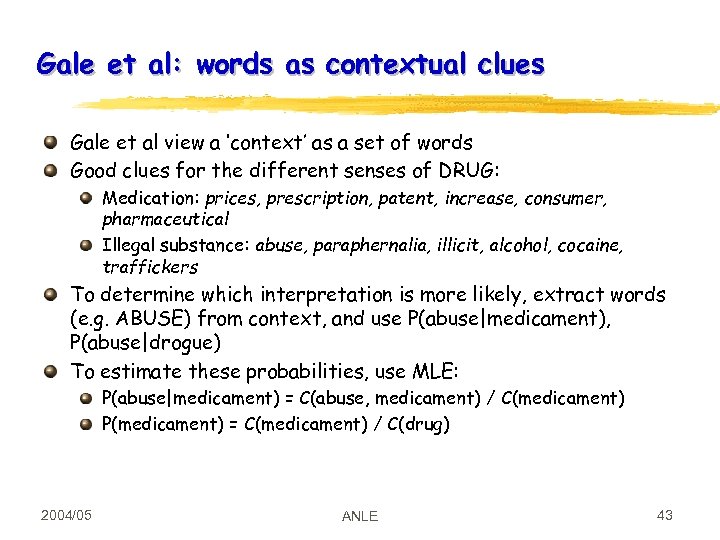

Gale et al: words as contextual clues
• Gale et al view a ‘context’ as a set of words
• Good clues for the different senses of DRUG:
  • Medication: prices, prescription, patent, increase, consumer, pharmaceutical
  • Illegal substance: abuse, paraphernalia, illicit, alcohol, cocaine, traffickers
• To determine which interpretation is more likely, extract words (e.g., ABUSE) from the context, and use P(abuse|medicament), P(abuse|drogue)
• To estimate these probabilities, use MLE:
  • P(abuse|medicament) = C(abuse, medicament) / C(medicament)
  • P(medicament) = C(medicament) / C(drug)

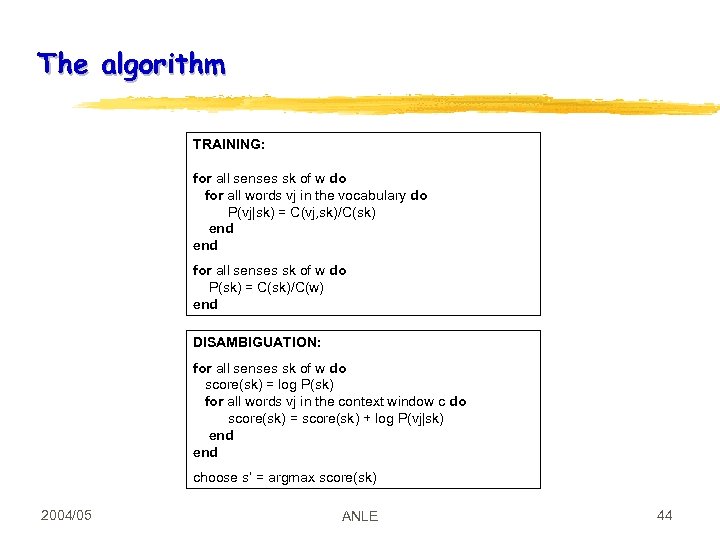

The algorithm
TRAINING:
  for all senses sk of w do
    for all words vj in the vocabulary do
      P(vj|sk) = C(vj, sk) / C(sk)
    end
  end
  for all senses sk of w do
    P(sk) = C(sk) / C(w)
  end
DISAMBIGUATION:
  for all senses sk of w do
    score(sk) = log P(sk)
    for all words vj in the context window c do
      score(sk) = score(sk) + log P(vj|sk)
    end
  end
  choose s’ = argmax_sk score(sk)
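
The training and disambiguation loops can be sketched in Python as below. This is an illustration under stated assumptions, not Gale et al's system: the toy training contexts are made up from the clue words mentioned in these notes, and the sketch departs from the pure MLE estimate by using add-one smoothing and normalising by the number of context-word tokens per sense, so that log P(vj|sk) is never the log of zero.

```python
import math
from collections import Counter, defaultdict

# Made-up training contexts for DRUG, labelled with the French translation.
TOY_EXAMPLES = [
    ("medicament", ["prices", "prescription", "patent"]),
    ("medicament", ["prescription", "consumer", "pharmaceutical"]),
    ("drogue", ["abuse", "cocaine", "traffickers"]),
    ("drogue", ["illicit", "abuse", "alcohol"]),
]


def train(examples):
    """Collect the counts C(sk) and C(vj, sk) from (sense, context words) pairs."""
    sense_counts = Counter()            # C(sk): training contexts per sense
    word_counts = defaultdict(Counter)  # C(vj, sk): word_counts[sk][vj]
    vocabulary = set()
    for sense, context in examples:
        sense_counts[sense] += 1
        word_counts[sense].update(context)
        vocabulary.update(context)
    return sense_counts, word_counts, vocabulary


def disambiguate(context, model):
    """Return the sense with the highest log-probability score for `context`."""
    sense_counts, word_counts, vocabulary = model
    total = sum(sense_counts.values())  # C(w)
    scores = {}
    for sense, count in sense_counts.items():
        score = math.log(count / total)  # log P(sk)
        # Add-one smoothing (an assumption, not part of the pseudocode above).
        denominator = sum(word_counts[sense].values()) + len(vocabulary)
        for word in context:
            if word in vocabulary:  # words never seen in training are skipped
                score += math.log((word_counts[sense][word] + 1) / denominator)
        scores[sense] = score
    return max(scores, key=scores.get)
```

For instance, `disambiguate(["cocaine", "abuse"], train(TOY_EXAMPLES))` picks "drogue", while a context containing "prescription" and "prices" picks "medicament".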

Results
• Gale et al (1992): a disambiguation system using this algorithm was correct for about 90% of occurrences of six ambiguous nouns in the Hansard corpus:
  • duty, drug, land, language, position, sentence
• Good clues for drug:
  • medication sense: prices, prescription, patent, increase
  • illegal substance sense: abuse, paraphernalia, illicit, alcohol, cocaine, traffickers

Other methods for WSD
• Supervised:
  • Brown et al, 1991: using mutual information to combine senses into groups
  • Yarowsky (1992): using a thesaurus and a topic-classified corpus
• Unsupervised: sense DISCRIMINATION
  • Schuetze 1996: using the EM algorithm

Thesaurus-based classification
- Basic idea (e.g., Walker, 1987):
  - A thesaurus assigns to a word a number of SUBJECT CODES
  - Assumption: each subject code is a distinct sense
  - Disambiguate word W by assigning to it the subject code most commonly associated with the words in the context
- Problems:
  - general topic categorization may not be very useful for a specific domain (e.g., MOUSE in computer manuals)
  - coverage problems: NAVRATILOVA
- Yarowsky, 1992: assign words to thesaurus category T if they occur more often than chance in contexts of category T in the corpus
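
The basic subject-code idea can be sketched as a simple vote over the context words. The thesaurus below is a hypothetical toy (the codes and entries are invented for illustration), and ties are broken alphabetically, which is an arbitrary choice rather than anything the original method prescribes.

```python
# Hypothetical toy thesaurus: each word maps to its subject codes.
THESAURUS = {
    "mouse": {"ANIMAL", "COMPUTING"},
    "click": {"COMPUTING"},
    "keyboard": {"COMPUTING", "MUSIC"},
    "cat": {"ANIMAL"},
    "cheese": {"FOOD"},
}

def subject_code(word, context, thesaurus=THESAURUS):
    """Pick the subject code of `word` shared by the most context words."""
    candidates = sorted(thesaurus.get(word, ()))
    if not candidates:
        return None                      # coverage problem: word not in the thesaurus
    votes = dict.fromkeys(candidates, 0)
    for neighbour in context:
        for code in thesaurus.get(neighbour, ()):
            if code in votes:
                votes[code] += 1
    # max() returns the first best candidate, so ties go to the alphabetically first code.
    return max(candidates, key=lambda code: votes[code])

print(subject_code("mouse", ["click", "keyboard"]))
print(subject_code("mouse", ["cat", "cheese"]))
```

A word missing from the thesaurus returns `None`, which is the coverage problem the slide illustrates with NAVRATILOVA.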

Evaluation
- Baseline: is the system an improvement?
  - Unsupervised: Random, Simple-Lesk
  - Supervised: Most Frequent, Lesk-plus-corpus
- Upper bound: agreement between humans?
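
As a rough illustration of the ‘Most Frequent’ supervised baseline, the sketch below always predicts the sense seen most often in training and measures accuracy against a gold standard. The sense labels and the tiny data sets are hypothetical.

```python
from collections import Counter

def most_frequent_sense(training_senses):
    """Return the sense that occurs most often in the sense-tagged training data."""
    return Counter(training_senses).most_common(1)[0][0]

def accuracy(predicted, gold):
    """Fraction of test occurrences whose predicted sense matches the gold sense."""
    return sum(p == g for p, g in zip(predicted, gold)) / len(gold)

# Hypothetical sense-tagged occurrences of one ambiguous word.
TRAIN = ["medication", "medication", "illegal substance", "medication"]
GOLD = ["medication", "illegal substance", "medication", "illegal substance"]

BASELINE_SENSE = most_frequent_sense(TRAIN)
BASELINE_ACCURACY = accuracy([BASELINE_SENSE] * len(GOLD), GOLD)
print(BASELINE_SENSE, BASELINE_ACCURACY)
```

A WSD system is only interesting if it beats this number, and inter-annotator agreement bounds how far above it any system can be measured.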

SENSEVAL
- Goals:
  - Provide a common framework to compare WSD systems
  - Standardise the task (especially evaluation procedures)
  - Build and distribute new lexical resources (dictionaries and sense-tagged corpora)
- Web site: http://www.senseval.org/
- “There are now many computer programs for automatically determining the sense of a word in context (Word Sense Disambiguation or WSD). The purpose of Senseval is to evaluate the strengths and weaknesses of such programs with respect to different words, different varieties of language, and different languages.” (from: http://www.sle.sharp.co.uk/senseval2)

SENSEVAL History
- ACL-SIGLEX workshop (1997)
  - Yarowsky and Resnik paper
- SENSEVAL-I (1998)
  - Lexical Sample for English, French, and Italian
- SENSEVAL-II (Toulouse, 2001)
  - Lexical Sample and All Words
  - Organization: Kilgarriff (Brighton)
- SENSEVAL-III (2003)

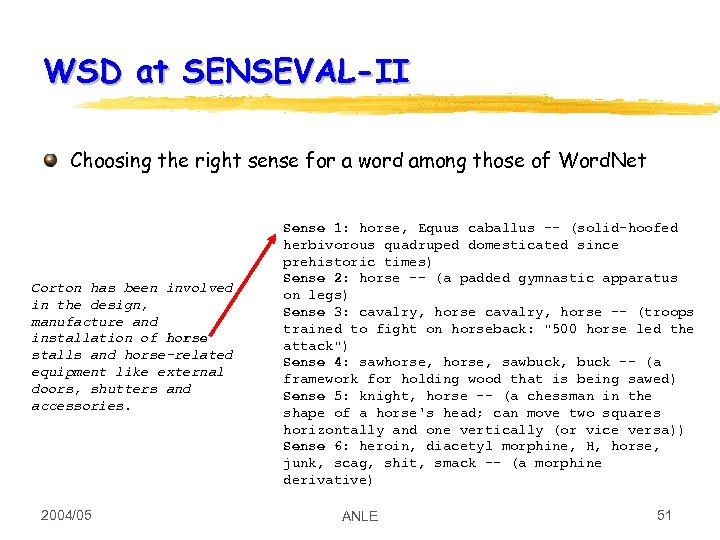

WSD at SENSEVAL-II
- Choosing the right sense for a word among those of WordNet
- Example: “Corton has been involved in the design, manufacture and installation of horse stalls and horse-related equipment like external doors, shutters and accessories.”
  - Sense 1: horse, Equus caballus -- (solid-hoofed herbivorous quadruped domesticated since prehistoric times)
  - Sense 2: horse -- (a padded gymnastic apparatus on legs)
  - Sense 3: cavalry, horse -- (troops trained to fight on horseback: "500 horse led the attack")
  - Sense 4: sawhorse, sawbuck, buck -- (a framework for holding wood that is being sawed)
  - Sense 5: knight, horse -- (a chessman in the shape of a horse's head; can move two squares horizontally and one vertically (or vice versa))
  - Sense 6: heroin, diacetyl morphine, H, horse, junk, scag, shit, smack -- (a morphine derivative)

SENSEVAL-II Tasks
- All Words (without training data): Czech, Dutch, English, Estonian
- Lexical Sample (with training data): Basque, Chinese, Danish, English, Italian, Japanese, Korean, Spanish, Swedish

English SENSEVAL-II
- Organization: Martha Palmer (UPENN)
- Gold standard: 2 annotators and 1 supervisor (Fellbaum)
- Interchange data format: XML
- Sense repository: WordNet 1.7 (special Senseval release)
- Competitors:
  - All Words: 11 systems
  - Lexical Sample: 16 systems

English All Words
- Data: 3 texts for a total of 1770 words
- Average polysemy: 6.5
- Example: (part of) Text 1

  The art of change-ringing is peculiar to the English and, like most English peculiarities , unintelligible to the rest of the world. -- Dorothy L. Sayers , " The Nine Tailors "

  ASLACTON , England -- Of all scenes that evoke rural England , this is one of the loveliest : An ancient stone church stands amid the fields , the sound of bells cascading from its tower , calling the faithful to evensong. The parishioners of St. Michael and All Angels stop to chat at the church door , as members here always have. […]

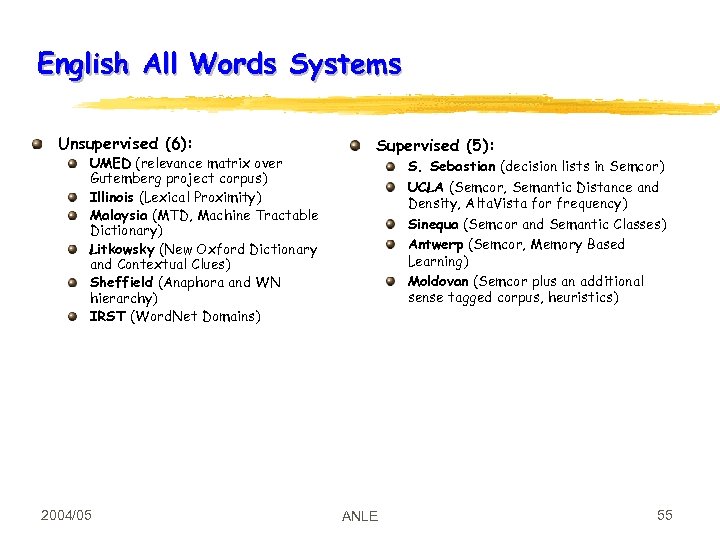

English All Words Systems
- Unsupervised (6):
  - UNED (relevance matrix over Project Gutenberg corpus)
  - Illinois (Lexical Proximity)
  - Malaysia (MTD, Machine Tractable Dictionary)
  - Litkowski (New Oxford Dictionary and Contextual Clues)
  - Sheffield (Anaphora and WN hierarchy)
  - IRST (WordNet Domains)
- Supervised (5):
  - S. Sebastian (decision lists in Semcor)
  - UCLA (Semcor, Semantic Distance and Density, AltaVista for frequency)
  - Sinequa (Semcor and Semantic Classes)
  - Antwerp (Semcor, Memory Based Learning)
  - Moldovan (Semcor plus an additional sense-tagged corpus, heuristics)

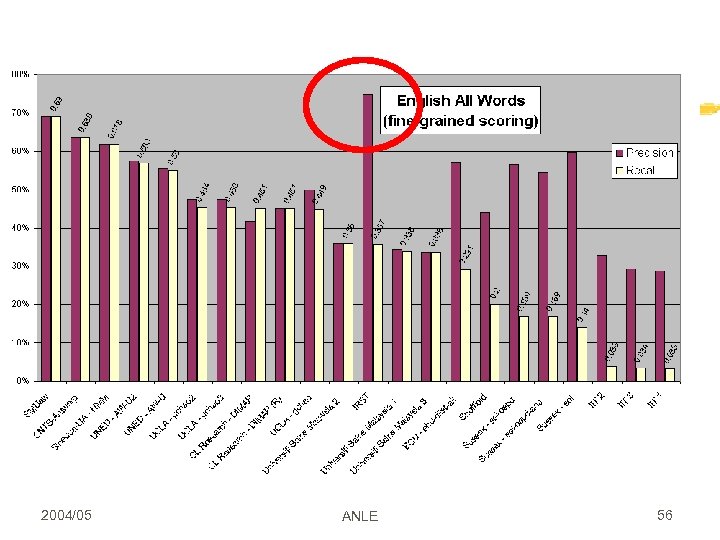

Lexical Sample
- Data: 8699 texts for 73 words
- Average WN polysemy: 9.22
- Training Data: 8166 (average 118/word)
- Baseline (commonest): 0.47 precision
- Baseline (Lesk): 0.51 precision

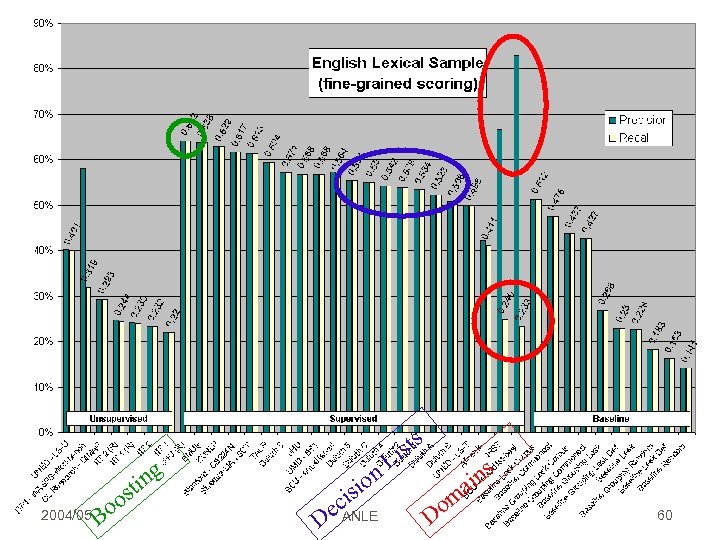

English Lexical Sample Systems
- Unsupervised (5): Sunderland, UNED, Illinois, Litkowski, ITRI
- Supervised (12): S. Sebastian, Sinequa, Manning, Pedersen, Korea, Yarowsky, Resnik, Pennsylvania, Barcelona, Moldovan, Alicante, IRST

[Figure: results chart; recoverable labels: Boosting, Decision Lists, Domains]

Boosting 1/2
- Combine many simple and moderately accurate Weak Classifiers (WC)
- Train WCs sequentially, each on the examples which were most difficult to classify by the preceding WCs
- Examples of WCs:
  - preceding_word=“house”
  - domain=“sport”
  - ...

Boosting 2/2
- WCi is trained and tested on the whole corpus
- Each pair {word, synset} is given an “importance weight” h depending on how difficult it was for WC1, …, WCi to classify
- WCi+1 is tuned to classify the worst pairs {word, synset} correctly and is then tested on the whole corpus, so h is updated at each step
- At the end all the WCs are combined into a single rule, the combined hypothesis; each WC is weighted according to its effectiveness in the tests
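
The reweighting loop described above can be illustrated with a small AdaBoost-style sketch. Several things here are assumptions rather than the system on the slide: the task is reduced to two senses labelled +1 and -1, weak classifiers are single-feature tests like `domain=finance`, the update rule is the standard binary AdaBoost one, and the training contexts are invented.

```python
import math

def stump(feature, polarity, context):
    """Weak classifier: vote `polarity` if the feature is present, else the opposite."""
    return polarity if feature in context else -polarity

def train_adaboost(contexts, labels, features, rounds=3):
    n = len(contexts)
    weights = [1.0 / n] * n              # importance weight of each training example
    ensemble = []                        # list of (alpha, feature, polarity)
    for _ in range(rounds):
        # Pick the weak classifier with the lowest weighted error.
        best = None
        for feature in features:
            for polarity in (1, -1):
                error = sum(w for x, y, w in zip(contexts, labels, weights)
                            if stump(feature, polarity, x) != y)
                if best is None or error < best[0]:
                    best = (error, feature, polarity)
        error, feature, polarity = best
        error = min(max(error, 1e-10), 1 - 1e-10)   # keep the logarithm finite
        alpha = 0.5 * math.log((1 - error) / error) # weight of this weak classifier
        ensemble.append((alpha, feature, polarity))
        # Raise the weight of misclassified examples, lower the rest, renormalise.
        weights = [w * math.exp(-alpha * y * stump(feature, polarity, x))
                   for x, y, w in zip(contexts, labels, weights)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble

def classify(ensemble, context):
    """Combined hypothesis: weighted vote of all weak classifiers."""
    score = sum(alpha * stump(f, p, context) for alpha, f, p in ensemble)
    return 1 if score >= 0 else -1

# Hypothetical contexts of BANK: +1 = financial institution, -1 = river side.
CONTEXTS = [
    {"preceding_word=river", "domain=nature"},
    {"preceding_word=central", "domain=finance"},
    {"preceding_word=the", "domain=finance"},
    {"preceding_word=the", "domain=nature"},
]
LABELS = [-1, 1, 1, -1]
FEATURES = sorted(set().union(*CONTEXTS))
ENSEMBLE = train_adaboost(CONTEXTS, LABELS, FEATURES)
print(classify(ENSEMBLE, {"preceding_word=a", "domain=finance"}))
```

On this toy data a single feature already separates the senses, so every round picks the same weak classifier; with harder data the reweighting step forces later rounds to focus on the examples earlier rounds got wrong.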

Bootstrapping
- Problem with supervised methods: they need an annotated corpus!
- Partial solution (Hearst, Yarowsky): train on a small set (bootstrap), then use the trained system to annotate a larger corpus, which is then used to retrain

Readings
- Jurafsky and Martin, chapter 17.1 and 17.2
- For more info: Manning and Schuetze, chapter 7
- Papers:
  - Gale, Church, and Yarowsky. 1992. A method for disambiguating word senses in large corpora. Computers and the Humanities, v. 26, 415-439.

Acknowledgments
- Slides on SENSEVAL borrowed from Bernardo Magnini, IRST

LEXICAL ACQUISITION

The limits of hand-encoded lexical resources
- Manual construction of lexical resources is very costly
- Because language keeps changing, these resources have to be continuously updated
- Some information (e.g., about frequencies) has to be computed automatically anyway

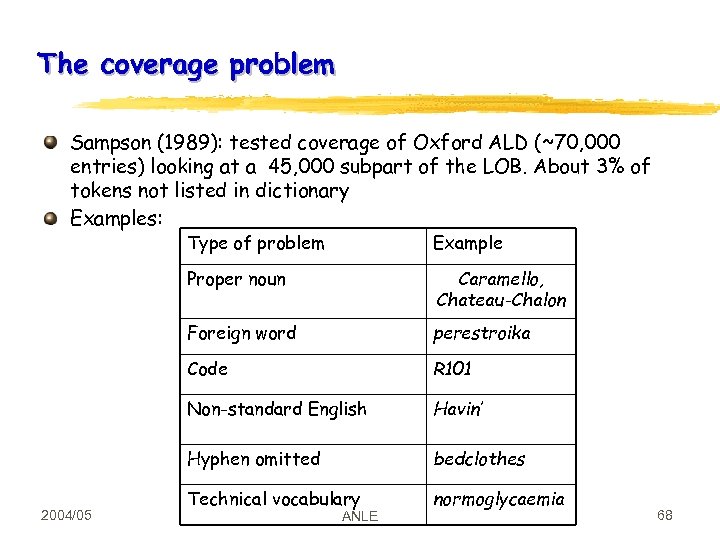

The coverage problem
- Sampson (1989): tested coverage of Oxford ALD (~70,000 entries) looking at a 45,000-word subpart of the LOB corpus. About 3% of tokens were not listed in the dictionary
- Examples:

| Type of problem | Example |
|---|---|
| Proper noun | Caramello, Chateau-Chalon |
| Foreign word | perestroika |
| Code | R101 |
| Non-standard English | Havin’ |
| Hyphen omitted | bedclothes |
| Technical vocabulary | normoglycaemia |

Lexical acquisition
- Most MRDs these days contain at least some information derived by computational means
- Methods have been developed to
  - Augment resources such as WordNet with additional information (e.g., in technical contexts such as bioinformatics)
  - Develop these resources from scratch
- These methods are also interesting because of the hope that they can address the problems encountered by lexicographers when trying to specify word senses

Vector-based lexical semantics
- Very old idea in NLE: the meaning of a word can be specified in terms of the values of certain ‘features’ (‘DECOMPOSITIONAL SEMANTICS’)
  - dog: ANIMATE=+, EAT=MEAT, SOCIAL=+
  - horse: ANIMATE=+, EAT=GRASS, SOCIAL=+
  - cat: ANIMATE=+, EAT=MEAT, SOCIAL=-
- Similarity / relatedness: proximity in feature space
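
To make ‘proximity in feature space’ concrete, the sketch below counts the features on which two lexemes disagree. Using this count (a Hamming distance) is an assumption chosen for illustration; the slide does not commit to a particular measure.

```python
# Feature values copied from the three lexemes above.
FEATURES = {
    "dog":   {"ANIMATE": "+", "EAT": "MEAT",  "SOCIAL": "+"},
    "horse": {"ANIMATE": "+", "EAT": "GRASS", "SOCIAL": "+"},
    "cat":   {"ANIMATE": "+", "EAT": "MEAT",  "SOCIAL": "-"},
}

def feature_distance(a, b, lexicon=FEATURES):
    """Number of features on which the two lexemes disagree (Hamming distance)."""
    return sum(lexicon[a][f] != lexicon[b][f] for f in lexicon[a])

for pair in [("dog", "horse"), ("dog", "cat"), ("horse", "cat")]:
    print(pair, feature_distance(*pair))
```

Under this measure dog is equally close to horse and to cat, while horse and cat differ on two features.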

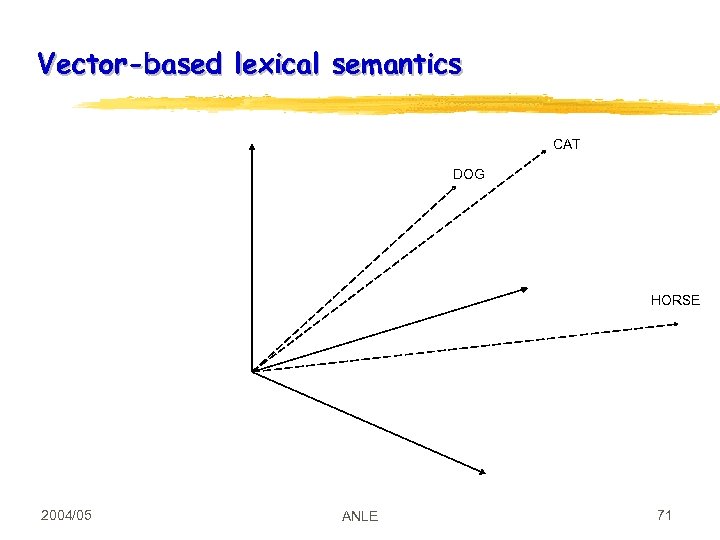

Vector-based lexical semantics
[Figure: CAT, DOG, and HORSE shown as labelled points]

General characterization of vector-based semantics (from Charniak)
- Vectors as models of concepts
- The CLUSTERING approach to lexical semantics:
  1. Define properties one cares about, and give values to each property (generally, numerical)
  2. Create a vector of length n for each item to be classified
  3. Viewing the n-dimensional vector as a point in n-space, cluster points that are near one another
- What changes between models:
  1. The properties used in the vector
  2. The distance metric used to decide if two points are ‘close’
  3. The algorithm used to cluster

Using words as features in a vector-based semantics
- The old decompositional semantics approach requires
  - i. Specifying the features
  - ii. Characterizing the value of these features for each lexeme
- Simpler approach: use as features the WORDS that occur in the proximity of that word / lexical entry
  - Intuition: “You can tell a word’s meaning from the company it keeps”
- More specifically, you can use as ‘values’ of these features
  - The FREQUENCIES with which these words occur near the words whose meaning we are defining
  - Or perhaps the PROBABILITIES that these words occur next to each other
- Alternative: use the DOCUMENTS in which these words occur (e.g., LSA)
- Some psychological results support this view
  - Lund, Burgess, et al (1995, 1997): lexical associations learned this way correlate very well with priming experiments
  - Landauer et al: good correlation on a variety of topics, including human categorization & vocabulary tests

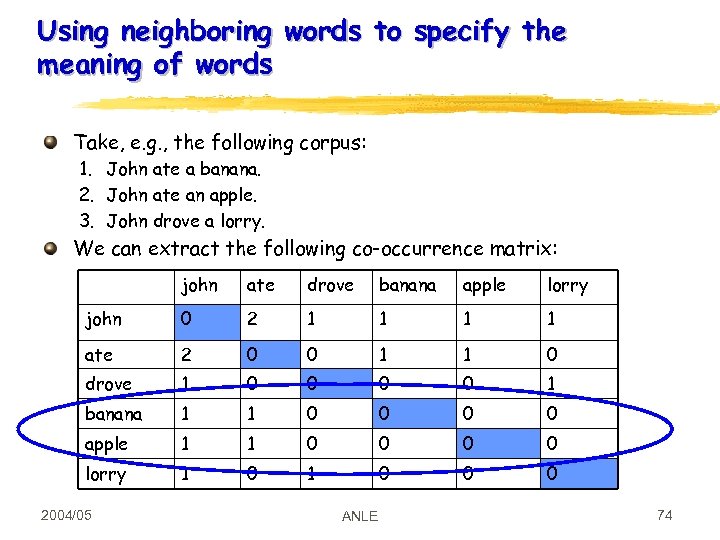

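Using neighboring words to specify the meaning of words
- Take, e.g., the following corpus:
  1. John ate a banana.
  2. John ate an apple.
  3. John drove a lorry.
- We can extract the following co-occurrence matrix (counts of words appearing in the same sentence, articles ignored):

| | john | ate | drove | banana | apple | lorry |
|---|---|---|---|---|---|---|
| john | 0 | 2 | 1 | 1 | 1 | 1 |
| ate | 2 | 0 | 0 | 1 | 1 | 0 |
| drove | 1 | 0 | 0 | 0 | 0 | 1 |
| banana | 1 | 1 | 0 | 0 | 0 | 0 |
| apple | 1 | 1 | 0 | 0 | 0 | 0 |
| lorry | 1 | 0 | 1 | 0 | 0 | 0 |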
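
A minimal sketch of how such a matrix might be computed from the three-sentence corpus. It assumes that co-occurrence means ‘appearing in the same sentence’ and that the articles are dropped; both are readings of the example, not something the slide states, and the function name is hypothetical.

```python
from collections import defaultdict
from itertools import combinations

CORPUS = ["John ate a banana.", "John ate an apple.", "John drove a lorry."]
STOP_WORDS = {"a", "an"}   # assumption: the articles are not counted

def cooccurrence(sentences):
    """Symmetric counts of word pairs that appear in the same sentence."""
    counts = defaultdict(lambda: defaultdict(int))
    for sentence in sentences:
        words = [w for w in sentence.lower().rstrip(".").split()
                 if w not in STOP_WORDS]
        for w, v in combinations(words, 2):
            counts[w][v] += 1
            counts[v][w] += 1
    return counts

MATRIX = cooccurrence(CORPUS)
VOCAB = ["john", "ate", "drove", "banana", "apple", "lorry"]
for w in VOCAB:
    print(w, [MATRIX[w][v] for v in VOCAB])
```

Each row is the context vector of one word; banana and apple end up with identical rows, which is the sense in which they come out as similar.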

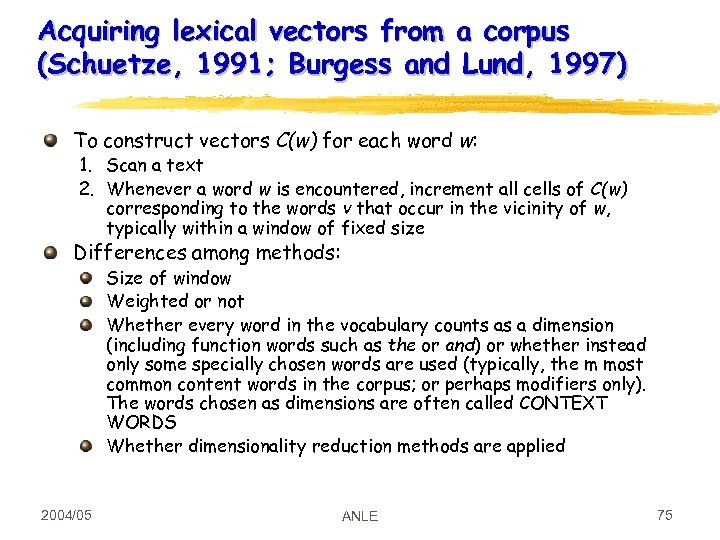

Acquiring lexical vectors from a corpus (Schuetze, 1991; Burgess and Lund, 1997)
- To construct vectors C(w) for each word w:
  1. Scan a text
  2. Whenever a word w is encountered, increment all cells of C(w) corresponding to the words v that occur in the vicinity of w, typically within a window of fixed size
- Differences among methods:
  - Size of window
  - Weighted or not
  - Whether every word in the vocabulary counts as a dimension (including function words such as ‘the’ or ‘and’) or whether instead only some specially chosen words are used (typically, the m most common content words in the corpus; or perhaps modifiers only). The words chosen as dimensions are often called CONTEXT WORDS
  - Whether dimensionality reduction methods are applied
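
The scanning procedure and the first three design choices (window size, weighting, choice of context words) can be sketched as below. The function and parameter names are hypothetical, and the distance-based weighting shown is just one possible scheme, assumed here for illustration.

```python
from collections import Counter, defaultdict

def context_vectors(tokens, window=2, context_words=None, weighted=False):
    """Build C(w) for every word w by counting neighbours within a fixed window."""
    vectors = defaultdict(Counter)
    for i, w in enumerate(tokens):
        lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j == i:
                continue
            v = tokens[j]
            if context_words is not None and v not in context_words:
                continue                 # v is not one of the chosen dimensions
            # Optional weighting: closer neighbours count more.
            vectors[w][v] += (window - abs(i - j) + 1) if weighted else 1
    return vectors

TOKENS = "john ate a banana".split()
print(dict(context_vectors(TOKENS, window=1)["ate"]))
print(dict(context_vectors(TOKENS, window=2, weighted=True)["john"]))
print(dict(context_vectors(TOKENS, window=2, context_words={"john", "banana"})["ate"]))
```

Restricting `context_words` to content words keeps function words such as ‘a’ from becoming dimensions.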

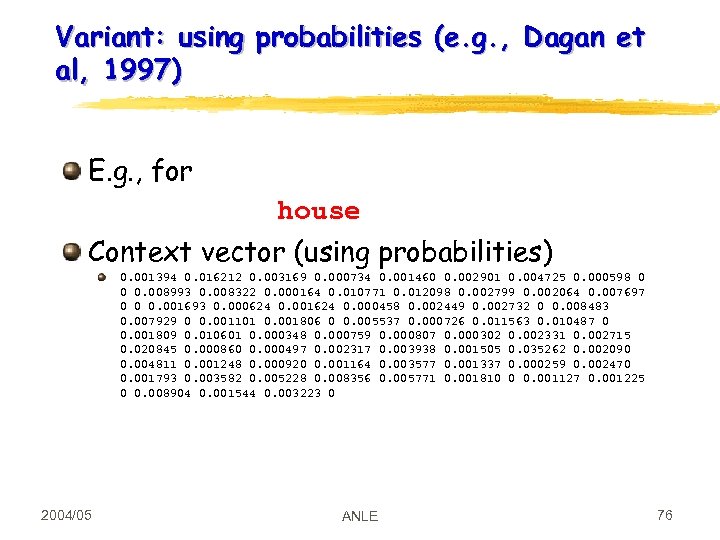

Variant: using probabilities (e.g., Dagan et al, 1997)
- E.g., for house, the context vector (using probabilities):

    0.001394 0.016212 0.003169 0.000734 0.001460 0.002901 0.004725 0.000598 0 0
    0.008993 0.008322 0.000164 0.010771 0.012098 0.002799 0.002064 0.007697 0 0
    0.001693 0.000624 0.001624 0.000458 0.002449 0.002732 0 0.008483 0.007929 0
    0.001101 0.001806 0 0.005537 0.000726 0.011563 0.010487 0 0.001809 0.010601
    0.000348 0.000759 0.000807 0.000302 0.002331 0.002715 0.020845 0.000860 0.000497 0.002317
    0.003938 0.001505 0.035262 0.002090 0.004811 0.001248 0.000920 0.001164 0.003577 0.001337
    0.000259 0.002470 0.001793 0.003582 0.005228 0.008356 0.005771 0.001810 0 0.001127
    0.001225 0 0.008904 0.001544 0.003223 0

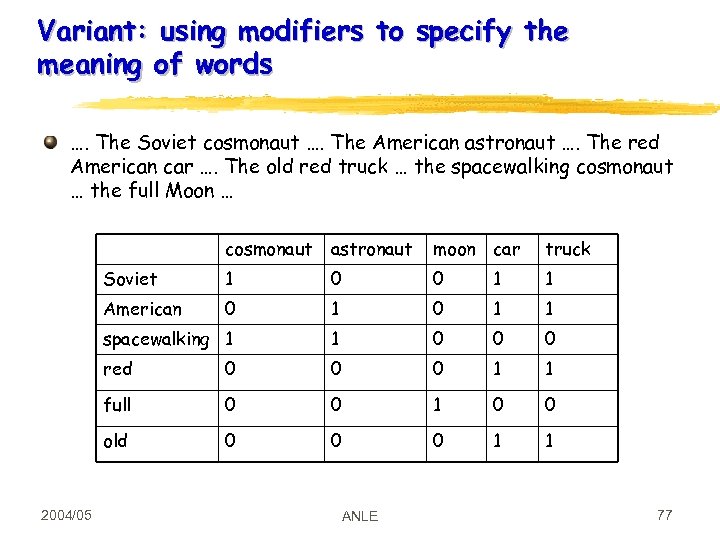

Variant: using modifiers to specify the meaning of words
- … The Soviet cosmonaut … The American astronaut … The red American car … The old red truck … the spacewalking cosmonaut … the full Moon …

| | cosmonaut | astronaut | moon | car | truck |
|---|---|---|---|---|---|
| Soviet | 1 | 0 | 0 | 1 | 1 |
| American | 0 | 1 | 0 | 1 | 1 |
| spacewalking | 1 | 1 | 0 | 0 | 0 |
| red | 0 | 0 | 0 | 1 | 1 |
| full | 0 | 0 | 1 | 0 | 0 |
| old | 0 | 0 | 0 | 1 | 1 |

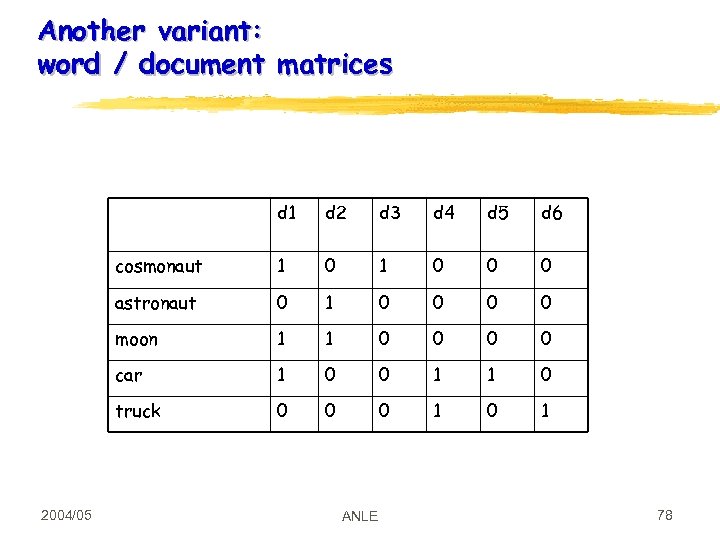

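Another variant: word / document matrices

| | d1 | d2 | d3 | d4 | d5 | d6 |
|---|---|---|---|---|---|---|
| cosmonaut | 1 | 0 | 1 | 0 | 0 | 0 |
| astronaut | 0 | 1 | 0 | 0 | 0 | 0 |
| moon | 1 | 1 | 0 | 0 | 0 | 0 |
| car | 1 | 0 | 0 | 1 | 1 | 0 |
| truck | 0 | 0 | 0 | 1 | 0 | 1 |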

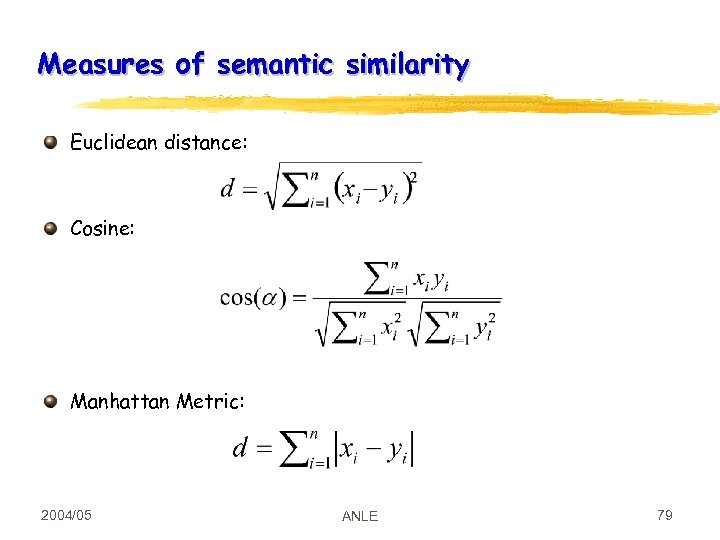

Measures of semantic similarity
- Euclidean distance
- Cosine
- Manhattan metric
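
The formulas for these three measures did not survive in the extracted text. The sketch below uses the standard textbook definitions (square root of summed squared differences, normalised dot product, and summed absolute differences), which may differ in notation from what the slide showed. The two example vectors are hypothetical.

```python
import math

def euclidean(x, y):
    """Straight-line distance between two vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))

def manhattan(x, y):
    """Sum of absolute coordinate differences."""
    return sum(abs(a - b) for a, b in zip(x, y))

def cosine(x, y):
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(x, y))
    norm = math.sqrt(sum(a * a for a in x)) * math.sqrt(sum(b * b for b in y))
    return dot / norm if norm else 0.0

# Hypothetical context vectors for two words over six context dimensions.
X = [1, 0, 1, 0, 0, 0]
Y = [0, 1, 1, 0, 0, 0]
print(euclidean(X, Y), manhattan(X, Y), cosine(X, Y))
```

Euclidean and Manhattan are distances (smaller means more similar), whereas cosine is a similarity (larger means more similar) and ignores vector length.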

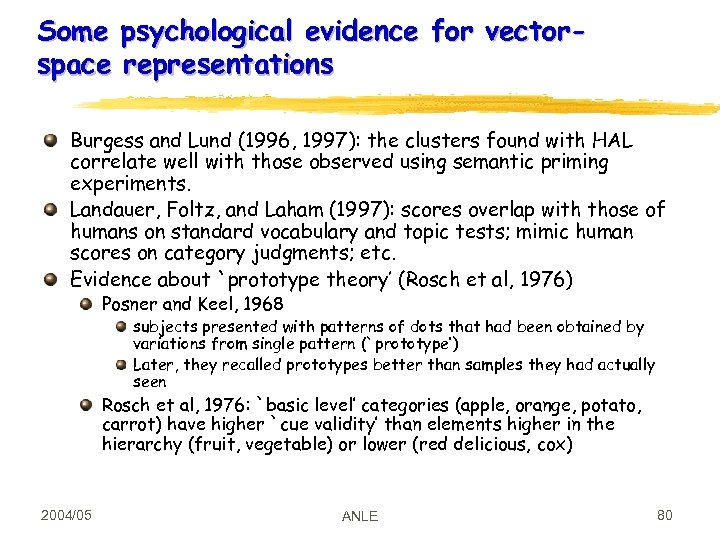

Some psychological evidence for vector-space representations
- Burgess and Lund (1996, 1997): the clusters found with HAL correlate well with those observed using semantic priming experiments
- Landauer, Foltz, and Laham (1997): scores overlap with those of humans on standard vocabulary and topic tests; mimic human scores on category judgments; etc.
- Evidence about ‘prototype theory’ (Rosch et al, 1976)
  - Posner and Keele, 1968: subjects were presented with patterns of dots that had been obtained by variations from a single pattern (‘prototype’). Later, they recalled prototypes better than samples they had actually seen
  - Rosch et al, 1976: ‘basic level’ categories (apple, orange, potato, carrot) have higher ‘cue validity’ than elements higher in the hierarchy (fruit, vegetable) or lower (red delicious, cox)

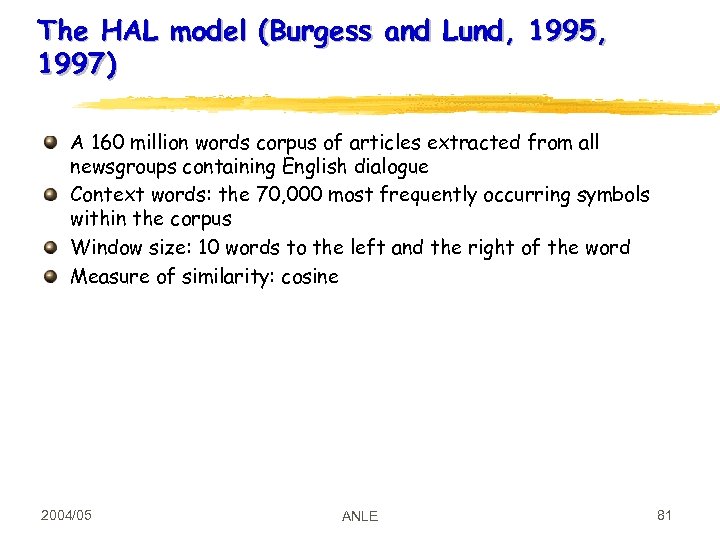

The HAL model (Burgess and Lund, 1995, 1997)
- A 160-million-word corpus of articles extracted from all newsgroups containing English dialogue
- Context words: the 70,000 most frequently occurring symbols within the corpus
- Window size: 10 words to the left and the right of the word
- Measure of similarity: cosine

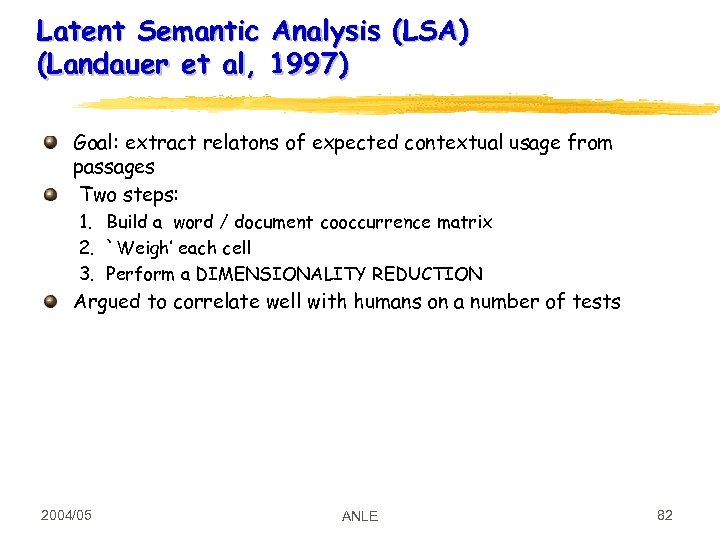

Latent Semantic Analysis (LSA) (Landauer et al, 1997)
- Goal: extract relations of expected contextual usage from passages
- Three steps:
  1. Build a word / document cooccurrence matrix
  2. ‘Weigh’ each cell
  3. Perform a DIMENSIONALITY REDUCTION
- Argued to correlate well with humans on a number of tests
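
The matrix-building and dimensionality-reduction steps can be sketched in plain Python. This is a toy illustration under several assumptions: the small word / document matrix is illustrative data, the cell-weighting step is skipped, the reduction keeps only 2 dimensions, and the truncated SVD is computed by simple power iteration with deflation rather than by a numerical library.

```python
import math

WORDS = ["cosmonaut", "astronaut", "moon", "car", "truck"]
A = [  # rows: words, columns: documents d1..d6 (toy counts)
    [1, 0, 1, 0, 0, 0],
    [0, 1, 0, 0, 0, 0],
    [1, 1, 0, 0, 0, 0],
    [1, 0, 0, 1, 1, 0],
    [0, 0, 0, 1, 0, 1],
]

def matvec(m, x):
    return [sum(a * b for a, b in zip(row, x)) for row in m]

def cosine(x, y):
    dot = sum(a * b for a, b in zip(x, y))
    norm = math.sqrt(sum(a * a for a in x)) * math.sqrt(sum(b * b for b in y))
    return dot / norm if norm else 0.0

def truncated_svd(matrix, k, iterations=500):
    """Top-k singular triplets (sigma, u, v) by power iteration with deflation."""
    residual = [[float(c) for c in row] for row in matrix]
    triplets = []
    for _ in range(k):
        transposed = [list(col) for col in zip(*residual)]
        v = [1.0 / (j + 1) for j in range(len(transposed))]   # arbitrary start vector
        for _ in range(iterations):
            v = matvec(transposed, matvec(residual, v))       # apply A^T A
            norm = math.sqrt(sum(x * x for x in v))
            v = [x / norm for x in v]
        u = matvec(residual, v)
        sigma = math.sqrt(sum(x * x for x in u))
        u = [x / sigma for x in u]
        triplets.append((sigma, u, v))
        # Deflate: remove the component just found before looking for the next one.
        residual = [[residual[i][j] - sigma * u[i] * v[j] for j in range(len(v))]
                    for i in range(len(u))]
    return triplets

TRIPLETS = truncated_svd(A, k=2)
RAW = dict(zip(WORDS, A))
# Word i in the reduced space: (sigma_1 * u_1[i], sigma_2 * u_2[i]).
REDUCED = {w: [s * u[i] for s, u, _ in TRIPLETS] for i, w in enumerate(WORDS)}
print(cosine(RAW["cosmonaut"], RAW["astronaut"]))
print(cosine(REDUCED["cosmonaut"], REDUCED["astronaut"]))
```

In the raw matrix cosmonaut and astronaut never share a document, so their cosine is zero; in the reduced space they become similar because both co-occur with moon.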

LSA: the method, 1

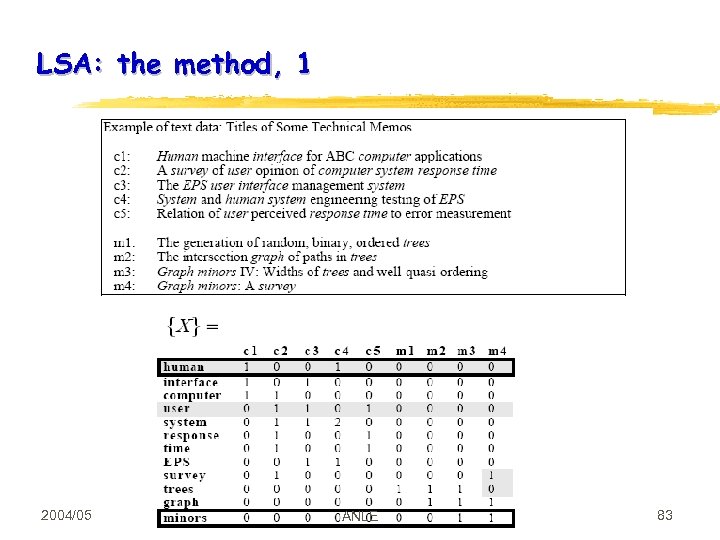

LSA: Singular Value Decomposition

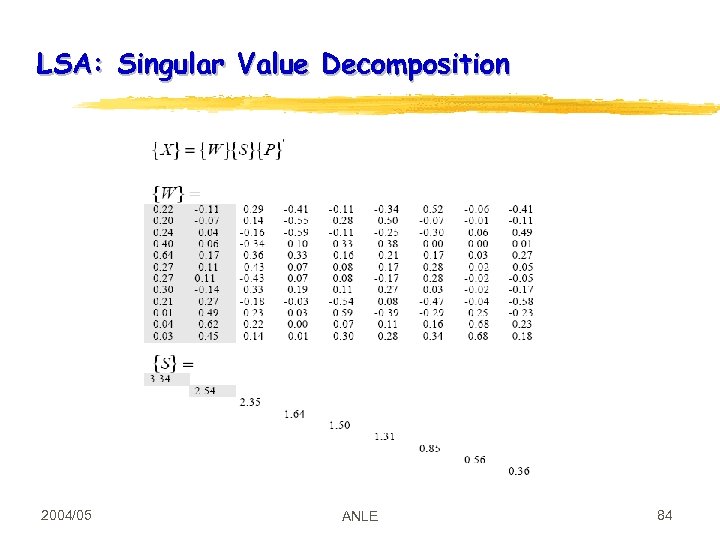

LSA: Reconstructed matrix

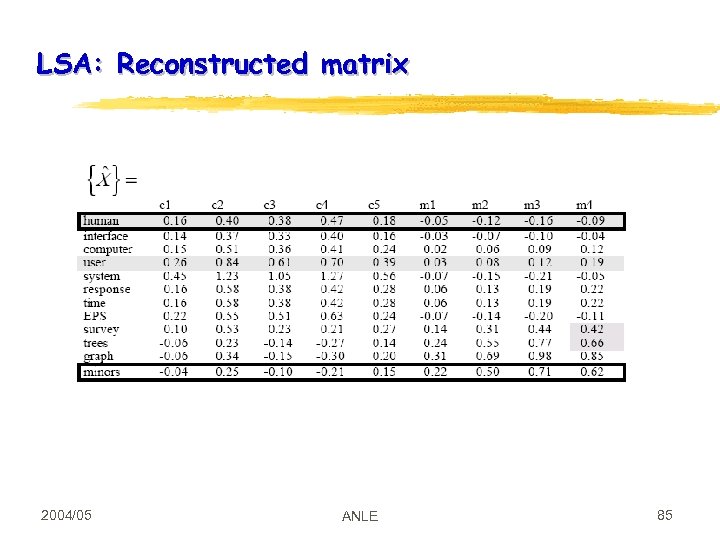

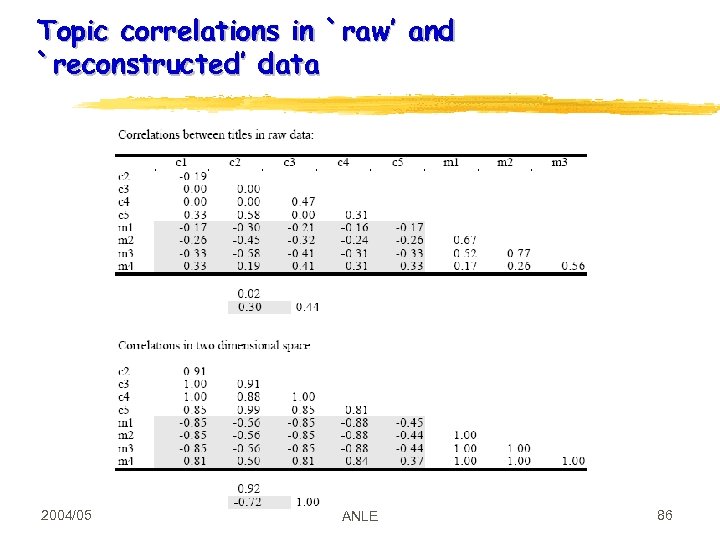

Topic correlations in ‘raw’ and ‘reconstructed’ data

Clustering algorithms partition a set of objects into CLUSTERS
- An UNSUPERVISED method
- Two applications:
  - Exploratory Data Analysis
  - GENERALIZATION

Clustering
[Figure: points marked X]

Clustering with Syntactic Information
- Pereira and Tishby, 1992: two words are similar if they occur as objects of the same verbs
  - John ate POPCORN
  - John ate BANANAS
- C(w) is the distribution of verbs for which w served as direct object
  - First approximation: just counts
  - In fact: probabilities
- Similarity: RELATIVE ENTROPY
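
A small sketch of relative entropy (KL divergence) between verb distributions of nouns. The verb/object pairs are invented, the function names are hypothetical, and add-alpha smoothing is an assumption introduced so that no probability is zero (relative entropy is undefined when q(v) = 0 but p(v) > 0).

```python
import math
from collections import Counter

def verb_distribution(pairs, noun, verbs, alpha=0.5):
    """P(verb | noun as direct object), with add-alpha smoothing (an assumption)."""
    counts = Counter(v for v, n in pairs if n == noun)
    total = sum(counts.values()) + alpha * len(verbs)
    return {v: (counts[v] + alpha) / total for v in verbs}

def relative_entropy(p, q):
    """D(p || q) = sum over v of p(v) * log2(p(v) / q(v))."""
    return sum(p[v] * math.log2(p[v] / q[v]) for v in p if p[v] > 0)

# Hypothetical (verb, direct object) pairs extracted from a parsed corpus.
PAIRS = [("eat", "popcorn"), ("eat", "bananas"), ("buy", "bananas"),
         ("drive", "lorry"), ("buy", "lorry")]
VERBS = sorted({v for v, _ in PAIRS})

POPCORN = verb_distribution(PAIRS, "popcorn", VERBS)
BANANAS = verb_distribution(PAIRS, "bananas", VERBS)
LORRY = verb_distribution(PAIRS, "lorry", VERBS)
print(relative_entropy(POPCORN, BANANAS), relative_entropy(POPCORN, LORRY))
```

Relative entropy is asymmetric and is a dissimilarity: a lower value means the two nouns are objects of more similar verbs.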

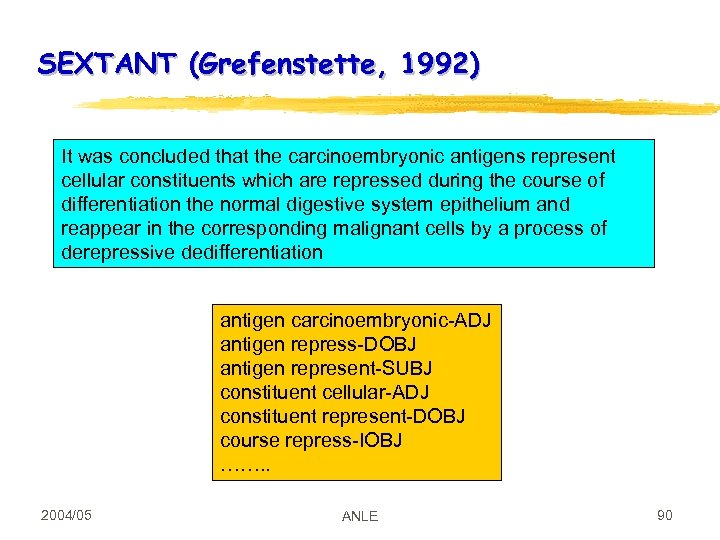

SEXTANT (Grefenstette, 1992)
- Input sentence: “It was concluded that the carcinoembryonic antigens represent cellular constituents which are repressed during the course of differentiation of the normal digestive system epithelium and reappear in the corresponding malignant cells by a process of derepressive dedifferentiation”
- Extracted word / attribute pairs:
  - antigen carcinoembryonic-ADJ
  - antigen repress-DOBJ
  - antigen represent-SUBJ
  - constituent cellular-ADJ
  - constituent represent-DOBJ
  - course repress-IOBJ
  - …

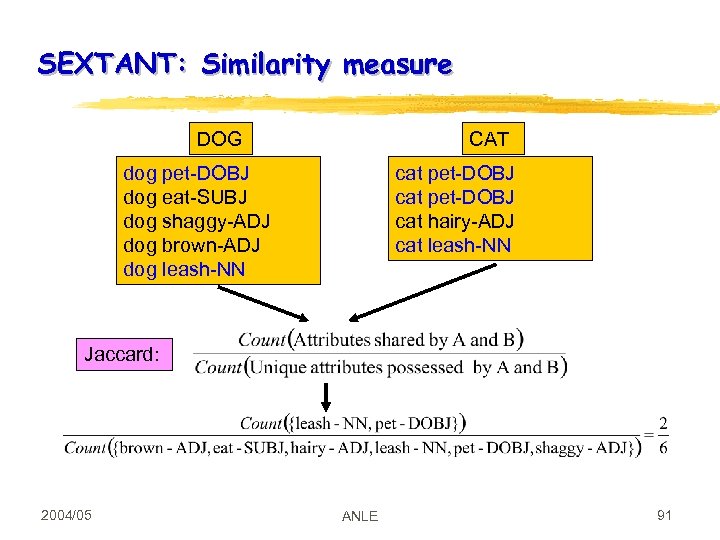

SEXTANT: Similarity measure
- DOG: dog pet-DOBJ, dog eat-SUBJ, dog shaggy-ADJ, dog brown-ADJ, dog leash-NN
- CAT: cat pet-DOBJ, cat hairy-ADJ, cat leash-NN
- Jaccard:
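
The Jaccard formula itself is missing from the extracted text. The sketch below uses the plain set-based definition (shared attributes divided by all attributes of either word), which is an assumption and may differ from any weighting SEXTANT applies.

```python
def jaccard(a, b):
    """Size of the intersection divided by size of the union of two attribute sets."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

# Attribute sets taken from the DOG and CAT lists above.
DOG = {"pet-DOBJ", "eat-SUBJ", "shaggy-ADJ", "brown-ADJ", "leash-NN"}
CAT = {"pet-DOBJ", "hairy-ADJ", "leash-NN"}
print(jaccard(DOG, CAT))
```

DOG and CAT share 2 attributes (pet-DOBJ, leash-NN) out of 6 distinct ones, giving a similarity of 1/3.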

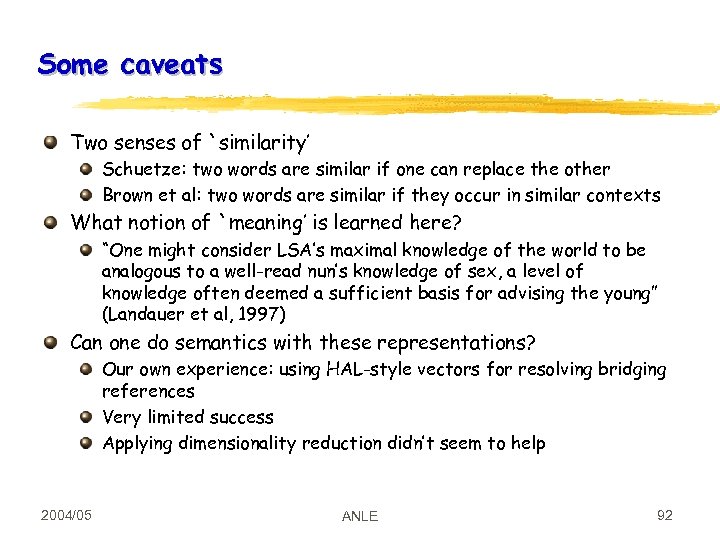

Some caveats
- Two senses of ‘similarity’
  - Schuetze: two words are similar if one can replace the other
  - Brown et al: two words are similar if they occur in similar contexts
- What notion of ‘meaning’ is learned here?
  - “One might consider LSA’s maximal knowledge of the world to be analogous to a well-read nun’s knowledge of sex, a level of knowledge often deemed a sufficient basis for advising the young” (Landauer et al, 1997)
- Can one do semantics with these representations?
  - Our own experience: using HAL-style vectors for resolving bridging references
    - Very limited success
    - Applying dimensionality reduction didn’t seem to help

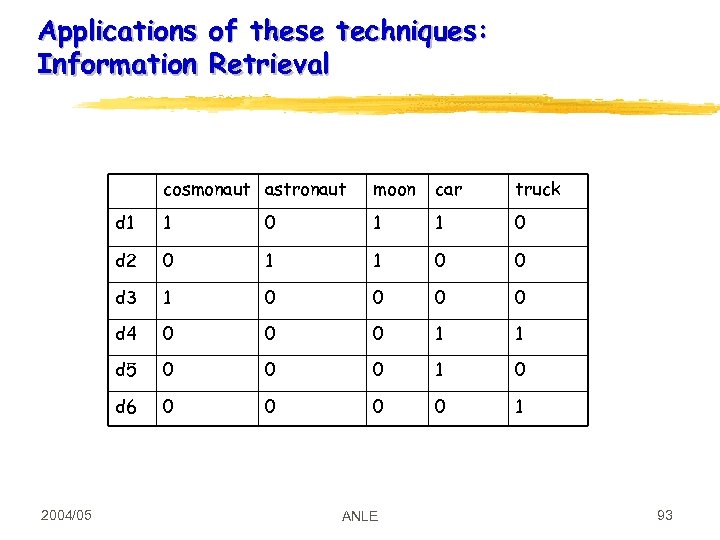

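Applications of these techniques: Information Retrieval

| | cosmonaut | astronaut | moon | car | truck |
|---|---|---|---|---|---|
| d1 | 1 | 0 | 1 | 1 | 0 |
| d2 | 0 | 1 | 1 | 0 | 0 |
| d3 | 1 | 0 | 0 | 0 | 0 |
| d4 | 0 | 0 | 0 | 1 | 1 |
| d5 | 0 | 0 | 0 | 1 | 0 |
| d6 | 0 | 0 | 0 | 0 | 1 |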
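
One way such a document / term matrix might be used for retrieval is to rank documents by cosine similarity to a query vector. The sketch below is an illustration with toy counts and hypothetical function names, not a description of any particular IR system.

```python
import math

TERMS = ["cosmonaut", "astronaut", "moon", "car", "truck"]
DOCS = {  # toy document vectors over TERMS
    "d1": [1, 0, 1, 1, 0],
    "d2": [0, 1, 1, 0, 0],
    "d3": [1, 0, 0, 0, 0],
    "d4": [0, 0, 0, 1, 1],
    "d5": [0, 0, 0, 1, 0],
    "d6": [0, 0, 0, 0, 1],
}

def cosine(x, y):
    dot = sum(a * b for a, b in zip(x, y))
    norm = math.sqrt(sum(a * a for a in x)) * math.sqrt(sum(b * b for b in y))
    return dot / norm if norm else 0.0

def rank(query_terms):
    """Return (document, score) pairs sorted by decreasing cosine to the query."""
    query = [1 if t in query_terms else 0 for t in TERMS]
    scores = [(name, cosine(query, vector)) for name, vector in DOCS.items()]
    return sorted(scores, key=lambda item: (-item[1], item[0]))

RESULTS = rank(["astronaut"])
print(RESULTS)
```

A query for astronaut scores d3 at zero even though d3 is about a cosmonaut: exact term matching misses synonyms, which is the motivation for synonym expansion and for the reduced representations discussed earlier.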

Readings
- Jurafsky and Martin, chapter 17.3
- Also useful:
  - Manning and Schuetze, chapter 8
  - Charniak, chapters 9-10
- Some papers:
  - HAL: see the Higher Dimensional Space page
  - LSA: various papers on the Colorado site
  - Good reference: Landauer, Foltz, and Laham. (1997). Introduction to Latent Semantic Analysis. Discourse Processes.
