6f52b374572a5739e4d81d977d5593b7.ppt

- Number of slides: 36

Lecture Notes 16: Bayes’ Theorem and Data Mining

Zhangxi Lin, ISQS 6347

Modeling Uncertainty
- Probability Review
- Bayes Classifier
- Value of Information
- Conditional Probability and Bayes’ Theorem
- Expected Value of Perfect Information
- Expected Value of Imperfect Information

Probability Review
- P(A|B) = P(A and B) / P(B), read as “probability of A given B”.
- Example: a class of 100 students has 40 female students, 10 of whom are from foreign countries. 20 male students are also foreign students.
  - Event A: the student is from a foreign country
  - Event B: the student is female
- If a female student is chosen at random to present in class, the probability that she is a foreign student is P(A|B) = 10 / 40 = 0.25, or equivalently P(A|B) = P(A & B) / P(B) = (10 / 100) / (40 / 100) = 0.1 / 0.4 = 0.25.
- That is, P(A|B) = (# of A&B) / (# of B) = (# of A&B / Total) / (# of B / Total) = P(A & B) / P(B).
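The ratio-of-counts form above can be checked numerically. This is a minimal sketch using the class counts from the example; the variable names are illustrative and not part of the lecture.

```python
from fractions import Fraction

# Counts from the example: 100 students, 40 female, 10 of them foreign.
total = 100
n_female = 40            # event B
n_female_foreign = 10    # event A and B

# Direct ratio of counts: (# of A&B) / (# of B)
p_a_given_b_counts = Fraction(n_female_foreign, n_female)

# Definition: P(A and B) / P(B)
p_a_and_b = Fraction(n_female_foreign, total)
p_b = Fraction(n_female, total)
p_a_given_b = p_a_and_b / p_b

print(float(p_a_given_b))  # 0.25
```

Both routes give the same value because the class total cancels in the ratio.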

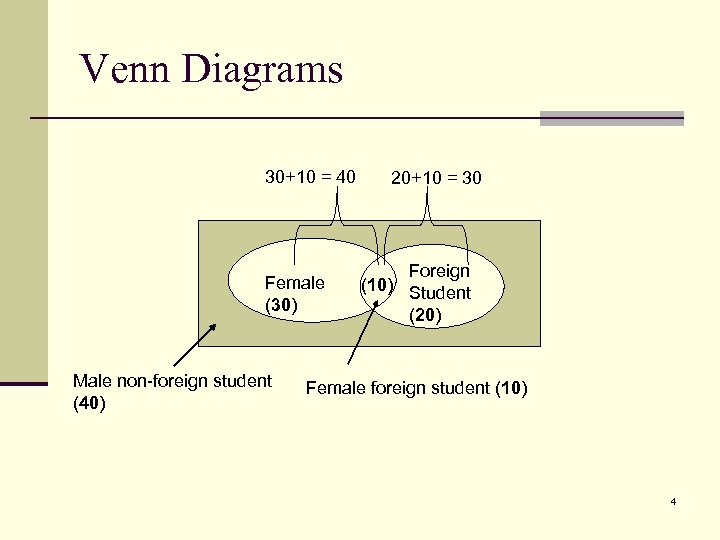

Venn Diagrams
- Female: 30 + 10 = 40
- Foreign student: 20 + 10 = 30
- Female foreign student (overlap): 10
- Male non-foreign student: 40

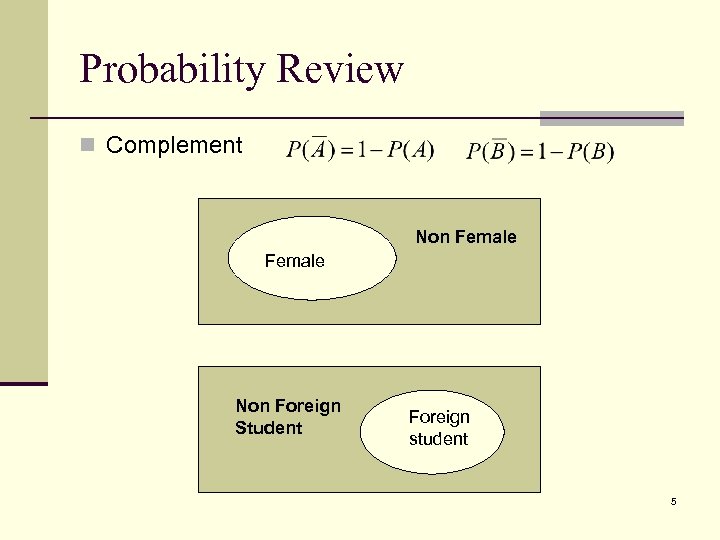

Probability Review
- Complement
- Diagram labels: Non-female; Non-foreign student; Foreign student

Bayes Classifier

Bayes’ Theorem (From Wikipedia)
- In probability theory, Bayes' theorem (often called Bayes' Law) relates the conditional and marginal probabilities of two random events. It is often used to compute posterior probabilities given observations. For example, a patient may be observed to have certain symptoms. Bayes' theorem can be used to compute the probability that a proposed diagnosis is correct, given that observation.
- As a formal theorem, Bayes' theorem is valid in all interpretations of probability. However, it plays a central role in the debate around the foundations of statistics: frequentist and Bayesian interpretations disagree about the ways in which probabilities should be assigned in applications. Frequentists assign probabilities to random events according to their frequencies of occurrence or to subsets of populations as proportions of the whole, while Bayesians describe probabilities in terms of beliefs and degrees of uncertainty. The articles on Bayesian probability and frequentist probability discuss these debates at greater length.

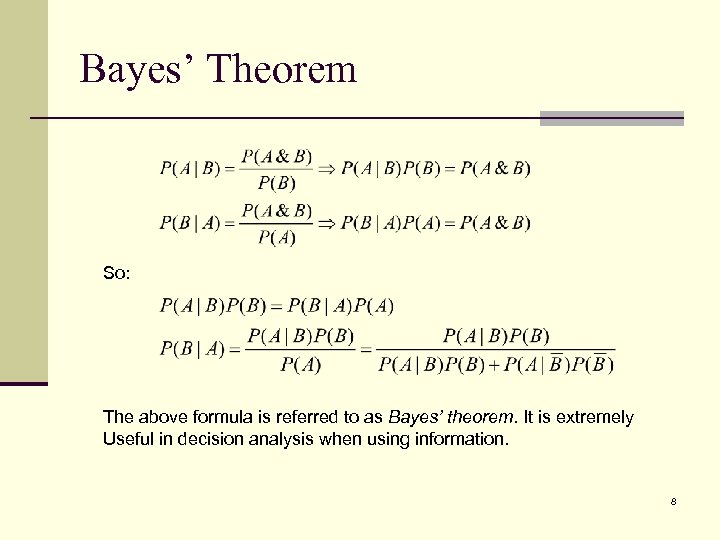

Bayes’ Theorem

So: the above formula is referred to as Bayes’ theorem. It is extremely useful in decision analysis when using information.
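The formula itself appears only in the slide image. For reference, the standard textbook statement follows from applying the conditional-probability definition of the Probability Review slide in both directions; this is a reconstruction under that assumption, not a transcription of the image.

```latex
\begin{align*}
P(A \mid B)\,P(B) &= P(A \text{ and } B) = P(B \mid A)\,P(A) \\
P(A \mid B) &= \frac{P(B \mid A)\,P(A)}{P(B)}
\end{align*}
```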

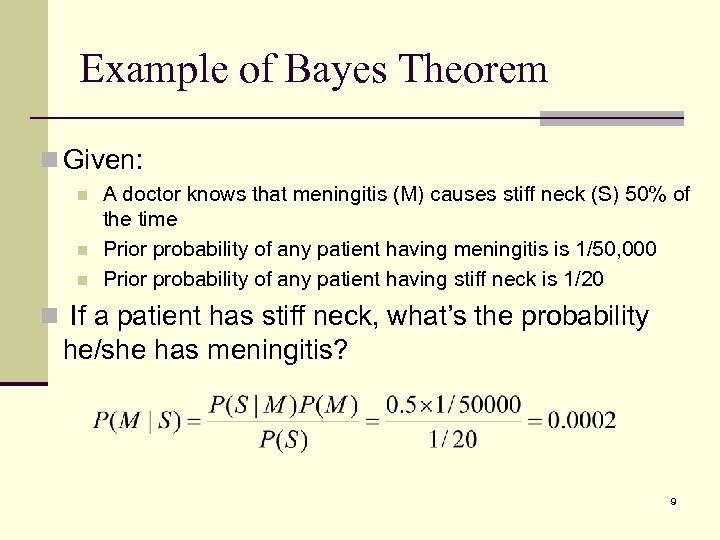

Example of Bayes’ Theorem
- Given:
  - A doctor knows that meningitis (M) causes stiff neck (S) 50% of the time
  - Prior probability of any patient having meningitis is 1/50,000
  - Prior probability of any patient having stiff neck is 1/20
- If a patient has a stiff neck, what is the probability that he/she has meningitis?
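The question can be answered by plugging the three given numbers into Bayes' theorem, P(M|S) = P(S|M) P(M) / P(S). A minimal sketch; the variable names are illustrative.

```python
from fractions import Fraction

p_s_given_m = Fraction(1, 2)    # meningitis causes stiff neck 50% of the time
p_m = Fraction(1, 50_000)       # prior probability of meningitis
p_s = Fraction(1, 20)           # prior probability of stiff neck

# Bayes' theorem: P(M|S) = P(S|M) * P(M) / P(S)
p_m_given_s = p_s_given_m * p_m / p_s

print(p_m_given_s)         # 1/5000
print(float(p_m_given_s))  # 0.0002
```

Even with a stiff neck, meningitis remains very unlikely because its prior probability is so small.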

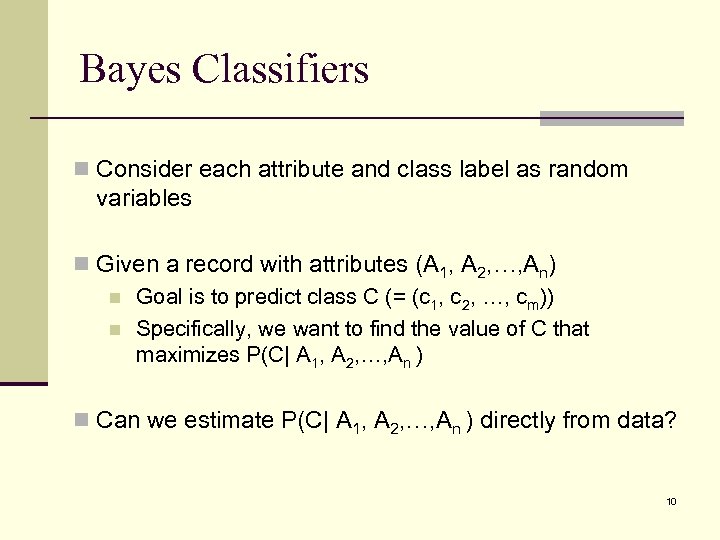

Bayes Classifiers
- Consider each attribute and the class label as random variables
- Given a record with attributes (A1, A2, …, An)
  - The goal is to predict the class C, which takes one of the values (c1, c2, …, cm)
  - Specifically, we want to find the value of C that maximizes P(C | A1, A2, …, An)
- Can we estimate P(C | A1, A2, …, An) directly from data?

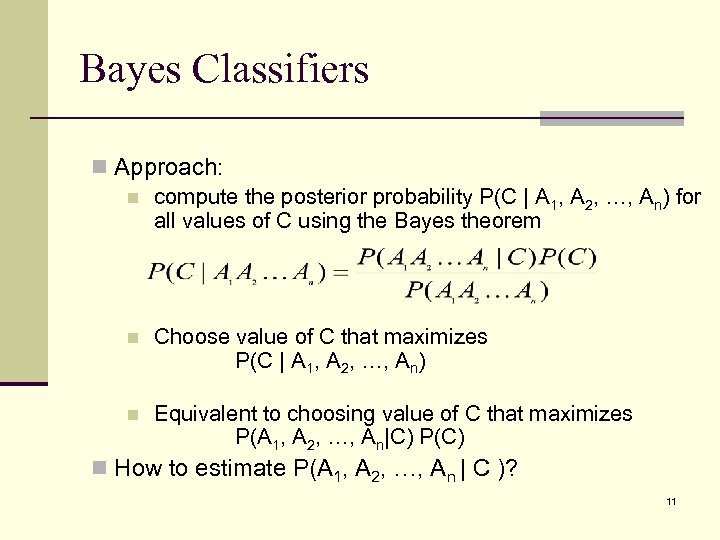

Bayes Classifiers
- Approach:
  - Compute the posterior probability P(C | A1, A2, …, An) for all values of C using Bayes’ theorem
  - Choose the value of C that maximizes P(C | A1, A2, …, An)
  - Equivalent to choosing the value of C that maximizes P(A1, A2, …, An | C) P(C)
- How to estimate P(A1, A2, …, An | C)?

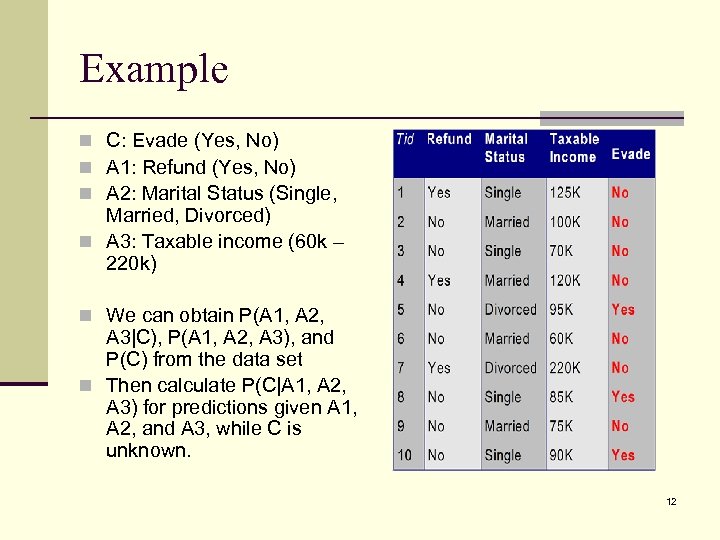

Example
- C: Evade (Yes, No)
- A1: Refund (Yes, No)
- A2: Marital Status (Single, Married, Divorced)
- A3: Taxable income (60k to 220k)
- We can obtain P(A1, A2, A3 | C), P(A1, A2, A3), and P(C) from the data set
- Then calculate P(C | A1, A2, A3) to make predictions when A1, A2, and A3 are given and C is unknown.

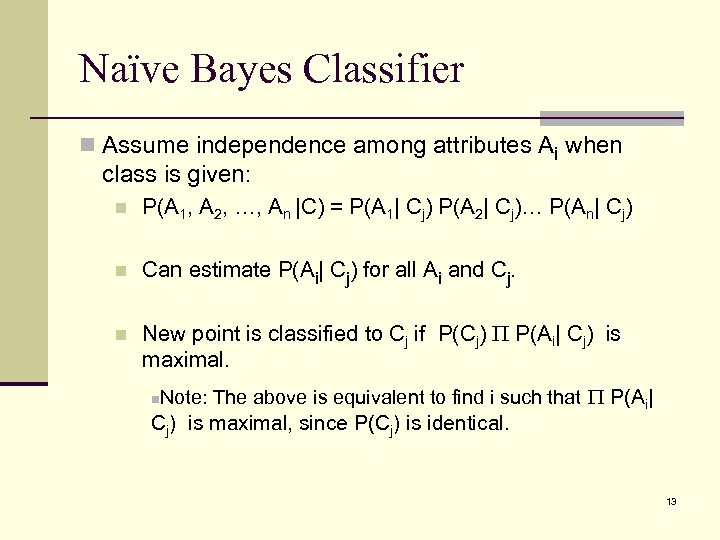

Naïve Bayes Classifier
- Assume independence among the attributes Ai when the class is given:
  - P(A1, A2, …, An | Cj) = P(A1 | Cj) P(A2 | Cj) … P(An | Cj)
  - Can estimate P(Ai | Cj) for all Ai and Cj.
  - A new point is classified to Cj if P(Cj) ∏ P(Ai | Cj) is maximal.
- Note: when the priors P(Cj) are identical across classes, this is equivalent to finding the j for which ∏ P(Ai | Cj) is maximal.

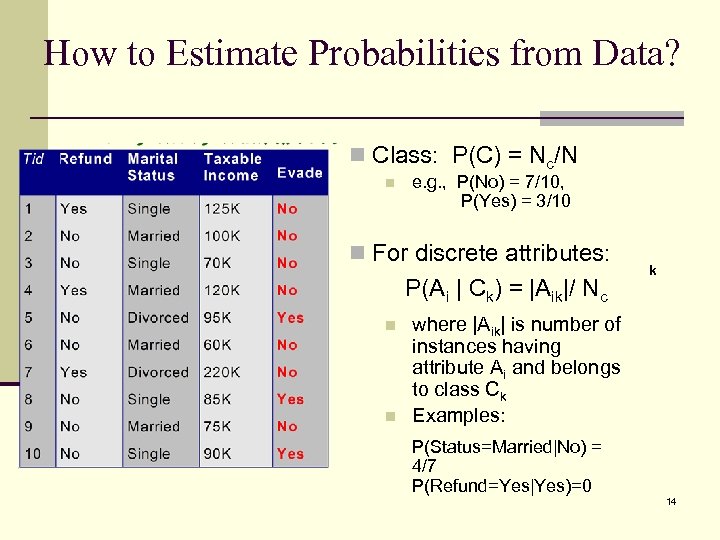

How to Estimate Probabilities from Data?
- Class: P(C) = Nc / N
  - e.g., P(No) = 7/10, P(Yes) = 3/10
- For discrete attributes: P(Ai | Ck) = |Aik| / Nck
  - where |Aik| is the number of instances that have attribute Ai and belong to class Ck, and Nck is the number of instances in class Ck
  - Examples: P(Status=Married | No) = 4/7, P(Refund=Yes | Yes) = 0

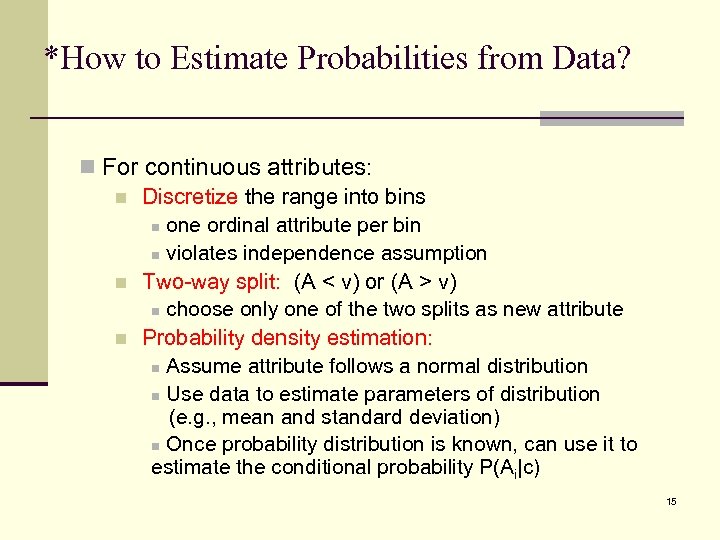

*How to Estimate Probabilities from Data?
- For continuous attributes:
  - Discretize the range into bins
    - one ordinal attribute per bin
    - violates independence assumption
  - Two-way split: (A < v) or (A > v)
    - choose only one of the two splits as new attribute
  - Probability density estimation:
    - Assume the attribute follows a normal distribution
    - Use data to estimate the parameters of the distribution (e.g., mean and standard deviation)
    - Once the probability distribution is known, it can be used to estimate the conditional probability P(Ai | c)

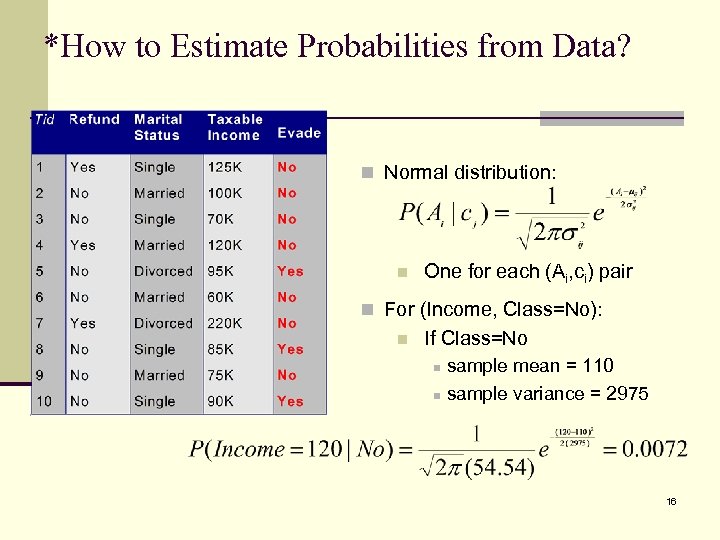

*How to Estimate Probabilities from Data?
- Normal distribution:
  - One for each (Ai, ci) pair
- For (Income, Class=No):
  - If Class=No: sample mean = 110, sample variance = 2975
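The density-estimation step can be sketched with the sample statistics quoted above. The function name `normal_pdf` is illustrative, and the evaluation point 120 (thousand) is the income used in the deck's test-record example.

```python
import math

def normal_pdf(x, mean, variance):
    """Density of a normal distribution with the given mean and variance."""
    return math.exp(-(x - mean) ** 2 / (2 * variance)) / math.sqrt(2 * math.pi * variance)

# Sample statistics for (Income, Class=No) from the slide.
density = normal_pdf(120, mean=110, variance=2975)

print(round(density, 4))  # 0.0072
```

The result is a density value rather than a true probability, but it serves as P(Income=120K | Class=No) in the naïve Bayes product.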

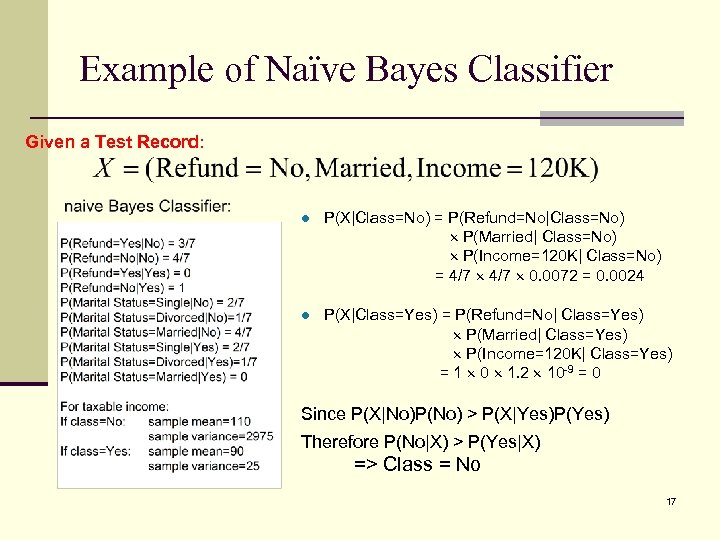

Example of Naïve Bayes Classifier

Given a test record X = (Refund=No, Married, Income=120K):
- P(X | Class=No) = P(Refund=No | Class=No) × P(Married | Class=No) × P(Income=120K | Class=No) = 4/7 × 4/7 × 0.0072 = 0.0024
- P(X | Class=Yes) = P(Refund=No | Class=Yes) × P(Married | Class=Yes) × P(Income=120K | Class=Yes) = 1 × 0 × 1.2 × 10^-9 = 0

Since P(X|No)P(No) > P(X|Yes)P(Yes), we have P(No|X) > P(Yes|X), so Class = No.
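The comparison above can be reproduced from the numbers quoted on the slides. This is a sketch only: the underlying training table is in the slide images, so the conditional probabilities are entered by hand, and the dictionary layout is an illustrative choice.

```python
import math

# Class priors from the slides: P(No) = 7/10, P(Yes) = 3/10.
prior = {"No": 7 / 10, "Yes": 3 / 10}

# Per-class factors for the test record, in the order
# Refund=No, Married, Income=120K (values as quoted on the slide).
likelihood = {
    "No": [4 / 7, 4 / 7, 0.0072],
    "Yes": [1.0, 0.0, 1.2e-9],
}

# Naive Bayes score: P(C) times the product of P(Ai | C).
scores = {c: prior[c] * math.prod(likelihood[c]) for c in prior}
predicted = max(scores, key=scores.get)

print(scores)
print(predicted)  # No
```

The class "Yes" scores exactly zero because one of its factors is zero, which is the issue the following slide addresses.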

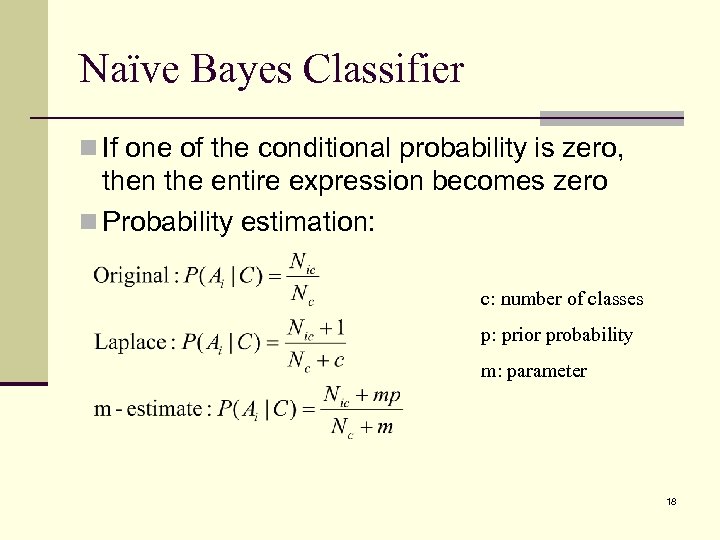

Naïve Bayes Classifier
- If one of the conditional probabilities is zero, then the entire expression becomes zero
- Probability estimation:
  - c: number of classes
  - p: prior probability
  - m: parameter
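The estimation formulas on this slide are in an image and are not reproduced in the text. As an assumption, the sketch below uses the standard Laplace and m-estimate smoothing forms, which take the same symbols c, p, and m. The counts come from P(Refund=Yes | Yes) = 0 with three "Yes" records; the values m = 2 and p = 0.5 are hypothetical.

```python
def laplace_estimate(n_ic, n_c, c):
    """Laplace smoothing: (N_ic + 1) / (N_c + c)."""
    return (n_ic + 1) / (n_c + c)

def m_estimate(n_ic, n_c, m, p):
    """m-estimate: (N_ic + m * p) / (N_c + m)."""
    return (n_ic + m * p) / (n_c + m)

# P(Refund=Yes | Yes): 0 matching records out of 3 in class Yes.
n_ic, n_c = 0, 3

unsmoothed = n_ic / n_c
smoothed_laplace = laplace_estimate(n_ic, n_c, c=2)   # c = 2 classes (Yes, No)
smoothed_m = m_estimate(n_ic, n_c, m=2, p=0.5)        # hypothetical m and p

print(unsmoothed, smoothed_laplace, smoothed_m)
```

Either smoothed estimate is strictly positive, so a single unseen attribute value no longer forces the whole product to zero.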

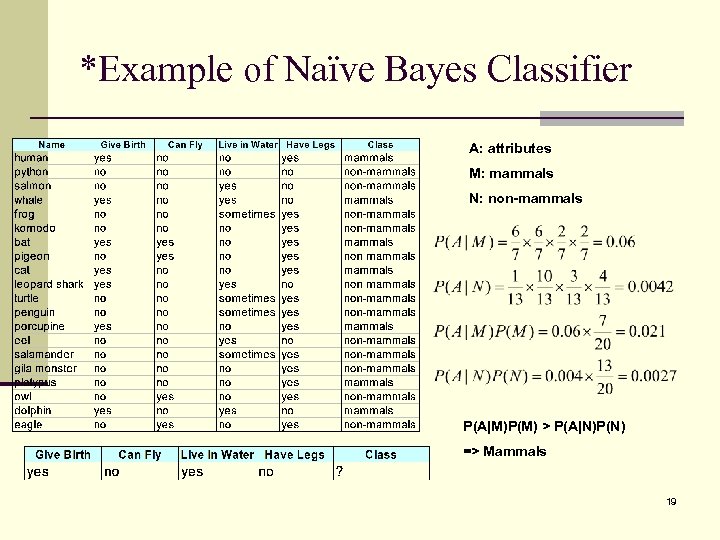

*Example of Naïve Bayes Classifier
- A: attributes
- M: mammals
- N: non-mammals
- P(A|M)P(M) > P(A|N)P(N) => Mammals

Naïve Bayes (Summary)
- Robust to isolated noise points
- Handles missing values by ignoring the instance during probability estimate calculations
- Robust to irrelevant attributes
- Independence assumption may not hold for some attributes
  - Use other techniques such as Bayesian Belief Networks (BBN)

Value of Information
- When facing uncertain prospects we need information in order to reduce uncertainty
- Information gathering includes consulting experts, conducting surveys, performing mathematical or statistical analyses, etc.

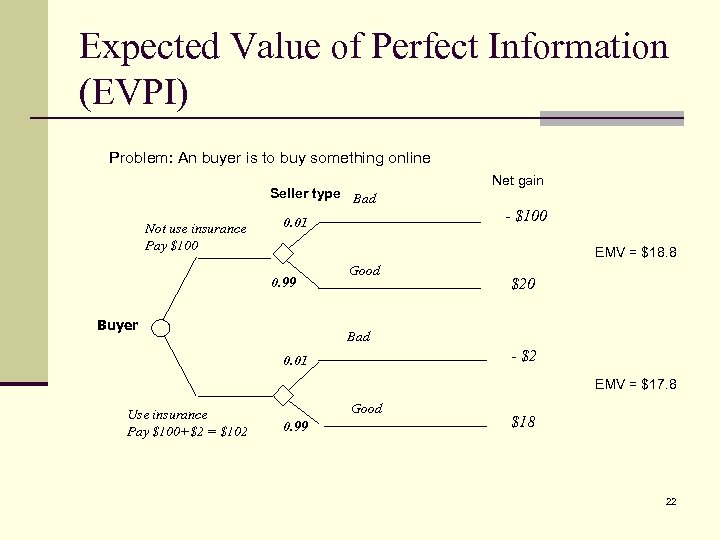

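Expected Value of Perfect Information (EVPI)

Problem: A buyer is to buy something online. The buyer chooses whether to use insurance; the seller type is uncertain.
- Not use insurance (pay $100): EMV = $18.8
  - Seller is bad (0.01): net gain -$100
  - Seller is good (0.99): net gain $20
- Use insurance (pay $100 + $2 = $102): EMV = $17.8
  - Seller is bad (0.01): net gain -$2
  - Seller is good (0.99): net gain $18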
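The two EMV figures, and the EVPI the slide title refers to, can be computed from the payoffs and probabilities given. The EVPI value itself is not stated in the text, so treat it as a derived figure under the assumption that the buyer always buys and only chooses whether to insure. Names are illustrative.

```python
p_good, p_bad = 0.99, 0.01

# Net gain for each action and seller type, as given in the problem.
payoff = {
    "no insurance": {"good": 20, "bad": -100},
    "insurance": {"good": 18, "bad": -2},
}

# Expected monetary value of each action without further information.
emv = {
    action: p_good * gains["good"] + p_bad * gains["bad"]
    for action, gains in payoff.items()
}
best_without_info = max(emv.values())

# With perfect information the buyer picks the best action per seller type.
ev_with_perfect_info = (
    p_good * max(gains["good"] for gains in payoff.values())
    + p_bad * max(gains["bad"] for gains in payoff.values())
)
evpi = ev_with_perfect_info - best_without_info

print({action: round(value, 2) for action, value in emv.items()})
print(round(evpi, 2))
```

Under these assumptions the buyer should pay at most the EVPI for any source of information about the seller.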

Expected Value of Imperfect Information (EVII)
- We rarely have access to perfect information; imperfect information is far more common. Thus we must extend our analysis to deal with imperfect information.
- Now suppose we can use a seller's online reputation to estimate the risk of trading with that seller.
- Other people provide suggestions to you according to their experience. Their predictions are not 100% correct:
  - If the product is actually good, the prediction is correct 90% of the time; the remaining 10% of the time the product is predicted to be bad.
  - If the product is actually bad, the prediction is correct 80% of the time; the remaining 20% of the time the product is predicted to be good.
- Although the estimate is not fully accurate, it can be used to improve our decision making:
  - If we predict that the risk of buying the product online is high, we purchase insurance

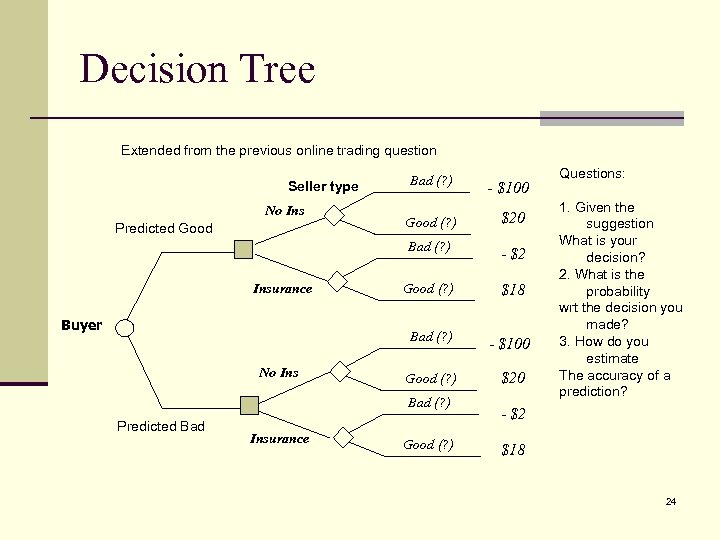

Decision Tree

Extended from the previous online trading question. The buyer first receives a prediction, then decides on insurance; the seller type is then revealed.
- Predicted Good
  - No insurance: Bad (?) -$100; Good (?) $20
  - Insurance: Bad (?) -$2; Good (?) $18
- Predicted Bad
  - No insurance: Bad (?) -$100; Good (?) $20
  - Insurance: Bad (?) -$2; Good (?) $18

Questions:
1. Given the suggestion, what is your decision?
2. What is the probability with respect to the decision you made?
3. How do you estimate the accuracy of a prediction?

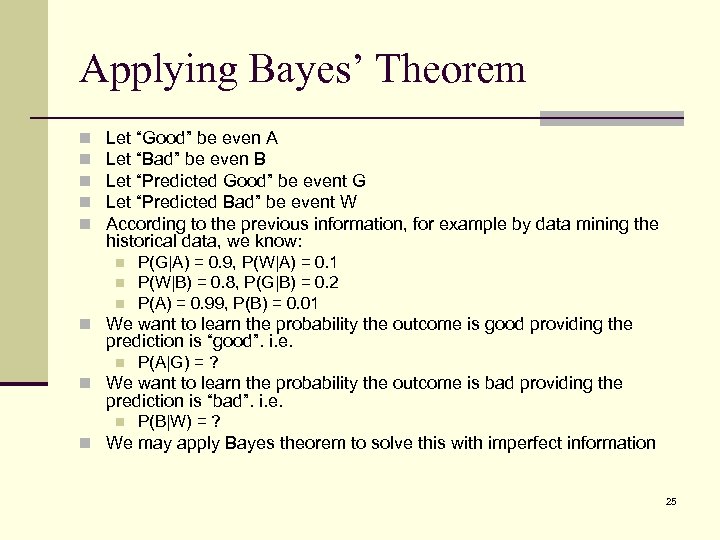

Applying Bayes’ Theorem
- Let “Good” be event A
- Let “Bad” be event B
- Let “Predicted Good” be event G
- Let “Predicted Bad” be event W
- According to the previous information, obtained for example by data mining the historical data, we know:
  - P(G|A) = 0.9, P(W|A) = 0.1
  - P(W|B) = 0.8, P(G|B) = 0.2
  - P(A) = 0.99, P(B) = 0.01
- We want to learn the probability that the outcome is good given that the prediction is “good”, i.e.
  - P(A|G) = ?
- We want to learn the probability that the outcome is bad given that the prediction is “bad”, i.e.
  - P(B|W) = ?
- We may apply Bayes’ theorem to solve this with imperfect information

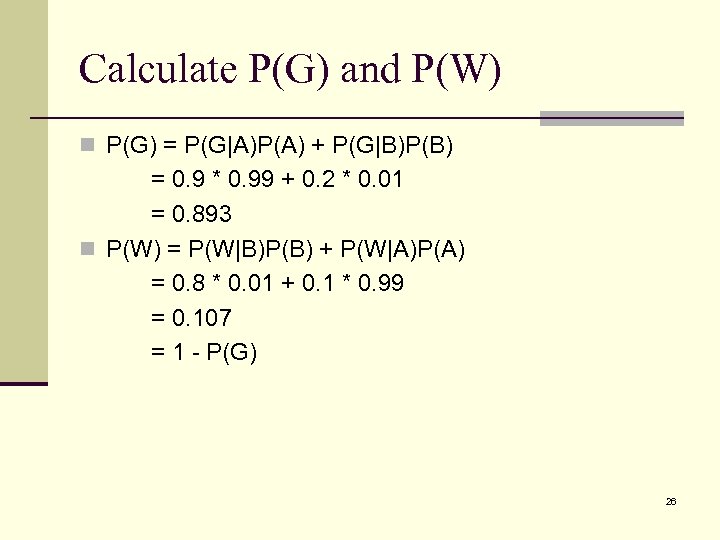

Calculate P(G) and P(W)
- P(G) = P(G|A)P(A) + P(G|B)P(B) = 0.9 * 0.99 + 0.2 * 0.01 = 0.893
- P(W) = P(W|B)P(B) + P(W|A)P(A) = 0.8 * 0.01 + 0.1 * 0.99 = 0.107 = 1 - P(G)

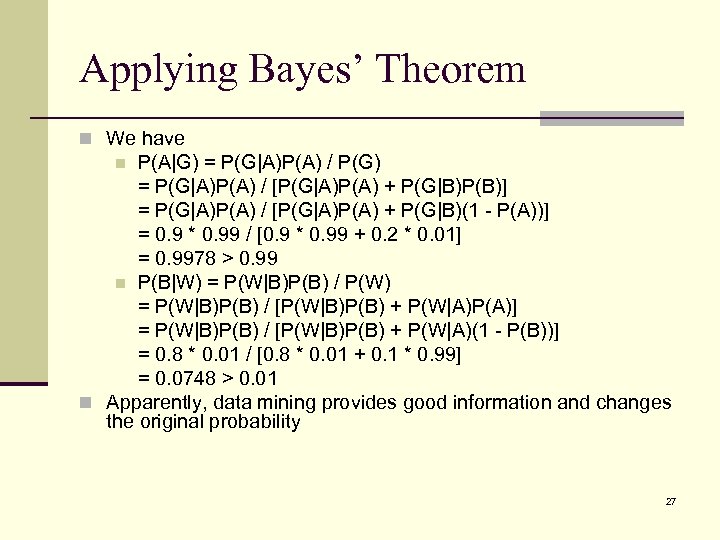

Applying Bayes’ Theorem
- We have P(A|G) = P(G|A)P(A) / P(G) = P(G|A)P(A) / [P(G|A)P(A) + P(G|B)P(B)] = P(G|A)P(A) / [P(G|A)P(A) + P(G|B)(1 - P(A))] = 0.9 * 0.99 / [0.9 * 0.99 + 0.2 * 0.01] = 0.9978 > 0.99
- P(B|W) = P(W|B)P(B) / P(W) = P(W|B)P(B) / [P(W|B)P(B) + P(W|A)P(A)] = P(W|B)P(B) / [P(W|B)P(B) + P(W|A)(1 - P(B))] = 0.8 * 0.01 / [0.8 * 0.01 + 0.1 * 0.99] = 0.0748 > 0.01
- Apparently, data mining provides good information and changes the original probability
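Both posteriors follow the same two-hypothesis pattern, so they can be computed with one small helper. A minimal sketch; the function name `posterior` and its argument names are illustrative.

```python
def posterior(likelihood, prior, likelihood_other):
    """P(H|E) for two complementary hypotheses H and not-H.

    likelihood       = P(E|H)
    prior            = P(H)
    likelihood_other = P(E|not H)
    """
    evidence = likelihood * prior + likelihood_other * (1 - prior)
    return likelihood * prior / evidence

# P(A|G): seller is good given a "good" prediction.
p_a_given_g = posterior(likelihood=0.9, prior=0.99, likelihood_other=0.2)

# P(B|W): seller is bad given a "bad" prediction.
p_b_given_w = posterior(likelihood=0.8, prior=0.01, likelihood_other=0.1)

print(round(p_a_given_g, 4))  # 0.9978
print(round(p_b_given_w, 4))  # 0.0748
```

The denominator inside the helper is the total-probability step used to obtain P(G) and P(W).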

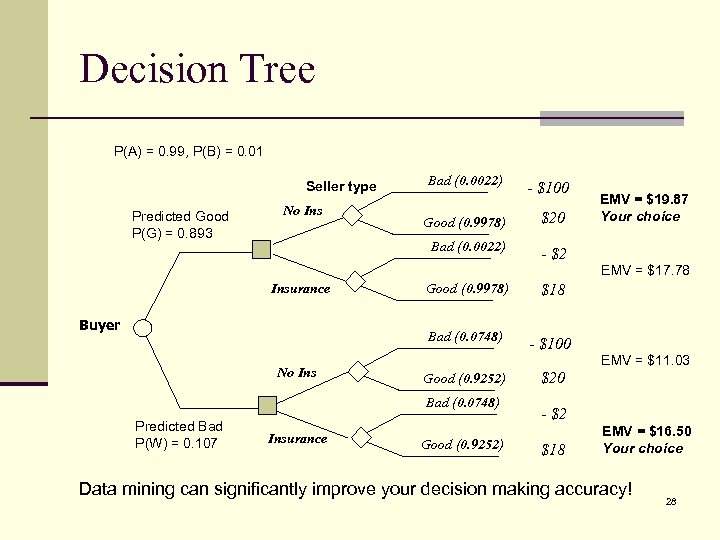

Decision Tree

P(A) = 0.99, P(B) = 0.01
- Predicted Good, P(G) = 0.893
  - No insurance: EMV = $19.73 (your choice)
    - Bad (0.0022): -$100
    - Good (0.9978): $20
  - Insurance: EMV = $17.96
    - Bad (0.0022): -$2
    - Good (0.9978): $18
- Predicted Bad, P(W) = 0.107
  - No insurance: EMV = $11.03
    - Bad (0.0748): -$100
    - Good (0.9252): $20
  - Insurance: EMV = $16.50 (your choice)
    - Bad (0.0748): -$2
    - Good (0.9252): $18

Data mining can significantly improve your decision-making accuracy!
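The branch EMVs can be recomputed from the prior and the prediction accuracies. The sketch below also derives an expected value of imperfect information; that figure is not stated on the slide and rests on the assumption that the buyer follows the best action after each prediction. Names are illustrative.

```python
p_a = 0.99                            # P(A): seller is good
p_g_given_a, p_g_given_b = 0.9, 0.2   # P(G|A), P(G|B)

# Total probability of each prediction, then posteriors via Bayes' theorem.
p_g = p_g_given_a * p_a + p_g_given_b * (1 - p_a)
p_w = 1 - p_g
p_a_given_g = p_g_given_a * p_a / p_g
p_a_given_w = (1 - p_g_given_a) * p_a / p_w

payoff = {
    "no insurance": {"good": 20, "bad": -100},
    "insurance": {"good": 18, "bad": -2},
}

def emv(action, p_good):
    """Expected monetary value of an action when P(good seller) = p_good."""
    gains = payoff[action]
    return p_good * gains["good"] + (1 - p_good) * gains["bad"]

# Best action after each prediction.
branch = {"predicted good": p_a_given_g, "predicted bad": p_a_given_w}
emvs = {label: {a: emv(a, p) for a in payoff} for label, p in branch.items()}
best = {label: max(values, key=values.get) for label, values in emvs.items()}

# Expected value when acting on the prediction, compared with no prediction.
ev_with_info = (p_g * max(emvs["predicted good"].values())
                + p_w * max(emvs["predicted bad"].values()))
ev_without_info = max(emv(a, p_a) for a in payoff)
evii = ev_with_info - ev_without_info

print(best)
print(round(ev_with_info, 3), round(evii, 3))
```

The best action switches from no insurance to insurance when the prediction is bad, which is where the information earns its value.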

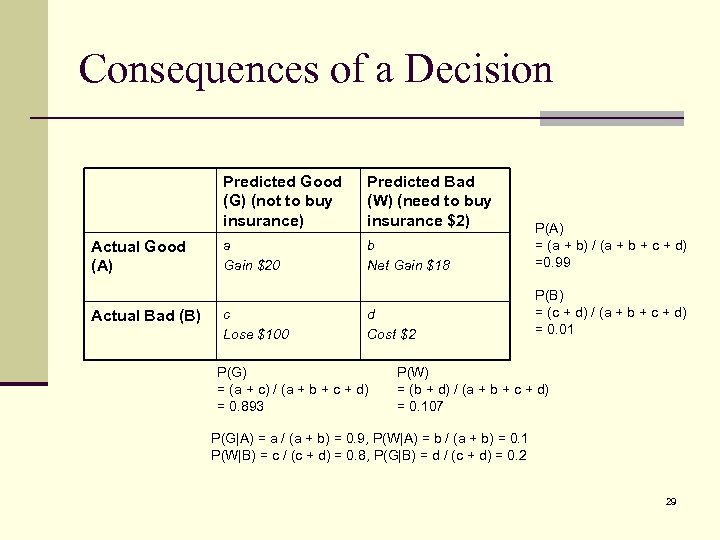

Consequences of a Decision

| | Predicted Good (G) (not to buy insurance) | Predicted Bad (W) (need to buy insurance, $2) |
|---|---|---|
| Actual Good (A) | a: gain $20 | b: net gain $18 |
| Actual Bad (B) | c: lose $100 | d: cost $2 |

- P(A) = (a + b) / (a + b + c + d) = 0.99
- P(B) = (c + d) / (a + b + c + d) = 0.01
- P(G) = (a + c) / (a + b + c + d) = 0.893
- P(W) = (b + d) / (a + b + c + d) = 0.107
- P(G|A) = a / (a + b) = 0.9, P(W|A) = b / (a + b) = 0.1
- P(W|B) = d / (c + d) = 0.8, P(G|B) = c / (c + d) = 0.2

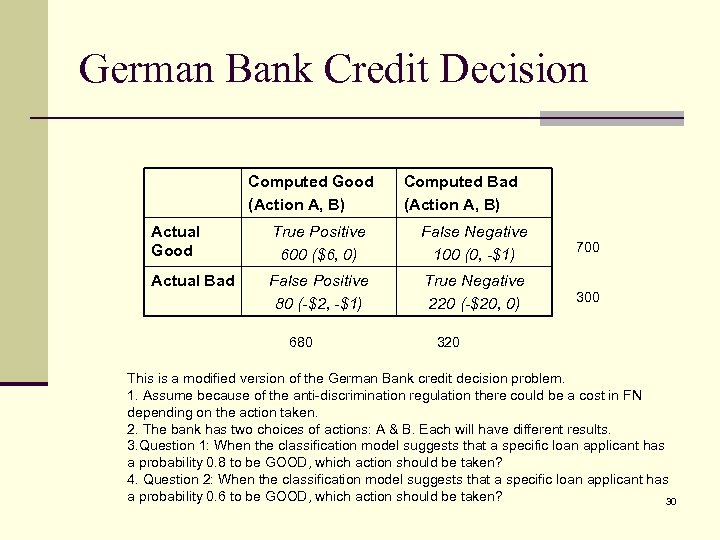

German Bank Credit Decision

Payoffs in parentheses are (Action A, Action B).

| | Computed Good | Computed Bad | Total |
|---|---|---|---|
| Actual Good | True Positive: 600 ($6, 0) | False Negative: 100 (0, -$1) | 700 |
| Actual Bad | False Positive: 80 (-$2, -$1) | True Negative: 220 (-$20, 0) | 300 |
| Total | 680 | 320 | |

This is a modified version of the German Bank credit decision problem.
1. Assume that, because of anti-discrimination regulation, there could be a cost for a false negative depending on the action taken.
2. The bank has two choices of action, A and B. Each will have different results.
3. Question 1: When the classification model suggests that a specific loan applicant has a probability of 0.8 of being GOOD, which action should be taken?
4. Question 2: When the classification model suggests that a specific loan applicant has a probability of 0.6 of being GOOD, which action should be taken?

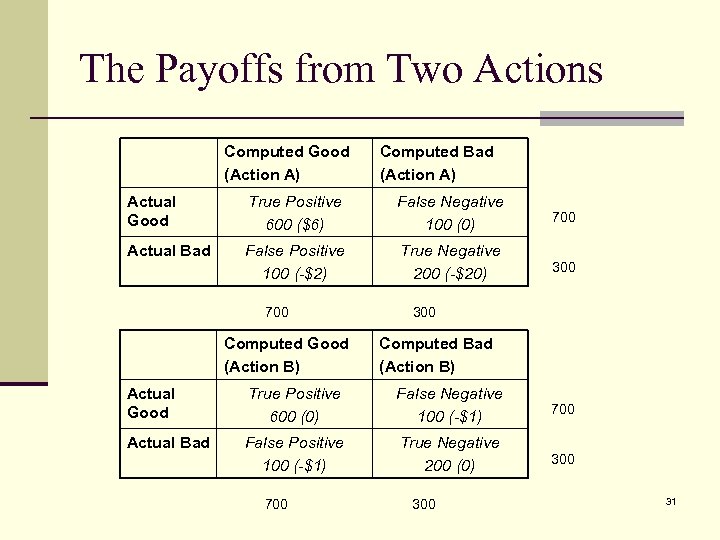

The Payoffs from Two Actions

| | Computed Good (Action A) | Computed Bad (Action A) | Total |
|---|---|---|---|
| Actual Good | True Positive: 600 ($6) | False Negative: 100 (0) | 700 |
| Actual Bad | False Positive: 100 (-$2) | True Negative: 200 (-$20) | 300 |
| Total | 700 | 300 | |

| | Computed Good (Action B) | Computed Bad (Action B) | Total |
|---|---|---|---|
| Actual Good | True Positive: 600 (0) | False Negative: 100 (-$1) | 700 |
| Actual Bad | False Positive: 100 (-$1) | True Negative: 200 (0) | 300 |
| Total | 700 | 300 | |

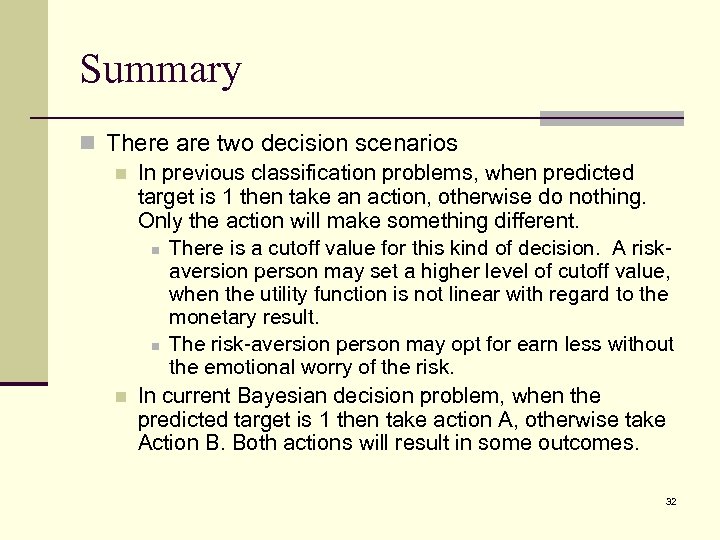

Summary
- There are two decision scenarios:
  - In the previous classification problems, when the predicted target is 1 we take an action; otherwise we do nothing. Only the action makes a difference.
    - There is a cutoff value for this kind of decision. A risk-averse person may set a higher cutoff value when the utility function is not linear in the monetary result.
    - A risk-averse person may opt to earn less in exchange for avoiding the emotional worry of the risk.
  - In the current Bayesian decision problem, when the predicted target is 1 we take Action A; otherwise we take Action B. Both actions result in some outcome.

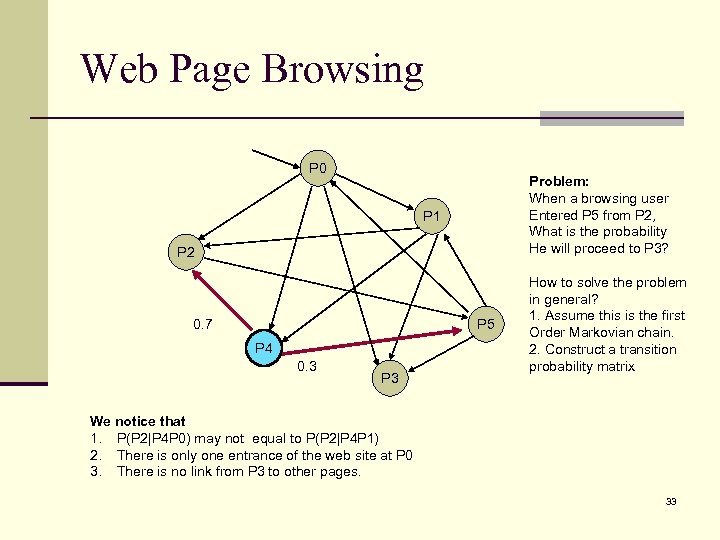

Web Page Browsing

Problem: When a browsing user entered P5 from P2, what is the probability that he will proceed to P3?

Figure labels: pages P0, P1, P2, P3, P4, P5; link probabilities 0.7 and 0.3.

How to solve the problem in general?
1. Assume this is a first-order Markov chain.
2. Construct a transition probability matrix.

We notice that:
1. P(P2 | P4 P0) may not equal P(P2 | P4 P1)
2. There is only one entrance to the web site, at P0
3. There is no link from P3 to other pages.

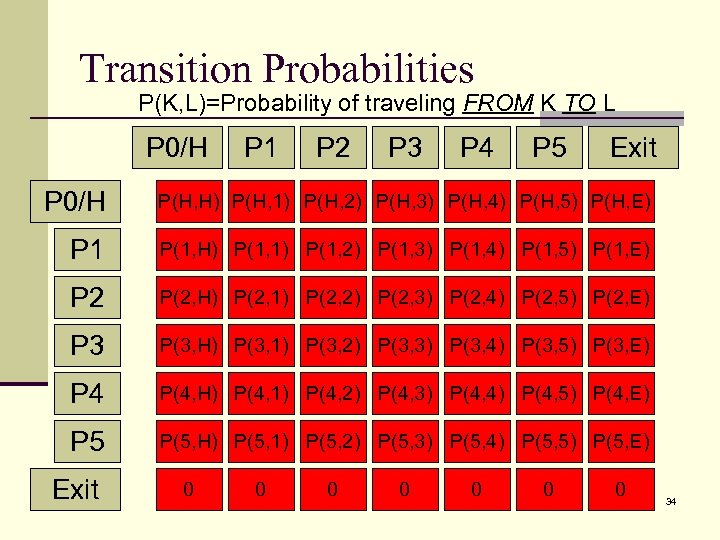

Transition Probabilities

P(K, L) = probability of traveling FROM K TO L

| From \ To | P0/H | P1 | P2 | P3 | P4 | P5 | Exit |
|---|---|---|---|---|---|---|---|
| P0/H | P(H, H) | P(H, 1) | P(H, 2) | P(H, 3) | P(H, 4) | P(H, 5) | P(H, E) |
| P1 | P(1, H) | P(1, 1) | P(1, 2) | P(1, 3) | P(1, 4) | P(1, 5) | P(1, E) |
| P2 | P(2, H) | P(2, 1) | P(2, 2) | P(2, 3) | P(2, 4) | P(2, 5) | P(2, E) |
| P3 | P(3, H) | P(3, 1) | P(3, 2) | P(3, 3) | P(3, 4) | P(3, 5) | P(3, E) |
| P4 | P(4, H) | P(4, 1) | P(4, 2) | P(4, 3) | P(4, 4) | P(4, 5) | P(4, E) |
| P5 | P(5, H) | P(5, 1) | P(5, 2) | P(5, 3) | P(5, 4) | P(5, 5) | P(5, E) |
| Exit | 0 | 0 | 0 | 0 | | | |
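Under the first-order Markov assumption, each P(K, L) can be estimated as the share of visits to K that were followed by L. The sketch below uses a few hypothetical click paths, not the Commrex log, with "H" standing for P0 and "E" for Exit.

```python
from collections import Counter, defaultdict

# Hypothetical sessions for illustration only; "H" is P0 and "E" is Exit.
sessions = [
    ["H", "P1", "P2", "P5", "P3", "E"],
    ["H", "P2", "P5", "P4", "E"],
    ["H", "P1", "P4", "P2", "P5", "P4", "E"],
]

# Count every observed step K -> L across all sessions.
counts = defaultdict(Counter)
for path in sessions:
    for src, dst in zip(path, path[1:]):
        counts[src][dst] += 1

# Normalize each row so that the probabilities out of a page sum to 1.
transition = {
    src: {dst: n / sum(dsts.values()) for dst, n in dsts.items()}
    for src, dsts in counts.items()
}

print(transition["P5"])
```

A first-order estimate ignores how the user reached a page, so it cannot distinguish arriving at P5 from P2 from any other route; conditioning on the previous page as well would require counting triples instead of pairs.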

Demonstration
- Dataset: Commrex web log data
- Data Exploration
- Link analysis
  - The links among nodes
  - Calculate the transition matrix
- The Bayesian network model for the web log data
- Reference:
  - David Heckerman, “A Tutorial on Learning With Bayesian Networks,” March 1995 (Revised November 1996), Technical Report MSR-TR-95-06 (tr-95-06.pdf)

Readings
- SPB, Chapter 3
- RG, Chapter 10
