7d88d846de8b09d57157a547c4fd4d6e.ppt

- Number of slides: 35

Lecture 18 Mass Storage Devices: RAID, Data Library, MSS Mass Storage CS 510 Computer Architectures 1

Review: Improving Bandwidth of Secondary Storage

- Processor performance growth: phenomenal
- I/O?
  - "I/O certainly has been lagging in the last decade" (Seymour Cray, Public Lecture, 1976)
  - "Also, I/O needs a lot of work" (David Kuck, Keynote Address, 1988)

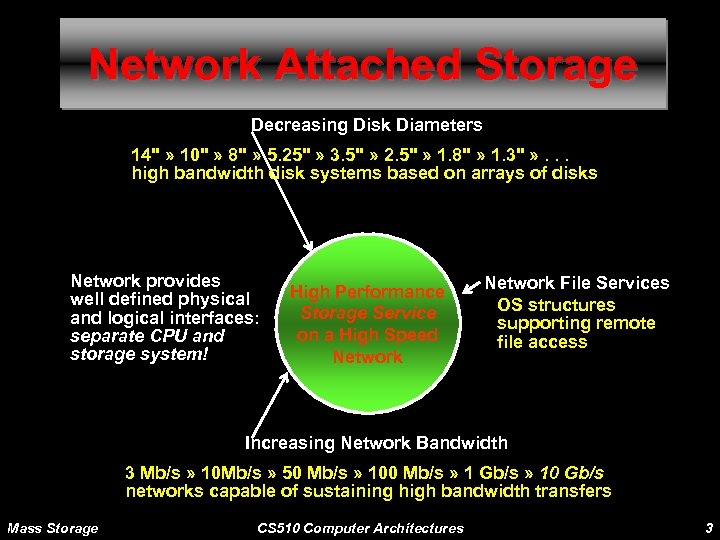

Network Attached Storage

- Decreasing disk diameters: 14" » 10" » 8" » 5.25" » 3.5" » 2.5" » 1.8" » 1.3" » ...
  - High bandwidth disk systems based on arrays of disks
- Increasing network bandwidth: 3 Mb/s » 10 Mb/s » 50 Mb/s » 100 Mb/s » 1 Gb/s » 10 Gb/s
  - Networks capable of sustaining high bandwidth transfers
- Network provides well-defined physical and logical interfaces: separate CPU and storage system!
- File services: OS structures supporting remote file access
- High performance storage service on a high speed network

RAID

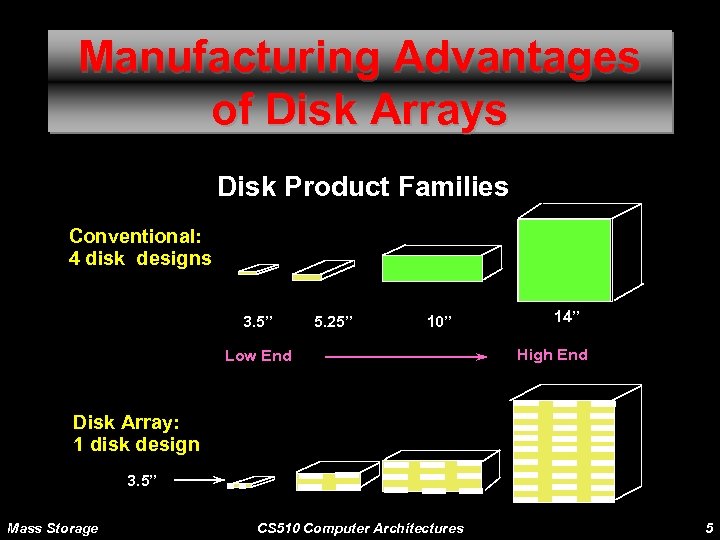

Manufacturing Advantages of Disk Arrays

Disk product families:

- Conventional: 4 disk designs (3.5", 5.25", 10", 14"), ranging from low end to high end
- Disk array: 1 disk design (3.5")

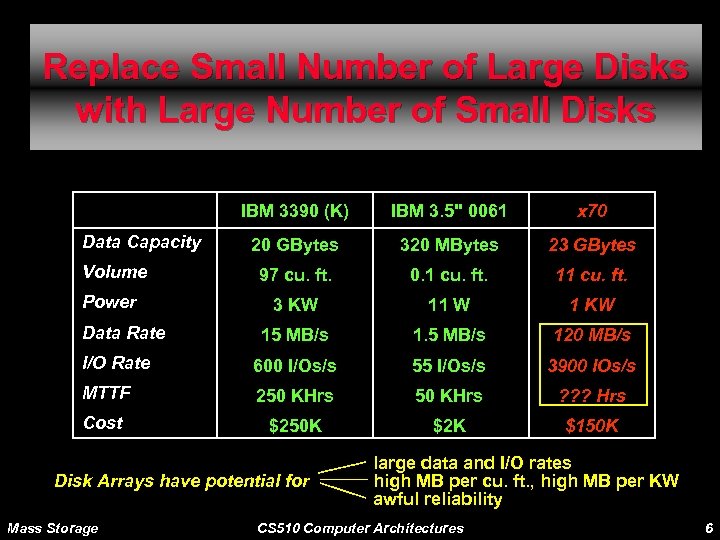

Replace Small Number of Large Disks with Large Number of Small Disks

| | IBM 3390 (K) | IBM 3.5" 0061 | x70 |
|---|---|---|---|
| Data Capacity | 20 GBytes | 320 MBytes | 23 GBytes |
| Volume | 97 cu. ft. | 0.1 cu. ft. | 11 cu. ft. |
| Power | 3 KW | 11 W | 1 KW |
| Data Rate | 15 MB/s | | 120 MB/s |
| I/O Rate | 600 I/Os/s | 55 I/Os/s | 3900 I/Os/s |
| MTTF | 250 KHrs | | ??? Hrs |
| Cost | $250K | $2K | $150K |

Disk arrays have potential for:

- large data and I/O rates
- high MB per cu. ft., high MB per KW
- awful reliability

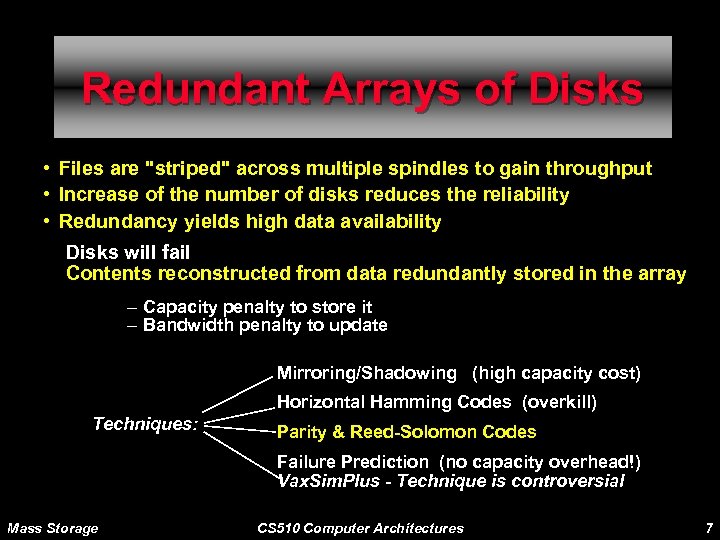

Redundant Arrays of Disks

- Files are "striped" across multiple spindles to gain throughput
- Increasing the number of disks reduces reliability
- Redundancy yields high data availability
  - Disks will fail
  - Contents are reconstructed from data redundantly stored in the array
    - Capacity penalty to store it
    - Bandwidth penalty to update

Techniques:

- Mirroring/Shadowing (high capacity cost)
- Horizontal Hamming Codes (overkill)
- Parity & Reed-Solomon Codes
- Failure Prediction (no capacity overhead!): VaxSimPlus; technique is controversial

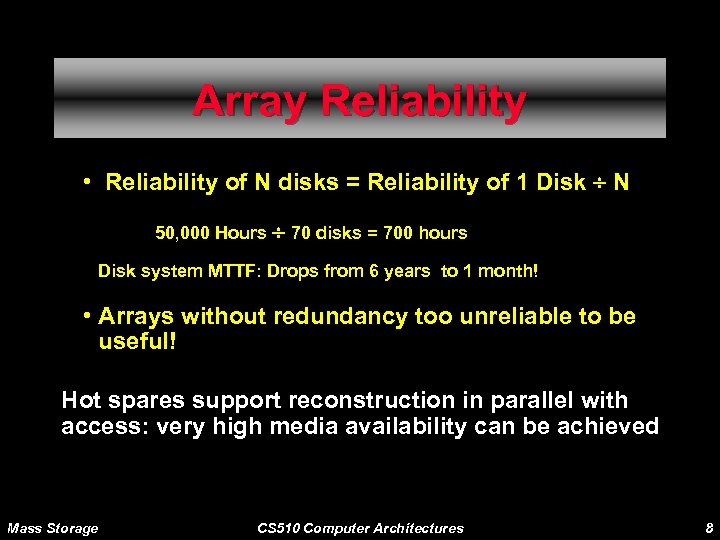

Array Reliability

- Reliability of N disks = Reliability of 1 disk ÷ N
  - 50,000 hours ÷ 70 disks ≈ 700 hours
  - Disk system MTTF drops from 6 years to 1 month!
- Arrays without redundancy are too unreliable to be useful!

Hot spares support reconstruction in parallel with access: very high media availability can be achieved.

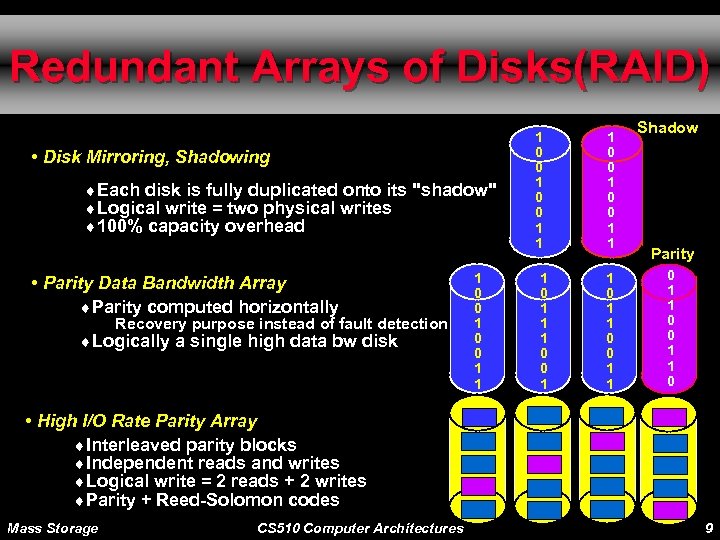
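The reliability arithmetic on the Array Reliability slide can be checked with a short sketch. It assumes independent disk failures and that any single failure takes down a non-redundant array; the function name and the 30-day month are illustrative choices, not part of the lecture.

```python
def array_mttf_hours(disk_mttf_hours: float, n_disks: int) -> float:
    """MTTF of a non-redundant array: one disk's MTTF divided by the disk count.

    Assumes failures are independent and that losing any one disk loses the array.
    """
    if n_disks < 1:
        raise ValueError("n_disks must be at least 1")
    return disk_mttf_hours / n_disks


HOURS_PER_YEAR = 24 * 365
HOURS_PER_MONTH = 24 * 30  # assumption: a 30-day month

single = 50_000                       # hours, from the slide
array = array_mttf_hours(single, 70)  # 70 disks, from the slide

print(f"single disk: {single / HOURS_PER_YEAR:.1f} years")
print(f"70-disk array: {array:.0f} hours, about {array / HOURS_PER_MONTH:.1f} months")
```

The exact quotient is about 714 hours, which the slide rounds to 700.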

Redundant Arrays of Disks (RAID)

- Disk Mirroring, Shadowing
  - Each disk is fully duplicated onto its "shadow"
  - Logical write = two physical writes
  - 100% capacity overhead
- Parity Data Bandwidth Array
  - Parity computed horizontally
  - Parity used for recovery rather than fault detection
  - Logically a single high data bandwidth disk
- High I/O Rate Parity Array
  - Interleaved parity blocks
  - Independent reads and writes
  - Logical write = 2 reads + 2 writes
  - Parity + Reed-Solomon codes

(Figure: bit patterns illustrating a shadow disk and a parity disk.)

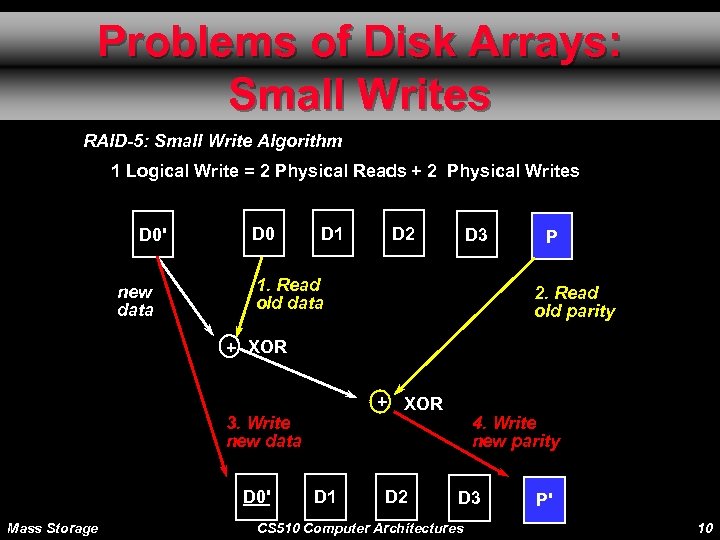

Problems of Disk Arrays: Small Writes

RAID-5 small write algorithm: 1 logical write = 2 physical reads + 2 physical writes

1. Read old data (D0)
2. Read old parity (P)
3. Write new data (D0')
4. Write new parity (P')

The new parity P' is the XOR of the new data D0', the old data D0, and the old parity P. The other data blocks in the stripe (D1, D2, D3) are not accessed.

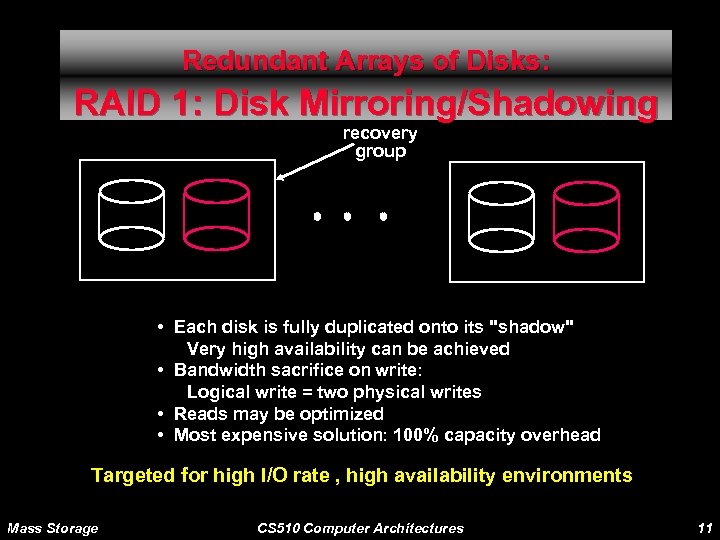
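The four steps of the small write can be sketched as follows. This is a minimal illustration that models each block as a `bytes` object; the function names and the sample block contents are hypothetical. It checks that updating parity from the old data and old parity alone gives the same result as recomputing parity over the whole stripe.

```python
def xor_blocks(a: bytes, b: bytes) -> bytes:
    """Bytewise XOR of two equal-length blocks."""
    if len(a) != len(b):
        raise ValueError("blocks must have the same length")
    return bytes(x ^ y for x, y in zip(a, b))


def small_write_parity(old_data: bytes, old_parity: bytes, new_data: bytes) -> bytes:
    """New parity from the two blocks that were read plus the new data.

    P' = D0' xor D0 xor P, so D1..D3 never need to be read.
    """
    return xor_blocks(xor_blocks(old_data, new_data), old_parity)


def full_parity(blocks):
    """Parity recomputed from every data block in the stripe (for checking)."""
    parity = bytes(len(blocks[0]))
    for block in blocks:
        parity = xor_blocks(parity, block)
    return parity


# Hypothetical stripe contents: D0, D1, D2, D3
stripe = [b"\x0f\xf0", b"\x33\x33", b"\x55\xaa", b"\x00\xff"]
old_parity = full_parity(stripe)

new_d0 = b"\xde\xad"
new_parity = small_write_parity(stripe[0], old_parity, new_d0)  # steps 1, 2, XOR

updated = [new_d0] + stripe[1:]                                 # steps 3, 4
print(new_parity == full_parity(updated))
```

The cost is the same regardless of stripe width: two reads and two writes, which is why small writes are the weak spot of parity arrays.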

Redundant Arrays of Disks: RAID 1: Disk Mirroring/Shadowing

- Each disk is fully duplicated onto its "shadow"; a disk and its shadow form a recovery group
  - Very high availability can be achieved
- Bandwidth sacrifice on write: logical write = two physical writes
- Reads may be optimized
- Most expensive solution: 100% capacity overhead

Targeted for high I/O rate, high availability environments.

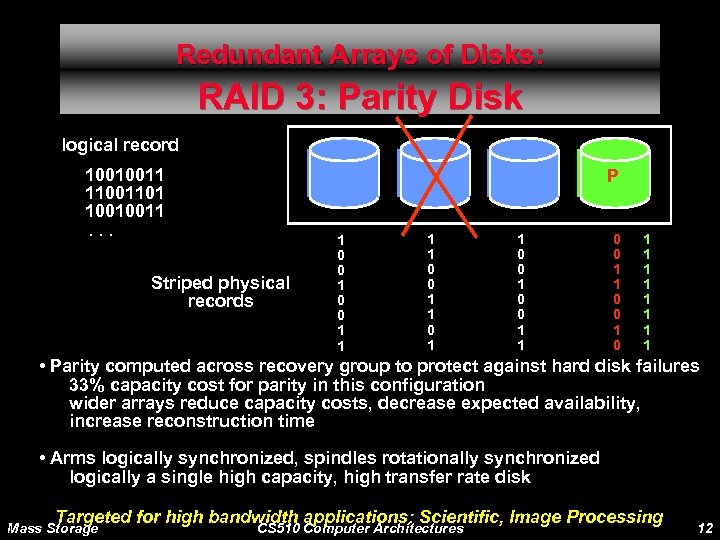

Redundant Arrays of Disks: RAID 3: Parity Disk

(Figure: a logical record, 10010011 11001101 10010011 ..., is striped into physical records across the data disks, with a parity disk P.)

- Parity computed across recovery group to protect against hard disk failures
  - 33% capacity cost for parity in this configuration
  - Wider arrays reduce capacity costs, decrease expected availability, increase reconstruction time
- Arms logically synchronized, spindles rotationally synchronized
  - Logically a single high capacity, high transfer rate disk

Targeted for high bandwidth applications: scientific, image processing.

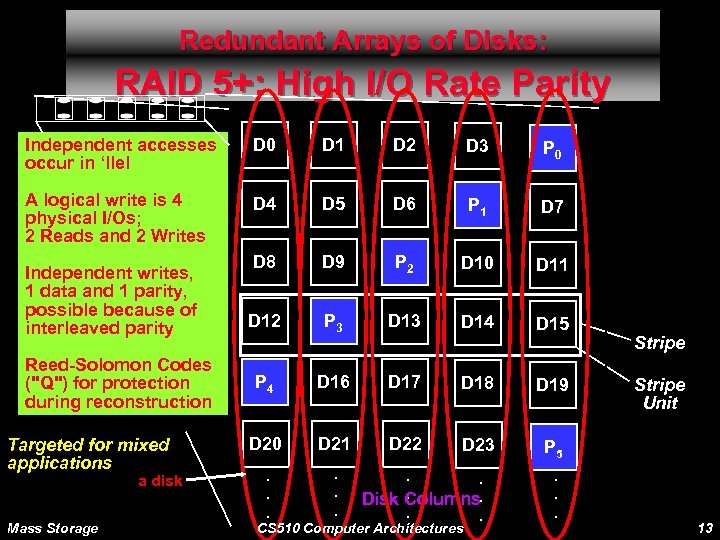
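Parity-based recovery on the RAID 3 slide can be illustrated with a short sketch. It assumes, for illustration only, that the three bytes of the slide's logical record sit on three separate data disks; the function names are hypothetical. The overhead function measures parity capacity relative to data capacity, which gives the slide's 33% for three data disks.

```python
from functools import reduce
from operator import xor


def parity(units):
    """Parity unit for a recovery group: XOR of all data units."""
    return reduce(xor, units, 0)


def reconstruct(surviving_units, parity_unit):
    """Rebuild the single lost unit from the survivors and the parity unit."""
    return reduce(xor, surviving_units, parity_unit)


def parity_overhead(n_data_disks: int) -> float:
    """Parity capacity as a fraction of data capacity (one parity disk per group)."""
    return 1 / n_data_disks


# Bytes of the logical record on the slide, one per (assumed) data disk
data = [0b10010011, 0b11001101, 0b10010011]
p = parity(data)

# Suppose the second data disk fails: rebuild it from the other two plus parity
rebuilt = reconstruct([data[0], data[2]], p)
print(f"{rebuilt:08b}", rebuilt == data[1])
print(f"{parity_overhead(3):.0%}")
```

Widening the group lowers `parity_overhead` but means more surviving disks must be read to rebuild one failed disk, which is the reconstruction-time trade-off the slide mentions.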

Redundant Arrays of Disks: RAID 5+: High I/O Rate Parity

- Independent accesses occur in parallel
- A logical write is 4 physical I/Os: 2 reads and 2 writes
- Independent writes, 1 data and 1 parity, possible because of interleaved parity
- Reed-Solomon Codes ("Q") for protection during reconstruction

Targeted for mixed applications.

Layout (each column is a disk, each cell is a stripe unit):

| Column 1 | Column 2 | Column 3 | Column 4 | Column 5 |
|---|---|---|---|---|
| D0 | D1 | D2 | D3 | P0 |
| D4 | D5 | D6 | P1 | D7 |
| D8 | D9 | P2 | D10 | D11 |
| D12 | P3 | D13 | D14 | D15 |
| P4 | D16 | D17 | D18 | D19 |
| D20 | D21 | D22 | D23 | P5 |
| ... | ... | ... | ... | ... |

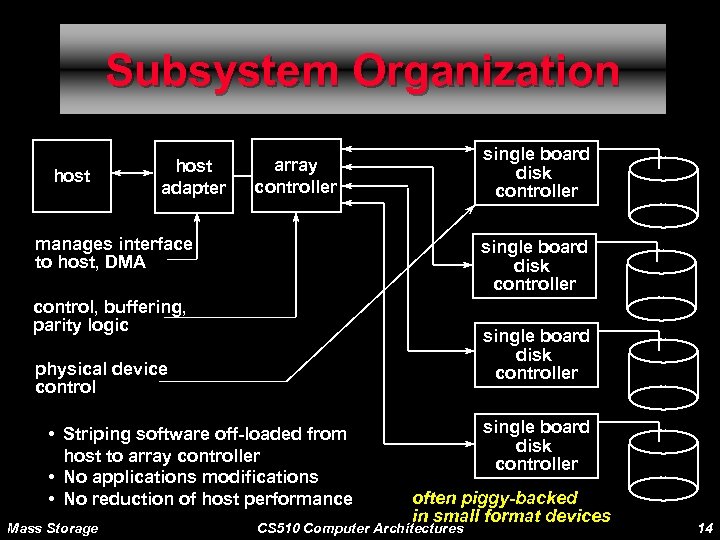
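The interleaved parity placement on the RAID 5+ slide (P0 on the last disk, P1 one disk to the left, and so on, wrapping around) can be generated with a small sketch. The rotation rule is inferred from the slide's diagram; the function names and zero-based disk indices are illustrative assumptions.

```python
def parity_disk(stripe: int, n_disks: int) -> int:
    """Zero-based disk holding parity for a stripe: starts at the last disk
    and moves one disk to the left per stripe, wrapping around."""
    return (n_disks - 1) - (stripe % n_disks)


def raid5_layout(n_disks: int, n_stripes: int):
    """Rows of labels ("D7", "P1", ...) matching the slide's diagram."""
    rows, next_data = [], 0
    for stripe in range(n_stripes):
        row = []
        for disk in range(n_disks):
            if disk == parity_disk(stripe, n_disks):
                row.append(f"P{stripe}")
            else:
                row.append(f"D{next_data}")
                next_data += 1
        rows.append(row)
    return rows


def locate(block: int, n_disks: int):
    """(stripe, disk) that holds data block number `block`."""
    stripe, offset = divmod(block, n_disks - 1)
    p = parity_disk(stripe, n_disks)
    # Data units fill the disks left to right, skipping the parity disk
    disk = offset if offset < p else offset + 1
    return stripe, disk


for row in raid5_layout(5, 6):
    print(" ".join(f"{cell:>4}" for cell in row))
print(locate(7, 5), locate(13, 5))
```

Because consecutive stripes keep their parity on different disks, two small writes to different stripes usually touch disjoint disk pairs and can proceed in parallel.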

Subsystem Organization

- Host adapter: manages interface to host, DMA
- Array controller: control, buffering, parity logic
- Single board disk controllers (four shown): physical device control; often piggy-backed in small format devices

Striping software is off-loaded from host to array controller:

- No application modifications
- No reduction of host performance

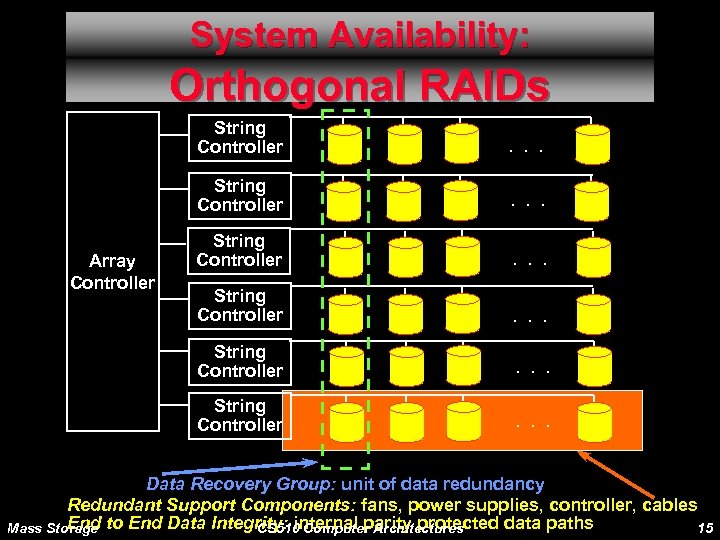

System Availability: Orthogonal RAIDs

(Figure: an array controller connected to multiple string controllers.)

- Data recovery group: unit of data redundancy
- Redundant support components: fans, power supplies, controller, cables
- End-to-end data integrity: internal parity-protected data paths

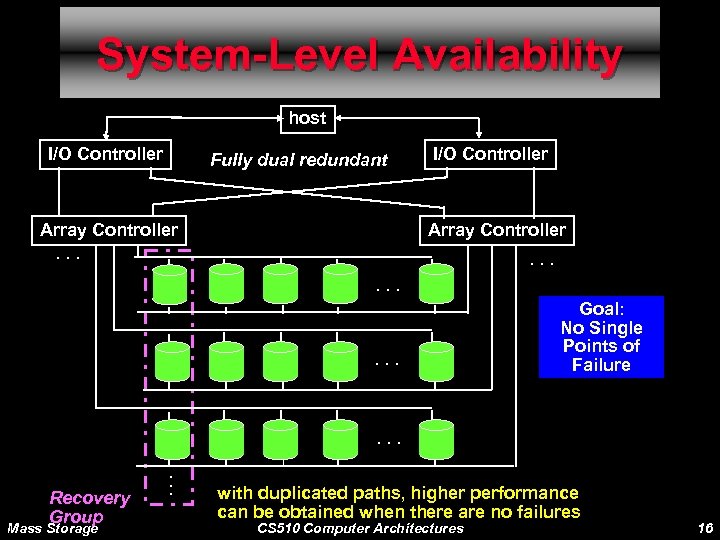

System-Level Availability

(Figure: host connected through two I/O controllers and two array controllers to the recovery groups; fully dual redundant.)

- Goal: no single points of failure
- With duplicated paths, higher performance can be obtained when there are no failures

Magnetic Tapes

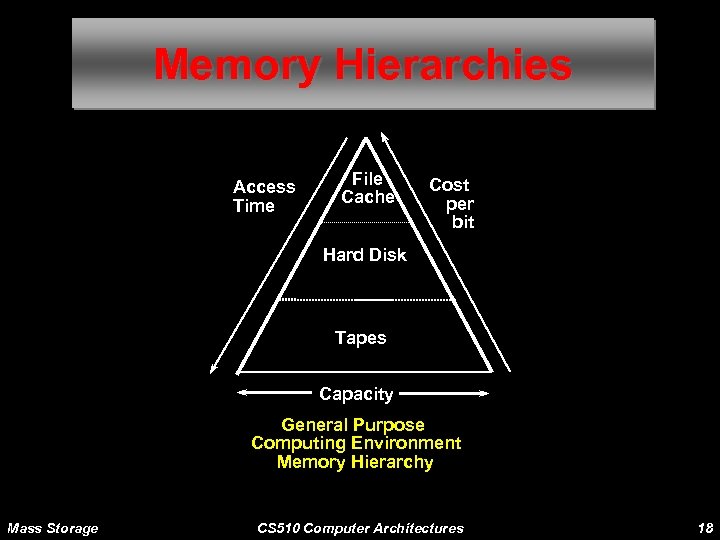

Memory Hierarchies

General purpose computing environment memory hierarchy: File Cache » Hard Disk » Tapes

(Figure: hierarchy diagram with axes labeled Capacity, Access Time, and Cost per bit.)

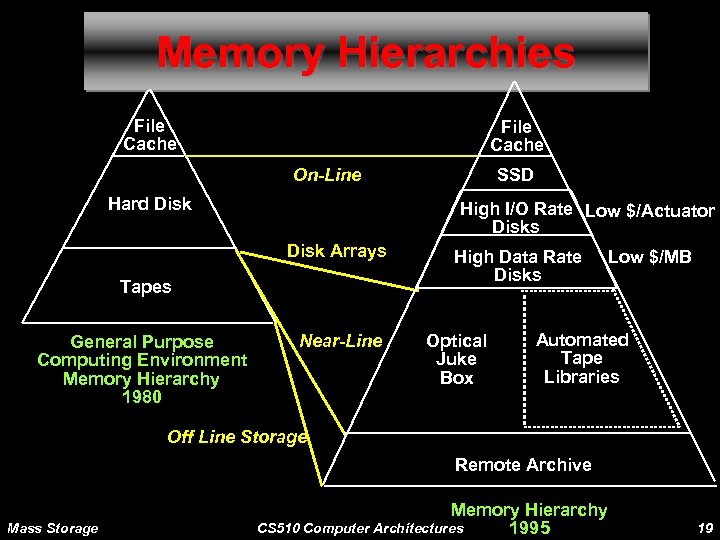

Memory Hierarchies

General purpose computing environment memory hierarchy, 1980:

- File Cache
- Hard Disk
- Tapes

Mass storage memory hierarchy, 1995:

- File Cache
- SSD
- On-Line: High I/O Rate Disks, High Data Rate Disks, Disk Arrays
- Near-Line: Optical Juke Box, Automated Tape Libraries
- Off-Line Storage: Remote Archive

(Figure annotations: low $/actuator, low $/MB.)

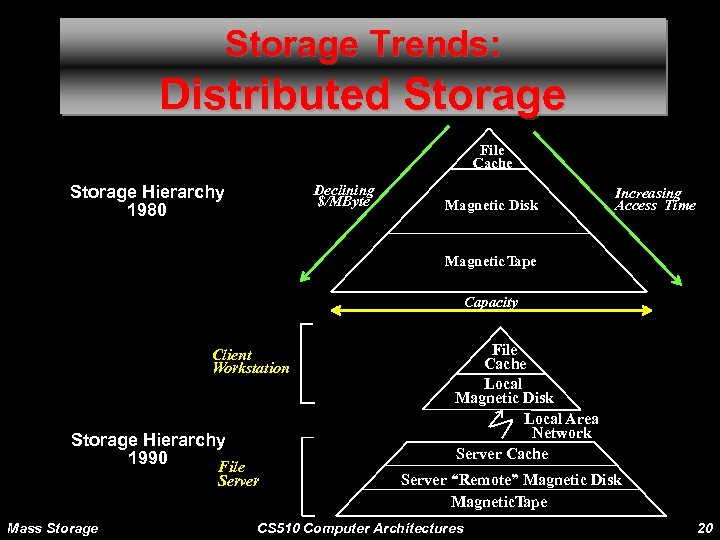

Storage Trends: Distributed Storage

Storage hierarchy, 1980: File Cache » Magnetic Disk » Magnetic Tape

(Figure labels: declining $/MByte, increasing access time, capacity.)

Storage hierarchy, 1990:

- Client workstation: File Cache, Local Magnetic Disk
- Local Area Network
- File server: Server Cache, "Remote" Magnetic Disk, Magnetic Tape

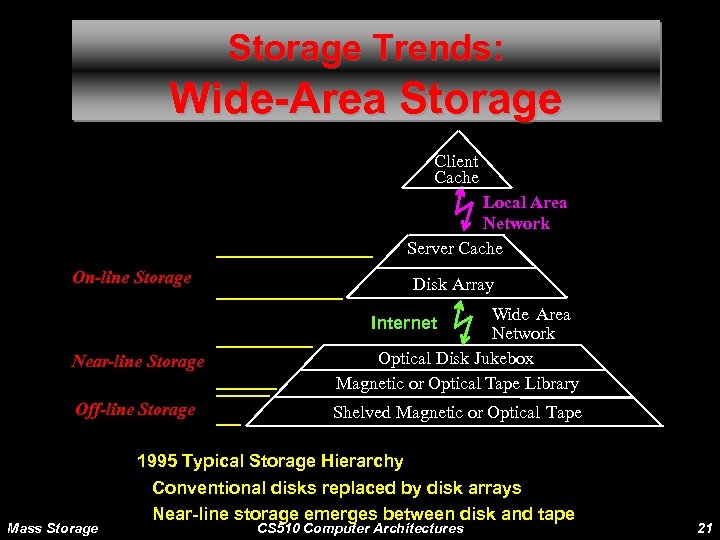

Storage Trends: Wide-Area Storage

Typical storage hierarchy, 1995:

- Client Cache
- Server Cache
- On-line storage: Disk Array
- Near-line storage: Optical Disk Jukebox, Magnetic or Optical Tape Library
- Off-line storage: Shelved Magnetic or Optical Tape
- Networks: Local Area Network, Wide Area Network, Internet

Changes:

- Conventional disks replaced by disk arrays
- Near-line storage emerges between disk and tape

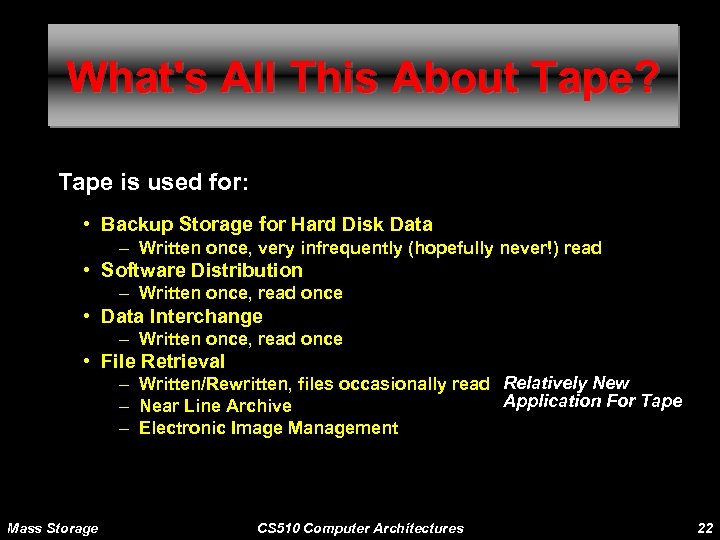

What's All This About Tape?

Tape is used for:

- Backup storage for hard disk data: written once, very infrequently (hopefully never!) read
- Software distribution: written once, read once
- Data interchange: written once, read once
- File retrieval: written/rewritten, files occasionally read

Relatively new applications for tape:

- Near-line archive
- Electronic image management

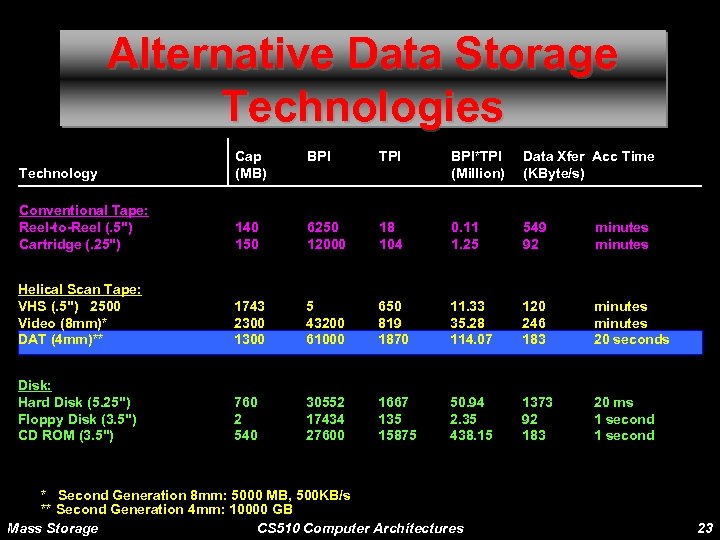

Alternative Data Storage Technologies

| Technology | Cap (MB) | BPI | TPI | BPI*TPI (Million) | Data Xfer (KByte/s) | Acc Time |
|---|---|---|---|---|---|---|
| Conventional Tape: | | | | | | |
| Reel-to-Reel (.5") | 140 | 6250 | 18 | 0.11 | 549 | minutes |
| Cartridge (.25") | 150 | 12000 | 104 | 1.25 | 92 | minutes |
| Helical Scan Tape: | | | | | | |
| VHS (.5") | 2500 | 17435 | 650 | 11.33 | 120 | minutes |
| Video (8 mm)* | 2300 | 43200 | 819 | 35.28 | 246 | minutes |
| DAT (4 mm)** | 1300 | 61000 | 1870 | 114.07 | 183 | 20 seconds |
| Disk: | | | | | | |
| Hard Disk (5.25") | 760 | 30552 | 1667 | 50.94 | 1373 | 20 ms |
| Floppy Disk (3.5") | 2 | 17434 | 135 | 2.35 | 92 | 1 second |
| CD ROM (3.5") | 540 | 27600 | 15875 | 438.15 | 183 | 1 second |

\* Second Generation 8 mm: 5000 MB, 500 KB/s

\*\* Second Generation 4 mm: 10000 GB

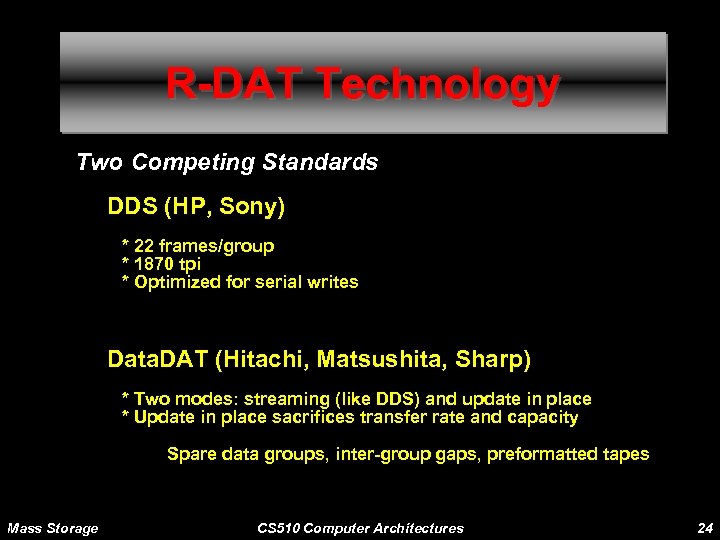

R-DAT Technology

Two competing standards:

- DDS (HP, Sony)
  - 22 frames/group
  - 1870 tpi
  - Optimized for serial writes
- DataDAT (Hitachi, Matsushita, Sharp)
  - Two modes: streaming (like DDS) and update in place
  - Update in place sacrifices transfer rate and capacity: spare data groups, inter-group gaps, preformatted tapes

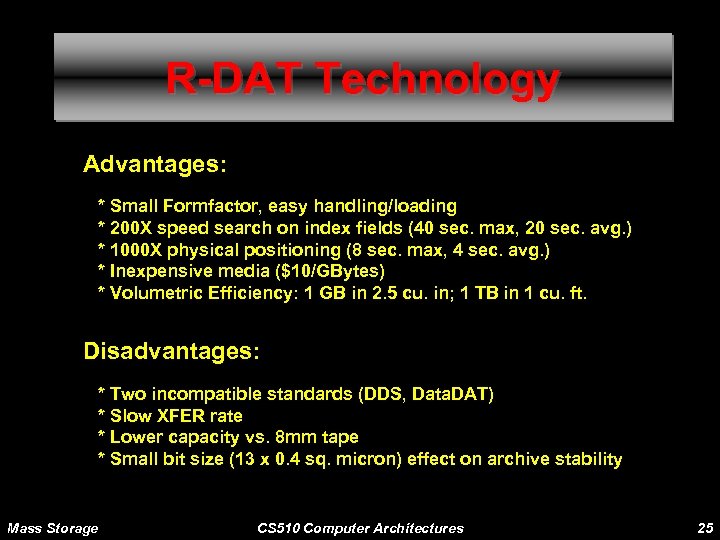

R-DAT Technology

Advantages:

- Small form factor, easy handling/loading
- 200X speed search on index fields (40 sec. max, 20 sec. avg.)
- 1000X physical positioning (8 sec. max, 4 sec. avg.)
- Inexpensive media ($10/GByte)
- Volumetric efficiency: 1 GB in 2.5 cu. in.; 1 TB in 1 cu. ft.

Disadvantages:

- Two incompatible standards (DDS, DataDAT)
- Slow transfer rate
- Lower capacity vs. 8 mm tape
- Small bit size (13 x 0.4 sq. micron) and its effect on archive stability

R-DAT Technical Challenges

- Tape capacity
  - Data compression is key
- Tape bandwidth
  - Data compression
  - Striped tape

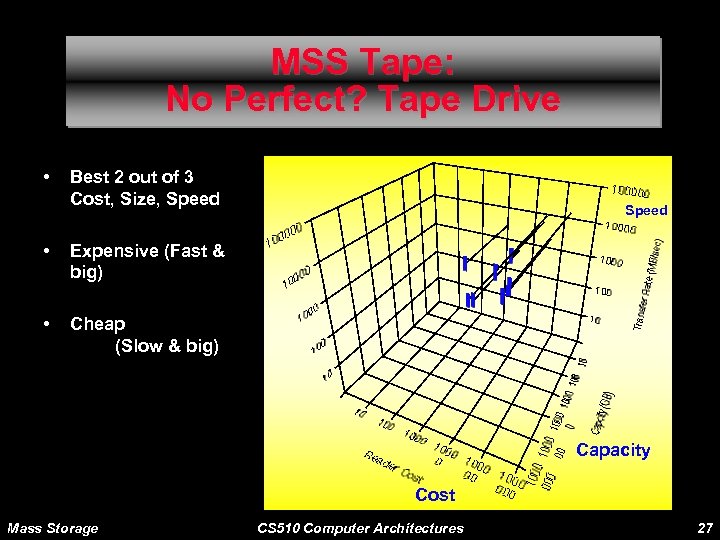

MSS Tape: No "Perfect" Tape Drive

- Best 2 out of 3: cost, size, speed
- Expensive (fast & big)
- Cheap (slow & big)

(Figure axes: speed, capacity, cost.)

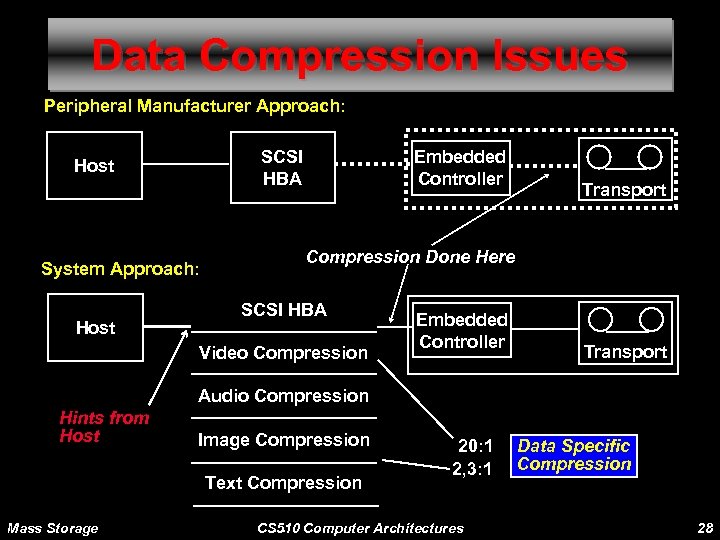

Data Compression Issues

- Peripheral manufacturer approach: Host » SCSI HBA » Embedded Controller » Transport, with compression done in the peripheral
- Host system approach: data-specific compression (video, audio, image, text) on the host side of SCSI HBA » Embedded Controller » Transport, with hints from host

(Figure labels for compression ratios: 20:1; 2,3:1; ...)

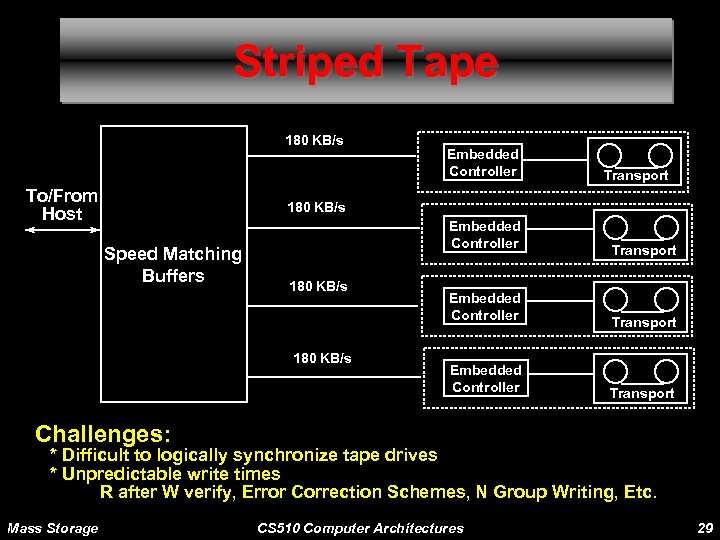

Striped Tape

(Figure: data to/from host passes through speed matching buffers to several embedded controller and transport pairs, each running at 180 KB/s.)

Challenges:

- Difficult to logically synchronize tape drives
- Unpredictable write times: read-after-write verify, error correction schemes, N-group writing, etc.

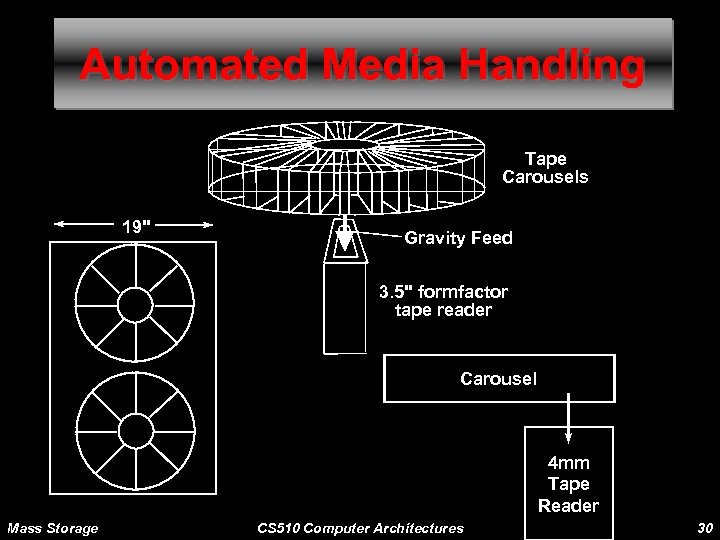

Automated Media Handling: Tape Carousels

(Figure labels: 19", gravity feed, carousel, 3.5" form factor tape reader, 4 mm tape reader.)

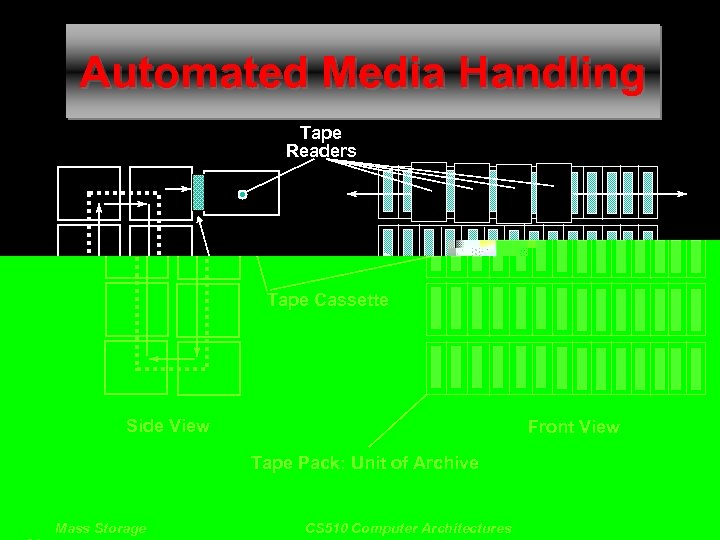

Automated Media Handling: Tape Readers

(Figure: tape readers and tape cassettes, side view and front view. Tape pack: unit of archive.)

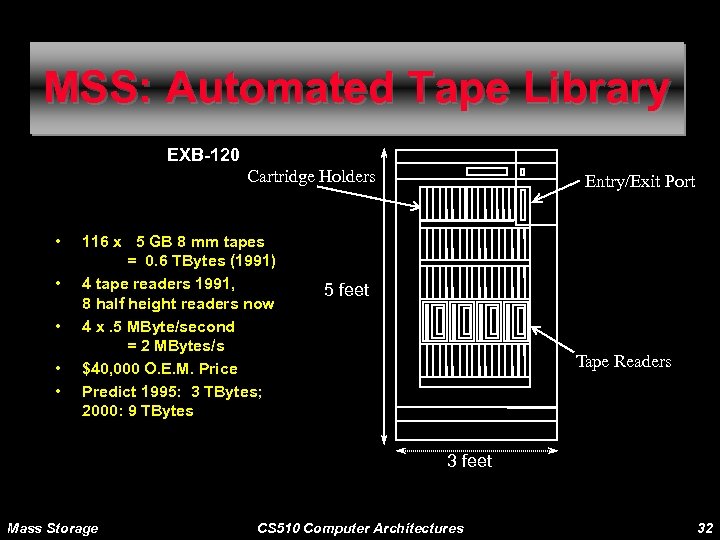

MSS: Automated Tape Library

EXB-120:

- 116 x 5 GB 8 mm tapes = 0.6 TBytes (1991)
- 4 tape readers in 1991, 8 half-height readers now
- 4 x 0.5 MByte/second = 2 MBytes/s
- $40,000 O.E.M. price
- Predict 1995: 3 TBytes; 2000: 9 TBytes

(Figure labels: cartridge holders, entry/exit port, tape readers; dimensions 5 feet and 3 feet.)

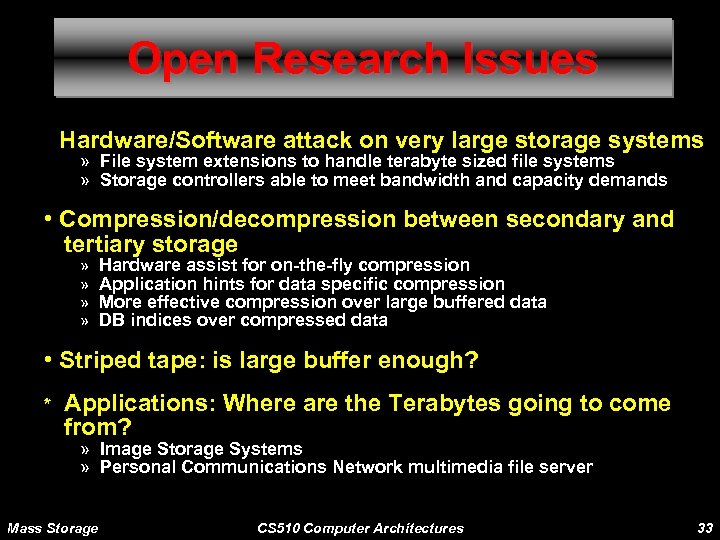
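The capacity and bandwidth figures on the EXB-120 slide follow from simple multiplication, sketched below. The full-scan estimate is an added illustration, not a figure from the lecture: it assumes 1 GB = 1000 MB, all readers streaming continuously, and no time spent on robot handling or tape loading. The function names are hypothetical.

```python
def library_capacity_gb(n_tapes: int, gb_per_tape: float) -> float:
    """Total capacity of the library in GB."""
    return n_tapes * gb_per_tape


def aggregate_rate_mb_s(n_readers: int, mb_s_per_reader: float) -> float:
    """Combined transfer rate when every reader streams at once."""
    return n_readers * mb_s_per_reader


def full_scan_hours(capacity_gb: float, rate_mb_s: float, mb_per_gb: int = 1000) -> float:
    """Hours to read the whole library once.

    Assumption: ignores media handling and load/seek time.
    """
    return capacity_gb * mb_per_gb / rate_mb_s / 3600


capacity = library_capacity_gb(116, 5)   # 116 tapes x 5 GB, from the slide
rate = aggregate_rate_mb_s(4, 0.5)       # 4 readers x 0.5 MB/s, from the slide

print(f"{capacity} GB, about {capacity / 1000:.1f} TB")
print(f"{rate} MB/s aggregate")
print(f"{full_scan_hours(capacity, rate):.1f} hours for one full pass")
```

Even under these optimistic assumptions a full pass takes several days, which illustrates why tape bandwidth is listed as a technical challenge.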

Open Research Issues

- Hardware/software attack on very large storage systems
  - File system extensions to handle terabyte-sized file systems
  - Storage controllers able to meet bandwidth and capacity demands
- Compression/decompression between secondary and tertiary storage
  - Hardware assist for on-the-fly compression
  - Application hints for data-specific compression
  - More effective compression over large buffered data
  - DB indices over compressed data
- Striped tape: is a large buffer enough?
- Applications: where are the terabytes going to come from?
  - Image storage systems
  - Personal Communications Network multimedia file server

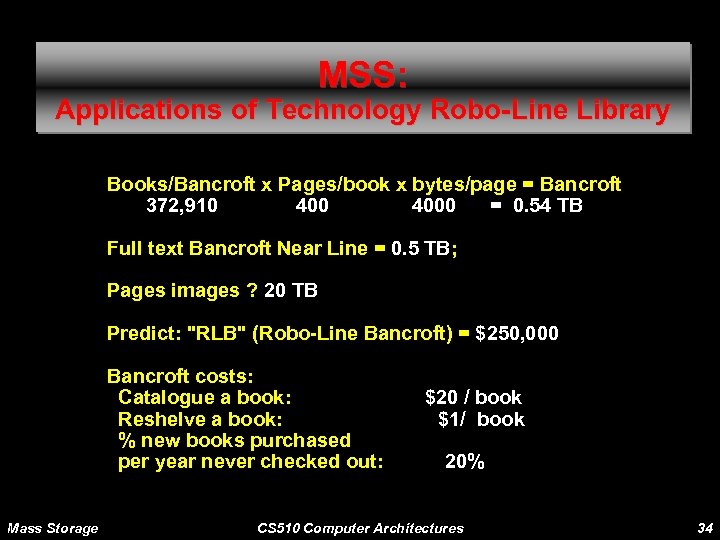

MSS: Applications of Technology: Robo-Line Library

- Books/Bancroft x pages/book x bytes/page = Bancroft: 372,910, 4000 = 0.54 TB
- Full text Bancroft near line = 0.5 TB; page images ? 20 TB
- Predict: "RLB" (Robo-Line Bancroft) = $250,000

Bancroft costs:

- Catalogue a book: $20/book
- Reshelve a book: $1/book
- % new books purchased per year never checked out: 20%

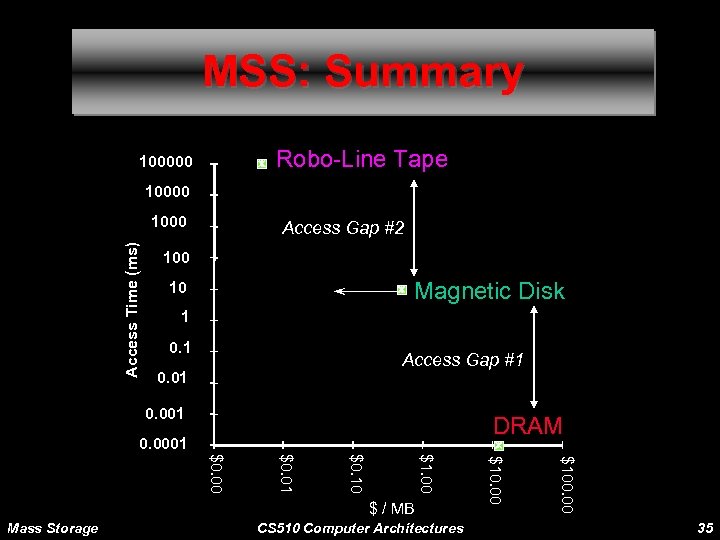

MSS: Summary

(Figure: access time in ms versus $/MB on logarithmic axes, showing DRAM, magnetic disk, and robo-line tape, with Access Gap #1 between DRAM and magnetic disk and Access Gap #2 between magnetic disk and robo-line tape.)
