6eab2d06cfc1a357407496908b463180.ppt

- Number of slides: 37

LCG-France activities
Fairouz Malek, Scientific Project Leader
Institut des Grilles Scientific Council
Paris, June 1st 2010

Contents

- LHC and WLCG
- LCG-France in WLCG
- Data distribution
- Data storage
- Data processing
- Perspectives
- Conclusions

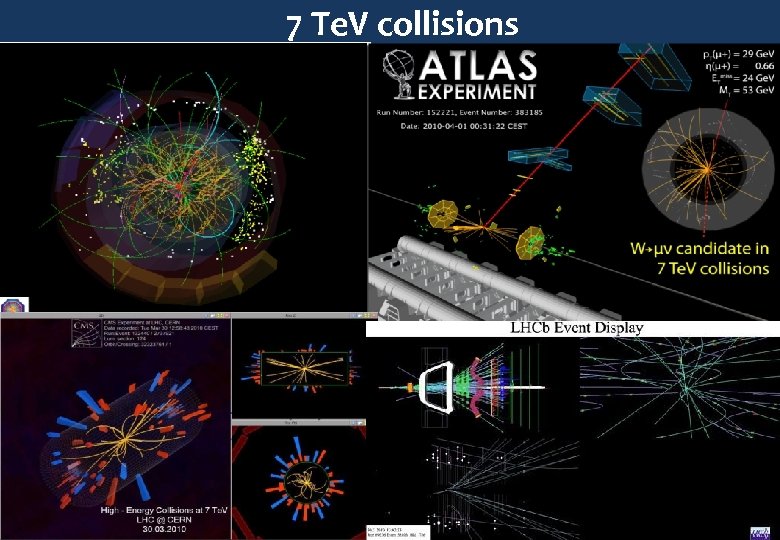

7 TeV collisions (Sergio Bertolucci, CERN)

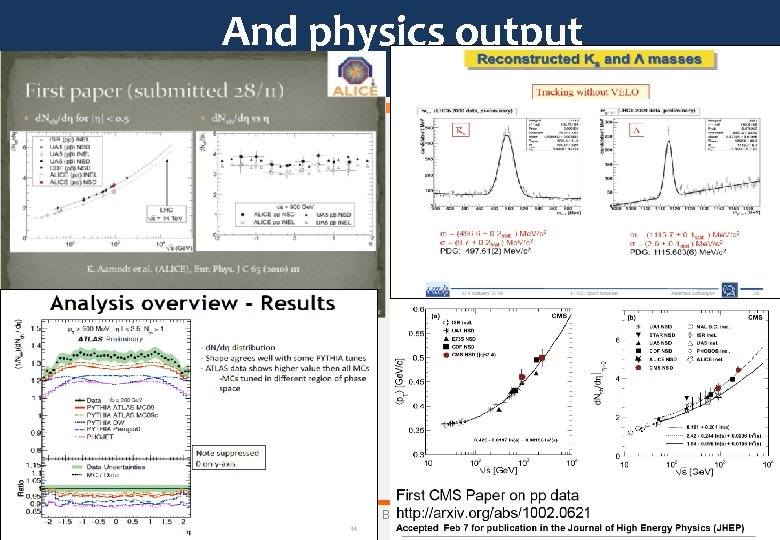

And physics output (Sergio Bertolucci, CERN)

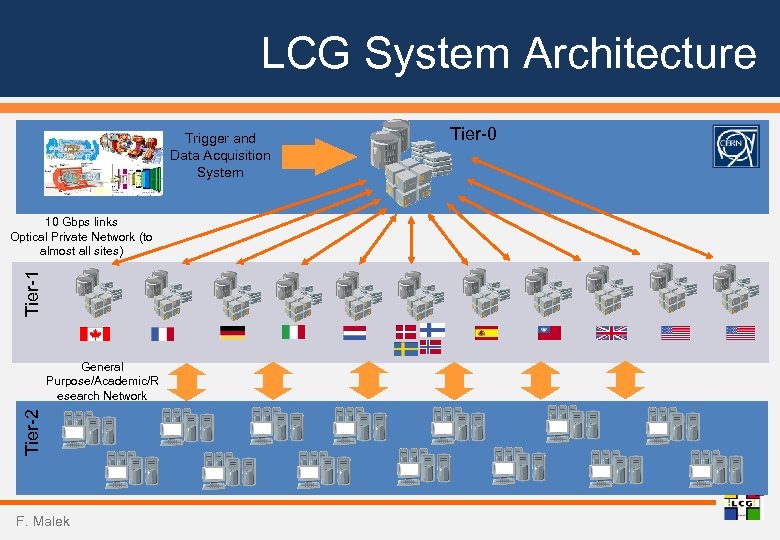

LCG System Architecture

Diagram labels:

- Tier-0
- Trigger and Data Acquisition System
- Tier-1
- 10 Gbps links, Optical Private Network (to almost all sites)
- Tier-2
- General Purpose/Academic/Research Network

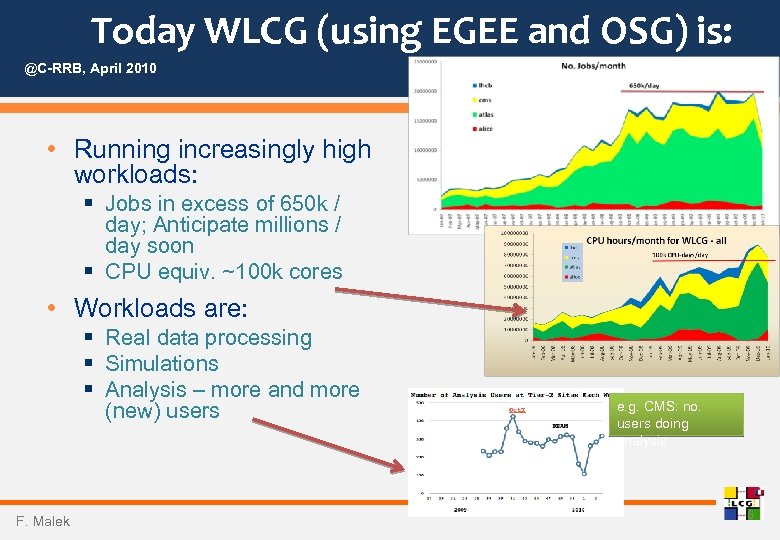

Today WLCG (using EGEE and OSG) is (C-RRB, April 2010):

- Running increasingly high workloads:
  - Jobs in excess of 650k/day; millions/day anticipated soon
  - CPU equivalent: ~100k cores
- Workloads are:
  - Real data processing
  - Simulations
  - Analysis: more and more (new) users (e.g. CMS: number of users doing analysis)
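The two workload figures quoted on this slide can be combined into a rough estimate of the average job length. This is only a back-of-the-envelope sketch: it assumes that every job occupies exactly one core and that all ~100k cores are busy around the clock, neither of which the slide states. The function name is illustrative.

```python
def average_job_hours(jobs_per_day: float, cores: float) -> float:
    """Average core-hours per job if every core is busy all day.

    Assumption (not from the slide): one job occupies exactly one core
    and the cores are never idle.
    """
    core_hours_per_day = cores * 24.0
    return core_hours_per_day / jobs_per_day


# Figures quoted on the slide: more than 650k jobs/day on ~100k cores.
hours = average_job_hours(jobs_per_day=650_000, cores=100_000)
print(f"at most ~{hours:.1f} core-hours per job on average")
```

Because the slide quotes "in excess of" 650k jobs per day, the result is better read as an upper bound on the average job length under these assumptions.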

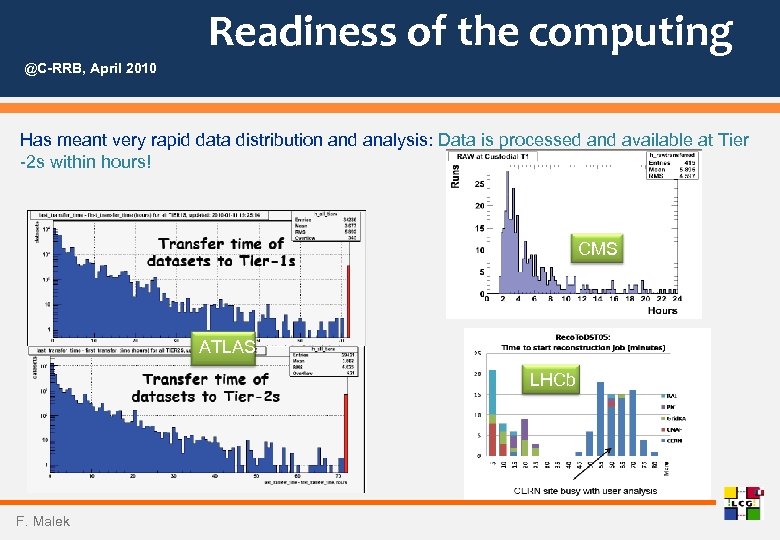

Readiness of the computing (C-RRB, April 2010)

Has meant very rapid data distribution and analysis: data is processed and available at Tier-2s within hours!

Panels: CMS, ATLAS, LHCb

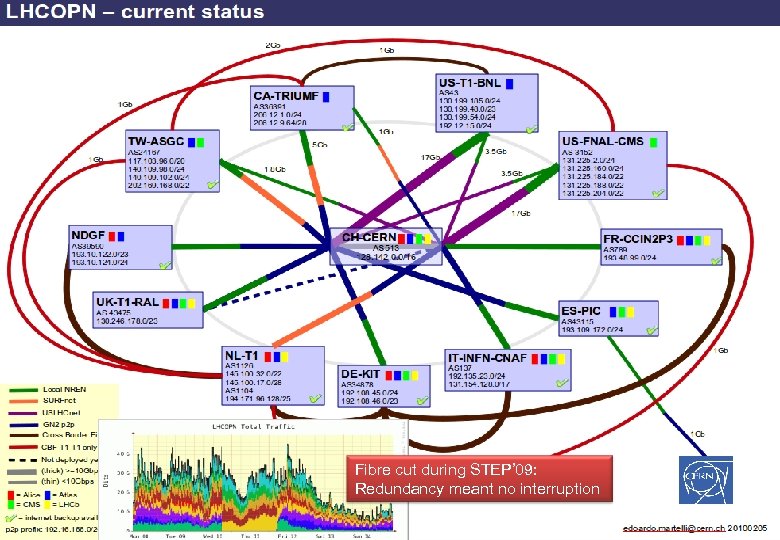

Fibre cut during STEP’09: redundancy meant no interruption (Sergio Bertolucci, CERN)

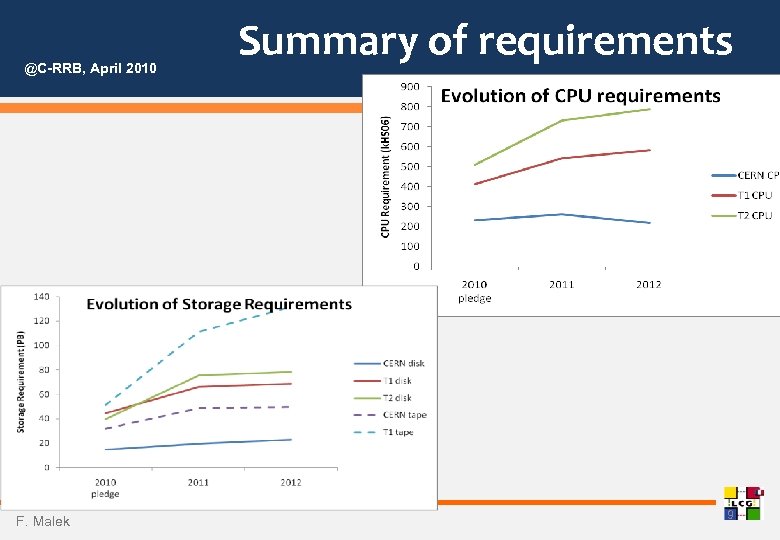

Summary of requirements (C-RRB, April 2010)

LCG-France within WLCG

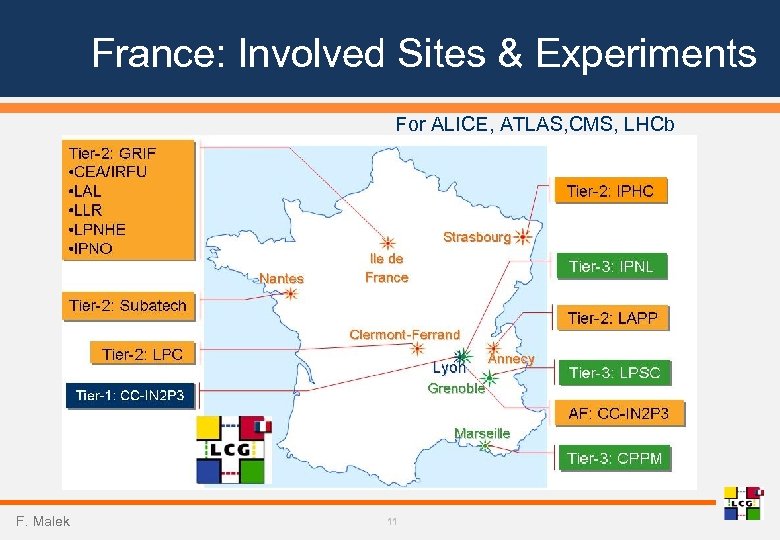

France: Involved Sites & Experiments

For ALICE, ATLAS, CMS, LHCb

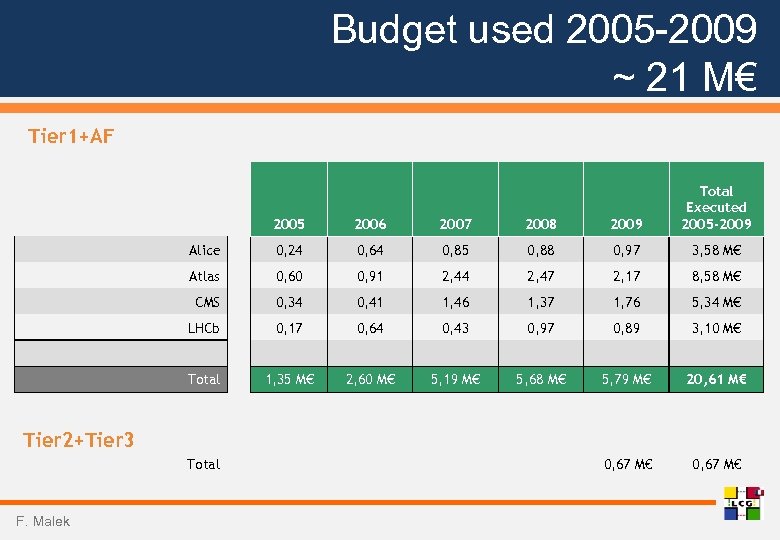

Budget used 2005-2009: ~21 M€

| Tier 1 + AF | 2005 | 2006 | 2007 | 2008 | 2009 | Total executed 2005-2009 |
|---|---|---|---|---|---|---|
| ALICE | 0.24 | 0.64 | 0.85 | 0.88 | 0.97 | 3.58 M€ |
| ATLAS | 0.60 | 0.91 | 2.44 | 2.47 | 2.17 | 8.58 M€ |
| CMS | 0.34 | 0.41 | 1.46 | 1.37 | 1.76 | 5.34 M€ |
| LHCb | 0.17 | 0.64 | 0.43 | 0.97 | 0.89 | 3.10 M€ |
| Total | 1.35 M€ | 2.60 M€ | 5.19 M€ | 5.68 M€ | 5.79 M€ | 20.61 M€ |

Tier 2 + Tier 3: 0.67 M€
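The totals printed in the budget table can be cross-checked against the per-experiment entries. The sketch below simply re-adds the figures as they appear on the slide; variable names are illustrative. Reading the 0.01 M€ discrepancies as rounding of the individual entries is an interpretation, not something the slide states.

```python
YEARS = [2005, 2006, 2007, 2008, 2009]

# Tier 1 + AF spending per experiment in M EUR, as printed on the slide.
spending = {
    "ALICE": [0.24, 0.64, 0.85, 0.88, 0.97],
    "ATLAS": [0.60, 0.91, 2.44, 2.47, 2.17],
    "CMS":   [0.34, 0.41, 1.46, 1.37, 1.76],
    "LHCb":  [0.17, 0.64, 0.43, 0.97, 0.89],
}

# Totals as printed on the slide.
printed_yearly = [1.35, 2.60, 5.19, 5.68, 5.79]
printed_per_experiment = {"ALICE": 3.58, "ATLAS": 8.58, "CMS": 5.34, "LHCb": 3.10}
printed_grand_total = 20.61

# Recompute column (yearly) and row (per-experiment) sums.
yearly = [round(sum(row[i] for row in spending.values()), 2)
          for i in range(len(YEARS))]
per_experiment = {name: round(sum(row), 2) for name, row in spending.items()}

for year, got, want in zip(YEARS, yearly, printed_yearly):
    print(f"{year}: recomputed {got:.2f}, printed {want:.2f}")
for name, got in per_experiment.items():
    print(f"{name}: recomputed {got:.2f}, printed {printed_per_experiment[name]:.2f}")

grand_total = round(sum(printed_yearly), 2)
print(f"sum of printed yearly totals: {grand_total:.2f}")
```

The recomputed sums agree with the printed totals to within 0.01 M€, and the printed yearly totals add up to the printed 20.61 M€.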

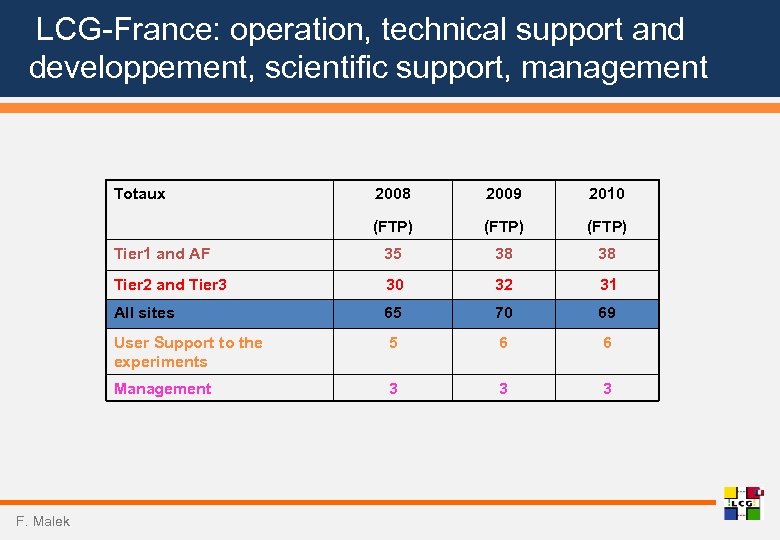

LCG-France: operation, technical support and development, scientific support, management

| Totals (FTE) | 2008 | 2009 | 2010 |
|---|---|---|---|
| Tier 1 and AF | 35 | 38 | 38 |
| Tier 2 and Tier 3 | 30 | 32 | 31 |
| All sites | 65 | 70 | 69 |
| User support to the experiments | 5 | 6 | 6 |
| Management | 3 | 3 | 3 |

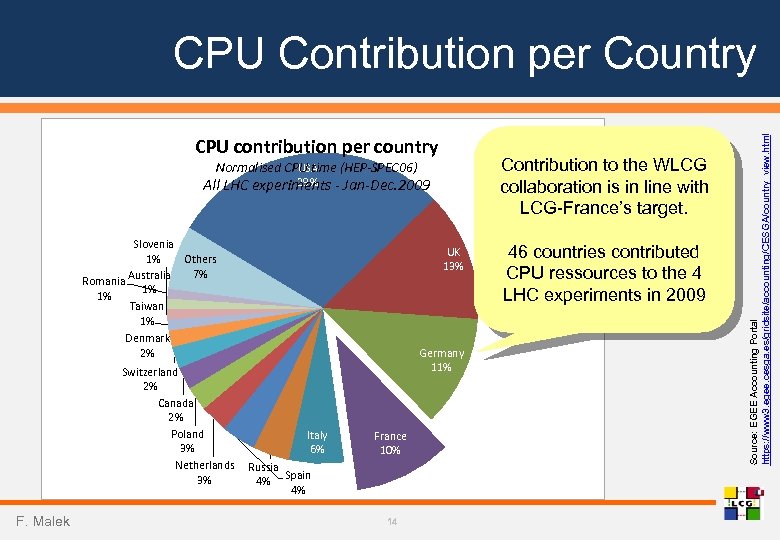

CPU Contribution per Country

Contribution to the WLCG collaboration is in line with LCG-France’s target.

Normalised CPU time (HEP-SPEC06), all LHC experiments, Jan-Dec 2009:

| Country | Share |
|---|---|
| USA | 29% |
| UK | 13% |
| Germany | 11% |
| France | 10% |
| Italy | 6% |
| Russia | 4% |
| Spain | 4% |
| Netherlands | 3% |
| Poland | 3% |
| Canada | 2% |
| Switzerland | 2% |
| Denmark | 2% |
| Taiwan | 1% |
| Romania | 1% |
| Australia | 1% |
| Slovenia | 1% |
| Others | 7% |

46 countries contributed CPU resources to the 4 LHC experiments in 2009.

Source: EGEE Accounting Portal https://www3.egee.cesga.es/gridsite/accounting/CESGA/country_view.html

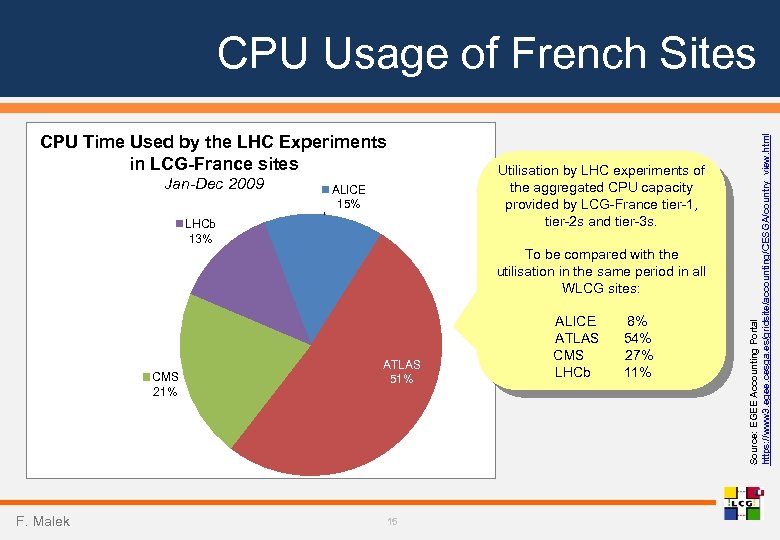

CPU Usage of French Sites

CPU time used by the LHC experiments in LCG-France sites, Jan-Dec 2009: utilisation by LHC experiments of the aggregated CPU capacity provided by the LCG-France tier-1, tier-2s and tier-3s, to be compared with the utilisation in the same period in all WLCG sites.

| Experiment | LCG-France sites | All WLCG sites |
|---|---|---|
| ALICE | 15% | 8% |
| ATLAS | 51% | 54% |
| CMS | 21% | 27% |
| LHCb | 13% | 11% |

Source: EGEE Accounting Portal https://www3.egee.cesga.es/gridsite/accounting/CESGA/country_view.html

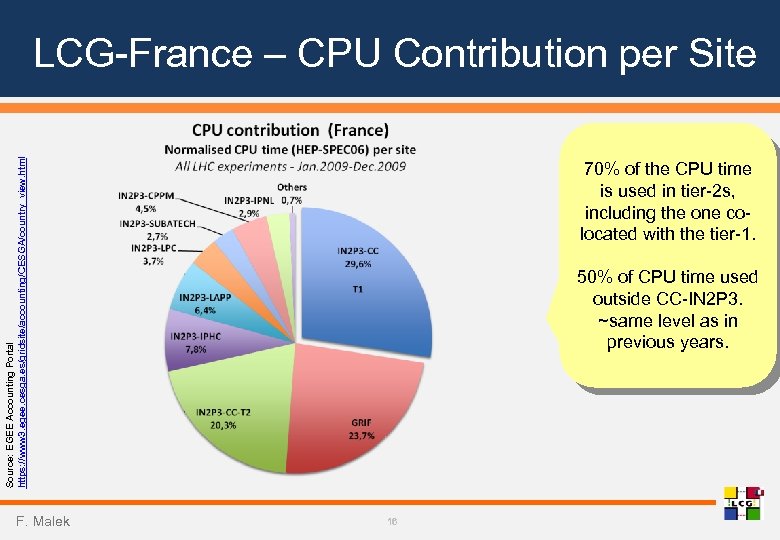

LCG-France: CPU Contribution per Site

- 70% of the CPU time is used in tier-2s, including the one colocated with the tier-1.
- 50% of the CPU time is used outside CC-IN2P3.
- Roughly the same level as in previous years.

Source: EGEE Accounting Portal https://www3.egee.cesga.es/gridsite/accounting/CESGA/country_view.html

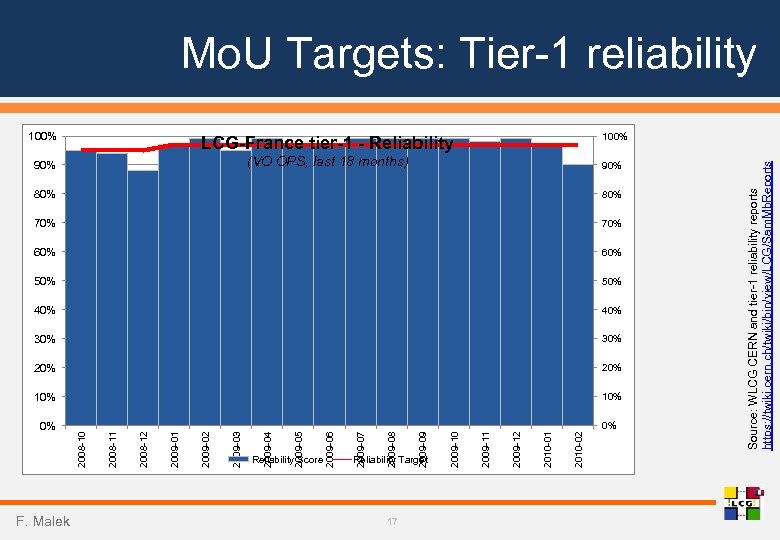

LCG-France tier-1: Reliability (VO OPS, last 18 months)

Chart: monthly reliability score plotted against the MoU reliability target for tier-1s; x-axis: months from 2008-10 to 2010-02; y-axis: 0% to 100%.

Source: WLCG CERN and tier-1 reliability reports https://twiki.cern.ch/twiki/bin/view/LCG/SamMbReports

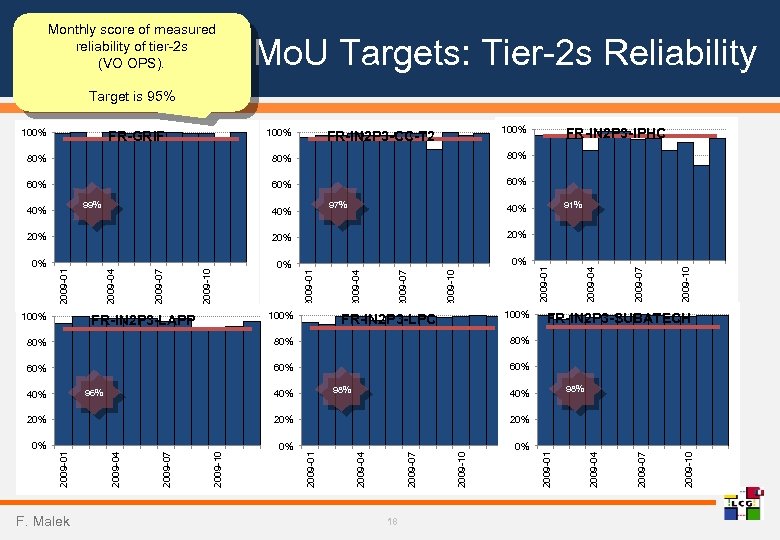

Monthly score of measured reliability of tier-2s (VO OPS). MoU targets: the tier-2 reliability target is 95%.

Chart panels (one per site; x-axis: months of 2009; y-axis: 0% to 100%): FR-GRIF, FR-IN2P3-CC-T2, FR-IN2P3-IPHC, FR-IN2P3-LAPP, FR-IN2P3-LPC, FR-IN2P3-SUBATECH.

Scores annotated on the panels: 99%, 97%, 91%, 96%, 98%.

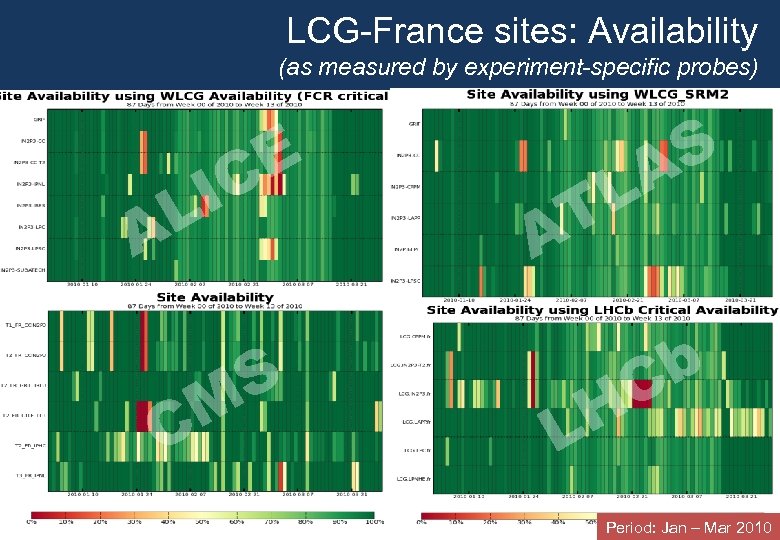

LCG-France sites: Availability (as measured by experiment-specific probes)

Panels: ALICE, ATLAS, CMS, LHCb. Period: Jan – Mar 2010

Data Distribution

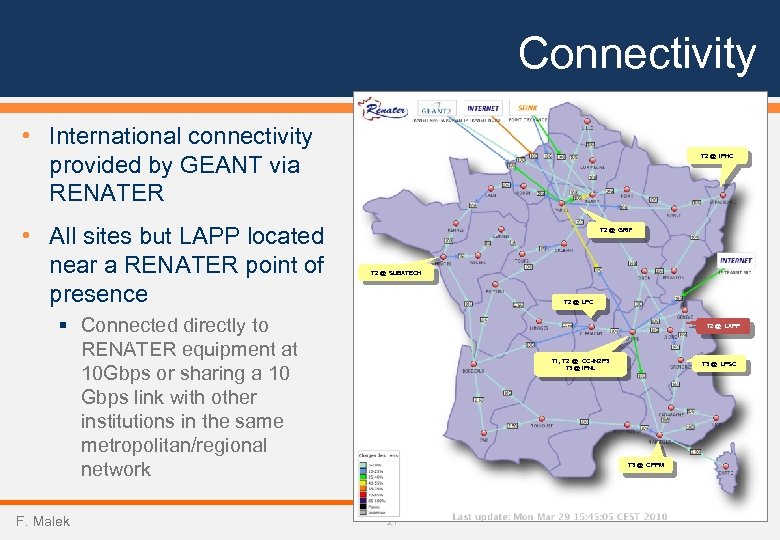

Connectivity

- International connectivity provided by GEANT via RENATER
- All sites but LAPP located near a RENATER point of presence
  - Connected directly to RENATER equipment at 10 Gbps or sharing a 10 Gbps link with other institutions in the same metropolitan/regional network

Sites shown on the map: T1, T2 @ CC-IN2P3; T2 @ GRIF; T2 @ IPHC; T2 @ LAPP; T2 @ LPC; T2 @ SUBATECH; T3 @ CPPM; T3 @ IPNL; T3 @ LPSC

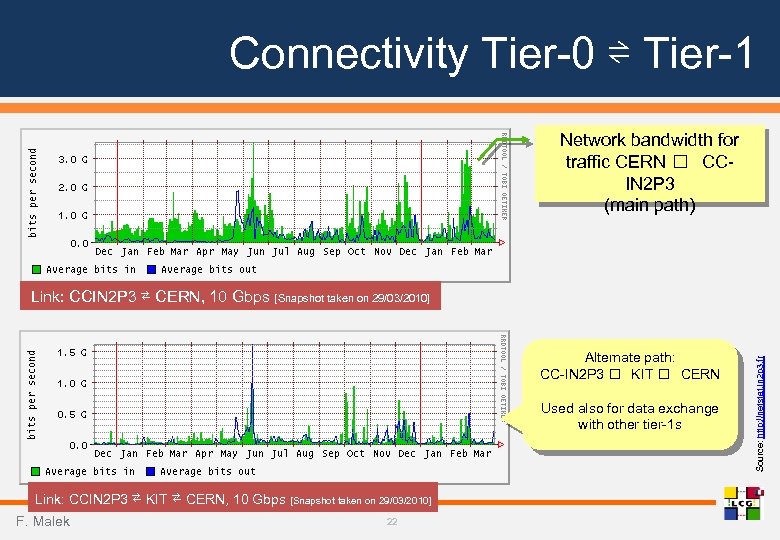

Connectivity Tier-0 ⇌ Tier-1

- Network bandwidth for traffic CERN ⇄ CCIN2P3 (main path). Link: CCIN2P3 ⇄ CERN, 10 Gbps [snapshot taken on 29/03/2010]
- Alternate path: CC-IN2P3 ⇄ KIT ⇄ CERN, also used for data exchange with other tier-1s. Link: CCIN2P3 ⇄ KIT ⇄ CERN, 10 Gbps [snapshot taken on 29/03/2010]

Source: http://netstat.in2p3.fr

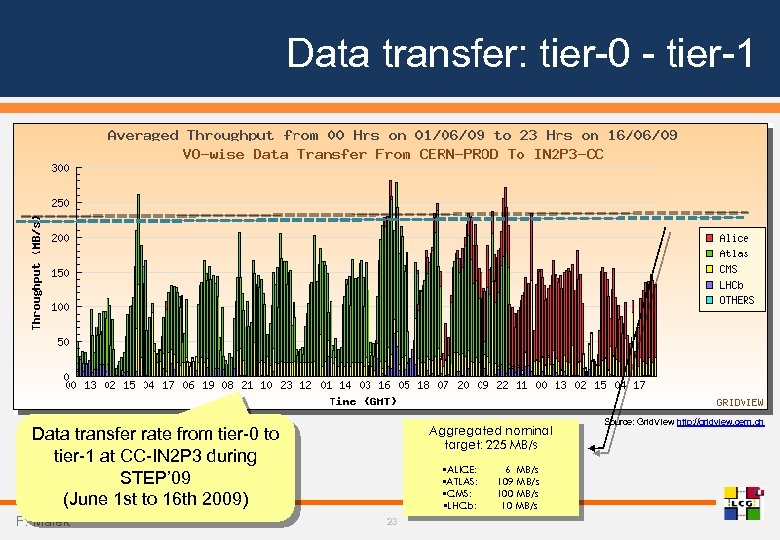

Data transfer: tier-0 - tier-1

Data transfer rate from tier-0 to tier-1 at CC-IN2P3 during STEP’09 (June 1st to 16th 2009)

Aggregated nominal target: 225 MB/s

- ALICE: 6 MB/s
- ATLAS: 109 MB/s
- CMS: 100 MB/s
- LHCb: 10 MB/s

Source: GridView http://gridview.cern.ch
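The per-experiment rates listed on this slide add up to the aggregated nominal target, and the same figure can be converted into a daily volume and into a fraction of a 10 Gbps link such as the CERN link shown on the connectivity slide. The sketch below uses decimal units (1 TB = 1,000,000 MB, 1 Gbps = 1,000 Mbps), which is an assumption; the slide does not say which convention is used.

```python
# Per-experiment nominal rates in MB/s, as listed on the slide.
targets_mb_per_s = {"ALICE": 6, "ATLAS": 109, "CMS": 100, "LHCb": 10}

aggregate = sum(targets_mb_per_s.values())  # MB/s

SECONDS_PER_DAY = 24 * 60 * 60
# Assumption: decimal units, 1 TB = 1,000,000 MB.
tb_per_day = aggregate * SECONDS_PER_DAY / 1_000_000

# Assumption: 8 bits per byte, 10 Gbps = 10,000 Mbps, no protocol overhead.
link_fraction = aggregate * 8 / 10_000

print(f"aggregate target: {aggregate} MB/s")
print(f"daily volume at that rate: {tb_per_day:.2f} TB")
print(f"share of a 10 Gbps link: {link_fraction:.0%}")
```

Under these assumptions the nominal target corresponds to a little under 20 TB per day and uses less than a fifth of a single 10 Gbps link.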

Data Storage & Processing

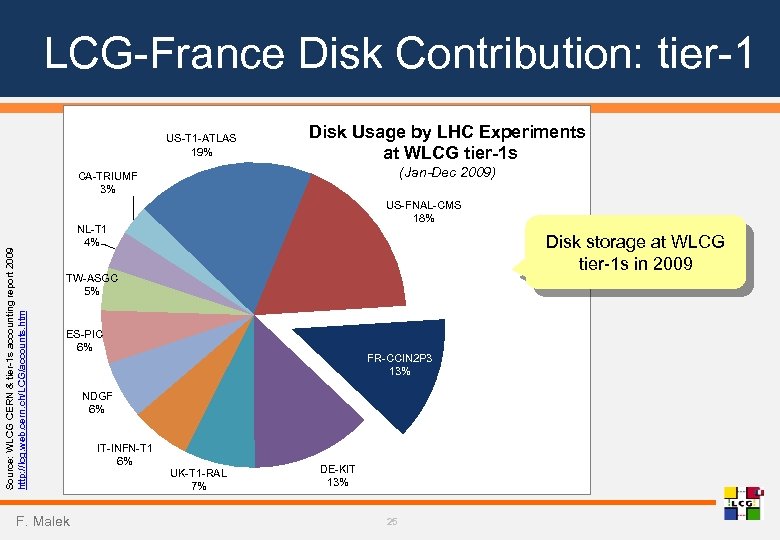

LCG-France Disk Contribution: tier-1

Disk usage by LHC experiments at WLCG tier-1s (Jan-Dec 2009)

| Tier-1 | Share of disk storage at WLCG tier-1s in 2009 |
|---|---|
| US-T1-ATLAS | 19% |
| US-FNAL-CMS | 18% |
| FR-CCIN2P3 | 13% |
| DE-KIT | 13% |
| UK-T1-RAL | 7% |
| ES-PIC | 6% |
| NDGF | 6% |
| IT-INFN-T1 | 6% |
| TW-ASGC | 5% |
| NL-T1 | 4% |
| CA-TRIUMF | 3% |

Source: WLCG CERN & tier-1s accounting report 2009 http://lcg.web.cern.ch/LCG/accounts.htm

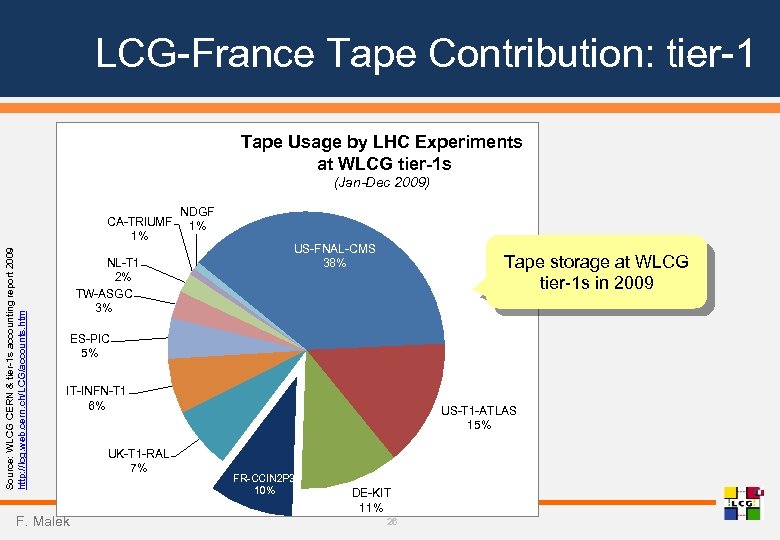

LCG-France Tape Contribution: tier-1

Tape usage by LHC experiments at WLCG tier-1s (Jan-Dec 2009)

| Tier-1 | Share of tape storage at WLCG tier-1s in 2009 |
|---|---|
| US-FNAL-CMS | 38% |
| US-T1-ATLAS | 15% |
| DE-KIT | 11% |
| FR-CCIN2P3 | 10% |
| UK-T1-RAL | 7% |
| IT-INFN-T1 | 6% |
| ES-PIC | 5% |
| TW-ASGC | 3% |
| NL-T1 | 2% |
| CA-TRIUMF | 1% |
| NDGF | 1% |

Source: WLCG CERN & tier-1s accounting report 2009 http://lcg.web.cern.ch/LCG/accounts.htm

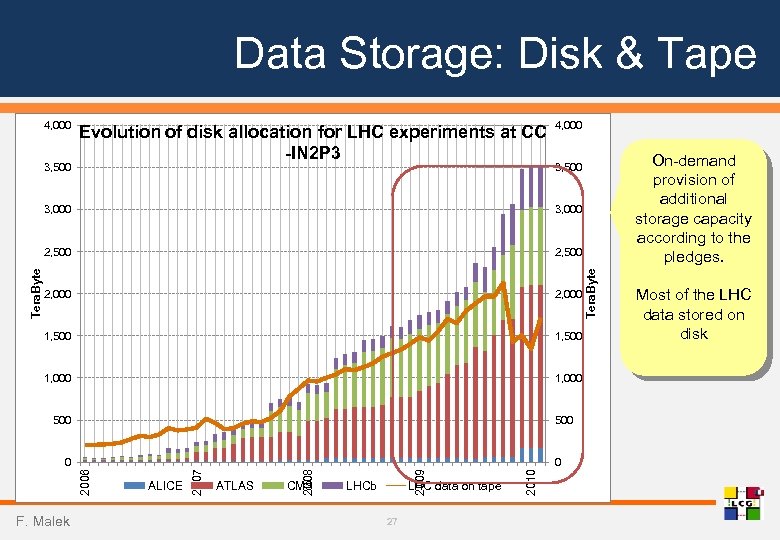

Data Storage: Disk & Tape

Charts: evolution of disk allocation for LHC experiments at CC-IN2P3, and LHC data on tape; x-axis: 2006 to 2010; y-axis: TeraByte, 0 to 4,000; series: ALICE, ATLAS, CMS, LHCb.

- On-demand provision of additional storage capacity according to the pledges.
- Most of the LHC data stored on disk.

Data Processing at CC-IN2P3

- Storage chain composed of 3 main components
  - dCache: disk-based cache system exposing gridified interfaces
    - One instance serving the 4 LHC experiments
  - HPSS: mass storage system, used as a permanent storage back-end for dCache
    - One instance for all the experiments served by CC-IN2P3
  - TReqS: mediator between dCache and HPSS for scheduling access to tapes for optimisation purposes
    - To minimize tape mounts/dismounts and to optimize the way of reading files within a single tape
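The idea behind a mediator that minimizes tape mounts and optimizes reads within a tape can be illustrated with a small scheduling sketch. This is not the TReqS implementation, whose internals the slide does not describe. It assumes that each pending request is known together with the tape holding the file and the file's position on that tape; `FileRequest`, `schedule`, the paths and the tape labels are all hypothetical, and serving the busiest tape first is an arbitrary policy choice.

```python
from collections import defaultdict
from typing import NamedTuple


class FileRequest(NamedTuple):
    path: str       # file requested by the disk cache (hypothetical path)
    tape: str       # label of the tape holding the file (hypothetical)
    position: int   # position of the file on that tape


def schedule(requests: list[FileRequest]) -> list[tuple[str, list[str]]]:
    """Group pending requests per tape and order each group by position.

    Each tape then needs a single mount, and its files are read in one
    forward pass instead of seeking back and forth.
    """
    by_tape: dict[str, list[FileRequest]] = defaultdict(list)
    for req in requests:
        by_tape[req.tape].append(req)

    plan = []
    # Arbitrary policy: serve the tape with the most pending files first,
    # breaking ties by tape label so the result is deterministic.
    for tape, reqs in sorted(by_tape.items(), key=lambda kv: (-len(kv[1]), kv[0])):
        ordered = sorted(reqs, key=lambda r: r.position)
        plan.append((tape, [r.path for r in ordered]))
    return plan


pending = [
    FileRequest("/lhc/a", "T002", 40),
    FileRequest("/lhc/b", "T001", 70),
    FileRequest("/lhc/c", "T002", 5),
    FileRequest("/lhc/d", "T001", 10),
    FileRequest("/lhc/e", "T002", 22),
]
plan = schedule(pending)
for tape, paths in plan:
    print(tape, paths)
```

Served in arrival order, the five requests above would alternate between the two tapes and require five mounts; grouped this way, each tape is mounted once.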

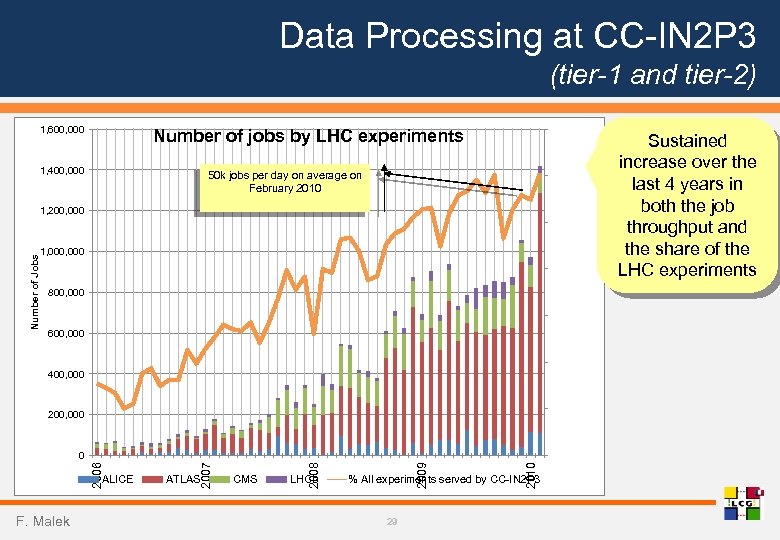

Data Processing at CC-IN2P3 (tier-1 and tier-2)

Chart: number of jobs by LHC experiments (left axis: number of jobs, 0 to 1,600,000) and share of all experiments served by CC-IN2P3 (right axis: % of all experiments, 0% to 70%); x-axis: 2006 to 2010; series: ALICE, ATLAS, CMS, LHCb.

- 50k jobs per day on average in February 2010
- Sustained increase over the last 4 years in both the job throughput and the share of the LHC experiments

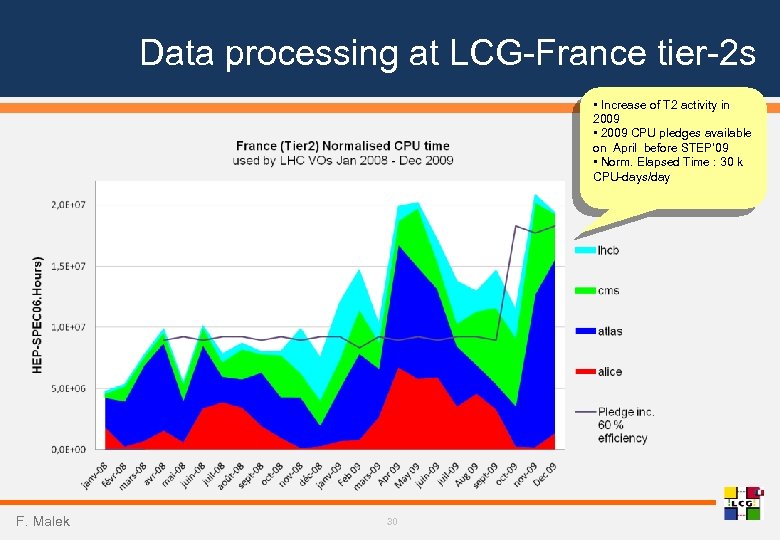

Data processing at LCG-France tier-2s

- Increase of T2 activity in 2009
- 2009 CPU pledges available in April, before STEP’09
- Normalised elapsed time: 30k CPU-days/day

Perspectives

- With the startup of the LHC, we entered the operational phase of the WLCG infrastructure. Not yet a "real" experience!
- Priority is now to make the grid more usable for the individual scientist, in particular for data analysis
  - We need to improve our collective understanding of requirements for LHC data analysis activities
  - Improve the integration of the national analysis farm with the grid, in particular regarding the import and export of data samples

Conclusions

- Significant amount of work performed both by experiments and by sites during the last year
  - Improvements in the service were measured
- Data distribution and most data processing activities on the grid platform are understood and have been routinely exercised
  - Understanding the needs of analysis still requires more work and will be the priority from now on
- LCG-France has contributed to creating a community of IN2P3 & Irfu people involved in processing the data coming out of the LHC
  - All the efforts made in the framework of this project over the last several years should directly benefit the physics research community, which now has interesting real data just coming out of the accelerator
  - The French contribution to the worldwide LCG community is undoubtedly visible

Back-up slides

Information Flow

- Several communication channels established and regularly used
  - Tier-2 and tier-3s monthly meetings, often involving experiment representatives
  - Regular meetings with tier-1 experts, mainly for ATLAS and CMS
  - WLCG collaboration workshops, tier-1 and tier-2s jamborees at CERN and elsewhere
  - Close interaction with associated foreign tier-2s, in particular Univ. Tokyo (Japan) for ATLAS and IHEP (China) for ATLAS and CMS
  - Wiki, the main tool for sharing technical information: http://lcg.in2p3.fr

Technical Work on Various Fronts

- Introduction of a new unit for CPU performance measurement (HEP-SPEC06)
  - Impact on equipment purchase, accounting, pledges, publication of site information
- Improvements in the publication of the installed CPU and storage capacities at sites, to allow for an automated collection of this information by WLCG
- Migration to Scientific Linux v5 at all sites, in particular for worker nodes
- Introduction in production of the next generation of computing elements (CREAM)
  - Initially requested by ALICE; other experiments are currently integrating it with their job management software stacks
- Working groups on CPU accounting and monitoring at the national level
  - As a result, permission obtained from CNIL for storing and exploiting information for user-level accounting purposes in all the French grid sites associated with the Institut des Grilles
  - NAGIOS-based national monitoring infrastructure being deployed. It will collect monitoring information for all French sites and feed the European-wide repository in the EGI era

National Analysis Farm: current status

- Dedicated PROOF-based 160-core farm for interactive analysis
  - Data stored in dedicated xrootd servers (20 TB) connected to the IP-based local area network
  - Bandwidth between compute nodes and storage servers seems to be the current limiting factor
- Well suited to and integrated with ALICE tools
- However, integration with the grid world is not yet good enough
  - Manual copies of data to/from the farm disk servers are currently necessary
- ATLAS physicists at CPPM starting to look at this platform
  - First experience reported early March 2010
  - A national analysis facility could complement the analysis farms located at the laboratory, in particular when big data samples are to be processed
- Feedback from other experiments would be very welcome

National Analysis Farm: ongoing work

- Currently working on upgrading the hardware infrastructure, in particular to increase the data bandwidth between compute nodes and storage servers
  - Deploying a pilot configuration with a storage area network at 10 Gbps over iSCSI
  - Total volume: 20 TB
  - Number of cores: 128 (in 16 blades)