
- Number of slides: 42

Latent Semantic Analysis John Martin Small Bear Technologies, Inc. <John.Martin@SmallBearTechnologies.com> www.SmallBearTechnologies.com

Problem

Don't just search. . . Understand

The Goal
- Maintaining and increasing the value of information by facilitating the understanding of meaning
- Collecting information is not the problem – gaining understanding is the issue
- Too much information is just as useless as too little information

© 2011 Small Bear Technologies, Inc.

Some Terms
- Data – individual items or facts
- Information – data with context
- Meaning – how we understand collections of information

Unstructured Text
- News feeds
- Call center logs
- E-mail traffic
- Surveys
- Social network postings
- Publishing
- Observational data

Automated Methods Required
- Volume of information
- Speed of change/production
- Complexity
- Need impartial/consistent analysis

Some Common Methods
- Lexical matching
- Statistical evaluation
- Vector space models
- Rule-based systems
- Parts of speech analysis

The Problem
- Failure to capture meaning and provide insight
- Methods not universally applicable
  - Language or domain dependent
- Need for specialized prior knowledge of data
- Require human interaction
  - Tagging, keyword identification, categorizations
- Not practical for large data sets

The Cost
- Too much information is just as useless as too little information
- Failure to understand the information we have leads to:
  - Lost opportunities
  - Unsatisfied customers
  - Inability to fulfill mission
  - Financial repercussions


Latent Semantic Analysis
- Theory of meaning [8]
- Creates a mapping of meaning acquired from the text itself
- Computational model
- Can perform many of the cognitive tasks that humans do essentially as well as humans [7]

A Cognitive Model
- LSA processing constructs a mapping of meaning in a semantic space
- The mapping gives the meaning of words and documents, not vice versa

Compositionality Constraint
- The meaning of a document is the sum of the meaning of its words
- The meaning of a word is defined by the documents in which it appears (and does not appear)

LSA Space Construction
- LSA models a document as a simple linear equation
- A collection of documents (corpus) is a large set of simultaneous equations
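One way to write the "simple linear equation" for a document is sketched here. The symbols are assumptions introduced for illustration and do not appear in the slides: $\mathbf{w}_i$ is the unknown meaning vector of word type $i$, $\mathbf{d}_j$ is the unknown meaning vector of document $j$, and $a_{ij}$ is the (possibly weighted) count of word $i$ in document $j$.

```latex
\[
  \mathbf{d}_j \;=\; \sum_{i=1}^{m} a_{ij}\,\mathbf{w}_i ,
  \qquad j = 1, \dots, n
\]
```

With $m$ word types and $n$ documents, the corpus contributes $n$ such equations that share the same $m$ word unknowns, which is the sense in which it forms a large set of simultaneous equations.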

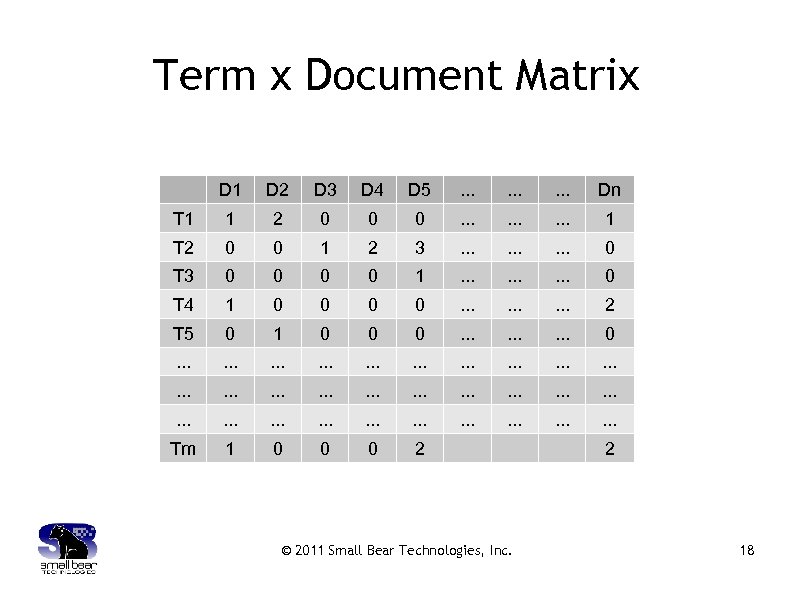

Processing a Corpus [5]
- Divide text corpus into units (documents)
  - Typically paragraphs of text
- Raw matrix is constructed from units
  - One row for each word type
  - One column for each unit (document)
  - Cells contain the number of times a particular word appears in a particular document
- Weighting functions may be applied
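The construction described on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the presenter's implementation: the whitespace tokenizer, the three sample documents, and the function names `build_term_document_matrix` and `log_weight` are all assumptions, and `log(1 + count)` stands in for whichever weighting function is actually applied.

```python
from collections import Counter
import math


def build_term_document_matrix(documents):
    """Return (terms, matrix) where matrix[i][j] counts term i in document j."""
    # One Counter per document; tokenizing on whitespace is a simplification.
    counts = [Counter(doc.lower().split()) for doc in documents]
    # One row per distinct word type, in sorted order for reproducibility.
    terms = sorted(set().union(*counts))
    matrix = [[c[t] for c in counts] for t in terms]
    return terms, matrix


def log_weight(matrix):
    """Apply a simple local weighting, log(1 + count), to every cell."""
    return [[math.log(1 + v) for v in row] for row in matrix]


# Hypothetical three-document corpus, for illustration only.
docs = ["the cat sat", "the dog sat", "the cat chased the dog"]
terms, raw = build_term_document_matrix(docs)
weighted = log_weight(raw)

for term, row in zip(terms, raw):
    print(f"{term:8s} {row}")
```

Rows correspond to word types and columns to documents, matching the layout the slide describes; zero counts stay zero under this weighting, so sparsity is preserved.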

Term x Document Matrix
D1 D2 D3 D4 D5 . . Dn
T1 1 2 0 0 0 . . 1
T2 0 0 1 2 3 . . 0
T3 0 0 1 . . 0
T4 1 0 0 . . 2
T5 0 1 0 0 0 . .
. . . .
Tm 1 0 0 0 2 2

Sparse Matrix [2]
- The weighted term by document matrix represents a large set of simultaneous equations
- The term by document matrix is sparse
- Typically less than 1% of the values are nonzero
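To make the sparsity point concrete, the sketch below stores only the nonzero cells of a small matrix and reports the fraction of cells that are nonzero. The dictionary-of-keys layout, the function names, and the `toy` matrix are assumptions chosen for illustration; a real corpus would be far larger and far sparser than this example.

```python
def to_sparse(matrix):
    """Keep only nonzero cells as a {(row, col): value} dictionary."""
    return {
        (i, j): v
        for i, row in enumerate(matrix)
        for j, v in enumerate(row)
        if v != 0
    }


def density(matrix):
    """Fraction of cells that are nonzero (0.0 for an empty matrix)."""
    rows = len(matrix)
    cols = len(matrix[0]) if rows else 0
    total = rows * cols
    return len(to_sparse(matrix)) / total if total else 0.0


# Hypothetical 4 x 5 term-by-document counts, for illustration only.
toy = [
    [1, 2, 0, 0, 0],
    [0, 0, 1, 2, 3],
    [0, 0, 1, 0, 0],
    [1, 0, 0, 0, 0],
]
sparse = to_sparse(toy)
print(len(sparse), "nonzero cells, density", density(toy))
```

Storing only the nonzero entries is what makes matrices with hundreds of thousands of columns tractable when, as the slide notes, fewer than 1% of the cells hold a value.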

Solving Simultaneous Equations
- The system of simultaneous equations is solved for the meaning of each word type and document
- Sparse matrix Singular Value Decomposition
  - Lanczos algorithm is typically used
- Only solve for a reduced number of dimensions
- Produces vectors representing the meaning of each term and document
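The reduced-dimension solve can be illustrated on a toy matrix. Note the substitution: the slide names a sparse Lanczos solver, whereas this sketch uses NumPy's dense `numpy.linalg.svd` and simply discards all but the first `k` singular triplets, which is only practical for small inputs. The matrix `A`, the helper name `truncated_svd`, and the choice `k=2` are assumptions for illustration.

```python
import numpy as np


def truncated_svd(A, k):
    """Return (U_k, s_k, V_k): the k largest singular triplets of A.

    Uses a dense SVD and slices it; a sparse iterative solver would
    compute only these k triplets directly.
    """
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return U[:, :k], s[:k], Vt[:k, :].T


# Hypothetical 5-term x 3-document count matrix.
A = np.array(
    [
        [1, 0, 1],
        [0, 0, 1],
        [0, 1, 1],
        [1, 1, 0],
        [1, 1, 2],
    ],
    dtype=float,
)

U_k, s_k, V_k = truncated_svd(A, k=2)

# Rank-k reconstruction of the original matrix.
A_k = U_k @ np.diag(s_k) @ V_k.T

print("term vectors:", U_k.shape)      # one row per term
print("document vectors:", V_k.shape)  # one row per document
```

Each row of `U_k` is a k-dimensional vector for a term and each row of `V_k` is one for a document, which corresponds to the slide's final bullet.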


Singular Value Decomposition [10]
- The rows of matrix U are the vectors for the word types
- Columns of U are the eigenvectors defining the axes for word type space


Singular Value Decomposition [10]
- The rows of matrix V are the text unit (document) vectors
- Columns of V are eigenvectors defining the axes for document space
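The roles of U and V can be stated compactly in standard notation. The symbols are assumed here, since the slides do not name them: $A$ is the $m \times n$ weighted term-by-document matrix, $\Sigma$ is the diagonal matrix of singular values, and $k$ is the number of retained dimensions.

```latex
\[
  A = U \Sigma V^{T}, \qquad
  A A^{T} = U \Sigma^{2} U^{T}, \qquad
  A^{T} A = V \Sigma^{2} V^{T}
\]
\[
  A_k = U_k \Sigma_k V_k^{T}
\]
```

The second and third identities show in what sense the columns of $U$ and $V$ are eigenvectors: they are eigenvectors of the symmetric matrices $A A^{T}$ (term space) and $A^{T} A$ (document space), with eigenvalues equal to the squared singular values.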

Orthogonal Axes
- Every dimension is independent of every other dimension
- In the Term/Document matrix direct comparison was not possible

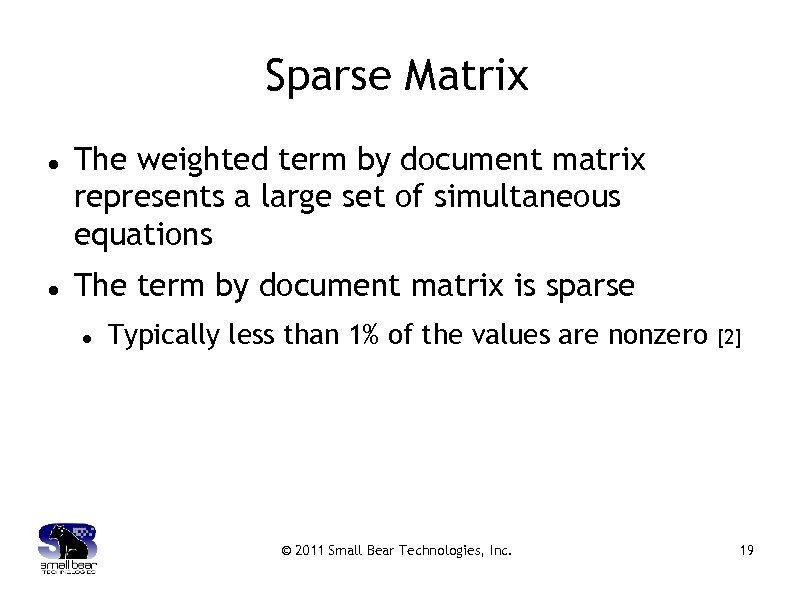

Dimensional Reduction
- With enough variables every object is different
- With too few variables every object is the same
- [a r f j] [a r c g]
- Typically solve for 300–500 dimensions [10]

Consider a geographic map
(Figure: map panels labeled Knoxville, Cincinnati, Nashville, and Atlanta)

Semantic Space
- Vectors represent the meaning of a document (or term)
- Items similar in meaning are near each other in the semantic space
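Nearness in the semantic space is commonly measured with the cosine of the angle between two vectors; the slides do not name a specific measure, so treating cosine as the metric is an assumption. The sketch below ranks a few hypothetical two-dimensional document vectors by similarity to a query vector. The vector values and the names `cosine_similarity` and `nearest` are illustrative only.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between u and v; 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def nearest(query, vectors):
    """Return item names sorted from most to least similar to query."""
    return sorted(
        vectors,
        key=lambda name: cosine_similarity(query, vectors[name]),
        reverse=True,
    )


# Hypothetical 2-dimensional document vectors (real spaces use hundreds).
vectors = {
    "doc_a": [1.0, 0.1],
    "doc_b": [0.9, 0.2],
    "doc_c": [-0.2, 1.0],
}
ranked = nearest(vectors["doc_a"], vectors)
print(ranked)
```

Because term and document vectors live in the same reduced space, the same function can compare term to term, document to document, or term to document.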


Computational Issues
- Nontrivial computation
  - Large sparse symmetric eigenproblem
- Scalability concerns [11]
  - Size of document set
  - Speed of processing
- Accuracy issues
  - Finite-precision arithmetic introduces significant error

Misconceptions and Misunderstandings
- Driven by term co-occurrence
- Word order issues
- Data collection size
- Content and meaning

Co-occurrence
- LSA starts with a kind of co-occurrence
- Appearing in the same document does not make words similar
- Similarity is determined by the effect of the word meaning on the system of equations

Word Order
- Word order effects occur almost entirely within single sentences
- Research indicates only around 10% of meaning is word order dependent (for English)
- [“order syntax? Much. Ignoring word Missed by is how”] [8]

Data Collection Size
- Beware of small data collections
- Generally, use at least 100,000 documents

Content
- LSA builds its notion of meaning from the content of the data collection
- Garbage In = Garbage Out

The Problem Revisited
- Failure to capture meaning and provide insight
- Methods not universally applicable
- Need for specialized prior knowledge of data
- Require human interaction
- Not practical for large data sets

Operations
- Retrieval
- Clustering
- Comparison
- Interpretation
- Completion

Applications of LSA
- Library illustration
  - Retrieval
  - Content analysis
  - Evaluation of “fit” into an existing collection
  - Comparison of multiple collections
  - Indexing of multilingual collections

Applications of LSA
- Repairing/cleaning data
- Education
  - Grading
  - Summarizing
- Non-textual applications
  - Bio-informatics
  - Personality profiles/compatibility analysis

Conclusion
- LSA offers powerful capabilities for gaining insight and understanding the contents of a data collection
- LSA provides analysis techniques not available with other text analytics methods
- Small Bear Technologies provides the core technology, tools, and support for performing Latent Semantic Analysis

Suggested Reading
- Handbook of Latent Semantic Analysis; Landauer, T., McNamara, D., Dennis, S., Kintsch, W., Eds.; Lawrence Erlbaum Associates, Inc.: Mahwah, New Jersey, 2007.
- “Indexing by Latent Semantic Analysis” Deerwester, S.; Dumais, S.; Furnas, G.; Landauer, T.; Harshman, R., Journal of the American Society for Information Science 1990, 41, 391–407.
- “Improving the retrieval of information from external sources” Dumais, S., Behavior Research Methods, Instruments, & Computers 1991, 23, 229–236.
- “An Introduction to Latent Semantic Analysis” Landauer, T.; Foltz, P.; Laham, D., Discourse Processes 1998, 259–284.
- “A solution to Plato's problem: The Latent Semantic Analysis Theory of acquisition, induction, and representation of Knowledge” Landauer, T.; Dumais, S., Psychological Review 1997, 104, 211–240.


References
[1] Berry, M. W., Large Sparse Singular Value Computations. In International Journal of Supercomputer Applications, 1992, Vol. 6, pp. 13–49.
[2] Berry, M. W., & Browne, M., Understanding Search Engines: Mathematical Modeling and Text Retrieval (2nd ed.). SIAM, Philadelphia, 2005.
[3] Berry, M. W., & Martin, D., Principal Component Analysis for Information Retrieval Applications. In Statistics: A Series of Textbooks and Monographs: Handbook of Parallel Computing and Statistics, Chapman & Hall/CRC, Boca Raton, 2005, pp. 399–413.
[4] Deerwester, S., Dumais, S., Furnas, G., Landauer, T., & Harshman, R., Indexing by Latent Semantic Analysis. In Journal of the American Society for Information Science, 1990, Vol. 41, pp. 391–407.
[5] Dumais, S., Improving the Retrieval of Information from External Sources. In Behavior Research Methods, Instruments, and Computers, 1991, Vol. 23, pp. 229–236.


References (cont.)
[6] Grimes, S., Text Analytics 2009: User Perspectives on Solutions and Providers. Alta Plana Research, 2009. http://altaplana.com/TA2009
[7] Landauer, T. K., On the Computational Basis of Cognition: Arguments from LSA. In The Psychology of Learning and Motivation, B. H. Ross (Ed.), Academic Press, New York, 2002, pp. 43–84.
[8] Landauer, T. K., LSA as a Theory of Meaning. In The Handbook of Latent Semantic Analysis, Landauer, McNamara, Dennis, & Kintsch (Eds.), Lawrence Erlbaum Associates, Inc., Mahwah, New Jersey, 2007, pp. 3–34.
[9] Landauer, T. K., & Dumais, S., A Solution to Plato's Problem: The Latent Semantic Analysis Theory of Acquisition, Induction, and Representation of Knowledge. In Psychological Review, 1997, Vol. 104, pp. 211–240.
[10] Martin, D., & Berry, M., Mathematical Foundations Behind Latent Semantic Analysis. In The Handbook of Latent Semantic Analysis, Landauer, McNamara, Dennis, & Kintsch (Eds.), Lawrence Erlbaum Associates, Inc., Mahwah, New Jersey, 2007, pp. 35–55.


References (cont.)
[11] Martin, D., Martin, J., Berry, M., & Browne, M., Out-of-Core SVD Performance for Document Indexing. In Applied Numerical Mathematics, 2007, Vol. 14, No. 10.

John Martin Small Bear Technologies, Inc. <John.Martin@SmallBearTechnologies.com> www.SmallBearTechnologies.com
