62f31d82ee02189ee58f59b35541ea74.ppt

- Number of slides: 24

Knowledge Engineering for Bayesian Networks

Ann Nicholson, School of Computer Science and Software Engineering, Monash University

Overview

- The BN Knowledge Engineering Process
  - focus on combining expert elicitation and automated methods
- Case Study I: Seabreeze prediction
- Case Study II: Intelligent Tutoring System for decimal misconceptions
- Conclusions

Elicitation from experts

- Variables
  - important variables? values/states?
- Structure
  - causal relationships?
  - dependencies/independencies?
- Parameters (probabilities)
  - quantify relationships and interactions?
- Preferences (utilities) (for decision networks)

Expert Elicitation Process

- These stages are done iteratively
- The process stops when further expert input is no longer cost effective
- The process is difficult and time consuming

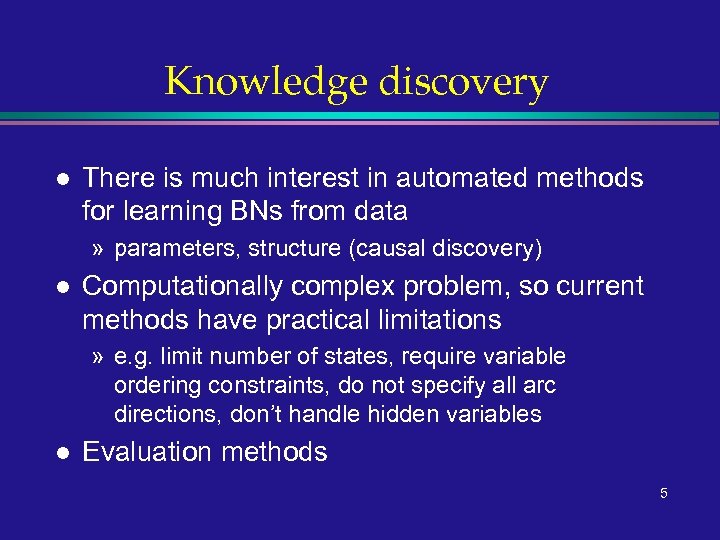

Knowledge discovery

- There is much interest in automated methods for learning BNs from data
  - parameters, structure (causal discovery)
- Computationally complex problem, so current methods have practical limitations
  - e.g. limit number of states, require variable ordering constraints, do not specify all arc directions, don't handle hidden variables
- Evaluation methods

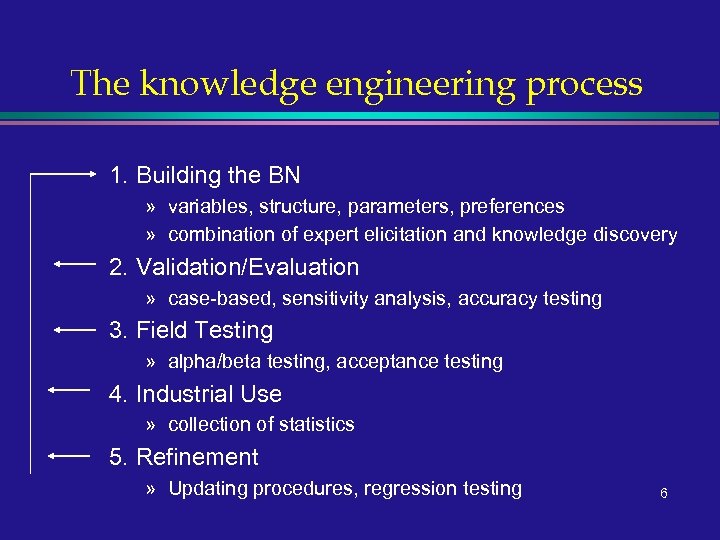

The knowledge engineering process

1. Building the BN
   - variables, structure, parameters, preferences
   - combination of expert elicitation and knowledge discovery
2. Validation/Evaluation
   - case-based, sensitivity analysis, accuracy testing
3. Field Testing
   - alpha/beta testing, acceptance testing
4. Industrial Use
   - collection of statistics
5. Refinement
   - updating procedures, regression testing

Case Study: Seabreeze prediction

- Joint project with Bureau of Meteorology
  - (Kennett, Korb & Nicholson, PAKDD 2001)
- Goal: proof of concept; test ideas about integration of automated learners & elicitation
- What is a seabreeze? (separate picture)

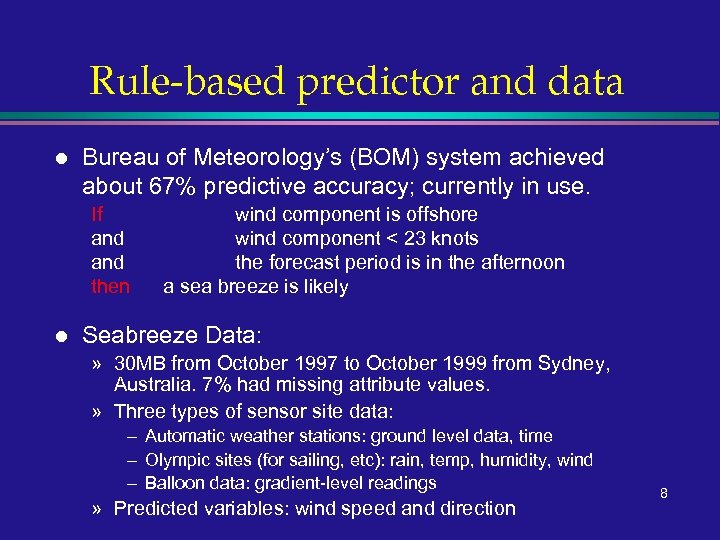

Rule-based predictor and data

- Bureau of Meteorology's (BOM) system achieved about 67% predictive accuracy; currently in use.
  - If wind component is offshore and wind component < 23 knots and the forecast period is in the afternoon, then a sea breeze is likely
- Seabreeze data:
  - 30 MB from October 1997 to October 1999 from Sydney, Australia. 7% had missing attribute values.
  - Three types of sensor site data:
    - Automatic weather stations: ground level data, time
    - Olympic sites (for sailing, etc.): rain, temp, humidity, wind
    - Balloon data: gradient-level readings
  - Predicted variables: wind speed and direction
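The three-condition rule above can be written as a single predicate. This is only a sketch of the rule as stated on the slide, not the BOM system itself; the function name, argument names, and the definition of "afternoon" as 12:00 to 17:59 are assumptions made for illustration.

```python
def sea_breeze_likely(offshore: bool, wind_speed_knots: float, hour: int) -> bool:
    """Illustrative encoding of the slide's rule-based predictor.

    Assumption: 'afternoon' means 12 <= hour < 18 local time; the slide
    does not define the boundaries of the forecast period.
    """
    in_afternoon = 12 <= hour < 18
    # All three conditions must hold for the rule to fire.
    return offshore and wind_speed_knots < 23 and in_afternoon


if __name__ == "__main__":
    print(sea_breeze_likely(True, 10.0, 14))   # offshore, light wind, afternoon
    print(sea_breeze_likely(True, 30.0, 14))   # wind component too strong
```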

Methodology

1. Expert elicitation. Using variables with available data, forecasters provided causal relations between them.
2. Tetrad II (Spirtes et al., 1993) uses the Verma-Pearl algorithm (1991) with significance testing to recover causal structure. (NB: usability problems)
3. CaMML (Wallace and Korb, 1999) uses Minimum Message Length (MML) to discover causal structure.

- BNs for seabreeze predictions (see separate slide)
  - All parameterization was performed by Netica, keeping the different methods on an equal footing.
  - Uses simple counting over training data to estimate conditional probabilities (Spiegelhalter & Lauritzen, 1990)
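To illustrate what "simple counting over training data" can look like, here is a minimal sketch of frequency-based estimation of a conditional probability table. It does not reproduce Netica's implementation; the function `estimate_cpt`, the optional `prior_count` smoothing parameter, and the variable names in the example are hypothetical.

```python
from collections import Counter, defaultdict


def estimate_cpt(records, child, parents, child_states, prior_count=0.0):
    """Estimate P(child | parents) by counting over complete records.

    records: list of dicts mapping variable name -> observed state.
    Records with a missing (None or absent) value for the child or any
    parent are skipped. prior_count is an optional pseudo-count per state.
    """
    counts = defaultdict(Counter)
    for record in records:
        values = [record.get(name) for name in (child, *parents)]
        if any(v is None for v in values):
            continue  # skip cases with missing attribute values
        counts[tuple(values[1:])][values[0]] += 1

    cpt = {}
    for config, state_counts in counts.items():
        total = sum(state_counts.values()) + prior_count * len(child_states)
        cpt[config] = {
            s: (state_counts[s] + prior_count) / total for s in child_states
        }
    return cpt


if __name__ == "__main__":
    # Hypothetical variables, for illustration only.
    data = [
        {"gradient_wind": "NE", "ground_wind": "E"},
        {"gradient_wind": "NE", "ground_wind": "E"},
        {"gradient_wind": "NE", "ground_wind": "W"},
        {"gradient_wind": "SW", "ground_wind": None},  # skipped
    ]
    print(estimate_cpt(data, "ground_wind", ["gradient_wind"], ["E", "W"]))
```

Parent configurations that never occur in the data get no row in this sketch; a real tool needs some policy for them, such as a uniform default.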

Predictive accuracy

- Instead of seabreeze existence prediction, we substituted a more demanding task: prediction of wind direction at ground level
- From this (and gradient-level wind direction), seabreezes can be inferred.
- Training/testing regime
  - randomly select 80% of data for training
  - use remainder for testing accuracy
- Results
  - See separate slide (comparison of airport site type network versions)
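The training/testing regime above amounts to a random 80/20 split followed by an accuracy count on the held-out cases. The sketch below shows one way to do this with the standard library; the helper names, the fixed seed, and the `wind_dir` field are assumptions for illustration, not details from the study.

```python
import random


def split_train_test(cases, train_fraction=0.8, seed=0):
    """Randomly split cases into (train, test) without modifying the input."""
    shuffled = list(cases)
    random.Random(seed).shuffle(shuffled)
    cut = int(round(train_fraction * len(shuffled)))
    return shuffled[:cut], shuffled[cut:]


def predictive_accuracy(predict, test_cases, target):
    """Fraction of test cases whose target value is predicted correctly."""
    if not test_cases:
        raise ValueError("no test cases")
    correct = sum(1 for case in test_cases if predict(case) == case[target])
    return correct / len(test_cases)


if __name__ == "__main__":
    # Hypothetical cases: every observation has ground-level direction "E".
    cases = [{"id": i, "wind_dir": "E"} for i in range(10)]
    train, test = split_train_test(cases)
    print(len(train), len(test))
    print(predictive_accuracy(lambda case: "E", test, "wind_dir"))
```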

Predictive accuracy conclusions

- Elicited and discovered nets (MML + Tetrad II) are systematically superior to the BOM rule-based (RB) predictor
- Discovered networks are superior to elicited nets in the first 3 hrs (confidence intervals are ~10%)
- Strong time component to accuracy

Adaptation: Incremental learning

- Learn structure from first year's data (using MML)
- Reparameterise nets over second year's data, while predicting seabreezes
  - greedy search yielded a time decay factor of e-t 0.05
- Results (see separate slides)
  - comparison of incremental and normal training methods by BN type and by time of year
  - incremental performed better
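One common way to reparameterise incrementally with a time decay is to down-weight old counts exponentially before adding each new observation. The sketch below illustrates that general idea only. The class `DecayedCounts`, the weighting form `exp(-decay_rate * elapsed)`, and the rate 0.05 in the example are assumptions: the slide's decay factor is garbled in extraction, so its exact form is not known here.

```python
import math


class DecayedCounts:
    """Exponentially decayed state counts for one parent configuration."""

    def __init__(self, states, decay_rate):
        self.states = list(states)
        self.decay_rate = decay_rate  # hypothetical parameter
        self.weights = {s: 0.0 for s in self.states}

    def update(self, observed_state, elapsed=1.0):
        """Age all existing counts, then add the new observation with weight 1."""
        factor = math.exp(-self.decay_rate * elapsed)
        for s in self.states:
            self.weights[s] *= factor
        self.weights[observed_state] += 1.0

    def probabilities(self):
        """Normalised decayed counts; uniform if nothing has been observed."""
        total = sum(self.weights.values())
        if total == 0.0:
            return {s: 1.0 / len(self.states) for s in self.states}
        return {s: w / total for s, w in self.weights.items()}


if __name__ == "__main__":
    counts = DecayedCounts(["E", "W"], decay_rate=0.05)  # illustrative rate
    for state in ["W", "W", "E", "E", "E"]:
        counts.update(state)
    print(counts.probabilities())
```

With a decay rate of zero this reduces to plain counting, which makes the two training methods easy to compare under the same code path.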

Case Study II: Intelligent tutoring

- Tutoring domain: primary and secondary school students' misconceptions about decimals
- Based on Decimal Comparison Test (DCT)
  - student asked to choose the larger of pairs of decimals
  - different types of pairs reveal different misconceptions
- The ITS involves computer games involving decimals
- This research also looks at a combination of expert elicitation and automated methods

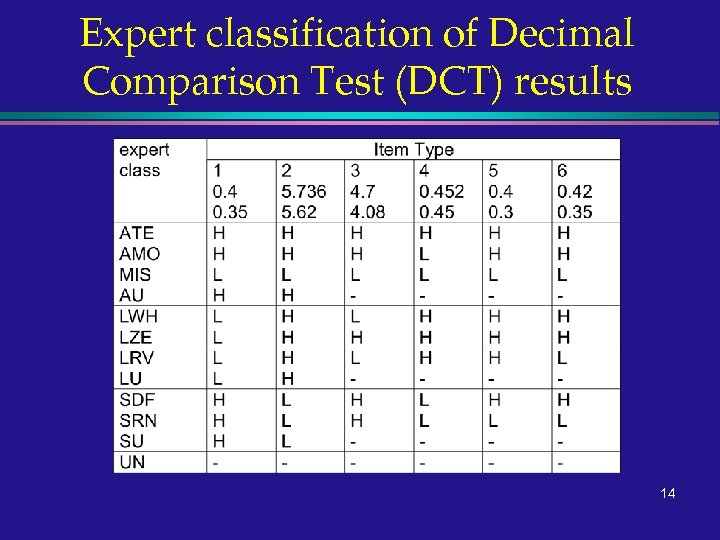

Expert classification of Decimal Comparison Test (DCT) results

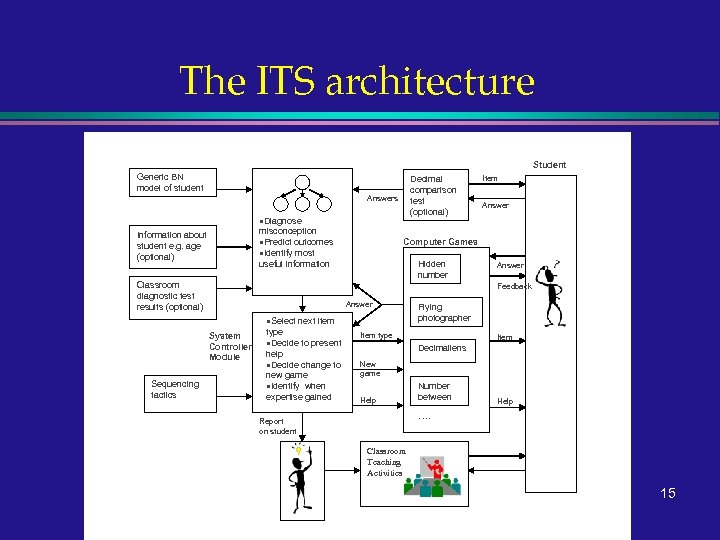

The ITS architecture

[Figure: ITS architecture. Components shown: Adaptive Bayesian Network (generic BN model of student; diagnoses misconception, predicts outcomes, identifies most useful information); optional inputs (Decimal Comparison Test answers, information about student e.g. age, classroom diagnostic test results); System Controller Module with sequencing tactics (select next item, decide to present help, decide change to new game, identify when expertise gained); computer games (Hidden Number, Flying Photographer, Decimaliens, Number Between, ...) exchanging item types, items, answers, feedback and help; outputs to the teacher (report on student, classroom teaching activities).]

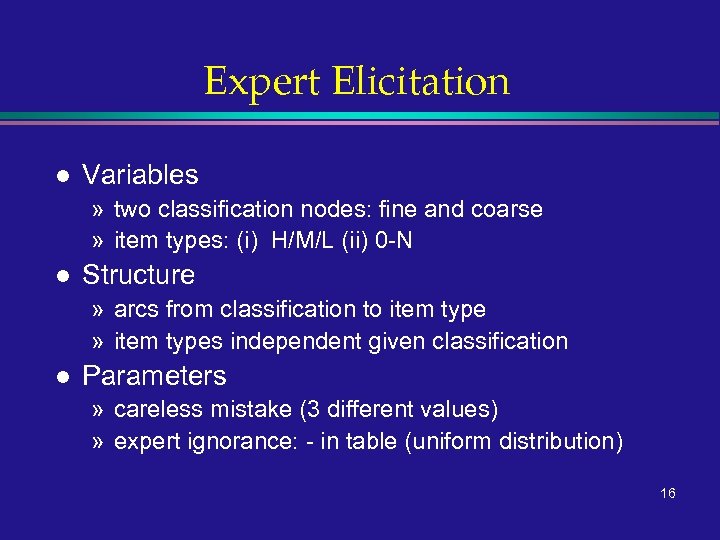

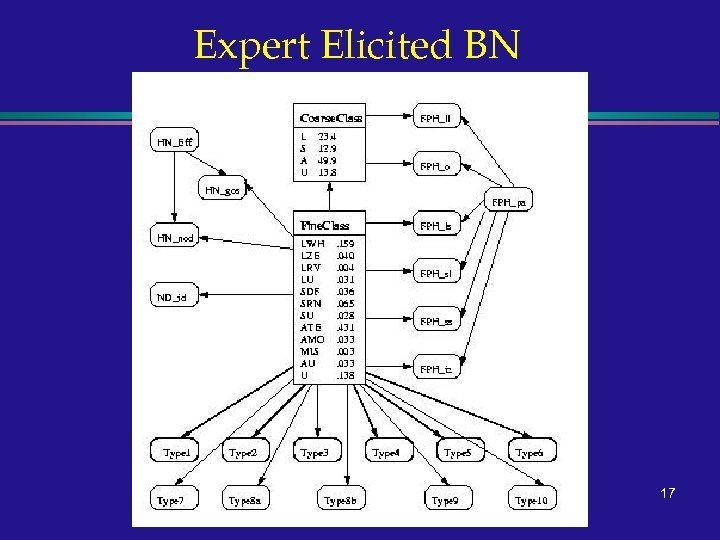

Expert Elicitation

- Variables
  - two classification nodes: fine and coarse
  - item types: (i) H/M/L (ii) 0-N
- Structure
  - arcs from classification to item type
  - item types independent given classification
- Parameters
  - careless mistake (3 different values)
  - expert ignorance: "-" in table (uniform distribution)
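With arcs only from the classification node to the item types, and item types independent given the classification, the posterior over classes factorises as the prior times a product of per-item-type likelihoods. The sketch below works through that computation. The class names `C1`/`C2`, item types `T1`/`T2`, and the careless-mistake probability of 0.1 are hypothetical and are not taken from the elicited network.

```python
def class_posterior(prior, likelihood, evidence):
    """Posterior over classes when item types are independent given the class.

    prior:      {class: P(class)}
    likelihood: {class: {item_type: {outcome: P(outcome | class)}}}
    evidence:   {item_type: observed outcome}
    """
    unnormalised = {}
    for cls, p in prior.items():
        for item_type, outcome in evidence.items():
            p *= likelihood[cls][item_type][outcome]
        unnormalised[cls] = p
    z = sum(unnormalised.values())
    if z == 0.0:
        raise ValueError("evidence has zero probability under every class")
    return {cls: v / z for cls, v in unnormalised.items()}


if __name__ == "__main__":
    # Hypothetical two-class, two-item-type model; 0.1 stands in for a
    # careless-mistake probability.
    likelihood = {
        "C1": {"T1": {"H": 0.9, "L": 0.1}, "T2": {"H": 0.9, "L": 0.1}},
        "C2": {"T1": {"H": 0.9, "L": 0.1}, "T2": {"H": 0.1, "L": 0.9}},
    }
    prior = {"C1": 0.5, "C2": 0.5}
    print(class_posterior(prior, likelihood, {"T1": "H", "T2": "L"}))
```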

Expert Elicited BN

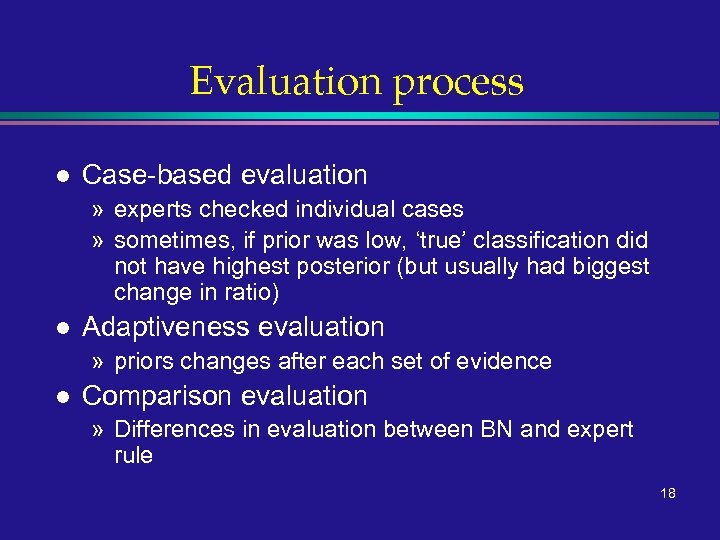

Evaluation process

- Case-based evaluation
  - experts checked individual cases
  - sometimes, if prior was low, 'true' classification did not have highest posterior (but usually had biggest change in ratio)
- Adaptiveness evaluation
  - priors change after each set of evidence
- Comparison evaluation
  - differences in evaluation between BN and expert rule
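The case-based observation above (a low-prior class may not reach the highest posterior, yet can show the largest relative change) can be seen in a small worked example. This sketch takes "change in ratio" to mean the posterior-to-prior ratio, which is an assumed reading of the slide; the class labels and numbers are made up for illustration.

```python
def bayes_update(prior, evidence_likelihood):
    """Posterior from a prior and P(evidence | class) for each class."""
    unnormalised = {c: prior[c] * evidence_likelihood[c] for c in prior}
    z = sum(unnormalised.values())
    if z == 0.0:
        raise ValueError("evidence has zero probability under every class")
    return {c: v / z for c, v in unnormalised.items()}


def posterior_to_prior_ratio(prior, posterior):
    """Relative change in belief for each class with a non-zero prior."""
    return {c: posterior[c] / prior[c] for c in prior if prior[c] > 0.0}


if __name__ == "__main__":
    prior = {"A": 0.9, "B": 0.1}                # B is the low-prior class
    evidence_likelihood = {"A": 0.2, "B": 0.8}  # evidence favours B
    post = bayes_update(prior, evidence_likelihood)
    ratios = posterior_to_prior_ratio(prior, post)
    print(max(post, key=post.get))      # class with highest posterior
    print(max(ratios, key=ratios.get))  # class with largest relative increase
```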

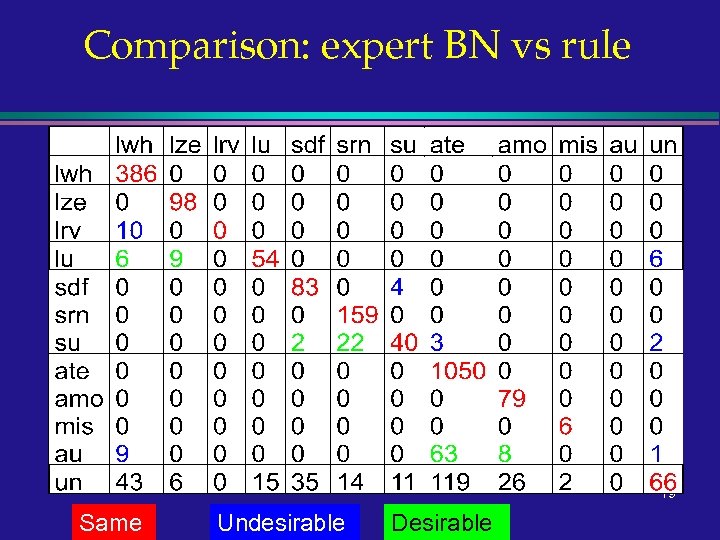

Comparison: expert BN vs rule

[Figure legend: Same, Undesirable, Desirable]

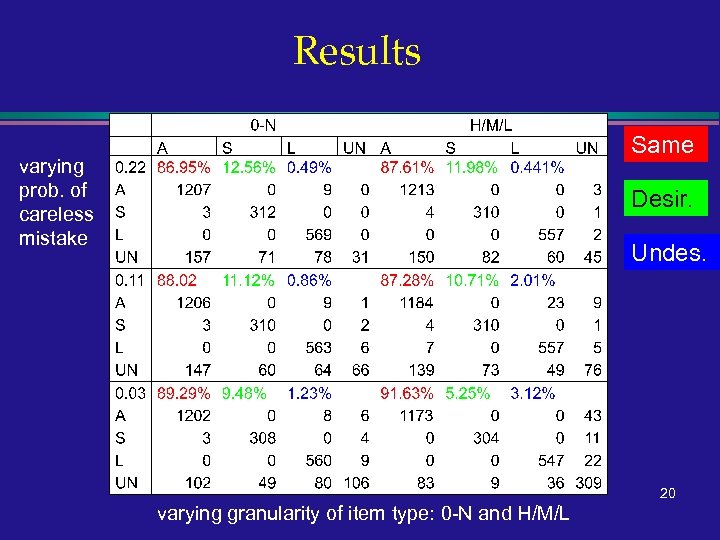

Results

[Figure panels: varying probability of careless mistake; varying granularity of item type (0-N and H/M/L). Legend: Same, Desirable, Undesirable]

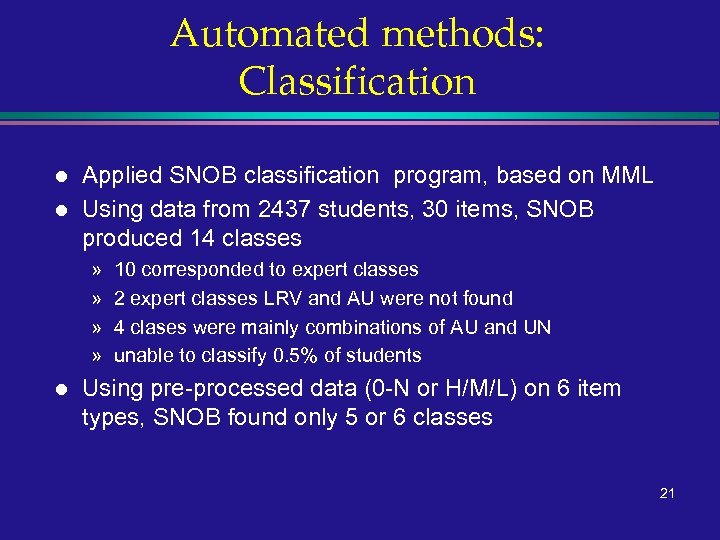

Automated methods: Classification

- Applied SNOB classification program, based on MML
- Using data from 2437 students, 30 items, SNOB produced 14 classes
  - 10 corresponded to expert classes
  - 2 expert classes, LRV and AU, were not found
  - 4 classes were mainly combinations of AU and UN
  - unable to classify 0.5% of students
- Using pre-processed data (0-N or H/M/L) on 6 item types, SNOB found only 5 or 6 classes

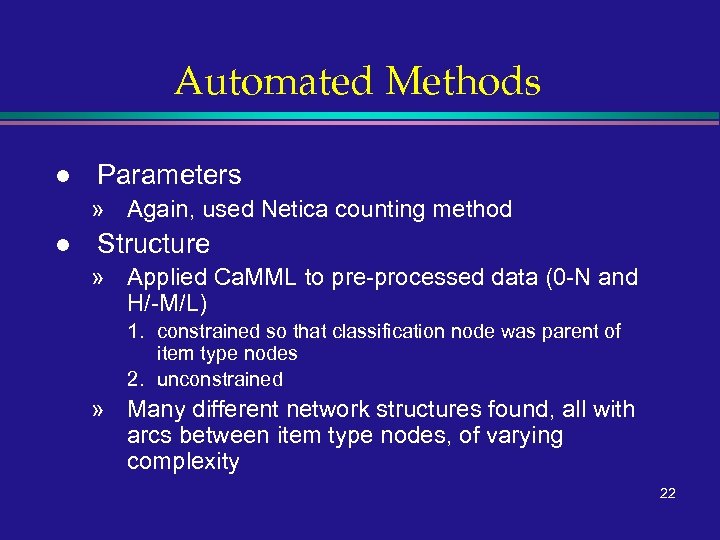

Automated Methods

- Parameters
  - Again, used Netica counting method
- Structure
  - Applied CaMML to pre-processed data (0-N and H/M/L)
    1. constrained so that classification node was parent of item type nodes
    2. unconstrained
  - Many different network structures found, all with arcs between item type nodes, of varying complexity

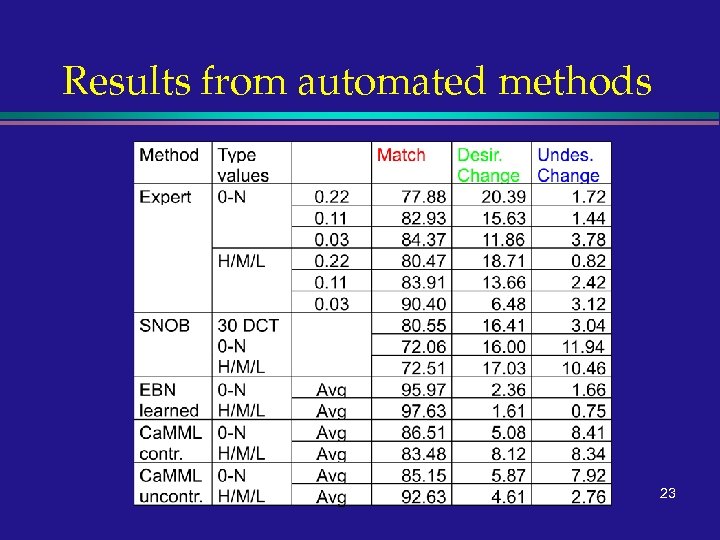

Results from automated methods

Conclusions

- Automated methods yielded BNs which gave quantitative results comparable to or better than elicited BNs
  - validation of automated methods (?)
- Undertaking both elicitation and automated KE resulted in additional domain analysis (e.g. 0-N vs H/M/L)
- Hybrid of expert and automated approaches is feasible
  - methodology for combining is needed
  - evaluation measures and methods needed (may be domain specific)
