
- Number of slides: 47

JAVELIN Project Briefing
Eric Nyberg, Teruko Mitamura, Jamie Callan, Robert Frederking, Jaime Carbonell, Matthew Bilotti, Jeongwoo Ko, Frank Lin, Lucian Lita, Vasco Pedro, Andrew Schlaikjer, Hideki Shima, Luo Si, David Svoboda
Language Technologies Institute, Carnegie Mellon University
AQUAINT Program, AQUAINT Workshop, October 2005

Status Update
• Project Start: September 30, 2004 (now in Month 13)
• Last Six Months:
  – Initial CLQA system evaluated in NTCIR (English-Japanese, English-Chinese)
  – Multilingual Distributed IR evaluated in CLEF competition
  – Initial Phase II English system in TREC relationship track

Multilingual QA

Javelin Multilingual QA
• End-to-end systems for English to Chinese and English to Japanese
• Participated in NTCIR-5 CLQA-1 (E-C, E-J) evaluation
  – http://www.slt.atr.jp/CLQA/
• NTCIR-5 workshop will be held in Tokyo, Japan, December 6-9, 2005

NTCIR CLQA 1 task overview
• EC, CE, EJ, JE subtasks
  – Answers are named entities (e.g. person name, organization name, location, artifact, date, money, time, etc.)
  – We were the only team that participated in both EC and EJ subtasks
• Question/answer data set
  – EC: 200 for training and formal run
  – EJ: 300 for training and 200 for formal run
• Corpus
  – EC: United Daily News 2000-2001 (466,564 articles)
  – EJ: Yomiuri Newspaper 2000-2001 (658,719 articles)

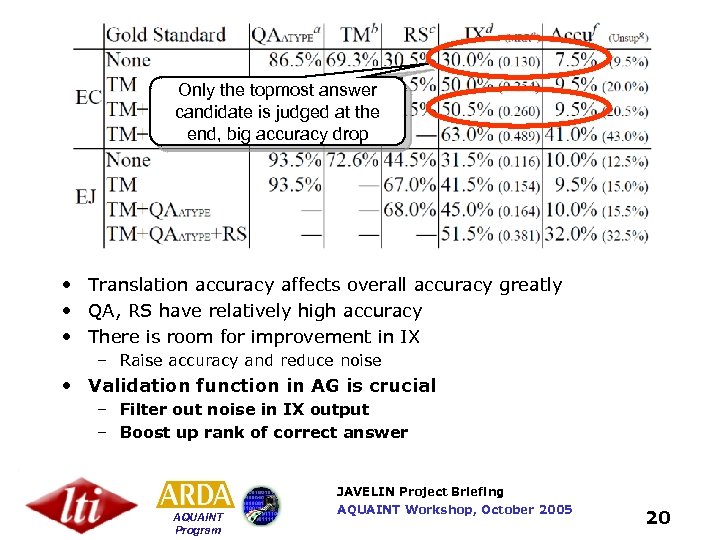

CLQA 1 Evaluation Criteria
• Only the top answer candidate is judged, along with its supporting document
• Correct answers that were not properly supported by the returned document were judged to be unsupported
• Answer is incorrect even if a substring of the answer is correct
• Issue: we found that the gold-standard document set (supported) is not complete

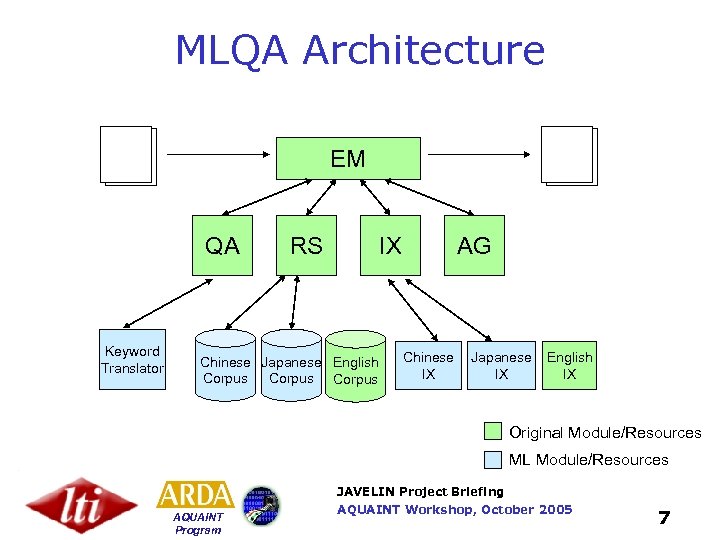

MLQA Architecture
[Diagram labels: EM, QA, Keyword Translator, RS, IX (Chinese IX, Japanese IX, English IX), AG; Chinese, Japanese and English corpora. Legend: Original Module/Resources, ML Module/Resources]

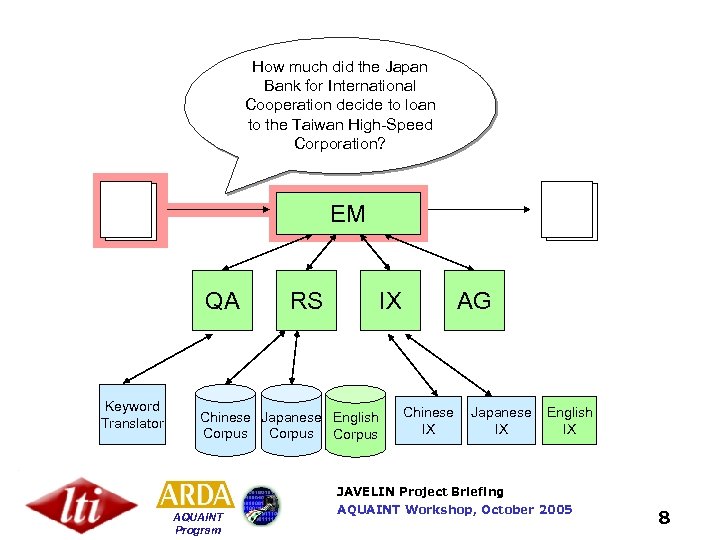

Example question: How much did the Japan Bank for International Cooperation decide to loan to the Taiwan High-Speed Corporation?
[Architecture diagram as on the MLQA Architecture slide]

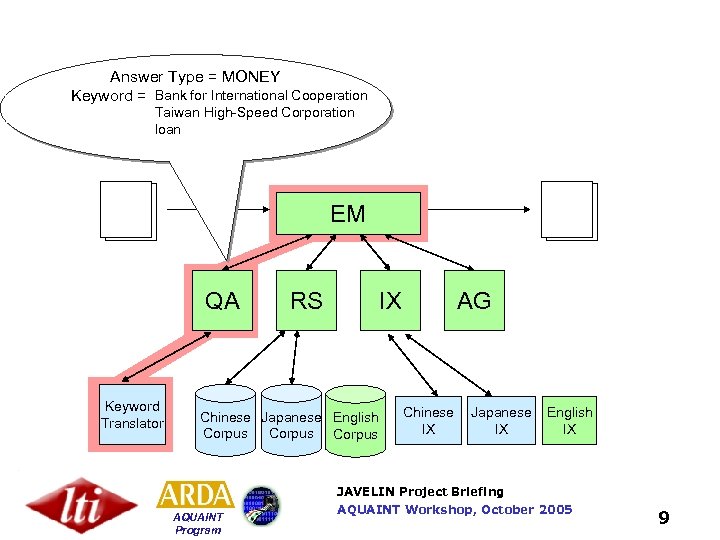

Answer Type = MONEY
Keywords = Bank for International Cooperation; Taiwan High-Speed Corporation; loan
[Architecture diagram as on the MLQA Architecture slide]

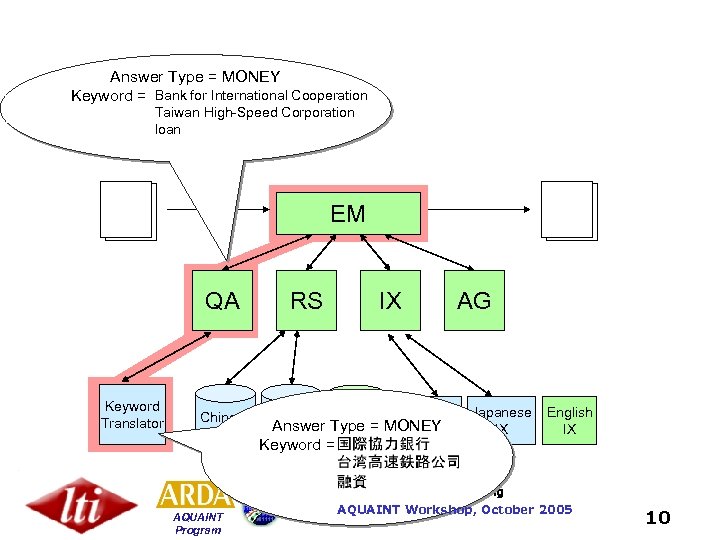

Answer Type = MONEY
Keywords = Bank for International Cooperation; Taiwan High-Speed Corporation; loan
Answer Type = MONEY
Keyword = _______
[Architecture diagram as on the MLQA Architecture slide]

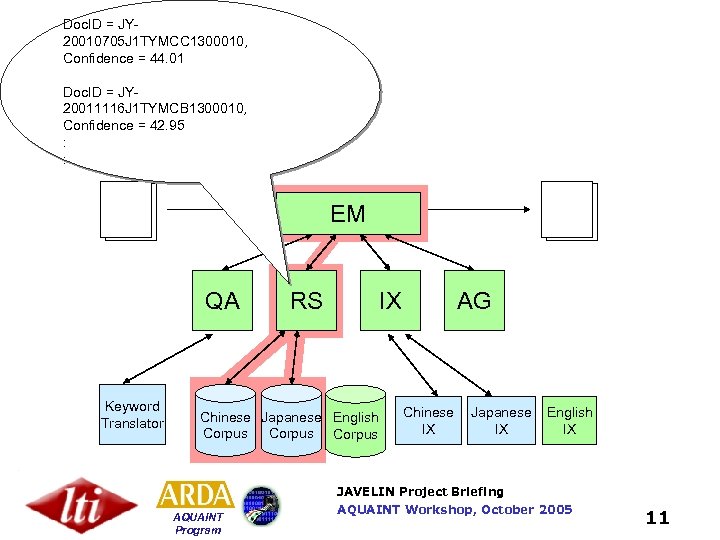

DocID = JY20010705J1TYMCC1300010, Confidence = 44.01
DocID = JY20011116J1TYMCB1300010, Confidence = 42.95
:
[Architecture diagram as on the MLQA Architecture slide]

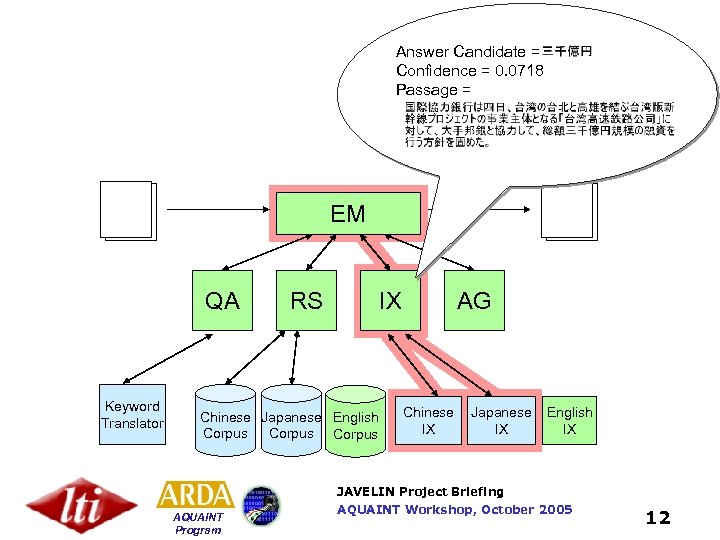

Answer Candidate =
Confidence = 0.0718
Passage =
[Architecture diagram as on the MLQA Architecture slide]

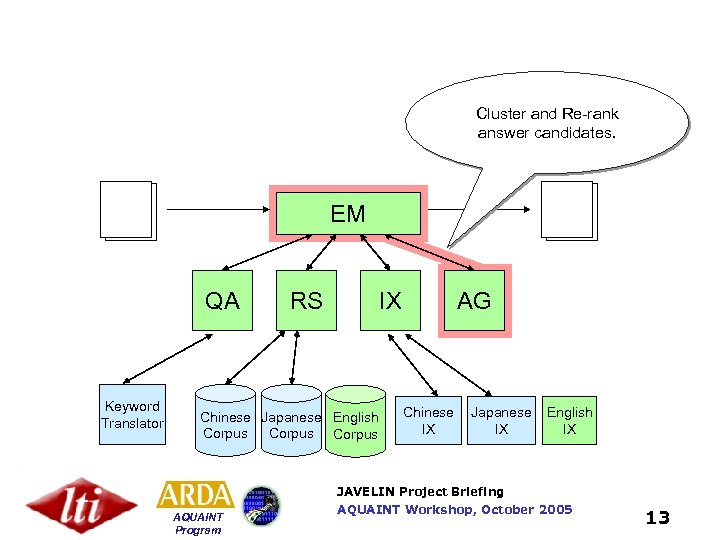

Cluster and re-rank answer candidates.
[Architecture diagram as on the MLQA Architecture slide]

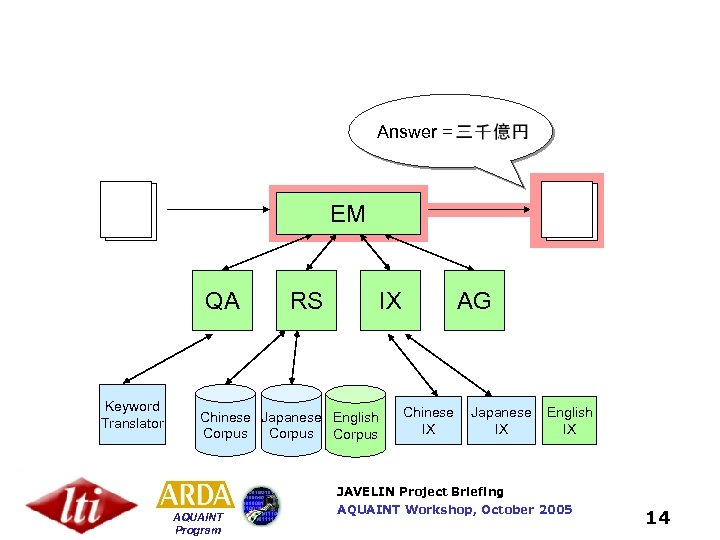

Answer =
[Architecture diagram as on the MLQA Architecture slide]

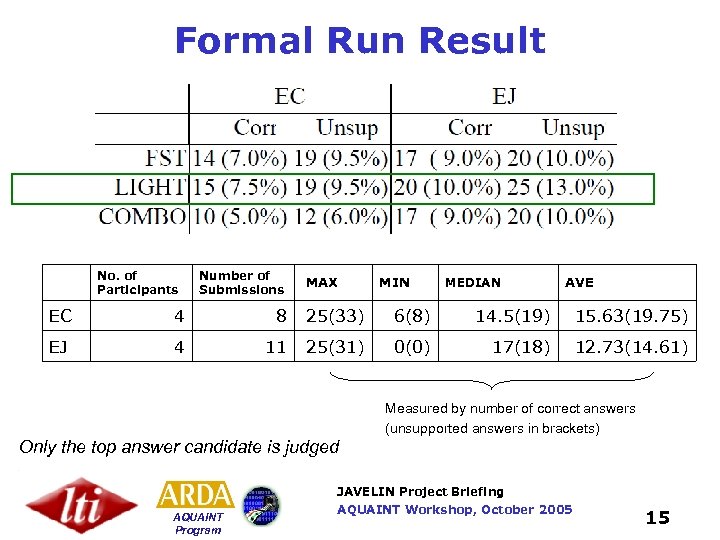

Formal Run Result

| Subtask | No. of Participants | No. of Submissions | MAX | MIN | MEDIAN | AVE |
|---|---|---|---|---|---|---|
| EC | 4 | 8 | 25 (33) | 6 (8) | 14.5 (19) | 15.63 (19.75) |
| EJ | 4 | 11 | 25 (31) | 0 (0) | 17 (18) | 12.73 (14.61) |

Measured by number of correct answers (unsupported answers in brackets)
Only the top answer candidate is judged

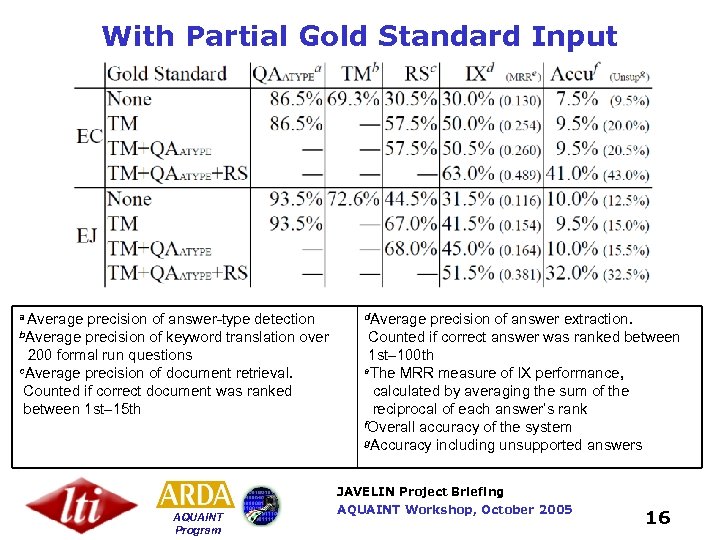

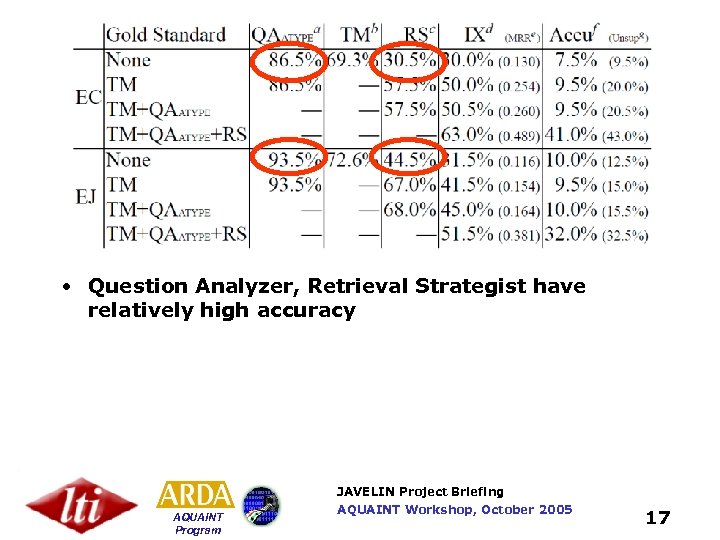

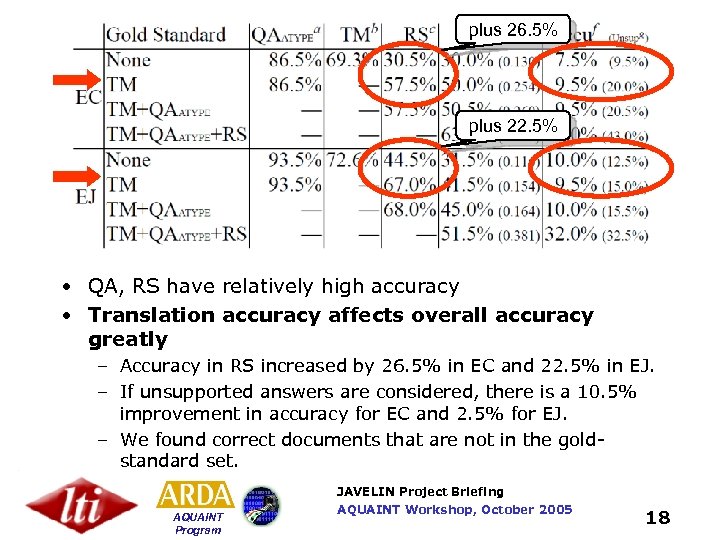

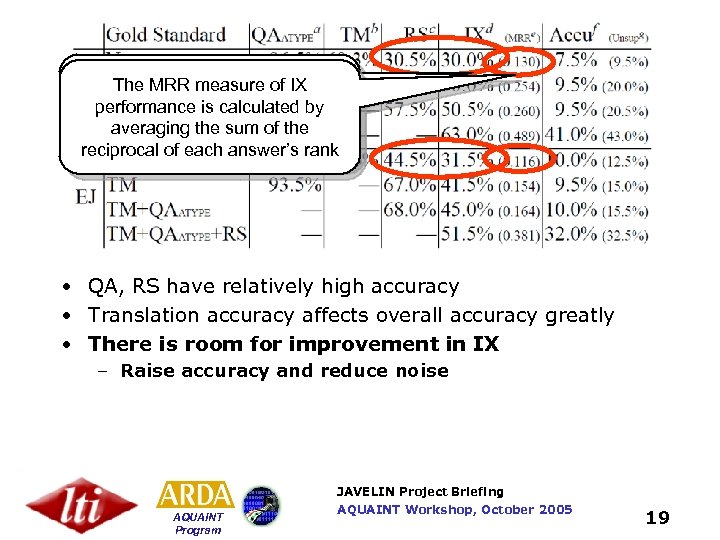

With Partial Gold Standard Input
a. Average precision of answer-type detection
b. Average precision of keyword translation over 200 formal run questions
c. Average precision of document retrieval. Counted if the correct document was ranked between 1st and 15th
d. Average precision of answer extraction. Counted if the correct answer was ranked between 1st and 100th
e. The MRR measure of IX performance, calculated by averaging the reciprocal of each answer’s rank
f. Overall accuracy of the system
g. Accuracy including unsupported answers
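The two IX measures in items d and e can be sketched in a few lines of Python. This is a minimal illustration rather than the evaluation code used for the formal run: the function names, the toy answer lists, and the choice to score an unanswered question as 0 are all assumptions.

```python
from __future__ import annotations

from typing import Sequence


def reciprocal_rank(ranked_answers: Sequence[str], gold: set[str]) -> float:
    """Return 1/rank of the first correct answer, or 0.0 if none is correct."""
    for rank, answer in enumerate(ranked_answers, start=1):
        if answer in gold:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(runs: Sequence[tuple[Sequence[str], set[str]]]) -> float:
    """Average the per-question reciprocal ranks (item e)."""
    if not runs:
        return 0.0
    return sum(reciprocal_rank(ranked, gold) for ranked, gold in runs) / len(runs)


def hit_within_cutoff(ranked_answers: Sequence[str], gold: set[str], cutoff: int) -> bool:
    """True if a correct answer appears in the top `cutoff` candidates (item d uses 100)."""
    return any(answer in gold for answer in ranked_answers[:cutoff])


# Hypothetical toy data: three questions, each with a ranked candidate list
# and a set of gold answers.
example_runs = [
    (["a", "b", "c"], {"a"}),  # correct at rank 1 -> 1.0
    (["x", "y", "z"], {"z"}),  # correct at rank 3 -> 1/3
    (["p", "q"], {"r"}),       # no correct answer -> 0.0
]

if __name__ == "__main__":
    print(round(mean_reciprocal_rank(example_runs), 4))
```

Because the formal run judges only the top candidate, a correct answer sitting at rank 3 still contributes to MRR but counts for nothing in the overall accuracy.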

• Question Analyzer, Retrieval Strategist have relatively high accuracy

[Table callouts: plus 26.5%, plus 22.5%]
• QA, RS have relatively high accuracy
• Translation accuracy affects overall accuracy greatly
  – Accuracy in RS increased by 26.5% in EC and 22.5% in EJ.
  – If unsupported answers are considered, there is a 10.5% improvement in accuracy for EC and 2.5% for EJ.
  – We found correct documents that are not in the gold-standard set.

[Callout: Average precision of answer extraction is calculated by counting correct answers ranked between 1st and 100th]
[Callout: The MRR measure of IX performance is calculated by averaging the reciprocal of each answer’s rank]
• QA, RS have relatively high accuracy
• Translation accuracy affects overall accuracy greatly
• There is room for improvement in IX
  – Raise accuracy and reduce noise

[Callout: Only the topmost answer candidate is judged at the end, which causes a big accuracy drop]
• Translation accuracy affects overall accuracy greatly
• QA, RS have relatively high accuracy
• There is room for improvement in IX
  – Raise accuracy and reduce noise
• Validation function in AG is crucial
  – Filter out noise in IX output
  – Boost the rank of the correct answer

Next Steps for Multilingual QA
• Improve translation of keywords from E-C and E-J (e.g. named entity translation)
• Improve extraction using syntactic and semantic information in Chinese and Japanese (e.g. use of CaboCha)
• Improve Validation function in AG
• Upcoming Evaluation(s):
  – NTCIR CLQA-2, if available in 2006
  – AQUAINT E-C definition question pilot when training/test data is available
• Integrate with Distributed IR (next slides)

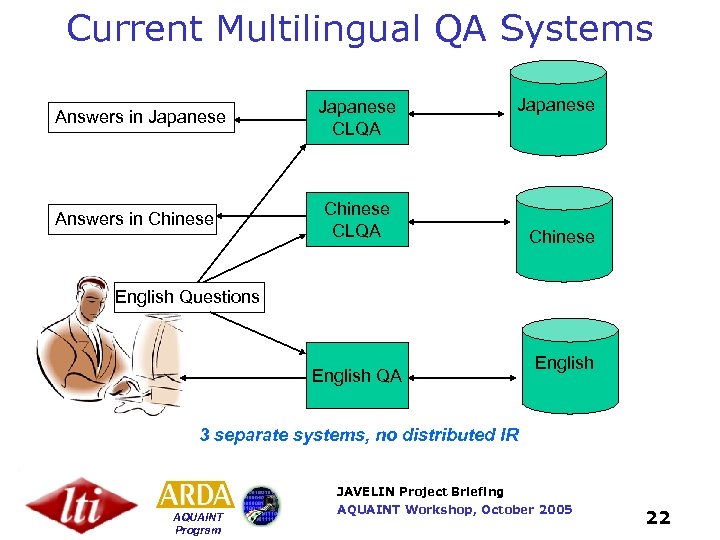

Current Multilingual QA Systems
[Diagram labels: English Questions; Japanese CLQA, Japanese corpus, Answers in Japanese; Chinese CLQA, Chinese corpus, Answers in Chinese; English QA, English corpus]
3 separate systems, no distributed IR

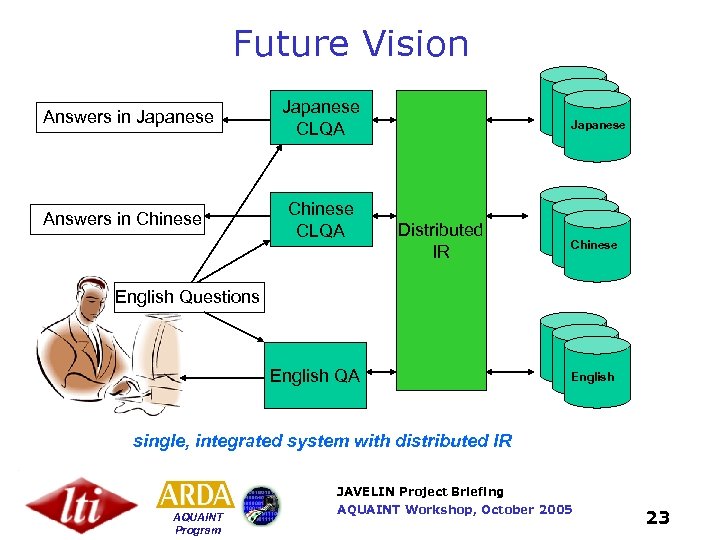

Future Vision
[Diagram labels: English Questions; Japanese CLQA, Chinese CLQA, English QA; Distributed IR over Japanese, Chinese and English corpora; Answers in Japanese, Answers in Chinese]
Single, integrated system with distributed IR

Multilingual Distributed Information Retrieval

What Is Distributed IR?
• A method of searching across multiple full-text search engines
  – “federated search”, “the hidden Web”
• It is important when relevant information is scattered across many search engines
  – Within an organization
  – On the Web
  – Which ones have the information you need?

Many Search Engines Don’t Speak English

Multilingual Distributed IR: Recent Progress
Research: Extend monolingual algorithms to multilingual environments
• Multilingual query-based sampling
  – Monolingual corpora
• Multilingual result-merging
  – Given retrieval results in N languages, produce a single multilingual ranked list

Multilingual Distributed IR: Recent Progress
Evaluation
• CLEF Multi-8 Ad-hoc Retrieval task
  – English (2), Spanish (1), French (2), Italian (2), Swedish (1), German (2), Finnish (1), Dutch (2)
• Why CLEF?
  – More languages than NTCIR
    • More languages is harder
  – CLEF is focusing on result-merging this year
    • Models uncooperative environments, where we have no control over individual search engines

CLEF 2005: Two Cross-Lingual Retrieval Tasks
• Usual Ad-hoc Cross-lingual Retrieval
  – Cooperative search engines, under our control
  – English queries, documents in 8 languages, 8 search engines
• Multilingual Results Merging Task
  – Uncooperative search engines, nothing under our control
    • We get only ranked lists of documents from each engine
  – We treat the task as a multilingual federated search problem
    • Documents in language l are stored in search engine s
    • Minimize cost of downloading, indexing and translating documents

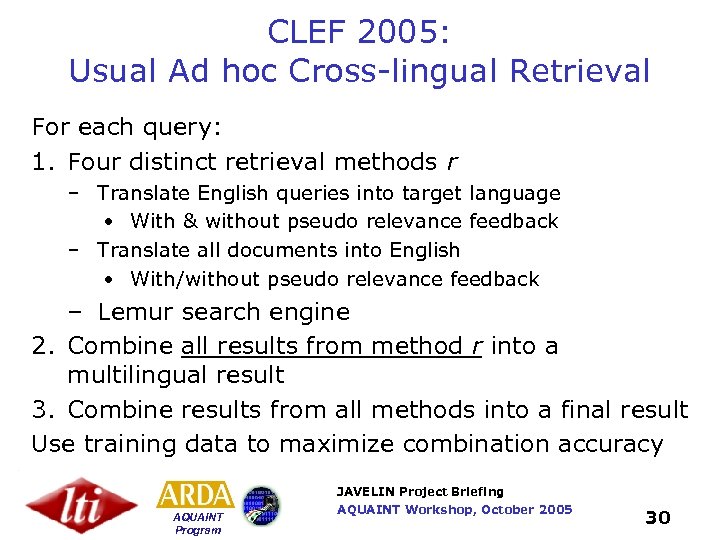

CLEF 2005: Usual Ad hoc Cross-lingual Retrieval
For each query:
1. Four distinct retrieval methods r
  – Translate English queries into target language
    • With and without pseudo relevance feedback
  – Translate all documents into English
    • With and without pseudo relevance feedback
  – Lemur search engine
2. Combine all results from method r into a multilingual result
3. Combine results from all methods into a final result
Use training data to maximize combination accuracy

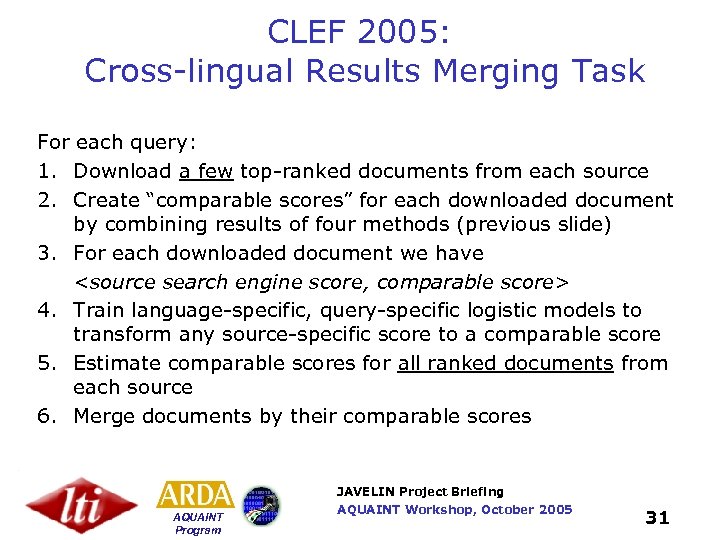

CLEF 2005: Cross-lingual Results Merging Task
For each query:
1. Download a few top-ranked documents from each source
2. Create “comparable scores” for each downloaded document by combining results of four methods (previous slide)
3. For each downloaded document we have <source search engine score, comparable score>
4. Train language-specific, query-specific logistic models to transform any source-specific score to a comparable score
5. Estimate comparable scores for all ranked documents from each source
6. Merge documents by their comparable scores
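Steps 3 to 6 can be illustrated with a small pure-Python sketch. It is not the system that produced the CLEF runs: the two-parameter logistic form, the gradient-descent fitting, the source names, and all scores are assumptions chosen to keep the example self-contained, and source scores are assumed to lie roughly in [0, 1] so that the fixed learning rate stays stable.

```python
import math
from typing import Dict, List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(pairs: List[Tuple[float, float]],
                 lr: float = 0.5, steps: int = 5000) -> Tuple[float, float]:
    """Fit comparable ~ sigmoid(a * source_score + b) by gradient descent
    on cross-entropy with soft targets (step 4)."""
    a, b = 0.0, 0.0
    n = len(pairs)
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for source_score, comparable in pairs:
            err = sigmoid(a * source_score + b) - comparable
            grad_a += err * source_score
            grad_b += err
        a -= lr * grad_a / n
        b -= lr * grad_b / n
    return a, b


def merge(ranked_lists: Dict[str, List[Tuple[str, float]]],
          training_pairs: Dict[str, List[Tuple[float, float]]]) -> List[Tuple[str, float]]:
    """Map every source score to a comparable score (step 5), then sort (step 6)."""
    merged = []
    for source, docs in ranked_lists.items():
        a, b = fit_logistic(training_pairs[source])
        merged.extend((doc_id, sigmoid(a * score + b)) for doc_id, score in docs)
    merged.sort(key=lambda item: item[1], reverse=True)
    return merged


# Hypothetical data for one query: <source score, comparable score> pairs for the
# downloaded top documents, and the full ranked list returned by each engine.
training_pairs = {
    "es": [(0.9, 0.85), (0.6, 0.55), (0.3, 0.20)],
    "fr": [(0.9, 0.40), (0.6, 0.25), (0.3, 0.10)],
}
ranked_lists = {
    "es": [("es1", 0.9), ("es2", 0.6), ("es3", 0.3), ("es4", 0.1)],
    "fr": [("fr1", 0.9), ("fr2", 0.6), ("fr3", 0.3), ("fr4", 0.1)],
}

if __name__ == "__main__":
    for doc_id, score in merge(ranked_lists, training_pairs):
        print(doc_id, round(score, 3))
```

The point of the per-source model is that identical raw scores from different engines need not mean the same thing: in the toy data a raw score of 0.9 maps to a much higher comparable score for the first engine than for the second, so the merged list is not a simple interleaving.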

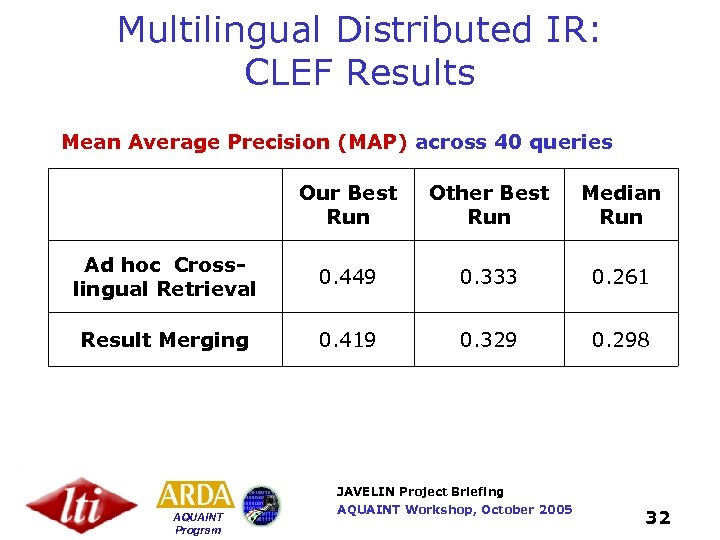

Multilingual Distributed IR: CLEF Results
Mean Average Precision (MAP) across 40 queries

| Task | Our Best Run | Other Best Run | Median Run |
|---|---|---|---|
| Ad hoc Cross-lingual Retrieval | 0.449 | 0.333 | 0.261 |
| Result Merging | 0.419 | 0.329 | 0.298 |
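For readers unfamiliar with the metric, the following sketch shows how MAP is conventionally computed from ranked lists and relevance judgments. It uses the standard textbook definition; the function names and the two toy queries are illustrative assumptions and are unrelated to the CLEF data.

```python
from typing import List, Sequence, Set, Tuple


def average_precision(ranked_docs: Sequence[str], relevant: Set[str]) -> float:
    """Mean of precision@k taken at each rank k where a relevant document appears,
    divided by the total number of relevant documents."""
    if not relevant:
        return 0.0
    hits = 0
    precision_sum = 0.0
    for rank, doc in enumerate(ranked_docs, start=1):
        if doc in relevant:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / len(relevant)


def mean_average_precision(queries: List[Tuple[Sequence[str], Set[str]]]) -> float:
    """Average the per-query average precision over all queries."""
    if not queries:
        return 0.0
    return sum(average_precision(r, rel) for r, rel in queries) / len(queries)


# Hypothetical toy judgments for two queries.
example_queries = [
    (["d1", "d2", "d3", "d4"], {"d1", "d3"}),  # AP = (1/1 + 2/3) / 2
    (["d5", "d6", "d7"], {"d6"}),              # AP = 1/2
]

if __name__ == "__main__":
    print(round(mean_average_precision(example_queries), 4))
```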

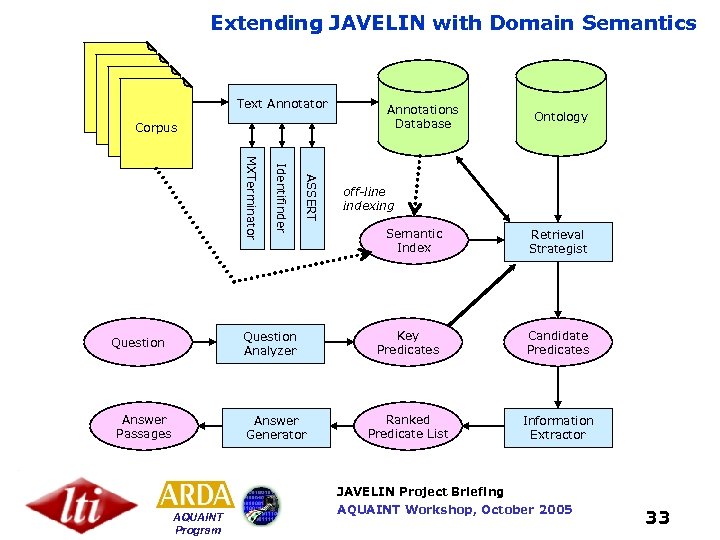

Extending JAVELIN with Domain Semantics
[Architecture diagram labels: Text Annotator, Corpus, ASSERT, Identifinder, MXTerminator, Question Analyzer, Question, Answer, Passages, Answer Generator, Annotations Database, Ontology, off-line indexing, Semantic Index, Retrieval Strategist, Key Predicates, Candidate Predicates, Ranked Predicate List, Information Extractor]

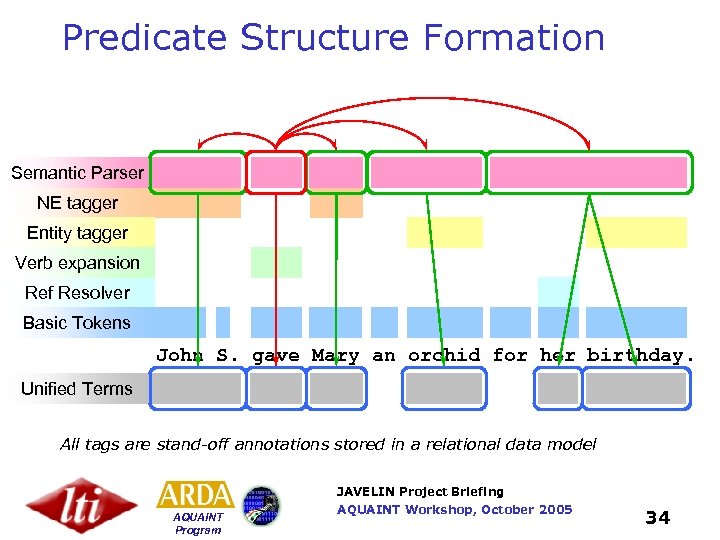

Predicate Structure Formation
[Diagram: annotation layers (Semantic Parser, NE tagger, Entity tagger, Verb expansion, Ref Resolver, Basic Tokens, Unified Terms) over the sentence “John S. gave Mary an orchid for her birthday.”]
All tags are stand-off annotations stored in a relational data model

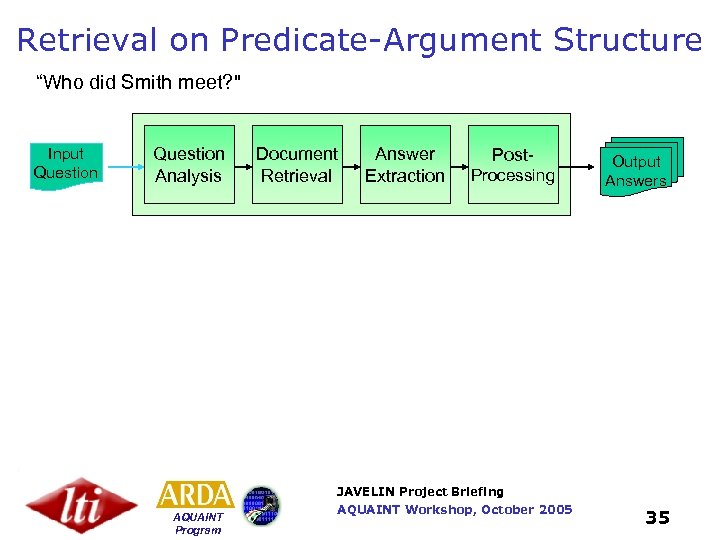

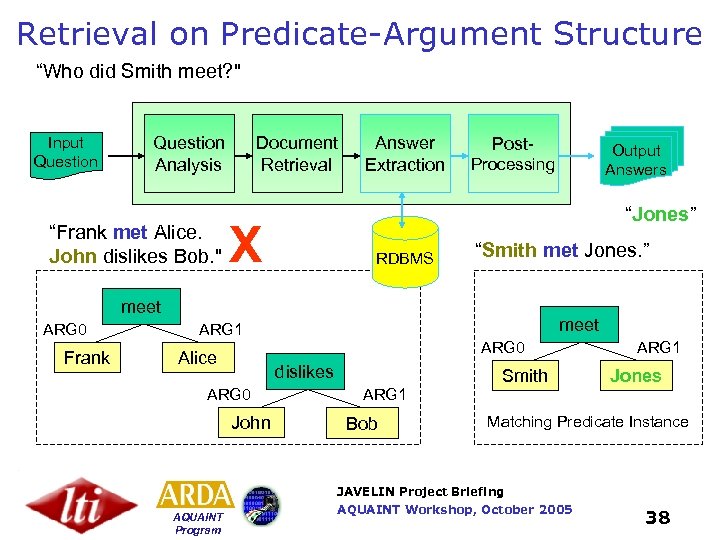

Retrieval on Predicate-Argument Structure
“Who did Smith meet?”
Pipeline: Input Question → Question Analysis → Document Retrieval → Answer Extraction → Post-Processing → Output Answers

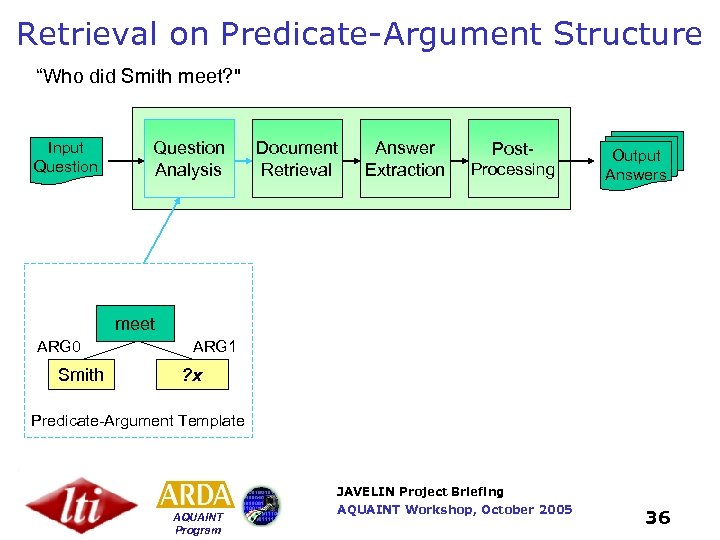

Retrieval on Predicate-Argument Structure
“Who did Smith meet?”
Pipeline: Input Question → Question Analysis → Document Retrieval → Answer Extraction → Post-Processing → Output Answers
Predicate-Argument Template: meet (ARG0: Smith, ARG1: ?x)

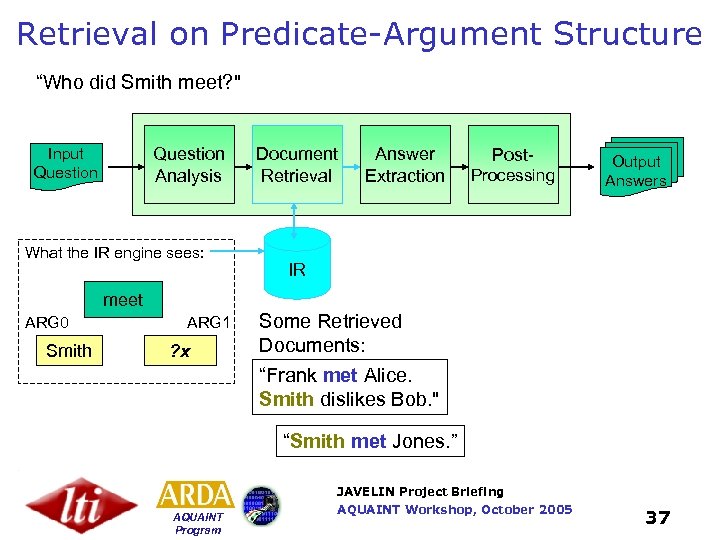

Retrieval on Predicate-Argument Structure
“Who did Smith meet?”
Pipeline: Input Question → Question Analysis → Document Retrieval → Answer Extraction → Post-Processing → Output Answers
[Diagram: the template meet (ARG0: Smith, ARG1: ?x) is passed to IR; callout “What the IR engine sees:”]
Some Retrieved Documents:
“Frank met Alice. Smith dislikes Bob.”
“Smith met Jones.”

Retrieval on Predicate-Argument Structure
“Who did Smith meet?”
Pipeline: Input Question → Question Analysis → Document Retrieval → Answer Extraction → Post-Processing → Output Answers
Retrieved documents and their predicate instances (from the RDBMS):
“Frank met Alice. John dislikes Bob.”
  – meet (ARG0: Frank, ARG1: Alice)
  – dislikes (ARG0: John, ARG1: Bob)
“Smith met Jones.”
  – meet (ARG0: Smith, ARG1: Jones) [Matching Predicate Instance]
Output answer: “Jones”
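The matching step on this slide can be sketched as a simple unification of the question template against stored predicate instances. This is an illustration only, not the JAVELIN implementation: the `Predicate` class, the `match` function, the `?`-prefixed variable convention, and the assumption that verbs are already lemmatized ("met" stored as "meet") are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Predicate:
    verb: str              # lemmatized verb, e.g. "meet" for "met" (assumption)
    args: Dict[str, str]   # role label -> filler, e.g. {"ARG0": "Smith"}


def match(template: Predicate, instance: Predicate) -> Optional[Dict[str, str]]:
    """Return variable bindings if `instance` satisfies `template`, else None.
    Template fillers starting with '?' are variables; all others must match exactly."""
    if template.verb != instance.verb:
        return None
    bindings: Dict[str, str] = {}
    for role, filler in template.args.items():
        if role not in instance.args:
            return None
        if filler.startswith("?"):
            bindings[filler] = instance.args[role]
        elif filler != instance.args[role]:
            return None
    return bindings


# Predicate instances from the two example documents on the slide.
instances: List[Predicate] = [
    Predicate("meet", {"ARG0": "Frank", "ARG1": "Alice"}),
    Predicate("dislikes", {"ARG0": "John", "ARG1": "Bob"}),
    Predicate("meet", {"ARG0": "Smith", "ARG1": "Jones"}),
]

# Template for "Who did Smith meet?"
template = Predicate("meet", {"ARG0": "Smith", "ARG1": "?x"})

answers = [b["?x"] for b in (match(template, inst) for inst in instances) if b is not None]

if __name__ == "__main__":
    print(answers)
```

A keyword-only retrieval would treat both documents as candidates because each contains a form of "meet"; requiring the ARG0 role to be filled by Smith is what rules out "Frank met Alice".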

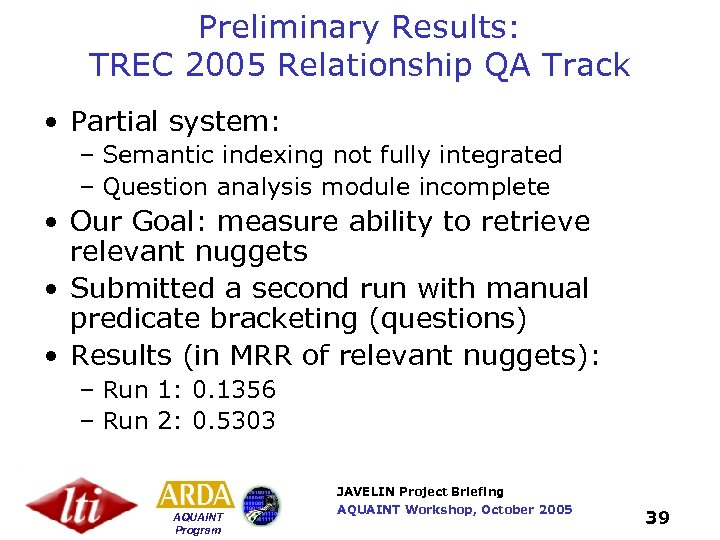

Preliminary Results: TREC 2005 Relationship QA Track
• Partial system:
  – Semantic indexing not fully integrated
  – Question analysis module incomplete
• Our Goal: measure ability to retrieve relevant nuggets
• Submitted a second run with manual predicate bracketing (questions)
• Results (in MRR of relevant nuggets):
  – Run 1: 0.1356
  – Run 2: 0.5303

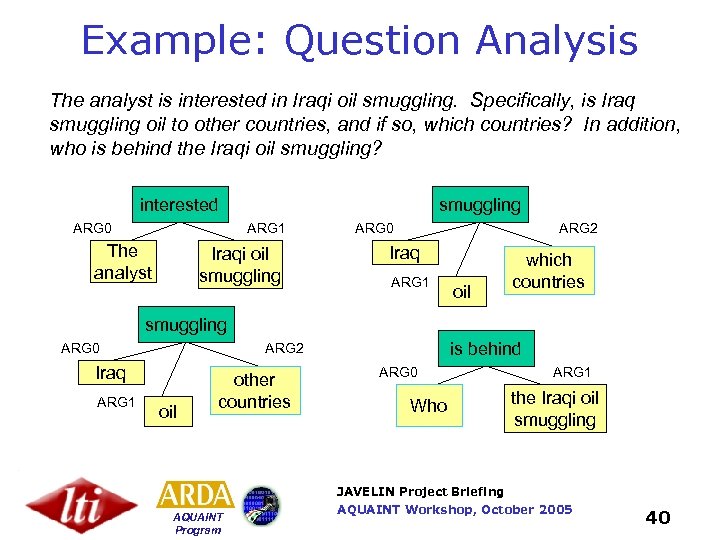

Example: Question Analysis
The analyst is interested in Iraqi oil smuggling. Specifically, is Iraq smuggling oil to other countries, and if so, which countries? In addition, who is behind the Iraqi oil smuggling?
Predicates:
  – interested (ARG0: The analyst, ARG1: Iraqi oil smuggling)
  – smuggling (ARG0: Iraq, ARG1: oil, ARG2: other countries)
  – smuggling (ARG0: Iraq, ARG1: oil, ARG2: which countries)
  – is behind (ARG0: Who, ARG1: the Iraqi oil smuggling)

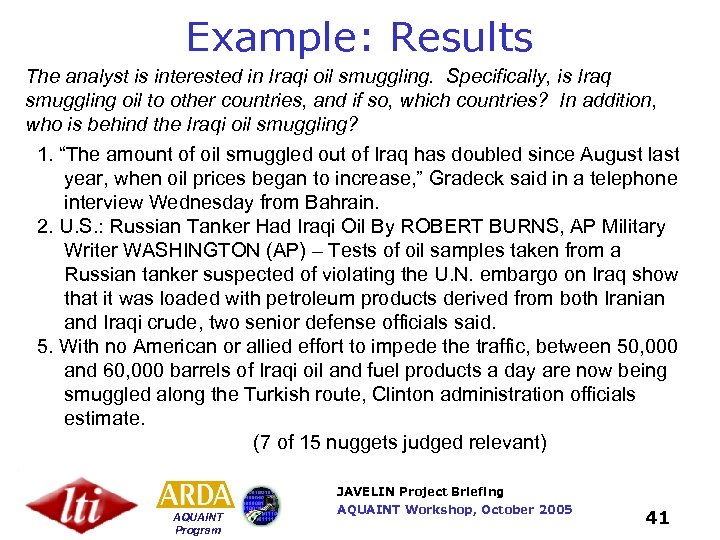

Example: Results
The analyst is interested in Iraqi oil smuggling. Specifically, is Iraq smuggling oil to other countries, and if so, which countries? In addition, who is behind the Iraqi oil smuggling?
1. “The amount of oil smuggled out of Iraq has doubled since August last year, when oil prices began to increase,” Gradeck said in a telephone interview Wednesday from Bahrain.
2. U.S.: Russian Tanker Had Iraqi Oil By ROBERT BURNS, AP Military Writer WASHINGTON (AP) – Tests of oil samples taken from a Russian tanker suspected of violating the U.N. embargo on Iraq show that it was loaded with petroleum products derived from both Iranian and Iraqi crude, two senior defense officials said.
5. With no American or allied effort to impede the traffic, between 50,000 and 60,000 barrels of Iraqi oil and fuel products a day are now being smuggled along the Turkish route, Clinton administration officials estimate.
(7 of 15 nuggets judged relevant)

Next Steps
• Better Question Analysis
  – Retrain ASSERT-style annotator, or incorporate rule-based NLP from HALO (KANTOO)
• Semantic Indexing and Retrieval
  – Moving to Indri allows exact representation of our predicate structure in the index and in queries
• Ranking retrieved predicate instances
  – Aggregating information across documents
• Extracting answers from predicate-argument structure

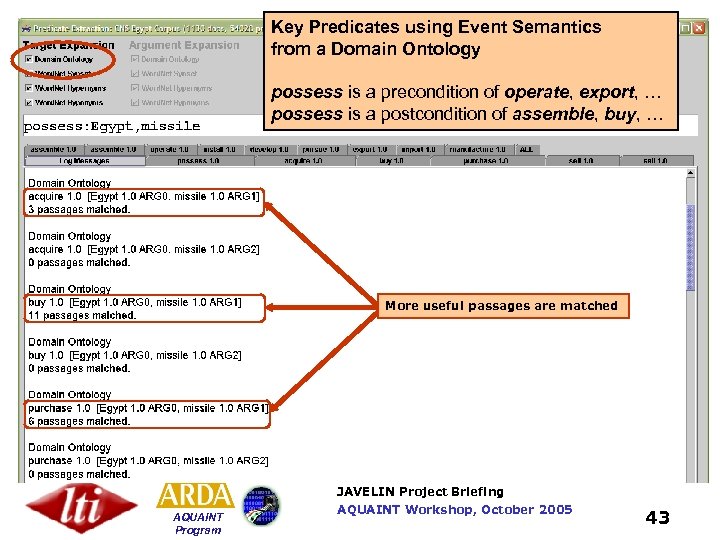

Key Predicates using Event Semantics from a Domain Ontology
possess is a precondition of operate, export, …
possess is a postcondition of assemble, buy, …
More useful passages are matched
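One way to read this slide is that a key predicate from the question is expanded with ontology-related predicates before retrieval, so that passages about, say, buying or exporting also count as evidence of possession. The sketch below is an assumed interpretation, not the ontology machinery used in JAVELIN: the table names and the `expand_key_predicate` function are hypothetical, and only the four relations actually named on the slide are encoded.

```python
from typing import Dict, List

# Event-semantics relations named on the slide; the slide's "…" entries are not filled in.
PRECONDITION_OF: Dict[str, List[str]] = {"possess": ["operate", "export"]}
POSTCONDITION_OF: Dict[str, List[str]] = {"possess": ["assemble", "buy"]}


def expand_key_predicate(predicate: str) -> List[str]:
    """Return the predicate followed by the events it is a precondition or
    postcondition of, preserving order and dropping duplicates."""
    expanded = [predicate]
    for table in (PRECONDITION_OF, POSTCONDITION_OF):
        for related in table.get(predicate, []):
            if related not in expanded:
                expanded.append(related)
    return expanded


if __name__ == "__main__":
    print(expand_key_predicate("possess"))
```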

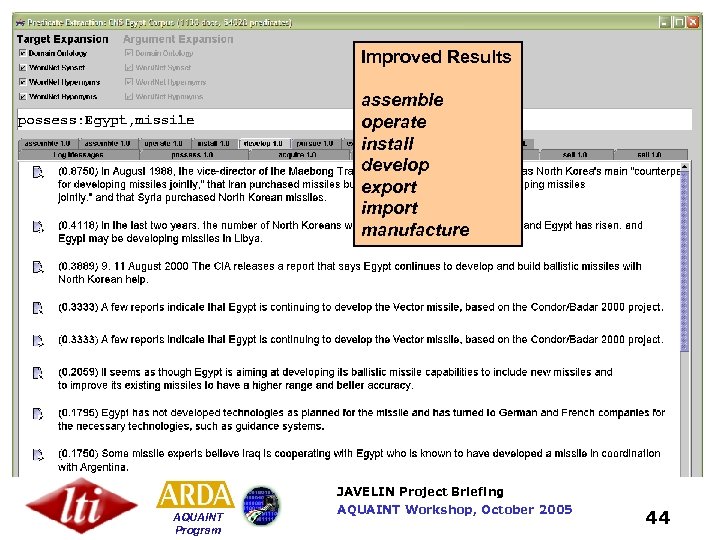

Improved Results
[Figure labels: assemble, operate, install, develop, export, import, manufacture]


Indexing of Predicate Structures Implemented Using Indri (October ’05)
(web demo available)
#combine[predicate]( buy.target #any:gpe.arg0 weapon.arg1 )

Some Recent Papers
• E. Nyberg, R. Frederking, T. Mitamura, M. Bilotti, K. Hannan, L. Hiyakumoto, J. Ko, F. Lin, L. Lita, V. Pedro, and A. Schlaikjer, "JAVELIN I and II Systems at TREC 2005", notebook paper submitted to TREC 2005.
• F. Lin, H. Shima, M. Wang, T. Mitamura, "CMU JAVELIN System for NTCIR 5 CLQA 1", to appear in Proceedings of the 5th NTCIR Workshop.
• L. Si and J. Callan, "Modeling search engine effectiveness for federated search", Proceedings of the Twenty Eighth Annual International ACM SIGIR Conference on Research and Development in Information Retrieval, Salvador, Brazil.
• L. Si and J. Callan, "CLEF 2005: Multilingual retrieval by combining multiple multilingual ranked lists", Sixth Workshop of the Cross-Language Evaluation Forum, CLEF 2005, Vienna, Austria.
• E. Nyberg, T. Mitamura, R. Frederking, V. Pedro, M. Bilotti, A. Schlaikjer and K. Hannan (2005), "Extending the JAVELIN QA System with Domain Semantics", to appear in Proceedings of AAAI 2005 (Workshop on Question Answering in Restricted Domains).
• L. Hiyakumoto, L. V. Lita, E. Nyberg (2005), "Multi-Strategy Information Extraction for Question Answering", FLAIRS 2005, to appear.
http://www.cs.cmu.edu/~ehn/JAVELIN

Questions?
