12019167bdbe2a22ed13c49d9daa4989.ppt

- Количество слайдов: 57

Introduction Recherche d’information Jian-Yun Nie (Based on van Rijsbergen’s introduction)

Plan n n Definition History Experimental tradition Methods n n n n Query Indexing Matching Results Evaluation Current situation Research

Definition

Important concepts n n n Document: an entity that contains some description of information, may be in form of text, image, graphic, video, speech, etc. Document collection: a set of documents (may be static or dynamic) User Information need: the user’s requirement of information Query (request): a description of information need, usually in natural language Relevance (relevant document): a document that contains the required information (Pertinence) Correspondence, degree of relevance, relevance score, …: the degree of belief (by the system) that a document is relevant Judge = user/system Indexing: a process that transforms a document into a form of internal representation Retrieval: an operation that determines the documents to be retrieved Response (answer): the documents returned by the system (usually a ranked list)

What is IR? n n (Salton, 1968) Information retrieval is a field concerned with the structure, analysis, organization, storage, searching, and retrieval of information. (Lancaster, 1968) An information retrieval system does not inform (i. e. change the knowledge of) the user on the subject of his inquiry. It merely informs on the existence (or non-existence) and whereabouts of documents relating to his request. (Needham, 1977)…. . the complexity arises from the impossibility of describing the content of a document, or the intent of request, precisely, or unambiguously …

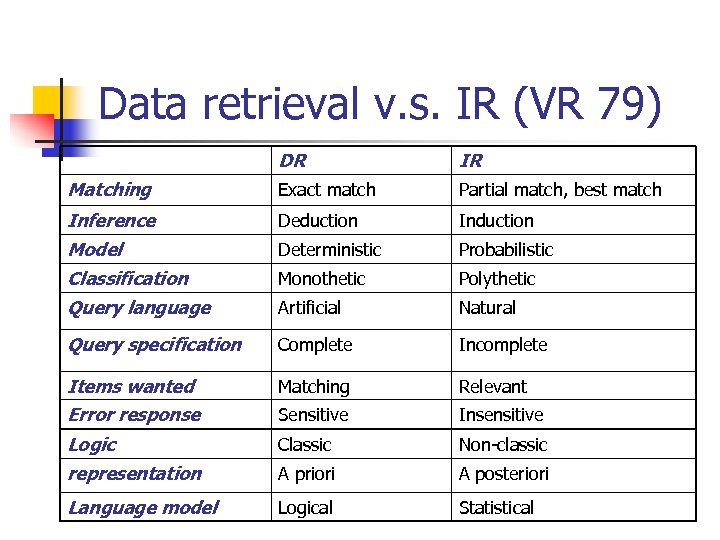

Data retrieval v. s. IR (VR 79) DR IR Matching Exact match Partial match, best match Inference Deduction Induction Model Deterministic Probabilistic Classification Monothetic Polythetic Query language Artificial Natural Query specification Complete Incomplete Items wanted Matching Relevant Error response Sensitive Insensitive Logic Classic Non-classic representation A priori A posteriori Language model Logical Statistical

History

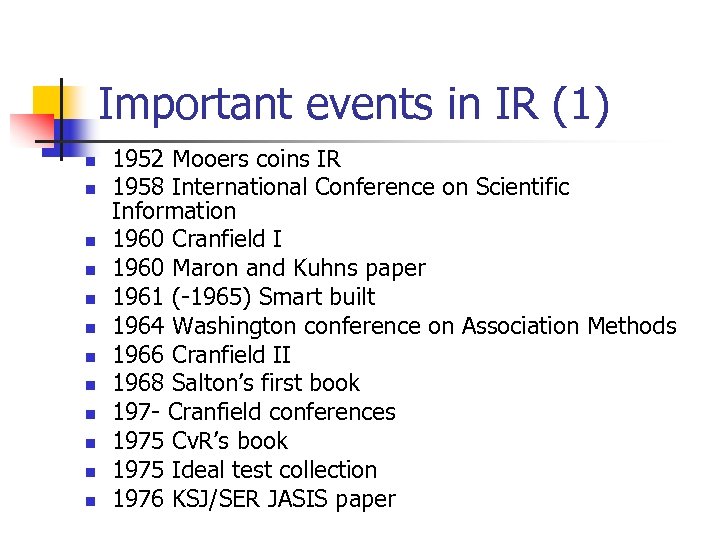

Important events in IR (1) n n n 1952 Mooers coins IR 1958 International Conference on Scientific Information 1960 Cranfield I 1960 Maron and Kuhns paper 1961 (-1965) Smart built 1964 Washington conference on Association Methods 1966 Cranfield II 1968 Salton’s first book 197 - Cranfield conferences 1975 Cv. R’s book 1975 Ideal test collection 1976 KSJ/SER JASIS paper

Important events in IR (2) n n n 1978 1 st SIGIR 1979 1 st BCSIRSG 1980 1 st joint ACM/BCS conference on IR 1981 KSJ book on IR Experiments 1982 Belkin et al ASK hypothesis 1983 - Okapi started 1985 RIAO-1 1986 Cv. R logic model 1990 Deerwester et al, LSI paper 1991 – Inquiry started 1992 TREC-1 1998 Croft & Ponte paper on language models

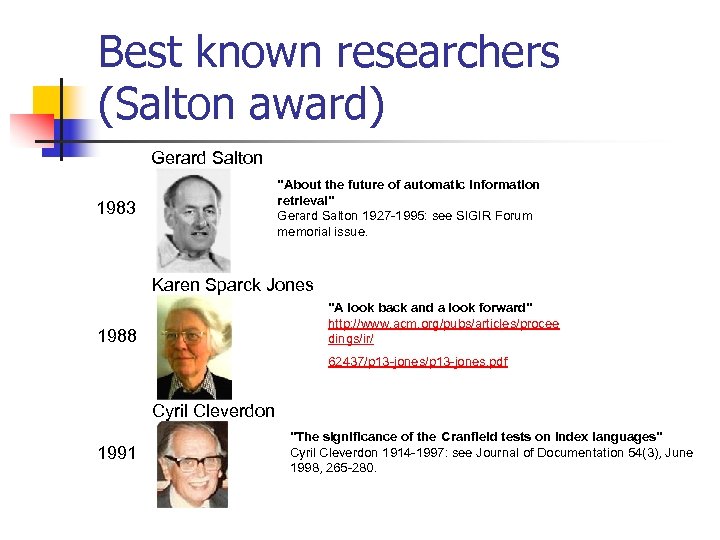

Best known researchers (Salton award) Gerard Salton 1983 "About the future of automatic information retrieval" Gerard Salton 1927 -1995: see SIGIR Forum memorial issue. Karen Sparck Jones 1988 "A look back and a look forward" http: //www. acm. org/pubs/articles/procee dings/ir/ 62437/p 13 -jones. pdf Cyril Cleverdon 1991 "The significance of the Cranfield tests on index languages" Cyril Cleverdon 1914 -1997: see Journal of Documentation 54(3), June 1998, 265 -280.

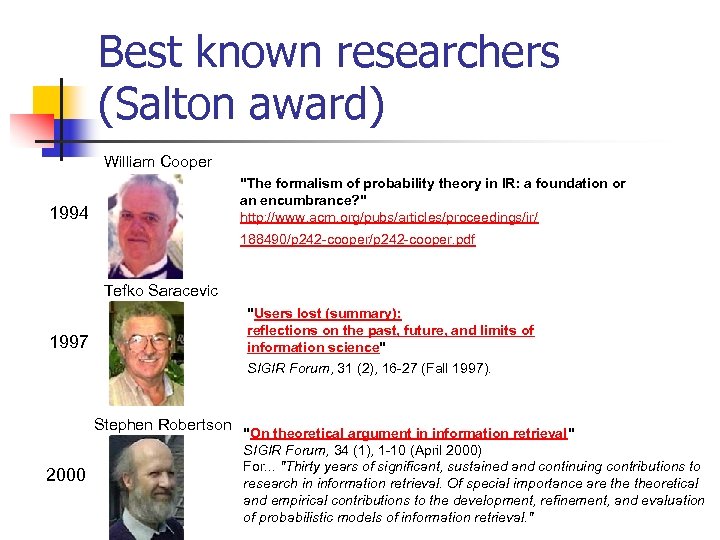

Best known researchers (Salton award) William Cooper 1994 "The formalism of probability theory in IR: a foundation or an encumbrance? " http: //www. acm. org/pubs/articles/proceedings/ir/ 188490/p 242 -cooper. pdf Tefko Saracevic 1997 "Users lost (summary): reflections on the past, future, and limits of information science" SIGIR Forum, 31 (2), 16 -27 (Fall 1997). Stephen Robertson "On theoretical argument in information retrieval" 2000 SIGIR Forum, 34 (1), 1 -10 (April 2000) For. . . "Thirty years of significant, sustained and continuing contributions to research in information retrieval. Of special importance are theoretical and empirical contributions to the development, refinement, and evaluation of probabilistic models of information retrieval. "

Best known researchers (Salton award) W. Bruce Croft 2003 C. J. (Keith) van Rijsbergen "Information Retrieval and Computer Science: An Evolving Relationship" For. . . "More than twenty years of significant, sustained and continuing contributions to research in information retrieval. His contributions to theoretical development and practical use of Bayesian inference networks and language modelling for retrieval, and to their evaluation through extensive experiment and application, are particularly important. The Center for Intelligent Information Retrieval which he founded illustrates the strong synergies between fundamental research and its application to a wide range of practical information management problems. "

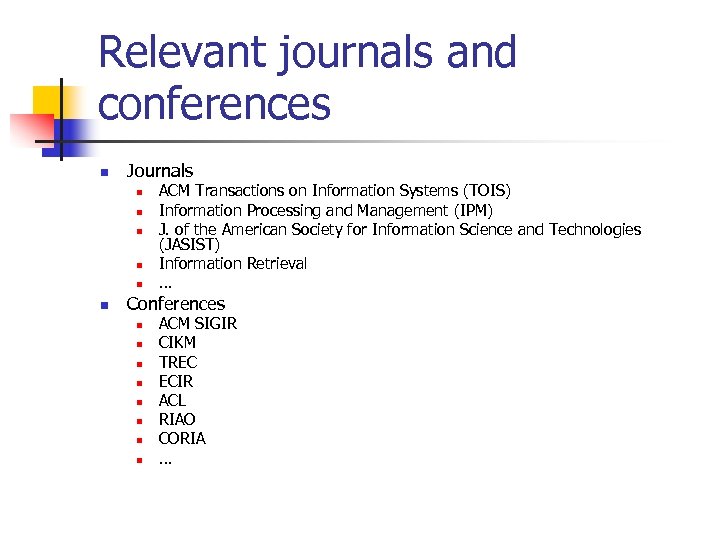

Relevant journals and conferences n Journals n n n ACM Transactions on Information Systems (TOIS) Information Processing and Management (IPM) J. of the American Society for Information Science and Technologies (JASIST) Information Retrieval … Conferences n n n n ACM SIGIR CIKM TREC ECIR ACL RIAO CORIA …

Experimental tradition

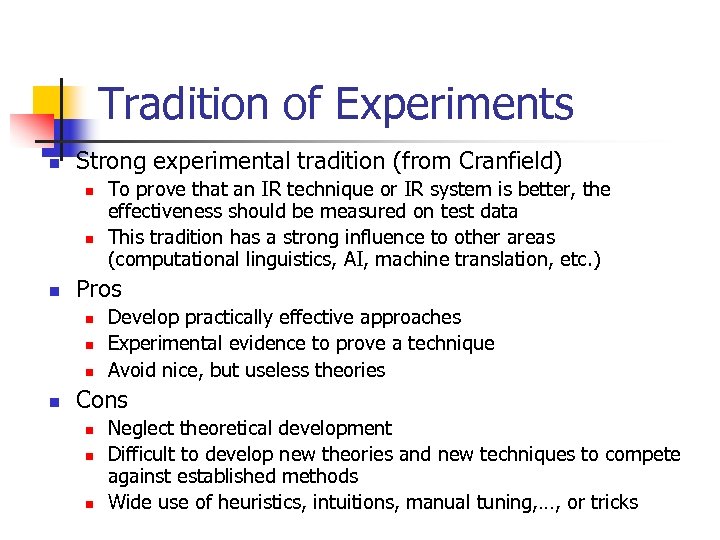

Tradition of Experiments n Strong experimental tradition (from Cranfield) n n n Pros n n To prove that an IR technique or IR system is better, the effectiveness should be measured on test data This tradition has a strong influence to other areas (computational linguistics, AI, machine translation, etc. ) Develop practically effective approaches Experimental evidence to prove a technique Avoid nice, but useless theories Cons n n n Neglect theoretical development Difficult to develop new theories and new techniques to compete against established methods Wide use of heuristics, intuitions, manual tuning, …, or tricks

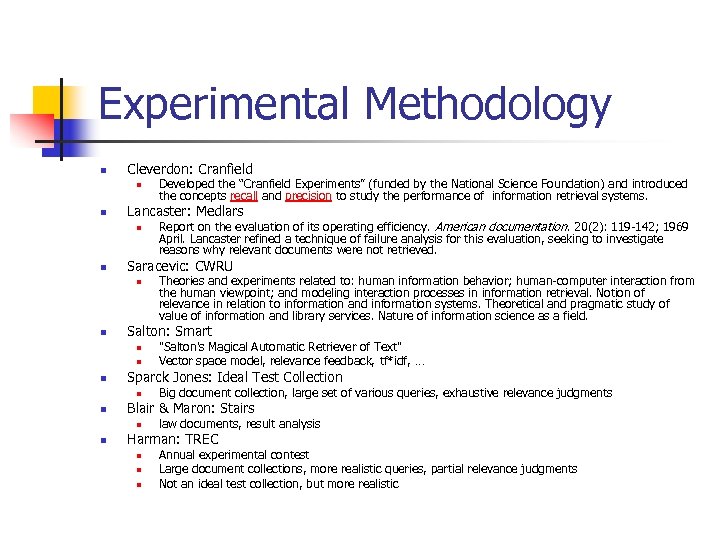

Experimental Methodology n Cleverdon: Cranfield n n Lancaster: Medlars n n n Big document collection, large set of various queries, exhaustive relevance judgments Blair & Maron: Stairs n n "Salton's Magical Automatic Retriever of Text" Vector space model, relevance feedback, tf*idf, … Sparck Jones: Ideal Test Collection n n Theories and experiments related to: human information behavior; human-computer interaction from the human viewpoint; and modeling interaction processes in information retrieval. Notion of relevance in relation to information and information systems. Theoretical and pragmatic study of value of information and library services. Nature of information science as a field. Salton: Smart n n Report on the evaluation of its operating efficiency. American documentation. 20(2): 119 -142; 1969 April. Lancaster refined a technique of failure analysis for this evaluation, seeking to investigate reasons why relevant documents were not retrieved. Saracevic: CWRU n n Developed the “Cranfield Experiments” (funded by the National Science Foundation) and introduced the concepts recall and precision to study the performance of information retrieval systems. law documents, result analysis Harman: TREC n n n Annual experimental contest Large document collections, more realistic queries, partial relevance judgments Not an ideal test collection, but more realistic

Some References on the Web n n Cyril W. Cleverdon, The significance of the Cranfield tests on index languages, ACM-SIGIR, 1991, pp. 3 – 12, (http: //portal. acm. org/citation. cfm? id=122861) David C. Blair , M. E. Maron, An evaluation of retrieval effectiveness for a full-text document-retrieval system, Communications of the ACM, v. 28 n. 3, p. 289 -299, March 1985 (http: //portal. acm. org/citation. cfm? id=3197&dl=GUIDE&coll=G UIDE&CFID=65359706&CFTOKEN=94782922) G. Salton , M. E. Lesk, Computer Evaluation of Indexing and Text Processing, Journal of the ACM (JACM), v. 15 n. 1, p. 8 -36, Jan. 1968 (http: //portal. acm. org/citation. cfm? id=321441&dl=GUIDE&coll= GUIDE&CFID=65359986&CFTOKEN=40286) TREC: http: //trec. nist. gov

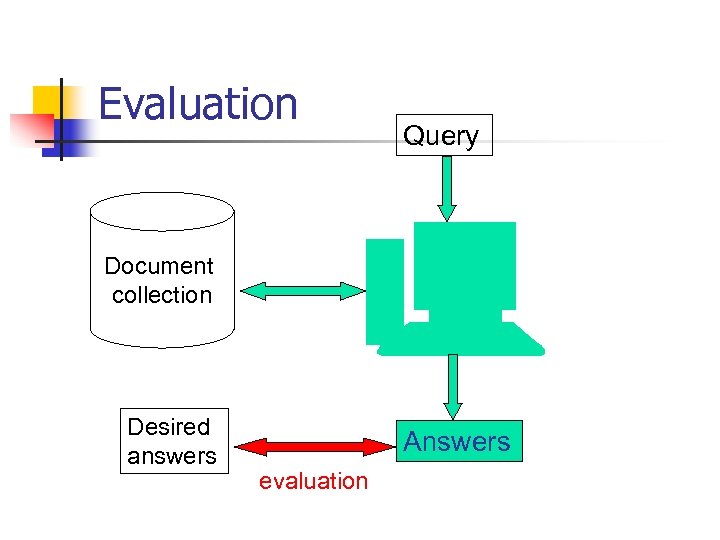

Evaluation Query Document collection Desired answers Answers evaluation

Test collection n Document collection: a large set of documents Query set: a set of queries (usually 50 or more) Relevance judgments: for each query, determine manually the relevant documents in the document collection n In TREC: the judgments are not known priori to the experiments

Methods

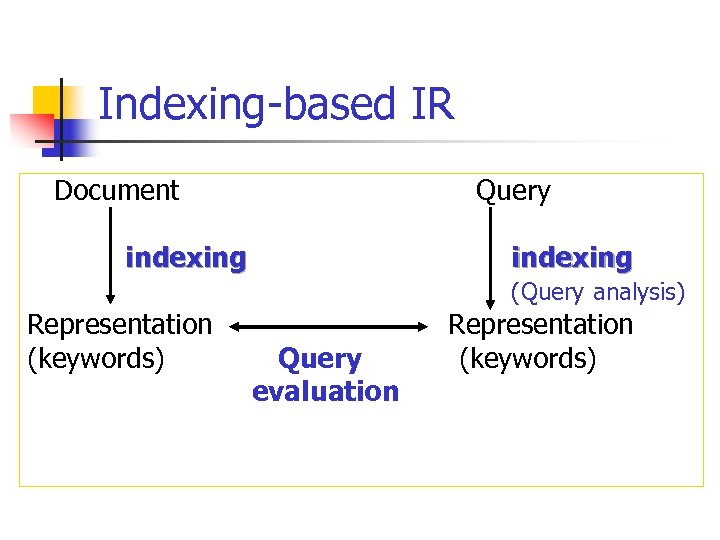

Indexing-based IR Document Query indexing Representation (keywords) indexing (Query analysis) Representation Query (keywords) evaluation

Query

Query Language n n Artificial/Natural (web) multilingual/cross-lingual images none at all!

Query Definition n n n Complete/Incomplete Independence/Dependence Weighted/Unweighted (tf × idf) Query expansion/one shot (feedback, web) Sense disambiguation Cross-lingual

Indexing

Maron’s theory of indexing n n …. . in the case where the query consists of single term, call it B, the probability that a given document will be judged relevant by a patron submitting B is simply the ratio of the number of patrons who submit B as their query and judge that document as relevant, to the number of patrons, who submit B as their search query P(D|B) = P(D, B) / P(B)

Representation of Information n Discrimination without Representation (specificity) Representation with Discrimination (exhaustivity) TF*IDF: n n n TF: importance of term for a document IDF: Importance of document for term (specificity) . . . defining a concept of ‘information’, . . [that] once this notion is properly explicated a document can be represented by the ‘information’ it contains (Cv. R, 1979)

Maching (query evaluation)

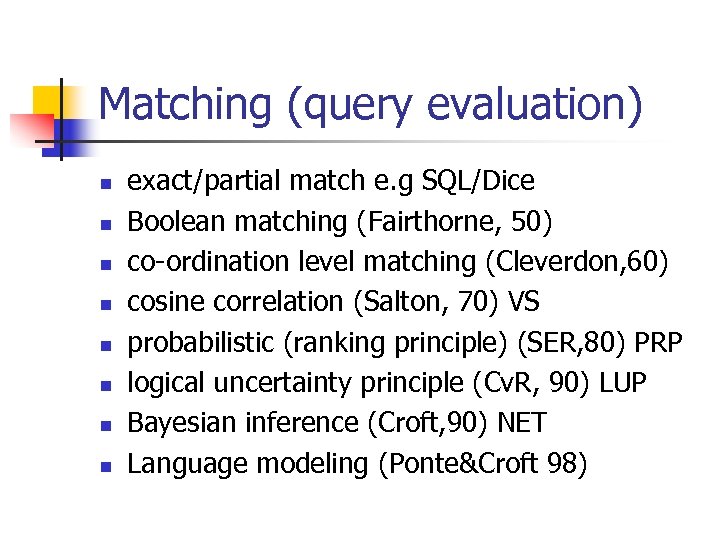

Matching (query evaluation) n n n n exact/partial match e. g SQL/Dice Boolean matching (Fairthorne, 50) co-ordination level matching (Cleverdon, 60) cosine correlation (Salton, 70) VS probabilistic (ranking principle) (SER, 80) PRP logical uncertainty principle (Cv. R, 90) LUP Bayesian inference (Croft, 90) NET Language modeling (Ponte&Croft 98)

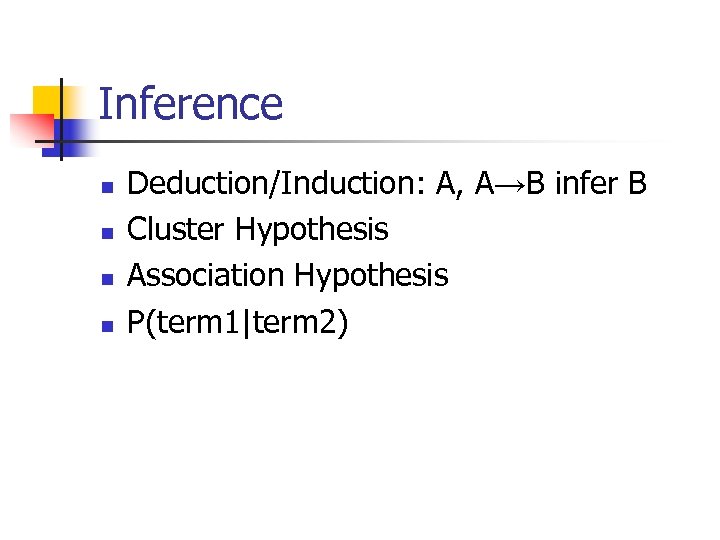

Inference n n Deduction/Induction: A, A→B infer B Cluster Hypothesis Association Hypothesis P(term 1|term 2)

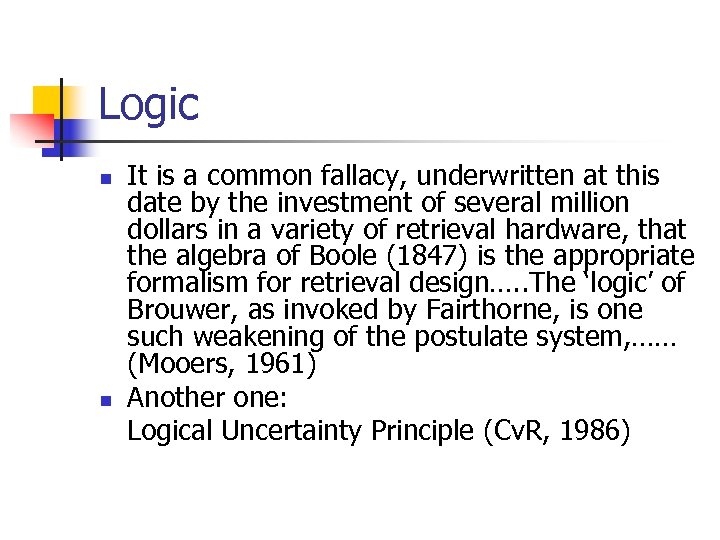

Logic n n It is a common fallacy, underwritten at this date by the investment of several million dollars in a variety of retrieval hardware, that the algebra of Boole (1847) is the appropriate formalism for retrieval design…. . The ‘logic’ of Brouwer, as invoked by Fairthorne, is one such weakening of the postulate system, …… (Mooers, 1961) Another one: Logical Uncertainty Principle (Cv. R, 1986)

Logic n n n If Mark were to loose his job, he would work less If Mark were to work less, he would be less tense If Mark were to loose his job, he would be less tense

Cluster Hypothesis n n If document X is closely associated with Y, then over the population of potential queries the probability of relevance for X will be approximately the same as the probability of relevance for Y, or in symbols P(relevance|X) ~ P(relevance|Y) Document clustering

Association Hypothesis n n If one index term X is good at discriminating relevant from nonrelevant documents, then any closely associated index term Y is also likely to be good at this. P(relevance|X) ~ P(relevance|Y) Query expansion

Models n n n Boolean Vector Space (metrics) - mixture of things Probabilistic (3 models) Logical (implication) - what kind of logic Language models Cognitive (users)

Retrieval Result

Items Wanted n n Matching/Relevant or Correct/Useful The function of a document retrieval system n n n cannot be to retrieve all and only the relevant documents. . but to guide the patron in his search for information (Maron) Topical/tasks Meaning/content

Some difficulties with ‘relevance’ n Goffman, 1969: n n ‘. . that the relevance of the information from one document depends upon what is already known about the subject, and in turn affects the relevance of other documents subsequently examined. ’ Maron, : n ‘Just because a document is about the subject sought by a patron, that fact does not imply that he would judge it relevant. ’

Relevance (Borlund, 2000) n ‘That is the relevance or irrelevance of a given retrieved document may affect the user’s current state of knowledge resulting in a change of the user’s information need, which may lead to a change of the user’s perception/ interpretation of the subsequent retrieved documents…. ’

Evaluation

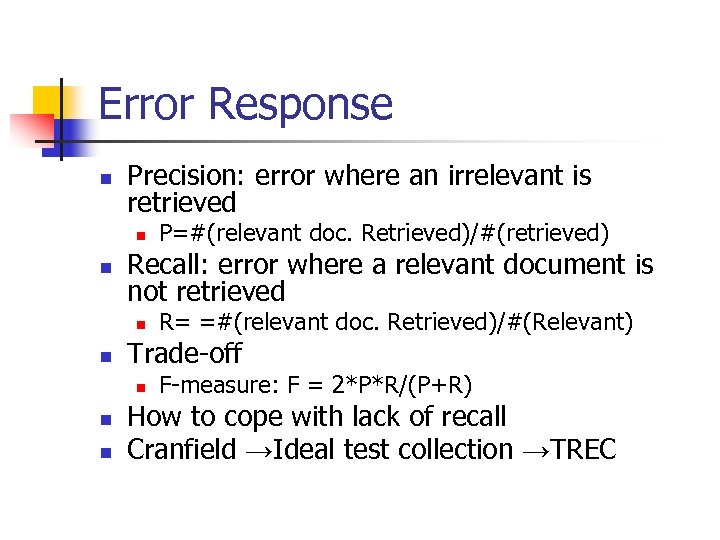

Error Response n Precision: error where an irrelevant is retrieved n n Recall: error where a relevant document is not retrieved n n n R= =#(relevant doc. Retrieved)/#(Relevant) Trade-off n n P=#(relevant doc. Retrieved)/#(retrieved) F-measure: F = 2*P*R/(P+R) How to cope with lack of recall Cranfield →Ideal test collection →TREC

What is a relevant document? n Relevance is: n n n The correspondence between a document and a query, a measure of informativeness to the query; A degree of relation (overlap, relatedness, …) between document and query A measure of utility of the document to the user; … Judged by user / system n User relevance / system relevance

How should relevance be judged? n Relevance is dependent on n n Document contents Information need (query) Time constraint Purpose of retrieval Retrieval environment n n Domain of application (newspaper articles, law, patent, medicine …) User’s knowledge n n n Computer/connection speed User interface … About the domain of application about the system

How is relevance judged? (TREC) n n n Candidate answers by merging the answers from different participating systems Several human assessors judge for the same query Agreement/disagreement Binary value (rel. / irrel. ) / multi-valued Workable strategy but potential problems: n n n Some relevant document may not be found by any system Subjective judgments Disagreement between assessors and participants (but participants usually respect the judgments of assessors)

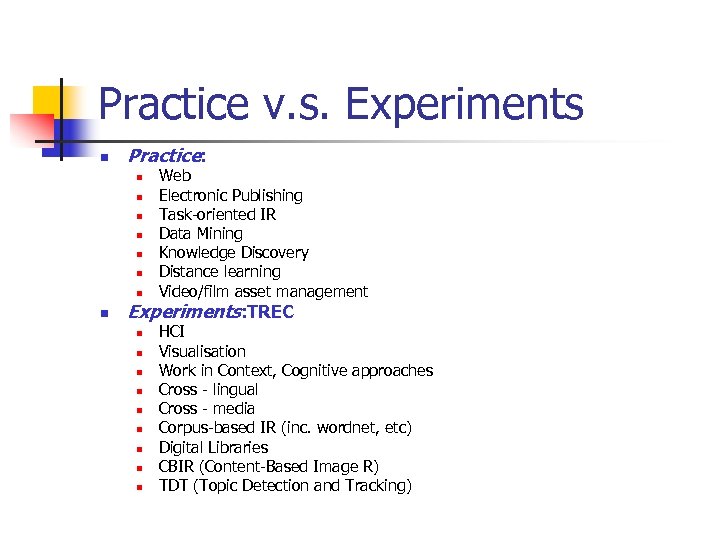

Practice v. s. Experiments n Practice: n n n n Web Electronic Publishing Task-oriented IR Data Mining Knowledge Discovery Distance learning Video/film asset management Experiments: TREC n n n n n HCI Visualisation Work in Context, Cognitive approaches Cross - lingual Cross - media Corpus-based IR (inc. wordnet, etc) Digital Libraries CBIR (Content-Based Image R) TDT (Topic Detection and Tracking)

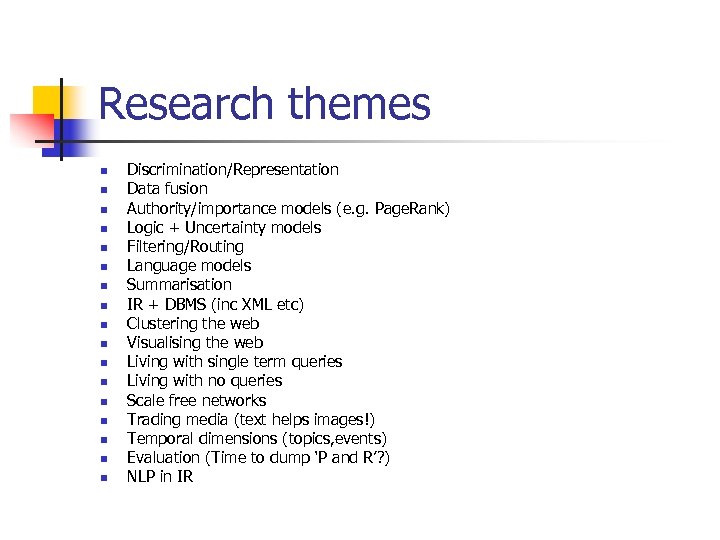

Research themes n n n n n Discrimination/Representation Data fusion Authority/importance models (e. g. Page. Rank) Logic + Uncertainty models Filtering/Routing Language models Summarisation IR + DBMS (inc XML etc) Clustering the web Visualising the web Living with single term queries Living with no queries Scale free networks Trading media (text helps images!) Temporal dimensions (topics, events) Evaluation (Time to dump ‘P and R’? ) NLP in IR

Current situation

Where are we now in IR? n n n Landmarks Hypotheses/Principles Postulates of Impotence Long-term challenges Areas of research

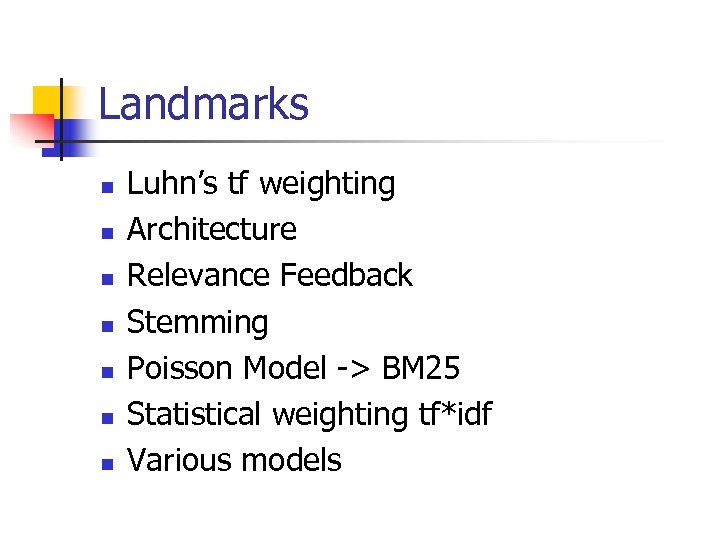

Landmarks n n n n Luhn’s tf weighting Architecture Relevance Feedback Stemming Poisson Model -> BM 25 Statistical weighting tf*idf Various models

Hypotheses/Principles n n n n n Items may be associated without apparent meaning but exploiting their association may help retrieval P & R trade-off – ABNO/OBNA Exhaustivity/Specificity Cluster Hypothesis Association Hypothesis Probability Ranking Principle Logical Uncertainty Principle ASK Polyrepresentation

Postulates of Impotence (according to Swanson, 1988) n n n An information need cannot be expressed independent of context It is impossible to instruct a machine to translate a request into adequate search terms A document’s relevance depends on other seen documents It is never possible to verify whether all relevant documents have been found Machines cannot recognize meaning -> can’t beat human indexing etc

…. more postulates n n Word-occurrence statistics can neither represent meaning nor substitute for it The ability of an IR system to support an iterative process cannot be evaluated in terms of single-iteration human relevance judgment You can have either subtle relevance judgments or highly effective mechanised procedures, but not both Thus, consistently effective fully automatic indexing and retrieval is not possible

Long-term Challenges – workshop Umass. 9/2002 n n Global information access: Satisfy human information needs through natural efficient interaction with an automated system that leverages world-wide structured and unstructured data in any language. Contextual Retrieval: Combine search technologies and knowledge about query and user context into a single framework in order to provide the most “appropriate” answer for a user’s information need.

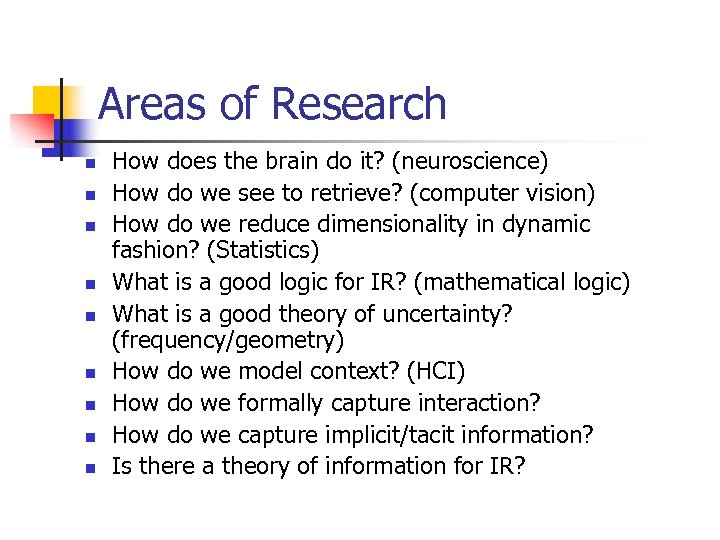

Areas of Research n n n n n How does the brain do it? (neuroscience) How do we see to retrieve? (computer vision) How do we reduce dimensionality in dynamic fashion? (Statistics) What is a good logic for IR? (mathematical logic) What is a good theory of uncertainty? (frequency/geometry) How do we model context? (HCI) How do we formally capture interaction? How do we capture implicit/tacit information? Is there a theory of information for IR?

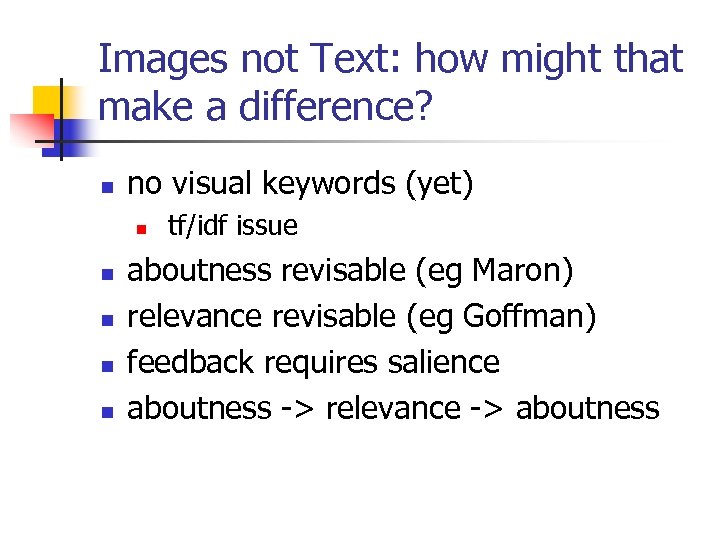

Images not Text: how might that make a difference? n no visual keywords (yet) n n n tf/idf issue aboutness revisable (eg Maron) relevance revisable (eg Goffman) feedback requires salience aboutness -> relevance -> aboutness

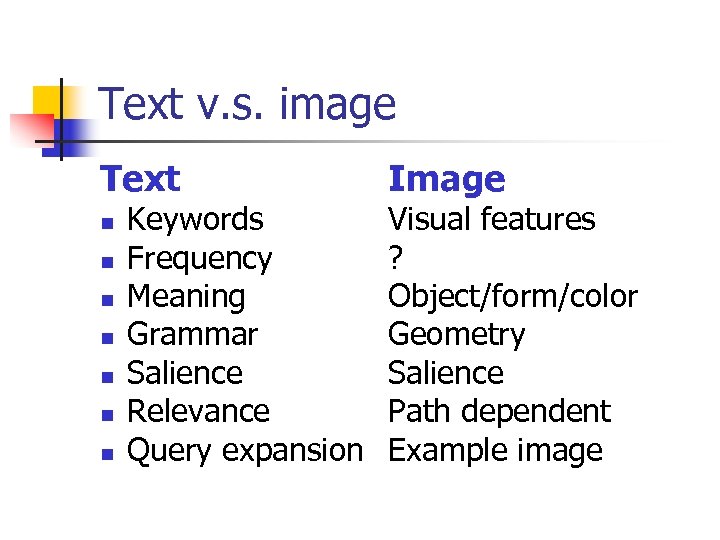

Text v. s. image Text n n n n Keywords Frequency Meaning Grammar Salience Relevance Query expansion Image Visual features ? Object/form/color Geometry Salience Path dependent Example image

References n Van Rijsbergen’s talks: n n n http: //ir. dcs. gla. ac. uk/oldseminars/Keith 2. ppt http: //wwwclips. imag. fr/mrim/essir 03/PDF/4. Rijsbergen. pdf S. E. ROBERTSON, COMPUTER RETRIEVAL (http: //www. soi. city. ac. uk/~ser/papers/j_doc _history/npap. html)

12019167bdbe2a22ed13c49d9daa4989.ppt