
- Number of slides: 46

Introduction to Computational Intelligence
- What is this course about?
- Neural Computing
- Evolutionary Computing
- Fuzzy Computing
- Course structure and other details

Neural Networks - Lecture 1


What is this course about?
- About solving ill-posed problems

Well-posed problem:
- A formal model associated with the problem exists
- A solving algorithm exists
- Example: classifying employees into two classes based on their income (using a known threshold)

Ill-posed problem:
- It is not easy to formalize
- Only some solved examples are available
- Classical methods cannot be applied
- Example: classifying employees into two classes based on their creditworthiness for a bank loan


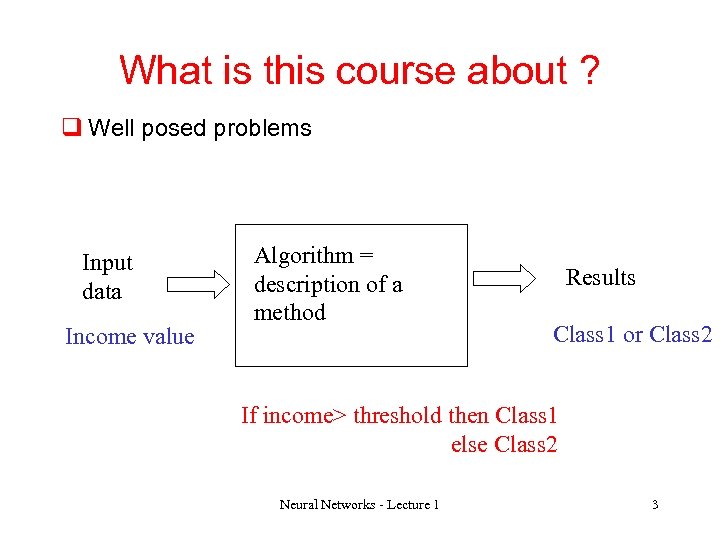

What is this course about?
- Well-posed problems
- Input data: income value
- Algorithm = description of a method: if income > threshold then Class 1 else Class 2
- Results: Class 1 or Class 2
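
The rule on this slide is simple enough to write down directly. The sketch below is only an illustration: the function name `classify_by_income` and the threshold value 1000 are assumptions, not part of the course material.

```python
def classify_by_income(income: float, threshold: float) -> str:
    """Apply the fixed rule from the slide: income > threshold gives Class 1."""
    return "Class 1" if income > threshold else "Class 2"


if __name__ == "__main__":
    # The threshold 1000 is a hypothetical value chosen for illustration.
    for income in (900, 1100, 5000):
        print(income, classify_by_income(income, threshold=1000))
```

Because the model (the rule) and the threshold are known in advance, no learning is needed; this is what makes the problem well posed.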


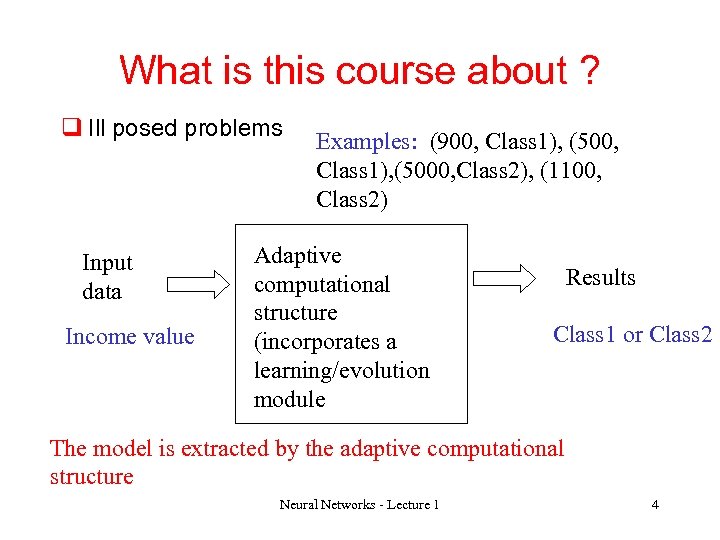

What is this course about?
- Ill-posed problems
- Input data: income value
- Examples: (900, Class 1), (5000, Class 2), (1100, Class 2)
- Adaptive computational structure (incorporates a learning/evolution module)
- Results: Class 1 or Class 2
- The model is extracted by the adaptive computational structure


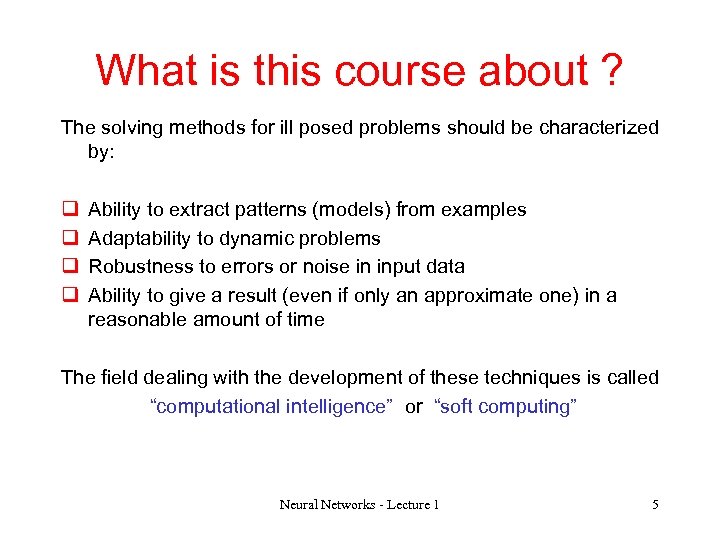

What is this course about?
The solving methods for ill-posed problems should be characterized by:
- Ability to extract patterns (models) from examples
- Adaptability to dynamic problems
- Robustness to errors or noise in the input data
- Ability to give a result (even if only an approximate one) in a reasonable amount of time

The field dealing with the development of these techniques is called “computational intelligence” or “soft computing”.


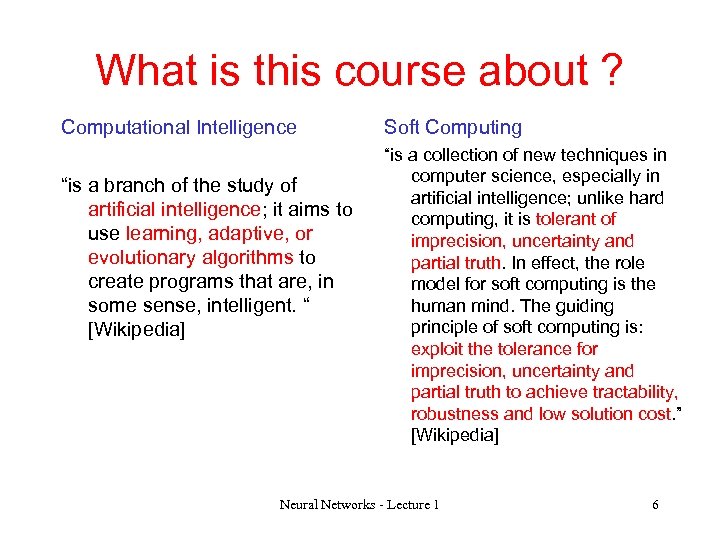

What is this course about?
- Computational Intelligence “is a branch of the study of artificial intelligence; it aims to use learning, adaptive, or evolutionary algorithms to create programs that are, in some sense, intelligent.” [Wikipedia]
- Soft Computing “is a collection of new techniques in computer science, especially in artificial intelligence; unlike hard computing, it is tolerant of imprecision, uncertainty and partial truth. In effect, the role model for soft computing is the human mind. The guiding principle of soft computing is: exploit the tolerance for imprecision, uncertainty and partial truth to achieve tractability, robustness and low solution cost.” [Wikipedia]


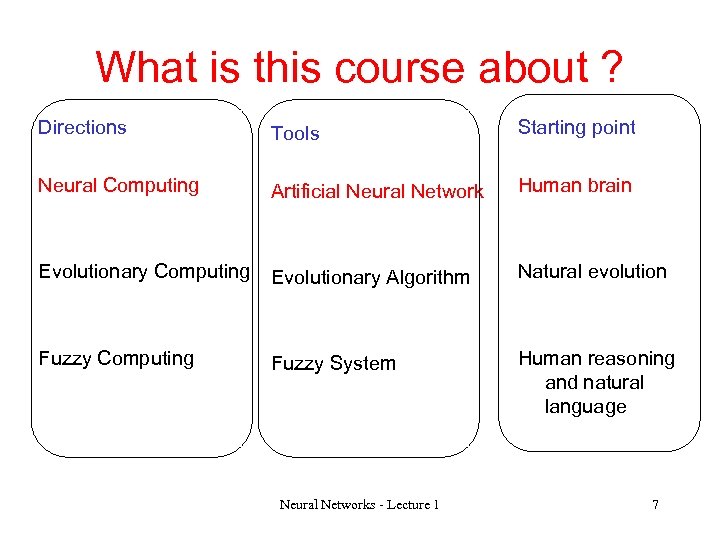

What is this course about?

| Directions | Tools | Starting point |
|---|---|---|
| Neural Computing | Artificial Neural Network | Human brain |
| Evolutionary Computing | Evolutionary Algorithm | Natural evolution |
| Fuzzy Computing | Fuzzy System | Human reasoning and natural language |


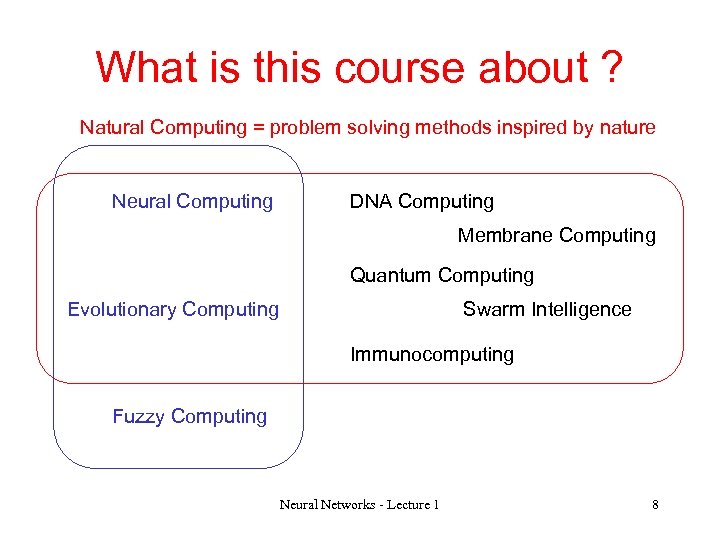

What is this course about?
Natural Computing = problem-solving methods inspired by nature
- Neural Computing
- DNA Computing
- Membrane Computing
- Quantum Computing
- Evolutionary Computing
- Swarm Intelligence
- Immunocomputing
- Fuzzy Computing


Neural Computing - overview
- Basic principles
- The biological model
- Artificial neural networks
- Applications
- History


Basic principles
A functional point of view: neural network = adaptive system that gives answers to a problem after it has been trained on similar problems
- Examples and input data → neural network (= learning system) → answer


Basic principles
A structural point of view:
- Artificial neural network (ANN) = set of highly interconnected simple processing units (also called neurons)
- Processing unit = simplified model of a neuron
- Artificial neural network = (very) simplified model of the brain


Basic principles
- Complex behavior emerges from simple rules that interact and are applied in parallel
- This bottom-up approach is the opposite of the top-down approach typical of classical artificial intelligence
- The learning ability derives from the adaptability of some parameters associated with the behavior of the processing units (the parameters are modified by learning)
- The processing is mainly numerical, unlike the symbolic approach of classical AI



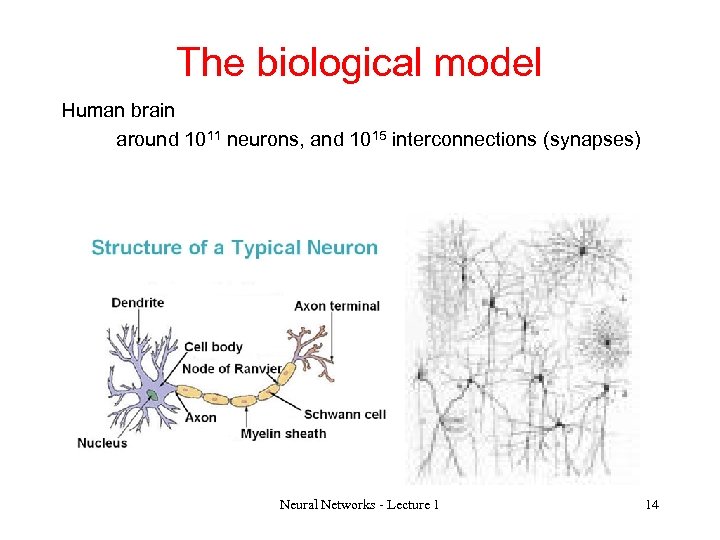

The biological model
Human brain: around $10^{11}$ neurons and $10^{15}$ interconnections (synapses)


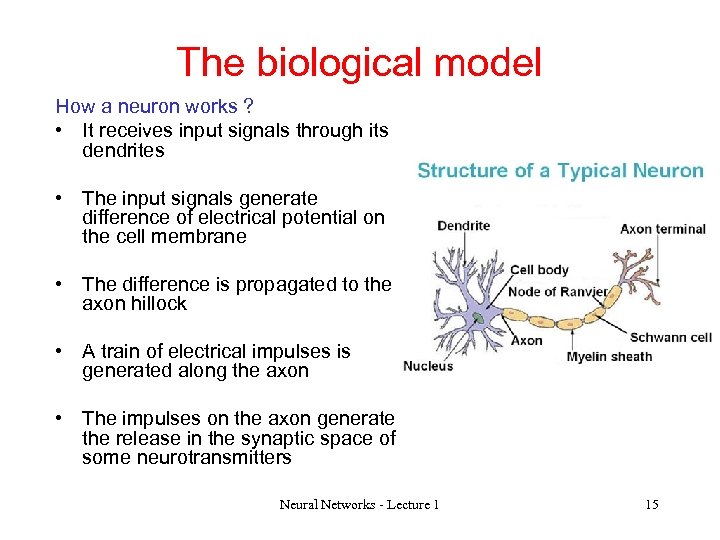

The biological model
How does a neuron work?
- It receives input signals through its dendrites
- The input signals generate a difference of electrical potential across the cell membrane
- The difference is propagated to the axon hillock
- A train of electrical impulses is generated along the axon
- The impulses on the axon cause neurotransmitters to be released into the synaptic space


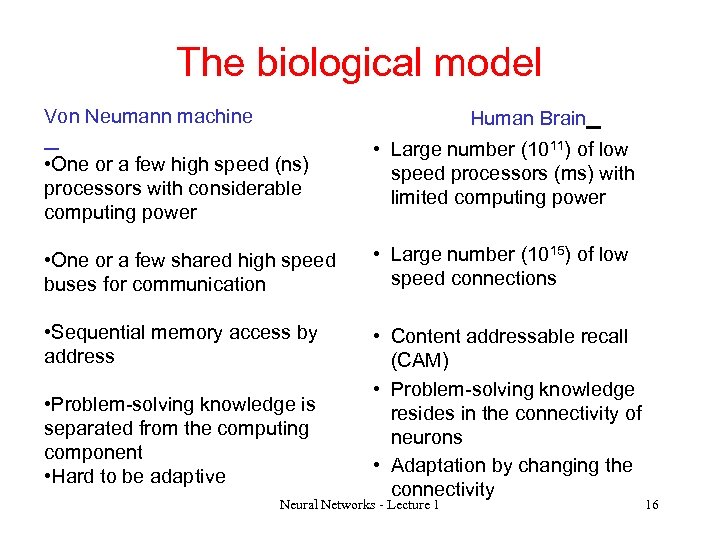

The biological model

| Von Neumann machine | Human brain |
|---|---|
| One or a few high-speed (ns) processors with considerable computing power | Large number ($10^{11}$) of low-speed (ms) processors with limited computing power |
| One or a few shared high-speed buses for communication | Large number ($10^{15}$) of low-speed connections |
| Sequential memory access by address | Content-addressable recall (CAM) |
| Problem-solving knowledge is separated from the computing component | Problem-solving knowledge resides in the connectivity of neurons |
| Hard to make adaptive | Adaptation by changing the connectivity |



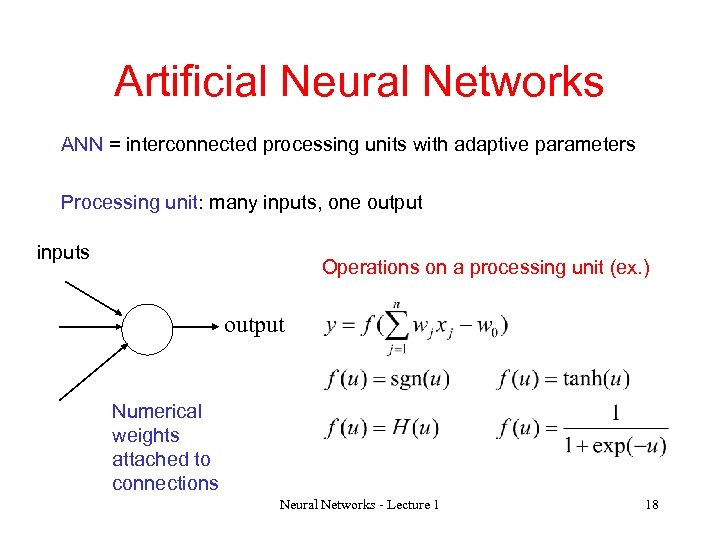

Artificial Neural Networks
ANN = interconnected processing units with adaptive parameters
- Processing unit: many inputs, one output
- Operations on a processing unit (ex.): [formula not preserved in the extracted text]
- Numerical weights are attached to the connections
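
The example formula on this slide did not survive extraction. A common choice, assumed here and not confirmed by the source, is a weighted sum of the inputs followed by an activation function. The names `unit_output` and `sigmoid`, the bias term, and the sigmoid activation are illustrative assumptions.

```python
import math
from typing import Callable, Sequence


def sigmoid(u: float) -> float:
    """Logistic activation, one common (assumed) choice."""
    return 1.0 / (1.0 + math.exp(-u))


def unit_output(
    inputs: Sequence[float],
    weights: Sequence[float],
    bias: float = 0.0,
    activation: Callable[[float], float] = sigmoid,
) -> float:
    """Many inputs, one output: activation(sum_i w_i * x_i + bias)."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    u = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(u)


if __name__ == "__main__":
    # Weighted sum is 0.5*1.0 + (-0.25)*2.0 = 0.0, so the sigmoid returns 0.5.
    print(unit_output([1.0, 2.0], [0.5, -0.25]))
```

The weights (and the bias) are the adaptive parameters: learning changes them, while the form of the computation stays fixed.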


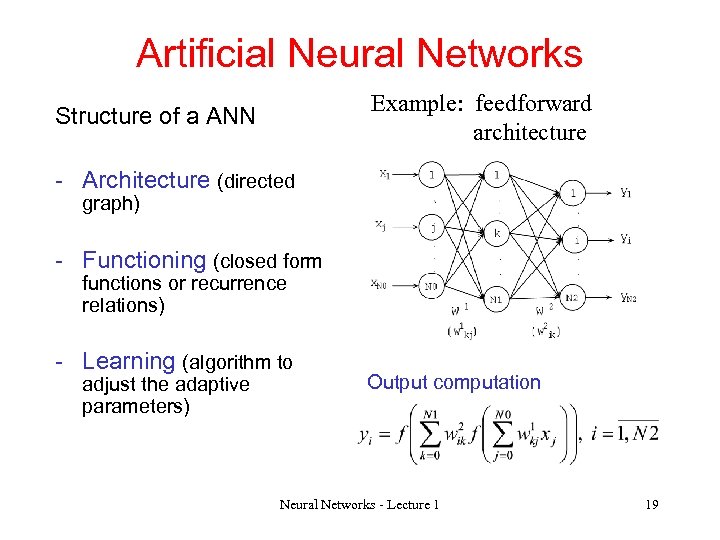

Artificial Neural Networks
Example: feedforward architecture
Structure of an ANN:
- Architecture (directed graph)
- Functioning (closed-form functions or recurrence relations)
- Learning (algorithm to adjust the adaptive parameters)

Output computation: [formula not preserved in the extracted text]
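
For a feedforward architecture the functioning can be written in closed form: each layer applies its units to the previous layer's outputs. The sketch below assumes fully connected layers with a `tanh` activation; the function names and the layer representation (a list of weight-matrix and bias-vector pairs) are hypothetical choices, since the slide's own formula is not available.

```python
import math
from typing import List, Sequence, Tuple

Layer = Tuple[List[List[float]], List[float]]  # (weight rows, biases)


def layer_output(x: Sequence[float], weights: List[List[float]],
                 biases: List[float]) -> List[float]:
    """One layer: each row of weights feeds one unit."""
    return [
        math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(weights, biases)
    ]


def feedforward(x: Sequence[float], layers: Sequence[Layer]) -> List[float]:
    """Propagate the input through the layers in order."""
    y = list(x)
    for weights, biases in layers:
        y = layer_output(y, weights, biases)
    return y


if __name__ == "__main__":
    # Hypothetical 2-2-1 network with arbitrary weights.
    net = [
        ([[0.5, -0.5], [0.3, 0.8]], [0.0, 0.1]),
        ([[1.0, -1.0]], [0.0]),
    ]
    print(feedforward([1.0, 2.0], net))
```

The directed graph is implicit in the order of the layers: connections only go from one layer to the next, so the output is obtained in a single pass.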


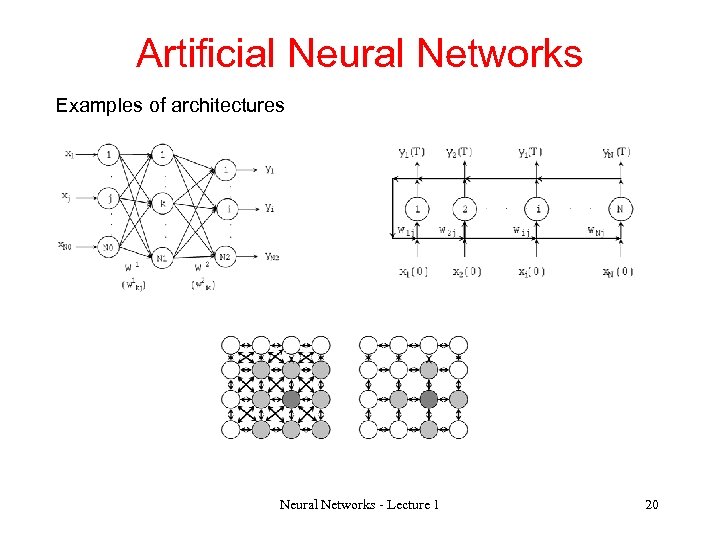

Artificial Neural Networks
Examples of architectures


Artificial Neural Networks
Learning = extracting the model corresponding to the problem starting from examples = finding the network parameters
Learning strategies:
- Supervised (i.e. learning with a teacher)
- Unsupervised (i.e. learning without a teacher)
- Reinforcement (i.e. based on reward and penalty)



Applications
- Classification and pattern recognition
  - Input data: set of features of an object (e.g. information about the employee)
  - Output: class to which the object belongs (e.g. eligible / not eligible for a bank loan)
  - Other examples: character recognition, image/texture classification, speech recognition, signal classification (EEG, EKG), land type classification
  - Particularity: supervised learning (based on examples of correctly classified data)


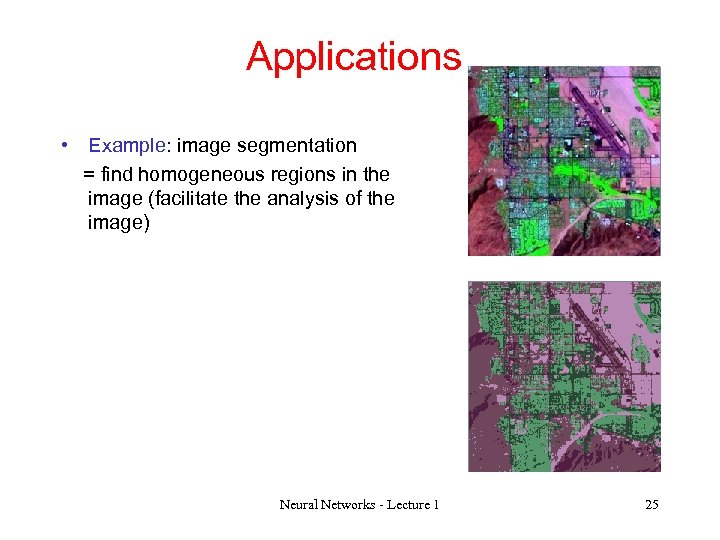

Applications
- Data clustering (the unsupervised version of classification: the data are grouped based on their similarities)
  - Input data: set of features of an object (e.g. information about a user session in an e-commerce system)
  - Output: class to which the object belongs (e.g. group of users with similar behavior)
  - Other examples: image segmentation


Applications
- Example: image segmentation = finding homogeneous regions in the image (this facilitates the analysis of the image)


Applications
- Approximation / estimation = finding the relationship between variables
  - Input data: values of some independent variables (e.g. values obtained by measurements)
  - Output: value of the dependent variable (e.g. estimated value of the quantity that depends on the independent variables)


Applications
- Prediction = estimating the future value(s) in a time series
  - Input data: sequence of values (e.g. currency exchange rate for the last month)
  - Output: estimate of the next value (e.g. estimate of the currency exchange rate for tomorrow)
  - Other examples: stock prediction, prediction in meteorology
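
The slide does not say how a sequence is presented to a network. One common way, assumed here, is to cut the series into fixed-length windows, each paired with the value that follows it, which turns prediction into a supervised learning problem. The function name `make_windows` and the window length are hypothetical.

```python
from typing import List, Sequence, Tuple


def make_windows(series: Sequence[float],
                 window: int) -> List[Tuple[List[float], float]]:
    """Pair each run of `window` consecutive values with the next value."""
    if window < 1 or window >= len(series):
        raise ValueError("window must be between 1 and len(series) - 1")
    return [
        (list(series[i:i + window]), series[i + window])
        for i in range(len(series) - window)
    ]


if __name__ == "__main__":
    # Made-up exchange-rate values, for illustration only.
    rates = [4.90, 4.92, 4.91, 4.95, 4.97]
    for inputs, target in make_windows(rates, window=2):
        print(inputs, "->", target)
```

Each (inputs, target) pair can then serve as a training example; the estimate for tomorrow is obtained by feeding the most recent window to the trained model.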


Applications
- Optimization = finding an approximation of the optimum of a difficult problem
- Associative memories = the recall process is based on the stored content, not on an address
- Adaptive control = finding a control signal that ensures a given output of a system



ANN History
- Pitts & McCulloch (1943)
  - First mathematical model of biological neurons
  - All Boolean operations can be implemented by these neuron-like nodes (with different thresholds and excitatory/inhibitory connections)
  - Competitor to the Von Neumann model for a general-purpose computing device
  - Origin of automata theory
- Hebb (1949)
  - Hebbian rule of learning: increase the connection strength between neurons i and j whenever both i and j are activated
  - Or: increase the connection strength between nodes i and j whenever both nodes are simultaneously ON or OFF
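
Both ideas on this slide fit in a few lines. The sketch below is an illustration under stated assumptions: the particular weights and thresholds for AND, OR and NOT are one possible choice, and `hebbian_update` uses the product form `rate * x_i * x_j`, which is a common textbook formulation rather than something the slide specifies. With 0/1 activations it strengthens the connection only when both units are active; with -1/+1 activations it also strengthens it when both are OFF (and, as a side effect of the product form, weakens it when they differ).

```python
from typing import Sequence


def threshold_unit(inputs: Sequence[int], weights: Sequence[int],
                   threshold: int) -> int:
    """Fire (return 1) when the weighted input sum reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def logical_and(a: int, b: int) -> int:
    # Two excitatory connections; both inputs are needed to reach 2.
    return threshold_unit((a, b), (1, 1), threshold=2)


def logical_or(a: int, b: int) -> int:
    # Two excitatory connections; one active input is enough.
    return threshold_unit((a, b), (1, 1), threshold=1)


def logical_not(a: int) -> int:
    # A single inhibitory (negative) connection with threshold 0.
    return threshold_unit((a,), (-1,), threshold=0)


def hebbian_update(weight: float, x_i: float, x_j: float,
                   rate: float = 0.1) -> float:
    """Hebb-style update: the weight changes by rate * x_i * x_j."""
    return weight + rate * x_i * x_j


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, logical_and(a, b), logical_or(a, b))
    print(logical_not(0), logical_not(1))
```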


ANN History
- Early boom (50's to early 60's)
  - Rosenblatt (1958)
    - Perceptron: network of threshold nodes for pattern classification. Perceptron learning rule: the first learning algorithm
    - Perceptron convergence theorem: everything that can be represented by a perceptron can be learned
  - Widrow and Hoff (1960, 1962)
    - Learning rule based on minimization methods
  - Minsky's attempt to build a general-purpose machine with Pitts/McCulloch units
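
As a rough illustration of the perceptron learning rule, the sketch below trains a single threshold unit on the logical AND function, which a perceptron can represent, so the convergence theorem applies. The zero initialization, the learning rate of 1, the epoch cap and the function names are assumptions made for this example.

```python
from typing import List, Sequence, Tuple

Sample = Tuple[Sequence[float], int]


def predict(weights: Sequence[float], bias: float, x: Sequence[float]) -> int:
    """Threshold unit: output 1 when the weighted sum plus bias is positive."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


def train_perceptron(samples: Sequence[Sample], rate: float = 1.0,
                     max_epochs: int = 100) -> Tuple[List[float], float]:
    """Adjust weights only on misclassified examples until none remain."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(max_epochs):
        errors = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)
            if error != 0:
                errors += 1
                weights = [w + rate * error * xi for w, xi in zip(weights, x)]
                bias += rate * error
        if errors == 0:  # every example is classified correctly
            break
    return weights, bias


if __name__ == "__main__":
    and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
    w, b = train_perceptron(and_samples)
    print([predict(w, b, x) for x, _ in and_samples])
```

The same loop applied to XOR never reaches an error-free epoch and simply stops at `max_epochs`, because no single threshold unit separates those two classes.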


ANN History
- The setback (mid 60's to late 70's)
  - Serious problems with the perceptron model (Minsky's book, 1969)
    - Single-layer perceptrons cannot represent (learn) simple functions such as XOR
    - Multiple layers of non-linear units may have greater power, but there was no learning rule for such nets
    - Scaling problem: connection weights may grow infinitely
  - The first two problems were overcome by later efforts in the 80's, but the scaling problem persists


ANN History
- Renewed enthusiasm and flourishing (80's to present)
  - New techniques
    - Backpropagation learning for multi-layer feedforward nets (with non-linear, differentiable node functions)
    - Physics-inspired models (Hopfield net, Boltzmann machine, etc.)
    - Unsupervised learning
  - Impressive applications (character recognition, speech recognition, text-to-speech transformation, process control, associative memory, etc.)
  - Traditional approaches face difficult challenges
  - Caution:
    - Don't underestimate difficulties and limitations
    - Poses more problems than solutions


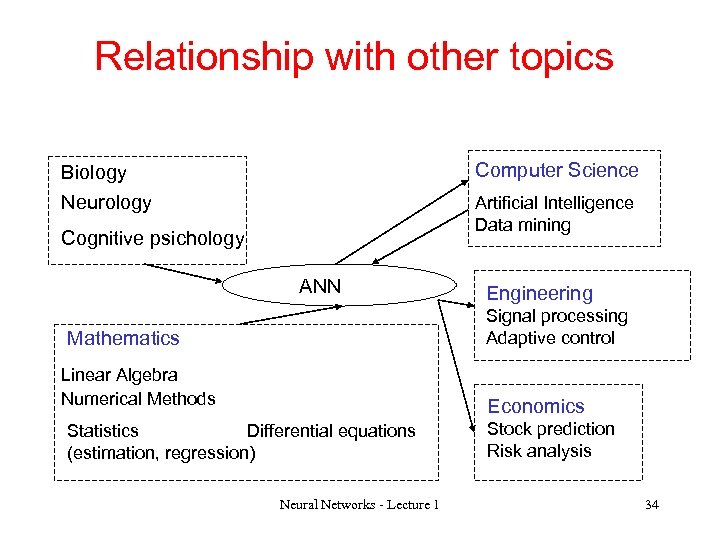

Relationship with other topics
ANN is related to:
- Computer Science: artificial intelligence, data mining
- Biology, neurology, cognitive psychology
- Engineering: signal processing, adaptive control
- Mathematics: linear algebra, numerical methods, statistics (estimation, regression), differential equations
- Economics: stock prediction, risk analysis


Evolutionary Computing
- Basic principles
- Structure of an Evolutionary Algorithm (EA)
- Variants of EAs
- Applications


Evolutionary Computing: basic principles
- EC is inspired by natural evolution processes, based on the principles of heredity and the Darwinian principle of survival of the fittest
- The solution of the problem is found by exploring the space of possible solutions; a population of individuals (agents) is used to explore the space
- The population elements are encoded according to the particularities of the problem (bit strings, real-valued vectors, trees, graphs, etc.)


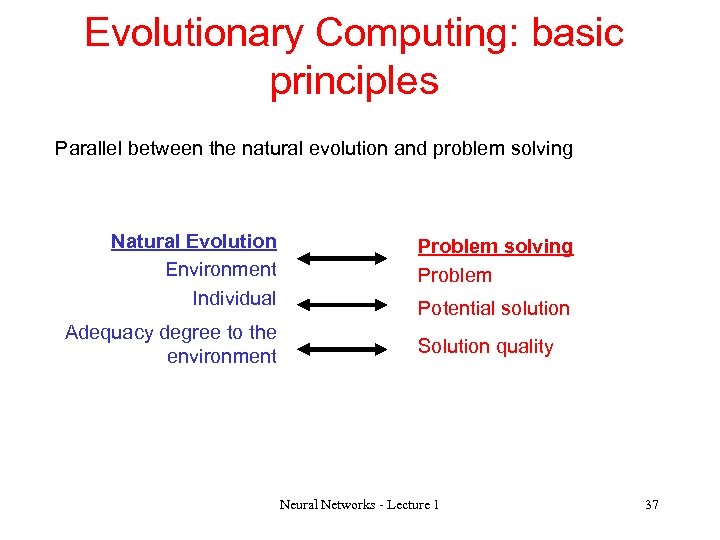

Evolutionary Computing: basic principles
Parallel between natural evolution and problem solving

| Natural evolution | Problem solving |
|---|---|
| Environment | Problem |
| Individual | Potential solution |
| Degree of adequacy to the environment | Solution quality |


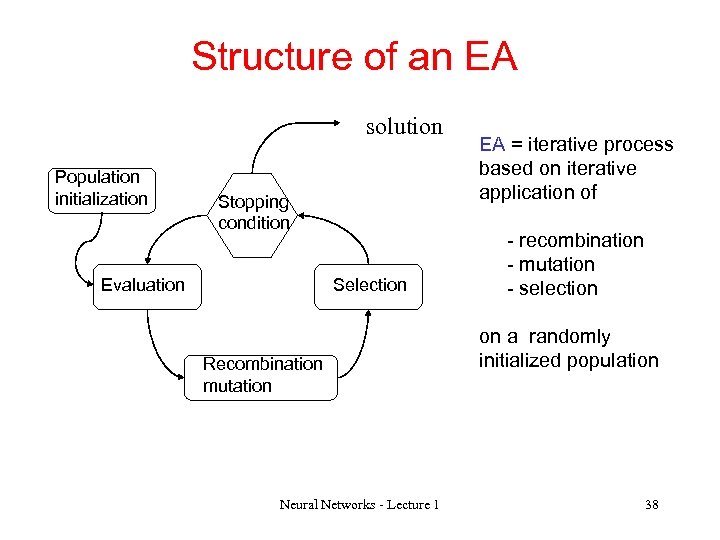

Structure of an EA
EA = iterative process based on the repeated application of
- recombination
- mutation
- selection

to a randomly initialized population

Flowchart steps: population initialization, evaluation, stopping condition (yields the solution), selection, recombination and mutation
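
The loop described on this slide can be sketched as follows. This is a minimal illustration, not the course's reference algorithm: binary tournament selection, averaging recombination, Gaussian mutation, a fixed generation budget as the stopping condition, and all parameter values are assumptions chosen for the example. The fitness is minimized.

```python
import random
from typing import Callable, List

Individual = List[float]


def evolve(fitness: Callable[[Individual], float], dim: int,
           pop_size: int = 20, generations: int = 50,
           sigma: float = 0.1, seed: int = 0) -> Individual:
    """Return the best individual seen while minimizing `fitness`."""
    rng = random.Random(seed)
    # Population initialization: random real-valued vectors.
    population = [[rng.uniform(-5.0, 5.0) for _ in range(dim)]
                  for _ in range(pop_size)]
    best = min(population, key=fitness)  # evaluation
    for _ in range(generations):  # stopping condition: fixed budget
        offspring = []
        while len(offspring) < pop_size:
            # Selection: the better of two random individuals, twice.
            p1 = min(rng.sample(population, 2), key=fitness)
            p2 = min(rng.sample(population, 2), key=fitness)
            # Recombination: component-wise average of the parents.
            child = [(a + b) / 2.0 for a, b in zip(p1, p2)]
            # Mutation: small Gaussian perturbation of every component.
            child = [g + rng.gauss(0.0, sigma) for g in child]
            offspring.append(child)
        population = offspring
        candidate = min(population, key=fitness)
        if fitness(candidate) < fitness(best):
            best = candidate
    return best


if __name__ == "__main__":
    def sphere(x: Individual) -> float:
        return sum(v * v for v in x)

    solution = evolve(sphere, dim=2)
    print(solution, sphere(solution))
```

Only the fitness function and the encoding depend on the problem; the loop itself stays the same, which is why the same scheme covers the variants listed later in the deck.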


Variants of EAs
- Genetic algorithms:
  - Binary encoding
  - Primary operator: crossover
  - Secondary operator: mutation
  - Target: combinatorial optimization problems
- Evolution strategies:
  - Real-valued encoding
  - Primary operator: mutation
  - Secondary operator: recombination
  - Target: real-valued optimization problems


Variants of EAs
- Genetic programming:
  - Structured individuals (trees, expressions, programs, etc.)
  - Target: generating computing structures through evolutionary processes
- Evolutionary programming:
  - Real-valued encoding
  - Only mutation
  - Target: optimization on continuous domains, evolution of neural network architectures


EA applications
- Prediction
- Scheduling (automated timetable generation, task scheduling)
- Image processing (image filter design)
- Neural network design
- Structure identification (bioinformatics)
- Evolutionary design and art


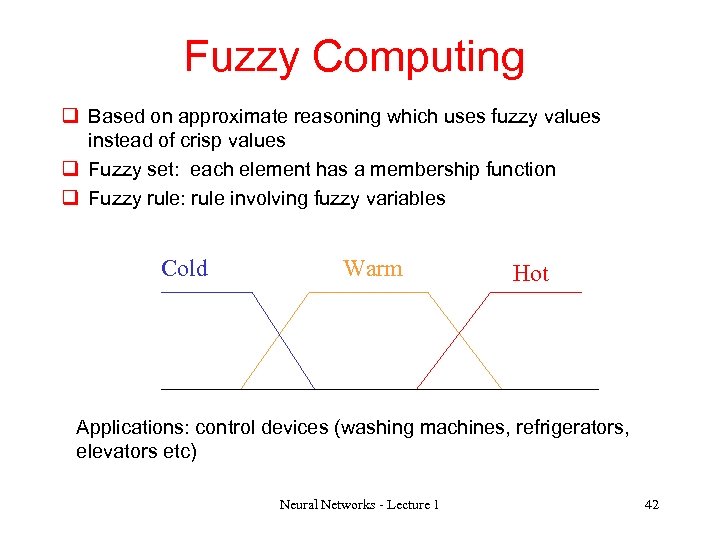

Fuzzy Computing
- Based on approximate reasoning, which uses fuzzy values instead of crisp values
- Fuzzy set: each element has a degree of membership, given by a membership function
- Fuzzy rule: rule involving fuzzy variables
- Example fuzzy values: Cold, Warm, Hot
- Applications: control devices (washing machines, refrigerators, elevators, etc.)
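
To make the Cold / Warm / Hot example concrete, the sketch below defines one membership function per fuzzy value over a temperature in degrees Celsius. The piecewise-linear shapes and every breakpoint (5, 10, 15, 20, 25, 30, 35) are hypothetical; the slide only names the three values.

```python
def triangular(x: float, a: float, b: float, c: float) -> float:
    """Membership that rises from a to a peak at b and falls back to 0 at c."""
    if x <= a or x >= c:
        return 0.0
    if x <= b:
        return (x - a) / (b - a)
    return (c - x) / (c - b)


def cold(t: float) -> float:
    """Fully cold up to 5 degrees, not cold at all from 15 degrees."""
    if t <= 5.0:
        return 1.0
    if t >= 15.0:
        return 0.0
    return (15.0 - t) / 10.0


def warm(t: float) -> float:
    return triangular(t, 10.0, 20.0, 30.0)


def hot(t: float) -> float:
    """Not hot up to 25 degrees, fully hot from 35 degrees."""
    if t <= 25.0:
        return 0.0
    if t >= 35.0:
        return 1.0
    return (t - 25.0) / 10.0


if __name__ == "__main__":
    for t in (0, 12, 20, 28, 40):
        print(t, cold(t), warm(t), hot(t))
```

Unlike a crisp threshold, a temperature such as 12 degrees belongs partly to Cold and partly to Warm; a fuzzy rule would then weight its conclusion by these degrees.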


Structure of the course
- ANN design
- One-layer perceptrons. Linearly separable classification problems. Widrow-Hoff and Delta learning rules.
- Multilayer perceptrons. Backpropagation learning algorithm and variants.
- Radial basis function networks
- Competitive learning. Kohonen maps. Adaptive resonance theory
- Correlative learning. Principal component analysis
- Recurrent ANN (Hopfield, Elman, Cellular NN)
- Evolutionary design of NN


Structure of the lab
- Aim: simulating basic ANNs
- Tools: MatLab (SciLab, Weka, JavaSNNS)

Lab assignments
1. Simple perceptron (e.g. character recognition)
2. Backpropagation network (e.g. nonlinear mapping, classification)
3. RBF network (e.g. nonlinear mapping, classification)
4. Unsupervised learning (e.g. clustering)


Grading
- Lab assignments: 40% (1 point / assignment)
- Final test: 60%
- Optional project: 60%-80%
- Any combination is allowed!


Course materials
http://web.info.uvt.ro/~dzaharie/
- C. Bishop, Neural Networks for Pattern Recognition, Clarendon Press, 1995.
- L. Chua, T. Roska, Cellular Neural Networks and Visual Computing, Cambridge University Press, 2004.
- A. Engelbrecht, Computational Intelligence: An Introduction, 2007.
- M. Hagan, H. Demuth, M. Beale, Neural Network Design, PWS Publishing, 1996.
- J. Hertz, A. Krogh, R. Palmer, Introduction to the Theory of Neural Computation, Addison-Wesley P.C., 1991.
- B. D. Ripley, Pattern Recognition and Neural Networks, Cambridge University Press, 1996.
