83a01b955d6e4c6562345653de25858d.ppt

- Number of slides: 89

Information Extraction. Peng Bo, Dec 2, 2010

Topics of today
- IE: Information Extraction
- Techniques
  - Wrapper Induction
  - Sliding Windows
  - From FST to HMM

What is IE?

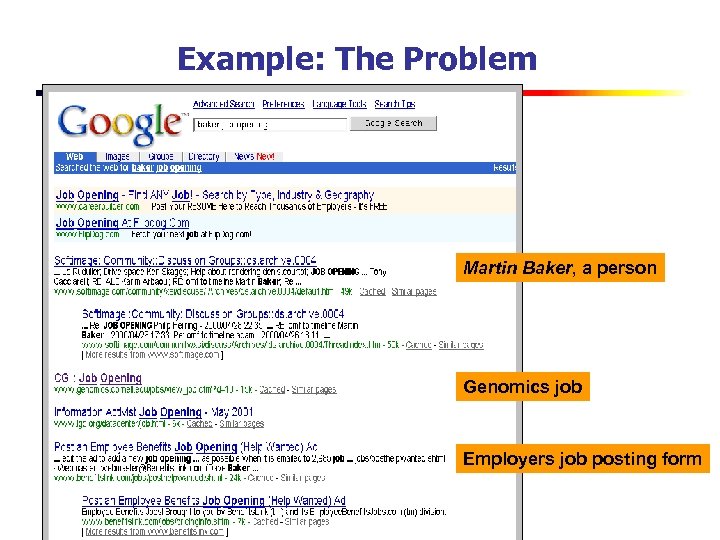

Example: The Problem. [Figure labels: Martin Baker, a person; Genomics job; Employers job posting form]

Example: A Solution

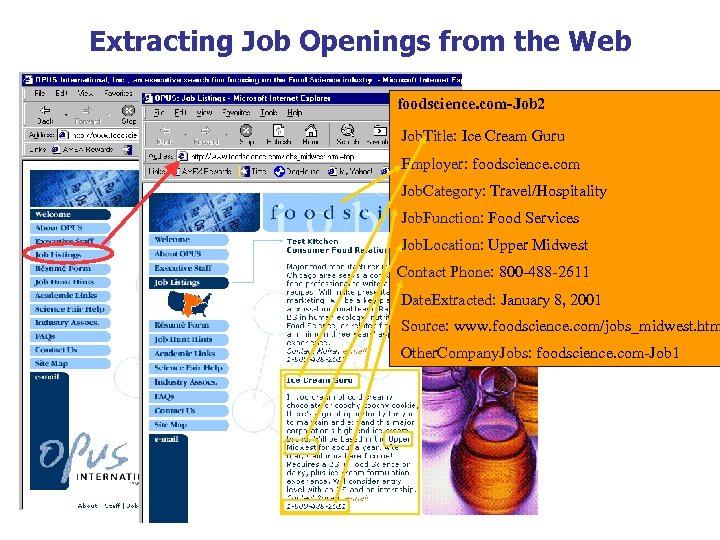

Extracting Job Openings from the Web
- foodscience.com-Job2
- JobTitle: Ice Cream Guru
- Employer: foodscience.com
- JobCategory: Travel/Hospitality
- JobFunction: Food Services
- JobLocation: Upper Midwest
- Contact Phone: 800-488-2611
- DateExtracted: January 8, 2001
- Source: www.foodscience.com/jobs_midwest.htm
- OtherCompanyJobs: foodscience.com-Job1

Job Openings: Category = Food Services, Keyword = Baker, Location = Continental U.S.

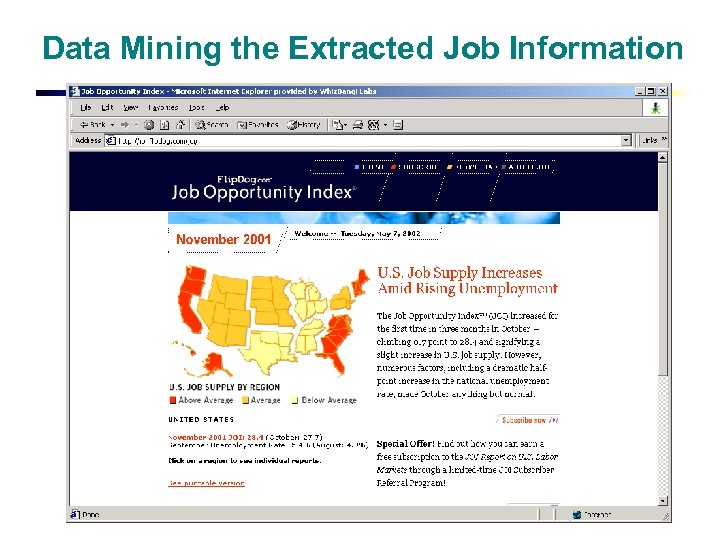

Data Mining the Extracted Job Information

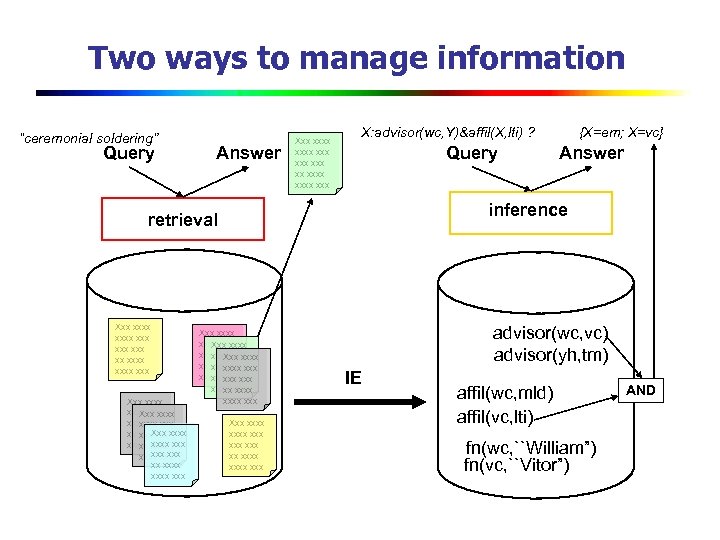

Two ways to manage information
- Retrieval: a query (e.g. “ceremonial soldering”) is matched against raw text to produce an answer
- Inference: IE turns text into facts, and a query is answered by inference over them
  - Facts: advisor(wc, vc), advisor(yh, tm), affil(wc, mld), affil(vc, lti), fn(wc, “William”), fn(vc, “Vitor”)
  - Query: X: advisor(wc, Y) & affil(X, lti)?
  - Answer: {X=em; X=vc}

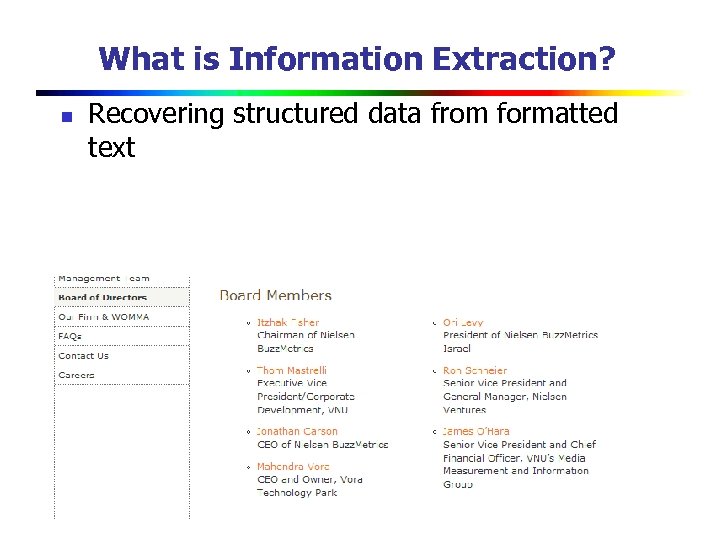

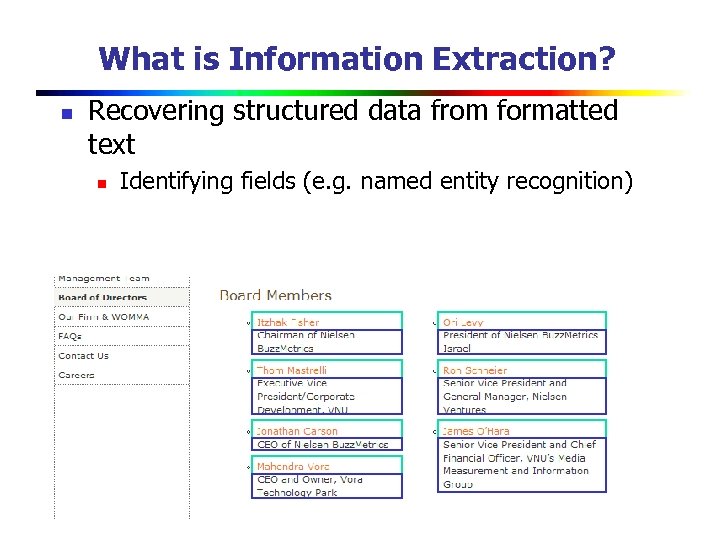

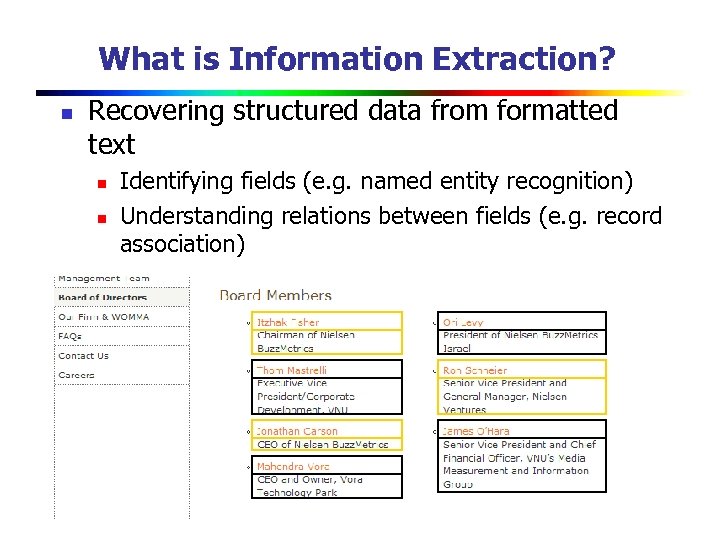

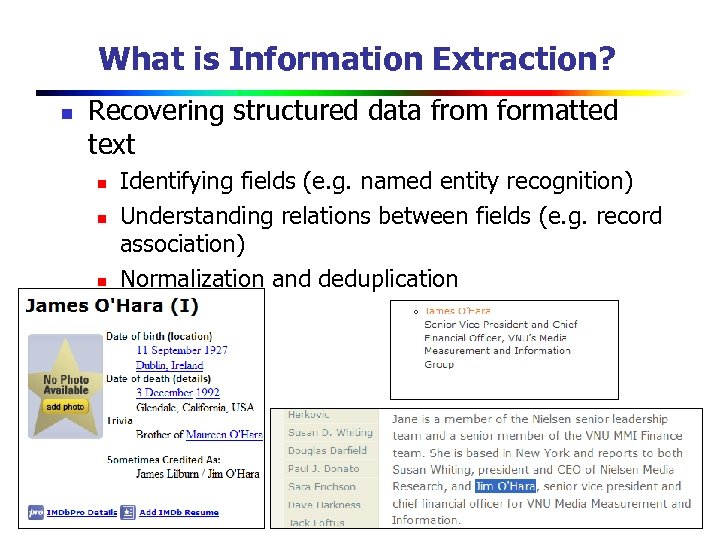

What is Information Extraction?
- Recovering structured data from formatted text
  - Identifying fields (e.g. named entity recognition)
  - Understanding relations between fields (e.g. record association)
  - Normalization and deduplication
- Today, focus mostly on field identification & a little on record association

Applications

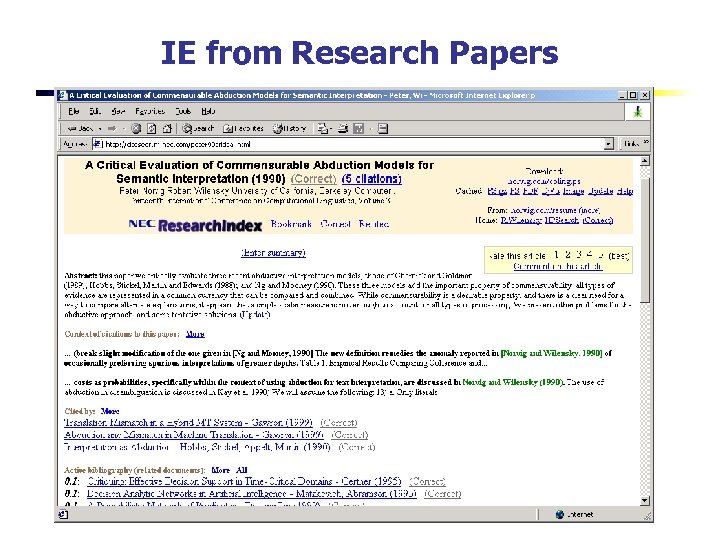

IE from Research Papers

Chinese Documents regarding Weather
- Chinese Academy of Sciences
- 200k+ documents, several millennia old
  - Qing Dynasty Archives
  - memos
  - newspaper articles
  - diaries

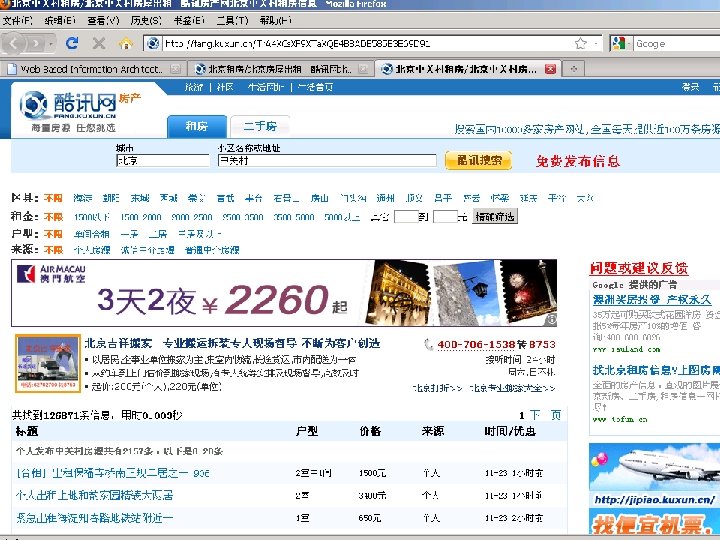

Wrapper Induction

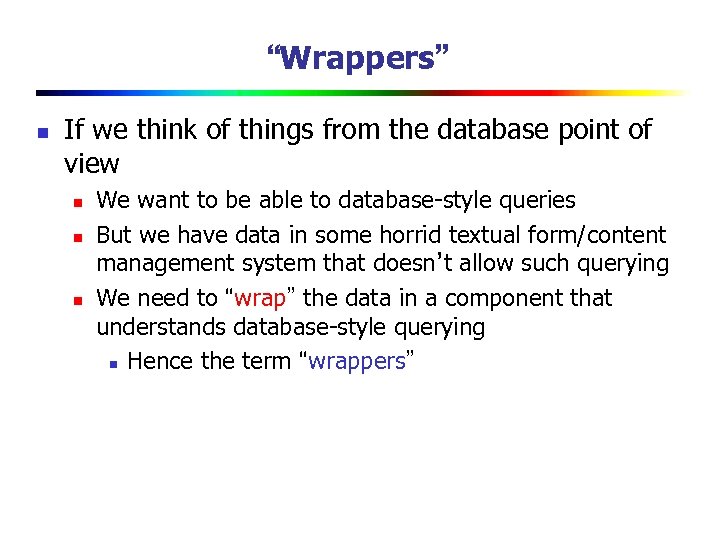

“Wrappers”
- If we think of things from the database point of view
  - We want to be able to run database-style queries
  - But we have data in some horrid textual form/content management system that doesn’t allow such querying
  - We need to “wrap” the data in a component that understands database-style querying
- Hence the term “wrappers”

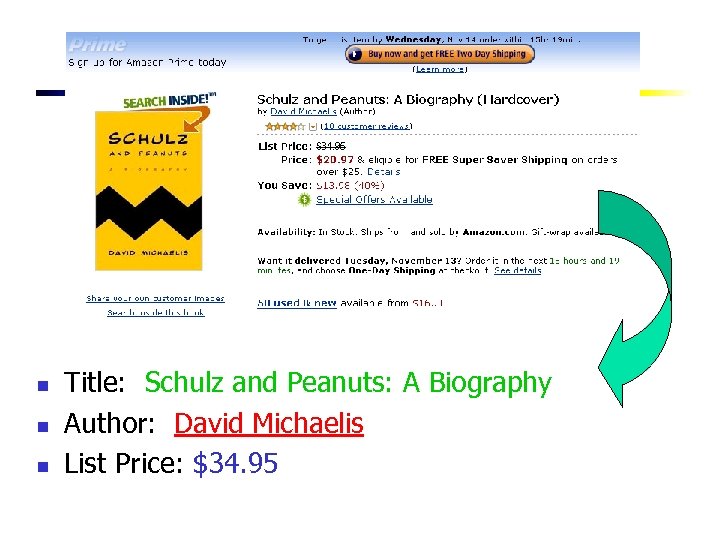

- Title: Schulz and Peanuts: A Biography
- Author: David Michaelis
- List Price: $34.95

Wrappers: Simple Extraction Patterns
- Specify an item to extract for a slot using a regular expression pattern.
  - Price pattern: `\b\$\d+(\.\d{2})?\b`
- May require a preceding (pre-filler) pattern and a succeeding (post-filler) pattern to identify the start and end of the filler.
  - Amazon list price:
    - Pre-filler pattern: `<b>List Price: </b> <span class=listprice> `
    - Filler pattern: `\b\$\d+(\.\d{2})?\b`
    - Post-filler pattern: `</span>`
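A minimal sketch of the pre-filler / filler / post-filler idea with Python's `re` module. The sample HTML string and the function name `extract_list_price` are made up for illustration. The patterns follow the slide with two assumed changes: whitespace between tags is matched loosely, and the leading `\b` of the filler is dropped because `$` is not a word character, so a word boundary cannot occur between `>` and `$`.

```python
import re
from typing import Optional

# Pre-filler, filler and post-filler patterns (adapted from the slide; see lead-in).
PRE_FILLER = r"<b>List Price:\s*</b>\s*<span class=listprice>\s*"
FILLER = r"\$\d+(?:\.\d{2})?"
POST_FILLER = r"\s*</span>"

# The filler is the only capturing group, so group(1) is the slot value.
PRICE_RULE = re.compile(PRE_FILLER + "(" + FILLER + ")" + POST_FILLER)


def extract_list_price(html: str) -> Optional[str]:
    """Return the first list price found in the page, or None if the rule does not fire."""
    match = PRICE_RULE.search(html)
    return match.group(1) if match else None


# Hypothetical page fragment in the shape the slide describes.
sample_page = "<b>List Price: </b> <span class=listprice> $34.95</span>"
print(extract_list_price(sample_page))
```

The pre- and post-filler do the real work here: the bare filler pattern would also match any other dollar amount on the page.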

Wrapper toolkits
- Wrapper toolkits: specialized programming environments for writing & debugging wrappers by hand
- Some resources
  - Wrapper Development Tools
  - LAPIS

Wrapper Induction
- Problem description: the task is to learn extraction rules based on labeled examples
  - Hand-writing rules is tedious, error-prone, and time-consuming
  - Learning wrappers is wrapper induction

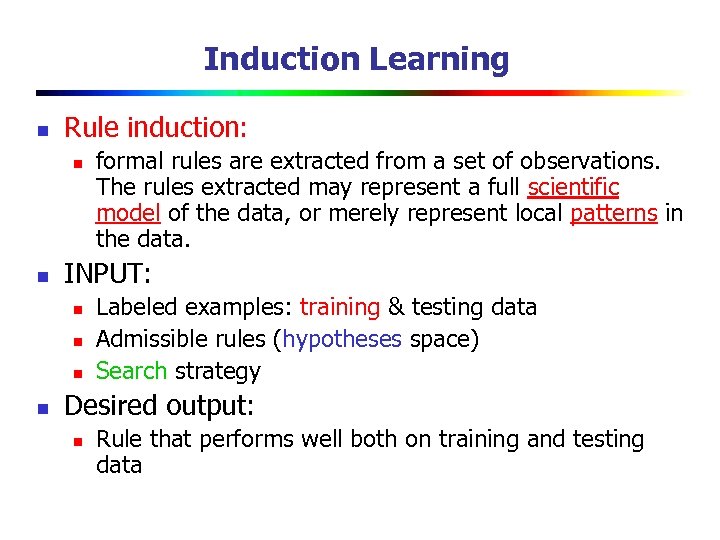

Induction Learning
- Rule induction: formal rules are extracted from a set of observations. The rules extracted may represent a full scientific model of the data, or merely represent local patterns in the data.
- Input:
  - Labeled examples: training & testing data
  - Admissible rules (hypothesis space)
  - Search strategy
- Desired output:
  - Rule that performs well on both training and testing data

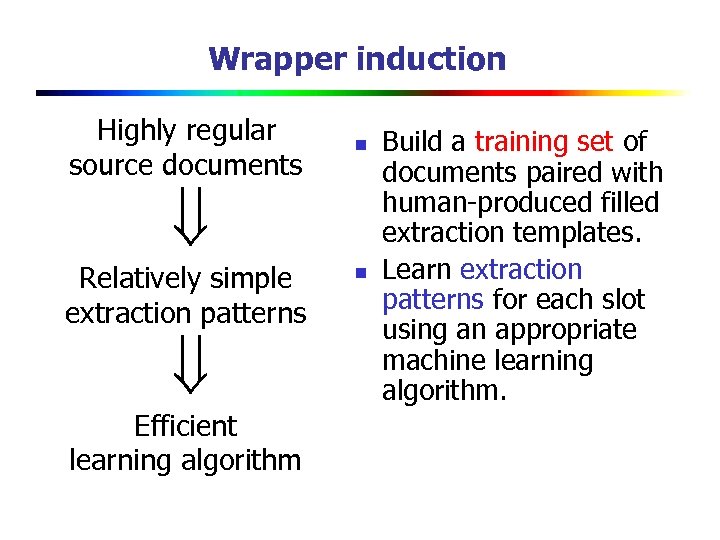

Wrapper induction
- Highly regular source documents
- Relatively simple extraction patterns
- Efficient learning algorithm
  - Build a training set of documents paired with human-produced filled extraction templates.
  - Learn extraction patterns for each slot using an appropriate machine learning algorithm.

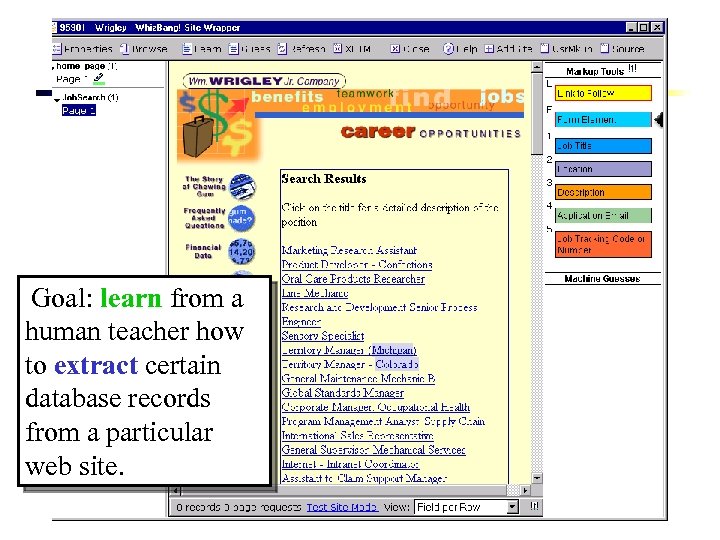

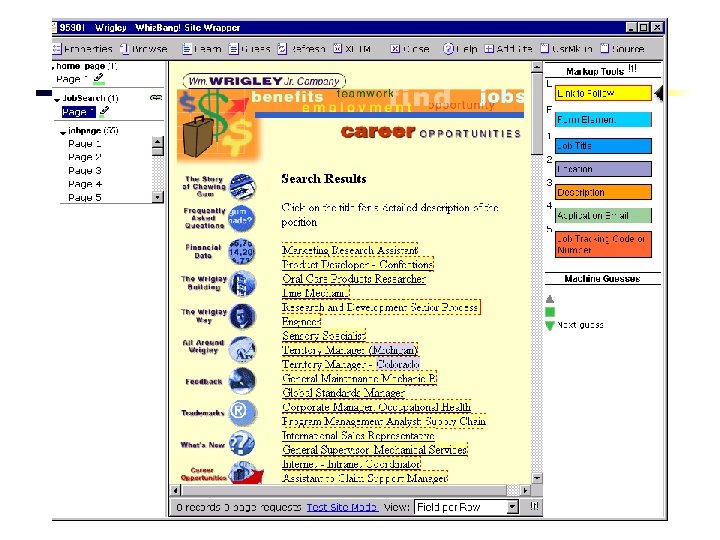

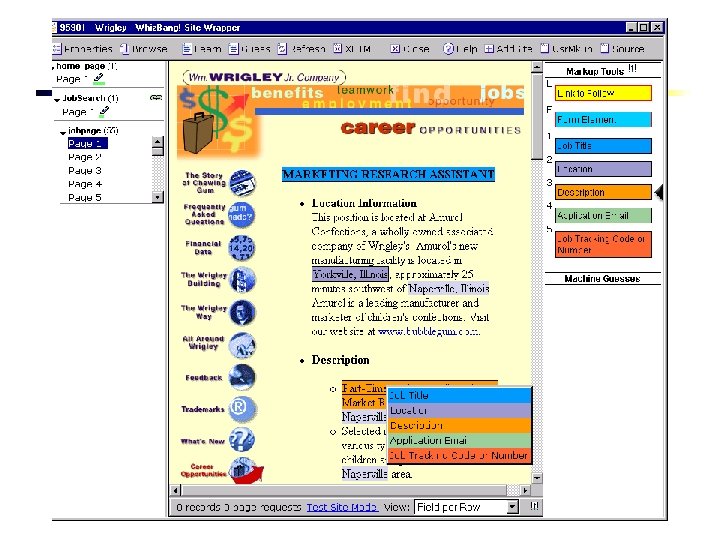

Goal: learn from a human teacher how to extract certain database records from a particular web site.

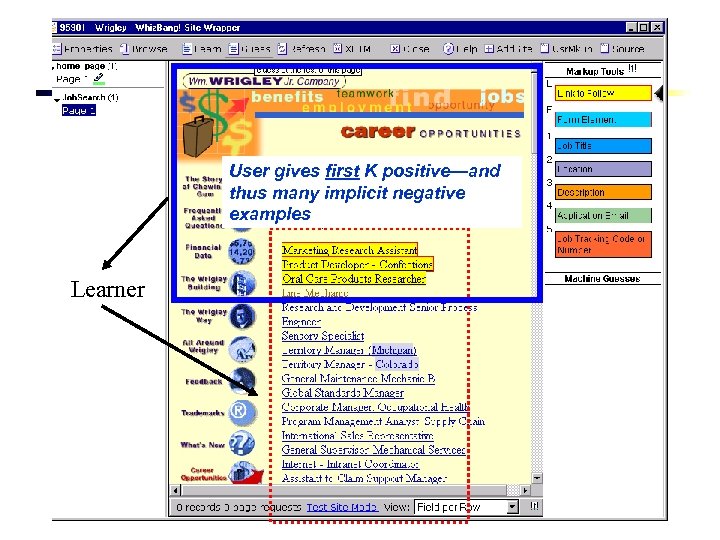

User gives first K positive—and thus many implicit negative examples Learner

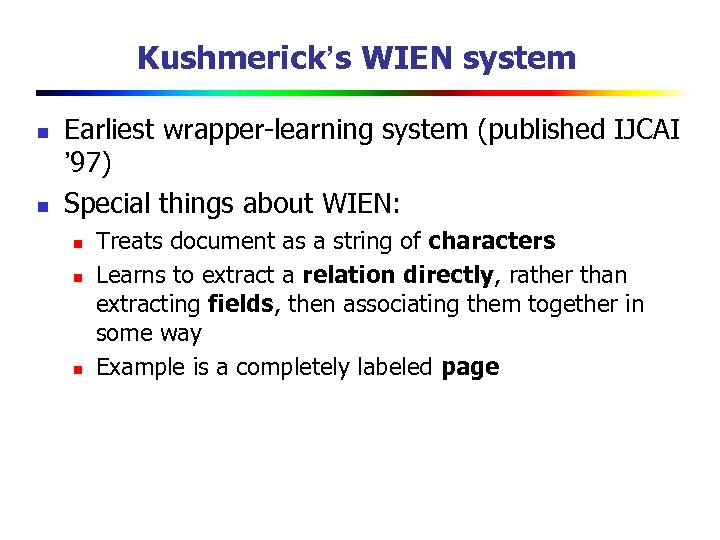

Kushmerick’s WIEN system
- Earliest wrapper-learning system (published IJCAI ’97)
- Special things about WIEN:
  - Treats document as a string of characters
  - Learns to extract a relation directly, rather than extracting fields, then associating them together in some way
  - Example is a completely labeled page

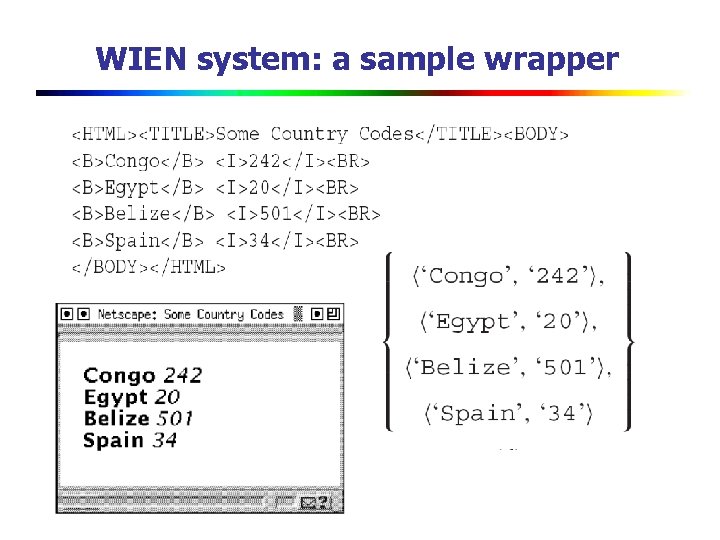

WIEN system: a sample wrapper

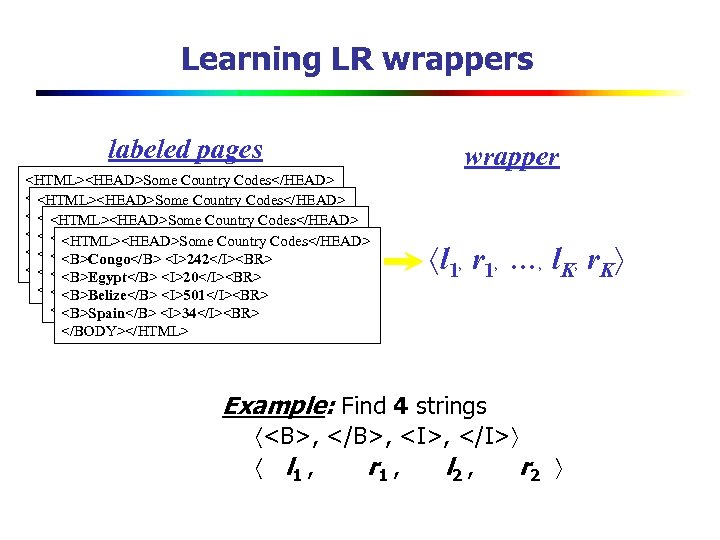

Learning LR wrappers
- Input: labeled pages, for example `<HTML><HEAD>Some Country Codes</HEAD> <B>Congo</B> <I>242</I><BR> <B>Egypt</B> <I>20</I><BR> <B>Belize</B> <I>501</I><BR> <B>Spain</B> <I>34</I><BR> </BODY></HTML>`
- Output: a wrapper ⟨l1, r1, …, lK, rK⟩
- Example: find 4 strings ⟨l1, r1, l2, r2⟩ = ⟨`<B>`, `</B>`, `<I>`, `</I>`⟩

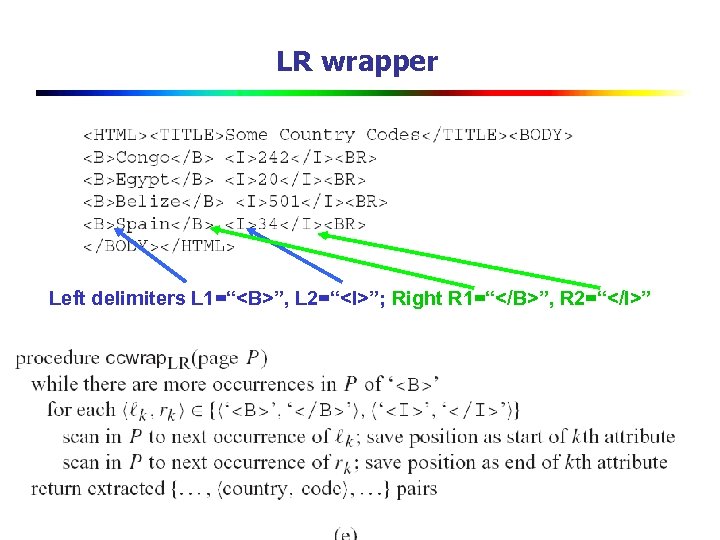

LR wrapper: left delimiters L1 = `<B>`, L2 = `<I>`; right delimiters R1 = `</B>`, R2 = `</I>`

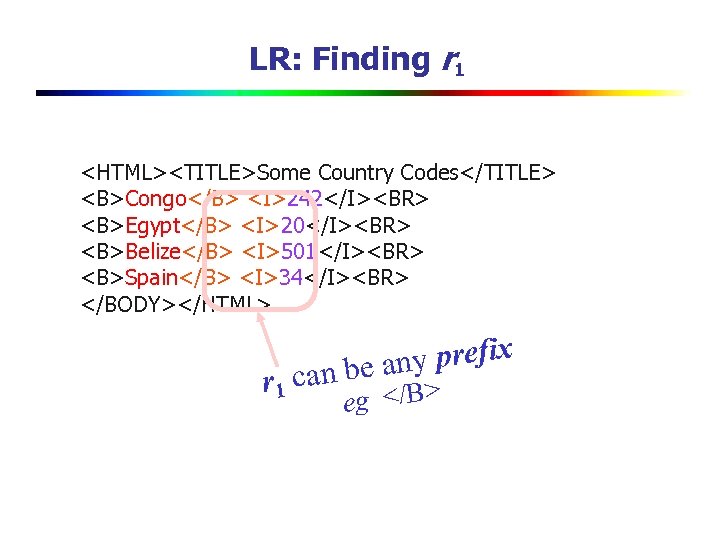

LR: Finding r 1 <HTML><TITLE>Some Country Codes</TITLE> <B>Congo</B> <I>242</I><BR> <B>Egypt</B> <I>20</I><BR> <B>Belize</B> <I>501</I><BR> <B>Spain</B> <I>34</I><BR> </BODY></HTML> r 1 y prefix can be an eg </B>

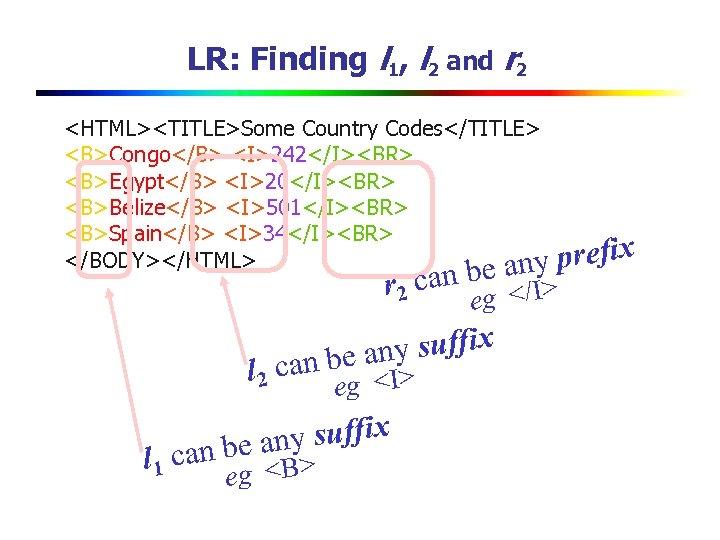

LR: Finding l 1, l 2 and r 2 <HTML><TITLE>Some Country Codes</TITLE> <B>Congo</B> <I>242</I><BR> <B>Egypt</B> <I>20</I><BR> <B>Belize</B> <I>501</I><BR> <B>Spain</B> <I>34</I><BR> </BODY></HTML> any prefix can be </I> r 2 eg ny suffix a can be <I> l 2 eg ny suffix can be a B> l 1 g < e

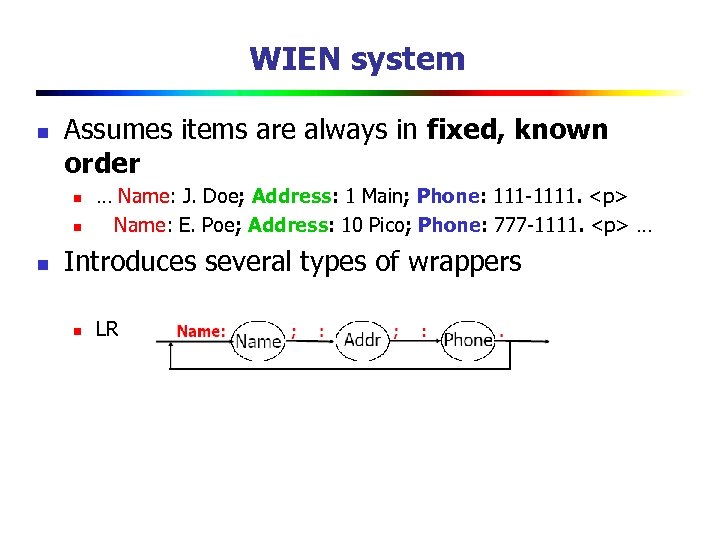

WIEN system
- Assumes items are always in fixed, known order
  - … Name: J. Doe; Address: 1 Main; Phone: 111-1111. `<p>`
  - Name: E. Poe; Address: 10 Pico; Phone: 777-1111. `<p>` …
- Introduces several types of wrappers
  - LR

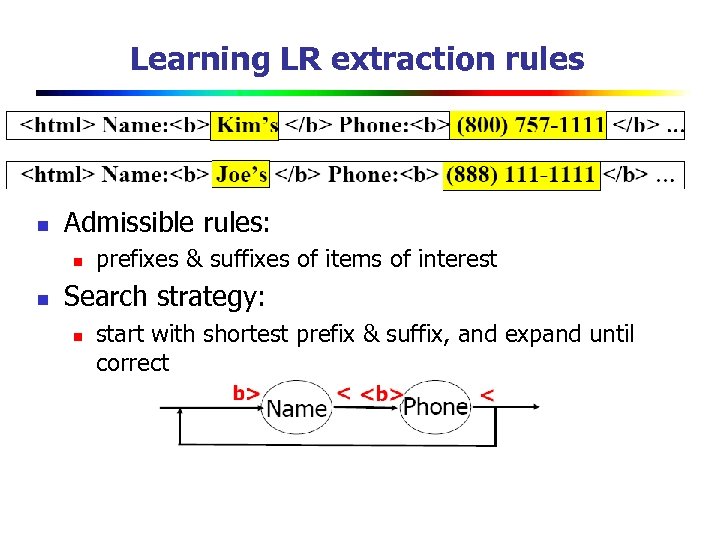

Learning LR extraction rules
- Admissible rules:
  - prefixes & suffixes of items of interest
- Search strategy:
  - start with shortest prefix & suffix, and expand until correct
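The search strategy above can be sketched roughly as follows. This is an illustrative toy, not Kushmerick's actual algorithm: candidates for each left delimiter are suffixes of the text preceding a field, candidates for each right delimiter are prefixes of the text following it, and candidates are tried from short to long until executing the wrapper reproduces the labels. All function names are hypothetical, and for brevity the labels are given as field strings and located by a left-to-right scan (an assumption; WIEN works with fully labeled pages).

```python
from itertools import product


def execute_lr(page, delimiters):
    """Run an LR wrapper [(l1, r1), ..., (lK, rK)] over a page and return the extracted tuples."""
    if not delimiters:
        return []
    rows, pos = [], 0
    while True:
        row = []
        for left, right in delimiters:
            start = page.find(left, pos)
            if start == -1:
                return rows
            start += len(left)
            end = page.find(right, start)
            if end == -1:
                return rows
            row.append(page[start:end])
            pos = end + len(right)
        rows.append(tuple(row))


def common_prefix(strings):
    prefix = strings[0]
    for s in strings[1:]:
        while not s.startswith(prefix):
            prefix = prefix[:-1]
    return prefix


def common_suffix(strings):
    return common_prefix([s[::-1] for s in strings])[::-1]


def locate(page, rows):
    """Map labeled field values to character spans by scanning left to right."""
    spans, pos = [], 0
    for row in rows:
        row_spans = []
        for value in row:
            start = page.index(value, pos)
            pos = start + len(value)
            row_spans.append((start, pos))
        spans.append(row_spans)
    return spans


def learn_lr(page, rows):
    """Return the first (shortest-first) delimiter set that reproduces the labels, or None."""
    spans = locate(page, rows)
    flat = [span for row_spans in spans for span in row_spans]
    num_fields = len(rows[0])
    per_field = []
    for k in range(num_fields):
        before, after = [], []
        for i in range(k, len(flat), num_fields):
            prev_end = flat[i - 1][1] if i > 0 else 0
            next_start = flat[i + 1][0] if i + 1 < len(flat) else len(page)
            before.append(page[prev_end:flat[i][0]])
            after.append(page[flat[i][1]:next_start])
        suffix, prefix = common_suffix(before), common_prefix(after)
        pairs = [(suffix[-n:], prefix[:m])
                 for n in range(1, len(suffix) + 1)
                 for m in range(1, len(prefix) + 1)]
        per_field.append(sorted(pairs, key=lambda lr: len(lr[0]) + len(lr[1])))
    for candidate in product(*per_field):
        if execute_lr(page, candidate) == rows:
            return list(candidate)
    return None


PAGE = "\n".join([
    "<HTML><TITLE>Some Country Codes</TITLE>",
    "<B>Congo</B> <I>242</I><BR>",
    "<B>Egypt</B> <I>20</I><BR>",
    "<B>Belize</B> <I>501</I><BR>",
    "<B>Spain</B> <I>34</I><BR>",
    "</BODY></HTML>",
])
ROWS = [("Congo", "242"), ("Egypt", "20"), ("Belize", "501"), ("Spain", "34")]

learned = learn_lr(PAGE, ROWS)
print(learned)
print(execute_lr(PAGE, learned))
```

Because the search stops at the first consistent candidate, the learned delimiters may be shorter than the hand-written `<B>`, `</B>`, `<I>`, `</I>`; they are only guaranteed to be correct on the labeled page.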

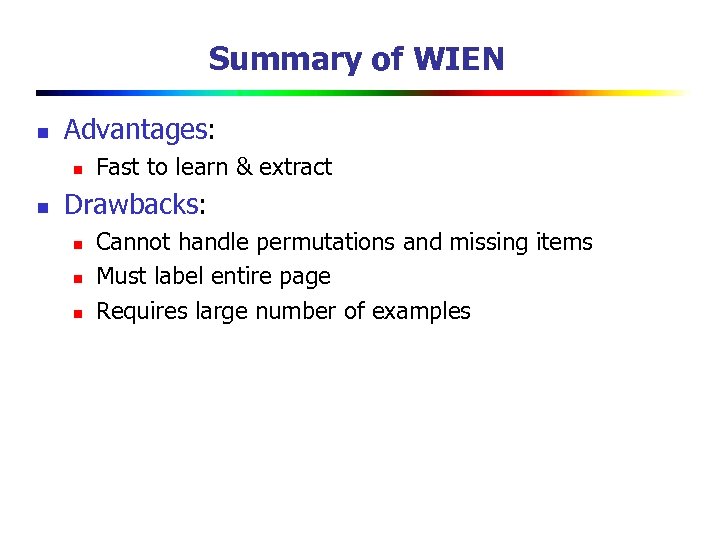

Summary of WIEN
- Advantages:
  - Fast to learn & extract
- Drawbacks:
  - Cannot handle permutations and missing items
  - Must label entire page
  - Requires large number of examples

Sliding Windows

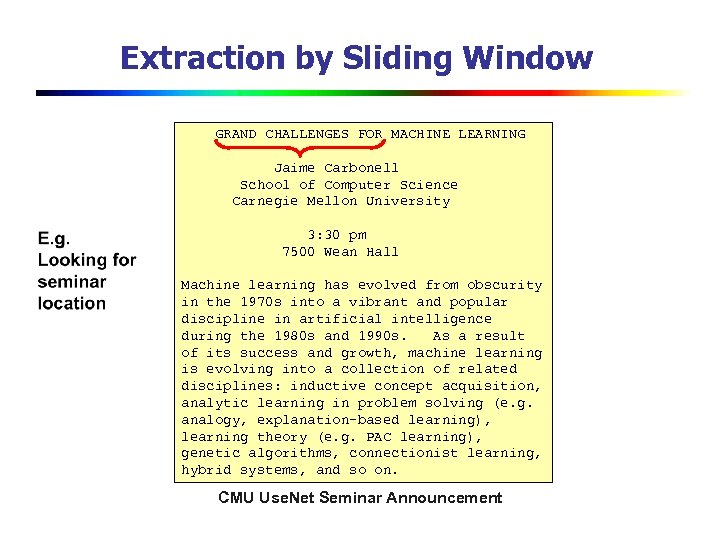

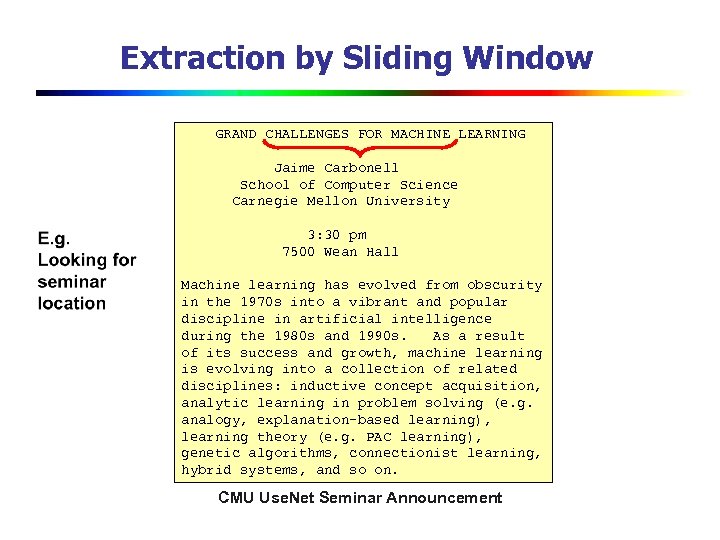

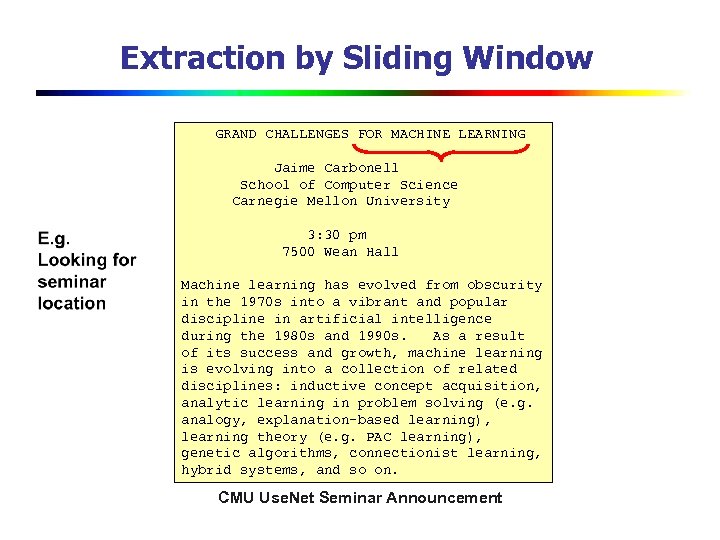

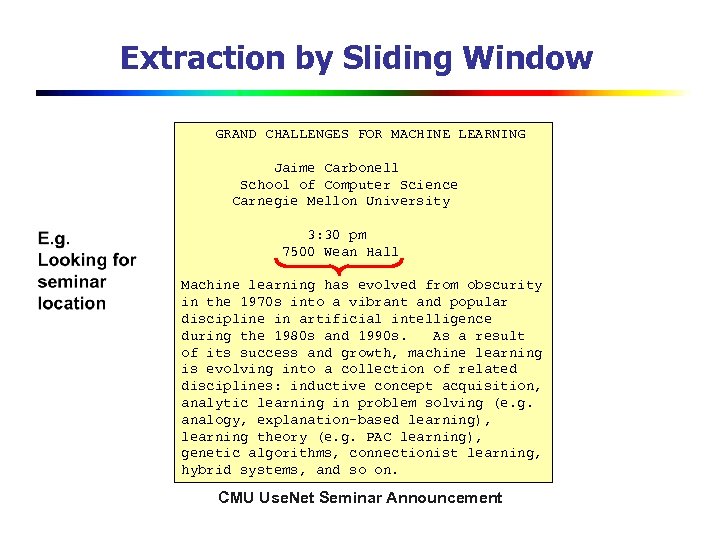

Extraction by Sliding Window

CMU UseNet Seminar Announcement:

GRAND CHALLENGES FOR MACHINE LEARNING. Jaime Carbonell, School of Computer Science, Carnegie Mellon University. 3:30 pm, 7500 Wean Hall.

Machine learning has evolved from obscurity in the 1970s into a vibrant and popular discipline in artificial intelligence during the 1980s and 1990s. As a result of its success and growth, machine learning is evolving into a collection of related disciplines: inductive concept acquisition, analytic learning in problem solving (e.g. analogy, explanation-based learning), learning theory (e.g. PAC learning), genetic algorithms, connectionist learning, hybrid systems, and so on.


![A “Naïve Bayes” Sliding Window Model (Freitag 1997)](https://present5.com/presentation/83a01b955d6e4c6562345653de25858d/image-46.jpg)

A “Naïve Bayes” Sliding Window Model [Freitag 1997]

Example text: … 00 : pm Place : Wean Hall Rm 5409 Speaker : Sebastian Thrun …

Window layout: prefix w(t-m) … w(t-1), contents w(t) … w(t+n), suffix w(t+n+1) … w(t+n+m)
- Estimate Pr(LOCATION|window) using Bayes rule
- Try all “reasonable” windows (vary length, position)
- Assume independence for length, prefix words, suffix words, content words
- Estimate from data quantities like: Pr(“Place” in prefix|LOCATION)
- If P(“Wean Hall Rm 5409” = LOCATION) is above some threshold, extract it.

![A “Naïve Bayes” Sliding Window Model (Freitag 1997)](https://present5.com/presentation/83a01b955d6e4c6562345653de25858d/image-47.jpg)

A “Naïve Bayes” Sliding Window Model [Freitag 1997]

Example text: … 00 : pm Place : Wean Hall Rm 5409 Speaker : Sebastian Thrun …

Window layout: prefix w(t-m) … w(t-1), contents w(t) … w(t+n), suffix w(t+n+1) … w(t+n+m)
1. Create dataset of examples like these:
   - positive: `+(prefix00, …, prefixColon, contentWean, contentHall, …, suffixSpeaker, …)`
   - negative: `-(prefixColon, …, prefixWean, contentHall, …, contentSpeaker, suffixColon, …)`
   - …
2. Train a NaiveBayes classifier
3. If Pr(class=+|prefix, contents, suffix) > threshold, predict the content window is a location.

To think about: what if the extracted entities aren’t consistent, e.g. if the location overlaps with the speaker?
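The three steps can be sketched in a few lines of plain Python. This is a toy under stated assumptions, not Freitag's system: the class `NaiveBayesWindow`, the two-token context, the four-token maximum window and the two training announcements are all invented for illustration. It uses add-one smoothing and leaves out the window-length feature the slide mentions.

```python
import math
from collections import Counter


def window_features(tokens, start, end, context=2):
    """Prefix/content/suffix features for the candidate window tokens[start:end]."""
    prefix = tokens[max(0, start - context):start]
    suffix = tokens[end:end + context]
    return (["prefix." + t for t in prefix]
            + ["content." + t for t in tokens[start:end]]
            + ["suffix." + t for t in suffix])


def all_windows(tokens, max_len=4):
    """Every candidate window of 1..max_len tokens (vary length and position)."""
    for start in range(len(tokens)):
        for end in range(start + 1, min(start + max_len, len(tokens)) + 1):
            yield start, end


class NaiveBayesWindow:
    def __init__(self):
        self.class_counts = Counter()
        self.feature_counts = {True: Counter(), False: Counter()}
        self.vocabulary = set()

    def train(self, tokens, gold_span):
        """The gold window is the positive example; every other window is a negative.
        The gold span must be at most max_len tokens long, or no positive is seen."""
        for span in all_windows(tokens):
            label = span == gold_span
            feats = window_features(tokens, *span)
            self.class_counts[label] += 1
            self.feature_counts[label].update(feats)
            self.vocabulary.update(feats)

    def prob_positive(self, feats):
        """Pr(class=+ | features) via Bayes rule with add-one smoothing."""
        total = sum(self.class_counts.values())
        log_score = {}
        for label in (True, False):
            counts = self.feature_counts[label]
            denom = sum(counts.values()) + len(self.vocabulary)
            score = math.log(self.class_counts[label] / total)
            for feat in feats:
                score += math.log((counts[feat] + 1) / denom)
            log_score[label] = score
        top = max(log_score.values())
        pos = math.exp(log_score[True] - top)
        neg = math.exp(log_score[False] - top)
        return pos / (pos + neg)


# Two made-up training announcements; the location is tokens 6..9 in both.
doc1 = "Time : 3:30 pm Place : Wean Hall Rm 5409 Speaker : Sebastian Thrun".split()
doc2 = "Time : 2:00 pm Place : Doherty Hall Rm 1112 Speaker : Jaime Carbonell".split()
model = NaiveBayesWindow()
model.train(doc1, (6, 10))
model.train(doc2, (6, 10))

# Score every window of an unseen announcement and report the best one.
test_doc = "Time : 1:00 pm Place : Baker Hall Rm 3301 Speaker : Tom Mitchell".split()
best = max(all_windows(test_doc),
           key=lambda span: model.prob_positive(window_features(test_doc, *span)))
print(test_doc[best[0]:best[1]], model.prob_positive(window_features(test_doc, *best)))
```

With one positive window per document and dozens of negatives, the class prior pulls the posterior down, so in practice the threshold would be tuned on held-out data rather than fixed at 0.5.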

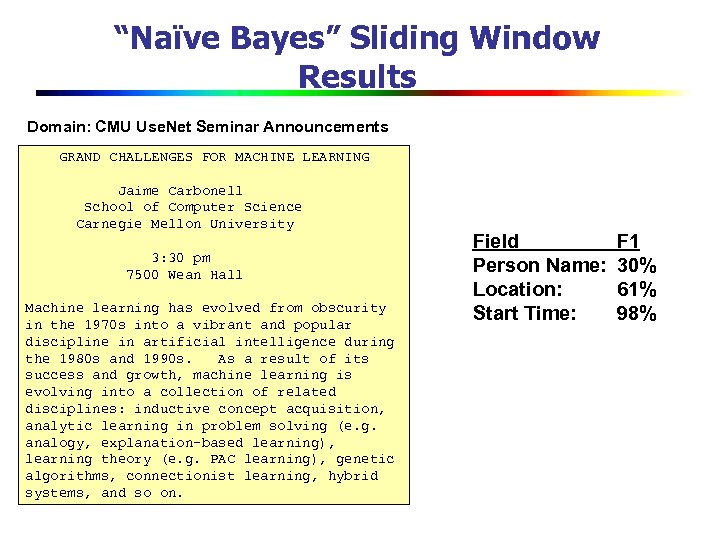

“Naïve Bayes” Sliding Window Results

Domain: CMU UseNet Seminar Announcements (the “GRAND CHALLENGES FOR MACHINE LEARNING” announcement shown earlier is a sample).

F1 by field:
- Person Name: 30%
- Location: 61%
- Start Time: 98%

Finite State Transducers

Finite State Transducers for IE
- Basic method for extracting relevant information
- IE systems generally use a collection of specialized FSTs
  - Company Name detection
  - Person Name detection
  - Relationship detection

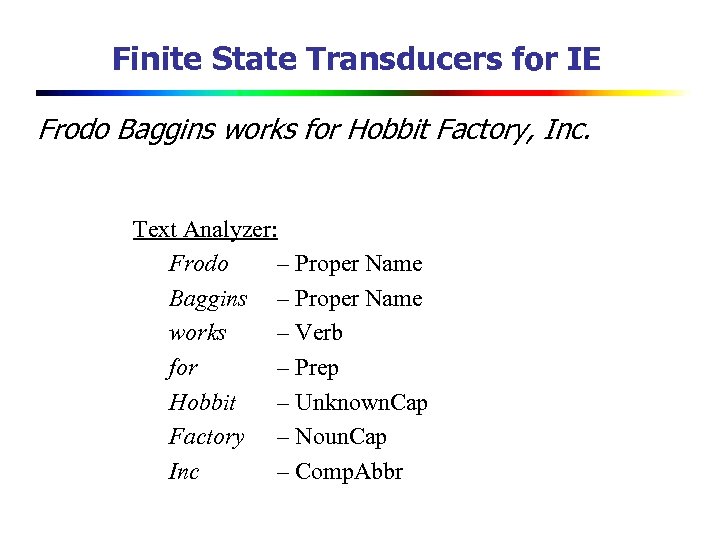

Finite State Transducers for IE

Frodo Baggins works for Hobbit Factory, Inc.

Text Analyzer:
- Frodo: ProperName
- Baggins: ProperName
- works: Verb
- for: Prep
- Hobbit: UnknownCap
- Factory: NounCap
- Inc: CompAbbr

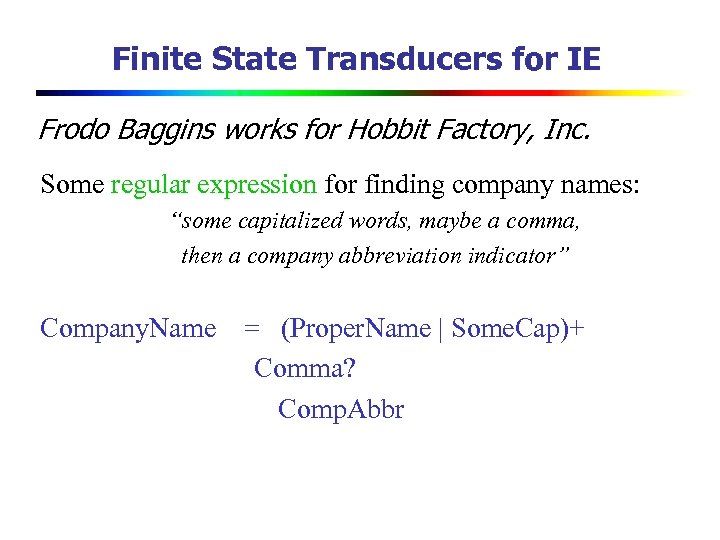

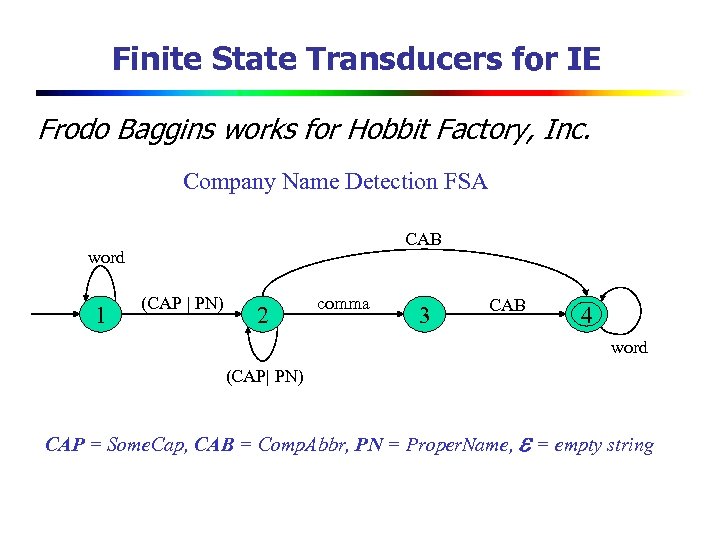

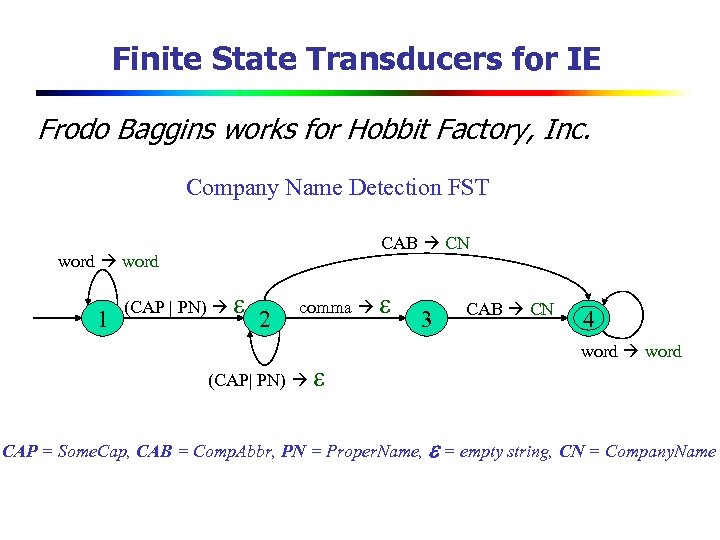

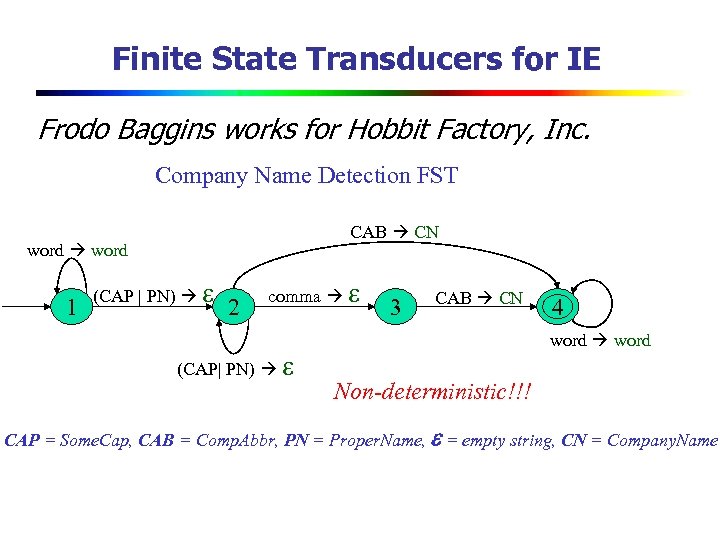

Finite State Transducers for IE

Frodo Baggins works for Hobbit Factory, Inc.

Some regular expression for finding company names: “some capitalized words, maybe a comma, then a company abbreviation indicator”

CompanyName = (ProperName | SomeCap)+ Comma? CompAbbr
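One way to try the CompanyName rule is to map each tag to a single character and run an ordinary regular expression over the resulting string, which Python's `re` module compiles to a matcher much like the FSA on the next slides. A rough sketch, with assumptions: any tag ending in `Cap` counts as SomeCap, the text analyzer is assumed to emit a `Comma` tag, and the one-letter codes and function names are invented here.

```python
import re


def tag_symbol(tag):
    """Encode a tag as one character so the rule becomes a plain regex."""
    if tag == "ProperName":
        return "P"
    if tag.endswith("Cap"):      # UnknownCap, NounCap, ... treated as SomeCap (assumption)
        return "C"
    if tag == "Comma":
        return ","
    if tag == "CompAbbr":
        return "A"
    return "o"                   # any other word


# CompanyName = (ProperName | SomeCap)+ Comma? CompAbbr
COMPANY_NAME = re.compile(r"[PC]+,?A")


def find_company_names(tagged_tokens):
    """Return each matched company name as a list of words."""
    symbols = "".join(tag_symbol(tag) for _, tag in tagged_tokens)
    return [[word for word, _ in tagged_tokens[m.start():m.end()]]
            for m in COMPANY_NAME.finditer(symbols)]


tagged = [
    ("Frodo", "ProperName"), ("Baggins", "ProperName"), ("works", "Verb"),
    ("for", "Prep"), ("Hobbit", "UnknownCap"), ("Factory", "NounCap"),
    (",", "Comma"), ("Inc", "CompAbbr"),
]
print(find_company_names(tagged))  # [['Hobbit', 'Factory', ',', 'Inc']]
```

“Frodo Baggins” is not reported because no company abbreviation follows it, so the rule fails there and the matcher moves on.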

Finite State Transducers for IE: Company Name Detection FSA

Frodo Baggins works for Hobbit Factory, Inc.

[State diagram: states 1 to 4, arcs labeled word, (CAP | PN), comma, CAB and ε]

CAP = SomeCap, CAB = CompAbbr, PN = ProperName, ε = empty string

Finite State Transducers for IE: Company Name Detection FST

[Same state diagram, with the CAB arcs also emitting CN] Non-deterministic!!!

CAP = SomeCap, CAB = CompAbbr, PN = ProperName, ε = empty string, CN = CompanyName

Finite State Transducers for IE
- Several FSTs or a more complex FST can be used to find one type of information (e.g. company names)
- FSTs are often compiled from regular expressions
- Probabilistic (weighted) FSTs

Finite State Transducers for IE

FSTs mean different things to different researchers in IE.
- Based on lexical items (words)
- Based on statistical language models
- Based on deep syntactic/semantic analysis

Example: FASTUS
- Finite State Automaton Text Understanding System (SRI International)
- Cascading FSTs
  - Recognize names
  - Recognize noun groups, verb groups etc.
  - Complex noun/verb groups are constructed
  - Identify patterns of interest
  - Identify and merge event structures

Hidden Markov Models

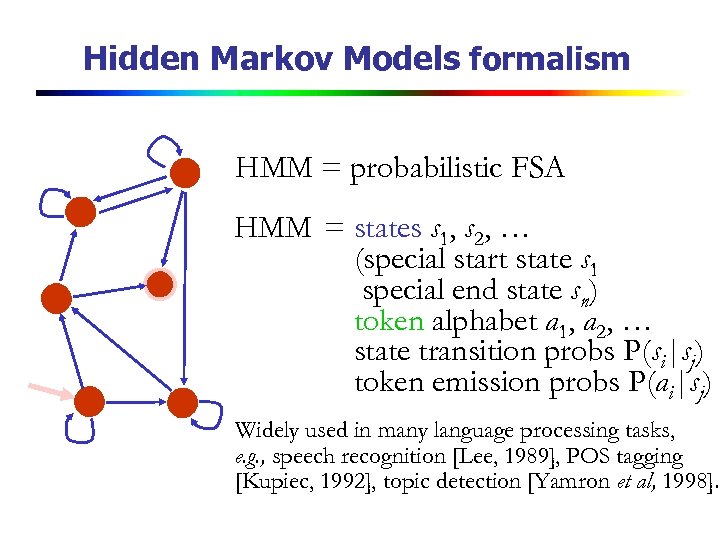

Hidden Markov Models formalism

HMM = probabilistic FSA

HMM =
- states s1, s2, … (special start state s1, special end state sn)
- token alphabet a1, a2, …
- state transition probs P(si|sj)
- token emission probs P(ai|sj)

Widely used in many language processing tasks, e.g., speech recognition [Lee, 1989], POS tagging [Kupiec, 1992], topic detection [Yamron et al, 1998].

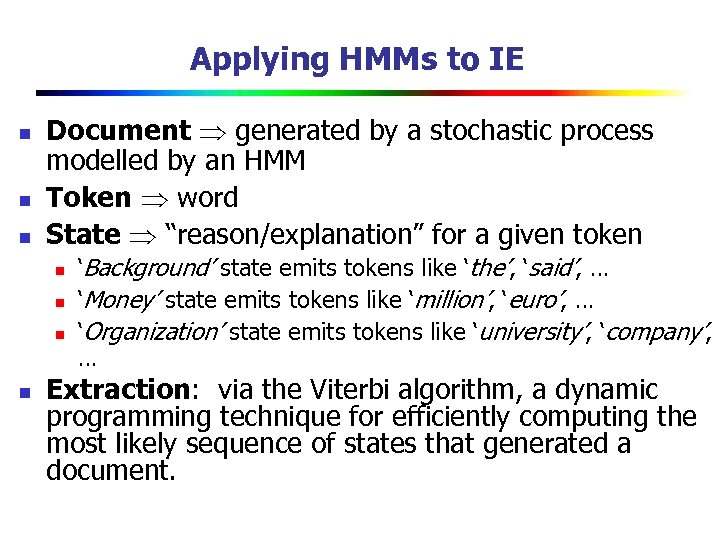

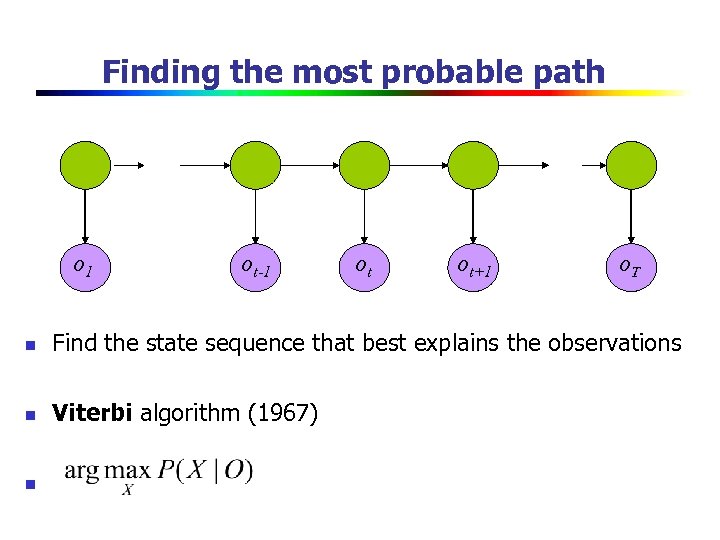

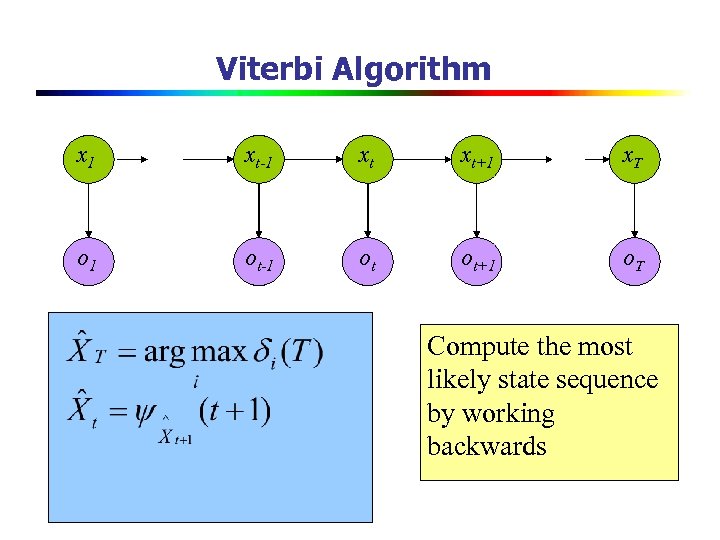

Applying HMMs to IE
- Document: generated by a stochastic process modelled by an HMM
- Token: word
- State: “reason/explanation” for a given token
  - ‘Background’ state emits tokens like ‘the’, ‘said’, …
  - ‘Money’ state emits tokens like ‘million’, ‘euro’, …
  - ‘Organization’ state emits tokens like ‘university’, ‘company’, …
- Extraction: via the Viterbi algorithm, a dynamic programming technique for efficiently computing the most likely sequence of states that generated a document.

![HMM for research papers: transitions (Seymore et al., 99)](https://present5.com/presentation/83a01b955d6e4c6562345653de25858d/image-62.jpg)

HMM for research papers: transitions [Seymore et al., 99]

![HMM for research papers: emissions (Seymore et al., 99)](https://present5.com/presentation/83a01b955d6e4c6562345653de25858d/image-63.jpg)

HMM for research papers: emissions [Seymore et al., 99]

Sample emissions, grouped as on the slide:
- ICML 1997…, submission to…, to appear in…
- carnegie mellon university…, university of california, dartmouth college
- stochastic optimization…, reinforcement learning…, model building mobile robot…
- supported in part…, copyright…

States shown: author, title, institution, note

Trained on 2 million words of BibTeX data from the Web

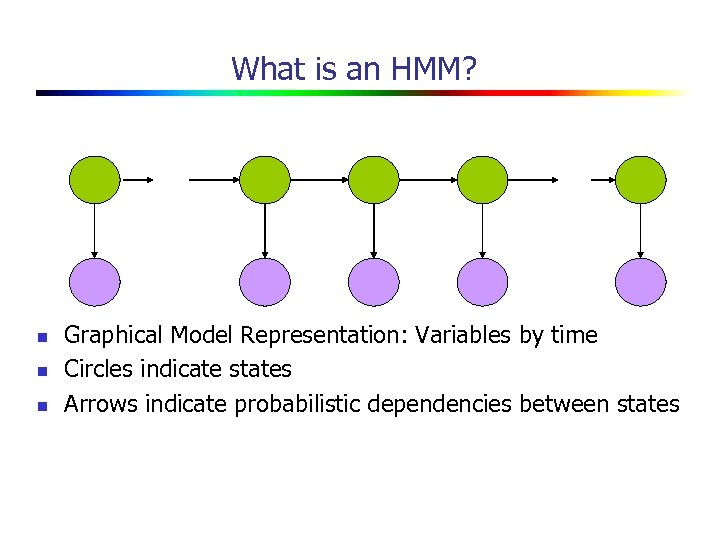

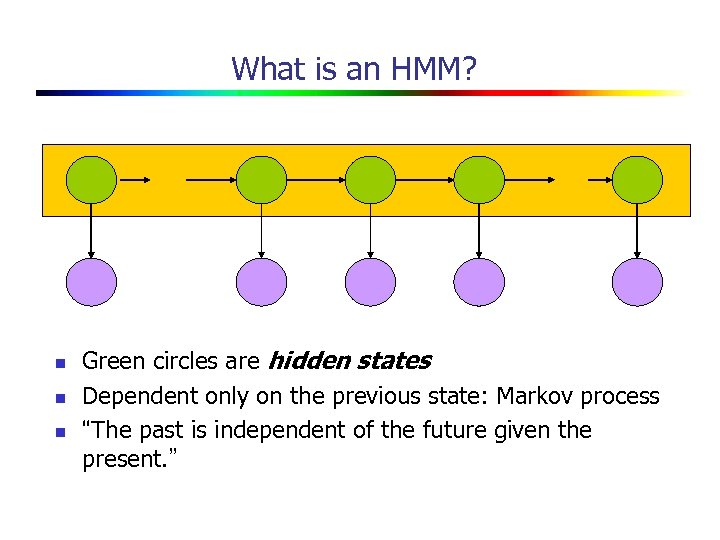

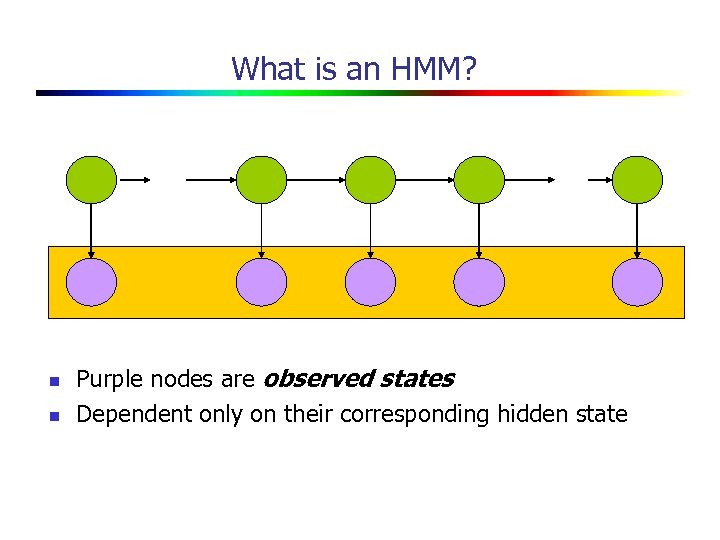

What is an HMM?
- Graphical model representation: variables by time
- Circles indicate states
- Arrows indicate probabilistic dependencies between states
- Green circles are hidden states
  - Dependent only on the previous state: Markov process
  - “The past is independent of the future given the present.”
- Purple nodes are observed states
  - Dependent only on their corresponding hidden state

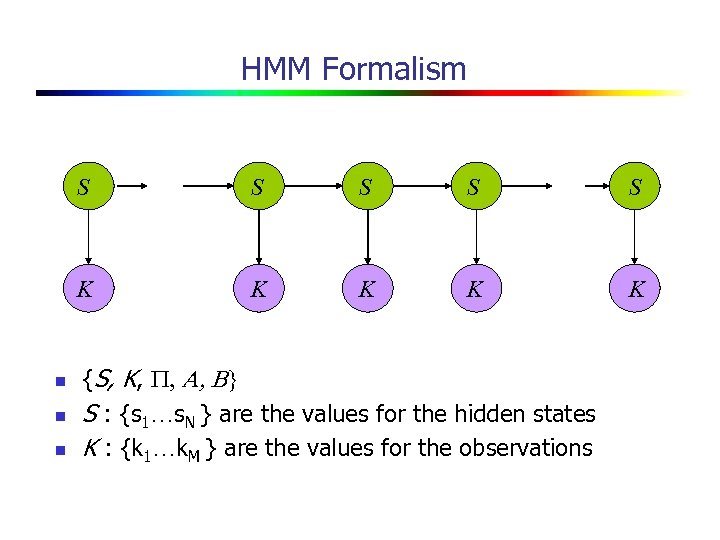

HMM Formalism
- {S, K, P, A, B}
- S : {s1…sN} are the values for the hidden states
- K : {k1…kM} are the values for the observations

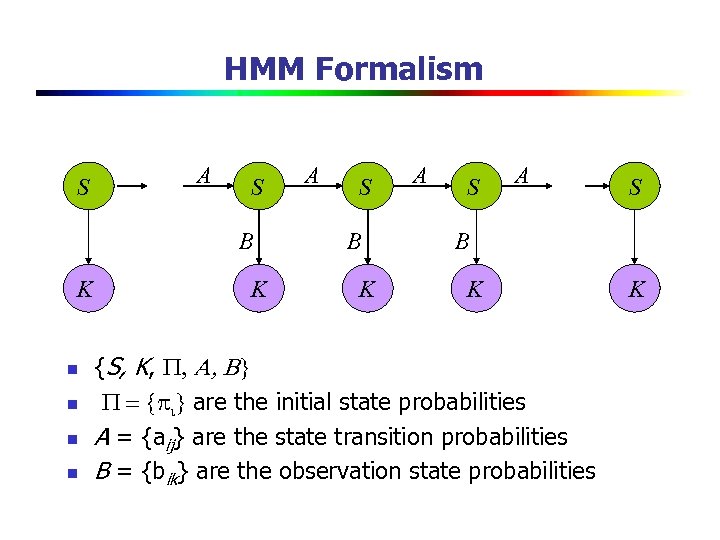

HMM Formalism
- {S, K, P, A, B}
- P = {p_i} are the initial state probabilities
- A = {a_ij} are the state transition probabilities
- B = {b_ik} are the observation (emission) probabilities

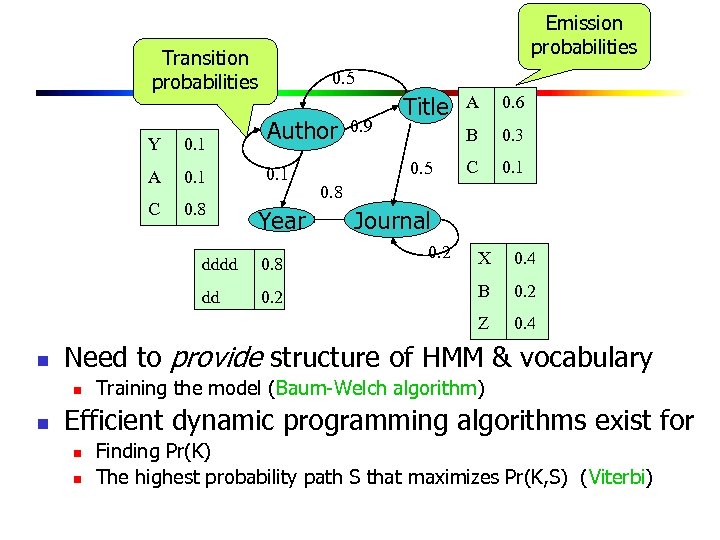

[Figure: example HMM over the states Author, Year, Title and Journal, annotated with emission probabilities (symbols A, B, C, X, Y, Z, dd, dddd) and transition probabilities]
- Need to provide structure of HMM & vocabulary
- Training the model (Baum-Welch algorithm)
- Efficient dynamic programming algorithms exist for
  - Finding Pr(K)
  - The highest probability path S that maximizes Pr(K, S) (Viterbi)

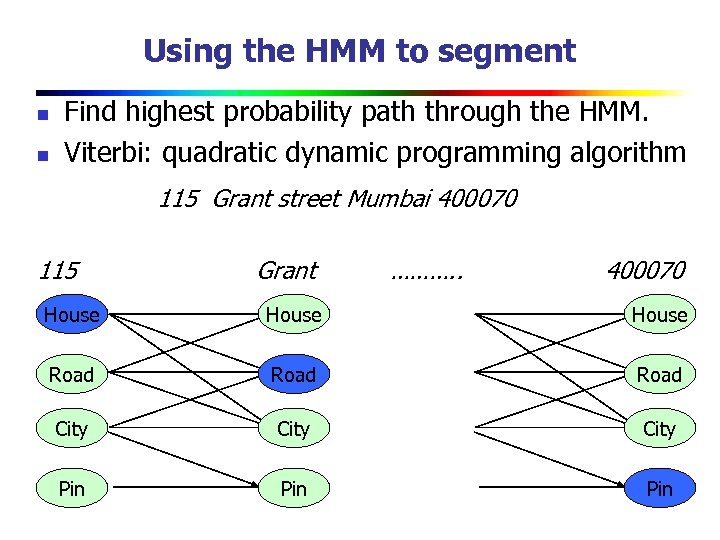

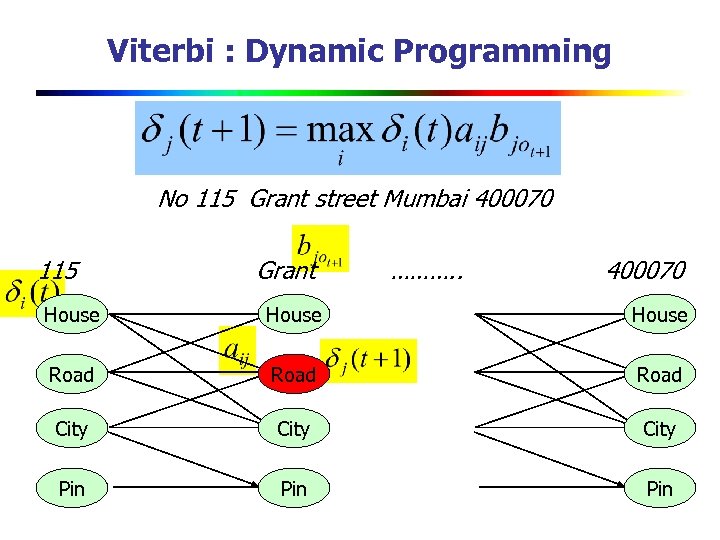

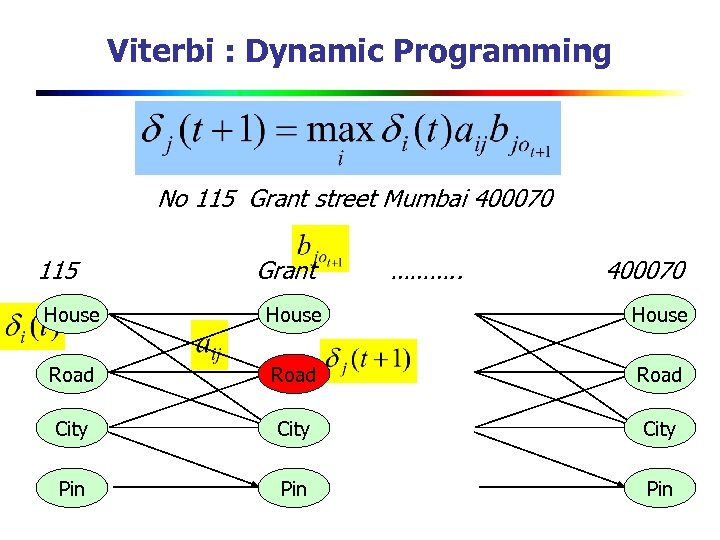

Using the HMM to segment
- Find highest probability path through the HMM.
- Viterbi: dynamic programming algorithm, quadratic in the number of states
- Example: “115 Grant street Mumbai 400070”, segmented with the states House, Road, City, Pin

[Figure: lattice of candidate states (House, Road, City, Pin) for each token]

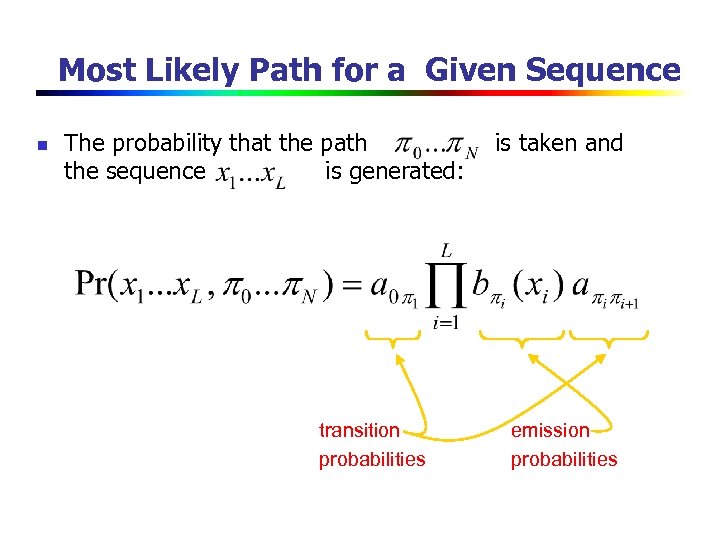

Most Likely Path for a Given Sequence
- The probability that a given path is taken and the sequence is generated is the product of the transition probabilities along the path and the emission probabilities of the observed symbols.
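The product can be written out directly. The sketch below uses a made-up two-state model: the state names echo the ‘Background’ and ‘Money’ states mentioned earlier, but every probability, and the use of an initial distribution instead of explicit begin/end states, is an assumption for illustration.

```python
# Hypothetical toy HMM; all numbers are invented for illustration.
INITIAL = {"Background": 0.8, "Money": 0.2}
TRANSITION = {
    "Background": {"Background": 0.7, "Money": 0.3},
    "Money": {"Background": 0.6, "Money": 0.4},
}
EMISSION = {
    "Background": {"the": 0.5, "said": 0.4, "million": 0.05, "euro": 0.05},
    "Money": {"the": 0.05, "said": 0.05, "million": 0.5, "euro": 0.4},
}


def path_probability(path, tokens, initial, transition, emission):
    """Joint probability that `path` is taken and `tokens` is generated along it."""
    prob = initial[path[0]] * emission[path[0]][tokens[0]]
    for prev, cur, token in zip(path, path[1:], tokens[1:]):
        # one transition probability and one emission probability per step
        prob *= transition[prev][cur] * emission[cur][token]
    return prob


tokens = ["said", "million", "euro"]
path = ["Background", "Money", "Money"]
# 0.8*0.4 * 0.3*0.5 * 0.4*0.4 = 0.00768 (up to floating-point rounding)
print(path_probability(path, tokens, INITIAL, TRANSITION, EMISSION))
```

Enumerating every path this way costs exponential time in the sequence length, which is why a dynamic programming method such as Viterbi is used instead.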

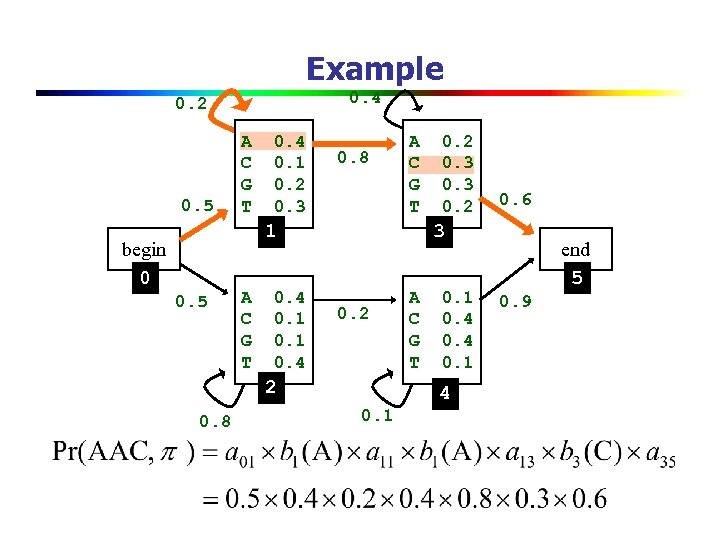

Example

[Figure: example HMM with a begin state 0, an end state 5, and emitting states 1 to 4, each with emission probabilities over A, C, G, T and labeled transition probabilities]

Finding the most probable path
- Observations: o1 … ot-1, ot, ot+1 … oT
- Find the state sequence that best explains the observations
- Viterbi algorithm (1967)

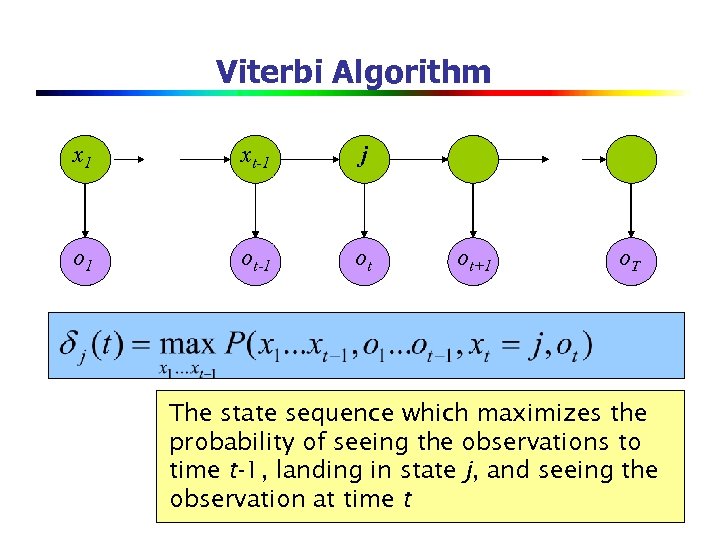

Viterbi Algorithm

Hidden states x1 … xt-1, j; observations o1 … ot-1, ot, ot+1 … oT

The state sequence which maximizes the probability of seeing the observations to time t-1, landing in state j, and seeing the observation at time t

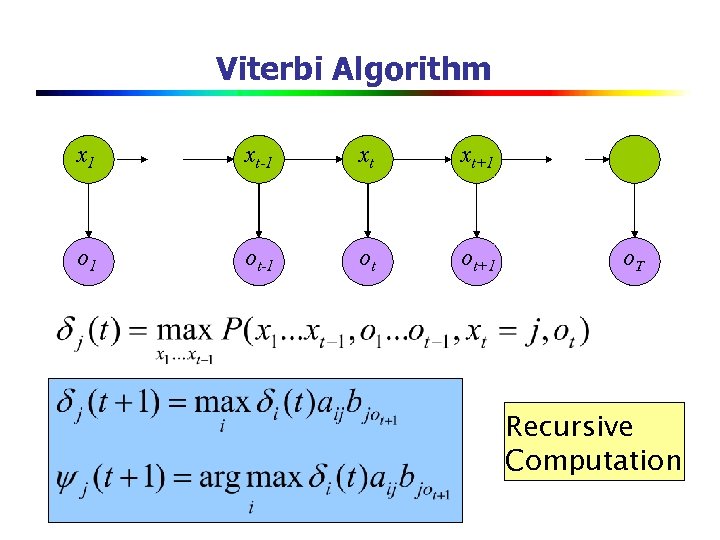

Viterbi Algorithm: Recursive Computation

Hidden states x1 … xt-1, xt, xt+1; observations o1 … ot-1, ot, ot+1 … oT

Viterbi: Dynamic Programming

[Figure: lattice for “No 115 Grant street Mumbai 400070” with the candidate states House, Road, City, Pin at each token]


Viterbi Algorithm

Hidden states x1 … xt-1, xt, xt+1 … xT; observations o1 … ot-1, ot, ot+1 … oT

Compute the most likely state sequence by working backwards
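A compact sketch of the recursion and the backward pass. The two-state model is a made-up toy (state names borrowed from the ‘Background’/‘Money’ example, all probabilities invented), and plain probabilities are used for readability; for long documents one would normally work with log probabilities to avoid underflow.

```python
# Hypothetical toy HMM; all numbers are invented for illustration.
STATES = ["Background", "Money"]
INITIAL = {"Background": 0.8, "Money": 0.2}
TRANSITION = {
    "Background": {"Background": 0.7, "Money": 0.3},
    "Money": {"Background": 0.6, "Money": 0.4},
}
EMISSION = {
    "Background": {"the": 0.5, "said": 0.4, "million": 0.05, "euro": 0.05},
    "Money": {"the": 0.05, "said": 0.05, "million": 0.5, "euro": 0.4},
}


def viterbi(tokens, states, initial, transition, emission):
    """Return (most likely state sequence, its joint probability with the tokens)."""
    # delta[t][s]: probability of the best path that ends in state s after t+1 tokens
    delta = [{s: initial[s] * emission[s][tokens[0]] for s in states}]
    backpointers = []
    for token in tokens[1:]:
        prev = delta[-1]
        scores, pointers = {}, {}
        for s in states:
            # recursive step: best predecessor for state s (N choices for each of N states)
            best_prev = max(states, key=lambda p: prev[p] * transition[p][s])
            scores[s] = prev[best_prev] * transition[best_prev][s] * emission[s][token]
            pointers[s] = best_prev
        delta.append(scores)
        backpointers.append(pointers)
    # pick the best final state, then follow the backpointers backwards
    last = max(states, key=lambda s: delta[-1][s])
    path = [last]
    for pointers in reversed(backpointers):
        path.append(pointers[path[-1]])
    path.reverse()
    return path, delta[-1][last]


best_path, best_prob = viterbi(["said", "million", "euro"], STATES, INITIAL, TRANSITION, EMISSION)
print(best_path, best_prob)  # ['Background', 'Money', 'Money'], about 0.00768
```

The inner `max` over predecessors for every state is where the cost quadratic in the number of states comes from; the total work is proportional to T·N² for T tokens and N states.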

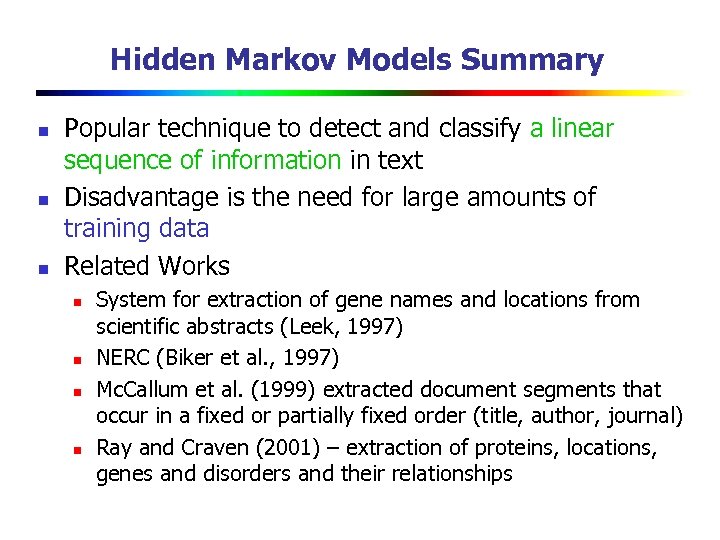

Hidden Markov Models Summary
- Popular technique to detect and classify a linear sequence of information in text
- Disadvantage is the need for large amounts of training data
- Related Works
  - System for extraction of gene names and locations from scientific abstracts (Leek, 1997)
  - NERC (Bikel et al., 1997)
  - McCallum et al. (1999) extracted document segments that occur in a fixed or partially fixed order (title, author, journal)
  - Ray and Craven (2001): extraction of proteins, locations, genes and disorders and their relationships

IE Technique Landscape

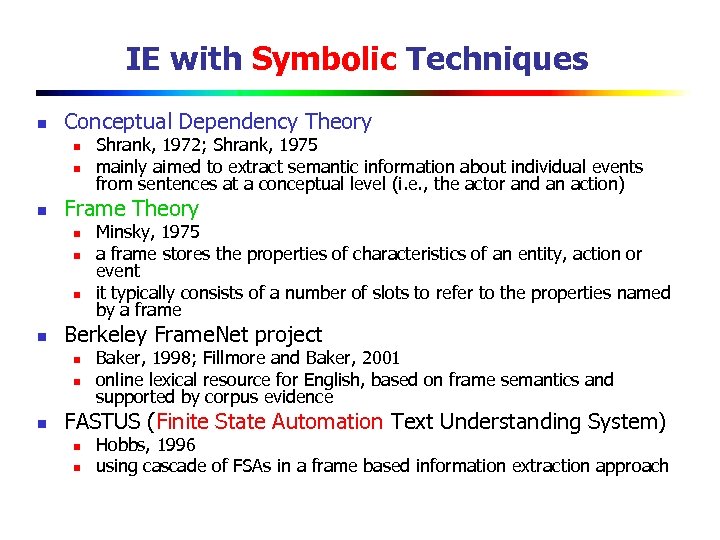

IE with Symbolic Techniques
- Conceptual Dependency Theory
  - Schank, 1972; Schank, 1975
  - mainly aimed to extract semantic information about individual events from sentences at a conceptual level (i.e., the actor and an action)
- Frame Theory
  - Minsky, 1975
  - a frame stores the properties or characteristics of an entity, action or event
  - it typically consists of a number of slots to refer to the properties named by a frame
- Berkeley FrameNet project
  - Baker, 1998; Fillmore and Baker, 2001
  - online lexical resource for English, based on frame semantics and supported by corpus evidence
- FASTUS (Finite State Automaton Text Understanding System)
  - Hobbs, 1996
  - using a cascade of FSAs in a frame-based information extraction approach

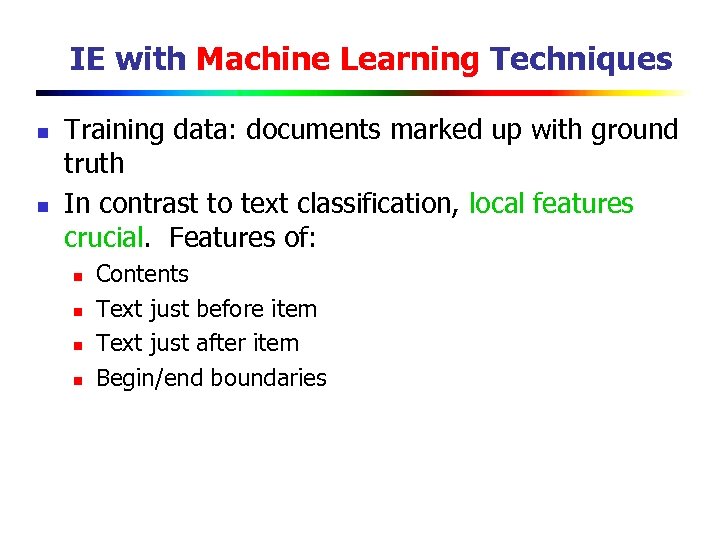

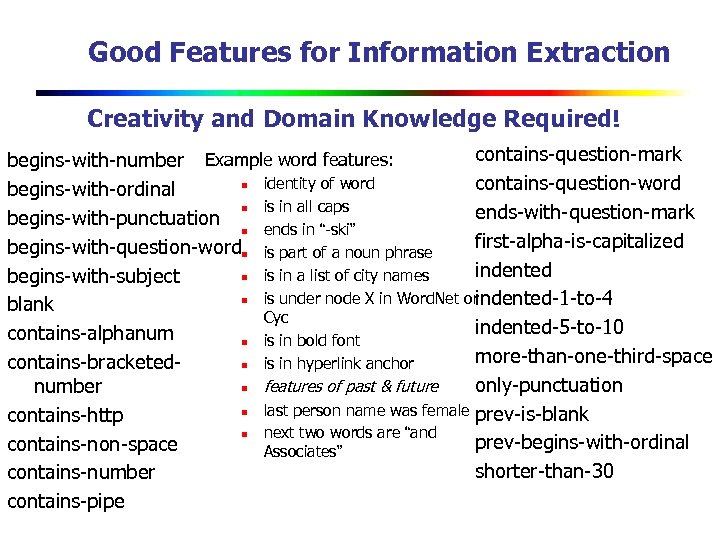

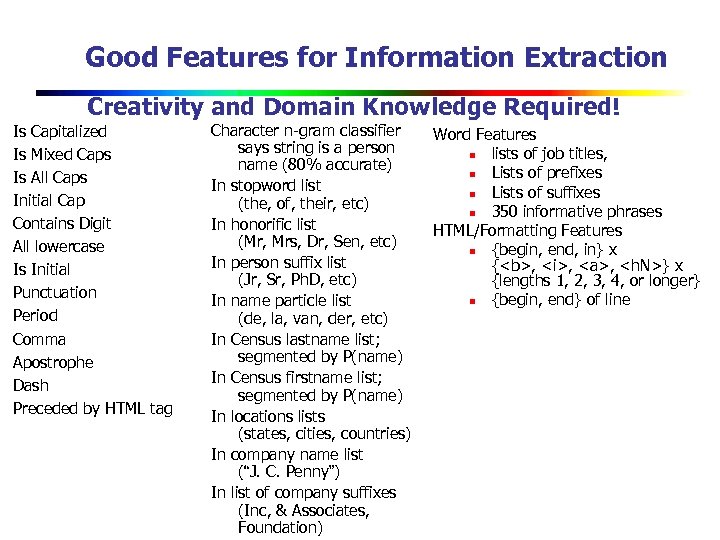

IE with Machine Learning Techniques
- Training data: documents marked up with ground truth
- In contrast to text classification, local features are crucial. Features of:
  - Contents
  - Text just before item
  - Text just after item
  - Begin/end boundaries

Good Features for Information Extraction

Creativity and Domain Knowledge Required!

Example word features:
- identity of word
- is in all caps
- ends in “-ski”
- is part of a noun phrase
- is in a list of city names
- is under node X in WordNet or Cyc
- is in bold font
- is in hyperlink anchor
- features of past & future
- last person name was female
- next two words are “and Associates”

Further example features (second column of the slide): begins-with-number, begins-with-ordinal, begins-with-punctuation, begins-with-question-word, begins-with-subject, blank, contains-alphanum, contains-bracketed-number, contains-http, contains-non-space, contains-number, contains-pipe, contains-question-mark, contains-question-word, ends-with-question-mark, first-alpha-is-capitalized, indented, indented-1-to-4, indented-5-to-10, more-than-one-third-space, only-punctuation, prev-is-blank, prev-begins-with-ordinal, shorter-than-30

Good Features for Information Extraction

Creativity and Domain Knowledge Required!
- Is Capitalized, Is Mixed Caps, Is All Caps, Initial Cap, Contains Digit, All lowercase, Is Initial
- Punctuation: Period, Comma, Apostrophe, Dash
- Preceded by HTML tag
- Character n-gram classifier says string is a person name (80% accurate)
- In stopword list (the, of, their, etc)
- In honorific list (Mr, Mrs, Dr, Sen, etc)
- In person suffix list (Jr, Sr, PhD, etc)
- In name particle list (de, la, van, der, etc)
- In Census lastname list; segmented by P(name)
- In Census firstname list; segmented by P(name)
- In locations lists (states, cities, countries)
- In company name list (“J. C. Penny”)
- In list of company suffixes (Inc, & Associates, Foundation)

Word Features
- Lists of job titles
- Lists of prefixes
- Lists of suffixes
- 350 informative phrases

HTML/Formatting Features
- {begin, end, in} x {`<b>`, `<i>`, `<a>`, `<hN>`} x {lengths 1, 2, 3, 4, or longer}
- {begin, end} of line

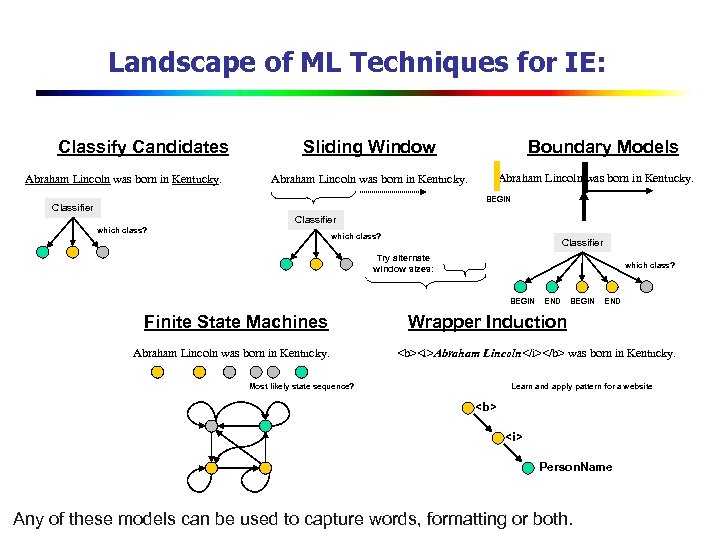

Landscape of ML Techniques for IE

Example sentence: Abraham Lincoln was born in Kentucky.
- Classify Candidates: a classifier decides which class each candidate belongs to
- Sliding Window: a classifier decides which class each window belongs to; try alternate window sizes
- Boundary Models: classifiers mark BEGIN and END boundaries
- Finite State Machines: most likely state sequence?
- Wrapper Induction: `<b><i>Abraham Lincoln</i></b>` was born in Kentucky. Learn and apply a pattern for a website: `<b>` `<i>` PersonName

Any of these models can be used to capture words, formatting or both.

![IE History](https://present5.com/presentation/83a01b955d6e4c6562345653de25858d/image-86.jpg)

IE History

Pre-Web
- Mostly news articles
  - De Jong’s FRUMP [1982]: hand-built system to fill Schank-style “scripts” from news wire
  - Message Understanding Conference (MUC) DARPA [’87-’95], TIPSTER [’92-’96]
- Most early work dominated by hand-built models
  - E.g. SRI’s FASTUS, hand-built FSMs.
  - But by 1990’s, some machine learning: Lehnert, Cardie, Grishman and then HMMs: Elkan [Leek ’97], BBN [Bikel et al ’98]

Web
- AAAI ’94 Spring Symposium on “Software Agents”
  - Much discussion of ML applied to Web. Maes, Mitchell, Etzioni.
- Tom Mitchell’s WebKB, ’96
  - Build KB’s from the Web.
- Wrapper Induction
  - Initially hand-built, then ML: [Soderland ’96], [Kushmerick ’97], …

Summary
- Information Extraction
- Sliding Window
- From FST (Finite State Transducer) to HMM
- Wrapper Induction
  - Wrapper toolkits
  - LR Wrapper

[Recap figures: sliding-window classifier and finite state machine over “Abraham Lincoln was born in Kentucky.”]

![Readings](https://present5.com/presentation/83a01b955d6e4c6562345653de25858d/image-88.jpg)

Readings
- [1] I. Muslea, S. Minton, and C. Knoblock, "A hierarchical approach to wrapper induction," in Proceedings of the Third Annual Conference on Autonomous Agents. Seattle, Washington, United States: ACM, 1999.

Thank You! Q&A
