78ee5e2550630af975cf47376460ab4b.ppt

- Number of slides: 48

Improving Systems Management Policies Using Hybrid Reinforcement Learning

Gerry Tesauro <gtesauro@us.ibm.com>
IBM TJ Watson Research Center

Joint work with Rajarshi Das (IBM), Nick Jong (U. Texas), Mohamed Bennani (George Mason Univ.)

Outline: Main points of the talk

- Introduction:
  - Brief Overview of “Autonomic Computing”
  - Grandiose Motivation: Combining Machine Learning with domain knowledge in Autonomic Computing
- Problem Description
  - Scenario: Online server allocation in Internet Data Center
  - Prototype Implementation
- Reinforcement Learning Approach
  - Quick RL Overview
  - Prior Online RL Approach
  - New Hybrid RL Approach
- Results/Insights into Hybrid RL outperformance
- Fresh results on new application: Power Management

Challenges in Systems Management

- Large-scale, heterogeneous distributed systems with highly dynamic, complex multicomponent interactions
- Large volumes of real-time high-dimensional data, but also lots of missing information and uncertainty
- Too much complexity, too few (skilled) administrators

Need for “self-managing” systems: autonomic computing

What is Autonomic Computing?

“Computing systems that manage themselves in accordance with high-level objectives from humans” (Kephart and Chess, A Vision of Autonomic Computing, IEEE Computer, 2003)

- “Self-management” capabilities include:
  - Self-Configuration: Automated configuration of components, systems according to high-level policies; rest of system adjusts seamlessly.
  - Self-Healing: Automated detection, diagnosis, and repair of localized software/hardware problems.
  - Self-Optimization: Automatic and continual adaptive tuning of hundreds of parameters (database params, server params, …) affecting performance & efficiency.
  - Self-Protection: Automated defense against malicious attacks or cascading failures; use early warning to anticipate and prevent system-wide failures.
- Good application domain for ML: rich opportunities, little previously done

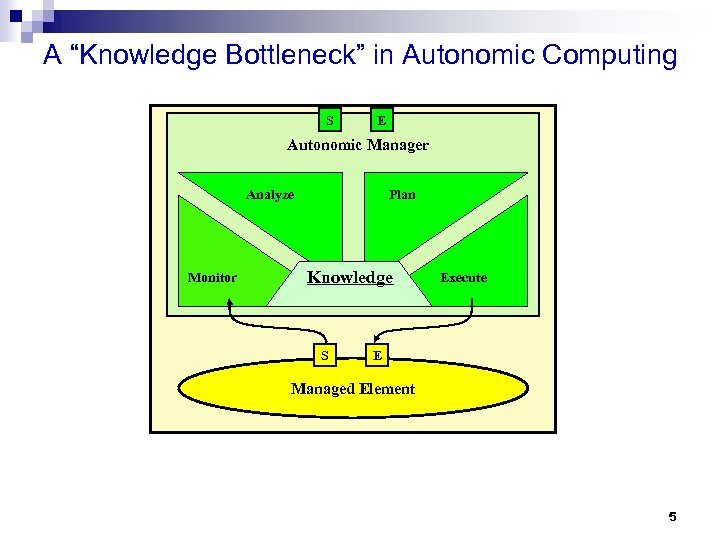

A “Knowledge Bottleneck” in Autonomic Computing

[Figure: Autonomic Manager with a Monitor, Analyze, Plan, Execute loop around a central Knowledge component, connected through S and E interfaces to the Managed Element]

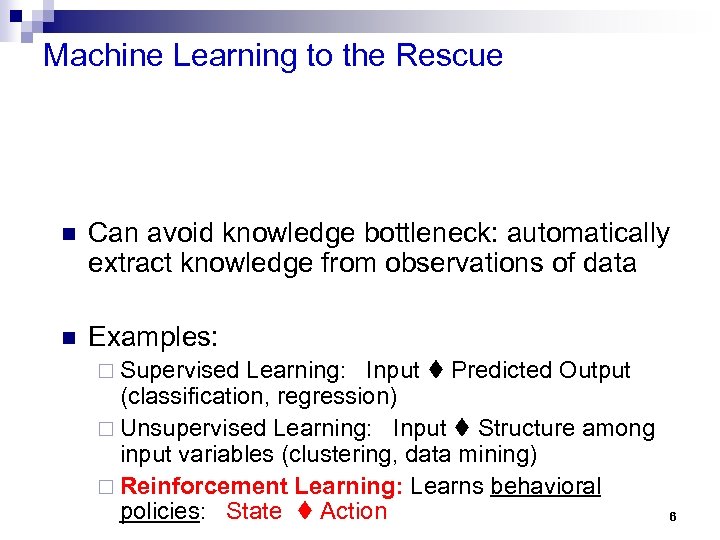

Machine Learning to the Rescue

- Can avoid knowledge bottleneck: automatically extract knowledge from observations of data
- Examples:
  - Supervised Learning: Input → Predicted Output (classification, regression)
  - Unsupervised Learning: Input → Structure among input variables (clustering, data mining)
  - Reinforcement Learning: Learns behavioral policies: State → Action

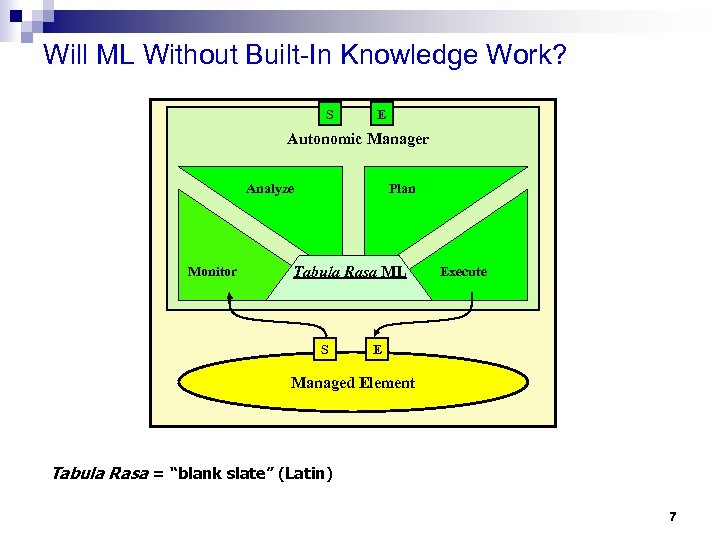

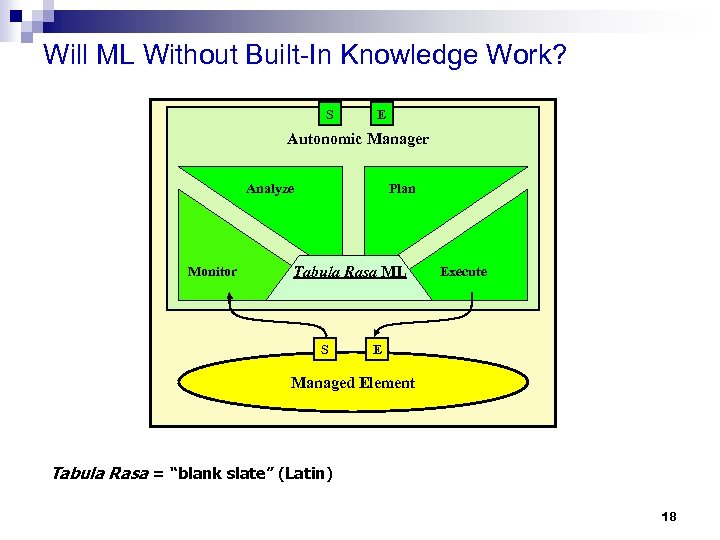

Will ML Without Built-In Knowledge Work?

[Figure: the same Autonomic Manager loop (Monitor, Analyze, Plan, Execute, with S and E interfaces to the Managed Element), with the Knowledge component replaced by Tabula Rasa ML]

Tabula Rasa = “blank slate” (Latin)

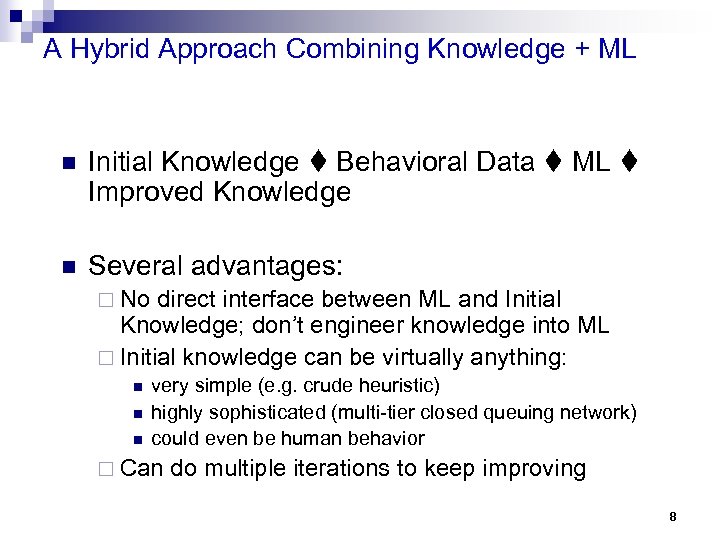

A Hybrid Approach Combining Knowledge + ML

- Initial Knowledge → Behavioral Data → ML → Improved Knowledge
- Several advantages:
  - No direct interface between ML and Initial Knowledge; don’t engineer knowledge into ML
  - Initial knowledge can be virtually anything:
    - very simple (e.g. crude heuristic)
    - highly sophisticated (multi-tier closed queuing network)
    - could even be human behavior
  - Can do multiple iterations to keep improving

Outline: Main points of the talk

- Introduction
- Problem Description
  - Scenario: Online server allocation in Internet Data Center
  - Data Center Prototype Implementation
- Reinforcement Learning Approach
- Results
- Insights into Hybrid RL outperformance
- Wrapup

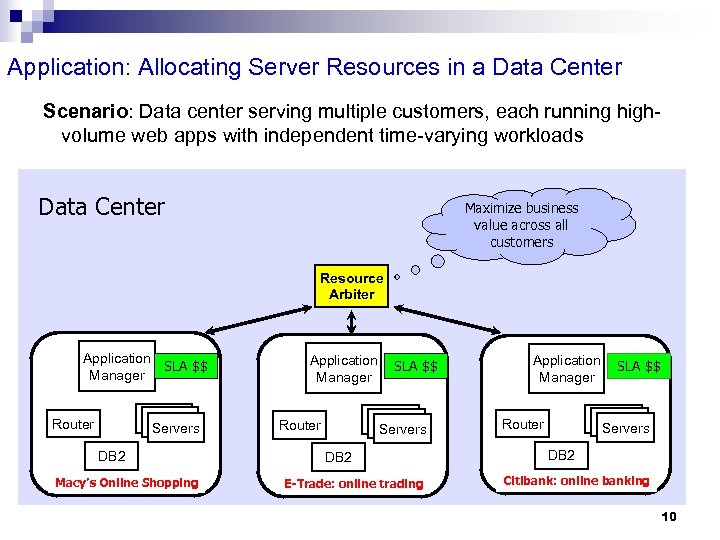

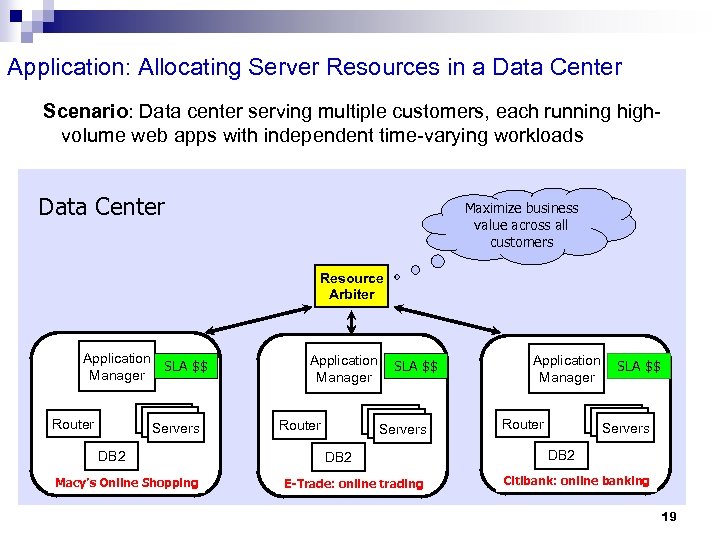

Application: Allocating Server Resources in a Data Center

Scenario: Data center serving multiple customers, each running high-volume web apps with independent time-varying workloads

[Figure: Data Center with a Resource Arbiter that maximizes business value across all customers. Three customer environments (Macy’s: online shopping; E-Trade: online trading; Citibank: online banking), each with an Application Manager, an SLA ($$), a Router, Servers, and DB2]

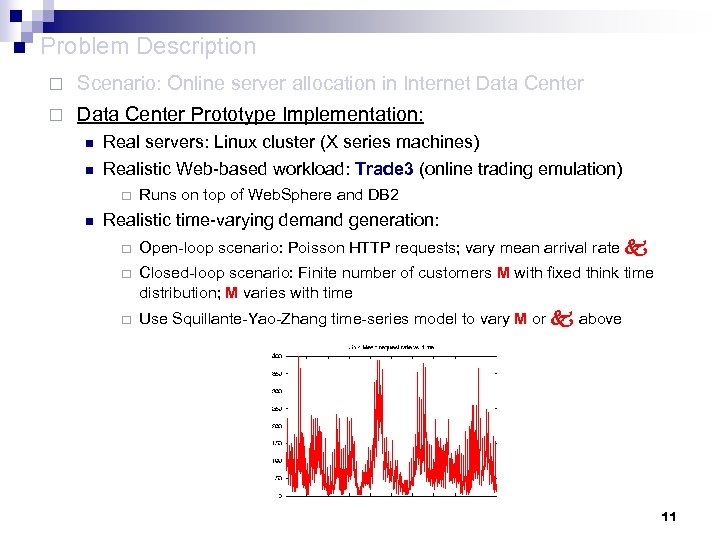

- Problem Description
  - Scenario: Online server allocation in Internet Data Center
  - Data Center Prototype Implementation:
    - Real servers: Linux cluster (xSeries machines)
    - Realistic Web-based workload: Trade3 (online trading emulation)
      - Runs on top of WebSphere and DB2
    - Realistic time-varying demand generation:
      - Open-loop scenario: Poisson HTTP requests; vary mean arrival rate
      - Closed-loop scenario: Finite number of customers M with fixed think time distribution; M varies with time
      - Use Squillante-Yao-Zhang time-series model to vary M or the mean arrival rate above

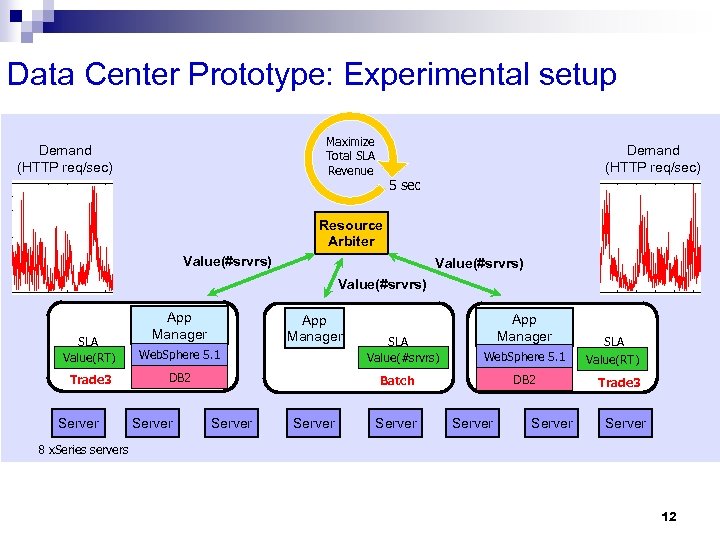

Data Center Prototype: Experimental setup

[Figure: a Resource Arbiter maximizes Total SLA Revenue, deciding every 5 sec. Each App Manager reports Value(#srvrs) to the arbiter. The Trade3 environments (WebSphere 5.1, DB2) are driven by Demand (HTTP req/sec) and have an SLA Value(RT); a Batch environment is also shown. Hardware: 8 xSeries servers]

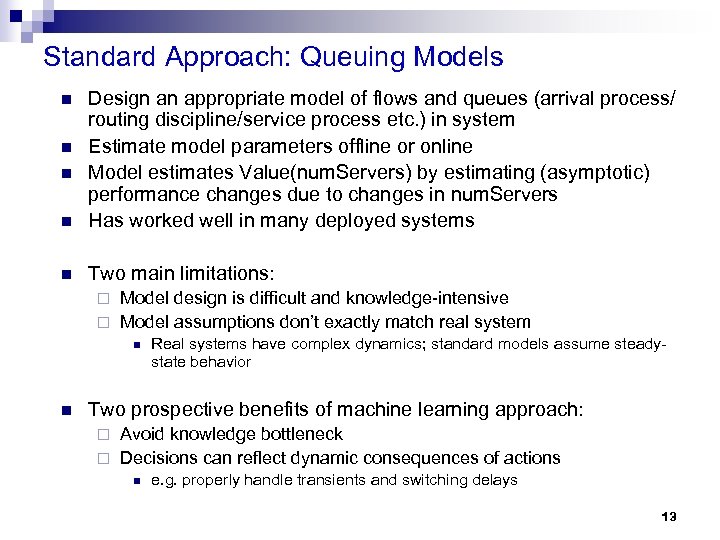

Standard Approach: Queuing Models

- Design an appropriate model of flows and queues (arrival process/routing discipline/service process etc.) in system
- Estimate model parameters offline or online
- Model estimates Value(numServers) by estimating (asymptotic) performance changes due to changes in numServers
- Has worked well in many deployed systems
- Two main limitations:
  - Model design is difficult and knowledge-intensive
  - Model assumptions don’t exactly match real system
    - Real systems have complex dynamics; standard models assume steady-state behavior
- Two prospective benefits of machine learning approach:
  - Avoid knowledge bottleneck
  - Decisions can reflect dynamic consequences of actions
    - e.g. properly handle transients and switching delays
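
As a rough illustration of how a queuing model can turn a server count into Value(numServers), the sketch below assumes the simplest possible setting: demand is split evenly over identical servers, each treated as an independent M/M/1 queue, and the SLA is a step function of mean response time. The service rate, the SLA shape, and all function names are illustrative assumptions, not the models used in the prototype.

```python
import math


def mm1_response_time(arrival_rate, service_rate):
    """Mean steady-state response time of an M/M/1 queue (infinite if overloaded)."""
    if arrival_rate >= service_rate:
        return math.inf
    return 1.0 / (service_rate - arrival_rate)


def sla_value(response_time, target=0.5, payment=100.0, penalty=-50.0):
    """Hypothetical step-shaped SLA: payment if RT meets the target, else a penalty."""
    return payment if response_time <= target else penalty


def value_of_servers(demand, num_servers, service_rate=10.0):
    """Estimate Value(numServers), assuming demand is split evenly across servers."""
    rt = mm1_response_time(demand / num_servers, service_rate)
    return sla_value(rt)


if __name__ == "__main__":
    for n in range(1, 6):
        print(n, value_of_servers(demand=30.0, num_servers=n))
```

Note that this estimate is purely steady-state: it says nothing about what happens while a server is being switched, which is the limitation the slide points out.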

Outline: Main points of the talk

- Introduction
- Problem Description
- Reinforcement Learning Approach
  - Quick RL Overview
- Results
- Insights into Hybrid RL outperformance
- Wrapup

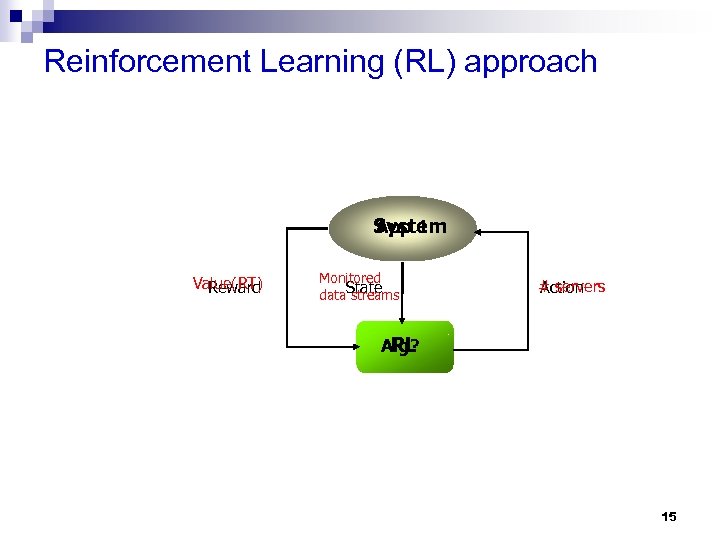

Reinforcement Learning (RL) approach

[Figure: RL loop around the App 1 system: State (monitored data streams), Action (# servers), Reward (Value(RT)), RL Alg?]

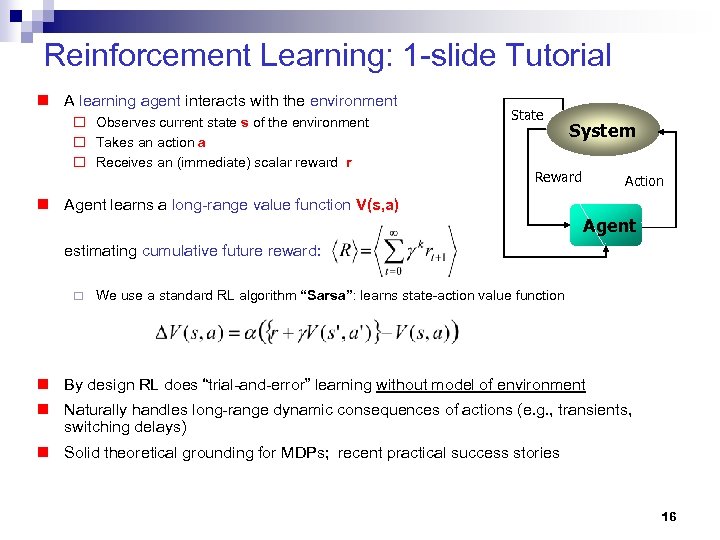

Reinforcement Learning: 1-slide Tutorial

- A learning agent interacts with the environment
  - Observes current state s of the environment
  - Takes an action a
  - Receives an (immediate) scalar reward r
- Agent learns a long-range value function V(s, a) estimating cumulative future reward:
  - We use a standard RL algorithm “Sarsa”: learns state-action value function
- By design RL does “trial-and-error” learning without model of environment
- Naturally handles long-range dynamic consequences of actions (e.g., transients, switching delays)
- Solid theoretical grounding for MDPs; recent practical success stories

[Figure: Agent and System connected by State, Reward, and Action arrows]
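
The Sarsa update mentioned above can be sketched in a few lines. This is a minimal tabular version, assuming a lookup table keyed by (state, action); the learning rate `alpha`, discount `gamma`, and the example state and action labels are illustrative choices, not values taken from the talk.

```python
from collections import defaultdict


def sarsa_update(q, s, a, r, s_next, a_next, alpha=0.1, gamma=0.5):
    """One Sarsa(0) step: move Q(s, a) toward r + gamma * Q(s', a')."""
    target = r + gamma * q[(s_next, a_next)]
    q[(s, a)] += alpha * (target - q[(s, a)])
    return q[(s, a)]


# Hypothetical transition: in state "low" the agent provided 2 servers,
# received reward 10, then chose 3 servers in the next state "high".
q = defaultdict(float)
sarsa_update(q, s="low", a=2, r=10.0, s_next="high", a_next=3)
```

Because the target uses the action actually taken next (a'), the table tracks the value of the policy being followed, which is what makes Sarsa an on-policy method.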

Outline: Main points of the talk

- Introduction
- Problem Description
- Reinforcement Learning Approach
  - Quick RL Overview
  - Online RL Approach
- Results
- Insights into Hybrid RL outperformance
- Wrapup


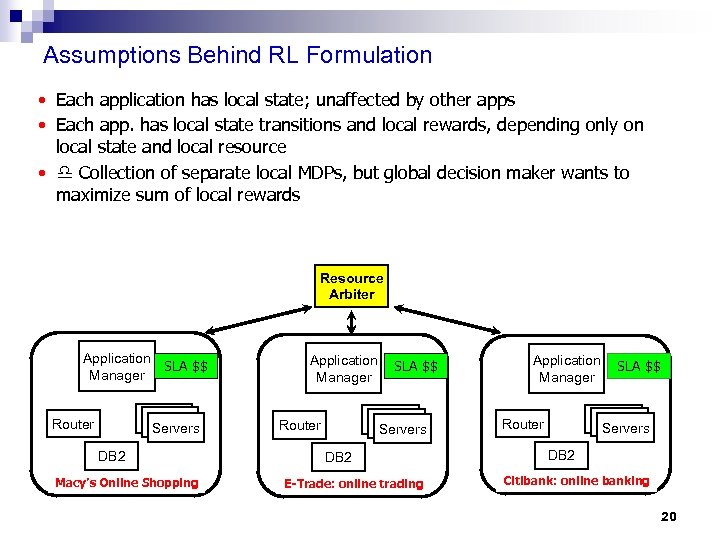

Assumptions Behind RL Formulation

- Each application has local state; unaffected by other apps
- Each app has local state transitions and local rewards, depending only on local state and local resource
- Collection of separate local MDPs, but global decision maker wants to maximize sum of local rewards

[Figure: Resource Arbiter above three customer environments (Macy’s: online shopping; E-Trade: online trading; Citibank: online banking), each with an Application Manager, an SLA ($$), a Router, Servers, and DB2]

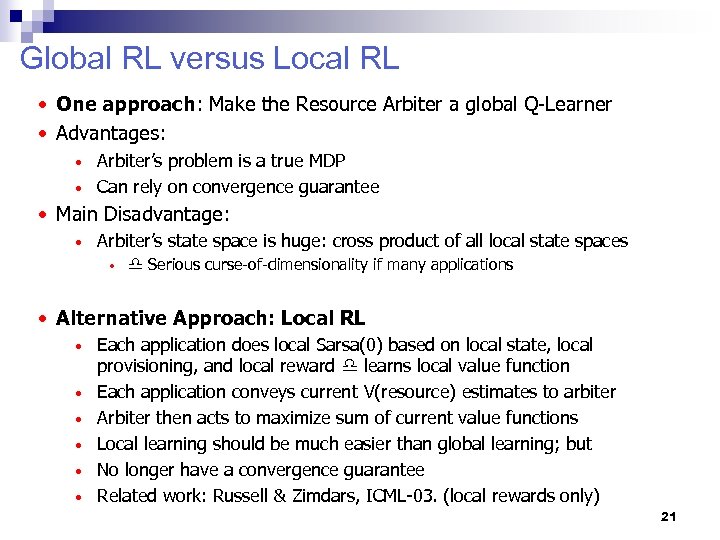

Global RL versus Local RL

- One approach: Make the Resource Arbiter a global Q-Learner
  - Advantages:
    - Arbiter’s problem is a true MDP
    - Can rely on convergence guarantee
  - Main Disadvantage:
    - Arbiter’s state space is huge: cross product of all local state spaces
    - Serious curse-of-dimensionality if many applications
- Alternative Approach: Local RL
  - Each application does local Sarsa(0) based on local state, local provisioning, and local reward → learns local value function
  - Each application conveys current V(resource) estimates to arbiter
  - Arbiter then acts to maximize sum of current value functions
  - Local learning should be much easier than global learning; but no longer have a convergence guarantee
- Related work: Russell & Zimdars, ICML-03. (local rewards only)
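
The arbiter step in the Local RL scheme (maximize the sum of the value functions reported by the applications) might look like the sketch below. It assumes each application reports a table mapping a server count to an estimated value, that every application gets at least one server, and that brute-force enumeration is affordable; these are simplifying assumptions for illustration, and the numbers are made up.

```python
from itertools import product


def best_allocation(value_functions, total_servers):
    """Search allocations (n_1..n_k), each n_i >= 1, that sum to total_servers,
    and return the one maximizing the sum of the reported values."""
    k = len(value_functions)
    best, best_total = None, float("-inf")
    for alloc in product(range(1, total_servers + 1), repeat=k):
        if sum(alloc) != total_servers:
            continue
        total = sum(v[n] for v, n in zip(value_functions, alloc))
        if total > best_total:
            best, best_total = alloc, total
    return best, best_total


# Hypothetical V(resource) tables reported by two application managers.
trade3_values = {1: 10.0, 2: 60.0, 3: 80.0, 4: 85.0}
batch_values = {1: 5.0, 2: 20.0, 3: 30.0, 4: 35.0}
allocation, total_value = best_allocation([trade3_values, batch_values], 5)
```

The arbiter never needs to see the applications' local states, only their current value curves, which is what keeps its decision problem small.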

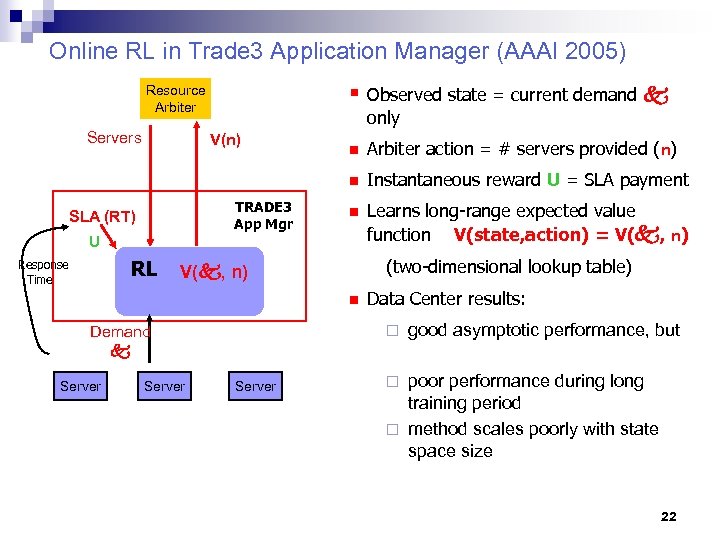

Online RL in Trade3 Application Manager (AAAI 2005)

- Observed state = current demand only
- Arbiter action = # servers provided (n)
- Instantaneous reward U = SLA payment
- Learns long-range expected value function V(state, action) = V(demand, n) (two-dimensional lookup table)
- Data Center results:
  - good asymptotic performance, but poor performance during long training period
  - method scales poorly with state space size

[Figure: the Trade3 App Mgr runs RL on Demand and Response Time data from its Server Application Environment, receives reward U from the SLA (RT), and sends V(n) to the Resource Arbiter, which returns n servers]

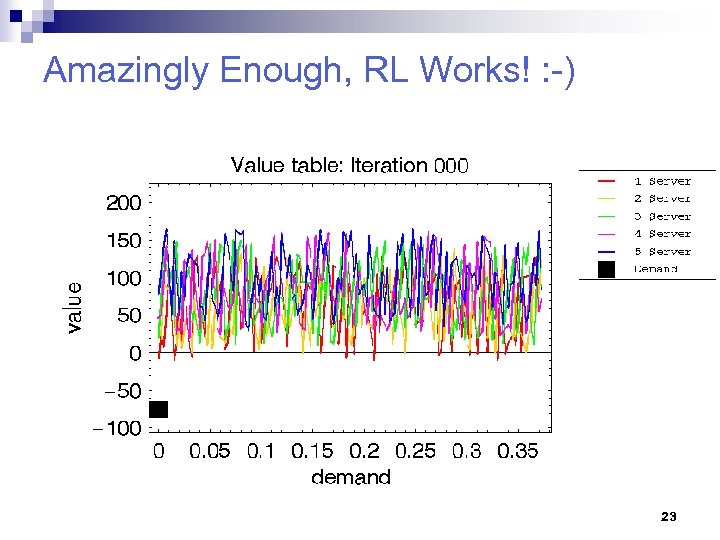

Amazingly Enough, RL Works! :-)

Results of overnight training (~25k RL updates = 16 hours real time) with random initial condition

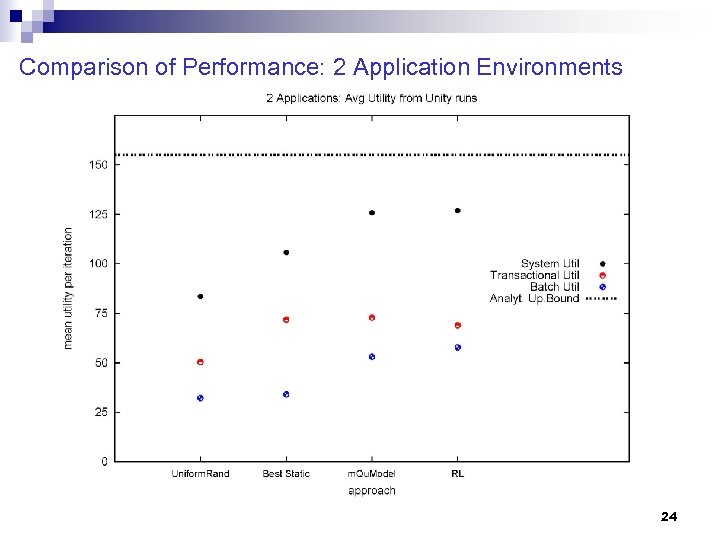

Comparison of Performance: 2 Application Environments

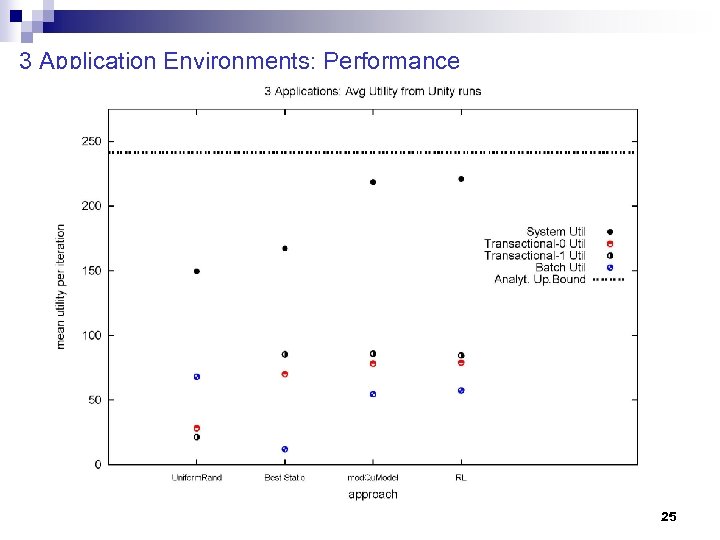

3 Application Environments: Performance

Outline: Main points of the talk

- Introduction
- Problem Description
- Reinforcement Learning Approach
  - Quick RL Overview
  - Online RL Approach
  - Hybrid RL Approach (Tesauro et al., ICAC 2006)
- Results
- Insights into Hybrid RL outperformance
- Wrapup

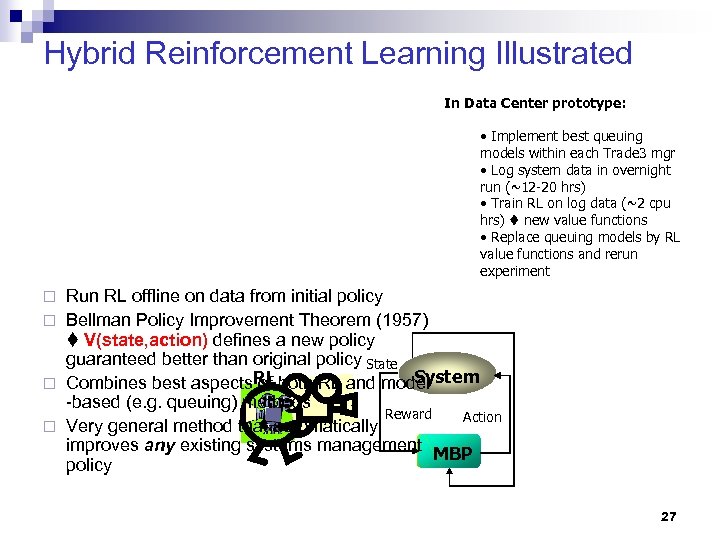

Hybrid Reinforcement Learning Illustrated

- Run RL offline on data from initial policy
- Bellman Policy Improvement Theorem (1957): V(state, action) defines a new policy guaranteed better than original policy
- Combines best aspects of both RL and model-based (e.g. queuing) methods
- Very general method that automatically improves any existing systems management policy

In Data Center prototype:

- Implement best queuing models within each Trade3 mgr
- Log system data in overnight run (~12-20 hrs)
- Train RL on log data (~2 cpu hrs) → new value functions
- Replace queuing models by RL value functions and rerun experiment
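
The offline training step can be sketched as repeated Sarsa sweeps over a log recorded while the initial policy was in control, followed by greedy action selection on the learned values. This sketch swaps in a lookup table where the talk uses neural networks, and the log format, hyperparameters, and toy data are all assumptions made for illustration.

```python
from collections import defaultdict


def train_offline_sarsa(log, alpha=0.1, gamma=0.5, sweeps=200):
    """Fit Q(s, a) to a logged trajectory [(state, action, reward), ...]
    by sweeping Sarsa(0) updates over consecutive log entries."""
    q = defaultdict(float)
    for _ in range(sweeps):
        for (s, a, r), (s2, a2, _) in zip(log, log[1:]):
            q[(s, a)] += alpha * (r + gamma * q[(s2, a2)] - q[(s, a)])
    return q


def greedy_action(q, state, actions):
    """Improved policy: choose the action with the highest learned value."""
    return max(actions, key=lambda a: q[(state, a)])


# Toy log from a hypothetical initial policy under high demand:
# providing 1 server incurs a penalty, providing 2 earns the SLA payment.
log = [("high", 1, -5.0), ("high", 2, 10.0), ("high", 1, -5.0),
       ("high", 2, 10.0), ("high", 2, 10.0)]
q = train_offline_sarsa(log)
choice = greedy_action(q, "high", [1, 2])
```

The learner only sees the initial policy's externally observable behavior (states, actions, rewards), so the log has to contain some variety of actions per state for the comparison in `greedy_action` to be meaningful.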

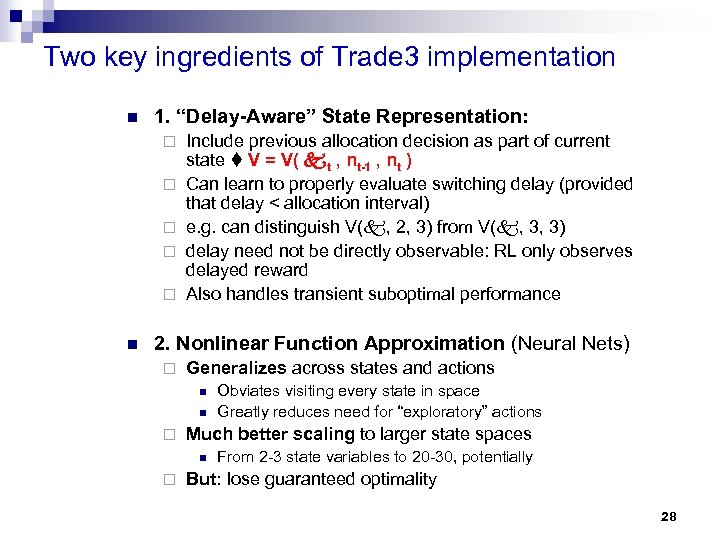

Two key ingredients of Trade3 implementation

1. “Delay-Aware” State Representation:
   - Include previous allocation decision as part of current state: V = V(demand_t, n_{t-1}, n_t)
   - Can learn to properly evaluate switching delay (provided that delay < allocation interval)
     - e.g. can distinguish V(demand, 2, 3) from V(demand, 3, 3)
     - delay need not be directly observable: RL only observes delayed reward
   - Also handles transient suboptimal performance
2. Nonlinear Function Approximation (Neural Nets)
   - Generalizes across states and actions
     - Obviates visiting every state in space
     - Greatly reduces need for “exploratory” actions
   - Much better scaling to larger state spaces
     - From 2-3 state variables to 20-30, potentially
   - But: lose guaranteed optimality
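
To make the delay-aware representation concrete, the sketch below builds training samples of the form ((demand_t, n_{t-1}, n_t), reward_t) from per-interval logs. The log layout and the numbers are hypothetical; the point is only that two intervals with the same demand and the same current allocation become different inputs when the previous allocation differs.

```python
def delay_aware_samples(demands, allocations, rewards):
    """Build ((demand_t, n_{t-1}, n_t), reward_t) pairs from per-interval logs.
    The first interval is skipped because it has no previous allocation."""
    samples = []
    for t in range(1, len(demands)):
        state_action = (demands[t], allocations[t - 1], allocations[t])
        samples.append((state_action, rewards[t]))
    return samples


# Hypothetical log: the allocation switches from 2 to 3 servers, then stays at 3.
samples = delay_aware_samples(
    demands=[100, 120, 120],
    allocations=[2, 3, 3],
    rewards=[50.0, 30.0, 55.0],
)
```

In this toy log the interval right after the switch earns less than the following one, so a learner fed these samples can attribute the shortfall to the (2, 3) transition without ever observing the delay itself.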

Outline: Main points of the talk

- Introduction
- Problem Description
- Reinforcement Learning Approach
- Results
- Insights into Hybrid RL outperformance
- Wrapup

Results: Open Loop, No Switching Delay

[Figure: performance comparison charts. Annotations as extracted, in order:]

- +2.6% Trade3 RT, +12.7% Batch thrput
- -0.4% Trade3 RT, +38.9% Batch thrput
- +73% Trade3 RT, +221% Batch thrput

Results: Closed Loop, No Switching Delay

Results: Effects of Switching Delay

Outline: Main points of the talk

- Introduction
- Problem Description
- Reinforcement Learning Approach
- Results
- Insights into Hybrid RL outperformance
- Wrapup

Insights into Hybrid RL outperformance

1. Less biased estimation errors
   - Queuing model predicts indirectly: RT → SLA(RT) → V
     - Nonlinear SLA induces overprovisioning bias
   - RL estimates utility directly → less biased estimate of V
2. RL handles transients and switching delays
   - Steady-state queuing models cannot
3. RL learns to avoid thrashing
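
The first insight can be illustrated numerically: pushing a predicted mean response time through a nonlinear SLA generally gives a different number than averaging the SLA payments actually observed. The SLA shape and the response-time samples below are invented for illustration; the size and direction of the gap depend on the real SLA curve.

```python
def sla(rt, target=1.0, payment=100.0):
    """Hypothetical nonlinear SLA: full payment up to the target RT,
    then a linear decay that reaches zero at twice the target."""
    if rt <= target:
        return payment
    return max(0.0, payment * (2.0 - rt / target))


# Hypothetical response times observed over four intervals.
observed_rts = [0.5, 0.75, 0.75, 2.0]
mean_rt = sum(observed_rts) / len(observed_rts)

# Indirect estimate (model style): predict mean RT, then apply the SLA.
indirect_value = sla(mean_rt)

# Direct estimate (RL style): average the SLA payments that were observed.
direct_value = sum(sla(rt) for rt in observed_rts) / len(observed_rts)
```

Here the indirect route reports the full payment because the mean response time just meets the target, while the direct route reflects the one interval that earned nothing.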

Policy Hysteresis in Learned Value Function

Stable joint allocations (T1, T2, Batch) at fixed 2

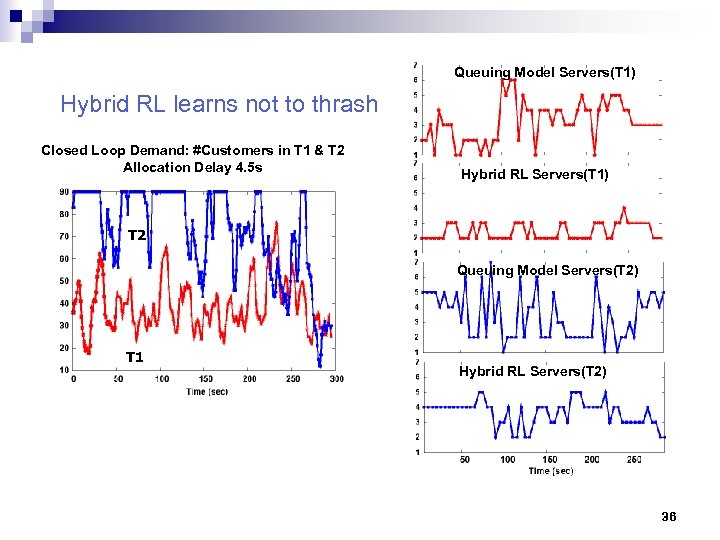

Hybrid RL learns not to thrash

[Figure: closed-loop demand (#customers in T1 and T2) with allocation delay 4.5 s; panels show Servers(T1) and Servers(T2) over time under the Queuing Model and under Hybrid RL]

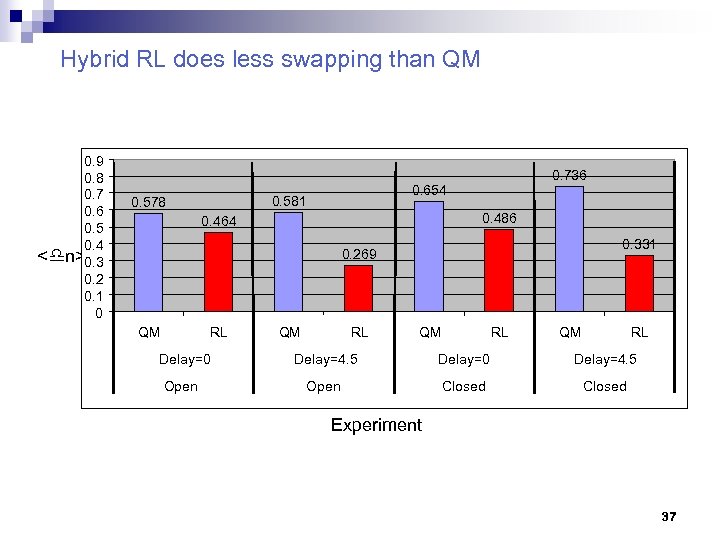

Hybrid RL does less swapping than QM

[Figure: bar chart comparing QM and RL across four experiments (Open and Closed loop, each with Delay=0 and Delay=4.5). Y-axis: < n>, from 0 to 0.9. Bar labels as extracted, in order: 0.581, 0.578, 0.736, 0.654, 0.486, 0.464, 0.331, 0.269]

Outline: Main points of the talk

- Introduction
- Problem Description
- Reinforcement Learning Approach
- Results
- Insights into Hybrid RL outperformance
- Power Management (Kephart et al., ICAC 2007)

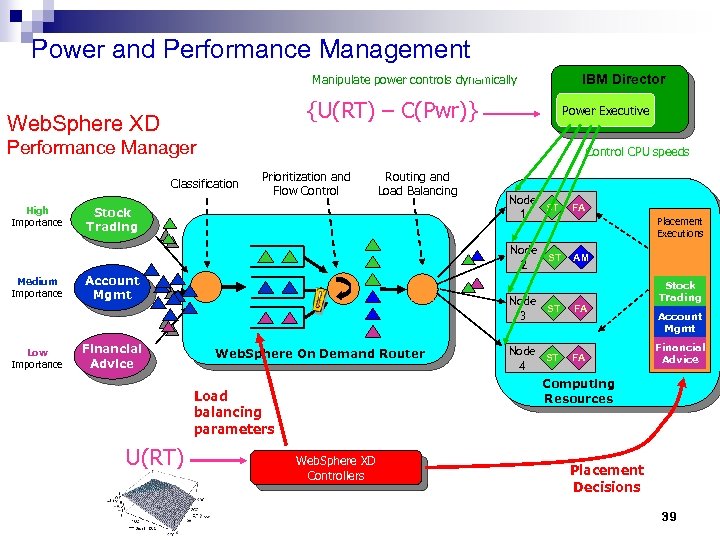

Power and Performance Management

[Figure: architecture diagram. Labels: IBM Director; Power Executive; Manipulate power controls dynamically; Control CPU speeds; objective {U(RT) - C(Pwr)}; WebSphere XD; Performance Manager; WebSphere XD Controllers; WebSphere On Demand Router; Classification; Prioritization and Flow Control; Routing and Load Balancing; Load balancing parameters; Placement Decisions; Placement Executions; U(RT); Computing Resources (Node 1 to Node 4); workloads Stock Trading (ST), Account Mgmt (AM), Financial Advice (FA); High, Medium, and Low Importance]

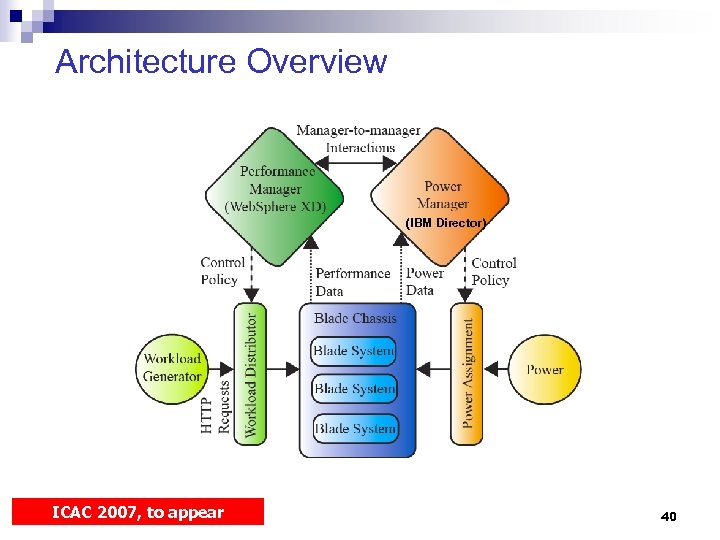

Architecture Overview (IBM Director)

ICAC 2007, to appear

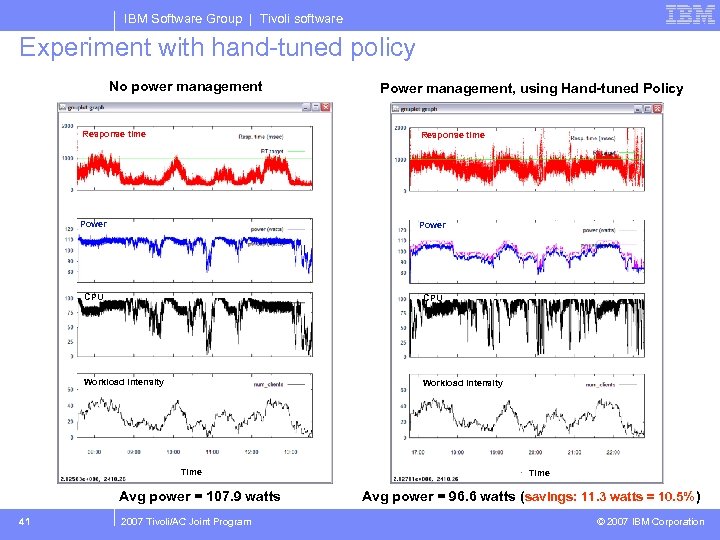

Experiment with hand-tuned policy

[Figure: two time-series panels plotting response time, power, CPU, and workload intensity against time]

- No power management: avg power = 107.9 watts
- Power management, using hand-tuned policy: avg power = 96.6 watts (savings: 11.3 watts = 10.5%)

Source: IBM Software Group | Tivoli software, 2007 Tivoli/AC Joint Program. © 2007 IBM Corporation

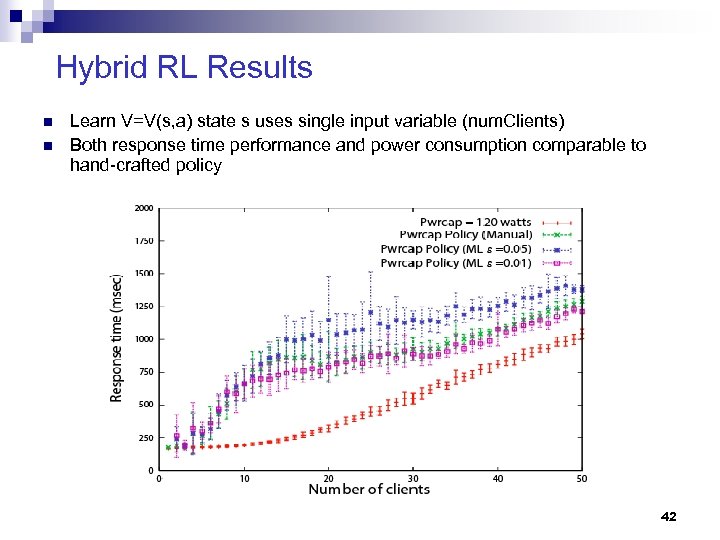

Hybrid RL Results

- Learn V = V(s, a); state s uses single input variable (numClients)
- Both response time performance and power consumption comparable to hand-crafted policy

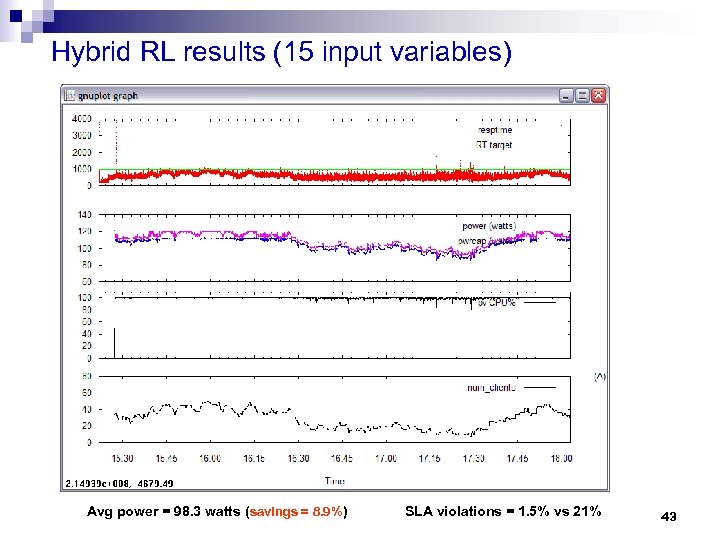

Hybrid RL results (15 input variables)

- Avg power = 98.3 watts (savings = 8.9%)
- SLA violations = 1.5% vs 21%
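
The savings figures quoted on the power slides can be checked with simple arithmetic. The sketch assumes both percentages are measured against the 107.9 watt run without power management, which is consistent with the quoted numbers; the function name is illustrative.

```python
def savings(baseline_watts, managed_watts):
    """Return (watts saved, percent saved relative to the baseline)."""
    saved = baseline_watts - managed_watts
    return saved, 100.0 * saved / baseline_watts


hand_tuned = savings(107.9, 96.6)  # hand-tuned policy
hybrid_rl = savings(107.9, 98.3)   # Hybrid RL with 15 input variables
```

Rounded to one decimal place, these come out to 11.3 watts (10.5%) and 9.6 watts (8.9%), matching the slides.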

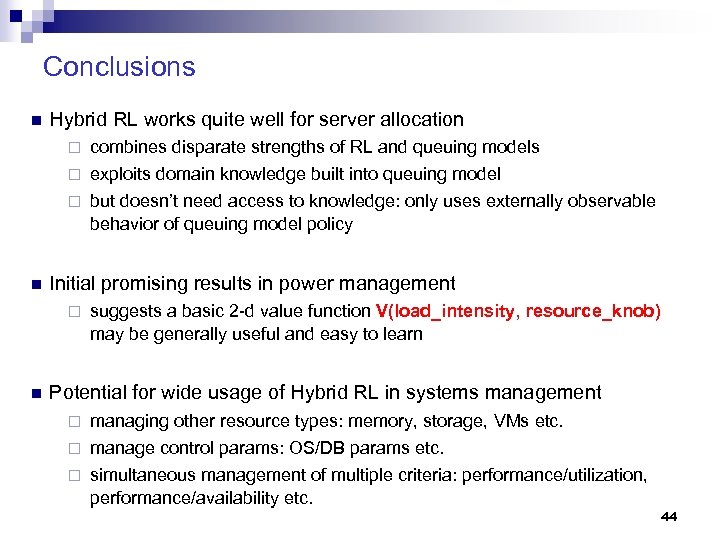

Conclusions

- Hybrid RL works quite well for server allocation
  - combines disparate strengths of RL and queuing models
  - exploits domain knowledge built into queuing model
  - but doesn’t need access to knowledge: only uses externally observable behavior of queuing model policy
- Initial promising results in power management
  - suggests a basic 2-d value function V(load_intensity, resource_knob) may be generally useful and easy to learn
- Potential for wide usage of Hybrid RL in systems management
  - managing other resource types: memory, storage, VMs etc.
  - manage control params: OS/DB params etc.
  - simultaneous management of multiple criteria: performance/utilization, performance/availability etc.

For further info/reading material

- Papers:
  - “Online Resource Allocation using Decompositional Reinforcement Learning,” G. Tesauro, Proc. of AAAI-05.
  - “A Hybrid Reinforcement Learning Approach to Autonomic Computing,” G. Tesauro et al., Proc. of ICAC-06.
  - “Coordinating Multiple Autonomic Managers to Achieve Specified Power-Performance Tradeoffs,” J. Kephart et al., Proc. of ICAC-07.
- More info about R & D in Autonomic Computing:
  - Our work: www.research.ibm.com/nedar
  - AC toolkit (Autonomic Manager ToolSet): AMTS v1.0 available as part of Emerging Technologies Toolkit v1.1 on IBM alphaWorks: www.alphaworks.com
  - IBM: www.research.ibm.com/autonomic
  - Intl. Conf. on Autonomic Computing (ICAC-07): www.autonomicconference.org
- Summer internships: email me: gtesauro@us.ibm.com

Thanks! Any questions?

The End


Evolution of Computing
