
- Number of slides: 111

IEEE International Conference on Consumer Electronics, Los Angeles, CA, June 2001
MPEG-7 Visual Descriptors
B. S. Manjunath, University of California, Santa Barbara, USA
manj@ece.ucsb.edu


Acknowledgements
- Prof. P. Salembier (for permitting me to use some of his slides from our tutorial at ICIP 2000).
- Editors of the MPEG-7 XM and WD.
- Dr. L. Cieplensky, Mitsubishi Electric
- Dr. A. Divakaran, Mitsubishi
- Dr. S. Jeannin, Philips research lab
- Prof. W. Y. Kim, Hanyang University
- Dr. M. Bober, Mitsubishi Electric
- Dr. H. J. Kim, LG Electronics
- Dr. S. Park, ETRI


What you may (may not) expect...
- Overview of the visual descriptors
- Their capabilities and limitations
- Some application examples
- Pointers to publicly available documents
- Do not expect:
  - Programming and implementation details: these are available in the MPEG-7 eXperimental Model (XM) document.
  - Binary bit stream syntax: see the MPEG-7 Committee Draft(s).


Audio-Visual Content Description and the MPEG-7 Standard
- Objective, goals, requirements and applications
- Basic components of the MPEG-7 standard
  - Description Definition Language
  - Description Schemes
  - A/V Descriptors


Low-level Visual Information Description
- Color
- Shape
- Texture
- Motion
- Face


Motivation (© Salembier)
- The multimedia context:
  - More information is in digital form and is on-line.
  - AV content covers: still pictures, audio, speech, video, graphics, 3D models, etc.
  - AV content is available at all bitrates and on all networks.
  - Increasing number of multimedia applications and services.
- Necessity of describing content:
  - Increasing amount of information.
  - More needs to have “information about the content”.
  - Difficult to manage (find, select, filter, organize, etc.) content.
  - User: human or computational systems.


MPEG Standards (© Salembier)
- MPEG-1: Storage of moving picture and audio on storage media (CD-ROM), 11/1992
- MPEG-2: Digital television, 11/1994
- MPEG-4: Coding of natural and synthetic media objects for multimedia applications, v1: 09/1998, v2: 11/1999
- MPEG-7: Multimedia content description for AV material, 08/2001
- MPEG-21: Digital audiovisual framework: integration of multimedia technologies (identification, copyright, protection, etc.), 11/2001


Objective of MPEG-7 (© Salembier)
- Standardize content-based description for various types of audiovisual information
  - Enable fast and efficient content searching, filtering and identification
  - Describe several aspects of the content (low-level features, structure, semantic, models, collections, creation, etc.)
  - Address a large range of applications (user preferences)
- Types of audiovisual information:
  - Audio, speech
  - Moving video, still pictures, graphics, 3D models
  - Information on how objects are combined in scenes
- Descriptions independent of the data support
- Existing solutions for textual content or description


Type of applications (© Salembier)
- Pull Applications: “Search and Browsing”
  - Example: Internet search engines and databases
  - Advantage: Queries based on standardized descriptions
- Push Applications: “Filtering”
  - Example: Broadcast video, interactive television
  - Advantage: Intelligent agents filter on the basis of standardized descriptions
- Universal Multimedia Access:
  - Adapt delivery to network and terminal characteristics (QoS)
- Specialized Professional and Control Applications


Example of application areas (© Salembier)
- Storage and retrieval of audiovisual databases (image, film, radio archives)
- Broadcast media selection (radio, TV programs)
- Surveillance (traffic control, surface transportation, production chains)
- E-commerce and tele-shopping (searching for clothes / patterns)
- Remote sensing (cartography, ecology, natural resources management)
- Entertainment (searching for a game, for a karaoke)
- Cultural services (museums, art galleries)
- Journalism (searching for events, persons)
- Personalized news service on Internet (push media filtering)
- Intelligent multimedia presentations
- Educational applications
- Bio-medical applications


Example of queries (© Salembier)
- Text: Find AV material with the concepts described by the text
- Semantic: Find AV material corresponding to the specified semantic
- Image: Find an image with a similar characteristic (global or local)
- Music: Play a few notes and search for corresponding musical pieces
- Motion: Find video with specific object motion trajectories


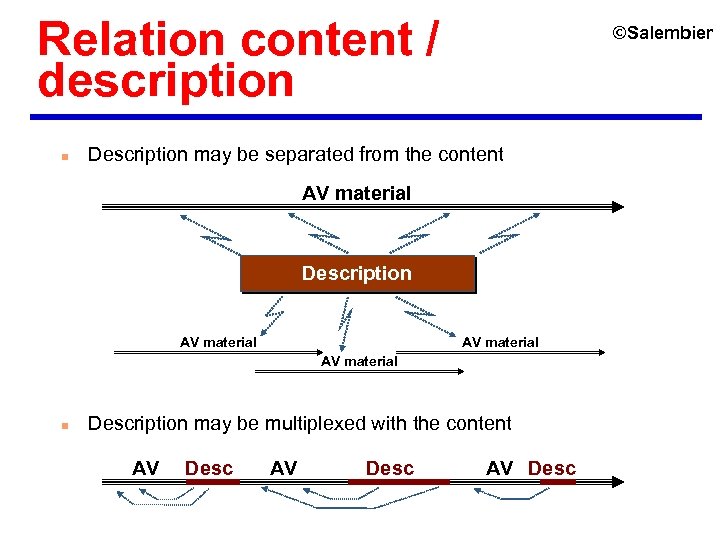

Relation content / description (© Salembier)
- Description may be separated from the content (diagram labels: AV material, Description, AV material)
- Description may be multiplexed with the content (diagram labels: AV, Desc)


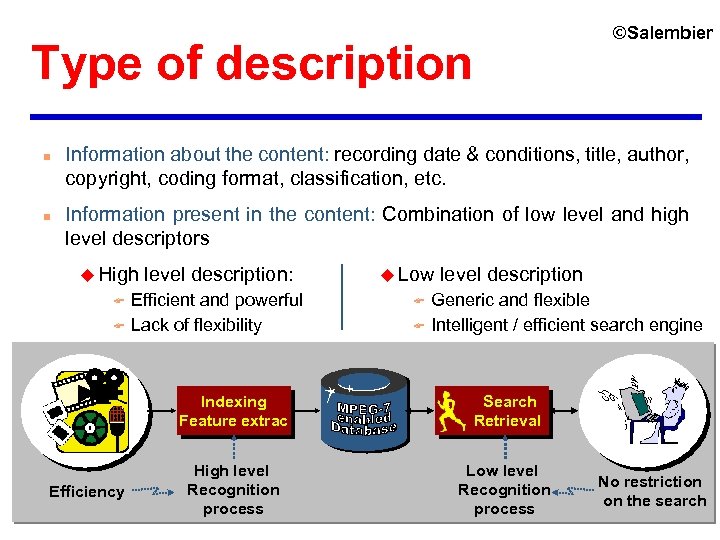

Type of description (© Salembier)
- Information about the content: recording date & conditions, title, author, copyright, coding format, classification, etc.
- Information present in the content: combination of low-level and high-level descriptors
  - High-level description:
    - Efficient and powerful
    - Lack of flexibility
  - Low-level description:
    - Generic and flexible
    - Intelligent / efficient search engine
- Diagram labels: Indexing, Feature extraction, Efficiency, Search, Retrieval; High level: Recognition process; Low level: Recognition process, No restriction on the search


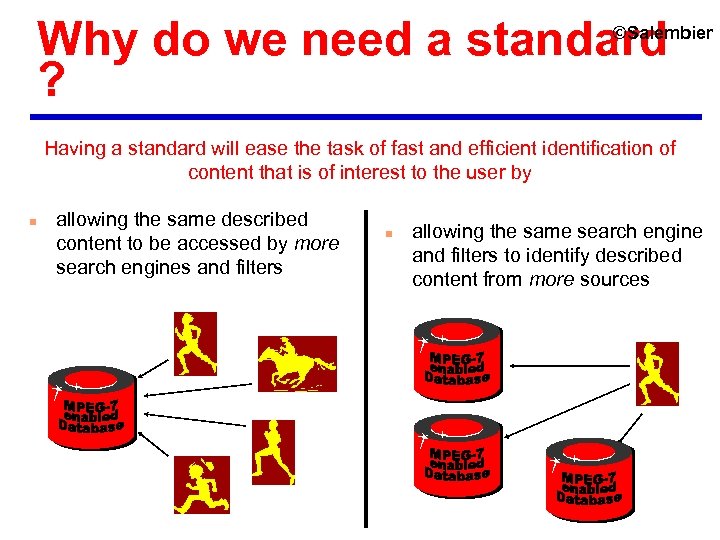

Why do we need a standard? (© Salembier)
Having a standard will ease the task of fast and efficient identification of content that is of interest to the user by
- allowing the same described content to be accessed by more search engines and filters
- allowing the same search engine and filters to identify described content from more sources


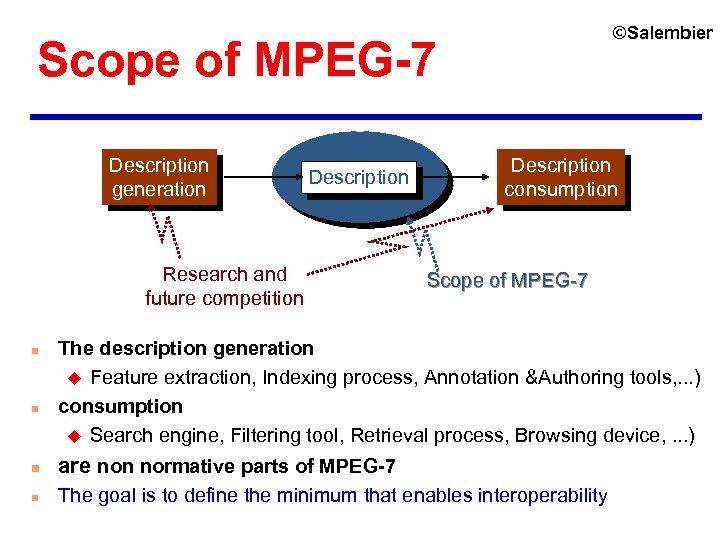

Scope of MPEG-7 (© Salembier)
- Diagram labels: Description generation, Description, Description consumption; Scope of MPEG-7; Research and future competition
- The description generation (feature extraction, indexing process, annotation & authoring tools, ...) and consumption (search engine, filtering tool, retrieval process, browsing device, ...) are non-normative parts of MPEG-7
- The goal is to define the minimum that enables interoperability


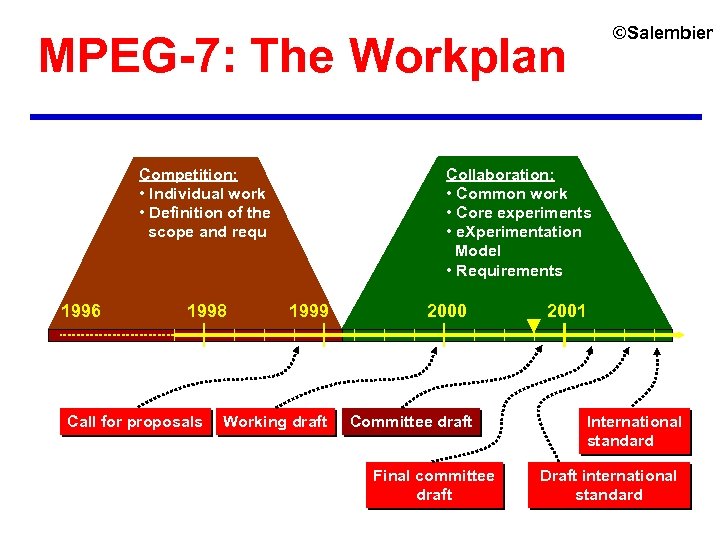

MPEG-7: The Workplan (© Salembier)
- Competition:
  - Individual work
  - Definition of the scope and requirements
- Collaboration:
  - Common work
  - Core experiments
  - eXperimentation Model
  - Requirements
- Timeline (1996 to 2001), milestones in order: Call for proposals (1998), Working draft (1999), Committee draft (2000), Final committee draft, Draft international standard, International standard (2001)


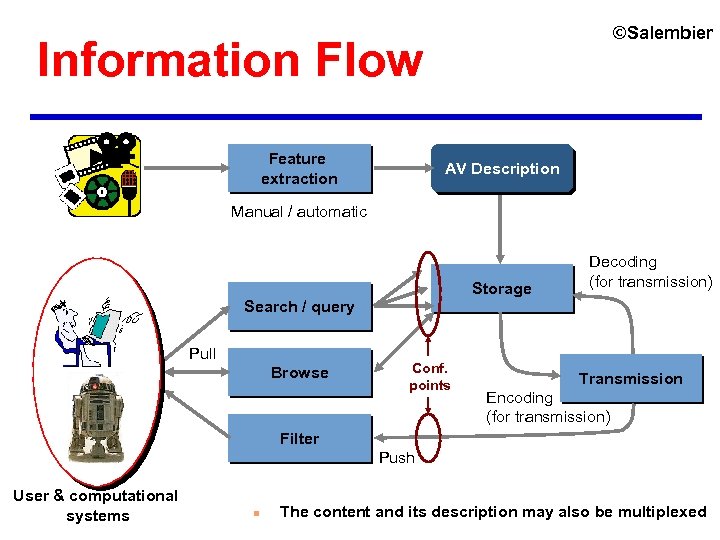

Information Flow (© Salembier)
- Diagram labels: Feature extraction (manual / automatic); AV Description; Storage; Encoding (for transmission); Transmission; Decoding (for transmission); Conf. points; Search / query and Browse (pull); Filter (push); User & computational systems
- The content and its description may also be multiplexed


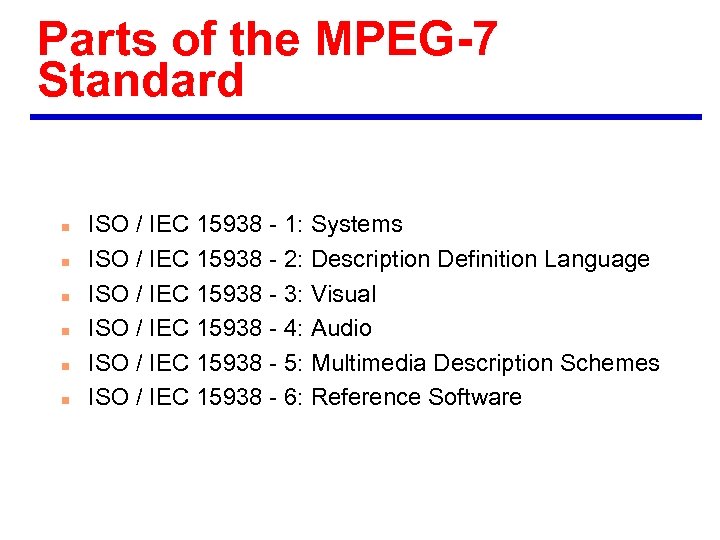

Parts of the MPEG-7 Standard
- ISO/IEC 15938-1: Systems
- ISO/IEC 15938-2: Description Definition Language
- ISO/IEC 15938-3: Visual
- ISO/IEC 15938-4: Audio
- ISO/IEC 15938-5: Multimedia Description Schemes
- ISO/IEC 15938-6: Reference Software


Visual Descriptors


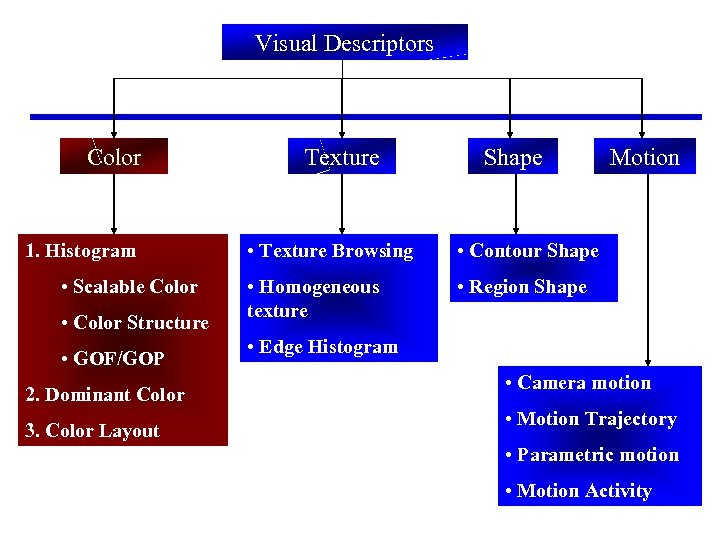

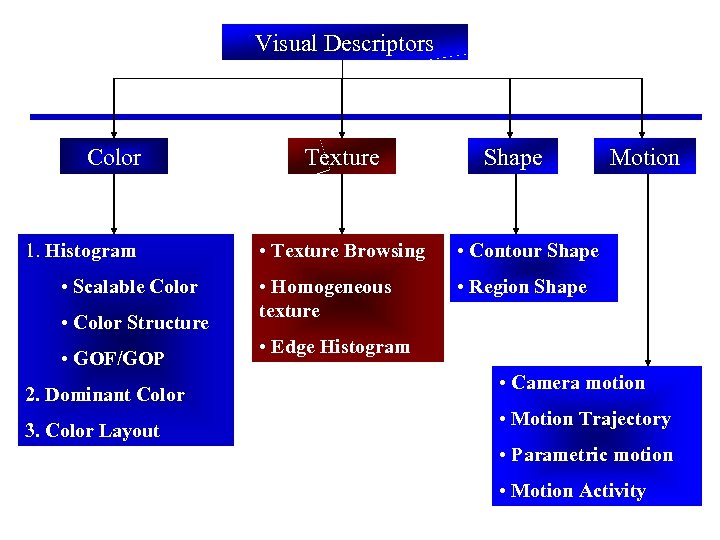

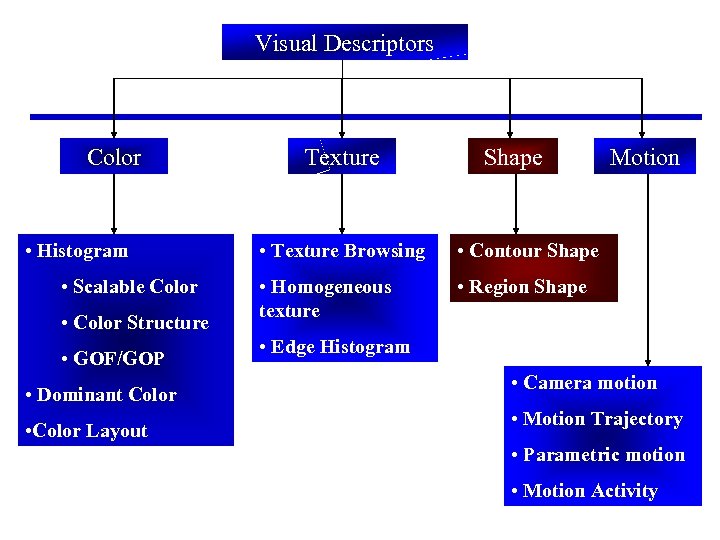

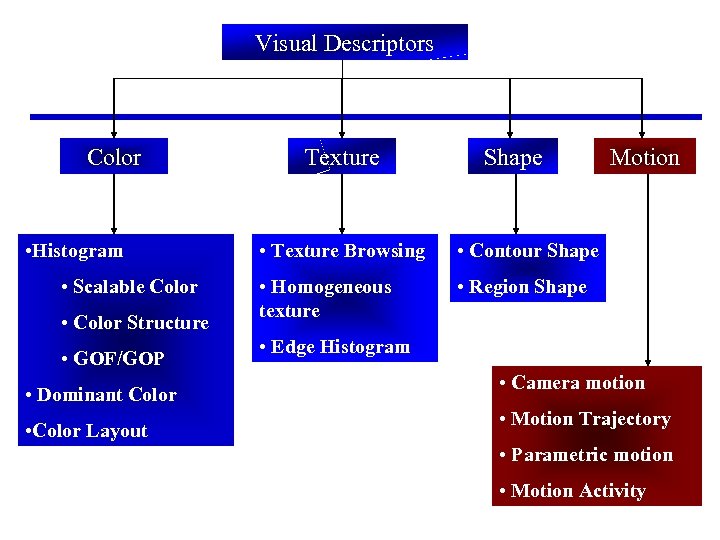

Visual Descriptors
- Color
  1. Histogram: Scalable Color, Color Structure, GOF/GOP
  2. Dominant Color
  3. Color Layout
- Texture: Texture Browsing, Homogeneous Texture, Edge Histogram
- Shape: Contour Shape, Region Shape
- Motion: Camera Motion, Motion Trajectory, Parametric Motion, Motion Activity


Color Datasets and Evaluation Criteria
- Color: about 5400 color images and 50 queries. See MPEG document M5060 from Melbourne, October 1999.
- Texture: various data sets, including Brodatz texture, aerial pictures, Corel photos.


Performance evaluation
- Let the number of ground truth images for a query q be NG(q).
- Compute NR(q), the number of found items in the first K(q) retrievals, where K(q) = min(4*NG(q), 2*GTM) and GTM is max{NG(q)} over all queries q of a data set.
- Compute MR(q) = NG(q) - NR(q), the number of missed items.
- Compute AVR(q) from the ranks Rank(k) of the found items, counting the rank of the first retrieved item as one.
- A rank of 1.25*K(q) is assigned to each of the ground truth images that are not in the first K(q) retrievals.
- Compute the normalized modified retrieval rank as follows (next slide). Note that NMRR(q) will always be in the range [0.0, 1.0].


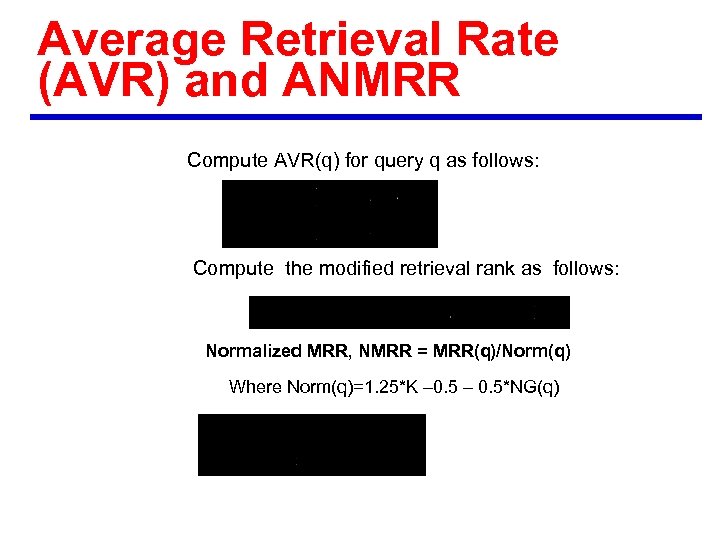

Average Retrieval Rate (AVR) and ANMRR
- Compute AVR(q) for query q as follows:
- Compute the modified retrieval rank MRR(q) as follows:
- Normalized MRR: NMRR(q) = MRR(q)/Norm(q), where Norm(q) = 1.25*K(q) - 0.5*NG(q)


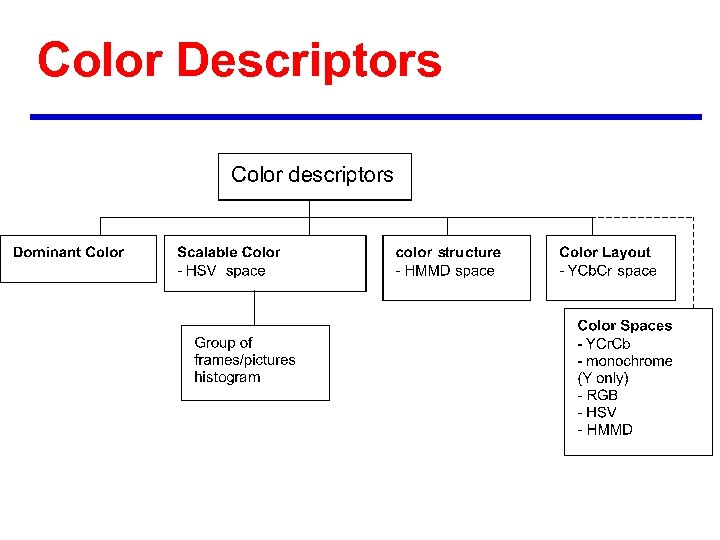
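The evaluation procedure of the last two slides can be sketched in Python. The slide text does not spell out AVR(q) and MRR(q), so the sketch assumes the commonly cited MPEG-7 definitions AVR(q) = (1/NG(q)) * sum of Rank(k) and MRR(q) = AVR(q) - 0.5 - NG(q)/2; Norm(q) is taken exactly as written on the slide. Function and parameter names are illustrative only.

```python
def nmrr(ranked_ids, ground_truth, gtm):
    """NMRR for one query.

    ranked_ids: retrieved item ids, best match first (rank 1).
    ground_truth: set of relevant item ids for the query, size NG(q).
    gtm: max NG(q) over all queries of the data set.
    """
    ng = len(ground_truth)
    k = min(4 * ng, 2 * gtm)
    # Rank of every ground-truth item found in the first K retrievals.
    found = {item: rank
             for rank, item in enumerate(ranked_ids[:k], start=1)
             if item in ground_truth}
    # Missed items receive the penalty rank 1.25 * K.
    ranks = [found.get(item, 1.25 * k) for item in ground_truth]
    avr = sum(ranks) / ng              # assumed definition: mean rank
    mrr = avr - 0.5 - ng / 2           # assumed definition
    norm = 1.25 * k - 0.5 * ng         # Norm(q) as given on the slide
    return mrr / norm


def anmrr(per_query_nmrr):
    """ANMRR: NMRR averaged over all queries."""
    return sum(per_query_nmrr) / len(per_query_nmrr)
```

A perfect retrieval (all ground truth items at the top of the list) gives NMRR = 0; larger values mean worse retrieval.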

Color Descriptors


Color spaces
- The Color Space Descriptor selects the color space to be used in the description; the Color Quantization Descriptor specifies the partitioning of the given color space into discrete bins. These two descriptors are meant to be used in the context of other descriptors, not standalone.
  - RGB
  - YCrCb (color layout)
  - HSV (scalable color)
  - HMMD (color structure)
  - Arbitrary 3 x 3 color transformation matrix


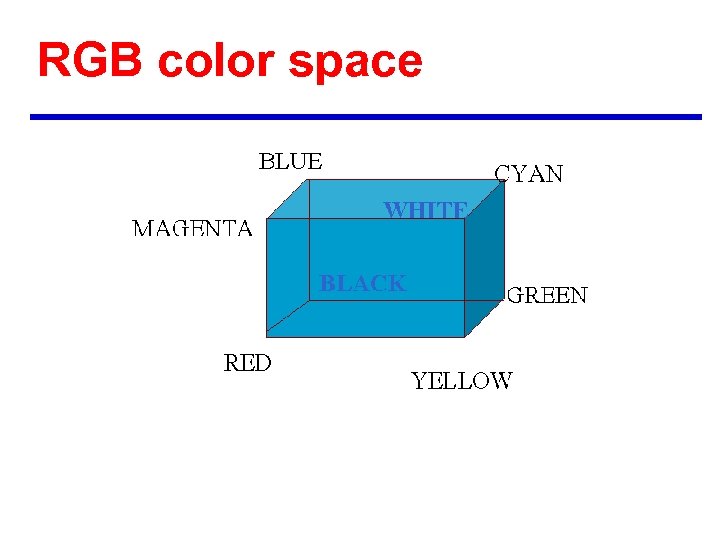

RGB color space


HSV color space


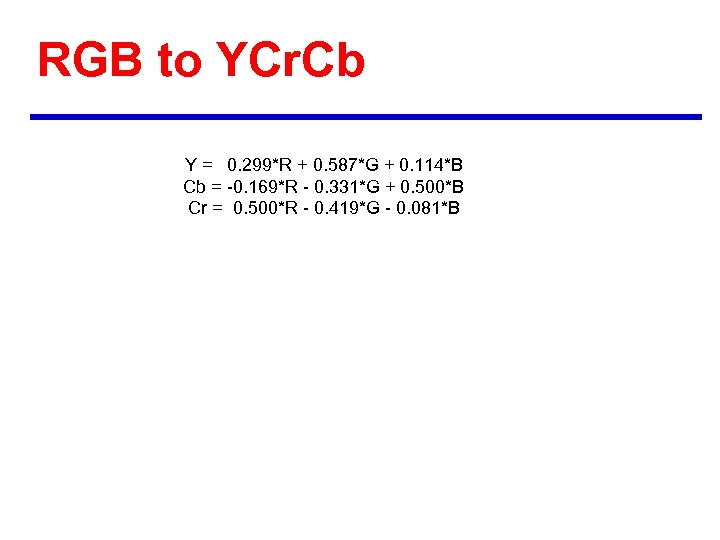

RGB to YCrCb
- Y = 0.299*R + 0.587*G + 0.114*B
- Cb = -0.169*R - 0.331*G + 0.500*B
- Cr = 0.500*R - 0.419*G - 0.081*B


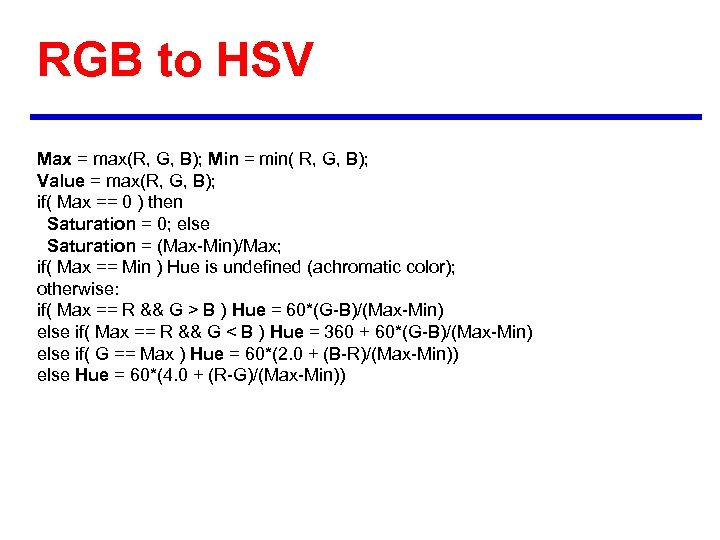
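As a minimal sketch, the conversion above is a single 3 x 3 matrix applied to each pixel. The function name is illustrative, and no offset is added to the chroma components because the slide does not specify one.

```python
def rgb_to_ycbcr(r, g, b):
    """Apply the slide's 3 x 3 matrix to one RGB triple."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = -0.169 * r - 0.331 * g + 0.500 * b
    cr = 0.500 * r - 0.419 * g - 0.081 * b
    return y, cb, cr
```

For any gray pixel (R = G = B) the two chroma components vanish, because the Cb and Cr rows of the matrix each sum to zero.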

RGB to HSV

    Max = max(R, G, B); Min = min(R, G, B);
    Value = max(R, G, B);
    if (Max == 0) then Saturation = 0;
    else Saturation = (Max - Min) / Max;
    if (Max == Min) Hue is undefined (achromatic color);
    otherwise:
        if (Max == R && G >= B) Hue = 60*(G-B)/(Max-Min)
        else if (Max == R && G < B) Hue = 360 + 60*(G-B)/(Max-Min)
        else if (G == Max) Hue = 60*(2.0 + (B-R)/(Max-Min))
        else Hue = 60*(4.0 + (R-G)/(Max-Min))
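The pseudocode above translates almost line for line into Python. This sketch returns `None` for the undefined hue of achromatic colors and handles the G == B case in the first branch, so that pure red maps to hue 0; both choices are assumptions of the sketch, as is the function name.

```python
def rgb_to_hsv(r, g, b):
    """Return (hue in degrees or None, saturation in [0, 1], value)."""
    mx, mn = max(r, g, b), min(r, g, b)
    value = mx
    saturation = 0.0 if mx == 0 else (mx - mn) / mx
    if mx == mn:
        return None, saturation, value    # achromatic: hue undefined
    span = mx - mn
    if mx == r and g >= b:
        hue = 60.0 * (g - b) / span
    elif mx == r:                          # here g < b
        hue = 360.0 + 60.0 * (g - b) / span
    elif mx == g:
        hue = 60.0 * (2.0 + (b - r) / span)
    else:
        hue = 60.0 * (4.0 + (r - g) / span)
    return hue, saturation, value
```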


RGB to HMMD
- Diff = Max - Min
- Sum = (Max + Min)/2
- Hue as defined for HSV.


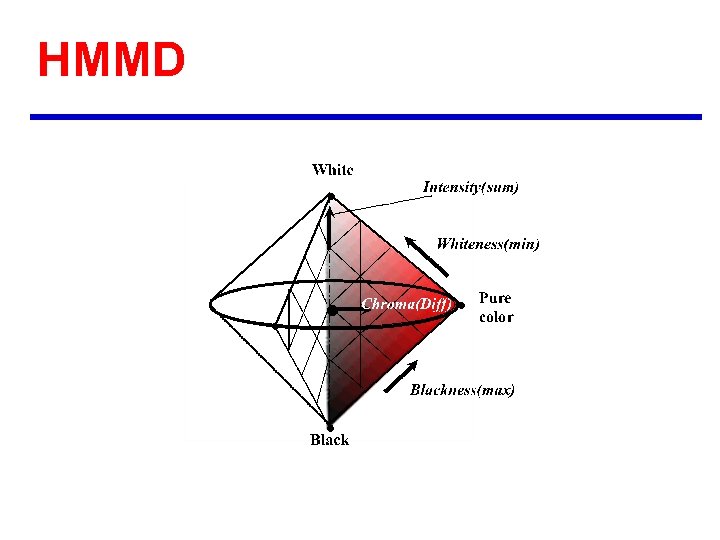

HMMD


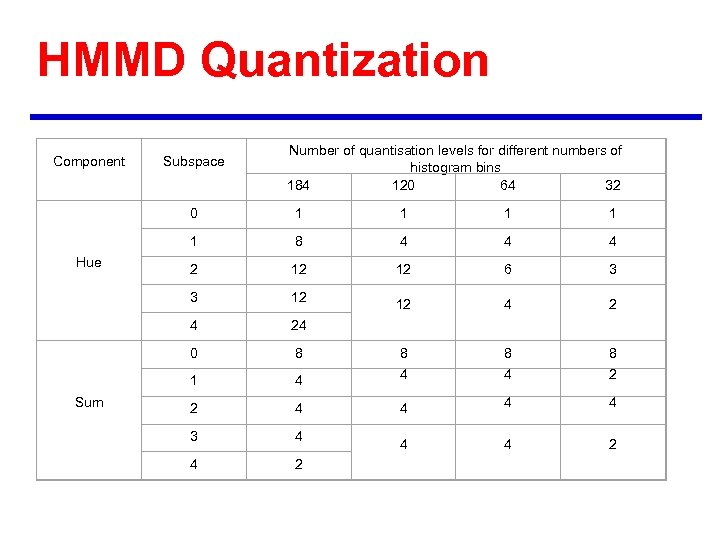

HMMD Quantization
- Table columns: Component; Subspace; Number of quantisation levels for different numbers of histogram bins (184, 120, 64, 32)
- Cell values as extracted: 0 1 1 8 4 4 4 2 12 12 6 3 3 12 12 4 24 0 8 8 1 Sum 1 1 Hue 1 4 4 4 2 2 4 4 3 4 4 4 2


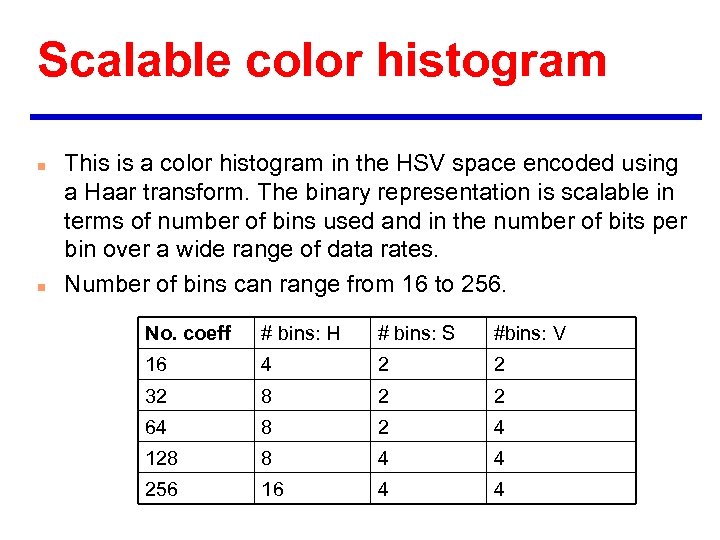

Scalable color histogram
- This is a color histogram in the HSV space encoded using a Haar transform. The binary representation is scalable in terms of the number of bins used and the number of bits per bin, over a wide range of data rates.
- The number of bins can range from 16 to 256.

| No. coeff | # bins: H | # bins: S | # bins: V |
|---|---|---|---|
| 16 | 4 | 2 | 2 |
| 32 | 8 | 2 | 2 |
| 64 | 8 | 2 | 4 |
| 128 | 8 | 4 | 4 |
| 256 | 16 | 4 | 4 |


Scalable color descriptor


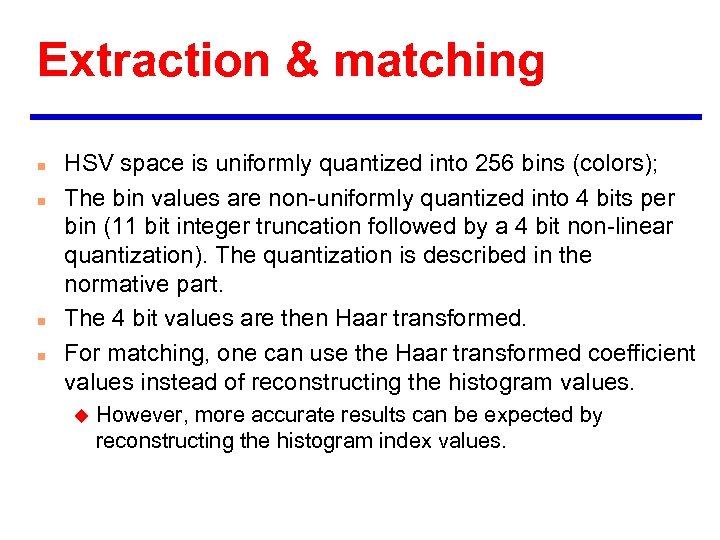

Extraction & matching
- HSV space is uniformly quantized into 256 bins (colors).
- The bin values are non-uniformly quantized into 4 bits per bin (11-bit integer truncation followed by a 4-bit non-linear quantization). The quantization is described in the normative part.
- The 4-bit values are then Haar transformed.
- For matching, one can use the Haar-transformed coefficient values instead of reconstructing the histogram values.
  - However, more accurate results can be expected by reconstructing the histogram index values.


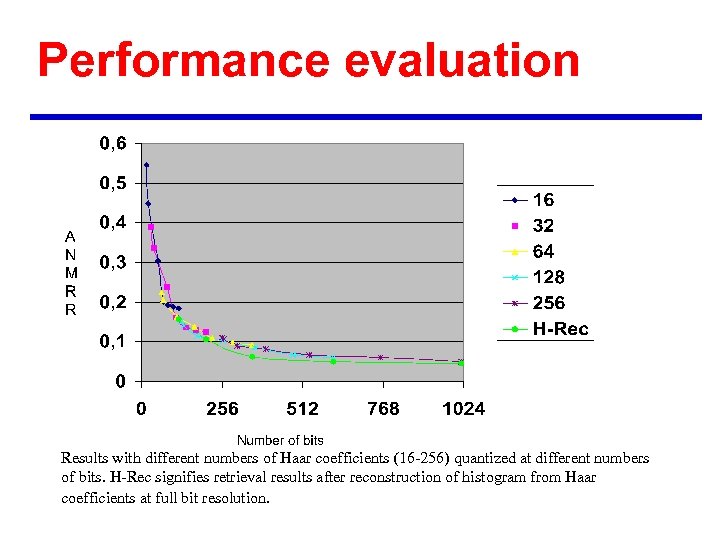
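To illustrate why a Haar-coded histogram is scalable, here is a minimal sketch of a one-dimensional Haar decomposition built from pairwise sums and differences. It is not the normative transform: the coefficient ordering, the HSV bin layout and the non-linear quantization of the standard are not reproduced, and all function names are hypothetical.

```python
def haar_forward(values):
    """Haar decomposition by pairwise sums and differences.

    len(values) must be a power of two. Returns the total sum followed
    by the difference coefficients, coarsest level first.
    """
    sums = list(values)
    details = []
    while len(sums) > 1:
        pairs = list(zip(sums[0::2], sums[1::2]))
        details = [a - b for a, b in pairs] + details
        sums = [a + b for a, b in pairs]
    return sums + details


def haar_inverse(coeffs):
    """Invert haar_forward for integer inputs.

    Passing only the first 2**m coefficients yields the histogram
    merged down to 2**m bins, which is the scalability property.
    """
    sums = coeffs[:1]
    pos = 1
    while pos < len(coeffs):
        diffs = coeffs[pos:pos + len(sums)]
        pos += len(sums)
        finer = []
        for s, d in zip(sums, diffs):
            finer += [(s + d) // 2, (s - d) // 2]
        sums = finer
    return sums


def l1_distance(a, b):
    """L1 distance, usable on coefficients or on reconstructed bins."""
    return sum(abs(x - y) for x, y in zip(a, b))
```

For example, `haar_forward([4, 2, 5, 5])` gives `[16, -4, 2, 0]`, and `haar_inverse([16, -4])` gives the two-bin histogram `[6, 10]`.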

Performance evaluation
Results with different numbers of Haar coefficients (16 to 256) quantized at different numbers of bits. H-Rec signifies retrieval results after reconstruction of the histogram from Haar coefficients at full bit resolution.


GoP/GoF descriptor
- The Group of Frames/Group of Pictures Descriptor (GoP) extends the SCD application to a collection of images, video segments, or moving regions.
- In the GoP descriptor, three different ways of computing the joint color histogram values for the whole series from the individual histograms of items within the collection are identified: averaging, median filtering, and histogram intersection.
  - This joint color histogram is then processed as in the SCD using the Haar transform and encoded.
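The three aggregation modes can be sketched as follows. The sketch assumes that all histograms share the same bin layout and that "histogram intersection" means the per-bin minimum; the function name and the `mode` strings are hypothetical.

```python
from statistics import median


def joint_histogram(histograms, mode="average"):
    """Combine per-frame histograms into one joint histogram."""
    # bins[j] holds the values of bin j across all items.
    bins = list(zip(*histograms))
    if mode == "average":
        return [sum(b) / len(b) for b in bins]
    if mode == "median":
        return [median(b) for b in bins]
    if mode == "intersection":
        return [min(b) for b in bins]   # assumed: per-bin minimum
    raise ValueError(f"unknown mode: {mode}")
```

Averaging reflects the overall color content, the median is less sensitive to a few outlier frames, and the intersection keeps only the color mass common to every item.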


Color structure
- Similar to a histogram, but an 8 x 8 structuring element is used to compute the bin values.
- The HMMD color space should be used with this descriptor. The quantization of the HMMD space to 32, 64, 128 and 180 bins is specified.


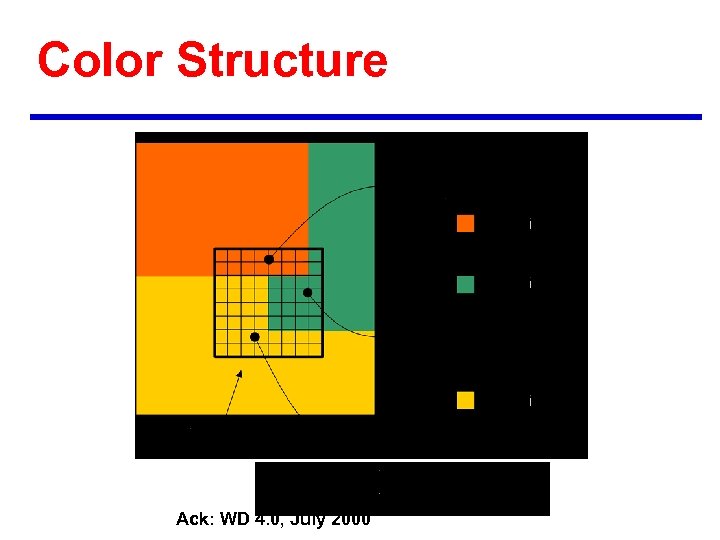
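A minimal sketch of the structuring-element idea, assuming the image has already been converted to a 2-D array of quantized color indices: a bin is incremented once for every window position in which its color occurs at least once, rather than once per pixel. Normalization, bin-value quantization and the subsampling used for larger images are omitted, and the function name is hypothetical.

```python
def color_structure_histogram(indexed, num_bins, size=8):
    """Count, per color, the window positions that contain that color.

    indexed: 2-D list of quantized color indices in range(num_bins).
    size: side length of the square structuring element.
    """
    rows, cols = len(indexed), len(indexed[0])
    hist = [0] * num_bins
    for top in range(rows - size + 1):
        for left in range(cols - size + 1):
            present = {indexed[y][x]
                       for y in range(top, top + size)
                       for x in range(left, left + size)}
            for color in present:
                hist[color] += 1
    return hist
```

On a 9 x 9 image of color 0 with a single pixel of color 1 in the center, an ordinary histogram would count 80 and 1, while this sketch returns 4 and 4: the isolated pixel is seen by all four window positions.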

Color Structure
Ack: WD 4.0, July 2000


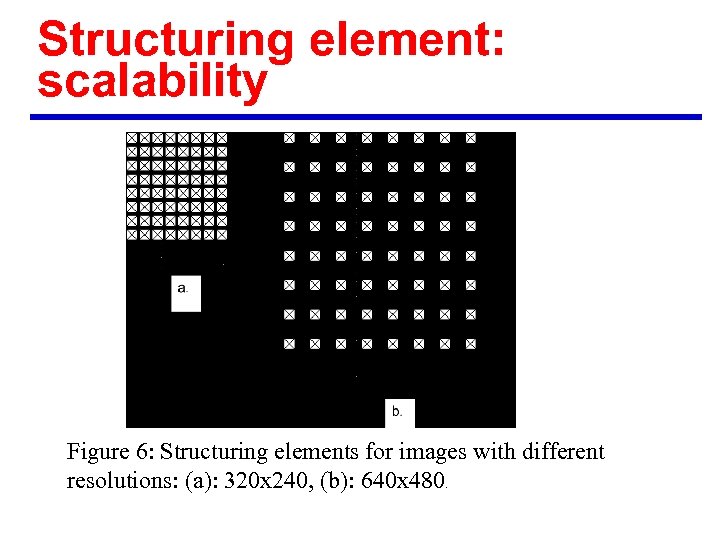

Structuring element: scalability
Figure 6: Structuring elements for images with different resolutions: (a) 320 x 240, (b) 640 x 480.


Interoperability
- The Color-Structure Descriptor can be used in a limited range of Color Quantization settings, such that the total number of bins lies between 32 and 256.
- Re-quantization of a Color Structure Descriptor from fine to coarse color space quantization can be performed in HMMD color space using the re-quantization method defined in the WD.
- The Color-Structure Descriptor is not interoperable with other Color Descriptors.


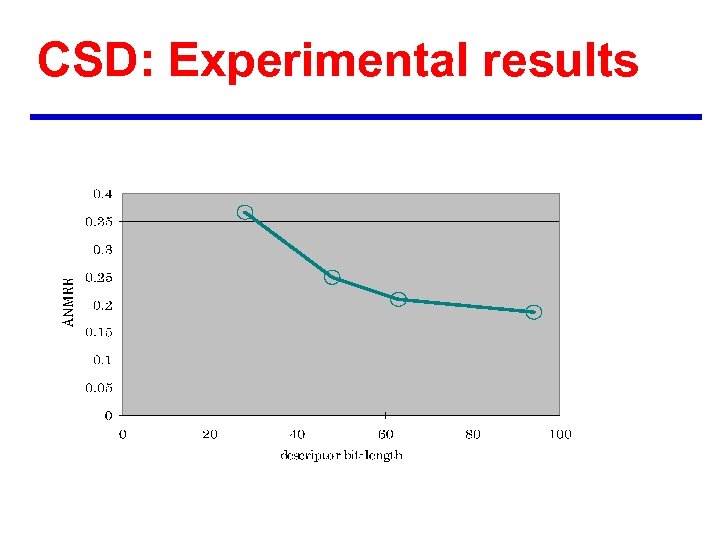

CSD: Experimental results


Dominant color
- Best suited for local (object or region) features
  - a small number of colors is enough to characterize the color information
- Before feature extraction, images are segmented into regions
- Similar to a color histogram EXCEPT:
  - color bins are not fixed; they depend on the quantization in each region
  - the number of bins is not fixed; on average only 3 bins per region
- To extract the feature:
  - quantize to a small number of representative colors in each region
  - calculate the percentages of the quantized colors in the region


Descriptor Definition
- A given image is described in terms of a set of region labels and the associated color descriptors
  - Each pixel has a unique region label.
  - Each region is characterized by a variable-bin color histogram.
- Color feature descriptor for a given region in the image: $F = \{(c_i, p_i, v_i),\ s\},\ i = 1, \dots, N$, where $c_i$ is the i-th dominant color, $p_i$ is its percentage value, and $v_i$ is its color variance. The color variance is an optional field.
  - N: total number of quantized colors in the region.
  - The spatial coherency s is a single number that represents the overall spatial homogeneity of the dominant colors in the image.


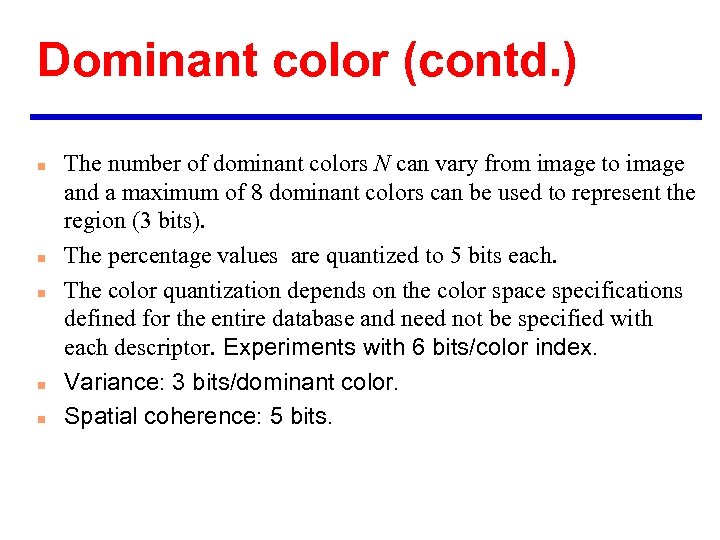

Dominant color (contd.)
- The number of dominant colors N can vary from image to image; a maximum of 8 dominant colors can be used to represent the region (3 bits).
- The percentage values are quantized to 5 bits each.
- The color quantization depends on the color space specifications defined for the entire database and need not be specified with each descriptor. Experiments with 6 bits/color index.
- Variance: 3 bits/dominant color.
- Spatial coherence: 5 bits.


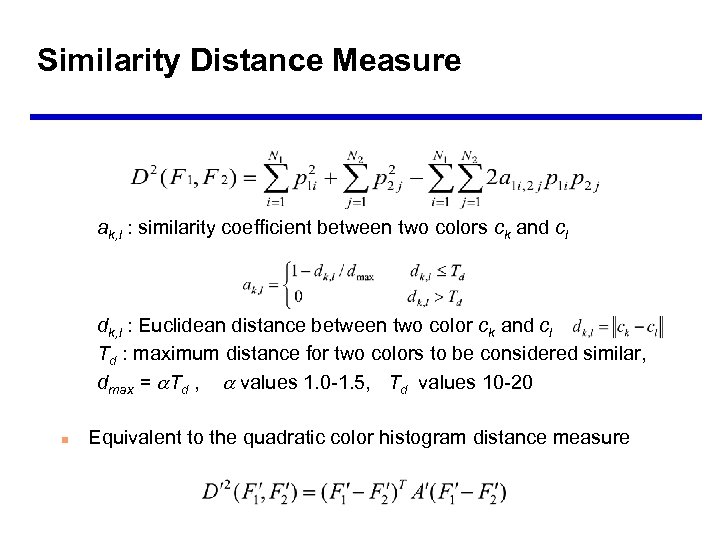

Similarity Distance Measure
- $a_{k,l}$: similarity coefficient between two colors $c_k$ and $c_l$
- $d_{k,l}$: Euclidean distance between two colors $c_k$ and $c_l$
- $T_d$: maximum distance for two colors to be considered similar; $d_{max} = \alpha T_d$, with $\alpha$ values 1.0 to 1.5 and $T_d$ values 10 to 20
- Equivalent to the quadratic color histogram distance measure


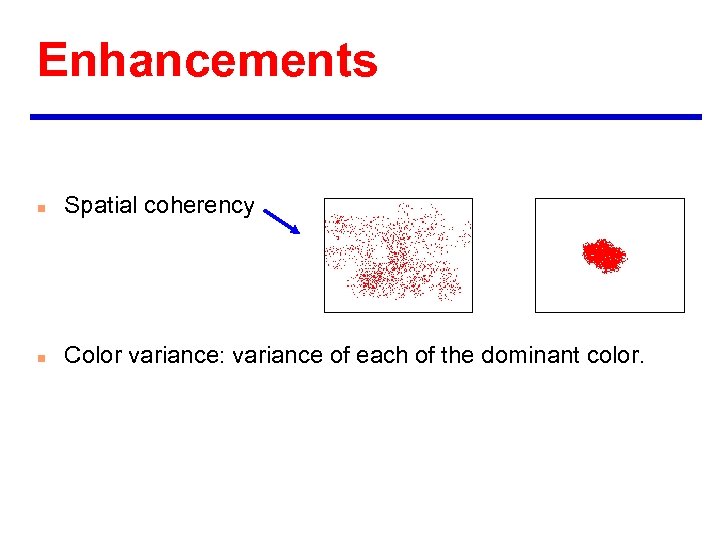
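The distance formula itself is not part of the slide text. The sketch below assumes the quadratic form usually given for this descriptor, D² = Σ p_i² + Σ q_j² − Σ Σ 2·a_ij·p_i·q_j, with a = 1 − d/d_max when d ≤ Td and a = 0 otherwise, and d_max = α·Td. The default values of `td` and `alpha` are simply picked from the ranges quoted above; the function name and the descriptor layout are hypothetical, and variance and spatial coherency are ignored.

```python
import math


def dominant_color_distance_sq(f1, f2, td=15.0, alpha=1.2):
    """Squared distance between two dominant color descriptors.

    f1, f2: lists of (color, percentage) pairs, where color is a
    3-tuple and the percentages of each descriptor sum to 1.
    """
    d_max = alpha * td
    total = sum(p * p for _, p in f1) + sum(q * q for _, q in f2)
    for c1, p in f1:
        for c2, q in f2:
            d = math.dist(c1, c2)                  # Euclidean distance
            a = 1.0 - d / d_max if d <= td else 0.0
            total -= 2.0 * a * p * q
    return total
```

Only color pairs closer than Td contribute to the cross term, so the cost grows with the handful of dominant colors per region rather than with a fixed histogram size.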

Enhancements
- Spatial coherency
- Color variance: variance of each dominant color.


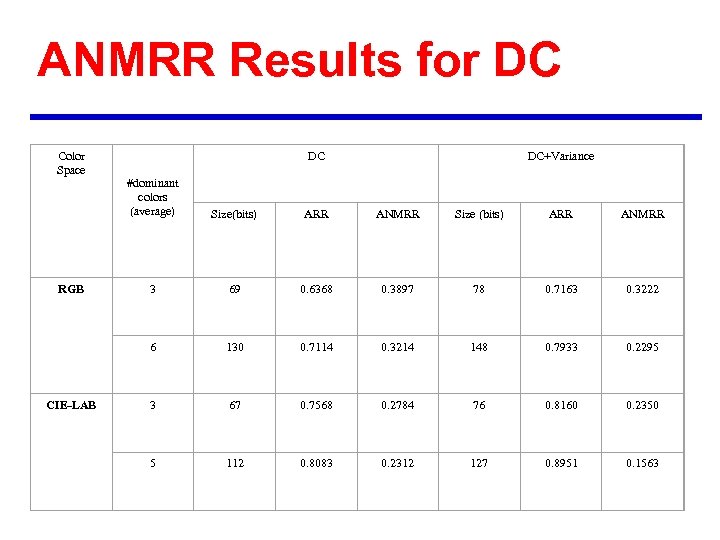

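ANMRR Results for DC

| Color space | # dominant colors (average) | DC: size (bits) | DC: ARR | DC: ANMRR | DC+Variance: size (bits) | DC+Variance: ARR | DC+Variance: ANMRR |
|---|---|---|---|---|---|---|---|
| CIE-LAB | 3 | 69 | 0.6368 | 0.3897 | 78 | 0.7163 | 0.3222 |
| CIE-LAB | 6 | 130 | 0.7114 | 0.3214 | 148 | 0.7933 | 0.2295 |
| RGB | 3 | 67 | 0.7568 | 0.2784 | 76 | 0.8160 | 0.2350 |
| RGB | 5 | 112 | 0.8083 | 0.2312 | 127 | 0.8951 | 0.1563 |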


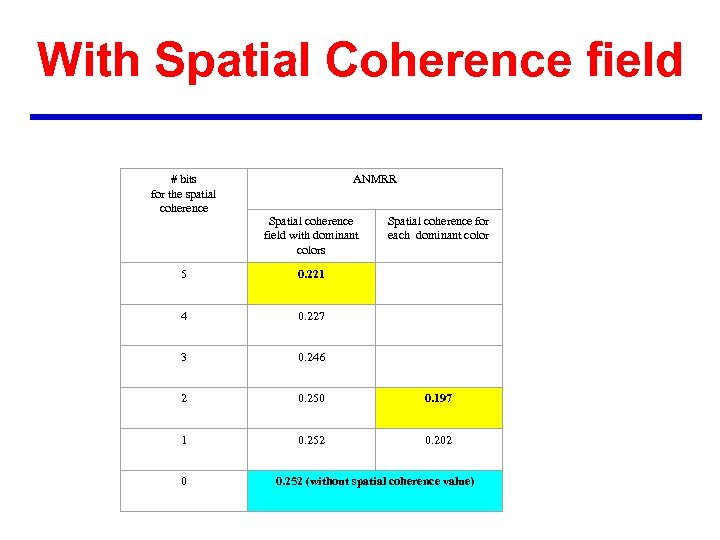

With Spatial Coherence field

| # bits for the spatial coherence | ANMRR: spatial coherence field with dominant colors | ANMRR: spatial coherence for each dominant color |
|---|---|---|
| 5 | 0.221 | |
| 4 | 0.227 | |
| 3 | 0.246 | |
| 2 | 0.250 | 0.197 |
| 1 | 0.252 | 0.202 |
| 0 | 0.252 (without spatial coherence value) | |


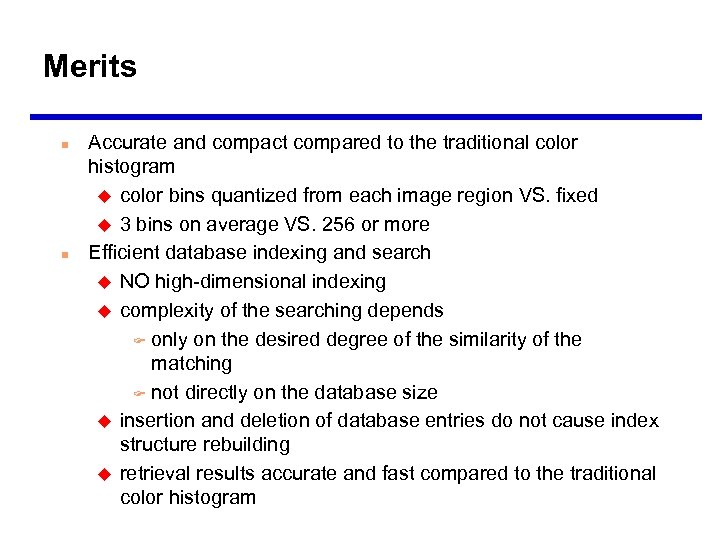

Merits
- Accurate and compact compared to the traditional color histogram
  - color bins quantized from each image region vs. fixed
  - 3 bins on average vs. 256 or more
- Efficient database indexing and search
  - NO high-dimensional indexing
  - complexity of the search depends
    - only on the desired degree of similarity of the matching
    - not directly on the database size
  - insertion and deletion of database entries do not cause index structure rebuilding
  - retrieval results are accurate and fast compared to the traditional color histogram


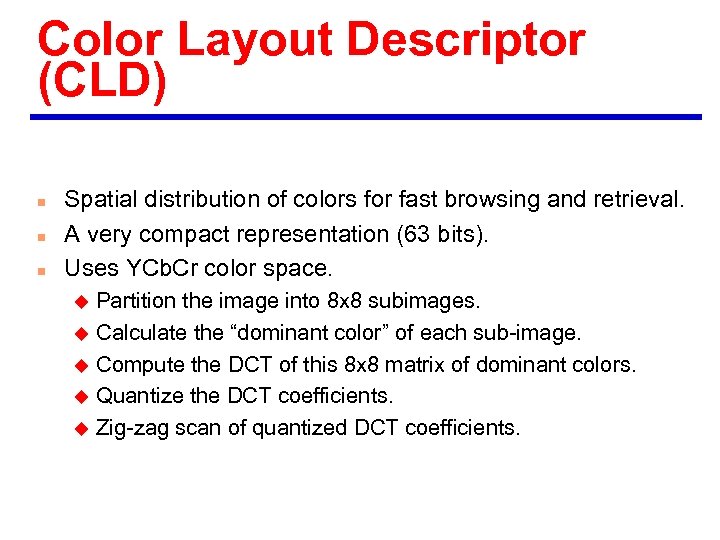

Color Layout Descriptor (CLD)
- Spatial distribution of colors for fast browsing and retrieval.
- A very compact representation (63 bits).
- Uses the YCbCr color space.
  - Partition the image into an 8 x 8 grid of sub-images.
  - Calculate the “dominant color” of each sub-image.
  - Compute the DCT of this 8 x 8 matrix of dominant colors.
  - Quantize the DCT coefficients.
  - Zig-zag scan the quantized DCT coefficients.


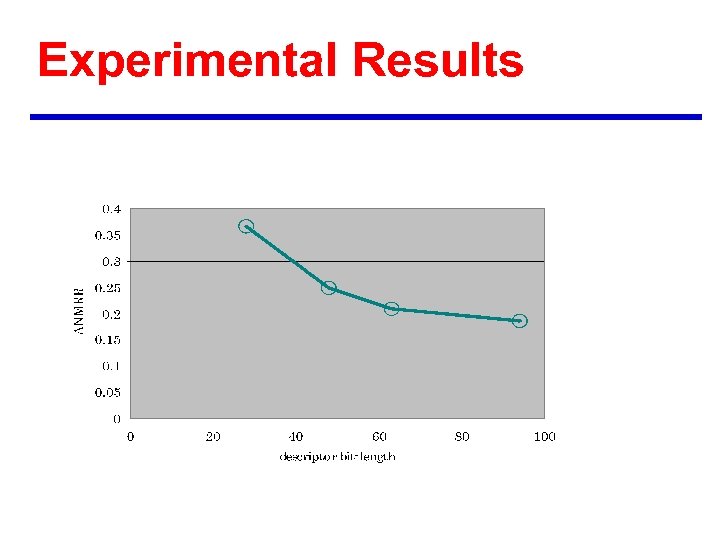

Experimental Results

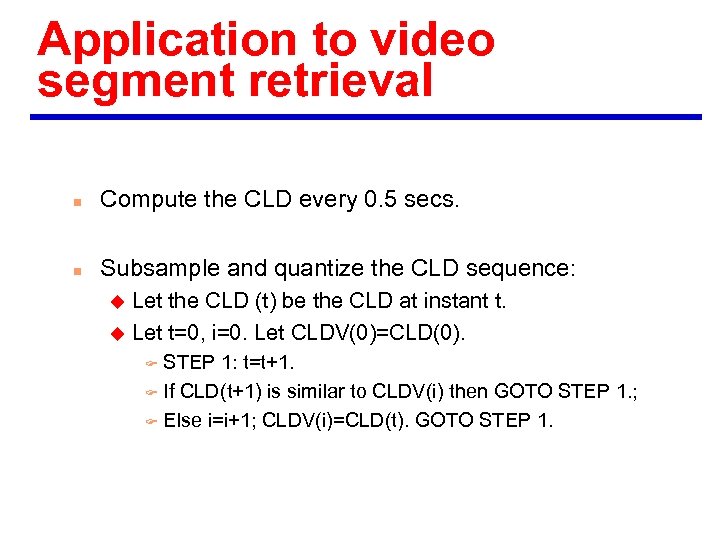

Application to video segment retrieval
• Compute the CLD every 0.5 secs.
• Subsample and quantize the CLD sequence:
  • Let CLD(t) be the CLD at instant t.
  • Let t=0, i=0. Let CLDV(0)=CLD(0).
  • STEP 1: t=t+1.
    • If CLD(t) is similar to CLDV(i) then GOTO STEP 1.
    • Else i=i+1; CLDV(i)=CLD(t). GOTO STEP 1.
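
The subsampling loop above might be written as below. The similarity test is left abstract on the slide, so `distance` and `threshold` are hypothetical parameters, and the Euclidean distance `l2` is only one possible choice.

```python
def subsample_cld(cld_sequence, distance, threshold):
    """Keep a CLD only when it differs enough from the last CLD that was kept."""
    if not cld_sequence:
        return []
    kept = [cld_sequence[0]]              # CLDV(0) = CLD(0)
    for cld in cld_sequence[1:]:          # t = t + 1
        if distance(cld, kept[-1]) > threshold:
            kept.append(cld)              # i = i + 1; CLDV(i) = CLD(t)
    return kept

def l2(a, b):
    """Euclidean distance between two descriptors of equal length."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

# Usage: five sampled descriptors collapse to three representative ones.
sequence = [[0, 0], [0, 1], [5, 5], [5, 6], [0, 0]]
print(subsample_cld(sequence, l2, threshold=2.0))
```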

Visual Descriptors
• Color: 1. Histogram (Scalable Color, Color Structure, GOF/GOP); 2. Dominant Color; 3. Color Layout
• Texture: Texture Browsing, Homogeneous texture, Edge Histogram
• Shape: Contour Shape, Region Shape
• Motion: Camera motion, Motion Trajectory, Parametric motion, Motion Activity

Texture descriptors
• Texture browsing
• Homogeneous texture
• Edge histogram

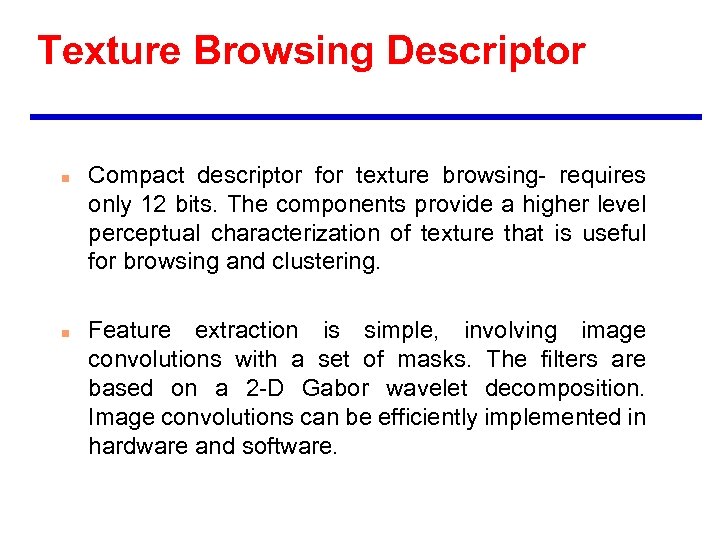

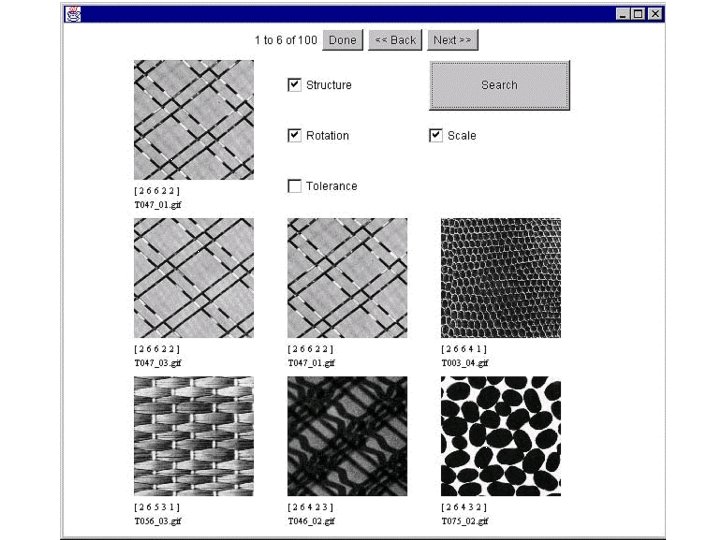

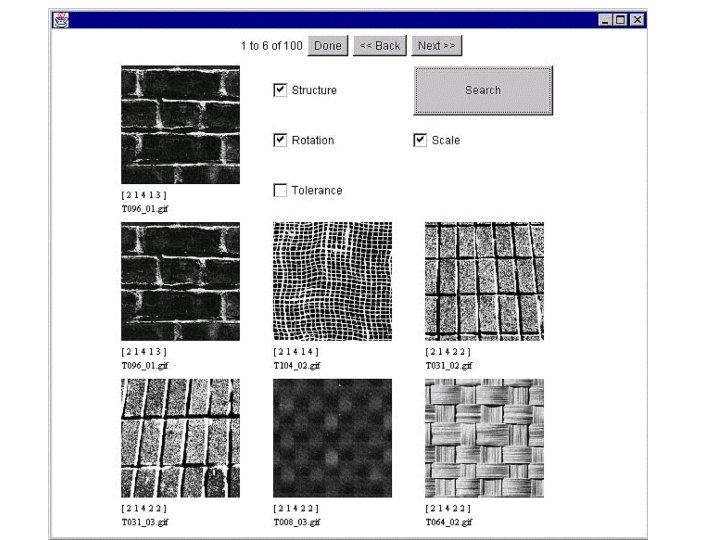

Texture Browsing Descriptor
• Compact descriptor for texture browsing: requires only 12 bits.
• The components provide a higher level perceptual characterization of texture that is useful for browsing and clustering.
• Feature extraction is simple, involving image convolutions with a set of masks.
• The filters are based on a 2-D Gabor wavelet decomposition.
• Image convolutions can be efficiently implemented in hardware and software.

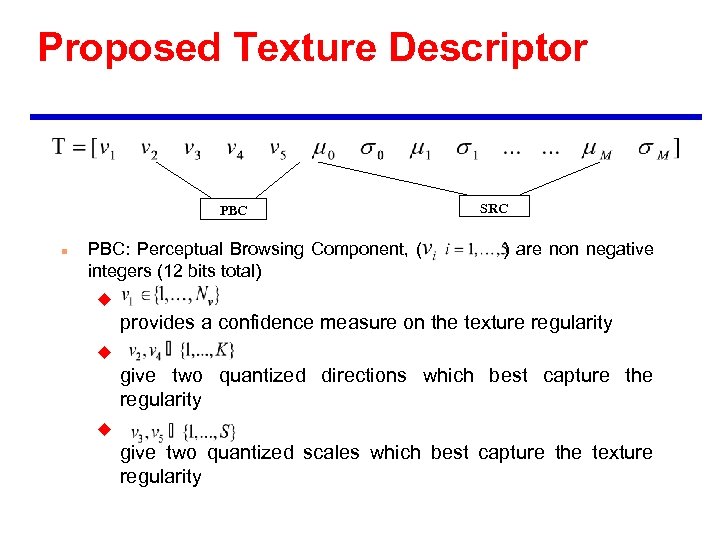

Proposed Texture Descriptor (PBC, SRC)
• PBC: Perceptual Browsing Component. Its components are non-negative integers (12 bits total). It
  • provides a confidence measure on the texture regularity
  • gives two quantized directions which best capture the regularity
  • gives two quantized scales which best capture the texture regularity
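
As an illustration of how five small integers fit in 12 bits, here is a sketch of one possible packing. The field widths (2 bits for regularity, 3 bits per direction, 2 bits per scale) and the value offsets are assumptions chosen to add up to the 12 bits stated above; the normative bit layout is given in the Committee Draft, not here.

```python
# (name, bit width, smallest legal value) -- a hypothetical layout
FIELDS = (
    ("regularity", 2, 1),
    ("direction_1", 3, 0),
    ("direction_2", 3, 0),
    ("scale_1", 2, 1),
    ("scale_2", 2, 1),
)

def pack_pbc(pbc):
    """Pack [regularity, direction_1, direction_2, scale_1, scale_2] into 12 bits."""
    if len(pbc) != len(FIELDS):
        raise ValueError("a PBC vector has five components")
    word = 0
    for value, (name, bits, offset) in zip(pbc, FIELDS):
        code = value - offset
        if not 0 <= code < (1 << bits):
            raise ValueError(f"{name}={value} does not fit in {bits} bits")
        word = (word << bits) | code
    return word

def unpack_pbc(word):
    """Inverse of pack_pbc."""
    values = []
    for name, bits, offset in reversed(FIELDS):
        values.append((word & ((1 << bits) - 1)) + offset)
        word >>= bits
    return values[::-1]
```

Under these assumptions, `pack_pbc([2, 6, 2, 3, 3])` yields a 12-bit word that `unpack_pbc` maps back to the same vector.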

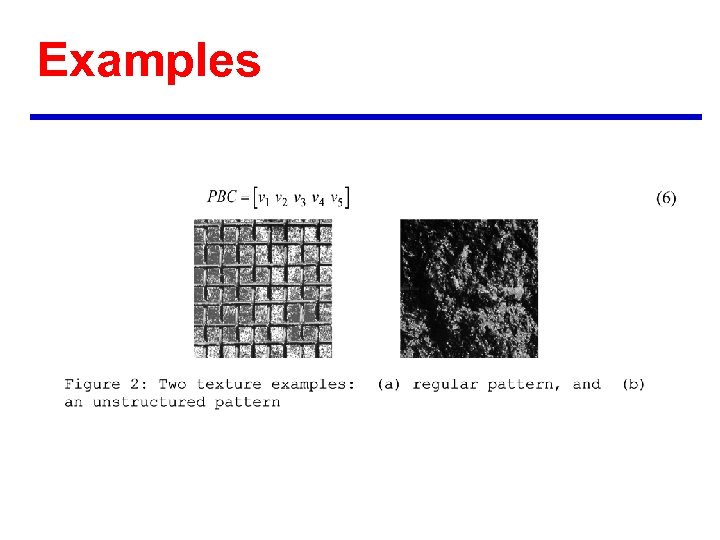

Examples

![Some sample textures and corresponding PBC vectors](https://present5.com/presentation/5571aa8adec2d626a6ddc74dcdc3466d/image-59.jpg)

Some sample textures and corresponding PBC vectors: [1 3 3 1 1] [1 3 3 1 3] [1 4 1 1 1] [1 6 3 1 1] [1 1 5 1 2] [2 6 2 3 3] [2 2 6 4 1] [2 6 4 1 3] [2 2 4 2 1] [3 1 4] [4 1 4 3 3] [4 1 4 4 4] [4 2 3 3 2] [4 1 4 3 3]

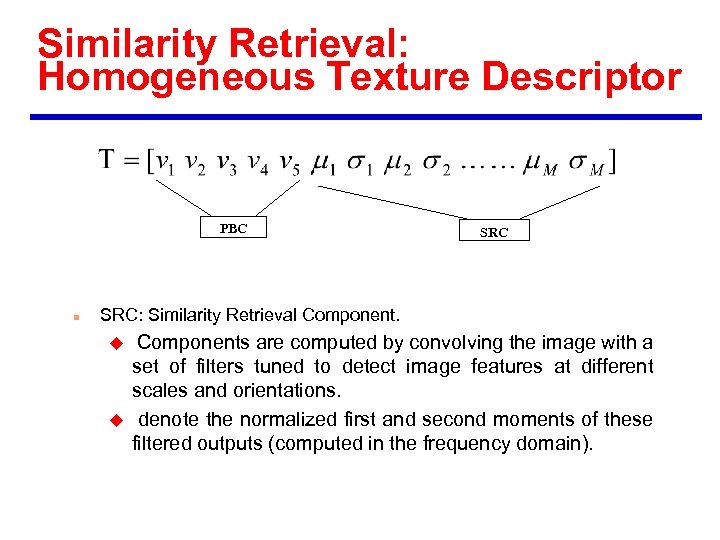

Similarity Retrieval: Homogeneous Texture Descriptor
• SRC: Similarity Retrieval Component.
  • Components are computed by convolving the image with a set of filters tuned to detect image features at different scales and orientations.
  • The components denote the normalized first and second moments of these filtered outputs (computed in the frequency domain).

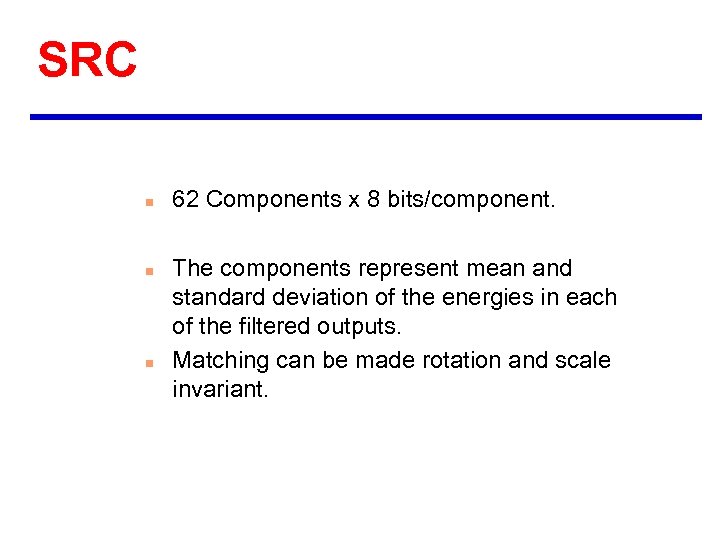

SRC
• 62 components x 8 bits/component.
• The components represent mean and standard deviation of the energies in each of the filtered outputs.
• Matching can be made rotation and scale invariant.

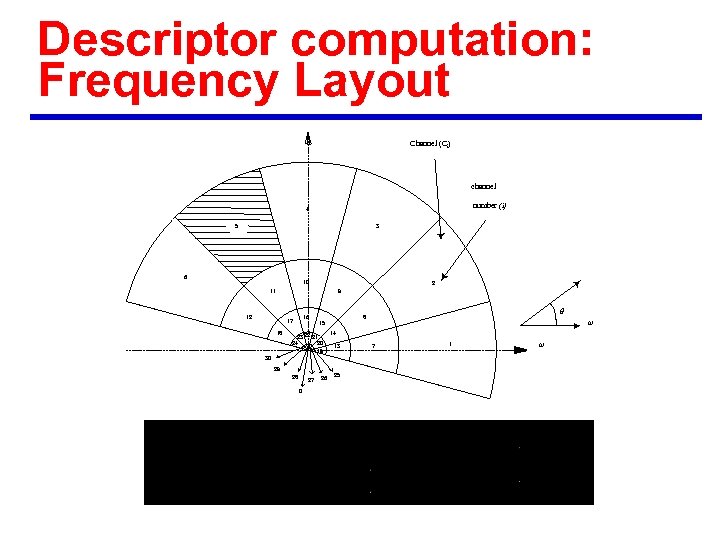

Descriptor computation: Frequency Layout
[Figure: partition of the frequency plane into channels Ci, labeled by channel number i (1 to 30); axes ω (radial frequency) and θ (angle).]
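
The channel numbering in the figure can be reproduced with a few lines of code. This sketch assumes 30 channels arranged as 5 octave-spaced radial bands by 6 angular sectors of 30 degrees, indexed as i = 6s + r + 1, with the highest centre frequency at 3/4 of the normalized range. These values are recalled from the homogeneous texture literature rather than taken from the slide, so treat them as assumptions. The size check at the end likewise assumes that the 62 components are two values per channel plus two global values.

```python
def channel_layout(n_scales=5, n_orientations=6, omega_0=0.75):
    """Map channel number i to (centre radial frequency, centre angle in degrees)."""
    layout = {}
    for s in range(n_scales):            # radial index, outermost band first
        for r in range(n_orientations):  # angular index
            i = n_orientations * s + r + 1
            layout[i] = (omega_0 * 2.0 ** (-s), 180.0 / n_orientations * r)
    return layout

def src_size_bits(n_channels=30, bits_per_component=8, extra_components=2):
    """Descriptor size: two moments per channel plus the assumed global values."""
    return (2 * n_channels + extra_components) * bits_per_component

print(len(channel_layout()), src_size_bits())
```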

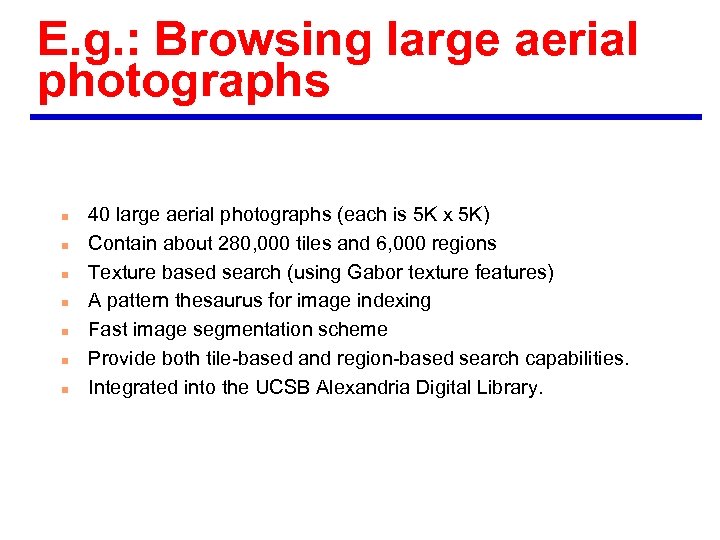

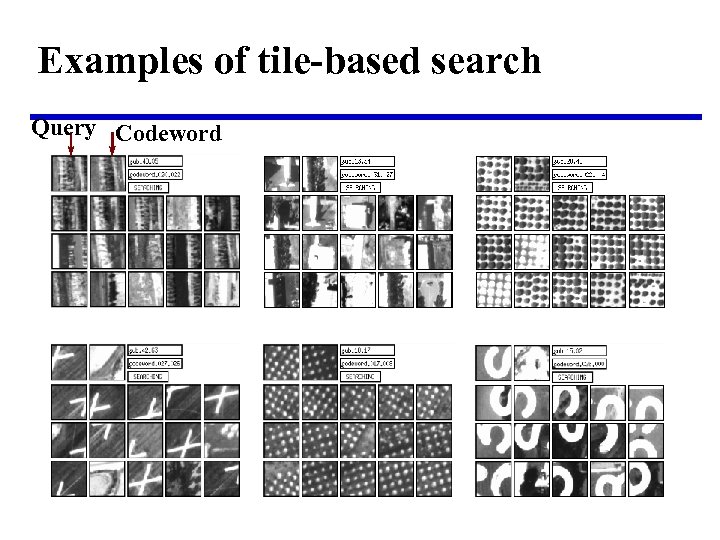

E.g.: Browsing large aerial photographs
• 40 large aerial photographs (each is 5K x 5K)
• Contain about 280,000 tiles and 6,000 regions
• Texture based search (using Gabor texture features)
• A pattern thesaurus for image indexing
• Fast image segmentation scheme
• Provide both tile-based and region-based search capabilities.
• Integrated into the UCSB Alexandria Digital Library.

Examples of tile-based search Query Codeword

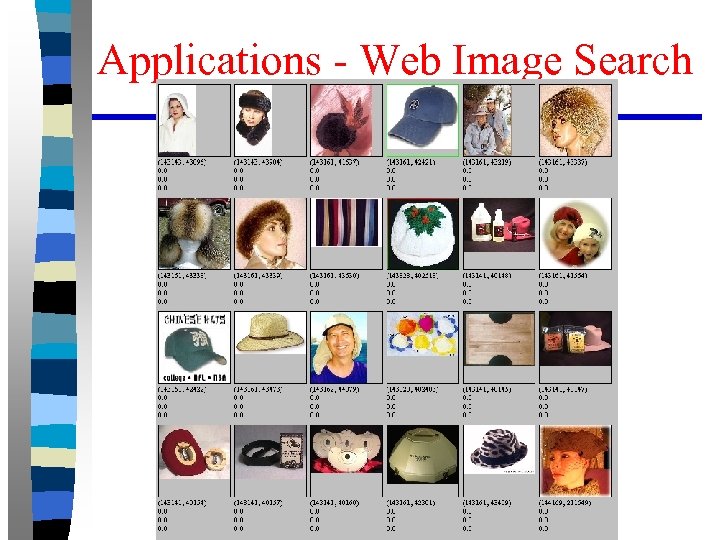

Applications - Web Image Search

Web image search

Applications - Web Image Search

More details
• http://vision.ece.ucsb.edu/
  • Go to the category based image search demo.

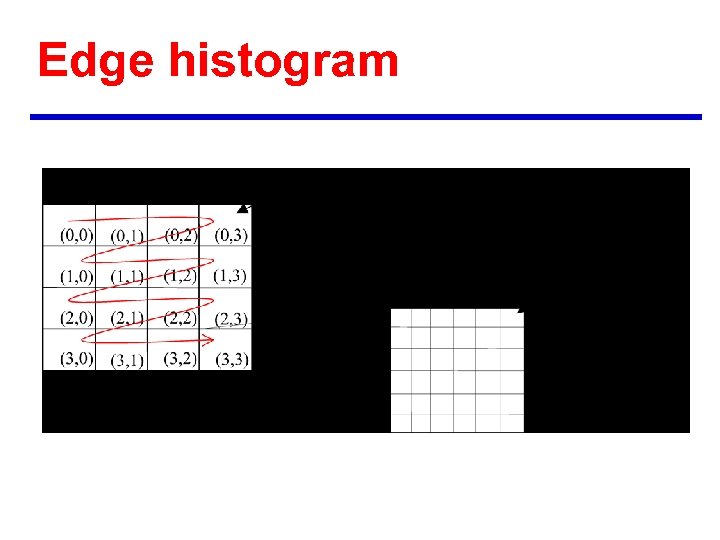

Edge histogram

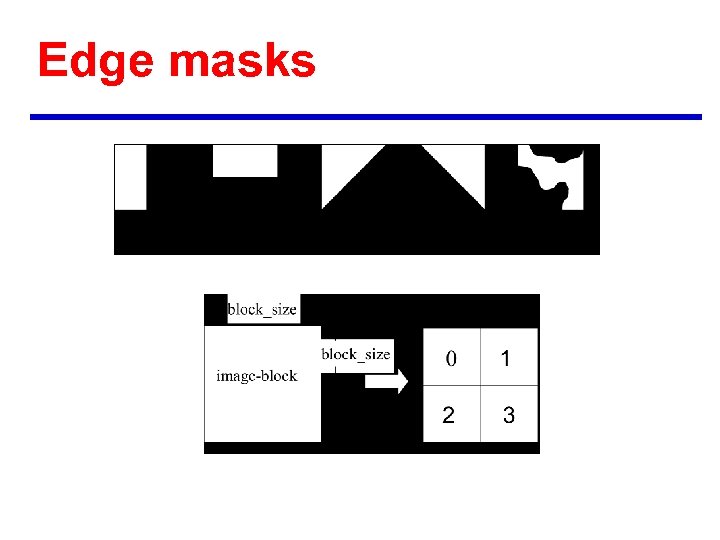

Edge masks

![Local edge histogram](https://present5.com/presentation/5571aa8adec2d626a6ddc74dcdc3466d/image-73.jpg)

Local edge histogram: histogram bins and their semantics
• Local_Edge[0]: Vertical edge of sub-image at (0, 0)
• Local_Edge[1]: Horizontal edge of sub-image at (0, 0)
• Local_Edge[2]: 45 degree edge of sub-image at (0, 0)
• Local_Edge[3]: 135 degree edge of sub-image at (0, 0)
• Local_Edge[4]: Non-directional edge of sub-image at (0, 0)
• Local_Edge[5]: Vertical edge of sub-image at (0, 1)
• ...
• Local_Edge[74]: Non-directional edge of sub-image at (3, 2)
• Local_Edge[75]: Vertical edge of sub-image at (3, 3)
• Local_Edge[76]: Horizontal edge of sub-image at (3, 3)
• Local_Edge[77]: 45 degree edge of sub-image at (3, 3)
• Local_Edge[78]: 135 degree edge of sub-image at (3, 3)
• Local_Edge[79]: Non-directional edge of sub-image at (3, 3)
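
The bin semantics listed above follow a regular pattern: 16 sub-images in raster order, five edge types each. A small sketch of that indexing is given below. The `global_histogram` helper is an assumption about how a global histogram could be derived (a per-type average over the 16 sub-images); the slide does not spell out the exact rule.

```python
EDGE_TYPES = ("vertical", "horizontal", "45 degree", "135 degree", "non-directional")

def local_edge_bin(row, col, edge_type):
    """Index of the Local_Edge bin for sub-image (row, col) on the 4 x 4 grid."""
    return 5 * (4 * row + col) + EDGE_TYPES.index(edge_type)

def bin_semantics(index):
    """Inverse mapping: bin index to (row, col, edge type)."""
    block, edge = divmod(index, 5)
    row, col = divmod(block, 4)
    return row, col, EDGE_TYPES[edge]

def global_histogram(local):
    """Five global bins, each the average of one edge type over all sub-images."""
    return [sum(local[5 * b + e] for b in range(16)) / 16 for e in range(5)]

print(local_edge_bin(3, 2, "non-directional"), bin_semantics(75))
```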

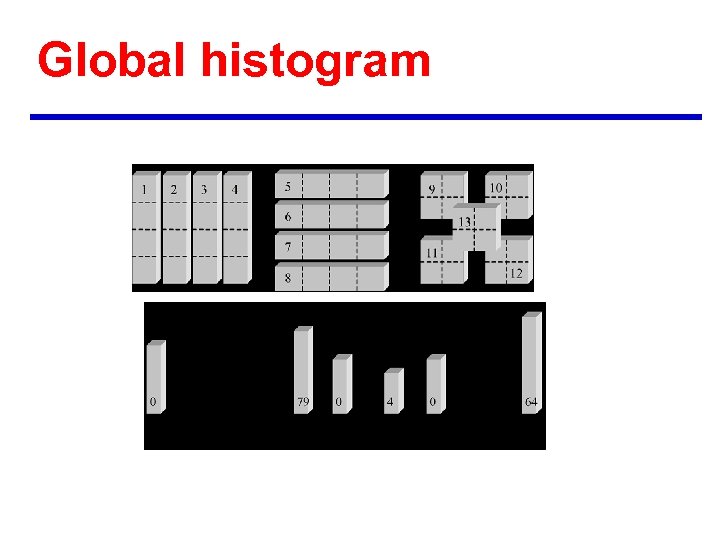

Global histogram

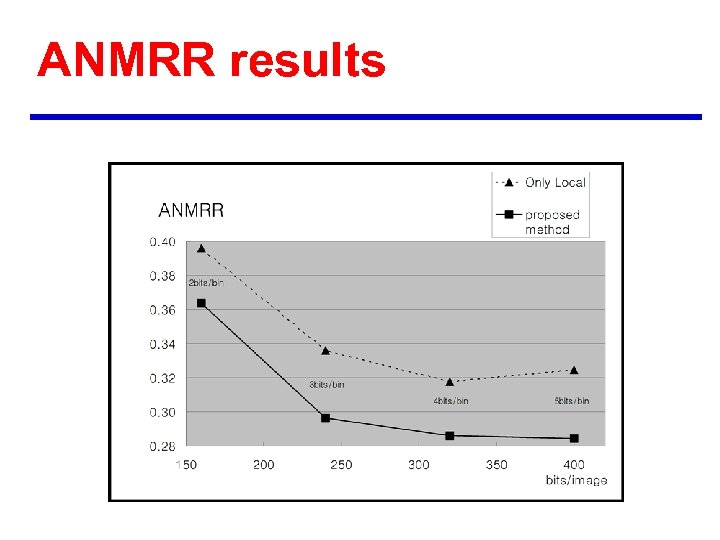

ANMRR results

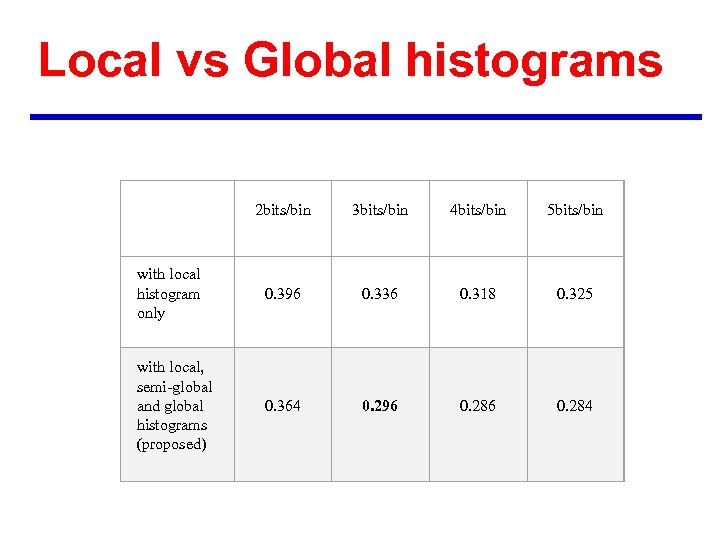

Local vs Global histograms
• Bits per bin: 2, 3, 4, 5
• With local histogram only: 0.396, 0.336, 0.318, 0.325
• With local, semi-global and global histograms (proposed): 0.364, 0.296, 0.284

Some example results

Visual Descriptors
• Color: Histogram (Scalable Color, Color Structure, GOF/GOP), Dominant Color, Color Layout
• Texture: Texture Browsing, Homogeneous texture, Edge Histogram
• Shape: Contour Shape, Region Shape
• Motion: Camera motion, Motion Trajectory, Parametric motion, Motion Activity

Shape Descriptors
• Region shape
• Contour shape

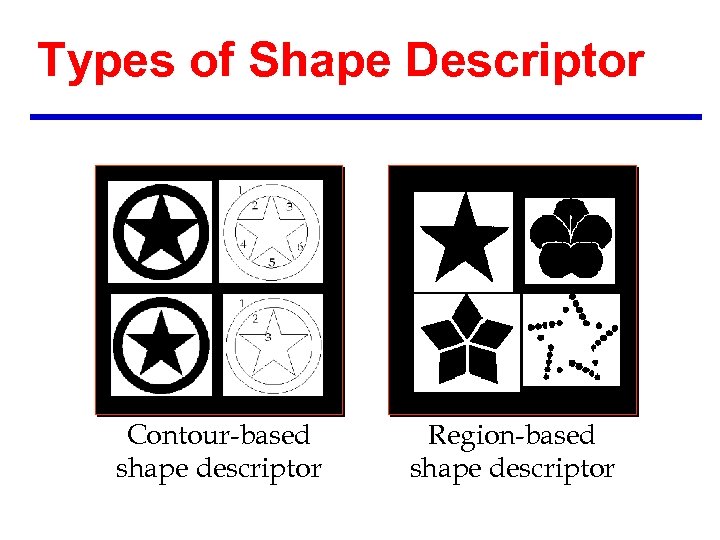

Types of Shape Descriptor
• Contour-based shape descriptor
• Region-based shape descriptor

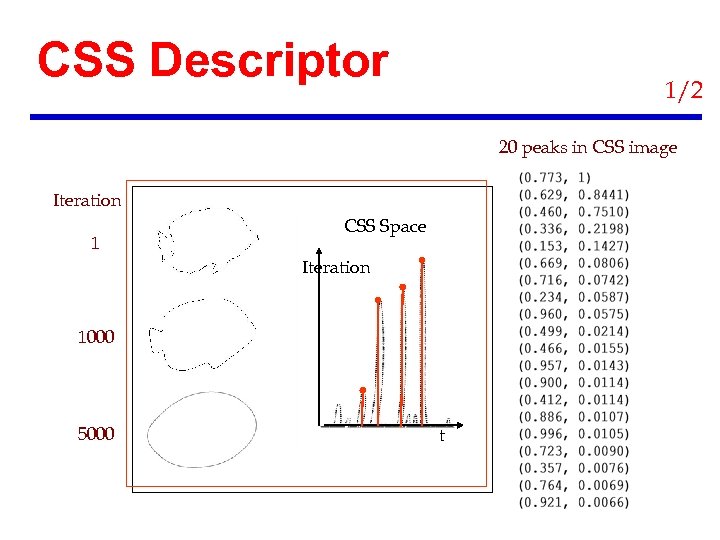

CSS Descriptor (1/2)
[Figure: contour at iteration 1 and at iteration 1000; CSS space; 20 peaks in CSS image.]

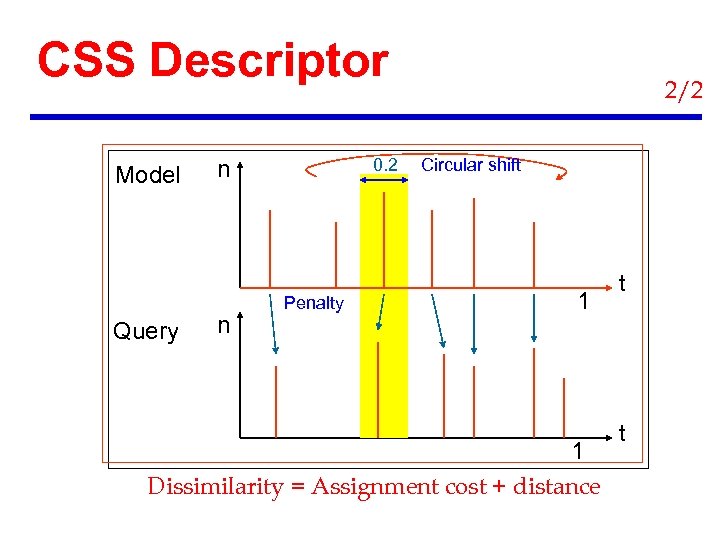

CSS Descriptor (2/2)
[Figure: model and query CSS peaks; labels: circular shift, penalty.]
Dissimilarity = Assignment cost + distance

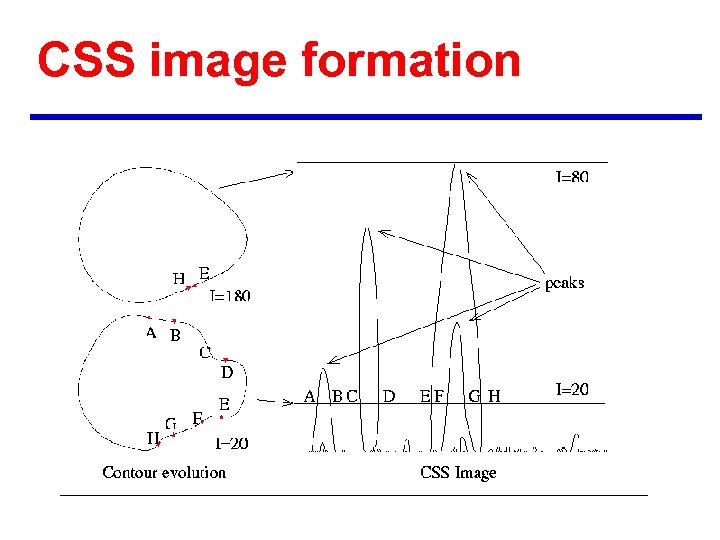

CSS image formation

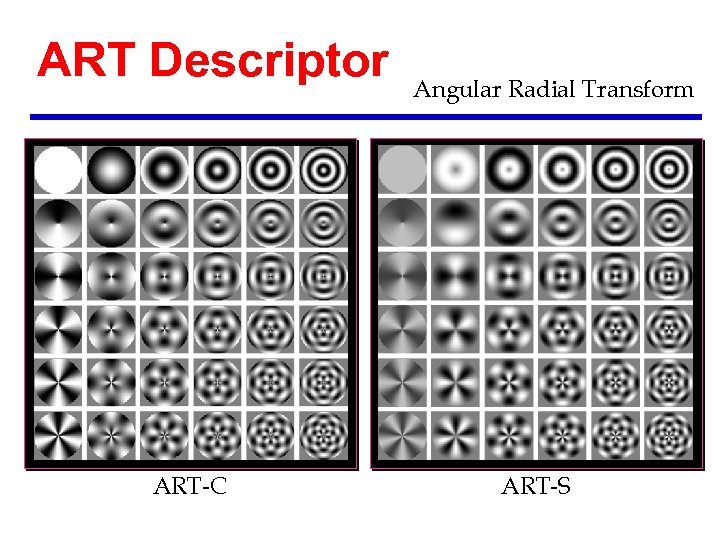

ART Descriptor ART-C Angular Radial Transform ART-S

The Definition of ART
• Basis function
• Angular function
• Radial function
• ART coefficients

Rotation Invariance of the ART
• A rotated image
• Its ART coefficients
• Relationship with the original
• Magnitude has rotation invariance
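
The equations for these two slides did not survive extraction. The block below restates the Angular Radial Transform as it is commonly written for an image f(ρ, θ) on the unit disk, and derives the rotation property from it. It is a reconstruction from the general definition, not a copy of the slide, and the sign of the phase factor depends on the direction in which the rotation is defined.

```latex
\begin{align}
F_{nm} &= \int_{0}^{2\pi}\!\!\int_{0}^{1} V_{nm}^{*}(\rho,\theta)\, f(\rho,\theta)\, \rho \, d\rho \, d\theta
  && \text{(ART coefficient of order } n, m\text{)} \\
V_{nm}(\rho,\theta) &= A_{m}(\theta)\, R_{n}(\rho)
  && \text{(basis function)} \\
A_{m}(\theta) &= \frac{1}{2\pi}\, e^{jm\theta}
  && \text{(angular function)} \\
R_{n}(\rho) &= \begin{cases} 1, & n = 0 \\ 2\cos(\pi n \rho), & n \neq 0 \end{cases}
  && \text{(radial function)} \\[4pt]
f^{\alpha}(\rho,\theta) &= f(\rho,\theta+\alpha)
  && \text{(image rotated by } \alpha\text{)} \\
F^{\alpha}_{nm} &= \int_{0}^{2\pi}\!\!\int_{0}^{1} V_{nm}^{*}(\rho,\theta)\, f(\rho,\theta+\alpha)\, \rho \, d\rho \, d\theta
  = e^{jm\alpha}\, F_{nm}
  && \text{(substitute } \theta' = \theta + \alpha\text{)} \\
\left| F^{\alpha}_{nm} \right| &= \left| F_{nm} \right|
  && \text{(magnitude is rotation invariant)}
\end{align}
```

The substitution works because the angular integral runs over a full period, so shifting θ by α changes only the phase of the angular function.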

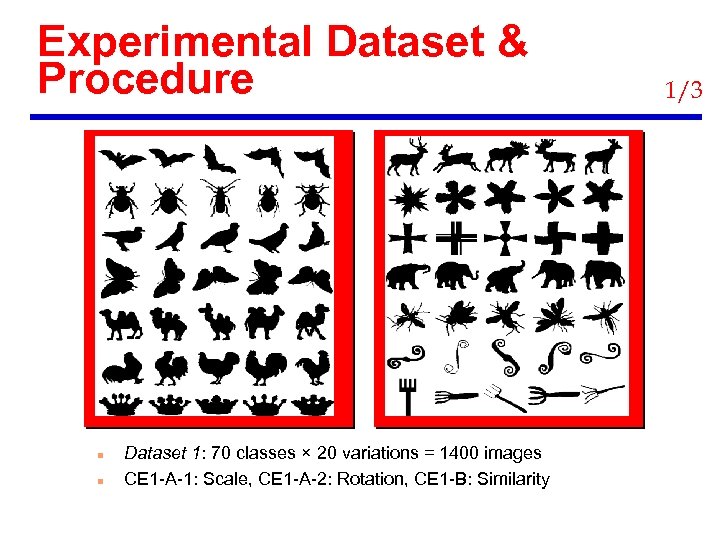

Experimental Dataset & Procedure (1/3)
• Dataset 1: 70 classes × 20 variations = 1400 images
• CE1-A-1: Scale, CE1-A-2: Rotation, CE1-B: Similarity

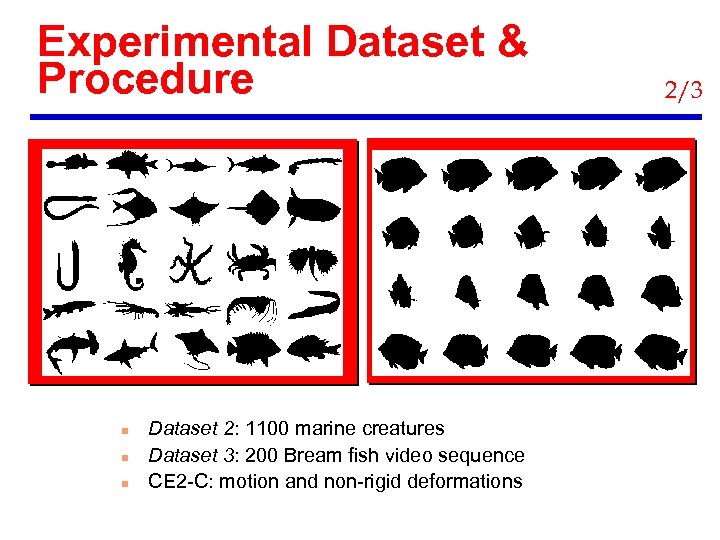

Experimental Dataset & Procedure (2/3)
• Dataset 2: 1100 marine creatures
• Dataset 3: 200 Bream fish video sequence
• CE2-C: motion and non-rigid deformations

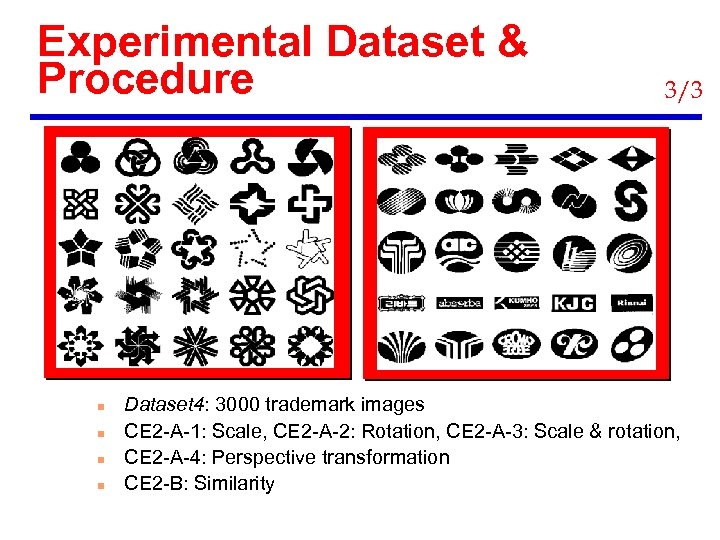

Experimental Dataset & Procedure (3/3)
• Dataset 4: 3000 trademark images
• CE2-A-1: Scale, CE2-A-2: Rotation, CE2-A-3: Scale & rotation, CE2-A-4: Perspective transformation
• CE2-B: Similarity

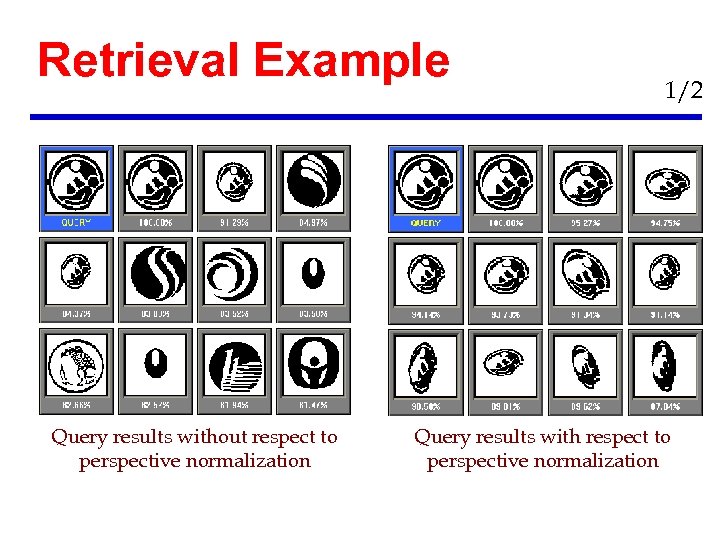

Retrieval Example (1/2)
[Figure panels: query results without perspective normalization; query results with perspective normalization.]

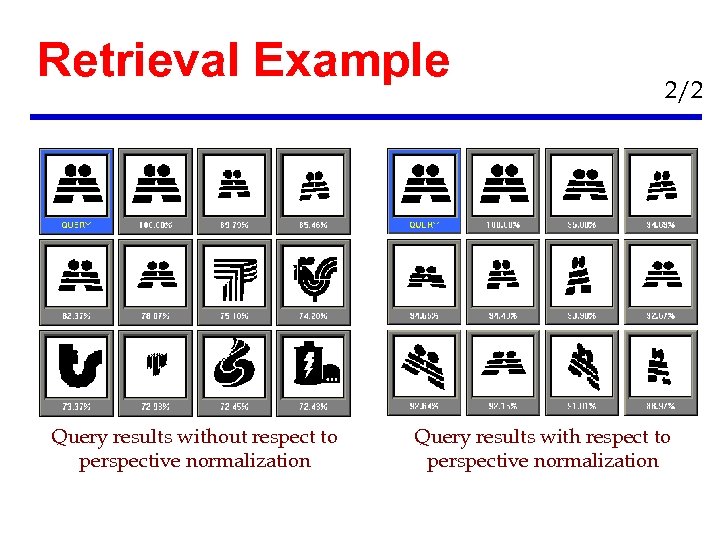

Retrieval Example (2/2)
[Figure panels: query results without perspective normalization; query results with perspective normalization.]

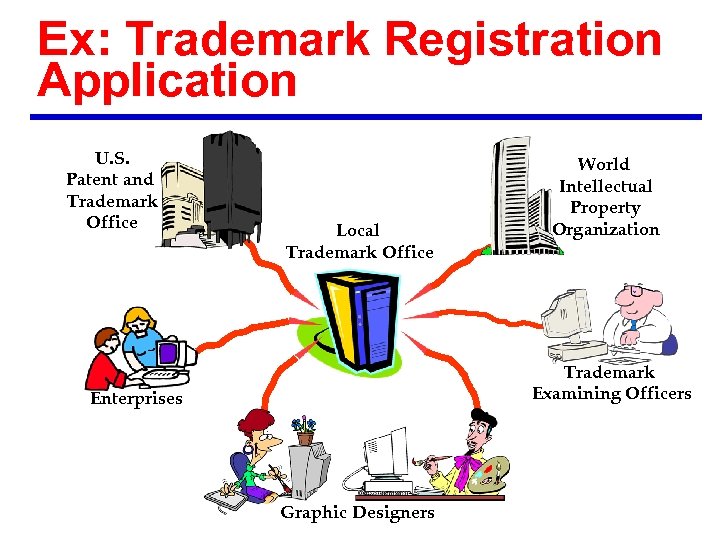

Ex: Trademark Registration Application
• U.S. Patent and Trademark Office
• Local Trademark Office
• World Intellectual Property Organization
• Trademark Examining Officers
• Enterprises
• Graphic Designers

Visual Descriptors
• Color: Histogram (Scalable Color, Color Structure, GOF/GOP), Dominant Color, Color Layout
• Texture: Texture Browsing, Homogeneous texture, Edge Histogram
• Shape: Contour Shape, Region Shape
• Motion: Camera motion, Motion Trajectory, Parametric motion, Motion Activity

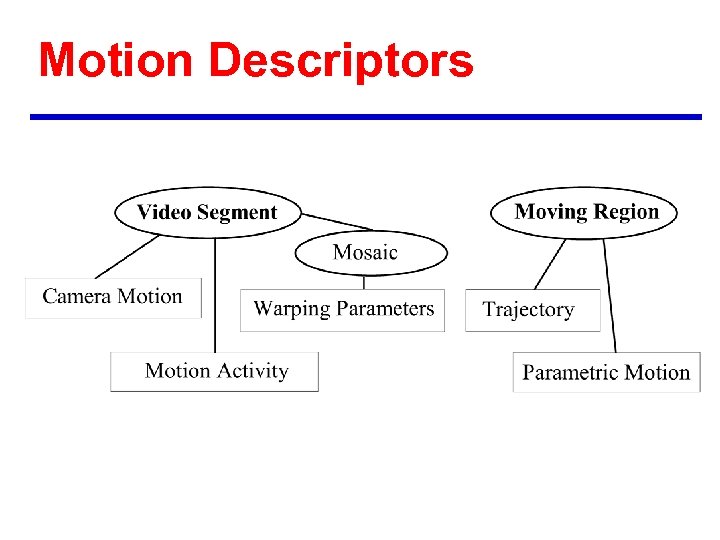

Motion Descriptors

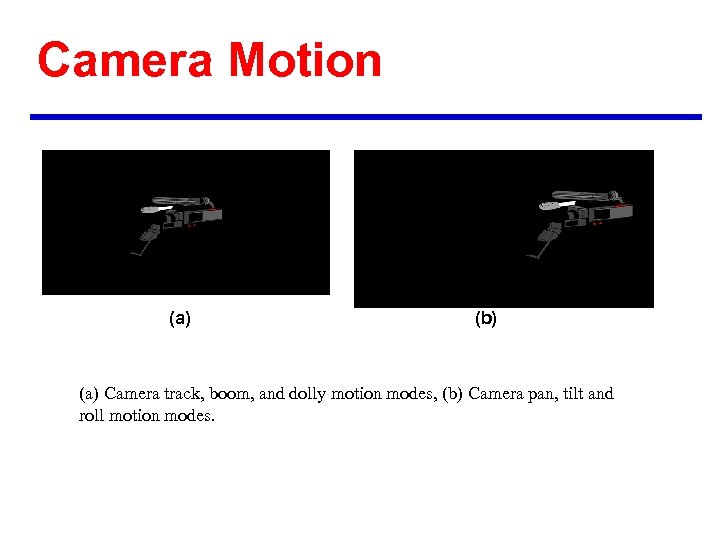

Camera Motion
(a) Camera track, boom, and dolly motion modes; (b) camera pan, tilt and roll motion modes.

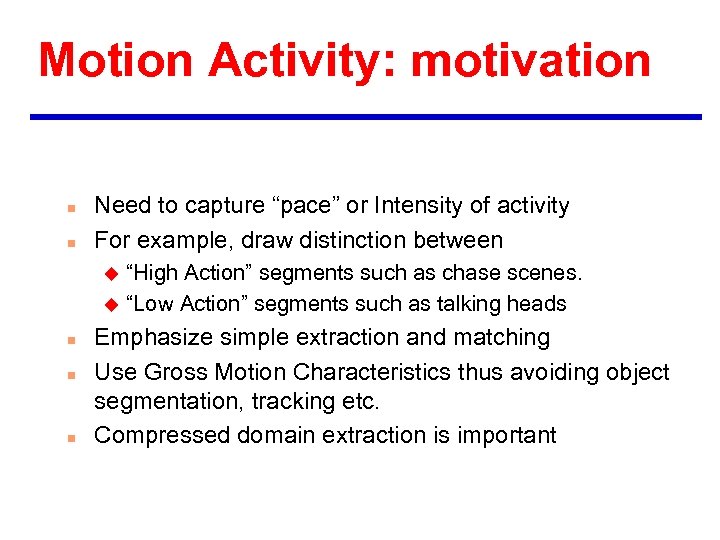

Motion Activity: motivation
• Need to capture “pace” or intensity of activity
• For example, draw distinction between
  • “High Action” segments such as chase scenes
  • “Low Action” segments such as talking heads
• Emphasize simple extraction and matching
• Use gross motion characteristics, thus avoiding object segmentation, tracking etc.
• Compressed domain extraction is important

PROPOSED MOTION ACTIVITY DESCRIPTOR
• Attributes of Motion Activity Descriptor
  • Intensity/Magnitude - 3 bits
  • Spatial Characteristics - 16 bits
  • Temporal Characteristics - 30 bits
  • Directional Characteristics - 3 bits

INTENSITY
• Expresses “pace” or intensity of action
• Uses a scale of 1-5: very low, low, medium, high, very high
• Extracted by suitably quantizing variance of motion vector magnitude
• Successfully tested with subjectively constructed ground truth
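
A minimal sketch of the intensity attribute, assuming the motion vectors of a frame are available as (dx, dy) pairs. The four thresholds are hypothetical placeholders; the actual quantization boundaries are defined in the XM document and are not reproduced here.

```python
import math

def motion_intensity(motion_vectors, thresholds=(1.0, 4.0, 9.0, 16.0)):
    """Quantize the variance of motion vector magnitudes to a level from 1 to 5."""
    magnitudes = [math.hypot(dx, dy) for dx, dy in motion_vectors]
    mean = sum(magnitudes) / len(magnitudes)
    variance = sum((m - mean) ** 2 for m in magnitudes) / len(magnitudes)
    # count how many (hypothetical) thresholds the variance exceeds
    return 1 + sum(variance > t for t in thresholds)

# A frame where every block moves identically has zero variance: level 1.
print(motion_intensity([(2, 0), (2, 0), (2, 0)]))
```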

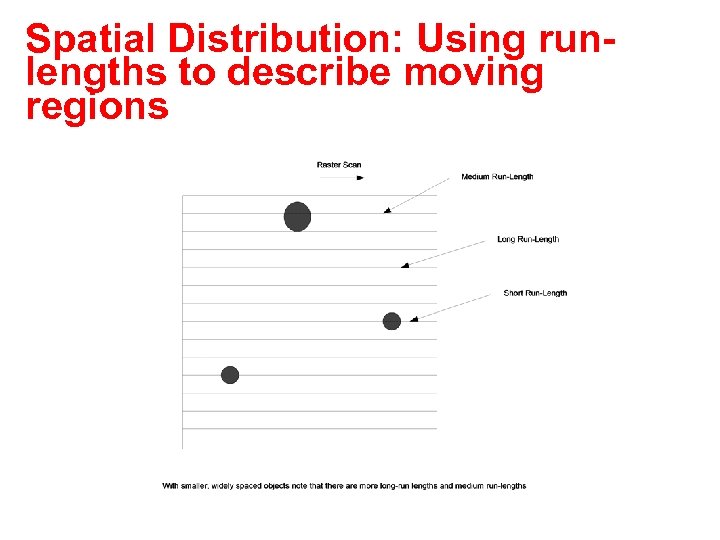

Spatial Distribution: Using runlengths to describe moving regions

SPATIAL DISTRIBUTION
• Captures the size and number of moving regions in the shot on a frame by frame basis
• Enables distinction between shots with one large region in the middle, such as talking heads, and shots with several small moving regions, such as aerial soccer shots
• Thus “sparse” shots have many long runs while “dense” shots do not have many long runs.
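
The run-length idea can be illustrated as follows, assuming each frame has already been reduced to a binary map of moving (1) and non-moving (0) blocks and that runs of non-moving blocks are measured in raster-scan order. The short/medium/long boundaries, expressed as fractions of the frame width, are hypothetical.

```python
def zero_run_lengths(active):
    """Lengths of consecutive non-moving blocks in a raster-scanned activity map."""
    runs, current = [], 0
    for row in active:
        for flag in row:
            if flag:
                if current:
                    runs.append(current)
                current = 0
            else:
                current += 1
    if current:
        runs.append(current)
    return runs

def run_profile(active, short_frac=1 / 3, long_frac=2 / 3):
    """Count short, medium and long runs relative to the frame width."""
    width = len(active[0])
    counts = {"short": 0, "medium": 0, "long": 0}
    for run in zero_run_lengths(active):
        if run < short_frac * width:
            counts["short"] += 1
        elif run < long_frac * width:
            counts["medium"] += 1
        else:
            counts["long"] += 1
    return counts

frame = [[0, 0, 1, 0, 0, 0],
         [0, 0, 0, 0, 0, 0]]
print(zero_run_lengths(frame), run_profile(frame))
```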

TEMPORAL DISTRIBUTION
• Expresses fraction of the duration of each level of activity in the total duration of the shot
• Straightforward extension of the intensity of motion activity to the temporal dimension
• For instance, since a talking head is typically exclusively low activity, it would have zero entries for all levels except one
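
In code, this attribute is a normalized histogram of per-frame intensity levels. The sketch below assumes one intensity level (1 to 5) per frame and frames of equal duration; it leaves out any quantization of the fractions to a fixed bit budget.

```python
def temporal_distribution(levels, n_levels=5):
    """Fraction of the shot spent at each intensity level (1..n_levels)."""
    histogram = [0] * n_levels
    for level in levels:
        histogram[level - 1] += 1
    return [count / len(levels) for count in histogram]

# A talking-head shot that stays at level 1 has a single non-zero entry.
print(temporal_distribution([1, 1, 1, 1]))
```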

DIRECTION
• Expresses dominant direction, if definable, as one of a set of eight equally spaced directions
• Extracted by using averages of angle (direction) of each motion vector
• Useful where there is strong directional motion
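
One way to obtain a dominant direction is sketched below. Note the substitution: instead of averaging the angles directly, which misbehaves near the 0/360 degree wrap-around, this version sums the motion vectors and quantizes the angle of the resultant. That is an assumed variant, not necessarily the extraction used in the XM. Angles are measured counter-clockwise from the positive x axis; with image coordinates (y pointing down) the sense is mirrored.

```python
import math

def dominant_direction(motion_vectors, n_directions=8):
    """Index (0..n_directions-1) of the sector containing the resultant vector.

    Returns None when the vectors cancel out and no direction is definable.
    """
    sx = sum(dx for dx, _ in motion_vectors)
    sy = sum(dy for _, dy in motion_vectors)
    if sx == 0 and sy == 0:
        return None
    angle = math.atan2(sy, sx) % (2 * math.pi)
    sector = 2 * math.pi / n_directions
    # sectors are centred on 0, 45, 90, ... degrees
    return int((angle + sector / 2) // sector) % n_directions

print(dominant_direction([(1, 0), (2, 0)]), dominant_direction([(0, 1)]))
```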

APPLICATION TO VIDEO BROWSING
• Extraction of 10 most active segments in a news program

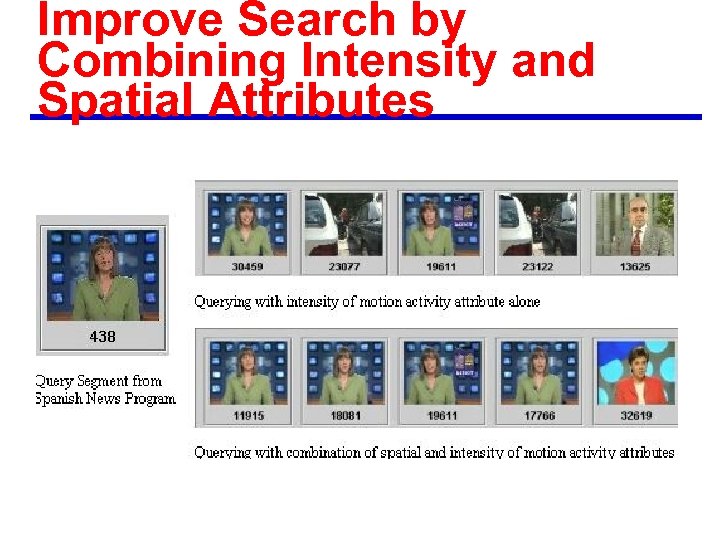

Improve Search by Combining Intensity and Spatial Attributes

APPLICATIONS
• VIDEO BROWSING
• RETRIEVAL FROM STORED VIDEO
• CONTENT RE-PURPOSING
• CONTENT BASED PRESENTATION
• SURVEILLANCE

Motion activity: conclusions
• COMPACT DESCRIPTOR
• EASY TO EXTRACT AND MATCH
• EFFECTIVE BY ITSELF
• NUMEROUS APPLICATIONS
• EFFECTIVE IN COMBINATION WITH OTHER DESCRIPTORS
• DEMO at the end.

Motion Trajectory
• First order approximation: [equation not preserved in extraction]
• Second order approximation: [equation not preserved in extraction]
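
Since the two interpolation formulas are missing from the extracted text, the sketch below uses the usual keypoint interpolation forms as an assumption: x(t) = x_a + v (t - t_a) for first order, and x(t) = x_a + v (t - t_a) + ½ a (t - t_a)² for second order, with v chosen so that the curve reaches the next keypoint. The function name and argument order are hypothetical.

```python
def interpolate(t, t_a, x_a, t_b, x_b, a=None):
    """Position at time t between keypoints (t_a, x_a) and (t_b, x_b).

    a=None gives the first order (constant velocity) approximation;
    otherwise a is a constant acceleration for the second order one.
    """
    dt = t - t_a
    span = t_b - t_a
    if a is None:
        v = (x_b - x_a) / span
        return x_a + v * dt
    # initial velocity chosen so that the trajectory passes through x_b at t_b
    v = (x_b - x_a) / span - 0.5 * a * span
    return x_a + v * dt + 0.5 * a * dt * dt

# One coordinate of a trajectory, halfway between two keypoints.
print(interpolate(1.0, 0.0, 0.0, 2.0, 4.0), interpolate(1.0, 0.0, 0.0, 2.0, 4.0, a=2.0))
```

Each spatial coordinate is interpolated independently, so the same function can be applied to x, y and, where present, z.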

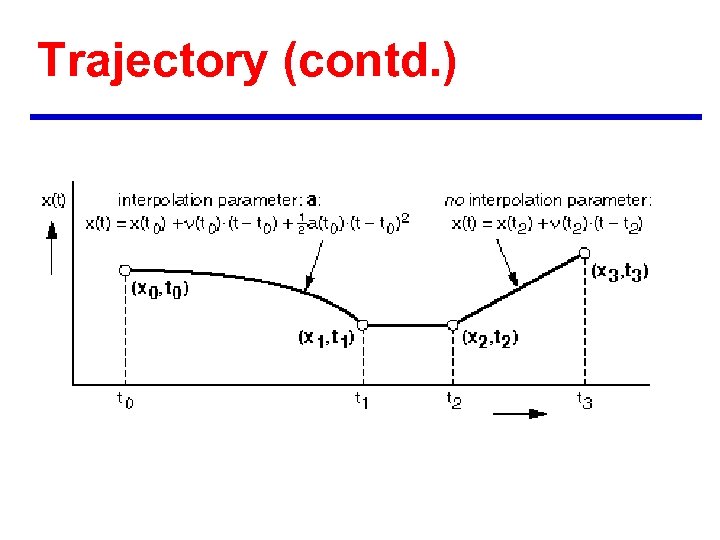

Trajectory (contd.)

Conclusions
• All of the visual descriptors in the MPEG-7 working draft have undergone rigorous testing and evaluation.
• They represent the state of the art descriptors in image and video retrieval.
• For further information, refer to the MPEG documents (see the next slide).

Ds to DS: Are we ready for this leap of faith?

Further information
• Major MPEG-7 documents are public:
  • MPEG Home page: http://www.cselt.it/mpeg/
  • Public documents: http://www.cselt.it/mpeg/working_documents.htm
  • Also check: http://www.mpeg-7.com
• Special issues of journals:
  • Signal Processing: Image Communication, Vol. 16(1-2), Sept. 2000: http://www.elsevier.com/locate/image
  • IEEE Trans. on Circuits and Systems for Video Technology (June 2001)
  • IEEE Trans. Image Processing (Jan 2000 special issue on content based retrieval)
• IBM MPEG-7 Visual Annotation Tool: http://www.alphaworks.ibm.com/tech/mpeg-7
• Book on MPEG-7: to be published later this year (Manjunath, Salembier and Sikora, Wiley International, 2001).