
- Number of slides: 58

I 256 Applied Natural Language Processing, Fall 2009
Text Summarization
Barbara Rosario

Outline
• Introduction and Applications
• Types of summarization tasks
• Approaches and paradigms
• Evaluation methods
• Acknowledgments
  – Slides inspired by (and taken from):
    • Automatic Summarization by Inderjeet Mani
    • www.isi.edu/~marcu/acl-tutorial.ppt (Hovy and Marcu)
    • http://summarization.com/
    • http://www.summarization.com/sigirtutorial2004.ppt (Radev)

Introduction
• The problem
  – Information overload
    • 4 billion URLs indexed by Google
    • 200 TB of data on the Web [Lyman and Varian 03]
    • Information is created every day in enormous amounts
• One solution: summarization
• The goal of automatic summarization is to take an information source, extract content from it, and present the most important content to the user in a condensed form and in a manner sensitive to the user’s or application’s needs
  – Other solutions are QA, information extraction, IR, indexing, document clustering, visualization

Applications
• Abstracts for scientific and other articles
• News summarization (mostly multi-document summarization)
  – Multimedia news summaries (“watch the news and tell me what happened while I was away”)
• Web pages for search engines
• Hand-held devices
• Question answering and data/intelligence gathering
• Physicians’ aid
  – Provide physicians with summaries of on-line medical literature related to a patient’s medical record
• Meeting summarization
• Aid for the handicapped
  – Summarization for reading machines for the blind

Current applications
• General-purpose commercial summarization tools:
  – AutoSummarize (MS Word)
  – InXight Summarizer

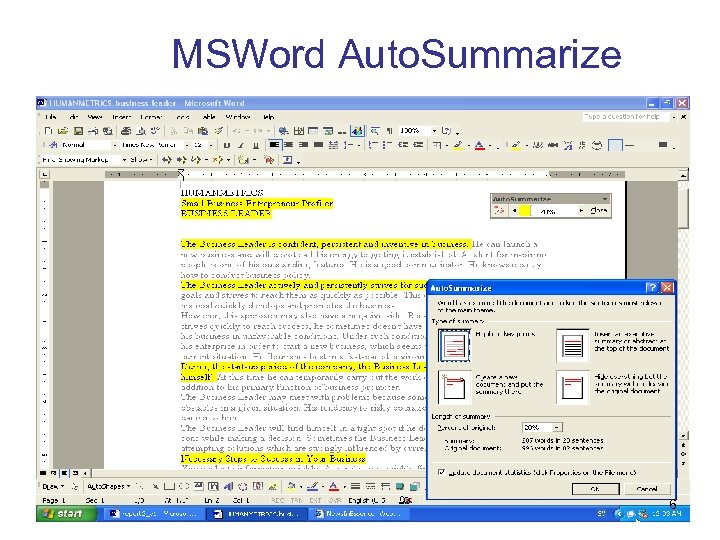

MS Word AutoSummarize (screenshot)

Human summarization and abstracting
• What professional abstractors do
  – “To take an original article, understand it and pack it neatly into a nutshell without loss of substance or clarity presents a challenge which many have felt worth taking up for the joys of achievement alone. These are the characteristics of an art form.” (Ashworth)

Human summarization and abstracting
• The abstract and its use:
  – To promote current awareness
  – To save reading time
  – To facilitate selection
  – To facilitate literature searches
  – To improve indexing efficiency
  – To aid in the preparation of reviews

American National Standard for Writing Abstracts
  – State the purpose, methods, results, and conclusions presented in the original document, either in that order or with an initial emphasis on results and conclusions.
  – Make the abstract as informative as the nature of the document will permit, so that readers may decide, quickly and accurately, whether they need to read the entire document.
  – Avoid including background information or citing the work of others in the abstract, unless the study is a replication or evaluation of their work.
(Cremmins 82, 96)

American National Standard for Writing Abstracts (cont.)
  – Do not include information in the abstract that is not contained in the textual material being abstracted.
  – Verify that all quantitative and qualitative information used in the abstract agrees with the information contained in the full text of the document.
  – Use standard English and precise technical terms, and follow conventional grammar and punctuation rules.
  – Give expanded versions of lesser-known abbreviations and acronyms, and verbalize symbols that may be unfamiliar to readers of the abstract.
  – Omit needless words, phrases, and sentences.
(Cremmins 82, 96)

Types of Summaries
• Indicative vs. informative vs. critical
  – Indicative: gives an idea of what is there; provides a reference function for selecting documents for more in-depth reading
  – Informative: a substitute for the entire document; covers all the salient information in the source at some level of detail
  – Critical: evaluates the subject matter of the source, expressing the abstractor’s view on the quality of the author’s work

Types of Summaries (cont.)
• Input: single document vs. multi-document (MDS)
  – MDS: what is common across documents, or different in a particular one
• Input: media types (text, audio, table, pictures, diagrams)
• Output: media types (text, audio, table, pictures, diagrams)

Types of Summaries (cont.)
• Output: extract vs. abstract
  – Extract: summary consisting entirely of material copied from the input
  – Abstract: summary where some material is not present in the input
    • Paraphrase, generation
  – Research shows that readers sometimes prefer extracts

Types of Summaries (cont.)
• Output: user-focused (or topic-focused or query-focused): summaries that are tailored to the requirements of a particular user or group of users
  – Background
    • Does the reader have the needed prior knowledge? Expert reader vs. novice reader
• General: summaries aimed at a particular (usually broad) readership community

Types of Summaries (cont.)
• Output:
  – Language chosen for summarization
  – Format of the resulting summary (table/paragraph/key words/documents with different sections and headings)

Parameters
• Compression rate (summary length/source length)
• Audience (user-focused vs. generic)
• Relation to source (extract vs. abstract)
• Function (indicative vs. informative vs. critical)
• Coherence: the way the parts of the text gather together to form an integrated whole
  – Coherent vs. incoherent
    • Incoherent: unresolved anaphors, gaps in the reasoning, sentences which repeat the same or similar meaning (redundancy), a lack of organization
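The compression rate above is a plain ratio. A minimal sketch, assuming length is counted in whitespace-separated words (the slide does not say which unit is meant; characters or sentences would work the same way). The function name is illustrative.

```python
def compression_rate(summary: str, source: str) -> float:
    """Summary length divided by source length, both counted in words."""
    source_words = source.split()
    if not source_words:
        raise ValueError("source must contain at least one word")
    return len(summary.split()) / len(source_words)


source = "one two three four five six seven eight"
summary = "one two"
print(compression_rate(summary, source))  # 2 words / 8 words = 0.25
```

A lower value means a more aggressive summary; a 25% summary of a 400-word article would be about 100 words.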

Parameters (cont.)
• Span (single or MDS)
• Language
  – Monolingual, multi-lingual, cross-lingual, sublanguages (technical, tourism)
• Media
• Genres
  – Headlines, minutes, biographies, movie summaries, chronologies, etc.

Process
• Three phases (typically)
1. Analysis: content identification
  – Analyze the input, build an internal representation of it
  – Can be done at different levels
    • Morphology, syntax, semantics, discourse
  – And looking at different elements
    • Sub-word, phrase, sentence, paragraph, document
2. Transformation (or refinement): conceptual organization
  – Transform the internal representation into a summary representation (mostly for abstracts or MDS)
3. Synthesis (realization)
  – The summary representation is rendered into natural language

Summarization approaches
• Shallow approaches
  – Syntactic level at most
  – Typically produce extracts
  – Extract salient parts of the source text, then arrange and present them in some effective manner
• Deeper approaches
  – Sentential semantic level
  – Produce abstracts; the synthesis phase involves natural language generation
  – Knowledge-intensive; may require some domain-specific coding
    • Example: generating summaries of basketball statistics or stock market bulletins

Outline
• Introduction and Applications
• Types of summarization tasks
• Approaches and paradigms
• Evaluation methods

Overview of Extraction Methods
• General method:
  – Score each entity (sentence, word); combine scores; choose best sentence(s)
• Word frequencies throughout the text
• Position in the text
  – Lead method; optimal position policy
  – Title/heading method
• Cue phrases in sentences
• Cohesion: links among words
  – Word co-occurrence
  – Coreference
  – Lexical chains
• Discourse structure of the text
• Information extraction: parsing and analysis

Using Word Frequencies
• Luhn 58: the very first work in automated summarization
• Assumptions:
  – Frequent words indicate the topic
  – “Frequent” is judged with reference to the corpus frequency
  – Clusters of frequent words indicate a summarizing sentence
• Stemming based on similar prefix characters
• Very common words and very rare words are ignored
• Evaluation: the straightforward approach has been shown empirically to be mostly detrimental in summarization systems
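A minimal sketch of frequency-based sentence scoring in the spirit of the slide above. It is a simplification, not Luhn's procedure: it counts words within the document only (no corpus reference, no stemming), drops very common words with a tiny illustrative stop list, drops rare words with a count threshold, and scores a sentence by the density of the remaining "significant" words rather than by clusters. All names and thresholds are assumptions.

```python
import re
from collections import Counter

# Tiny illustrative stop list; a real system would use a much larger one.
STOP_WORDS = {"the", "a", "an", "of", "and", "to", "in", "is", "it", "that"}


def tokenize(text):
    """Lowercase alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def significant_words(sentences, min_count=2):
    """Non-stop words that occur at least min_count times in the document."""
    counts = Counter(
        w for s in sentences for w in tokenize(s) if w not in STOP_WORDS
    )
    return {w for w, c in counts.items() if c >= min_count}


def frequency_score(sentence, significant):
    """Fraction of the sentence's tokens that are significant words."""
    tokens = tokenize(sentence)
    if not tokens:
        return 0.0
    return sum(t in significant for t in tokens) / len(tokens)


sentences = [
    "Summarization condenses text.",
    "The weather is nice.",
    "Automatic summarization selects text.",
]
significant = significant_words(sentences)
for s in sentences:
    print(round(frequency_score(s, significant), 2), s)
```

In this toy document only "summarization" and "text" pass both filters, so the off-topic weather sentence scores zero.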

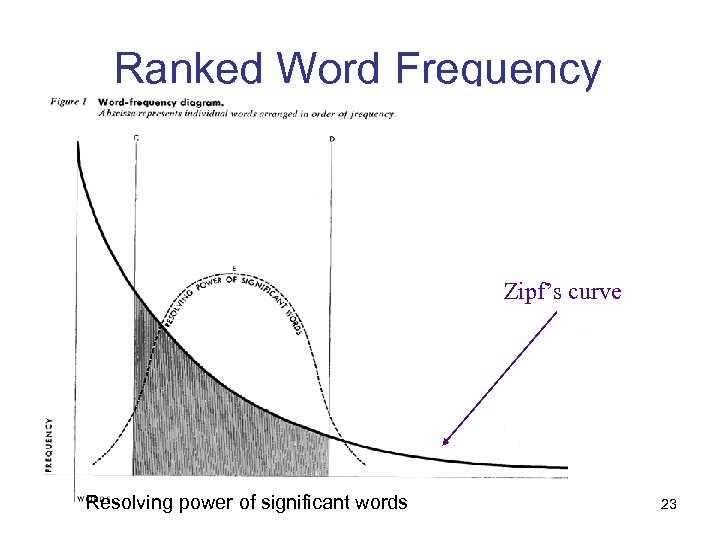

[Figure: ranked word frequency (Zipf’s curve) and the resolving power of significant words]

Position in the text
• Claim: important sentences occur in specific positions
  – “Lead-based” summary
    • Just take the first sentence(s)!
  – Important information occurs in specific sections of the document (introduction/conclusion)
• Experiments:
  – In 85% of 200 individual paragraphs, the topic sentence occurred in initial position, and in 7% in final position

Title method
• Claim: the title of a document indicates its content
  – Unless editors are being cute
  – Usually not true for novels
  – What about blogs…?
• Words in the title help find relevant content
  – Create a list of title words, remove “stop words”
  – Use those as keywords in order to find important sentences
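The two steps above (build a keyword list from the title, then look for those keywords in sentences) can be sketched as follows. The stop list, the function names, and the choice to score by raw overlap count are assumptions made for illustration.

```python
import re

# Tiny illustrative stop list.
STOP_WORDS = {"the", "a", "an", "of", "and", "to", "in", "is", "for", "on"}


def title_keywords(title):
    """Title words with stop words removed."""
    return {w for w in re.findall(r"[a-z]+", title.lower()) if w not in STOP_WORDS}


def title_score(sentence, keywords):
    """Number of distinct title keywords that appear in the sentence."""
    return len(set(re.findall(r"[a-z]+", sentence.lower())) & keywords)


keywords = title_keywords("A Survey of Text Summarization")
print(sorted(keywords))
print(title_score("Summarization reduces a text to its key points.", keywords))
print(title_score("The weather is nice.", keywords))
```

Exact string matching misses inflected forms ("summaries" vs. "summarization"); stemming would be the obvious refinement.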

Optimum Position Policy (OPP)
• Claim: important sentences are located at positions that are genre-dependent; these positions can be determined automatically through training
  – Corpus: 13,000 newspaper articles (ZIFF corpus)
  – Step 1: for each article, determine the overlap between sentences and the index terms for the article
  – Step 2: determine a partial ordering over the locations where sentences containing important words occur: the Optimum Position Policy (OPP)
• (Some recent work has looked at the use of citation sentences.)
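A rough sketch of the two training steps above, under simplifying assumptions: a position is just a sentence index (the slide does not define positions, and paragraph-level positions would be a natural refinement), overlap is the number of index terms found in the sentence, and ties are broken by position, which yields a total rather than a partial ordering. Function and variable names are hypothetical.

```python
from collections import defaultdict


def position_policy(articles):
    """articles: list of (sentences, index_terms).

    Returns sentence positions ordered by mean overlap with the index terms,
    best position first.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for sentences, index_terms in articles:
        terms = {t.lower() for t in index_terms}
        for pos, sentence in enumerate(sentences):
            words = set(sentence.lower().split())
            totals[pos] += len(words & terms)  # Step 1: overlap per sentence
            counts[pos] += 1
    mean = {pos: totals[pos] / counts[pos] for pos in totals}
    # Step 2: order positions by how much index-term content they tend to hold.
    return sorted(mean, key=lambda pos: (-mean[pos], pos))


articles = [
    (["stocks fell sharply", "analysts were surprised", "markets recover slowly"],
     ["stocks", "markets"]),
    (["markets rallied today", "it rained", "stocks closed higher"],
     ["stocks", "markets"]),
]
print(position_policy(articles))  # [0, 2, 1]
```

At summarization time, sentences would be picked in the learned position order until the length budget is used up.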

Cue phrases method
• Claim: important sentences contain cue words/indicative phrases
  – “The main aim of the present paper is to describe…”
  – “The purpose of this article is to review…”
  – “In this report, we outline…”
  – “Our investigation has shown that…”
• Some words are considered bonus, others stigma
  – Bonus: comparatives, superlatives, conclusive expressions, etc.
  – Stigma: negatives, pronouns, etc.; non-important sentences contain “stigma phrases” such as “hardly” and “impossible”
• These phrases can be detected automatically
• Method: add to a sentence’s score if it contains a bonus phrase; penalize it if it contains a stigma phrase
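The bonus/stigma method above can be sketched as a simple lookup. The phrase lists reuse the examples from the slide; the weights, the substring matching, and the function name are assumptions.

```python
# Phrase lists taken from the slide's examples; real lists would be far longer.
BONUS_PHRASES = (
    "the main aim of the present paper",
    "the purpose of this article",
    "in this report, we outline",
    "our investigation has shown",
)
STIGMA_PHRASES = ("hardly", "impossible")


def cue_score(sentence, bonus=1.0, penalty=1.0):
    """Add `bonus` per bonus phrase found and subtract `penalty` per stigma phrase."""
    text = sentence.lower()
    score = bonus * sum(p in text for p in BONUS_PHRASES)
    score -= penalty * sum(p in text for p in STIGMA_PHRASES)
    return score


print(cue_score("In this report, we outline a new method."))  # 1.0
print(cue_score("It is hardly a surprise."))                   # -1.0
print(cue_score("The cat sat on the mat."))                    # 0.0
```

Substring matching is crude (it would also fire inside longer words), so a tokenized match is preferable in practice.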

Bayesian Classifier
• Statistical learning method
• Corpus
  – 188 document + summary pairs from scientific journals

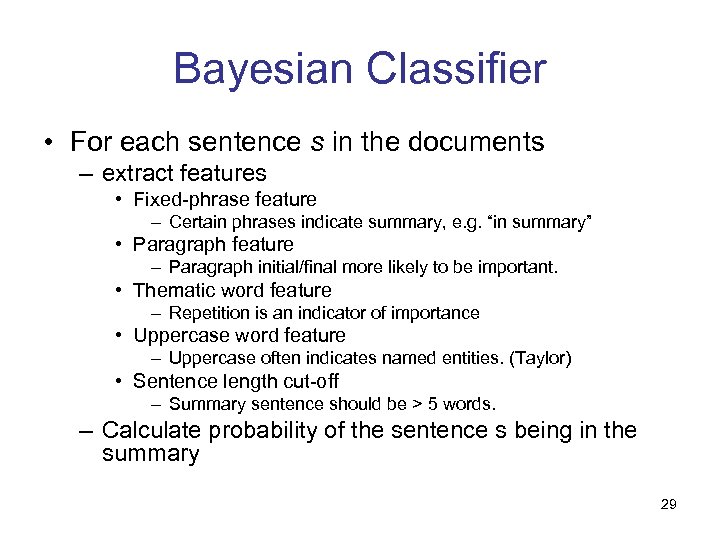

Bayesian Classifier
• For each sentence s in the documents
  – Extract features
    • Fixed-phrase feature: certain phrases indicate a summary, e.g. “in summary”
    • Paragraph feature: paragraph-initial/final sentences are more likely to be important
    • Thematic word feature: repetition is an indicator of importance
    • Uppercase word feature: uppercase often indicates named entities (Taylor)
    • Sentence length cut-off: a summary sentence should be > 5 words
  – Calculate the probability of the sentence s being in the summary

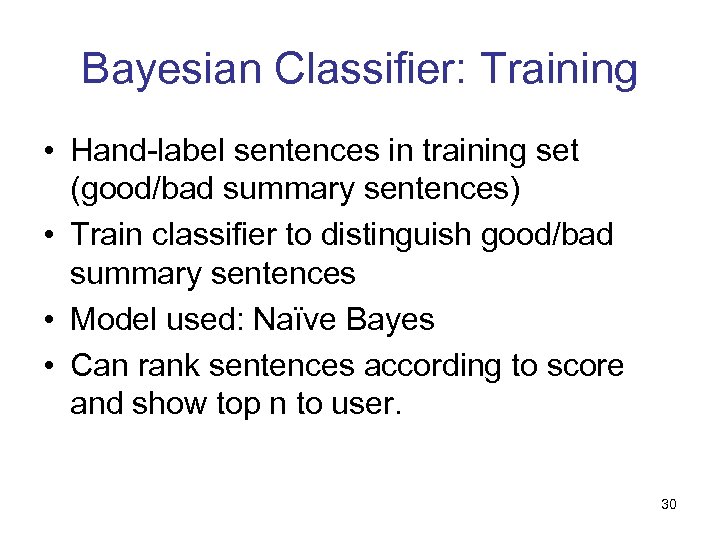

Bayesian Classifier: Training
• Hand-label sentences in the training set (good/bad summary sentences)
• Train a classifier to distinguish good/bad summary sentences
• Model used: Naïve Bayes
• Can rank sentences according to score and show the top n to the user

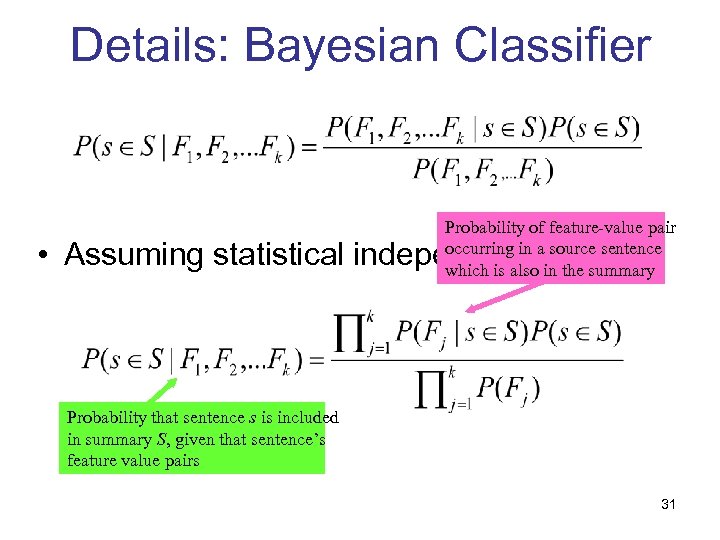

Details: Bayesian Classifier
• Probability of a feature-value pair occurring in a source sentence which is also in the summary
• Assuming statistical independence: probability that sentence s is included in summary S, given that sentence’s feature-value pairs
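The formulas on this slide were images and did not survive extraction. As a reconstruction, assuming the slide shows the usual Bayes-rule form for k features, the two quantities described above fit together as follows. The symbols F_1, …, F_k for the feature-value pairs are an assumption; only s and S appear in the text.

```latex
P(s \in S \mid F_1, \dots, F_k)
  = \frac{P(F_1, \dots, F_k \mid s \in S)\, P(s \in S)}{P(F_1, \dots, F_k)}
  \approx \frac{P(s \in S)\, \prod_{j=1}^{k} P(F_j \mid s \in S)}{\prod_{j=1}^{k} P(F_j)}
```

The first equality is Bayes' rule; the approximation is where the statistical independence of the features is assumed. P(s ∈ S) is the same for every sentence, so it does not affect the ranking.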

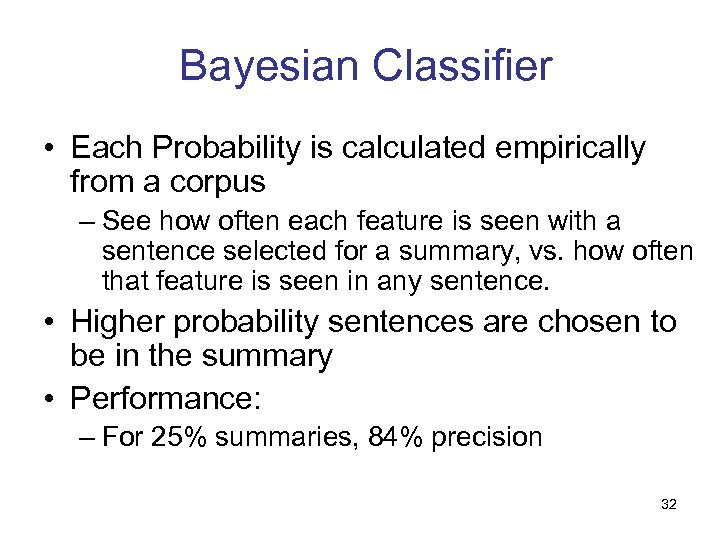

Bayesian Classifier
• Each probability is calculated empirically from a corpus
  – See how often each feature is seen with a sentence selected for a summary, vs. how often that feature is seen in any sentence
• Higher-probability sentences are chosen to be in the summary
• Performance:
  – For 25% summaries, 84% precision
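A minimal sketch of the counting described above: estimate, for each feature-value pair, how often it occurs in summary sentences and how often it occurs in any sentence, then score a new sentence as the prior times the product of those ratios (the Naïve Bayes independence assumption). There is no smoothing, and the feature names and the toy training data are invented for illustration.

```python
from collections import Counter


def train(examples):
    """examples: list of (features, in_summary); features maps name -> value."""
    n_summary = sum(1 for _, in_summary in examples if in_summary)
    if n_summary == 0:
        raise ValueError("need at least one summary sentence")
    seen, seen_in_summary = Counter(), Counter()
    for features, in_summary in examples:
        for pair in features.items():
            seen[pair] += 1                  # pair seen in any sentence
            if in_summary:
                seen_in_summary[pair] += 1   # pair seen in a summary sentence
    return {"n": len(examples), "n_summary": n_summary,
            "seen": seen, "seen_in_summary": seen_in_summary}


def score(features, model):
    """P(s in S) * prod_j P(F_j | s in S) / P(F_j), estimated from counts."""
    value = model["n_summary"] / model["n"]
    for pair in features.items():
        if model["seen"][pair] == 0:
            return 0.0  # unseen pair: no estimate without smoothing
        p_pair = model["seen"][pair] / model["n"]
        p_pair_given_summary = model["seen_in_summary"][pair] / model["n_summary"]
        value *= p_pair_given_summary / p_pair
    return value


# Hypothetical hand-labelled training sentences.
examples = [
    ({"paragraph_initial": True, "has_cue_phrase": True}, True),
    ({"paragraph_initial": True, "has_cue_phrase": False}, True),
    ({"paragraph_initial": False, "has_cue_phrase": False}, False),
    ({"paragraph_initial": False, "has_cue_phrase": False}, False),
]
model = train(examples)
print(score({"paragraph_initial": True, "has_cue_phrase": False}, model))
print(score({"paragraph_initial": False, "has_cue_phrase": False}, model))
```

Sentences would then be ranked by this score and the top n kept. Because the independence assumption rarely holds exactly, the value is best read as a ranking score rather than a calibrated probability.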

MaxEnt model
• Maximum entropy (MaxEnt) model: no independence assumptions
• Features: word pairs, sentence length, sentence position, discourse features (e.g., whether the sentence follows the “Introduction”, etc.)
  – MaxEnt outperforms Naïve Bayes

Cohesion-based methods
• Claim: important sentences/paragraphs are the most highly connected entities in more or less elaborate semantic structures
• Classes of approaches
  – Word co-occurrences
  – Local salience and grammatical relations
  – Co-reference
  – Lexical similarity (WordNet, lexical chains)
  – Combinations of the above

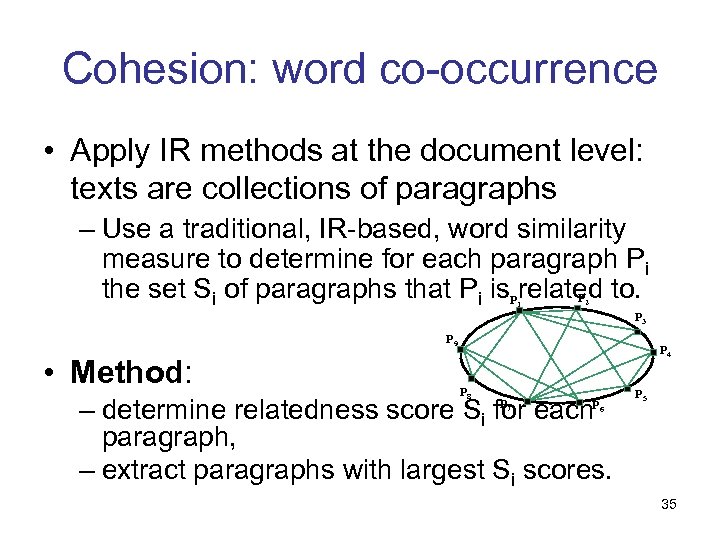

Cohesion: word co-occurrence
• Apply IR methods at the document level: texts are collections of paragraphs
  – Use a traditional, IR-based word similarity measure to determine, for each paragraph Pi, the set Si of paragraphs that Pi is related to
• Method:
  – Determine a relatedness score Si for each paragraph
  – Extract the paragraphs with the largest Si scores
[Figure: paragraphs P1 to P9 arranged as the nodes of a graph]
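A minimal sketch of the method above, assuming cosine similarity over raw word counts as the "traditional IR-based" measure and a fixed threshold to decide when two paragraphs count as related. The relatedness score of a paragraph is then the number of paragraphs it is linked to. The measure, the threshold, and the names are assumptions; the slide does not specify them.

```python
import math
import re
from collections import Counter


def bag(text):
    """Bag of lowercase word counts."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count bags."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def relatedness_scores(paragraphs, threshold=0.2):
    """For each paragraph, count the other paragraphs it is related to."""
    bags = [bag(p) for p in paragraphs]
    return [
        sum(1 for j, b in enumerate(bags) if i != j and cosine(a, b) >= threshold)
        for i, a in enumerate(bags)
    ]


paragraphs = [
    "cats chase mice",
    "mice fear cats",
    "stocks fell today",
    "cats sleep often",
]
print(relatedness_scores(paragraphs))                  # [2, 2, 0, 2]
print(relatedness_scores(paragraphs, threshold=0.5))   # [1, 1, 0, 0]
```

The paragraphs with the highest counts are the most connected and would be extracted first; the isolated paragraph about stocks is never selected.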

Combining the Evidence
• Problem: which extraction methods to believe?
• Answer: assume they are independent, and combine their evidence: merge individual sentence scores
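One simple way to merge individual sentence scores is a weighted sum, sketched below. The slide does not say how the scores are merged, so the linear form, the method names, and the weights are all assumptions.

```python
def combine(scores_by_method, weights):
    """Weighted sum of per-sentence scores.

    scores_by_method: {method name: [score for sentence 0, sentence 1, ...]}
    weights: {method name: weight}
    """
    n = len(next(iter(scores_by_method.values())))
    return [
        sum(weights[m] * scores[i] for m, scores in scores_by_method.items())
        for i in range(n)
    ]


def top_sentences(combined, k):
    """Indices of the k best sentences, returned in document order."""
    best = sorted(range(len(combined)), key=lambda i: (-combined[i], i))[:k]
    return sorted(best)


scores = {
    "position": [1.0, 0.5, 0.0],
    "cue": [0.0, 1.0, 0.0],
    "title": [1.0, 0.0, 1.0],
}
weights = {"position": 1.0, "cue": 2.0, "title": 1.0}
combined = combine(scores, weights)
print(combined)                    # [2.0, 2.5, 1.0]
print(top_sentences(combined, 2))  # [0, 1]
```

The weights could be set by hand or tuned on labelled data; returning the chosen sentences in document order keeps the extract readable.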

Information extraction methods
• Idea: content selection using templates
  – Predefine a template, whose slots specify what is of interest
  – Use a canonical IE system to extract the relevant information from a (set of) document(s); fill the template
  – Generate the content of the template as the summary

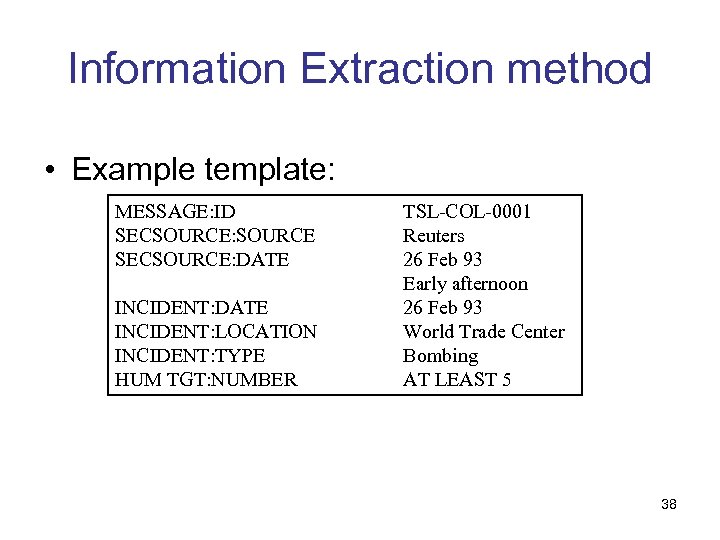

Information Extraction method
• Example template:

| Slot | Value |
| --- | --- |
| MESSAGE: ID | TSL-COL-0001 |
| SECSOURCE: SOURCE | Reuters |
| SECSOURCE: DATE | 26 Feb 93, early afternoon |
| INCIDENT: DATE | 26 Feb 93 |
| INCIDENT: LOCATION | World Trade Center |
| INCIDENT: TYPE | Bombing |
| HUM TGT: NUMBER | AT LEAST 5 |

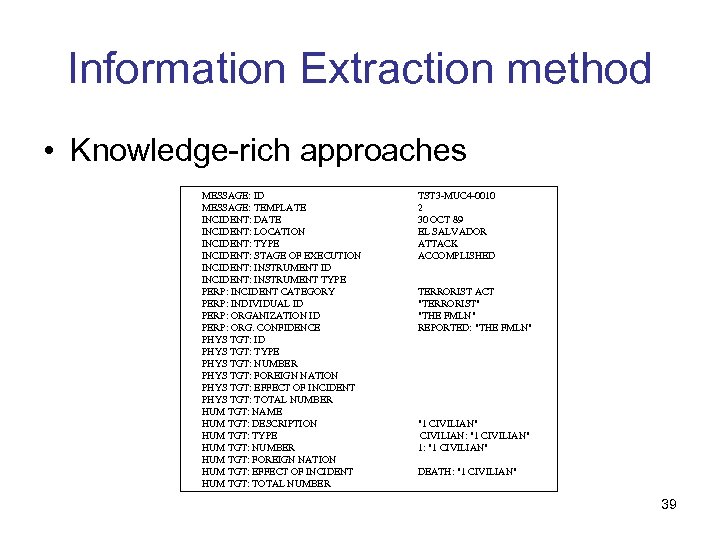

Information Extraction method
• Knowledge-rich approaches

| Slot | Value |
| --- | --- |
| MESSAGE: ID | TST3-MUC4-0010 |
| MESSAGE: TEMPLATE | 2 |
| INCIDENT: DATE | 30 OCT 89 |
| INCIDENT: LOCATION | EL SALVADOR |
| INCIDENT: TYPE | ATTACK |
| INCIDENT: STAGE OF EXECUTION | ACCOMPLISHED |
| INCIDENT: INSTRUMENT ID | |
| INCIDENT: INSTRUMENT TYPE | |
| PERP: INCIDENT CATEGORY | TERRORIST ACT |
| PERP: INDIVIDUAL ID | "TERRORIST" |
| PERP: ORGANIZATION ID | "THE FMLN" |
| PERP: ORG. CONFIDENCE | REPORTED: "THE FMLN" |
| PHYS TGT: ID | |
| PHYS TGT: TYPE | |
| PHYS TGT: NUMBER | |
| PHYS TGT: FOREIGN NATION | |
| PHYS TGT: EFFECT OF INCIDENT | |
| PHYS TGT: TOTAL NUMBER | |
| HUM TGT: NAME | |
| HUM TGT: DESCRIPTION | "1 CIVILIAN" |
| HUM TGT: TYPE | CIVILIAN: "1 CIVILIAN" |
| HUM TGT: NUMBER | 1: "1 CIVILIAN" |
| HUM TGT: FOREIGN NATION | |
| HUM TGT: EFFECT OF INCIDENT | DEATH: "1 CIVILIAN" |
| HUM TGT: TOTAL NUMBER | |

Generation
• Generating text from templates
  – “On October 30, 1989, one civilian was killed in a reported FMLN attack in El Salvador.”
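To make the idea of generating text from a filled template concrete, here is a deliberately naive sketch that fills one fixed sentence pattern. It assumes the slot values have already been normalized into English phrases; the dictionary keys and the pattern are hypothetical, and a real generator (with sentence planning and lexical choice) does far more than string substitution.

```python
def realize(template):
    """Render one filled template as an English sentence using a fixed pattern."""
    return (
        f"On {template['date']}, {template['victims']} {template['effect']} "
        f"in a {template['confidence']} {template['perpetrator']} "
        f"{template['incident_type']} in {template['location']}."
    )


# Slot values normalized by hand from the MUC-style template (illustrative).
template = {
    "date": "October 30, 1989",
    "victims": "one civilian",
    "effect": "was killed",
    "confidence": "reported",
    "perpetrator": "FMLN",
    "incident_type": "attack",
    "location": "El Salvador",
}
print(realize(template))
```

With these values the pattern reproduces the example sentence on the slide; any template with an empty slot would need a different pattern, which is one reason real systems plan sentences instead of using a single string.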

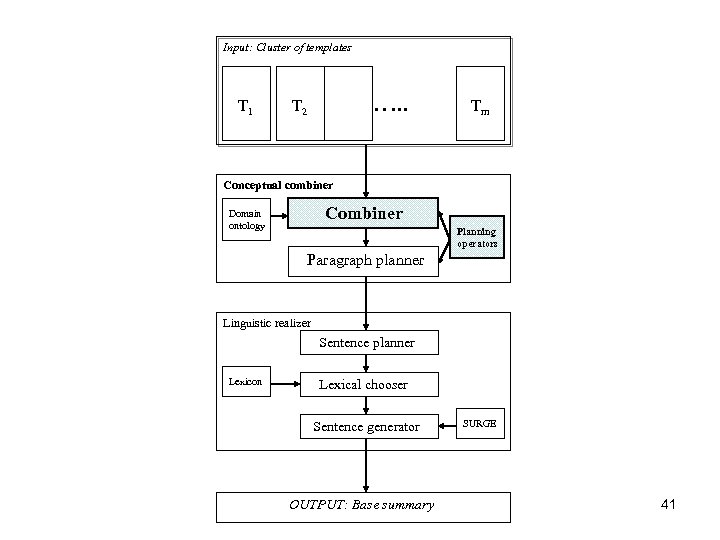

[Diagram: generation pipeline]
• Input: cluster of templates T1, T2, …, Tm
• Conceptual combiner: combiner, paragraph planner (resources: domain ontology, planning operators)
• Linguistic realizer: sentence planner, lexical chooser (resource: lexicon), sentence generator (SURGE)
• Output: base summary

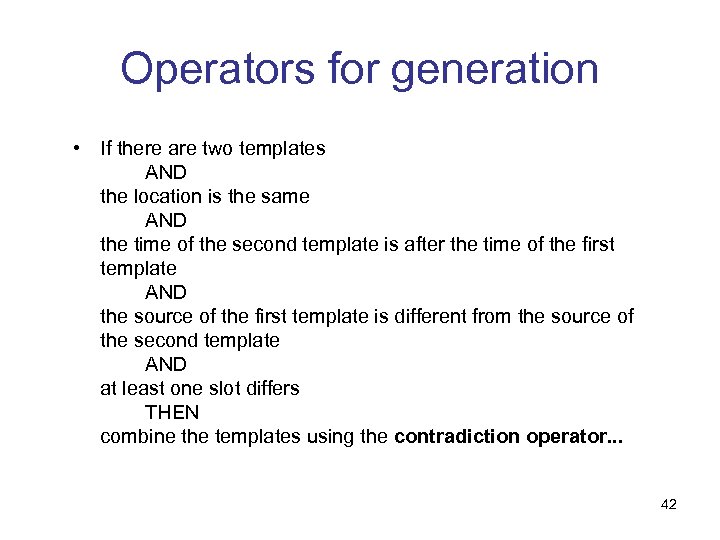

Operators for generation
• If there are two templates
  AND the location is the same
  AND the time of the second template is after the time of the first template
  AND the source of the first template is different from the source of the second template
  AND at least one slot differs
  THEN combine the templates using the contradiction operator
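The rule above translates almost directly into a predicate over two templates. In this sketch a template is a plain dictionary; the key names, the treatment of `source` and `time` as metadata rather than content slots, and the clock times in the example are assumptions.

```python
from datetime import datetime

# Slots that describe the report itself rather than the incident.
META_SLOTS = {"source", "time"}


def contradiction_applies(first, second):
    """True if the contradiction operator's precondition holds for two templates."""
    same_location = first["location"] == second["location"]
    second_is_later = second["time"] > first["time"]
    different_source = first["source"] != second["source"]
    content_slots = (set(first) | set(second)) - META_SLOTS
    some_slot_differs = any(first.get(s) != second.get(s) for s in content_slots)
    return same_location and second_is_later and different_source and some_slot_differs


# Hypothetical templates; the times of day are invented for the example.
reuters = {
    "source": "Reuters",
    "time": datetime(1993, 2, 26, 13, 0),
    "location": "World Trade Center",
    "type": "Bombing",
    "killed": "at least 6",
}
ap = {
    "source": "Associated Press",
    "time": datetime(1993, 2, 26, 15, 0),
    "location": "World Trade Center",
    "type": "Bombing",
    "killed": "exactly 5",
}
print(contradiction_applies(reuters, ap))  # True
print(contradiction_applies(ap, reuters))  # False: the second report is not later
```

When the predicate holds, the generator can join the two reports with a contrastive connective such as "however".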

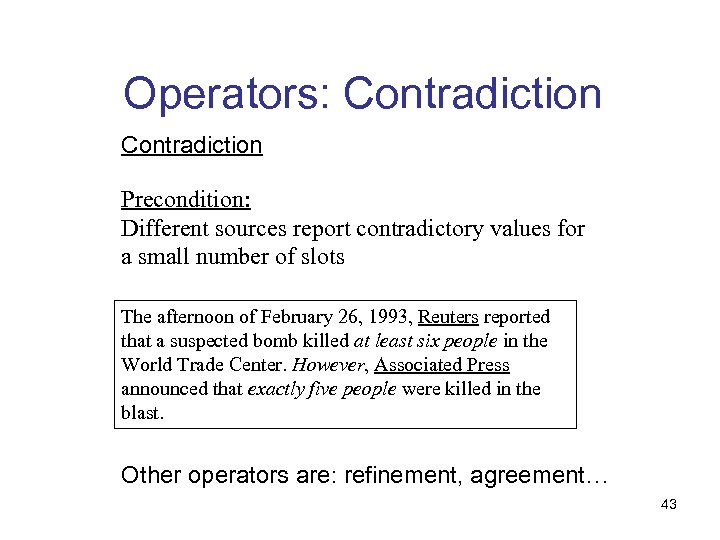

Operators: Contradiction
• Precondition: different sources report contradictory values for a small number of slots
• Example: “The afternoon of February 26, 1993, Reuters reported that a suspected bomb killed at least six people in the World Trade Center. However, Associated Press announced that exactly five people were killed in the blast.”
• Other operators: refinement, agreement, …

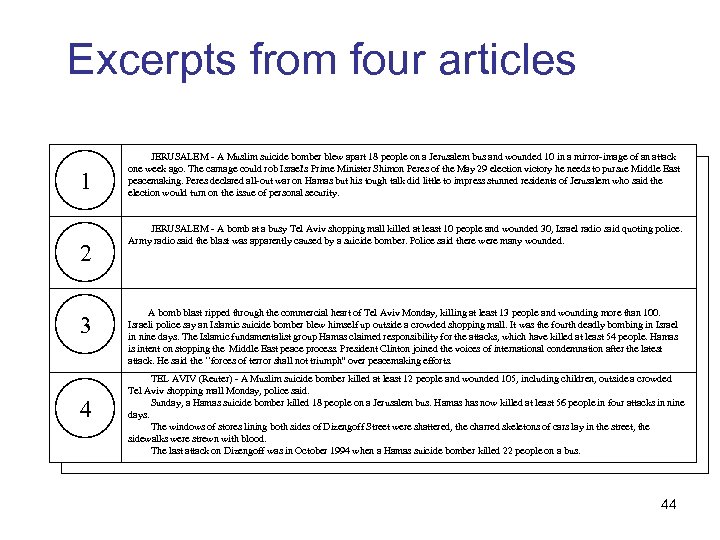

Excerpts from four articles

1. JERUSALEM - A Muslim suicide bomber blew apart 18 people on a Jerusalem bus and wounded 10 in a mirror-image of an attack one week ago. The carnage could rob Israel's Prime Minister Shimon Peres of the May 29 election victory he needs to pursue Middle East peacemaking. Peres declared all-out war on Hamas but his tough talk did little to impress stunned residents of Jerusalem who said the election would turn on the issue of personal security.

2. JERUSALEM - A bomb at a busy Tel Aviv shopping mall killed at least 10 people and wounded 30, Israel radio said quoting police. Army radio said the blast was apparently caused by a suicide bomber. Police said there were many wounded.

3. A bomb blast ripped through the commercial heart of Tel Aviv Monday, killing at least 13 people and wounding more than 100. Israeli police say an Islamic suicide bomber blew himself up outside a crowded shopping mall. It was the fourth deadly bombing in Israel in nine days. The Islamic fundamentalist group Hamas claimed responsibility for the attacks, which have killed at least 54 people. Hamas is intent on stopping the Middle East peace process. President Clinton joined the voices of international condemnation after the latest attack. He said the “forces of terror shall not triumph” over peacemaking efforts.

4. TEL AVIV (Reuter) - A Muslim suicide bomber killed at least 12 people and wounded 105, including children, outside a crowded Tel Aviv shopping mall Monday, police said. Sunday, a Hamas suicide bomber killed 18 people on a Jerusalem bus. Hamas has now killed at least 56 people in four attacks in nine days. The windows of stores lining both sides of Dizengoff Street were shattered, the charred skeletons of cars lay in the street, the sidewalks were strewn with blood. The last attack on Dizengoff was in October 1994 when a Hamas suicide bomber killed 22 people on a bus.

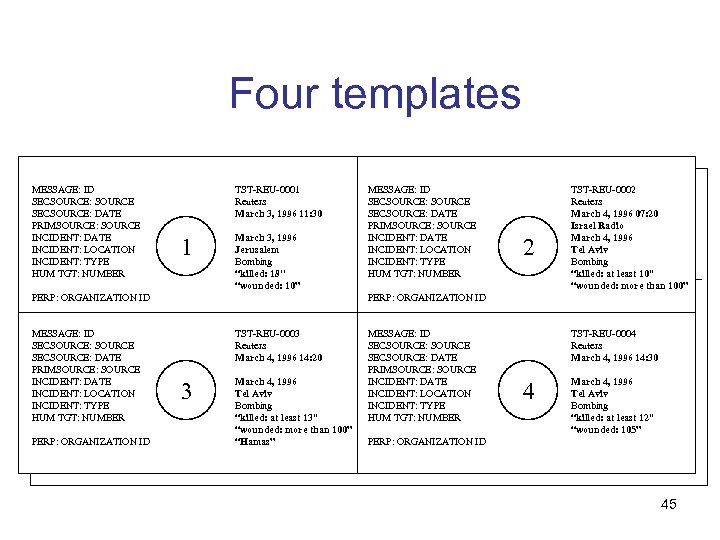

Four templates

| Slot | Template 1 | Template 2 | Template 3 | Template 4 |
|---|---|---|---|---|
| MESSAGE: ID | TST-REU-0001 | TST-REU-0002 | TST-REU-0003 | TST-REU-0004 |
| SECSOURCE: SOURCE | Reuters | Reuters | Reuters | Reuters |
| SECSOURCE: DATE | March 3, 1996 11:30 | March 4, 1996 07:20 | March 4, 1996 14:20 | March 4, 1996 14:30 |
| PRIMSOURCE: SOURCE | | Israel Radio | | |
| INCIDENT: DATE | March 3, 1996 | March 4, 1996 | March 4, 1996 | March 4, 1996 |
| INCIDENT: LOCATION | Jerusalem | Tel Aviv | Tel Aviv | Tel Aviv |
| INCIDENT: TYPE | Bombing | Bombing | Bombing | Bombing |
| HUM TGT: NUMBER | "killed: 18", "wounded: 10" | "killed: at least 10", "wounded: more than 100" | "killed: at least 13", "wounded: more than 100" | "killed: at least 12", "wounded: 105" |
| PERP: ORGANIZATION ID | | | "Hamas" | |

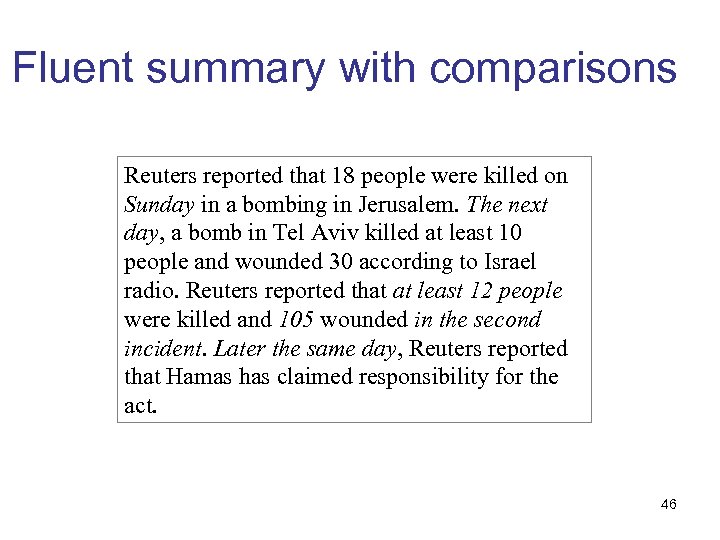

Fluent summary with comparisons

Reuters reported that 18 people were killed on Sunday in a bombing in Jerusalem. The next day, a bomb in Tel Aviv killed at least 10 people and wounded 30 according to Israel radio. Reuters reported that at least 12 people were killed and 105 wounded in the second incident. Later the same day, Reuters reported that Hamas has claimed responsibility for the act.
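
To make the idea of a summary "with comparisons" concrete, here is a minimal sketch of how two filled templates about the same incident could be compared to produce a sentence that attributes each figure to its source. It is only an illustration: the class `IncidentTemplate`, the helper `attribution`, and the function `compare_reports` are hypothetical names, the fields are a simplified subset of the template slots, and this is not the system that produced the summary above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class IncidentTemplate:
    """One filled template (hypothetical, simplified subset of the slots)."""
    message_id: str                 # MESSAGE: ID
    source: str                     # SECSOURCE: SOURCE
    primary_source: Optional[str]   # PRIMSOURCE: SOURCE, may be empty
    location: str                   # INCIDENT: LOCATION
    killed: str                     # reported text, e.g. "at least 10"


def attribution(t: IncidentTemplate) -> str:
    """Prefer the primary source when the template names one."""
    return t.primary_source or t.source


def compare_reports(a: IncidentTemplate, b: IncidentTemplate) -> Optional[str]:
    """Return a sentence comparing the death tolls of two reports,
    or None when the templates describe different locations."""
    if a.location != b.location:
        return None
    if a.killed == b.killed:
        return (f"{attribution(a)} and {attribution(b)} both reported "
                f"{a.killed} killed in {a.location}.")
    return (f"{attribution(a)} reported {a.killed} killed in {a.location}, "
            f"while {attribution(b)} reported {b.killed}.")


t1 = IncidentTemplate("TST-REU-0001", "Reuters", None, "Jerusalem", "18")
t2 = IncidentTemplate("TST-REU-0002", "Reuters", "Israel Radio", "Tel Aviv", "at least 10")
t4 = IncidentTemplate("TST-REU-0004", "Reuters", None, "Tel Aviv", "at least 12")

print(compare_reports(t2, t4))
```

Keeping the casualty figures as reported text (rather than parsing them into numbers) is a deliberate simplification here; a real system would need to interpret phrases such as "at least" before deciding whether two reports contradict each other.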

Outline
• Introduction and Applications
• Types of summarization tasks
• Approaches and paradigms (for single-document summarization)
• Evaluation methods

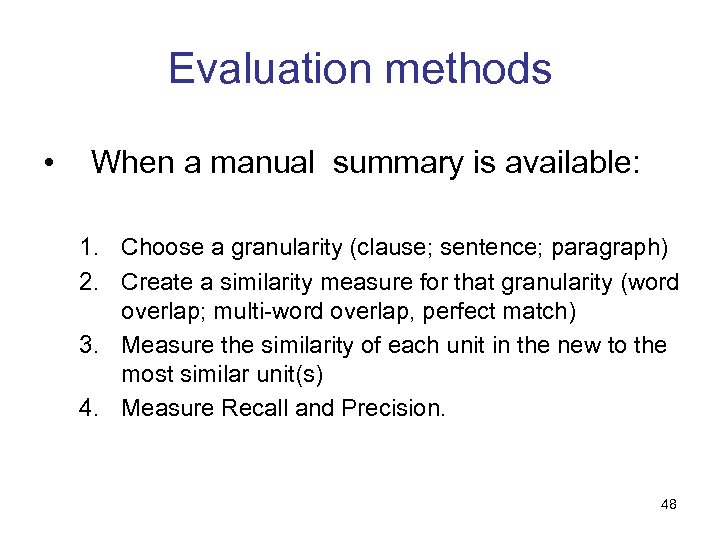

Evaluation methods
• When a manual summary is available:
  1. Choose a granularity (clause, sentence, paragraph)
  2. Create a similarity measure for that granularity (word overlap, multi-word overlap, perfect match)
  3. Measure the similarity of each unit in the new summary to the most similar unit(s) in the manual summary
  4. Measure recall and precision
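
The four steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a standard metric: the unit is whatever strings the caller passes in (for example sentences), similarity is Jaccard word overlap, and a unit counts as matched when its best overlap reaches a threshold of 0.5. The function names `word_overlap` and `precision_recall` and the threshold value are choices made for this example.

```python
def word_overlap(a: str, b: str) -> float:
    """Jaccard overlap between the word sets of two units (step 2)."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    if not words_a or not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)


def count_matched(units, others, threshold):
    """Count units whose most similar counterpart reaches the threshold (step 3)."""
    return sum(
        1 for u in units
        if max((word_overlap(u, o) for o in others), default=0.0) >= threshold
    )


def precision_recall(candidate_units, reference_units, threshold=0.5):
    """Step 4: precision over candidate units, recall over reference units."""
    precision = (count_matched(candidate_units, reference_units, threshold)
                 / len(candidate_units)) if candidate_units else 0.0
    recall = (count_matched(reference_units, candidate_units, threshold)
              / len(reference_units)) if reference_units else 0.0
    return precision, recall


candidate = [
    "hamas claimed responsibility for the attack",
    "the weather was sunny",
]
reference = [
    "hamas claimed responsibility for the attacks",
    "a bomb killed 13 people in tel aviv",
    "clinton condemned the attack",
]
precision, recall = precision_recall(candidate, reference)
print(precision, recall)
```

In this toy example one of the two candidate sentences matches a reference sentence, and one of the three reference sentences is covered, so precision is 1/2 and recall is 1/3. Changing the granularity or the similarity measure only requires swapping the unit strings or `word_overlap`.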

Evaluation methods
• When a manual summary is NOT available:
  1. Intrinsic: how good is the summary as a summary?
  2. Extrinsic: how well does the summary help the user?

Intrinsic measures
• Intrinsic measures: how good is the summary as a summary?
  – Problem: how do you measure the goodness of a summary?
  – Studies: compare to an ideal summary, or supply criteria (fluency, quality, informativeness, coverage, etc.)
• Summary evaluated on its own or by comparing it with the source
  – Is the text cohesive and coherent?
  – Does it contain the main topics of the document?
  – Are important topics omitted?

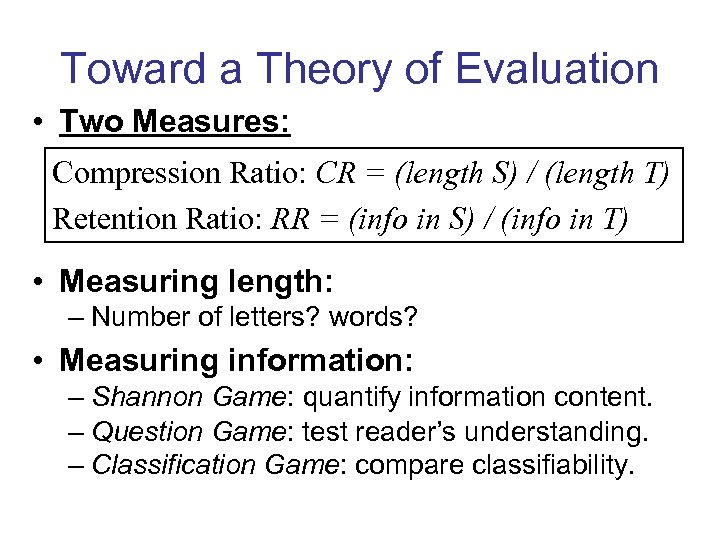

Toward a Theory of Evaluation
• Two measures, for a summary S of a text T:
  – Compression Ratio: CR = (length S) / (length T)
  – Retention Ratio: RR = (info in S) / (info in T)
• Measuring length:
  – Number of letters? words?
• Measuring information:
  – Shannon Game: quantify information content.
  – Question Game: test reader's understanding.
  – Classification Game: compare classifiability.
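
As a small worked illustration of the two ratios, the sketch below computes CR with length measured either in words or in letters, and estimates RR in the spirit of the Question Game as the number of questions answered correctly from the summary divided by the number answered correctly from the full text. The function names and the question-count estimate of "info" are assumptions made for this example; the slide does not prescribe a specific way to measure information.

```python
def compression_ratio(summary: str, text: str, unit: str = "words") -> float:
    """CR = (length S) / (length T), with length in words or in letters."""
    def length(s: str) -> int:
        if unit == "words":
            return len(s.split())
        # Count alphabetic characters only when measuring in letters.
        return sum(ch.isalpha() for ch in s)

    text_length = length(text)
    if text_length == 0:
        raise ValueError("source text has zero length")
    return length(summary) / text_length


def retention_ratio(correct_from_summary: int, correct_from_text: int) -> float:
    """RR estimate: questions answered correctly from S over those from T."""
    if correct_from_text == 0:
        raise ValueError("no questions were answerable from the source text")
    return correct_from_summary / correct_from_text


text = ("A bomb blast ripped through the commercial heart of Tel Aviv Monday, "
        "killing at least 13 people and wounding more than 100.")
summary = "A bomb in Tel Aviv killed at least 13 people."

print(compression_ratio(summary, text))           # length in words
print(compression_ratio(summary, text, "letters"))
print(retention_ratio(6, 8))                      # hypothetical question counts
```

A good summary aims for a low CR together with a high RR; the choice of length unit changes the CR value, which is why the unit should be reported alongside it.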

Extrinsic measures
• How well does the summary help a user with a task?
  – Problem: does summary quality correlate with performance?
  – Studies: GMAT tests; news analysis; IR; text categorization
• Evaluation in a specific task
  – Can the summary be used instead of the document?
  – Can the document be classified by reading the summary?
  – Can we answer questions by reading the summary?

The Document Understanding Conference (DUC)
• This is really the Text Summarization Competition
  – Started in 2001
• Task and Evaluation (for 2001-2004):
  – Various target sizes were used (10-400 words)
  – Both single and multiple-document summaries assessed
  – Summaries were manually judged for both content and readability.
  – Each peer (human or automatic) summary was compared against a single model summary

The Document Understanding Conference (DUC)
• Made a big change in 2005
  – Extrinsic evaluation proposed but rejected (write a natural disaster summary)
  – Instead: a complex question-focused summarization task that required summarizers to piece together information from multiple documents to answer a question or set of questions as posed in a DUC topic.
  – The topic also indicated a desired granularity of information

The Document Understanding Conference (DUC)
• Evaluation metrics for new task:
  – Grammaticality
  – Non-redundancy
  – Referential clarity
  – Focus
  – Structure and Coherence
  – Responsiveness (content-based evaluation)
• This was a difficult task to do well in.

DUC → TAC
• The TAC (Text Analysis Conference) summarization track is a continuation of DUC (since 2008)
  – http://www.nist.gov/tac/
• Two tasks:
  1. Initial: a 100-word summary of a set of 10 newswire articles about a particular topic.
  2. Update: a 100-word summary of a subsequent set of 10 newswire articles for the same topic, under the assumption that the reader has already read the first 10 documents. The purpose of the update summary is to inform the reader of new information about the topic.

Available corpora & resources
• DUC corpus (Document Understanding Conferences)
  – http://duc.nist.gov
• Text Analysis Conference (TAC 2009): http://www.nist.gov/tac/
  – TAC 2009 Summarization Track: http://www.nist.gov/tac/2009/Summarization/index.html
• SummBank corpus
  – http://www.summarization.com/summbank
• SUMMAC corpus
  – send mail to mani@mitre.org

Possible research topics
• Corpus creation and annotation
• MMM: Multidocument, Multimedia, Multilingual
• Evolving summaries
• Personalized summarization
• Web-based summarization
• Embedded systems
• Spoken document summarization
