b9b57b1a3b1659d707eba50c8d38a19a.ppt

- Number of slides: 106

Hybrid Intelligent Systems: Design and Applications
Evolutionary Neural Networks
Evolving Fuzzy Systems

Artificial Neural Networks - Features
• Typically, the structure of a neural network is fixed first, and one of a variety of mathematical algorithms is then used to determine the interconnection weights that maximize the accuracy of the outputs produced.
• The process by which the synaptic weights of a neural network are adapted to the problem environment is popularly known as learning.
• There are broadly three types of learning: supervised learning, unsupervised learning and reinforcement learning.

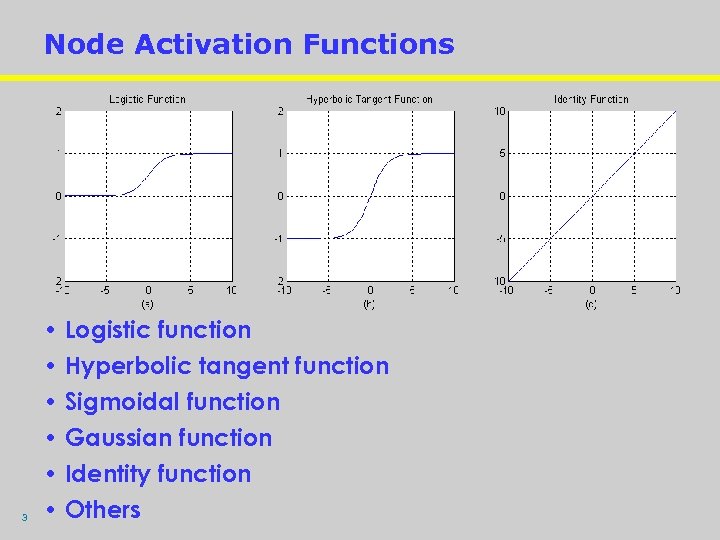

Node Activation Functions
• Logistic function
• Hyperbolic tangent function
• Sigmoidal function
• Gaussian function
• Identity function
• Others

Common Neural Network Architectures
• Feedforward architecture, where the network does not possess any loops. Representative examples are the single layer/multilayer perceptron, support vector machines (SVM), radial basis function networks (RBFN), etc.
• Feedback (recurrent) architecture, where loops exist due to feedback connections. Typical examples include the Hopfield network, the brain-state-in-a-box (BSB) model, the Boltzmann machine, bidirectional associative memories (BAM), adaptive resonance theory (ART), Kohonen’s self-organizing feature maps (SOFM), etc.

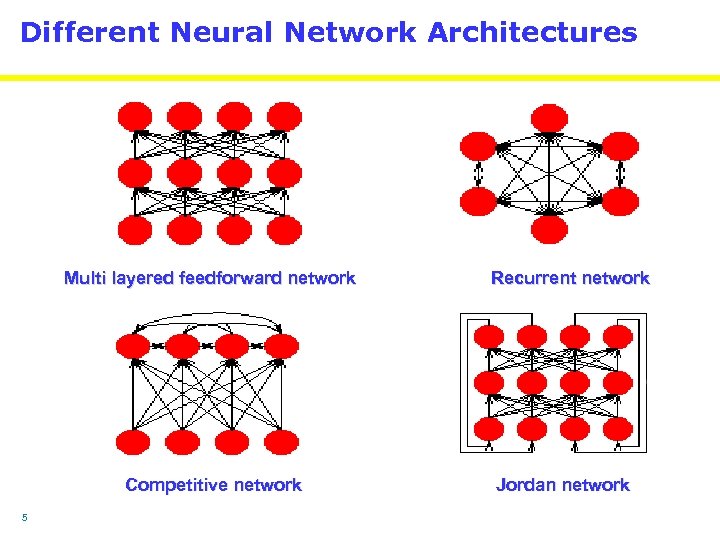

Different Neural Network Architectures (figure panels): multilayered feedforward network, competitive network, recurrent network, Jordan network

Mathematical Formalism
• Put simply, a neuron receives inputs from the activated neuron(s) preceding it, sums them up and applies a characteristic activation to generate an impulse.
• The summing mechanism is controlled by the interconnection weights between the receiving neuron and its preceding ones, inasmuch as they decide how strongly the activation state of each preceding neuron contributes.
• Let the n inputs $\{q_1, q_2, q_3, \ldots, q_n\}$ arriving at a receiving neuron from n preceding neurons lie in a vector space $Q^n$.
• Let the interconnection weight matrix from m such receiving neurons to their n preceding neurons be represented by $W_{m \times n} = \{w_{ij},\ i = 1, 2, 3, \ldots, n;\ j = 1, 2, 3, \ldots, m\}$.

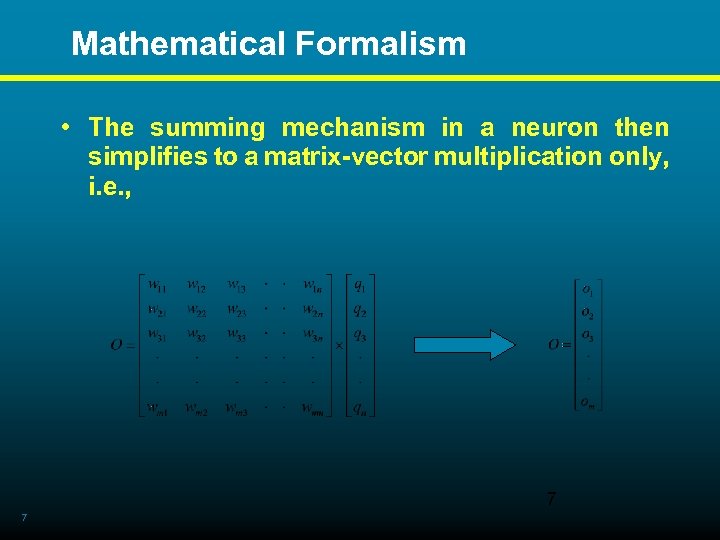

Mathematical Formalism
• The summing mechanism in a neuron then simplifies to a matrix-vector multiplication, i.e.,

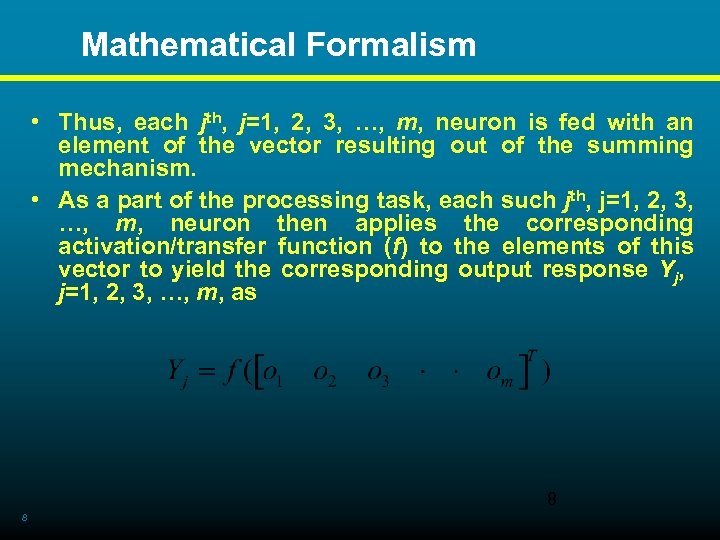

Mathematical Formalism
• Thus, the jth neuron, j = 1, 2, 3, …, m, is fed with one element of the vector resulting from the summing mechanism.
• As part of the processing task, each jth neuron then applies the corresponding activation/transfer function f to its element of this vector to yield the output response $Y_j$, j = 1, 2, 3, …, m, as $Y_j = f\left(\sum_{i=1}^{n} w_{ij}\, q_i\right)$.
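The summing-then-activation step described on these slides can be sketched in a few lines of plain Python. This is only an illustration: the function names `logistic` and `layer_output` and the sample numbers are assumptions, and the weights are stored as one row per receiving neuron (so `W[j][i]` plays the role of $w_{ij}$).

```python
import math

def logistic(u):
    """Logistic activation, one of the node activation functions listed earlier."""
    return 1.0 / (1.0 + math.exp(-u))

def layer_output(q, W, f=logistic):
    """Return [Y_1, ..., Y_m] with Y_j = f(sum_i W[j][i] * q[i]).

    q: list of n inputs; W: m rows (one per receiving neuron), n weights each.
    """
    return [f(sum(w * x for w, x in zip(row, q))) for row in W]

# Hypothetical example: n = 3 inputs feeding m = 2 receiving neurons.
q = [1.0, 0.5, -1.0]
W = [[0.2, -0.4, 0.1],
     [0.0, 0.0, 0.0]]
Y = layer_output(q, W)
print(Y)
```

Passing a different `f` (for example the identity) changes only the activation, not the matrix-vector summation.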

Mathematical Formalism
• Thus, given a set of inputs in $Q^n$, the interconnection weight matrix $W_{m \times n} = \{w_{ij},\ i = 1, 2, 3, \ldots, n;\ j = 1, 2, 3, \ldots, m\}$ together with the characteristic activation function f decides the output responses of the neurons.
• Any variation in $W_{m \times n}$ yields different responses under the same characteristic neuronal activation f.
• From the biological perspective, the interconnection weight matrix $W_{m \times n}$ thus plays a role similar to the synaptic weights of a biological neuron and houses the memory of an artificial neural network.

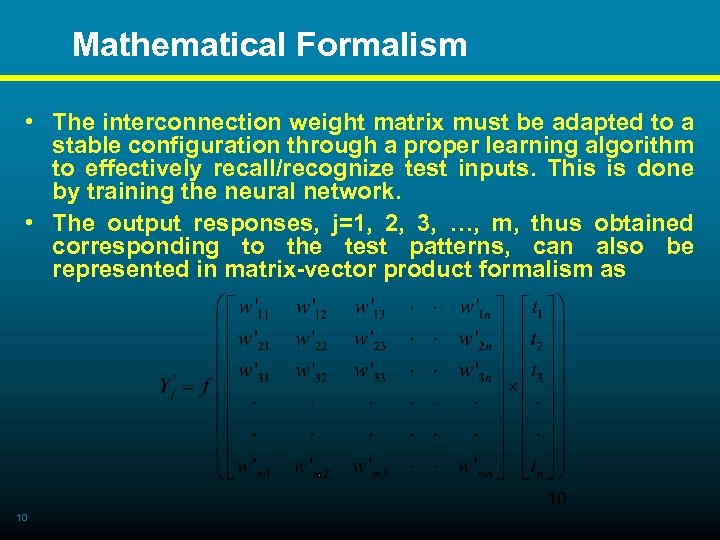

Mathematical Formalism
• The interconnection weight matrix must be adapted to a stable configuration through a proper learning algorithm in order to recall/recognize test inputs effectively. This is done by training the neural network.
• The output responses $Y_j$, j = 1, 2, 3, …, m, obtained for the test patterns can also be represented in matrix-vector product formalism as $Y = f(W_{m \times n}\, T)$.

Mathematical Formalism
• $T = \{t_1, t_2, t_3, \ldots, t_n\}^T$ is the test input vector and $W_{m \times n} = \{w_{ij},\ i = 1, 2, 3, \ldots, n;\ j = 1, 2, 3, \ldots, m\}$ is the stabilized interconnection weight matrix.

Operational modes
• Supervised mode: requires an a priori knowledge base to guide the learning phase.
• Uses a training set of input-output patterns and an input-output relationship.
• If an input vector $X_k \in \mathbb{R}^n$ is related to an output vector $D_k \in \mathbb{R}^p$ by a relationship $D_k = T\{X_k, \ldots\}$, the basic philosophy behind the supervised learning algorithm is to yield a mapping of the form $f: \mathbb{R}^n \to \mathbb{R}^p$, in consonance with T, such that the input-output data relationship is learnt.
• The supervised algorithm aims to reduce the error $E = D_k - S_k$, where $S_k$ is the output response generated by $X_k$.
• This error reduction is carried out by the gradient descent method. When E has been reduced to an acceptable limit, the network generates a response similar to $D_k$ when fed with an input similar to $X_k$.

Operational modes
• Unsupervised mode: an adaptive learning process.
• Here, the neural network self-organizes its topological configuration by generating exemplars/prototypes of the input vectors $X_k$ presented.
• The algorithm stores the exemplars/prototypes of the input data set in a number of predefined categories/classes.
• When an unknown input is presented to the network, the network associates it with one of the stored categories based on a match.
• However, if the input belongs to a completely new category/class, the storage states of the network are updated to incorporate the new class.
• Other modes include reinforcement learning, the semi-supervised mode and the transduction mode.

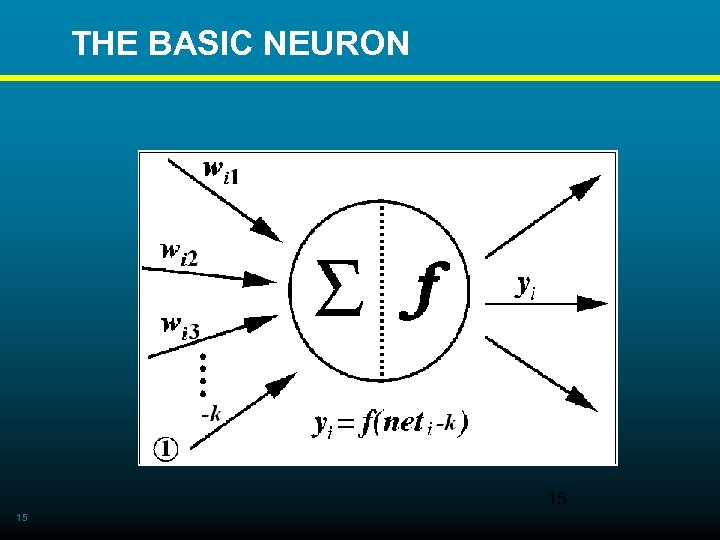

THE BASIC NEURON
• Simplest form of artificial neural network model, proposed by McCulloch and Pitts in 1943. It operates in a supervised mode.
• Also referred to as the single layer perceptron.
• Comprises an input and an output layer with interconnections between them.
• Processed information propagates from the input layer to the output layer via the unidirectional interconnections between the input and output layer neurons.
• Each of these interconnections possesses a weight w, which indicates its strength.
• There is no interconnection from the output layer back to the input layer.

THE BASIC NEURON

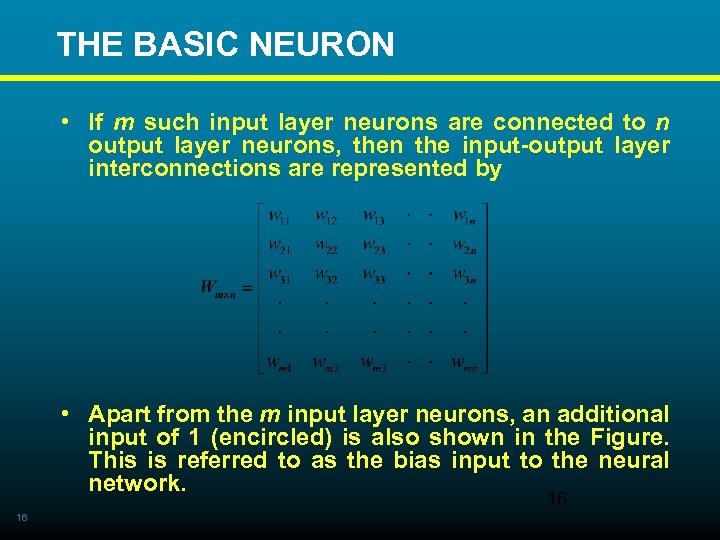

THE BASIC NEURON
• If m such input layer neurons are connected to n output layer neurons, the input-output layer interconnections are represented by the weights $w_{ji}$, j = 1, 2, 3, …, m; i = 1, 2, 3, …, n.
• Apart from the m input layer neurons, an additional input of 1 (encircled) is also shown in the figure. This is referred to as the bias input to the neural network.

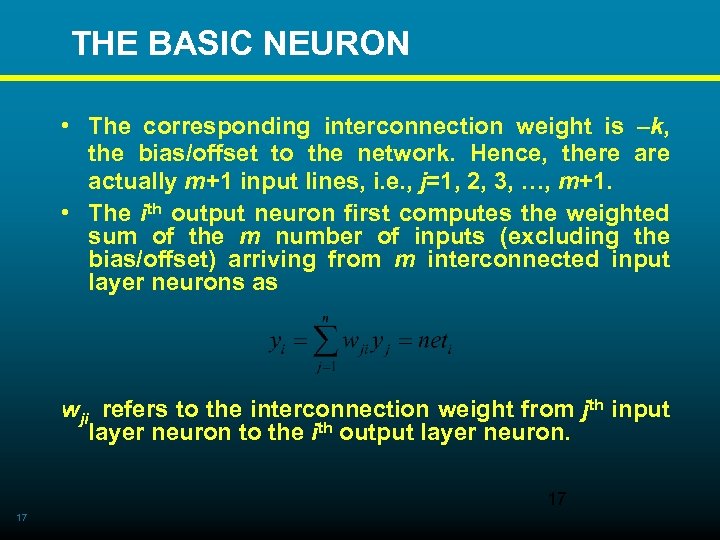

THE BASIC NEURON
• The corresponding interconnection weight is $-k$, the bias/offset to the network. Hence, there are actually m+1 input lines, i.e., j = 1, 2, 3, …, m+1.
• The ith output neuron first computes the weighted sum of the m inputs (excluding the bias/offset) arriving from the m interconnected input layer neurons, where $w_{ji}$ refers to the interconnection weight from the jth input layer neuron to the ith output layer neuron.

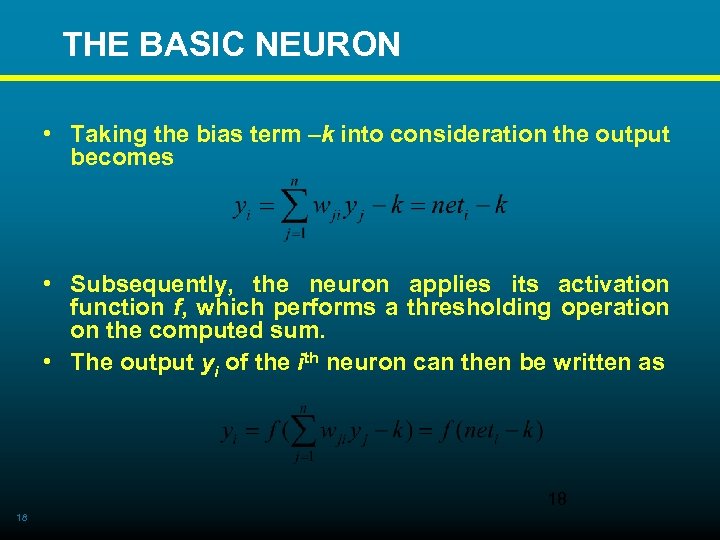

THE BASIC NEURON
• Taking the bias term $-k$ into consideration, the weighted sum is offset by $-k$.
• Subsequently, the neuron applies its activation function f, which performs a thresholding operation on the computed sum.
• The output $y_i$ of the ith neuron is the result of applying f to this sum:

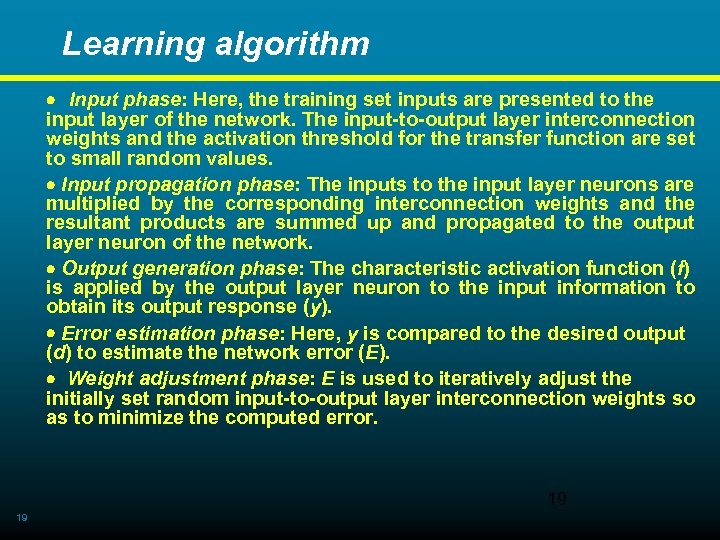

Learning algorithm
• Input phase: the training set inputs are presented to the input layer of the network. The input-to-output layer interconnection weights and the activation threshold for the transfer function are set to small random values.
• Input propagation phase: the inputs to the input layer neurons are multiplied by the corresponding interconnection weights, and the resultant products are summed up and propagated to the output layer neuron of the network.
• Output generation phase: the characteristic activation function f is applied by the output layer neuron to the input information to obtain its output response y.
• Error estimation phase: y is compared to the desired output d to estimate the network error E.
• Weight adjustment phase: E is used to iteratively adjust the initially random input-to-output layer interconnection weights so as to minimize the computed error.
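The five phases can be traced in a minimal single-neuron sketch. It assumes the classic perceptron update $w \leftarrow w + \eta\,(d - y)\,x$ with a threshold activation; the names `step`, `train_perceptron` and `predict`, the AND data set and the zero initial weights (used here instead of small random values so the run is reproducible) are all illustrative choices, not taken from the slides.

```python
def step(u):
    """Threshold activation: fire (1) when the net input is non-negative."""
    return 1 if u >= 0 else 0

def train_perceptron(samples, lr=1.0, epochs=200):
    n = len(samples[0][0])
    w = [0.0] * (n + 1)              # last entry is the bias weight (its input is fixed at 1)
    for _ in range(epochs):
        mistakes = 0
        for x, d in samples:
            xb = list(x) + [1.0]                        # input phase
            u = sum(wi * xi for wi, xi in zip(w, xb))   # input propagation phase
            y = step(u)                                 # output generation phase
            e = d - y                                   # error estimation phase
            if e != 0:                                  # weight adjustment phase
                mistakes += 1
                w = [wi + lr * e * xi for wi, xi in zip(w, xb)]
        if mistakes == 0:            # every pattern reproduced: stop early
            break
    return w

def predict(w, x):
    return step(sum(wi * xi for wi, xi in zip(w, list(x) + [1.0])))

# Hypothetical, linearly separable training set: logical AND.
AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w = train_perceptron(AND)
print(w, [predict(w, x) for x, _ in AND])
```

A linearly separable problem is used on purpose: a single layer of this kind cannot fit problems such as XOR, which is what motivates the multilayer perceptron later in the deck.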

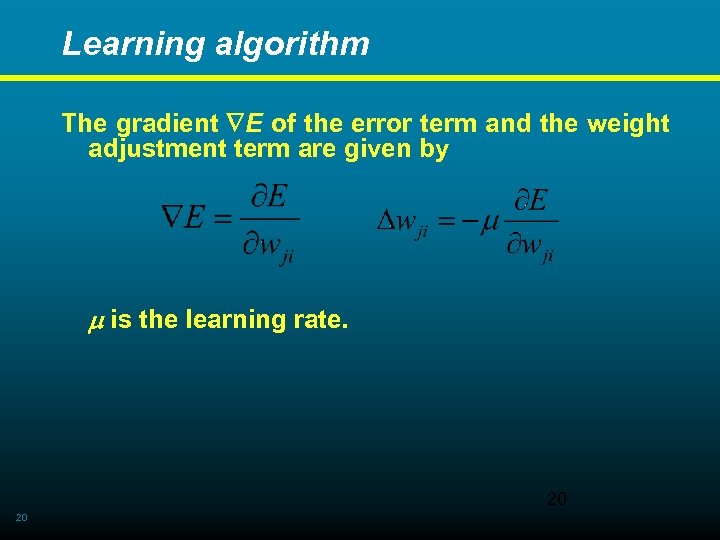

Learning algorithm
The gradient $\nabla E$ of the error term and the weight adjustment term are given by the following expressions, in which the coefficient scaling the gradient is the learning rate.

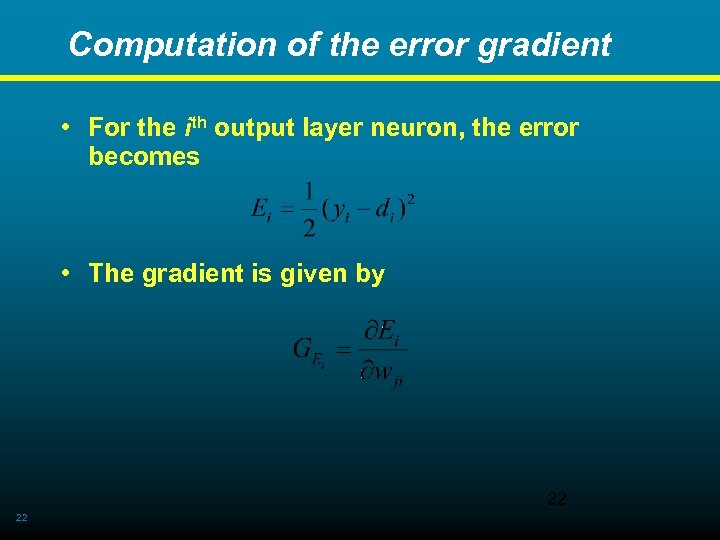

Computation of the error gradient
• The gradient $\nabla E$ of the network error E is computed with respect to each interconnection weight $w_{ji}$ so as to determine the contribution of the initially random interconnection weights to the overall E.
• The network error is represented as a sum of squared error terms over the output layer, where N is the number of neurons in the output layer of the network. The square of the error term is taken so that each contribution is never negative.

Computation of the error gradient
• For the ith output layer neuron, the error and its gradient are given by:

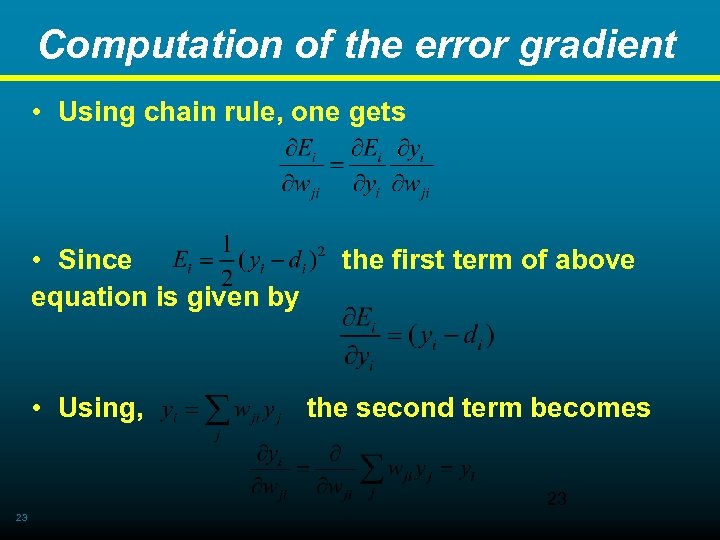

Computation of the error gradient
• Using the chain rule, the gradient splits into a product of two terms.
• The first term and, after substitution, the second term are given by:

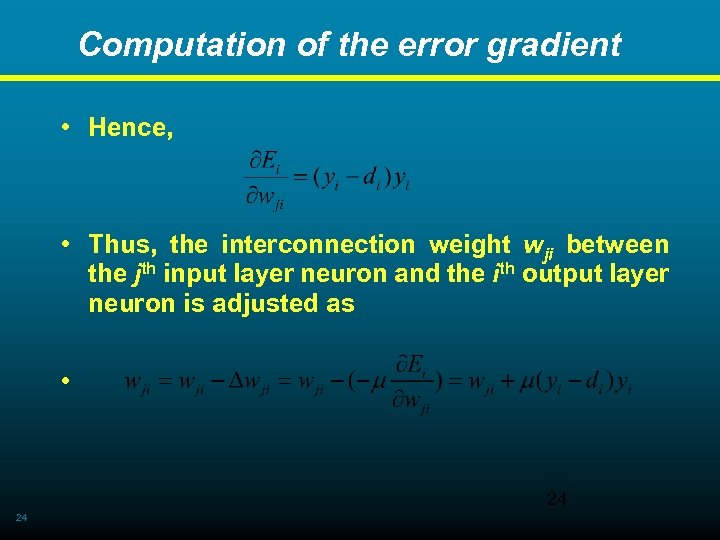

Computation of the error gradient
• Hence the gradient follows, and the interconnection weight $w_{ji}$ between the jth input layer neuron and the ith output layer neuron is adjusted as:

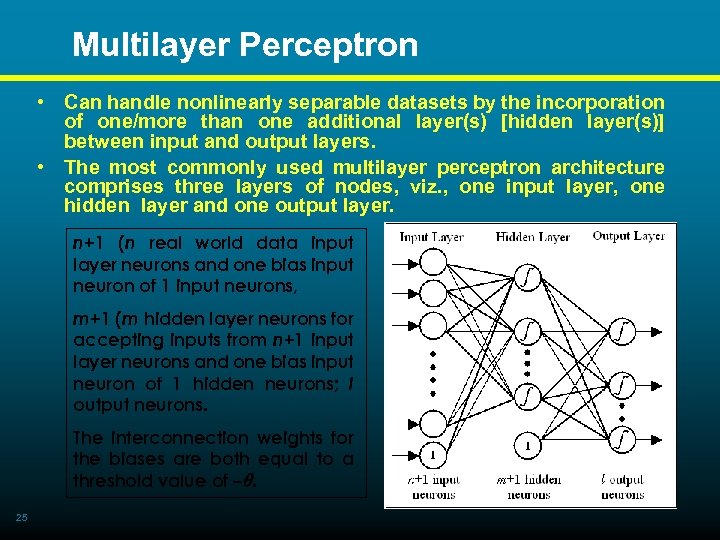
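For readers without access to the slide images, the chain-rule steps named above can be written out as a worked derivation. This is a sketch under stated assumptions, not a transcription of the slides: the factor $\tfrac{1}{2}$, a differentiable activation $f$, the net input symbol $u_i$ and the learning-rate symbol $\eta$ are all assumed here.

$$E = \frac{1}{2}\sum_{i=1}^{N} (d_i - y_i)^2, \qquad y_i = f(u_i), \qquad u_i = \sum_{j=1}^{m} w_{ji}\,x_j - k$$

$$\frac{\partial E}{\partial w_{ji}} = \frac{\partial E}{\partial y_i}\cdot\frac{\partial y_i}{\partial w_{ji}}, \qquad \frac{\partial E}{\partial y_i} = -(d_i - y_i), \qquad \frac{\partial y_i}{\partial w_{ji}} = f'(u_i)\,x_j$$

$$\frac{\partial E}{\partial w_{ji}} = -(d_i - y_i)\,f'(u_i)\,x_j, \qquad w_{ji} \leftarrow w_{ji} - \eta\,\frac{\partial E}{\partial w_{ji}} = w_{ji} + \eta\,(d_i - y_i)\,f'(u_i)\,x_j$$

With the identity activation, $f'(u_i) = 1$ and the update reduces to the familiar delta rule.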

Multilayer Perceptron
• Can handle nonlinearly separable datasets by incorporating one or more additional layers (hidden layers) between the input and output layers.
• The most commonly used multilayer perceptron architecture comprises three layers of nodes, viz., one input layer, one hidden layer and one output layer.
• n+1 input neurons (n real-world data input layer neurons and one bias input neuron of 1); m+1 hidden neurons (m hidden layer neurons accepting inputs from the n+1 input layer neurons and one bias input neuron of 1); l output neurons.
• The interconnection weights for both biases are equal to a negative threshold value.

Multilayer Perceptron
• All the actual hidden and output layer neurons exhibit nonlinear transfer characteristics.
• It is not possible to know the target values for the hidden layers. Hence, the gradient descent algorithm alone is not sufficient for error adjustment.
• In a multilayer architecture with multiple hidden layers, the process of computing the partial derivatives and applying them to each of the weights starts with the hidden-to-output layer weights, proceeds through the preceding hidden layers to cover the remaining hidden-to-hidden layer weights (when there is more than one hidden layer), and ends with the input-to-hidden layer weights.

Multilayer Perceptron
• This also implies that a change in a particular layer-to-layer set of weights requires the partial derivatives of the layer processed before it to be known already. This algorithm of adjusting the weights of the different network layers, starting from the output layer and proceeding back towards the input layer, is referred to as the backpropagation algorithm.
• It is used to overcome the shortcomings of the gradient descent algorithm in dealing with error compensation and weight adjustment in neural network architectures with more layers.

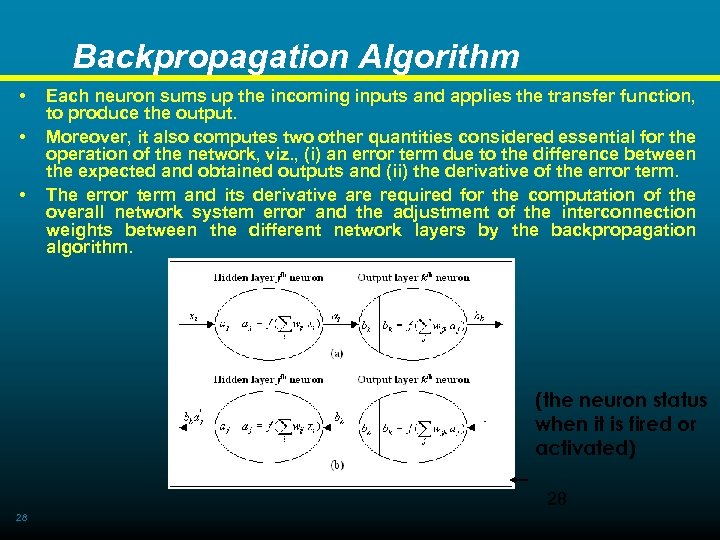

Backpropagation Algorithm
• Each neuron sums up the incoming inputs and applies the transfer function to produce the output (the neuron status when it is fired or activated).
• It also computes two other quantities considered essential for the operation of the network, viz., (i) an error term due to the difference between the expected and obtained outputs and (ii) the derivative of the error term.
• The error term and its derivative are required for computing the overall network system error and for adjusting the interconnection weights between the different network layers by the backpropagation algorithm.

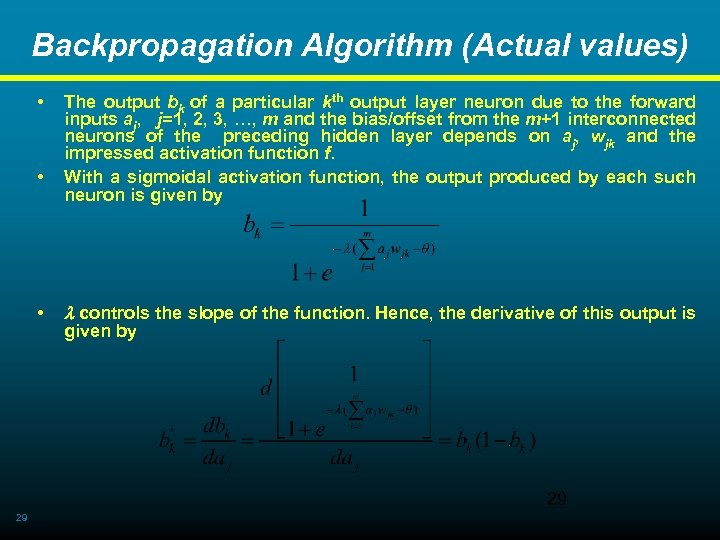

Backpropagation Algorithm (Actual values)
• The output $b_k$ of a particular kth output layer neuron due to the forward inputs $a_j$, j = 1, 2, 3, …, m, and the bias/offset from the m+1 interconnected neurons of the preceding hidden layer depends on $a_j$, $w_{jk}$ and the impressed activation function f.
• With a sigmoidal activation function, the output produced by each such neuron and the derivative of this output are given by the following expressions, in which a slope parameter controls the slope of the function.

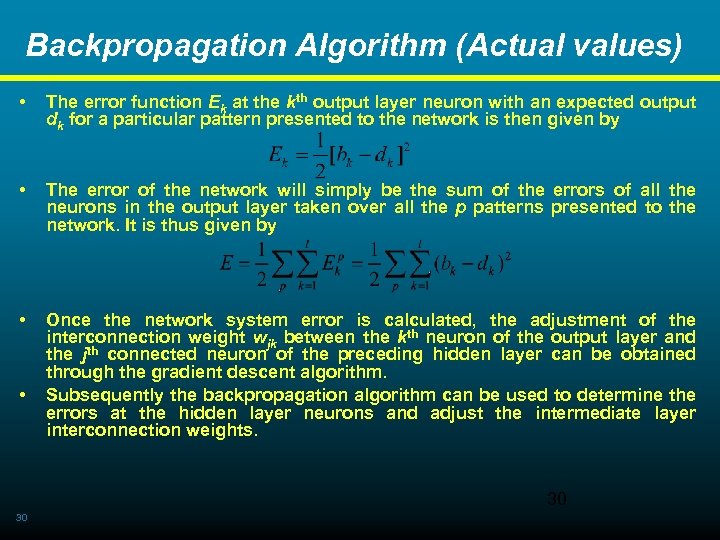

Backpropagation Algorithm (Actual values)
• The error function $E_k$ at the kth output layer neuron is computed from the expected output $d_k$ and the obtained output for a particular pattern presented to the network.
• The error of the network is simply the sum of the errors of all the neurons in the output layer, taken over all the p patterns presented to the network.
• Once the network system error is calculated, the adjustment of the interconnection weight $w_{jk}$ between the kth neuron of the output layer and the jth connected neuron of the preceding hidden layer can be obtained through the gradient descent algorithm. Subsequently, the backpropagation algorithm can be used to determine the errors at the hidden layer neurons and adjust the intermediate layer interconnection weights.

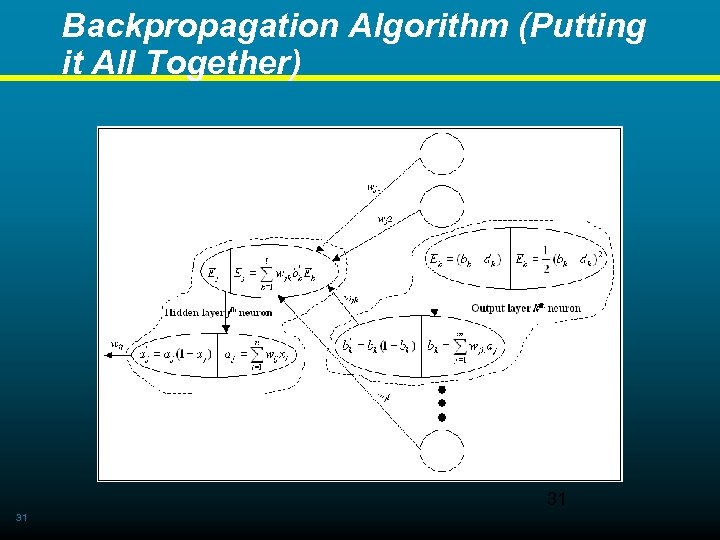

Backpropagation Algorithm (Putting it All Together)

Backpropagation Algorithm
• Thus, the kth output layer neuron (shown by dotted lines) can be regarded as a collection of two connected computational/storage parts: one part housing the output $b_k$ and its derivative, the other part housing the error term $E_k$ and its derivative.
• Following the propagation characteristics of the neurons, the error derivative from one part of the kth output layer neuron is propagated to its other part, which contains $b_k$ and its derivative. Hence this kth output layer neuron computes the product of the two derivatives, which represents the kth component of the error to be backpropagated to the connected hidden layer neurons.
• This backpropagated error term arises from the summed-up product of two components: (i) the forward direction propagated outputs $a_j$, j = 1, 2, 3, …, m, and the bias/offset of the connected hidden layer neurons, and (ii) the hidden-to-output layer interconnection weights $w_{jk}$.
• Hence, the backpropagated error at the kth output neuron is given by the gradient of E with respect to both $a_j$ and $w_{jk}$, i.e.,

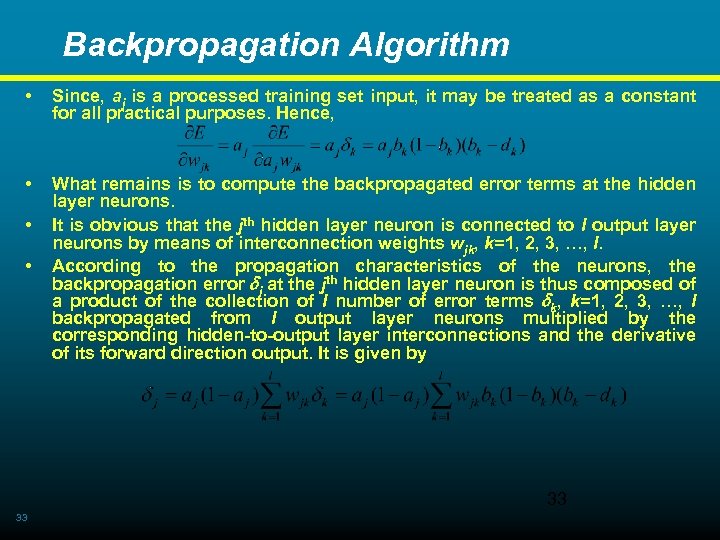

Backpropagation Algorithm
• Since $a_j$ is a processed training set input, it may be treated as a constant for all practical purposes, so only the derivative with respect to $w_{jk}$ remains.
• What remains is to compute the backpropagated error terms at the hidden layer neurons. The jth hidden layer neuron is connected to l output layer neurons by means of interconnection weights $w_{jk}$, k = 1, 2, 3, …, l. According to the propagation characteristics of the neurons, the backpropagated error at the jth hidden layer neuron is thus composed of the l error terms backpropagated from the l output layer neurons, each multiplied by the corresponding hidden-to-output layer interconnection weight, together with the derivative of the neuron's forward direction output. It is given by:

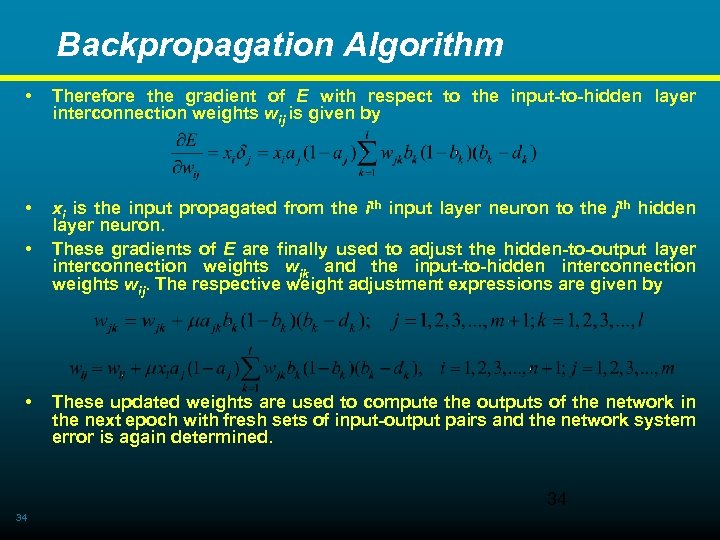

Backpropagation Algorithm
• The gradient of E with respect to the input-to-hidden layer interconnection weights $w_{ij}$ then follows, where $x_i$ is the input propagated from the ith input layer neuron to the jth hidden layer neuron.
• These gradients of E are finally used to adjust the hidden-to-output layer interconnection weights $w_{jk}$ and the input-to-hidden layer interconnection weights $w_{ij}$.
• The updated weights are used to compute the outputs of the network in the next epoch with fresh sets of input-output pairs, and the network system error is determined again.

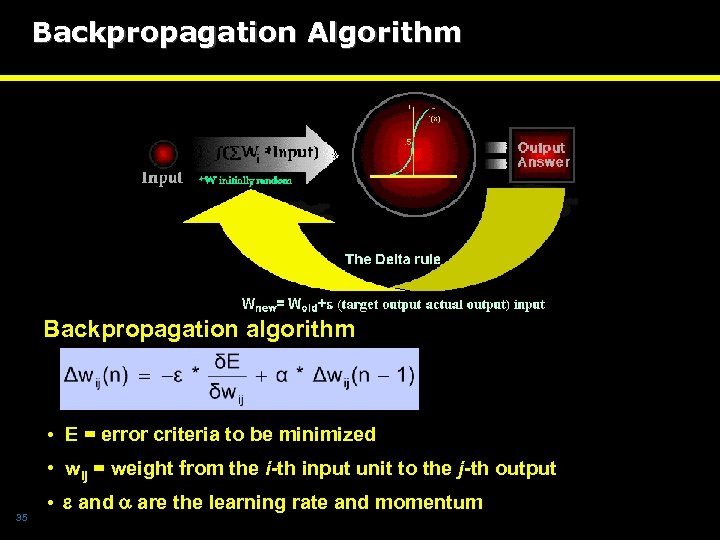
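The forward pass, the two backpropagated error terms and the weight adjustment can be put together in a short stdlib-only sketch for one hidden layer. Several things here are assumptions rather than content from the slides: a logistic sigmoid with unit slope, the error $E = \tfrac{1}{2}\sum_k (d_k - b_k)^2$, per-pattern (online) updates, the learning rate value, and all function names. Names follow the slide symbols where possible (`a` for hidden outputs, `b` for network outputs).

```python
import math
import random

def sigmoid(u):
    return 1.0 / (1.0 + math.exp(-u))

def init_weights(n_in, n_out, rng, scale=0.5):
    """n_out rows of n_in + 1 weights; the extra weight multiplies the bias input 1."""
    return [[rng.uniform(-scale, scale) for _ in range(n_in + 1)] for _ in range(n_out)]

def forward(x, w_ih, w_ho):
    xb = list(x) + [1.0]                                                  # inputs + bias
    a = [sigmoid(sum(w * v for w, v in zip(row, xb))) for row in w_ih]    # hidden outputs a_j
    ab = a + [1.0]                                                        # hidden outputs + bias
    b = [sigmoid(sum(w * v for w, v in zip(row, ab))) for row in w_ho]    # outputs b_k
    return xb, ab, b

def pattern_error(x, d, w_ih, w_ho):
    b = forward(x, w_ih, w_ho)[2]
    return 0.5 * sum((dk - bk) ** 2 for dk, bk in zip(d, b))

def gradients(x, d, w_ih, w_ho):
    xb, ab, b = forward(x, w_ih, w_ho)
    # Output-layer error terms: dE/d(net_k) = -(d_k - b_k) * b_k * (1 - b_k).
    delta_k = [-(dk - bk) * bk * (1.0 - bk) for dk, bk in zip(d, b)]
    # Hidden-layer error terms: weighted sum of output terms times a_j * (1 - a_j).
    delta_j = [ab[j] * (1.0 - ab[j]) * sum(dk * row[j] for dk, row in zip(delta_k, w_ho))
               for j in range(len(w_ih))]
    g_ho = [[dk * aj for aj in ab] for dk in delta_k]   # dE/dw_jk
    g_ih = [[dj * xi for xi in xb] for dj in delta_j]   # dE/dw_ij
    return g_ih, g_ho

def train(patterns, w_ih, w_ho, lr=0.5, epochs=2000):
    for _ in range(epochs):
        for x, d in patterns:
            g_ih, g_ho = gradients(x, d, w_ih, w_ho)
            for W, G in ((w_ih, g_ih), (w_ho, g_ho)):
                for row, grow in zip(W, G):
                    for i, g in enumerate(grow):
                        row[i] -= lr * g                # gradient descent step
    return w_ih, w_ho

# Hypothetical demo: XOR with 3 hidden neurons.
rng = random.Random(0)
XOR = [([0, 0], [0]), ([0, 1], [1]), ([1, 0], [1]), ([1, 1], [0])]
w_ih, w_ho = init_weights(2, 3, rng), init_weights(3, 1, rng)
before = sum(pattern_error(x, d, w_ih, w_ho) for x, d in XOR)
train(XOR, w_ih, w_ho)
after = sum(pattern_error(x, d, w_ih, w_ho) for x, d in XOR)
print(before, after)
```

Whether a particular run reaches a low error depends on the initial weights, which is exactly the sensitivity discussed on the later slides about initial weights and local minima.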

Backpropagation Algorithm
• E = error criterion to be minimized
• $w_{ij}$ = weight from the i-th input unit to the j-th output
• The two coefficients in the update are the learning rate and the momentum

Designing Neural Networks
In the conventional design, the user has to specify:
• Number of neurons
• Distribution of layers
• Interconnection between neurons and layers
Topological optimization algorithms (limitations?):
• Network pruning
• Network growing: Tiling (Mezard et al., 1989), Upstart (Frean et al., 1990), Cascade Correlation (Fahlman et al., 1990), Extentron (Baffes et al., 1992)

Choosing Hidden Neurons
• A large number of hidden neurons ensures that the network learns the training data and predicts it correctly, but its performance on new data, i.e., its ability to generalise, is compromised.
• With too few hidden neurons, the network may be unable to learn the relationships in the data, and the error will fail to fall below an acceptable level.
• Selecting the number of hidden neurons is a crucial decision. Often a trial-and-error approach is taken.

Choosing Initial Weights
• The learning algorithm uses a steepest descent technique, which rolls straight downhill in weight space until the first valley is reached.
• This valley may not correspond to zero error for the resulting network.
• This makes the choice of the initial starting point in the multidimensional weight space critical.
• However, there are no recommended rules for this selection other than trying several different starting weight values to see whether the network results improve.

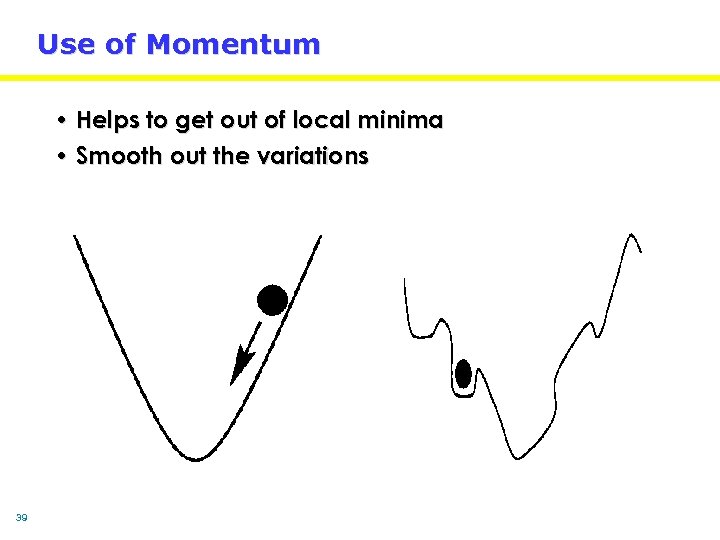

Use of Momentum
• Helps to get out of local minima
• Smooths out the variations

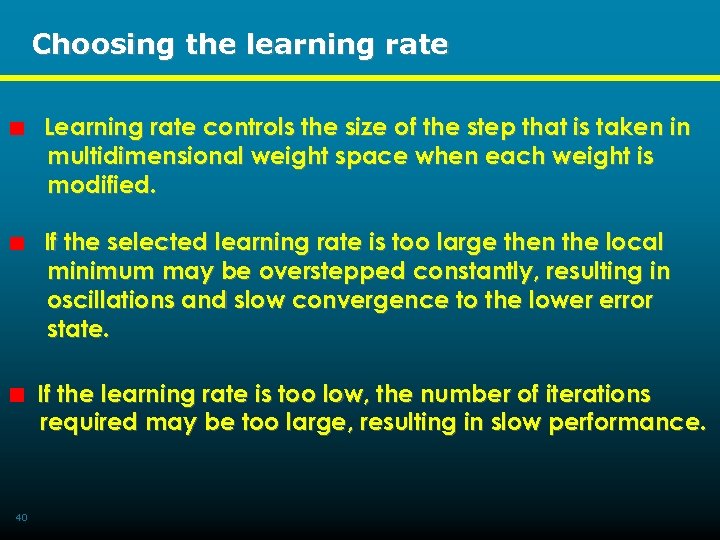
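A common way to write the momentum term is to add a fraction of the previous weight change to the current gradient step. The sketch below assumes that form, $\Delta w(t) = \alpha\,\Delta w(t-1) - \eta\,\partial E/\partial w$; the function names, the toy error $E(w) = w^2$ and the coefficient values are illustrative assumptions.

```python
def momentum_step(w, grad, velocity, lr=0.1, momentum=0.9):
    """One update of a single weight; returns (new_w, new_velocity)."""
    new_velocity = momentum * velocity - lr * grad   # previous change is partly carried over
    return w + new_velocity, new_velocity

def grad_E(w):
    return 2.0 * w          # gradient of the toy error E(w) = w**2

w, v = 1.0, 0.0
w, v = momentum_step(w, grad_E(w), v)   # first step: plain gradient step
w, v = momentum_step(w, grad_E(w), v)   # second step: gradient step plus carried-over change
print(w, v)
```

Because part of the previous change is carried over, a single noisy gradient alters the direction less, which is the smoothing effect named on the slide.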

Choosing the learning rate
• The learning rate controls the size of the step taken in multidimensional weight space when each weight is modified.
• If the selected learning rate is too large, the local minimum may be overstepped constantly, resulting in oscillations and slow convergence to the lower error state.
• If the learning rate is too low, the number of iterations required may be too large, resulting in slow performance.

Effects of Different Learning Rates

Gradient Descent Performance (figure panels): desired behavior, trapped in local minima, undesired behavior
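The three regimes can be reproduced on a one-dimensional toy problem. The sketch assumes the error surface $E(w) = w^2$ and three hand-picked learning rates; the function name `descend` and all numbers are illustrative.

```python
def descend(lr, steps=20, w0=1.0):
    """Gradient descent on E(w) = w**2; returns the list of visited w values."""
    path = [w0]
    for _ in range(steps):
        path.append(path[-1] - lr * 2.0 * path[-1])   # w <- w - lr * dE/dw
    return path

small = descend(0.001)   # too low: barely moves in 20 steps
good = descend(0.1)      # moderate: steady approach to the minimum at w = 0
large = descend(0.95)    # too large: oversteps the minimum on every step

for name, path in (("small", small), ("good", good), ("large", large)):
    print(name, path[-1])
```

With the large rate every step lands on the opposite side of the minimum, so the sign of `w` alternates and the error shrinks only slowly.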

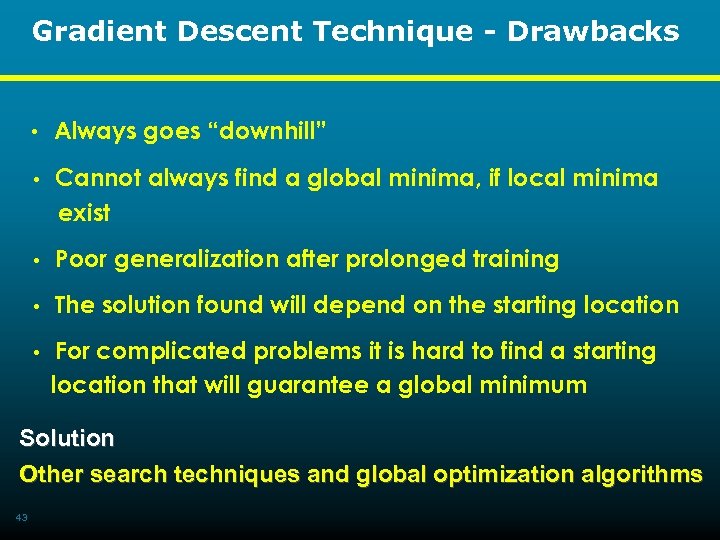

Gradient Descent Technique - Drawbacks
• Always goes “downhill”
• Cannot always find the global minimum if local minima exist
• Poor generalization after prolonged training
• The solution found depends on the starting location
• For complicated problems it is hard to find a starting location that guarantees a global minimum
Solution: other search techniques and global optimization algorithms

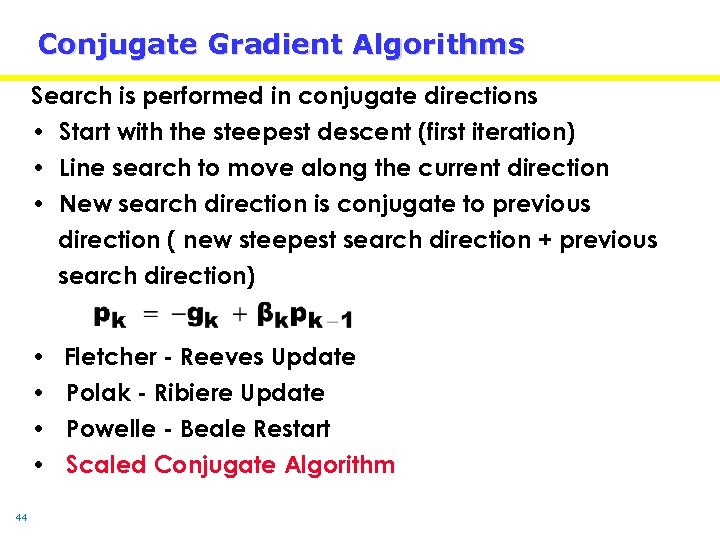

Conjugate Gradient Algorithms
Search is performed in conjugate directions:
• Start with the steepest descent direction (first iteration)
• Line search to move along the current direction
• New search direction is conjugate to the previous direction (new steepest descent direction + previous search direction)
Variants:
• Fletcher-Reeves update
• Polak-Ribiere update
• Powell-Beale restart
• Scaled conjugate gradient algorithm

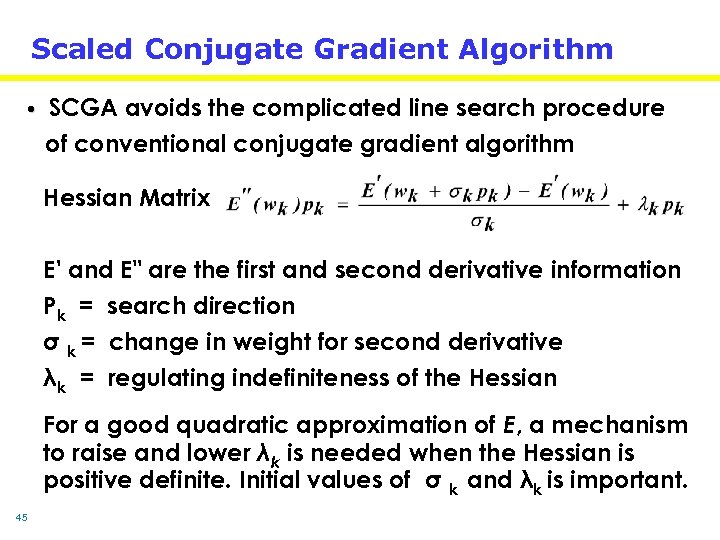
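The three steps (steepest descent first, line search, new conjugate direction) can be illustrated on a quadratic error surface, where the line search has a closed form. This is a sketch under assumptions: the objective $E(x) = \tfrac{1}{2}x^T A x - b^T x$, the Fletcher-Reeves update, the helper names and the sample matrix are all chosen for illustration; a neural network error is not quadratic, so a practical implementation needs a numerical line search and restarts.

```python
def matvec(A, v):
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def conjugate_gradient(A, b, x, iters):
    """Minimise E(x) = 0.5 x^T A x - b^T x for a symmetric positive definite A."""
    g = [gi - bi for gi, bi in zip(matvec(A, x), b)]    # gradient A x - b
    d = [-gi for gi in g]                               # first direction: steepest descent
    for _ in range(iters):
        gg = dot(g, g)
        if gg < 1e-24:                                  # already at the minimum
            break
        Ad = matvec(A, d)
        alpha = -dot(g, d) / dot(d, Ad)                 # exact line search along d
        x = [xi + alpha * di for xi, di in zip(x, d)]
        g_new = [gi + alpha * adi for gi, adi in zip(g, Ad)]
        beta = dot(g_new, g_new) / gg                   # Fletcher-Reeves update
        d = [-gn + beta * di for gn, di in zip(g_new, d)]   # new steepest + previous direction
        g = g_new
    return x

A = [[4.0, 1.0], [1.0, 3.0]]
b = [1.0, 2.0]
x = conjugate_gradient(A, b, [0.0, 0.0], iters=2)
print(x)
```

On an n-dimensional quadratic the search finishes in at most n iterations, which is why two iterations suffice for this 2x2 example.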

Scaled Conjugate Gradient Algorithm
• SCGA avoids the complicated line search procedure of the conventional conjugate gradient algorithm by using an approximation involving the Hessian matrix, where:
• E' and E" are the first and second derivative information
• $P_k$ = search direction
• $\sigma_k$ = change in weight for the second derivative approximation
• $\lambda_k$ = parameter regulating the indefiniteness of the Hessian
• For a good quadratic approximation of E, a mechanism to raise and lower $\lambda_k$ is needed when the Hessian is positive definite.
• The initial values of $\sigma_k$ and $\lambda_k$ are important.

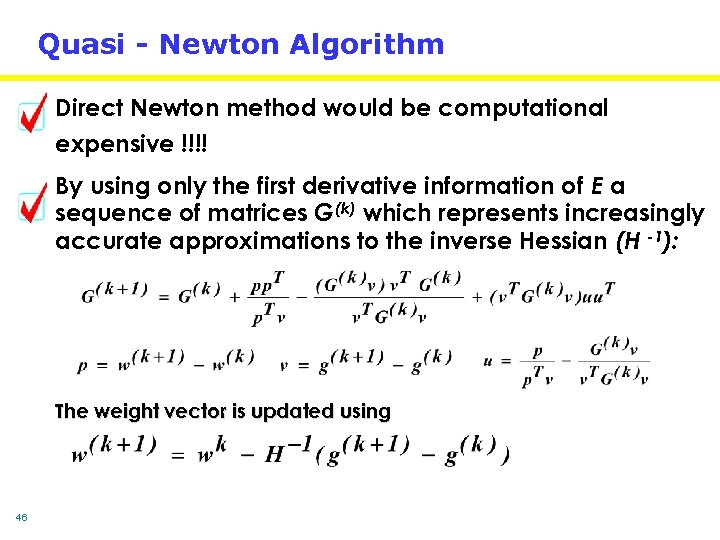

Quasi-Newton Algorithm
• The direct Newton method would be computationally expensive.
• Using only the first derivative information of E, a sequence of matrices $G^{(k)}$ is built that represents increasingly accurate approximations to the inverse Hessian ($H^{-1}$).
• The weight vector is then updated using $G^{(k)}$ in place of $H^{-1}$.
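The update equation itself did not survive extraction. A commonly used form of the quasi-Newton weight update is sketched here as an assumption, with $\alpha^{(k)}$ denoting a step size found by line search (a symbol not present in the slide text):

$$\mathbf{w}^{(k+1)} = \mathbf{w}^{(k)} - \alpha^{(k)}\, G^{(k)}\, \nabla E\big(\mathbf{w}^{(k)}\big), \qquad G^{(k)} \approx H^{-1}$$

With $G^{(k)} = I$ this reduces to plain gradient descent, and with $G^{(k)} = H^{-1}$ and $\alpha^{(k)} = 1$ it becomes the direct Newton step.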

Levenberg-Marquardt Algorithm
• The LM algorithm uses an approximation to the Hessian matrix in a Newton-like update.
• When the scalar μ is zero, this is just Newton's method using the approximate Hessian matrix. When μ is large, it becomes gradient descent with a small step size.
• μ is decreased after each successful step (reduction in the performance function) and is increased only when a tentative step would increase the performance function.
• In this way, the performance function is always reduced at each iteration of the algorithm.
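The behaviour of μ described above can be seen in a small two-parameter sketch. It assumes the usual form of the update, $p \leftarrow p - (J^T J + \mu I)^{-1} J^T e$, where $J$ is the Jacobian of the residuals $e$; that formula, the factor of 10 used to adapt μ, the helper names and the straight-line fitting problem are all assumptions made for illustration.

```python
def solve2(M, r):
    """Solve the 2x2 linear system M p = r by Cramer's rule."""
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    return [(r[0] * M[1][1] - M[0][1] * r[1]) / det,
            (M[0][0] * r[1] - r[0] * M[1][0]) / det]

def levenberg_marquardt(residuals, jacobian, p, mu=0.01, iters=50):
    def sse(q):
        return sum(e * e for e in residuals(q))   # performance function
    for _ in range(iters):
        e, J = residuals(p), jacobian(p)
        JtJ = [[sum(row[a] * row[c] for row in J) for c in range(2)] for a in range(2)]
        Jte = [sum(row[a] * ei for row, ei in zip(J, e)) for a in range(2)]
        M = [[JtJ[0][0] + mu, JtJ[0][1]], [JtJ[1][0], JtJ[1][1] + mu]]   # J^T J + mu I
        step = solve2(M, Jte)
        trial = [p[0] - step[0], p[1] - step[1]]
        if sse(trial) < sse(p):
            p, mu = trial, mu / 10.0     # successful step: behave more like Newton
        else:
            mu *= 10.0                   # rejected step: behave more like gradient descent
    return p

# Hypothetical data generated from y = 2 t + 1; parameters p = [slope, intercept].
ts = [0.0, 1.0, 2.0, 3.0]
ys = [2.0 * t + 1.0 for t in ts]
residuals = lambda p: [p[0] * t + p[1] - y for t, y in zip(ts, ys)]
jacobian = lambda p: [[t, 1.0] for t in ts]
p = levenberg_marquardt(residuals, jacobian, [0.0, 0.0])
print(p)
```

Only accepted steps change the parameters, so the performance function never increases from one iteration to the next.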

Limitations of Conventional Design of Neural Nets
• What is the optimal architecture for a given problem (number of neurons and number of hidden layers)?
• What activation function should one choose?
• What is the optimal learning algorithm, and what are its parameters?
To demonstrate the difficulties in designing “optimal” neural networks, we consider three well-known time series benchmark problems.

Chaotic Time Series for Performance Analysis of Learning Algorithms
• Waste Water Flow Prediction: the data set is represented as [f(t), f(t-1), a(t), b(t), f(t+1)], where f(t), f(t-1) and f(t+1) are the water flows at time t, t-1 and t+1 (hours) respectively, and a(t) and b(t) are the moving averages for 12 hours and 24 hours.
• Mackey-Glass Chaotic Time Series: the values x(t-18), x(t-12), x(t-6) and x(t) are used to predict x(t+6).
• Gas Furnace Time Series Data: this time series was used to predict the CO2 concentration y(t+1). The data is represented as [u(t), y(t+1)].
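Turning a raw series into input-target patterns of the Mackey-Glass form [x(t-18), x(t-12), x(t-6), x(t)] → x(t+6) is a simple windowing step. The sketch below assumes the series is already available as a Python list; the function name `make_patterns` and the integer ramp used for the demonstration are illustrative only.

```python
def make_patterns(series, lags=(18, 12, 6, 0), horizon=6):
    """Build (inputs, target) pairs: inputs are x(t - lag) for each lag, target is x(t + horizon)."""
    max_lag = max(lags)
    patterns = []
    for t in range(max_lag, len(series) - horizon):
        inputs = [series[t - lag] for lag in lags]
        patterns.append((inputs, series[t + horizon]))
    return patterns

# A ramp makes the indexing easy to check by eye: the value at index i is i.
series = list(range(100))
patterns = make_patterns(series)
print(len(patterns), patterns[0], patterns[-1])
```

Other layouts follow by changing `lags` and `horizon`, for example `lags=(1, 0), horizon=1` for a pattern built from the two most recent values.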

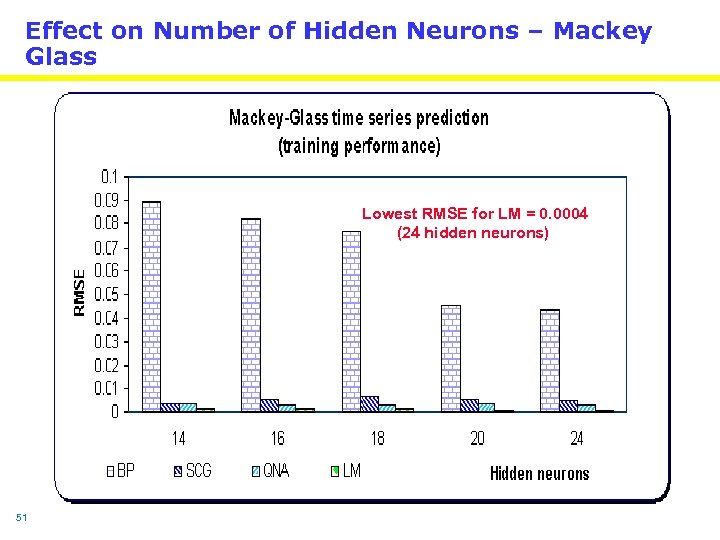

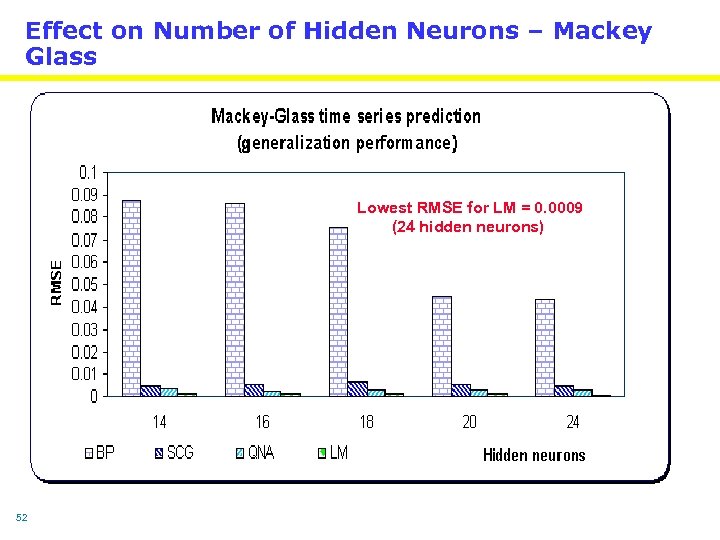

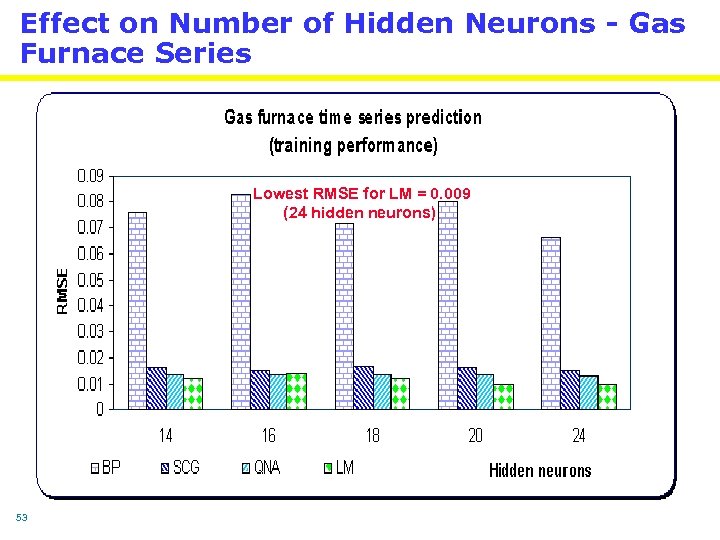

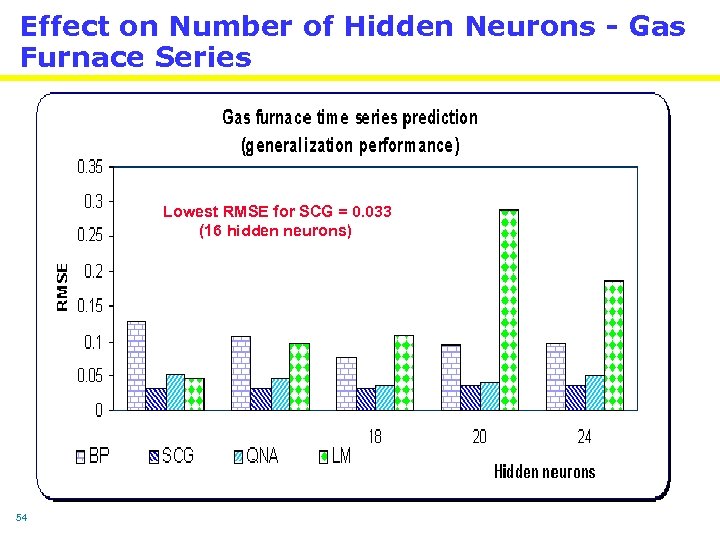

Experimentation setup
• Three benchmark problems: Mackey-Glass, gas furnace and waste water time series
• Four learning algorithms: backpropagation (BP), scaled conjugate gradient algorithm (SCG), quasi-Newton algorithm (QNA) and Levenberg-Marquardt algorithm (LM)
• Training terminated after 2500 epochs
• Number of hidden neurons varied: 14, 16, 18, 20 and 24
• Activation functions of the hidden neurons varied
• Computational complexity of the different algorithms analyzed

Effect on Number of Hidden Neurons – Mackey Glass
Lowest RMSE for LM = 0.0004 (24 hidden neurons)

Effect on Number of Hidden Neurons – Mackey Glass
Lowest RMSE for LM = 0.0009 (24 hidden neurons)

Effect on Number of Hidden Neurons - Gas Furnace Series
Lowest RMSE for LM = 0.009 (24 hidden neurons)

Effect on Number of Hidden Neurons - Gas Furnace Series
Lowest RMSE for SCG = 0.033 (16 hidden neurons)

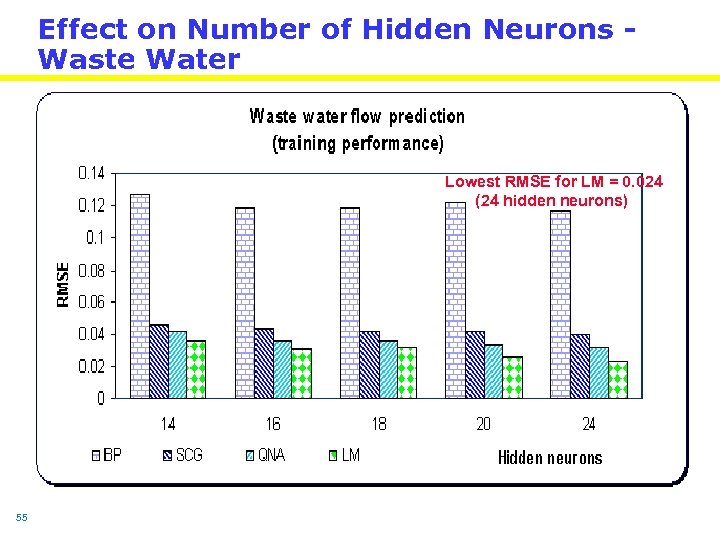

Effect on Number of Hidden Neurons Waste Water Lowest RMSE for LM = 0. 024 (24 hidden neurons) 55

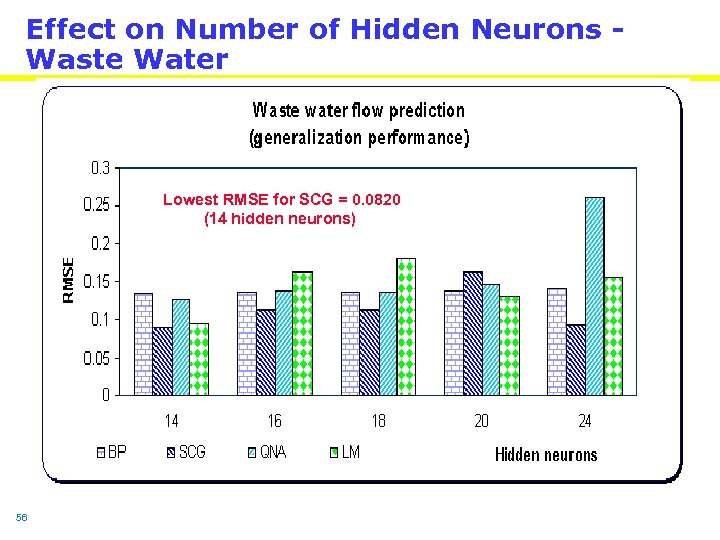

Effect on Number of Hidden Neurons Waste Water Lowest RMSE for SCG = 0. 0820 (14 hidden neurons) 56

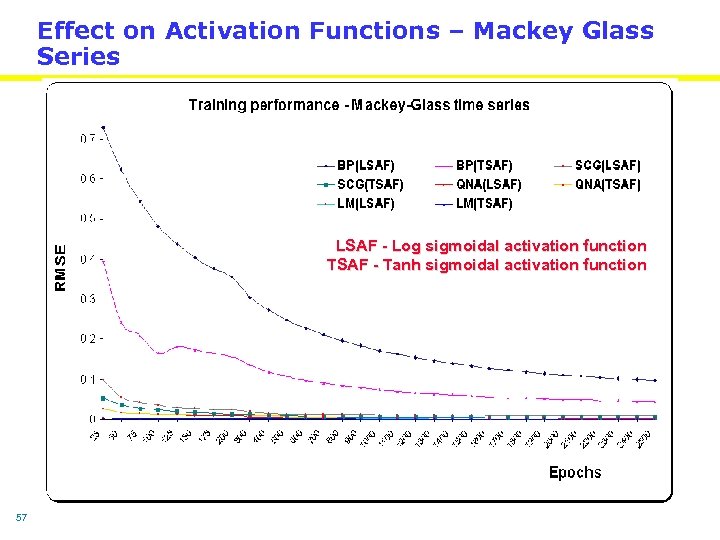

Effect on Activation Functions – Mackey Glass Series LSAF - Log sigmoidal activation function TSAF - Tanh sigmoidal activation function 57

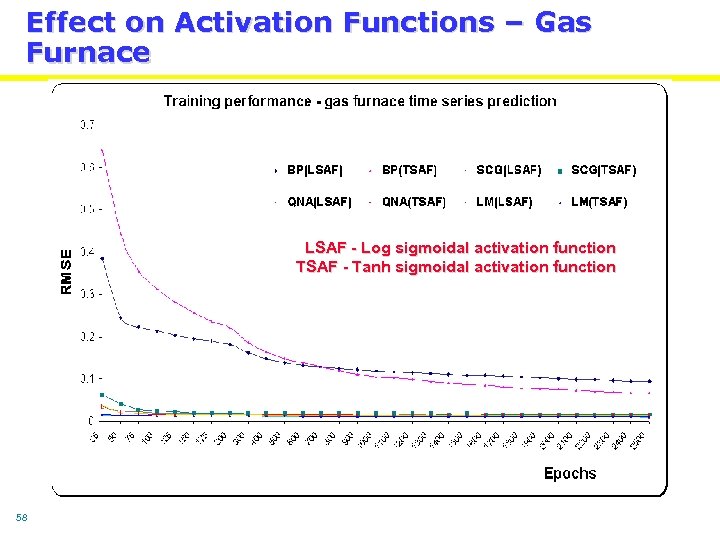

Effect on Activation Functions – Gas Furnace LSAF - Log sigmoidal activation function TSAF - Tanh sigmoidal activation function 58

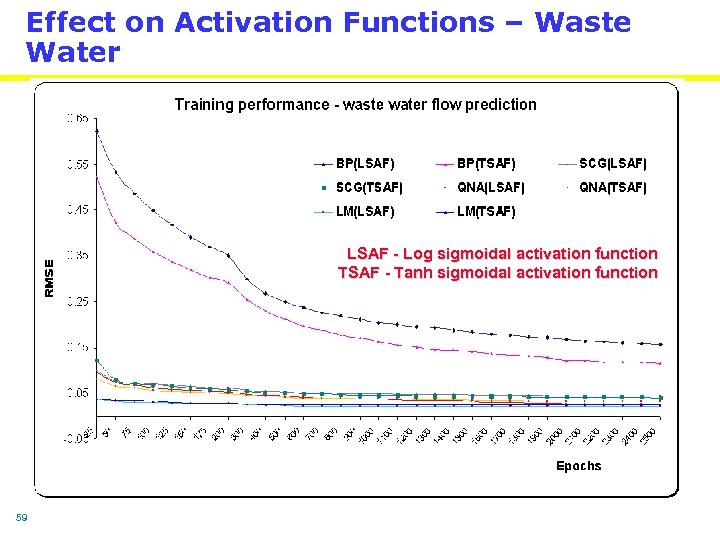

Effect on Activation Functions – Waste Water LSAF - Log sigmoidal activation function TSAF - Tanh sigmoidal activation function 59

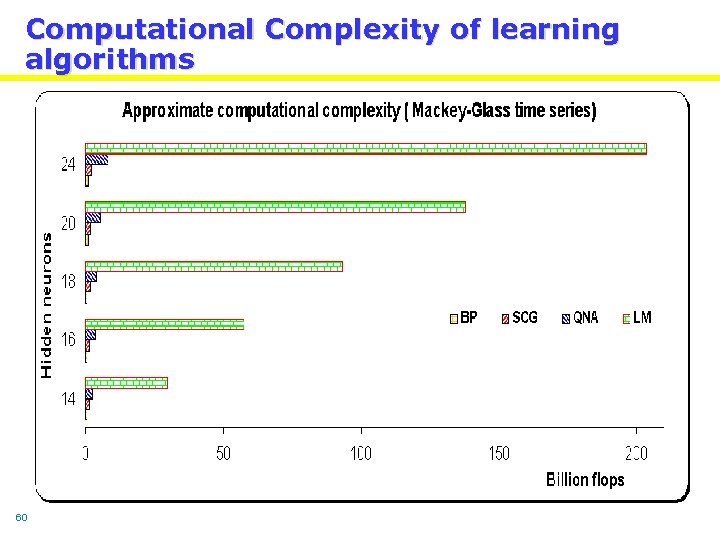

Computational Complexity of learning algorithms 60

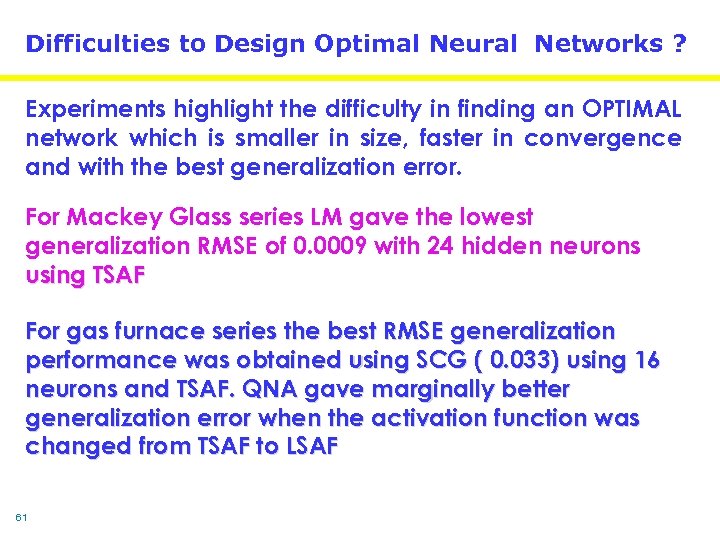

Difficulties in Designing Optimal Neural Networks
• The experiments highlight the difficulty of finding an OPTIMAL network that is smaller in size, faster in convergence and has the best generalization error.
• For the Mackey-Glass series, LM gave the lowest generalization RMSE of 0.0009 with 24 hidden neurons using TSAF.
• For the gas furnace series, the best generalization RMSE was obtained using SCG (0.033) with 16 neurons and TSAF. QNA gave a marginally better generalization error when the activation function was changed from TSAF to LSAF.

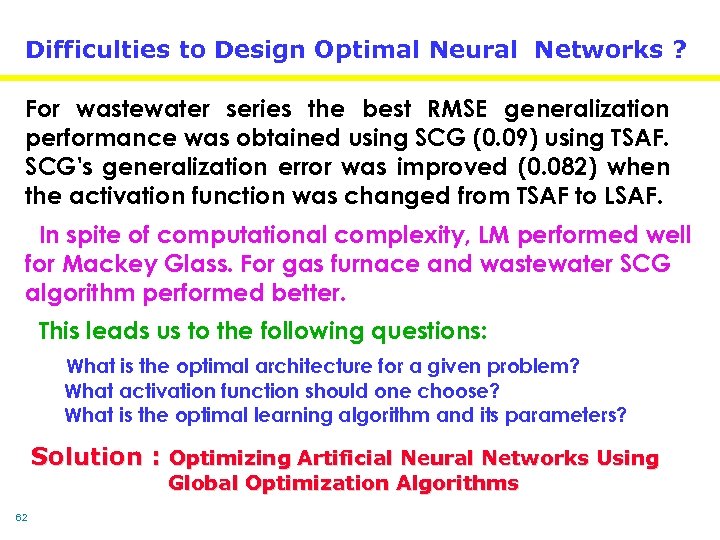

Difficulties in Designing Optimal Neural Networks
• For the wastewater series, the best generalization RMSE was obtained using SCG (0.09) with TSAF. SCG's generalization error improved to 0.082 when the activation function was changed from TSAF to LSAF.
• In spite of its computational complexity, LM performed well for Mackey-Glass. For gas furnace and wastewater, the SCG algorithm performed better.
This leads us to the following questions:
• What is the optimal architecture for a given problem?
• What activation function should one choose?
• What is the optimal learning algorithm, and what are its parameters?
Solution: optimizing artificial neural networks using global optimization algorithms

Global Optimization Algorithms
• No need for functional derivative information
• Repeated evaluations of objective functions
• Intuitive guidelines (simplicity)
• Randomness
• Analytic opacity
• Self optimization
• Ability to handle complicated tasks
• Broad applicability
Disadvantage: computationally expensive (use parallel engines)

Popular Global Optimization Algorithms
• Genetic algorithms
• Simulated annealing
• Tabu search
• Random search
• Downhill simplex search
• GRASP
• Clustering methods
• Many others

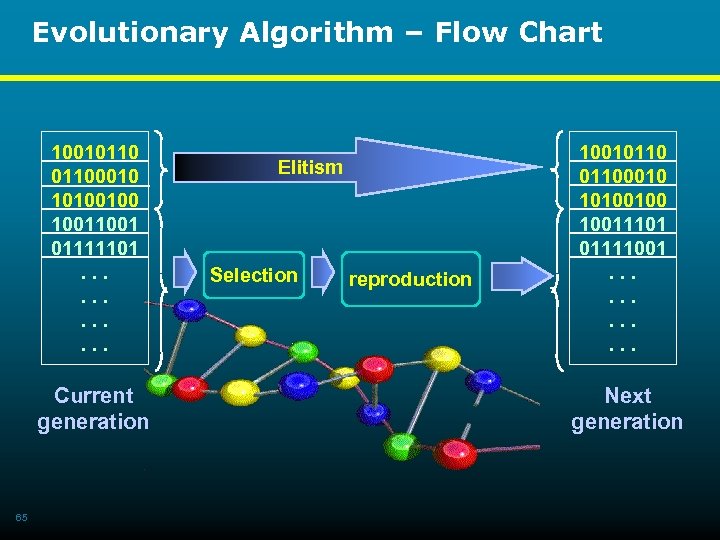

Evolutionary Algorithm – Flow Chart (figure): a current generation of bit strings (e.g. 100101100010, 10100100, ...) passes through elitism, selection and reproduction to form the next generation of bit strings.
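The loop in the flow chart (current generation, elitism, selection, reproduction, next generation) can be sketched on bit strings. Everything below the level of that loop is an assumption: binary tournament selection, one-point crossover, bit-flip mutation, the parameter values, and the use of the number of 1 bits as a stand-in fitness function.

```python
import random

def evolve(fitness, n_bits=12, pop_size=20, generations=30, elite=2,
           p_mut=0.05, seed=0):
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    best_per_generation = []
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        best_per_generation.append(fitness(pop[0]))
        next_pop = [ind[:] for ind in pop[:elite]]          # elitism: best strings survive unchanged
        while len(next_pop) < pop_size:
            p1 = max(rng.sample(pop, 2), key=fitness)       # selection: binary tournament
            p2 = max(rng.sample(pop, 2), key=fitness)
            cut = rng.randint(1, n_bits - 1)                # reproduction: one-point crossover
            child = p1[:cut] + p2[cut:]
            child = [1 - g if rng.random() < p_mut else g for g in child]   # bit-flip mutation
            next_pop.append(child)
        pop = next_pop                                      # next generation
    pop.sort(key=fitness, reverse=True)
    best_per_generation.append(fitness(pop[0]))
    return pop[0], best_per_generation

# Stand-in objective: count of 1 bits in the string.
best, history = evolve(sum)
print(best, history[0], history[-1])
```

Because the elite strings are copied without modification, the best fitness recorded per generation can never decrease.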

Evolutionary Artificial Neural Networks 66

Evolutionary Neural Networks – Design Strategy • Complete adaptation is achieved through three levels of evolution, i. e. , the evolution of connection weights, architectures and learning rules (algorithms), which progress on different time scales. 67

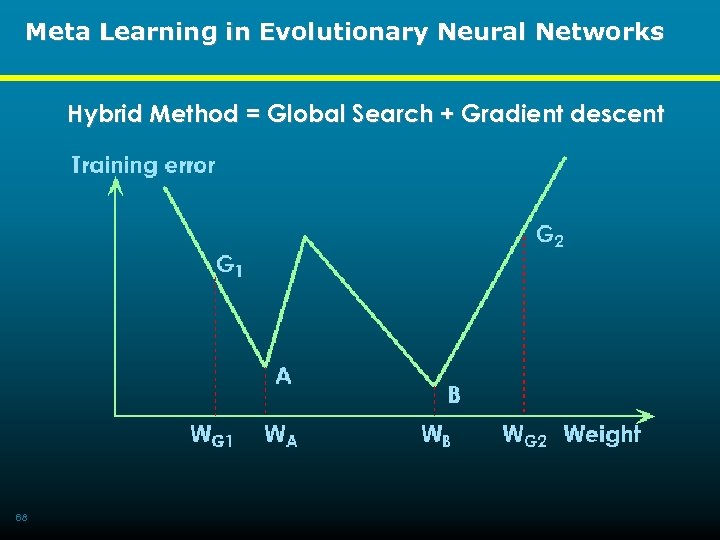

Meta Learning in Evolutionary Neural Networks Hybrid Method = Global Search + Gradient descent 68

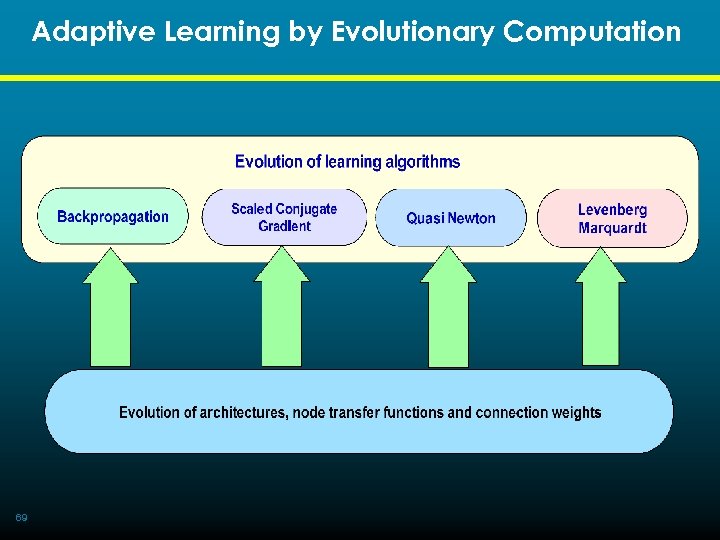

Adaptive Learning by Evolutionary Computation 69

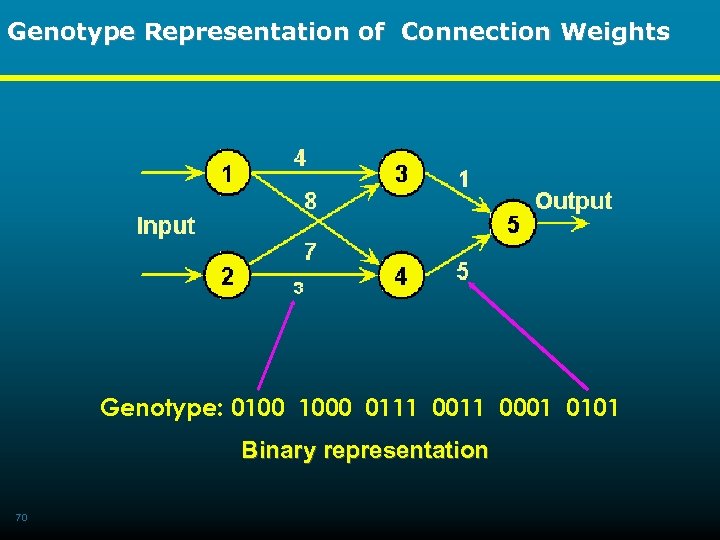

Genotype Representation of Connection Weights
Genotype: 0100 1000 0111 0001 0101 (binary representation)

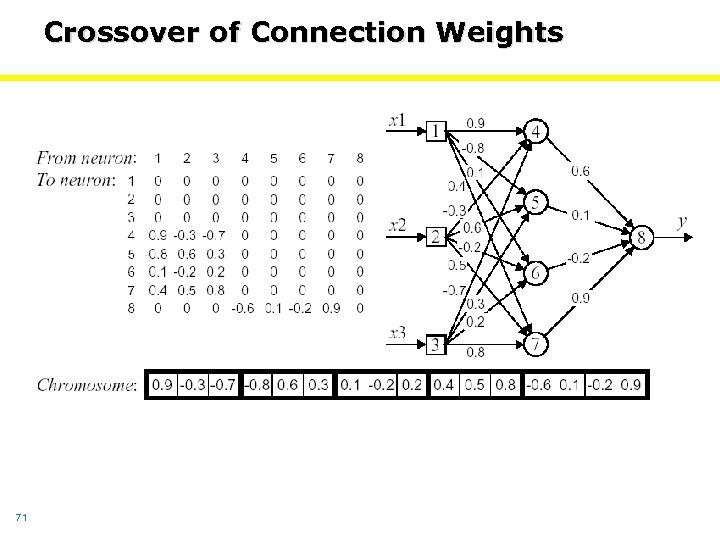

Crossover of Connection Weights 71

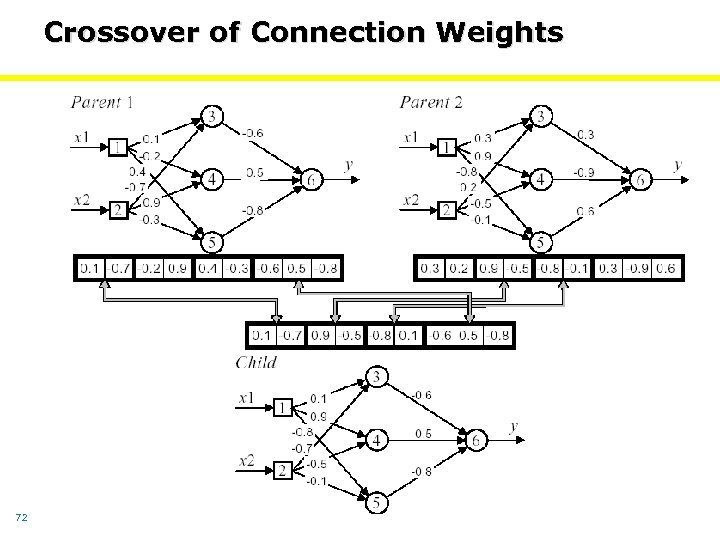

Crossover of Connection Weights 72

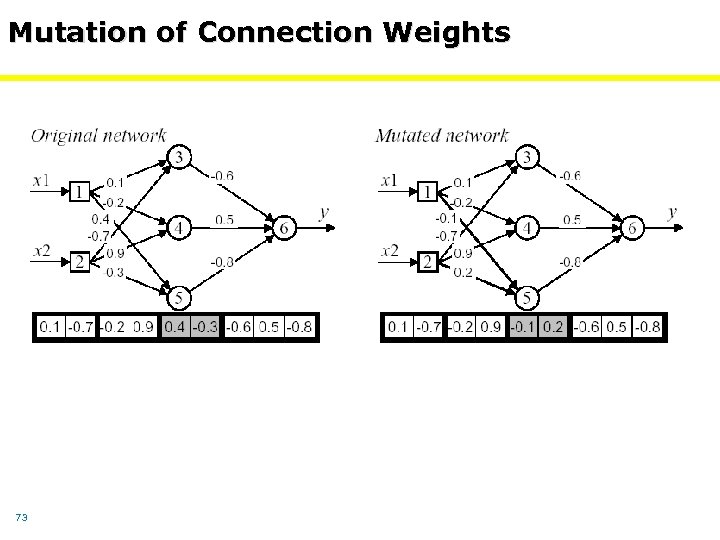

Mutation of Connection Weights 73

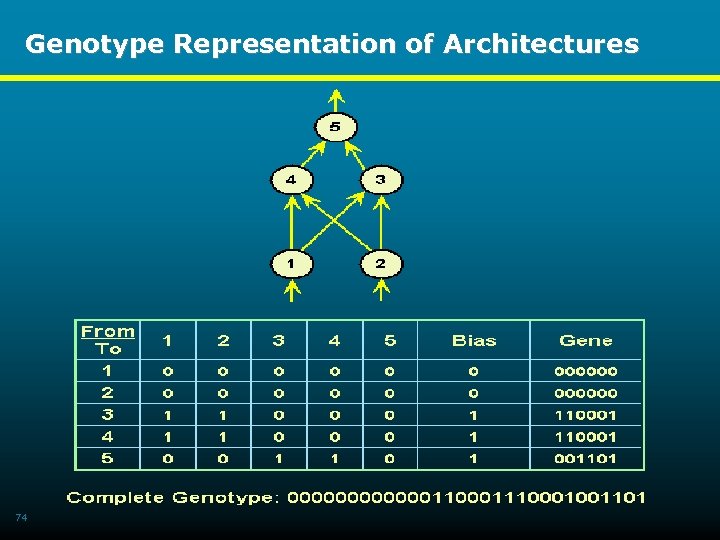
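The genotype shown earlier, together with crossover and mutation, can be sketched with plain strings. The assumptions are that each 4-bit group encodes one connection weight as an unsigned integer, that crossover is one-point and that mutation flips individual bits; how the integers map to real-valued weights is shown only in the slide figures and is not reproduced here. All function names are illustrative.

```python
import random

def decode(genotype):
    """Decode space-separated bit groups into one integer per connection weight."""
    return [int(group, 2) for group in genotype.split()]

def crossover(a, b, point):
    """One-point crossover of two equal-length bit strings."""
    return a[:point] + b[point:], b[:point] + a[point:]

def mutate(bits, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return "".join(("1" if c == "0" else "0") if rng.random() < rate else c
                   for c in bits)

weights = decode("0100 1000 0111 0001 0101")
child1, child2 = crossover("0000", "1111", 1)
mutant = mutate("0100100001110001", 0.1, random.Random(0))
print(weights, child1, child2, mutant)
```

Because the operators act on the bit string rather than on the network, the same code can be reused for any weight layout that is flattened into a genotype.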

Genotype Representation of Architectures 74

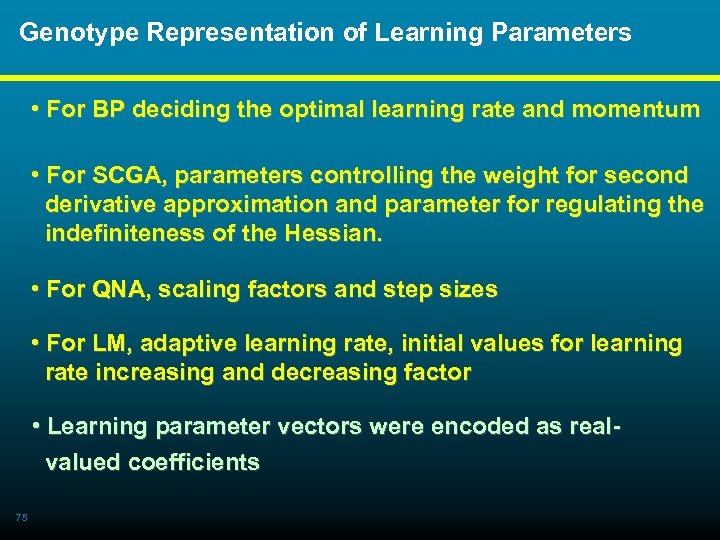

Genotype Representation of Learning Parameters • For BP deciding the optimal learning rate and momentum • For SCGA, parameters controlling the weight for second derivative approximation and parameter for regulating the indefiniteness of the Hessian. • For QNA, scaling factors and step sizes • For LM, adaptive learning rate, initial values for learning rate increasing and decreasing factor • Learning parameter vectors were encoded as real valued coefficients 75

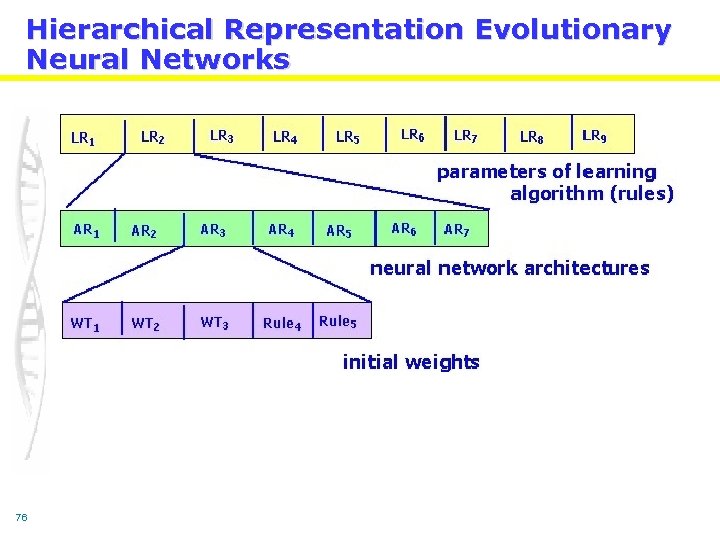

Hierarchical Representation Evolutionary Neural Networks 76

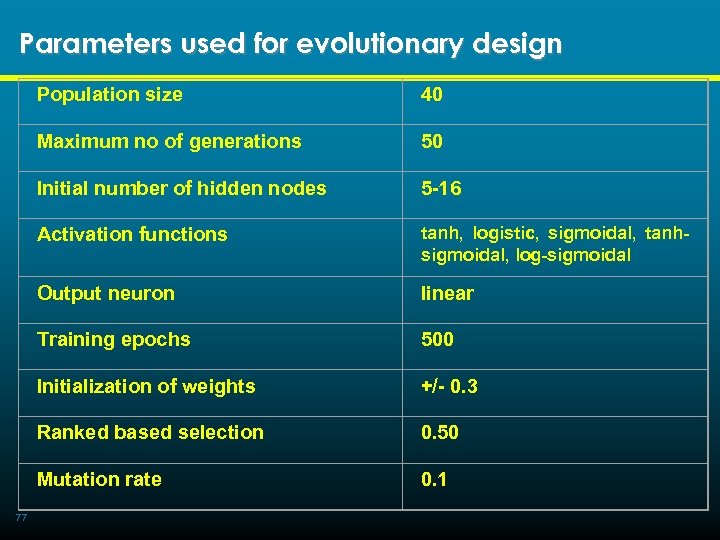

Parameters used for evolutionary design

| Parameter | Value |
|---|---|
| Population size | 40 |
| Maximum no. of generations | 50 |
| Initial number of hidden nodes | 5-16 |
| Activation functions | tanh, logistic, sigmoidal, tanh-sigmoidal, log-sigmoidal |
| Output neuron | linear |
| Training epochs | 500 |
| Initialization of weights | +/- 0.3 |
| Rank-based selection | 0.50 |
| Mutation rate | 0.1 |

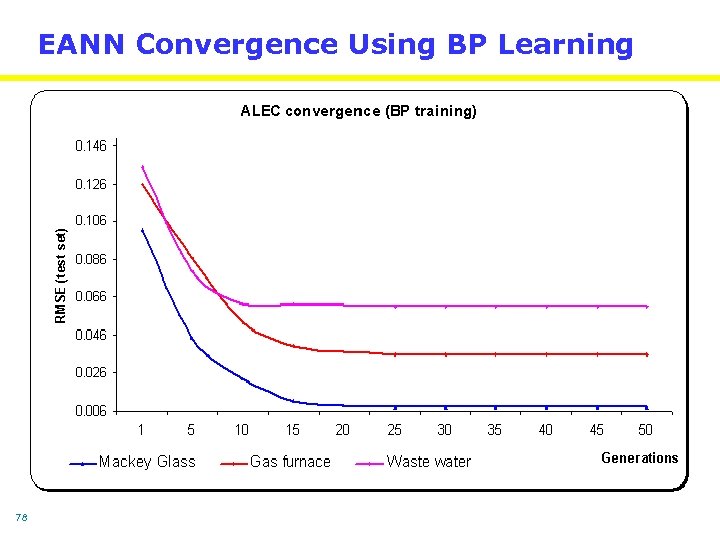

EANN Convergence Using BP Learning 78

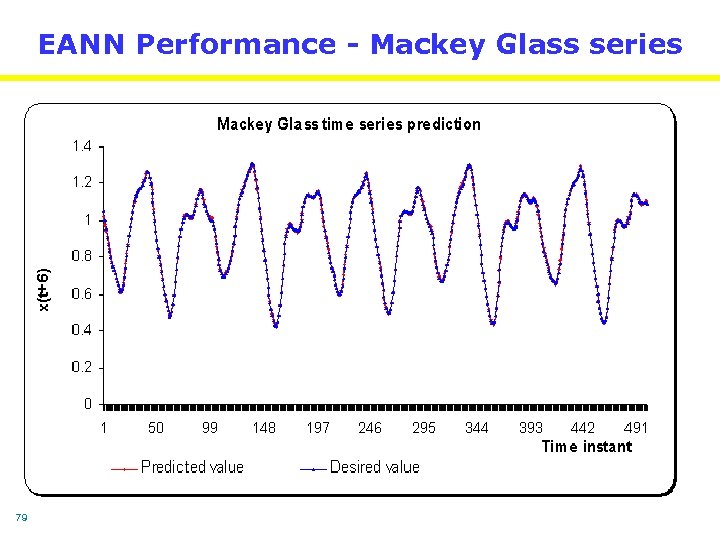

EANN Performance - Mackey Glass series 79

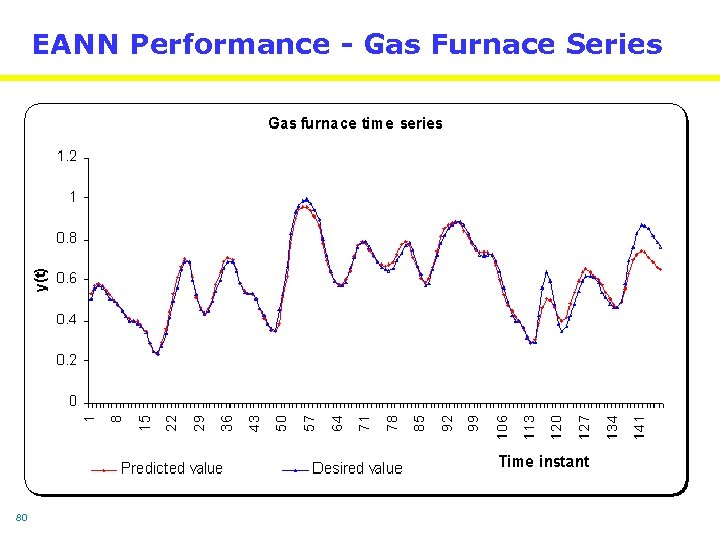

EANN Performance - Gas Furnace Series 80

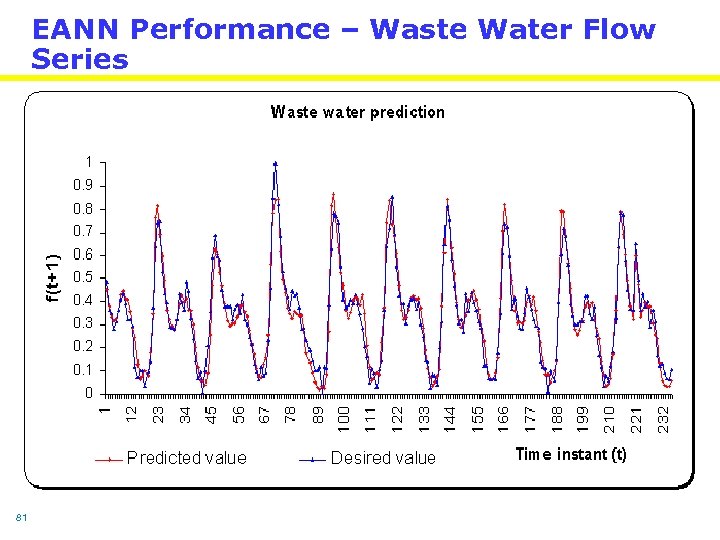

EANN Performance – Waste Water Flow Series 81

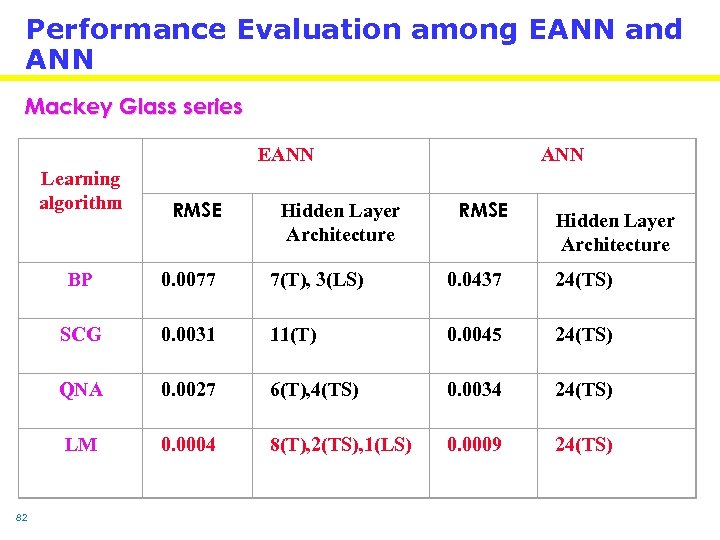

Performance Evaluation among EANN and ANN: Mackey Glass series

| Learning algorithm | EANN RMSE | EANN hidden layer architecture | ANN RMSE | ANN hidden layer architecture |
|---|---|---|---|---|
| BP | 0.0077 | 7(T), 3(LS) | 0.0437 | 24(TS) |
| SCG | 0.0031 | 11(T) | 0.0045 | 24(TS) |
| QNA | 0.0027 | 6(T), 4(TS) | 0.0034 | 24(TS) |
| LM | 0.0004 | 8(T), 2(TS), 1(LS) | 0.0009 | 24(TS) |

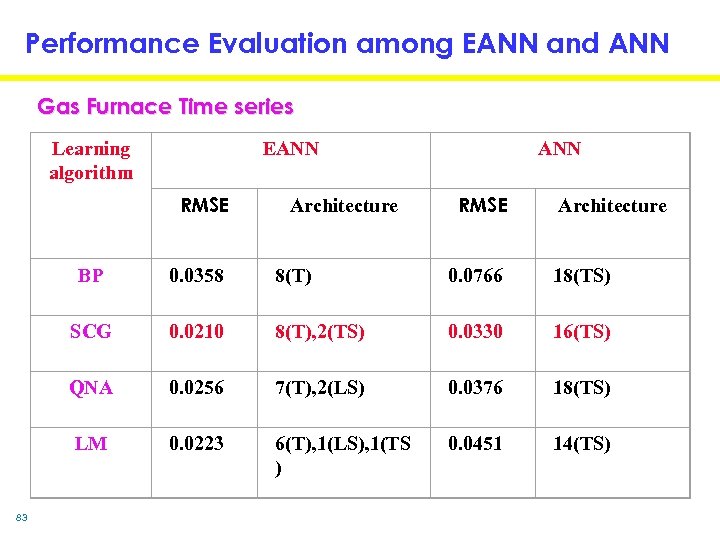

Performance Evaluation among EANN and ANN: Gas Furnace Time series

| Learning algorithm | EANN RMSE | EANN architecture | ANN RMSE | ANN architecture |
|---|---|---|---|---|
| BP | 0.0358 | 8(T) | 0.0766 | 18(TS) |
| SCG | 0.0210 | 8(T), 2(TS) | 0.0330 | 16(TS) |
| QNA | 0.0256 | 7(T), 2(LS) | 0.0376 | 18(TS) |
| LM | 0.0223 | 6(T), 1(LS), 1(TS) | 0.0451 | 14(TS) |

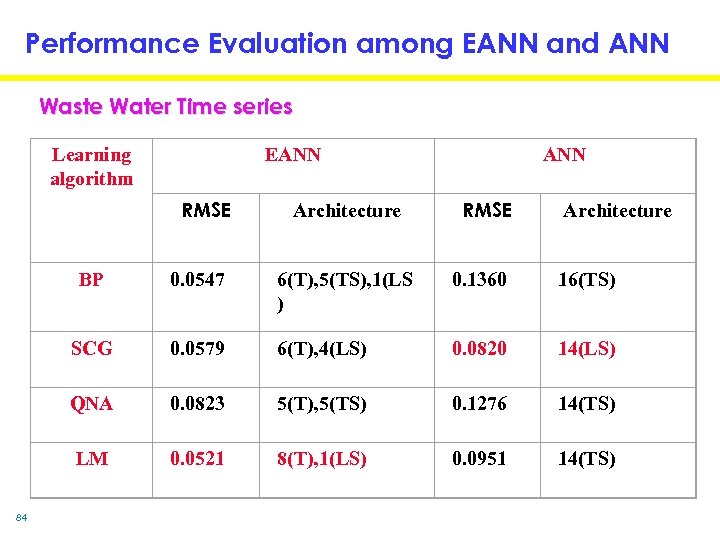

Performance Evaluation among EANN and ANN: Waste Water Time series

| Learning algorithm | EANN RMSE | EANN architecture | ANN RMSE | ANN architecture |
|---|---|---|---|---|
| BP | 0.0547 | 6(T), 5(TS), 1(LS) | 0.1360 | 16(TS) |
| SCG | 0.0579 | 6(T), 4(LS) | 0.0820 | 14(LS) |
| QNA | 0.0823 | 5(T), 5(TS) | 0.1276 | 14(TS) |
| LM | 0.0521 | 8(T), 1(LS) | 0.0951 | 14(TS) |

Efficiency of Evolutionary Neural Nets
• Designing the architecture and choosing the correct learning algorithm are tedious tasks in designing an optimal artificial neural network.
• For critical applications and H/W implementations, optimal design often becomes a necessity.
Empirical results show the efficiency of the EANN procedure:
• Average number of hidden neurons reduced by more than 45%
• Average RMSE on test set down by 65%
Disadvantages of EANNs:
• Computational complexity
• Success depends on genotype representation
Future work:
• More learning algorithms, evaluation of full population information (final generation)

Advantages of Neural Networks
• Universal approximators
• Capturing associations or discovering regularities within a set of patterns
• Can handle a large number of variables and a huge volume of data
• Useful when conventional approaches can’t be used to model relationships that are vaguely understood

Evolutionary Fuzzy Systems

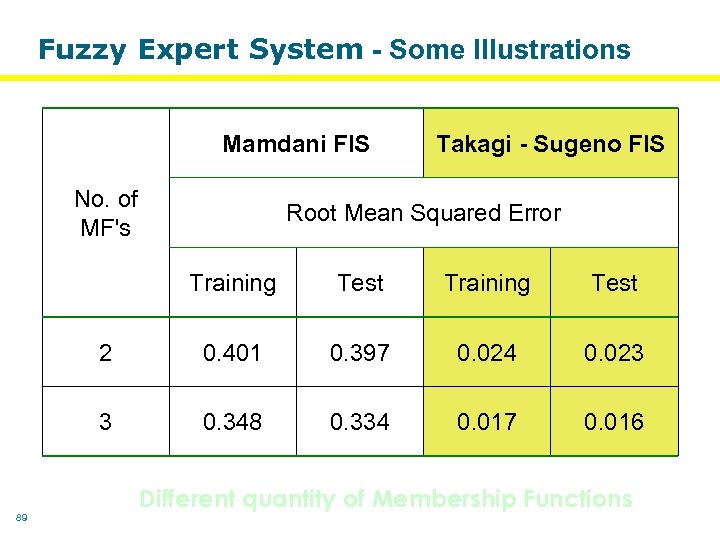

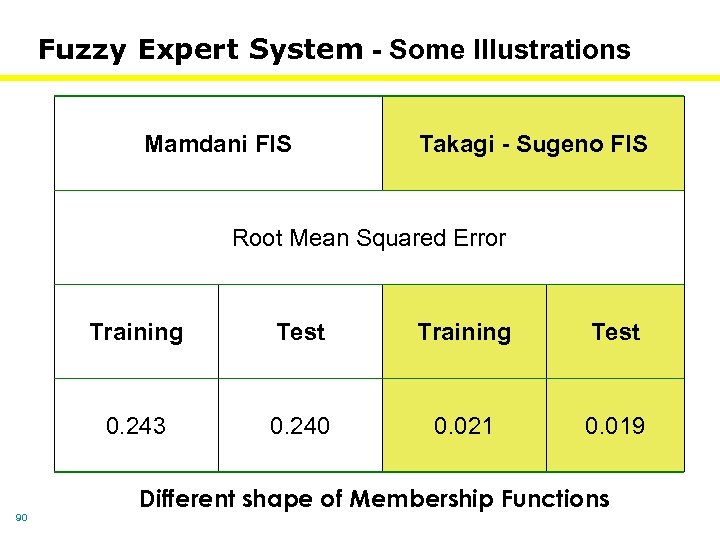

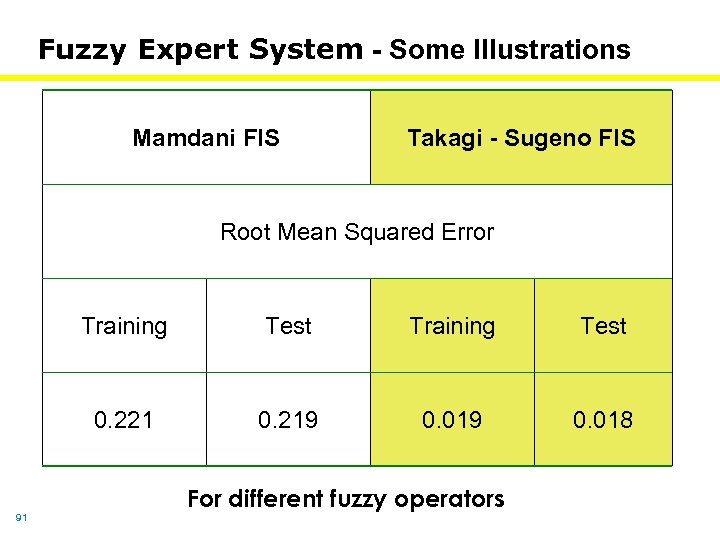

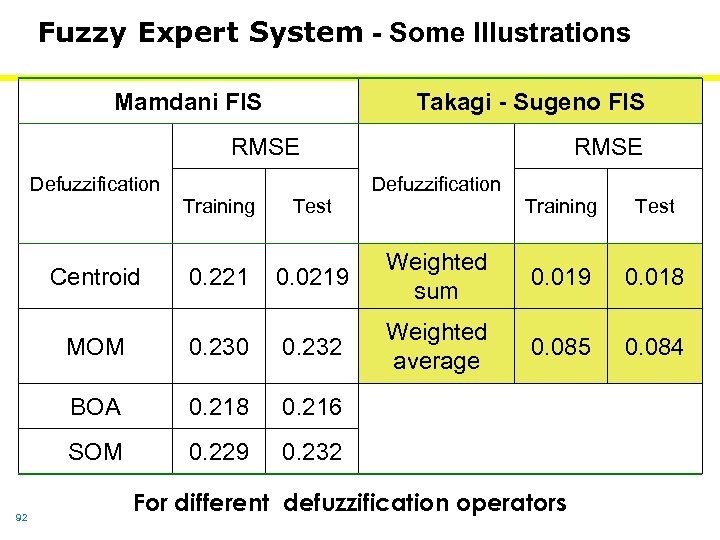

Fuzzy Expert System
• A fuzzy expert system to forecast the reactive power (P) at time t+1, given the load current (I) and voltage (V) at time t.
• The experiment consists of two stages: developing the fuzzy expert system, and evaluating its performance using the test data.
• The model has two input variables (V and I) and one output variable (P).
• Training and testing data sets were extracted randomly from the master dataset: 60% of the data was used for training and the remaining 40% for testing.

Fuzzy Expert System - Some Illustrations
Root mean squared error for different quantities of membership functions:

| No. of MFs | Mamdani FIS (training) | Mamdani FIS (test) | Takagi-Sugeno FIS (training) | Takagi-Sugeno FIS (test) |
|---|---|---|---|---|
| 2 | 0.401 | 0.397 | 0.024 | 0.023 |
| 3 | 0.348 | 0.334 | 0.017 | 0.016 |

Fuzzy Expert System - Some Illustrations
Root mean squared error for a different shape of membership functions:

| Mamdani FIS (training) | Mamdani FIS (test) | Takagi-Sugeno FIS (training) | Takagi-Sugeno FIS (test) |
|---|---|---|---|
| 0.243 | 0.240 | 0.021 | 0.019 |

Fuzzy Expert System - Some Illustrations
For different fuzzy operators. Mamdani FIS and Takagi-Sugeno FIS, root mean squared error (training, test): 0.221, 0.219, 0.018

Fuzzy Expert System - Some Illustrations
For different defuzzification operators. Mamdani FIS and Takagi-Sugeno FIS, RMSE (training, test) by defuzzification method: Centroid 0.0219 MOM 0.230 0.232 Weighted average BOA 0.218 0.229 0.018 0.085 0.084 0.216 SOM 0.221 Weighted sum 0.232

Summary of Fuzzy Modeling
Surface structure
• Relevant input and output variables
• Relevant fuzzy inference system
• Number of linguistic terms associated with each input / output variable
• If-then rules
Deep structure
• Type of membership functions
• Building up the knowledge base
• Fine tune parameters of MFs using regression and optimization techniques

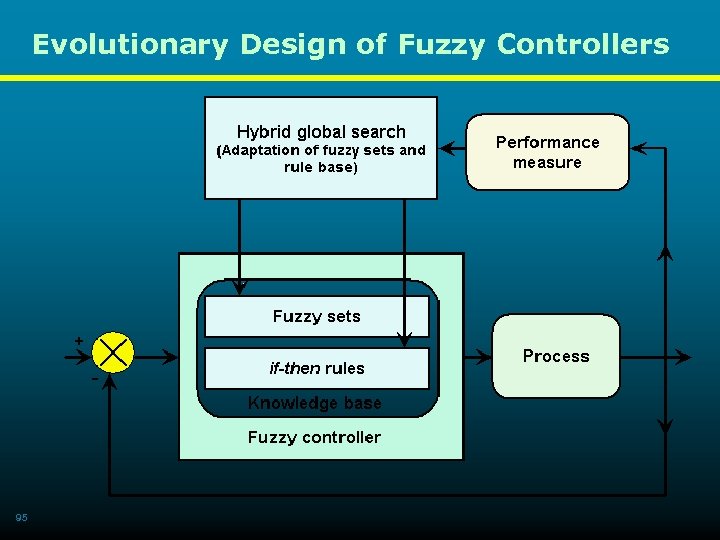

Evolutionary Design of Fuzzy Controllers
Disadvantage of fuzzy controllers: expert knowledge is required to set up a system
• Input-output variables
• Type (shape) of membership functions (MFs)
• Quantity of MFs assigned to each variable
• Formulation of the rule base
Advantages of evolutionary design
• Minimizes expert (human) input
• Optimization of membership functions (type and quantity)
• Optimization of the rule base
• Optimization / fine tuning of pre-existing fuzzy systems

Evolutionary Design of Fuzzy Controllers

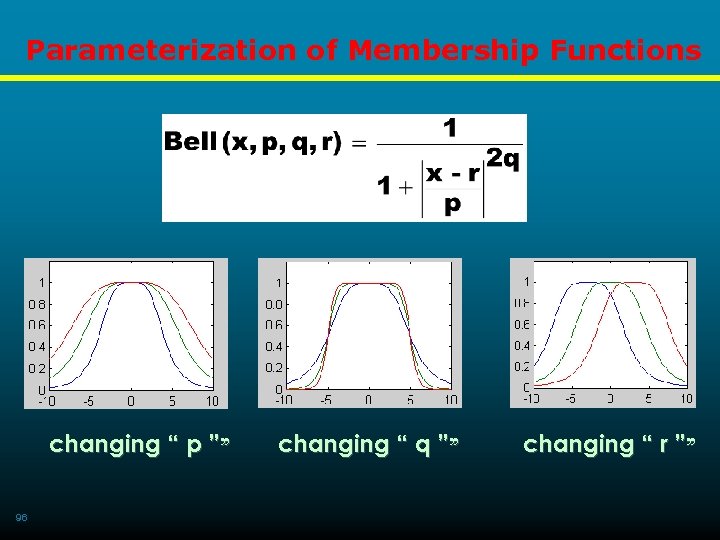

Parameterization of Membership Functions (figure panels): changing “p”, changing “q”, changing “r”

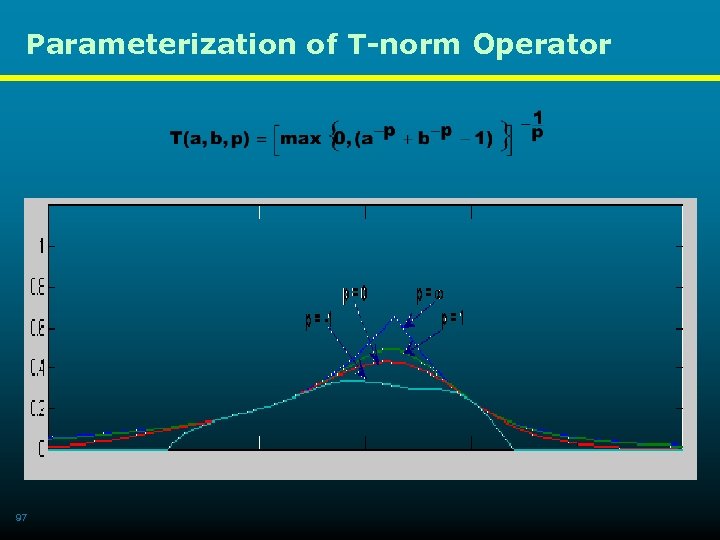

Parameterization of T-norm Operator

Parameterization of T-conorm Operator

Learning with Evolutionary Fuzzy Systems
• Evolutionary algorithms are not learning algorithms. They offer a powerful and domain-independent search method for a variety of learning tasks.
• Three popular approaches in which evolutionary algorithms have been applied to the learning process of fuzzy systems:
• Michigan approach
• Pittsburgh approach
• Iterative rule learning
• A description of these techniques follows.

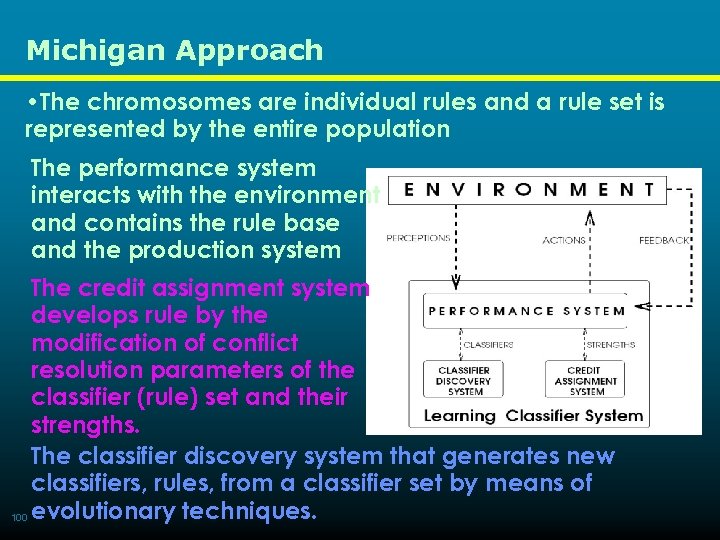

Michigan Approach
• The chromosomes are individual rules, and a rule set is represented by the entire population.
• The performance system interacts with the environment and contains the rule base and the production system.
• The credit assignment system develops rules by modifying the conflict-resolution parameters of the classifier (rule) set and their strengths.
• The classifier discovery system generates new classifiers (rules) from a classifier set by means of evolutionary techniques.

Pittsburgh Approach
• The chromosome encodes a whole rule base or knowledge base.
• Crossover helps to provide new combinations of rules.
• Mutation provides new rules.
• Variable-length rule bases are used in some cases, with special genetic operators for dealing with these variable-length and position-independent genomes.
• While the Michigan approach might be useful for online learning, the Pittsburgh approach seems to be better suited for batch-mode learning.

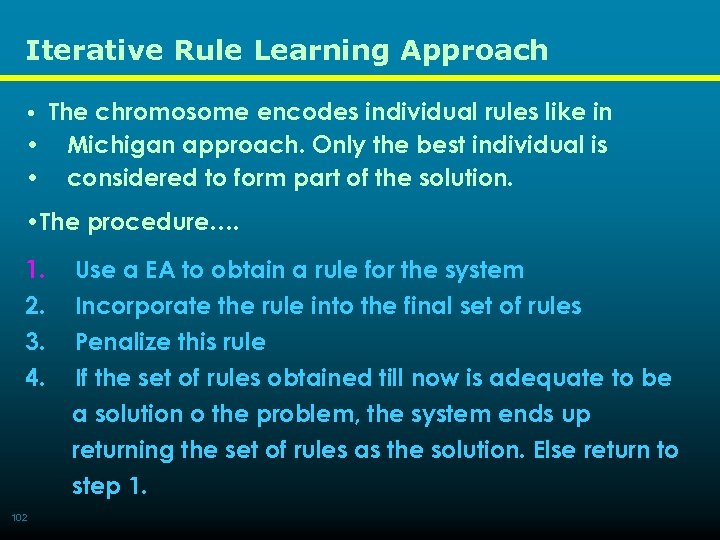

Iterative Rule Learning Approach
• The chromosome encodes individual rules, as in the Michigan approach. Only the best individual is considered to form part of the solution.
• The procedure:
1. Use an EA to obtain a rule for the system.
2. Incorporate the rule into the final set of rules.
3. Penalize this rule.
4. If the set of rules obtained so far is adequate to be a solution to the problem, the system returns the set of rules as the solution. Otherwise, return to step 1.

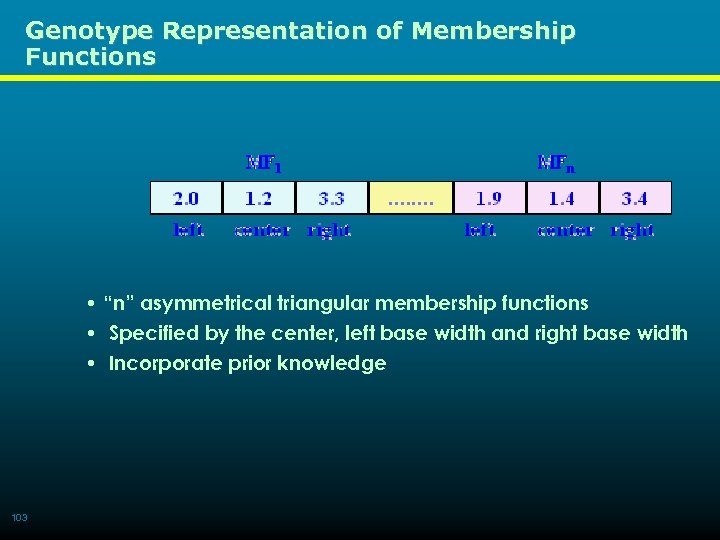
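The four-step procedure can be traced on a toy covering problem. This is only a sketch with several stand-ins, each an assumption: a "rule" is an integer interval, the EA in step 1 is replaced by an exhaustive search so the run is deterministic, "penalize this rule" is implemented by removing the examples the rule already covers, and "adequate" means every example is covered.

```python
def best_rule(uncovered, domain_max=19, max_width=5):
    """Stand-in for the EA: pick the interval that covers the most uncovered examples."""
    candidates = [(lo, hi) for lo in range(domain_max + 1)
                  for hi in range(lo, min(lo + max_width - 1, domain_max) + 1)]
    return max(candidates, key=lambda r: sum(r[0] <= e <= r[1] for e in uncovered))

def iterative_rule_learning(examples):
    uncovered, rules = set(examples), []
    while uncovered:                          # step 4: adequate once everything is covered
        lo, hi = best_rule(uncovered)         # step 1: obtain one rule
        rules.append((lo, hi))                # step 2: add it to the final rule set
        # step 3: penalize the rule by discarding the examples it already covers
        uncovered = {e for e in uncovered if not lo <= e <= hi}
    return rules

rules = iterative_rule_learning(range(20))
print(rules)
```

Each pass contributes exactly one rule, which mirrors the statement that only the best individual of a run becomes part of the solution.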

Genotype Representation of Membership Functions
• “n” asymmetrical triangular membership functions
• Specified by the center, left base width and right base width
• Incorporate prior knowledge

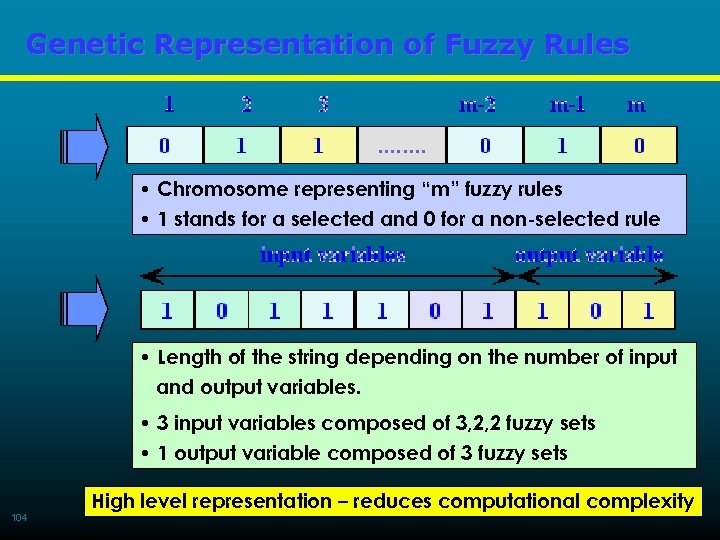
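An asymmetrical triangular membership function specified by center, left base width and right base width can be evaluated as below. The function names and the flat gene layout (three consecutive real values per membership function) are assumptions for illustration, and both widths are assumed to be strictly positive.

```python
def triangular_mf(x, center, left_width, right_width):
    """Membership degree of x in an asymmetrical triangle (widths must be > 0)."""
    if x <= center - left_width or x >= center + right_width:
        return 0.0
    if x <= center:
        return (x - (center - left_width)) / left_width      # rising edge
    return ((center + right_width) - x) / right_width        # falling edge

def decode_mfs(genes):
    """Split a flat gene list into (center, left_width, right_width) triples."""
    return [tuple(genes[i:i + 3]) for i in range(0, len(genes), 3)]

# Hypothetical genotype encoding n = 2 membership functions.
mfs = decode_mfs([5.0, 2.0, 4.0, 10.0, 1.0, 1.0])
degrees = [triangular_mf(4.0, *mf) for mf in mfs]
print(mfs, degrees)
```

Prior knowledge can be incorporated by seeding the initial population with genes taken from an expert's membership functions instead of random values.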

Genetic Representation of Fuzzy Rules
• Chromosome representing “m” fuzzy rules
• 1 stands for a selected rule and 0 for a non-selected rule
• Length of the string depends on the number of input and output variables
• 3 input variables composed of 3, 2, 2 fuzzy sets
• 1 output variable composed of 3 fuzzy sets
• High level representation: reduces computational complexity

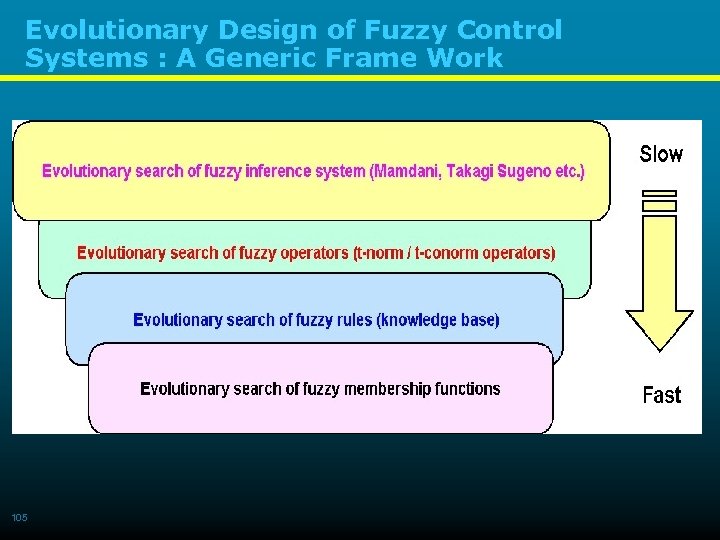
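One way to read the bit-string representation is as a mask over all candidate rules. The sketch below assumes that every combination of input fuzzy sets with an output fuzzy set is a candidate rule, which gives 3 × 2 × 2 × 3 = 36 bits for the example on the slide; the exact encoding used in the figure may differ, and the function names are illustrative.

```python
from itertools import product

def candidate_rules(input_sets=(3, 2, 2), output_sets=3):
    """Enumerate (in1, in2, in3, out) fuzzy-set index tuples for all candidate rules."""
    ranges = [range(n) for n in input_sets] + [range(output_sets)]
    return list(product(*ranges))

def selected_rules(chromosome, rules):
    """Keep the rules whose bit is '1' (1 = selected, 0 = not selected)."""
    return [rule for bit, rule in zip(chromosome, rules) if bit == "1"]

rules = candidate_rules()
chromosome = "1" + "0" * 34 + "1"       # hypothetical chromosome selecting two rules
active = selected_rules(chromosome, rules)
print(len(rules), active)
```

Keeping only a selection bit per rule, rather than encoding each rule's contents, is what makes the representation high level.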

Evolutionary Design of Fuzzy Control Systems: A Generic Framework

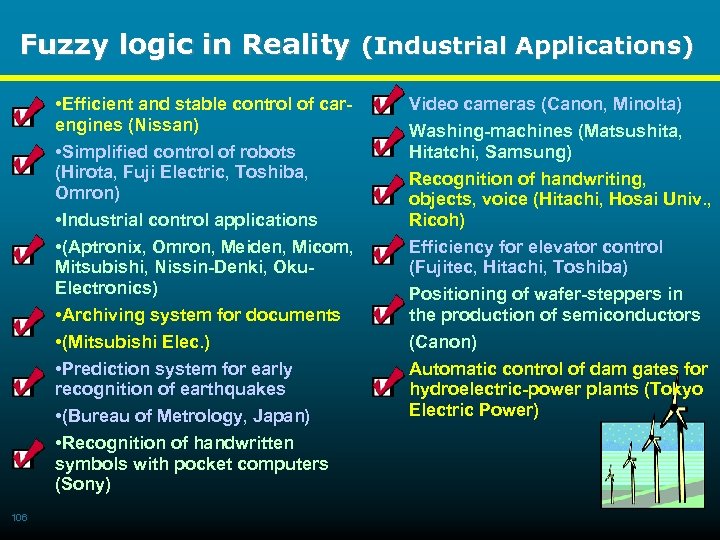

Fuzzy logic in Reality (Industrial Applications)
• Efficient and stable control of car engines (Nissan)
• Simplified control of robots (Hirota, Fuji Electric, Toshiba, Omron)
• Industrial control applications (Aptronix, Omron, Meiden, Micom, Mitsubishi, Nissin-Denki, Oku Electronics)
• Archiving system for documents (Mitsubishi Elec.)
• Prediction system for early recognition of earthquakes (Bureau of Metrology, Japan)
• Recognition of handwritten symbols with pocket computers (Sony)
• Video cameras (Canon, Minolta)
• Washing machines (Matsushita, Hitachi, Samsung)
• Recognition of handwriting, objects, voice (Hitachi, Hosai Univ., Ricoh)
• Efficiency for elevator control (Fujitec, Hitachi, Toshiba)
• Positioning of wafer-steppers in the production of semiconductors (Canon)
• Automatic control of dam gates for hydroelectric power plants (Tokyo Electric Power)