b928f26c4b7305c043c70b5b3abe165d.ppt

- Number of slides: 32

Humanoid Robots Learning to Walk Faster: From the Real World to Simulation and Back
ALON FARCHY, SAMUEL BARRETT, PATRICK MACALPINE, PETER STONE

Motivation
Low-level robot skills are important:
◦ Robust walking and turning
◦ Precise robotic arm movement
◦ Localization
◦ Stability
◦ Etc.
These skills can be parameterized, but learning on a robot is challenging:
◦ Many environmental factors
◦ Robot performance degrades with use
◦ Robots take time to operate
◦ Lack of ground truth

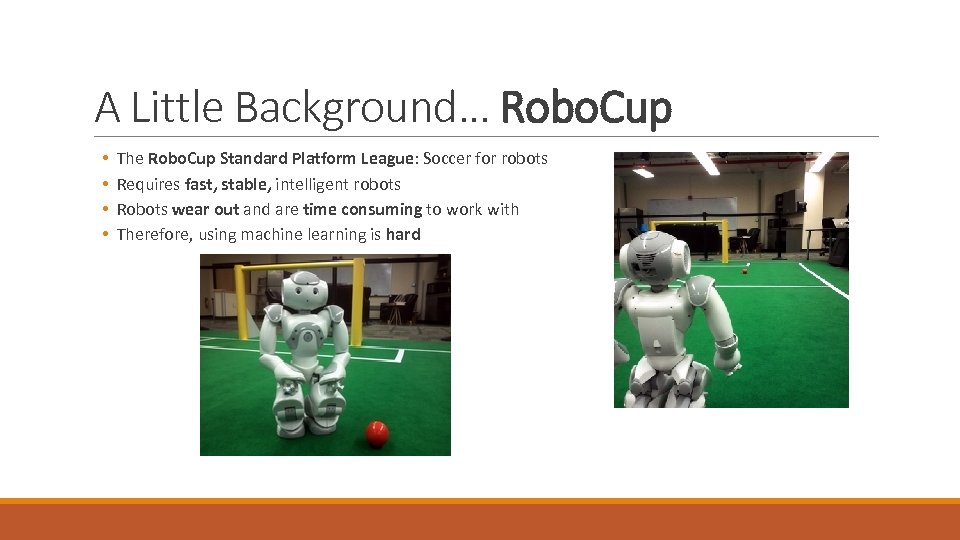

A Little Background… RoboCup
• The RoboCup Standard Platform League: soccer for robots
• Requires fast, stable, intelligent robots
• Robots wear out and are time-consuming to work with
• Therefore, using machine learning is hard

A Little Background… RoboCup Simulation
• The 3D Simulation League: soccer for virtual robots
• Requires fast, stable, intelligent robots
• Robots are unlimited
• Great environment for machine learning
• In 2011 and 2012, UT Austin Villa outpaced the competition in the Simulation League by using machine learning.

Learn, Apply, Transfer
How can we transfer our knowledge to the real world?

Challenges
Many differences between simulation and the real world:
• Open Dynamics Engine (ODE) is far from perfect
  ◦ No friction on joints
  ◦ No heat simulation
• Virtual Nao is greatly approximated
  ◦ Equal joint strength, perfect balance, simple foot shape
• Soccer environment is greatly approximated
  ◦ Perfectly flat surface

Outline
Grounded Simulation Learning (GSL)
◦ Assumptions, Parameters, Overview
◦ Ground, Optimize, Guide
Implementation
◦ Fitness Evaluation
◦ Predicting Joint Angles
◦ Optimizing (CMA-ES)
◦ Manual Guidance
Results
References

Grounded Simulation Learning (GSL)
Concept: Iteratively bound the search space to find areas that overlap between the simulation and the real world. Reduce the disparity between the simulation and the real world along the way.
Assumptions:
1. Evaluation in simulation can be modified.
2. A small number of evaluations can be run on the robot.
3. A small number of explorations can be run on the robot to collect data.
4. Using data from (3), the disparity between the simulation and the robot can be reduced via supervised machine learning.
5. Optimization in simulation can be biased towards / against certain parameters.

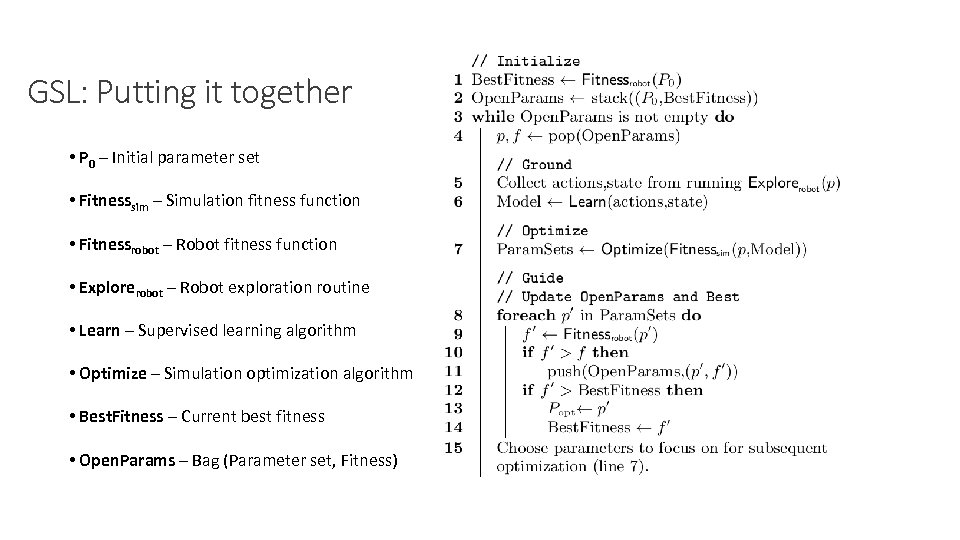

GSL: Parameters
Input:
• P_0 – Initial parameter set
• Fitness_sim – A simulation fitness function that uses a model that maps joint commands to outputs
• Fitness_robot – A robot fitness function
• Explore_robot – A robot exploration routine
• Learn – A supervised learning algorithm
• Optimize – An optimization algorithm to run in simulation
Output:
• P_opt – Optimized parameter set
Variables:
◦ BestFitness – Current best fitness evaluation on the robot
◦ OpenParams – Bag of pairs (parameter set, fitness) to try

GSL: Ground
Using the next parameter set in OpenParams:
1. Collect data about the robot's states and actions using Explore_robot.
2. Use Learn to create a mapping between states and actions on the robot.
3. Use this mapping to reduce the disparity between simulation and the real world.
Force the simulation to act like the robot.

GSL: Optimize
Use Optimize to find good parameters in the grounded simulation.
Note: The optimization should not search too deeply. Searching far from the base parameters is very likely to exploit idiosyncrasies in the simulation.

GSL: Guide
1. Try as many good parameters on the robot as is feasible. Add the good ones to OpenParams.
2. Based on the results, select parameters to focus on for the next round of optimization.
◦ In our case, this selection was performed manually.
Repeat Ground, Optimize, and Guide until OpenParams is empty.

GSL: Putting it together
• P_0 – Initial parameter set
• Fitness_sim – Simulation fitness function
• Fitness_robot – Robot fitness function
• Explore_robot – Robot exploration routine
• Learn – Supervised learning algorithm
• Optimize – Simulation optimization algorithm
• BestFitness – Current best fitness
• OpenParams – Bag of (parameter set, fitness) pairs

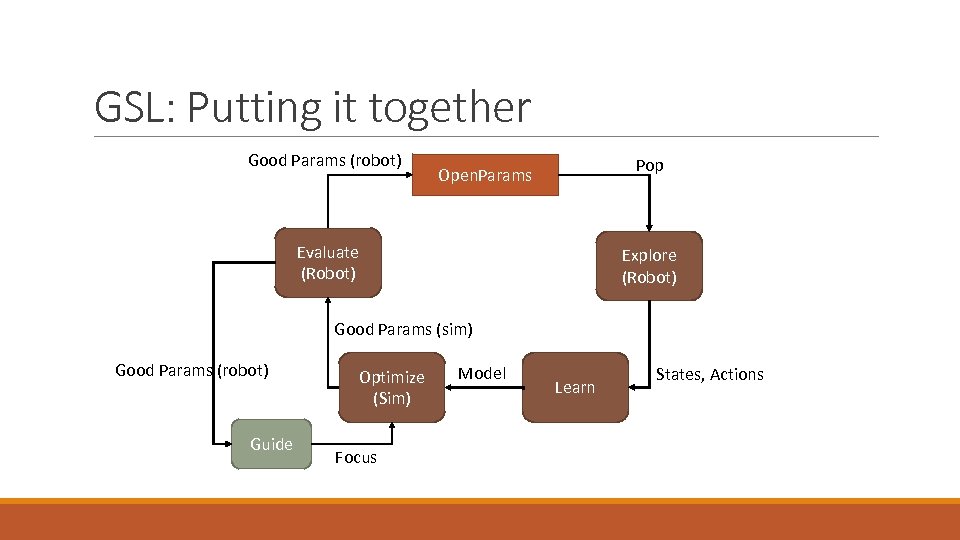

GSL: Putting it together
[Flow diagram. Steps: Pop OpenParams, Evaluate (Robot), Explore (Robot), Learn, Optimize (Sim), Guide. Data passed between steps: States and Actions, Model, Good Params (sim), Good Params (robot), Focus.]
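
As a rough illustration, the Ground / Optimize / Guide loop could be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: the function name `grounded_simulation_learning`, the callable signatures, the `max_robot_trials` cap, and the rule that a candidate counts as "good" only if it beats the best robot fitness so far are all hypothetical. It also assumes that higher fitness is better, so a timed walk would be passed in as, for example, a speed.

```python
from typing import Callable, List, Sequence, Tuple

Params = Tuple[float, ...]


def grounded_simulation_learning(
    p0: Params,
    fitness_robot: Callable[[Params], float],
    explore_robot: Callable[[Params], list],
    learn: Callable[[list], object],
    optimize: Callable[[Params, object], Sequence[Params]],
    max_robot_trials: int = 3,  # hypothetical cap on robot evaluations per round
) -> Params:
    """Return the best parameter set found (assumes higher fitness is better)."""
    best_params, best_fitness = p0, fitness_robot(p0)
    open_params: List[Tuple[Params, float]] = [(p0, best_fitness)]

    while open_params:
        base, _ = open_params.pop()

        # Ground: collect robot data and learn a model that makes the
        # simulation behave more like the robot.
        data = explore_robot(base)
        model = learn(data)

        # Optimize: search near `base` in the grounded simulation.
        # The simulation fitness function is assumed to live inside `optimize`.
        candidates = optimize(base, model)

        # Guide: try a few candidates on the robot and keep the good ones.
        for candidate in list(candidates)[:max_robot_trials]:
            fitness = fitness_robot(candidate)
            if fitness > best_fitness:  # assumed definition of "good"
                best_params, best_fitness = candidate, fitness
                open_params.append((candidate, fitness))

    return best_params


def demo() -> Params:
    """Toy stand-ins for the robot and the simulator, for illustration only."""
    target = 3.0
    return grounded_simulation_learning(
        p0=(0.0,),
        fitness_robot=lambda p: -abs(p[0] - target),
        explore_robot=lambda p: [p],
        learn=lambda data: None,
        optimize=lambda base, model: [(base[0] + 0.5,), (base[0] - 0.5,)],
    )
```

In the toy `demo`, each round proposes the base value plus or minus 0.5, and the loop stops once no proposal improves on the best robot fitness, which leaves OpenParams empty.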

Implementation
◦ Fitness Evaluation
◦ Predicting Joint Angles
◦ Optimizing (CMA-ES)
◦ Manual Guidance
Results

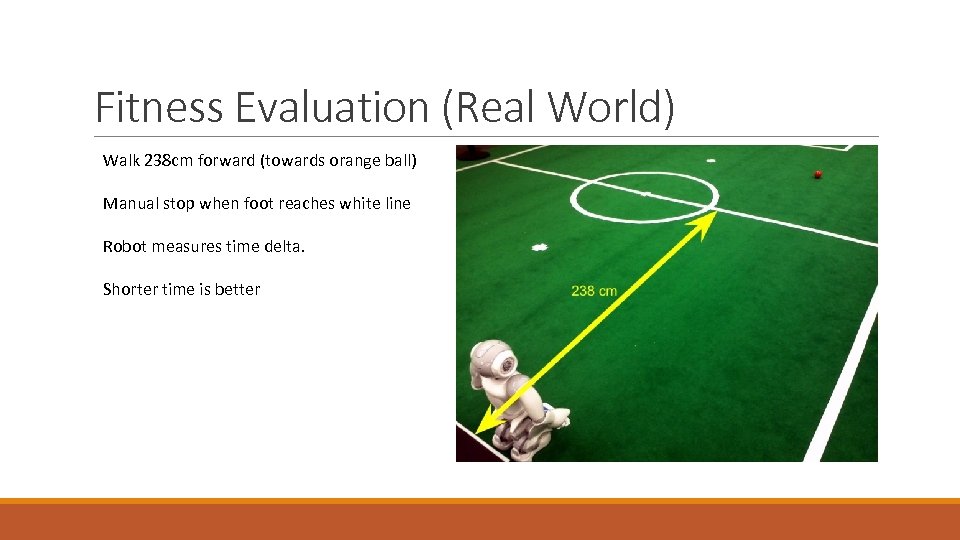

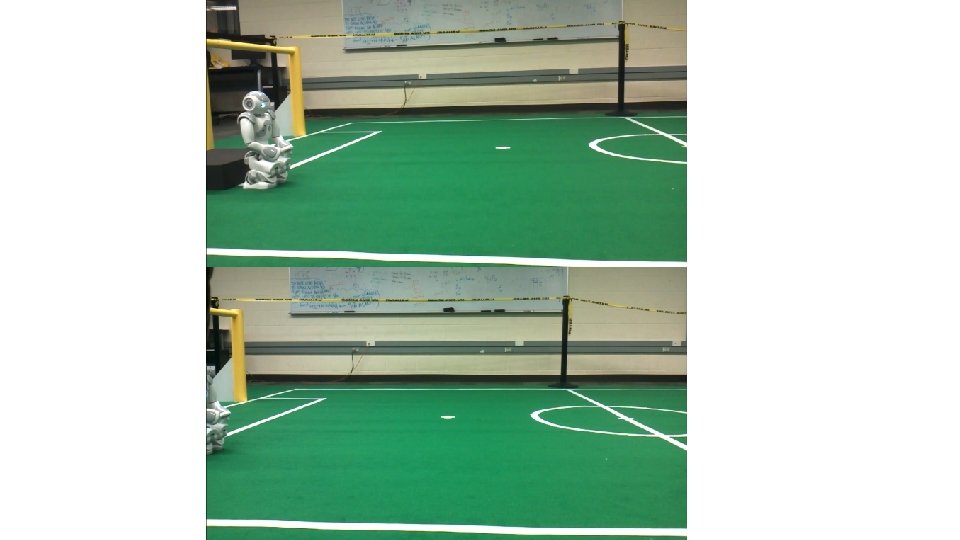

Fitness Evaluation (Real World)
• Walk 238 cm forward (towards the orange ball)
• Manual stop when a foot reaches the white line
• Robot measures the time delta; a shorter time is better

Fitness Evaluation (Real World)

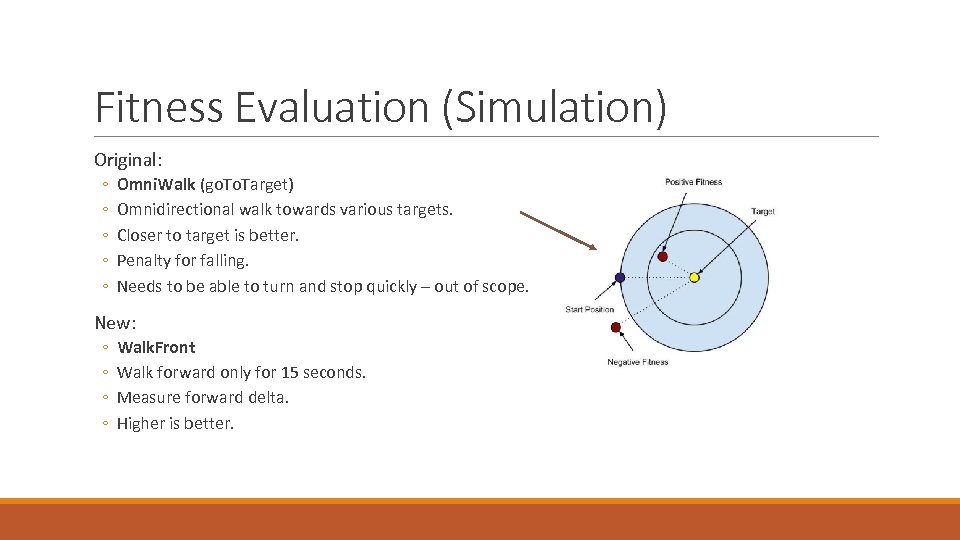

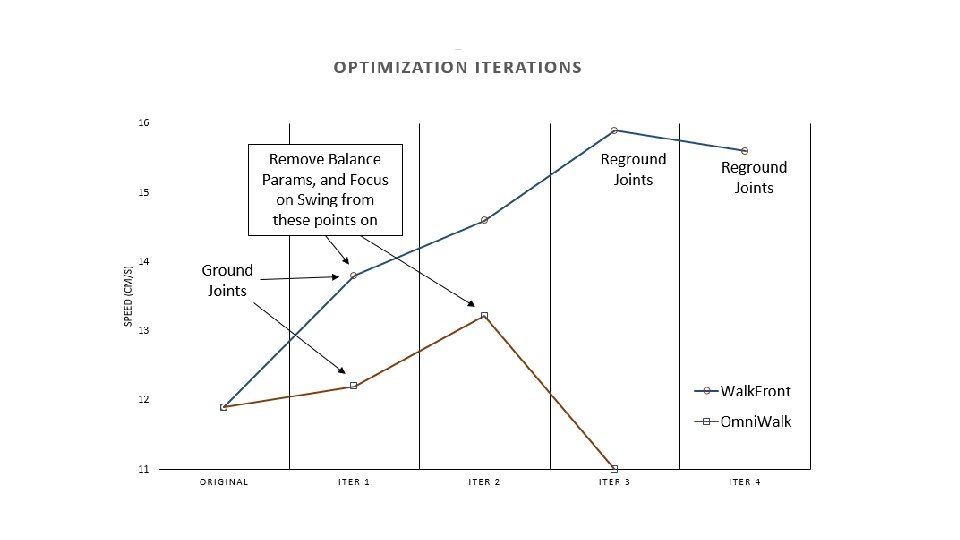

Fitness Evaluation (Simulation)
Original: OmniWalk (goTarget)
◦ Omnidirectional walk towards various targets.
◦ Closer to target is better. Penalty for falling.
◦ Needs to be able to turn and stop quickly – out of scope.
New: WalkFront
◦ Walk forward only for 15 seconds.
◦ Measure forward delta. Higher is better.

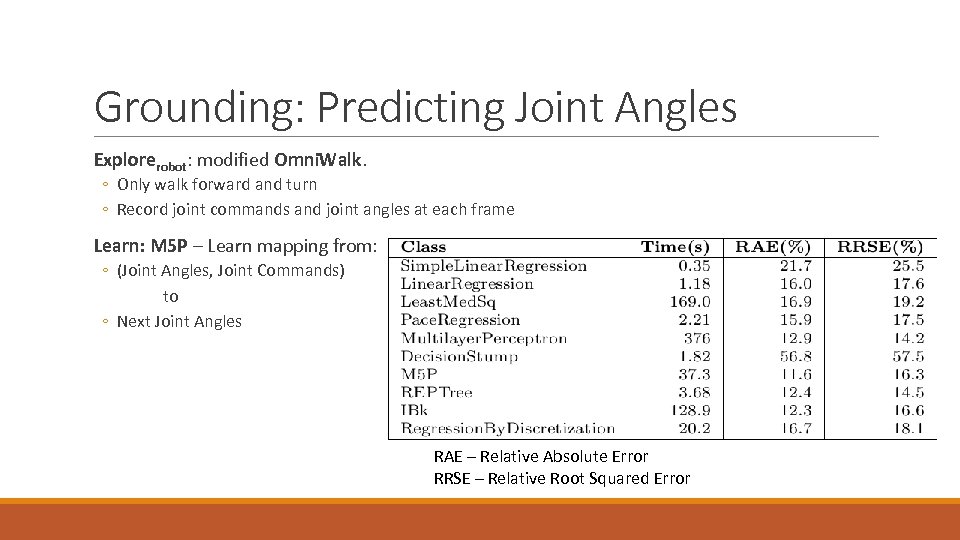

Grounding: Predicting Joint Angles
Explore_robot: modified OmniWalk.
◦ Only walk forward and turn
◦ Record joint commands and joint angles at each frame
Learn: M5P – learn a mapping from:
◦ (Joint Angles, Joint Commands) to
◦ Next Joint Angles
RAE – Relative Absolute Error
RRSE – Root Relative Squared Error
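
For reference, RAE and RRSE both compare a model's error against the error of always predicting the mean of the observed values, so a value below 1 means the model beats that baseline. The sketch below uses the standard definitions of the two measures; the function names and the sample numbers are illustrative and do not come from the slides.

```python
import math
from typing import Sequence


def relative_absolute_error(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """RAE: total absolute error divided by that of the mean predictor."""
    mean_actual = sum(actual) / len(actual)
    model_error = sum(abs(p - a) for p, a in zip(predicted, actual))
    baseline_error = sum(abs(a - mean_actual) for a in actual)
    return model_error / baseline_error


def root_relative_squared_error(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """RRSE: square root of squared error divided by that of the mean predictor."""
    mean_actual = sum(actual) / len(actual)
    model_error = sum((p - a) ** 2 for p, a in zip(predicted, actual))
    baseline_error = sum((a - mean_actual) ** 2 for a in actual)
    return math.sqrt(model_error / baseline_error)


# Made-up joint angles (degrees), for illustration only.
actual_angles = [1.0, 2.0, 3.0, 4.0]
predicted_angles = [1.5, 2.0, 2.5, 4.0]
rae = relative_absolute_error(predicted_angles, actual_angles)
rrse = root_relative_squared_error(predicted_angles, actual_angles)
```

Both functions assume the actual values are not all identical; otherwise the baseline error is zero and the ratio is undefined.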

Grounding: Predicting Joint Angles
How to apply the model to the simulation?
◦ Linear combination of requested joint angles and predicted joint angles
◦ By manual testing: 70% requested / 30% predicted
Now we can use this grounded simulation in Optimize.
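
The linear combination described here can be sketched as a per-joint weighted sum. This is a minimal sketch, assuming the 70% / 30% split is applied identically to every joint; the function name `ground_joint_angles` and the sample angles are hypothetical.

```python
from typing import List, Sequence


def ground_joint_angles(
    requested: Sequence[float],
    predicted: Sequence[float],
    requested_weight: float = 0.7,  # 70% requested / 30% predicted, per the slide
) -> List[float]:
    """Blend the angles the walk engine requested with the model's prediction."""
    if len(requested) != len(predicted):
        raise ValueError("requested and predicted must have the same length")
    if not 0.0 <= requested_weight <= 1.0:
        raise ValueError("requested_weight must be between 0 and 1")
    predicted_weight = 1.0 - requested_weight
    return [
        requested_weight * r + predicted_weight * p
        for r, p in zip(requested, predicted)
    ]


# Made-up angles (degrees) for two joints, for illustration only.
blended = ground_joint_angles([10.0, -20.0], [0.0, -10.0])
```

With a weight of 1.0 the simulation is left ungrounded, and with 0.0 it follows the learned model entirely; the slide's 70 / 30 split sits between the two.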

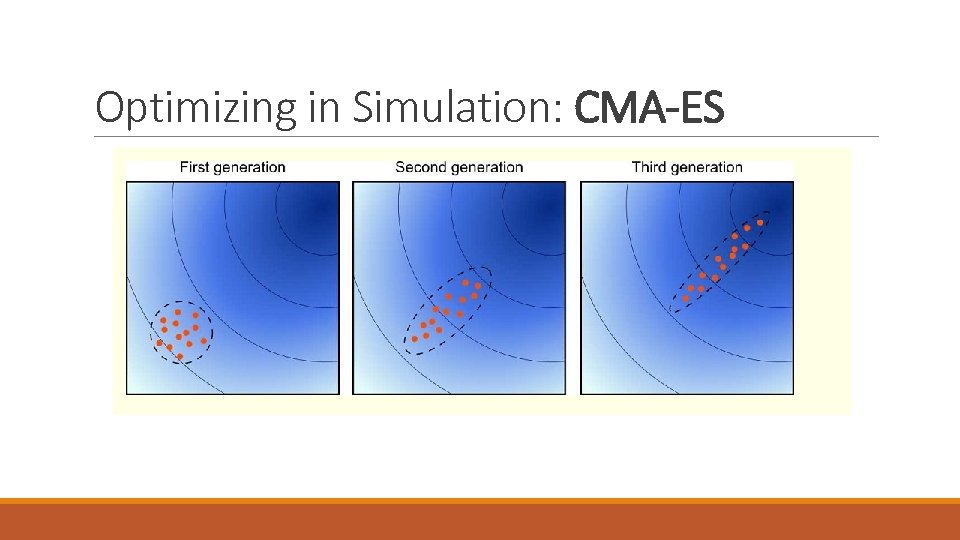

Optimizing in Simulation: CMA-ES
Covariance Matrix Adaptation Evolution Strategy
◦ Candidates are sampled from a multidimensional Gaussian distribution and evaluated by Fitness_sim
◦ A weighted average of the members with the highest fitness is used to update the mean of the distribution
◦ The covariance is updated using evolution paths, which controls the search step sizes
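
To make the sample / rank / update cycle concrete, here is a deliberately simplified evolution strategy in plain Python. It is not CMA-ES: it keeps only a diagonal covariance (one standard deviation per parameter), re-estimates it from the selected samples each generation, and omits evolution paths and step-size control entirely. The function name, the defaults, and the toy fitness function are assumptions made for illustration.

```python
import math
import random
from typing import Callable, List, Sequence


def simplified_es(
    fitness: Callable[[Sequence[float]], float],
    mean: Sequence[float],
    sigma: Sequence[float],
    population: int = 20,
    generations: int = 10,
    seed: int = 0,
) -> List[float]:
    """Maximize `fitness` with a diagonal-Gaussian evolution strategy."""
    rng = random.Random(seed)
    mean = list(mean)
    sigma = list(sigma)
    dims = len(mean)

    # Keep the better half and weight it by rank (log-decreasing weights).
    mu = population // 2
    raw = [math.log(mu + 0.5) - math.log(rank + 1) for rank in range(mu)]
    weights = [w / sum(raw) for w in raw]

    for _ in range(generations):
        # Sample candidates from the current Gaussian.
        samples = [
            [rng.gauss(m, s) for m, s in zip(mean, sigma)]
            for _ in range(population)
        ]
        # Rank by fitness, best first, and keep the top `mu`.
        elite = sorted(samples, key=fitness, reverse=True)[:mu]

        # Weighted average of the best members becomes the new mean.
        new_mean = [
            sum(w * x[d] for w, x in zip(weights, elite)) for d in range(dims)
        ]
        # Per-parameter spread, measured around the previous mean.
        sigma = [
            math.sqrt(sum(w * (x[d] - mean[d]) ** 2 for w, x in zip(weights, elite)))
            for d in range(dims)
        ]
        mean = new_mean

    return mean


def toy_fitness(x: Sequence[float]) -> float:
    """Hypothetical stand-in for a simulation fitness; best at (1, -2)."""
    return -((x[0] - 1.0) ** 2 + (x[1] + 2.0) ** 2)
```

A call such as `simplified_es(toy_fitness, [0.0, 0.0], [1.0, 1.0])` runns the loop from a starting guess; in the setting of these slides, each call to `fitness` would instead be one walk in the grounded simulation.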

Optimizing in Simulation: CMA-ES

Optimizing in Simulation: CMA-ES
• Condor: workload management system
• 150 simultaneous fitness evaluations
• Even with a small number of generations (~10), this explores far more parameter sets than a real robot could.

Guidance
Evaluate optimized parameters using Fitness_robot.
Select parameters for OpenParams (easy)
◦ Robot falls?
◦ Robot faster?
Bias Optimize to better parameters (harder)
◦ Manually tweaked the variance of parameters in CMA-ES.
◦ Could be automated.

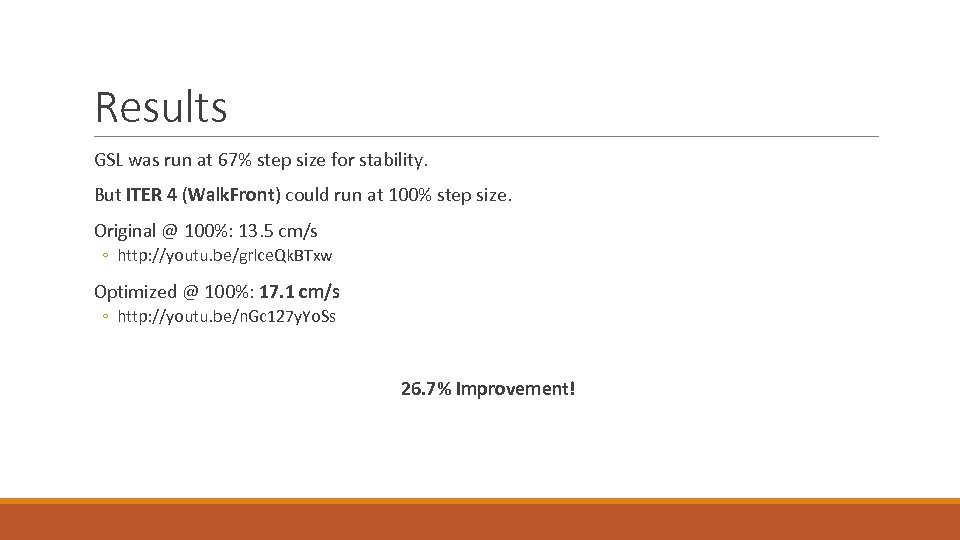

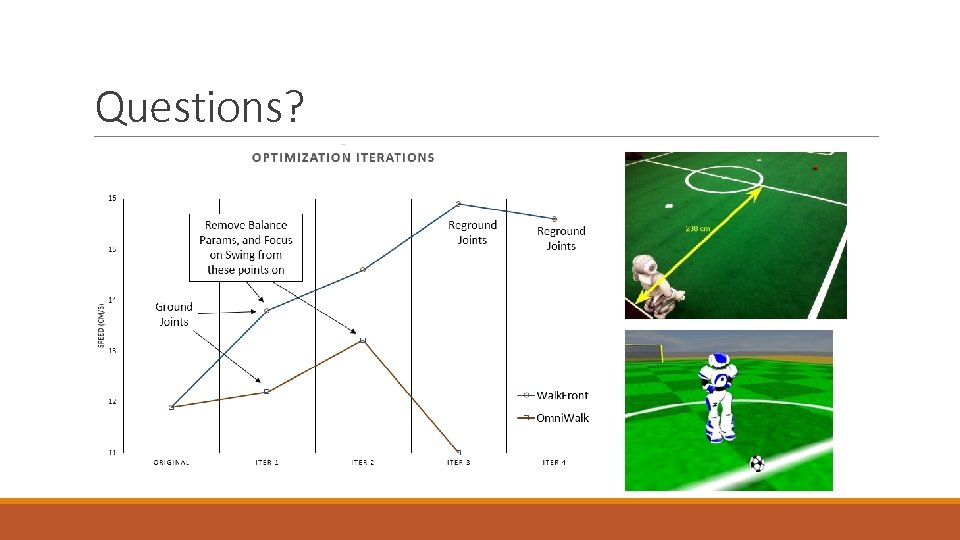

Results
GSL was run at 67% step size for stability, but ITER 4 (WalkFront) could run at 100% step size.
Original @ 100%: 13.5 cm/s
◦ http://youtu.be/grlceQkBTxw
Optimized @ 100%: 17.1 cm/s
◦ http://youtu.be/nGc127yYoSs
26.7% improvement!
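
The reported improvement can be checked with a few lines of arithmetic. The sketch below uses the two speeds from this slide and the 238 cm course length from the real-world fitness evaluation. The walk times it derives are computed here, not reported in the slides, and they assume the robot holds the stated speed over the whole course. The function names are hypothetical.

```python
def relative_improvement(baseline: float, optimized: float) -> float:
    """Fractional gain of `optimized` over `baseline`."""
    return (optimized - baseline) / baseline


def time_to_cover(distance_cm: float, speed_cm_per_s: float) -> float:
    """Seconds needed to cover a distance at a constant speed."""
    return distance_cm / speed_cm_per_s


ORIGINAL_SPEED = 13.5   # cm/s, from the slide
OPTIMIZED_SPEED = 17.1  # cm/s, from the slide
COURSE_LENGTH = 238.0   # cm, from the real-world fitness evaluation

improvement_percent = 100 * relative_improvement(ORIGINAL_SPEED, OPTIMIZED_SPEED)
original_time = time_to_cover(COURSE_LENGTH, ORIGINAL_SPEED)    # derived, about 17.6 s
optimized_time = time_to_cover(COURSE_LENGTH, OPTIMIZED_SPEED)  # derived, about 13.9 s
```

The gain is 3.6 cm/s over a 13.5 cm/s baseline, which rounds to the 26.7% quoted on the slide.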

Related Work
UT Austin Villa RoboCup 3D Simulation League:
◦ P. MacAlpine, S. Barrett, D. Urieli, V. Vu, and P. Stone. Design and optimization of an omnidirectional humanoid walk: A winning approach at the RoboCup 2011 3D simulation competition. In Twenty-Sixth Conference on Artificial Intelligence (AAAI-12), July 2012.
CMA-ES:
◦ N. Hansen. The CMA evolution strategy: A tutorial, 2005.
M5P:
◦ R. J. Quinlan. Learning with continuous classes. In 5th Australian Joint Conference on Artificial Intelligence, pages 343–348, Singapore, 1992. World Scientific.

Related Work
Simulation + Robot learning:
◦ P. Abbeel, M. Quigley, and A. Y. Ng. Using inaccurate models in reinforcement learning. In International Conference on Machine Learning (ICML), Pittsburgh, pages 1–8. ACM Press, 2006.
◦ J. C. Zagal, J. Delpiano, and J. Ruiz-del Solar. Self-modeling in humanoid soccer robots. Robot. Auton. Syst., 57(8):819–827, July 2009.
◦ L. Iocchi, F. D. Libera, and E. Menegatti. Learning humanoid soccer actions interleaving simulated and real data. In Second Workshop on Humanoid Soccer Robots, November 2007.
◦ S. Koos, J.-B. Mouret, and S. Doncieux. Crossing the reality gap in evolutionary robotics by promoting transferable controllers. In Proceedings of the 12th Annual Conference on Genetic and Evolutionary Computation, GECCO '10, pages 119–126, New York, NY, USA, 2010. ACM.

Questions?
