HPC’s in ATLAS
Kaushik De, Univ. of Texas at Arlington
OSG AHM, Clemson University, March 15, 2016

An Opportunity and A Challenge

- Opportunity
  - Unexplored territory – ATLAS focused on HTC, not HPC, for Run 1.
  - Neglected use case – MC simulations use ~75% of computing cycles at the LHC, and they can run easily (if not better) on HPC’s.
  - Software maturity – we are much better at using heterogeneous and opportunistic resources after successful demos during Run 1.
  - Higgs effect – HPC sites take our physics goals seriously.
- Challenge
  - Every HPC is different.
  - Usually not open to outside access or submission.
  - Worker nodes may not have outbound connectivity.
  - Limited storage. Not easy to install ATLAS software.
  - Have to go through allocation process for time/storage.

HPC Strategy

- Why use HPC? – ATLAS needed more CPU cycles
- Started exploring HPC’s ~3 years ago
- First – those which can mimic grid or cloud sites
  - Some of these were being used already, like OU OSCER
  - Others required discussions with local experts
- Second – those which can be exploited by a few users
  - Specific analysis, specific application, impact for a few papers
  - Usually, users at affiliated institutions
  - Examples like Stampede, MIRA, Titan and others
- Third – the really difficult cases
  - General MC simulation on large resources like Titan, NERSC
- This talk will focus mostly on US HPC sites

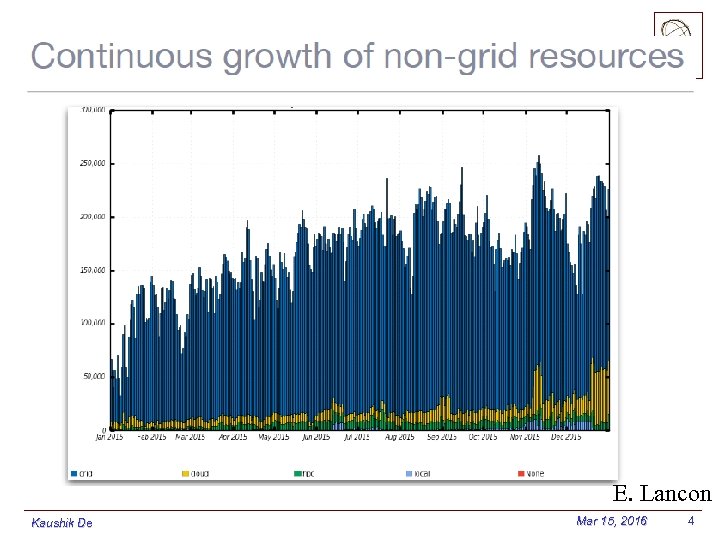

E. Lancon

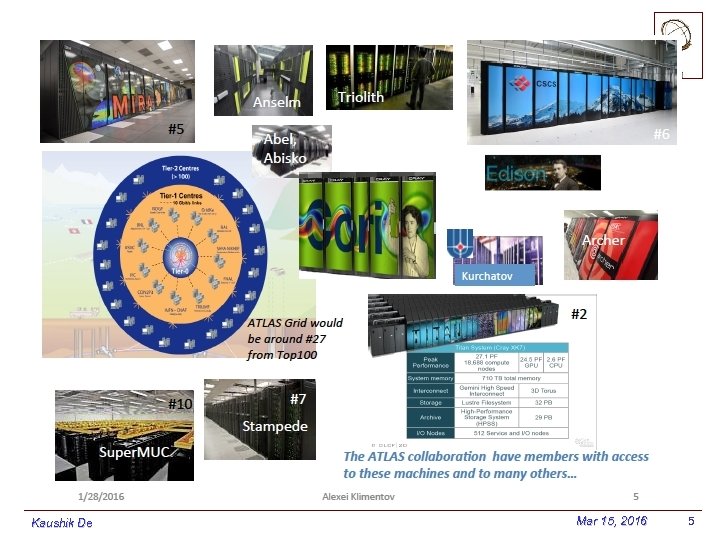

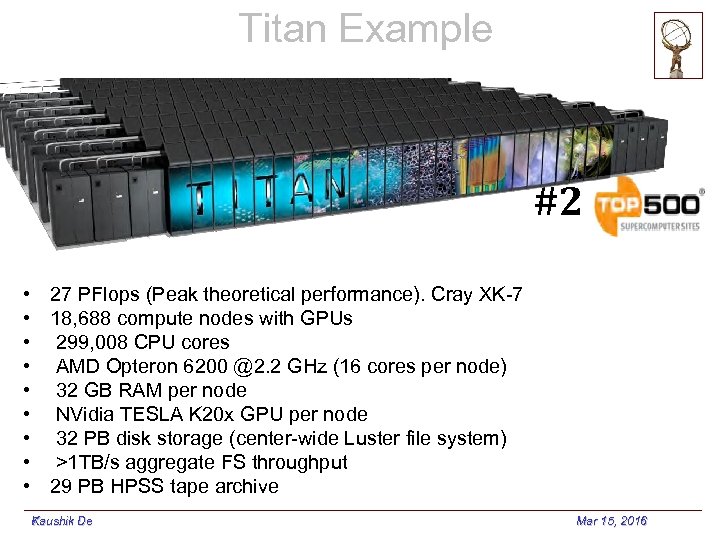

Titan Example #2

- 27 PFlops (peak theoretical performance), Cray XK-7
- 18,688 compute nodes with GPUs
- 299,008 CPU cores
- AMD Opteron 6200 @ 2.2 GHz (16 cores per node)
- 32 GB RAM per node
- NVIDIA Tesla K20X GPU per node
- 32 PB disk storage (center-wide Lustre file system)
- >1 TB/s aggregate FS throughput
- 29 PB HPSS tape archive
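
As a quick consistency check on the figures above, the short sketch below recomputes the total core count from the node count and cores per node, and derives two numbers the slide does not state: aggregate RAM and average peak performance per node. The derived values assume decimal units (1 TB = 1000 GB, 1 PFlops = 1000 TFlops) and are illustrative arithmetic only.

```python
# Figures quoted on the Titan slide.
NODES = 18_688
CORES_PER_NODE = 16
RAM_PER_NODE_GB = 32
PEAK_PFLOPS = 27

# Total CPU cores: should match the 299,008 quoted on the slide.
total_cores = NODES * CORES_PER_NODE

# Derived values (not on the slide), assuming decimal units.
total_ram_tb = NODES * RAM_PER_NODE_GB / 1000
peak_tflops_per_node = PEAK_PFLOPS * 1000 / NODES

print(f"CPU cores:          {total_cores:,}")
print(f"Aggregate RAM:      ~{total_ram_tb:.0f} TB")
print(f"Peak per node:      ~{peak_tflops_per_node:.2f} TFlops (CPU + GPU)")
```

The core count agrees with the slide, which confirms that the 299,008 figure counts CPU cores only and does not include the GPUs.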

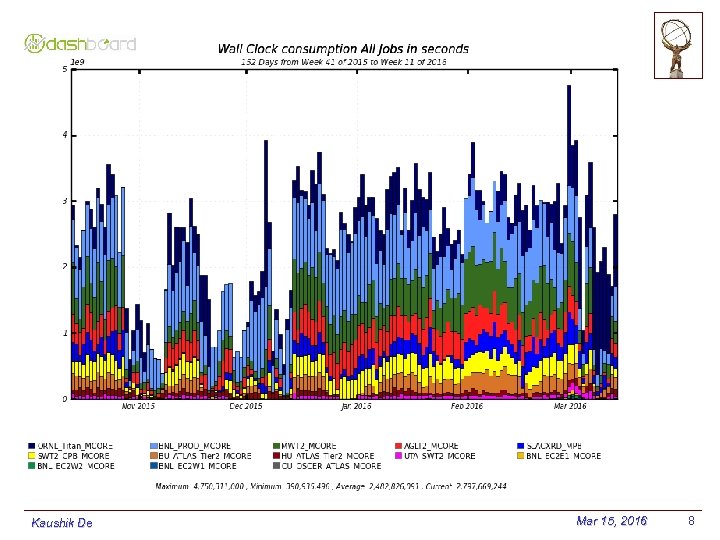

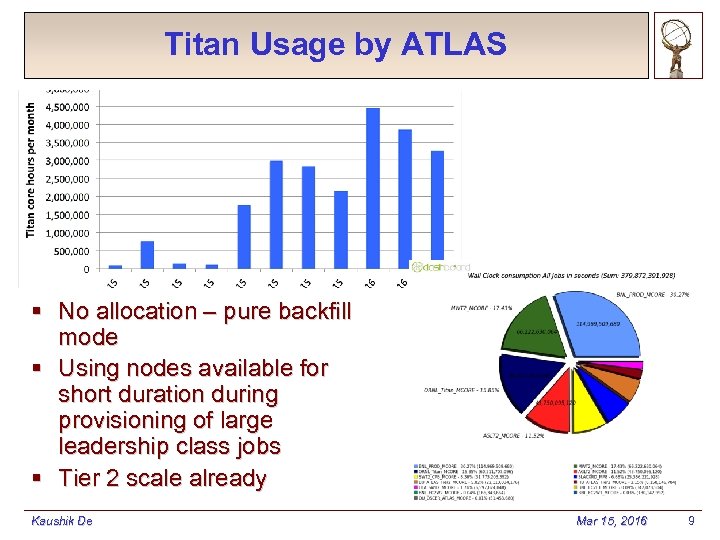

Titan Usage by ATLAS

- No allocation – pure backfill mode
  - Using nodes available for short duration during provisioning of large leadership class jobs
- Tier 2 scale already
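
The backfill idea can be illustrated with a small sketch: given a list of idle-node windows, shape a job so that it fits entirely inside one of them. This is not the ATLAS or PanDA implementation. The `BackfillSlot` and `JobRequest` types, the function names, and the limits (`min_walltime`, `max_nodes`, `safety_margin`) are all hypothetical, chosen only to show the logic.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class BackfillSlot:
    """Hypothetical record: idle nodes and how long they stay free."""
    nodes: int
    minutes_free: int


@dataclass
class JobRequest:
    """Hypothetical job shape to submit into a backfill window."""
    nodes: int
    walltime_minutes: int


def size_job(slot: BackfillSlot,
             min_walltime: int = 30,    # assumed: shorter windows are not worth it
             max_nodes: int = 300,      # assumed cap on nodes per job
             safety_margin: int = 5) -> Optional[JobRequest]:
    """Shape a job that fits inside the slot, or return None if it is too small."""
    usable = slot.minutes_free - safety_margin
    if slot.nodes < 1 or usable < min_walltime:
        return None
    return JobRequest(nodes=min(slot.nodes, max_nodes), walltime_minutes=usable)


def pick_best(slots: List[BackfillSlot]) -> Optional[JobRequest]:
    """Choose the request that harvests the most node-minutes."""
    requests = [r for r in (size_job(s) for s in slots) if r is not None]
    if not requests:
        return None
    return max(requests, key=lambda r: r.nodes * r.walltime_minutes)


# Made-up example windows, for illustration only.
slots = [BackfillSlot(50, 20), BackfillSlot(120, 95), BackfillSlot(1000, 40)]
best = pick_best(slots)
print(best)
```

In this made-up example the 20-minute window is rejected as too short, and the 120-node window wins over the larger but shorter one because it yields more node-minutes after the node cap is applied.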

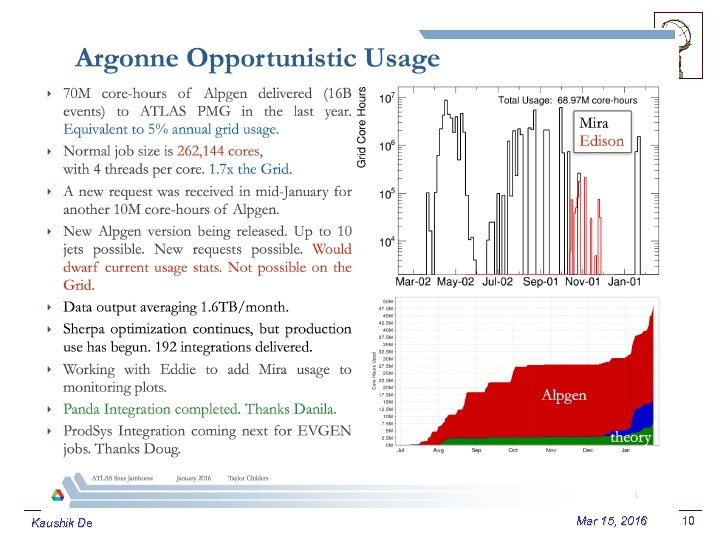

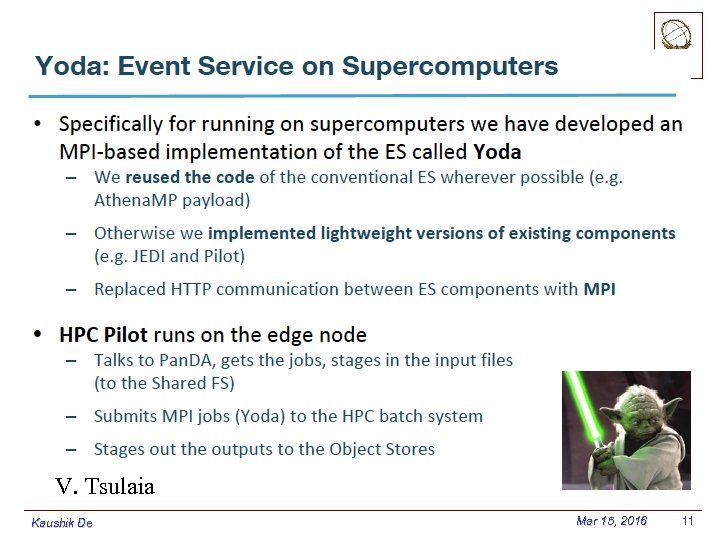

V. Tsulaia

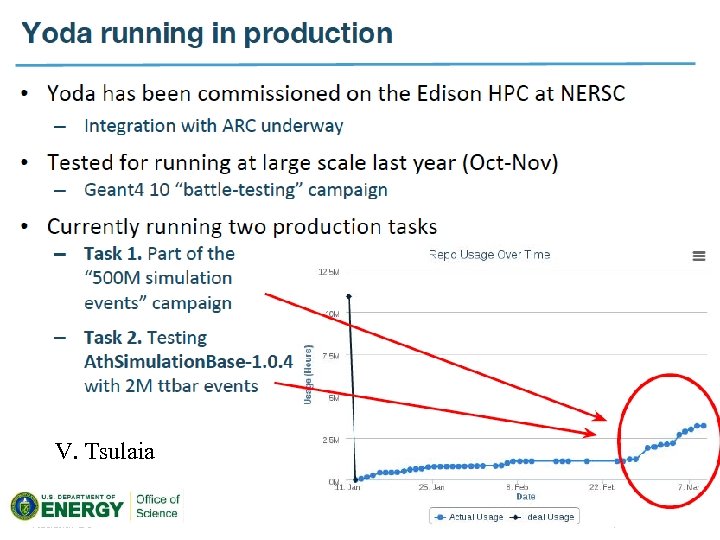

V. Tsulaia

Prognosis

- ATLAS needs additional processing power
- We have demonstrated HPC’s can be used opportunistically
  - Already ~10% of all CPU usage
- Acquired valuable expertise and knowledge base
  - How to work with HPC sites
  - How to install software
  - How to run automated PanDA production
- A lot of work is still needed
  - Optimizations
  - Non-x86 platforms
  - Operations
