
- Number of slides: 60

Homeland Security • Since 9/11, homeland security has been at the forefront of public concern and national research – in AI, there are numerous applications • The Department of Homeland Security has identified the following problems as critical: – intelligence and warning – surveillance, monitoring, detection of deception – border and transportation security – traveler identification, vehicle identification, location and tracking – domestic counterterrorism – tying crimes at the local/state/federal level to terrorist cells and organizations, including tracking organized crime – protection of key assets – similar to transportation security, but here the targets are fixed; security might be aided through camera-based surveillance and recording, and the assets can include the Internet and web sites – defending against catastrophic terrorism – guarding against weapons of mass destruction being brought into the country, tracking such events around the world – emergency preparedness and response – includes the infrastructure to accomplish this, such as wireless networks, information sources, rescue robots

Threats and Needs • Terrorism – Monitor terrorist/extremist organizations • financial operations, recruitment, purchasing of illegal materials, monitoring borders and ports, false identity detection, cross-jurisdiction communication/information sharing • Global pandemics: SARS, avian flu, swine flu – Monitor national health trends • symptom outbreaks, mapping travel for infected patients, large-scale absences from work/school, monitoring drug usage, simulation and training for emergency responders and better communication between emergency response organizations • Cyber security – Monitor Internet intrusions • denial of service attacks on national/international corporation websites, new viruses, botnets, zombie computers, e-commerce fraud • How much can AI contribute?

A Myriad of Technologies • To support homeland security problems, many technologies are combined, not just AI, although many have roots in AI – Biometrics – identify potential terrorists or suspects based on biometric signatures (faces, fingerprints, voice, etc) using various forms of input – Clustering – data mining – Decision support – KBS, agents, semantic web, DB – Event monitoring/detection – telecommunications, databases, intelligent agents, semantic web, GIS – Knowledge management and filtering – KBS, data mining, DB processing, semantic web – Predictive modeling – data mining, KBS, neural networks, Bayesian approaches, DB – Semantic consistency – ontologies, semantic web – Multimedia processing – DB, indexing/annotations, semantic web – Visualization – DB, data mining, visualization techniques, VR

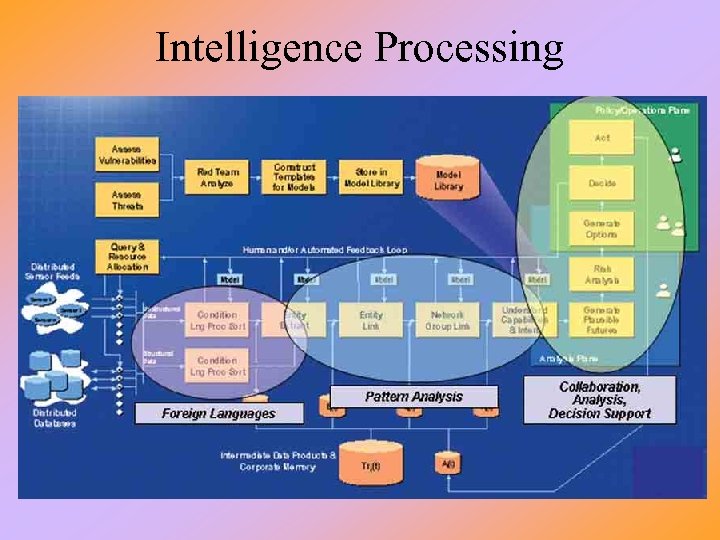

Intelligence Processing

NSA Data Mining • The NSA obtained approximately 1.9 trillion phone records to use in data mining – Looking for phone calls between known terrorists and others • Note: this does not involve wiretapping, but the legality of obtaining the records is being questioned • More recently, the NSA has pruned its records down to a few million • What they are looking for are links – Add frequently called people to their list of potential terrorist suspects • One approach is to count the number of edges that connect a particular individual to known terrorists – Another is to look for chains of links to see the closeness between a suspect and known terrorists • This work follows on from research done on the 19 9/11 terrorists to show that each of them was no more than 2 links away from a known al-Qaida member and to show that terror suspect Mohamed Atta was a central figure among the 19 terrorists – They are also using trained neural networks to detect call patterns and classify such patterns
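The two link measures mentioned on this slide (number of direct edges to known individuals, and length of the shortest chain of links) can be sketched as follows. This is a minimal illustration on a made-up call graph; the names `calls`, `known`, `direct_links` and `hops_to_known` are hypothetical and say nothing about how the NSA system is actually built.

```python
from collections import deque

# Hypothetical call records: each pair is an undirected "called" link.
calls = [
    ("suspect_a", "known_1"),
    ("suspect_a", "known_2"),
    ("suspect_b", "suspect_a"),
    ("suspect_c", "suspect_b"),
]
known = {"known_1", "known_2"}


def build_graph(edges):
    """Build an undirected adjacency map from a list of (caller, callee) pairs."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def direct_links(graph, person, known):
    """Count the edges that connect `person` directly to known individuals."""
    return len(graph.get(person, set()) & known)


def hops_to_known(graph, person, known):
    """Shortest chain of links from `person` to any known individual (None if unreachable)."""
    seen = {person}
    queue = deque([(person, 0)])
    while queue:
        node, dist = queue.popleft()
        if node in known:
            return dist
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None


graph = build_graph(calls)
```

Breadth-first search is used for the chain length because it visits people in order of increasing distance, so the first known individual reached gives the shortest chain.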

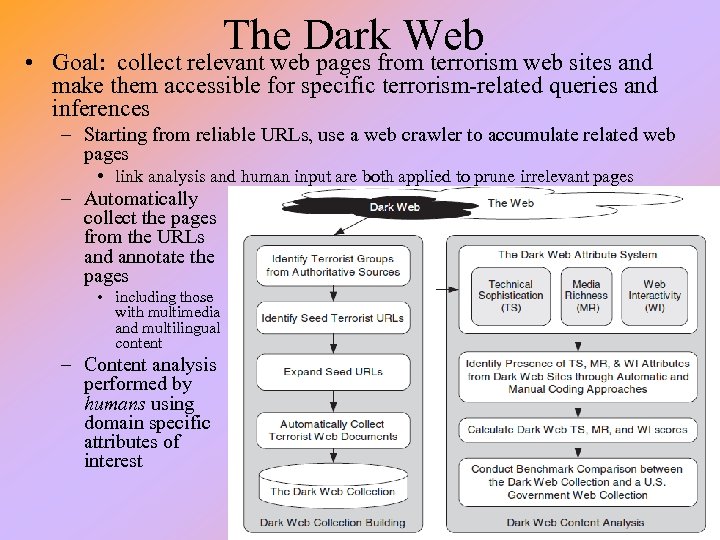

The Dark Web • Goal: collect relevant web pages from terrorism web sites and make them accessible for specific terrorism-related queries and inferences – Starting from reliable URLs, use a web crawler to accumulate related web pages • link analysis and human input are both applied to prune irrelevant pages – Automatically collect the pages from the URLs and annotate the pages • including those with multimedia and multilingual content – Content analysis performed by humans using domain-specific attributes of interest

UA Dark Web Collection • The University of Arizona is creating a dark web portal • Contains pages from 10,000 sites – Over 30 identified terrorist or extremist groups – Content primarily in Arabic, Spanish, English, Japanese – Includes web pages, forums, blogs, social networking sites, multimedia content (a million images and 15,000 videos) • Aside from gathering the pages via crawlers, they perform – Content analysis (recruitment, training, ideology, communication, propaganda) – usually human-labeled with support from software – Web metric analysis – technical features of the web site (such as the ability to use tables, CGI, multimedia files) – Sentiment and affect analysis – some web sites are not directly related to a terrorist/extremist organization but might display sentiment (or negativity) toward one of these organizations – by tracking these sites, the researchers can determine how “infectious” a given site or cause is – Authorship analysis – determine the most likely author of a given piece of text

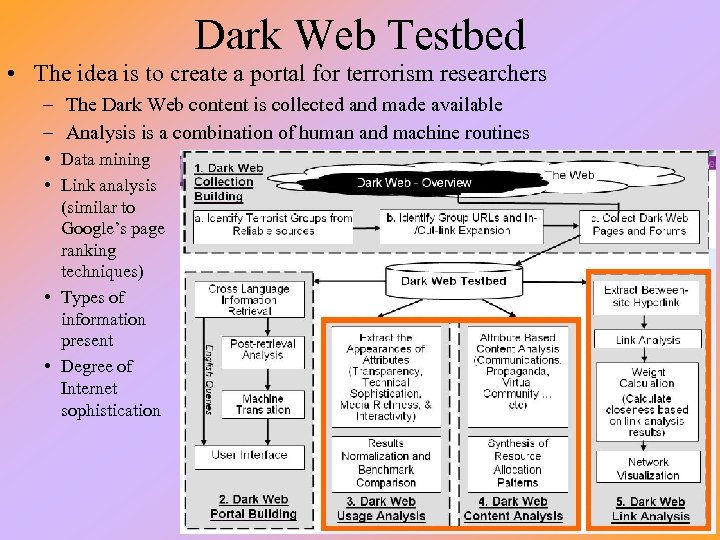

Dark Web Testbed • The idea is to create a portal for terrorism researchers – The Dark Web content is collected and made available – Analysis is a combination of human and machine routines • Data mining • Link analysis (similar to Google’s page ranking techniques) • Types of information present • Degree of Internet sophistication

Multilingual Indexing • In order to automate search, each web page/text message found must be indexed – Arizona Noun Phraser program applied to English pages • Uses a part-of-speech tagger and linguistic rules to find nouns – Mutual Information applied to Spanish and Arabic pages • Uses statistically-based methods to identify meaningful phrases in any language – Once identified, nouns were sent to a concept space program to extract pairs of co-occurring keywords that appeared on the same page • Pairs were then placed into a thesaurus demonstrating related terms • Queries in one language meant for pages in another language would undergo simple translation – Queries were limited to keywords so context, generally, would not be required for translation – Translation was performed by using two English-Arabic dictionaries – This is a rudimentary form of machine translation, but it was felt sufficient for keyword querying
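The concept-space step (pairing keywords that co-occur on the same page and placing the pairs into a thesaurus of related terms) can be sketched as below. This is an assumption-laden illustration: the function names, the sample pages and the `min_count` cut-off are hypothetical, and the real concept space program likely uses weighted co-occurrence statistics rather than raw counts.

```python
from collections import Counter
from itertools import combinations


def cooccurring_pairs(pages):
    """Count keyword pairs that appear together on the same page."""
    counts = Counter()
    for keywords in pages:
        # sorted(set(...)) gives each unordered pair one canonical form per page
        for pair in combinations(sorted(set(keywords)), 2):
            counts[pair] += 1
    return counts


def build_thesaurus(counts, min_count=2):
    """Map each term to the terms it co-occurs with at least `min_count` times."""
    thesaurus = {}
    for (a, b), n in counts.items():
        if n >= min_count:
            thesaurus.setdefault(a, set()).add(b)
            thesaurus.setdefault(b, set()).add(a)
    return thesaurus


# Hypothetical noun phrases extracted from three pages.
pages = [
    ["training", "camp", "recruitment"],
    ["training", "camp"],
    ["recruitment", "forum"],
]
counts = cooccurring_pairs(pages)
thesaurus = build_thesaurus(counts)
```

With these sample pages only "camp" and "training" co-occur often enough to be linked in the thesaurus.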

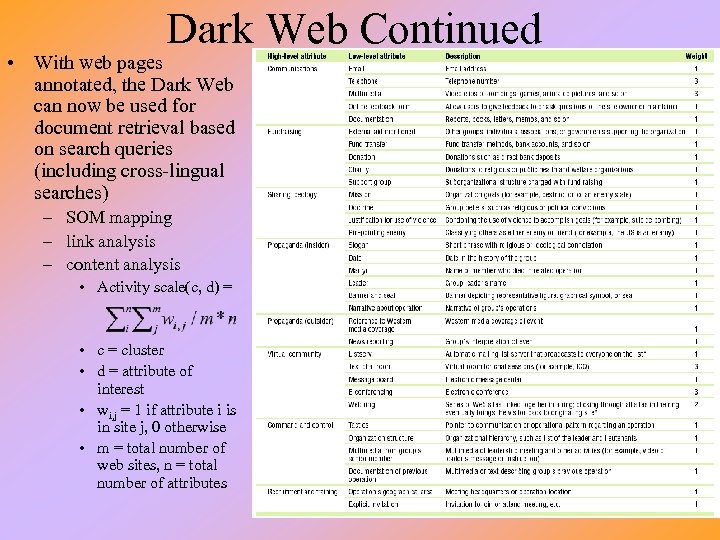

Dark Web Continued • With web pages annotated, the Dark Web can now be used for document retrieval based on search queries (including cross-lingual searches) – SOM mapping – link analysis – content analysis • Activity scale(c, d) = $\frac{\sum_{i=1}^{n} \sum_{j=1}^{m} w_{i,j}}{m \times n}$ • c = cluster • d = attribute of interest • $w_{i,j}$ = 1 if attribute i is in site j, 0 otherwise • m = total number of web sites, n = total number of attributes
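A small sketch of how an activity scale could be computed from the symbols defined on this slide. It assumes the measure is the fraction of (attribute, site) pairs for which the indicator w is 1, that is, the sum of all w values divided by m × n; that normalization is an assumption consistent with the listed symbols, not a confirmed transcription. The function name and the sample matrix are hypothetical.

```python
def activity_scale(presence):
    """Fraction of (attribute, site) pairs that are present.

    presence[i][j] is 1 if attribute i appears on site j of the cluster, else 0.
    """
    n = len(presence)      # total number of attributes
    m = len(presence[0])   # total number of web sites in the cluster
    total = sum(sum(row) for row in presence)
    return total / (m * n)


# Hypothetical cluster: 3 attributes (rows) checked on 4 sites (columns).
presence = [
    [1, 0, 1, 1],
    [0, 0, 1, 0],
    [1, 1, 1, 1],
]
score = activity_scale(presence)
```

Under this assumption the score ranges from 0 (no attribute on any site) to 1 (every attribute on every site), which makes clusters of different sizes comparable.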

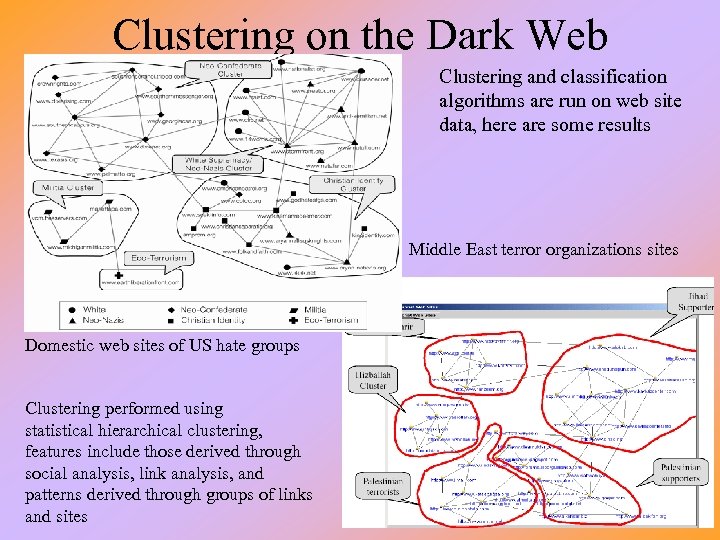

Clustering on the Dark Web • Clustering and classification algorithms are run on web site data; some results were shown for – Middle East terror organization sites – Domestic web sites of US hate groups • Clustering is performed using statistical hierarchical clustering; features include those derived through social analysis, link analysis, and patterns derived through groups of links and sites

Internet Sophistication • Based on – HTML techniques (lists, tables, frames, forms) – Embedded multimedia (background images, background music, streaming A/V) – Advanced HTML (DHTML, SHTML), script functions – Dynamic web programming (CGI, PHP, JSP/ASP) • Content richness is computed based on – Number of hyperlinks – Number of downloadable items – Number of images, video and audio files • Amount of web interactivity – Email feedback, email list, contact address, feedback form – Private messages, online forums/chat rooms – Online shopping, payment, application form • Why is determining Internet sophistication important?

MUC Information Extraction • To cope with so much data (text), some automated processing is required to extract relevant information for indexing/cataloging – DARPA sponsored a series of conferences (competitions) in which participants would compete to perform Information Extraction (IE) on test data • These conferences are referred to as MUC – Message Understanding Conferences – IE tasks: • Named entity recognition – search text for a given list of names, organizations, places of interest, etc • Coreference – identify chains of reference to the same object • Terminology extraction – find relevant terms for a given corpus (domain of interest) • Relationship extraction – identify relations between objects (e.g., “works for”, “located at”, “associate of”, “received from”)

IE Strategies • First, the NLU input needs to be put into a usable form – If speech (oral), it must be transcribed to text – Text might be simplified by removing common words and reducing words to their roots (known as stemming) – NLU processing to tag the parts of speech (noun, verb, adjective, adverb, etc) through statistical or parsing techniques – Use of a word thesaurus to determine word similarities and a concept thesaurus to determine relationships between words (e.g., WordNet might be applied) • Traditional data mining (e.g., clustering) • Support vector machines – These are statistical learning approaches which derive a maximum-margin hyperplane to separate data in a class from data not in a class (similar to a perceptron) • Semantic translation – using a domain model (possibly an ontology) to map concepts from one representation (e.g., the input) to another (e.g., target concepts) • Template filling

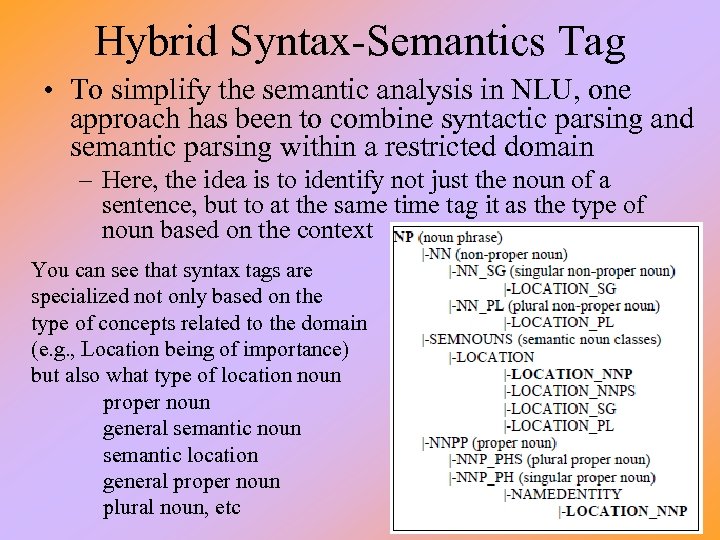

Hybrid Syntax-Semantics Tag • To simplify the semantic analysis in NLU, one approach has been to combine syntactic parsing and semantic parsing within a restricted domain – Here, the idea is to identify not just the noun of a sentence but, at the same time, to tag it with the type of noun based on the context • You can see that syntax tags are specialized based not only on the type of concepts related to the domain (e.g., Location being of importance) but also on what type of location • noun proper noun general semantic noun semantic location general proper noun plural noun, etc

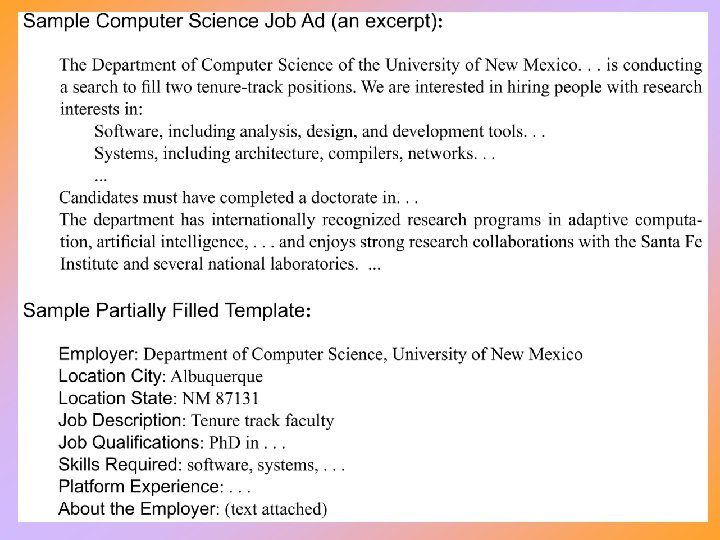

Template Based Information Extraction • One IE approach is to provide templates of events of interest and then extract information to fill in the template – Templates may also be of entities (people, organizations) – In the example on the next slide • a web page has been identified as a job ad • the job ad template is brought up and information is filled in by identifying such target information as “employer”, “location city”, “skills required”, etc – Identifying the right text for extraction is based on • keyword matching using the tags provided by syntactic and semantic parsing • statistical analysis • concept-specific (fill) rules which are provided for the type of slot that is being filled in – for instance, the verb “hire” will have an agent (contact person or employer) and object (hiree)
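The job-ad example can be illustrated with a toy template filler in which each slot has its own fill rule. Everything here is hypothetical: the slot names follow the slide, but the regular expressions, the `fill_template` helper and the sample ad are invented for illustration, and a real system would rely on parser tags and statistical evidence rather than plain patterns.

```python
import re

# Hypothetical fill rules: one regular expression per slot of a job-ad template.
FILL_RULES = {
    # the agent of "hire": capitalized words directly before "is hiring"
    "employer": re.compile(r"(?P<value>[A-Z][\w&]*(?: [A-Z][\w&]*)*) is hiring"),
    # a capitalized place name after the preposition "in"
    "location_city": re.compile(r"\bin (?P<value>[A-Z][a-z]+(?: [A-Z][a-z]+)*)"),
    # everything up to the end of the sentence after "experience with"
    "skills_required": re.compile(r"experience with (?P<value>[^.]+)"),
}


def fill_template(text, rules=FILL_RULES):
    """Return a dict with one entry per slot; unfilled slots are None."""
    template = {}
    for slot, pattern in rules.items():
        match = pattern.search(text)
        template[slot] = match.group("value").strip() if match else None
    return template


ad = (
    "Acme Robotics is hiring a software engineer in Tucson. "
    "Candidates need experience with Python and SQL."
)
filled = fill_template(ad)
```

Keeping the rules in a table separate from the filling loop mirrors the idea of concept-specific rules attached to each slot type.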

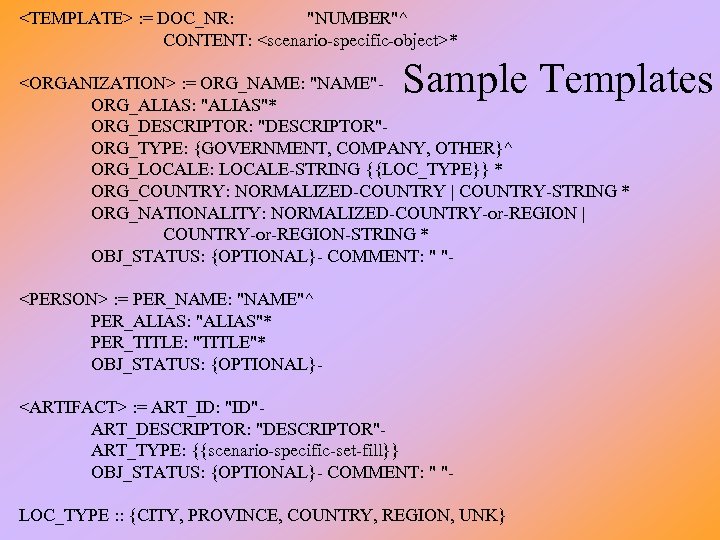

Sample Templates

    <TEMPLATE> :=
        DOC_NR: "NUMBER" ^
        CONTENT: <scenario-specific-object> *

    <ORGANIZATION> :=
        ORG_NAME: "NAME"
        ORG_ALIAS: "ALIAS" *
        ORG_DESCRIPTOR: "DESCRIPTOR"
        ORG_TYPE: {GOVERNMENT, COMPANY, OTHER} ^
        ORG_LOCALE: LOCALE-STRING {{LOC_TYPE}} *
        ORG_COUNTRY: NORMALIZED-COUNTRY | COUNTRY-STRING *
        ORG_NATIONALITY: NORMALIZED-COUNTRY-or-REGION | COUNTRY-or-REGION-STRING *
        OBJ_STATUS: {OPTIONAL} -
        COMMENT: " "

    <PERSON> :=
        PER_NAME: "NAME" ^
        PER_ALIAS: "ALIAS" *
        PER_TITLE: "TITLE" *
        OBJ_STATUS: {OPTIONAL}

    <ARTIFACT> :=
        ART_ID: "ID"
        ART_DESCRIPTOR: "DESCRIPTOR"
        ART_TYPE: {{scenario-specific-set-fill}}
        OBJ_STATUS: {OPTIONAL} -
        COMMENT: " "

    LOC_TYPE :: {CITY, PROVINCE, COUNTRY, REGION, UNK}

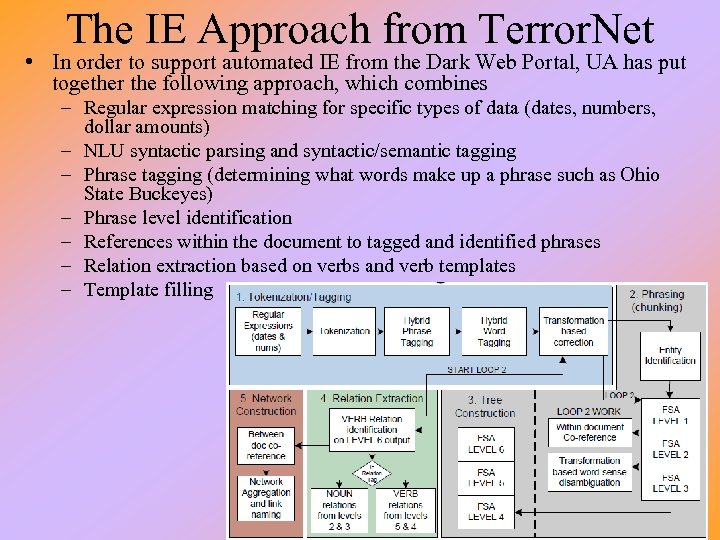

The IE Approach from TerrorNet • In order to support automated IE from the Dark Web Portal, UA has put together the following approach, which combines – Regular expression matching for specific types of data (dates, numbers, dollar amounts) – NLU syntactic parsing and syntactic/semantic tagging – Phrase tagging (determining what words make up a phrase such as Ohio State Buckeyes) – Phrase level identification – References within the document to tagged and identified phrases – Relation extraction based on verbs and verb templates – Template filling
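The first step of this pipeline, regular expression matching for dates, dollar amounts and numbers, might look like the sketch below. The patterns are deliberately simple and hypothetical (for example, only `M/D/YYYY` dates are recognized); they are not the expressions used by the UA system.

```python
import re

# Hypothetical patterns, ordered from most specific to most general.
PATTERNS = {
    "date": re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b"),
    "dollar_amount": re.compile(r"\$\d{1,3}(?:,\d{3})*(?:\.\d{2})?"),
    "number": re.compile(r"\b\d+\b"),
}


def tag_entities(text):
    """Return (label, matched_text) pairs found in `text`."""
    found = []
    remaining = text
    for label in ("date", "dollar_amount", "number"):
        pattern = PATTERNS[label]
        for match in pattern.finditer(remaining):
            found.append((label, match.group()))
        # Blank out matched spans so the more general patterns skip them.
        remaining = pattern.sub(lambda m: " " * len(m.group()), remaining)
    return found


entities = tag_entities("On 3/14/2007 the group wired $12,500.00 to 3 accounts.")
```

Masking each match before trying the next pattern keeps the digits inside a date or dollar amount from also being reported as plain numbers.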

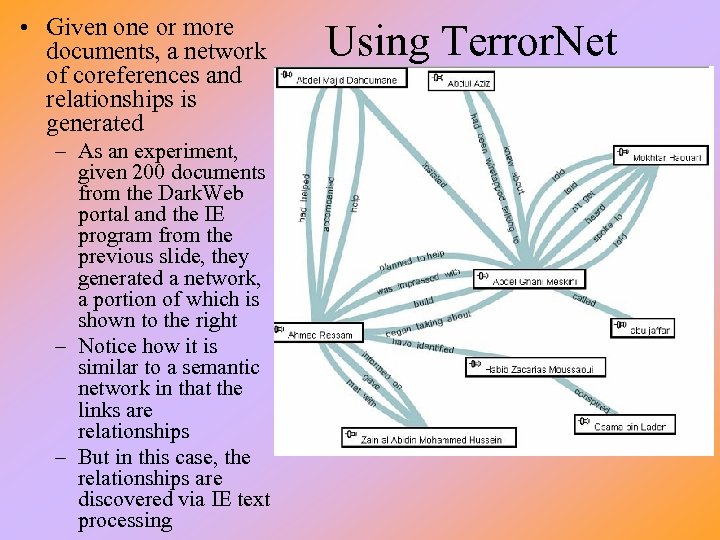

Using TerrorNet • Given one or more documents, a network of coreferences and relationships is generated – As an experiment, given 200 documents from the DarkWeb portal and the IE program from the previous slide, they generated a network, a portion of which is shown to the right – Notice how it is similar to a semantic network in that the links are relationships – But in this case, the relationships are discovered via IE text processing

Authorship Identification • Another pursuit is to identify the author of Internet web forum messages – In Arabic or English • Attributes: – Lexical – word (or character for Arabic) choice – Syntactic – choice of grammar – Structural – organization and layout of the message – Content-specific – topic/domain • Approaches were to perform classification using C4.5 and SVMs – English identification uses 301 features extracted from a test set (87 lexical, 158 syntactic, 45 structural and 11 content-specific) – Arabic identification uses 418 features extracted from a test set (79 lexical, 262 syntactic, 62 structural and 15 content-specific) – A web spider was used to collect the test set of documents from various Internet forums
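To make the attribute categories concrete, here is a minimal sketch that extracts a handful of lexical, syntactic and structural features from a message. The five features and the `stylometric_features` name are hypothetical stand-ins; the study uses hundreds of features per language, and the resulting vectors would then be fed to a classifier such as C4.5 or an SVM.

```python
import string


def stylometric_features(message):
    """Extract a few illustrative writing-style features from one message."""
    words = message.split()
    lines = message.splitlines()
    n_chars = len(message)
    stripped = [w.strip(string.punctuation) for w in words]
    return {
        # lexical: word choice
        "avg_word_length": sum(len(w) for w in stripped) / len(words) if words else 0.0,
        "vocabulary_richness": len({w.lower() for w in stripped}) / len(words) if words else 0.0,
        # syntactic: punctuation habits as a crude proxy for grammar choices
        "punctuation_ratio": sum(ch in string.punctuation for ch in message) / n_chars if n_chars else 0.0,
        # structural: layout of the message
        "line_count": len(lines),
        "has_greeting": int(bool(lines) and lines[0].lower().startswith(("hi", "hello", "dear"))),
    }


sample = "Hello all,\nThe meeting is at noon. The meeting is short."
features = stylometric_features(sample)
```

Content-specific features (topic keywords) are omitted here because they depend on the domain vocabulary of the forum being studied.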

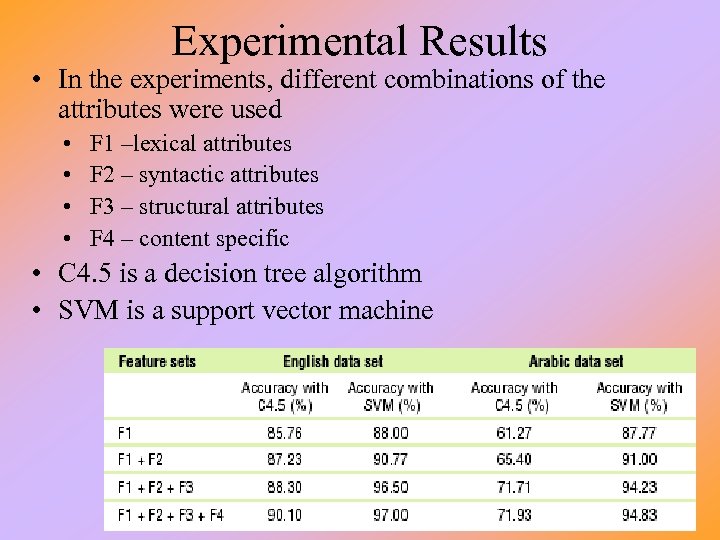

Experimental Results • In the experiments, different combinations of the attributes were used • F1 – lexical attributes • F2 – syntactic attributes • F3 – structural attributes • F4 – content-specific attributes • C4.5 is a decision tree algorithm • SVM is a support vector machine

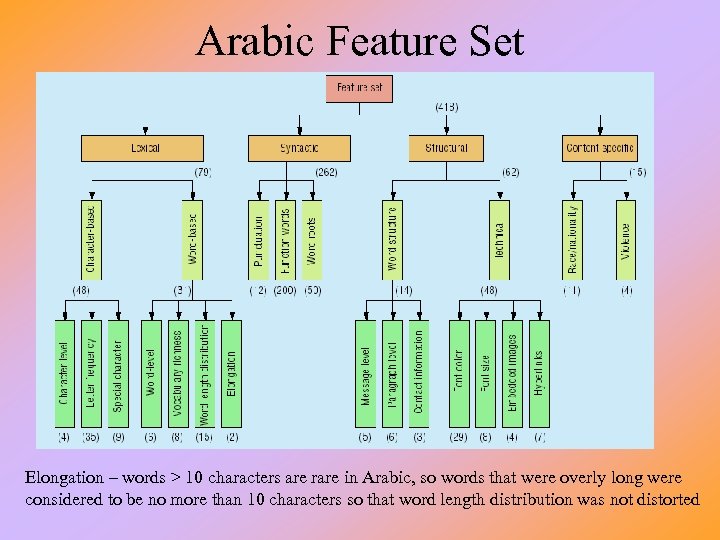

Arabic Feature Set Elongation – words > 10 characters are rare in Arabic, so words that were overly long were considered to be no more than 10 characters so that word length distribution was not distorted

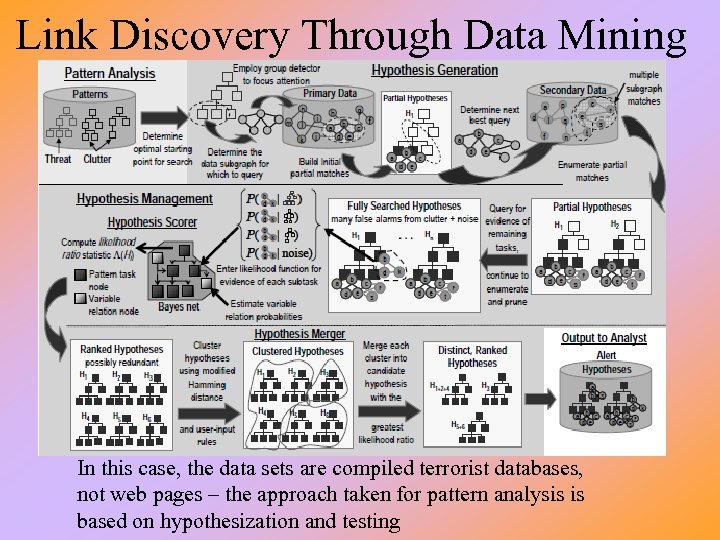

Link Discovery Through Data Mining • In this case, the data sets are compiled terrorist databases, not web pages – the approach taken for pattern analysis is based on hypothesis generation and testing

Details • Start with databases that contain – Transactions of known associations – One or more patterns of terrorist activities – Knowledge about terrorist groups/members – Patterns of activities, probabilistic information on transactions • The process – Partial pattern matching to generate hypotheses of activities (e.g., development of a chemical weapon, planned attack on a location, etc) along with likely participants – Hypotheses are used to generate queries to the DBs to “grow the hypotheses” and find additional support – Hypotheses are evaluated using a Bayesian network of threat activities; the network is constructed from the hypotheses found and templates – Highly ranked and related hypotheses are merged (relations based on Hamming distances and user-input rules) and the most compelling joint hypothesis is output
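The final merging step can be sketched by treating each hypothesis as a binary evidence vector and grouping hypotheses whose vectors lie within a small Hamming distance of each other. The representation, the `max_distance` threshold and the greedy grouping are all assumptions made for illustration; the slide does not say how the actual system encodes hypotheses or combines the user-input rules.

```python
def hamming(a, b):
    """Number of positions at which two equal-length vectors differ."""
    if len(a) != len(b):
        raise ValueError("vectors must have equal length")
    return sum(x != y for x, y in zip(a, b))


def merge_related(hypotheses, max_distance=1):
    """Greedily group hypotheses by similarity to the best-scored member of each group."""
    ranked = sorted(hypotheses, key=lambda h: h["score"], reverse=True)
    groups = []
    for hyp in ranked:
        for group in groups:
            if hamming(hyp["evidence"], group[0]["evidence"]) <= max_distance:
                group.append(hyp)
                break
        else:
            # no existing group is close enough, so start a new one
            groups.append([hyp])
    return groups


# Hypothetical hypotheses: each evidence bit marks whether a pattern element was matched.
hypotheses = [
    {"name": "H1", "score": 0.9, "evidence": (1, 1, 0, 1)},
    {"name": "H2", "score": 0.8, "evidence": (1, 1, 0, 0)},
    {"name": "H3", "score": 0.4, "evidence": (0, 0, 1, 0)},
]
groups = merge_related(hypotheses)
group_names = [[h["name"] for h in g] for g in groups]
```

Because the hypotheses are ranked first, the first group returned corresponds to the most highly scored joint hypothesis.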

Beyond Link Analysis: CADRE • Continuous Analysis and Discovery from Relational Evidence – Threat patterns represented as hierarchically nested templated events (like scripts) – Given DBs that include threat activities, perform data mining and abductive inference to generate plausible hypotheses and evaluate them • Specifically, the process is one of – Triggering rules search the DBs for relatively rare events, any given rule might trigger one or more hypotheses – Hypotheses are templates and additional searching is performed to fill in slots of the template(s), this is known as hypothesis refinement • Link analysis is used to aid in the template filling similar to previous examples, but the process is more involved and utilizes KBS/rule based approaches as well

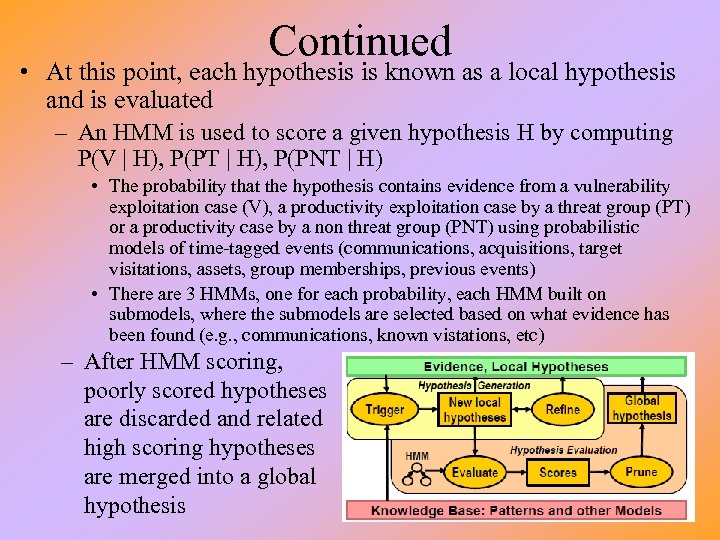

Continued • At this point, each hypothesis is known as a local hypothesis and is evaluated – An HMM is used to score a given hypothesis H by computing P(V | H), P(PT | H), P(PNT | H) • The probability that the hypothesis contains evidence from a vulnerability exploitation case (V), a productivity exploitation case by a threat group (PT) or a productivity case by a non-threat group (PNT) using probabilistic models of time-tagged events (communications, acquisitions, target visitations, assets, group memberships, previous events) • There are 3 HMMs, one for each probability, each HMM built on submodels, where the submodels are selected based on what evidence has been found (e.g., communications, known visitations, etc) – After HMM scoring, poorly scored hypotheses are discarded and related high-scoring hypotheses are merged into a global hypothesis
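As a rough illustration of how an HMM assigns a score to a time-ordered sequence of events, the sketch below implements the standard forward algorithm for a discrete HMM. The two states, the two event types and all probabilities are invented for the example; the slide does not describe CADRE's actual model structure, and in a system with three HMMs a likelihood like this would be computed once per model and the results compared.

```python
def forward_likelihood(obs, start, trans, emit):
    """P(obs | model) for a discrete HMM, computed with the forward algorithm."""
    states = list(start)
    # alpha[s] = probability of the observations so far, ending in state s
    alpha = {s: start[s] * emit[s][obs[0]] for s in states}
    for o in obs[1:]:
        alpha = {
            s: emit[s][o] * sum(alpha[p] * trans[p][s] for p in states)
            for s in states
        }
    return sum(alpha.values())


# Hypothetical two-state model: a group first plans, then acts.
start = {"plan": 1.0, "act": 0.0}
trans = {
    "plan": {"plan": 0.5, "act": 0.5},
    "act": {"plan": 0.0, "act": 1.0},
}
emit = {
    "plan": {"comm": 0.8, "visit": 0.2},
    "act": {"comm": 0.1, "visit": 0.9},
}

# Time-tagged events reduced to their types, in chronological order.
likelihood = forward_likelihood(["comm", "visit"], start, trans, emit)
```

For long event sequences a practical implementation would work with log probabilities or rescale `alpha` at each step to avoid numerical underflow.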

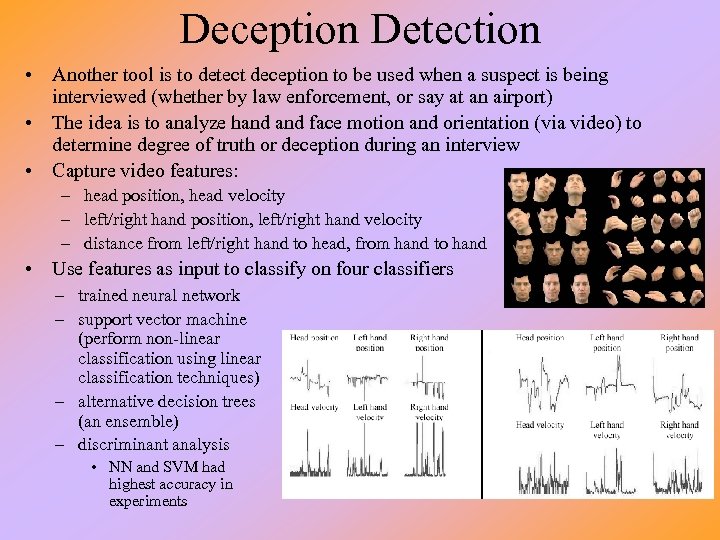

Deception Detection • Another tool is deception detection, to be used when a suspect is being interviewed (whether by law enforcement or, say, at an airport) • The idea is to analyze hand and face motion and orientation (via video) to determine the degree of truth or deception during an interview • Capture video features: – head position, head velocity – left/right hand position, left/right hand velocity – distance from left/right hand to head, from hand to hand • Use the features as input to four classifiers – trained neural network – support vector machine (performs non-linear classification using linear classification techniques) – alternating decision trees (an ensemble) – discriminant analysis • NN and SVM had the highest accuracy in experiments
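The video features listed on this slide can be derived from tracked positions in two consecutive frames, as in the sketch below. The input format (a dict of 2D pixel coordinates per body part), the function name and the use of speed (velocity magnitude) for "velocity" are assumptions for illustration; the tracking itself is out of scope here.

```python
import math


def motion_features(prev, curr, dt):
    """Build a feature dict from two frames.

    prev/curr map 'head', 'left_hand', 'right_hand' to (x, y) positions;
    dt is the time between the two frames in seconds.
    """
    def dist(p, q):
        return math.hypot(p[0] - q[0], p[1] - q[1])

    features = {}
    for part in ("head", "left_hand", "right_hand"):
        features[f"{part}_position"] = curr[part]
        # speed: distance moved between frames divided by elapsed time
        features[f"{part}_velocity"] = dist(prev[part], curr[part]) / dt
    features["left_hand_to_head"] = dist(curr["left_hand"], curr["head"])
    features["right_hand_to_head"] = dist(curr["right_hand"], curr["head"])
    features["hand_to_hand"] = dist(curr["left_hand"], curr["right_hand"])
    return features


# Hypothetical tracked positions in two frames half a second apart.
prev = {"head": (0, 0), "left_hand": (0, 0), "right_hand": (10, 0)}
curr = {"head": (3, 4), "left_hand": (0, 0), "right_hand": (6, 0)}
feats = motion_features(prev, curr, dt=0.5)
```

One such feature vector per frame (or per time window) would then be passed to each of the classifiers being compared.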

Recommender Systems for Intelligence • Another idea to support intelligence analysis for terrorism is the use of recommender systems to help point analysts toward useful documents – An analyst develops a user profile • types of information needed • specific key words of use • data sets to search through • alert condition models • previously used documents (that is, documents found to be useful for the given case being studied) – The profile could also include items found not to be useful • The analyst would probably have multiple profiles, one for each case being worked on – Multiple profiles could be linked through relationships in the cases

Types of Recommenders • Collaborative recommenders – Make suggestions based on preferences of a set of users – Given the current profile, find similar profiles • Create a list of documents that the other users have used • Rank the documents (if possible) and return the ranked list or the top percentage of the ranked list • Content-based recommenders – Each item (document) is indexed by its content (usually keywords) – Based on the current task at hand (the content of documents retrieved so far), create a list of documents that similarly match, rank them and return the ranked list • Hybrid recommenders – Use both content-based and collaborative, merging the results, possibly using a weighted average or a voting scheme to rank the list of documents returned
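The three recommender types can be sketched together: a collaborative score from similar profiles, a content-based score from keyword overlap, and a hybrid that merges them with a weighted average. Jaccard similarity, the 0.5 weight and all document and keyword names are assumptions chosen to keep the example small; they are not taken from any particular system.

```python
def jaccard(a, b):
    """Overlap between two collections, from 0.0 (disjoint) to 1.0 (identical)."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b) if a | b else 0.0


def collaborative_scores(profile_docs, other_profiles):
    """Score documents used by other analysts, weighted by profile similarity."""
    scores = {}
    for docs in other_profiles:
        sim = jaccard(profile_docs, docs)
        for doc in set(docs) - set(profile_docs):
            scores[doc] = scores.get(doc, 0.0) + sim
    return scores


def content_scores(task_keywords, doc_index, exclude=()):
    """Score each indexed document by keyword overlap with the current task."""
    return {
        doc: jaccard(task_keywords, keywords)
        for doc, keywords in doc_index.items()
        if doc not in exclude
    }


def hybrid_rank(collab, content, weight=0.5):
    """Merge both score sets with a weighted average and return a ranked list."""
    docs = set(collab) | set(content)
    combined = {
        d: weight * collab.get(d, 0.0) + (1 - weight) * content.get(d, 0.0)
        for d in docs
    }
    return sorted(combined, key=lambda d: (-combined[d], d))


# Hypothetical data: the analyst has used d1 and d2 and is working on a backpack case.
profile_docs = {"d1", "d2"}
other_profiles = [{"d1", "d2", "d3"}, {"d4"}]
doc_index = {
    "d3": {"backpack", "explosive"},
    "d4": {"finance"},
    "d5": {"backpack", "transit", "explosive"},
}
collab = collaborative_scores(profile_docs, other_profiles)
content = content_scores({"backpack", "explosive"}, doc_index, exclude=profile_docs)
ranking = hybrid_rank(collab, content)
```

A voting scheme could replace the weighted average in `hybrid_rank` without changing the other two functions.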

Learning Component • To be of most use, the recommender must adapt as the user uses the system – Explicit feedback is provided when the user indicates which returned documents are of interest and which are not • In the content-based system, the list of keywords can be refined by adding words found in documents of interest and removing words that were found in documents not of interest but not in the documents of interest • In the collaborative system, document selection can be refined by increasing the chance of selecting a document that was of interest and lessening the chance of selecting a document not of interest – In addition, if possible, modify the user’s current profile – Implicit feedback can only occur by continuing to watch what the user has requested in an attempt to modify what the system thinks the user is looking for
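The keyword refinement rule for the content-based case (add words from documents of interest, drop words that occur only in documents not of interest) is small enough to write out directly. The function name and the sample word sets are hypothetical, and each document is reduced to a plain set of words.

```python
def refine_keywords(keywords, interesting_docs, uninteresting_docs):
    """Update a keyword set from explicit feedback.

    Each document is given as a set of words.
    """
    liked = set().union(*interesting_docs) if interesting_docs else set()
    disliked = set().union(*uninteresting_docs) if uninteresting_docs else set()
    # Words seen only in uninteresting documents are removed; words that also
    # occur in an interesting document are kept.
    return (set(keywords) | liked) - (disliked - liked)


refined = refine_keywords(
    {"backpack", "bomb"},
    interesting_docs=[{"backpack", "subway"}],
    uninteresting_docs=[{"bomb", "hiking", "backpack"}],
)
```

Here "subway" is added, "bomb" is dropped because it appeared only in the rejected document, and "backpack" survives because it also appeared in a document of interest.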

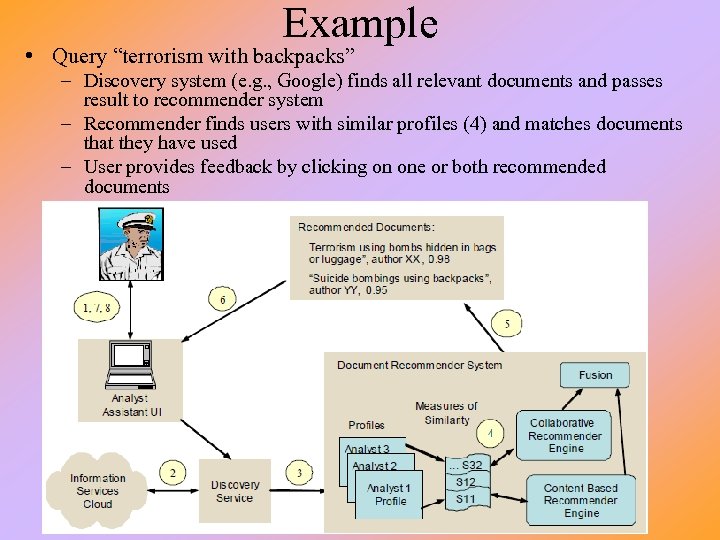

Example • Query “terrorism with backpacks” – Discovery system (e. g. , Google) finds all relevant documents and passes result to recommender system – Recommender finds users with similar profiles (4) and matches documents that they have used – User provides feedback by clicking on one or both recommended documents

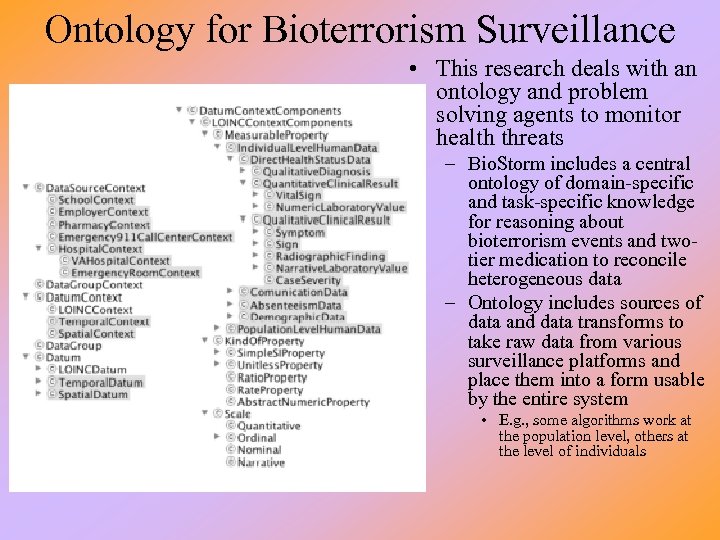

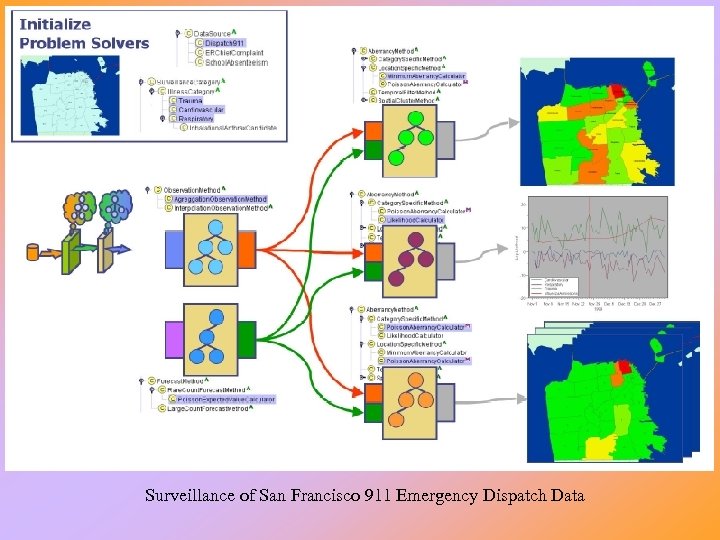

Ontology for Bioterrorism Surveillance • This research deals with an ontology and problem solving agents to monitor health threats – BioStorm includes a central ontology of domain-specific and task-specific knowledge for reasoning about bioterrorism events and two-tier mediation to reconcile heterogeneous data – The ontology includes sources of data and data transforms to take raw data from various surveillance platforms and place them into a form usable by the entire system • E.g., some algorithms work at the population level, others at the level of individuals

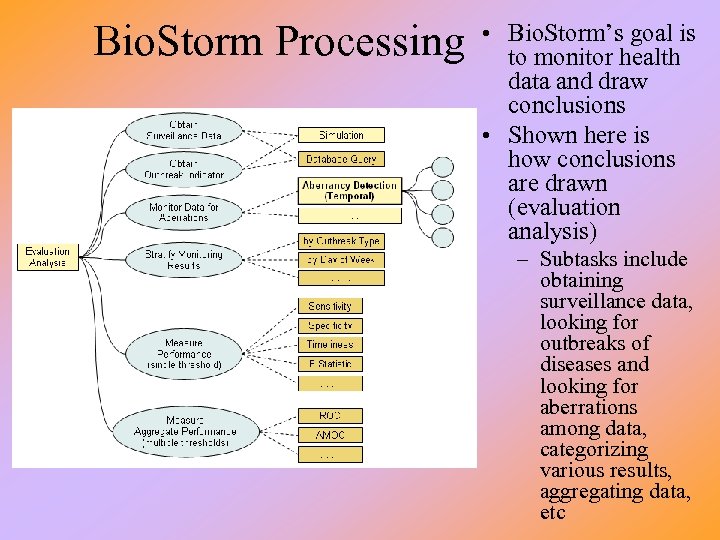

BioStorm Processing • BioStorm’s goal is to monitor health data and draw conclusions • Shown here is how conclusions are drawn (evaluation analysis) – Subtasks include obtaining surveillance data, looking for outbreaks of diseases and looking for aberrations among data, categorizing various results, aggregating data, etc

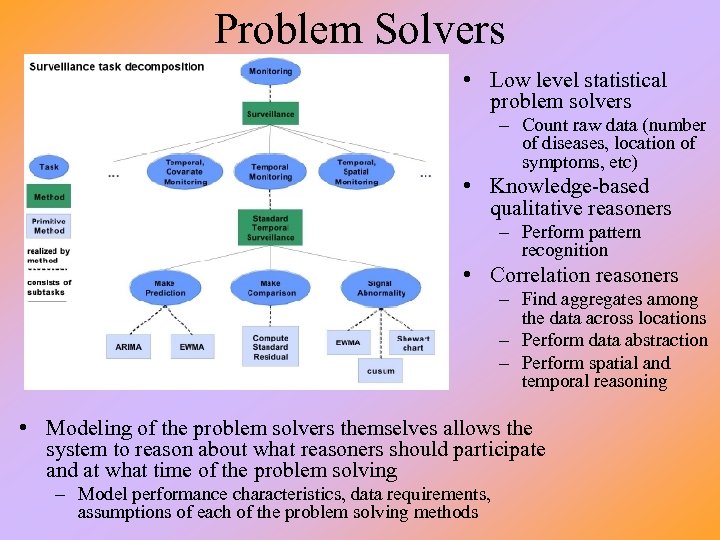

Problem Solvers • Low level statistical problem solvers – Count raw data (number of diseases, location of symptoms, etc) • Knowledge-based qualitative reasoners – Perform pattern recognition • Correlation reasoners – Find aggregates among the data across locations – Perform data abstraction – Perform spatial and temporal reasoning • Modeling of the problem solvers themselves allows the system to reason about what reasoners should participate and at what time of the problem solving – Model performance characteristics, data requirements, assumptions of each of the problem solving methods

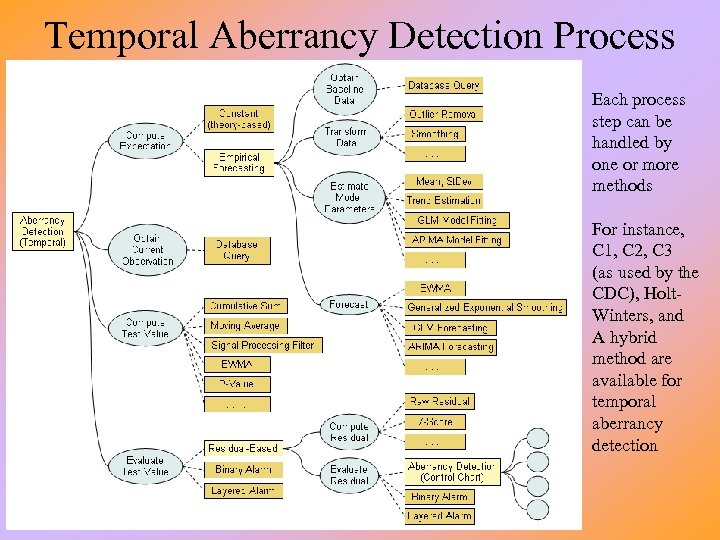

Temporal Aberrancy Detection Process • Each process step can be handled by one or more methods • For instance, C1, C2, C3 (as used by the CDC), Holt-Winters, and a hybrid method are available for temporal aberrancy detection
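As an example of one such method, the sketch below follows the usual description of the C1 detector: today's count is compared with the mean and standard deviation of the preceding 7 days, and an alert is raised when it lies more than 3 standard deviations above the mean. The baseline length, the threshold and the handling of a flat baseline are stated here as assumptions, and the daily counts are made up.

```python
from statistics import mean, stdev


def c1_alerts(counts, baseline=7, threshold=3.0):
    """Return the indices of days whose count is aberrantly high.

    Each day is compared with the `baseline` days immediately before it.
    """
    alerts = []
    for t in range(baseline, len(counts)):
        window = counts[t - baseline:t]
        mu, sigma = mean(window), stdev(window)
        # A perfectly flat baseline has sigma == 0; this sketch skips such days
        # rather than dividing by zero.
        if sigma > 0 and (counts[t] - mu) / sigma > threshold:
            alerts.append(t)
    return alerts


# Hypothetical daily counts of a respiratory complaint; day 7 is a spike.
daily_counts = [10, 12, 11, 9, 10, 11, 12, 30, 11]
alert_days = c1_alerts(daily_counts)
```

Note that the spike on day 7 inflates the baseline for day 8, which is one reason variants with a lag between the baseline and the current day exist.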

Surveillance of San Francisco 911 Emergency Dispatch Data

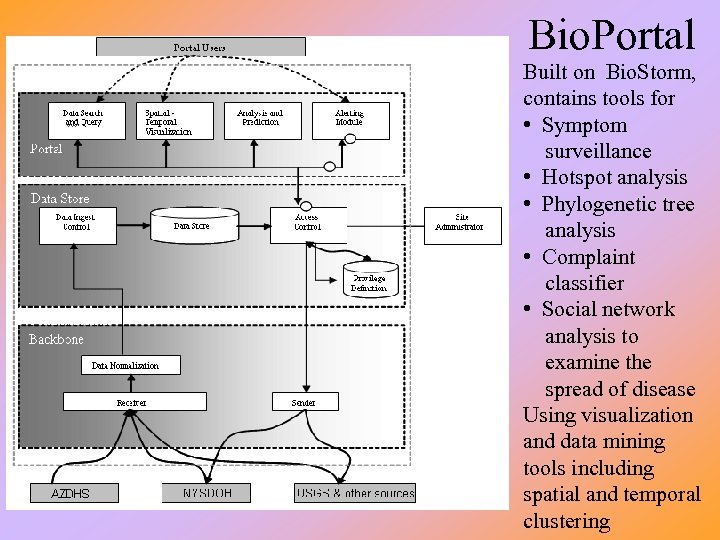

BioPortal • Built on BioStorm, contains tools for • Symptom surveillance • Hotspot analysis • Phylogenetic tree analysis • Complaint classifier • Social network analysis to examine the spread of disease • Uses visualization and data mining tools including spatial and temporal clustering

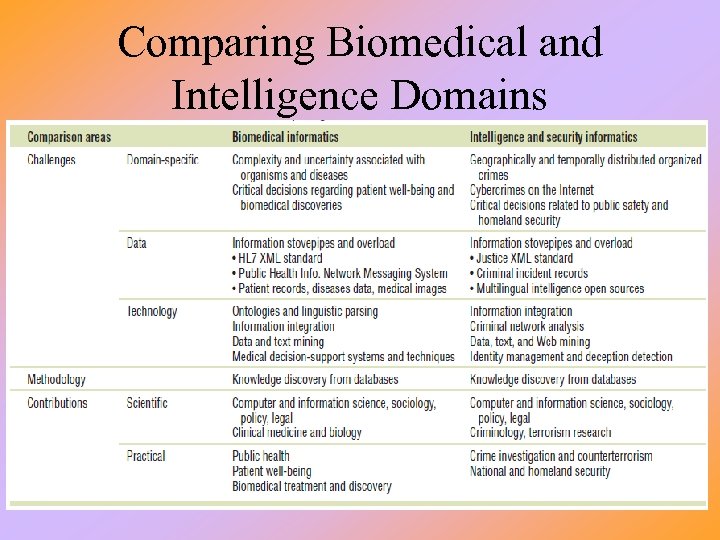

Comparing Biomedical and Intelligence Domains

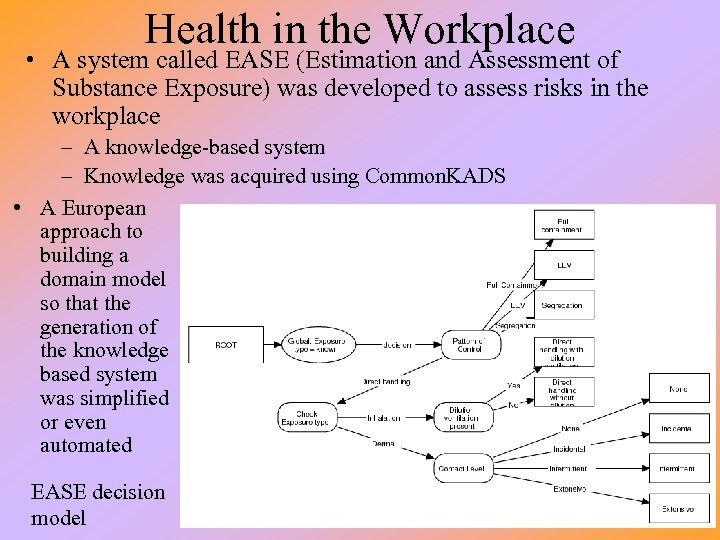

Health in the Workplace • A system called EASE (Estimation and Assessment of Substance Exposure) was developed to assess risks in the workplace – A knowledge-based system – Knowledge was acquired using CommonKADS • A European approach to building a domain model so that the generation of the knowledge-based system was simplified or even automated • EASE decision model (figure)

EASE Knowledge • There were 83 concepts built into EASE – Chemical compounds – Exposure types – Patterns of control (ways to reduce exposure) – Patterns of use (how substances can be used, the processes that might use or create substances) – Physical states of substances and substance properties – Vapor pressure values • The model includes – Which physical states may cause the different types of exposures – Hierarchy of substances and taxonomy of exposure types – I/O relationships between tasks and vapor pressures – Incompatibility knowledge (between patterns of use and patterns of control) • Inference process – Combines rule-based and shallow (associational) reasoning, both data-driven and goal-driven – OOP to represent substance hierarchy and processes – Implemented in CLIPS

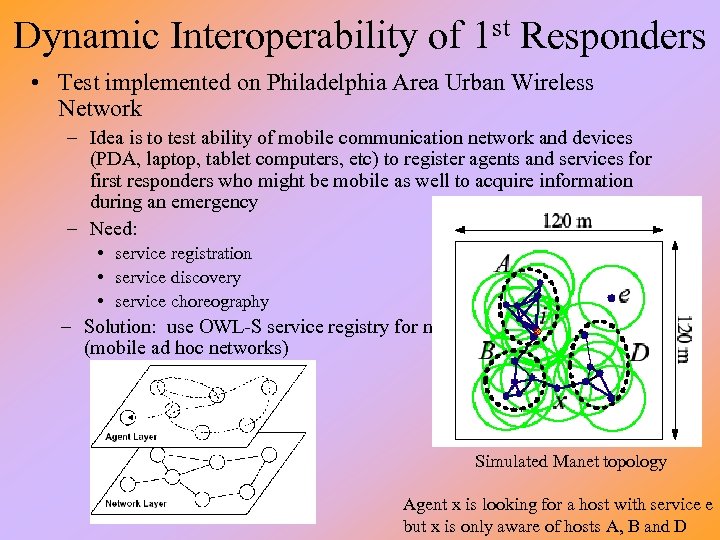

Dynamic Interoperability of 1st Responders • Test implemented on the Philadelphia Area Urban Wireless Network – The idea is to test the ability of a mobile communication network and devices (PDA, laptop, tablet computers, etc) to register agents and services for first responders, who might be mobile as well, to acquire information during an emergency – Need: • service registration • service discovery • service choreography – Solution: use an OWL-S service registry for MANETs (mobile ad hoc networks) • Simulated MANET topology: agent x is looking for a host with service e, but x is only aware of hosts A, B and D

Experiment • Researchers ran two experiments, first on a simulation and second on a small portion of a network in Philly using mobile devices (in about a 2 block area) – agents had various options of when to consult host registries and how to migrate to another host (as packets or bundles) • Findings: – cross-layered design where agents reason about network and service dynamics worked well – using static lists, performing random walks, using inertia (following the path of the last known location of a service) all had problems leading to poor performance from either too many “hops” or not succeeding in locating the desired service – p-early binding – agent would consult local host’s registry to identify nearest host that contained needed service and would migrate to that host as a packet – b-early binding – agent would consult local host’s registry to identify nearest host that contained needed service and would migrate to that host as a bundle – late binding – agent would consult a local registry at each step as it migrated, always selecting the nearest host with the available service

Distributed Decision Support Approach • The following was proposed for coordinating response to a bio-chemical attack or situation – Sensible agent architecture • Perspective modeler – explicit models of the given agent’s world viewpoint and other agents – Behavioral model – current state and possible transitions to other states – Declarative model – represented facts (what is known by the agent) – Intentional model – goal structures • Action planner – interprets domain-specific goals, contains plans to achieve these goals, and executes plan steps • Autonomy reasoner – determines an appropriate decision making framework for each goal, and applies constraints based on whether or how much it is collaborating with other agents • Conflict resolution advisor – identifies possible conflicts between agents and attempts to resolve them through specialized strategies including voting, negotiation and self-modification

Example • Consider the following timeline – Day 0: a large public event occurs, a contagious respiratory disease is released in the crowd – Day 1: disease incubates with no visible indicators (symptoms) – Day 2: many students are absent from school, EMS dispatches for respiratory problems are abnormally high – Day 3: pharmacy sales of decongestants are abnormally high • EMS information, school student attendance records, and pharmacy sales information exist in disparate and unrelated databases – A sensible agent able to communicate between the databases can begin to generate a model – The agent must be able to • identify the utility of data from any source (how trustworthy is it) • transform and disambiguate data • handle conflicting reports from different agents • initiate queries for additional data

Example Continued • Our sensible agent will use its perspective modeler to build a perspective of the current problem solving – What agents (agencies) are involved? What knowledge sources are contributing? How trustworthy are these sources? • The action planner will formulate a plan of attack – Perhaps acquiring additional information to test out hypotheses, confirm data by sending queries to other sources • e. g. , several school records show absenteeism of students who attended a special concert, check to see if other schools whose students attended the same concert have similar absenteeism • The autonomy reasoner will become active when there are other agents actively working on the same problem (whether human or software) – The reasoner can propose actions based on deadlines, situation assessment, mandated rules, etc • e. g. , one agent has discovered that this may be a potential pandemic, the autonomy reasoner can be used to determine how this should be handled – bring in more expertise? contact officials?

INCA • An ontology for representing mixed-initiative tasks (planning) – Issues: outstanding problems, plan flaws, opportunities, tasks – Nodes: activities in an emergency response plan (or components of an artifact under development) • May be organized hierarchically – Constraints: temporal or spatial, or relationships between nodes • Node constraints – nodes that must be included in a plan, other node constraints • Critical constraints – ordering and variable constraints • Auxiliary constraints – constraints on auxiliary variables, world-state constraints, resource constraints – Annotations: human-centric information • INCA can be thought of as a representation for partial plan descriptions • Uses of INCA – Automated and mixed-initiative generation/manipulation of plans – Human/system communication about plans – Knowledge acquisition for planning – Reasoning over plans

I-X • Using the INCA ontology, permit shared models for planning and communication – I-X has two cycles • Handle issues • Manage domain constraints – I-X system or agent carries out a process which leads to the production of a synthesized artifact (plan or plan step) – Constraints associated with artifact are propagated • The space for the model being generated is shared as follows – Shared task model (tasks to be accomplished, constraints on the model) – Shared space of options (plan steps) – Shared model of agent capabilities (handlers for issues, capabilities and constraints for managers and handlers) – Shared understanding of authority – Shared product model (using constraints)

I-X/INCA Example: FireGrid • The FireGrid ontology models fire-fighter concepts and situations – State parameters: measurable quantities to describe a situation at a given location • maximum temperature, smoke layer height, etc – Events: instantaneous and singleton occurrences at a given location • collapse, explosion – Hazards: states represented by specific state parameters and occurrences which impinge on safety • defined with a hazard level parameter (green, amber, red) – Space and time • INCA is used to model the ontology and constraints • I-X agents then communicate with each other using the ontology as a shared description of the world and use individual knowledge to generate belief states – This allows an agent to cope with contradictory information coming from multiple sources – FireGrid would use communication to derive an interpretation of the current status of the situation in order to alert on-site responders

Experiment • FireGrid was tested in a controlled experiment – 3-room apartment with 125 sensors rigged for a fire • Sensors measured temperatures, heat flux, gas levels and concentrations, deformation of structural elements • Sensors were read at roughly 3 second intervals and the readings fed to an off-site DB – The model was tested for the flashover event • When the temperature in a small region rises above 500 degrees, all material in the area can ignite simultaneously – FireGrid used a number of different models of the environment to generate interpretations and predictions • The different models included different scenarios – People being trapped and different structural problems • The decision making aspect was whether to send fire-fighters into the building to conduct a search • Experts who monitored the models felt that the experiment was a success – FireGrid made the correct interpretations and made the proper decisions

e-Response • A larger scale system which used I-X/INCA as components – In this case, the system incorporates many technologies and people to provide an emergency response platform – Tools and technologies: • 3Store – centralized knowledge bases based on RDF triples, including OWL ontologies, that can accommodate SPARQL queries • Compendium – concept mapping tool to link various documents/sources together in real time • I-X/INCA to implement issues, nodes, constraints, and annotations for reasoning about disaster plans • Armadillo – real-time construction of a repository of useful available local resources, itself using a number of services including search engines, crawlers, indexers, trained classifiers and NLP • CROSI mapping – performs semantic mapping and reference resolution to support interoperability • Photocopain and ACTive Media – annotation of photographs and other media • OntoCoPI – identifies communities of practice among ontology instances of human specializations to assess availability and usefulness • Commitment Management System – recommends allocations of resources by varying constraints and using a utility function

Example Demonstration • Incident reported: Fire in downtown London – Incident node created by hand (support officer at command center), tagged automatically as from the sending agent – Command center queries the scale of the incident • Fire brigade arrives – Message contains details of the allocation of resources, support officer adds a new hyperlink to the working map of the current situation – Support officer starts Armadillo to look for available local resources • Message from fire brigade on scene – A fire fighter reports using acronyms and is somewhat cryptic, support officer uses CROSI to understand the message, including the terms ACFO and SSU – The message describes a new incident, the deployment of a specialized unit, which is tagged and added to the map • Message from police on scene requests details of burn care units in the area – Message added to map, support officer uses web browser and Armadillo to respond

Continued • Control center message: BBC broadcast – A member of the control center liaison team describes media coverage of the scene – Support officer uses Photocopain to upload and annotate images, which include an image of a fire in a building a quarter mile away – Support officer alerts the fire brigade to the new fire, and the brigade responds that it is sending resources to the new site – New incident is added and the map is modified • Fire brigade requests details of the upper floors of the new building, specifically exit routes – Support officer queries the triplestore for images of the location – Triplestore responds with images of the building, each of which is added as a link to the map • Images include plans and photographs, some of which have metaknowledge and annotations

Concluded • Fire brigade message regarding first site is cryptic – Message includes the term “creeping” – Support officer uses CROSI and the building’s ontology to infer that the statement is about concrete cracking – Control center requires expert advice, OntoCoPI is used to locate experts in the area on the structural integrity of concrete buildings, and the control center contacts the nearest expert by phone, who states that the creep does not constitute an immediate threat and can be ignored for now • Fire brigade reports new fire but requests resources to be allocated – While this is similar to the previous report from the fire brigade about a new fire, here the fire brigade is not able to respond, so central command must examine available resources in the area to determine who can respond

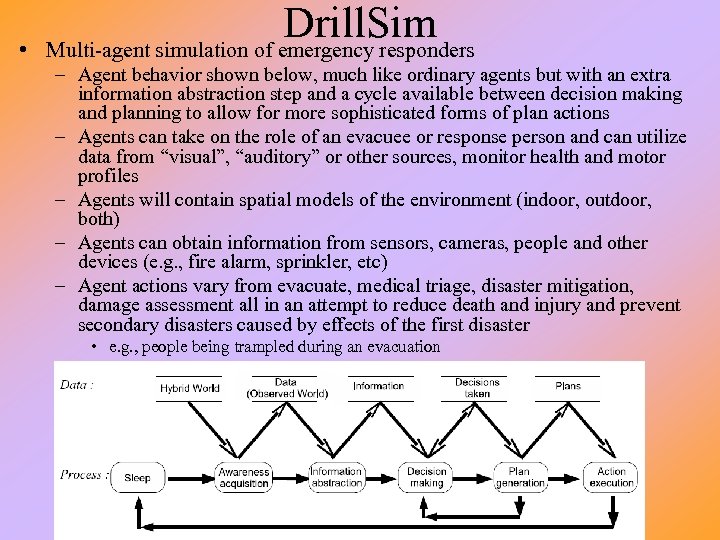

DrillSim • Multi-agent simulation of emergency responders – Agent behavior shown below, much like ordinary agents but with an extra information abstraction step and a cycle between decision making and planning to allow for more sophisticated forms of planned actions – Agents can take on the role of an evacuee or response person, can use data from “visual”, “auditory” or other sources, and can monitor health and motor profiles – Agents contain spatial models of the environment (indoor, outdoor, both) – Agents can obtain information from sensors, cameras, people and other devices (e.g., fire alarm, sprinkler, etc.) – Agent actions range over evacuation, medical triage, disaster mitigation and damage assessment, all in an attempt to reduce death and injury and prevent secondary disasters caused by effects of the first disaster • e.g., people being trampled during an evacuation

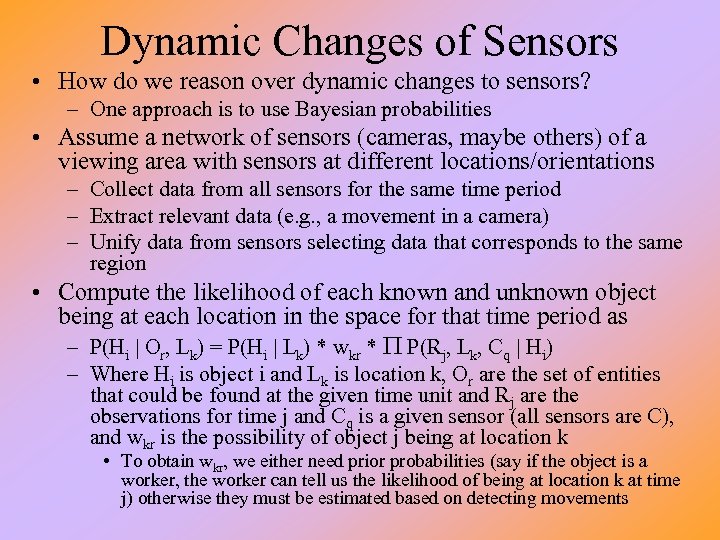

Dynamic Changes of Sensors • How do we reason over dynamic changes to sensors? – One approach is to use Bayesian probabilities • Assume a network of sensors (cameras, maybe others) covering a viewing area, with sensors at different locations/orientations – Collect data from all sensors for the same time period – Extract relevant data (e.g., a movement in a camera) – Unify data from sensors, selecting data that corresponds to the same region • Compute the likelihood of each known and unknown object being at each location in the space for that time period as – $P(H_i \mid O_r, L_k) = P(H_i \mid L_k) \cdot w_{kr} \cdot \prod P(R_j, L_k, C_q \mid H_i)$ – Where $H_i$ is object i and $L_k$ is location k, $O_r$ is the set of entities that could be found at the given time unit, $R_j$ are the observations for time j, $C_q$ is a given sensor (all sensors are C), and $w_{kr}$ is the possibility of object j being at location k • To obtain $w_{kr}$, we either need prior probabilities (say, if the object is a worker, the worker can tell us the likelihood of being at location k at time j) or they must be estimated based on detecting movements
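
The formula above can be sketched numerically as follows. This is a minimal illustration under stated assumptions, not the system's implementation: the product is assumed to run over the sensors, the final normalization over candidate objects is an added step so that the scores sum to 1, and all names and numbers are hypothetical.

```python
from math import prod


def location_posterior(prior, weight, sensor_likelihoods):
    """Score each candidate object at one location k, then normalize.

    prior[h]              -- P(H_i | L_k), prior for object h at this location
    weight[h]             -- w_kr, weight for object h at this location
    sensor_likelihoods[h] -- list of P(R_j, L_k, C_q | H_i), one value per sensor
                             (taking the product over sensors is an assumption)
    """
    scores = {
        h: prior[h] * weight[h] * prod(sensor_likelihoods[h]) for h in prior
    }
    total = sum(scores.values())
    if total == 0:
        return {h: 0.0 for h in scores}
    # Normalizing over candidates is an assumption, not stated on the slide.
    return {h: s / total for h, s in scores.items()}


# Hypothetical example: one known person versus an unknown person, two cameras.
prior = {"alice": 0.5, "unknown": 0.5}
weight = {"alice": 0.8, "unknown": 0.2}
likelihoods = {"alice": [0.9, 0.5], "unknown": [0.5, 0.5]}

posterior = location_posterior(prior, weight, likelihoods)
print(posterior)
```

In this toy case the known person receives the higher score because both the weight and the first camera's observation favor that hypothesis.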

Using This Approach • Aside from the probabilistic approach, one needs adequate sensor interpretation – To collect the relevant features from the input – And to unify the data • The idea is to use this approach for indoor surveillance where a number of sensors are available – Such as in a suite of rooms at a hotel, convention center, etc. • A pilot experiment used 8 video cameras and 4 known people with up to 50 unknown people – System was able to identify about 59% of the movement occurrences correctly without domain knowledge, and 74% with domain knowledge • Prototypes have been developed for – Searching and browsing the event repository database via a web browser – Creating a people localization system based on evidence from multiple sensors and domain knowledge – Creating a real-time people tracking system using multiple sensors and predictive behavior of the target(s) – Creating an event classification and clustering system

TARA • Terrorism Activity Resource Application – Used to disseminate terrorism-related information to the general public – Uses modified ALICEbots • ALICE + terrorism domain knowledge base • the system was tested on a variety of undergraduate and graduate students to see if they were able to use it to obtain reasonable and relevant information – The idea is to use TARA as a conversational search engine specific to terrorism inquiries and news • knowledge is added to ALICE in the form of XML rules along with Perl code for additional pattern matching • sample XML rule: <category><pattern>WHAT IS AL QAIDA</pattern> <template>'The Base.' An international terrorist group founded in approximately 1989 and dedicated to opposing non-Islamic governments with force and violence.</template></category>
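
A rough sketch of how a rule like the sample above could be looked up, assuming exact matching on a normalized question. Real ALICE/AIML interpreters also support wildcards and recursion, which are omitted here; the enclosing `<aiml>` element, the function names and the fallback text are illustrative and not taken from TARA.

```python
import re
import xml.etree.ElementTree as ET

# The sample rule from the slide, wrapped in a root element so it parses.
AIML = """<aiml>
  <category>
    <pattern>WHAT IS AL QAIDA</pattern>
    <template>'The Base.' An international terrorist group founded in approximately 1989 and dedicated to opposing non-Islamic governments with force and violence.</template>
  </category>
</aiml>"""


def normalize(text):
    """Uppercase, replace punctuation with spaces, collapse whitespace."""
    return " ".join(re.sub(r"[^A-Za-z0-9 ]", " ", text).upper().split())


def load_rules(aiml_text):
    """Map each normalized pattern to its template text."""
    root = ET.fromstring(aiml_text)
    rules = {}
    for category in root.iter("category"):
        pattern = normalize(category.findtext("pattern", default=""))
        rules[pattern] = category.findtext("template", default="").strip()
    return rules


def reply(rules, user_input, fallback="I do not have information on that."):
    """Return the template for an exactly matching pattern, else the fallback."""
    return rules.get(normalize(user_input), fallback)


rules = load_rules(AIML)
print(reply(rules, "What is al Qaida?"))
```

Normalizing the input before lookup is what lets a mixed-case question with punctuation hit the upper-case pattern.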

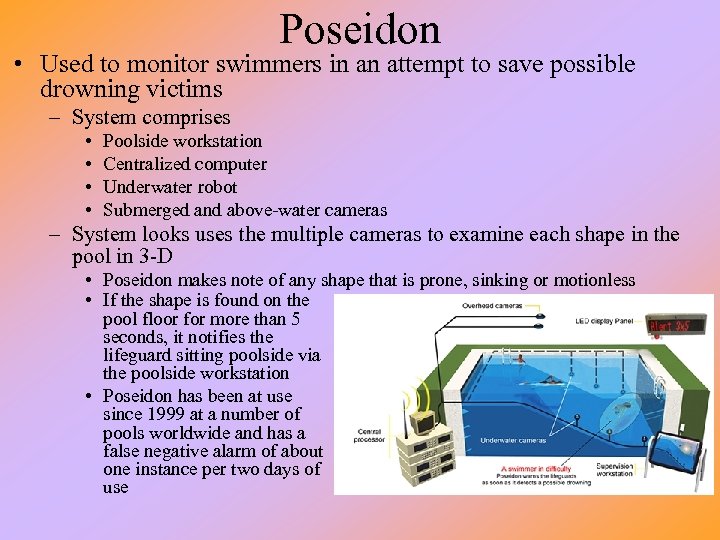

Poseidon • Used to monitor swimmers in an attempt to save possible drowning victims – System comprises • Poolside workstation • Centralized computer • Underwater robot • Submerged and above-water cameras – System uses the multiple cameras to examine each shape in the pool in 3-D • Poseidon makes note of any shape that is prone, sinking or motionless • If the shape is found on the pool floor for more than 5 seconds, it notifies the lifeguard sitting poolside via the poolside workstation • Poseidon has been in use since 1999 at a number of pools worldwide and has a false negative alarm of about one instance per two days of use
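
The 5-second rule above can be sketched as a small timer over per-shape observations. This is an illustration only: the observation format, the function name and the requirement that the time on the floor be continuous are assumptions, and the vision step that decides whether a shape is on the pool floor is not modeled.

```python
ALERT_AFTER_SECONDS = 5.0  # limit stated on the slide


def first_alert_time(observations, limit=ALERT_AFTER_SECONDS):
    """Return the timestamp at which a shape has been on the pool floor for
    more than `limit` seconds continuously, or None if that never happens.

    observations -- iterable of (timestamp_seconds, on_floor) in time order
                    (hypothetical format)
    """
    floor_since = None
    for timestamp, on_floor in observations:
        if not on_floor:
            floor_since = None          # shape left the floor: reset the timer
        elif floor_since is None:
            floor_since = timestamp     # shape just reached the floor
        elif timestamp - floor_since > limit:
            return timestamp            # on the floor too long: alert
    return None


track = [(0.0, False), (1.0, True), (3.0, True), (5.0, True), (6.5, True)]
print(first_alert_time(track))
```

Resetting the timer whenever the shape leaves the floor avoids alerting on swimmers who merely touch the bottom between strokes.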

Law Enforcement Uses • More and more cities are using AI – placing cameras around their downtown areas to watch for cars that run red lights • visual understanding is used to obtain a description of the car • and optical character recognition is used to read license plate numbers – placing sensors on highways to detect speeders • In LA, an automatic license-plate reader scans car license plates looking for stolen vehicles – cameras are placed in various locations around the city, including inside police cruisers – in one night, 4 different cameras found 7 stolen cars, resulting in 3 arrests • To further support these types of activities – infrared cameras are being mounted onto patrol car light bars that can read 500-800 plates an hour, even at speeds of up to 35 mph • “false positives are practically non-existent”
