f122607130c64ecc101429ffeab9584e.ppt

- Количество слайдов: 26

High Energy Physics Data Management Richard P. Mount Stanford Linear Accelerator Center DOE Office of Science Data Management Workshop, SLAC March 16 -18, 2004

High Energy Physics Data Management Richard P. Mount Stanford Linear Accelerator Center DOE Office of Science Data Management Workshop, SLAC March 16 -18, 2004

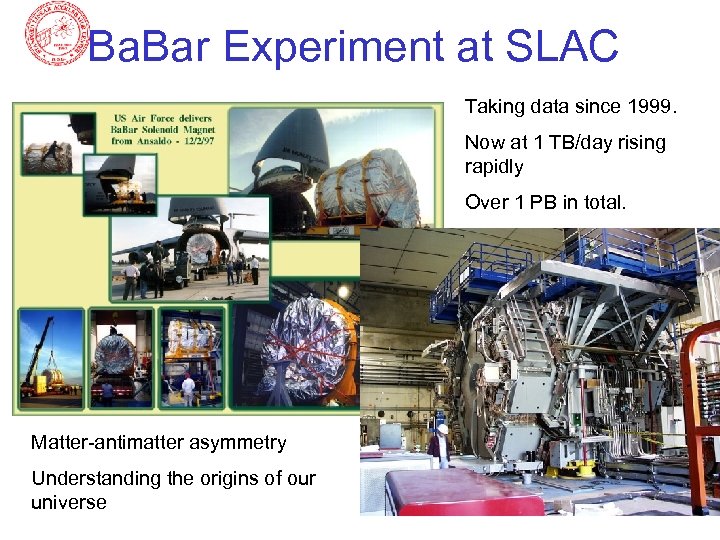

The Science (1) • Understand the nature of the Universe (experimental cosmology? ) – Ba. Bar at SLAC (1999 on): measuring the matter-antimatter asymmetry – CMS and Atlas at CERN (2007 on): understanding the origin of mass and other cosmic problems

The Science (1) • Understand the nature of the Universe (experimental cosmology? ) – Ba. Bar at SLAC (1999 on): measuring the matter-antimatter asymmetry – CMS and Atlas at CERN (2007 on): understanding the origin of mass and other cosmic problems

The Science (2) From the Fermilab Web • Research at Fermilab will address the grand questions of particle physics today. – Why do particles have mass? – Does neutrino mass come from a different source? – What is the true nature of quarks and leptons? Why are there three generations of elementary particles? – What are the truly fundamental forces? – How do we incorporate quantum gravity into particle physics? – What are the differences between matter and antimatter? – What are the dark particles that bind the universe together? – What is the dark energy that drives the universe apart? – Are there hidden dimensions beyond the ones we know? – Are we part of a multidimensional megaverse? – What is the universe made of? – How does the universe work?

The Science (2) From the Fermilab Web • Research at Fermilab will address the grand questions of particle physics today. – Why do particles have mass? – Does neutrino mass come from a different source? – What is the true nature of quarks and leptons? Why are there three generations of elementary particles? – What are the truly fundamental forces? – How do we incorporate quantum gravity into particle physics? – What are the differences between matter and antimatter? – What are the dark particles that bind the universe together? – What is the dark energy that drives the universe apart? – Are there hidden dimensions beyond the ones we know? – Are we part of a multidimensional megaverse? – What is the universe made of? – How does the universe work?

Experimental HENP • • Large (500 – 2000 physicist) international collaborations 5 – 10 years accelerator and detector construction 10 – 20 years data-taking and analysis Countable number of experiments: – Alice, Atlas, Ba. Bar, Belle, CDF, CLEO, CMS, D 0, LHCb, PHENIX, STAR … • Ba. Bar at SLAC – – Measuring matter-antimatter asymmetry (why we exist? ) 500 Physicists Data taking since 1999 More data than any other experiment (but likely to overtaken by CDF, D 0 and STAR soon and will be overtaken by Alice, Atlas and CMS later)

Experimental HENP • • Large (500 – 2000 physicist) international collaborations 5 – 10 years accelerator and detector construction 10 – 20 years data-taking and analysis Countable number of experiments: – Alice, Atlas, Ba. Bar, Belle, CDF, CLEO, CMS, D 0, LHCb, PHENIX, STAR … • Ba. Bar at SLAC – – Measuring matter-antimatter asymmetry (why we exist? ) 500 Physicists Data taking since 1999 More data than any other experiment (but likely to overtaken by CDF, D 0 and STAR soon and will be overtaken by Alice, Atlas and CMS later)

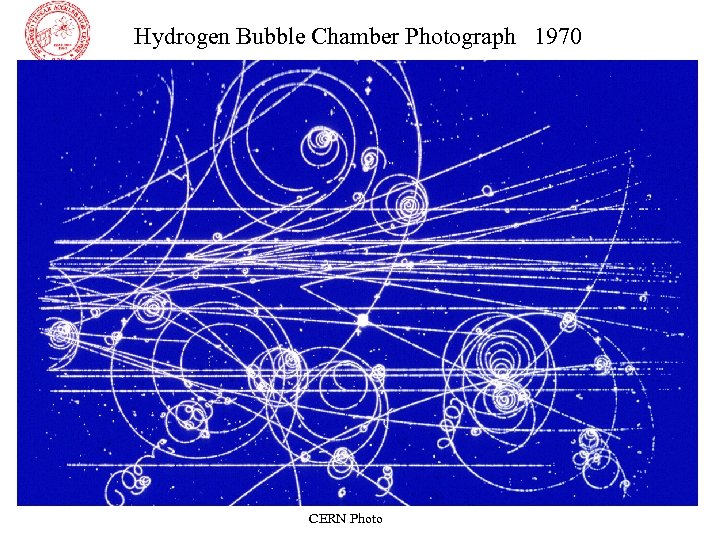

Hydrogen Bubble Chamber Photograph 1970 CERN Photo

Hydrogen Bubble Chamber Photograph 1970 CERN Photo

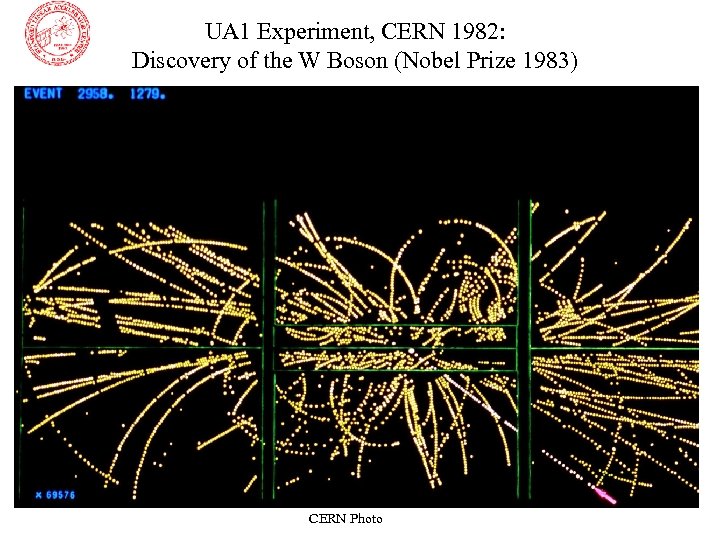

UA 1 Experiment, CERN 1982: Discovery of the W Boson (Nobel Prize 1983) CERN Photo

UA 1 Experiment, CERN 1982: Discovery of the W Boson (Nobel Prize 1983) CERN Photo

Ba. Bar Experiment at SLAC Taking data since 1999. Now at 1 TB/day rising rapidly Over 1 PB in total. Matter-antimatter asymmetry Understanding the origins of our universe

Ba. Bar Experiment at SLAC Taking data since 1999. Now at 1 TB/day rising rapidly Over 1 PB in total. Matter-antimatter asymmetry Understanding the origins of our universe

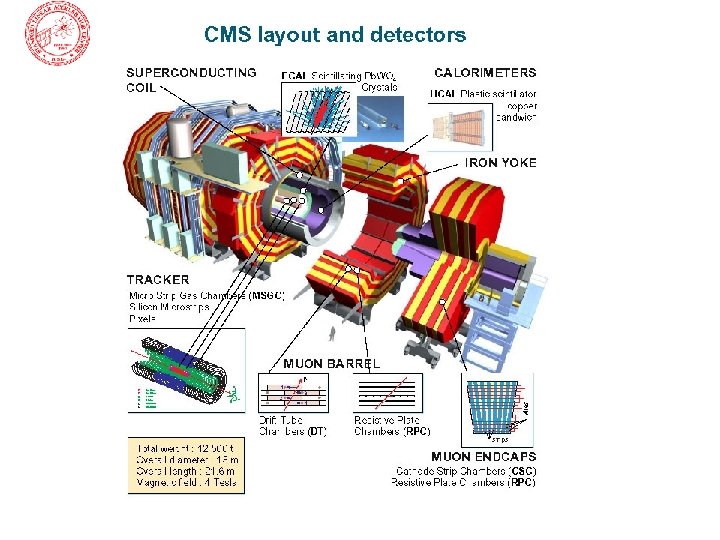

CMS Experiment: “Find the Higgs” ~10 PB/year by 2010

CMS Experiment: “Find the Higgs” ~10 PB/year by 2010

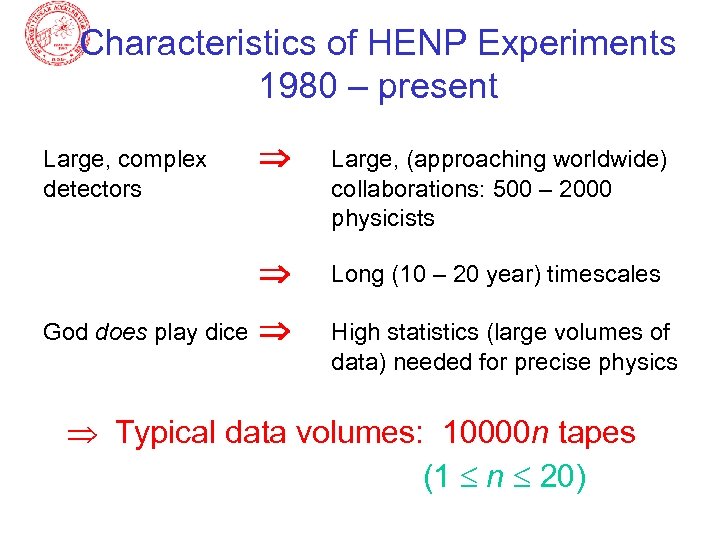

Characteristics of HENP Experiments 1980 – present God does play dice Large, (approaching worldwide) collaborations: 500 – 2000 physicists Large, complex detectors Long (10 – 20 year) timescales High statistics (large volumes of data) needed for precise physics Typical data volumes: 10000 n tapes (1 n 20)

Characteristics of HENP Experiments 1980 – present God does play dice Large, (approaching worldwide) collaborations: 500 – 2000 physicists Large, complex detectors Long (10 – 20 year) timescales High statistics (large volumes of data) needed for precise physics Typical data volumes: 10000 n tapes (1 n 20)

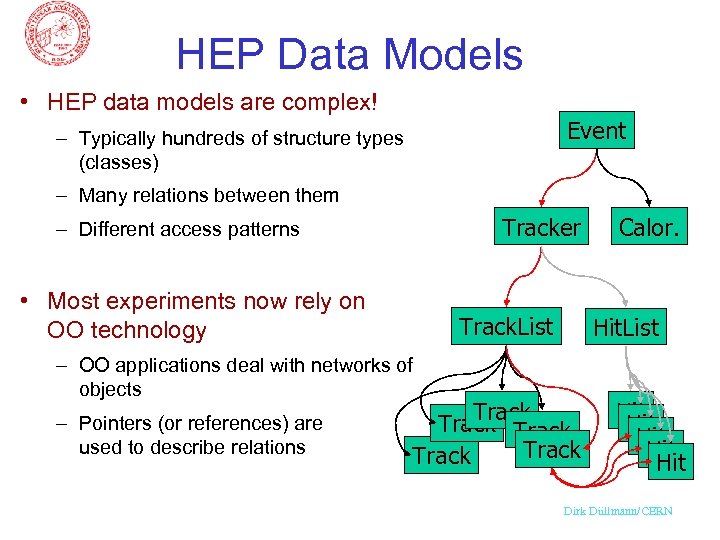

HEP Data Models • HEP data models are complex! Event – Typically hundreds of structure types (classes) – Many relations between them Tracker – Different access patterns • Most experiments now rely on OO technology Track. List – OO applications deal with networks of objects – Pointers (or references) are used to describe relations Calor. Hit. List Track Track Hit Hit Hit Dirk Düllmann/CERN

HEP Data Models • HEP data models are complex! Event – Typically hundreds of structure types (classes) – Many relations between them Tracker – Different access patterns • Most experiments now rely on OO technology Track. List – OO applications deal with networks of objects – Pointers (or references) are used to describe relations Calor. Hit. List Track Track Hit Hit Hit Dirk Düllmann/CERN

Today’s HENP Data Management Challenges • Sparse access to objects in petabyte databases: – Natural object size 100 bytes – 10 kbytes – Disk (and tape) non-streaming performance dominated by latency – Approach - current: • Instantiate richer database subsets for each analysis application – Approaches – possible • Abandon tapes (use tapes only for backup, not for data-access) • Hash data over physical disks • Queue and reorder all disk access requests • Keep the hottest objects in (tens of terabytes of) memory • etc.

Today’s HENP Data Management Challenges • Sparse access to objects in petabyte databases: – Natural object size 100 bytes – 10 kbytes – Disk (and tape) non-streaming performance dominated by latency – Approach - current: • Instantiate richer database subsets for each analysis application – Approaches – possible • Abandon tapes (use tapes only for backup, not for data-access) • Hash data over physical disks • Queue and reorder all disk access requests • Keep the hottest objects in (tens of terabytes of) memory • etc.

Today’s HENP Data Management Challenges • Millions of Real or Virtual Datasets: – Ba. Bar has a petabyte database and over 60 million “collections”. (lists of objects in the database that somebody found relevant) – Analysis groups or individuals create new collections of new and/or old objects – It is nearly impossible to make optimal use of existing collections and objects

Today’s HENP Data Management Challenges • Millions of Real or Virtual Datasets: – Ba. Bar has a petabyte database and over 60 million “collections”. (lists of objects in the database that somebody found relevant) – Analysis groups or individuals create new collections of new and/or old objects – It is nearly impossible to make optimal use of existing collections and objects

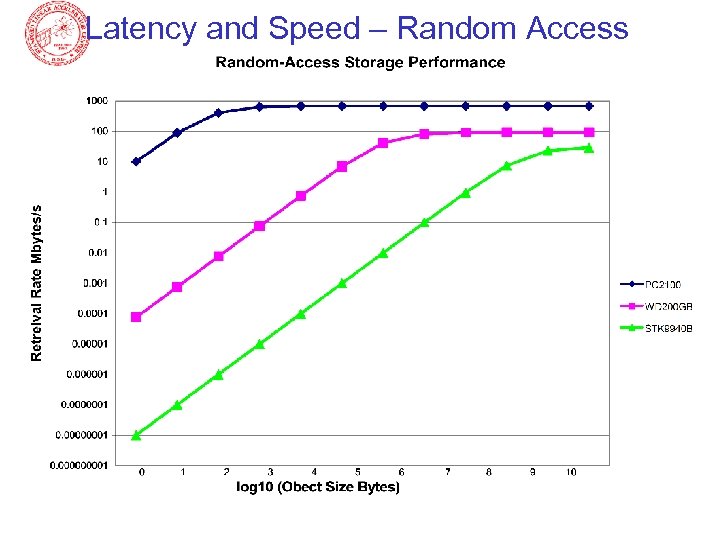

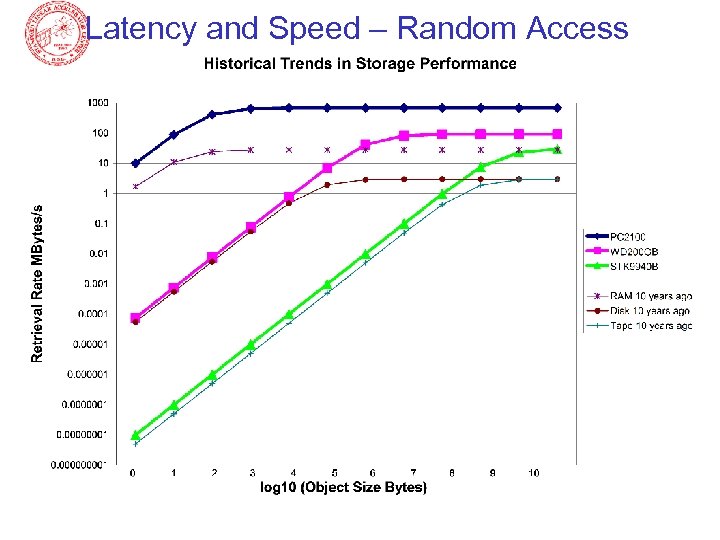

Latency and Speed – Random Access

Latency and Speed – Random Access

Latency and Speed – Random Access

Latency and Speed – Random Access

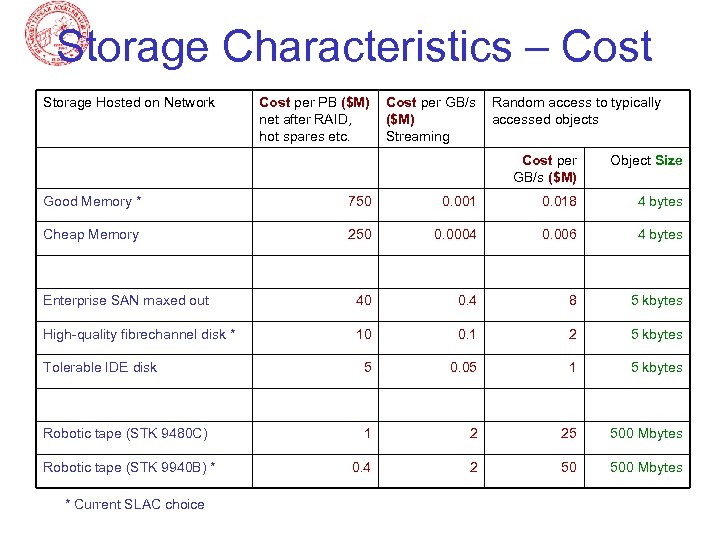

Storage Characteristics – Cost Storage Hosted on Network Cost per PB ($M) net after RAID, hot spares etc. Cost per GB/s ($M) Streaming Random access to typically accessed objects Cost per GB/s ($M) Object Size Good Memory * 750 0. 001 0. 018 4 bytes Cheap Memory 250 0. 0004 0. 006 4 bytes Enterprise SAN maxed out 40 0. 4 8 5 kbytes High-quality fibrechannel disk * 10 0. 1 2 5 kbytes Tolerable IDE disk 5 0. 05 1 5 kbytes Robotic tape (STK 9480 C) 1 2 25 500 Mbytes 0. 4 2 50 500 Mbytes Robotic tape (STK 9940 B) * * Current SLAC choice

Storage Characteristics – Cost Storage Hosted on Network Cost per PB ($M) net after RAID, hot spares etc. Cost per GB/s ($M) Streaming Random access to typically accessed objects Cost per GB/s ($M) Object Size Good Memory * 750 0. 001 0. 018 4 bytes Cheap Memory 250 0. 0004 0. 006 4 bytes Enterprise SAN maxed out 40 0. 4 8 5 kbytes High-quality fibrechannel disk * 10 0. 1 2 5 kbytes Tolerable IDE disk 5 0. 05 1 5 kbytes Robotic tape (STK 9480 C) 1 2 25 500 Mbytes 0. 4 2 50 500 Mbytes Robotic tape (STK 9940 B) * * Current SLAC choice

Storage-Cost Notes • Memory costs per TB are calculated: Cost of memory + host system • Memory costs per GB/s are calculated: (Cost of typical memory + host system)/(GB/s of memory in this system) • Disk costs per TB are calculated: Cost of disk + server system • Disk costs per GB/s are calculated: (Cost of typical disk + server system)/(GB/s of this system) • Tape costs per TB are calculated: Cost of media only • Tape costs per GB/s are calculated: (Cost of typical server+drives+robotics only)/(GB/s of this server+drives+robotics)

Storage-Cost Notes • Memory costs per TB are calculated: Cost of memory + host system • Memory costs per GB/s are calculated: (Cost of typical memory + host system)/(GB/s of memory in this system) • Disk costs per TB are calculated: Cost of disk + server system • Disk costs per GB/s are calculated: (Cost of typical disk + server system)/(GB/s of this system) • Tape costs per TB are calculated: Cost of media only • Tape costs per GB/s are calculated: (Cost of typical server+drives+robotics only)/(GB/s of this server+drives+robotics)

Storage Issues • Tapes: – Still cheaper than disk for low I/O rates – Disk becomes cheaper at, for example, 300 MB/s per petabyte for randomaccessed 500 MB files – Will SLAC every buy new tape silos?

Storage Issues • Tapes: – Still cheaper than disk for low I/O rates – Disk becomes cheaper at, for example, 300 MB/s per petabyte for randomaccessed 500 MB files – Will SLAC every buy new tape silos?

Storage Issues • Disks: – Random access performance is lousy, independent of cost unless objects are megabytes or more – Google people say: “If you were as smart as us you could have fun building reliable storage out of cheap junk” – My Systems Group says: “Accounting for TCO, we are buying the right stuff”

Storage Issues • Disks: – Random access performance is lousy, independent of cost unless objects are megabytes or more – Google people say: “If you were as smart as us you could have fun building reliable storage out of cheap junk” – My Systems Group says: “Accounting for TCO, we are buying the right stuff”

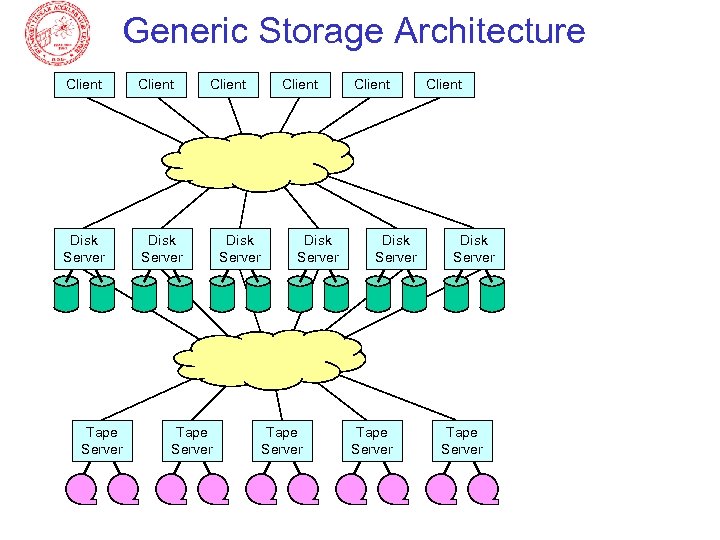

Generic Storage Architecture Client Disk Server Tape Server Client Tape Server Disk Server Client Disk Server Tape Server

Generic Storage Architecture Client Disk Server Tape Server Client Tape Server Disk Server Client Disk Server Tape Server

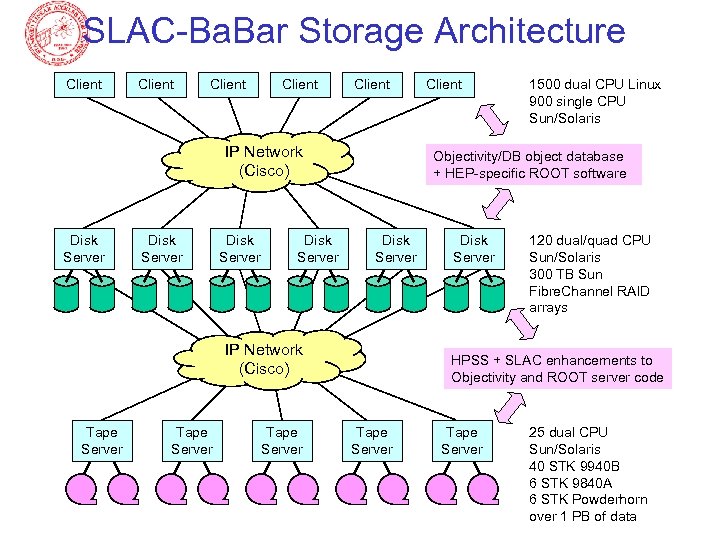

SLAC-Ba. Bar Storage Architecture Client Client IP Network (Cisco) Disk Server Tape Server 1500 dual CPU Linux 900 single CPU Sun/Solaris Objectivity/DB object database + HEP-specific ROOT software Disk Server IP Network (Cisco) Tape Server Client Disk Server 120 dual/quad CPU Sun/Solaris 300 TB Sun Fibre. Channel RAID arrays HPSS + SLAC enhancements to Objectivity and ROOT server code Tape Server 25 dual CPU Sun/Solaris 40 STK 9940 B 6 STK 9840 A 6 STK Powderhorn over 1 PB of data

SLAC-Ba. Bar Storage Architecture Client Client IP Network (Cisco) Disk Server Tape Server 1500 dual CPU Linux 900 single CPU Sun/Solaris Objectivity/DB object database + HEP-specific ROOT software Disk Server IP Network (Cisco) Tape Server Client Disk Server 120 dual/quad CPU Sun/Solaris 300 TB Sun Fibre. Channel RAID arrays HPSS + SLAC enhancements to Objectivity and ROOT server code Tape Server 25 dual CPU Sun/Solaris 40 STK 9940 B 6 STK 9840 A 6 STK Powderhorn over 1 PB of data

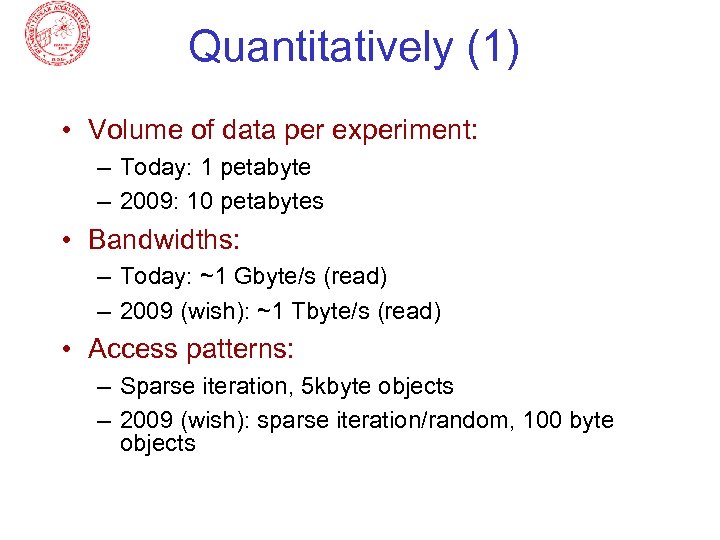

Quantitatively (1) • Volume of data per experiment: – Today: 1 petabyte – 2009: 10 petabytes • Bandwidths: – Today: ~1 Gbyte/s (read) – 2009 (wish): ~1 Tbyte/s (read) • Access patterns: – Sparse iteration, 5 kbyte objects – 2009 (wish): sparse iteration/random, 100 byte objects

Quantitatively (1) • Volume of data per experiment: – Today: 1 petabyte – 2009: 10 petabytes • Bandwidths: – Today: ~1 Gbyte/s (read) – 2009 (wish): ~1 Tbyte/s (read) • Access patterns: – Sparse iteration, 5 kbyte objects – 2009 (wish): sparse iteration/random, 100 byte objects

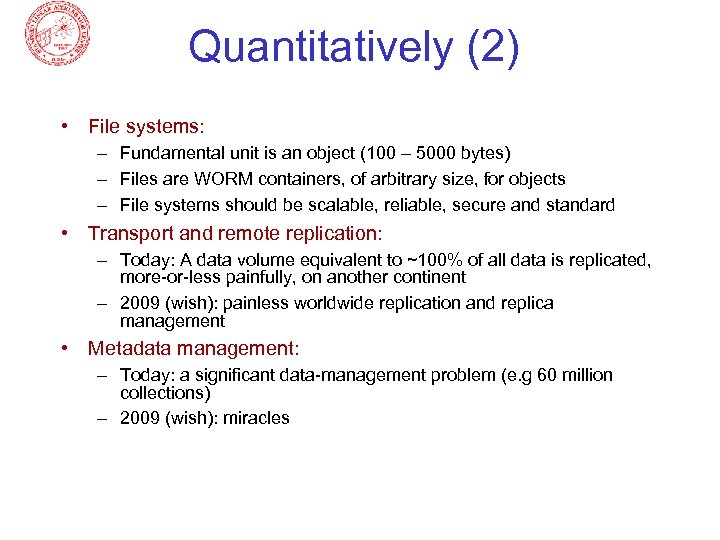

Quantitatively (2) • File systems: – Fundamental unit is an object (100 – 5000 bytes) – Files are WORM containers, of arbitrary size, for objects – File systems should be scalable, reliable, secure and standard • Transport and remote replication: – Today: A data volume equivalent to ~100% of all data is replicated, more-or-less painfully, on another continent – 2009 (wish): painless worldwide replication and replica management • Metadata management: – Today: a significant data-management problem (e. g 60 million collections) – 2009 (wish): miracles

Quantitatively (2) • File systems: – Fundamental unit is an object (100 – 5000 bytes) – Files are WORM containers, of arbitrary size, for objects – File systems should be scalable, reliable, secure and standard • Transport and remote replication: – Today: A data volume equivalent to ~100% of all data is replicated, more-or-less painfully, on another continent – 2009 (wish): painless worldwide replication and replica management • Metadata management: – Today: a significant data-management problem (e. g 60 million collections) – 2009 (wish): miracles

Quantitatively (3) • Heterogeneity and data transformation: – Today: not considered an issue … 99. 9% of the data are only accessible to and intelligible by the members of a collaboration – Tomorrow: we live in terror of being forced to make data public (because it is unintelligible and so the user-support costs would be devastating) • Ontology, Annotation, Provenance: – Today: we think we know what provenance means – 2009 (wish): • Know the provenance of every object • Create new objects and collections making optimal use of all pre -existing objects

Quantitatively (3) • Heterogeneity and data transformation: – Today: not considered an issue … 99. 9% of the data are only accessible to and intelligible by the members of a collaboration – Tomorrow: we live in terror of being forced to make data public (because it is unintelligible and so the user-support costs would be devastating) • Ontology, Annotation, Provenance: – Today: we think we know what provenance means – 2009 (wish): • Know the provenance of every object • Create new objects and collections making optimal use of all pre -existing objects