68c8c6e41e49d97a5afe2f84649daef2.ppt

- Number of slides: 13

HEPiX Fall 2001 Report (2)
NERSC, Berkeley
Wolfgang Friebel, 16.11.2001
C5 report
Nov 16, 2001 C5 Report

Further topics covered
- Batch (Sun Grid Engine Enterprise Edition)
- Distributed Filesystems (Benchmarks)
- Security (again) (the concept at NERSC)

Batch systems
- Two talks on SGEEE (formerly known as Global Resource Director, GRD, or Codine), see below
- FNAL presented new version of their batch system
  - Main scope is resource management, not load balancing
  - FBSNG, written primarily in Python, Python API exists
  - Comes with Kerberos 5 support
- NERSC reported experiences with LSF
  - Not very pleased with LSF, will also evaluate alternatives

SGEEE Batch
- Ease of installation from source
- Access to source code
- Chance of integration into a monitoring system
- API for C and Perl
- Excellent load balancing mechanisms (4 scheduler policies)
- Managing the requests of concurrent groups
- Mechanisms for recovery from machine crashes
- Fallback solutions for dying daemons
- Weakest point is AFS integration and token prolongation mechanism (basically the same code as for LoadLeveler and for older LSF versions)

SGEEE Batch
- SGEEE has all ingredients to build a company wide batch infrastructure
  - Allocation of resources according to policies ranging from departmental policies to individual user policies
  - Dynamic adjustment of priorities for running jobs to meet policies
  - Supports interactive jobs, array jobs, parallel jobs
  - Can be used with Kerberos (4 and 5) and AFS, Globus integration underway
- SGEEE is open source maintained by Sun
  - Getting deeper knowledge by studying the code
  - Can enhance the code (examples: more schedulers, tighter AFS integration, monitoring only daemons)
  - Code is centrally maintained by a core developer team
- Could play a more important role in HEP (component of a grid environment, open industry grade batch system as recommended solution within HEPiX?)

Scheduling policies
- Within SGEEE tickets are used to distribute the workload
- User based functional policy
  - Tickets are assigned to projects, users and jobs. More tickets mean higher priority and faster execution (if concurrent jobs are running on a CPU)
- Share based policy
  - Certain fractions of the system resources (shares) can be assigned to projects and users.
  - Projects and users receive those shares during a configurable moving time window (e.g. CPU usage for a month based on usage during the past month)
- Deadline policy
  - By redistributing tickets the system can assign jobs an increasing weight to meet a certain deadline.
  - Can be used by authorized users only
- Override policy
  - Sysadmins can give additional tickets to jobs, users or projects to temporarily adjust their relative importance.
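The ticket idea can be illustrated with a small sketch. This is not SGEEE code: the function names, the weighting rule (target share divided by recent usage fraction) and the 0.01 floor are assumptions chosen only to show how tickets could translate into CPU fractions and how a share based policy might favour owners who have used less than their share.

```python
from typing import Dict


def cpu_fractions(tickets: Dict[str, float]) -> Dict[str, float]:
    """Split one CPU among concurrent jobs in proportion to their tickets."""
    total = sum(tickets.values())
    if total <= 0:
        raise ValueError("at least one job must hold tickets")
    return {job: t / total for job, t in tickets.items()}


def share_tickets(pool: float,
                  target_share: Dict[str, float],
                  past_usage: Dict[str, float]) -> Dict[str, float]:
    """Hypothetical share based rule: distribute `pool` tickets so that owners
    who used less than their target share in the past window get more."""
    total_usage = sum(past_usage.values())
    weights = {}
    for owner, target in target_share.items():
        used = past_usage.get(owner, 0.0) / total_usage if total_usage > 0 else 0.0
        # Assumed floor of 0.01 avoids division by zero for owners with no usage.
        weights[owner] = target / max(used, 0.01)
    norm = sum(weights.values())
    return {owner: pool * w / norm for owner, w in weights.items()}


def override(tickets: Dict[str, float], owner: str, extra: float) -> Dict[str, float]:
    """Override policy: an admin temporarily adds tickets for one owner."""
    boosted = dict(tickets)
    boosted[owner] = boosted.get(owner, 0.0) + extra
    return boosted
```

With equal target shares, an owner who consumed three quarters of the CPU time in the past window ends up with fewer tickets than one who consumed a quarter, which is the compensating behaviour the moving time window is meant to produce.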

Distributed Filesystems
- Candidates for benchmarking
  - NFS versions 2 and 3
  - GFS (University of Minnesota/Sistina Software)
  - AFS
  - GPFS (IBM cluster file system, being ported to Linux)
  - PVFS: Parallel Virtual Filesystem
- Not taken
  - GPFS: IBM could not get it working at NERSC under Linux (not ready?)
  - PVFS: unstable in tests, single point of failure (metadata server)
  - AFS: slower than NFS, tests done elsewhere, successfully running
  - GFS: designed for SAN, runs over TCP with significant performance penalties, lock management not mature, stability for high number of clients not expected to be good. Good candidate for SANs

Distributed Filesystems
- Conclusion for NERSC: only NFS remains, AFS too heavy for them
- Benchmarking tools used
  - Bonnie
  - Iozone
  - Postmark
- Benchmarked equipment
  - Dual 866 MHz PIII with 512 MB RAM
  - Escalade 6200 series 4 channel IDE RAID, with 3 72 GB drives striped
  - The talk discussed various combinations of Linux kernel versions (2.2.x and 2.4.x), NFS clients (v2 and v3) and servers (v2 and v3)
- Results
  - By carefully choosing kernel and NFS versions throughput can be increased
  - For many more details consult the talk
  - Other sites reported very bad NFS performance (confirms NERSC findings that tuning for NFS is a must)
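To give a feeling for the size of the test space, the combinations named above can be enumerated as follows. This is only a sketch: it assumes every kernel series is paired with every client and server protocol version, which the talk may not have done, and the dictionary keys are made up for illustration.

```python
from itertools import product

# Values taken from the slide; the full cross product is an assumption.
KERNELS = ["2.2.x", "2.4.x"]
NFS_CLIENTS = ["v2", "v3"]
NFS_SERVERS = ["v2", "v3"]


def benchmark_matrix():
    """Return every kernel / NFS client / NFS server combination as a dict."""
    return [
        {"kernel": kernel, "client": client, "server": server}
        for kernel, client, server in product(KERNELS, NFS_CLIENTS, NFS_SERVERS)
    ]


if __name__ == "__main__":
    for combo in benchmark_matrix():
        print(combo)
```

Each combination would then be run once per benchmarking tool (Bonnie, Iozone, Postmark), so even this small matrix leads to a sizeable number of measurements.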

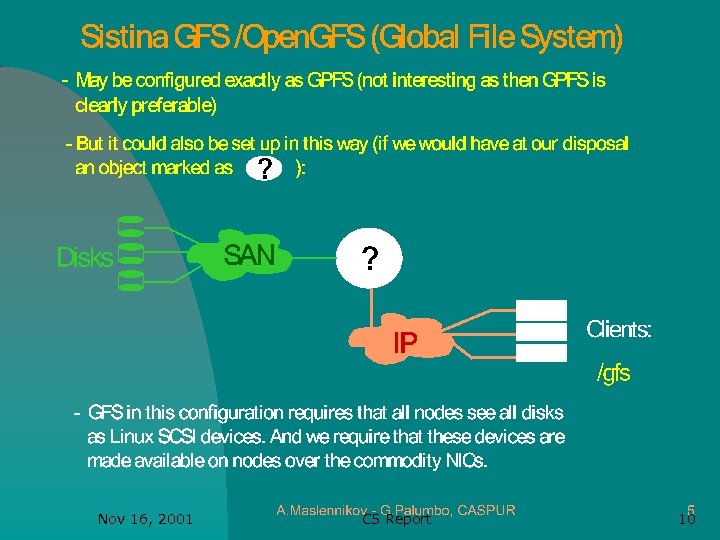

Distributed Filesystems: GFS
- Caspur is looking for a filesystem attached to a multinode Linux farm
  - Looked for SAN based solutions
  - NFS and GPFS discarded (NFS: performance, GPFS: extra HW & SW)
  - Have chosen GFS, but trying to use GFS over IP (see next slide)
- By using a SCSI to IP converter (Axis from Dothill) they would be able to set up a serverless GFS
  - Contradicting kernel requirements for GFS and Axis currently
  - Issues probably solved (11/2001) with equipment from Cisco
  - Looks promising to them, more investigations to come


Computer Security at NERSC
- Very open community, need a balance between security and availability
- Main concepts used
  - Intrusion detection using BRO (in house development, open source)
  - Immediate actions against attackers ("shunning")
  - Scanning systems for vulnerabilities
  - Keeping systems/software up to date
  - Firewall for critical assets only (operation consoles, development systems)
  - Virus wall for incoming emails
  - Top level staff in computer security and networking
- Observed ever increasing scans (30-40 a day!!), threats
- Were able to track down hackers and reconstruct the attacks

Computer Security: BRO
- Passively monitors network
- Carefully designed to avoid packet drops at high speeds
  - 622 Mbps (OC-12)
- Two main components
  - Event engine, converts network traffic into events (compression)
  - Policy script interpreter (interprets output of event handlers)
- BRO interacts with the border router to drop hosts immediately (using ACLs) on attacks
- BRO records all input in interactive sessions
  - Allows reconstructing data even if type-ahead or completion mechanisms are used
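The two-stage design (an event engine that compresses traffic into events, followed by a policy layer that decides on actions such as shunning) can be sketched roughly as below. This is not BRO code and does not use BRO's policy language: the packet tuple, the event type, the port-scan threshold and the ACL line format are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Iterable, Iterator, List, Set, Tuple

# Hypothetical simplified packet: (source IP, destination IP, destination port).
Packet = Tuple[str, str, int]


@dataclass(frozen=True)
class Event:
    kind: str
    src: str
    dst_port: int


def event_engine(packets: Iterable[Packet]) -> Iterator[Event]:
    """Compress traffic: emit one event per new (src, dst, port) combination."""
    seen: Set[Packet] = set()
    for pkt in packets:
        if pkt not in seen:
            seen.add(pkt)
            yield Event("connection_attempt", pkt[0], pkt[2])


def policy(events: Iterable[Event], max_ports: int = 3) -> List[str]:
    """Assumed policy: shun any source probing more than `max_ports` ports."""
    ports = defaultdict(set)
    shunned: List[str] = []
    for ev in events:
        ports[ev.src].add(ev.dst_port)
        if len(ports[ev.src]) > max_ports and ev.src not in shunned:
            shunned.append(ev.src)
    return shunned


def acl_lines(hosts: Iterable[str]) -> List[str]:
    """Render shunned hosts as illustrative router ACL entries (format assumed)."""
    return [f"deny ip host {host} any" for host in hosts]
```

Keeping the per-packet work in the first stage small and leaving decisions to the second stage is what lets a passive monitor of this kind keep up with fast links while the site-specific rules stay easy to change.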

Computer Security: BRO
- Some of the analysis done in real time, deeper analysis done once a day offline
- NERSC is relying heavily on intrusion detection by BRO
- NERSC was able to quickly react to the "Code Red" worm (changes to BRO)
  - Subsequently "Nimda" did very little damage
- Many more useful tips on practical security (have a look at the talk)
