5f85d79261cb961ed2105b2e6c50687e.ppt

- Number of slides: 49

HENP Grids and Networks: Global Virtual Organizations

Harvey B. Newman, Professor of Physics; LHCNet PI; US CMS Collaboration Board Chair

Drivers of the Formation of the Information Society, April 18, 2003

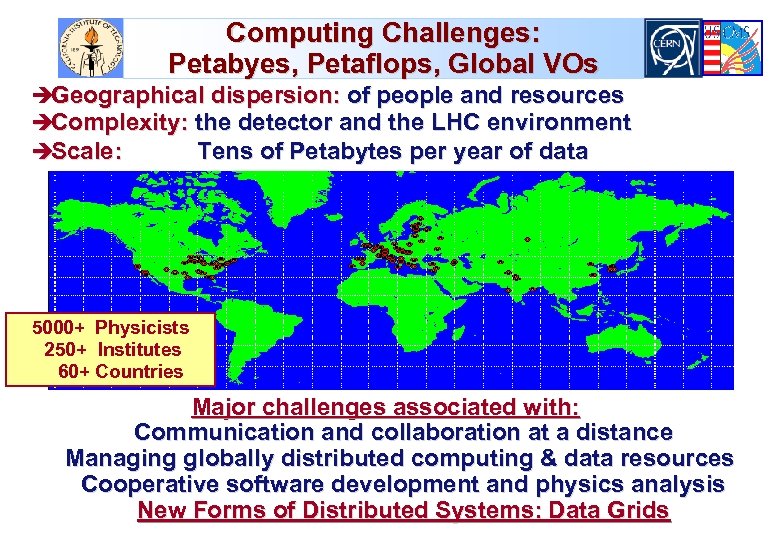

Computing Challenges: Petabytes, Petaflops, Global VOs

- Geographical dispersion: of people and resources
- Complexity: the detector and the LHC environment
- Scale: Tens of Petabytes per year of data

5000+ Physicists, 250+ Institutes, 60+ Countries

Major challenges associated with:

- Communication and collaboration at a distance
- Managing globally distributed computing & data resources
- Cooperative software development and physics analysis

New Forms of Distributed Systems: Data Grids

Next Generation Networks for Experiments: Goals and Needs

Large data samples explored and analyzed by thousands of globally dispersed scientists, in hundreds of teams

- Providing rapid access to event samples, subsets and analyzed physics results from massive data stores
  - From Petabytes in 2003, ~100 Petabytes by 2008, to ~1 Exabyte by ~2013
- Providing analyzed results with rapid turnaround, by coordinating and managing the large but LIMITED computing, data handling and NETWORK resources effectively
- Enabling rapid access to the data and the collaboration
  - Across an ensemble of networks of varying capability
- Advanced integrated applications, such as Data Grids, rely on seamless operation of our LANs and WANs
  - With reliable, monitored, quantifiable high performance

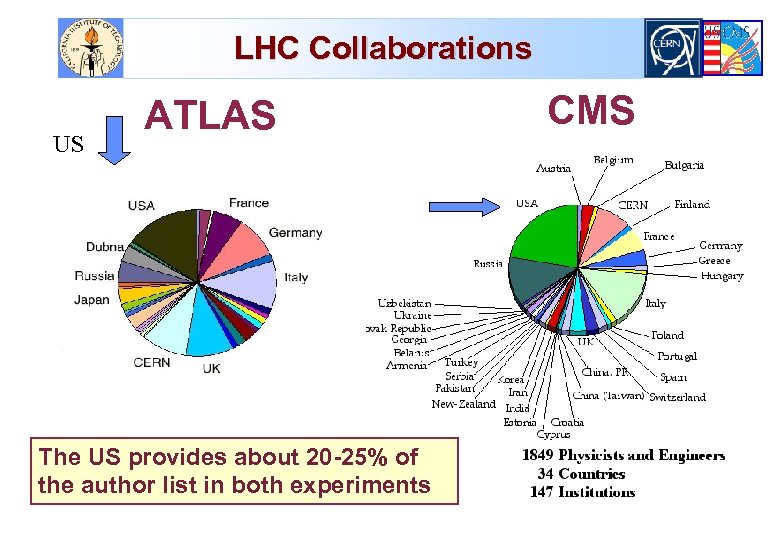

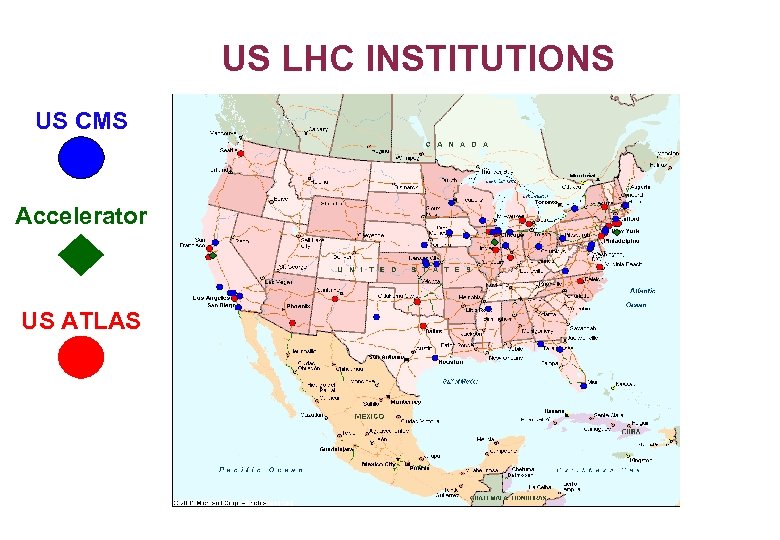

LHC Collaborations (figure: ATLAS, CMS). The US provides about 20-25% of the author list in both experiments.

US LHC Institutions (map legend: US CMS, Accelerator, US ATLAS)

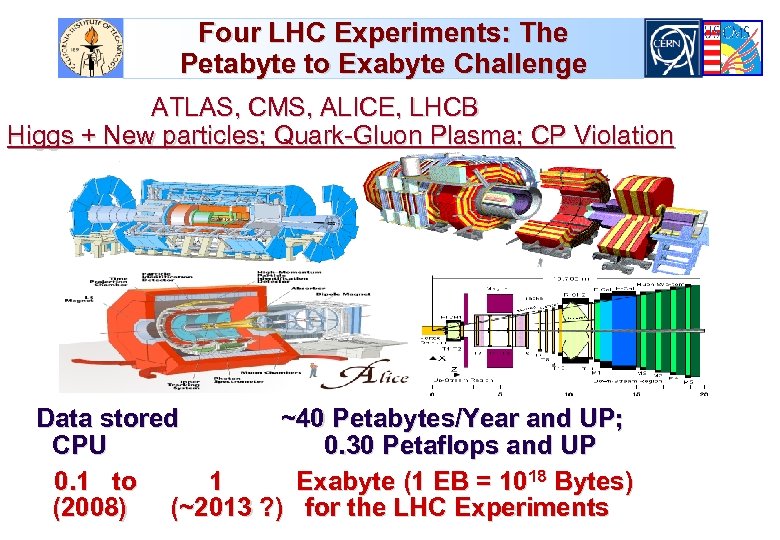

Four LHC Experiments: The Petabyte to Exabyte Challenge

ATLAS, CMS, ALICE, LHCb: Higgs + New Particles; Quark-Gluon Plasma; CP Violation

- Data stored: ~40 Petabytes/Year and UP; CPU: 0.30 Petaflops and UP
- 0.1 (2008) to 1 (~2013?) Exabyte (1 EB = 10^18 Bytes) for the LHC Experiments

LHC: Higgs Decay into 4 Muons (Tracker Only); 1000 x LEP Data Rate

10^9 events/sec; selectivity: 1 in 10^13 (1 person in a thousand world populations)
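
The selectivity comparison can be checked with simple arithmetic. The sketch below takes the slide's 10^9 events/sec and 1-in-10^13 figures at face value. The world population value (~6.3 billion, circa 2003) is an approximate assumption and is used only for the "thousand world populations" comparison.

```python
INTERACTION_RATE_HZ = 1e9          # 10^9 events/sec (from the slide)
SELECTIVITY = 1e-13                # 1 in 10^13 (from the slide)
WORLD_POPULATION = 6.3e9           # assumption: approximate 2003 figure

selected_per_sec = INTERACTION_RATE_HZ * SELECTIVITY   # ~1e-4 selected events/sec
seconds_per_event = 1.0 / selected_per_sec              # ~1e4 s between selected events
thousand_worlds = 1000 * WORLD_POPULATION               # ~6e12 people, i.e. order 10^13

print(f"one selected event every ~{seconds_per_event / 3600:.1f} hours")
print(f"1000 world populations ~ {thousand_worlds:.1e} people")
```

At that rate a selected event turns up only a few times per day, which is why the trigger and data handling chain must sift enormous volumes.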

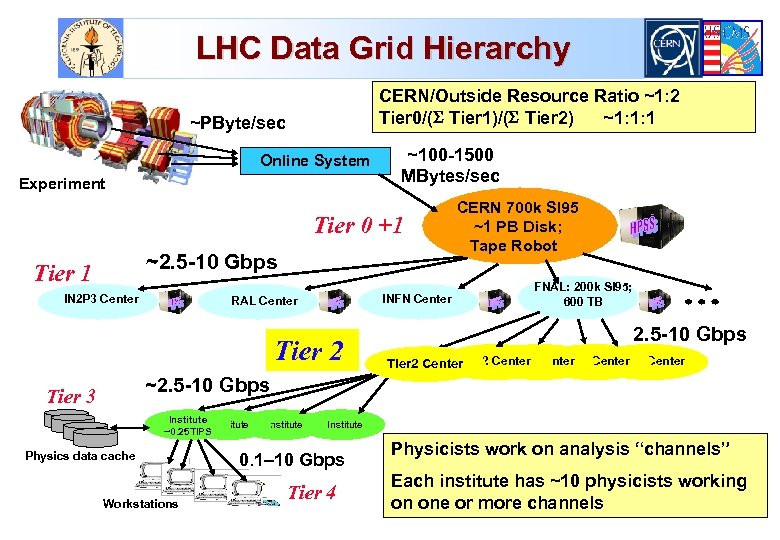

LHC Data Grid Hierarchy

CERN/Outside Resource Ratio ~1:2; Tier 0/(Tier 1)/(Tier 2) ~1:1:1

- Experiment to Online System: ~PByte/sec
- Online System to Tier 0+1 (CERN: 700k SI95; ~1 PB Disk; Tape Robot): ~100-1500 MBytes/sec
- Tier 0+1 to Tier 1 (IN2P3, INFN, RAL and FNAL Centers; FNAL: 200k SI95, 600 TB): ~2.5-10 Gbps
- Tier 1 to Tier 2 Centers: ~2.5-10 Gbps
- Tier 2 to Tier 3 Institutes (~0.25 TIPS; Physics data cache): ~2.5-10 Gbps
- Tier 3 to Tier 4 (Workstations): 0.1-10 Gbps

Physicists work on analysis "channels". Each institute has ~10 physicists working on one or more channels.
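
To make the link speeds in the hierarchy concrete, the sketch below estimates how long moving 1 TB would take over each tier link at the quoted bandwidths. It assumes decimal units (1 TB = 10^12 bytes) and full use of the nominal rate with no protocol overhead. `TIER_LINKS_GBPS` is a hypothetical table transcribed from the slide.

```python
# Nominal link speeds from the hierarchy slide, in Gbps (low, high).
TIER_LINKS_GBPS = {
    "Tier 0+1 -> Tier 1": (2.5, 10.0),
    "Tier 1 -> Tier 2": (2.5, 10.0),
    "Tier 2 -> Tier 3": (2.5, 10.0),
    "Tier 3 -> Tier 4": (0.1, 10.0),
}

ONE_TB = 1e12  # decimal terabyte, in bytes (assumption)


def transfer_seconds(size_bytes: float, rate_gbps: float) -> float:
    """Idealized time to move size_bytes at rate_gbps (no overhead, full utilization)."""
    return size_bytes * 8 / (rate_gbps * 1e9)


for link, (low, high) in TIER_LINKS_GBPS.items():
    fast_h = transfer_seconds(ONE_TB, high) / 3600
    slow_h = transfer_seconds(ONE_TB, low) / 3600
    print(f"{link}: {fast_h:.2f} h at {high} Gbps, {slow_h:.2f} h at {low} Gbps")
```

Real transfers fall short of these figures, which is why the network slides that follow focus on sustained end-to-end TCP throughput.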

![Transatlantic Net WG bandwidth requirements (installed BW; maximum link occupancy 50% assumed)](https://present5.com/presentation/5f85d79261cb961ed2105b2e6c50687e/image-9.jpg)

Transatlantic Net WG (HN, L. Price): Bandwidth Requirements [*]

[*] Installed BW. Maximum Link Occupancy 50% Assumed. See http://gate.hep.anl.gov/lprice/TAN

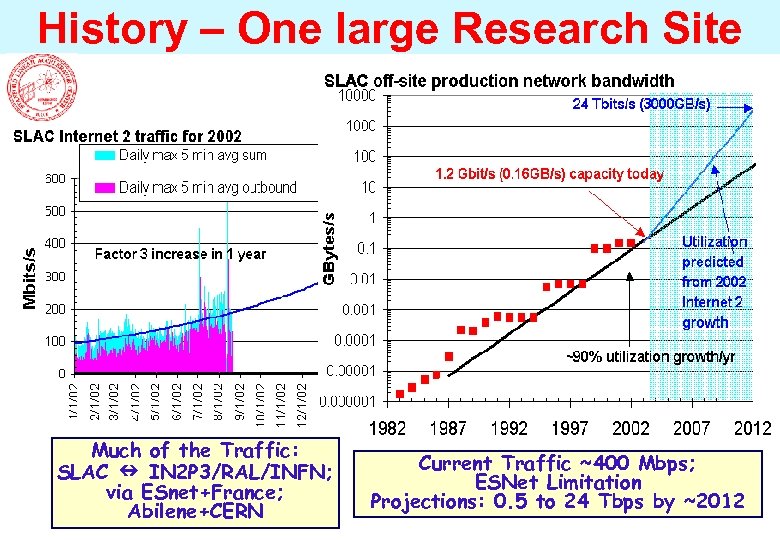

History: One Large Research Site

Much of the traffic: SLAC to IN2P3/RAL/INFN, via ESnet+France and Abilene+CERN. Current traffic ~400 Mbps; ESnet limitation. Projections: 0.5 to 24 Tbps by ~2012

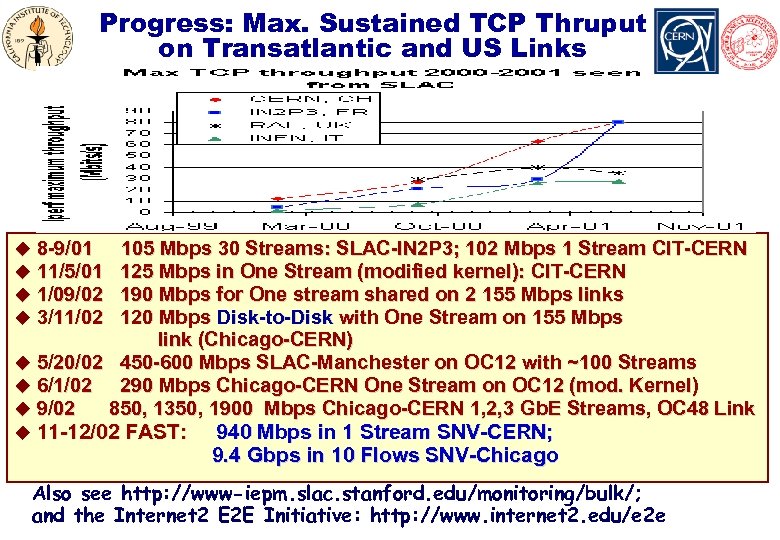

Progress: Max. Sustained TCP Throughput on Transatlantic and US Links *

- 8-9/01: 105 Mbps, 30 Streams: SLAC-IN2P3; 102 Mbps, 1 Stream: CIT-CERN
- 11/5/01: 125 Mbps in One Stream (modified kernel): CIT-CERN
- 1/09/02: 190 Mbps for One Stream shared on two 155 Mbps links
- 3/11/02: 120 Mbps Disk-to-Disk with One Stream on a 155 Mbps link (Chicago-CERN)
- 5/20/02: 450-600 Mbps SLAC-Manchester on OC-12 with ~100 Streams
- 6/1/02: 290 Mbps Chicago-CERN, One Stream on OC-12 (modified kernel)
- 9/02: 850, 1350, 1900 Mbps Chicago-CERN with 1, 2, 3 GbE Streams, OC-48 Link
- 11-12/02 FAST: 940 Mbps in 1 Stream SNV-CERN; 9.4 Gbps in 10 Flows SNV-Chicago

Also see http://www-iepm.slac.stanford.edu/monitoring/bulk/ and the Internet2 E2E Initiative: http://www.internet2.edu/e2e

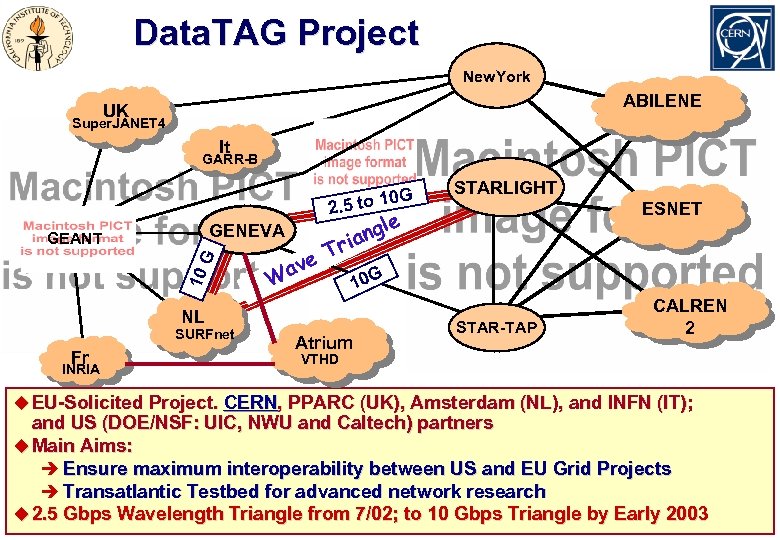

DataTAG Project

[Map: New York, Abilene, ESnet, CALREN2, STARLIGHT, STAR-TAP; SuperJANET4 (UK), GARR-B (It), SURFnet (NL), INRIA, Atrium, VTHD (Fr), GEANT; Geneva; 2.5 to 10G wave triangle]

- EU-Solicited Project. CERN, PPARC (UK), Amsterdam (NL), and INFN (IT); and US (DOE/NSF: UIC, NWU and Caltech) partners
- Main Aims:
  - Ensure maximum interoperability between US and EU Grid Projects
  - Transatlantic Testbed for advanced network research
- 2.5 Gbps Wavelength Triangle from 7/02; to 10 Gbps Triangle by Early 2003

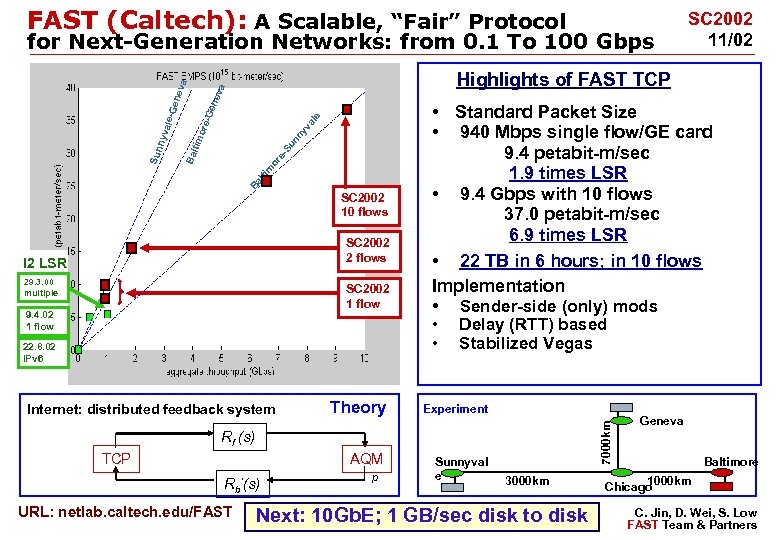

FAST TCP (Caltech): A Scalable, "Fair" Protocol for Next-Generation Networks: from 0.1 To 100 Gbps

C. Jin, D. Wei, S. Low; FAST Team & Partners. URL: netlab.caltech.edu/FAST

[Figure: Internet2 LSR entries (29.3.00, multiple flows; 9.4.02, 1 flow; 22.8.02, IPv6) and SC2002 (11/02) results with 1, 2 and 10 flows on the Baltimore-Sunnyvale and Sunnyvale-Geneva paths; map of Sunnyvale, Chicago, Baltimore and Geneva with segment lengths of 7000 km, 3000 km and 1000 km]

Highlights of FAST TCP:

- Standard Packet Size
- 940 Mbps single flow/GE card: 9.4 petabit-m/sec, 1.9 times LSR
- 9.4 Gbps with 10 flows: 37.0 petabit-m/sec, 6.9 times LSR
- 22 TB in 6 hours, in 10 flows

Implementation:

- Sender-side (only) modifications
- Delay (RTT) based
- Stabilized Vegas

Theory and experiment: the Internet as a distributed feedback system (TCP and AQM, with forward and backward paths R_f(s), R_b'(s)).

Next: 10 GbE; 1 GB/sec disk to disk
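
The Internet2 Land Speed Record (LSR) figures above are quoted as throughput multiplied by path length, in bit-meters per second. The sketch below recomputes the two quoted products as a check. The path lengths are assumptions back-calculated to match the slide: about 10,000 km for the single-flow Sunnyvale-Geneva result and about 3,900 km for the 10-flow result. The slide does not state either distance directly.

```python
def lsr_petabit_m_per_s(throughput_bps: float, distance_km: float) -> float:
    """Throughput times distance, in petabit-meters per second (1 petabit = 1e15 bits)."""
    return throughput_bps * distance_km * 1e3 / 1e15


# Assumed path lengths, back-calculated so the products match the slide.
single_flow = lsr_petabit_m_per_s(940e6, 10_000)   # ~9.4 petabit-m/sec
ten_flows = lsr_petabit_m_per_s(9.4e9, 3_936)      # ~37.0 petabit-m/sec
print(f"{single_flow:.1f} and {ten_flows:.1f} petabit-m/sec")
```

The metric rewards distance as well as speed, because sustaining high TCP throughput gets harder as the round-trip time grows.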

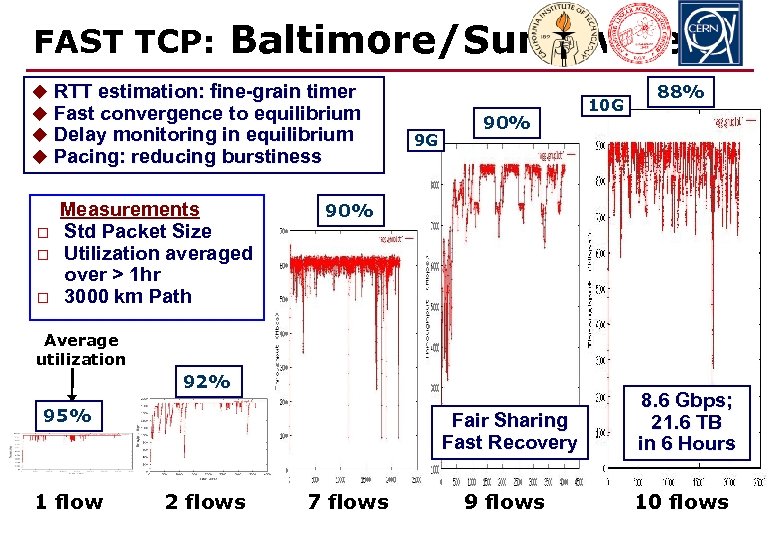

FAST TCP: Baltimore/Sunnyvale

- RTT estimation: fine-grain timer
- Fast convergence to equilibrium
- Delay monitoring in equilibrium
- Pacing: reducing burstiness

Measurements (standard packet size; utilization averaged over > 1 hr; 3000 km path): 1, 2, 7, 9 and 10 flows, with average utilization of 88% to 95%; fair sharing; fast recovery; 8.6 Gbps; 21.6 TB in 6 hours.
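
The delay-based control listed above can be illustrated with the window update described in the FAST TCP literature: w ← min{2w, (1 − γ)w + γ(baseRTT/RTT · w + α)}. The sketch below iterates a simplified form of that rule, with α held constant, on a single hypothetical bottleneck link. In this toy model, queueing delay follows directly from how far the window exceeds the bandwidth-delay product. The capacity, base RTT, α and γ values are illustrative assumptions. The model also ignores loss, pacing and competing flows.

```python
def fast_update(w: float, base_rtt: float, rtt: float, alpha: float, gamma: float) -> float:
    """One FAST-style window update (simplified: alpha held constant)."""
    return min(2 * w, (1 - gamma) * w + gamma * (base_rtt / rtt * w + alpha))


def simulate(capacity_pps: float, base_rtt: float, alpha: float,
             gamma: float = 0.5, w0: float = 10.0, steps: int = 200) -> float:
    """Iterate the update on a single-bottleneck toy model; return the final window (packets)."""
    bdp = capacity_pps * base_rtt               # packets in flight with an empty queue
    w = w0
    for _ in range(steps):
        queued = max(0.0, w - bdp)              # packets waiting in the bottleneck queue
        rtt = base_rtt + queued / capacity_pps  # queueing delay adds to the base RTT
        w = fast_update(w, base_rtt, rtt, alpha, gamma)
    return w


# Hypothetical link: 1000 packets/s, 100 ms base RTT (BDP = 100 packets), alpha = 20.
w_final = simulate(capacity_pps=1000.0, base_rtt=0.1, alpha=20.0)
print(f"equilibrium window ~ {w_final:.1f} packets")  # converges toward BDP + alpha
```

At equilibrium each flow keeps about α packets queued at the bottleneck. Because the window is steered by measured delay rather than by loss, it settles smoothly instead of oscillating.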

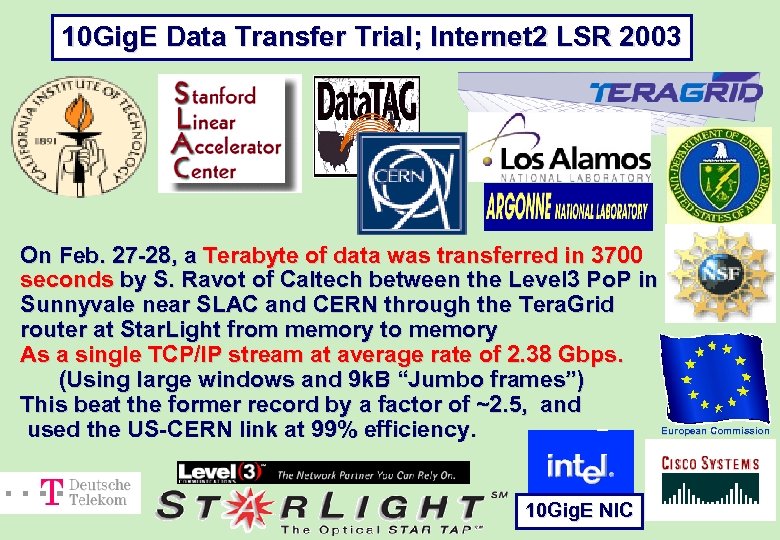

10 GigE Data Transfer Trial; Internet2 LSR 2003

On Feb. 27-28, a Terabyte of data was transferred in 3700 seconds by S. Ravot of Caltech between the Level 3 PoP in Sunnyvale near SLAC and CERN, through the TeraGrid router at StarLight, from memory to memory, as a single TCP/IP stream at an average rate of 2.38 Gbps (using large windows and 9 kB "Jumbo frames"). This beat the former record by a factor of ~2.5, and used the US-CERN link at 99% efficiency.

[Image: 10 GigE NIC]
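
The quoted average rate matches a binary terabyte (2^40 bytes) moved in 3700 s. The sketch below reproduces that arithmetic. It also computes the bandwidth-delay product, which shows why a single stream needed large TCP windows. The 180 ms round-trip time is an illustrative assumption for a Sunnyvale-Geneva path and does not come from the slide.

```python
TERABYTE_BINARY = 2 ** 40  # bytes; a decimal 10**12 would give ~2.16 Gbps instead


def average_rate_gbps(size_bytes: float, seconds: float) -> float:
    """Average throughput in Gbps for size_bytes moved in `seconds`."""
    return size_bytes * 8 / seconds / 1e9


def bdp_megabytes(rate_gbps: float, rtt_s: float) -> float:
    """Bandwidth-delay product: megabytes that must be in flight to keep the pipe full."""
    return rate_gbps * 1e9 * rtt_s / 8 / 1e6


rate = average_rate_gbps(TERABYTE_BINARY, 3700)   # ~2.38 Gbps, as quoted
window = bdp_megabytes(rate, rtt_s=0.180)          # assumed RTT (hypothetical)
print(f"{rate:.2f} Gbps; TCP window needed ~ {window:.0f} MB")
```

A window of tens of megabytes is far beyond the 64 kB that TCP supports without window scaling, so tuned large windows were essential for this kind of transfer.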

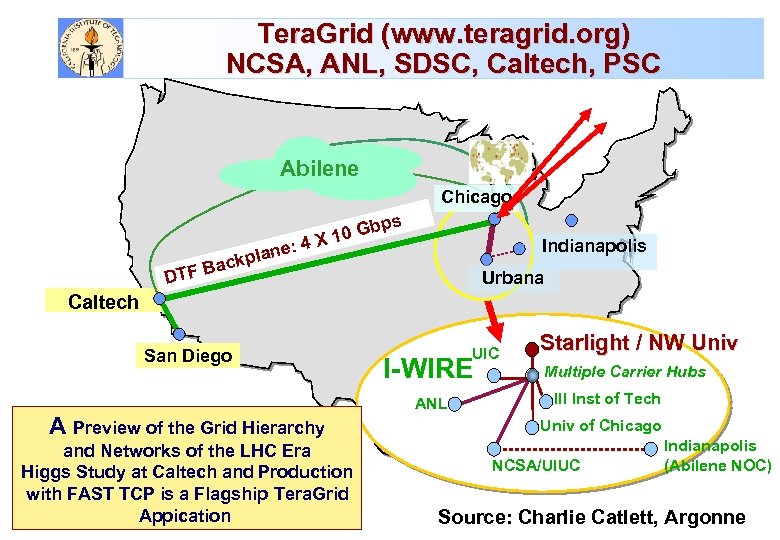

TeraGrid (www.teragrid.org): NCSA, ANL, SDSC, Caltech, PSC

A Preview of the Grid Hierarchy and Networks of the LHC Era

- DTF Backplane: 4 x 10 Gbps (diagram sites: Chicago, Indianapolis, Urbana, Caltech, San Diego)
- Abilene (OC-48, 2.5 Gb/s); Multiple 10 GbE (Qwest); Multiple 10 GbE (I-WIRE Dark Fiber)
- Higgs Study at Caltech and Production with FAST TCP: a Flagship TeraGrid Application
- I-WIRE: UIC, ANL, StarLight / NW Univ, Multiple Carrier Hubs, Ill. Inst. of Tech., Univ. of Chicago, NCSA/UIUC, Indianapolis (Abilene NOC)

Source: Charlie Catlett, Argonne

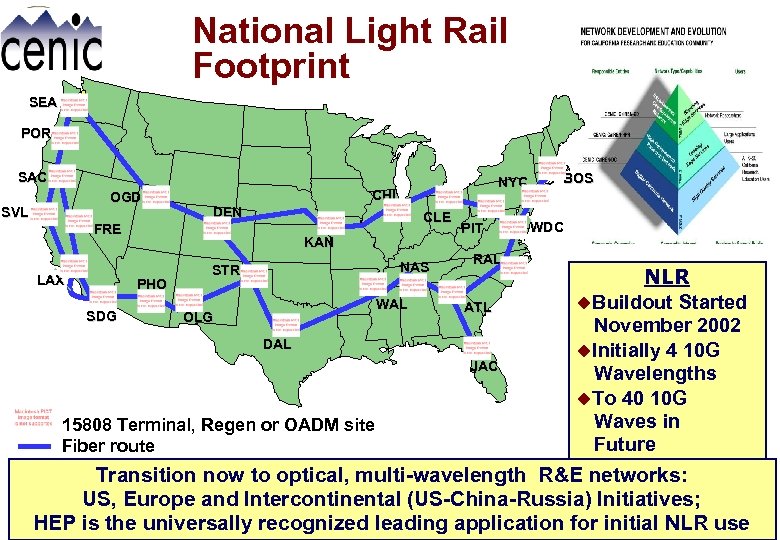

National Light Rail Footprint

[Map: 15808 terminal, regen or OADM sites and fiber route through SEA, POR, SAC, OGD, SVL, CHI, DEN, CLE, FRE, LAX, KAN, PHO, SDG, NYC, NAS, STR, WAL, OLG, PIT, RAL, ATL, DAL, JAC, BOS, WDC]

- NLR Buildout Started November 2002
- Initially 4 10G Wavelengths
- To 40 10G Waves in Future

Transition now to optical, multi-wavelength R&E networks: US, Europe and Intercontinental (US-China-Russia) Initiatives. HEP is the universally recognized leading application for initial NLR use.

HENP Major Links: Bandwidth Roadmap (Scenario) in Gbps

Continuing the Trend: ~1000 Times Bandwidth Growth Per Decade. We are Learning to Use and Share Multi-Gbps Networks Efficiently; HENP is leading the way towards future networks & dynamic Grids.
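
Growth of ~1000 times per decade is equivalent to roughly doubling every year. The short check below assumes smooth compound growth, whereas real link upgrades arrive in discrete steps.

```python
import math


def yearly_factor(growth: float, years: float) -> float:
    """Constant yearly multiplier that compounds to `growth` over `years` years."""
    return growth ** (1.0 / years)


per_year = yearly_factor(1000.0, 10.0)        # 10 ** 0.3, just under 2
doubling_time = 10.0 / math.log2(1000.0)      # years per doubling at that rate
print(f"~{per_year:.2f}x per year; doubling every ~{doubling_time:.2f} years")
```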

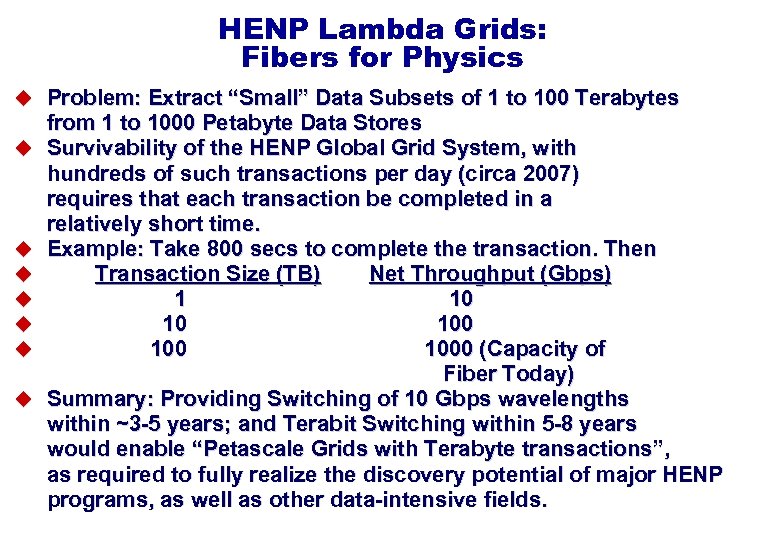

HENP Lambda Grids: Fibers for Physics

- Problem: Extract "Small" Data Subsets of 1 to 100 Terabytes from 1 to 1000 Petabyte Data Stores
- Survivability of the HENP Global Grid System, with hundreds of such transactions per day (circa 2007), requires that each transaction be completed in a relatively short time.
- Example: Take 800 secs to complete the transaction. Then:

| Transaction Size (TB) | Net Throughput (Gbps) |
|---|---|
| 1 | 10 |
| 10 | 100 |
| 100 | 1000 (Capacity of Fiber Today) |

Summary: Providing Switching of 10 Gbps wavelengths within ~3-5 years, and Terabit Switching within 5-8 years, would enable "Petascale Grids with Terabyte transactions", as required to fully realize the discovery potential of major HENP programs, as well as other data-intensive fields.
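
The table follows from throughput = size × 8 / time. The sketch below reproduces it, assuming decimal terabytes (10^12 bytes) and the slide's 800-second transaction budget.

```python
def required_gbps(size_tb: float, seconds: float = 800.0) -> float:
    """Net throughput (Gbps) needed to move size_tb terabytes (10^12 bytes) in `seconds`."""
    return size_tb * 1e12 * 8 / seconds / 1e9


for size_tb in (1, 10, 100):
    print(f"{size_tb:>4} TB in 800 s -> {required_gbps(size_tb):,.0f} Gbps")
```

Each tenfold increase in transaction size needs a tenfold increase in throughput at a fixed time budget. That is why 100 TB transactions reach the capacity of a fiber.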

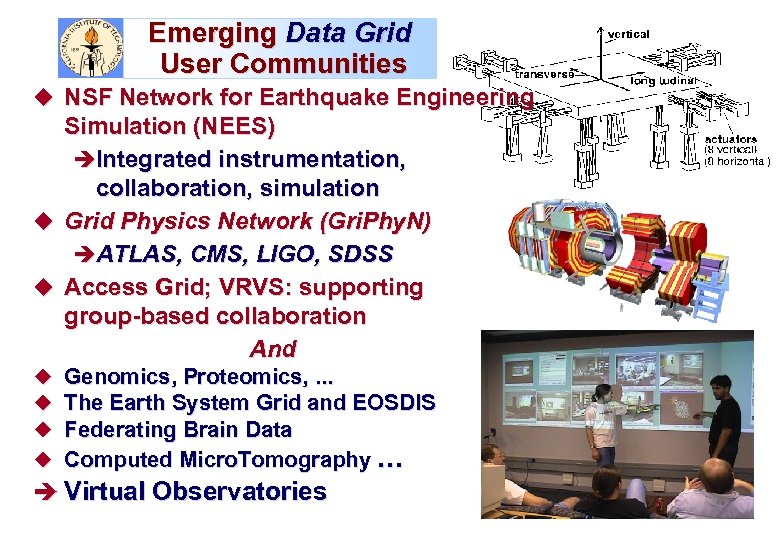

Emerging Data Grid User Communities

- NSF Network for Earthquake Engineering Simulation (NEES)
  - Integrated instrumentation, collaboration, simulation
- Grid Physics Network (GriPhyN)
  - ATLAS, CMS, LIGO, SDSS
- Access Grid; VRVS: supporting group-based collaboration

And:

- Genomics, Proteomics, ...
- The Earth System Grid and EOSDIS
- Federating Brain Data
- Computed MicroTomography ...
  - Virtual Observatories

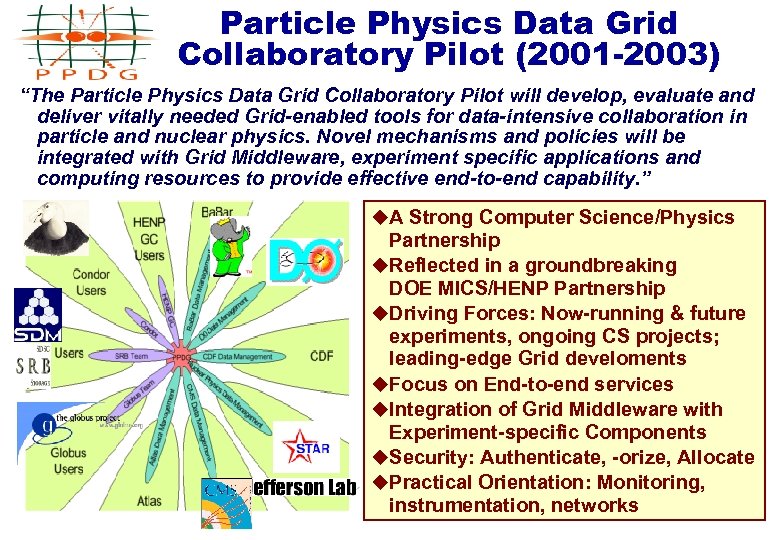

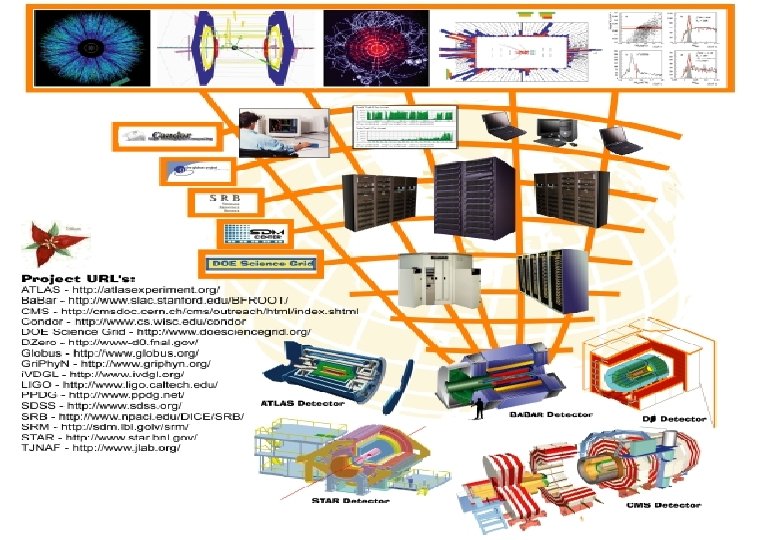

Particle Physics Data Grid Collaboratory Pilot (2001-2003)

"The Particle Physics Data Grid Collaboratory Pilot will develop, evaluate and deliver vitally needed Grid-enabled tools for data-intensive collaboration in particle and nuclear physics. Novel mechanisms and policies will be integrated with Grid Middleware, experiment specific applications and computing resources to provide effective end-to-end capability."

- A Strong Computer Science/Physics Partnership
- Reflected in a groundbreaking DOE MICS/HENP Partnership
- Driving Forces: Now-running & future experiments, ongoing CS projects; leading-edge Grid developments
- Focus on End-to-end services
- Integration of Grid Middleware with Experiment-specific Components
- Security: Authenticate, Authorize, Allocate
- Practical Orientation: Monitoring, instrumentation, networks

PPDG Mission and Foci Today

- Mission: Enabling new scales of research in experimental physics and experimental computer science
  - Advancing Grid Technologies by addressing key issues in architecture, integration, deployment and robustness
- Vertical Integration of Grid Technologies into the Applications frameworks of Major Experimental Programs
  - Ongoing as Grids, Networks and Applications Progress
- Deployment, hardening and extensions of Common Grid services and standards
  - Data replication, storage and job management, monitoring and task execution-planning
- Mission-oriented, Interdisciplinary teams of physicists, software and network engineers, and computer scientists
  - Driven by demanding end-to-end applications of experimental physics

PPDG Accomplishments (I)

- First-Generation HENP Application Grids; Grid Subsystems
  - Production Simulation Grids for ATLAS and CMS; STAR Distributed Analysis Jobs: to 30+ TBytes, 30+ Sites
  - Data Replication for BaBar: Terabyte Stores systematically replicated from California to France and the UK
  - Replication and Storage Management for STAR and JLAB: Development and Deployment of Standard APIs, and Interoperable Implementations
  - Data Transfer, Job and Information Management for D0: GridFTP integrated with SAM; Condor-G job scheduler, MDS resource discovery all integrated with SAM
- Initial Security Infrastructure for Virtual Organizations:
  - PKI certificate management, policies and trust relationships (using DOE Science Grid and Globus)
  - Standardizing Authorization mechanisms: standard callouts for Local Center Authorization for Globus, EDG
  - Prototyping secure credential stores
  - Engagement of site security teams

PPDG Accomplishments (II)

- Data and Storage Management:
  - Robust data transfer over heterogeneous networks using standard and next-generation protocols: GridFTP, bbcp; GridDT, FAST TCP
  - Distributed Data Replica management: SRB, SAM, SRM
  - Common Storage Management interface and services across diverse implementations: SRM - HPSS, Jasmine, Enstore
  - Object Collection management in diverse RDBMS's: CAIGEE, SOCATS
- Job Planning, Execution and Monitoring:
  - Job scheduling based on resource discovery and status: Condor-G and extensions for strategy & policy
  - Retry and Fault Tolerance in response to error conditions: hardened gram, gass-cache, ftsh; Condor-G
  - Distributed monitoring infrastructure for system tracking, resource discovery, resource & job information: MonALISA, MDS, Hawkeye
- Prototypes and Evaluations
  - Grid enabled physics analysis tools; prototypical environments
  - End to end troubleshooting and fault handling
  - Cooperative Monitoring of Grid, Fabric, Applications
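
The "Retry and Fault Tolerance" item refers to tools such as ftsh, which wrap fallible commands in bounded retry loops. The sketch below shows the general idea in Python: it retries a failing operation with exponential backoff up to a fixed number of attempts. It is not ftsh syntax. `flaky_transfer` is a hypothetical stand-in for a GridFTP or similar transfer.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


def retry(op: Callable[[], T], attempts: int = 5, base_delay: float = 1.0,
          sleep: Callable[[float], None] = time.sleep) -> T:
    """Run op(); after each failure wait base_delay * 2**k, giving up after `attempts` tries."""
    for k in range(attempts):
        try:
            return op()
        except Exception:
            if k == attempts - 1:
                raise                      # out of attempts: surface the last error
            sleep(base_delay * 2 ** k)     # exponential backoff: 1, 2, 4, 8 ... seconds
    raise ValueError("attempts must be >= 1")


# Hypothetical transfer that fails twice before succeeding.
calls = {"n": 0}


def flaky_transfer() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise IOError("transient network error")
    return "transfer complete"


delays: List[float] = []
print(retry(flaky_transfer, sleep=delays.append))  # record delays instead of sleeping
print(delays)
```

Injecting `sleep` keeps the demo instant and makes the backoff schedule easy to inspect. A real deployment would use the default `time.sleep`.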

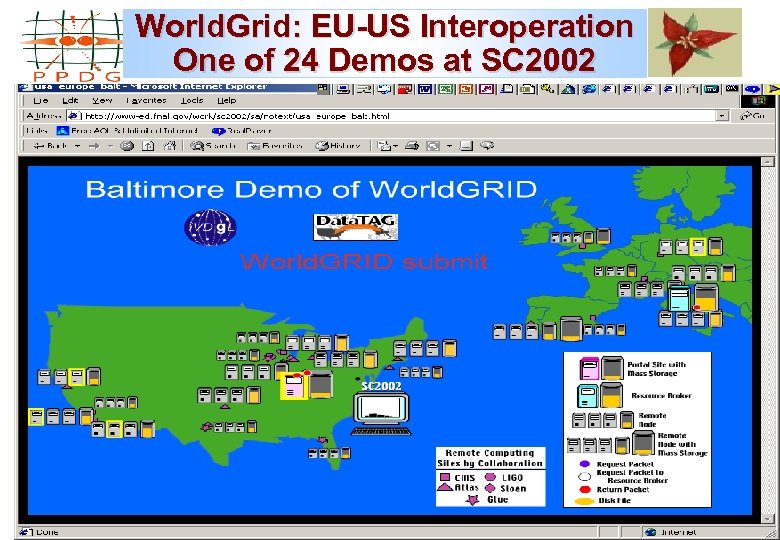

WorldGrid: EU-US Interoperation. One of 24 Demos at SC2002. Collaborating with iVDGL and DataTAG on international grid testbeds will lead to easier deployment of experiment grids across the globe.

PPDG Collaborators Participated in 24 SC2002 Demos

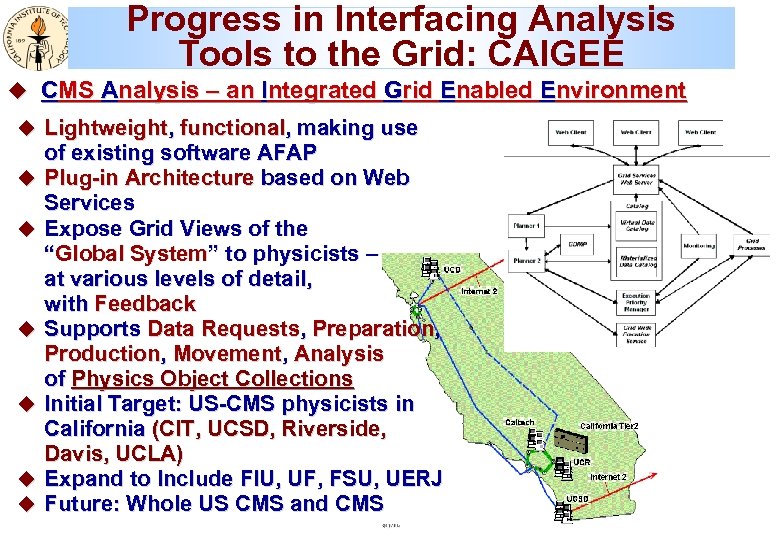

Progress in Interfacing Analysis Tools to the Grid: CAIGEE

- CMS Analysis: an Integrated Grid Enabled Environment
- Lightweight, functional, making use of existing software AFAP
- Plug-in Architecture based on Web Services
- Expose Grid Views of the "Global System" to physicists, at various levels of detail, with Feedback
- Supports Data Requests, Preparation, Production, Movement, Analysis of Physics Object Collections
- Initial Target: US-CMS physicists in California (CIT, UCSD, Riverside, Davis, UCLA)
- Expand to Include FIU, UF, FSU, UERJ
- Future: Whole US CMS and CMS

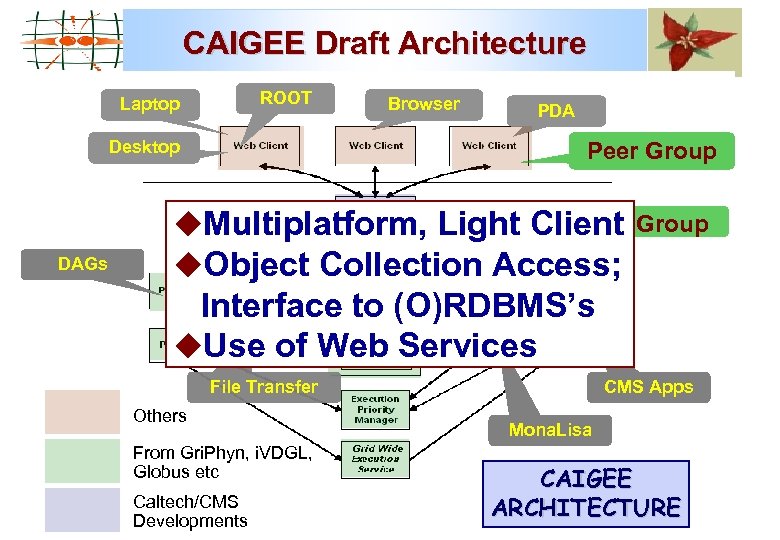

CAIGEE Draft Architecture

[Diagram: ROOT, Laptop, Desktop, Browser and PDA clients; DAGs; Clarens Super Peer and Peer Groups; File Transfer; MonALISA; CMS Apps; Others from GriPhyN, iVDGL, Globus etc.; Caltech/CMS Developments]

- Multiplatform, Light Client
- Object Collection Access; Interface to (O)RDBMS's
- Use of Web Services

PPDG: Common Technologies, Tools & Applications: Who Uses What?

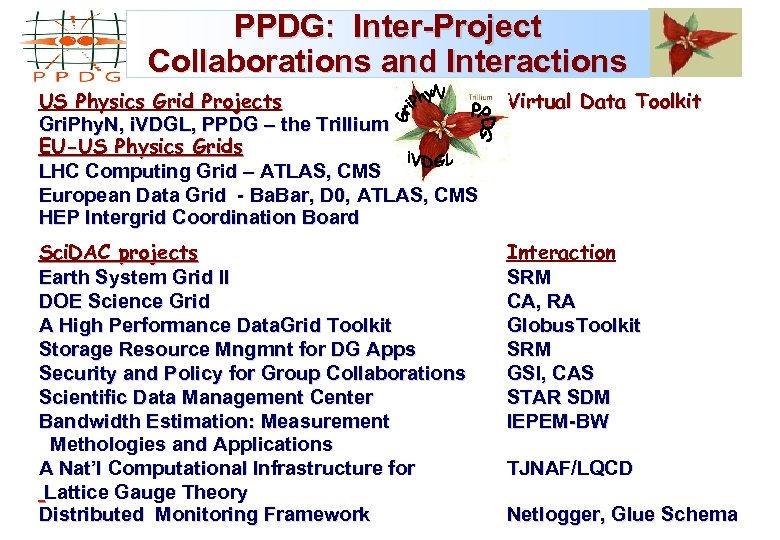

PPDG: Inter-Project Collaborations and Interactions

- US Physics Grid Projects: GriPhyN, iVDGL, PPDG (the Trillium)
- EU-US Physics Grids: LHC Computing Grid (ATLAS, CMS); European Data Grid (BaBar, D0, ATLAS, CMS)
- HEP Intergrid Coordination Board
- Virtual Data Toolkit
- SciDAC projects: Earth System Grid II; DOE Science Grid; A High Performance DataGrid Toolkit; Storage Resource Management for DG Apps; Security and Policy for Group Collaborations; Scientific Data Management Center; Bandwidth Estimation: Measurement Methodologies and Applications; A Nat'l Computational Infrastructure for Lattice Gauge Theory; Distributed Monitoring Framework
- Interactions: SRM; CA, RA; Globus Toolkit; SRM; GSI, CAS; STAR SDM; IEPM-BW; TJNAF/LQCD; NetLogger, Glue Schema

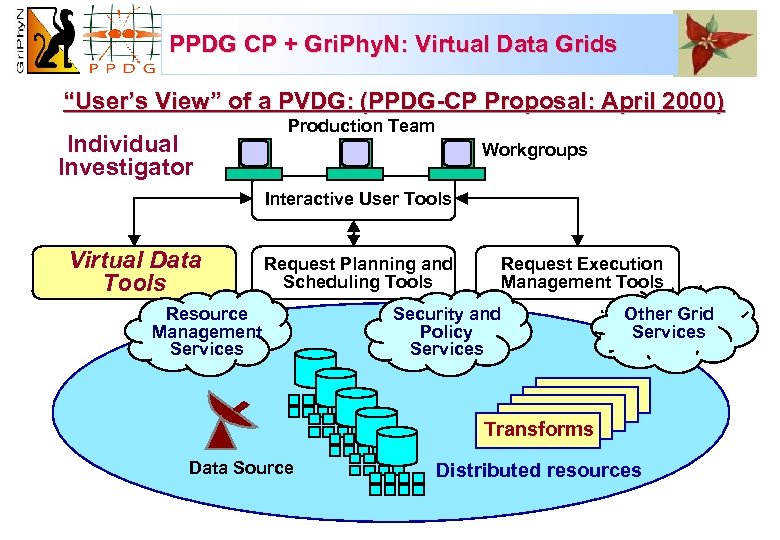

PPDG-CP + GriPhyN: Virtual Data Grids

"User's View" of a PVDG (PPDG-CP Proposal: April 2000):

- Users: Production Team, Individual Investigator, Workgroups
- Interactive User Tools
- Virtual Data Tools; Request Planning and Scheduling Tools; Request Execution Management Tools
- Resource Management Services; Security and Policy Services; Other Grid Services
- Transforms; Data Source; Distributed Resources

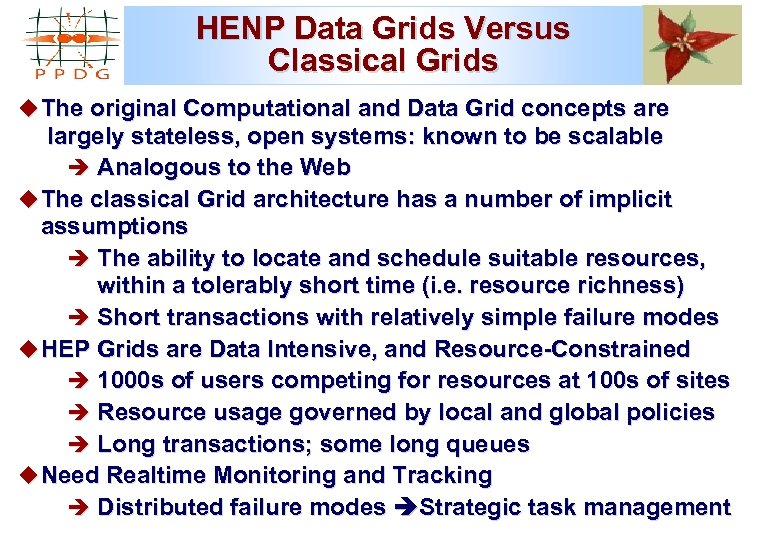

HENP Data Grids Versus Classical Grids

- The original Computational and Data Grid concepts are largely stateless, open systems: known to be scalable
  - Analogous to the Web
- The classical Grid architecture has a number of implicit assumptions
  - The ability to locate and schedule suitable resources, within a tolerably short time (i.e. resource richness)
  - Short transactions with relatively simple failure modes
- HEP Grids are Data Intensive, and Resource-Constrained
  - 1000s of users competing for resources at 100s of sites
  - Resource usage governed by local and global policies
  - Long transactions; some long queues
- Need Realtime Monitoring and Tracking
  - Distributed failure modes
  - Strategic task management

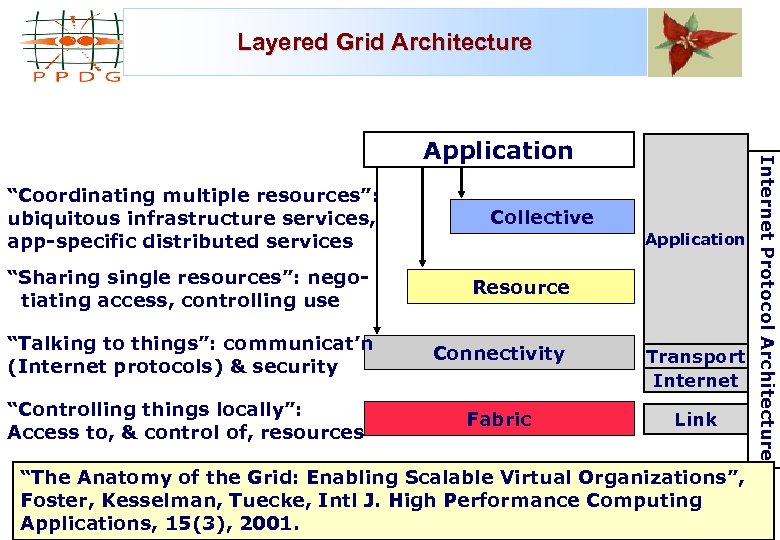

Layered Grid Architecture

- Application
- Collective: "Coordinating multiple resources": ubiquitous infrastructure services, app-specific distributed services
- Resource: "Sharing single resources": negotiating access, controlling use
- Connectivity: "Talking to things": communication (Internet protocols) & security
- Fabric: "Controlling things locally": access to, & control of, resources

Shown alongside the Internet Protocol Architecture: Application; Transport and Internet (beside Connectivity); Link (beside Fabric).

"The Anatomy of the Grid: Enabling Scalable Virtual Organizations", Foster, Kesselman, Tuecke, Intl J. High Performance Computing Applications, 15(3), 2001.

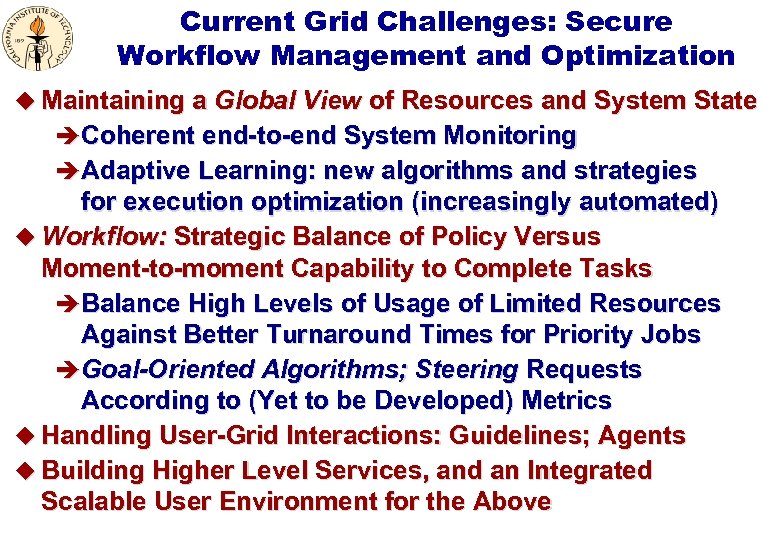

Current Grid Challenges: Secure Workflow Management and Optimization

- Maintaining a Global View of Resources and System State
  - Coherent end-to-end System Monitoring
  - Adaptive Learning: new algorithms and strategies for execution optimization (increasingly automated)
- Workflow: Strategic Balance of Policy Versus Moment-to-moment Capability to Complete Tasks
  - Balance High Levels of Usage of Limited Resources Against Better Turnaround Times for Priority Jobs
  - Goal-Oriented Algorithms; Steering Requests According to (Yet to be Developed) Metrics
- Handling User-Grid Interactions: Guidelines; Agents
- Building Higher Level Services, and an Integrated Scalable User Environment for the Above

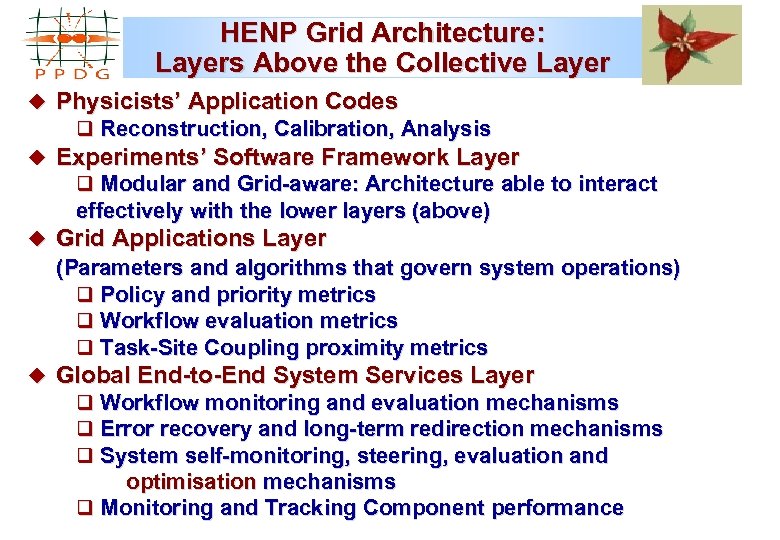

HENP Grid Architecture: Layers Above the Collective Layer

- Physicists' Application Codes
  - Reconstruction, Calibration, Analysis
- Experiments' Software Framework Layer
  - Modular and Grid-aware: Architecture able to interact effectively with the lower layers (above)
- Grid Applications Layer (Parameters and algorithms that govern system operations)
  - Policy and priority metrics
  - Workflow evaluation metrics
  - Task-Site Coupling proximity metrics
- Global End-to-End System Services Layer
  - Workflow monitoring and evaluation mechanisms
  - Error recovery and long-term redirection mechanisms
  - System self-monitoring, steering, evaluation and optimisation mechanisms
  - Monitoring and Tracking Component performance

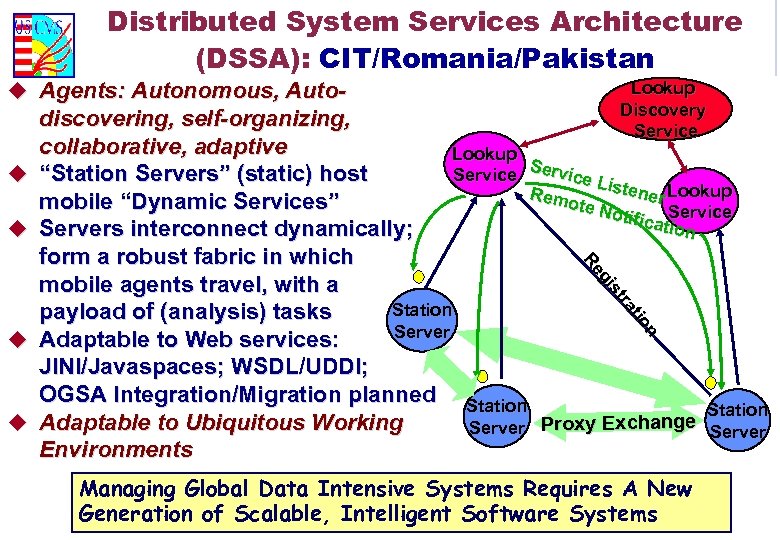

Distributed System Services Architecture (DSSA): CIT/Romania/Pakistan

- Agents: Autonomous, Auto-discovering, self-organizing, collaborative, adaptive
- "Station Servers" (static) host mobile "Dynamic Services"
- Servers interconnect dynamically; form a robust fabric in which mobile agents travel, with a payload of (analysis) tasks
- Adaptable to Web services: JINI/JavaSpaces; WSDL/UDDI; OGSA Integration/Migration planned
- Adaptable to Ubiquitous Working Environments

[Diagram: Station Servers and a Proxy Exchange; Lookup Services and a Lookup Discovery Service; Registration; Listener; Remote Notification]

Managing Global Data Intensive Systems Requires A New Generation of Scalable, Intelligent Software Systems
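
The sketch below shows a minimal form of the registration-and-notification pattern in the DSSA diagram. Station servers register with a lookup service, and listeners receive a notification when a matching service appears. It is plain Python written for illustration and does not use the Jini API. The class names, method names and attribute keys are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Query = Dict[str, str]


@dataclass
class ServiceRecord:
    name: str
    attributes: Dict[str, str]


@dataclass
class LookupService:
    """Toy registry: services register; listeners are notified when a match appears."""
    services: List[ServiceRecord] = field(default_factory=list)
    listeners: List[Tuple[Query, Callable[[ServiceRecord], None]]] = field(default_factory=list)

    def subscribe(self, query: Query, callback: Callable[[ServiceRecord], None]) -> None:
        self.listeners.append((query, callback))

    def register(self, record: ServiceRecord) -> None:
        self.services.append(record)
        for query, callback in self.listeners:
            if self._matches(record, query):
                callback(record)                 # stands in for a remote notification

    def lookup(self, query: Query) -> List[ServiceRecord]:
        return [s for s in self.services if self._matches(s, query)]

    @staticmethod
    def _matches(record: ServiceRecord, query: Query) -> bool:
        return all(record.attributes.get(k) == v for k, v in query.items())


# Hypothetical usage: a listener waits for station servers to appear.
lus = LookupService()
seen: List[str] = []
lus.subscribe({"role": "station"}, lambda r: seen.append(r.name))
lus.register(ServiceRecord("station-cit", {"role": "station", "site": "CIT"}))
lus.register(ServiceRecord("proxy-1", {"role": "proxy"}))
print(seen)
```

In a real deployment registrations would be leased and notifications delivered over the network. The in-process callbacks here only model the control flow.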

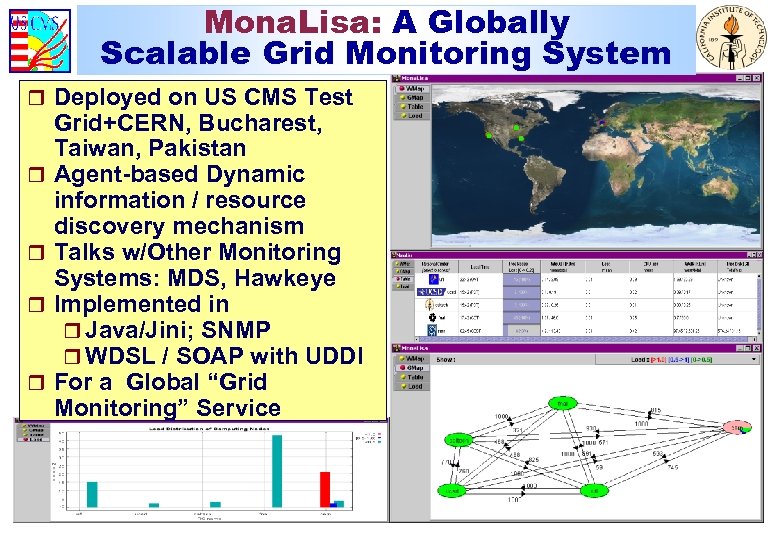

MonALISA: A Globally Scalable Grid Monitoring System

- Deployed on US CMS Test Grid + CERN, Bucharest, Taiwan, Pakistan
- Agent-based Dynamic information / resource discovery mechanism
- Talks w/ Other Monitoring Systems: MDS, Hawkeye
- Implemented in:
  - Java/Jini; SNMP
  - WSDL / SOAP with UDDI
- For a Global "Grid Monitoring" Service

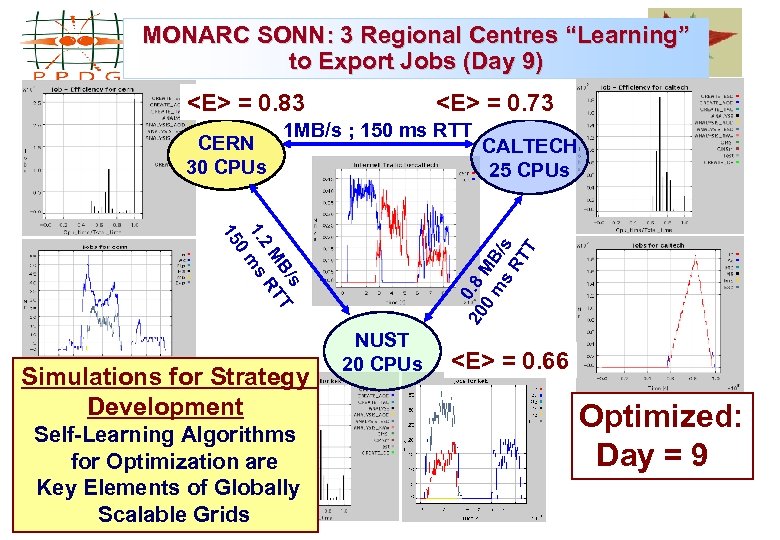

MONARC SONN: 3 Regional Centres "Learning" to Export Jobs (Day 9)

- Centres: CERN (30 CPUs), CALTECH (25 CPUs), NUST (20 CPUs)
- Mean efficiencies shown: <E> = 0.83, 0.73, 0.66
- Links: 1 MB/s, 150 ms RTT; 1.2 MB/s, 150 ms RTT; 0.8 MB/s, 200 ms RTT
- Optimized: Day = 9

Self-Learning Algorithms for Optimization are Key Elements of Globally Scalable Grids. Simulations for Strategy Development.

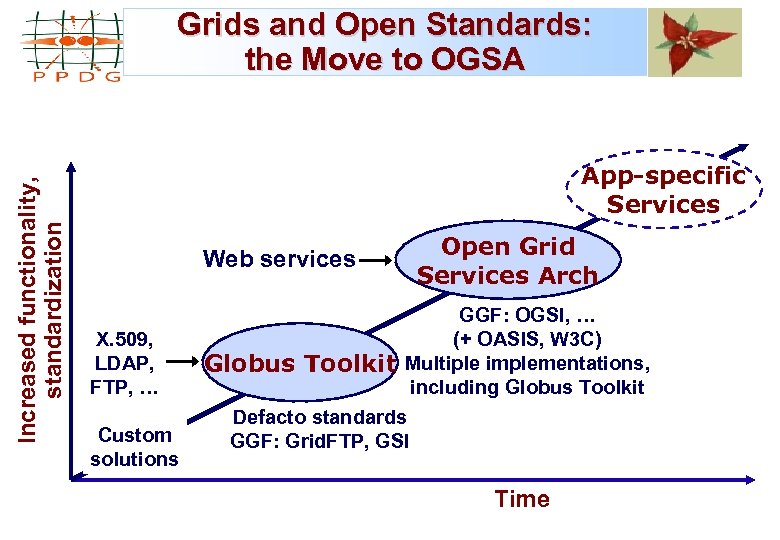

Grids and Open Standards: the Move to OGSA

[Chart: Increased functionality, standardization over Time]

- Custom solutions
- Globus Toolkit: X.509, LDAP, FTP, ...; Defacto standards; GGF: GridFTP, GSI
- Open Grid Services Arch: Web services; GGF: OGSI, ... (+ OASIS, W3C); Multiple implementations, including Globus Toolkit; App-specific Services

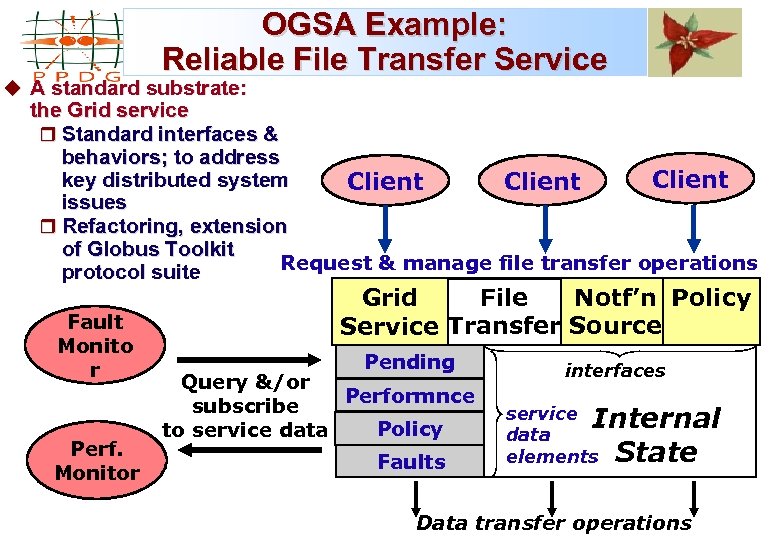

OGSA Example: Reliable File Transfer Service

- A standard substrate: the Grid service
  - Standard interfaces & behaviors to address key distributed system issues
  - Refactoring, extension of Globus Toolkit protocol suite

[Diagram: a Client requests & manages file transfer operations through the File Transfer Grid Service. Service interfaces: Fault Monitor, Perf. Monitor, Notification, Policy. Internal service data elements: Pending transfers, Faults, Performance, Policy. Clients query &/or subscribe to service data; data transfer operations]
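
The sketch below illustrates the "query &/or subscribe to service data" idea. A transfer service tracks per-request state (pending, active, done, failed), retries failed attempts, and pushes every state change to subscribed clients. The states, method names and retry policy are assumptions for illustration. This is not the Globus RFT interface.

```python
from enum import Enum
from typing import Callable, Dict, List


class State(Enum):
    PENDING = "pending"
    ACTIVE = "active"
    DONE = "done"
    FAILED = "failed"


class TransferService:
    """Toy reliable-transfer service: tracks per-request state and notifies subscribers."""

    def __init__(self, max_retries: int = 2) -> None:
        self.max_retries = max_retries
        self.state: Dict[str, State] = {}       # queryable service data
        self.attempts: Dict[str, int] = {}
        self.subscribers: List[Callable[[str, State], None]] = []

    def subscribe(self, callback: Callable[[str, State], None]) -> None:
        self.subscribers.append(callback)

    def _set(self, req_id: str, new_state: State) -> None:
        self.state[req_id] = new_state
        for cb in self.subscribers:
            cb(req_id, new_state)               # push each service-data change

    def submit(self, req_id: str) -> None:
        self.attempts[req_id] = 0
        self._set(req_id, State.PENDING)

    def run(self, req_id: str, do_transfer: Callable[[], bool]) -> State:
        """Try the transfer; retry up to max_retries times after the first attempt."""
        while True:
            self._set(req_id, State.ACTIVE)
            self.attempts[req_id] += 1
            if do_transfer():
                self._set(req_id, State.DONE)
                return State.DONE
            if self.attempts[req_id] > self.max_retries:
                self._set(req_id, State.FAILED)
                return State.FAILED
            self._set(req_id, State.PENDING)    # back in the queue for another try


# Hypothetical usage: the first attempt fails, the second succeeds.
svc = TransferService(max_retries=2)
log: List[tuple] = []
svc.subscribe(lambda rid, st: log.append((rid, st.value)))
svc.submit("req-1")
outcomes = iter([False, True])
print(svc.run("req-1", lambda: next(outcomes)))
print(log)
```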

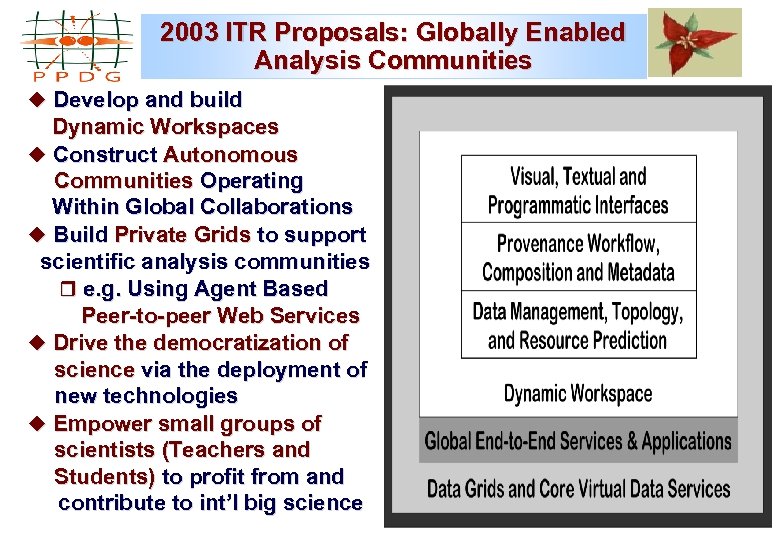

2003 ITR Proposals: Globally Enabled Analysis Communities

- Develop and build Dynamic Workspaces
- Construct Autonomous Communities Operating Within Global Collaborations
- Build Private Grids to support scientific analysis communities
  - e.g. Using Agent Based Peer-to-peer Web Services
- Drive the democratization of science via the deployment of new technologies
- Empower small groups of scientists (Teachers and Students) to profit from and contribute to int'l big science

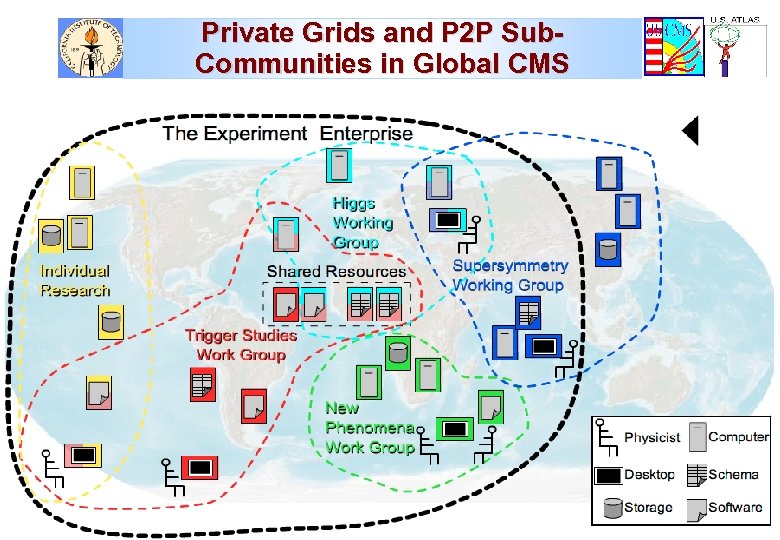

Private Grids and P2P Sub-Communities in Global CMS

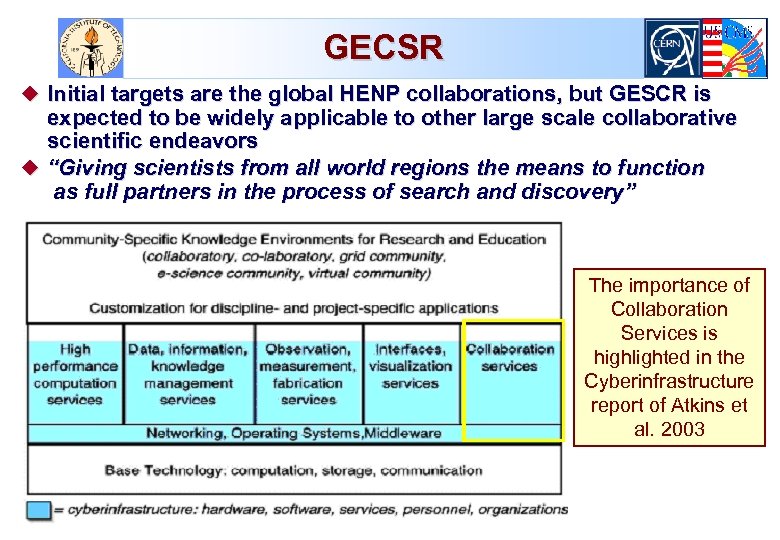

GECSR

- Initial targets are the global HENP collaborations, but GECSR is expected to be widely applicable to other large scale collaborative scientific endeavors
- "Giving scientists from all world regions the means to function as full partners in the process of search and discovery"

The importance of Collaboration Services is highlighted in the Cyberinfrastructure report of Atkins et al. 2003

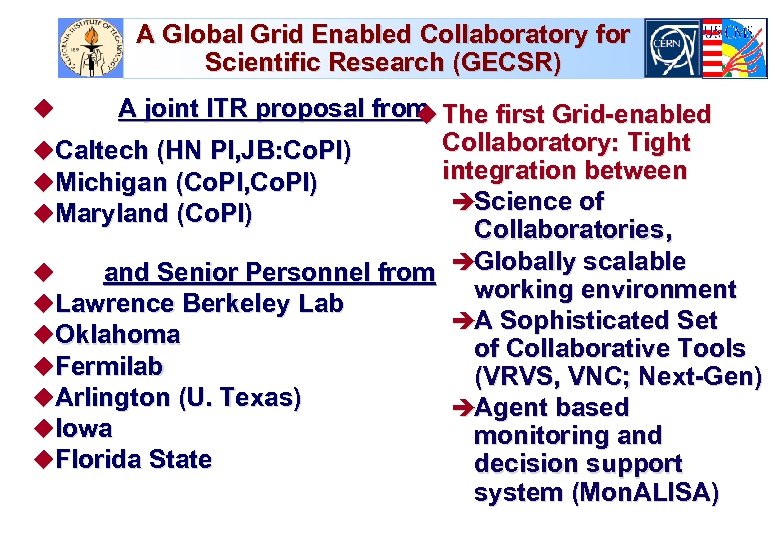

A Global Grid Enabled Collaboratory for Scientific Research (GECSR)

A joint ITR proposal from:

- Caltech (HN PI, JB: CoPI)
- Michigan (CoPI, CoPI)
- Maryland (CoPI)
- and Senior Personnel from: Lawrence Berkeley Lab, Oklahoma, Fermilab, Arlington (U. Texas), Iowa, Florida State

The first Grid-enabled Collaboratory: Tight integration between:

- Science of Collaboratories
- Globally scalable working environment
- A Sophisticated Set of Collaborative Tools (VRVS, VNC; Next-Gen)
- Agent based monitoring and decision support system (MonALISA)

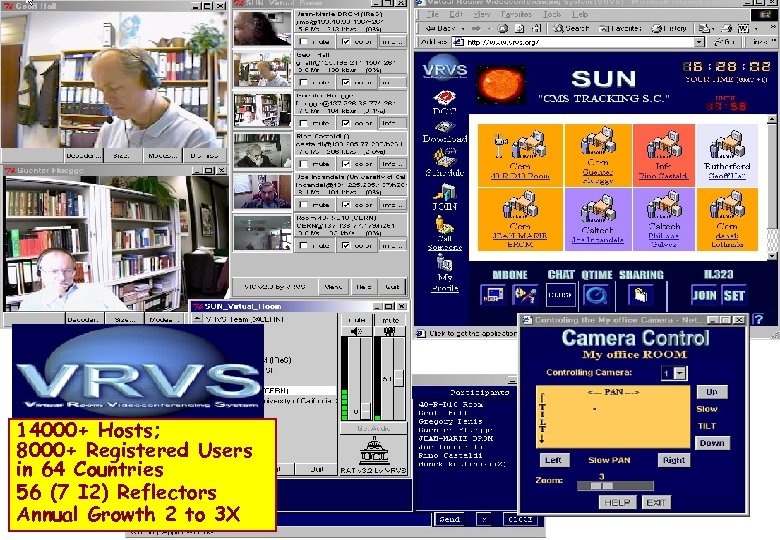

14000+ Hosts; 8000+ Registered Users in 64 Countries; 56 (7 I2) Reflectors; Annual Growth 2 to 3X

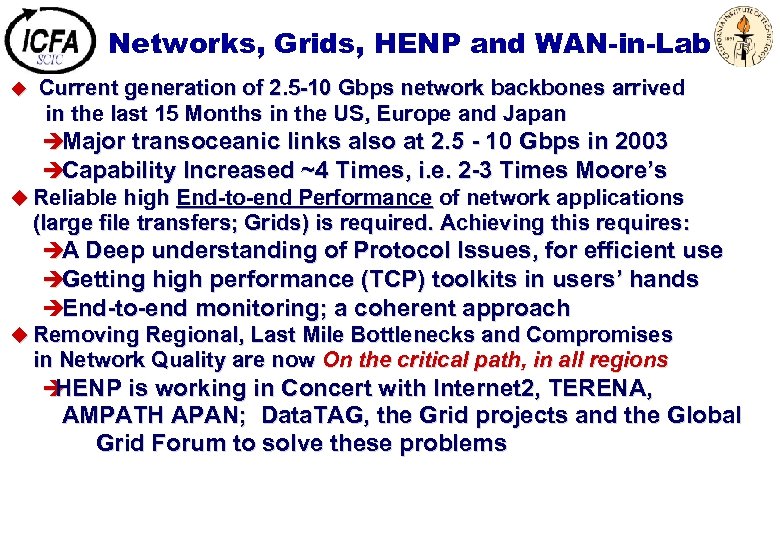

Networks, Grids, HENP and WAN-in-Lab

- Current generation of 2.5-10 Gbps network backbones arrived in the last 15 Months in the US, Europe and Japan
  - Major transoceanic links also at 2.5-10 Gbps in 2003
  - Capability Increased ~4 Times, i.e. 2-3 Times Moore's Law
- Reliable high End-to-end Performance of network applications (large file transfers; Grids) is required. Achieving this requires:
  - A Deep understanding of Protocol Issues, for efficient use
  - Getting high performance (TCP) toolkits in users' hands
  - End-to-end monitoring; a coherent approach
- Removing Regional, Last Mile Bottlenecks and Compromises in Network Quality is now On the critical path, in all regions
  - HENP is working in Concert with Internet2, TERENA, AMPATH, APAN; DataTAG, the Grid projects and the Global Grid Forum to solve these problems

ICFA Standing Committee on Interregional Connectivity (SCIC)

- Created by ICFA in July 1998 in Vancouver; Following ICFA-NTF
- CHARGE: Make recommendations to ICFA concerning the connectivity between the Americas, Asia and Europe (and network requirements of HENP)
  - As part of the process of developing these recommendations, the committee should:
    - Monitor traffic
    - Keep track of technology developments
    - Periodically review forecasts of future bandwidth needs, and
    - Provide early warning of potential problems
- Create subcommittees when necessary to meet the charge
- Representatives: Major labs, ECFA, ACFA, NA Users, S. America
- The chair of the committee should report to ICFA once per year, at its joint meeting with laboratory directors (Feb. 2003)

SCIC in 2002-3: A Period of Intense Activity

- Formed WGs in March 2002; 9 Meetings in 12 Months
- Strong Focus on the Digital Divide
- Presentations at Meetings and Workshops (e.g. LISHEP, APAN, AMPATH, ICTP and ICFA Seminars)
- HENP more visible to governments: in the WSIS Process
- Five Reports; Presented to ICFA Feb. 13, 2003. See http://cern.ch/icfa-scic
  - Main Report: "Networking for HENP"
  - Monitoring WG Report
  - Advanced Technologies WG Report
  - Digital Divide in Russia Report

Authors: [H. Newman et al.] [L. Cottrell] [R. Hughes-Jones, O. Martin et al.] [A. Santoro et al.] [V. Ilyin]

SCIC Work in 2003

- Continue Digital Divide Focus
  - Improve and Systematize Information in Europe; in Cooperation with TERENA and SERENATE
  - More in-depth information on Asia, with APAN
  - More in-depth information on South America, with AMPATH
  - Begin Work on Africa, with ICTP
- Set Up HENP Networks Web Site and Database
  - Share Information on Problems, Pricing; Example Solutions
- Continue and if Possible Strengthen Monitoring Work (IEPM)
- Continue Work on Specific Improvements:
  - Brazil and So. America; Romania; Russia; India; Pakistan, China
- An ICFA-Sponsored Statement at the World Summit on the Information Society (12/03 in Geneva), prepared by SCIC + CERN
- Watch Requirements; the "Lambda" & "Grid Analysis" revolutions
- Discuss, Begin to Create a New "Culture of Collaboration"
