f474c4a34b7b63cebe8d65ea91a6b7f8.ppt

- Number of slides: 40

HENP Grids and Networks: Global Virtual Organizations
Harvey B. Newman, Caltech
Optical Networks and Grids: Meeting the Advanced Network Needs of Science
May 7, 2002
http://l3www.cern.ch/~newman/HENPGrids.Nets_I2Mem050702.ppt

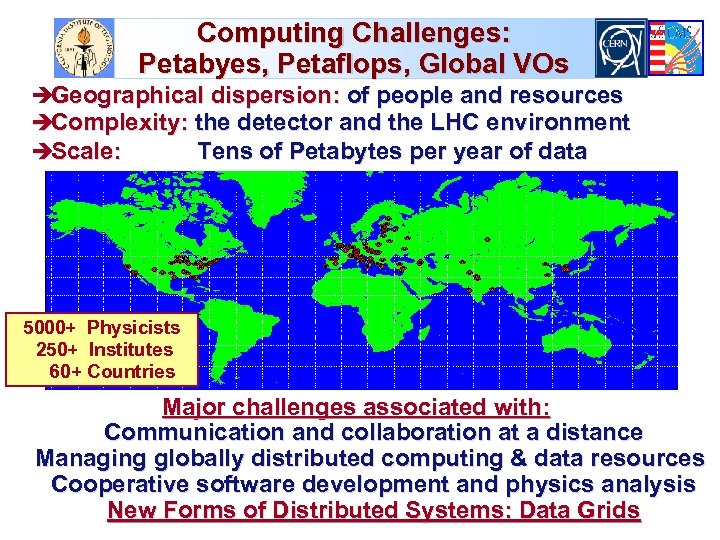

Computing Challenges: Petabytes, Petaflops, Global VOs
- Geographical dispersion: of people and resources
- Complexity: the detector and the LHC environment
- Scale: Tens of Petabytes per year of data
- 5000+ Physicists, 250+ Institutes, 60+ Countries
- Major challenges associated with:
  - Communication and collaboration at a distance
  - Managing globally distributed computing & data resources
  - Cooperative software development and physics analysis
- New Forms of Distributed Systems: Data Grids

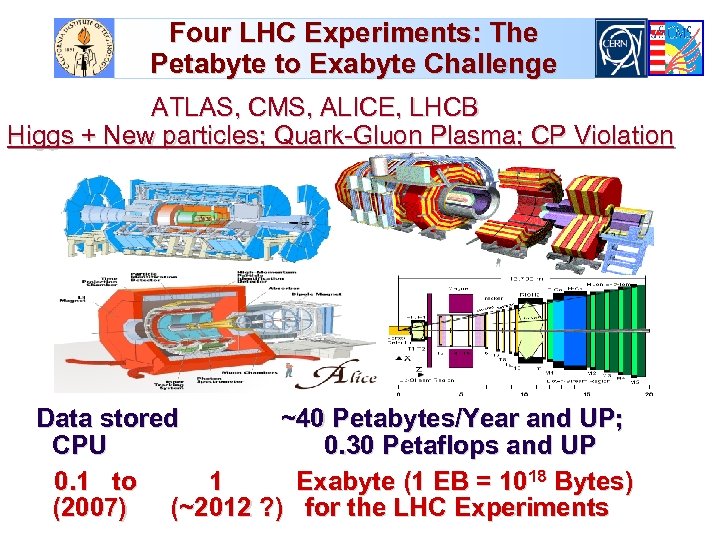

Four LHC Experiments: The Petabyte to Exabyte Challenge
- ATLAS, CMS, ALICE, LHCb
- Higgs + New particles; Quark-Gluon Plasma; CP Violation
- Data stored: ~40 Petabytes/Year and UP; CPU: 0.30 Petaflops and UP (2007)
- 0.1 to 1 Exabyte (1 EB = $10^{18}$ Bytes) for the LHC Experiments (~2012?)

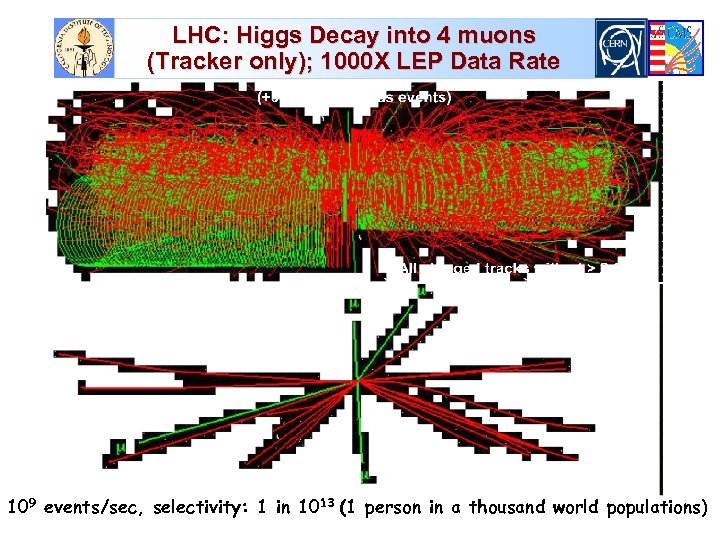

LHC: Higgs Decay into 4 muons (Tracker only); 1000 X LEP Data Rate
- $10^{9}$ events/sec; selectivity: 1 in $10^{13}$ (1 person in a thousand world populations)
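A quick sanity check of the selectivity quoted above, as a minimal Python sketch. At $10^{9}$ events/sec and a selectivity of 1 in $10^{13}$, an event of interest arrives on average once every $10^{4}$ seconds, which is fewer than ten per day. The function name is illustrative only.

```python
EVENT_RATE_HZ = 1e9   # ~10^9 events/sec, as quoted on the slide
SELECTIVITY = 1e-13   # 1 event of interest in 10^13

def seconds_per_selected_event(rate_hz: float, selectivity: float) -> float:
    """Mean time between events that pass a selection with the given selectivity."""
    return 1.0 / (rate_hz * selectivity)

interval = seconds_per_selected_event(EVENT_RATE_HZ, SELECTIVITY)
per_day = 86_400 / interval  # seconds per day / seconds per selected event
print(f"one selected event every {interval:.0f} s, about {per_day:.1f} per day")
```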

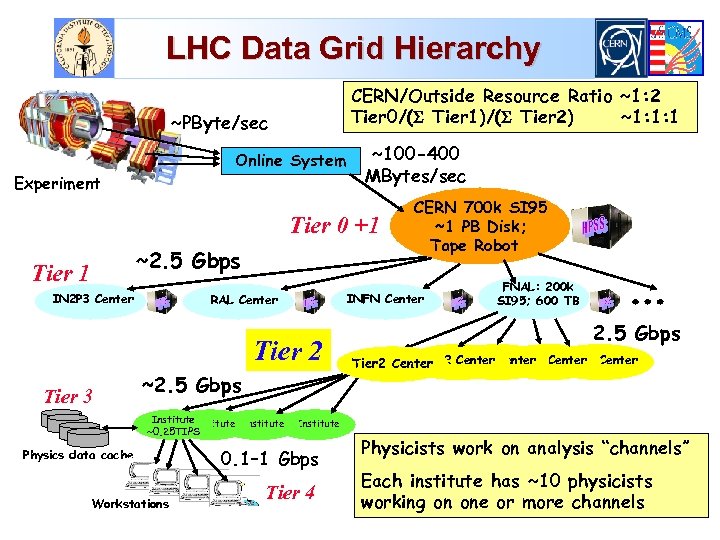

LHC Data Grid Hierarchy
- CERN/Outside Resource Ratio ~1:2; Tier 0/(Tier 1)/(Tier 2) ~1:1:1
- Experiment → Online System: ~PByte/sec
- Online System → Tier 0+1: ~100-400 MBytes/sec
- Tier 0+1: CERN, 700k SI95; ~1 PB Disk; Tape Robot
- Tier 1: FNAL (200k SI95; 600 TB), IN2P3 Center, INFN Center, RAL Center
- Tier 2: Tier 2 Centers
- Tier 3: Institutes (~0.25 TIPS; physics data cache)
- Tier 4: Workstations
- Links from Tier 0+1 down to Tier 3: ~2.5 Gbps; Institute to workstations: 0.1-1 Gbps
- Physicists work on analysis "channels"; each institute has ~10 physicists working on one or more channels
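To make the link speeds in the hierarchy concrete, the sketch below computes the ideal time to move 1 TB over a ~2.5 Gbps Tier 0+1 to Tier 1 link, and over a 1 Gbps institute-to-workstation link (the upper end of the 0.1-1 Gbps range). It assumes the full nominal bandwidth is available and ignores protocol overhead, so real transfers will be slower. The `TierLink` type is hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TierLink:
    """A hypothetical record for one link in the tier hierarchy."""
    source: str
    target: str
    gbps: float  # nominal bandwidth in gigabits per second

def transfer_seconds(size_bytes: float, gbps: float) -> float:
    """Ideal transfer time: bits to send divided by link bandwidth (no overhead)."""
    return size_bytes * 8 / (gbps * 1e9)

LINKS = [
    TierLink("Tier 0+1 (CERN)", "Tier 1", 2.5),
    TierLink("Tier 3 (institute)", "Tier 4 (workstations)", 1.0),
]

ONE_TB = 1e12  # decimal terabyte
for link in LINKS:
    secs = transfer_seconds(ONE_TB, link.gbps)
    print(f"{link.source} -> {link.target}: {secs:.0f} s ({secs / 3600:.2f} h) per TB")
```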

Next Generation Networks for Experiments: Goals and Needs
Large data samples explored and analyzed by thousands of globally dispersed scientists, in hundreds of teams
- Providing rapid access to event samples, subsets and analyzed physics results from massive data stores
  - From Petabytes by 2002, to ~100 Petabytes by 2007, to ~1 Exabyte by ~2012
- Advanced integrated applications, such as Data Grids, rely on seamless operation of our LANs and WANs
  - With reliable, monitored, quantifiable high performance
- Providing analyzed results with rapid turnaround, by coordinating and managing the LIMITED computing, data handling and NETWORK resources effectively
- Enabling rapid access to the data and the collaboration
  - Across an ensemble of networks of varying capability

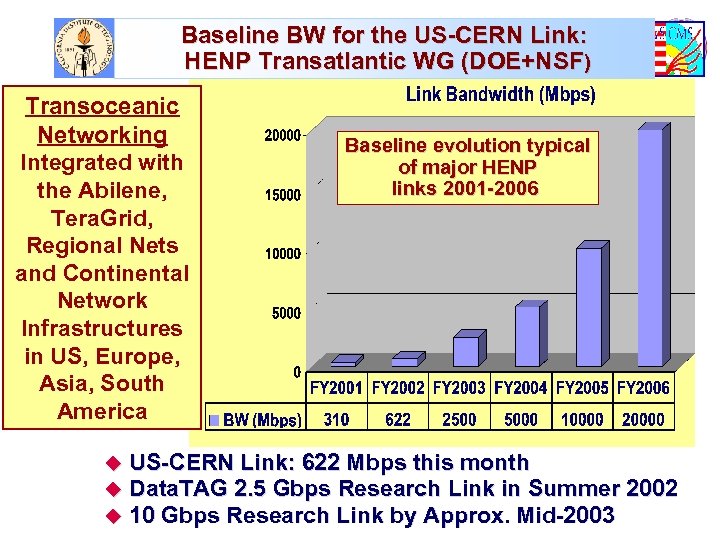

Baseline BW for the US-CERN Link: HENP Transatlantic WG (DOE+NSF)
- Transoceanic Networking Integrated with the Abilene, TeraGrid, Regional Nets and Continental Network Infrastructures in US, Europe, Asia, South America
- Baseline evolution typical of major HENP links, 2001-2006
- US-CERN Link: 622 Mbps this month
- DataTAG 2.5 Gbps Research Link in Summer 2002
- 10 Gbps Research Link by Approx. Mid-2003

![Transatlantic Net WG (HN, L. Price) bandwidth requirements](https://present5.com/presentation/f474c4a34b7b63cebe8d65ea91a6b7f8/image-8.jpg)

Transatlantic Net WG (HN, L. Price) Bandwidth Requirements [*]
- [*] Installed BW; a maximum link occupancy of 50% is assumed. See http://gate.hep.anl.gov/lprice/TAN
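Because the requirements are stated as installed bandwidth under an assumed maximum occupancy of 50%, the installed capacity must be twice the throughput that is actually sustained. Here is a minimal sketch of that relationship. The 1.25 Gbps input is a hypothetical example, not a figure from the table.

```python
MAX_OCCUPANCY = 0.5  # maximum link occupancy assumed by the Transatlantic WG

def installed_bw_gbps(sustained_gbps: float, max_occupancy: float = MAX_OCCUPANCY) -> float:
    """Installed bandwidth needed so that sustained traffic stays at or below max_occupancy."""
    if not 0 < max_occupancy <= 1:
        raise ValueError("max_occupancy must be in (0, 1]")
    return sustained_gbps / max_occupancy

print(installed_bw_gbps(1.25))  # hypothetical 1.25 Gbps sustained -> 2.5 Gbps installed
```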

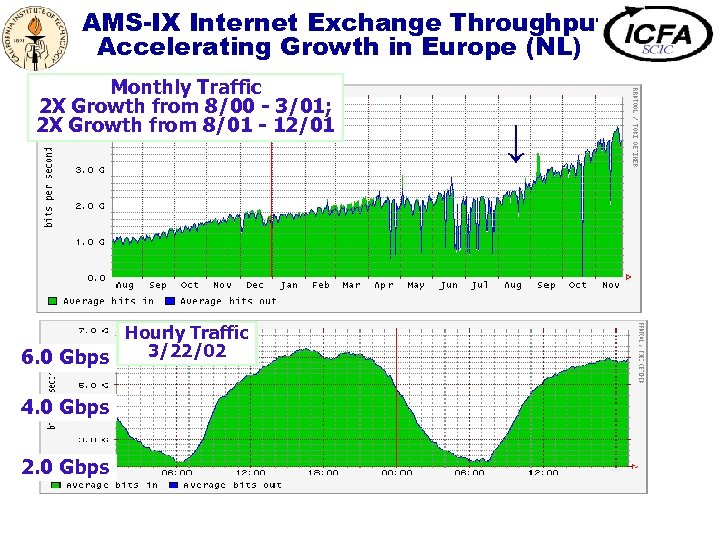

AMS-IX Internet Exchange Throughput: Accelerating Growth in Europe (NL)
- Monthly Traffic: 2 X Growth from 8/00 - 3/01; 2 X Growth from 8/01 - 12/01
- [Plot: Hourly Traffic, 3/22/02; axis in Gbps, up to 6.0 Gbps]
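The two doubling periods on this slide can be converted to annual growth factors, which makes the acceleration explicit. A doubling every 7 months (8/00 to 3/01) is about 3.3X per year. A doubling every 4 months (8/01 to 12/01) is 8X per year. This sketch assumes steady exponential growth within each period.

```python
def annual_growth_factor(doubling_months: float) -> float:
    """Growth over 12 months for traffic that doubles every `doubling_months` months."""
    return 2 ** (12 / doubling_months)

for label, months in [("8/00-3/01", 7), ("8/01-12/01", 4)]:
    print(f"{label}: doubling in {months} months -> {annual_growth_factor(months):.1f}X per year")
```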

Emerging Data Grid User Communities
- NSF Network for Earthquake Engineering Simulation (NEES)
  - Integrated instrumentation, collaboration, simulation
- Grid Physics Network (GriPhyN)
  - ATLAS, CMS, LIGO, SDSS
- Access Grid; VRVS: supporting group-based collaboration
And:
- Genomics, Proteomics, ...
- The Earth System Grid and EOSDIS
- Federating Brain Data
- Computed MicroTomography …
- Virtual Observatories

Upcoming Grid Challenges: Secure Workflow Management and Optimization
- Maintaining a Global View of Resources and System State
  - Coherent end-to-end System Monitoring
  - Adaptive Learning: new paradigms for execution optimization (eventually automated)
- Workflow Management, Balancing Policy Versus Moment-to-moment Capability to Complete Tasks
  - Balance High Levels of Usage of Limited Resources Against Better Turnaround Times for Priority Jobs
  - Matching Resource Usage to Policy Over the Long Term
  - Goal-Oriented Algorithms; Steering Requests According to (Yet to be Developed) Metrics
- Handling User-Grid Interactions: Guidelines; Agents
- Building Higher Level Services, and an Integrated Scalable (Agent-Based) User Environment for the Above

Application Architecture: Interfacing to the Grid
- (Physicists') Application Codes
- Experiments' Software Framework Layer
  - Modular and Grid-aware: Architecture able to interact effectively with the lower layers
- Grid Applications Layer (Parameters and algorithms that govern system operations)
  - Policy and priority metrics
  - Workflow evaluation metrics
  - Task-Site Coupling proximity metrics
- Global End-to-End System Services Layer
  - Monitoring and Tracking Component performance
  - Workflow monitoring and evaluation mechanisms
  - Error recovery and redirection mechanisms
  - System self-monitoring, evaluation and optimization mechanisms

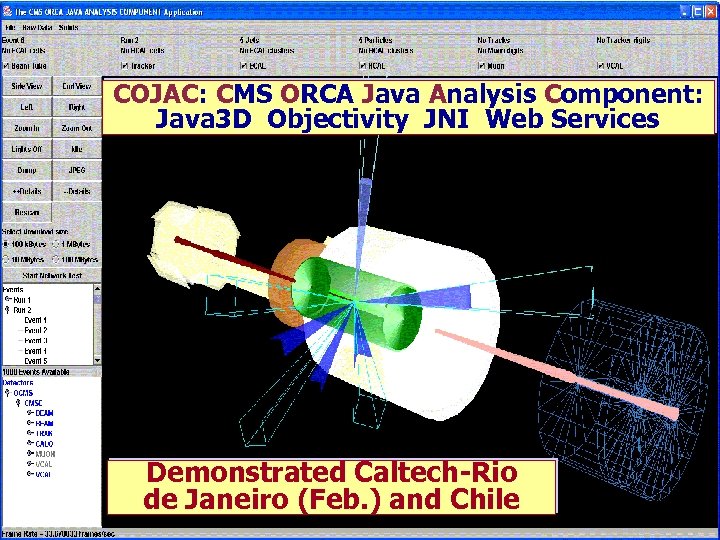

COJAC: CMS ORCA Java Analysis Component
- Java 3D; Objectivity; JNI; Web Services
- Demonstrated Caltech-Rio de Janeiro (Feb.) and Chile

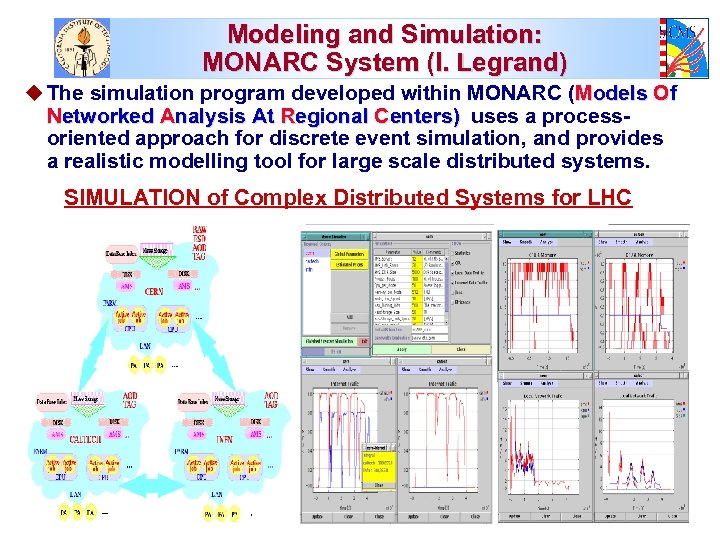

Modeling and Simulation: MONARC System (I. Legrand)
- The simulation program developed within MONARC (Models Of Networked Analysis At Regional Centers) uses a process-oriented approach for discrete event simulation, and provides a realistic modelling tool for large-scale distributed systems.
SIMULATION of Complex Distributed Systems for LHC
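A process-oriented discrete event simulation can be illustrated with a toy Python scheduler. Each simulated activity is a generator that yields how long it wants to hold. The scheduler resumes processes in time order. This is not MONARC's engine. The `Simulator` class and the two-step `job` model (a WAN transfer, then CPU) are hypothetical, and resource contention is deliberately left out.

```python
import heapq
import itertools
from typing import Generator, List, Tuple

Process = Generator[float, None, None]  # each yielded value = seconds to hold

class Simulator:
    """Toy process-oriented discrete-event scheduler (illustrative, not MONARC)."""

    def __init__(self) -> None:
        self.now = 0.0
        self._queue: List[Tuple[float, int, Process]] = []
        self._seq = itertools.count()  # tie-breaker so the heap never compares generators
        self.log: List[str] = []

    def start(self, proc: Process, delay: float = 0.0) -> None:
        """Schedule `proc` to resume `delay` seconds from now."""
        heapq.heappush(self._queue, (self.now + delay, next(self._seq), proc))

    def run(self) -> None:
        """Resume processes in time order until none remain."""
        while self._queue:
            self.now, _, proc = heapq.heappop(self._queue)
            try:
                hold = next(proc)  # run the process up to its next hold
            except StopIteration:
                continue  # the process has finished
            self.start(proc, hold)

def job(sim: Simulator, name: str, transfer_s: float, cpu_s: float) -> Process:
    """Hypothetical job: fetch input over the WAN, then compute."""
    sim.log.append(f"{sim.now:5.1f}s {name}: transfer starts")
    yield transfer_s
    sim.log.append(f"{sim.now:5.1f}s {name}: CPU starts")
    yield cpu_s
    sim.log.append(f"{sim.now:5.1f}s {name}: done")

sim = Simulator()
sim.start(job(sim, "job-A", transfer_s=10.0, cpu_s=30.0))
sim.start(job(sim, "job-B", transfer_s=25.0, cpu_s=5.0), delay=2.0)
sim.run()
print("\n".join(sim.log))
```

Here job-B finishes at t = 32 s and job-A at t = 40 s. A realistic model would add shared CPUs and network links as resources that processes queue for.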

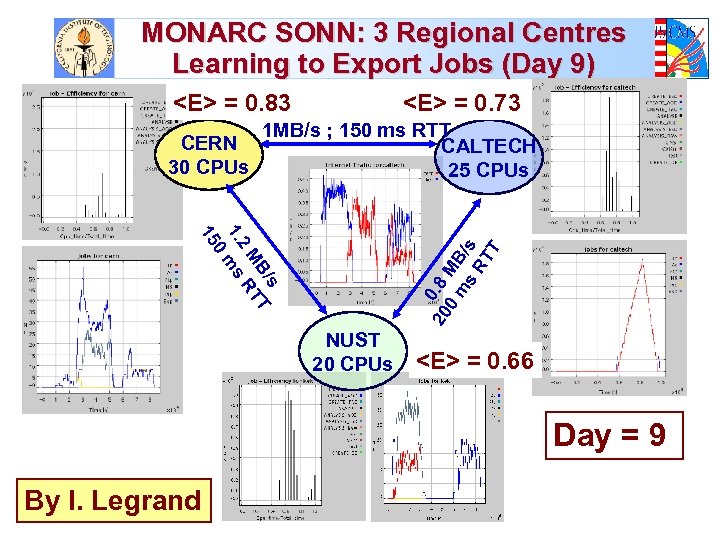

MONARC SONN: 3 Regional Centres Learning to Export Jobs (Day 9)
[Figure, by I. Legrand: regional centres CERN (30 CPUs), CALTECH (25 CPUs) and NUST (20 CPUs), with mean efficiencies <E> = 0.83, 0.73 and 0.66 shown; inter-centre links of 1 MB/s with 150 ms RTT, 0.8 MB/s with 200 ms RTT, and 1.2 MB/s with 150 ms RTT; Day = 9]

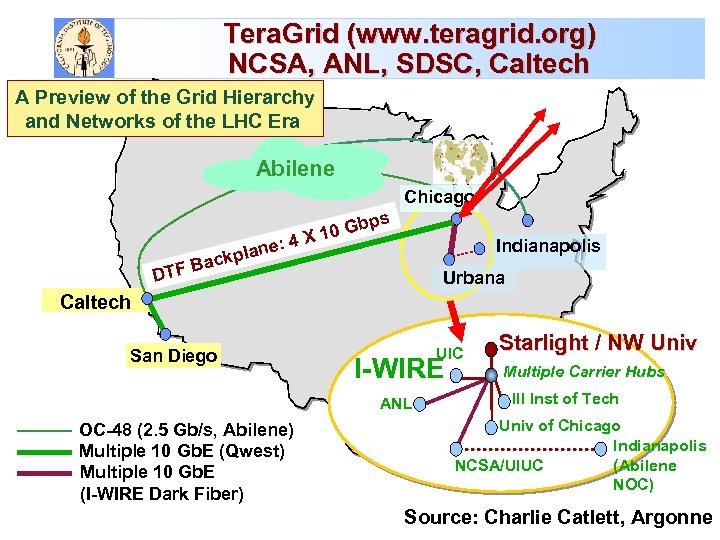

TeraGrid (www.teragrid.org): NCSA, ANL, SDSC, Caltech
A Preview of the Grid Hierarchy and Networks of the LHC Era
[Map: DTF backplane, 4 X 10 Gbps; sites and hubs include Abilene, Chicago, Indianapolis, Urbana, Caltech, San Diego, UIC, I-WIRE, ANL, Starlight / NW Univ, Multiple Carrier Hubs, Ill Inst of Tech, Univ of Chicago, NCSA/UIUC, and Indianapolis (Abilene NOC)]
- OC-48 (2.5 Gb/s, Abilene)
- Multiple 10 GbE (Qwest)
- Multiple 10 GbE (I-WIRE Dark Fiber)
Source: Charlie Catlett, Argonne

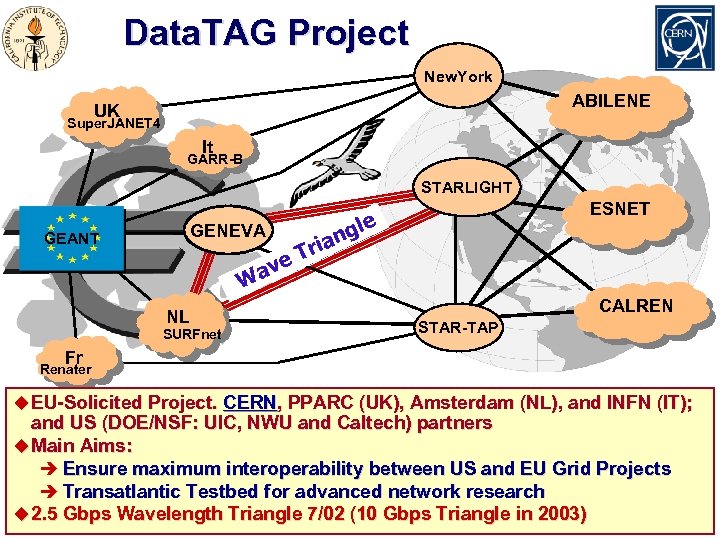

DataTAG Project
[Map labels: New York, ABILENE, UK SuperJANET4, IT GARR-B, STARLIGHT, GENEVA, GEANT, NL SURFnet, ESNET, CALREN, STAR-TAP, FR Renater, Wave Triangle]
- EU-Solicited Project. CERN, PPARC (UK), Amsterdam (NL), and INFN (IT); and US (DOE/NSF: UIC, NWU and Caltech) partners
- Main Aims:
  - Ensure maximum interoperability between US and EU Grid Projects
  - Transatlantic Testbed for advanced network research
- 2.5 Gbps Wavelength Triangle 7/02 (10 Gbps Triangle in 2003)

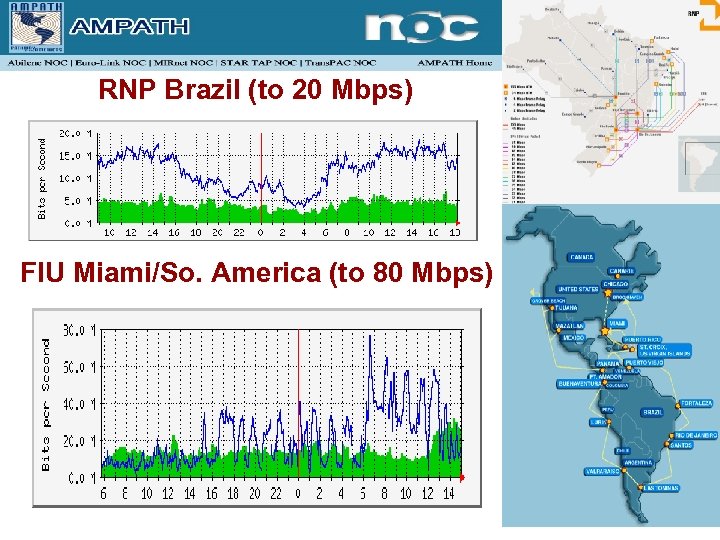

RNP Brazil (to 20 Mbps) FIU Miami/So. America (to 80 Mbps)

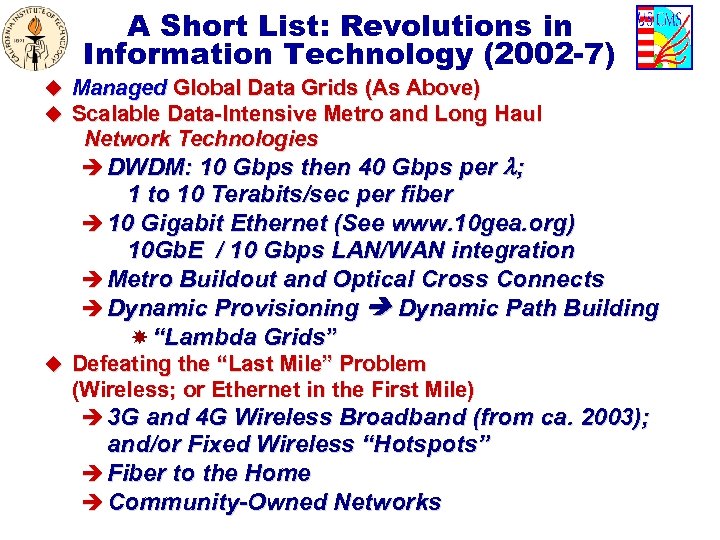

A Short List: Revolutions in Information Technology (2002-7)
- Managed Global Data Grids (As Above)
- Scalable Data-Intensive Metro and Long Haul Network Technologies
  - DWDM: 10 Gbps then 40 Gbps per λ; 1 to 10 Terabits/sec per fiber
  - 10 Gigabit Ethernet (See www.10gea.org); 10 GbE / 10 Gbps LAN/WAN integration
  - Metro Buildout and Optical Cross Connects
  - Dynamic Provisioning; Dynamic Path Building; "Lambda Grids"
- Defeating the "Last Mile" Problem (Wireless; or Ethernet in the First Mile)
  - 3G and 4G Wireless Broadband (from ca. 2003); and/or Fixed Wireless "Hotspots"
  - Fiber to the Home
  - Community-Owned Networks
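DWDM per-fiber capacity is the per-wavelength rate multiplied by the number of wavelengths. The sketch inverts that relationship to show how many wavelengths each per-fiber figure implies. Pairing 1 Tbps with 10 Gbps channels and 10 Tbps with 40 Gbps channels is an assumption made for illustration.

```python
import math

def wavelengths_needed(fiber_tbps: float, per_lambda_gbps: float) -> int:
    """Number of DWDM channels needed to reach a per-fiber capacity."""
    return math.ceil(fiber_tbps * 1000 / per_lambda_gbps)

print(wavelengths_needed(1, 10))   # 1 Tbps from 10 Gbps channels
print(wavelengths_needed(10, 40))  # 10 Tbps from 40 Gbps channels
```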

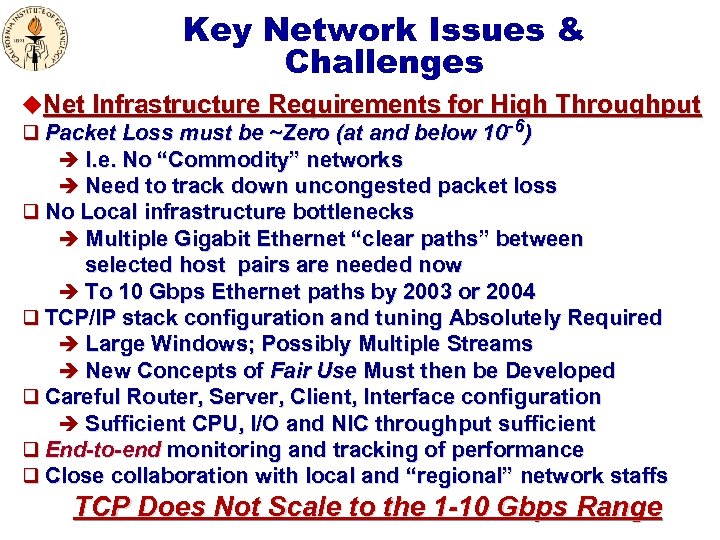

Key Network Issues & Challenges
- Net Infrastructure Requirements for High Throughput
  - Packet Loss must be ~Zero (at and below $10^{-6}$)
    - I.e. No "Commodity" networks
    - Need to track down uncongested packet loss
  - No Local infrastructure bottlenecks
    - Multiple Gigabit Ethernet "clear paths" between selected host pairs are needed now
    - To 10 Gbps Ethernet paths by 2003 or 2004
  - TCP/IP stack configuration and tuning Absolutely Required
    - Large Windows; Possibly Multiple Streams
    - New Concepts of Fair Use Must then be Developed
  - Careful Router, Server, Client, Interface configuration
    - Sufficient CPU, I/O and NIC throughput
  - End-to-end monitoring and tracking of performance
  - Close collaboration with local and "regional" network staffs
TCP Does Not Scale to the 1-10 Gbps Range
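Large windows are needed because TCP must keep a full bandwidth-delay product of data in flight to fill a path. The window (and the socket buffers, e.g. `SO_SNDBUF`/`SO_RCVBUF`) must therefore be at least that large. The minimal sketch below uses the 170 ms US-CERN round-trip time from the TCP study in this deck. With parallel streams, each stream needs only its share of the window.

```python
RTT_S = 0.170  # US-CERN round-trip time quoted in the TCP study

def bdp_bytes(bandwidth_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to fill the path."""
    return bandwidth_bps * rtt_s / 8

def per_stream_window(bandwidth_bps: float, rtt_s: float, streams: int) -> float:
    """Window each of `streams` parallel TCP connections needs to share the path evenly."""
    if streams < 1:
        raise ValueError("streams must be >= 1")
    return bdp_bytes(bandwidth_bps, rtt_s) / streams

for rate_bps in (155e6, 622e6, 2.5e9, 10e9):
    print(f"{rate_bps / 1e6:>6.0f} Mbps x {RTT_S * 1000:.0f} ms -> "
          f"{bdp_bytes(rate_bps, RTT_S) / 1e6:.1f} MB window")
```

At 10 Gbps the required window exceeds 200 MB, which is one way to see why stack tuning is "absolutely required" at these speeds.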

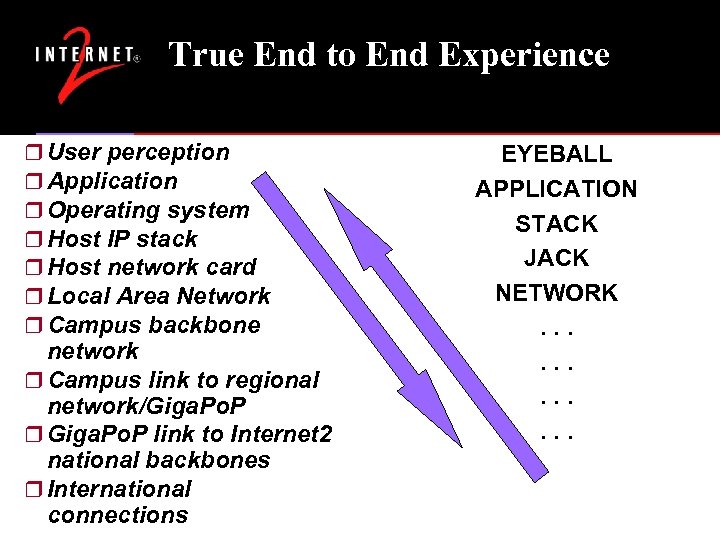

True End to End Experience
- User perception
- Application
- Operating system
- Host IP stack
- Host network card
- Local Area Network
- Campus backbone network
- Campus link to regional network/GigaPoP
- GigaPoP link to Internet2 national backbones
- International connections
[Figure labels: EYEBALL, APPLICATION, STACK, JACK, NETWORK, ...]
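One way to read the end-to-end list is that delivered throughput can be no higher than the capacity of the weakest element along the path. The sketch finds that element. The capacities are hypothetical placeholders, not measurements.

```python
from typing import Dict, Tuple

def bottleneck(path: Dict[str, float]) -> Tuple[str, float]:
    """Return the element with the lowest capacity (in Mbps) along an end-to-end path."""
    if not path:
        raise ValueError("path must contain at least one element")
    name = min(path, key=lambda element: path[element])
    return name, path[name]

# Hypothetical capacities in Mbps, for illustration only.
PATH = {
    "Host IP stack (untuned window)": 60.0,
    "Host network card": 1000.0,
    "Local Area Network": 1000.0,
    "Campus backbone network": 1000.0,
    "Campus link to regional network/GigaPoP": 622.0,
    "GigaPoP link to Internet2 national backbones": 2500.0,
    "International connections": 622.0,
}

element, mbps = bottleneck(PATH)
print(f"end-to-end limit: {mbps:.0f} Mbps, set by {element}")
```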

![Internet2 HENP WG](https://present5.com/presentation/f474c4a34b7b63cebe8d65ea91a6b7f8/image-22.jpg)

Internet2 HENP WG [*]
- Mission: To help ensure that the required
  - National and international network infrastructures (end-to-end)
  - Standardized tools and facilities for high performance and end-to-end monitoring and tracking, and
  - Collaborative systems
- are developed and deployed in a timely manner, and used effectively to meet the needs of the US LHC and other major HENP Programs, as well as the at-large scientific community.
  - To carry out these developments in a way that is broadly applicable across many fields
- Formed an Internet2 WG as a suitable framework: Oct. 26, 2001
- [*] Co-Chairs: S. McKee (Michigan), H. Newman (Caltech); Sec'y J. Williams (Indiana)
- Website: http://www.internet2.edu/henp; also see the Internet2 End-to-end Initiative: http://www.internet2.edu/e2e

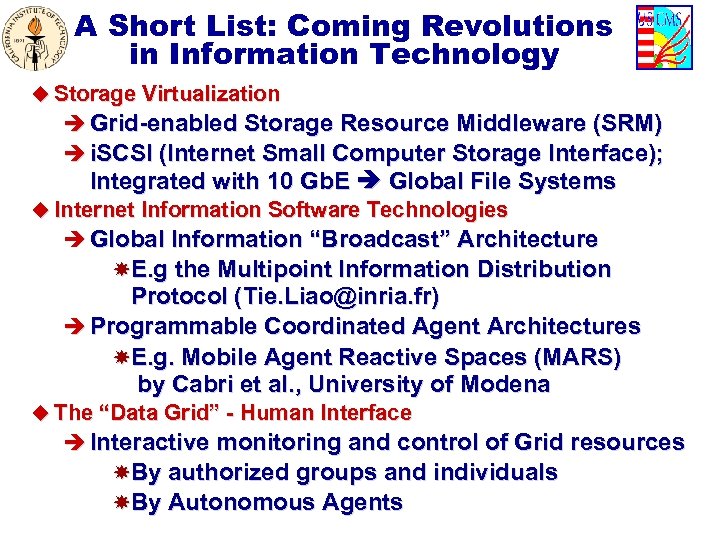

A Short List: Coming Revolutions in Information Technology
- Storage Virtualization
  - Grid-enabled Storage Resource Manager (SRM) middleware
  - iSCSI (Internet Small Computer Systems Interface); Integrated with 10 GbE
  - Global File Systems
- Internet Information Software Technologies
  - Global Information "Broadcast" Architecture, e.g. the Multipoint Information Distribution Protocol (Tie.Liao@inria.fr)
  - Programmable Coordinated Agent Architectures, e.g. Mobile Agent Reactive Spaces (MARS) by Cabri et al., University of Modena
- The "Data Grid" - Human Interface
  - Interactive monitoring and control of Grid resources
    - By authorized groups and individuals
    - By Autonomous Agents

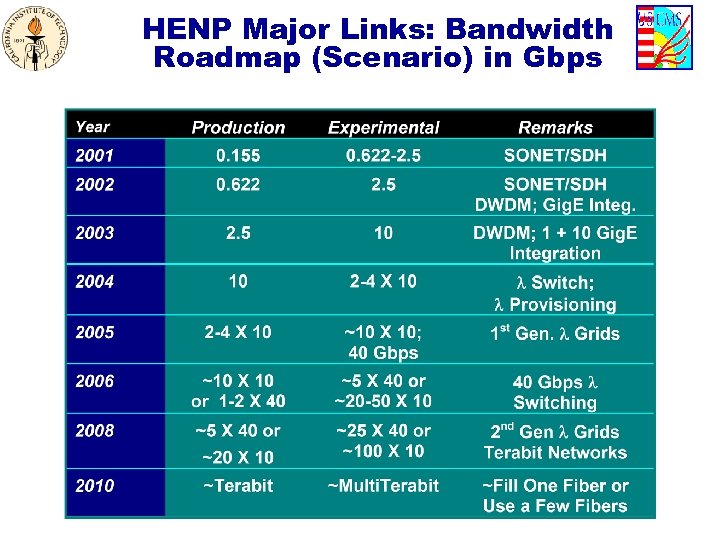

HENP Major Links: Bandwidth Roadmap (Scenario) in Gbps

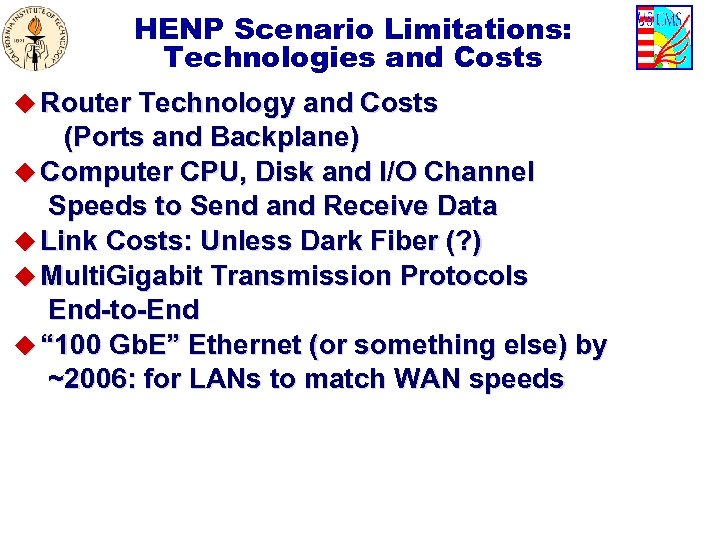

HENP Scenario Limitations: Technologies and Costs
- Router Technology and Costs (Ports and Backplane)
- Computer CPU, Disk and I/O Channel Speeds to Send and Receive Data
- Link Costs: Unless Dark Fiber (?)
- Multi-Gigabit Transmission Protocols End-to-End
- "100 GbE" Ethernet (or something else) by ~2006: for LANs to match WAN speeds

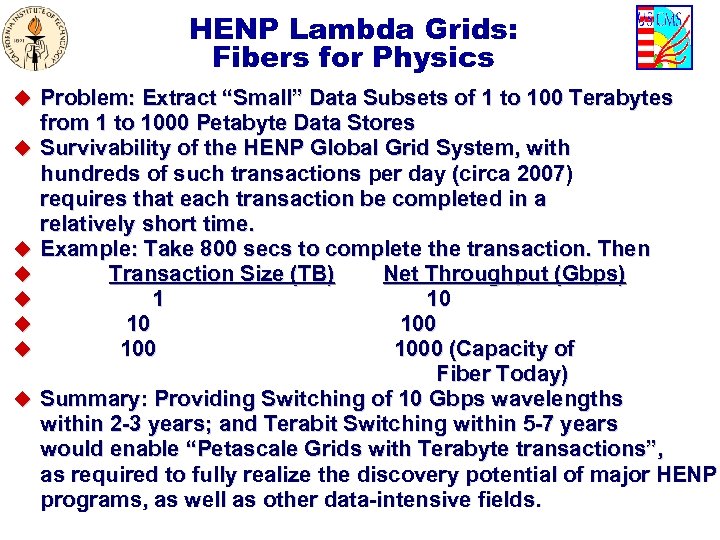

HENP Lambda Grids: Fibers for Physics
- Problem: Extract "Small" Data Subsets of 1 to 100 Terabytes from 1 to 1000 Petabyte Data Stores
- Survivability of the HENP Global Grid System, with hundreds of such transactions per day (circa 2007), requires that each transaction be completed in a relatively short time.
- Example: Take 800 secs to complete the transaction. Then:

| Transaction Size (TB) | Net Throughput (Gbps) |
|---|---|
| 1 | 10 |
| 10 | 100 |
| 100 | 1000 (Capacity of Fiber Today) |

- Summary: Providing Switching of 10 Gbps wavelengths within 2-3 years, and Terabit Switching within 5-7 years, would enable "Petascale Grids with Terabyte transactions", as required to fully realize the discovery potential of major HENP programs, as well as other data-intensive fields.
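The throughput figures for the 800 s transactions follow from throughput = (size in bits) / (time). Here is a minimal check, assuming decimal units (1 TB = $10^{12}$ bytes, 1 Gbps = $10^{9}$ bits/s):

```python
TRANSACTION_SECONDS = 800.0  # target completion time from the example

def required_gbps(size_tb: float, seconds: float = TRANSACTION_SECONDS) -> float:
    """Sustained network throughput (Gbps) needed to move size_tb terabytes in `seconds`."""
    bits = size_tb * 1e12 * 8
    return bits / seconds / 1e9

for size_tb in (1, 10, 100):
    print(f"{size_tb:>3} TB in {TRANSACTION_SECONDS:.0f} s -> {required_gbps(size_tb):.0f} Gbps")
```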

10614 Hosts; 6003 Registered Users in 60 Countries; 41 Reflectors (7 on Internet2); Annual Growth 2 to 3X

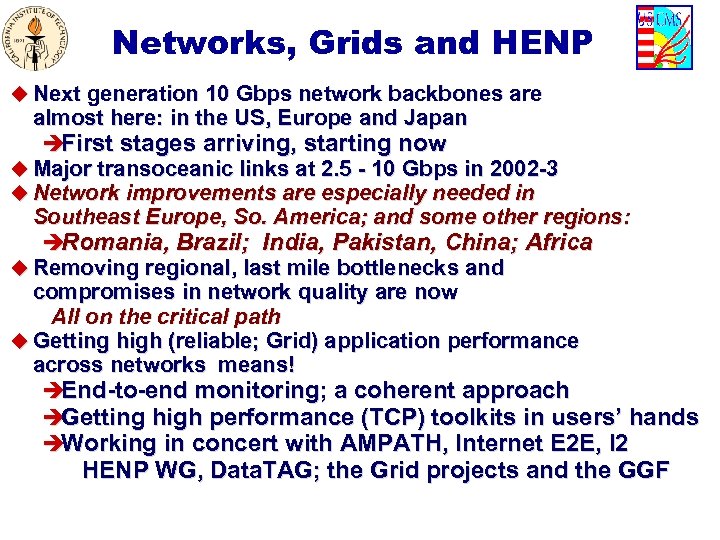

Networks, Grids and HENP
- Next generation 10 Gbps network backbones are almost here: in the US, Europe and Japan
  - First stages arriving, starting now
- Major transoceanic links at 2.5-10 Gbps in 2002-3
- Network improvements are especially needed in Southeast Europe, South America, and some other regions:
  - Romania, Brazil; India, Pakistan, China; Africa
- Removing regional and last-mile bottlenecks, and compromises in network quality, are now all on the critical path
- Getting high (reliable; Grid) application performance across networks means:
  - End-to-end monitoring; a coherent approach
  - Getting high performance (TCP) toolkits in users' hands
  - Working in concert with AMPATH, Internet2 E2E, the I2 HENP WG, DataTAG; the Grid projects and the GGF

Some Extra Slides Follow

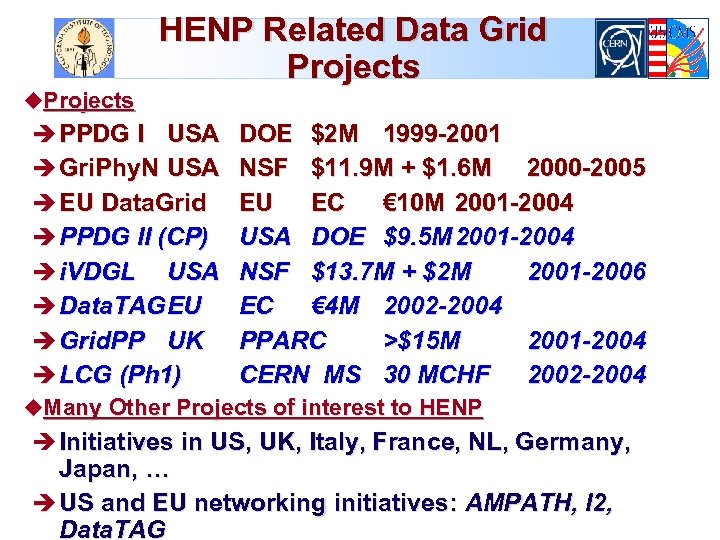

HENP Related Data Grid Projects
- Projects:

| Project | Region | Funding | Period |
|---|---|---|---|
| PPDG I | USA | DOE $2M | 1999-2001 |
| GriPhyN | USA | NSF $11.9M + $1.6M | 2000-2005 |
| EU DataGrid | EU | EC €10M | 2001-2004 |
| PPDG II (CP) | USA | DOE $9.5M | 2001-2004 |
| iVDGL | USA | NSF $13.7M + $2M | 2001-2006 |
| DataTAG | EU | EC €4M | 2002-2004 |
| GridPP | UK | PPARC >$15M | 2001-2004 |
| LCG (Ph1) | CERN | MS 30 MCHF | 2002-2004 |

- Many Other Projects of interest to HENP
  - Initiatives in US, UK, Italy, France, NL, Germany, Japan, …
  - US and EU networking initiatives: AMPATH, I2, DataTAG

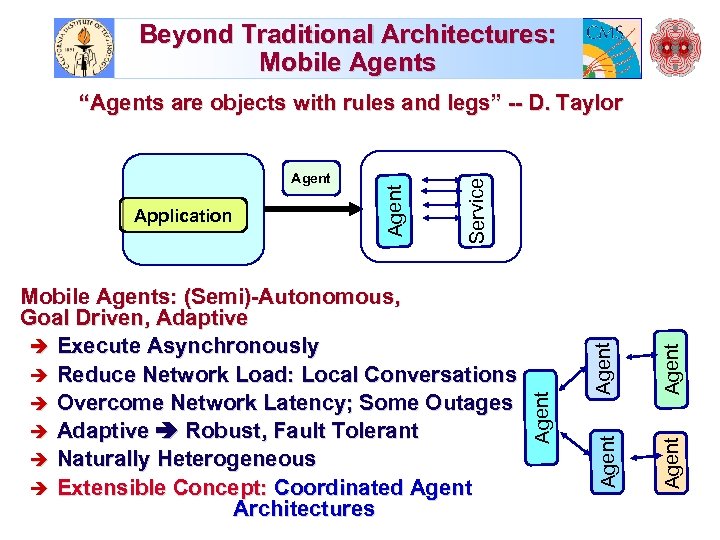

Beyond Traditional Architectures: Mobile Agents
Mobile Agents: (Semi)-Autonomous, Goal Driven, Adaptive
- Execute Asynchronously
- Reduce Network Load: Local Conversations
- Overcome Network Latency; Some Outages
- Adaptive, Robust, Fault Tolerant
- Naturally Heterogeneous
- Extensible Concept: Coordinated Agent Architectures
[Diagram labels: Agent, Application, Service]
"Agents are objects with rules and legs" -- D. Taylor

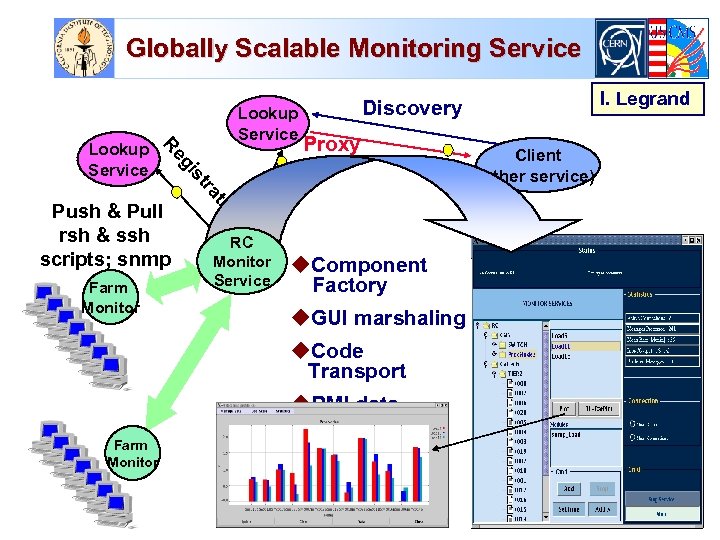

Globally Scalable Monitoring Service (I. Legrand)
[Diagram labels: Farm Monitor; Proxy; Push & Pull; rsh & ssh scripts; snmp; RC Monitor Service; Lookup Service; Registration; Discovery; Client (other service)]
- Component Factory
- GUI marshaling
- Code Transport
- RMI data access
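The registration and discovery pattern in the diagram can be sketched with a toy in-memory lookup service. Farm monitors register with descriptive attributes, and a client (or another service) discovers the ones it needs by matching on those attributes. This illustrates the pattern only. The class, method and attribute names are hypothetical and do not describe the real service's interfaces.

```python
from typing import Callable, Dict, List

Attributes = Dict[str, str]

class LookupService:
    """Toy registry: services register themselves; clients discover them by attributes."""

    def __init__(self) -> None:
        self._entries: Dict[str, Attributes] = {}

    def register(self, name: str, attributes: Attributes) -> None:
        """Add or refresh a registration."""
        self._entries[name] = dict(attributes)

    def unregister(self, name: str) -> None:
        """Remove a registration if present."""
        self._entries.pop(name, None)

    def discover(self, match: Callable[[Attributes], bool]) -> List[str]:
        """Sorted names of registered services whose attributes satisfy `match`."""
        return sorted(name for name, attrs in self._entries.items() if match(attrs))

lookup = LookupService()
lookup.register("farm-monitor-a", {"site": "CERN", "collector": "snmp"})
lookup.register("farm-monitor-b", {"site": "Caltech", "collector": "ssh scripts"})
lookup.register("farm-monitor-c", {"site": "CERN", "collector": "rsh scripts"})

print(lookup.discover(lambda attrs: attrs.get("site") == "CERN"))
```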

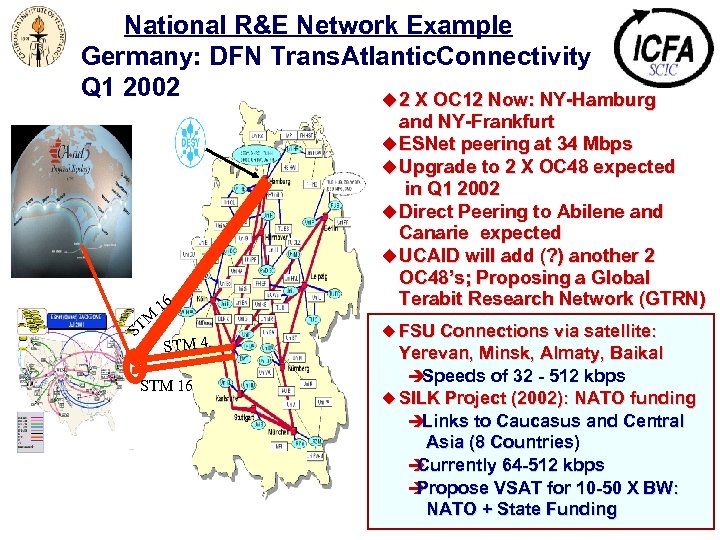

National R&E Network Example Germany: DFN TransAtlantic Connectivity Q1 2002
[Map labels: STM 16, STM 4, STM 16]
- 2 X OC12 Now: NY-Hamburg and NY-Frankfurt
- ESnet peering at 34 Mbps
- Upgrade to 2 X OC48 expected in Q1 2002
- Direct Peering to Abilene and CANARIE expected
- UCAID will add (?) another 2 OC48's; Proposing a Global Terabit Research Network (GTRN)
- FSU Connections via satellite: Yerevan, Minsk, Almaty, Baikal
  - Speeds of 32-512 kbps
- SILK Project (2002): NATO funding
  - Links to Caucasus and Central Asia (8 Countries)
  - Currently 64-512 kbps
  - Propose VSAT for 10-50 X BW: NATO + State Funding

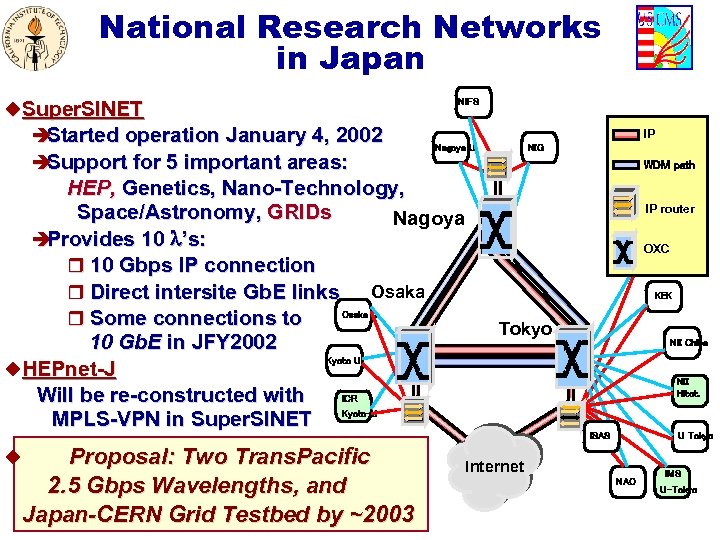

National Research Networks in Japan
- SuperSINET
  - Started operation January 4, 2002
  - Support for 5 important areas: HEP, Genetics, Nano-Technology, Space/Astronomy, GRIDs
  - Provides 10 λ's:
    - 10 Gbps IP connection
    - Direct intersite GbE links
    - Some connections to 10 GbE in JFY 2002
- HEPnet-J
  - Will be re-constructed with MPLS-VPN in SuperSINET
- Proposal: Two TransPacific 2.5 Gbps Wavelengths, and Japan-CERN Grid Testbed by ~2003
[Map labels: NIFS, Nagoya U, Nagoya, Osaka, Osaka U, Kyoto U, ICR Kyoto-U, NIG, Tohoku U, KEK, Tokyo, NII Chiba, NII Hitot., ISAS, U Tokyo, NAO, IMS U-Tokyo, Internet; legend: WDM path, IP router, OXC]

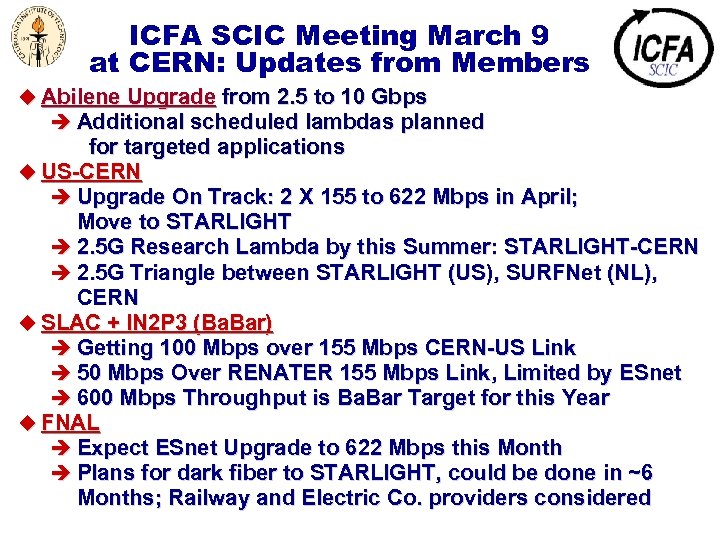

ICFA SCIC Meeting March 9 at CERN: Updates from Members
- Abilene Upgrade from 2.5 to 10 Gbps
  - Additional scheduled lambdas planned for targeted applications
- US-CERN
  - Upgrade On Track: 2 X 155 to 622 Mbps in April; Move to STARLIGHT
  - 2.5G Research Lambda by this Summer: STARLIGHT-CERN
  - 2.5G Triangle between STARLIGHT (US), SURFNet (NL), CERN
- SLAC + IN2P3 (BaBar)
  - Getting 100 Mbps over 155 Mbps CERN-US Link
  - 50 Mbps Over RENATER 155 Mbps Link, Limited by ESnet
  - 600 Mbps Throughput is BaBar Target for this Year
- FNAL
  - Expect ESnet Upgrade to 622 Mbps this Month
  - Plans for dark fiber to STARLIGHT, could be done in ~6 Months; Railway and Electric Co. providers considered

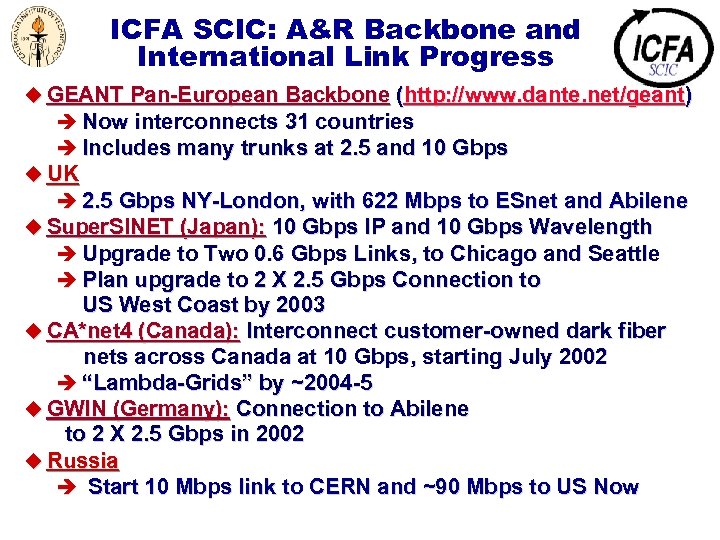

ICFA SCIC: A&R Backbone and International Link Progress
- GEANT Pan-European Backbone (http://www.dante.net/geant)
  - Now interconnects 31 countries
  - Includes many trunks at 2.5 and 10 Gbps
- UK
  - 2.5 Gbps NY-London, with 622 Mbps to ESnet and Abilene
- SuperSINET (Japan): 10 Gbps IP and 10 Gbps Wavelength
  - Upgrade to Two 0.6 Gbps Links, to Chicago and Seattle
  - Plan upgrade to 2 X 2.5 Gbps Connection to US West Coast by 2003
- CA*net 4 (Canada): Interconnect customer-owned dark fiber nets across Canada at 10 Gbps, starting July 2002
  - "Lambda-Grids" by ~2004-5
- GWIN (Germany): Connection to Abilene to 2 X 2.5 Gbps in 2002
- Russia
  - Start 10 Mbps link to CERN and ~90 Mbps to US Now

![Throughput quality improvements](https://present5.com/presentation/f474c4a34b7b63cebe8d65ea91a6b7f8/image-37.jpg)

Throughput quality improvements: $BW_{TCP} < \frac{MSS}{RTT\sqrt{loss}}$ [*]
- 80% Improvement/Year: Factor of 10 In 4 Years
- Eastern Europe Keeping Up
- China: Recent Improvement
[*] See "Macroscopic Behavior of the TCP Congestion Avoidance Algorithm," Mathis, Semke, Mahdavi, Ott, Computer Communication Review 27(3), 7/1997
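The bound puts the case for near-zero packet loss in numbers. The sketch evaluates it for an assumed 1460-byte MSS (typical for a 1500-byte Ethernet MTU) and the 170 ms US-CERN round-trip time. It then inverts the bound to find the loss rate that a single stream could tolerate at 1 Gbps. The sketch follows the slide's form of the bound, which omits a constant of order one that appears in the paper, so treat the results as estimates. The last line checks the "factor of 10 in 4 years" claim.

```python
import math

def mathis_bound_bps(mss_bytes: float, rtt_s: float, loss: float) -> float:
    """Upper bound on single-stream TCP throughput: MSS / (RTT * sqrt(loss)), in bits/s."""
    return mss_bytes * 8 / (rtt_s * math.sqrt(loss))

def max_loss_for(target_bps: float, mss_bytes: float, rtt_s: float) -> float:
    """Largest loss rate that still permits target_bps under the same bound."""
    return (mss_bytes * 8 / (rtt_s * target_bps)) ** 2

MSS, RTT = 1460, 0.170  # assumed MSS in bytes; US-CERN RTT in seconds
print(f"loss 1e-6 -> at most {mathis_bound_bps(MSS, RTT, 1e-6) / 1e6:.1f} Mbps")
print(f"1 Gbps needs loss below {max_loss_for(1e9, MSS, RTT):.1e}")
print(f"80%/year for 4 years -> factor {1.8 ** 4:.1f}")
```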

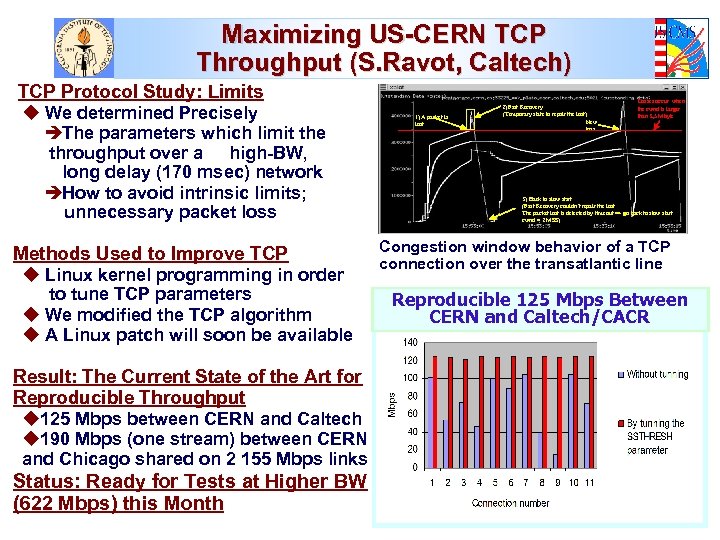

Maximizing US-CERN TCP Throughput (S. Ravot, Caltech)
TCP Protocol Study: Limits
- We determined precisely
  - The parameters which limit the throughput over a high-BW, long-delay (170 msec) network
  - How to avoid intrinsic limits; unnecessary packet loss
Methods Used to Improve TCP
- Linux kernel programming in order to tune TCP parameters
- We modified the TCP algorithm
- A Linux patch will soon be available
Result: The Current State of the Art for Reproducible Throughput
- 125 Mbps between CERN and Caltech
- 190 Mbps (one stream) between CERN and Chicago, shared on two 155 Mbps links
Status: Ready for Tests at Higher BW (622 Mbps) this Month
[Figure: Congestion window behavior of a TCP connection over the transatlantic line; reproducible 125 Mbps between CERN and Caltech/CACR]
1) A packet is lost
2) Fast Recovery (temporary state to repair the loss)
3) Back to slow start (Fast Recovery couldn't repair the loss; the lost packet is detected by timeout => go back to slow start, cwnd = 2 MSS)
- New loss: losses occur when the cwnd is larger than 3.5 MByte
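The phases in the congestion-window figure can be reproduced qualitatively with a toy, per-round-trip model of Reno-style TCP. The model has four behaviors:
- exponential growth in slow start
- linear growth in congestion avoidance
- halving after a loss repaired by fast recovery
- a restart from cwnd = 2 MSS after a timeout, as on the slide

The function and the loss schedule are hypothetical. This is not the modified TCP from the study.

```python
from typing import List, Set

def cwnd_trace(rtts: int, ssthresh: float, loss_at: Set[int], timeout_at: Set[int],
               initial: float = 2.0) -> List[float]:
    """Toy per-RTT congestion window trace in MSS units (Reno-like, illustrative).

    - slow start: cwnd doubles each RTT while below ssthresh
    - congestion avoidance: cwnd grows by 1 MSS per RTT
    - loss repaired by fast recovery: ssthresh = cwnd / 2, cwnd = ssthresh
    - loss detected by timeout: ssthresh = cwnd / 2, cwnd back to `initial` (2 MSS)
    """
    cwnd = initial
    trace: List[float] = []
    for t in range(rtts):
        trace.append(cwnd)
        if t in timeout_at:
            ssthresh = max(cwnd / 2, 2.0)
            cwnd = initial
        elif t in loss_at:
            ssthresh = max(cwnd / 2, 2.0)
            cwnd = ssthresh
        elif cwnd < ssthresh:
            cwnd = min(cwnd * 2, ssthresh)
        else:
            cwnd += 1
    return trace

trace = cwnd_trace(rtts=12, ssthresh=32.0, loss_at={7}, timeout_at={10})
print(trace)
```

For scale, a 3.5 MByte window at a 170 ms RTT corresponds to roughly 3.5 × 8 / 0.17 ≈ 165 Mbps, the same order as the throughputs reported in this study.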

Rapid Advances of Nat'l Backbones: Next Generation Abilene
- Abilene partnership with Qwest extended through 2006
- Backbone to be upgraded to 10 Gbps in phases, to be Completed by October 2003
  - GigaPoP Upgrade started in February 2002
- Capability for flexible provisioning in support of future experimentation in optical networking
  - In a multi-λ infrastructure

US CMS TeraGrid Seamless Prototype
- Caltech/Wisconsin Condor/NCSA Production
- Simple Job Launch from Caltech
  - Authentication Using Globus Security Infrastructure (GSI)
  - Resources Identified Using Globus Information Infrastructure (GIS)
- CMSIM Jobs (Batches of 100, 12-14 Hours, 100 GB Output) Sent to the Wisconsin Condor Flock Using Condor-G
  - Output Files Automatically Stored in NCSA Unitree (GridFTP)
- ORCA Phase: Read-in and Process Jobs at NCSA
  - Output Files Automatically Stored in NCSA Unitree
- Future: Multiple CMS Sites; Storage in Caltech HPSS Also, Using GDMP (With LBNL's HRM)
- Animated Flow Diagram of the DTF Prototype: http://cmsdoc.cern.ch/~wisniew/infrastructure.html
