e8e6328ba2bb28c847d244abfef7085b.ppt

- Number of slides: 37

Thoughts on a next-generation Internet and next-generation network management
Henning Schulzrinne
Dept. of Computer Science, Columbia University
April 2006

Overview
• The transformation in keynote “big pictures”
• The transition in cost metrics
• What has made the Internet successful?
• Some Internet problems
• Simplicity wins
• Architectural complexity
• New protocol engineering
• End-to-end application-visible reliability still poor (~99.5%)
  – even though network elements have gotten much more reliable
  – particular impact on interactive applications (e.g., VoIP)
  – transient problems
• Lots of voodoo network management
• Existing network management doesn’t work for VoIP and other modern applications
• Need user-centric rather than operator-centric management
• Proposal: peer-to-peer management
  – “Do You See What I See?”
• Also use for reliability estimation and statistical fault characterization

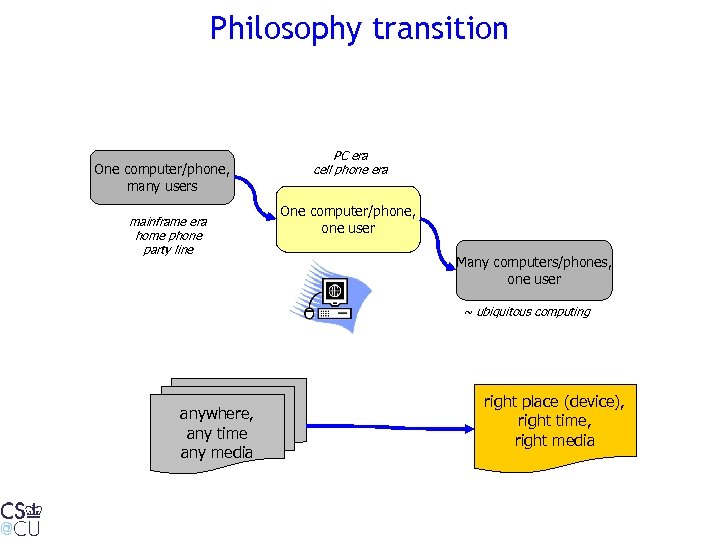

Philosophy transition
• One computer/phone, many users: mainframe era, home phone, party line
• One computer/phone, one user: PC era, cell phone era
• Many computers/phones, one user: ~ ubiquitous computing
• anywhere, any time, any media → right place (device), right time, right media

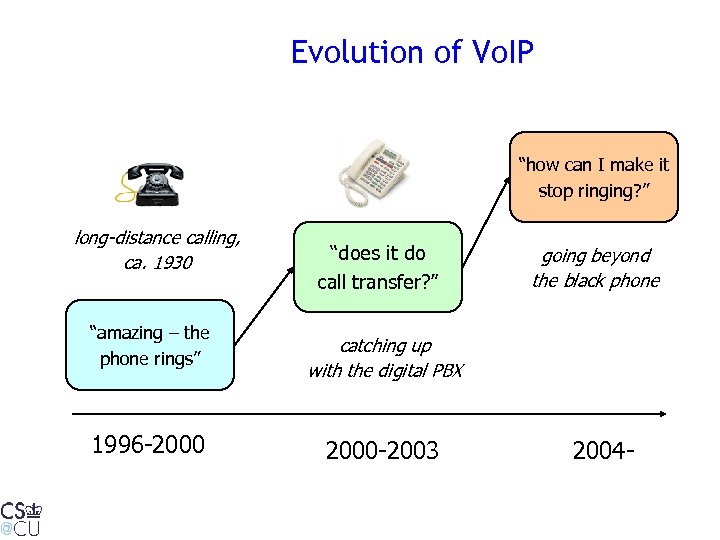

Evolution of VoIP
• 1996-2000: “amazing – the phone rings” (long-distance calling, ca. 1930)
• 2000-2003: “does it do call transfer?” (catching up with the digital PBX)
• 2004-: “how can I make it stop ringing?” (going beyond the black phone)

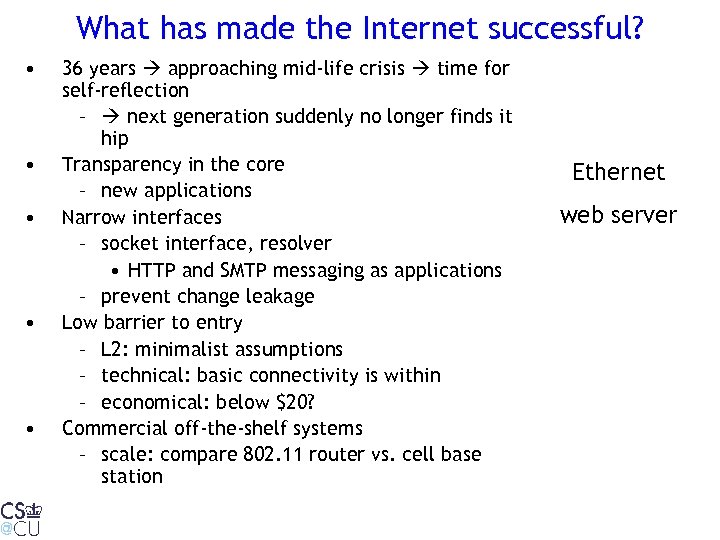

What has made the Internet successful?
• 36 years: approaching mid-life crisis, time for self-reflection
  – next generation suddenly no longer finds it hip
• Transparency in the core
  – new applications
• Narrow interfaces
  – socket interface, resolver
    • HTTP and SMTP messaging as applications
  – prevent change leakage
• Low barrier to entry
  – L2: minimalist assumptions
  – technical: basic connectivity is within
  – economical: below $20?
• Commercial off-the-shelf systems
  – scale: compare 802.11 router vs. cell base station
(figure labels: Ethernet, web server)

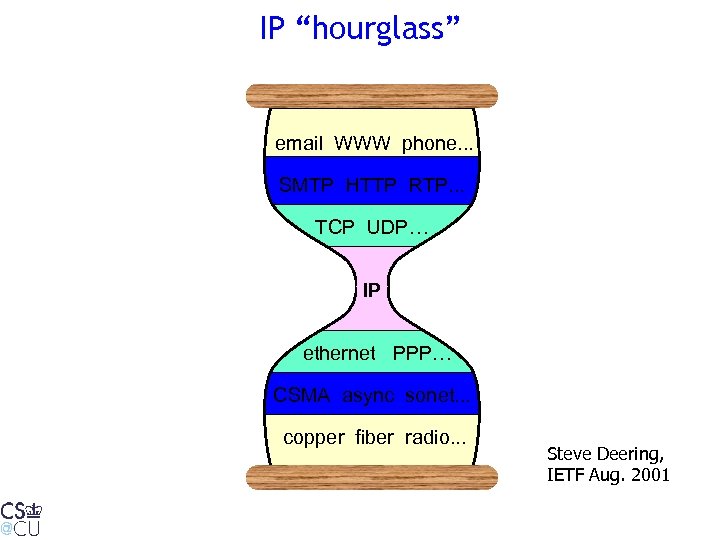

IP “hourglass” (Steve Deering, IETF, Aug. 2001), top to bottom:
• email, WWW, phone, ...
• SMTP, HTTP, RTP, ...
• TCP, UDP, ...
• IP
• Ethernet, PPP, ...
• CSMA, async, SONET, ...
• copper, fiber, radio, ...

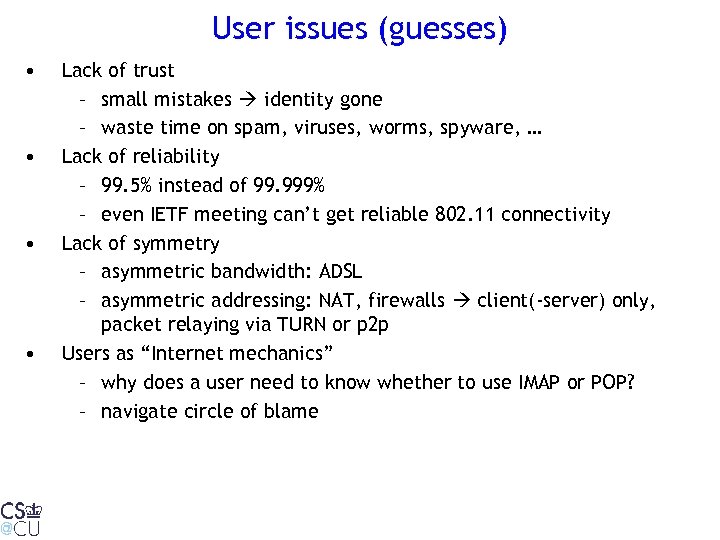

User issues (guesses)
• Lack of trust
  – small mistakes → identity gone
  – waste time on spam, viruses, worms, spyware, …
• Lack of reliability
  – 99.5% instead of 99.999%
  – even IETF meeting can’t get reliable 802.11 connectivity
• Lack of symmetry
  – asymmetric bandwidth: ADSL
  – asymmetric addressing: NAT, firewalls → client(-server) only, packet relaying via TURN or p2p
• Users as “Internet mechanics”
  – why does a user need to know whether to use IMAP or POP?
  – navigate circle of blame
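
To make the gap between 99.5% and 99.999% concrete, the arithmetic can be sketched as follows. This is an illustration only; the function name is hypothetical and the year is assumed to have 365 days.

```python
HOURS_PER_YEAR = 365 * 24  # 8760 hours, ignoring leap years (assumption)


def downtime_hours_per_year(availability_percent: float) -> float:
    """Expected unavailable hours per year for a given availability."""
    return (1.0 - availability_percent / 100.0) * HOURS_PER_YEAR


for pct in (99.5, 99.999):
    hours = downtime_hours_per_year(pct)
    print(f"{pct}% available: {hours:.2f} h/year ({hours * 60:.1f} min/year)")
```

Under these assumptions, 99.5% corresponds to roughly 44 hours of outage per year, while 99.999% corresponds to about 5 minutes.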

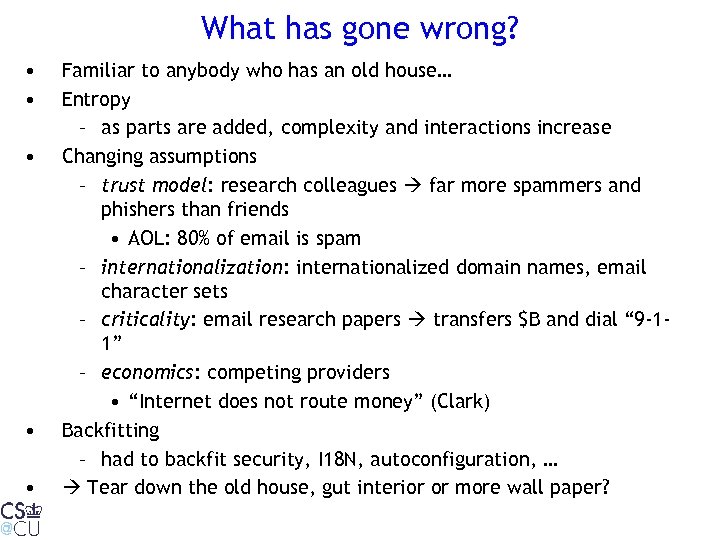

What has gone wrong?
• Familiar to anybody who has an old house…
• Entropy
  – as parts are added, complexity and interactions increase
• Changing assumptions
  – trust model: research colleagues → far more spammers and phishers than friends
    • AOL: 80% of email is spam
  – internationalization: internationalized domain names, email character sets
  – criticality: email research papers → transfers $B and dial “9-1-1”
  – economics: competing providers
    • “Internet does not route money” (Clark)
• Backfitting
  – had to backfit security, I18N, autoconfiguration, …
• Tear down the old house, gut interior or more wall paper?

In more detail…
• Deployment problems
• Layer creep
• Simple and universal wins
• Scaling in human terms
• Cross-cutting concerns, e.g.,
  – CPU vs. human cycles
    • we optimize the $100 component, not the $100/hour labor
  – introspection
  – graceful upgrades
  – no policy magic

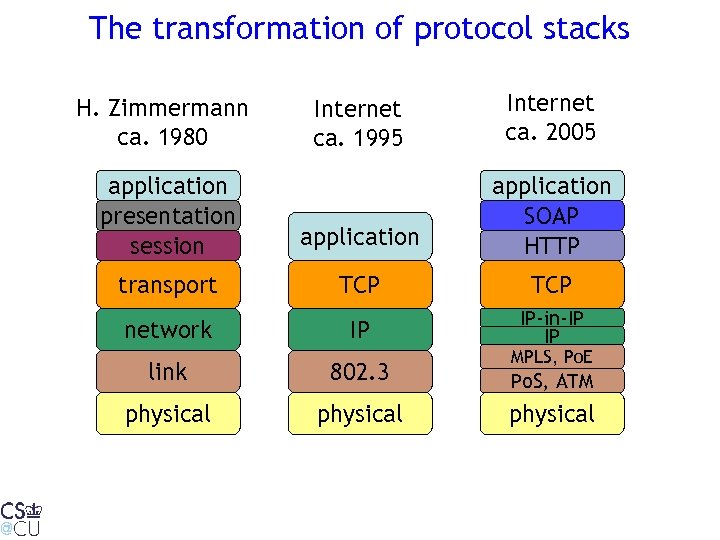

The transformation of protocol stacks
• OSI layers (H. Zimmermann, ca. 1980): application, presentation, session, transport, network, link, physical
• Stack elements shown for the Internet ca. 1995 and ca. 2005: application, SOAP, HTTP, TCP, IP, IP-in-IP, MPLS, PoE, 802.3, PoS, ATM

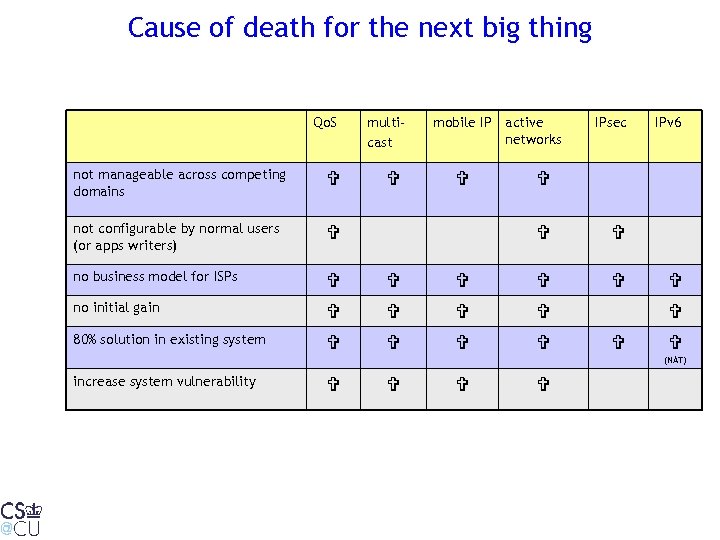

Cause of death for the next big thing
• Candidates: QoS, multicast, mobile IP, active networks, IPsec, IPv6 (NAT)
• Causes:
  – not manageable across competing domains
  – not configurable by normal users (or apps writers)
  – no business model for ISPs
  – no initial gain
  – 80% solution in existing system
  – increase system vulnerability

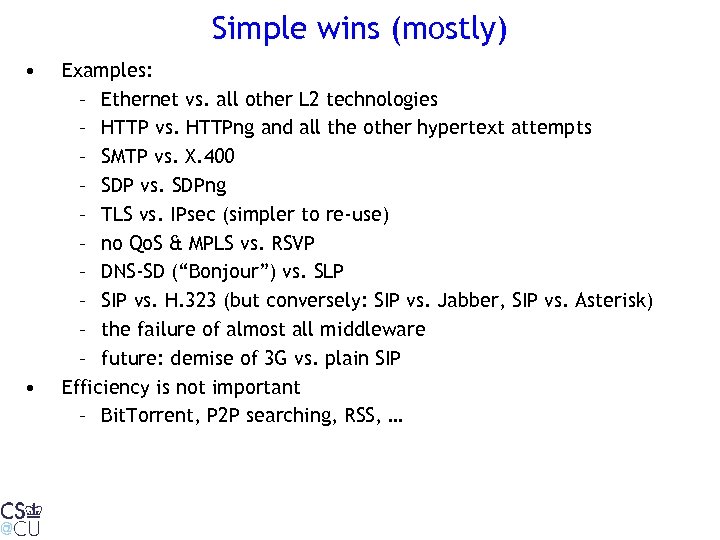

Simple wins (mostly)
• Examples:
  – Ethernet vs. all other L2 technologies
  – HTTP vs. HTTPng and all the other hypertext attempts
  – SMTP vs. X.400
  – SDP vs. SDPng
  – TLS vs. IPsec (simpler to re-use)
  – no QoS & MPLS vs. RSVP
  – DNS-SD (“Bonjour”) vs. SLP
  – SIP vs. H.323 (but conversely: SIP vs. Jabber, SIP vs. Asterisk)
  – the failure of almost all middleware
  – future: demise of 3G vs. plain SIP
• Efficiency is not important
  – BitTorrent, P2P searching, RSS, …

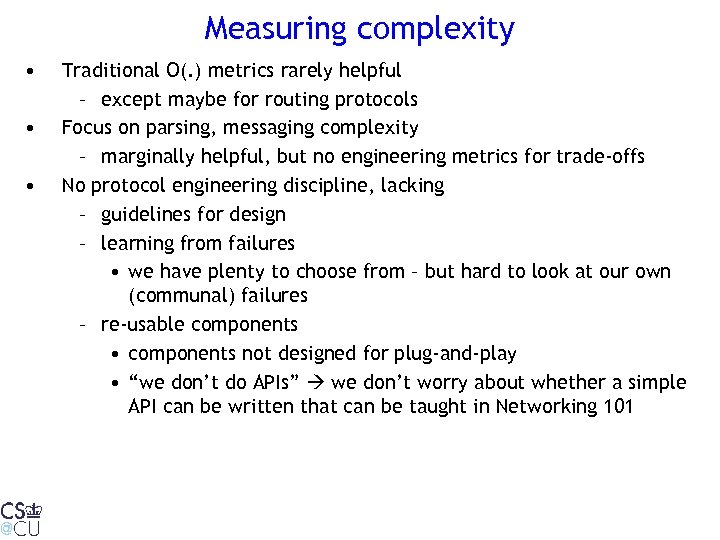

Measuring complexity
• Traditional O(.) metrics rarely helpful
  – except maybe for routing protocols
• Focus on parsing, messaging complexity
  – marginally helpful, but no engineering metrics for trade-offs
• No protocol engineering discipline, lacking
  – guidelines for design
  – learning from failures
    • we have plenty to choose from, but hard to look at our own (communal) failures
  – re-usable components
    • components not designed for plug-and-play
    • “we don’t do APIs” → we don’t worry about whether a simple API can be written that can be taught in Networking 101

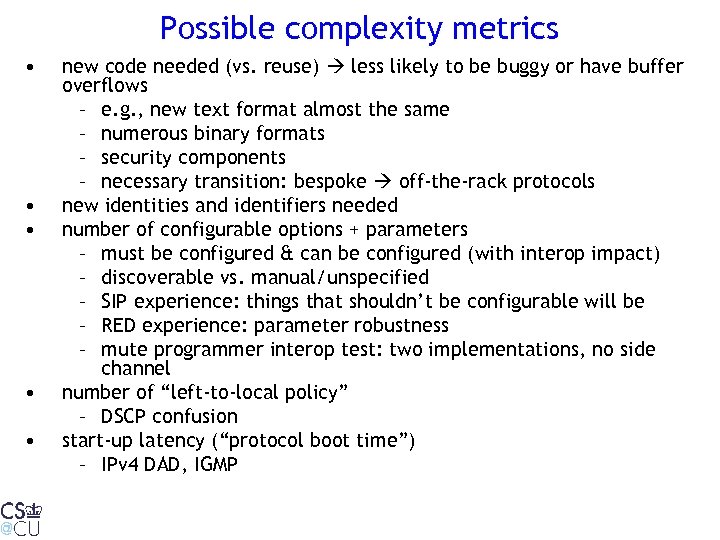

Possible complexity metrics
• new code needed (vs. reuse → less likely to be buggy or have buffer overflows)
  – e.g., new text format almost the same
  – numerous binary formats
  – security components
  – necessary transition: bespoke → off-the-rack protocols
• new identities and identifiers needed
• number of configurable options + parameters
  – must be configured & can be configured (with interop impact)
  – discoverable vs. manual/unspecified
  – SIP experience: things that shouldn’t be configurable will be
  – RED experience: parameter robustness
  – mute programmer interop test: two implementations, no side channel
• number of “left-to-local policy”
  – DSCP confusion
• start-up latency (“protocol boot time”)
  – IPv4 DAD, IGMP

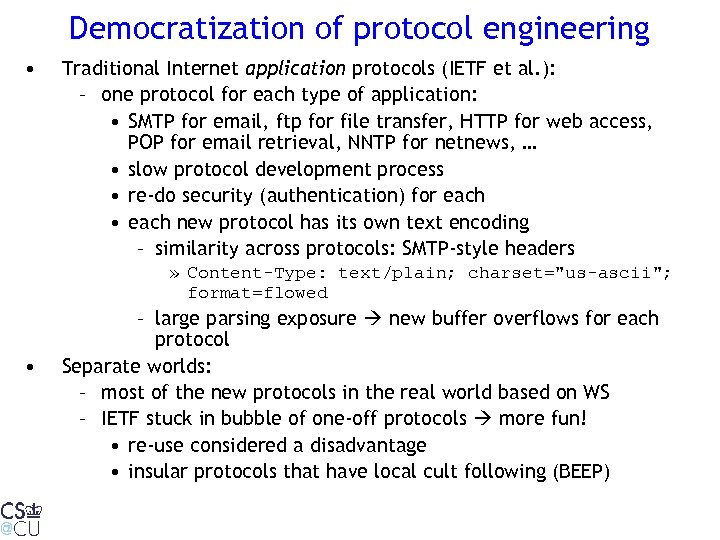

Democratization of protocol engineering
• Traditional Internet application protocols (IETF et al.):
  – one protocol for each type of application:
    • SMTP for email, ftp for file transfer, HTTP for web access, POP for email retrieval, NNTP for netnews, …
    • slow protocol development process
    • re-do security (authentication) for each
    • each new protocol has its own text encoding
  – similarity across protocols: SMTP-style headers
    » Content-Type: text/plain; charset="us-ascii"; format=flowed
  – large parsing exposure → new buffer overflows for each protocol
• Separate worlds:
  – most of the new protocols in the real world based on WS
  – IETF stuck in bubble of one-off protocols → more fun!
    • re-use considered a disadvantage
    • insular protocols that have local cult following (BEEP)
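
The SMTP-style header quoted on this slide is an example of a text encoding that many protocols share, which is where re-usable parsing components pay off. A minimal sketch, using Python's standard `email` package rather than a hand-written parser (the sample message body and the variable names are made up for illustration):

```python
from email import message_from_string

# The header line from the slide, followed by a made-up body.
raw = 'Content-Type: text/plain; charset="us-ascii"; format=flowed\n\nhello\n'
msg = message_from_string(raw)

content_type = msg.get_content_type()  # media type without parameters
charset = msg.get_param("charset")     # parameter value, quotes removed
flow = msg.get_param("format")

print(content_type, charset, flow)
```

Re-using one well-tested header parser across protocols is the opposite of the "new buffer overflows for each protocol" pattern the slide describes.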

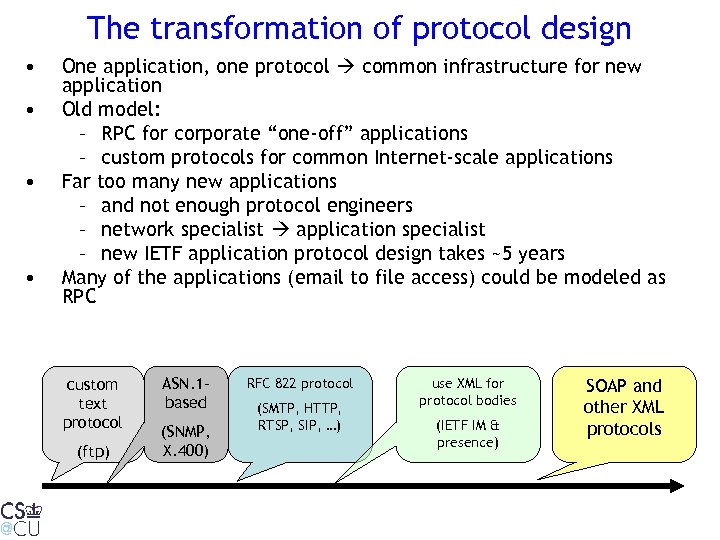

The transformation of protocol design
• One application, one protocol → common infrastructure for new applications
• Old model:
  – RPC for corporate “one-off” applications
  – custom protocols for common Internet-scale applications
• Far too many new applications
  – and not enough protocol engineers
  – network specialist → application specialist
  – new IETF application protocol design takes ~5 years
• Many of the applications (email to file access) could be modeled as RPC
• custom text protocol (ftp) → ASN.1 based (SNMP, X.400) → RFC 822 protocol (SMTP, HTTP, RTSP, SIP, …) → use XML for protocol bodies (IETF IM & presence) → SOAP and other XML protocols

Why are web services succeeding(*) after RPC failed?
• SOAP = just another remote procedure call mechanism
  – plenty of predecessors: SunRPC, DCE, DCOM, CORBA, …
  – “client-server computing”
  – all of them were to transform (enterprise) computing, integrate legacy applications, end world hunger, …
• Why didn’t they?
• Speculation:
  – no web front end (no three-tier applications)
  – few open-source implementations
  – no common protocol between PC client (Microsoft) and backend (IBM mainframes, Sun, VMS)
  – corporate networks local only (one site), with limited backbone bandwidth
(*) we hope

Time for a new protocol stack?
• Now: add x.5 sublayers and overlay
  – HIP, MPLS, TLS, …
• Doesn’t tell us what we could/should do
  – or where functionality belongs
  – use of upper layers to help lower layers (security associations, authorization)
  – what is innate complexity and what is entropy?
• Examples:
  – Applications: do we need ftp, SMTP, IMAP, POP, SIP, RTSP, HTTP, p2p protocols?
  – Network: can we reduce complexity by integrating functionality or re-assigning it?
    • e.g., should e2e security focus on transport layer rather than network layer?
  – probably need pub/sub layer, currently kludged locally (email, IM, specialized)

(My) guidelines for a new Internet
• Maintain success factors, such as
  – service transparency
  – low barrier to entry
  – narrow interfaces
• New guidelines
  – optimize human cycles, not CPU cycles
  – design for symmetry
  – security built-in, not bolted-on
  – everything can be mobile, including networks
  – sending me data is a privilege, not a right
  – reliability paramount
  – isolation of flows
• New possibilities:
  – another look at circuit switching?
  – knowledge and control (“signaling”) planes?
  – separate packet forwarding from control
  – better alignment of costs and benefit
  – better scaling for Internet-scale routing
  – more general services

More “network” services
• Currently, very specialized and limited
  – packet forwarding
  – DNS for identifier lookup
  – DHCP for configuration
• New opportunities
  – packet forwarding with control
  – general identifier storage and lookup
    • both server-based and peer-to-peer
  – SLP/Jini/UDDI service location → ontology-based data store
  – network file storage for temporarily-disconnected mobiles
  – network computation: translation, relaying
  – trust services (IRT trust paths work)

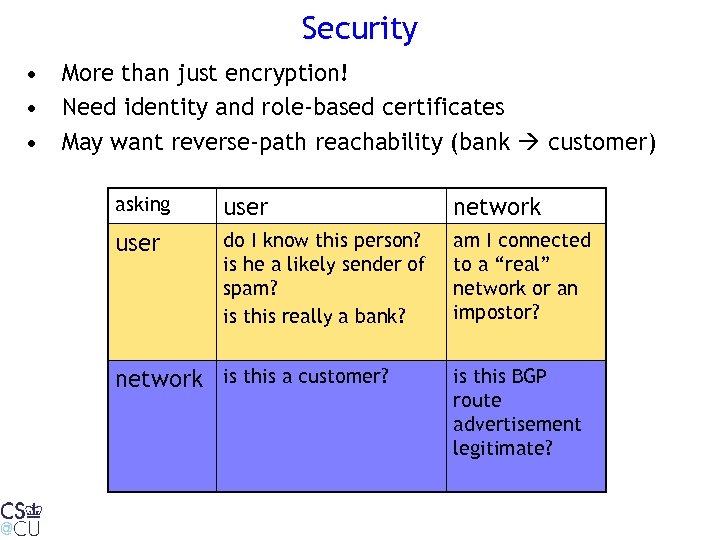

Security
• More than just encryption!
• Need identity and role-based certificates
• May want reverse-path reachability (bank customer)
• User asking:
  – do I know this person?
  – is he a likely sender of spam?
  – is this really a bank?
  – am I connected to a “real” network or an impostor?
• Network asking:
  – is this a customer?
  – is this BGP route advertisement legitimate?

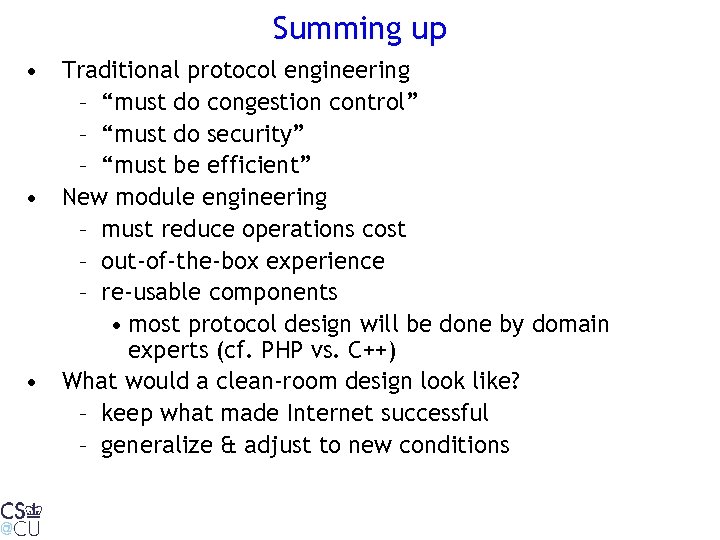

Summing up
• Traditional protocol engineering
  – “must do congestion control”
  – “must do security”
  – “must be efficient”
• New module engineering
  – must reduce operations cost
  – out-of-the-box experience
  – re-usable components
    • most protocol design will be done by domain experts (cf. PHP vs. C++)
• What would a clean-room design look like?
  – keep what made Internet successful
  – generalize & adjust to new conditions

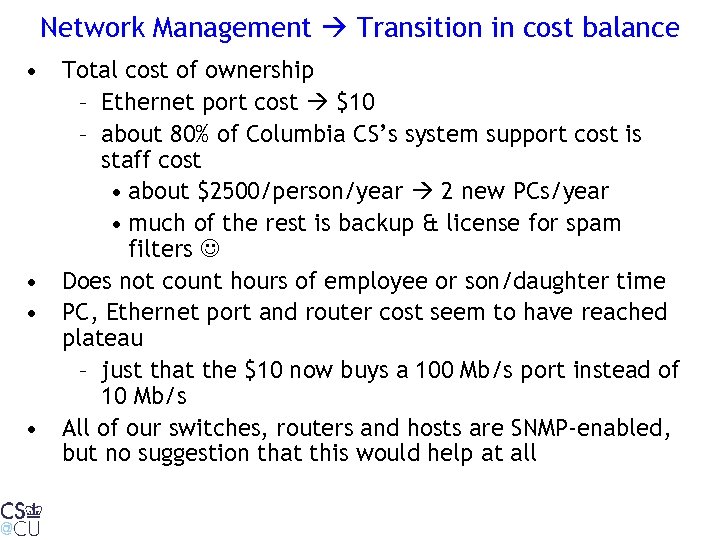

Network management: transition in cost balance
• Total cost of ownership
  – Ethernet port cost $10
  – about 80% of Columbia CS’s system support cost is staff cost
    • about $2500/person/year ≈ 2 new PCs/year
    • much of the rest is backup & license for spam filters
• Does not count hours of employee or son/daughter time
• PC, Ethernet port and router cost seem to have reached plateau
  – just that the $10 now buys a 100 Mb/s port instead of 10 Mb/s
• All of our switches, routers and hosts are SNMP-enabled, but no suggestion that this would help at all

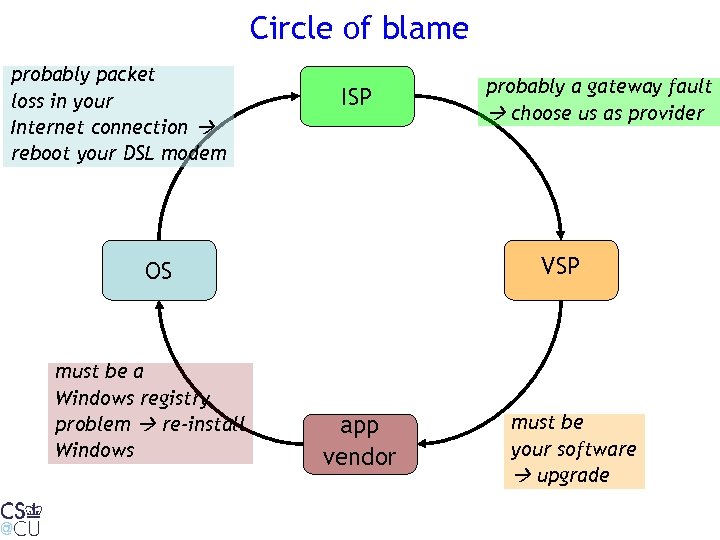

Circle of blame
• Parties: ISP, VSP, OS, app vendor
• “probably packet loss in your Internet connection” → reboot your DSL modem
• “must be a Windows registry problem” → re-install Windows
• “probably a gateway fault” → choose us as provider
• “must be your software” → upgrade

Diagnostic undecidability
• symptom: “cannot reach server”
• more precise: send packet, but no response
• causes:
  – NAT problem (return packet dropped)?
  – firewall problem?
  – path to server broken?
  – outdated server information (moved)?
  – server dead?
• 5 causes → very different remedies
  – no good way for non-technical user to tell
• Whom do you call?
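
The ambiguity can be seen from a single vantage point with a plain TCP connection attempt. The sketch below is illustrative only: the function names and category labels are hypothetical, and the mapping from socket errors to causes is an assumption about typical behavior, not a reliable diagnosis.

```python
import socket


def classify(exc):
    """Map the outcome of a connection attempt to a coarse category."""
    if exc is None:
        return "reachable"
    if isinstance(exc, socket.gaierror):
        # Name lookup failed: DNS trouble or outdated server information.
        return "name-lookup-failed"
    if isinstance(exc, ConnectionRefusedError):
        # The host answered, but nothing is listening on that port.
        return "host-up-service-down"
    if isinstance(exc, (socket.timeout, TimeoutError)):
        # No response at all: a NAT problem, a firewall, a broken path
        # and a dead server all look the same from here.
        return "no-response-ambiguous"
    return "other-error"


def probe(host, port, timeout=3.0):
    """Try one TCP connection and classify what happened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return classify(None)
    except OSError as exc:
        return classify(exc)
```

Note that several of the causes listed above collapse into the single "no-response-ambiguous" bucket, which is the point of the slide: one node's view is not enough to choose a remedy.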

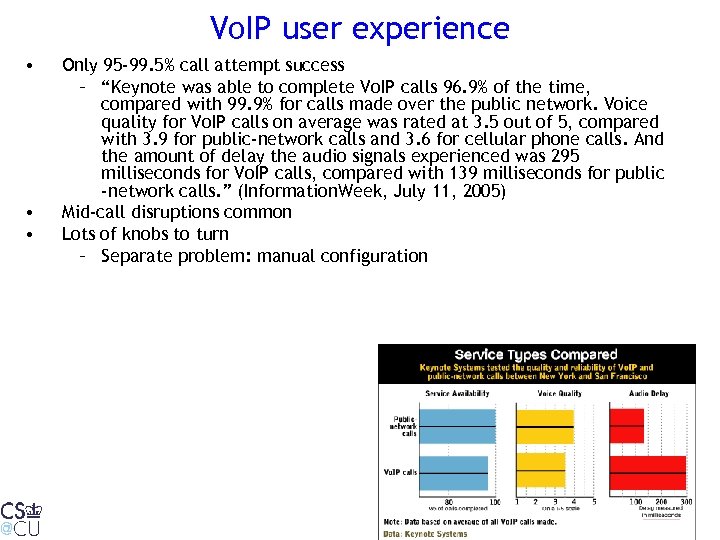

VoIP user experience
• Only 95-99.5% call attempt success
  – “Keynote was able to complete VoIP calls 96.9% of the time, compared with 99.9% for calls made over the public network. Voice quality for VoIP calls on average was rated at 3.5 out of 5, compared with 3.9 for public-network calls and 3.6 for cellular phone calls. And the amount of delay the audio signals experienced was 295 milliseconds for VoIP calls, compared with 139 milliseconds for public-network calls.” (InformationWeek, July 11, 2005)
• Mid-call disruptions common
• Lots of knobs to turn
  – Separate problem: manual configuration

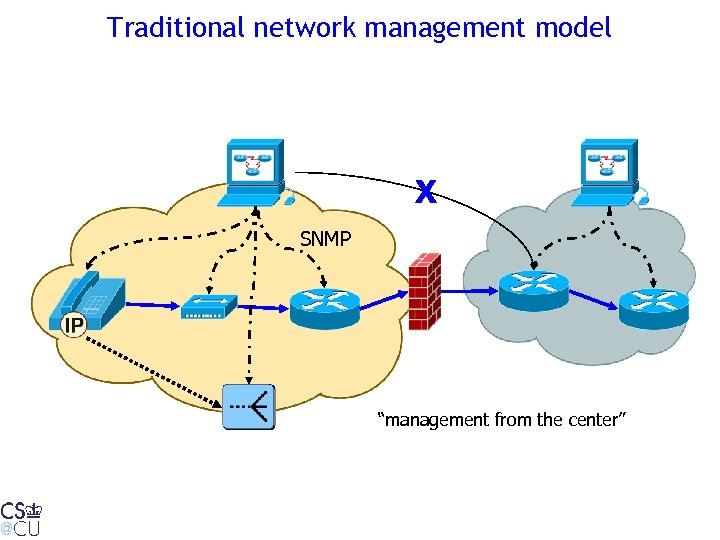

Traditional network management model
• SNMP: “management from the center”

Old assumptions, now wrong
• Single provider (enterprise, carrier)
  – has access to most path elements
  – professionally managed
• Problems are hard failures & elements operate correctly
  – element failures (“link dead”)
  – substantial packet loss
• Mostly L2 and L3 elements
  – switches, routers
  – rarely 802.11 APs
• Problems are specific to a protocol
  – “IP is not working”
• Indirect detection
  – MIB variable vs. actual protocol performance
• End systems don’t need management
  – DMI & SNMP never succeeded
  – each application does its own updates

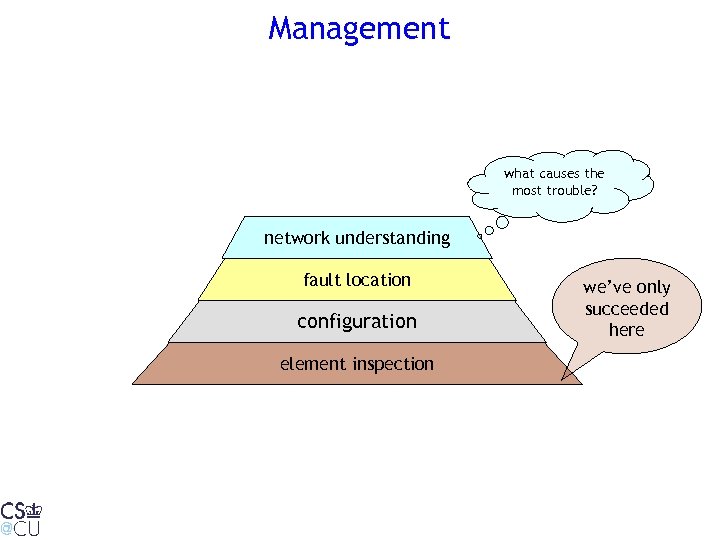

Management: what causes the most trouble?
• network understanding
• fault location
• configuration
• element inspection (we’ve only succeeded here)

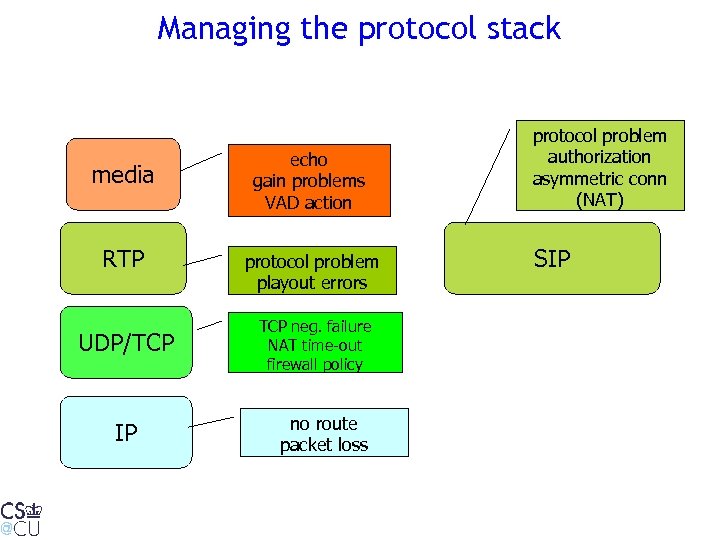

Managing the protocol stack
• media: echo, gain problems, VAD action
• RTP: protocol problem, playout errors
• UDP/TCP: TCP neg. failure, NAT time-out, firewall policy
• IP: no route, packet loss
• SIP: protocol problem, authorization, asymmetric conn (NAT)

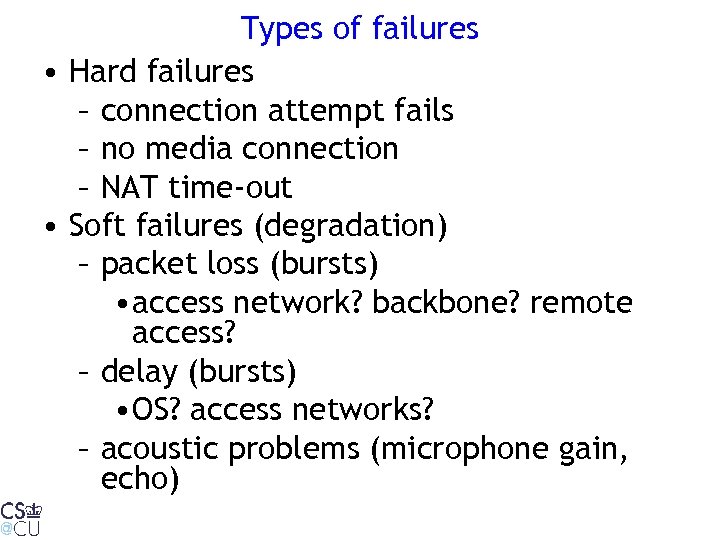

Types of failures
• Hard failures
  – connection attempt fails
  – no media connection
  – NAT time-out
• Soft failures (degradation)
  – packet loss (bursts)
    • access network? backbone? remote access?
  – delay (bursts)
    • OS? access networks?
  – acoustic problems (microphone gain, echo)
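
Soft failures such as loss and delay bursts need numbers before they can be compared across nodes. One common way to quantify delay variation is the interarrival jitter estimator from RFC 3550 (RTP); the sketch below applies it to lists of send and receive timestamps. The function names and the millisecond sample values are illustrative assumptions, not part of the slides.

```python
def interarrival_jitter(send_times, recv_times):
    """Running jitter estimate in the style of RFC 3550:
    J = J + (|D| - J) / 16, where D is the change in transit time
    between consecutive packets."""
    jitter = 0.0
    for i in range(1, len(send_times)):
        d = (recv_times[i] - recv_times[i - 1]) - (send_times[i] - send_times[i - 1])
        jitter += (abs(d) - jitter) / 16.0
    return jitter


def loss_fraction(sent, received):
    """Fraction of packets that never arrived."""
    return 0.0 if sent == 0 else (sent - received) / sent


# Packets sent every 20 ms; the third one arrives 2 ms late.
print(interarrival_jitter([0, 20, 40], [5, 25, 47]))
print(loss_fraction(100, 97))
```

The 1/16 gain smooths out single outliers, so a burst shows up as a sustained rise rather than a spike.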

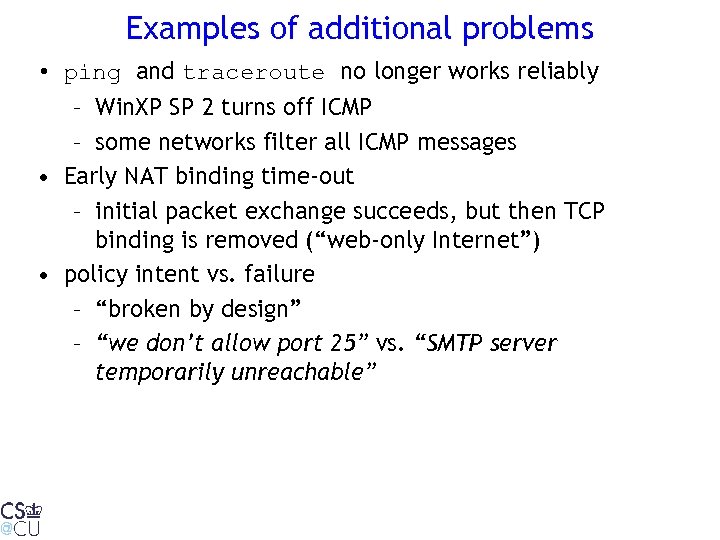

Examples of additional problems
• ping and traceroute no longer work reliably
  – WinXP SP2 turns off ICMP
  – some networks filter all ICMP messages
• Early NAT binding time-out
  – initial packet exchange succeeds, but then TCP binding is removed (“web-only Internet”)
• policy intent vs. failure
  – “broken by design”
  – “we don’t allow port 25” vs. “SMTP server temporarily unreachable”

Proposal: “Do You See What I See?”
• Each node has a set of active and passive measurement tools
• Use intercept (NDIS, pcap)
  – to detect problems automatically
    • e.g., no response to HTTP or DNS request
  – gather performance statistics (packet jitter)
  – capture RTCP and similar measurement packets
• Nodes can ask others for their view
  – possibly also dedicated “weather stations”
• Iterative process, leading to:
  – user indication of cause of failure
  – in some cases, work-around (application-layer routing): TURN server, use remote DNS servers
• Nodes collect statistical information on failures and their likely causes
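
The core step of asking others for their view can be sketched as a comparison between a node's own observation and its peers' observations of the same target. This is a toy illustration under stated assumptions: the function name, the peer identifiers and the three-way outcome are hypothetical, and a real system would weigh where each peer sits in the network.

```python
from typing import Dict


def localize(local_ok: bool, peer_ok: Dict[str, bool]) -> str:
    """Guess where a fault lies from local and peer reachability results."""
    if local_ok:
        return "no fault seen locally"
    if not peer_ok:
        return "unknown: no peer views available"
    successes = sum(peer_ok.values())
    if successes == len(peer_ok):
        # Everyone else can reach the target: suspect our own side.
        return "likely local: own host or access network"
    if successes == 0:
        # Nobody can reach it: suspect the target or its network.
        return "likely remote: server or its network"
    return "likely partial: path or interconnect problem"


print(localize(False, {"peer-a": True, "peer-b": True}))
print(localize(False, {"peer-a": False, "peer-b": False}))
```

Each answer narrows the set of candidate causes, which is what makes the process iterative: the next test is chosen based on what the peers reported.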

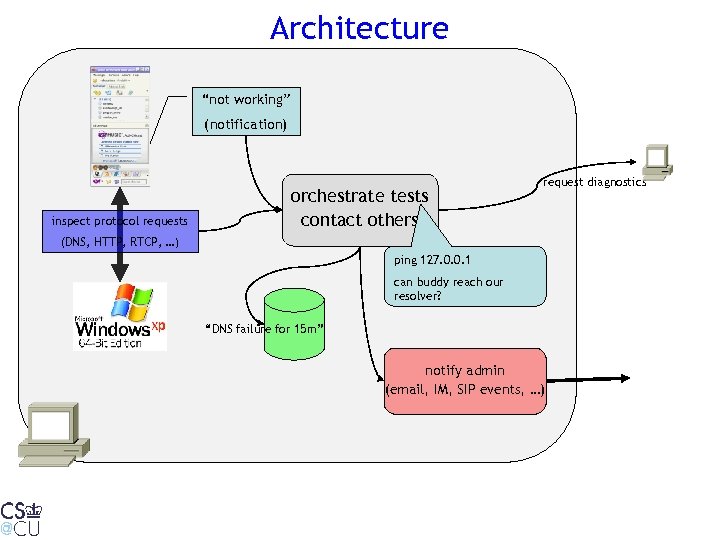

Architecture
• “not working” (notification)
• inspect protocol requests
• orchestrate tests, contact others
• request diagnostics (DNS, HTTP, RTCP, …)
• ping 127.0.0.1
• can buddy reach our resolver?
• “DNS failure for 15m”
• notify admin (email, IM, SIP events, …)

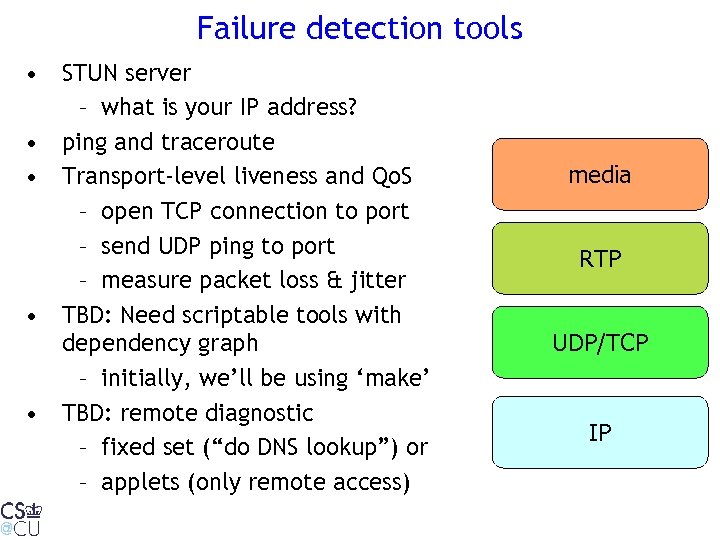

Failure detection tools
• STUN server
  – what is your IP address?
• ping and traceroute
• Transport-level liveness and QoS
  – open TCP connection to port
  – send UDP ping to port
  – measure packet loss & jitter
• TBD: Need scriptable tools with dependency graph
  – initially, we’ll be using ‘make’
• TBD: remote diagnostic
  – fixed set (“do DNS lookup”) or
  – applets (only remote access)
(layer diagram: media, RTP, UDP/TCP, IP)
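
The idea of scriptable tools with a dependency graph can be sketched without ‘make’: run each test only after the tests it depends on have passed, and skip it otherwise. The example below uses Python's standard `graphlib` (Python 3.9+) instead of ‘make’; the test names and the dependency table are hypothetical, and the checks are stubs rather than real probes.

```python
from graphlib import TopologicalSorter

# Hypothetical test names: each test maps to the tests that must pass first.
DEPENDS_ON = {
    "loopback": set(),
    "local_ip": {"loopback"},
    "dns_lookup": {"local_ip"},
    "tcp_connect": {"dns_lookup"},
    "udp_ping": {"local_ip"},
}


def run_tests(checks):
    """Run checks in dependency order; None marks a skipped test."""
    results = {}
    for name in TopologicalSorter(DEPENDS_ON).static_order():
        if all(results.get(dep) is True for dep in DEPENDS_ON[name]):
            results[name] = bool(checks[name]())
        else:
            results[name] = None  # a prerequisite failed or was skipped
    return results


# Stub checks: everything passes except the DNS lookup.
demo_checks = {name: (lambda: True) for name in DEPENDS_ON}
demo_checks["dns_lookup"] = lambda: False
demo_results = run_tests(demo_checks)
print(demo_results)
```

Skipping dependent tests keeps the report focused on the first failing layer, much as ‘make’ stops building targets whose prerequisites failed.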

Failure statistics
• Which parts of the network are most likely to fail (or degrade)
  – access network
  – network interconnects
  – backbone network
  – infrastructure servers (DHCP, DNS)
  – application servers (SIP, RTSP, HTTP, …)
  – protocol failures/incompatibility
• Currently, mostly guesses
• End nodes can gather and accumulate statistics
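
Accumulating such statistics on an end node needs little more than a counter per failure category. A minimal sketch, assuming the categories from the list above as plain strings (the class name, method names and sample events are hypothetical):

```python
from collections import Counter


class FailureStats:
    """Tally observed failures by the network part blamed for them."""

    def __init__(self):
        self.counts = Counter()

    def record(self, category: str) -> None:
        self.counts[category] += 1

    def shares(self) -> dict:
        """Fraction of all recorded failures per category."""
        total = sum(self.counts.values())
        if total == 0:
            return {}
        return {cat: n / total for cat, n in self.counts.items()}


stats = FailureStats()
for event in ["access network", "infrastructure servers",
              "access network", "application servers"]:
    stats.record(event)
print(stats.shares())
```

Shares gathered across many nodes would replace today's guesses with measured proportions.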

Conclusion
• Hypothesis: network reliability as single largest open technical issue prevents (some) new applications
• Existing management tools of limited use to most enterprises and end users
• Transition to “self-service” networks
  – support non-technical users, not just NOCs running HP OpenView or Tivoli
• Need better view of network reliability
