Morfessor in the Morpho Challenge
Mathias Creutz and Krista Lagus
Helsinki University of Technology (HUT), Adaptive Informatics Research Centre
Morpho Challenge in Pascal Challenges Workshop, Venice, 12 April 2006

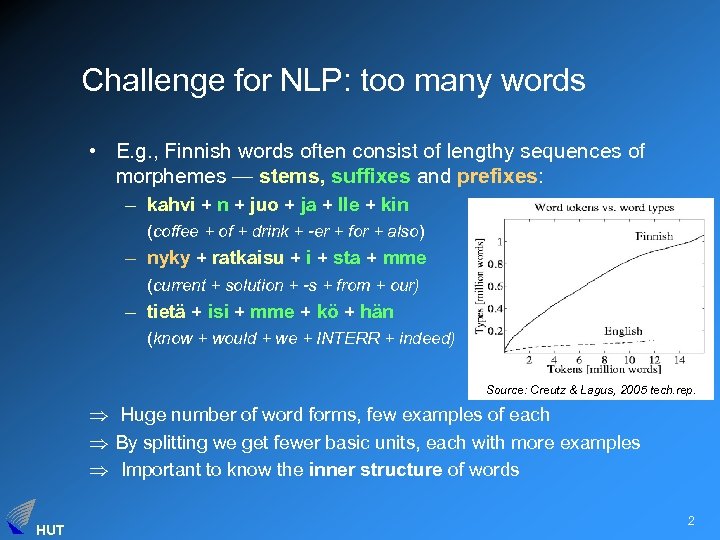

Challenge for NLP: too many words

• E.g., Finnish words often consist of lengthy sequences of morphemes (stems, suffixes and prefixes):
  – kahvi + n + juo + ja + lle + kin (coffee + of + drink + -er + for + also)
  – nyky + ratkaisu + i + sta + mme (current + solution + -s + from + our)
  – tietä + isi + mme + kö + hän (know + would + we + INTERR + indeed)
  Source: Creutz & Lagus, 2005 tech. rep.
• Huge number of word forms, few examples of each
• By splitting we get fewer basic units, each with more examples
• Important to know the inner structure of words

Solution approaches

1. Hand-made morphological analyzers (e.g., based on Koskenniemi’s TWOL = two-level morphology)
   + accurate
   – labour-intensive construction, commercial, coverage, updating when languages change, addition of new languages
2. Data-driven methods, preferably minimally supervised (e.g., John Goldsmith’s Linguistica)
   + adaptive, language-independent
   – lower accuracy
   – many existing algorithms assume few morphemes per word, unsuitable for compounds and multiple affixes

Goal: segmentation

• Learn representations of (Morfessor)
  – the smallest individually meaningful units of language (morphemes)
  – and their interaction
  – in an unsupervised and data-driven manner from raw text
  – making as general and as language-independent assumptions as possible.
• Evaluate
  – against a gold-standard morphological analysis of word forms (Hutmegs)
  – integrated in NLP applications (e.g., speech recognition)

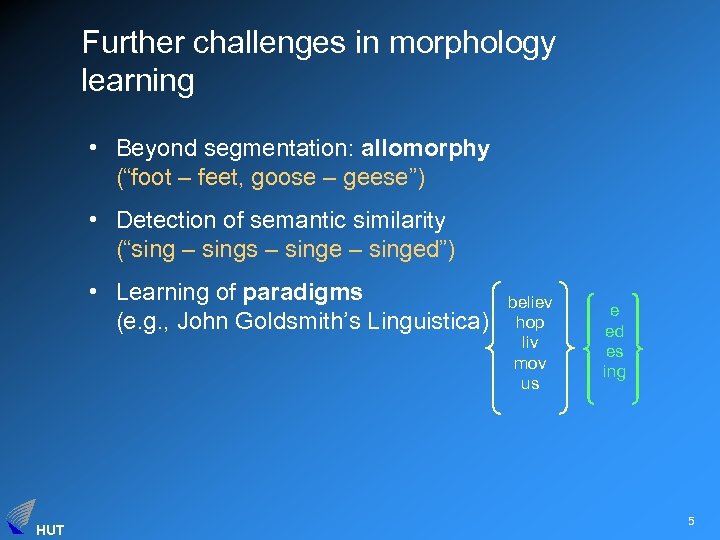

Further challenges in morphology learning

• Beyond segmentation: allomorphy (“foot – feet, goose – geese”)
• Detection of semantic similarity (“sing – sings – singed”)
• Learning of paradigms (e.g., John Goldsmith’s Linguistica)
  – Example paradigm: stems {believ, hop, liv, mov, us} combined with suffixes {e, ed, es, ing}

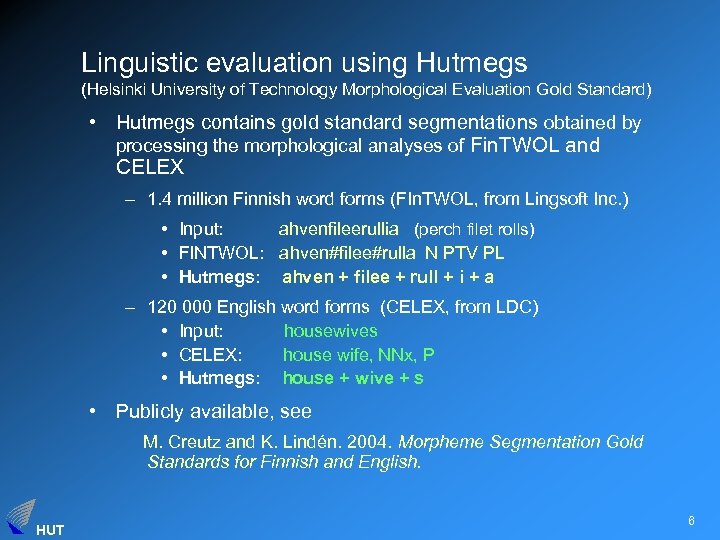

Linguistic evaluation using Hutmegs (Helsinki University of Technology Morphological Evaluation Gold Standard)

• Hutmegs contains gold standard segmentations obtained by processing the morphological analyses of FINTWOL and CELEX
  – 1.4 million Finnish word forms (FINTWOL, from Lingsoft Inc.)
    • Input: ahvenfileerullia (perch filet rolls)
    • FINTWOL: ahven#filee#rulla N PTV PL
    • Hutmegs: ahven + filee + rull + i + a
  – 120 000 English word forms (CELEX, from LDC)
    • Input: housewives
    • CELEX: house wife, NNx, P
    • Hutmegs: house + wive + s
• Publicly available, see M. Creutz and K. Lindén. 2004. Morpheme Segmentation Gold Standards for Finnish and English.

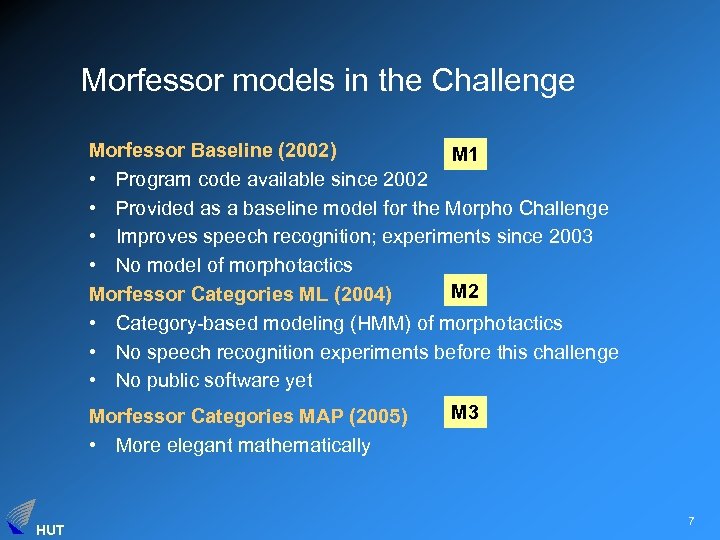

Morfessor models in the Challenge

M1: Morfessor Baseline (2002)
• Program code available since 2002
• Provided as a baseline model for the Morpho Challenge
• Improves speech recognition; experiments since 2003
• No model of morphotactics

M2: Morfessor Categories-ML (2004)
• Category-based modeling (HMM) of morphotactics
• No speech recognition experiments before this challenge
• No public software yet

M3: Morfessor Categories-MAP (2005)
• More elegant mathematically

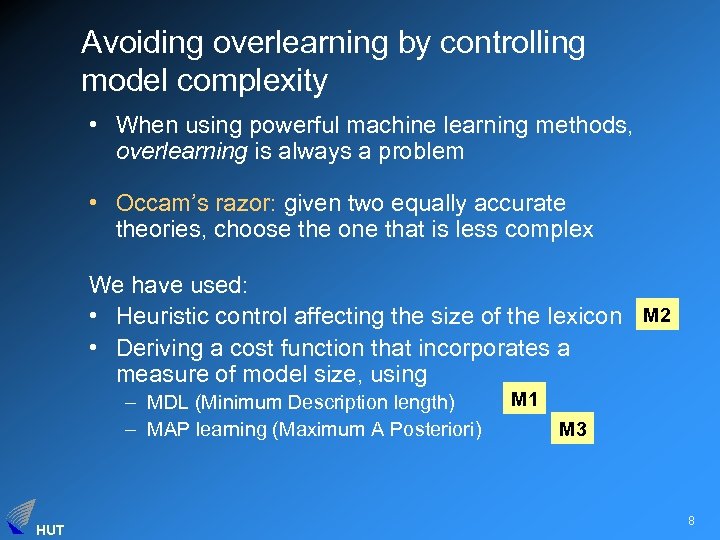

Avoiding overlearning by controlling model complexity

• When using powerful machine learning methods, overlearning is always a problem
• Occam’s razor: given two equally accurate theories, choose the one that is less complex

We have used:
• Heuristic control affecting the size of the lexicon (M2)
• Deriving a cost function that incorporates a measure of model size, using
  – MDL (Minimum Description Length) (M1)
  – MAP learning (Maximum A Posteriori) (M3)

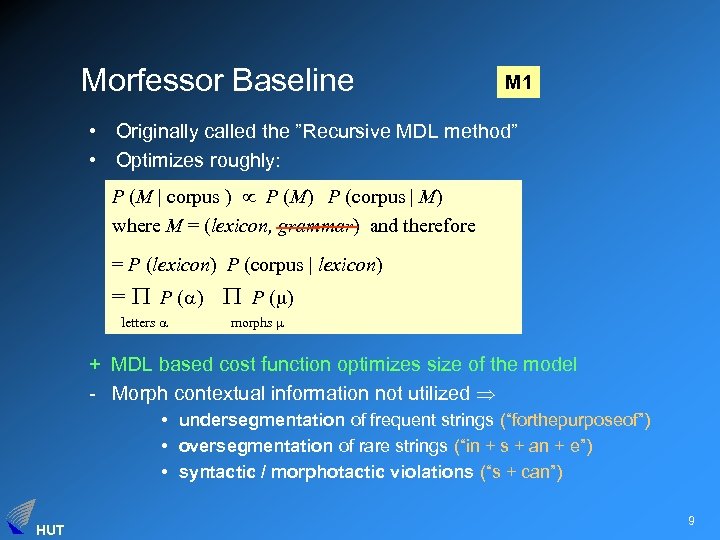

Morfessor Baseline (M1)

• Originally called the “Recursive MDL method”
• Optimizes roughly:
  $P(M \mid \text{corpus}) \propto P(M)\,P(\text{corpus} \mid M)$, where $M = (\text{lexicon}, \text{grammar})$,
  and therefore
  $= P(\text{lexicon})\,P(\text{corpus} \mid \text{lexicon}) = \prod_{\text{letters } \alpha} P(\alpha) \cdot \prod_{\text{morphs } \mu} P(\mu)$
+ MDL-based cost function optimizes the size of the model
– Morph contextual information not utilized
  • undersegmentation of frequent strings (“forthepurposeof”)
  • oversegmentation of rare strings (“in + s + an + e”)
  • syntactic / morphotactic violations (“s + can”)
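
The two-part cost above can be illustrated with a minimal sketch. It assumes maximum-likelihood estimates for the letter and morph probabilities and an end-of-morph marker `#`; the function name `description_length` and the toy word lists are hypothetical, and the published model contains further terms that are left out here.

```python
import math
from collections import Counter

def description_length(segmented_corpus):
    """Code length in bits: lexicon (spelled in letters) + corpus (morph tokens)."""
    morph_tokens = [m for word in segmented_corpus for m in word]
    morph_counts = Counter(morph_tokens)

    # Lexicon cost: each distinct morph is spelled out once, letter by letter,
    # followed by an assumed end-of-morph marker "#".
    letters = [ch for morph in morph_counts for ch in morph + "#"]
    letter_counts = Counter(letters)
    lexicon_cost = -sum(
        c * math.log2(c / len(letters)) for c in letter_counts.values()
    )

    # Corpus cost: every morph token is coded with its relative frequency.
    corpus_cost = -sum(
        c * math.log2(c / len(morph_tokens)) for c in morph_counts.values()
    )
    return lexicon_cost + corpus_cost

# Toy data (not from the talk): the same four words, unsplit and split.
unsplit = [["opening"], ["opened"], ["reopened"], ["reopening"]]
split = [["open", "ing"], ["open", "ed"],
         ["re", "open", "ed"], ["re", "open", "ing"]]

print(description_length(unsplit), description_length(split))
```

On this toy data the split analysis is cheaper, because the shared morphs are spelled only once in the lexicon.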

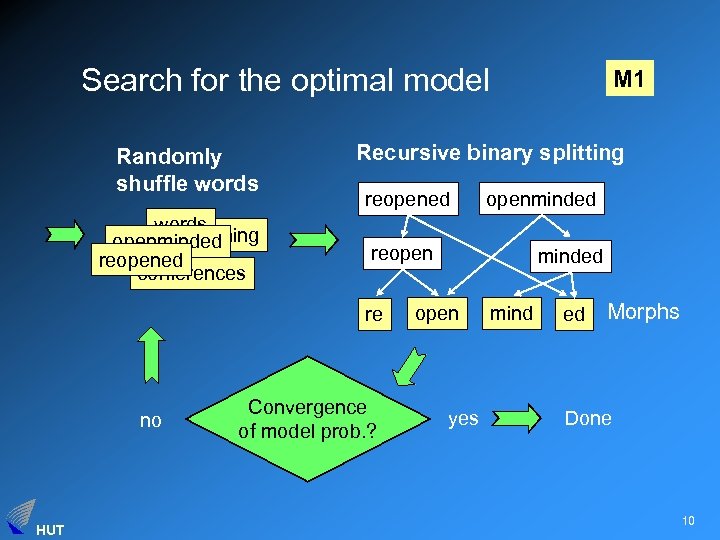

Search for the optimal model (M1)

[Flowchart]
1. Randomly shuffle words (e.g., opening, openminded, reopened, conferences)
2. Recursive binary splitting, e.g.:
   – reopened → reopen + ed; reopen → re + open
   – openminded → open + minded; minded → mind + ed
3. Convergence of model prob.? no: repeat; yes: done
Output: morphs
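
The search loop can be sketched as follows. This is a simplified illustration, not the released Morfessor code: the cost function is a toy two-part cost with an assumed flat price per letter (`BITS_PER_LETTER`), each split decision moves a single occurrence of a morph, and all function names are hypothetical.

```python
import math
import random
from collections import Counter

BITS_PER_LETTER = 5.0  # assumed flat cost of storing one letter in the lexicon

def cost(counts):
    """Toy two-part cost in bits: lexicon spelling cost plus corpus code length."""
    live = {m: c for m, c in counts.items() if c > 0}
    total = sum(live.values())
    lexicon = sum(BITS_PER_LETTER * (len(m) + 1) for m in live)
    corpus = -sum(c * math.log2(c / total) for c in live.values())
    return lexicon + corpus

def split_morph(morph, counts):
    """Pick the cheapest binary split of one occurrence (or none), then recurse."""
    best_cost, best_i = cost(counts), None
    for i in range(1, len(morph)):
        trial = counts.copy()
        trial[morph] -= 1
        trial[morph[:i]] += 1
        trial[morph[i:]] += 1
        trial_cost = cost(trial)
        if trial_cost < best_cost:
            best_cost, best_i = trial_cost, i
    if best_i is None:
        return [morph]  # keeping the morph whole is cheapest
    left, right = morph[:best_i], morph[best_i:]
    counts[morph] -= 1
    counts[left] += 1
    counts[right] += 1
    return split_morph(left, counts) + split_morph(right, counts)

def train(words, max_epochs=10, tolerance=1e-6, seed=0):
    """Shuffle, re-split every word, and stop when the cost no longer improves."""
    rng = random.Random(seed)
    counts, segmentation = Counter(), {}
    previous = math.inf
    for _ in range(max_epochs):
        order = list(words)
        rng.shuffle(order)
        for word in order:
            for morph in segmentation.get(word, []):
                counts[morph] -= 1  # retract the word's old analysis
            counts[word] += 1
            segmentation[word] = split_morph(word, counts)
        current = cost(counts)
        if previous - current < tolerance:
            break
        previous = current
    return segmentation

print(train(["opening", "openminded", "reopened", "conferences"]))
```

The segmentations found depend on the toy cost and the shuffle order, so the sketch is meant to show the control flow of the flowchart rather than to reproduce Morfessor output.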

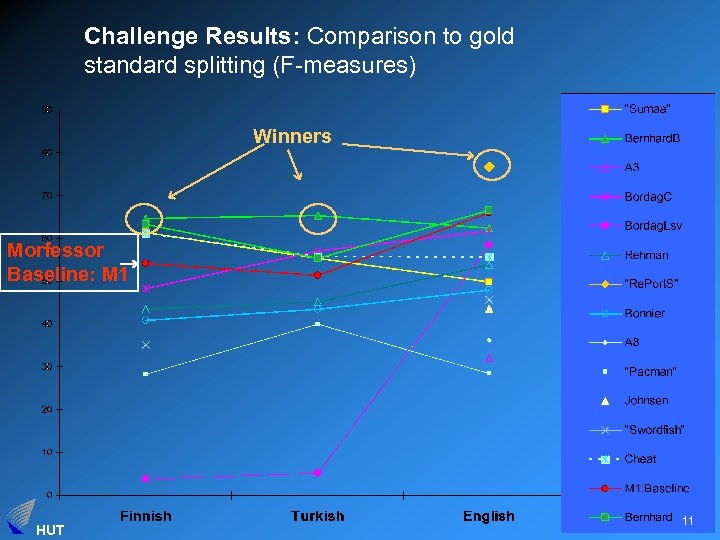

Challenge Results: Comparison to gold standard splitting (F-measures)

[Figure labels: Winners; Morfessor Baseline: M1]
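
For readers unfamiliar with the metric, here is a minimal sketch of a boundary-based F-measure. It assumes that precision and recall are counted over morph boundary positions inside each word; the exact protocol used in the Challenge is not spelled out on the slide, and the function names and the "predicted" segmentations are hypothetical. The gold segmentations are the two Hutmegs examples quoted earlier in the talk.

```python
def boundaries(morphs):
    """Character offsets at which a morph boundary falls inside the word."""
    cuts, position = set(), 0
    for morph in morphs[:-1]:
        position += len(morph)
        cuts.add(position)
    return cuts

def f_measure(predicted, gold):
    """predicted, gold: dicts mapping each word to its list of morphs."""
    hits = n_predicted = n_gold = 0
    for word, gold_morphs in gold.items():
        p, g = boundaries(predicted[word]), boundaries(gold_morphs)
        hits += len(p & g)
        n_predicted += len(p)
        n_gold += len(g)
    precision = hits / n_predicted if n_predicted else 0.0
    recall = hits / n_gold if n_gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)

gold = {
    "ahvenfileerullia": ["ahven", "filee", "rull", "i", "a"],
    "housewives": ["house", "wive", "s"],
}
predicted = {  # made-up system output for illustration
    "ahvenfileerullia": ["ahven", "filee", "rulli", "a"],
    "housewives": ["house", "wives"],
}
precision, recall, f = f_measure(predicted, gold)
print(precision, recall, f)  # precision 1.0, recall 4/6, F-measure 0.8
```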

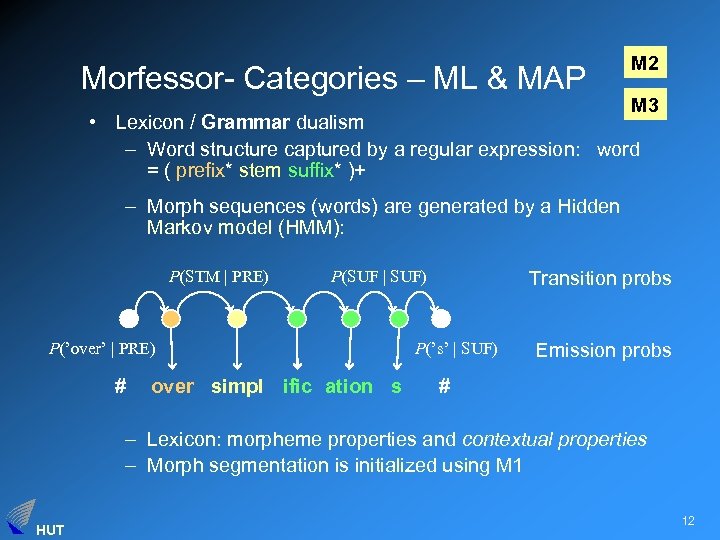

Morfessor Categories: ML & MAP (M2, M3)

• Lexicon / Grammar dualism
  – Word structure captured by a regular expression: word = ( prefix* stem suffix* )+
  – Morph sequences (words) are generated by a Hidden Markov model (HMM), e.g., # over simpl ific ation s #
    • Transition probs: P(STM | PRE), P(SUF | SUF)
    • Emission probs: P('over' | PRE), P('s' | SUF)
  – Lexicon: morpheme properties and contextual properties
  – Morph segmentation is initialized using M1
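
A small sketch of the two ingredients named above: the regular expression over category sequences and the HMM probability of a tagged morph sequence. The one-letter tag encoding, the function names, and every probability value are made up for illustration; only the category names (PRE, STM, SUF), the word boundary `#`, and the example word come from the slide.

```python
import math
import re

# One letter per category: P = prefix, M = stem, S = suffix.
TAG_CODE = {"PRE": "P", "STM": "M", "SUF": "S"}
WORD_PATTERN = re.compile(r"(P*MS*)+")  # word = ( prefix* stem suffix* )+

def is_valid(tags):
    """True if the category sequence matches ( prefix* stem suffix* )+."""
    code = "".join(TAG_CODE[t] for t in tags)
    return WORD_PATTERN.fullmatch(code) is not None

def log_prob(morphs, tags, transition, emission):
    """Log-probability of a tagged morph sequence, with '#' as word boundary."""
    path = ["#"] + list(tags) + ["#"]
    lp = sum(math.log(transition[(a, b)]) for a, b in zip(path, path[1:]))
    lp += sum(math.log(emission[(m, t)]) for m, t in zip(morphs, tags))
    return lp

morphs = ["over", "simpl", "ific", "ation", "s"]
tags = ["PRE", "STM", "SUF", "SUF", "SUF"]

# Hypothetical probabilities, chosen only to make the example run.
transition = {("#", "PRE"): 0.2, ("PRE", "STM"): 0.9, ("STM", "SUF"): 0.5,
              ("SUF", "SUF"): 0.3, ("SUF", "#"): 0.7}
emission = {("over", "PRE"): 0.1, ("simpl", "STM"): 0.01,
            ("ific", "SUF"): 0.05, ("ation", "SUF"): 0.1, ("s", "SUF"): 0.2}

print(is_valid(tags), log_prob(morphs, tags, transition, emission))
```

The pattern rejects sequences such as a suffix before any stem, which is how a category model can rule out analyses like “s + can”.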

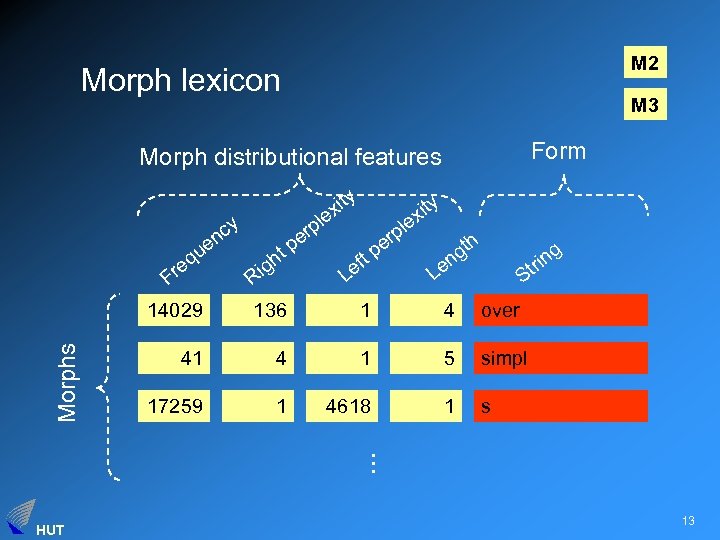

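Morph lexicon (M2, M3)

Each morph entry stores its form (string) and its morph distributional features.
Columns: Frequency, Right perplexity, Left perplexity, Length, String
• simpl: 41, 4, 1, 5
• s: 17259, 1, 4618, 1
• over: 136, then the values 1 and 4 (one cell is missing in the extracted text)
• ... (a further frequency value, 14029, belongs to a row whose other cells are missing)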

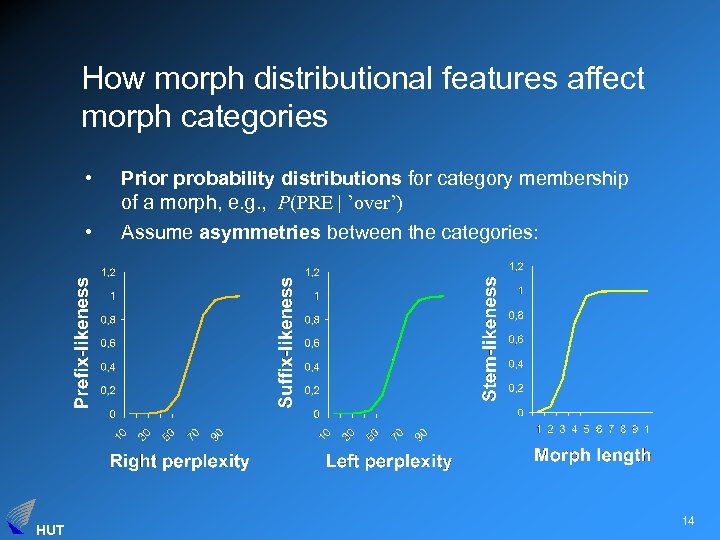

How morph distributional features affect morph categories

• Prior probability distributions for category membership of a morph, e.g., P(PRE | 'over')
• Assume asymmetries between the categories:

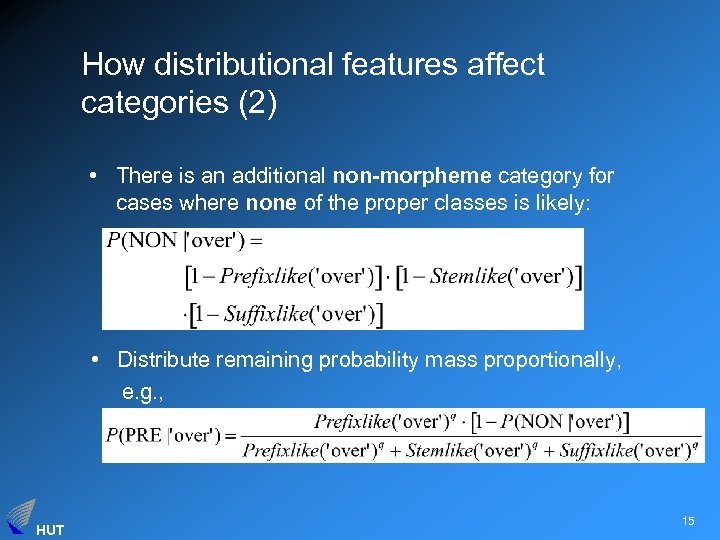

How distributional features affect categories (2)

• There is an additional non-morpheme category for cases where none of the proper classes is likely:
• Distribute remaining probability mass proportionally, e.g.,

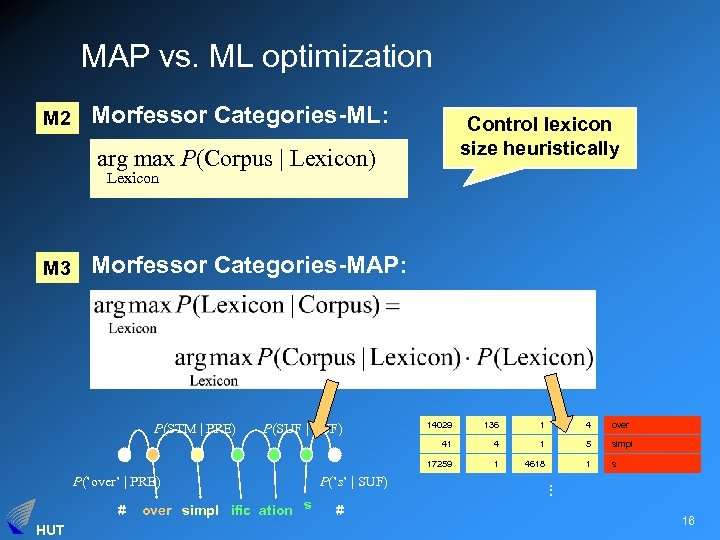

MAP vs. ML optimization

• M2, Morfessor Categories-ML: control lexicon size heuristically
  $\arg\max_{\text{Lexicon}} P(\text{Corpus} \mid \text{Lexicon})$
• M3, Morfessor Categories-MAP:
  [Figure: the HMM example # over simpl ific ation s #, with P(STM | PRE), P(SUF | SUF), P('over' | PRE) and P('s' | SUF), shown next to the morph lexicon table]
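
For reference, the textbook forms of the two criteria, written with the slide's Corpus and Lexicon symbols, are given below. The MAP line is the standard definition obtained from Bayes' rule, not necessarily the exact expression shown on the slide; the prior $P(\text{Lexicon})$ is the term that penalizes large lexicons.

```latex
\begin{align}
\text{ML:}\quad  & \arg\max_{\text{Lexicon}} P(\text{Corpus} \mid \text{Lexicon}) \\
\text{MAP:}\quad & \arg\max_{\text{Lexicon}} P(\text{Lexicon} \mid \text{Corpus})
  = \arg\max_{\text{Lexicon}} P(\text{Corpus} \mid \text{Lexicon})\, P(\text{Lexicon})
\end{align}
```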

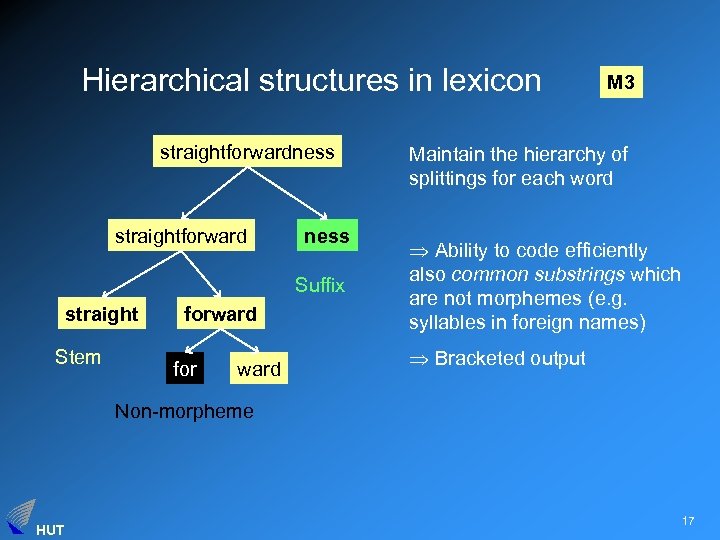

Hierarchical structures in lexicon (M3)

[Tree diagram]
• straightforwardness → straightforward + ness
• straightforward → straight + forward
• forward → for + ward
• Node labels in the diagram: Stem (straight), Suffix (ness), Non-morpheme

• Maintain the hierarchy of splittings for each word
• Ability to code efficiently also common substrings which are not morphemes (e.g., syllables in foreign names)
• Bracketed output
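
One simple way to picture the hierarchy and its bracketed output is a nested tuple per word. This representation and the helper names are assumptions made for illustration, not the data structure used in Morfessor.

```python
def bracketed(node):
    """Render a nested segmentation with one pair of brackets per split."""
    if isinstance(node, str):
        return node
    return "[ " + " ".join(bracketed(child) for child in node) + " ]"

def leaves(node):
    """Flatten the hierarchy to its finest-grained units."""
    if isinstance(node, str):
        return [node]
    return [unit for child in node for unit in leaves(child)]

# straightforwardness = (straight + (for + ward)) + ness
tree = (("straight", ("for", "ward")), "ness")

print(bracketed(tree))  # [ [ straight [ for ward ] ] ness ]
print(leaves(tree))     # ['straight', 'for', 'ward', 'ness']
```

Keeping the whole tree lets an application choose how deep to expand a word, for example stopping above units that are not morphemes.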

Example segmentations (M3)

Finnish:
• [ aarre kammio ] issa
• [ aarre kammio ] on
• bahama laiset
• bahama [ saari en ]
• [ epä [ [ tasa paino ] inen ] ]
• maclare n
• [ nais [ autoili ja ] ] a
• [ sano ttiin ] ko
• töhri ( mis istä )
• [ [ voi mme ] ko ]

English:
• [ accomplish es ]
• [ accomplish ment ]
• [ beautiful ly ]
• [ insur ed ]
• [ insure s ]
• [ insur ing ]
• [ [ [ photo graph ] er ] s ]
• [ present ly ] found
• [ re siding ]
• [ [ un [ expect ed ] ] ly ]

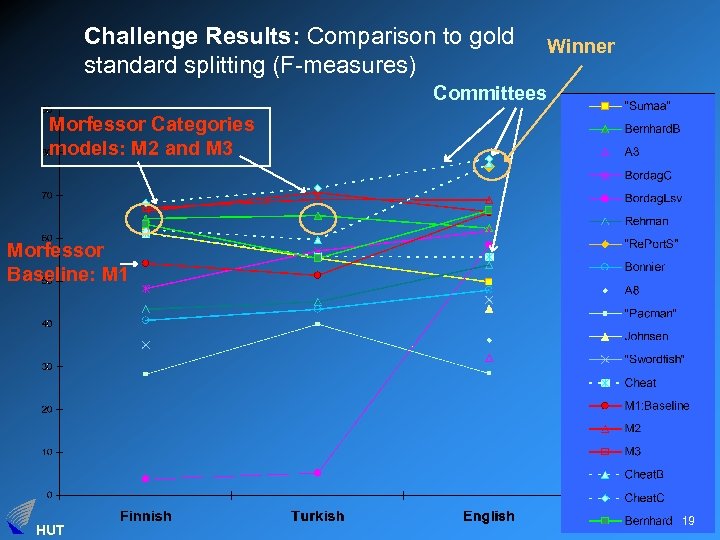

Challenge Results: Comparison to gold standard splitting (F-measures)

[Figure labels: Winner; Committees; Morfessor Categories models: M2 and M3; Morfessor Baseline: M1]

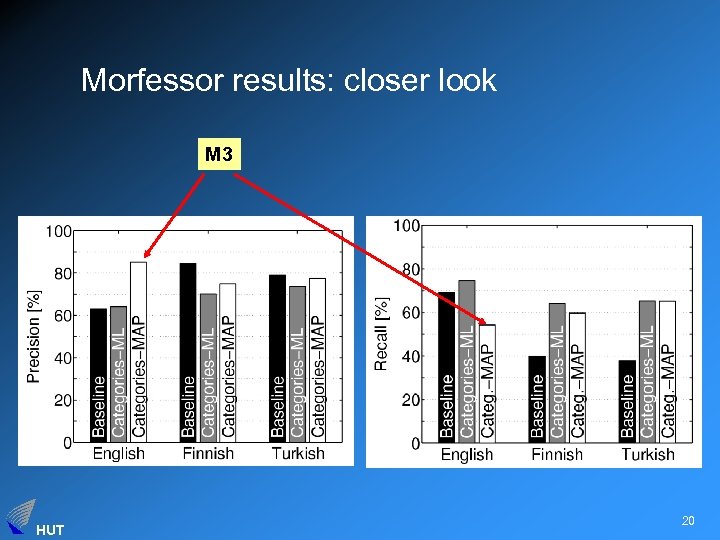

Morfessor results: closer look (M3)

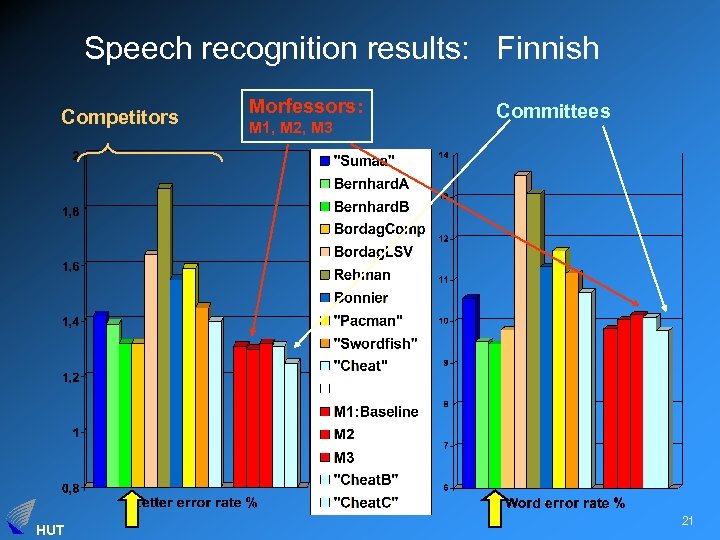

Speech recognition results: Finnish

[Figure labels: Competitors; Morfessors: M1, M2, M3; Committees]

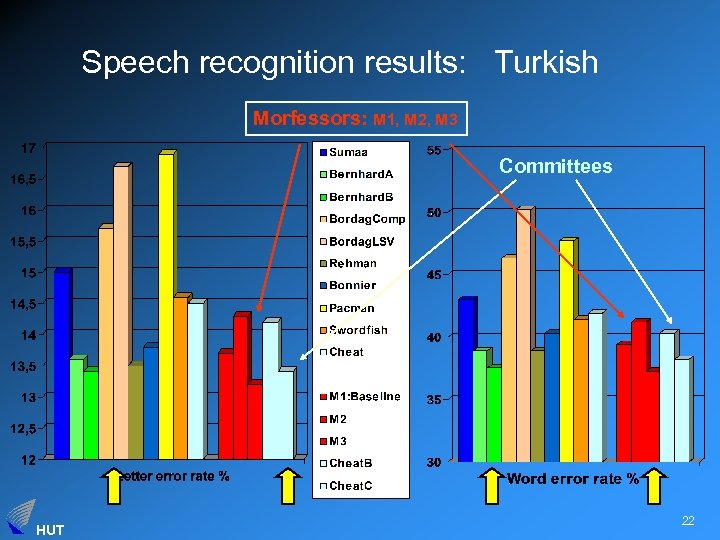

Speech recognition results: Turkish

[Figure labels: Morfessors: M1, M2, M3; Committees]

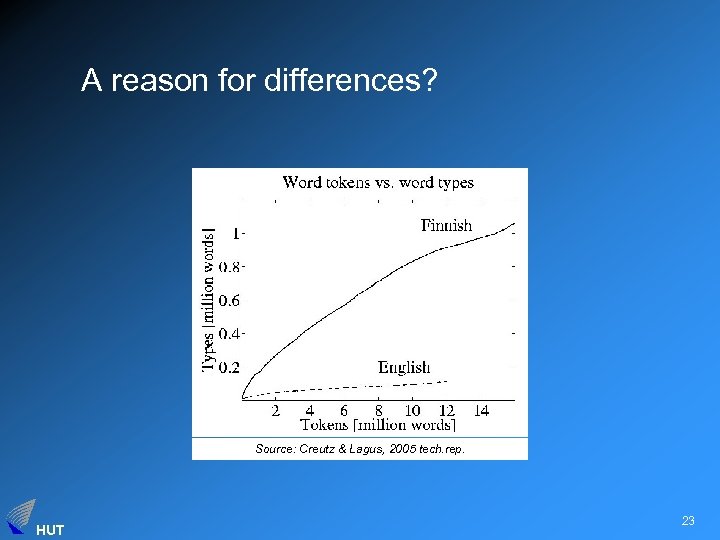

A reason for differences?

Source: Creutz & Lagus, 2005 tech. rep.

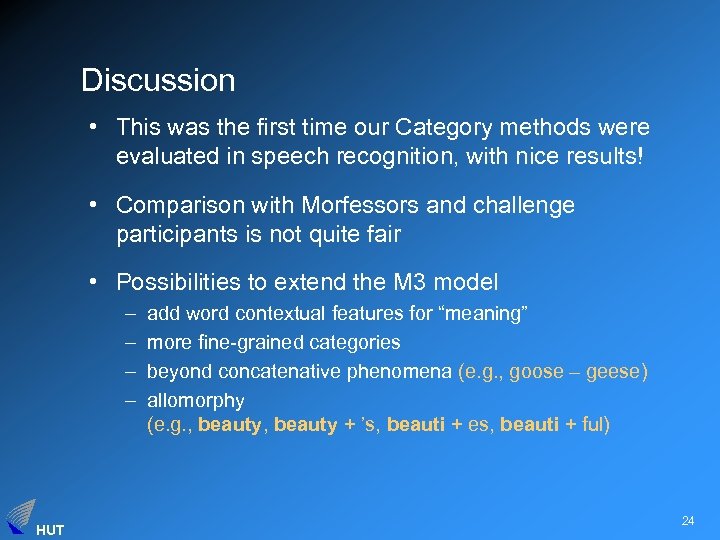

Discussion

• This was the first time our Category methods were evaluated in speech recognition, with nice results!
• Comparison between the Morfessors and the challenge participants is not quite fair
• Possibilities to extend the M3 model
  – add word contextual features for “meaning”
  – more fine-grained categories
  – beyond concatenative phenomena (e.g., goose – geese)
  – allomorphy (e.g., beauty + ’s, beauti + es, beauti + ful)

Questions for the Morpho Challenge

• How language-general in fact are the methods?
  – Norwegian, French, German, Arabic, ...
• Did we, or can we succeed in inducing “basic units of meaning”?
  – Evaluation in other NLP problems: MT, IR, QA, TE, ...
  – Application of morphs to non-NLP problems? Machine vision, image analysis, video analysis...
• Will there be another Morpho Challenge?

See you in another challenge!

Best wishes, Krista (and Sade)

My notes

– briefly describe our own methods
– discuss how our own methods differ in relation to their properties
– be humble, point out why the comparison is unfair (prior experience; our own data; and speech recognition is also a familiar application, so that group's earlier research may have indirectly influenced our method development)
+ discussion of the differences between our methods?
+ example segmentations from all of our methods?
+ discussion material from Mikko's paper and our paper
+ the first results figure is currently confusing
+ colors differ from the other figures: change the colors and duplicate the slide, make the winner stand out better

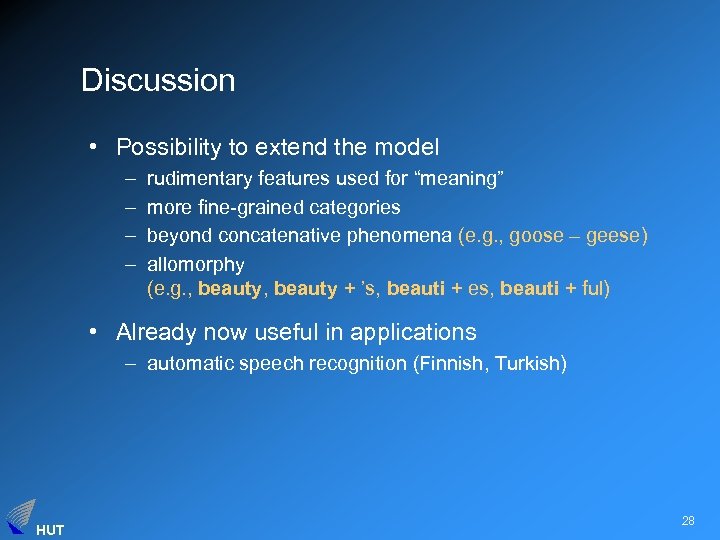

Discussion

• Possibility to extend the model
  – rudimentary features used for “meaning”
  – more fine-grained categories
  – beyond concatenative phenomena (e.g., goose – geese)
  – allomorphy (e.g., beauty + ’s, beauti + es, beauti + ful)
• Already now useful in applications
  – automatic speech recognition (Finnish, Turkish)

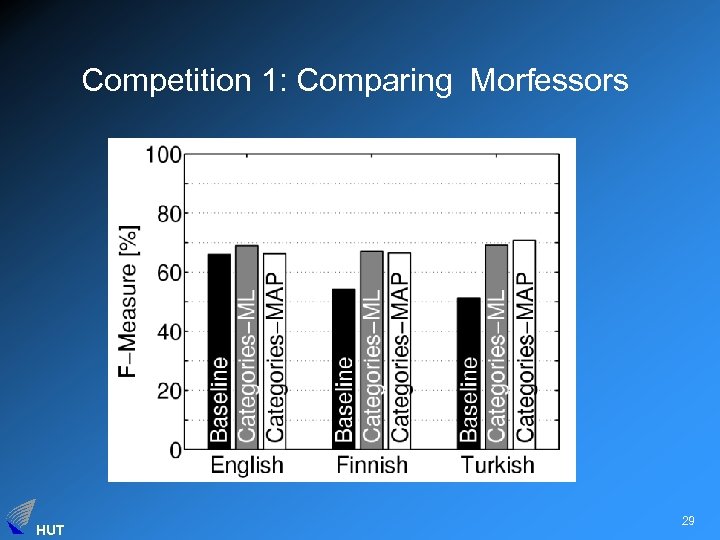

Competition 1: Comparing Morfessors

Overview of methods

• Machine learning methodology
• Features used: information on
  – morph contexts (Bernard, Morfessor)
  – word contexts (Bordag)

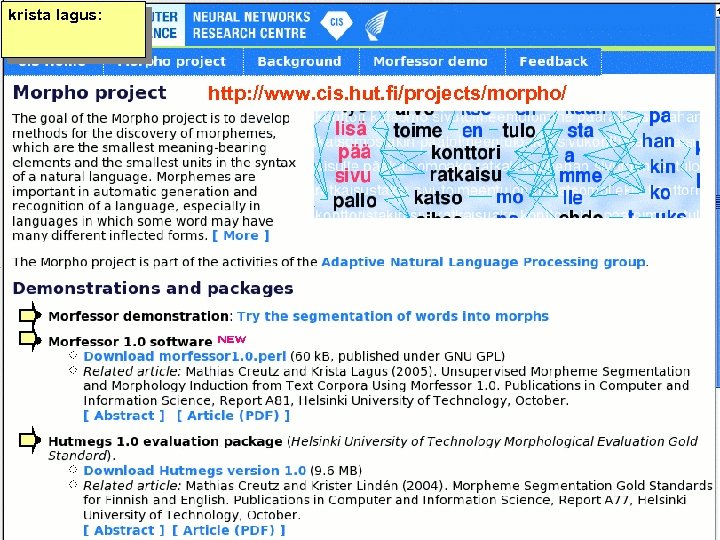

Krista Lagus: Morpho project page
http://www.cis.hut.fi/projects/morpho/

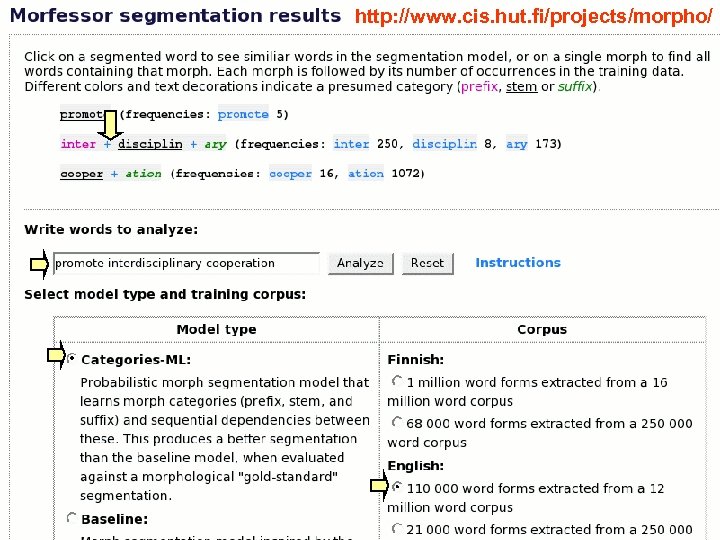

Demo 6
http://www.cis.hut.fi/projects/morpho/

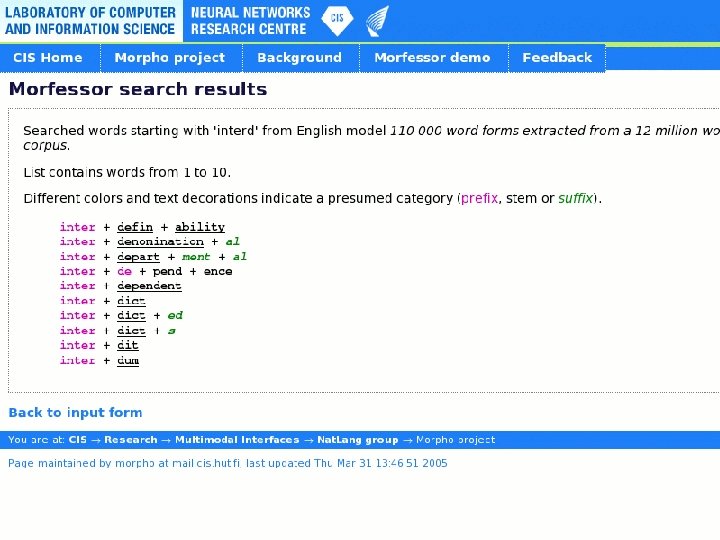

Demo 7
