
- Number of slides: 29

Grid Production Experience in the ATLAS Experiment

Kaushik De, University of Texas at Arlington

BNL Technology Meeting, March 29, 2004


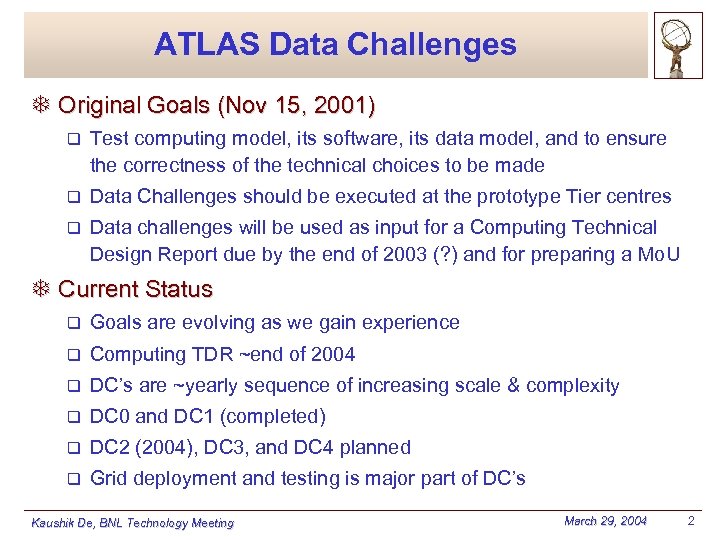

ATLAS Data Challenges

- Original Goals (Nov 15, 2001)
  - Test computing model, its software, its data model, and to ensure the correctness of the technical choices to be made
  - Data Challenges should be executed at the prototype Tier centres
  - Data Challenges will be used as input for a Computing Technical Design Report due by the end of 2003 (?) and for preparing a MoU
- Current Status
  - Goals are evolving as we gain experience
  - Computing TDR ~end of 2004
  - DC's are ~yearly sequence of increasing scale & complexity
  - DC0 and DC1 (completed)
  - DC2 (2004), DC3, and DC4 planned
  - Grid deployment and testing is major part of DC's

Kaushik De, BNL Technology Meeting, March 29, 2004


ATLAS DC1: July 2002 - April 2003

Goals:

- Produce the data needed for the HLT TDR
- Get as many ATLAS institutes involved as possible

Worldwide collaborative activity

Participation: 56 institutes (* using Grid)

- Australia, Austria, Canada, CERN, China, Czech Republic, Denmark *, France, Germany, Greece, Israel, Italy, Japan, Norway *, Poland, Russia, Spain, Sweden *, Taiwan, UK, USA *


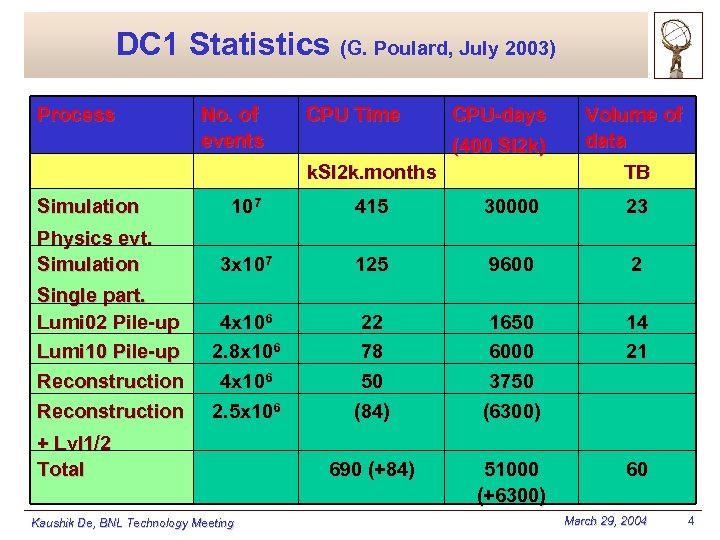

DC1 Statistics (G. Poulard, July 2003)

| Process | No. of events | CPU time (kSI2k.months) | CPU-days (400 SI2k) | Volume of data (TB) |
|---|---|---|---|---|
| Simulation, physics evt. | 10^7 | 415 | 30000 | 23 |
| Simulation, single part. | 3 x 10^7 | 125 | 9600 | 2 |
| Lumi02 pile-up | 4 x 10^6 | 22 | 1650 | 14 |
| Lumi10 pile-up | 2.8 x 10^6 | 78 | 6000 | 21 |
| Reconstruction | 4 x 10^6 | 50 | 3750 | |
| Reconstruction + Lvl 1/2 | 2.5 x 10^6 | (84) | (6300) | |
| Total | | 690 (+84) | 51000 (+6300) | 60 |
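
The CPU-days figures in the DC1 statistics appear to follow from the kSI2k.months figures by scaling to a 400 SI2k reference CPU. The sketch below shows that arithmetic. The 30-day month and the function name are assumptions made for illustration, not something stated on the slide; the smaller entries (22, 50, 84) reproduce exactly under that assumption, while the larger ones look rounded.

```python
# Convert CPU time in kSI2k.months to CPU-days on a 400 SI2k reference CPU.
# Assumption (not stated on the slide): one month is counted as 30 days.
REFERENCE_SI2K = 400
DAYS_PER_MONTH = 30


def ksi2k_months_to_cpu_days(ksi2k_months: float) -> float:
    """Return the equivalent number of days on one 400 SI2k CPU."""
    si2k_months = ksi2k_months * 1000
    return si2k_months / REFERENCE_SI2K * DAYS_PER_MONTH


for process, ksi2k_months in [("Lumi02 pile-up", 22), ("Reconstruction", 50)]:
    print(process, ksi2k_months_to_cpu_days(ksi2k_months))
```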


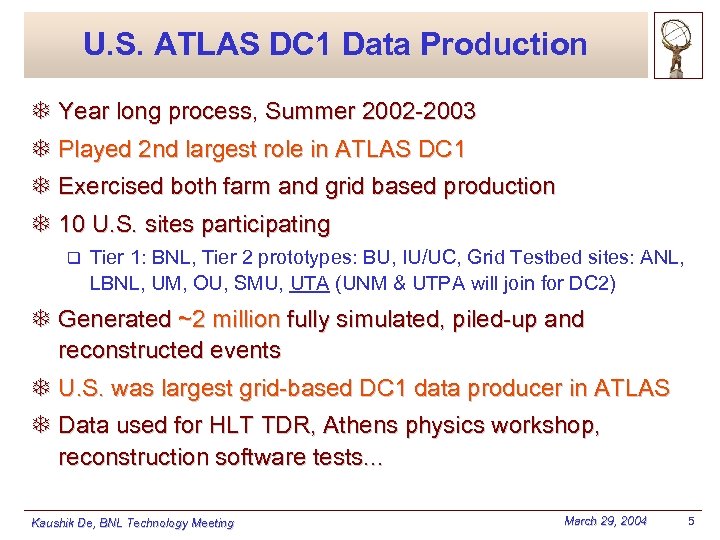

U.S. ATLAS DC1 Data Production

- Year long process, Summer 2002 - 2003
- Played 2nd largest role in ATLAS DC1
- Exercised both farm and grid based production
- 10 U.S. sites participating
  - Tier 1: BNL; Tier 2 prototypes: BU, IU/UC; Grid Testbed sites: ANL, LBNL, UM, OU, SMU, UTA (UNM & UTPA will join for DC2)
- Generated ~2 million fully simulated, piled-up and reconstructed events
- U.S. was largest grid-based DC1 data producer in ATLAS
- Data used for HLT TDR, Athens physics workshop, reconstruction software tests...


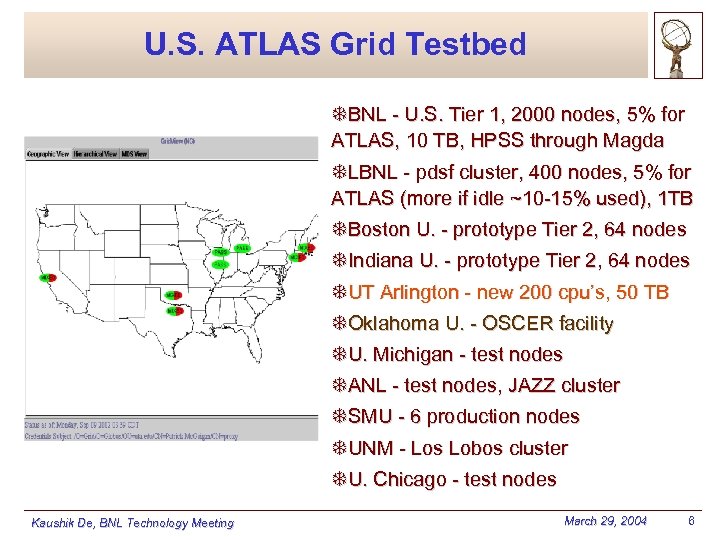

U.S. ATLAS Grid Testbed

- BNL - U.S. Tier 1, 2000 nodes, 5% for ATLAS, 10 TB, HPSS through Magda
- LBNL - pdsf cluster, 400 nodes, 5% for ATLAS (more if idle, ~10-15% used), 1 TB
- Boston U. - prototype Tier 2, 64 nodes
- Indiana U. - prototype Tier 2, 64 nodes
- UT Arlington - new 200 CPUs, 50 TB
- Oklahoma U. - OSCER facility
- U. Michigan - test nodes
- ANL - test nodes, JAZZ cluster
- SMU - 6 production nodes
- UNM - Los Lobos cluster
- U. Chicago - test nodes


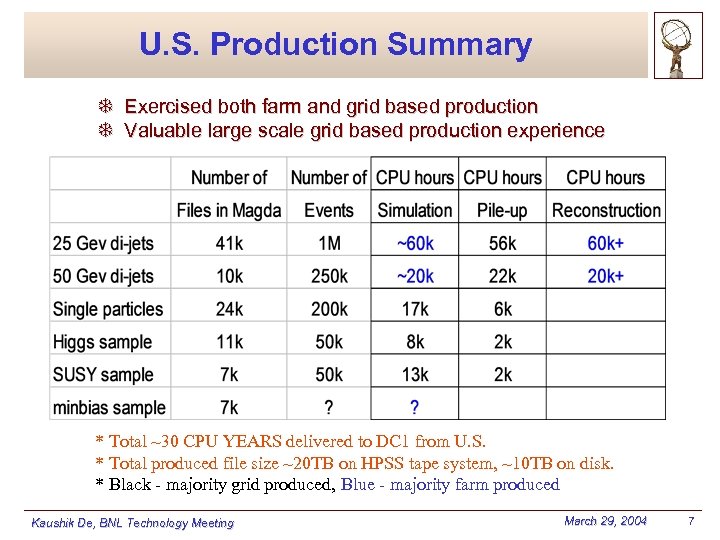

U.S. Production Summary

- Exercised both farm and grid based production
- Valuable large scale grid based production experience

Notes:

- Total ~30 CPU years delivered to DC1 from U.S.
- Total produced file size ~20 TB on HPSS tape system, ~10 TB on disk
- Color key: black - majority grid produced, blue - majority farm produced


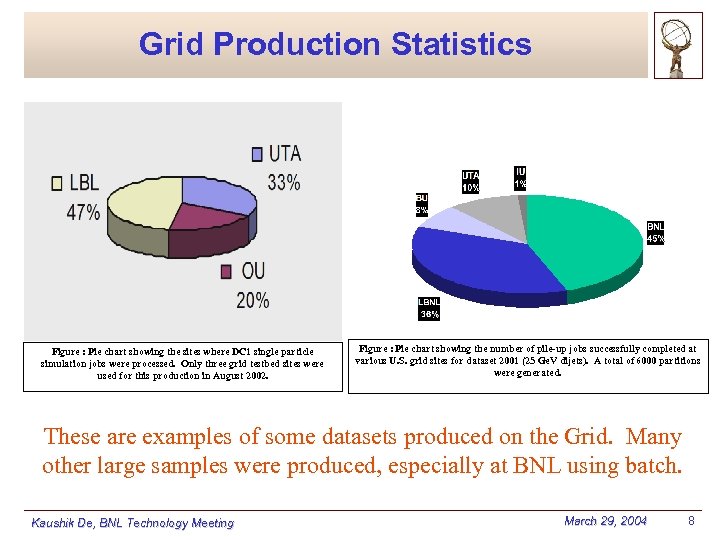

Grid Production Statistics

Figure: Pie chart showing the sites where DC1 single particle simulation jobs were processed. Only three grid testbed sites were used for this production in August 2002.

Figure: Pie chart showing the number of pile-up jobs successfully completed at various U.S. grid sites for dataset 2001 (25 GeV dijets). A total of 6000 partitions were generated.

These are examples of some datasets produced on the Grid. Many other large samples were produced, especially at BNL using batch.


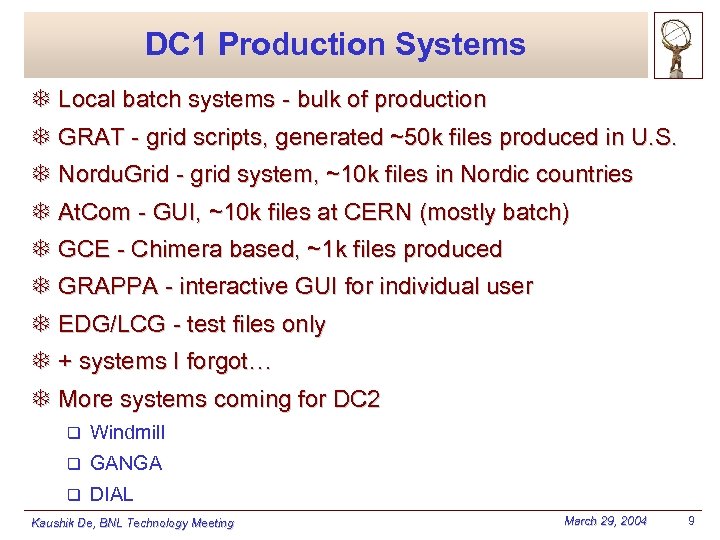

DC1 Production Systems

- Local batch systems - bulk of production
- GRAT - grid scripts, ~50k files produced in the U.S.
- NorduGrid - grid system, ~10k files in Nordic countries
- AtCom - GUI, ~10k files at CERN (mostly batch)
- GCE - Chimera based, ~1k files produced
- GRAPPA - interactive GUI for individual user
- EDG/LCG - test files only
- + systems I forgot…
- More systems coming for DC2
  - Windmill
  - GANGA
  - DIAL


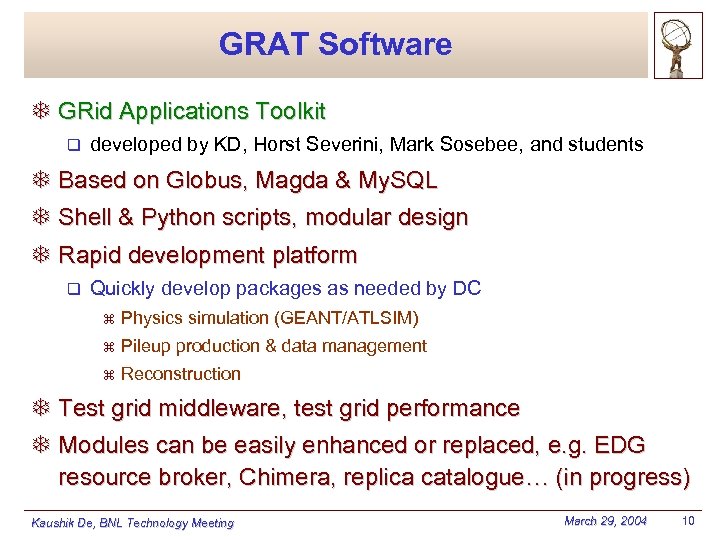

GRAT Software

- GRid Applications Toolkit
  - developed by KD, Horst Severini, Mark Sosebee, and students
- Based on Globus, Magda & MySQL
- Shell & Python scripts, modular design
- Rapid development platform
  - Quickly develop packages as needed by DC
    - Physics simulation (GEANT/ATLSIM)
    - Pileup production & data management
    - Reconstruction
- Test grid middleware, test grid performance
- Modules can be easily enhanced or replaced, e.g. EDG resource broker, Chimera, replica catalogue… (in progress)


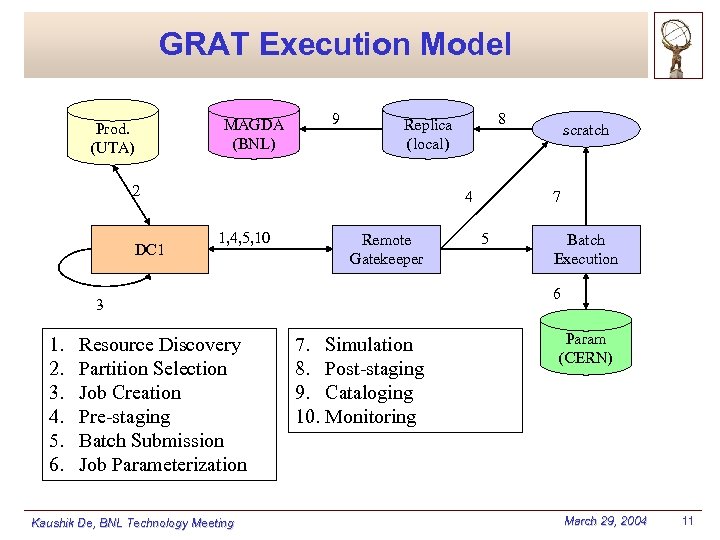

GRAT Execution Model

Diagram components: DC1, MAGDA (BNL), Prod. (UTA), Param (CERN), Remote Gatekeeper, Batch Execution, scratch, Replica (local). The arrows in the diagram are labelled with the step numbers below.

1. Resource Discovery
2. Partition Selection
3. Job Creation
4. Pre-staging
5. Batch Submission
6. Job Parameterization
7. Simulation
8. Post-staging
9. Cataloging
10. Monitoring
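
As a rough illustration of the ten numbered steps in the GRAT execution model, the sketch below runs them as a strict sequence for one job. This is a simplification and not GRAT code: the real system used shell and Python scripts against Globus, Magda and MySQL, and monitoring presumably ran throughout rather than last. `run_job`, `make_stub` and the job dictionary are hypothetical names introduced only for this example.

```python
from typing import Callable, Dict, List

# The ten steps named on the slide, in the order they are numbered.
GRAT_STEPS: List[str] = [
    "resource discovery",
    "partition selection",
    "job creation",
    "pre-staging",
    "batch submission",
    "job parameterization",
    "simulation",
    "post-staging",
    "cataloging",
    "monitoring",
]

Step = Callable[[dict], None]


def run_job(job: dict, handlers: Dict[str, Step]) -> List[str]:
    """Run every step in order and return the names of the completed steps.

    A failing step raises, so later steps (e.g. cataloging) never run
    for a job whose simulation did not finish.
    """
    completed: List[str] = []
    for name in GRAT_STEPS:
        handlers[name](job)
        completed.append(name)
    return completed


def make_stub(name: str) -> Step:
    """Hypothetical stand-in for a real step: it only records that it ran."""
    def stub(job: dict) -> None:
        job.setdefault("log", []).append(name)
    return stub


handlers = {name: make_stub(name) for name in GRAT_STEPS}
job = {"partition": 42}  # made-up partition number
done = run_job(job, handlers)
print(len(done), "steps completed; last:", done[-1])
```

Keeping each step behind a named handler mirrors the modular design the talk describes, where individual pieces can be swapped without touching the rest.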


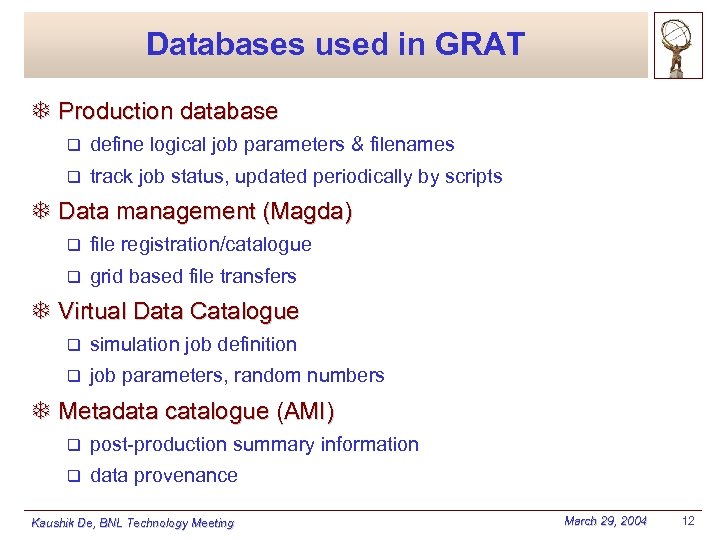

Databases used in GRAT

- Production database
  - define logical job parameters & filenames
  - track job status, updated periodically by scripts
- Data management (Magda)
  - file registration/catalogue
  - grid based file transfers
- Virtual Data Catalogue
  - simulation job definition
  - job parameters, random numbers
- Metadata catalogue (AMI)
  - post-production summary information
  - data provenance
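
To make the role of the production database concrete, here is a minimal sketch of a table that defines jobs and tracks their status. The schema, column names, status values and filenames are all invented for illustration, and SQLite stands in for the MySQL database that GRAT was based on.

```python
import sqlite3

# In-memory SQLite database as a stand-in for the real production database.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE jobs (
        partition_id INTEGER PRIMARY KEY,
        logical_file TEXT NOT NULL,
        status       TEXT NOT NULL DEFAULT 'defined'
    )
    """
)


def define_job(partition_id: int, logical_file: str) -> None:
    """Register the logical parameters and filename of one job."""
    conn.execute(
        "INSERT INTO jobs (partition_id, logical_file) VALUES (?, ?)",
        (partition_id, logical_file),
    )


def set_status(partition_id: int, status: str) -> None:
    """Update the status of a job, as a periodic script might do."""
    conn.execute(
        "UPDATE jobs SET status = ? WHERE partition_id = ?",
        (status, partition_id),
    )


def status_summary() -> dict:
    """Return the number of jobs in each status."""
    rows = conn.execute("SELECT status, COUNT(*) FROM jobs GROUP BY status")
    return dict(rows.fetchall())


for n in range(1, 4):
    define_job(n, f"sample.simul.{n:05d}")  # hypothetical logical filename
set_status(1, "running")
set_status(2, "done")
summary = status_summary()
print(summary)
```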


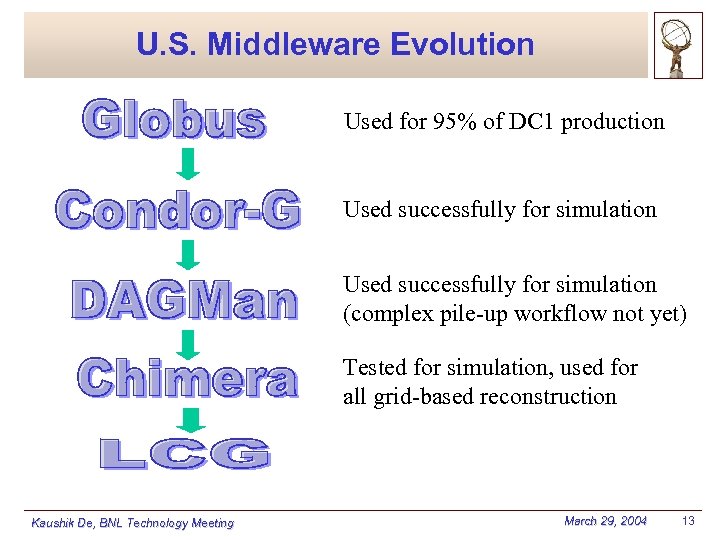

U.S. Middleware Evolution

- Used for 95% of DC1 production
- Used successfully for simulation (complex pile-up workflow not yet)
- Tested for simulation, used for all grid-based reconstruction


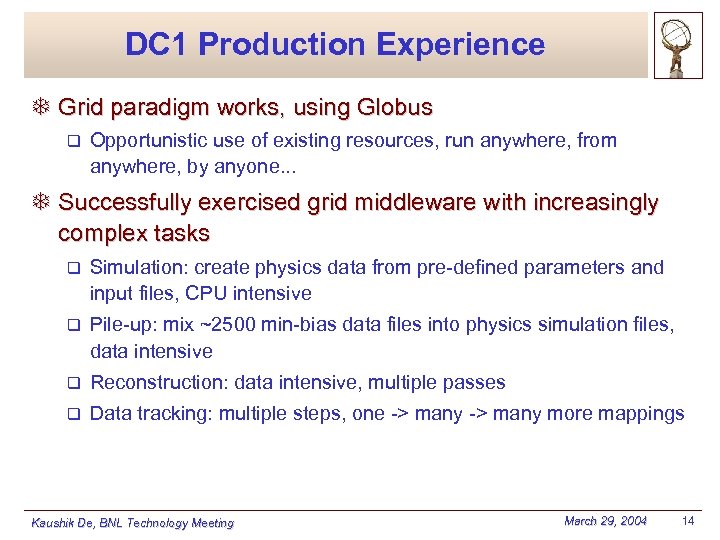

DC1 Production Experience

- Grid paradigm works, using Globus
  - Opportunistic use of existing resources, run anywhere, from anywhere, by anyone...
- Successfully exercised grid middleware with increasingly complex tasks
  - Simulation: create physics data from pre-defined parameters and input files, CPU intensive
  - Pile-up: mix ~2500 min-bias data files into physics simulation files, data intensive
  - Reconstruction: data intensive, multiple passes
  - Data tracking: multiple steps, one -> many more mappings


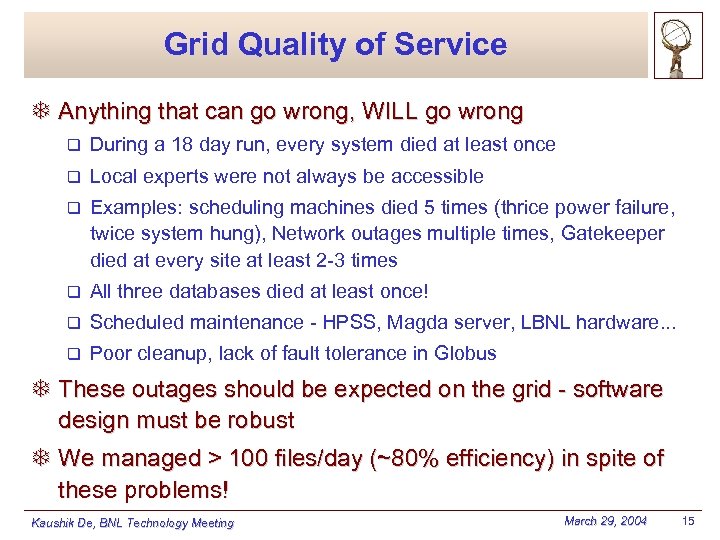

Grid Quality of Service

- Anything that can go wrong, WILL go wrong
  - During an 18-day run, every system died at least once
  - Local experts were not always accessible
  - Examples: scheduling machines died 5 times (thrice power failure, twice system hung), network outages multiple times, gatekeeper died at every site at least 2-3 times
  - All three databases died at least once!
  - Scheduled maintenance - HPSS, Magda server, LBNL hardware...
  - Poor cleanup, lack of fault tolerance in Globus
- These outages should be expected on the grid - software design must be robust
- We managed > 100 files/day (~80% efficiency) in spite of these problems!
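
One common way to make production scripts robust against the transient outages listed here is to retry a failing operation a bounded number of times. The sketch below illustrates that idea only; it is not taken from GRAT, and `run_with_retries` and `flaky_transfer` are hypothetical names.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retries(
    action: Callable[[], T],
    max_attempts: int = 3,
    delay_seconds: float = 0.0,
) -> T:
    """Call action until it succeeds or max_attempts is reached."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return action()
        except Exception as error:  # any failure counts as a transient outage here
            last_error = error
            if attempt < max_attempts:
                time.sleep(delay_seconds)
    raise RuntimeError(f"gave up after {max_attempts} attempts") from last_error


calls = {"count": 0}


def flaky_transfer() -> str:
    """Simulated file transfer that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("gatekeeper not responding")
    return "transferred"


result = run_with_retries(flaky_transfer, max_attempts=5)
print(result, "after", calls["count"], "attempts")
```

A real production script would likely also clean up partial output before retrying, since poor cleanup is one of the problems the slide names.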


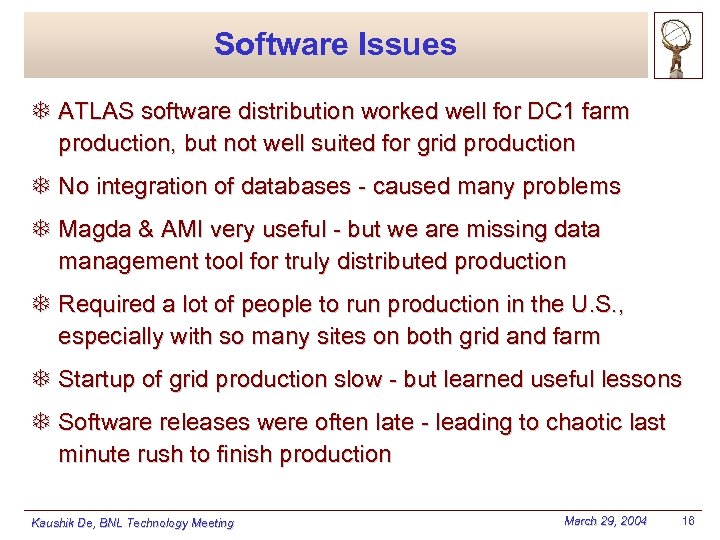

Software Issues

- ATLAS software distribution worked well for DC1 farm production, but was not well suited for grid production
- No integration of databases - caused many problems
- Magda & AMI very useful - but we are missing a data management tool for truly distributed production
- Required a lot of people to run production in the U.S., especially with so many sites on both grid and farm
- Startup of grid production slow - but learned useful lessons
- Software releases were often late - leading to a chaotic last minute rush to finish production


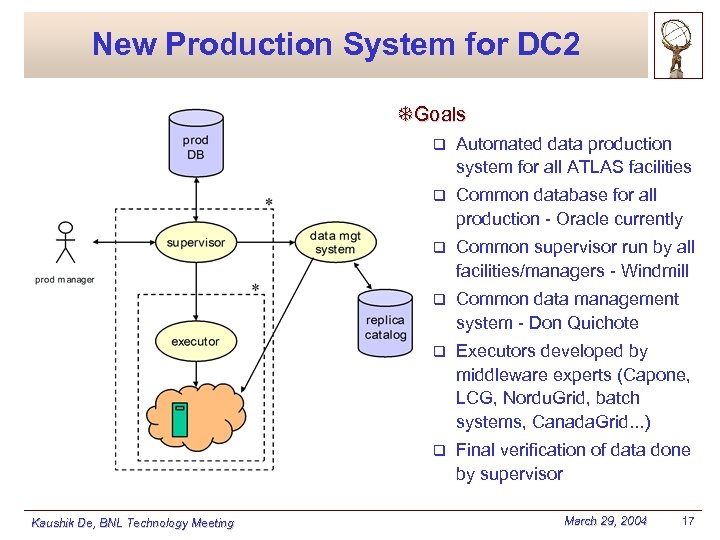

New Production System for DC2

- Goals
  - Automated data production system for all ATLAS facilities
  - Common database for all production - Oracle currently
  - Common supervisor run by all facilities/managers - Windmill
  - Common data management system - Don Quichote
  - Executors developed by middleware experts (Capone, LCG, NorduGrid, batch systems, CanadaGrid...)
  - Final verification of data done by supervisor


Windmill - Supervisor

- Supervisor development/U.S. DC production team
  - UTA: Kaushik De, Mark Sosebee, Nurcan Ozturk + students
  - BNL: Wensheng Deng, Rich Baker
  - OU: Horst Severini
  - ANL: Ed May
- Windmill web page
  - http://www-hep.uta.edu/windmill
- Windmill status
  - version 0.5 released February 23
    - includes complete library of xml messages between agents
    - includes sample executors for local, pbs and web services
  - can run on any Linux machine with Python 2.2
  - development continuing - Oracle production DB, DMS, new schema


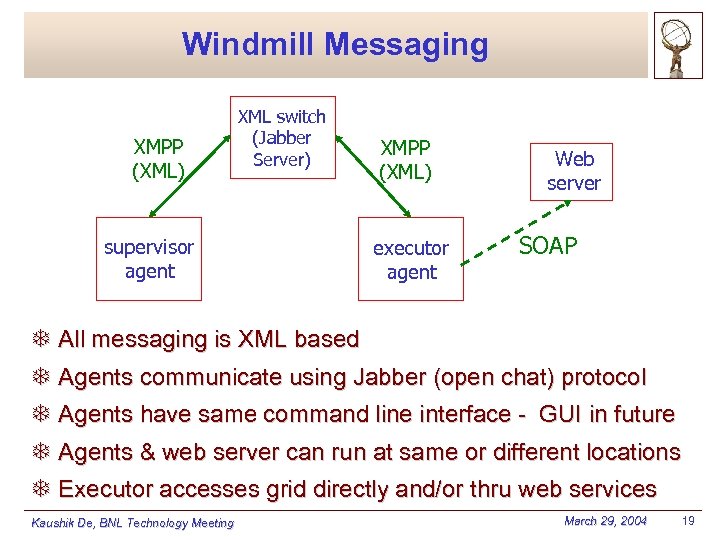

Windmill Messaging

Diagram: supervisor agent - XMPP (XML) - XML switch (Jabber Server) - XMPP (XML) - executor agent - SOAP - Web server

- All messaging is XML based
- Agents communicate using Jabber (open chat) protocol
- Agents have same command line interface - GUI in future
- Agents & web server can run at same or different locations
- Executor accesses grid directly and/or through web services
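
Since all Windmill messaging is XML based, a small round trip may help picture what an agent does with a message. The envelope below is invented for illustration: the element and attribute names (`message`, `from`, `to`, `minJobs`, `maxJobs`) are assumptions and do not reproduce the actual Windmill schema, and transport over Jabber is left out entirely.

```python
import xml.etree.ElementTree as ET
from typing import Dict, Tuple


def build_message(sender: str, recipient: str, command: str, fields: Dict[str, object]) -> str:
    """Serialize one command and its fields into an XML string."""
    root = ET.Element("message", {"from": sender, "to": recipient})
    body = ET.SubElement(root, command)
    for name, value in fields.items():
        ET.SubElement(body, name).text = str(value)
    return ET.tostring(root, encoding="unicode")


def parse_message(xml_text: str) -> Tuple[str, str, str, Dict[str, str]]:
    """Recover sender, recipient, command name and fields from the XML."""
    root = ET.fromstring(xml_text)
    body = root[0]  # the single command element
    fields = {child.tag: child.text for child in body}
    return root.get("from"), root.get("to"), body.tag, fields


xml_text = build_message(
    "supervisor", "executor", "numJobsWanted", {"minJobs": 1, "maxJobs": 20}
)
sender, recipient, command, fields = parse_message(xml_text)
print(command, fields)
```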


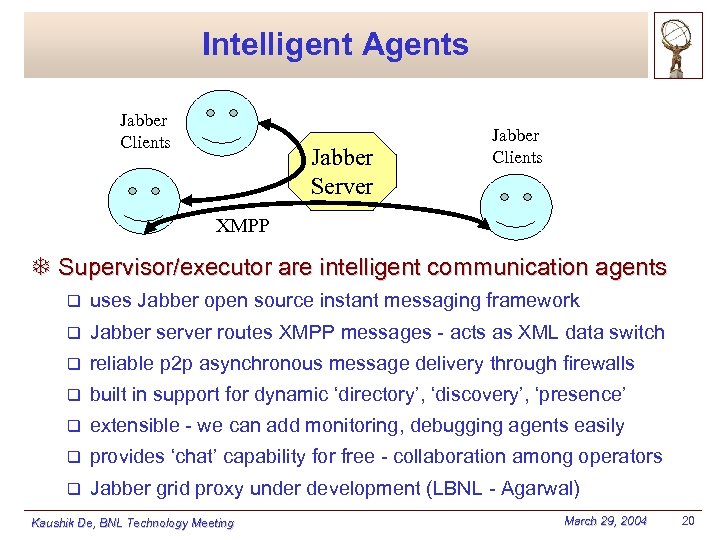

Intelligent Agents

Diagram: Jabber clients - XMPP - Jabber server - XMPP - Jabber clients

- Supervisor/executor are intelligent communication agents
  - uses Jabber open source instant messaging framework
  - Jabber server routes XMPP messages - acts as XML data switch
  - reliable p2p asynchronous message delivery through firewalls
  - built in support for dynamic ‘directory’, ‘discovery’, ‘presence’
  - extensible - we can add monitoring, debugging agents easily
  - provides ‘chat’ capability for free - collaboration among operators
  - Jabber grid proxy under development (LBNL - Agarwal)


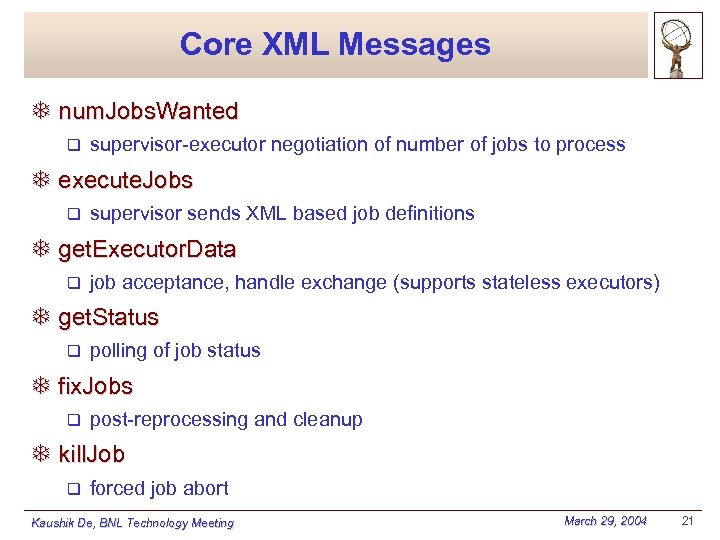

Core XML Messages

- numJobsWanted
  - supervisor-executor negotiation of number of jobs to process
- executeJobs
  - supervisor sends XML based job definitions
- getExecutorData
  - job acceptance, handle exchange (supports stateless executors)
- getStatus
  - polling of job status
- fixJobs
  - post-reprocessing and cleanup
- killJob
  - forced job abort
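
The six core messages suggest a simple dispatch pattern on the executor side: one handler per message name. The toy executor below sketches that pattern under heavy assumptions. The payload shapes, status strings and handle format are invented, and the real executors (Capone, Lexor and others) talk to grid middleware rather than to a dictionary.

```python
from typing import Callable, Dict


class ToyExecutor:
    """Hypothetical executor that keeps job state in a dict."""

    def __init__(self, free_slots: int) -> None:
        self.free_slots = free_slots
        self.jobs: Dict[str, str] = {}
        # Map each message name from the slide to a handler method.
        self.handlers: Dict[str, Callable[[dict], dict]] = {
            "numJobsWanted": self.num_jobs_wanted,
            "executeJobs": self.execute_jobs,
            "getExecutorData": self.get_executor_data,
            "getStatus": self.get_status,
            "fixJobs": self.fix_jobs,
            "killJob": self.kill_job,
        }

    def handle(self, message: str, payload: dict) -> dict:
        """Route one incoming message to its handler."""
        if message not in self.handlers:
            return {"error": f"unknown message {message}"}
        return self.handlers[message](payload)

    def num_jobs_wanted(self, payload: dict) -> dict:
        return {"wanted": self.free_slots}

    def execute_jobs(self, payload: dict) -> dict:
        # Accept only as many jobs as there are free slots.
        accepted = payload["jobs"][: self.free_slots]
        for job_id in accepted:
            self.jobs[job_id] = "running"
        self.free_slots -= len(accepted)
        return {"accepted": accepted}

    def get_executor_data(self, payload: dict) -> dict:
        # Return a made-up handle for every job this executor knows about.
        return {j: f"handle-{j}" for j in payload["jobs"] if j in self.jobs}

    def get_status(self, payload: dict) -> dict:
        return {j: self.jobs.get(j, "unknown") for j in payload["jobs"]}

    def fix_jobs(self, payload: dict) -> dict:
        # Mark failed jobs as cleaned up; leave all others untouched.
        for job_id in payload["jobs"]:
            if self.jobs.get(job_id) == "failed":
                self.jobs[job_id] = "cleaned"
        return self.get_status(payload)

    def kill_job(self, payload: dict) -> dict:
        job_id = payload["job"]
        if job_id in self.jobs:
            self.jobs[job_id] = "killed"
        return {job_id: self.jobs.get(job_id, "unknown")}


executor = ToyExecutor(free_slots=2)
wanted = executor.handle("numJobsWanted", {})
accepted = executor.handle("executeJobs", {"jobs": ["j1", "j2", "j3"]})
executor.handle("killJob", {"job": "j2"})
status = executor.handle("getStatus", {"jobs": ["j1", "j2", "j3"]})
print(wanted, accepted, status)
```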


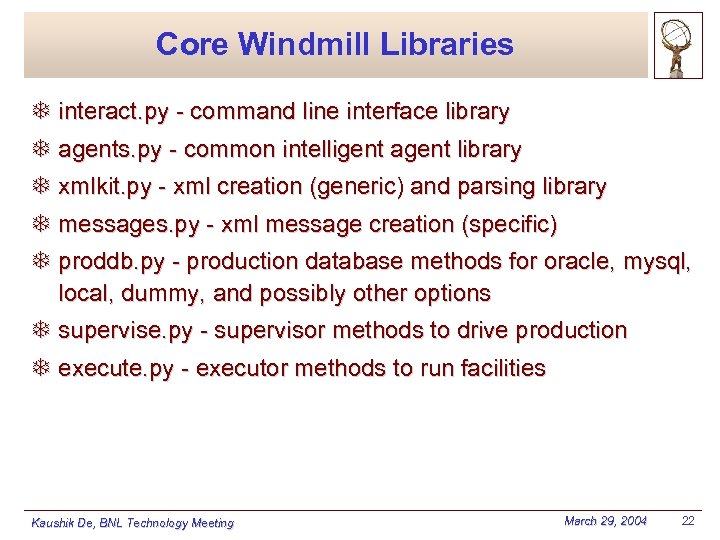

Core Windmill Libraries

- interact.py - command line interface library
- agents.py - common intelligent agent library
- xmlkit.py - xml creation (generic) and parsing library
- messages.py - xml message creation (specific)
- proddb.py - production database methods for oracle, mysql, local, dummy, and possibly other options
- supervise.py - supervisor methods to drive production
- execute.py - executor methods to run facilities


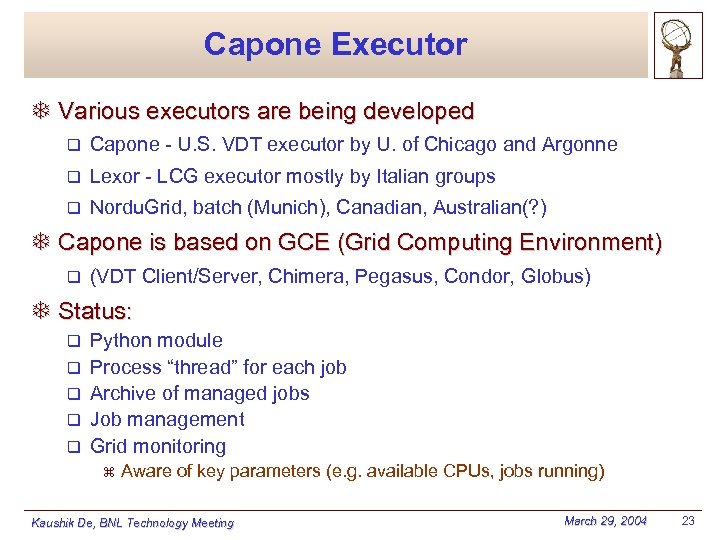

Capone Executor

- Various executors are being developed
  - Capone - U.S. VDT executor by U. of Chicago and Argonne
  - Lexor - LCG executor mostly by Italian groups
  - NorduGrid, batch (Munich), Canadian, Australian(?)
- Capone is based on GCE (Grid Computing Environment)
  - (VDT Client/Server, Chimera, Pegasus, Condor, Globus)
- Status:
  - Python module
  - Process “thread” for each job
  - Archive of managed jobs
  - Job management
  - Grid monitoring
    - Aware of key parameters (e.g. available CPUs, jobs running)


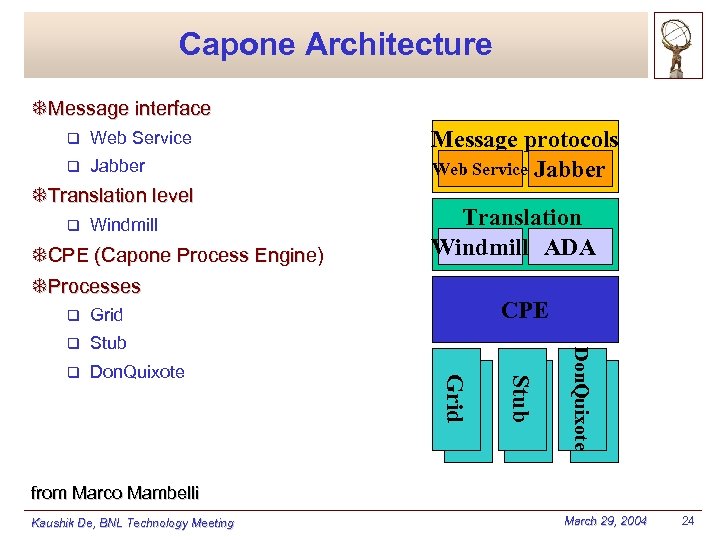

Capone Architecture

- Message interface
  - Web Service
  - Jabber
- Translation level
  - Windmill
- CPE (Capone Process Engine)
- Processes
  - Grid
  - Stub
  - DonQuixote

Diagram (from Marco Mambelli), layers: Message protocols (Web Service, Jabber); Translation (Windmill, ADA); CPE; Grid, Stub, DonQuixote.


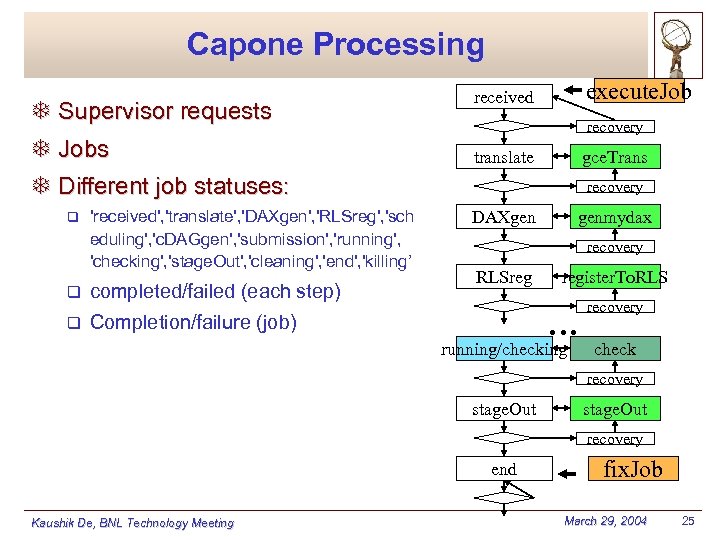

Capone Processing

- Supervisor requests
- Jobs
- Different job statuses:
  - 'received', 'translate', 'DAXgen', 'RLSreg', 'scheduling', 'cDAGgen', 'submission', 'running', 'checking', 'stageOut', 'cleaning', 'end', 'killing'
  - completed/failed (each step)
  - Completion/failure (job)

Diagram (flattened in extraction): executeJob, received, translate (gceTrans), DAXgen (genmydax), RLSreg (registerToRLS), …, running/checking (check), stageOut, end, with a recovery path at each step, plus fixJob.
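
The list of job statuses, together with the note that each step either completes or fails and can be recovered, reads like a linear state machine. The sketch below models it that way. It assumes the statuses advance in the order listed, with 'killing' treated as a terminal side state; that ordering is an assumption and this is not Capone code. `next_status` and `run` are hypothetical names.

```python
from typing import List

# Statuses from the slide, assumed to be visited in this order.
PIPELINE: List[str] = [
    "received", "translate", "DAXgen", "RLSreg", "scheduling", "cDAGgen",
    "submission", "running", "checking", "stageOut", "cleaning", "end",
]


def next_status(current: str, step_ok: bool) -> str:
    """Advance on success; stay in place on failure so the step can be recovered."""
    if current in ("end", "killing"):
        return current  # terminal states never change
    if not step_ok:
        return current  # a recovery attempt would re-run this step
    return PIPELINE[PIPELINE.index(current) + 1]


def run(outcomes: List[bool]) -> List[str]:
    """Replay a sequence of step outcomes starting from 'received'."""
    status = "received"
    history = [status]
    for ok in outcomes:
        status = next_status(status, ok)
        history.append(status)
    return history


history = run([True, True, False, True])
print(history)
```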


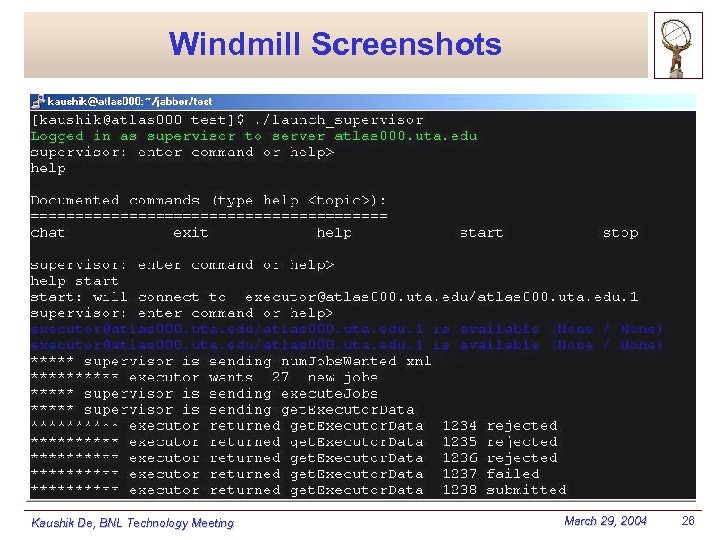

Windmill Screenshots


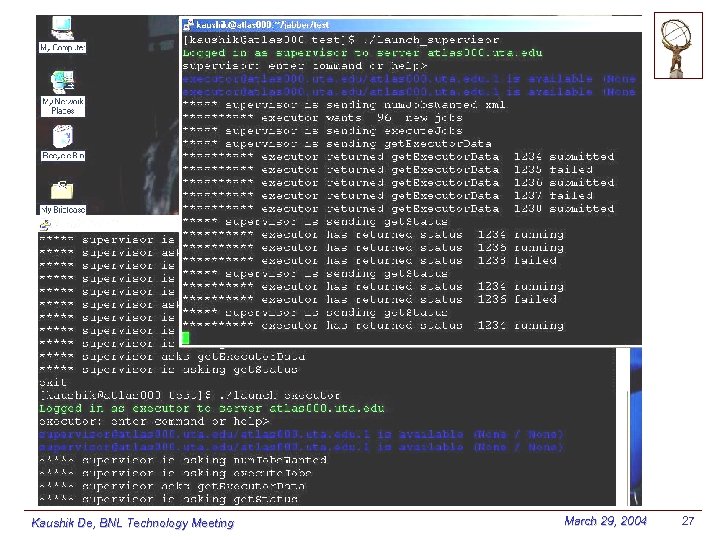


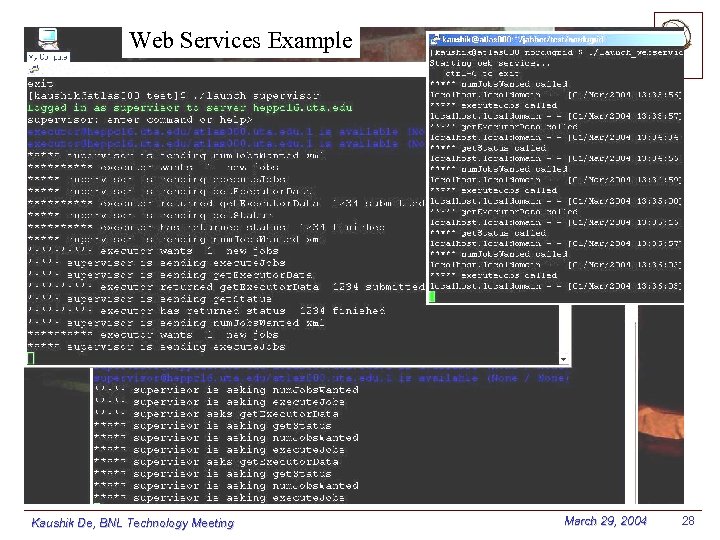

Web Services Example


Conclusion

- Data Challenges are important for ATLAS software and computing infrastructure readiness
- Grids will be the default testbed for DC2
- U.S. playing a major role in DC2 planning & production
- 12 U.S. sites ready to participate in DC2
- Major U.S. role in production software development
- Test of new grid production system imminent
- Physics analysis will be emphasis of DC2 - new experience
- Stay tuned
