ea7cb958079c77aa3d52c5faf837ce3b.ppt

- Number of slides: 29

GriPhyN Management
Mike Wilde, University of Chicago, Argonne (wilde@mcs.anl.gov)
Paul Avery, University of Florida (avery@phys.ufl.edu)
GriPhyN NSF Project Review, 29-30 January 2003, Chicago
29 Jan 2003, Mike Wilde, University of Chicago, wilde@mcs.anl.gov

GriPhyN Management
• Management
  – Paul Avery (Florida), co-Director
  – Ian Foster (Chicago), co-Director
  – Mike Wilde (Argonne), Project Coordinator
  – Rick Cavanaugh (Florida), Deputy Coordinator

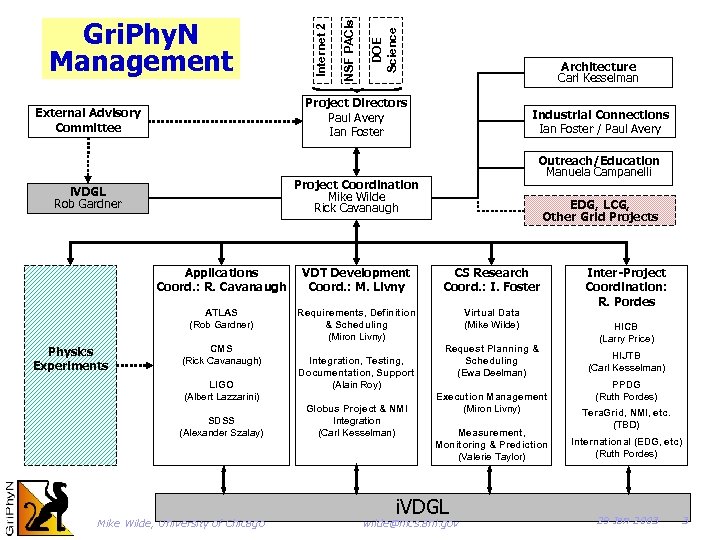

GriPhyN Management (organization chart)
• Project Directors: Paul Avery, Ian Foster
• External Advisory Committee
• Architecture: Carl Kesselman
• Industrial Connections: Ian Foster / Paul Avery
• Outreach/Education: Manuela Campanelli
• Project Coordination: Mike Wilde, Rick Cavanaugh
• iVDGL: Rob Gardner
• Applications (Coord.: R. Cavanaugh)
  – ATLAS (Rob Gardner)
  – CMS (Rick Cavanaugh)
  – LIGO (Albert Lazzarini)
  – SDSS (Alexander Szalay)
• CS Research (Coord.: I. Foster)
  – Virtual Data (Mike Wilde)
  – Request Planning & Scheduling (Ewa Deelman)
  – Execution Management (Miron Livny)
  – Measurement, Monitoring & Prediction (Valerie Taylor)
• VDT Development (Coord.: M. Livny)
  – Requirements, Definition & Scheduling (Miron Livny)
  – Integration, Testing, Documentation, Support (Alain Roy)
  – Globus Project & NMI Integration (Carl Kesselman)
• Inter-Project Coordination: R. Pordes
  – HICB (Larry Price)
  – HIJTB (Carl Kesselman)
  – PPDG (Ruth Pordes)
  – TeraGrid, NMI, etc. (TBD)
  – International (EDG, etc.) (Ruth Pordes)
• External entities shown: DOE Science; NSF PACIs; Internet2; EDG, LCG, Other Grid Projects; Physics Experiments; iVDGL

External Advisory Committee
• Members
  – Fran Berman (SDSC Director)
  – Dan Reed (NCSA Director)
  – Joel Butler (former head, FNAL Computing Division)
  – Jim Gray (Microsoft)
  – Bill Johnston (LBNL, DOE Science Grid)
  – Fabrizio Gagliardi (CERN, EDG Director)
  – David Williams (former head, CERN IT)
  – Paul Messina (former CACR Director)
  – Roscoe Giles (Boston U, NPACI-EOT)
• Met with us 3 times: 4/2001, 1/2002, 1/2003
  – Extremely useful guidance on project scope & goals

GriPhyN Project Challenges
• We balance and coordinate
  – CS research with “goals, milestones & deliverables”
  – GriPhyN schedule/priorities/risks with those of the 4 experiments
  – General tools developed by GriPhyN with specific tools developed by the 4 experiments
  – Data Grid design, architecture & deliverables with those of other Grid projects
• Appropriate balance requires
  – Tight management, close coordination, trust
• We have (so far) met these challenges
  – But this requires constant attention and good will

Meetings in 2000-2001
• GriPhyN/iVDGL meetings
  – Oct. 2000: All-hands (Chicago)
  – Dec. 2000: Architecture (Chicago)
  – Apr. 2001: All-hands, EAC (USC/ISI)
  – Aug. 2001: Planning (Chicago)
  – Oct. 2001: All-hands, iVDGL (USC/ISI)
• Numerous smaller meetings
  – CS-experiment
  – CS research
  – Liaisons with PPDG and EU DataGrid
  – US-CMS and US-ATLAS computing reviews
  – Experiment meetings at CERN

Meetings in 2002
• GriPhyN/iVDGL meetings
  – Jan. 2002: EAC, Planning, iVDGL (Florida)
  – Mar. 2002: Outreach Workshop (Brownsville)
  – Apr. 2002: All-hands (Argonne)
  – Jul. 2002: Reliability Workshop (ISI)
  – Oct. 2002: Provenance Workshop (Argonne)
  – Dec. 2002: Troubleshooting Workshop (Chicago)
  – Dec. 2002: All-hands technical (ISI + Caltech)
  – Jan. 2003: EAC (SDSC)
• Numerous other 2002 meetings
  – iVDGL facilities workshop (BNL)
  – Grid activities at CMS, ATLAS meetings
  – Several computing reviews for US-CMS, US-ATLAS
  – Demos at IST 2002, SC 2002
  – Meetings with LCG (LHC Computing Grid) project
  – HEP coordination meetings (HICB)

Planning Goals
• Clarify our vision and direction
  – Know how to make a difference in science & computing
• Map that vision to each application
  – Create concrete realizations of our vision
• Organize as cooperative subteams with specific missions and defined points of interaction
• Coordinate our research programs
• Shape toolkit to meet challenge-problem needs
• “Stop, Look, and Listen” to each experiment’s needs
  – Excite the customer with our vision
  – Balance the promotion of our ideas with a solid understanding of the size and nature of the problems

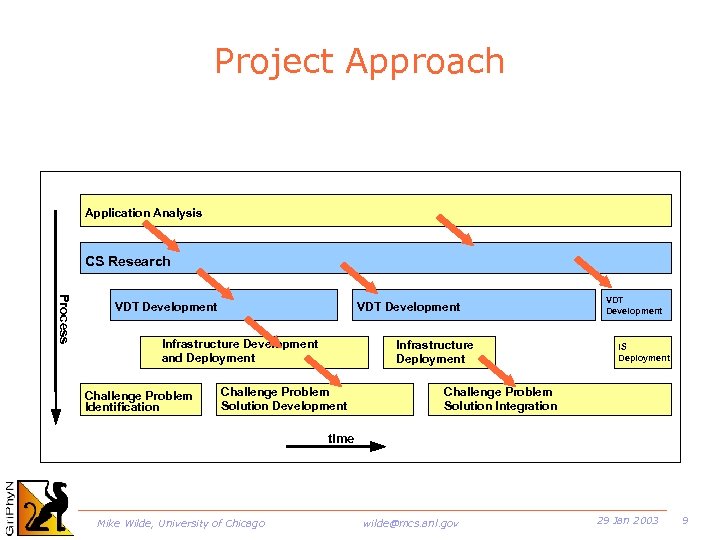

Project Approach
[Diagram of activities along a time axis. Labels: Application Analysis; CS Research; Process; VDT Development; Infrastructure Development and Deployment; Challenge Problem Identification; Infrastructure Deployment; Challenge Problem Solution Development; VDT Development; IS Deployment; Challenge Problem Solution Integration]

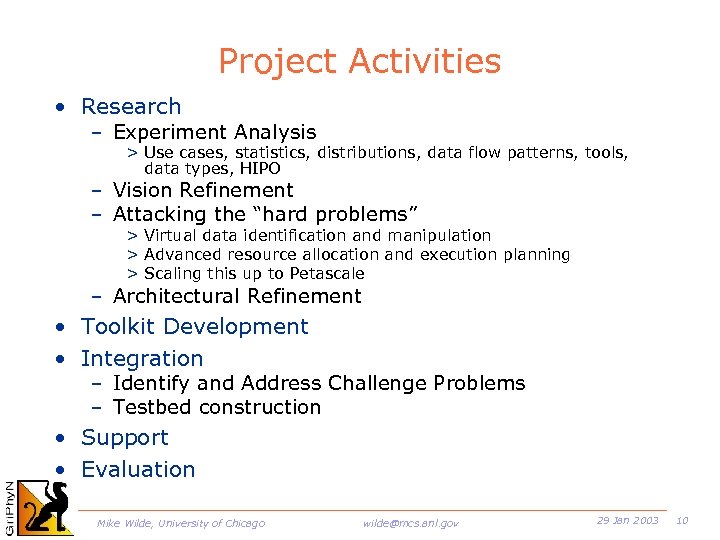

Project Activities
• Research
  – Experiment Analysis
    > Use cases, statistics, distributions, data flow patterns, tools, data types, HIPO
  – Vision Refinement
  – Attacking the “hard problems”
    > Virtual data identification and manipulation
    > Advanced resource allocation and execution planning
    > Scaling this up to Petascale
  – Architectural Refinement
• Toolkit Development
• Integration
  – Identify and Address Challenge Problems
  – Testbed construction
• Support
• Evaluation

Research Milestone Highlights
• Y1: Execution framework; virtual data prototypes
• Y2: Virtual data catalog with glue language; integration with scalable replica catalog service; initial resource usage policy language
• Y3: Advanced planning, fault recovery; intelligent catalog; advanced policy languages
• Y4: Knowledge management and location
• Y5: Transparency and usability; scalability and manageability

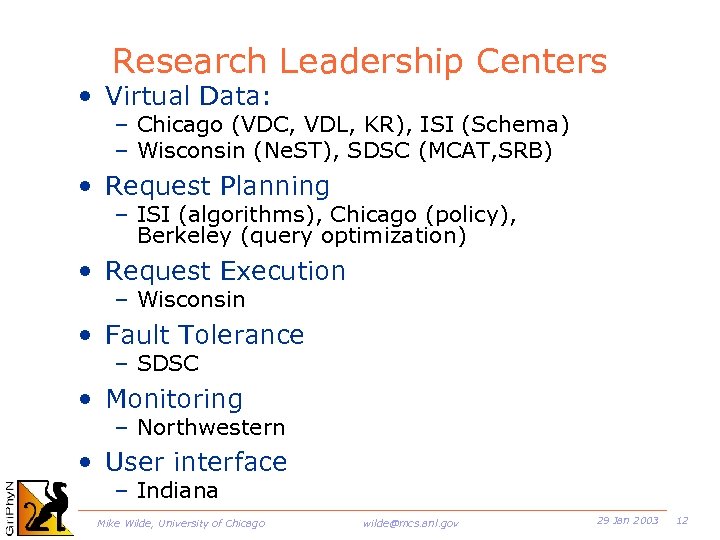

Research Leadership Centers
• Virtual Data
  – Chicago (VDC, VDL, KR), ISI (Schema)
  – Wisconsin (NeST), SDSC (MCAT, SRB)
• Request Planning
  – ISI (algorithms), Chicago (policy), Berkeley (query optimization)
• Request Execution
  – Wisconsin
• Fault Tolerance
  – SDSC
• Monitoring
  – Northwestern
• User interface
  – Indiana

Project Status Overview
• Year 1 research fruitful
  – Virtual data, planning, execution, integration: demonstrated at SC 2001
• Research efforts launched
  – 80% focused
  – 20% exploratory
• VDT effort staffed and launched
  – Yearly major release; VDT 1 close; VDT 2 planned; VDT 3-5 envisioned
• Year 2 experiment integrations: high-level plans done; detailed planning underway
• Long-term vision refined and unified

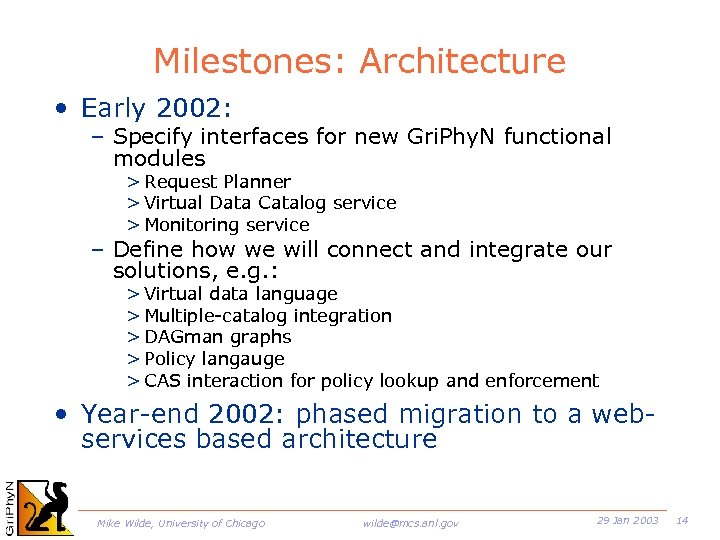

Milestones: Architecture
• Early 2002:
  – Specify interfaces for new GriPhyN functional modules
    > Request Planner
    > Virtual Data Catalog service
    > Monitoring service
  – Define how we will connect and integrate our solutions, e.g.:
    > Virtual data language
    > Multiple-catalog integration
    > DAGman graphs
    > Policy language
    > CAS interaction for policy lookup and enforcement
• Year-end 2002: phased migration to a web-services-based architecture

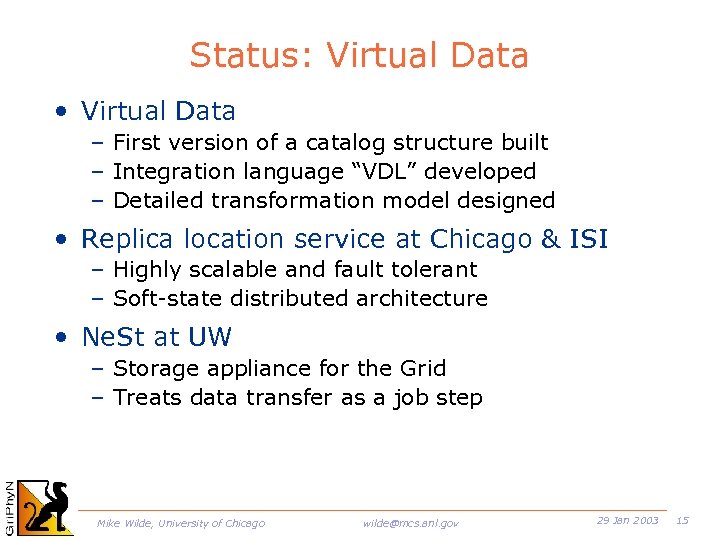

Status: Virtual Data
• Virtual Data
  – First version of a catalog structure built
  – Integration language “VDL” developed
  – Detailed transformation model designed
• Replica location service at Chicago & ISI
  – Highly scalable and fault tolerant
  – Soft-state distributed architecture
• NeST at UW
  – Storage appliance for the Grid
  – Treats data transfer as a job step
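As a rough illustration of what a virtual data catalog records, the sketch below stores, for each data product, the transformation and inputs that produce it, and works out which steps must run to materialize a requested product. It is a toy in-memory model only: the class names, fields, and file names are hypothetical and do not reflect the actual VDC schema or VDL syntax.

```python
from dataclasses import dataclass, field


@dataclass
class Derivation:
    """Hypothetical record of how one data product is produced."""
    output: str
    transformation: str                      # program that produces the output
    inputs: list = field(default_factory=list)


class VirtualDataCatalog:
    """Toy in-memory catalog; an assumption, not the GriPhyN VDC design."""

    def __init__(self):
        self.derivations = {}                # output name -> Derivation
        self.materialized = set()            # products that already exist

    def add(self, derivation):
        self.derivations[derivation.output] = derivation

    def plan(self, target):
        """Return the derivations needed to produce target, in run order."""
        steps, seen = [], set()

        def visit(name):
            if name in self.materialized or name in seen:
                return
            seen.add(name)
            d = self.derivations[name]       # KeyError if nothing produces it
            for dep in d.inputs:
                visit(dep)                   # inputs are produced first
            steps.append(d)

        visit(target)
        return steps


# Usage with made-up product names.
vdc = VirtualDataCatalog()
vdc.materialized.add("raw.dat")
vdc.add(Derivation("reco.dat", "reconstruct", ["raw.dat"]))
vdc.add(Derivation("tags.dat", "make_tags", ["reco.dat"]))
plan_names = [d.transformation for d in vdc.plan("tags.dat")]
print(plan_names)
```

If `reco.dat` were already materialized, the same request would plan only the `make_tags` step, which is the sense in which derived data can be treated as "virtual".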

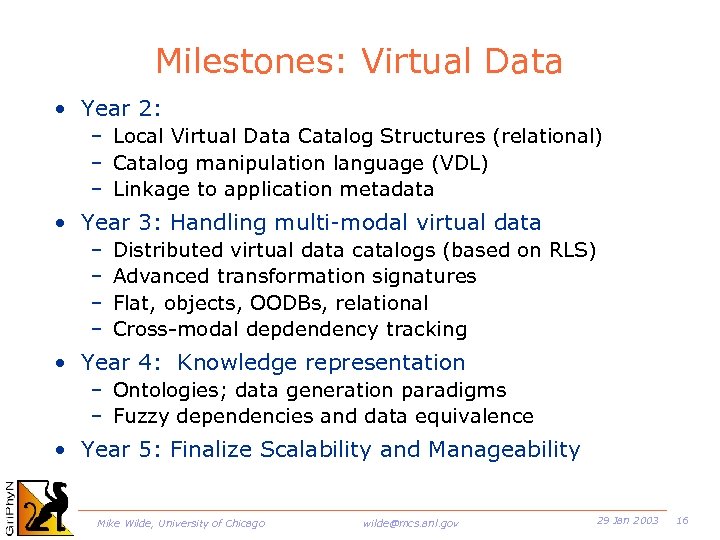

Milestones: Virtual Data
• Year 2:
  – Local Virtual Data Catalog Structures (relational)
  – Catalog manipulation language (VDL)
  – Linkage to application metadata
• Year 3: Handling multi-modal virtual data
  – Distributed virtual data catalogs (based on RLS)
  – Advanced transformation signatures
  – Flat, objects, OODBs, relational
  – Cross-modal dependency tracking
• Year 4: Knowledge representation
  – Ontologies; data generation paradigms
  – Fuzzy dependencies and data equivalence
• Year 5: Finalize Scalability and Manageability

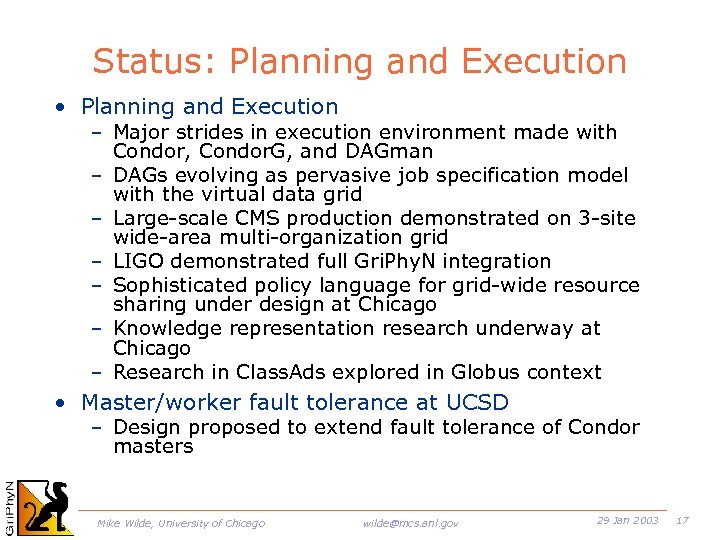

Status: Planning and Execution
• Planning and Execution
  – Major strides in execution environment made with Condor, Condor-G, and DAGman
  – DAGs evolving as pervasive job specification model with the virtual data grid
  – Large-scale CMS production demonstrated on 3-site wide-area multi-organization grid
  – LIGO demonstrated full GriPhyN integration
  – Sophisticated policy language for grid-wide resource sharing under design at Chicago
  – Knowledge representation research underway at Chicago
  – Research in ClassAds explored in Globus context
• Master/worker fault tolerance at UCSD
  – Design proposed to extend fault tolerance of Condor masters
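To make the "DAG as job specification" idea concrete, here is a minimal sketch of how a dependency graph of jobs determines which jobs may run together. It uses Python's standard `graphlib` module (Python 3.9+), not DAGman or Condor, and the job names are invented for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each job maps to the set of jobs it depends on.
dag = {
    "sim_a": set(),
    "sim_b": set(),
    "merge": {"sim_a", "sim_b"},
    "transfer": {"merge"},       # data transfer modeled as just another job
}

sorter = TopologicalSorter(dag)
sorter.prepare()

batches = []                     # each batch holds jobs that can run in parallel
while sorter.is_active():
    ready = sorted(sorter.get_ready())
    batches.append(ready)
    sorter.done(*ready)          # pretend every job in the batch succeeded

print(batches)
```

A real executor would mark a job done only after it succeeds and would retry or replan on failure; that bookkeeping is omitted here.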

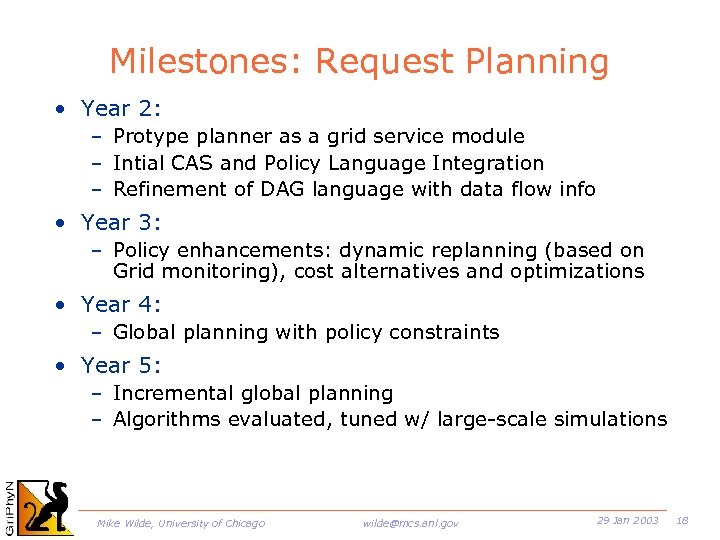

Milestones: Request Planning
• Year 2:
  – Prototype planner as a grid service module
  – Initial CAS and Policy Language Integration
  – Refinement of DAG language with data flow info
• Year 3:
  – Policy enhancements: dynamic replanning (based on Grid monitoring), cost alternatives and optimizations
• Year 4:
  – Global planning with policy constraints
• Year 5:
  – Incremental global planning
  – Algorithms evaluated and tuned with large-scale simulations

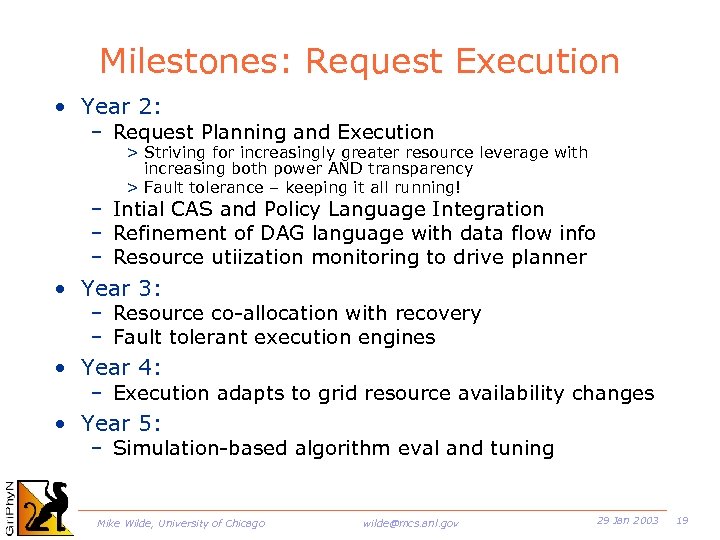

Milestones: Request Execution
• Year 2:
  – Request Planning and Execution
    > Striving for ever greater resource leverage while increasing both power AND transparency
    > Fault tolerance: keeping it all running!
  – Initial CAS and Policy Language Integration
  – Refinement of DAG language with data flow info
  – Resource utilization monitoring to drive planner
• Year 3:
  – Resource co-allocation with recovery
  – Fault tolerant execution engines
• Year 4:
  – Execution adapts to grid resource availability changes
• Year 5:
  – Simulation-based algorithm evaluation and tuning

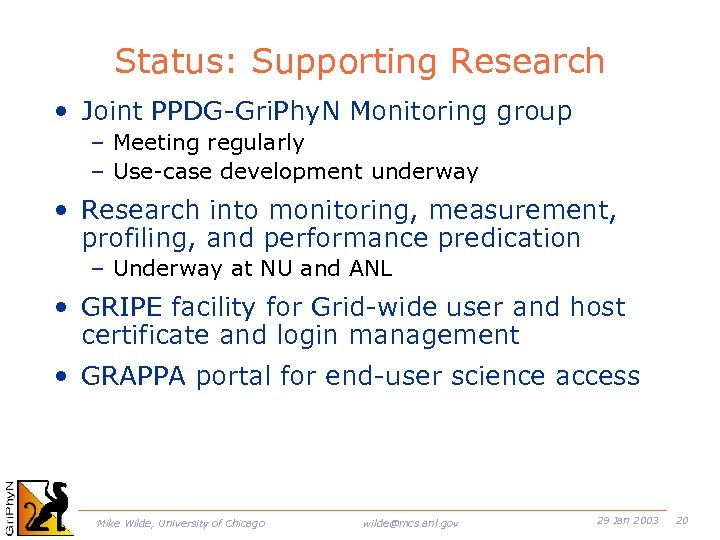

Status: Supporting Research
• Joint PPDG-GriPhyN Monitoring group
  – Meeting regularly
  – Use-case development underway
• Research into monitoring, measurement, profiling, and performance prediction
  – Underway at NU and ANL
• GRIPE facility for Grid-wide user and host certificate and login management
• GRAPPA portal for end-user science access

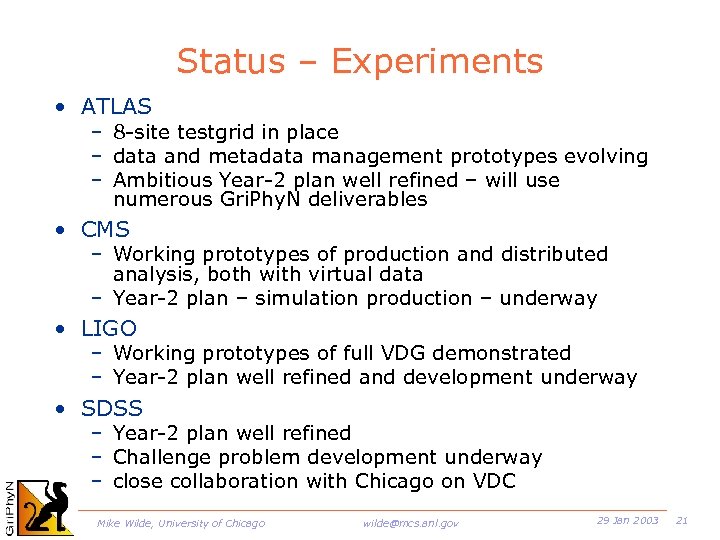

Status: Experiments
• ATLAS
  – 8-site testgrid in place
  – Data and metadata management prototypes evolving
  – Ambitious Year-2 plan well refined; will use numerous GriPhyN deliverables
• CMS
  – Working prototypes of production and distributed analysis, both with virtual data
  – Year-2 plan (simulation production) underway
• LIGO
  – Working prototypes of full VDG demonstrated
  – Year-2 plan well refined and development underway
• SDSS
  – Year-2 plan well refined
  – Challenge problem development underway; close collaboration with Chicago on VDC

Year 2 Plan: ATLAS
• ATLAS-GriPhyN Challenge Problem I
  – ATLAS DC0: 10M events, O(1000) CPUs
  – Integration of VDT to provide uniform distributed data access
  – Use of GRAPPA portal, possibly over DAGman
  – Demo at ATLAS SW Week, March 2002

Year 2 Plan: ATLAS
• ATLAS-GriPhyN Challenge Problem II
  – Virtualization of pipelines to deliver analysis data products: reconstructions and metadata tags
  – Full chain production and analysis of event data
  – Prototyping of typical physicist analysis sessions
  – Graphical monitoring display of event throughput throughout the Grid
  – Live update display of distributed histogram population from Athena
  – Virtual data re-materialization from Athena
  – GRAPPA job submission and monitoring

Year 2 Plan: SDSS
• Challenge Problem 1: Balanced resources
  – Cluster Galaxy Cataloging
  – Exercises virtual data derivation tracking
• Challenge Problem 2: Compute Intensive
  – Spatial Correlation Functions and Power Spectra
  – Provides a research base for scientific knowledge search-engine problems
• Challenge Problem 3: Storage Intensive
  – Weak Lensing
  – Provides challenging testbed for advanced request planning algorithms

Integration of GriPhyN and iVDGL
• Tight integration with GriPhyN
  – Testbeds
  – VDT support
  – Outreach
  – Common External Advisory Committee

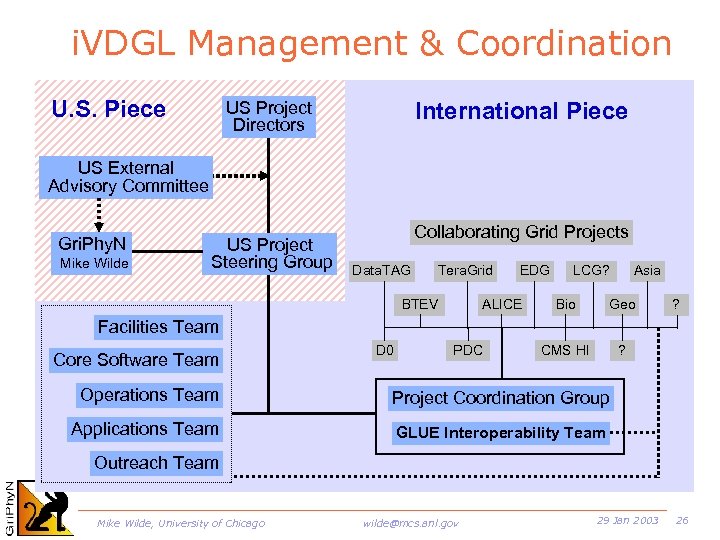

iVDGL Management & Coordination
[Organization chart. Labels recovered from the slide:]
• U.S. Piece: US Project Directors; US External Advisory Committee; US Project Steering Group; Project Coordination Group; GriPhyN (Mike Wilde)
• Teams: Facilities Team; Core Software Team; Operations Team; Applications Team; GLUE Interoperability Team; Outreach Team
• International Piece: Collaborating Grid Projects
• Other labels: DataTAG, TeraGrid, BTEV, EDG, ALICE, LCG?, Asia, Bio, Geo, CMS HI, D0, PDC, ?, ?

Global Context: Data Grid Projects
• U.S. Infrastructure Projects
  – GriPhyN (NSF)
  – iVDGL (NSF)
  – Particle Physics Data Grid (DOE)
  – TeraGrid (NSF)
  – DOE Science Grid (DOE)
• EU, Asia major projects
  – European Data Grid (EDG) (EU, EC)
  – EDG-related national projects (UK, Italy, France, …)
  – CrossGrid (EU, EC)
  – DataTAG (EU, EC)
  – LHC Computing Grid (LCG) (CERN)
  – Japanese project
  – Korea project

Coordination with US Efforts
• Trillium = GriPhyN + iVDGL + PPDG
• NMI & VDT
• Networking initiatives
  – HENP working group within Internet2
  – Working closely with National Light Rail
• New proposals

International Coordination
• EU DataGrid & DataTAG
• HICB: HEP Inter-Grid Coordination Board
  – HICB-JTB: Joint Technical Board
  – GLUE
• Participation in LHC Computing Grid (LCG)
• International networks
  – Standing Committee on Inter-regional Connectivity
  – Digital Divide projects, IEEAF