d06372bedfd5a78d3d4978c39bc18a1f.ppt

- Number of slides: 44

GOMS Analysis & Automated Usability Assessment
Melody Y. Ivory (UCB CS)
SIMS 213, UI Design & Development
March 8, 2001

GOMS Analysis Outline
- GOMS at a glance
- Model Human Processor
- Original GOMS (CMN-GOMS)
- Variants of GOMS in practice
- Summary

GOMS at a glance
- Proposed by Card, Moran & Newell in 1983
  - apply psychology to CS
    - employ user model (MHP) to predict performance of tasks in UI
      - task completion time, short-term memory requirements
  - applicable to
    - user interface design and evaluation
    - training and documentation

Model Human Processor (MHP)
- Card, Moran & Newell (1983)
  - most influential model of user interaction
  - 3 interacting subsystems
    - cognitive, perceptual & motor
    - each with processor & memory
      - described by parameters
        - e.g. capacity, cycle time
    - serial & parallel processing

Adapted from slide by Dan Glaser

MHP
- Card, Moran & Newell (1983)
  - principles of operation
    - subsystem behavior under certain conditions
      - e.g. Fitts’s Law, Power Law of Practice
    - ten total

MHP Subsystems
- Perceptual processor
  - sensory input (audio & visual)
  - code info symbolically
  - output into audio & visual image storage (WM buffers)

MHP Subsystems
- Cognitive processor
  - input from sensory buffers
  - access LTM to determine response
    - previously stored info
  - output response into WM

MHP Subsystems
- Motor processor
  - input response from WM
  - carry out response

MHP Subsystem Interactions
- Input/output
- Processing
  - serial action
    - pressing key in response to light
  - parallel perception
    - driving, reading signs & hearing

MHP Parameters
- Based on empirical data
  - word processing in the '70s
- Processors have
  - cycle time (τ)
- Memories have
  - storage capacity (μ)
  - decay time of an item (δ)
  - info code type (κ)
    - physical, acoustic, visual & semantic

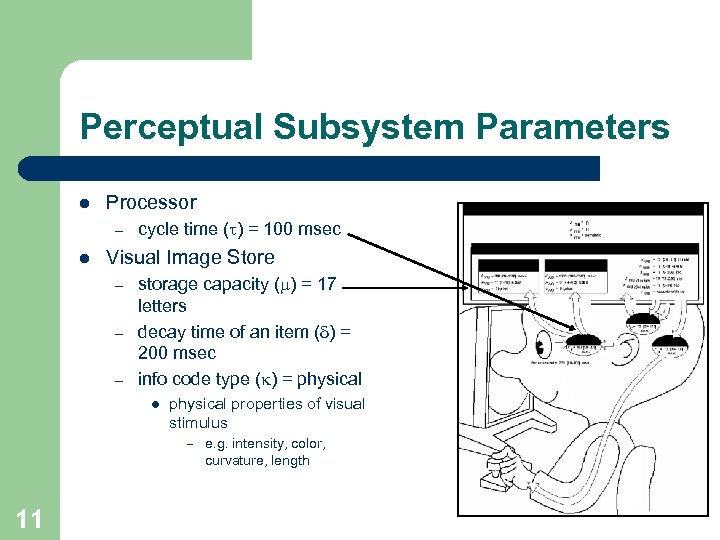

Perceptual Subsystem Parameters
- Processor
  - cycle time (τ) = 100 msec
- Visual Image Store
  - storage capacity (μ) = 17 letters
  - decay time of an item (δ) = 200 msec
  - info code type (κ) = physical
    - physical properties of visual stimulus
      - e.g. intensity, color, curvature, length

One Principle of Operation
- Power Law of Practice
  - task time on the nth trial follows a power law
    - $T_n = T_1 n^{-a}$, where $a = 0.4$
    - i.e., you get faster the more times you do it!
  - applies to skilled behavior (perceptual & motor)
  - does not apply to knowledge acquisition or quality
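The Power Law of Practice above can be sketched in a few lines. The exponent 0.4 comes from the slide; the function name and the 10-second first-trial time are assumptions chosen only for illustration.

```python
def practice_time(t1: float, n: int, a: float = 0.4) -> float:
    """Predicted task time on the nth trial: T_n = T_1 * n**(-a)."""
    if n < 1:
        raise ValueError("trial number n must be >= 1")
    return t1 * n ** (-a)


# Hypothetical task that takes 10 s on the first trial.
for trial in (1, 2, 10, 100):
    print(f"trial {trial:>3}: {practice_time(10.0, trial):.2f} s")
```

The predicted time falls quickly over the first few trials and then flattens, which is the shape the slide describes for skilled perceptual and motor behavior.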

Original GOMS (CMN-GOMS)
- Card, Moran & Newell (1983)
- Engineering model of user interaction
  - task analysis (“how to” knowledge)
    - Goals - user’s intentions (tasks)
      - e.g. delete a file, edit text, assist a customer
    - Operators - actions to complete task
      - cognitive, perceptual & motor (MHP)
      - low-level (e.g. move the mouse to menu)

CMN-GOMS
- Engineering model of user interaction
  - task analysis (“how to” knowledge)
    - Methods - sequences of actions (operators)
      - based on error-free expert
      - may be multiple methods for accomplishing same goal
        - e.g. shortcut key or menu selection
    - Selections - rules for choosing appropriate method
      - method predicted based on context
  - explicit task structure
    - hierarchy of goals & sub-goals

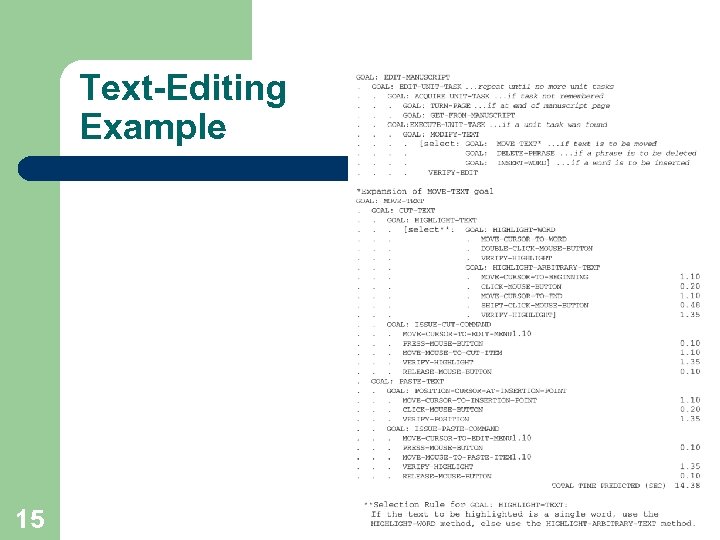

Text-Editing Example

CMN-GOMS Analysis
- Analysis of explicit task structure
  - add parameters for operators
    - approximations (MHP) or empirical data
    - single value or parameterized estimate
  - predict user performance
    - execution time (count statements in task structure)
    - short-term memory requirements (stacking depth of task structure)
  - apply before user testing (reduce costs)
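The two predictions named above (statement count for execution time, stacking depth for short-term memory load) can be sketched over a small goal hierarchy. This is a minimal illustration, not the analysis from the slides: the `Goal` class, the task names, and the per-statement time are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Goal:
    """A goal whose steps are operator names (str) or nested sub-goals."""
    name: str
    steps: list = field(default_factory=list)


def count_statements(goal: Goal) -> int:
    """Count the goal statement itself plus every operator and sub-goal below it."""
    total = 1
    for step in goal.steps:
        total += count_statements(step) if isinstance(step, Goal) else 1
    return total


def stack_depth(goal: Goal) -> int:
    """Deepest chain of simultaneously open goals (a proxy for memory load)."""
    return 1 + max((stack_depth(s) for s in goal.steps if isinstance(s, Goal)), default=0)


# Hypothetical text-editing task structure, for illustration only.
task = Goal("EDIT-MANUSCRIPT", [
    Goal("EDIT-UNIT-TASK", [
        Goal("ACQUIRE-UNIT-TASK", ["GET-NEXT-PAGE", "GET-NEXT-TASK"]),
        Goal("EXECUTE-UNIT-TASK", [
            Goal("LOCATE-LINE", ["USE-SEARCH-METHOD"]),
            Goal("MODIFY-TEXT", ["USE-SUBSTITUTE-COMMAND", "VERIFY-EDIT"]),
        ]),
    ]),
])

SECONDS_PER_STATEMENT = 0.5  # placeholder value, not an empirical parameter

print("statements:", count_statements(task))
print("estimated time (s):", count_statements(task) * SECONDS_PER_STATEMENT)
print("stack depth:", stack_depth(task))
```

A real analysis would replace the single per-statement constant with operator-specific parameters, taken from MHP approximations or empirical data as the slide notes.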

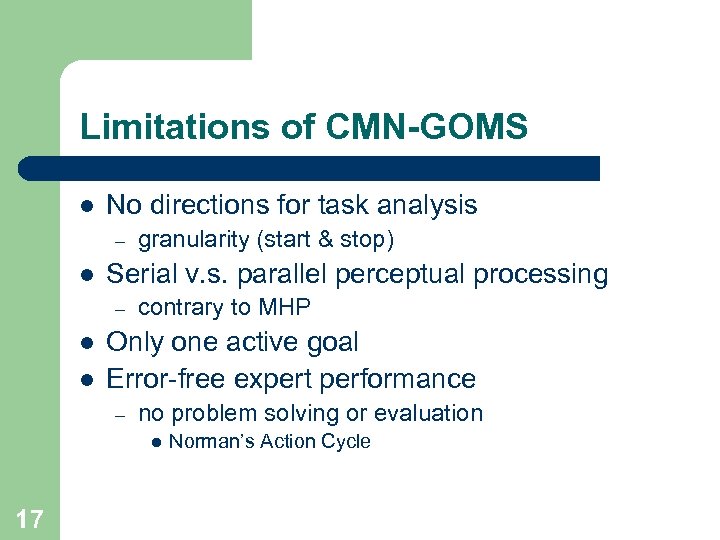

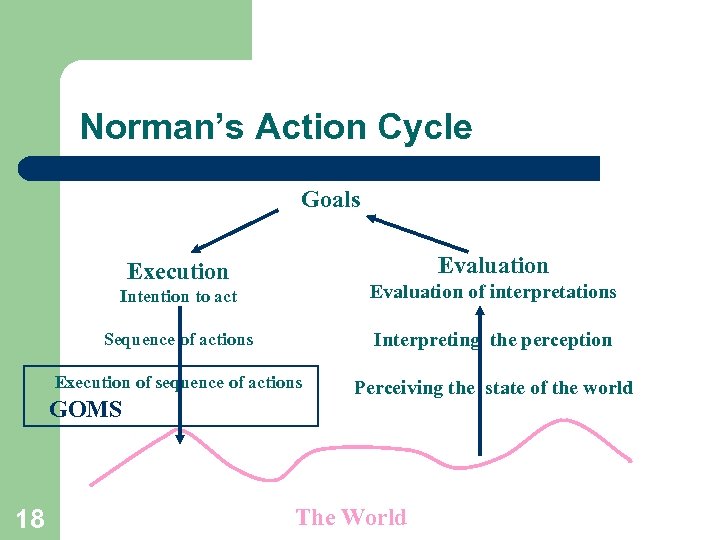

Limitations of CMN-GOMS
- No directions for task analysis
  - granularity (start & stop)
- Serial vs. parallel perceptual processing
  - contrary to MHP
- Only one active goal
- Error-free expert performance
  - no problem solving or evaluation
    - Norman’s Action Cycle

Norman’s Action Cycle
[Diagram: Goals at the top, The World at the bottom. Execution side: intention to act, sequence of actions, execution of sequence of actions. Evaluation side: perceiving the state of the world, interpreting the perception, evaluation of interpretations. Part of the cycle is labeled GOMS.]

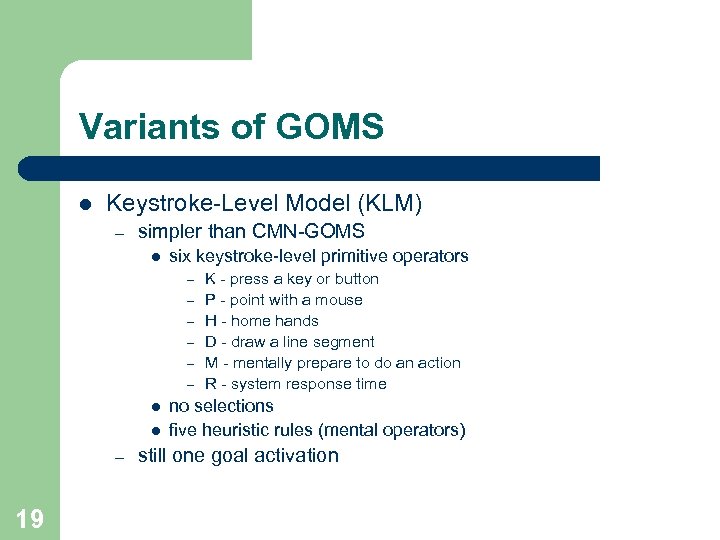

Variants of GOMS
- Keystroke-Level Model (KLM)
  - simpler than CMN-GOMS
    - six keystroke-level primitive operators
      - K - press a key or button
      - P - point with a mouse
      - H - home hands
      - D - draw a line segment
      - M - mentally prepare to do an action
      - R - system response time
    - no selections
    - five heuristic rules (mental operators)
  - still one goal activation
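A KLM prediction is the sum of the operator times along a keystroke-level sequence. The sketch below assumes the operator durations commonly quoted from Card, Moran & Newell (K = 0.2 s for a skilled typist, P = 1.1 s, H = 0.4 s, M = 1.35 s); these values are not given in the slides and should be treated as assumptions. D and R depend on the drawing task and the system, so the caller supplies them. The function name and the example sequence are hypothetical.

```python
# Assumed operator times in seconds (commonly cited values, not from the slides).
KLM_SECONDS = {"K": 0.2, "P": 1.1, "H": 0.4, "M": 1.35}


def klm_time(sequence: str, response_time: float = 0.0, draw_time: float = 0.0) -> float:
    """Sum operator times for a string of KLM operators such as 'HMPK'."""
    total = 0.0
    for op in sequence:
        if op == "R":
            total += response_time  # system-dependent
        elif op == "D":
            total += draw_time      # depends on the segments drawn
        elif op in KLM_SECONDS:
            total += KLM_SECONDS[op]
        else:
            raise ValueError(f"unknown KLM operator: {op!r}")
    return total


# Hypothetical sequence: home on mouse, prepare, point at a menu item, click.
print(f"{klm_time('HMPK'):.2f} s")
```

Where the M operators go is decided by the five heuristic rules mentioned above; this sketch takes the sequence as already annotated.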

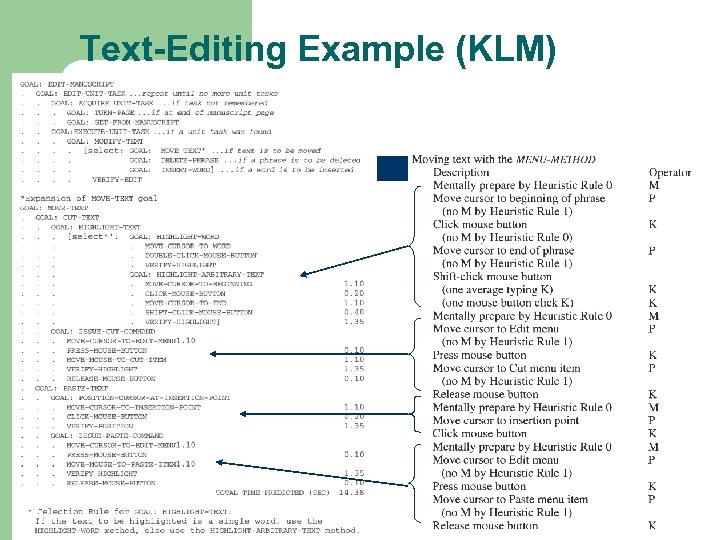

Text-Editing Example (KLM)

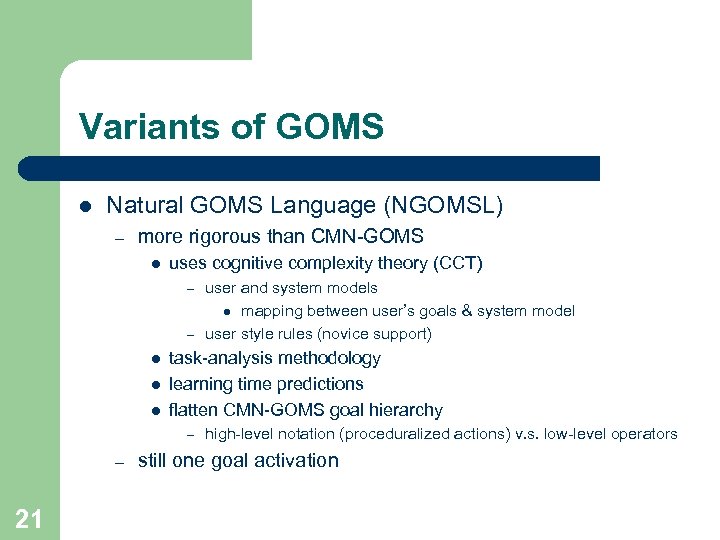

Variants of GOMS
- Natural GOMS Language (NGOMSL)
  - more rigorous than CMN-GOMS
    - uses cognitive complexity theory (CCT)
      - user and system models
        - mapping between user’s goals & system model
      - user style rules (novice support)
    - task-analysis methodology
    - learning time predictions
    - flatten CMN-GOMS goal hierarchy
  - high-level notation (proceduralized actions) vs. low-level operators
  - still one goal activation

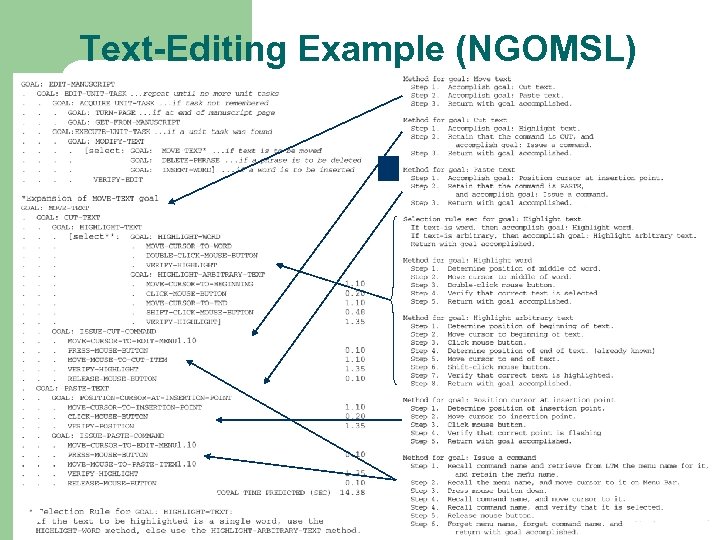

Text-Editing Example (NGOMSL)

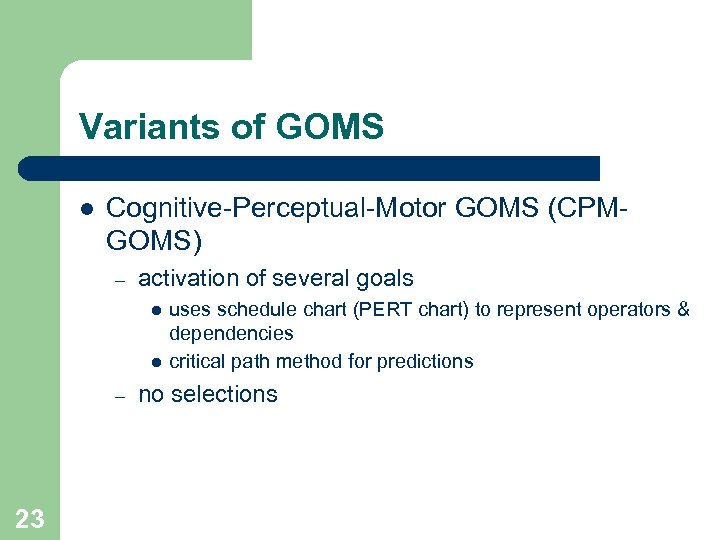

Variants of GOMS
- Cognitive-Perceptual-Motor GOMS (CPM-GOMS)
  - activation of several goals
    - uses schedule chart (PERT chart) to represent operators & dependencies
    - critical path method for predictions
  - no selections
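The critical path method mentioned above amounts to finding the longest chain of dependent operators in the schedule chart. A minimal sketch follows, assuming Python 3.9+ for `graphlib`; the operator names, durations, and dependencies are hypothetical and only illustrate how parallel perceptual and motor operators affect the prediction.

```python
from graphlib import TopologicalSorter


def critical_path(durations: dict, depends_on: dict):
    """Return (total_time, path) for the longest dependency chain."""
    graph = {op: depends_on.get(op, ()) for op in durations}
    finish, previous = {}, {}
    for op in TopologicalSorter(graph).static_order():  # predecessors come first
        latest = max(graph[op], key=finish.get, default=None)
        start = finish[latest] if latest is not None else 0
        finish[op] = start + durations[op]
        previous[op] = latest
    node = max(finish, key=finish.get)
    total, path = finish[node], []
    while node is not None:
        path.append(node)
        node = previous[node]
    return total, path[::-1]


# Hypothetical operators (milliseconds) for pointing at and clicking a target.
durations = {
    "perceive-target": 100, "verify-target": 50, "initiate-move": 50,
    "move-mouse": 300, "perceive-cursor": 100, "click": 100,
}
depends_on = {
    "verify-target": ["perceive-target"],
    "initiate-move": ["verify-target"],
    "move-mouse": ["initiate-move"],
    "perceive-cursor": ["initiate-move"],  # runs in parallel with the movement
    "click": ["move-mouse", "perceive-cursor"],
}

total, path = critical_path(durations, depends_on)
print(total, "ms via", " -> ".join(path))
```

Here the perceptual operator `perceive-cursor` overlaps the mouse movement, so it does not add to the predicted time.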

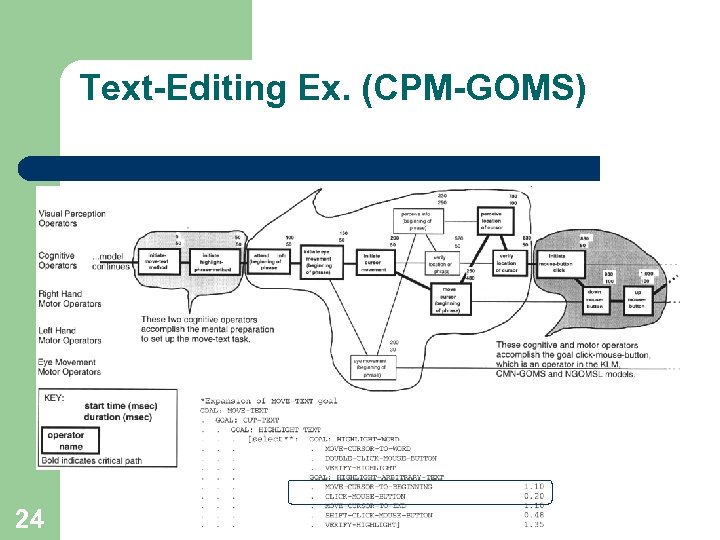

Text-Editing Ex. (CPM-GOMS)

GOMS in Practice
- Mouse-driven text editor (KLM)
- CAD system (KLM)
- Television control system (NGOMSL)
- Minimalist documentation (NGOMSL)
- Telephone assistance operator workstation (CPM-GOMS)
  - saved about $2 million a year

Summary
- GOMS in general
  - The analysis of knowledge of how to do a task in terms of the components of goals, operators, methods & selection rules. (John & Kieras 94)
  - CMN-GOMS, KLM, NGOMSL, CPM-GOMS
- Analysis entails
  - task-analysis
  - parameterization of operators
  - predictions
    - execution time, learning time (NGOMSL), short-term memory requirements

Automated Usability Assessment Outline
- Automated Usability Assessment?
- Characterizing Automated Methods
- Automated Assessment Methods
- Summary

Automated Usability Assessment?
- What does it mean to automate assessment?
- How could this be done?
- What does it require?

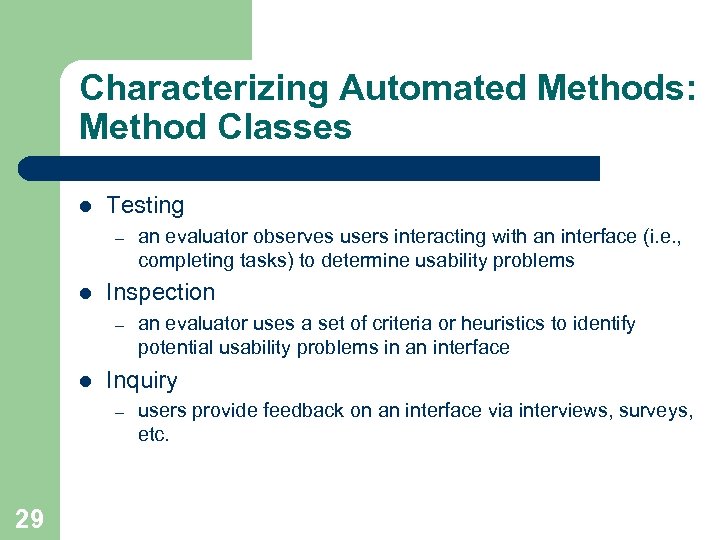

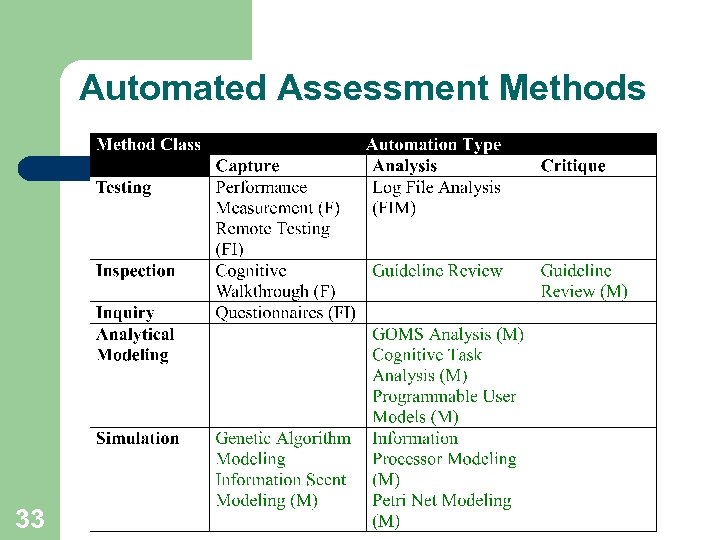

Characterizing Automated Methods: Method Classes
- Testing
  - an evaluator observes users interacting with an interface (i.e., completing tasks) to determine usability problems
- Inspection
  - an evaluator uses a set of criteria or heuristics to identify potential usability problems in an interface
- Inquiry
  - users provide feedback on an interface via interviews, surveys, etc.

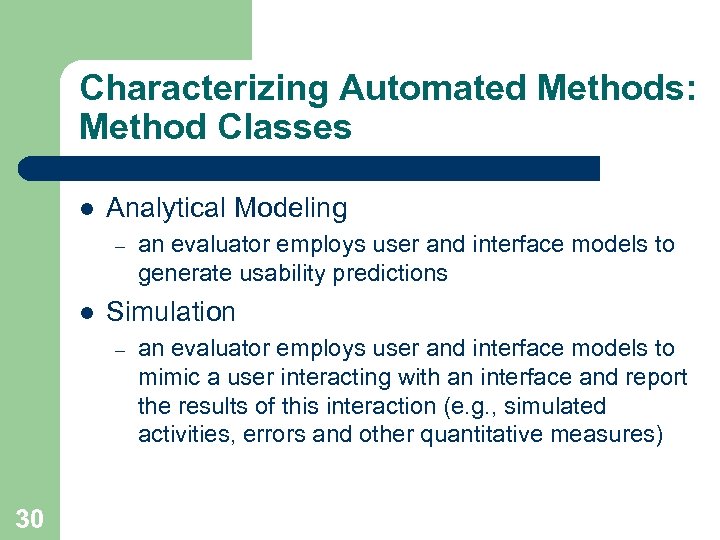

Characterizing Automated Methods: Method Classes
- Analytical Modeling
  - an evaluator employs user and interface models to generate usability predictions
- Simulation
  - an evaluator employs user and interface models to mimic a user interacting with an interface and report the results of this interaction (e.g., simulated activities, errors and other quantitative measures)

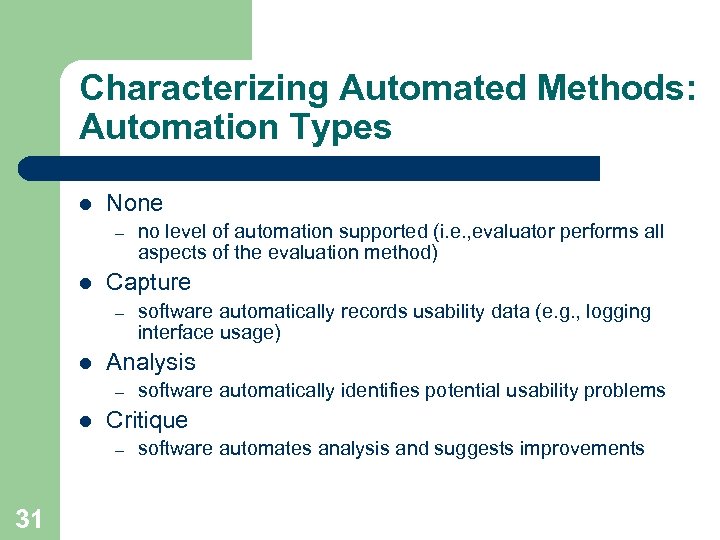

Characterizing Automated Methods: Automation Types
- None
  - no level of automation supported (i.e., evaluator performs all aspects of the evaluation method)
- Capture
  - software automatically records usability data (e.g., logging interface usage)
- Analysis
  - software automatically identifies potential usability problems
- Critique
  - software automates analysis and suggests improvements

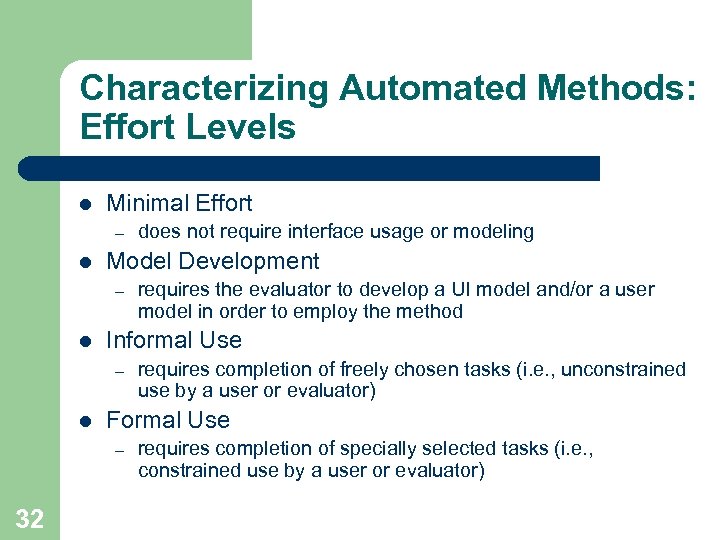

Characterizing Automated Methods: Effort Levels
- Minimal Effort
  - does not require interface usage or modeling
- Model Development
  - requires the evaluator to develop a UI model and/or a user model in order to employ the method
- Informal Use
  - requires completion of freely chosen tasks (i.e., unconstrained use by a user or evaluator)
- Formal Use
  - requires completion of specially selected tasks (i.e., constrained use by a user or evaluator)

Automated Assessment Methods

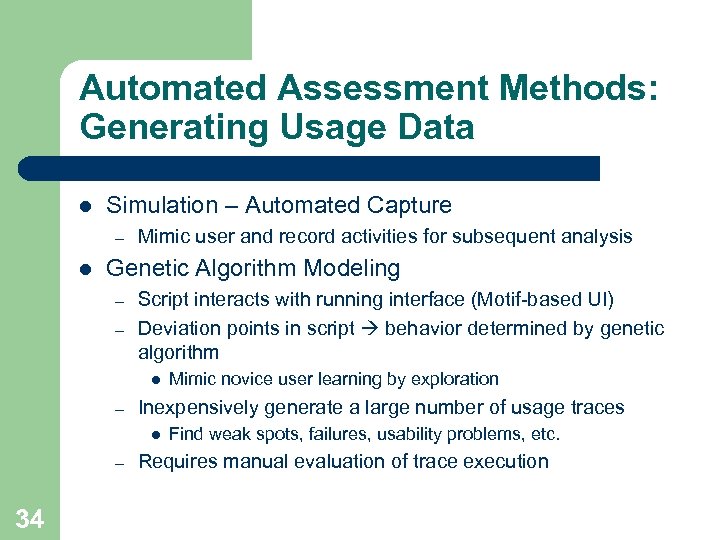

Automated Assessment Methods: Generating Usage Data
- Simulation - Automated Capture
  - Mimic user and record activities for subsequent analysis
- Genetic Algorithm Modeling
  - Script interacts with running interface (Motif-based UI)
  - Deviation points in script behavior determined by genetic algorithm
    - Mimic novice user learning by exploration
  - Inexpensively generate a large number of usage traces
    - Find weak spots, failures, usability problems, etc.
  - Requires manual evaluation of trace execution

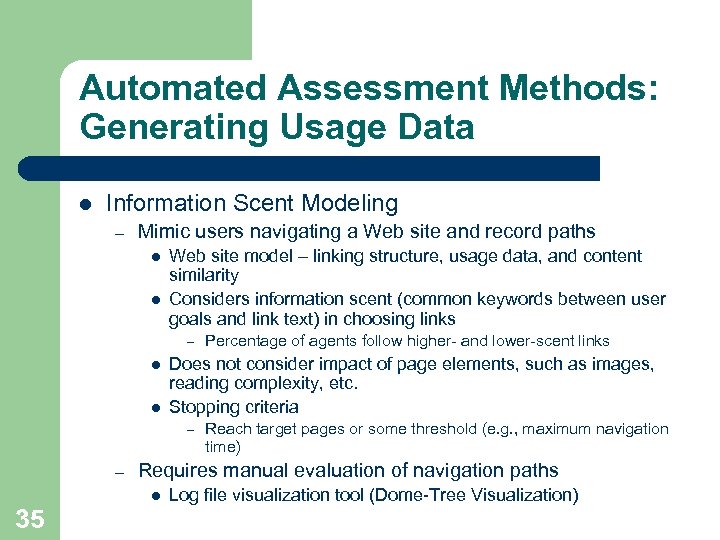

Automated Assessment Methods: Generating Usage Data
- Information Scent Modeling
  - Mimic users navigating a Web site and record paths
    - Web site model - linking structure, usage data, and content similarity
    - Considers information scent (common keywords between user goals and link text) in choosing links
      - Percentage of agents follow higher- and lower-scent links
    - Does not consider impact of page elements, such as images, reading complexity, etc.
    - Stopping criteria
      - Reach target pages or some threshold (e.g., maximum navigation time)
  - Requires manual evaluation of navigation paths
    - Log file visualization tool (Dome-Tree Visualization)
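The link-choice idea above can be sketched as follows. Scent is taken literally as the number of keywords shared by the user goal and the link text, and a step limit stands in for the navigation-time threshold. This is a deliberately simplified, assumed model: the agent is greedy (it always follows the highest-scent link), whereas the slide describes a percentage of agents following higher- and lower-scent links. The site structure and function names are hypothetical.

```python
def scent(goal: str, link_text: str) -> int:
    """Number of keywords shared by the user goal and the link text."""
    return len(set(goal.lower().split()) & set(link_text.lower().split()))


def navigate(site: dict, start: str, target: str, goal: str, max_steps: int = 10) -> list:
    """Follow the highest-scent link from each page; return the recorded path."""
    path, page = [start], start
    while page != target and len(path) <= max_steps:
        links = site.get(page, {})  # maps destination page -> link text
        if not links:
            break  # dead end
        page = max(links, key=lambda dest: scent(goal, links[dest]))
        path.append(page)
    return path


# Hypothetical site: page -> {destination: link text}.
site = {
    "home": {"products": "products and pricing", "support": "support and downloads"},
    "support": {"drivers": "printer driver downloads", "contact": "contact us"},
}

print(navigate(site, "home", "drivers", goal="printer driver downloads"))
```

The recorded paths would still need manual evaluation, as the slide points out; the sketch only generates them.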

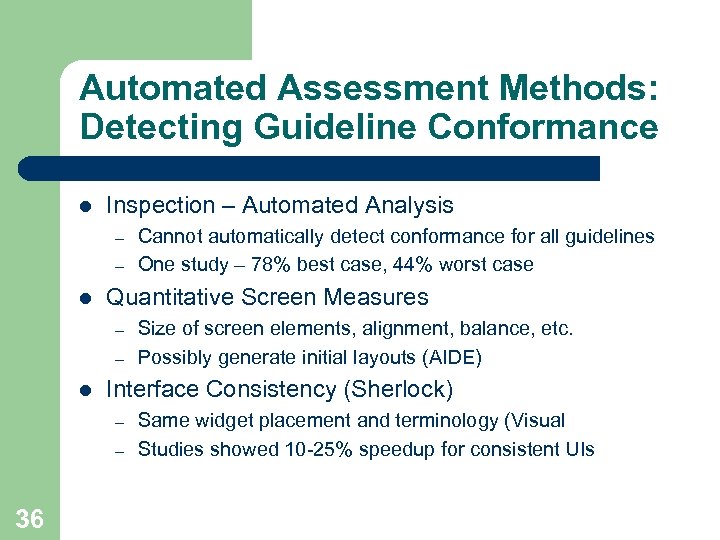

Automated Assessment Methods: Detecting Guideline Conformance
- Inspection - Automated Analysis
  - Cannot automatically detect conformance for all guidelines
  - One study - 78% best case, 44% worst case
- Quantitative Screen Measures
  - Size of screen elements, alignment, balance, etc.
  - Possibly generate initial layouts (AIDE)
- Interface Consistency (Sherlock)
  - Same widget placement and terminology (Visual
  - Studies showed 10-25% speedup for consistent UIs

Automated Assessment Methods: Detecting Guideline Conformance
- Quantitative Web Measures
  - Words, links, graphics, page breadth & depth, etc. (Rating Game, HyperAT, TANGO)
  - Most techniques not empirically validated
    - Web TANGO uses expert ratings to develop prediction models
- HTML Analysis (WebSAT)
  - All images have alt tags, one outgoing link/page, etc.
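The two HTML checks named above are easy to automate with a standard parser. The sketch below is not WebSAT's implementation; it uses Python's `html.parser`, and it reads "one outgoing link/page" as "at least one link per page", which is an assumption. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collect images without an alt attribute and count links with an href."""

    def __init__(self):
        super().__init__()
        self.images_missing_alt = []
        self.link_count = 0

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img" and "alt" not in attributes:
            self.images_missing_alt.append(attributes.get("src"))
        elif tag == "a" and "href" in attributes:
            self.link_count += 1


def audit(html: str) -> PageAudit:
    """Parse a page and return the populated audit object."""
    parser = PageAudit()
    parser.feed(html)
    parser.close()
    return parser


page = '<a href="/about">About</a><img src="logo.png"><img src="map.png" alt="Campus map">'
result = audit(page)
print("images missing alt:", result.images_missing_alt)
print("has outgoing link:", result.link_count >= 1)
```

Checks like these cover only the mechanically testable part of a guideline set, which matches the earlier point that conformance cannot be detected automatically for all guidelines.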

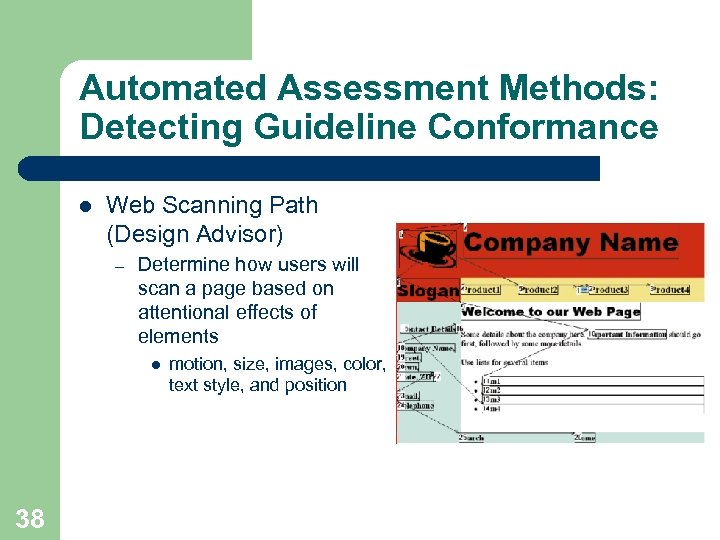

Automated Assessment Methods: Detecting Guideline Conformance
- Web Scanning Path (Design Advisor)
  - Determine how users will scan a page based on attentional effects of elements
    - motion, size, images, color, text style, and position

Automated Assessment Methods: Suggesting Improvements
- Inspection - Automated Critique
- Rule-based critique systems
  - Typically done within a user interface management system
    - Very limited application
  - X Window UIs (KRI/AG), control systems (SYNOP), space systems (CHIMES)
- Object-based critique systems (Ergoval)
  - Apply guidelines relevant to each graphical object
  - Widely applicable to Windows UIs
- HTML Critique
  - Syntax, validation, accessibility (Bobby), and others
  - Although useful, not empirically validated

Automated Assessment Methods: Modeling User Performance
- Analytical Modeling - Automated Analysis
  - Predict user behavior, mainly execution time
  - No methods for Web interfaces
- GOMS Analysis (previously discussed)
  - Generate predictions for GOMS models (CATHCI, QGOMS)
  - Generate model and predictions (USAGE, CRITIQUE)
    - UIs developed within user interface development environment
- Cognitive Task Analysis
  - Input interface parameters to an underlying theoretical model (expert system)
    - Do not construct new model for each task
  - Generate predictions based on parameters as well as theoretical basis for predictions

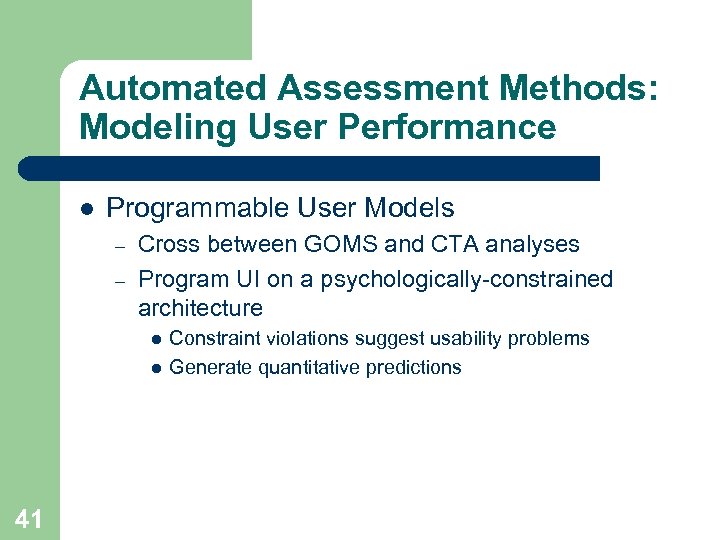

Automated Assessment Methods: Modeling User Performance
- Programmable User Models
  - Cross between GOMS and CTA analyses
  - Program UI on a psychologically-constrained architecture
    - Constraint violations suggest usability problems
    - Generate quantitative predictions

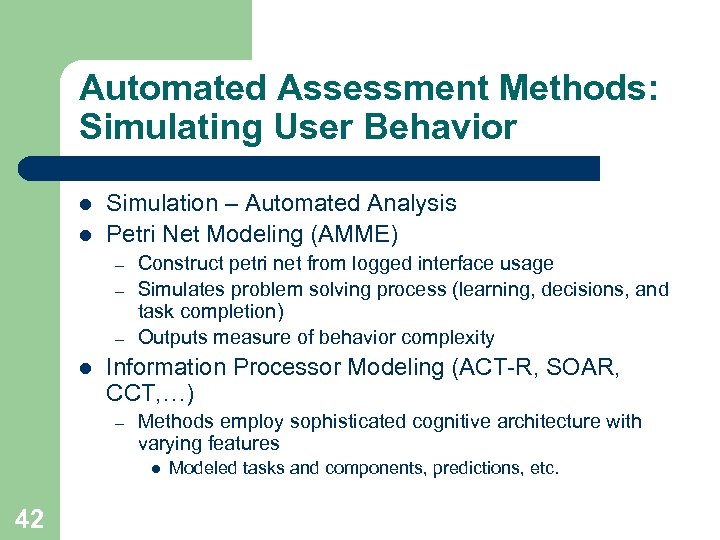

Automated Assessment Methods: Simulating User Behavior
- Simulation - Automated Analysis
- Petri Net Modeling (AMME)
  - Construct petri net from logged interface usage
  - Simulates problem solving process (learning, decisions, and task completion)
  - Outputs measure of behavior complexity
- Information Processor Modeling (ACT-R, SOAR, CCT, …)
  - Methods employ sophisticated cognitive architecture with varying features
    - Modeled tasks and components, predictions, etc.

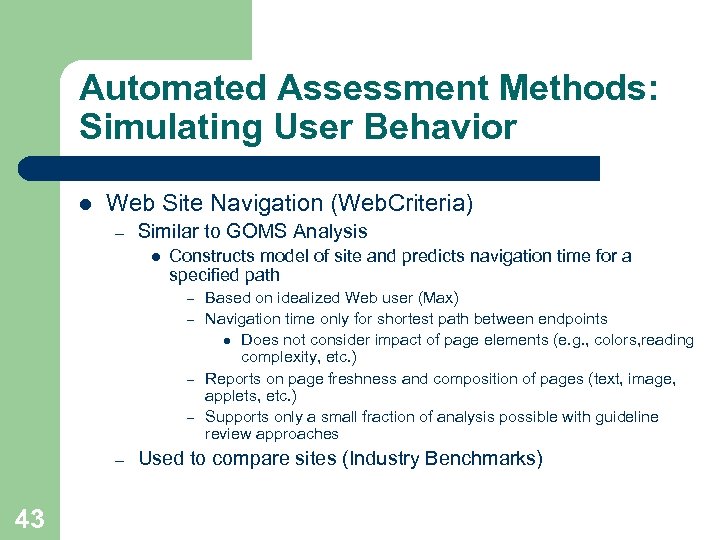

Automated Assessment Methods: Simulating User Behavior
- Web Site Navigation (WebCriteria)
  - Similar to GOMS Analysis
    - Constructs model of site and predicts navigation time for a specified path
  - Based on idealized Web user (Max)
    - Navigation time only for shortest path between endpoints
    - Does not consider impact of page elements (e.g., colors, reading complexity, etc.)
  - Reports on page freshness and composition of pages (text, image, applets, etc.)
  - Supports only a small fraction of analysis possible with guideline review approaches
  - Used to compare sites (Industry Benchmarks)

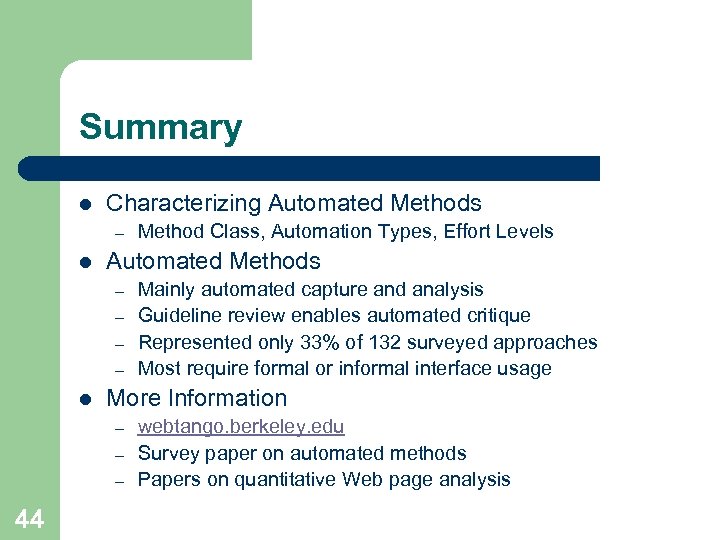

Summary
- Characterizing Automated Methods
  - Method Class, Automation Types, Effort Levels
- Automated Methods
  - Mainly automated capture and analysis
    - Guideline review enables automated critique
  - Represented only 33% of 132 surveyed approaches
  - Most require formal or informal interface usage
- More Information
  - webtango.berkeley.edu
  - Survey paper on automated methods
  - Papers on quantitative Web page analysis
