a27c3cb2189a9f569cf4277148b8b55d.ppt

- Number of slides: 79

Globally Optimal Estimates for Geometric Reconstruction Problems

Tom Gilat, Adi Lakritz. Advanced Topics in Computer Vision Seminar, Faculty of Mathematics and Computer Science, Weizmann Institute, 3 June 2007

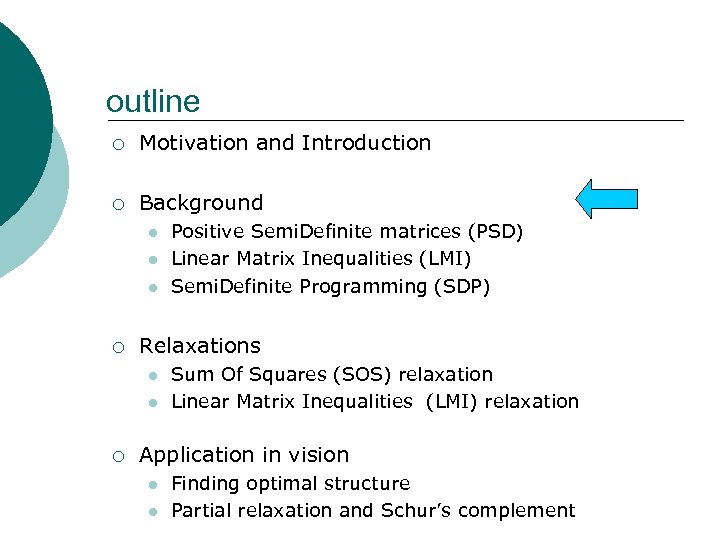

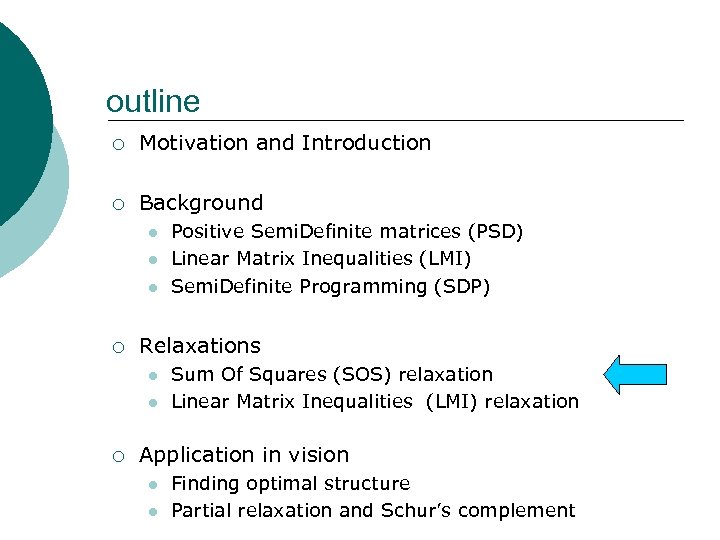

Outline

- Motivation and Introduction
- Background
  - Positive Semidefinite (PSD) matrices
  - Linear Matrix Inequalities (LMI)
  - Semidefinite Programming (SDP)
- Relaxations
  - Sum Of Squares (SOS) relaxation
  - Linear Matrix Inequalities (LMI) relaxation
- Application in vision
  - Finding optimal structure
  - Partial relaxation and Schur’s complement

Motivation Geometric Reconstruction Problems Polynomial optimization problems (POPs)

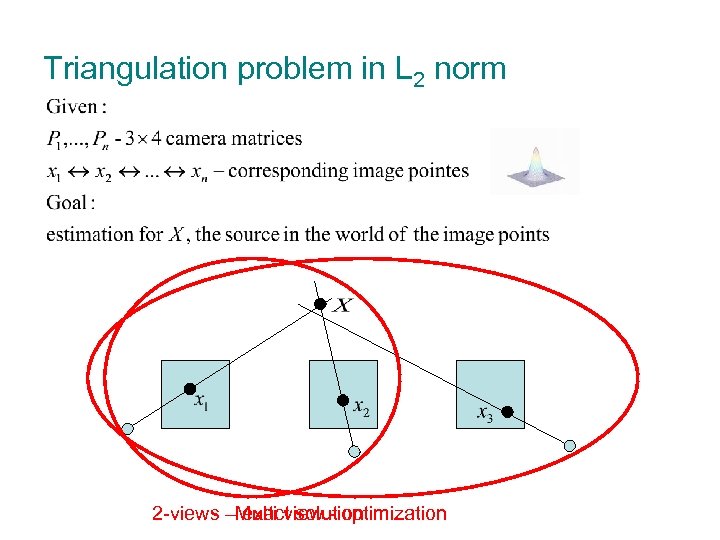

Triangulation problem in the L2 norm

- 2 views: exact solution
- Multiple views: optimization

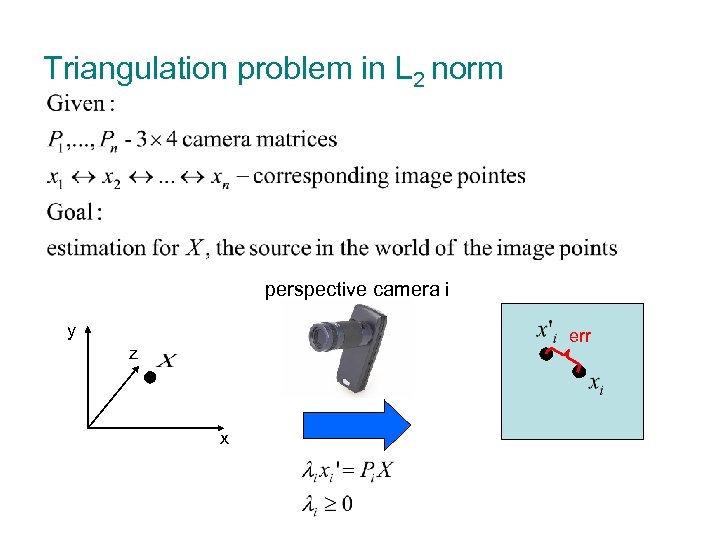

Triangulation problem in the L2 norm: perspective camera i. (Figure: camera axes x, y, z and the reprojection error.)

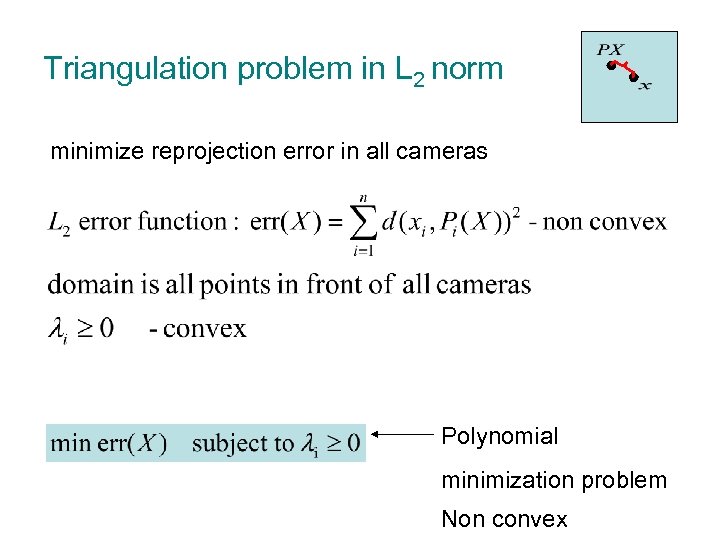

Triangulation problem in the L2 norm: minimize the reprojection error in all cameras. This is a polynomial minimization problem, and it is non-convex.

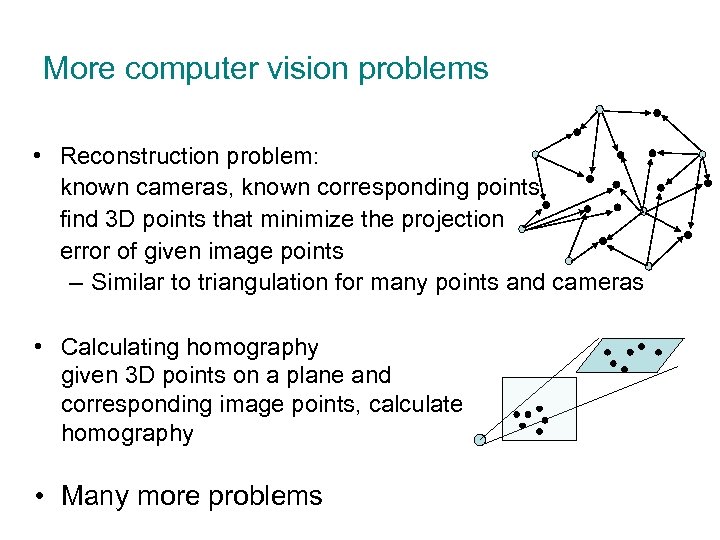

More computer vision problems

- Reconstruction problem: given known cameras and known corresponding points, find the 3D points that minimize the projection error of the given image points
  - Similar to triangulation, for many points and cameras
- Calculating a homography: given 3D points on a plane and the corresponding image points, calculate the homography
- Many more problems

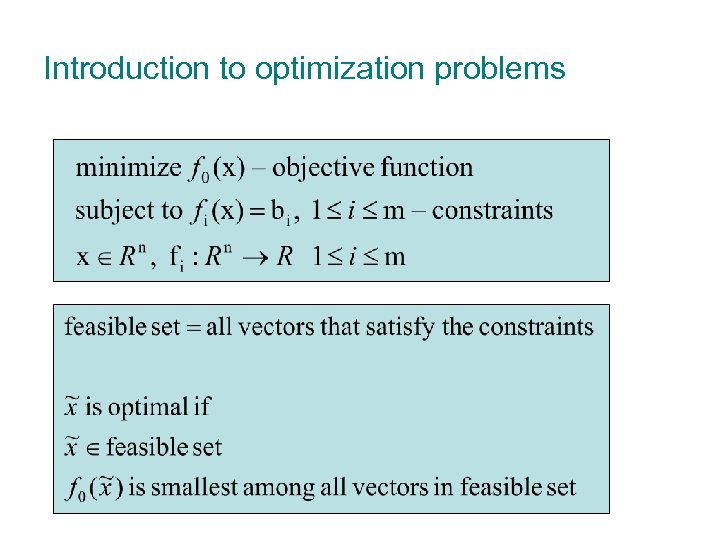

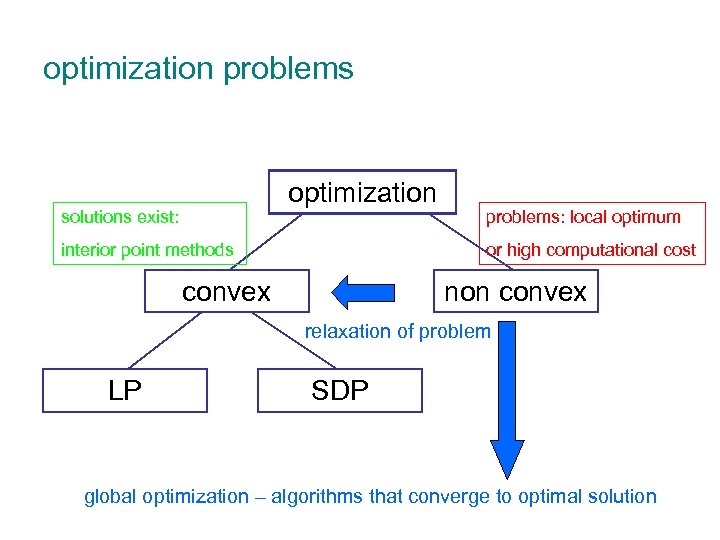

Optimization problems

Introduction to optimization problems

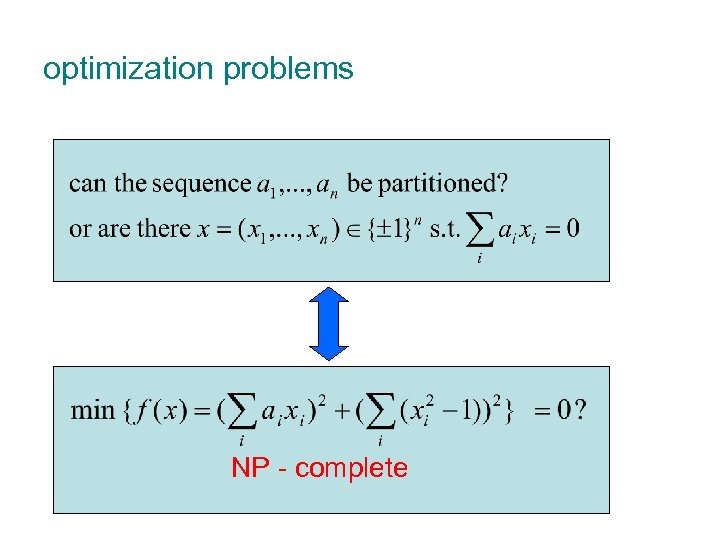

Optimization problems: NP-complete

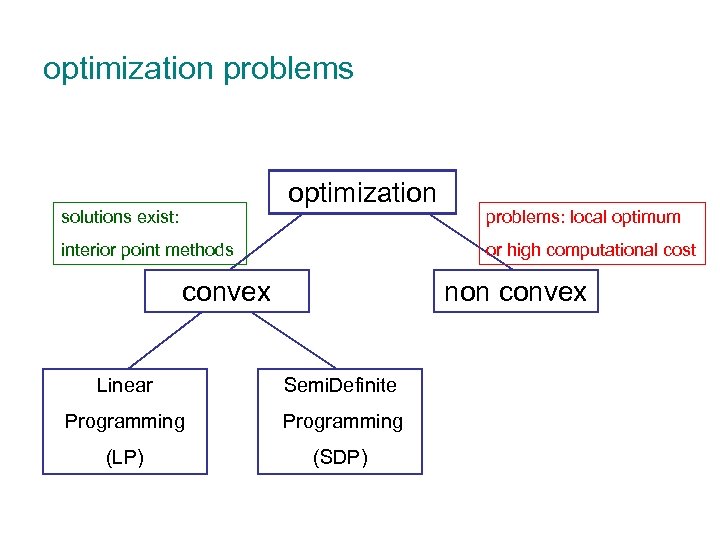

Optimization problems

- Convex: optimization solutions exist (interior point methods). Examples: Linear Programming (LP), Semidefinite Programming (SDP).
- Non-convex: the problems are local optima or high computational cost.

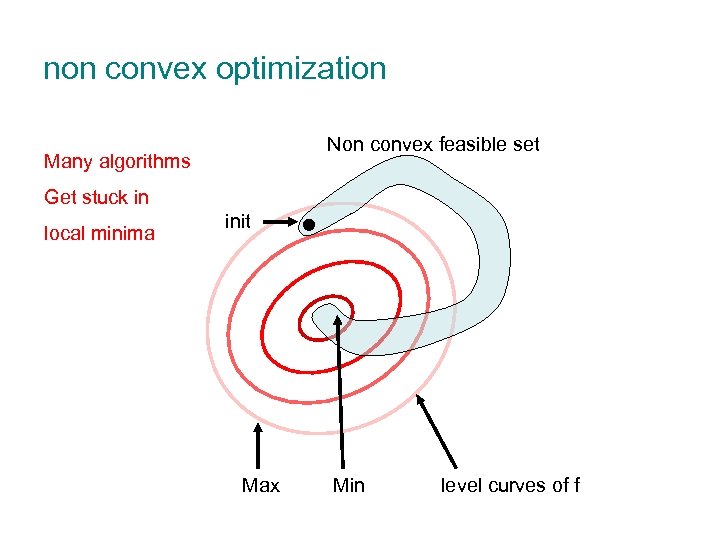

Non-convex optimization: the feasible set is non-convex, and many algorithms get stuck in local minima. (Figure: level curves of f, with the initialization point, Max and Min marked.)

Optimization problems

- Convex: optimization solutions exist (interior point methods). Examples: LP, SDP.
- Non-convex: the problems are local optima or high computational cost. Two ways out: relaxation of the problem to a convex one (LP, SDP), or global optimization, i.e. algorithms that converge to the optimal solution.

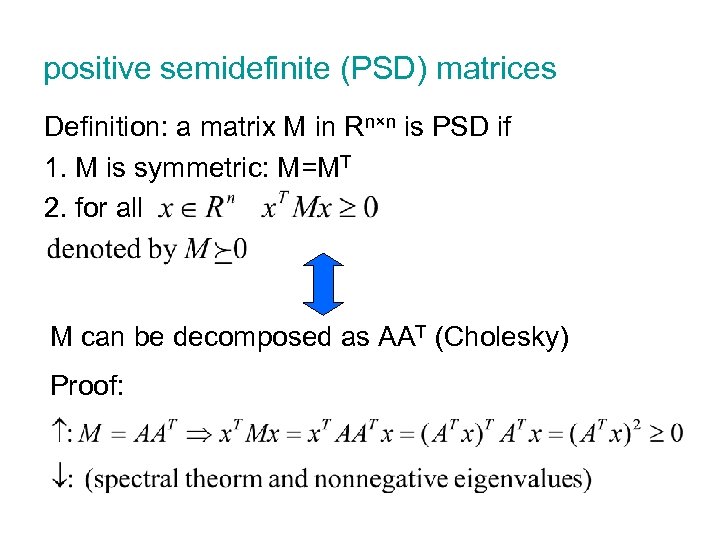

Positive semidefinite (PSD) matrices

Definition: a matrix $M \in \mathbb{R}^{n \times n}$ is PSD if

1. M is symmetric: $M = M^T$
2. $x^T M x \ge 0$ for all $x \in \mathbb{R}^n$

M can be decomposed as $A A^T$ (Cholesky). Proof:
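The two conditions of the definition can be checked numerically. The following is a minimal sketch assuming NumPy; the helper name `is_psd` and the tolerance are illustrative choices and do not come from the slides.

```python
import numpy as np

def is_psd(M, tol=1e-10):
    """Return True if M is symmetric and all its eigenvalues are >= -tol."""
    M = np.asarray(M, dtype=float)
    if not np.allclose(M, M.T):
        return False
    # eigvalsh is intended for symmetric matrices and returns real eigenvalues
    return bool(np.all(np.linalg.eigvalsh(M) >= -tol))

# Any matrix of the form A A^T is PSD, because x^T A A^T x = ||A^T x||^2 >= 0.
A = np.array([[1.0, 2.0], [0.0, 1.0], [3.0, -1.0]])
M = A @ A.T

# A symmetric matrix that is not PSD: its eigenvalues are 3 and -1.
indefinite = np.array([[1.0, 2.0], [2.0, 1.0]])
```

Checking eigenvalues is only one of several equivalent tests; it is used here because it maps directly onto condition 2.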

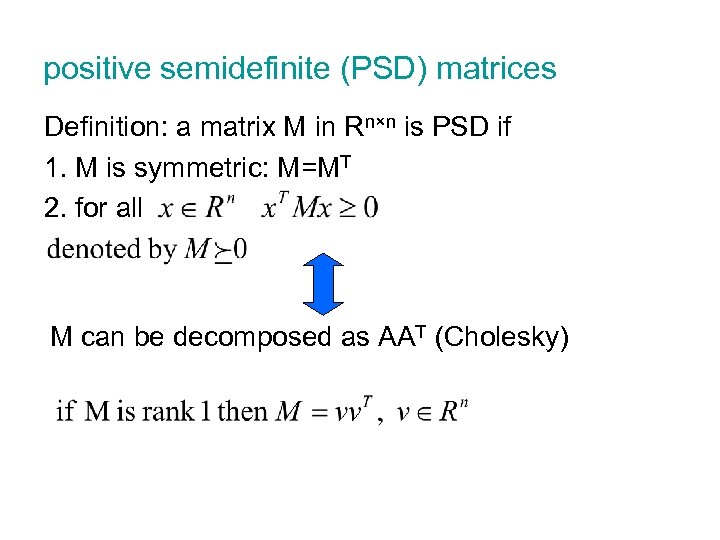

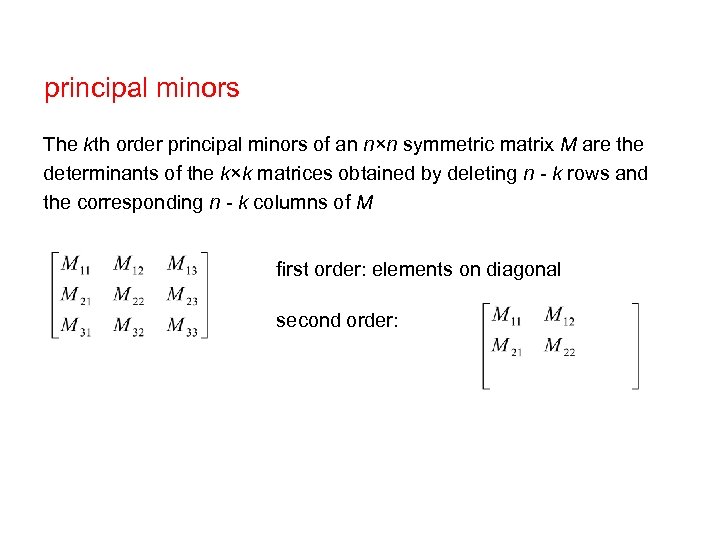

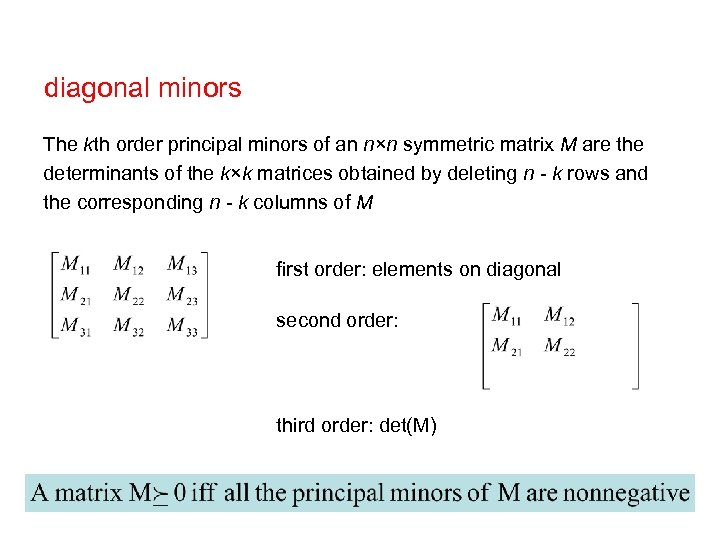

Principal minors

The k-th order principal minors of an n×n symmetric matrix M are the determinants of the k×k matrices obtained by deleting n - k rows and the corresponding n - k columns of M.

- First order: the elements on the diagonal
- Second order: the determinants of the 2×2 submatrices taken on the same pair of rows and columns

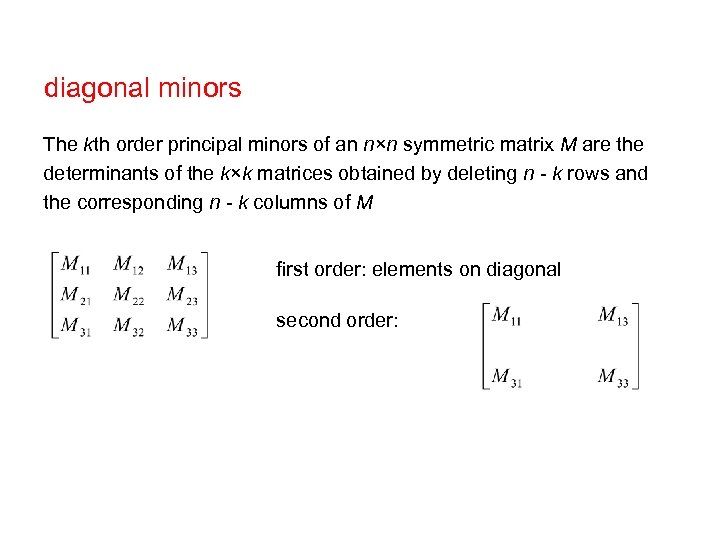

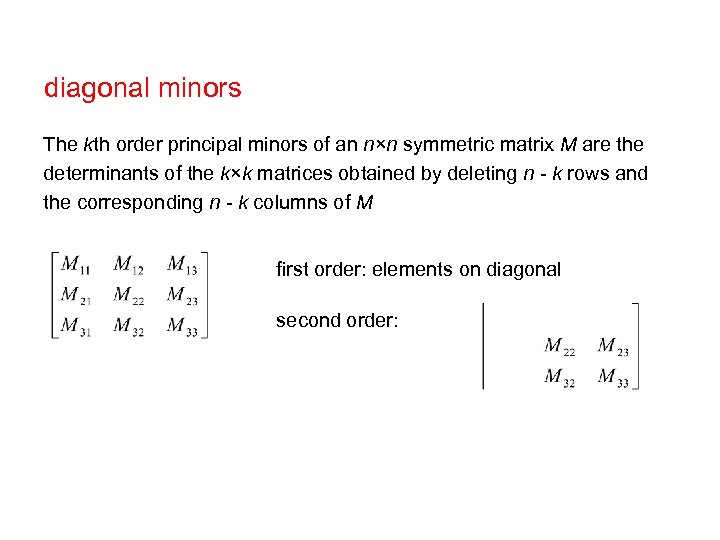

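Diagonal minors

The k-th order principal minors of an n×n symmetric matrix M are the determinants of the k×k matrices obtained by deleting n - k rows and the corresponding n - k columns of M. For a 3×3 matrix:

- First order: the elements on the diagonal
- Second order: the determinants of the 2×2 submatrices left after deleting one row and the corresponding column
- Third order: det(M)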
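The definition translates directly into code. This is a sketch assuming NumPy; the function names are hypothetical. It also uses the standard fact that a symmetric matrix is PSD exactly when all of its principal minors, of every order, are non-negative.

```python
from itertools import combinations
import numpy as np

def principal_minors(M, k):
    """All k-th order principal minors: keep the same k rows and columns."""
    M = np.asarray(M, dtype=float)
    n = M.shape[0]
    return [np.linalg.det(M[np.ix_(idx, idx)])
            for idx in combinations(range(n), k)]

def is_psd_by_minors(M, tol=1e-10):
    """Symmetric M is PSD iff every principal minor of every order is >= 0."""
    n = np.asarray(M).shape[0]
    return all(m >= -tol
               for k in range(1, n + 1)
               for m in principal_minors(M, k))

M = np.array([[2.0, 1.0, 0.0],
              [1.0, 2.0, 1.0],
              [0.0, 1.0, 2.0]])
```

Note that checking only the leading minors is not enough for semidefiniteness: the matrix `[[0, 0], [0, -1]]` has leading minors 0 and 0 but is not PSD, which the full test detects through the first-order minor -1.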

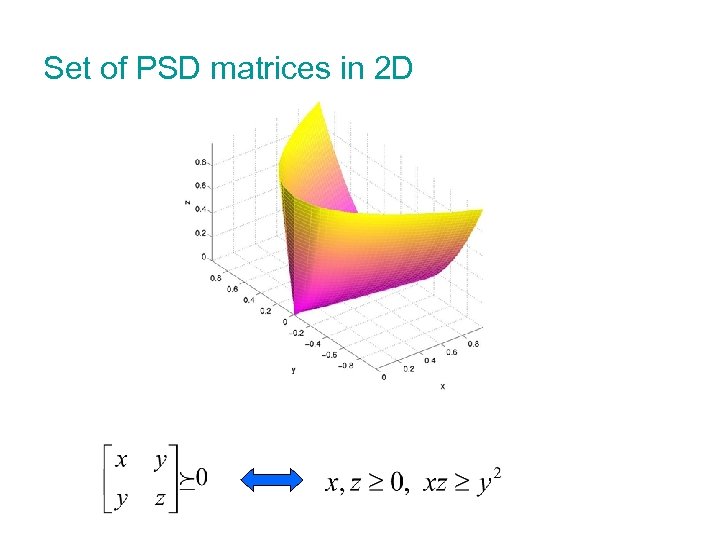

Set of PSD matrices in 2D

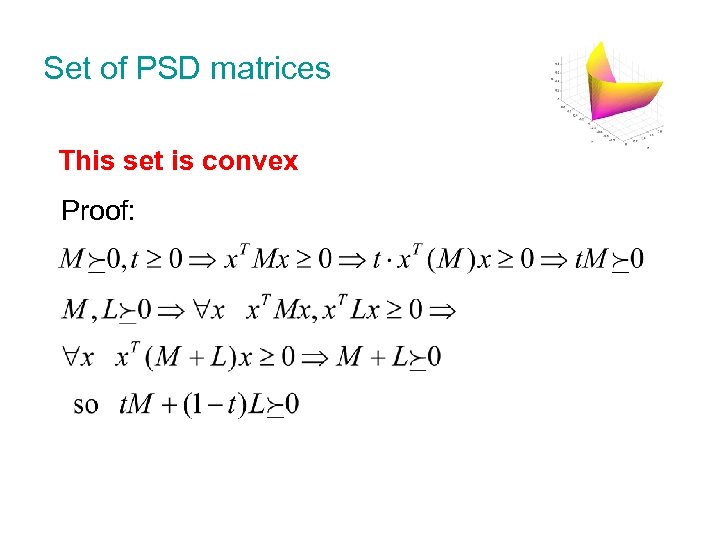

Set of PSD matrices: this set is convex. Proof:
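One standard argument, sketched here because the slide's own proof is in the figure: take two PSD matrices $M_1$ and $M_2$ (names chosen for this sketch) and a weight $t \in [0, 1]$. The combination $t M_1 + (1-t) M_2$ is symmetric, and for every $x$:

```latex
x^T \bigl( t M_1 + (1-t) M_2 \bigr) x
  = t \, x^T M_1 x + (1-t) \, x^T M_2 x \;\ge\; 0
  \qquad \text{for all } x \in \mathbb{R}^n ,\; t \in [0,1].
```

Both terms on the right are non-negative because $t \ge 0$, $1 - t \ge 0$ and $M_1$, $M_2$ are PSD, so the combination is PSD as well.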

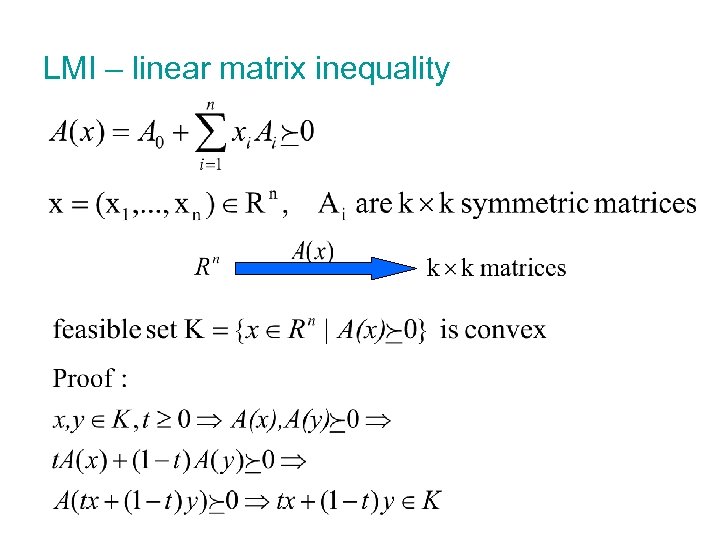

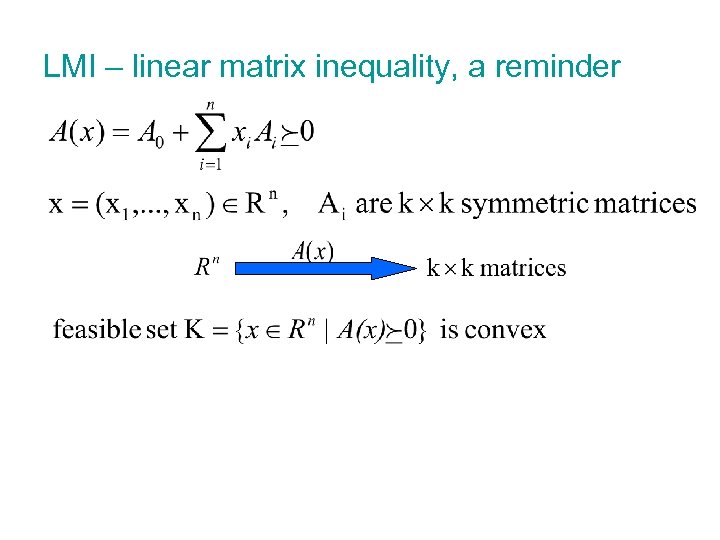

LMI – linear matrix inequality

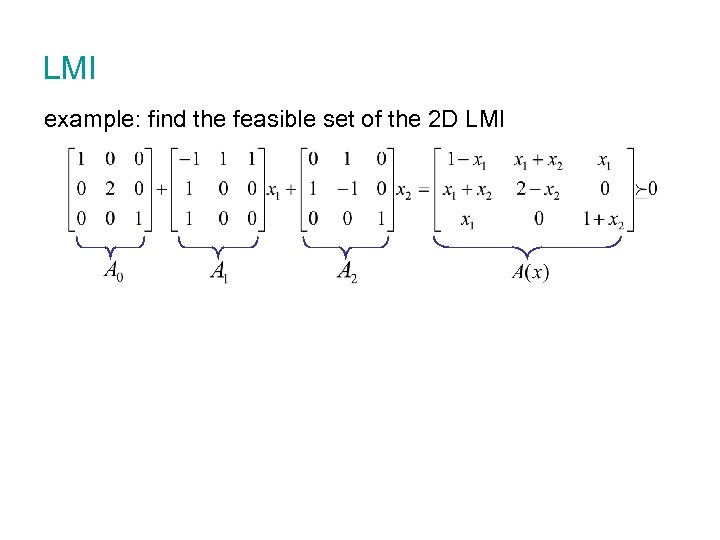

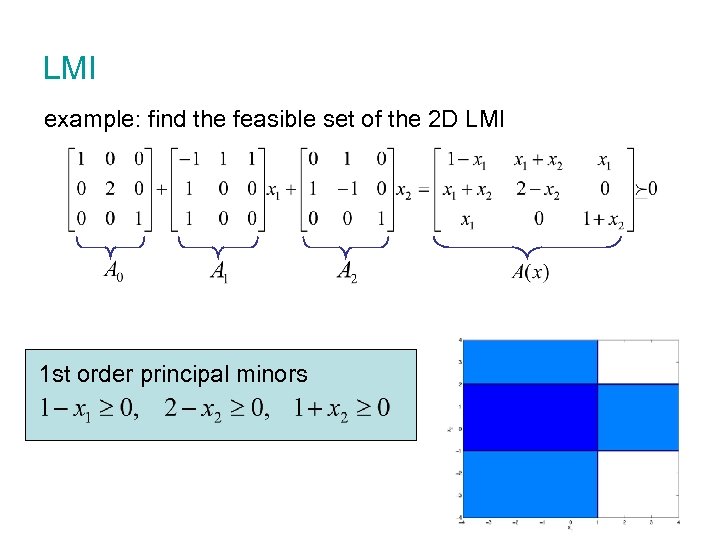

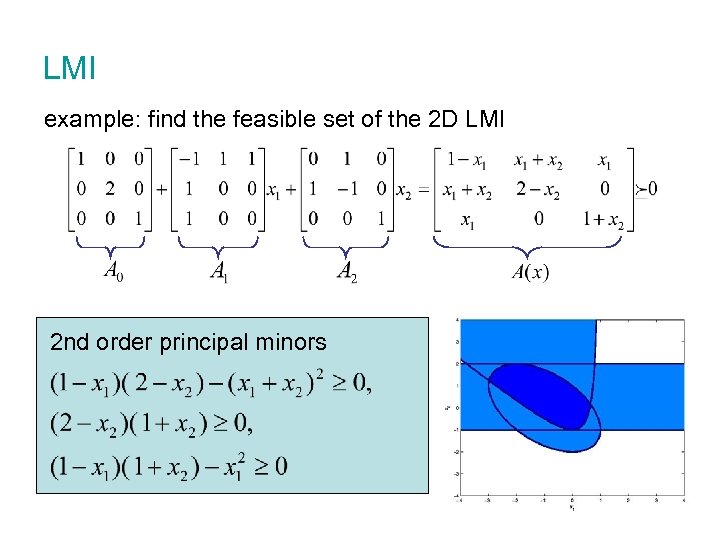

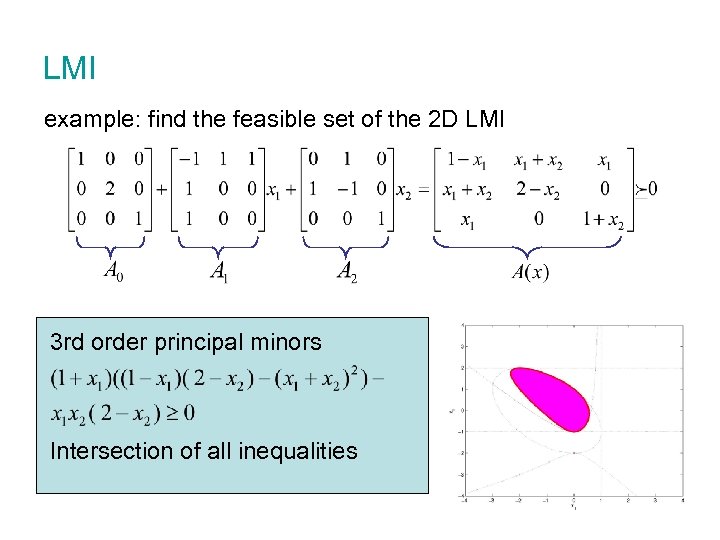

LMI example: find the feasible set of the 2D LMI

Reminder: the matrix is PSD when its principal minors of every order are non-negative.

- 1st order principal minors
- 2nd order principal minors
- 3rd order principal minors
- The feasible set is the intersection of all inequalities
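Since the slide's matrix is only available in the figure, the sketch below uses a hypothetical 3×3 LMI in two variables to show how feasibility of a point can be tested. The matrices `F0`, `F1`, `F2` and the function names are assumptions made for illustration.

```python
import numpy as np

def F(x1, x2):
    """Hypothetical 3x3 LMI, affine in (x1, x2); not taken from the slide.

    F(x) = [[1 - x1, x1 + x2, x1    ],
            [x1 + x2, 2 - x2, 0     ],
            [x1,      0,      1 + x2]]
    """
    F0 = np.array([[1.0, 0.0, 0.0], [0.0, 2.0, 0.0], [0.0, 0.0, 1.0]])
    F1 = np.array([[-1.0, 1.0, 1.0], [1.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
    F2 = np.array([[0.0, 1.0, 0.0], [1.0, -1.0, 0.0], [0.0, 0.0, 1.0]])
    return F0 + x1 * F1 + x2 * F2

def feasible(x1, x2, tol=1e-10):
    """A point is feasible when F(x) is PSD, i.e. its smallest eigenvalue >= 0."""
    return bool(np.linalg.eigvalsh(F(x1, x2)).min() >= -tol)
```

The eigenvalue test is equivalent to intersecting the inequalities given by the principal minors; it is simply shorter to write. Because the feasible set of an LMI is convex, the midpoint of any two feasible points is feasible too.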

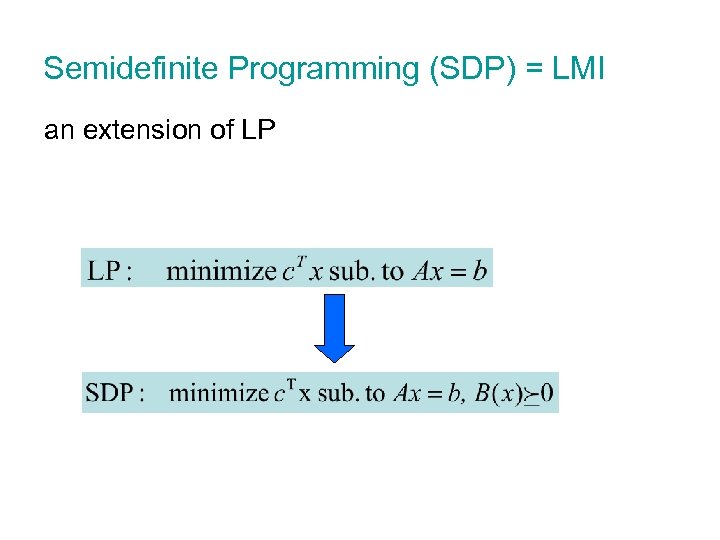

Semidefinite Programming (SDP) = linear objective + LMI constraints, an extension of LP

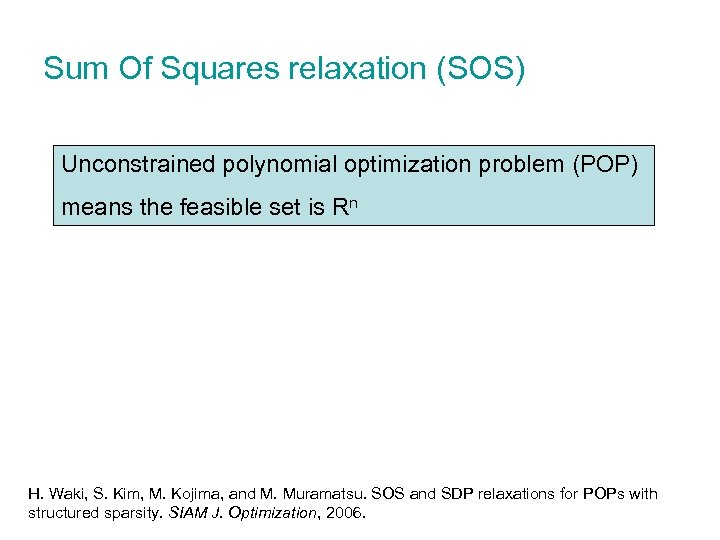

Sum Of Squares relaxation (SOS)

Unconstrained polynomial optimization problem (POP): "unconstrained" means the feasible set is $\mathbb{R}^n$.

H. Waki, S. Kim, M. Kojima, and M. Muramatsu. SOS and SDP relaxations for POPs with structured sparsity. SIAM J. Optimization, 2006.

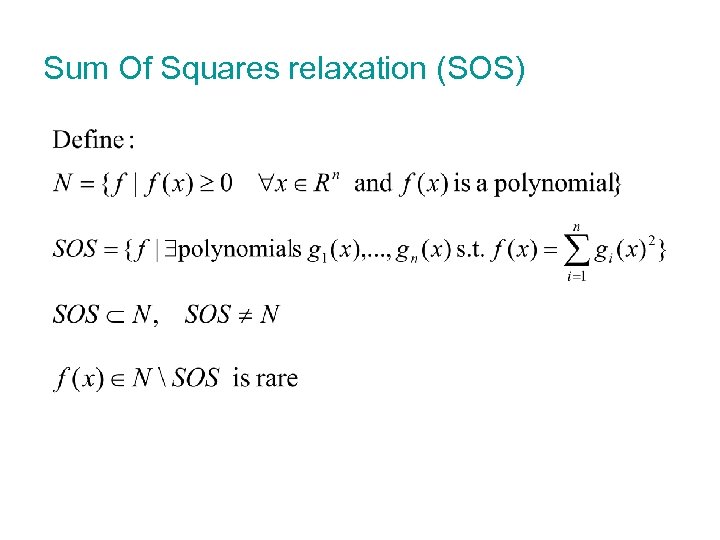

Sum Of Squares relaxation (SOS)

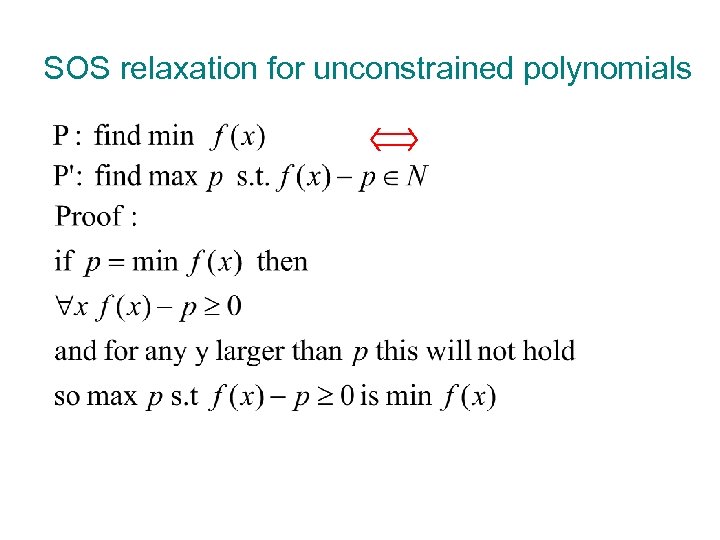

SOS relaxation for unconstrained polynomials

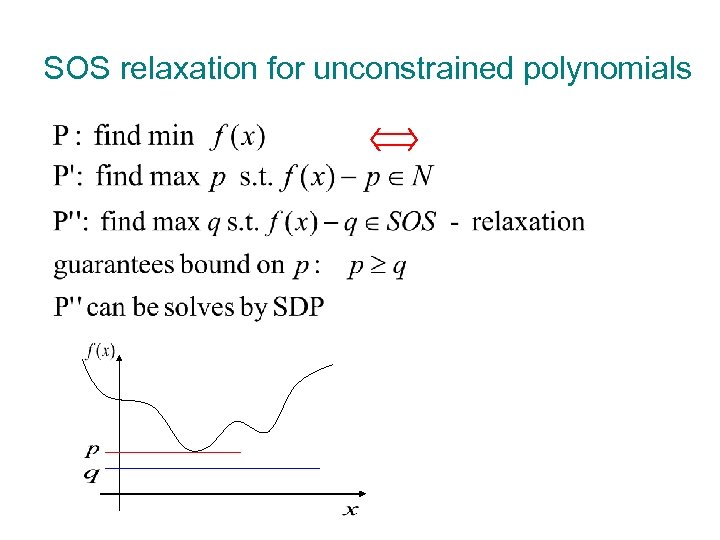

SOS relaxation for unconstrained polynomials

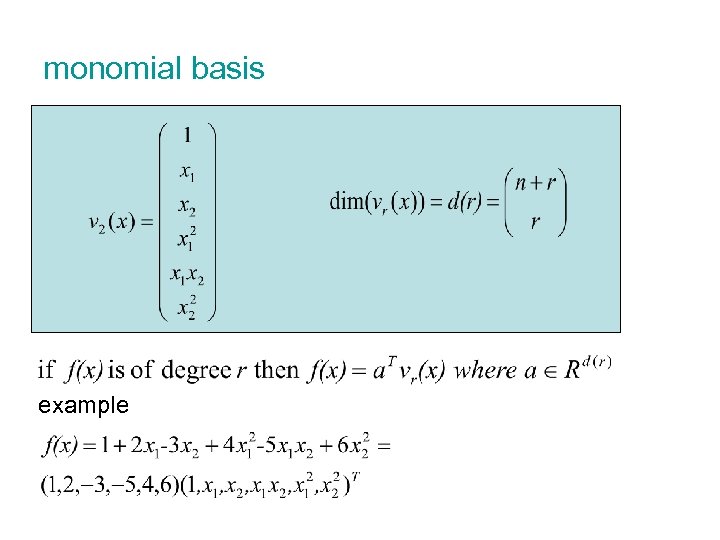

monomial basis example

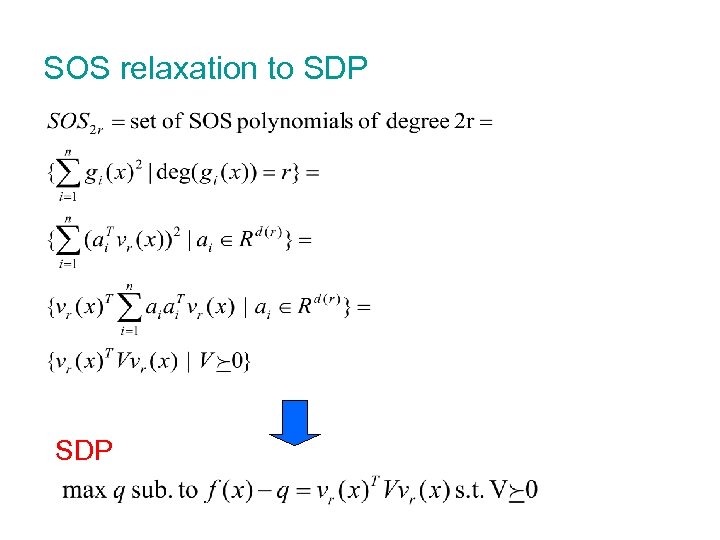

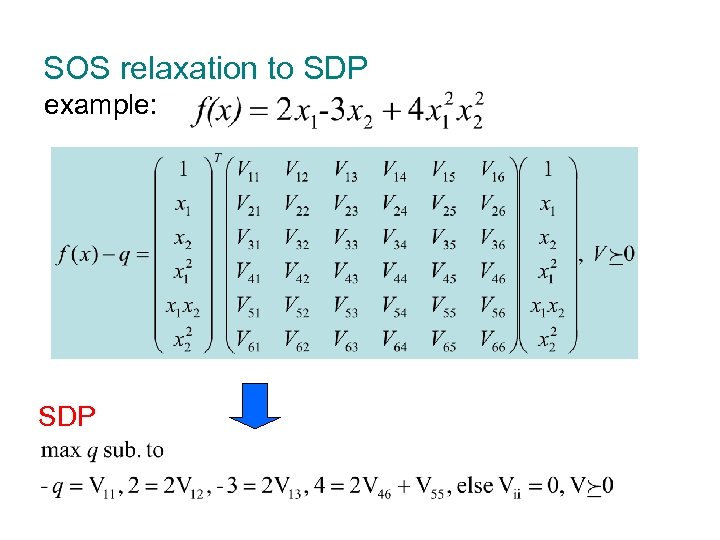

SOS relaxation to SDP

SOS relaxation to SDP example: SDP
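To make the link between SOS and SDP concrete, here is a small sketch with a polynomial chosen for illustration (it is not the slide's example): $p(x) = x^4 - 2x^2 + 1$ with the monomial basis $z = (1, x, x^2)$. A polynomial is a sum of squares exactly when it can be written as $z^T Q z$ with a PSD matrix $Q$, and searching for such a $Q$ is an SDP feasibility problem. The matrix `Q` below was picked by hand.

```python
import numpy as np

# p(x) = x^4 - 2 x^2 + 1, written as z^T Q z with z = (1, x, x^2).
Q = np.array([[ 1.0, 0.0, -1.0],
              [ 0.0, 0.0,  0.0],
              [-1.0, 0.0,  1.0]])

def p(x):
    return x**4 - 2 * x**2 + 1

def gram_form(x):
    """Evaluate z^T Q z for the monomial vector z = (1, x, x^2)."""
    z = np.array([1.0, x, x**2])
    return float(z @ Q @ z)

# Q is PSD, so the eigendecomposition Q = sum_i w_i u_i u_i^T with w_i >= 0
# gives p(x) = sum_i w_i (u_i^T z)^2, an explicit sum of squares.
w, U = np.linalg.eigh(Q)

def sos_form(x):
    z = np.array([1.0, x, x**2])
    return float(np.sum(w * (U.T @ z) ** 2))
```

Here the decomposition collapses to the single square $(x^2 - 1)^2$, since `Q` has rank one.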

SOS for constrained POPs: it is possible to extend this method to constrained POPs by use of the generalized Lagrange dual.

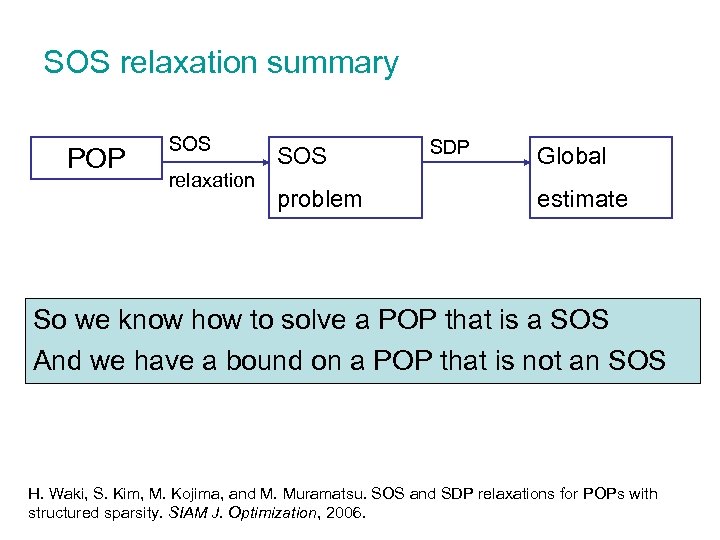

SOS relaxation summary

POP → SOS relaxation → SOS problem → SDP → global estimate

So we know how to solve a POP that is an SOS, and we have a bound on a POP that is not an SOS.

H. Waki, S. Kim, M. Kojima, and M. Muramatsu. SOS and SDP relaxations for POPs with structured sparsity. SIAM J. Optimization, 2006.

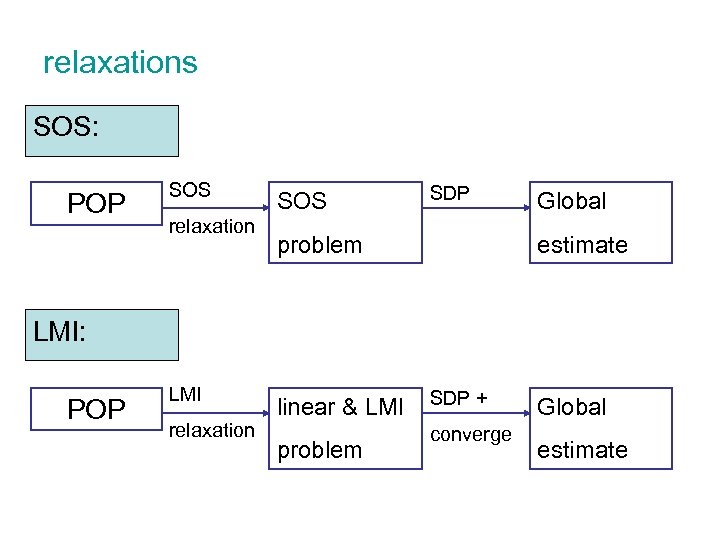

Relaxations

- SOS: POP → SOS relaxation → SOS problem → SDP → global estimate
- LMI: POP → LMI relaxation → linear & LMI problem → SDP + convergence → global estimate

LMI relaxations

- Constraints are handled
- Convergence to the optimum is guaranteed
- Applies to all polynomials, including those that are not SOS

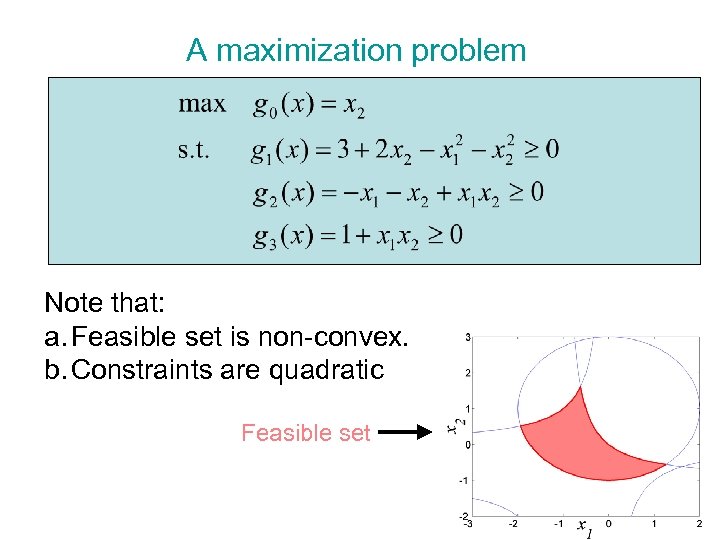

A maximization problem

Note that:

a. The feasible set is non-convex.
b. The constraints are quadratic.

(Figure: feasible set.)

LMI – linear matrix inequality, a reminder

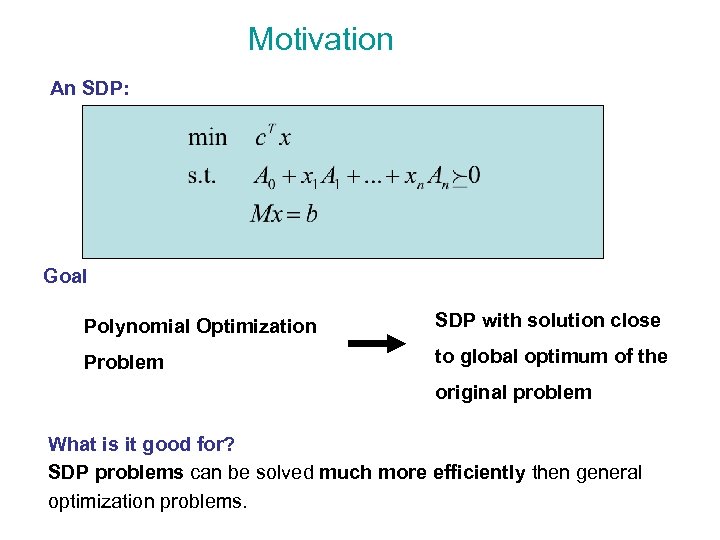

Motivation

Goal: turn a polynomial optimization problem into an SDP whose solution is close to the global optimum of the original problem.

What is it good for? SDP problems can be solved much more efficiently than general optimization problems.

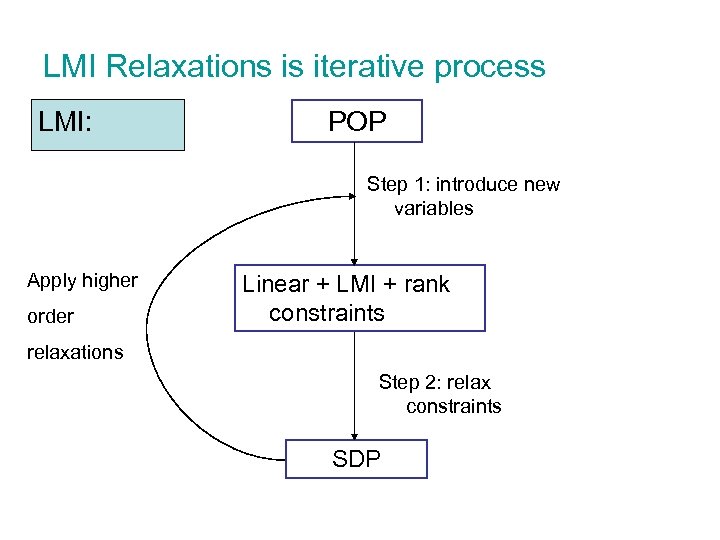

LMI relaxation is an iterative process

POP → Step 1: introduce new variables → linear + LMI + rank constraints → Step 2: relax constraints → SDP. Then apply higher order relaxations.

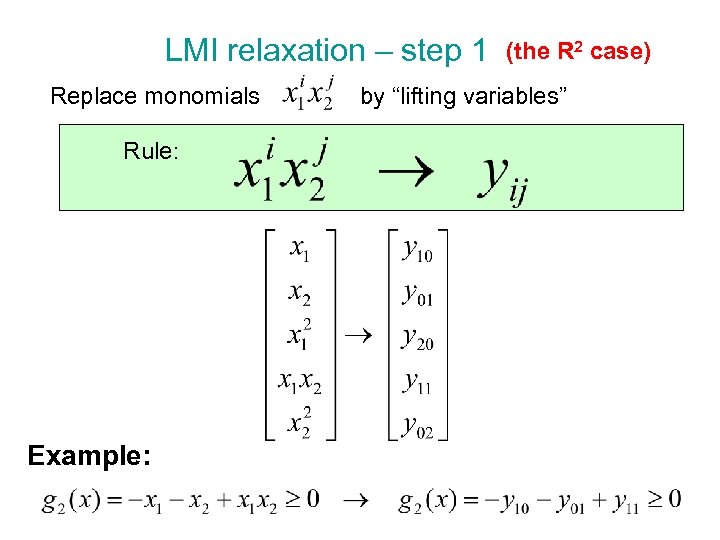

LMI relaxation, step 1 (the $\mathbb{R}^2$ case): replace monomials by “lifting variables”. Rule: Example:

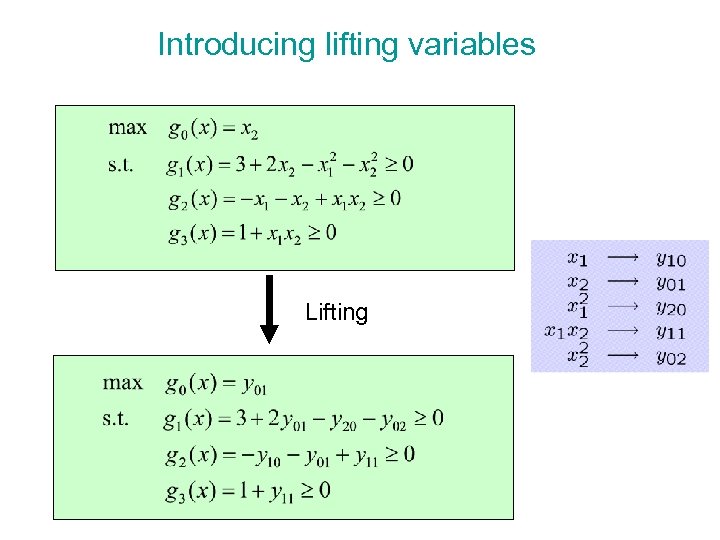

Introducing lifting variables Lifting

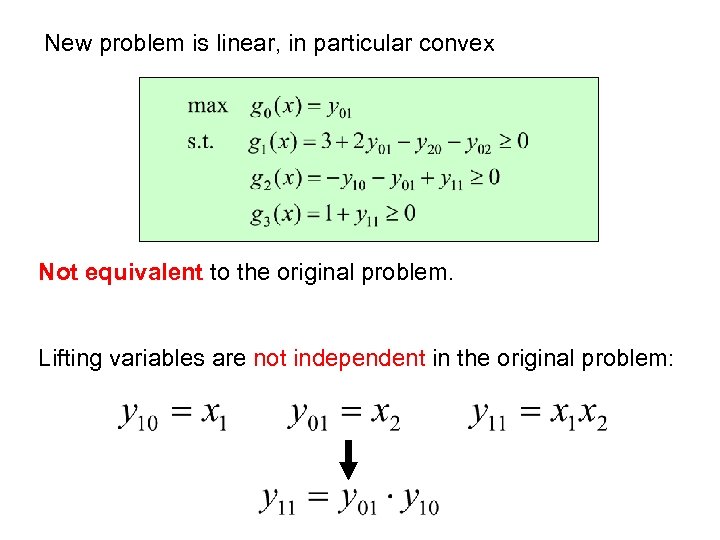

The new problem is linear, and in particular convex. It is not equivalent to the original problem: the lifting variables are not independent in the original problem.
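The lifting step can be sketched in a few lines. The indexing convention $y_{ij}$ for the monomial $x_1^i x_2^j$, the objective `f`, and all function names are assumptions made for this illustration.

```python
# A polynomial in (x1, x2) is stored as {(i, j): coefficient} for x1^i * x2^j.
# Hypothetical objective: f(x) = 3*x1^2 - x1*x2 + 2*x2
f = {(2, 0): 3.0, (1, 1): -1.0, (0, 1): 2.0}

def evaluate(poly, x1, x2):
    """Evaluate the original polynomial at a point."""
    return sum(c * x1**i * x2**j for (i, j), c in poly.items())

def lift_point(x1, x2, degree):
    """Lifting variables y_ij = x1^i * x2^j generated by an actual point."""
    return {(i, j): x1**i * x2**j
            for i in range(degree + 1)
            for j in range(degree + 1 - i)}

def evaluate_lifted(poly, y):
    """The lifted objective is linear in y: sum of coefficient * y_ij."""
    return sum(c * y[ij] for ij, c in poly.items())

y = lift_point(1.5, -2.0, degree=2)

# Treating y as free variables breaks relations such as y20 = y10^2.
y_free = dict(y)
y_free[(2, 0)] = 0.0
```

When `y` comes from a real point, the lifted and original objectives agree. Once the entries of `y` are treated as independent, as in `y_free`, the linear problem can take values that no point of the original problem attains.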

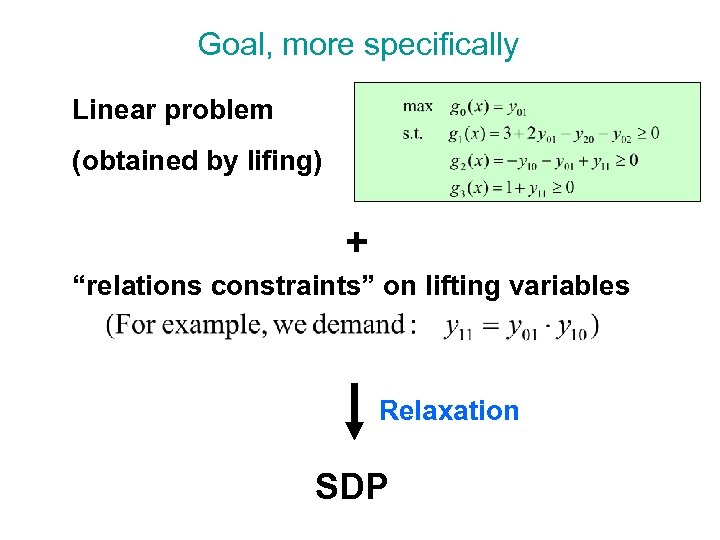

Goal, more specifically: linear problem (obtained by lifting) + “relations constraints” on lifting variables → relaxation → SDP

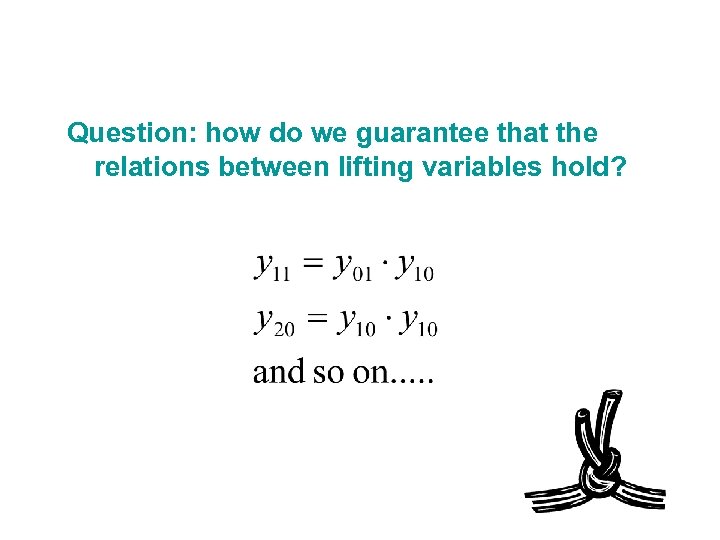

Question: how do we guarantee that the relations between lifting variables hold?

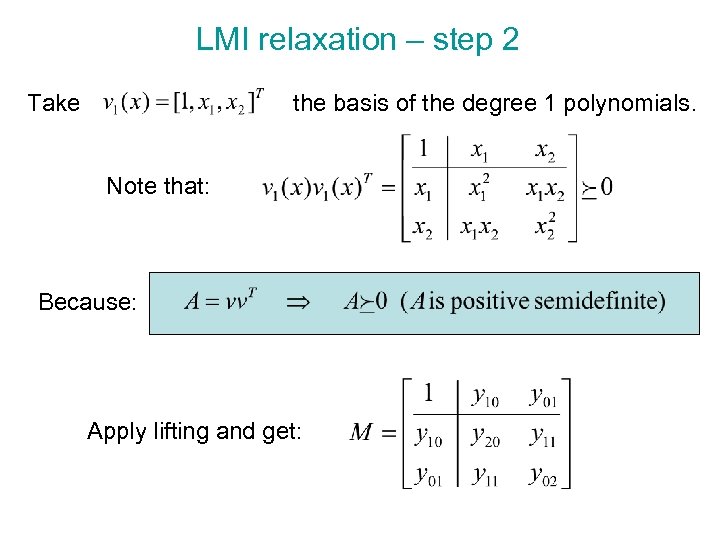

LMI relaxation – step 2 Take the basis of the degree 1 polynomials. Note that: Because: Apply lifting and get:

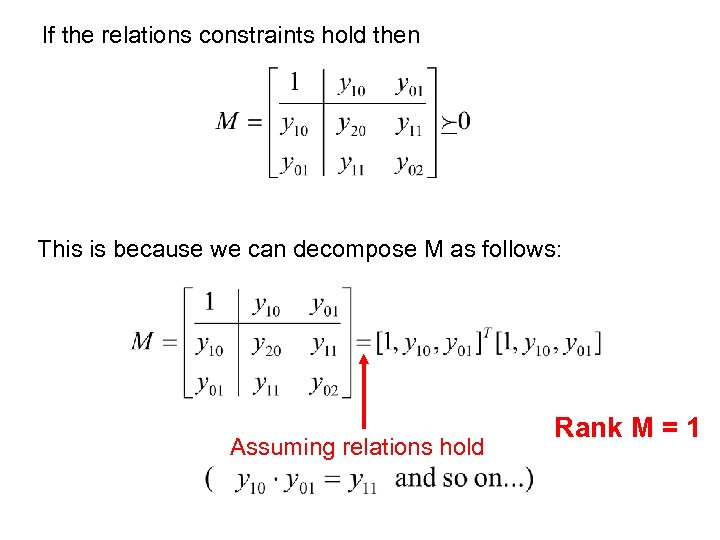

If the relations constraints hold, then M is positive semidefinite. This is because we can decompose M as $M = v v^T$, where v is the basis vector. Assuming the relations hold, rank M = 1.

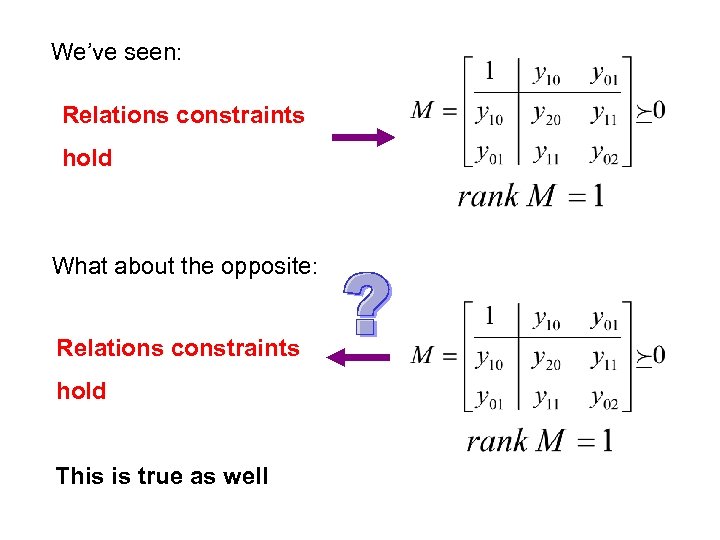

We’ve seen: relations constraints hold ⇒ rank M = 1. What about the opposite: rank M = 1 ⇒ relations constraints hold? This is true as well.

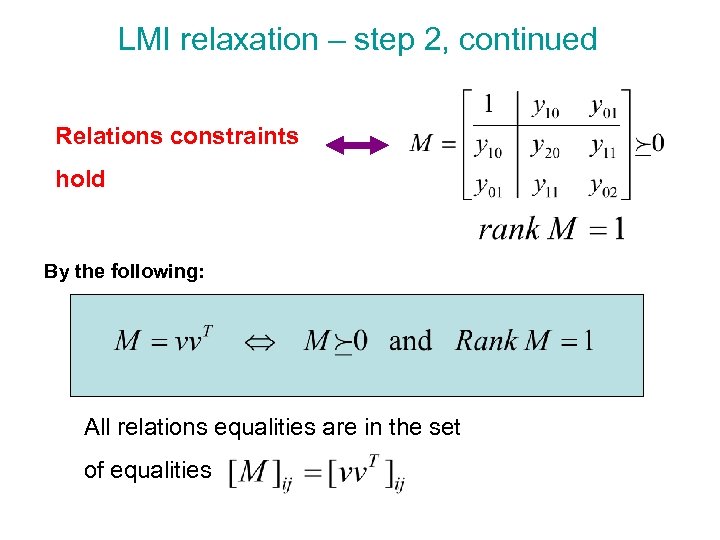

LMI relaxation, step 2, continued. Rank M = 1 implies that the relations constraints hold, by the following: all relations equalities are in the set of equalities.

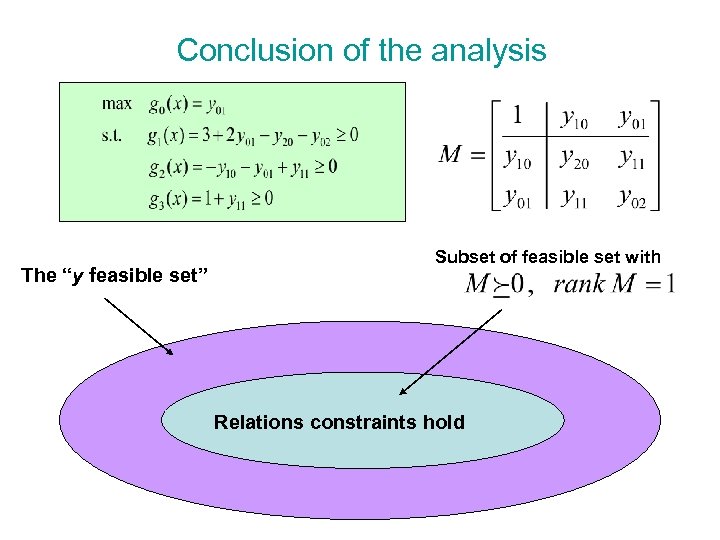

Conclusion of the analysis: within the “y feasible set”, the subset with rank M = 1 is exactly the subset where the relations constraints hold.
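The link between the rank of the matrix and the relations between lifting variables can be checked numerically. This sketch assumes the basis $(1, x_1, x_2)$ and the $y_{ij}$ indexing convention, neither of which is visible in the extracted text; the function name is hypothetical.

```python
import numpy as np

def moment_matrix_1(y10, y01, y20, y11, y02):
    """First-order moment matrix for the basis (1, x1, x2), after lifting."""
    return np.array([[1.0, y10, y01],
                     [y10, y20, y11],
                     [y01, y11, y02]])

# Case 1: y comes from a real point x, so all relations hold (y20 = y10^2, ...).
x1, x2 = 0.5, -1.0
v = np.array([1.0, x1, x2])
M_point = moment_matrix_1(x1, x2, x1**2, x1 * x2, x2**2)

# Case 2: y20 is chosen freely, breaking the relation y20 = y10^2.
M_free = moment_matrix_1(x1, x2, 1.0, x1 * x2, x2**2)

rank_point = np.linalg.matrix_rank(M_point)
rank_free = np.linalg.matrix_rank(M_free)
```

`M_point` equals the outer product of `v` with itself and has rank one. `M_free` is still PSD, so it survives the relaxation, but its rank is two: it belongs to the relaxed feasible set without corresponding to any point of the original problem.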

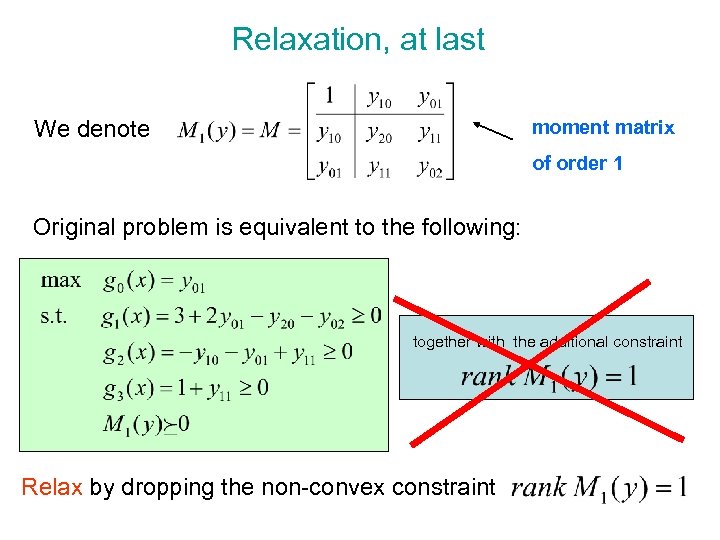

Relaxation, at last

We call M the moment matrix of order 1. The original problem is equivalent to the lifted problem together with the additional constraint rank M = 1. Relax by dropping the non-convex rank constraint.

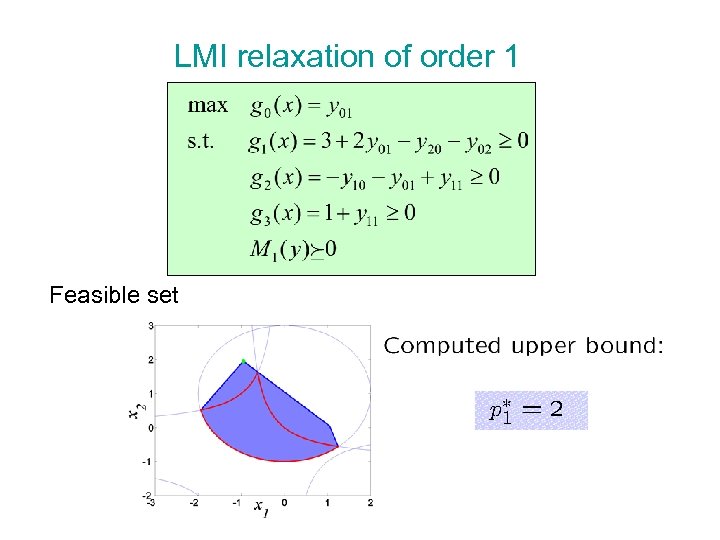

LMI relaxation of order 1 Feasible set

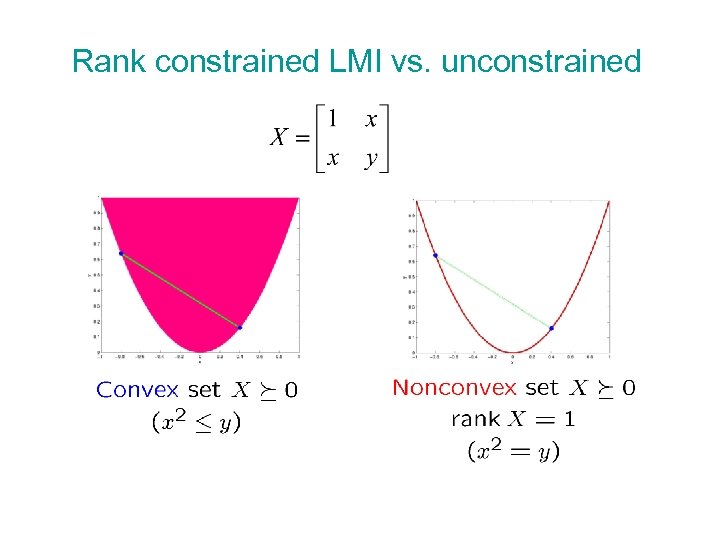

Rank constrained LMI vs. unconstrained

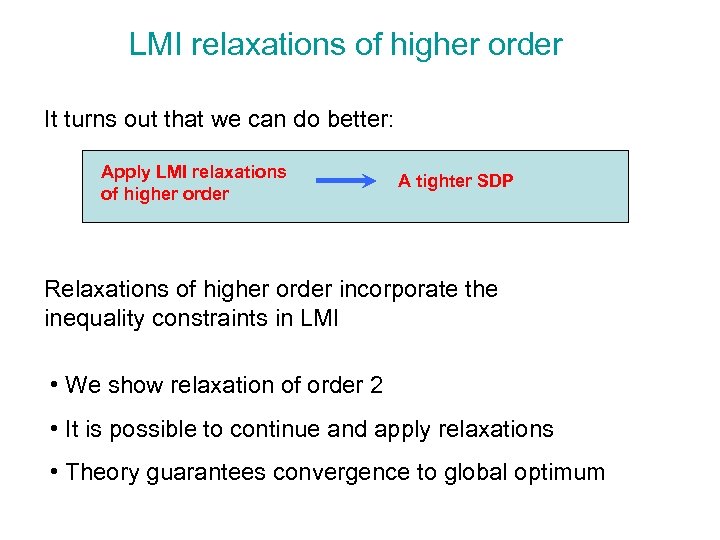

LMI relaxations of higher order

It turns out that we can do better: apply LMI relaxations of higher order to obtain a tighter SDP. Relaxations of higher order incorporate the inequality constraints in LMIs.

- We show the relaxation of order 2
- It is possible to continue and apply further relaxations
- Theory guarantees convergence to the global optimum

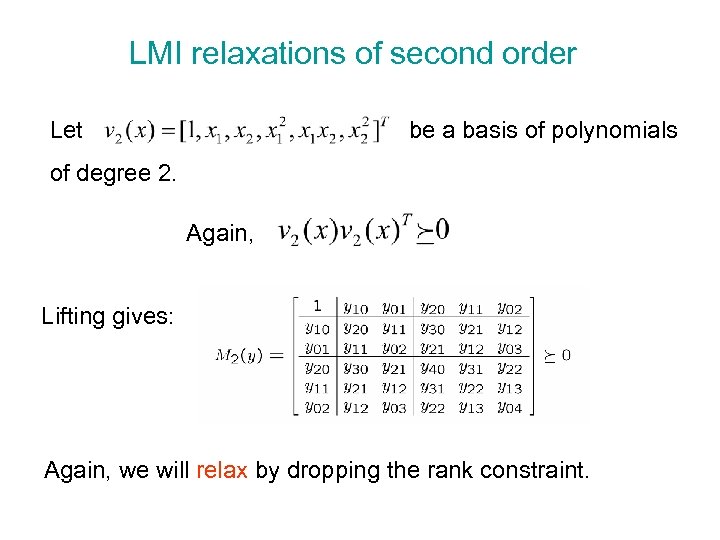

LMI relaxations of second order

Take a basis of the polynomials of degree 2. As before, lifting gives a moment matrix, now of order 2, and again we relax by dropping the rank constraint.
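For reference, here is what the second-order moment matrix looks like in the two-variable case. The sketch assumes the basis $v = (1, x_1, x_2, x_1^2, x_1 x_2, x_2^2)^T$ and the convention that $y_{ij}$ replaces $x_1^i x_2^j$; both are assumptions, since the slide's notation is only in the figure.

```latex
M_2(y) =
\begin{pmatrix}
1      & y_{10} & y_{01} & y_{20} & y_{11} & y_{02} \\
y_{10} & y_{20} & y_{11} & y_{30} & y_{21} & y_{12} \\
y_{01} & y_{11} & y_{02} & y_{21} & y_{12} & y_{03} \\
y_{20} & y_{30} & y_{21} & y_{40} & y_{31} & y_{22} \\
y_{11} & y_{21} & y_{12} & y_{31} & y_{22} & y_{13} \\
y_{02} & y_{12} & y_{03} & y_{22} & y_{13} & y_{04}
\end{pmatrix}
\succeq 0 .
```

Each entry is the lifted product of the corresponding pair of basis monomials, so the upper-left 3×3 block is the first-order moment matrix. The relaxation keeps $M_2(y) \succeq 0$ and drops the rank-one condition.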

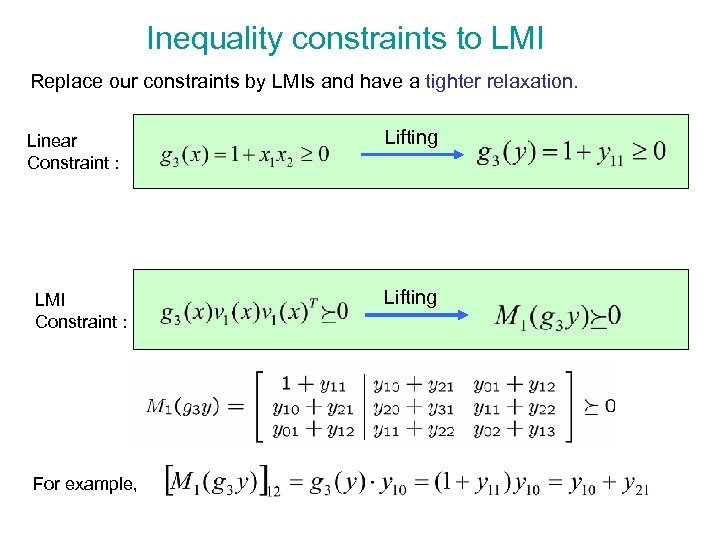

Inequality constraints to LMI

Replace our constraints by LMIs to obtain a tighter relaxation: each linear constraint becomes an LMI constraint, again by lifting.
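A worked example of this step, under stated assumptions: the constraint $g(x) = 1 - x_1^2 - x_2^2 \ge 0$ is hypothetical, and the construction shown is the usual localizing-matrix one, where $g(x)\, v v^T \succeq 0$ for the basis $v = (1, x_1, x_2)^T$ and each monomial $x_1^i x_2^j$ is then replaced by $y_{ij}$.

```latex
g(x)\, v v^T \succeq 0
\;\;\xrightarrow{\;\text{lifting}\;}\;\;
\begin{pmatrix}
1 - y_{20} - y_{02}      & y_{10} - y_{30} - y_{12} & y_{01} - y_{21} - y_{03} \\
y_{10} - y_{30} - y_{12} & y_{20} - y_{40} - y_{22} & y_{11} - y_{31} - y_{13} \\
y_{01} - y_{21} - y_{03} & y_{11} - y_{31} - y_{13} & y_{02} - y_{22} - y_{04}
\end{pmatrix}
\succeq 0 .
```

The top-left entry alone is the plain lifted constraint $1 - y_{20} - y_{02} \ge 0$, which is linear in $y$. Requiring the whole matrix to be PSD is stronger, which is why the relaxation becomes tighter.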

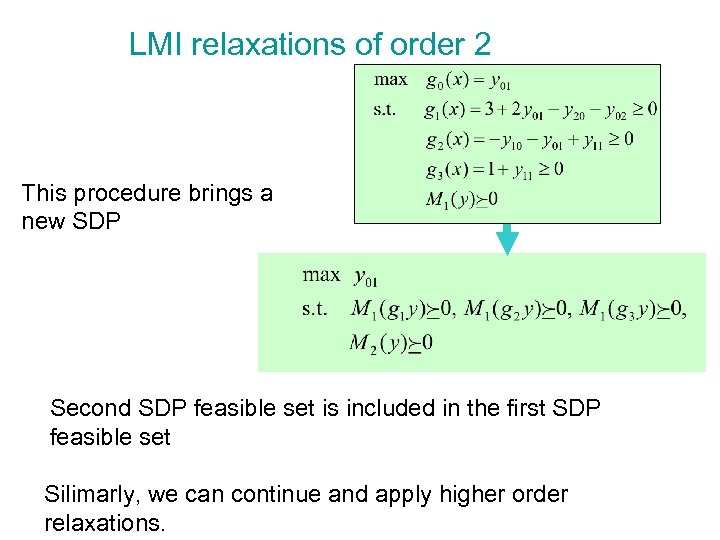

LMI relaxations of order 2

This procedure yields a new SDP. The feasible set of the second SDP is included in the feasible set of the first. Similarly, we can continue and apply higher order relaxations.

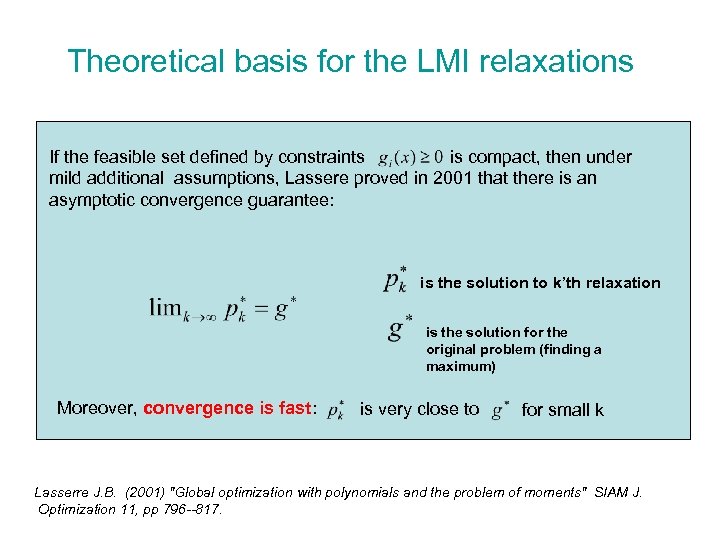

Theoretical basis for the LMI relaxations

If the feasible set defined by the constraints is compact then, under mild additional assumptions, Lasserre proved in 2001 that there is an asymptotic convergence guarantee: the optimal value of the k-th relaxation converges to the optimal value of the original problem (finding a maximum) as k increases. Moreover, convergence is fast: the value of the k-th relaxation is very close to the optimum already for small k.

Lasserre J. B. (2001) "Global optimization with polynomials and the problem of moments", SIAM J. Optimization 11, pp. 796-817.

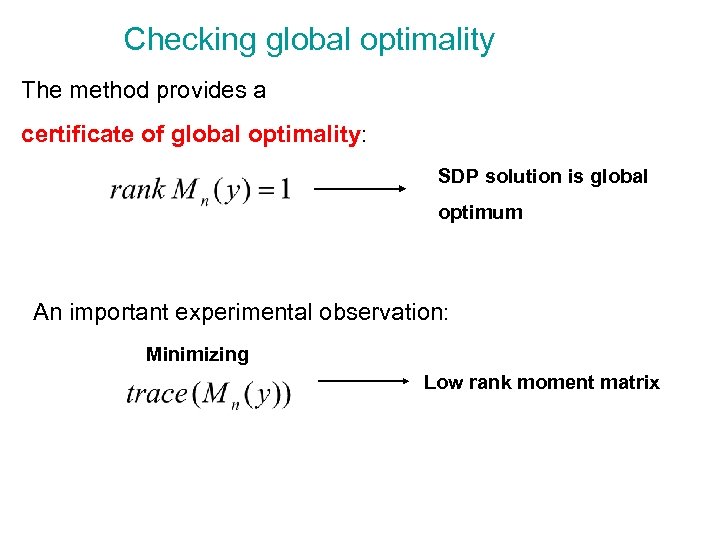

Checking global optimality

The method provides a certificate of global optimality: if the moment matrix has rank one, the SDP solution is the global optimum.

An important experimental observation: minimizing the trace of the moment matrix tends to give a low-rank moment matrix.

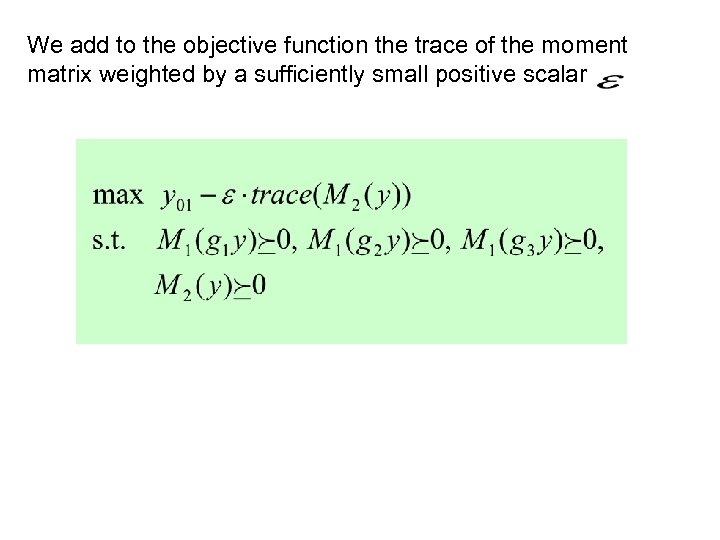

We add to the objective function the trace of the moment matrix weighted by a sufficiently small positive scalar

LMI relaxations in vision Application

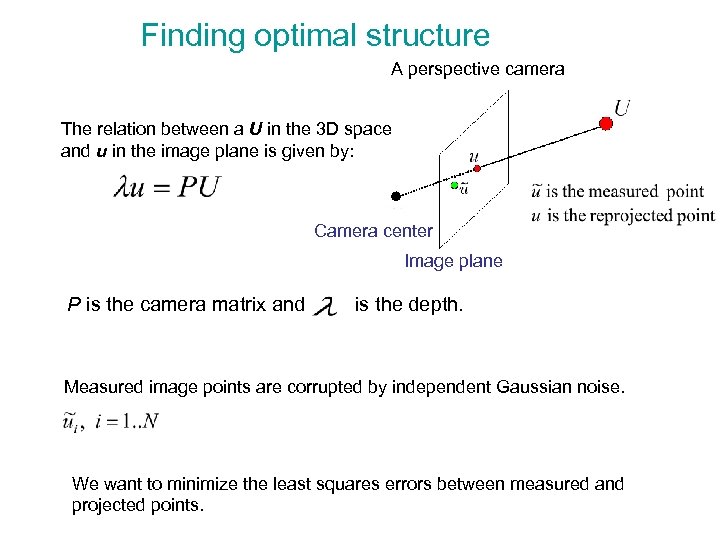

Finding optimal structure

A perspective camera: the relation between a point U in 3D space and its image u in the image plane is given by $\lambda u = P U$, where P is the camera matrix and $\lambda$ is the depth. (Figure labels: camera center, image plane.)

Measured image points are corrupted by independent Gaussian noise. We want to minimize the least-squares error between measured and projected points.

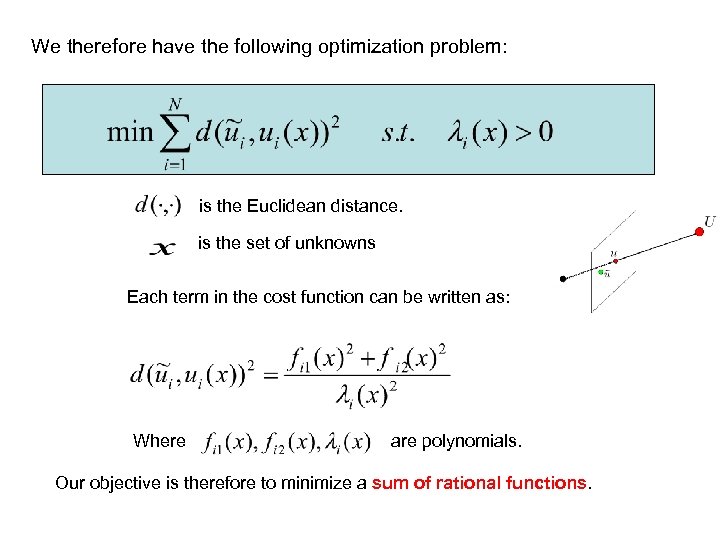

We therefore have the following optimization problem: minimize, over the set of unknowns, the sum of squared Euclidean distances between measured and projected points. Each term in the cost function can be written as a ratio of polynomials in the unknowns. Our objective is therefore to minimize a sum of rational functions.
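The cost function for the triangulation case can be sketched as follows, assuming NumPy. The camera matrices, the 3D point and the function name are hypothetical values chosen for illustration.

```python
import numpy as np

def reprojection_cost(cameras, points_2d, U):
    """Sum of squared distances between measured and projected image points."""
    U_h = np.append(U, 1.0)                      # homogeneous 3D point
    cost = 0.0
    for P, u in zip(cameras, points_2d):
        a, b, depth = P @ U_h                    # three polynomials, affine in U
        proj = np.array([a / depth, b / depth])  # perspective division
        cost += float(np.sum((proj - u) ** 2))   # a rational function of U
    return cost

# Hypothetical setup: two cameras, the second shifted along the x axis.
P1 = np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])
cameras = [P1, P2]

U_true = np.array([0.5, 0.2, 4.0])
U_true_h = np.append(U_true, 1.0)
measured = [(P @ U_true_h)[:2] / (P @ U_true_h)[2] for P in cameras]
```

Because of the division by the depth, each term is a ratio of polynomials in the coordinates of `U` rather than a polynomial, which is what the next step has to deal with.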

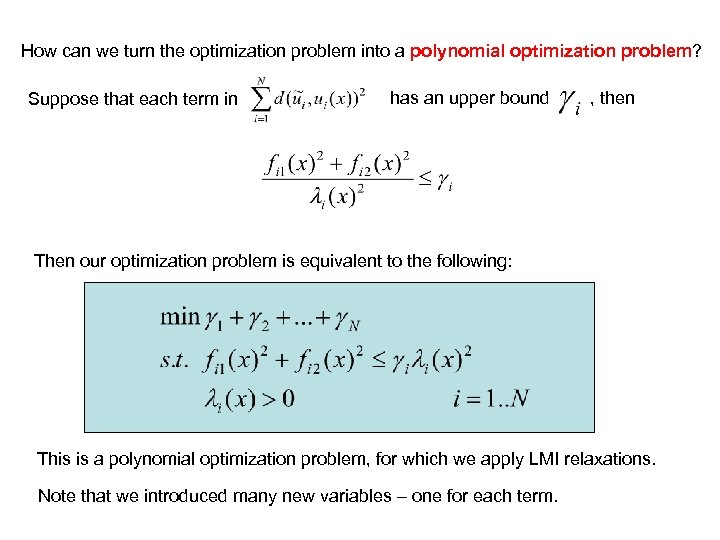

How can we turn the optimization problem into a polynomial optimization problem?

Suppose that each term in the cost function has an upper bound. Then our optimization problem is equivalent to minimizing the sum of these bounds, subject to each term being at most its bound. This is a polynomial optimization problem, to which we apply LMI relaxations. Note that we introduced many new variables: one for each term.
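Written out, the reformulation looks as follows. The symbols are chosen for this sketch and may differ from the slide's: $f_i(x) = p_i(x) / q_i(x)$ is the i-th term, with polynomials $p_i$ and $q_i$ and $q_i(x) > 0$, and $\gamma_i$ is its upper bound.

```latex
\min_{x} \; \sum_{i=1}^{N} \frac{p_i(x)}{q_i(x)}
\quad \Longleftrightarrow \quad
\min_{x,\,\gamma} \; \sum_{i=1}^{N} \gamma_i
\quad \text{s.t.} \quad
p_i(x) \le \gamma_i \, q_i(x), \qquad i = 1, \dots, N .
```

At the optimum every bound is tight, so the two problems have the same value. The objective is now linear and the constraints are polynomial inequalities, at the cost of N additional variables.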

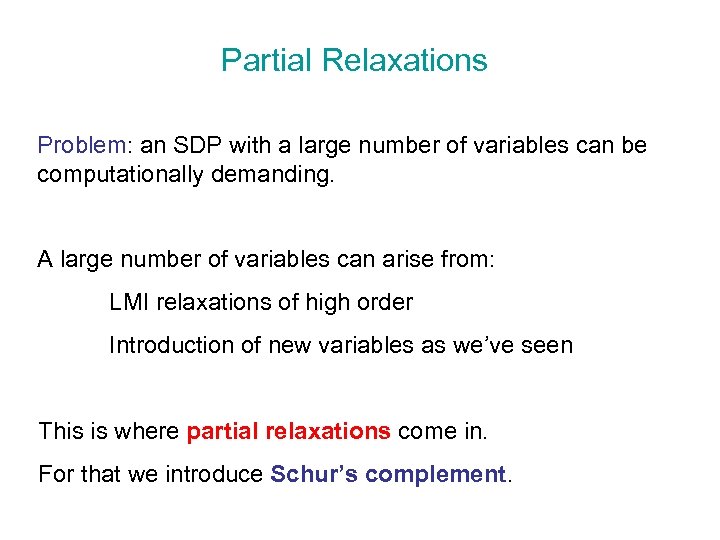

Partial relaxations

Problem: an SDP with a large number of variables can be computationally demanding. A large number of variables can arise from:

- LMI relaxations of high order
- Introduction of new variables, as we’ve seen

This is where partial relaxations come in. For that we introduce Schur’s complement.

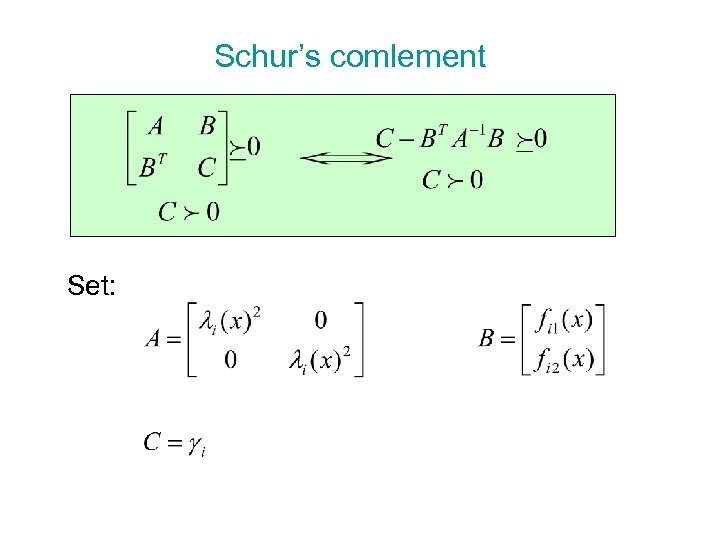

Schur’s complement. Set:

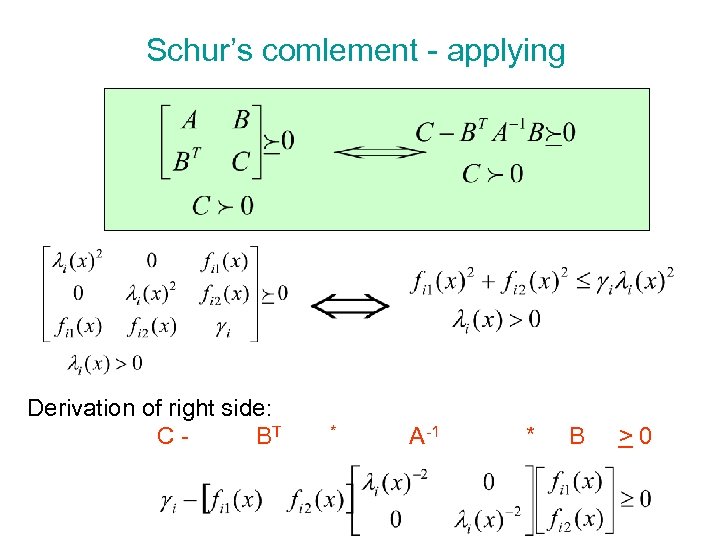

Schur’s complement, applying. Derivation of the right side: $C - B^T A^{-1} B \succ 0$
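The condition can be checked numerically. The sketch below assumes the usual statement of Schur's complement: for a positive definite block A, the block matrix `[[A, B], [B^T, C]]` is positive definite exactly when $C - B^T A^{-1} B$ is. The matrices and function names are hypothetical.

```python
import numpy as np

def schur_complement(A, B, C):
    """C - B^T A^{-1} B, computed with a linear solve instead of an inverse."""
    return C - B.T @ np.linalg.solve(A, B)

def is_pd(M, tol=1e-12):
    """Positive definite: smallest eigenvalue strictly above the tolerance."""
    return bool(np.linalg.eigvalsh(M).min() > tol)

A = np.array([[2.0, 0.5], [0.5, 1.0]])   # positive definite block
B = np.array([[1.0], [0.0]])
C_good = np.array([[1.0]])               # Schur complement is 1 - 1/1.75 > 0
C_bad = np.array([[0.4]])                # Schur complement is 0.4 - 1/1.75 < 0

def block(C):
    """Assemble the full symmetric block matrix [[A, B], [B^T, C]]."""
    return np.block([[A, B], [B.T, C]])
```

In both cases the test on the small Schur complement agrees with the test on the full block matrix, which is what lets a nonlinear condition be traded for a matrix inequality.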

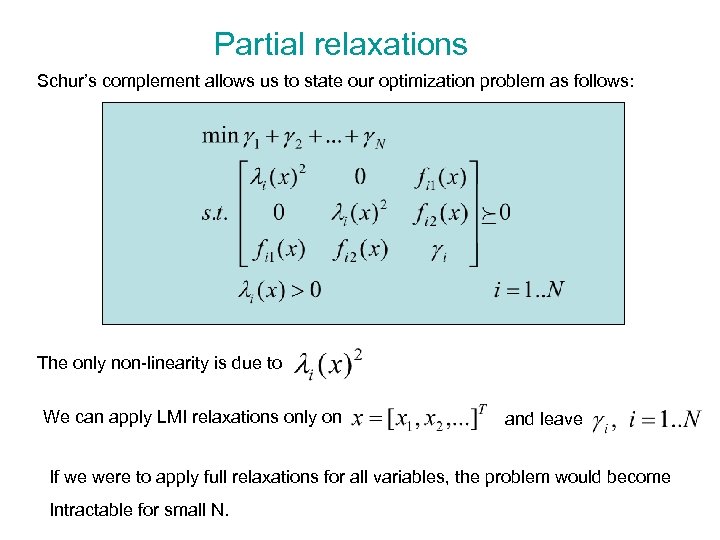

Partial relaxations

Schur’s complement allows us to restate our optimization problem so that the non-linearity is confined to a subset of the variables. We can apply LMI relaxations only on those variables and leave the others as they are. If we were to apply full relaxations for all variables, the problem would become intractable even for small N.

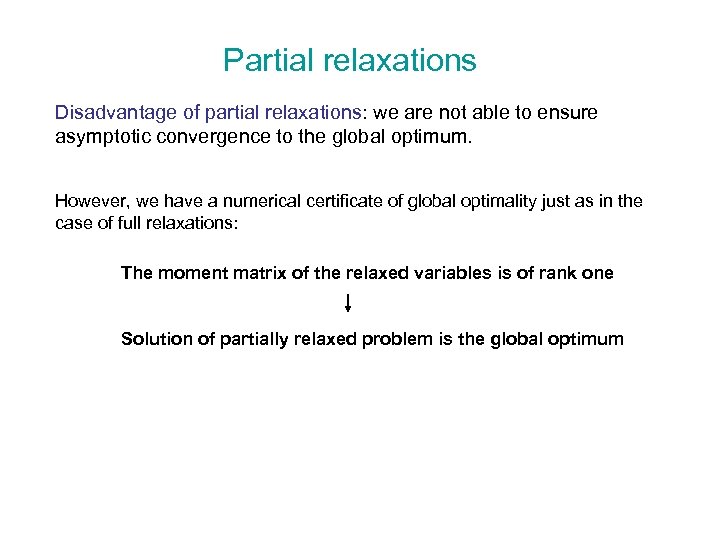

Partial relaxations

Disadvantage of partial relaxations: we are not able to ensure asymptotic convergence to the global optimum. However, we have a numerical certificate of global optimality, just as in the case of full relaxations: if the moment matrix of the relaxed variables is of rank one, the solution of the partially relaxed problem is the global optimum.

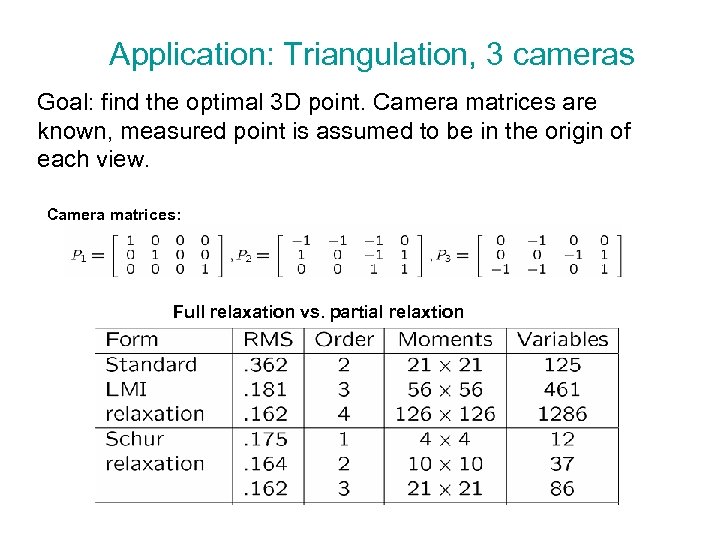

Application: triangulation, 3 cameras

Goal: find the optimal 3D point. Camera matrices are known; the measured point is assumed to be at the origin of each view. Camera matrices: Full relaxation vs. partial relaxation

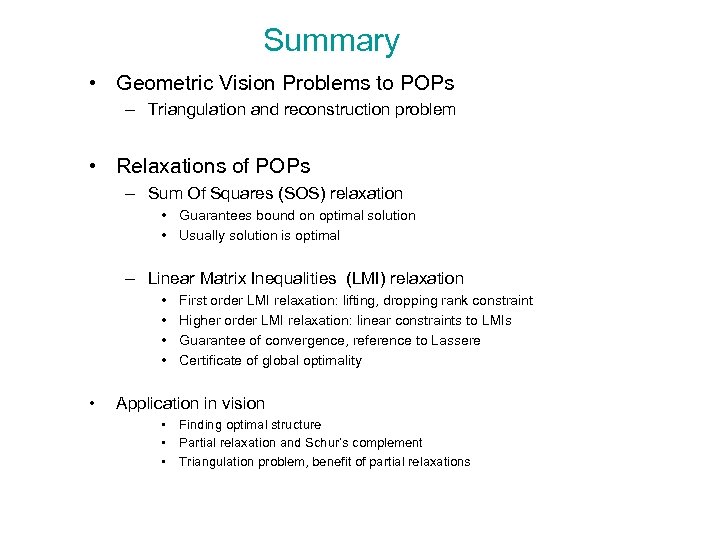

Summary

- Geometric vision problems to POPs
  - Triangulation and reconstruction problem
- Relaxations of POPs
  - Sum Of Squares (SOS) relaxation
    - Guarantees a bound on the optimal solution
    - Usually the solution is optimal
  - Linear Matrix Inequalities (LMI) relaxation
    - First order LMI relaxation: lifting, dropping the rank constraint
    - Higher order LMI relaxation: linear constraints to LMIs
    - Guarantee of convergence, reference to Lasserre
    - Certificate of global optimality
- Application in vision
  - Finding optimal structure
  - Partial relaxation and Schur’s complement
  - Triangulation problem, benefit of partial relaxations

References

- F. Kahl and D. Henrion. Globally Optimal Estimates for Geometric Reconstruction Problems. Accepted IJCV.
- H. Waki, S. Kim, M. Kojima, and M. Muramatsu. Sums of squares and semidefinite programming relaxations for polynomial optimization problems with structured sparsity. SIAM J. Optimization, 17(1): 218-242, 2006.
- J. B. Lasserre. Global optimization with polynomials and the problem of moments. SIAM J. Optimization, 11: 796-817, 2001.
- S. Boyd and L. Vandenberghe. Convex Optimization. Cambridge University Press, 2004.
- R. I. Hartley and A. Zisserman. Multiple View Geometry in Computer Vision. Cambridge University Press, 2004. Second Edition.
