8c607fbda032b499acc0c866e68a9e77.ppt

- Number of slides: 41

Global Virtual Organizations for Data Intensive Science: Creating a Sustainable Cycle of Innovation

Harvey B. Newman, Caltech

WSIS Pan-European Regional Ministerial Conference, Bucharest, November 7-9, 2002

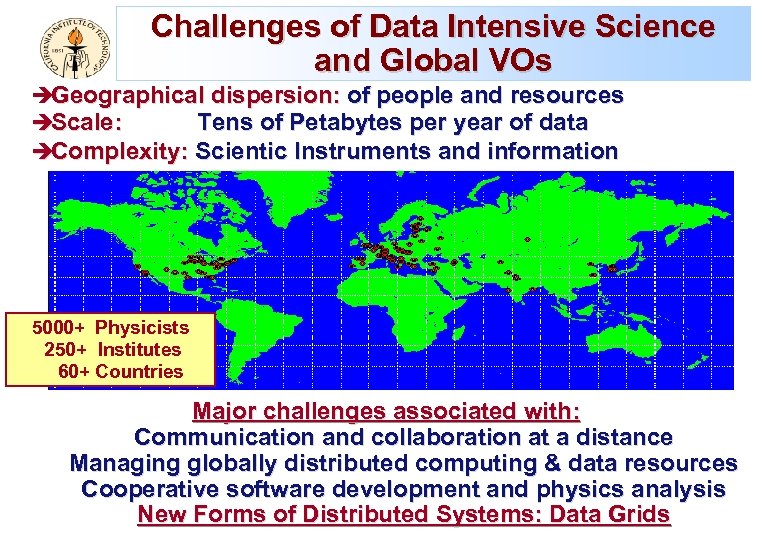

Challenges of Data Intensive Science and Global VOs

- Geographical dispersion: of people and resources
- Scale: Tens of Petabytes per year of data
- Complexity: Scientific Instruments and information

5000+ Physicists, 250+ Institutes, 60+ Countries

Major challenges associated with:

- Communication and collaboration at a distance
- Managing globally distributed computing & data resources
- Cooperative software development and physics analysis

New Forms of Distributed Systems: Data Grids

Emerging Data Grid User Communities

- Grid Physics Projects (GriPhyN/iVDGL/EDG): ATLAS, CMS, LIGO, SDSS; BaBar/D0/CDF
- NSF Network for Earthquake Engineering Simulation (NEES): Integrated instrumentation, collaboration, simulation
- Access Grid; VRVS: supporting new modes of group-based collaboration
- And Genomics, Proteomics, ...
- The Earth System Grid and EOSDIS
- Federating Brain Data
- Computed MicroTomography
- …
- Virtual Observatories

Grids are Having a Global Impact on Research in Science & Engineering

Global Networks for HENP and Data Intensive Science

- National and International Networks with sufficient capacity and capability are essential today for:
  - The daily conduct of collaborative work in both experiment and theory
  - Data analysis by physicists from all world regions
  - The conception, design and implementation of next generation facilities, as "global (Grid) networks"
- "Collaborations on this scale would never have been attempted, if they could not rely on excellent networks" (L. Price, ANL)
- Grids Require Seamless Network Systems with Known, High Performance


High Speed Bulk Throughput: BaBar Example [and LHC]

- Driven by:
  - HENP data rates, e.g. BaBar ~500 TB/year; Data rate from experiment >20 MBytes/s [5-75 Times More at LHC]
  - Grid of Multiple regional computer centers (e.g. Lyon-FR, RAL-UK, INFN-IT; CA: LBNL, LLNL, Caltech) that need copies of data

[Figure: Data volume compared with Moore's law]

- Need high-speed networks and the ability to utilize them fully
  - High speed Today = 1 TB/day (~100 Mbps Full Time)
  - Develop 10-100 TB/day Capability (Several Gbps Full Time) within the next 1-2 years

Data Volumes More than Doubling Each Yr; Driving Grid, Network Needs
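
The "1 TB/day (~100 Mbps Full Time)" equivalence is plain unit arithmetic. A minimal sketch, assuming decimal units (1 TB = 10^12 bytes, 1 Mbps = 10^6 bits/s) and a link kept busy 24 hours a day:

```python
SECONDS_PER_DAY = 24 * 3600


def tb_per_day_to_mbps(tb_per_day: float) -> float:
    """Sustained rate in Mbps needed to move `tb_per_day` terabytes every day.

    Assumes decimal units and a link that is busy full time.
    """
    bits_per_day = tb_per_day * 1e12 * 8
    return bits_per_day / SECONDS_PER_DAY / 1e6


for volume in (1, 10, 100):
    print(f"{volume:>3} TB/day -> {tb_per_day_to_mbps(volume):8.1f} Mbps")
```

This gives about 93 Mbps for 1 TB/day and roughly 0.9 to 9.3 Gbps for the 10-100 TB/day target, consistent with "Several Gbps Full Time"; protocol overhead and less than full-time use raise the real requirement.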

HENP Major Links: Bandwidth Roadmap (Scenario) in Gbps

Continuing the Trend: ~1000 Times Bandwidth Growth Per Decade; We are Rapidly Learning to Use and Share Multi-Gbps Networks

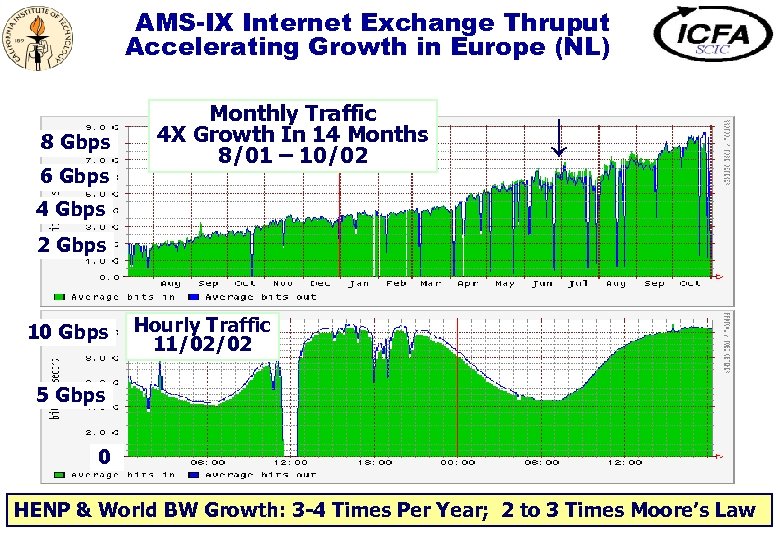

AMS-IX Internet Exchange Throughput: Accelerating Growth in Europe (NL)

[Figure: AMS-IX monthly traffic, 8/01 to 10/02, showing 4X growth in 14 months (scale to 8 Gbps); hourly traffic on 11/02/02 (scale to 10 Gbps)]

HENP & World BW Growth: 3-4 Times Per Year; 2 to 3 Times Moore's Law
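
Growth figures quoted over different periods (4X in 14 months here, ~1000X per decade on the roadmap slide) are easier to compare once annualized. A small sketch; the 18-month doubling used for Moore's law is an assumption, not a figure from the slides:

```python
def annual_factor(total_growth: float, months: float) -> float:
    """Equivalent per-year growth factor for `total_growth` observed over `months`."""
    return total_growth ** (12.0 / months)


ams_ix = annual_factor(4.0, 14)          # AMS-IX: 4X growth in 14 months (8/01 to 10/02)
per_decade = annual_factor(1000.0, 120)  # roadmap trend: ~1000X per decade
moore = annual_factor(2.0, 18)           # assumption: Moore's law as doubling every 18 months

print(f"AMS-IX:       {ams_ix:.2f}x per year")
print(f"1000x/decade: {per_decade:.2f}x per year")
print(f"Moore's law:  {moore:.2f}x per year")
```

The AMS-IX figure annualizes to about 3.3X per year, inside the 3-4X range quoted above, while ~1000X per decade corresponds to about 2X per year.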

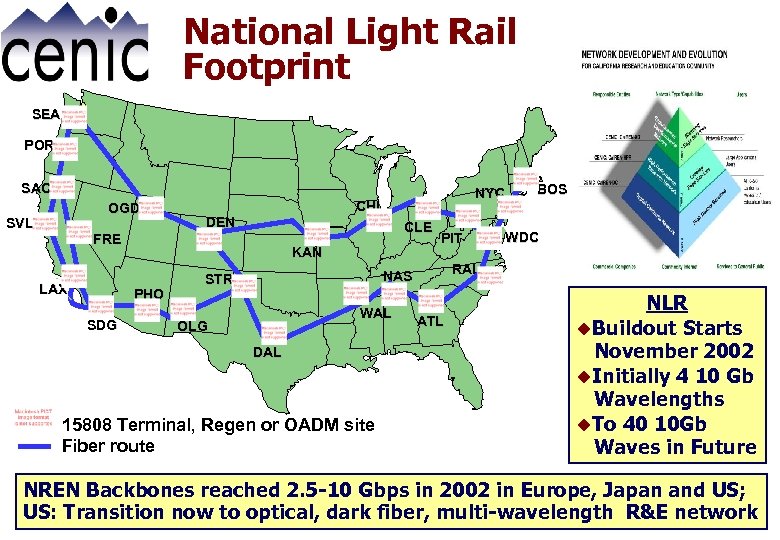

National Light Rail Footprint

[Map: NLR fiber routes and terminal, regen or OADM sites (15808); nodes SEA, POR, SAC, OGD, SVL, FRE, LAX, SDG, PHO, DEN, KAN, DAL, OLG, CHI, CLE, PIT, NYC, BOS, WAL, WDC, RAL, NAS, ATL, STR]

- NLR Buildout Starts November 2002
- Initially 4 10 Gb Wavelengths
- To 40 10 Gb Waves in Future

NREN Backbones reached 2.5-10 Gbps in 2002 in Europe, Japan and US; US: Transition now to optical, dark fiber, multi-wavelength R&E network

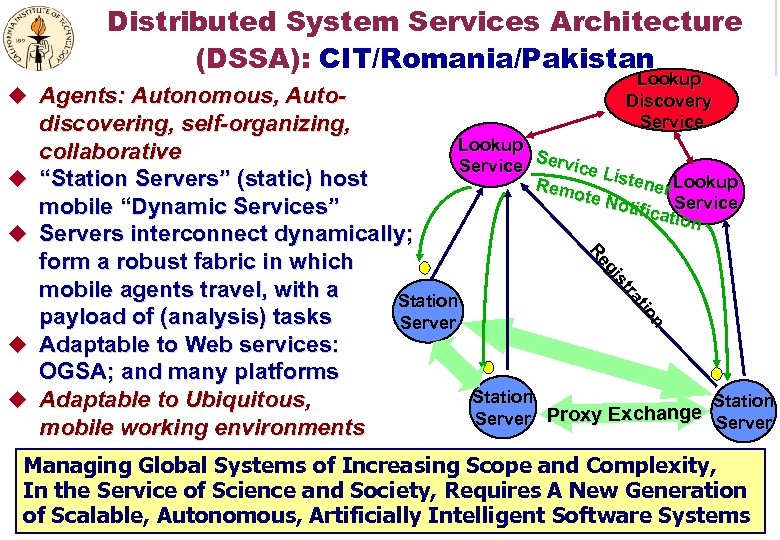

Distributed System Services Architecture (DSSA): CIT/Romania/Pakistan

[Diagram: Station Servers register with Lookup Services (Lookup Discovery Service), which send Remote Notification to Listeners; Station Servers interconnect through a Proxy Exchange]

- Agents: Autonomous, Auto-discovering, self-organizing, collaborative
- "Station Servers" (static) host mobile "Dynamic Services"
- Servers interconnect dynamically; form a robust fabric in which mobile agents travel, with a payload of (analysis) tasks
- Adaptable to Web services: OGSA; and many platforms
- Adaptable to Ubiquitous, mobile working environments

Managing Global Systems of Increasing Scope and Complexity, In the Service of Science and Society, Requires A New Generation of Scalable, Autonomous, Artificially Intelligent Software Systems
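
The registration, lookup and remote-notification pattern in the DSSA diagram can be illustrated with a small in-process registry. This is a hypothetical sketch: the class, method and station names are invented for illustration and are not the DSSA, Jini or OGSA APIs, and a real system would do this over the network with leases and failure handling.

```python
from typing import Callable, Dict, List

Listener = Callable[[str, str], None]  # called as listener(station, service_type)


class LookupService:
    """Hypothetical registry where station servers advertise the services they host."""

    def __init__(self) -> None:
        self._services: Dict[str, List[str]] = {}  # service type -> hosting stations
        self._listeners: List[Listener] = []

    def add_listener(self, listener: Listener) -> None:
        """Subscribe to notifications about newly registered services."""
        self._listeners.append(listener)

    def register(self, station: str, service_type: str) -> None:
        """Record that `station` hosts `service_type`, then notify every listener."""
        self._services.setdefault(service_type, []).append(station)
        for listener in self._listeners:
            listener(station, service_type)

    def lookup(self, service_type: str) -> List[str]:
        """Return the stations currently advertising `service_type`."""
        return list(self._services.get(service_type, []))


# Usage: a client subscribes, two hypothetical stations register, then the client looks up.
events: List[str] = []
registry = LookupService()
registry.add_listener(lambda station, svc: events.append(f"{svc}@{station}"))
registry.register("station-caltech", "analysis")
registry.register("station-bucharest", "analysis")
print(registry.lookup("analysis"))  # ['station-caltech', 'station-bucharest']
print(events)                       # ['analysis@station-caltech', 'analysis@station-bucharest']
```

Notification on registration is what lets clients react to newly appearing services instead of repeatedly polling the registry.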

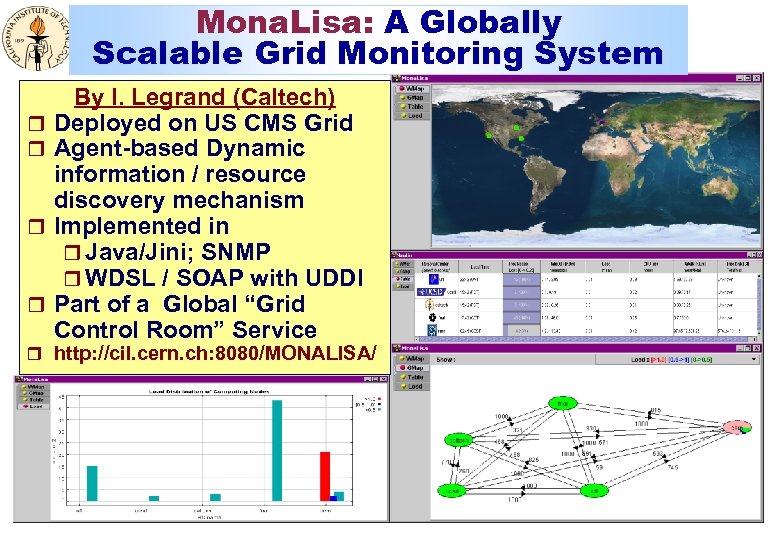

MonaLisa: A Globally Scalable Grid Monitoring System, by I. Legrand (Caltech)

- Deployed on US CMS Grid
- Agent-based Dynamic information / resource discovery mechanism
- Implemented in Java/Jini; SNMP
- WSDL / SOAP with UDDI
- Part of a Global "Grid Control Room" Service

http://cil.cern.ch:8080/MONALISA/

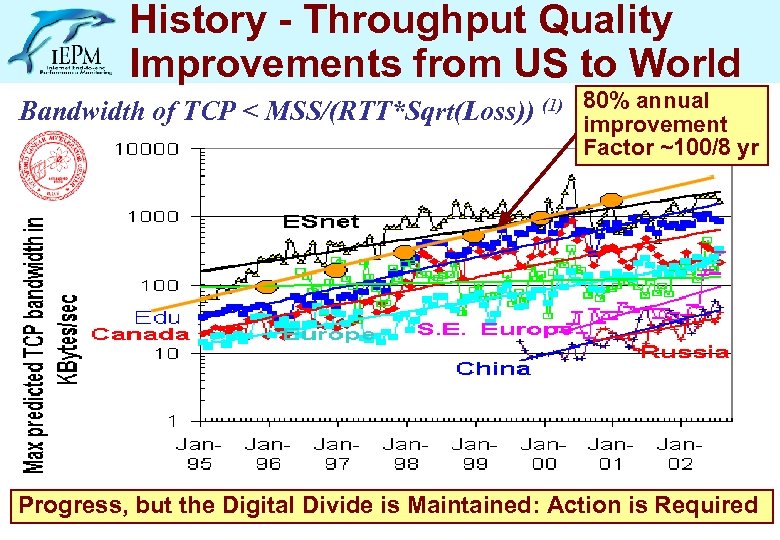

History: Throughput Quality Improvements from US to World

Bandwidth of TCP (1):

$$\mathrm{BW}_{\mathrm{TCP}} < \frac{\mathrm{MSS}}{\mathrm{RTT}\,\sqrt{\mathrm{Loss}}}$$

- 80% annual improvement: Factor ~100/8 yr
- Progress, but the Digital Divide is Maintained: Action is Required
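
The bound can be evaluated directly. A sketch using the bound exactly as written on the slide (the commonly cited Mathis et al. form multiplies it by a constant of order 1, about sqrt(3/2), which the slide omits); the MSS, RTT and loss values are illustrative assumptions, not measurements from the slide:

```python
import math


def tcp_throughput_bound(mss_bytes: float, rtt_s: float, loss: float) -> float:
    """Upper bound (bits/s) on single-stream TCP throughput: MSS / (RTT * sqrt(Loss))."""
    return mss_bytes * 8 / (rtt_s * math.sqrt(loss))


# Illustrative inputs (assumptions): 1460-byte MSS, 150 ms transatlantic RTT, 0.01% loss.
bound = tcp_throughput_bound(1460, 0.150, 1e-4)
print(f"{bound / 1e6:.1f} Mbps")

# Because the bound scales as 1/sqrt(Loss), cutting loss 100X raises it only 10X.
ratio = tcp_throughput_bound(1460, 0.150, 1e-6) / bound
print(f"{ratio:.1f}x")

# The slide's trend: 80% annual improvement compounded over 8 years.
print(f"{1.8 ** 8:.0f}x over 8 years")
```

With these inputs a single stream is limited to under 8 Mbps, and 80% per year compounds to about 110X in 8 years, matching "Factor ~100/8 yr".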

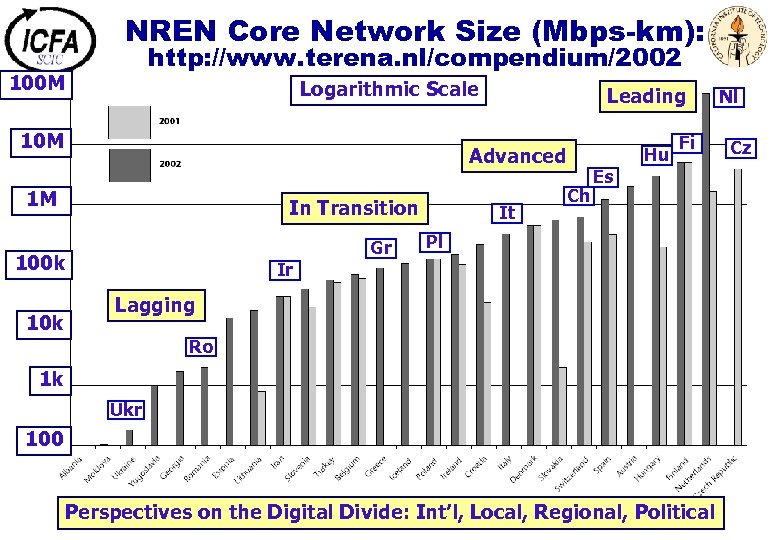

NREN Core Network Size (Mbps-km): http://www.terena.nl/compendium/2002

[Chart, logarithmic scale from 100 to 100M Mbps-km: NRENs grouped as Leading, Advanced, In Transition and Lagging; countries labeled Nl, Cz, Gr, It, Hu, Ch, Fi, Es, Pl, Ir, Ro, Ukr]

Perspectives on the Digital Divide: Int'l, Local, Regional, Political

Building Petascale Global Grids: Implications for Society

- Meeting the challenges of Petabyte-to-Exabyte Grids, and Gigabit-to-Terabit Networks, will transform research in science and engineering
- These developments could create the first truly global virtual organizations (GVO)
- If these developments are successful, and deployed widely as standards, this could lead to profound advances in industry, commerce and society at large
  - By changing the relationship between people and "persistent" information in their daily lives
  - Within the next five to ten years
- Realizing the benefits of these developments for society, and creating a sustainable cycle of innovation, compels us
  - TO CLOSE the DIGITAL DIVIDE

Recommendations

- To realize the Vision of Global Grids, governments, international institutions and funding agencies should:
  - Define IT international policies (for instance AAA)
  - Support establishment of international standards
  - Provide adequate funding to continue R&D in Grid and Network technologies
  - Deploy international production Grid and Advanced Network testbeds on a global scale
  - Support education and training in Grid & Network technologies for new communities of users
  - Create open policies, and encourage joint development programs, to help Close the Digital Divide
- The WSIS RO meeting, starting today, is an important step in the right direction

Some Extra Slides Follow

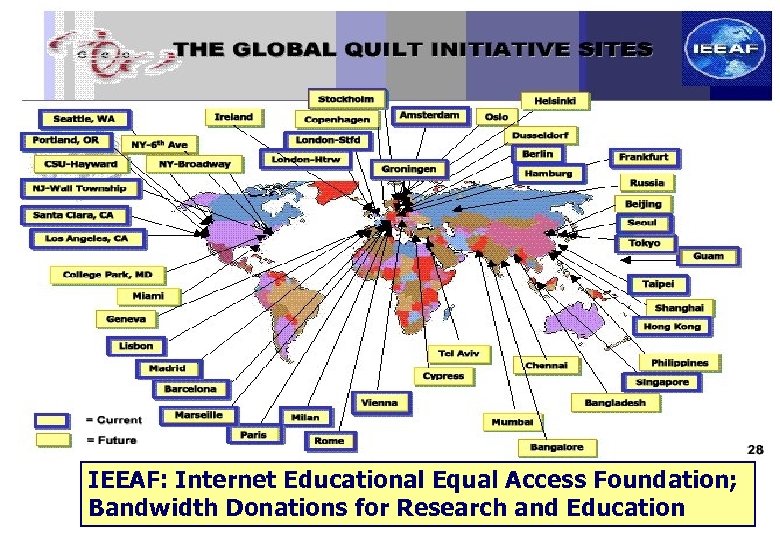

IEEAF: Internet Educational Equal Access Foundation; Bandwidth Donations for Research and Education

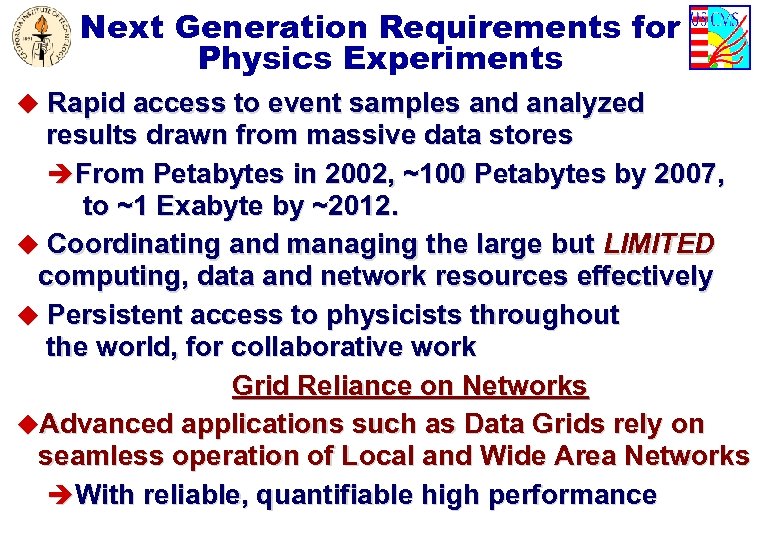

Next Generation Requirements for Physics Experiments

- Rapid access to event samples and analyzed results drawn from massive data stores
  - From Petabytes in 2002, ~100 Petabytes by 2007, to ~1 Exabyte by ~2012
- Coordinating and managing the large but LIMITED computing, data and network resources effectively
- Persistent access to physicists throughout the world, for collaborative work

Grid Reliance on Networks

- Advanced applications such as Data Grids rely on seamless operation of Local and Wide Area Networks
  - With reliable, quantifiable high performance

Networks, Grids and HENP

- Grids are changing the way we do science and engineering
- Next generation 10 Gbps network backbones are here: in the US, Europe and Japan; across oceans
  - Optical Nets with many 10 Gbps wavelengths will follow
- Removing regional and last mile bottlenecks, and compromises in network quality, are now all on the critical path
- Network improvements are especially needed in SE Europe, So. America, and many other regions:
  - Romania; India, Pakistan, China; Brazil, Chile; Africa
- Realizing the promise of Network & Grid technologies means:
  - Building a new generation of high performance network tools; artificially intelligent scalable software systems
  - Strong regional and inter-regional funding initiatives to support these ground breaking developments

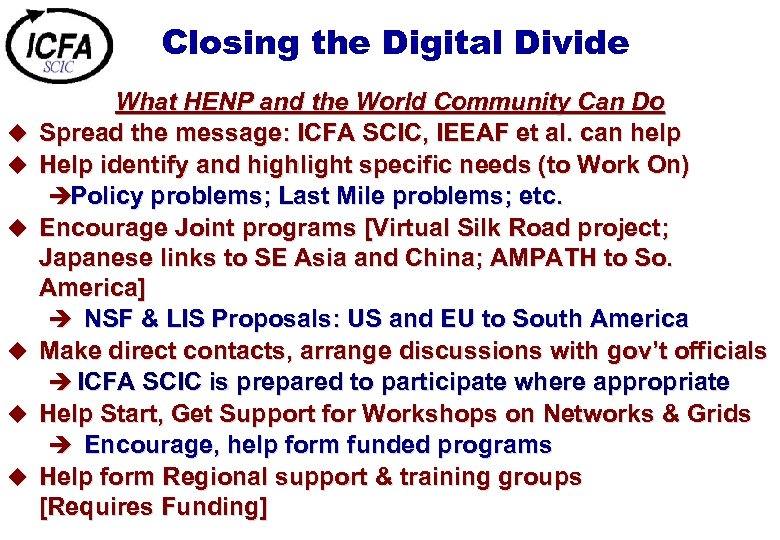

Closing the Digital Divide: What HENP and the World Community Can Do

- Spread the message: ICFA SCIC, IEEAF et al. can help
- Help identify and highlight specific needs (to Work On)
  - Policy problems; Last Mile problems; etc.
- Encourage Joint programs [Virtual Silk Road project; Japanese links to SE Asia and China; AMPATH to So. America]
  - NSF & LIS Proposals: US and EU to South America
- Make direct contacts, arrange discussions with gov't officials
  - ICFA SCIC is prepared to participate where appropriate
- Help Start, Get Support for Workshops on Networks & Grids
  - Encourage, help form funded programs
- Help form Regional support & training groups [Requires Funding]

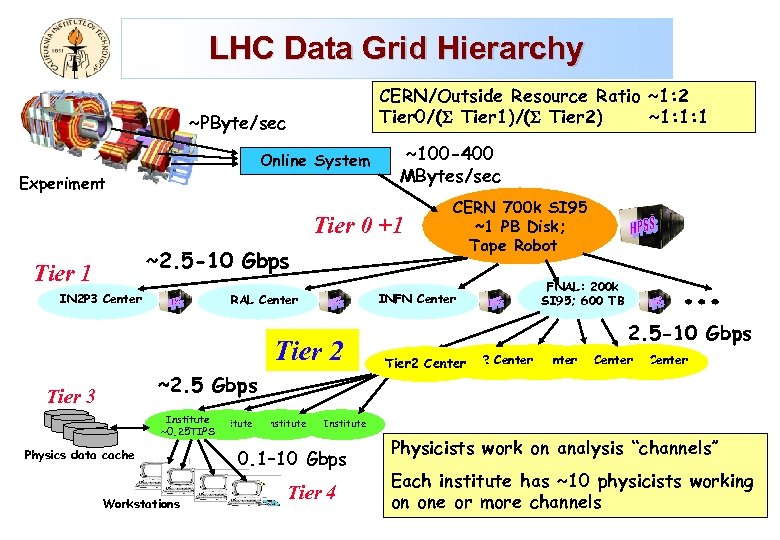

LHC Data Grid Hierarchy

CERN/Outside Resource Ratio ~1:2; Tier 0/(Σ Tier 1)/(Σ Tier 2) ~1:1:1

- Experiment → Online System: ~PByte/sec
- Online System → Tier 0+1 (CERN: 700k SI95; ~1 PB Disk; Tape Robot): ~100-400 MBytes/sec
- Tier 0+1 → Tier 1 (IN2P3 Center, INFN Center, RAL Center; FNAL: 200k SI95, 600 TB): ~2.5-10 Gbps
- Tier 1 → Tier 2 (Tier 2 Centers): 2.5-10 Gbps
- Tier 2 → Tier 3 (Institutes, ~0.25 TIPS, Physics data cache): ~2.5 Gbps
- Tier 3 → Tier 4 (Workstations): 0.1-10 Gbps

Physicists work on analysis "channels"; each institute has ~10 physicists working on one or more channels

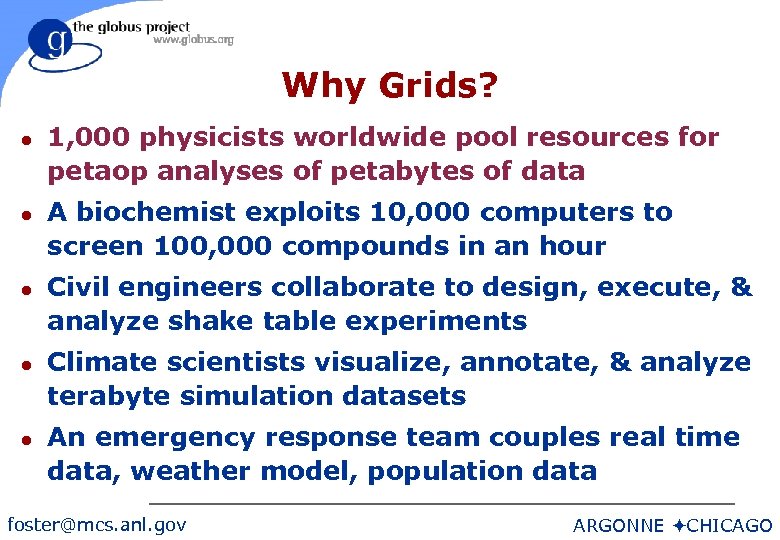

Why Grids?

- 1,000 physicists worldwide pool resources for petaop analyses of petabytes of data
- A biochemist exploits 10,000 computers to screen 100,000 compounds in an hour
- Civil engineers collaborate to design, execute, & analyze shake table experiments
- Climate scientists visualize, annotate, & analyze terabyte simulation datasets
- An emergency response team couples real time data, weather model, population data

foster@mcs.anl.gov, ARGONNE CHICAGO

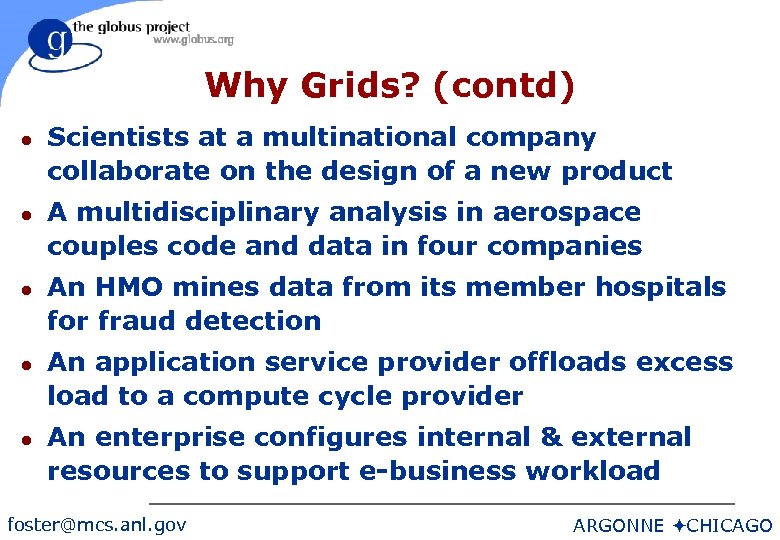

Why Grids? (contd)

- Scientists at a multinational company collaborate on the design of a new product
- A multidisciplinary analysis in aerospace couples code and data in four companies
- An HMO mines data from its member hospitals for fraud detection
- An application service provider offloads excess load to a compute cycle provider
- An enterprise configures internal & external resources to support e-business workload

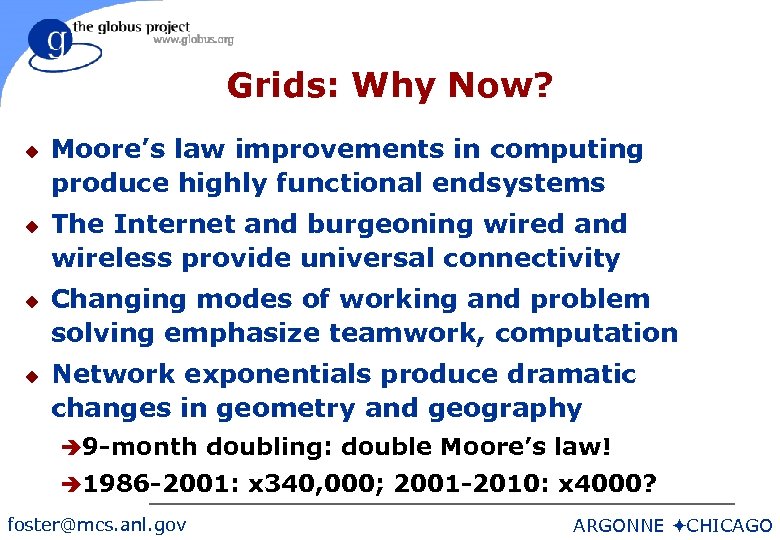

Grids: Why Now?

- Moore's law improvements in computing produce highly functional endsystems
- The Internet and burgeoning wired and wireless provide universal connectivity
- Changing modes of working and problem solving emphasize teamwork, computation
- Network exponentials produce dramatic changes in geometry and geography
  - 9-month doubling: double Moore's law!
  - 1986-2001: x340,000; 2001-2010: x4000?
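
The "x4000?" projection for 2001-2010 follows from the 9-month doubling time: nine years is twelve doublings. A small check; the 18-month doubling used for Moore's law is an assumption for comparison:

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Total growth after `years` if capacity doubles every `doubling_months` months."""
    return 2 ** (years * 12 / doubling_months)


network = growth_factor(9, 9)  # 2001-2010 with the slide's 9-month doubling
moore = growth_factor(9, 18)   # same period, assuming an 18-month Moore's-law doubling
print(network, moore)          # 4096.0 64.0
```

Over the same nine years the network gains a further factor of 64 relative to end-system computing, which is what "double Moore's law" in doubling rate implies.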

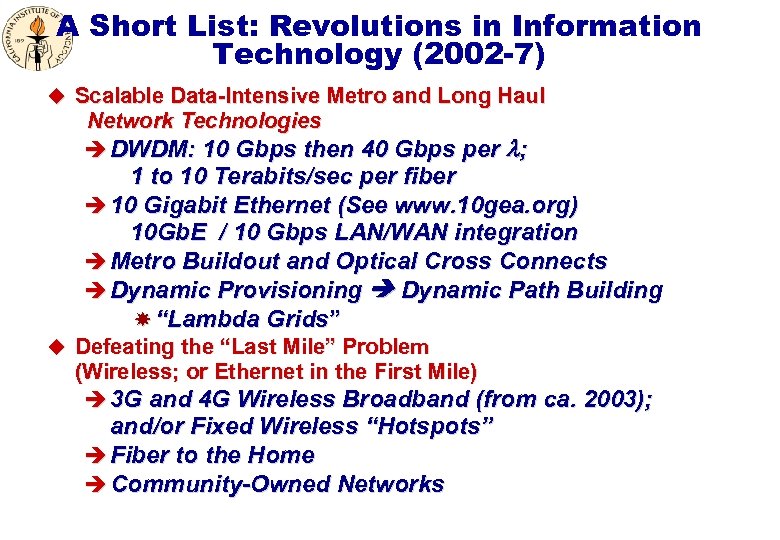

A Short List: Revolutions in Information Technology (2002-7)

- Scalable Data-Intensive Metro and Long Haul Network Technologies
  - DWDM: 10 Gbps then 40 Gbps per λ; 1 to 10 Terabits/sec per fiber
  - 10 Gigabit Ethernet (See www.10gea.org); 10 GbE / 10 Gbps LAN/WAN integration
  - Metro Buildout and Optical Cross Connects
  - Dynamic Provisioning; Dynamic Path Building; "Lambda Grids"
- Defeating the "Last Mile" Problem (Wireless; or Ethernet in the First Mile)
  - 3G and 4G Wireless Broadband (from ca. 2003); and/or Fixed Wireless "Hotspots"
  - Fiber to the Home
  - Community-Owned Networks

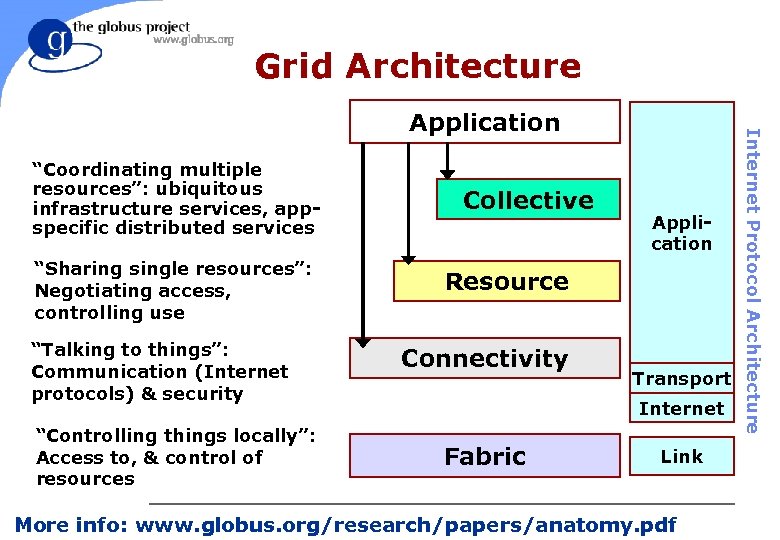

Grid Architecture

- Application
- Collective: "Coordinating multiple resources": ubiquitous infrastructure services, app-specific distributed services
- Resource: "Sharing single resources": Negotiating access, controlling use
- Connectivity: "Talking to things": Communication (Internet protocols) & security
- Fabric: "Controlling things locally": Access to, & control of resources

Internet Protocol Architecture (for comparison): Application, Transport, Internet, Link

More info: www.globus.org/research/papers/anatomy.pdf

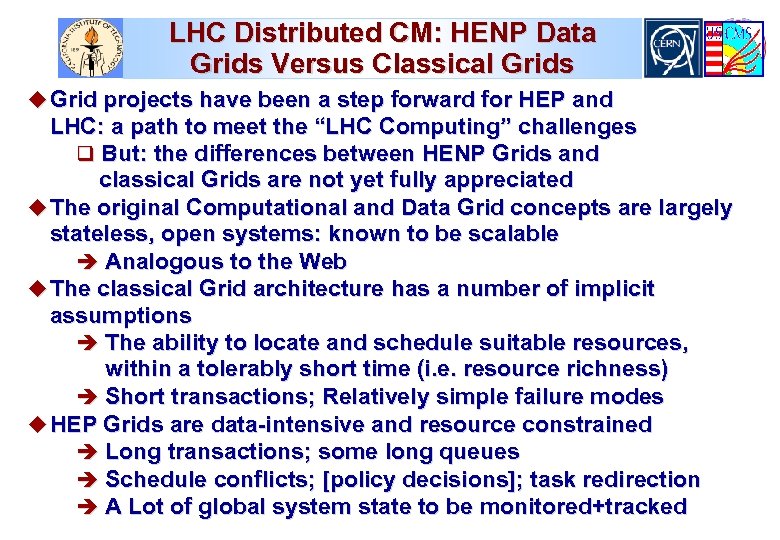

LHC Distributed CM: HENP Data Grids Versus Classical Grids

- Grid projects have been a step forward for HEP and LHC: a path to meet the "LHC Computing" challenges
  - But: the differences between HENP Grids and classical Grids are not yet fully appreciated
- The original Computational and Data Grid concepts are largely stateless, open systems: known to be scalable
  - Analogous to the Web
- The classical Grid architecture has a number of implicit assumptions
  - The ability to locate and schedule suitable resources, within a tolerably short time (i.e. resource richness)
  - Short transactions; Relatively simple failure modes
- HEP Grids are data-intensive and resource constrained
  - Long transactions; some long queues
  - Schedule conflicts; [policy decisions]; task redirection
  - A Lot of global system state to be monitored+tracked

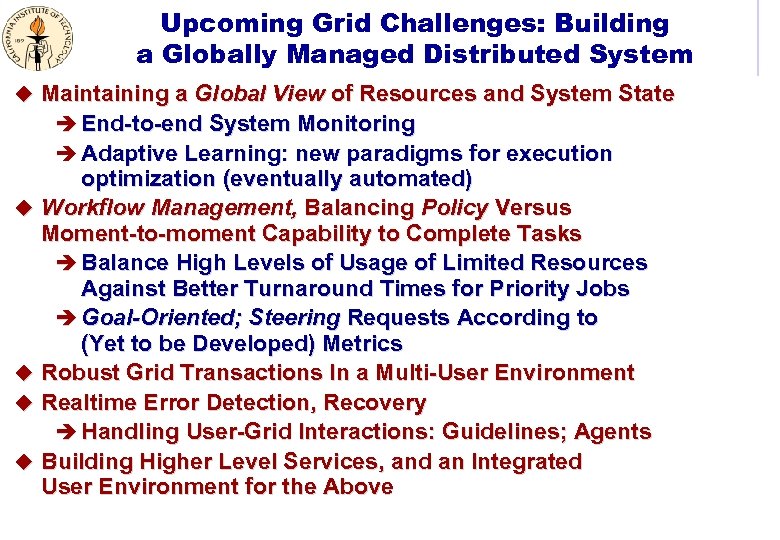

Upcoming Grid Challenges: Building a Globally Managed Distributed System

- Maintaining a Global View of Resources and System State
  - End-to-end System Monitoring
  - Adaptive Learning: new paradigms for execution optimization (eventually automated)
- Workflow Management, Balancing Policy Versus Moment-to-moment Capability to Complete Tasks
  - Balance High Levels of Usage of Limited Resources Against Better Turnaround Times for Priority Jobs
  - Goal-Oriented; Steering Requests According to (Yet to be Developed) Metrics
- Robust Grid Transactions In a Multi-User Environment
- Realtime Error Detection, Recovery
  - Handling User-Grid Interactions: Guidelines; Agents
- Building Higher Level Services, and an Integrated User Environment for the Above

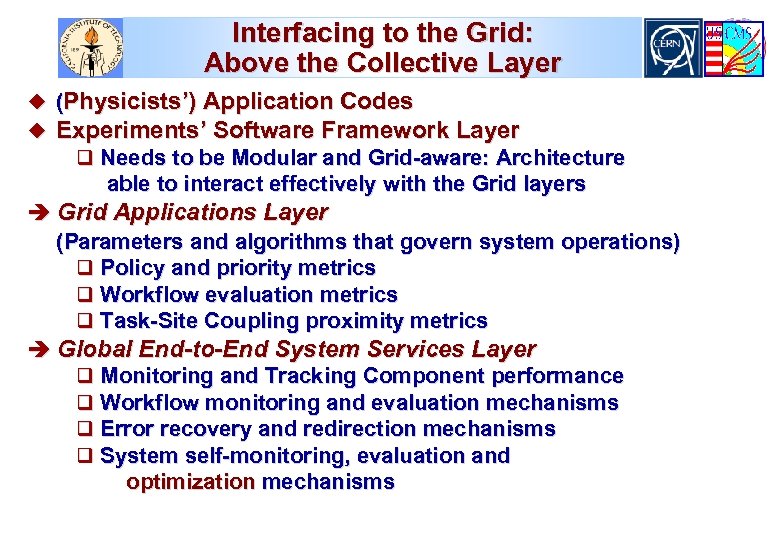

Interfacing to the Grid: Above the Collective Layer

- (Physicists') Application Codes
- Experiments' Software Framework Layer
  - Needs to be Modular and Grid-aware: Architecture able to interact effectively with the Grid layers
- Grid Applications Layer (Parameters and algorithms that govern system operations)
  - Policy and priority metrics
  - Workflow evaluation metrics
  - Task-Site Coupling proximity metrics
- Global End-to-End System Services Layer
  - Monitoring and Tracking Component performance
  - Workflow monitoring and evaluation mechanisms
  - Error recovery and redirection mechanisms
  - System self-monitoring, evaluation and optimization mechanisms

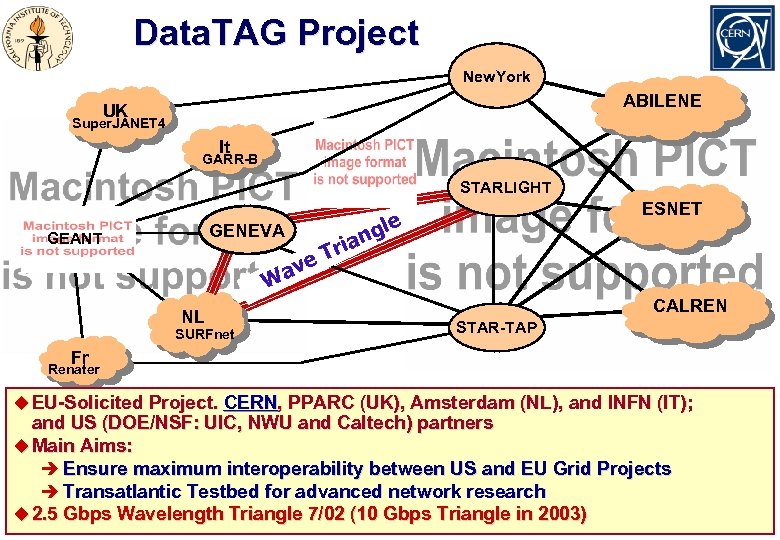

DataTAG Project

[Map: "Wave Triangle"; labels New York, Abilene, UK SuperJANET4, It GARR-B, StarLight, Geneva, GEANT, NL SURFnet, ESnet, CALREN, STAR-TAP, Fr Renater]

- EU-Solicited Project. CERN, PPARC (UK), Amsterdam (NL), and INFN (IT); and US (DOE/NSF: UIC, NWU and Caltech) partners
- Main Aims:
  - Ensure maximum interoperability between US and EU Grid Projects
  - Transatlantic Testbed for advanced network research
- 2.5 Gbps Wavelength Triangle 7/02 (10 Gbps Triangle in 2003)

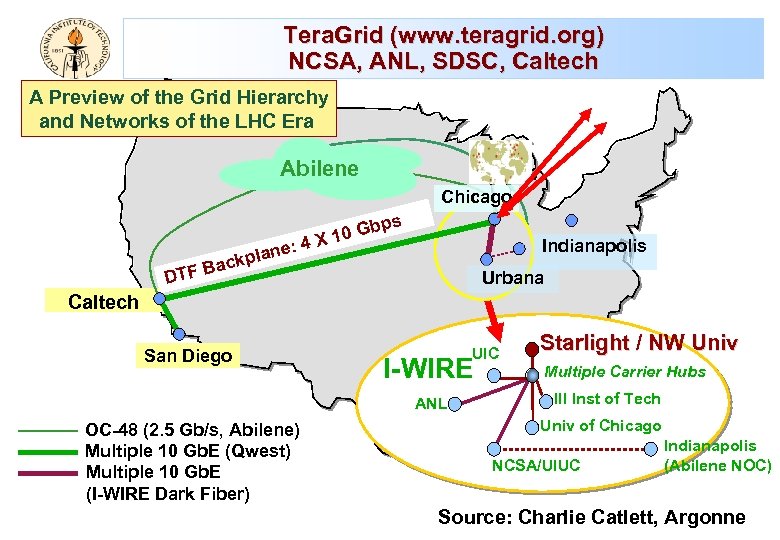

TeraGrid (www.teragrid.org): NCSA, ANL, SDSC, Caltech

A Preview of the Grid Hierarchy and Networks of the LHC Era

[Map: DTF Backplane: 4 X 10 Gbps; sites Caltech, San Diego, Chicago, Indianapolis, Urbana; I-WIRE sites UIC, ANL, Starlight / NW Univ, Multiple Carrier Hubs, Ill Inst of Tech, Univ of Chicago, NCSA/UIUC, Indianapolis (Abilene NOC). Legend: OC-48 (2.5 Gb/s, Abilene); Multiple 10 GbE (Qwest); Multiple 10 GbE (I-WIRE Dark Fiber)]

Source: Charlie Catlett, Argonne

Baseline BW for the US-CERN Link: HENP Transatlantic WG (DOE+NSF)

Transoceanic Networking Integrated with the Abilene, TeraGrid, Regional Nets and Continental Network Infrastructures in US, Europe, Asia, South America

Baseline evolution typical of major HENP links 2001-2006

- DataTAG 2.5 Gbps Research Link in Summer 2002
- 10 Gbps Research Link by Approx. Mid-2003

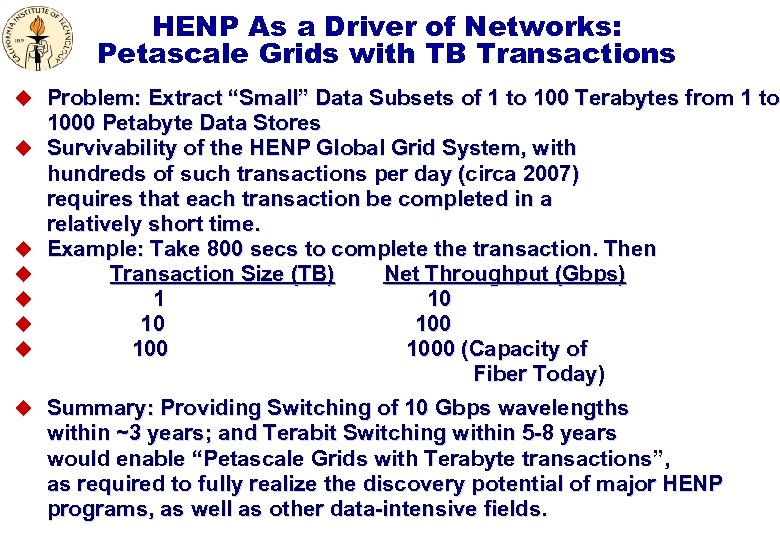

HENP As a Driver of Networks: Petascale Grids with TB Transactions

- Problem: Extract "Small" Data Subsets of 1 to 100 Terabytes from 1 to 1000 Petabyte Data Stores
- Survivability of the HENP Global Grid System, with hundreds of such transactions per day (circa 2007), requires that each transaction be completed in a relatively short time.
- Example: Take 800 secs to complete the transaction. Then:

| Transaction Size (TB) | Net Throughput (Gbps) |
|---|---|
| 1 | 10 |
| 10 | 100 |
| 100 | 1000 (Capacity of Fiber Today) |

- Summary: Providing Switching of 10 Gbps wavelengths within ~3 years, and Terabit Switching within 5-8 years, would enable "Petascale Grids with Terabyte transactions", as required to fully realize the discovery potential of major HENP programs, as well as other data-intensive fields.
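
Each row of the throughput table is transaction size × 8 bits / 800 s. A sketch reproducing it, assuming decimal terabytes (1 TB = 10^12 bytes) and ignoring protocol overhead:

```python
def required_gbps(size_tb: float, seconds: float = 800.0) -> float:
    """Net throughput (Gbps) needed to complete a `size_tb` transaction in `seconds`.

    Assumes decimal units and no protocol overhead.
    """
    return size_tb * 1e12 * 8 / seconds / 1e9


for size in (1, 10, 100):
    print(f"{size:>4} TB -> {required_gbps(size):6.0f} Gbps")
```

Halving the target time to 400 s doubles every entry.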

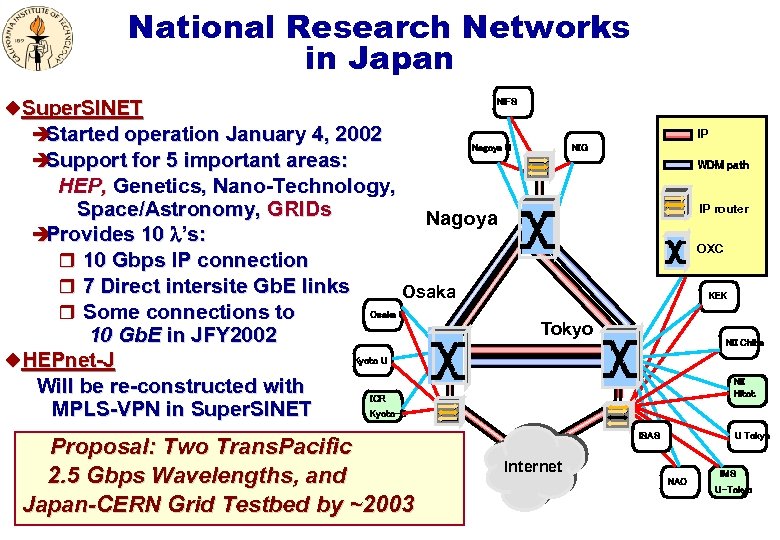

National Research Networks in Japan

- SuperSINET
  - Started operation January 4, 2002
  - Support for 5 important areas: HEP, Genetics, Nano-Technology, Space/Astronomy, GRIDs
  - Provides 10 λ's:
    - 10 Gbps IP connection
    - 7 Direct intersite GbE links
    - Some connections to 10 GbE in JFY2002
- HEPnet-J
  - Will be re-constructed with MPLS-VPN in SuperSINET
- Proposal: Two TransPacific 2.5 Gbps Wavelengths, and Japan-CERN Grid Testbed by ~2003

[Map: SuperSINET sites NIFS, Nagoya U, Osaka U, Kyoto U, ICR Kyoto-U, NIG, Tohoku U, KEK, NII Chiba, NII Hitot., ISAS, U Tokyo, NAO, IMS U-Tokyo, and the Internet; legend: IP, WDM path, IP router, OXC]

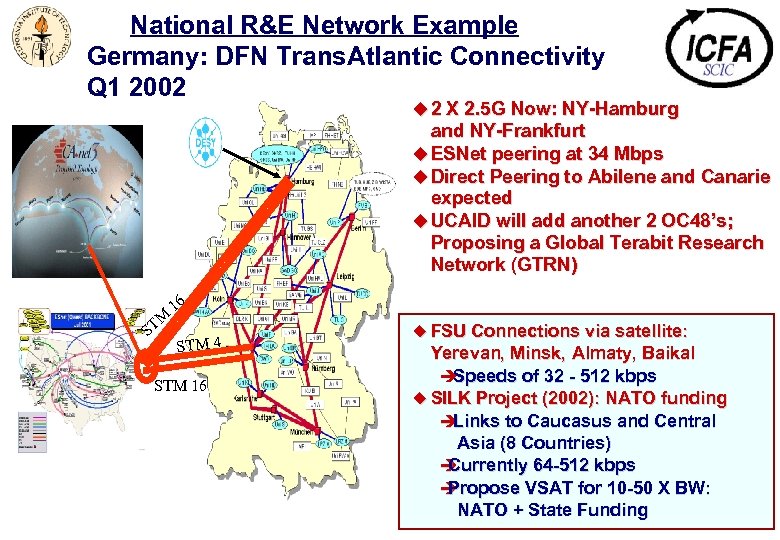

National R&E Network Example: Germany: DFN TransAtlantic Connectivity Q1 2002

- 2 X 2.5G Now: NY-Hamburg and NY-Frankfurt
- ESNet peering at 34 Mbps
- Direct Peering to Abilene and Canarie expected
- UCAID will add another 2 OC48's; Proposing a Global Terabit Research Network (GTRN)
- FSU Connections via satellite: Yerevan, Minsk, Almaty, Baikal
  - Speeds of 32-512 kbps
- SILK Project (2002): NATO funding
  - Links to Caucasus and Central Asia (8 Countries)
  - Currently 64-512 kbps
  - Propose VSAT for 10-50 X BW: NATO + State Funding

[Map: links labeled STM 16, STM 4, STM 16]

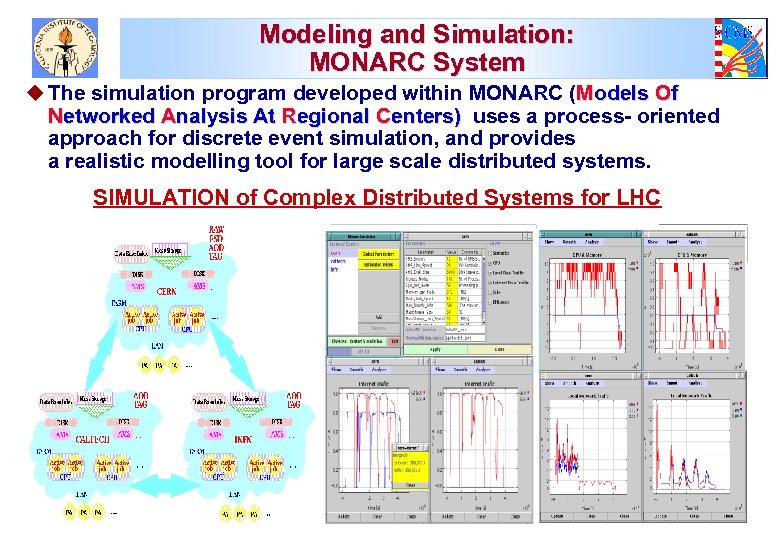

Modeling and Simulation: MONARC System

- The simulation program developed within MONARC (Models Of Networked Analysis At Regional Centers) uses a process-oriented approach for discrete event simulation, and provides a realistic modelling tool for large scale distributed systems.

SIMULATION of Complex Distributed Systems for LHC
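
In a process-oriented discrete event simulation, each simulated entity (a job, a data transfer) is written as a sequential process that pauses for simulated time, while a scheduler advances a global clock through a time-ordered event queue. A minimal sketch using Python generators as processes; it is not MONARC's code, the job names are hypothetical, and it omits the shared CPU, disk and network resources a realistic model would need:

```python
import heapq
import itertools


class Simulator:
    """Minimal process-oriented discrete event simulator.

    Each process is a generator that yields the simulated delay until it should resume.
    """

    def __init__(self) -> None:
        self.now = 0.0
        self._queue = []                # heap of (time, sequence, process)
        self._seq = itertools.count()   # tie-breaker so generators are never compared

    def start(self, process) -> None:
        heapq.heappush(self._queue, (self.now, next(self._seq), process))

    def run(self) -> None:
        while self._queue:
            self.now, _, process = heapq.heappop(self._queue)
            try:
                delay = next(process)   # resume the process until its next wait
            except StopIteration:
                continue                # the process has finished
            heapq.heappush(self._queue, (self.now + delay, next(self._seq), process))


def job(sim: Simulator, name: str, cpu_time: float, log: list):
    """Hypothetical analysis job: holds for its CPU time, then records completion."""
    yield cpu_time
    log.append((name, sim.now))


sim = Simulator()
log = []
sim.start(job(sim, "job-A", 5.0, log))
sim.start(job(sim, "job-B", 2.0, log))
sim.run()
print(log)  # [('job-B', 2.0), ('job-A', 5.0)]
```

The sequence counter keeps events with equal timestamps in FIFO order and avoids comparing generator objects inside the heap.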

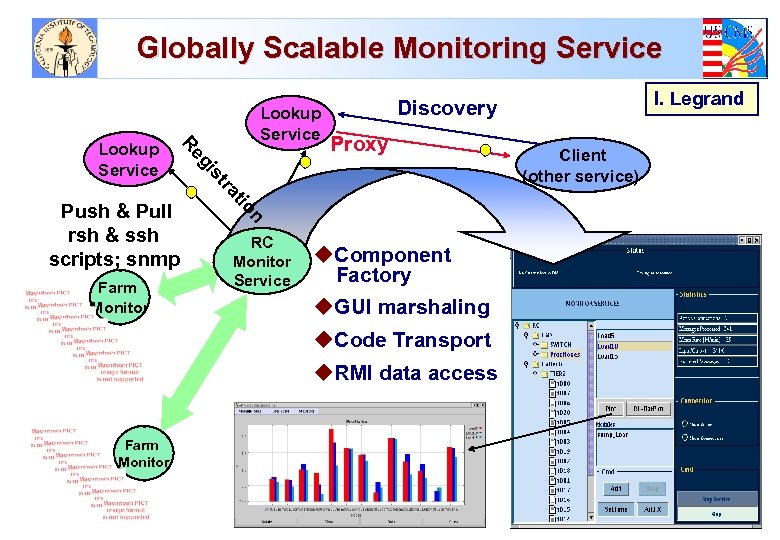

Globally Scalable Monitoring Service (I. Legrand)

[Diagram: Farm Monitors, collecting via Push & Pull (rsh & ssh scripts; snmp), report to an RC Monitor Service; services use Discovery and Registration with a Lookup Service; a Proxy serves Clients (other services)]

- Component Factory
- GUI marshaling
- Code Transport
- RMI data access
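
The push-and-pull collection in the diagram can be sketched as follows. This is a hypothetical, single-process illustration: the class, method, farm and metric names are invented here, and the polling callable stands in for the rsh/ssh scripts or SNMP queries named on the slide; it is not MonaLisa's actual interface.

```python
from typing import Callable, Dict, List, Tuple

Sample = Tuple[str, str, float]  # (farm, metric, value)


class RegionalMonitorService:
    """Hypothetical regional-centre monitor that accepts pushed samples and polls farms."""

    def __init__(self) -> None:
        self.samples: List[Sample] = []
        self._pollers: Dict[str, Callable[[], Dict[str, float]]] = {}

    def push(self, farm: str, metric: str, value: float) -> None:
        """Push mode: a farm monitor sends a measurement as soon as it has one."""
        self.samples.append((farm, metric, value))

    def add_poller(self, farm: str, poll: Callable[[], Dict[str, float]]) -> None:
        """Pull mode: register a callable that the service invokes on each polling cycle."""
        self._pollers[farm] = poll

    def poll_all(self) -> None:
        """Run one polling cycle over every registered farm."""
        for farm, poll in self._pollers.items():
            for metric, value in poll().items():
                self.samples.append((farm, metric, value))

    def latest(self, farm: str, metric: str) -> float:
        """Most recent value recorded for (farm, metric); raises KeyError if none."""
        for f, m, v in reversed(self.samples):
            if f == farm and m == metric:
                return v
        raise KeyError((farm, metric))


# Usage: farm-A pushes its load; farm-B is polled.
service = RegionalMonitorService()
service.push("farm-A", "cpu_load", 0.7)
service.add_poller("farm-B", lambda: {"cpu_load": 0.4, "jobs_running": 12.0})
service.poll_all()
print(service.samples)
```

Push suits farms that can report on their own schedule; pull lets the service collect from farms that only expose a query interface.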

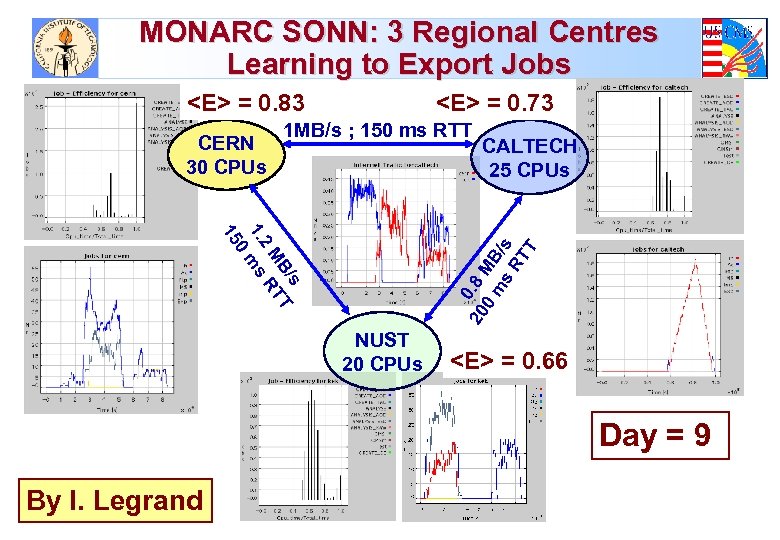

MONARC SONN: 3 Regional Centres Learning to Export Jobs (Day = 9), by I. Legrand

[Diagram: regional centres CERN (30 CPUs), CALTECH (25 CPUs) and NUST (20 CPUs); mean efficiencies shown: <E> = 0.83, 0.73, 0.66; inter-centre links of 1 MB/s with 150 ms RTT, 1.2 MB/s with 150 ms RTT, and 0.8 MB/s with 200 ms RTT]

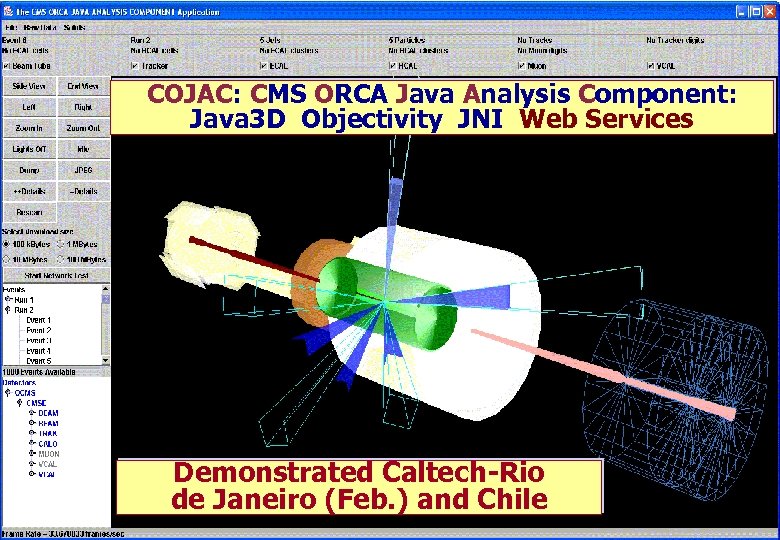

COJAC: CMS ORCA Java Analysis Component: Java3D, Objectivity, JNI, Web Services

Demonstrated Caltech-Rio de Janeiro (Feb.) and Chile


Internet2 HENP WG [*]

- Mission: To help ensure that the required national and international network infrastructures (end-to-end), standardized tools and facilities for high performance and end-to-end monitoring and tracking [Gridftp; bbcp…], and collaborative systems are developed and deployed in a timely manner, and used effectively to meet the needs of the US LHC and other major HENP Programs, as well as the at-large scientific community.
  - To carry out these developments in a way that is broadly applicable across many fields
- Formed an Internet2 WG as a suitable framework: October 2001
- [*] Co-Chairs: S. McKee (Michigan), H. Newman (Caltech); Sec'y J. Williams (Indiana)
- Website: http://www.internet2.edu/henp; also see the Internet2 End-to-end Initiative: http://www.internet2.edu/e2e

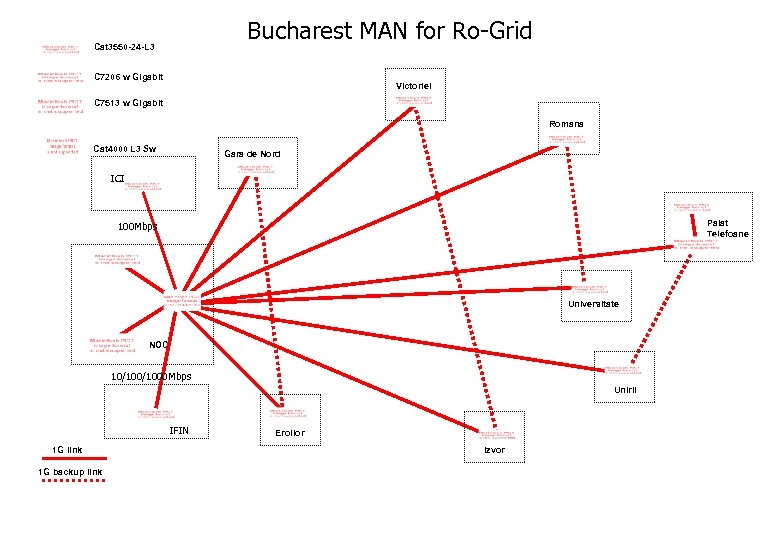

Bucharest MAN for Ro-Grid

[Diagram: nodes Victoriei, Romana, Gara de Nord, ICI, Palat Telefoane, Universitate, NOC, Unirii, IFIN, Eroilor, Izvor; equipment Cat 3550-24-L3, C7206 with Gigabit, C7513 with Gigabit, Cat 4000 L3 Sw; links 100 Mbps, 10/1000 Mbps, 1G link, 1G backup link]

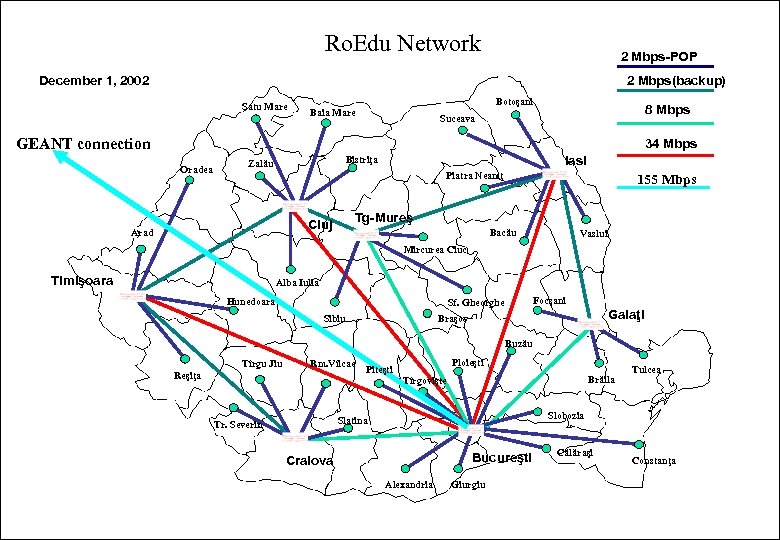

RoEdu Network, December 1, 2002

[Map: POP links at 2 Mbps (plus 2 Mbps backup), 8 Mbps, 34 Mbps and 155 Mbps; GEANT connection; cities: Satu Mare, Botoşani, Baia Mare, Suceava, Oradea, Iasi, Bistriţa, Zalău, Piatra Neamţ, Tg-Mureş, Cluj, Arad, Bacău, Vaslui, Miercurea Ciuc, Timişoara, Alba Iulia, Hunedoara, Focşani, Sf. Gheorghe, Sibiu, Galaţi, Braşov, Buzău, Tîrgu Jiu, Rm. Vîlcea, Reşiţa, Ploieşti, Piteşti, Brăila, Tîrgovişte, Slobozia, Slatina, Tr. Severin, Tulcea, Bucureşti, Craiova, Alexandria, Giurgiu, Călăraşi, Constanţa]
