- Number of slides: 34

Generation

Aims of this talk

- Discuss MRS and LKB generation
- Describe larger research programme: modular generation
- Mention some interactions with other work in progress:
  - RMRS
  - SEM-I

Outline of talk

- Towards modular generation
- Why MRS?
- MRS and chart generation
- Data-driven techniques
- SEM-I and documentation

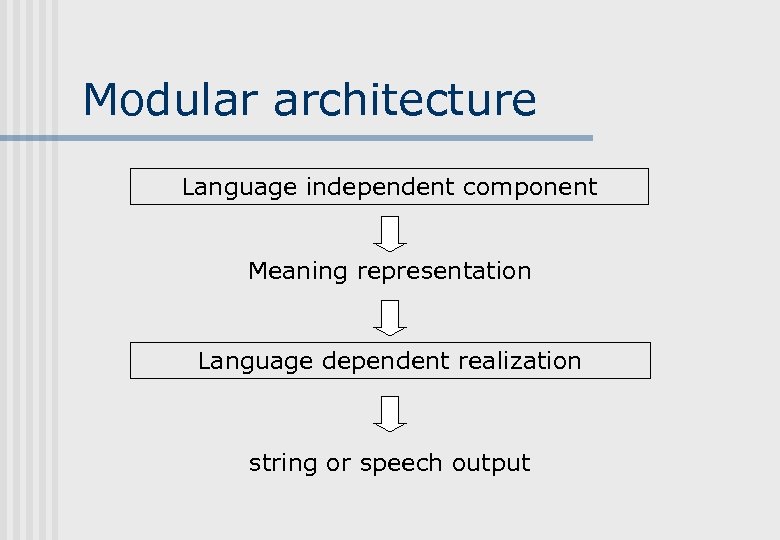

Modular architecture

- Language-independent component
- Meaning representation
- Language-dependent realization
- String or speech output

Desiderata for a portable realization module

- Application independent
- Any well-formed input should be accepted
- No grammar-specific/conventional information should be essential in the input
- Output should be idiomatic

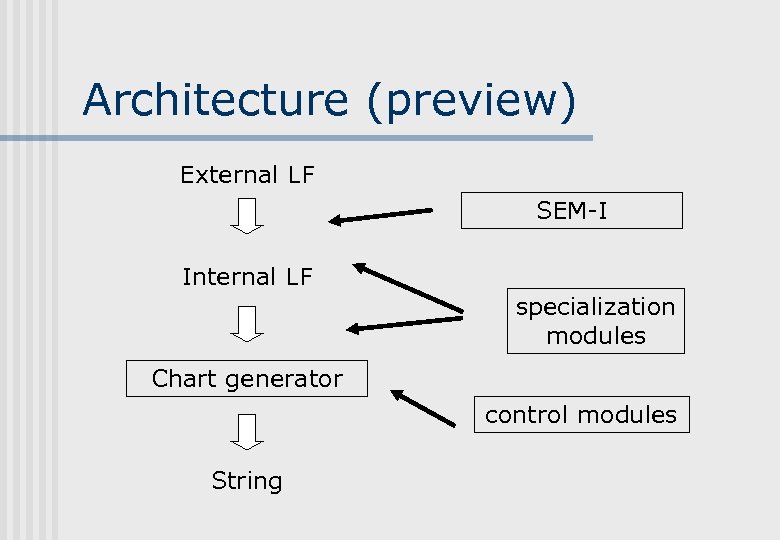

Architecture (preview)

- External LF
- SEM-I
- Internal LF
- Specialization modules
- Chart generator
- Control modules
- String

Why MRS?

- Flat structures
  - independence of syntax: conventional LFs partially mirror tree structure
  - manipulation of individual components: can ignore scope structure etc.
  - lexicalised generation
  - composition by accumulation of EPs: robust composition
- Underspecification

An excursion: Robust MRS

- Deep Thought: integration of deep and shallow processing via compatible semantics
- All components construct RMRSs
- Principled way of building robustness into deep processing
- Requirements for consistency etc. help human users too

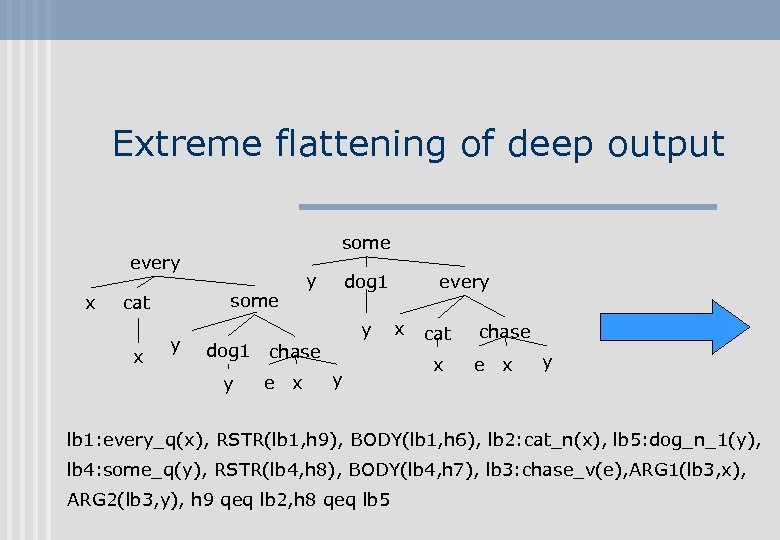

Extreme flattening of deep output

(Figure: the scoped readings drawn as trees over every x, cat x, some y, dog1 y, chase e x y.)

lb1:every_q(x), RSTR(lb1,h9), BODY(lb1,h6), lb2:cat_n(x), lb5:dog_n_1(y), lb4:some_q(y), RSTR(lb4,h8), BODY(lb4,h7), lb3:chase_v(e), ARG1(lb3,x), ARG2(lb3,y), h9 qeq lb2, h8 qeq lb5
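
The flattening on this slide can be illustrated with a minimal sketch. It assumes a hypothetical `EP` record (label, predicate, characteristic variable, remaining arguments) and a hypothetical `flatten` helper; neither is part of the LKB or any RMRS toolkit. The point is only that each argument becomes its own fact, tied back to the predicate by the label.

```python
from typing import NamedTuple

class EP(NamedTuple):
    """Hypothetical record for one elementary predication."""
    label: str   # e.g. "lb1"
    pred: str    # e.g. "every_q"
    var: str     # characteristic variable, e.g. "x"
    args: dict   # remaining arguments, e.g. {"RSTR": "h9"}

def flatten(eps):
    """Split each EP into one predicate fact plus one fact per argument."""
    facts = []
    for ep in eps:
        facts.append(f"{ep.label}:{ep.pred}({ep.var})")
        for role, value in ep.args.items():
            facts.append(f"{role}({ep.label},{value})")
    return facts

# EPs taken from the slide's example (qeq constraints omitted).
mrs = [
    EP("lb1", "every_q", "x", {"RSTR": "h9", "BODY": "h6"}),
    EP("lb2", "cat_n", "x", {}),
    EP("lb5", "dog_n_1", "y", {}),
    EP("lb4", "some_q", "y", {"RSTR": "h8", "BODY": "h7"}),
    EP("lb3", "chase_v", "e", {"ARG1": "x", "ARG2": "y"}),
]
facts = flatten(mrs)
```

A shallow component that only knows `lb3:chase_v(e)` can emit that single fact and omit the `ARG1`/`ARG2` facts, which is the sense of "only represent what you know".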

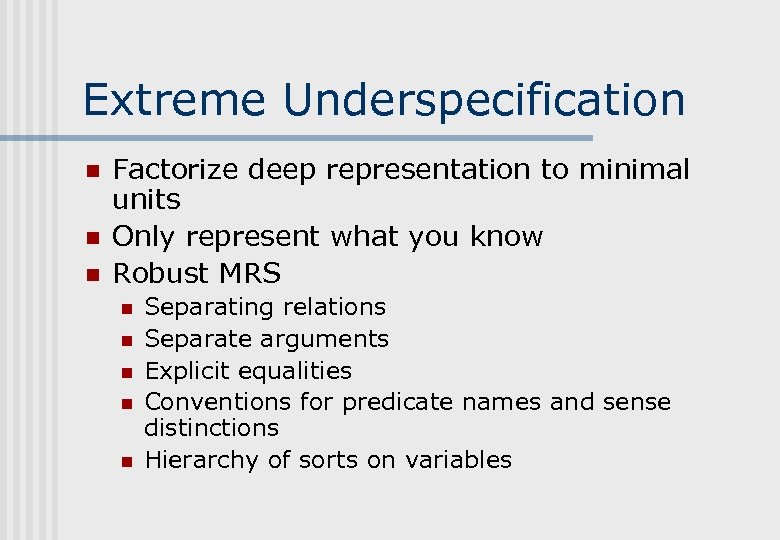

Extreme Underspecification

- Factorize deep representation to minimal units
- Only represent what you know
- Robust MRS
  - Separating relations
  - Separate arguments
  - Explicit equalities
  - Conventions for predicate names and sense distinctions
  - Hierarchy of sorts on variables

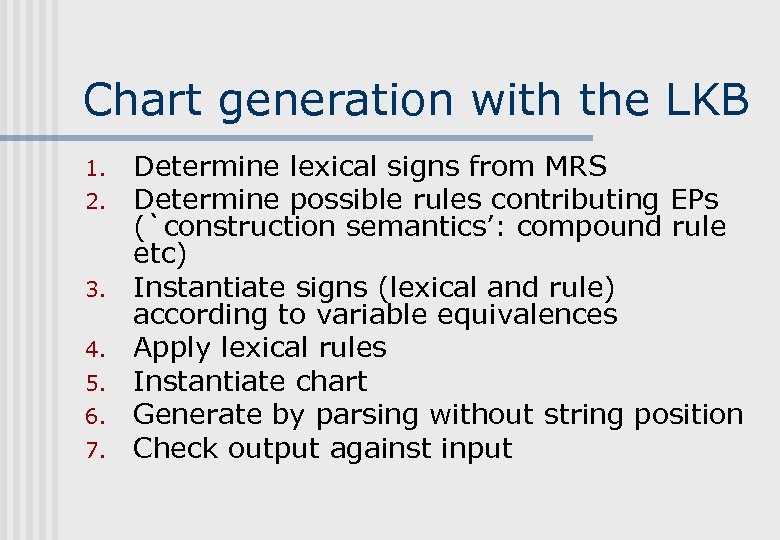

Chart generation with the LKB

1. Determine lexical signs from MRS
2. Determine possible rules contributing EPs ('construction semantics': compound rule etc.)
3. Instantiate signs (lexical and rule) according to variable equivalences
4. Apply lexical rules
5. Instantiate chart
6. Generate by parsing without string position
7. Check output against input

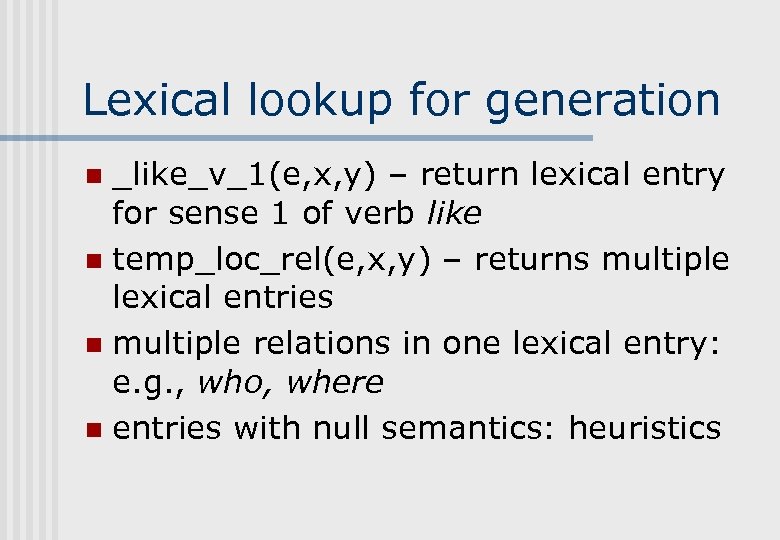

Lexical lookup for generation

- _like_v_1(e, x, y) – returns lexical entry for sense 1 of verb like
- temp_loc_rel(e, x, y) – returns multiple lexical entries
- multiple relations in one lexical entry: e.g., who, where
- entries with null semantics: heuristics
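
A minimal sketch of lookup by predicate, under stated assumptions: the lexicon is a plain list of (entry name, relations) pairs, and all entry names and the relations given for "who" are invented for illustration. It is not the LKB's lookup code. An entry with several relations is returned only if all of them are in the input, and null-semantics entries are returned separately because the input cannot select them.

```python
# Hypothetical toy lexicon: (entry name, relations the entry contributes).
LEXICON = [
    ("like_v1", ["_like_v_1"]),
    ("in_temp", ["temp_loc_rel"]),
    ("on_temp", ["temp_loc_rel"]),
    ("at_temp", ["temp_loc_rel"]),
    ("who", ["person_rel", "which_q_rel"]),   # relation names are assumptions
    ("that_comp", []),                        # null semantics
]

def lookup(input_preds, lexicon=LEXICON):
    """Return (entries licensed by the input, null-semantics entries)."""
    preds = set(input_preds)
    found = [name for name, rels in lexicon if rels and set(rels) <= preds]
    null_sem = [name for name, rels in lexicon if not rels]
    return found, null_sem

found, null_sem = lookup(["_like_v_1", "temp_loc_rel"])
```

Here `temp_loc_rel` brings in all three temporal prepositions, and `that_comp` is a candidate regardless of the input, which is why the slide mentions heuristics for null-semantics entries.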

Instantiation of entries

- _like_v_1(e, x, y) & named(x, "Kim") & named(y, "Sandy")
  - find locations corresponding to 'x's in all FSs
  - replace all 'x's with constant
  - repeat for 'y's etc.
- Also for rules contributing construction semantics
- 'Skolemization' (misleading name...)
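
The replacement step can be sketched as follows, assuming feature structures are represented as nested dicts with variables as string values. The helpers `skolemize` and `instantiate`, the constant names `sk_1` etc., and the feature names are illustrative assumptions, not the LKB's data structures.

```python
def skolemize(variables):
    """Map each MRS variable to a unique constant (names are arbitrary)."""
    return {v: f"sk_{i}" for i, v in enumerate(variables, start=1)}

def instantiate(fs, binding):
    """Return a copy of a nested feature structure with variables replaced."""
    if isinstance(fs, dict):
        return {k: instantiate(v, binding) for k, v in fs.items()}
    if isinstance(fs, list):
        return [instantiate(v, binding) for v in fs]
    return binding.get(fs, fs)   # leave non-variable atoms unchanged

binding = skolemize(["e", "x", "y"])
like = {"PRED": "_like_v_1", "ARG0": "e", "ARG1": "x", "ARG2": "y"}
kim = {"PRED": "named", "ARG0": "x", "CARG": "Kim"}
like_inst = instantiate(like, binding)
kim_inst = instantiate(kim, binding)
```

After instantiation the subject slot of the verb and the index of "Kim" share the constant `sk_2`, so a later unification can only succeed when the right noun phrase fills the right argument position.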

Lexical rule application

- Lexical rules that contribute EPs only used if EP is in input
- Inflectional rules will only apply if variable has the correct sort
- Lexical rule application does morphological generation (e.g., liked, bought)

Chart generation proper

- Possible lexical signs added to a chart structure
- Currently no indexing of chart edges
  - chart generation can use semantic indices, but current results suggest this doesn't help
- Rules applied as for chart parsing: edges checked for compatibility with input semantics (bag of EPs)
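
The following toy generator is a sketch of the idea only, not the LKB algorithm. It assumes a two-rule grammar (VP -> V NP, S -> NP VP), edges that record which input EPs they account for, and instantiated constants (`c_x`, `c_y`) standing in for unification of indices. The `Edge` type and all names are hypothetical. As on the slide, the chart is searched without any indexing.

```python
from typing import NamedTuple, Optional

class Edge(NamedTuple):
    cat: str                      # "NP", "V", "VP" or "S"
    words: tuple                  # surface string so far
    eps: frozenset                # input EPs this edge accounts for
    index: Optional[str] = None   # constant of an NP
    subj: Optional[str] = None    # constant expected as subject
    obj: Optional[str] = None     # constant expected as object

def combine(left, right):
    """Toy grammar: VP -> V NP and S -> NP VP, with index checks."""
    if left.eps & right.eps:      # an EP may be consumed only once
        return None
    eps, words = left.eps | right.eps, left.words + right.words
    if left.cat == "V" and right.cat == "NP" and right.index == left.obj:
        return Edge("VP", words, eps, subj=left.subj)
    if left.cat == "NP" and right.cat == "VP" and left.index == right.subj:
        return Edge("S", words, eps)
    return None

def generate(lexical_edges, input_eps):
    chart, agenda = list(lexical_edges), list(lexical_edges)
    while agenda:
        new = agenda.pop()
        for old in list(chart):               # no indexing: try every edge
            for a, b in ((new, old), (old, new)):
                edge = combine(a, b)
                if edge and edge.eps <= input_eps and edge not in chart:
                    chart.append(edge)
                    agenda.append(edge)
    # Root condition: a complete structure consumes every input EP.
    return [" ".join(e.words) for e in chart
            if e.cat == "S" and e.eps == input_eps]

LIKE, KIM, SANDY = "_like_v_1(c_e,c_x,c_y)", "named(c_x,Kim)", "named(c_y,Sandy)"
edges = [
    Edge("NP", ("Kim",), frozenset({KIM}), index="c_x"),
    Edge("V", ("likes",), frozenset({LIKE}), subj="c_x", obj="c_y"),
    Edge("NP", ("Sandy",), frozenset({SANDY}), index="c_y"),
]
result = generate(edges, frozenset({LIKE, KIM, SANDY}))
```

Because edges carry no string positions, "Sandy likes Kim" is excluded only by the index checks, which is why instantiation of entries has to happen before the chart is built.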

Root conditions

- Complete structures must consume all the EPs in the input MRS
- Should check for compatibility of scopes
  - precise qeq matching is (probably) too strict
  - exactly same scopes is (probably) unrealistic and too slow

Generation failures due to MRS issues

- Well-formedness check prior to input to generator (optional)
- Lexical lookup failure: predicate doesn't match entry, wrong arity, wrong variable types
- Unwanted instantiations of variables
- Missing EPs in input: syntax (e.g., no noun), lexical selection
- Too many EPs in input: e.g., two verbs and no coordination
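
The three lookup failures named above (unknown predicate, wrong arity, wrong variable type) can be diagnosed before generation with a check like the one sketched here. The table format is an assumption made for illustration: each predicate maps to a string of expected variable sorts, and a variable's sort is taken to be the first letter of its name. This is not the SEM-I file format.

```python
# Hypothetical interface table: predicate -> expected variable sorts.
SEM_I = {
    "_like_v_1": "exx",   # event, two individuals
    "named": "x",
}

def check_eps(eps, sem_i=SEM_I):
    """Return a list of human-readable problems; empty means no failure found."""
    problems = []
    for pred, args in eps:
        sorts = sem_i.get(pred)
        if sorts is None:
            problems.append(f"unknown predicate: {pred}")
        elif len(args) != len(sorts):
            problems.append(
                f"wrong arity: {pred} expects {len(sorts)}, got {len(args)}")
        elif any(arg[0] != sort for arg, sort in zip(args, sorts)):
            problems.append(f"wrong variable sort: {pred}")
    return problems

problems = check_eps([
    ("_like_v_1", ["e2", "x4", "x5"]),   # fine
    ("_love_v_1", ["e3", "x4", "x5"]),   # not in the table
    ("_like_v_1", ["e2", "x4"]),         # one argument missing
    ("named", ["e9"]),                   # event where an individual is expected
])
```

Missing or surplus EPs cannot be detected this way, since they depend on what the grammar can combine rather than on any single predication.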

Improving generation via corpus-based techniques

- CONTROL: e.g., intersective modifier order
  - Logical representation does not determine order
    - wet(x) & weather(x) & cold(x)
- UNDERSPECIFIED INPUT: e.g.,
  - Determiners: none/a/the/
  - Prepositions: in/on/at

Constraining generation for idiomatic output

- Intersective modifier order: e.g., adjectives, prepositional phrases
- Logical representation does not determine order
  - wet(x) & weather(x) & cold(x)

Adjective ordering

- Constraints / preferences
  - big red car
  - *red big car
  - cold wet weather
  - wet cold weather (OK, but dispreferred)
- Difficult to encode in symbolic grammar

Corpus-derived adjective ordering

- ngrams perform poorly
- Thater: direct evidence plus clustering
- positional probability
- Malouf (2000): memory-based learning plus positional probability: 92% on BNC
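
As an illustration of the positional-probability idea only, here is a minimal sketch: for each adjective, estimate how often it comes first in an observed adjective pair, then order a new set of adjectives by that estimate. The pair counts are invented toy data, and this simple baseline is not a reimplementation of any of the systems cited on the slide.

```python
from collections import Counter

def positional_probs(pairs):
    """Estimate P(adjective is first) from observed (first, second) pairs."""
    first, total = Counter(), Counter()
    for a, b in pairs:
        first[a] += 1
        total[a] += 1
        total[b] += 1
    return {adj: first[adj] / total[adj] for adj in total}

def order(adjs, probs):
    """Put adjectives that tend to come first earlier; unseen ones get 0.5."""
    return sorted(adjs, key=lambda a: -probs.get(a, 0.5))

# Invented toy observations, not corpus data.
pairs = [("big", "red"), ("big", "old"), ("old", "red"),
         ("cold", "wet"), ("cold", "wet"), ("wet", "cold")]
probs = positional_probs(pairs)
big_red = order(["red", "big"], probs)
cold_wet = order(["wet", "cold"], probs)
```

The scores also capture the difference between a hard constraint and a preference: "big" versus "red" is categorical in the toy data (1.0 against 0.0), while "cold" versus "wet" is only a tendency (about 0.67 against 0.33).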

Underspecified input to generation

We bought a car on Friday

- Accept: pron(x) & a_quant(y,h1,h2) & car(y) & buy(e_past,x,y) & on(e,z) & named(z,Friday)
- and: pron(x) & general_q(y,h1,h2) & car(y) & buy(e_past,x,y) & temploc(e,z) & named(z,Friday)
- And maybe: pron(x_1pl) & car(y) & buy(e_past,x,y) & temp_loc(e,z) & named(z,Friday)
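
One way to accept the underspecified variants is to expand each general relation into the specific relations it covers and try each resulting input. The sketch below assumes a hypothetical table of children; only `a_quant`, `general_q`, `temp_loc` and `on` appear on the slide, and the other relation names are invented for illustration.

```python
from itertools import product

# Hypothetical hierarchy: underspecified relation -> more specific relations.
CHILDREN = {
    "general_q": ["a_quant", "the_quant"],
    "temp_loc": ["in", "on", "at"],
}

def specializations(preds, children=CHILDREN):
    """Enumerate every fully specified variant of a list of predicate names."""
    options = [children.get(p, [p]) for p in preds]
    return [list(choice) for choice in product(*options)]

variants = specializations(
    ["pron", "general_q", "car", "buy", "temp_loc", "named"])
```

Blind enumeration grows multiplicatively with each underspecified relation, which is one motivation for using statistical modules to pick the likely determiner or preposition instead.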

Guess the determiner

- We went climbing in _ Andes
- _ president of _ United States
- I tore _ pyjamas
- I tore _ duvet
- George doesn't like _ vegetables
- We bought _ new car yesterday

Determining determiners

- Determiners are partly conventionalized, often predictable from local context
- Translation from Japanese etc., speech prosthesis application
- More 'meaning-rich' determiners assumed to be specified in the input
- Minnen et al: 85% on WSJ (using TiMBL)

Preposition guessing

- Choice between temporal in/on/at
  - in the morning
  - in July
  - on Wednesday morning
  - at three o'clock
  - at New Year
- ERG uses hand-coded rules and lexical categories
- Machine learning approach gives very high precision and recall on WSJ, good results on balanced corpus (Lin Mei, 2004, Cambridge MPhil thesis)
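
To make the hand-coded option concrete, here is a toy rule set that covers exactly the five examples on the slide. The category names, both tables and the rule that a day name overrides a part of day are assumptions made for illustration; they are not the ERG's rules or lexical categories.

```python
# Hypothetical lexical categories for temporal expressions.
CATEGORY = {
    "morning": "part_of_day",
    "July": "month",
    "Wednesday": "day",
    "Friday": "day",
    "three o'clock": "clock_time",
    "New Year": "festival",
}
# Hypothetical mapping from category to preposition.
PREP = {"part_of_day": "in", "month": "in", "day": "on",
        "clock_time": "at", "festival": "at"}

def temporal_preposition(words):
    """Pick in/on/at for a temporal NP given as a list of words or phrases."""
    cats = [CATEGORY[w] for w in words if w in CATEGORY]
    if not cats:
        return None          # no known temporal word: no guess
    if "day" in cats:
        return "on"          # a day name wins: "on Wednesday morning"
    return PREP[cats[-1]]    # otherwise use the head (last) temporal word

examples = {
    "morning": temporal_preposition(["the", "morning"]),
    "Wednesday morning": temporal_preposition(["Wednesday", "morning"]),
    "three o'clock": temporal_preposition(["three o'clock"]),
}
```

Even this small case needs an override rule for "Wednesday morning", which hints at why a learned classifier over corpus data is attractive for the general problem.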

SEM-I: semantic interface

- Meta-level: manually specified 'grammar' relations (constructions and closed-class)
- Object-level: linked to lexical database for deep grammars
- Definitional: e.g., lemma+POS+sense
- Linked test suites, examples, documentation

SEM-I development

- SEM-I eventually forms the 'API': stable, changes negotiated.
- SEM-I vs Verbmobil SEMDB
- Technical limitations of SEMDB
- Too painful!
- 'Munging' rules: external vs internal
- SEM-I development must be incremental

Role of SEM-I in architecture

- Offline
  - Definition of 'correct' (R)MRS for developers
  - Documentation
  - Checking of test-suites
- Online
  - In unifier/selector: reject invalid RMRSs
  - Patching up input to generation

![Goal: semi-automated documentation](https://present5.com/presentation/e32cda54cda3c99e2a333e2e69c04444/image-29.jpg)

Goal: semi-automated documentation

(Diagram. Components: [incr tsdb()], Lex DB, ERG, object-level SEM-I, meta-level SEM-I, documentation. Labels: documentation strings and semantic test-suite; auto-generate examples; semi-automatic examples, autogenerated on demand; autogenerate appendix.)

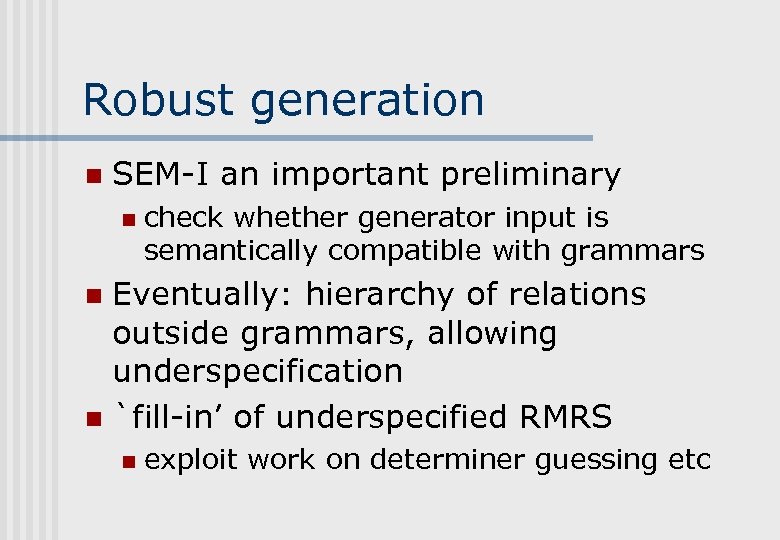

Robust generation

- SEM-I an important preliminary
  - check whether generator input is semantically compatible with grammars
- Eventually: hierarchy of relations outside grammars, allowing underspecification
- 'fill-in' of underspecified RMRS
  - exploit work on determiner guessing etc.

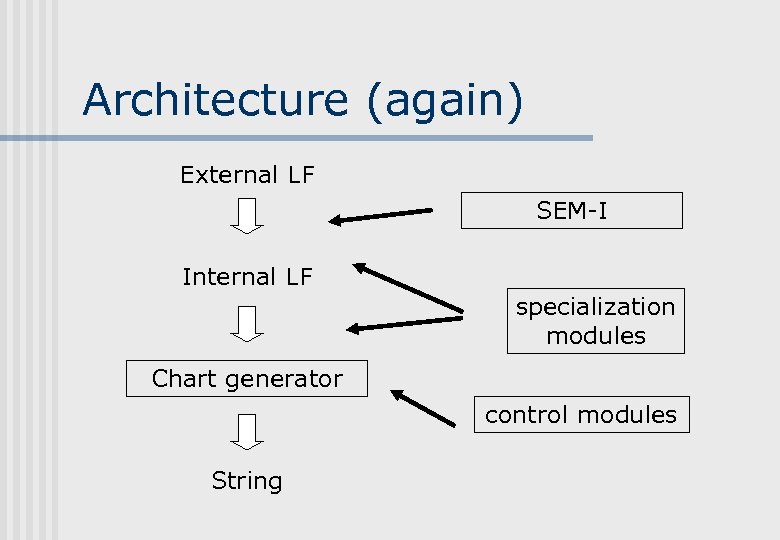

Architecture (again)

- External LF
- SEM-I
- Internal LF
- Specialization modules
- Chart generator
- Control modules
- String

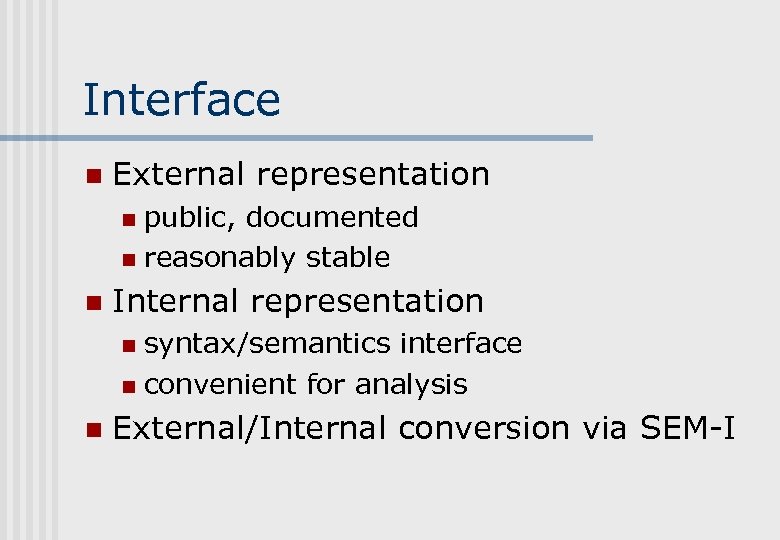

Interface

- External representation
  - public, documented
  - reasonably stable
- Internal representation
  - syntax/semantics interface
  - convenient for analysis
- External/Internal conversion via SEM-I

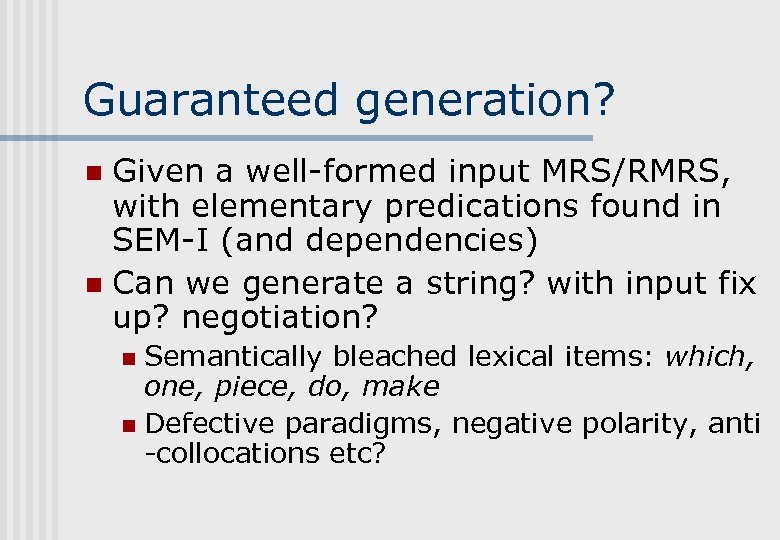

Guaranteed generation?

- Given a well-formed input MRS/RMRS, with elementary predications found in SEM-I (and dependencies)
- Can we generate a string? with input fix up? negotiation?
- Semantically bleached lexical items: which, one, piece, do, make
- Defective paradigms, negative polarity, anti-collocations etc.?

Next stages

- SEM-I development
- Documentation and test suite integration
- Generation from RMRSs produced by shallower parser (or deep/shallow combination)
- Partially fixed text in generation (cogeneration)
- Further statistical modules: e.g., locational prepositions, other modifiers
- More underspecification
- Gradually increase flexibility of interface to generation
