- Number of slides: 34

Fleet Numerical Meteorology and Oceanography Center (FNMOC)
COPC Site Update
November 14, 2007

Captain John G. Kusters, USN
Fleet Numerical Meteorology and Oceanography Center
7 Grace Hopper Ave
Monterey, CA 93943
John.Kusters@navy.mil
(831) 656-4327

Outline

- Operational Models and Applications
- Information Assurance
- Automation Initiatives
- Computer Systems
- Satellite Products
- NPOESS
- MILCON
- Summary

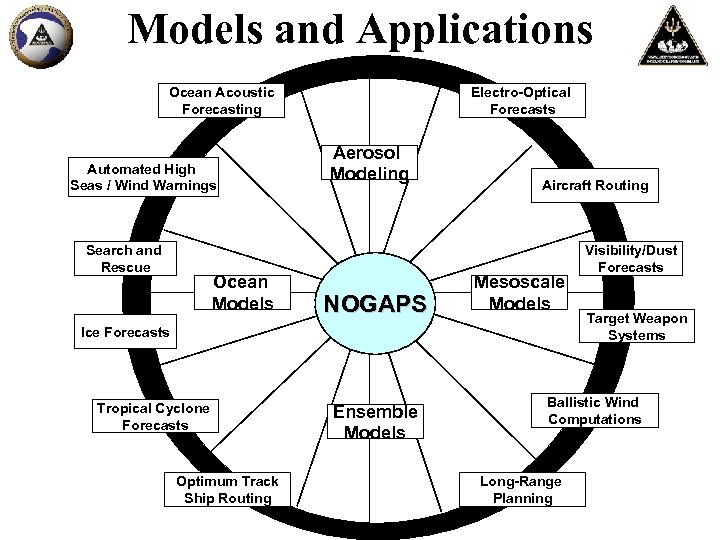

Models and Applications

- Ocean Acoustic Forecasting
- Automated High Seas / Wind Warnings
- Search and Rescue
- Ocean Models
- Electro-Optical Forecasts
- Aerosol Modeling
- NOGAPS
- Aircraft Routing
- Mesoscale Models
- Ice Forecasts
- Tropical Cyclone Forecasts
- Optimum Track Ship Routing
- Ensemble Models
- Visibility/Dust Forecasts
- Target Weapon Systems
- Ballistic Wind Computations
- Long-Range Planning

Operational Models

- World-class operational and information-assured models are critical to our Nation’s defense
  - At core of Fleet Numerical reachback/automation efforts
- Navy Operational Global Atmospheric Prediction System (NOGAPS)
  - Premier global model for maritime environment
    - Tailored for Navy/DOD missions
    - Drives Navy ocean models
  - Supports the Ensemble Forecast System (EFS)
- Coupled Ocean/Atmosphere Mesoscale Prediction System (COAMPS)
  - Regional model for high-resolution support to Naval operations
  - Coupled with littoral ocean models
  - Re-locatable in minutes for on-demand operations support
  - Classification levels up to TS/SCI

Operational Models Improvement Process

- Direct feedback connection with Fleet users
  - Fleet Numerical provides enhanced model verification plots
  - Fleet users provide objective feedback
- Model and/or post-processing adjustments
- Rigorous but rapid Configuration Management process
- Long-term model improvements based on Fleet needs
- Continuous model improvement adds skill to the critical 72-120 hour period (resource protection, sortie decisions)
- Meets weekly
- “Team Monterey” effort
  - Collocation with Naval Research Lab
  - Rapid and successful transition of R&D to Ops
  - Naval Postgraduate School collaboration

Continuous Model Improvement Process

Model Upgrades Last 6 months

- NOGAPS/NAVDAS-AR
  - AVHRR cloud-track polar winds (20071114)
  - Tuning variational analysis scale factors (20071002). Improved short-term tropical forecast performance
  - NAVDAS-AR (4DVAR) running in NRL alpha utilizing CRTM and METOP AMSU radiances (20070901)
  - NAVDAS - CRTM installed under FNMOC CM
  - NOGAPS - Stopped reading AFWA snow fields and reverted to model snow due to overestimation (2007062212)
  - NAVDAS - Assimilating 3-hourly feature track wind files from UW CIMSS for Meteosat-9. It was previously 6-hourly (April)
  - NAVDAS - EUMETSAT Meteosat-9 replaced Meteosat-8 atmospheric motion vector (AMV) winds (20070411)

Model Upgrades Last 6 months

- COAMPS
  - All COAMPS areas converted from MVOI to NAVDAS-3DVAR
  - Running COAMPS areas operationally on IBM AIX to take load off of SGI systems (20071017)
  - COAMPS verification graphics package promoted to FNMOC OPS (20070804)
  - Significant COAMPS update to improve height bias, stratus prediction, and tropical cyclone intensity (20070801). Surface fluxes were increased, thus impacting NCOM during VS-07
  - METOP-A ATOVS temperature retrievals assimilated by COAMPS/NAVDAS (20070808)
  - COAMPS-OS operational in the SCIF on AMS2 Origin 3800 hardware (200706)
  - COAMPS Europe resolution change from 81/27 to 54/18 km (20070516)
  - Completed COAMPS SWA dust source file updates and added capability for dust forecasts to WPAC area (20070522)

NAVDAS-AR
Navy Atmospheric Variational Data Assimilation System - Accelerated Representer

- 4D-VAR data assimilation system
- Basis for future fully interactive data assimilation/forecast system supporting adaptive observing
- Computationally efficient Accelerated Representer numerical solution
- The means by which NOGAPS and COAMPS assimilate data in the NPOESS era

Model Upgrades Next 6 Months

- NOGAPS/NAVDAS-AR
  - Assimilate Korean AMDAR winds when quality improvements made (Q1 FY08)
  - FNMOC Beta NAVDAS-AR (Q1 FY08)
  - COSMIC/GPS data assimilation (Q2 FY08)
  - Assimilate AIRS radiances (Q2 FY08)
  - FNMOC Beta on new A2 Linux cluster (Q3 FY08)
  - FNMOC Ops on new A2 Linux cluster (Q3 FY08)
- COAMPS
  - COAMPS-OS (NAVDAS, classified obs, RUC) (Q2 FY08)
  - COAMPS-OS (coupled NCOM, WW3, SWAN) upgrade (Q3 FY08)
  - COAMPS-OS merges with AMS COAMPS and becomes COAMPS on A2-0 (Q4 FY08)

Information Assurance (IA) Environment

- Booz Allen Hamilton contracted to facilitate transition of ODAA to Naval Network Warfare Command (NNWC)
  - ATO in progress, including robust Cross Domain Solution
- Defense Information Systems Agency (DISA) Enhanced Compliance Review
  - Annual inspection to evaluate classified network (SIPR), unclassified network (NIPR) and traditional security posture
  - Over 99% IAVM compliant on evaluated items
- High Performance Computing Modernization Office (HPCMO) Technical and Administrative Reviews
  - Annual inspection to evaluate HPCMO HPC connections to the network

Automation Initiatives

- AOTSR
- CAAPS
- EVIS

Automated Optimum Track Ship Routing (AOTSR)

- Ship routing has traditionally been a labor-intensive process that requires forecasters to examine each ship route and the corresponding model/forecast information
- Significant aspects of maritime routing to optimize heavy weather avoidance can be automated
  - If 70% of oceans/skies are calm, those forecasts are automatically transmitted
  - As operating thresholds are approached, ship routes are flagged for forecaster review
  - Builds on progress automating high winds/seas warnings for the Pacific and Indian Ocean, which has increased forecaster efficiency by as much as 90%
- Existing resources are being leveraged to develop a prototype of Automated OTSR that incorporates a fuel efficiency option
  - Ship Tracking and Routing System (STARS) automation and integration (Mr. Henry Chen)
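
The triage idea described above (transmit routine forecasts automatically, flag a route for forecaster review as operating thresholds are approached) can be sketched in Python as follows. This is only an illustration under stated assumptions, not the AOTSR or STARS implementation: the `RouteForecast` and `OperatingLimits` types, the `triage` function, the 80% `margin`, and every numeric limit are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RouteForecast:
    """Worst forecast conditions along one ship route (hypothetical type)."""
    ship: str
    max_wind_kt: float   # highest forecast wind speed along the route, knots
    max_seas_ft: float   # highest forecast sea height along the route, feet


@dataclass
class OperatingLimits:
    """Operating thresholds for a ship (hypothetical values)."""
    wind_kt: float
    seas_ft: float


def triage(route: RouteForecast, limits: OperatingLimits, margin: float = 0.8) -> str:
    """Return 'forecaster-review' when any parameter reaches `margin`
    (an assumed fraction) of its operating limit, else 'auto-transmit'."""
    wind_ratio = route.max_wind_kt / limits.wind_kt
    seas_ratio = route.max_seas_ft / limits.seas_ft
    if max(wind_ratio, seas_ratio) >= margin:
        return "forecaster-review"
    return "auto-transmit"


if __name__ == "__main__":
    limits = OperatingLimits(wind_kt=35.0, seas_ft=12.0)
    for route in (RouteForecast("Ship A", 15.0, 6.0),
                  RouteForecast("Ship B", 30.0, 6.0)):
        print(route.ship, triage(route, limits))
```

Keeping the margin as a parameter means the point at which a route leaves the automated path can be tuned per ship class without touching the logic.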

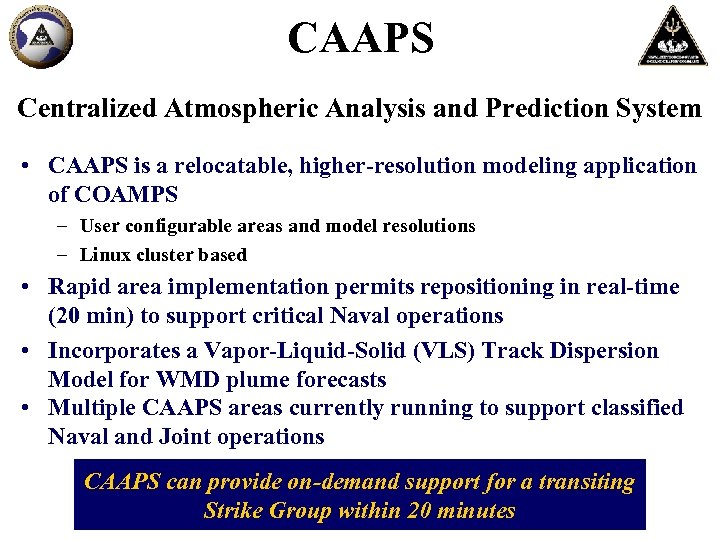

CAAPS
Centralized Atmospheric Analysis and Prediction System

- CAAPS is a relocatable, higher-resolution modeling application of COAMPS
  - User configurable areas and model resolutions
  - Linux cluster based
- Rapid area implementation permits repositioning in real-time (20 min) to support critical Naval operations
- Incorporates a Vapor-Liquid-Solid (VLS) Track Dispersion Model for WMD plume forecasts
- Multiple CAAPS areas currently running to support classified Naval and Joint operations

CAAPS can provide on-demand support for a transiting Strike Group within 20 minutes

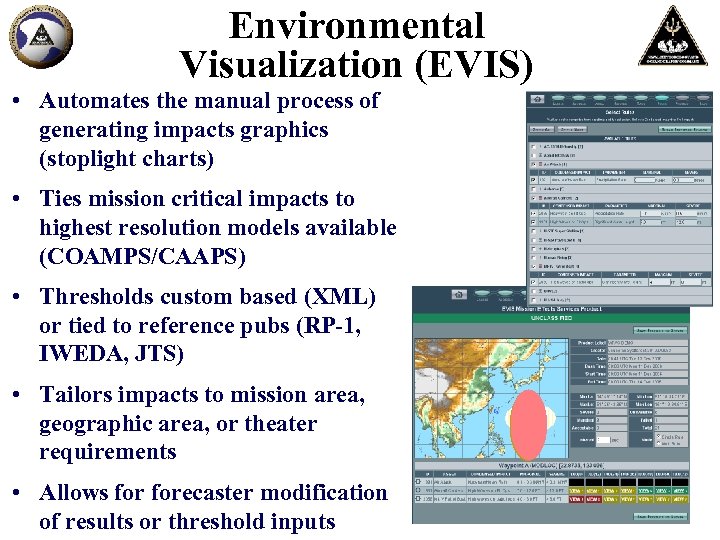

Environmental Visualization (EVIS)

- Automates the manual process of generating impacts graphics (stoplight charts)
- Ties mission critical impacts to highest resolution models available (COAMPS/CAAPS)
- Thresholds custom based (XML) or tied to reference pubs (RP-1, IWEDA, JTS)
- Tailors impacts to mission area, geographic area, or theater requirements
- Allows forecaster modification of results or threshold inputs
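
To make the idea of XML-defined thresholds driving a stoplight chart concrete, here is a minimal Python sketch. It is not the EVIS format or code: the XML layout, the attribute names (`amber`, `red`, `lower_is_worse`), the parameter names and all threshold values are assumptions invented for illustration, and none of them come from RP-1, IWEDA or JTS.

```python
import xml.etree.ElementTree as ET

# Hypothetical threshold file; the schema and values are illustrative only.
THRESHOLDS_XML = """
<thresholds mission="flight-ops">
  <parameter name="wind_kt" amber="25" red="35"/>
  <parameter name="visibility_nm" amber="3" red="1" lower_is_worse="true"/>
</thresholds>
"""


def load_thresholds(xml_text):
    """Parse thresholds into {name: (amber, red, lower_is_worse)}."""
    root = ET.fromstring(xml_text)
    rules = {}
    for param in root.findall("parameter"):
        rules[param.get("name")] = (
            float(param.get("amber")),
            float(param.get("red")),
            param.get("lower_is_worse") == "true",
        )
    return rules


def stoplight(value, amber, red, lower_is_worse):
    """Map one forecast value to 'green', 'amber' or 'red'."""
    if lower_is_worse:
        # Negate so that "larger is worse" logic applies to both cases.
        value, amber, red = -value, -amber, -red
    if value >= red:
        return "red"
    if value >= amber:
        return "amber"
    return "green"


def assess(forecast, rules):
    """Return a stoplight colour for each forecast parameter with a rule."""
    return {name: stoplight(forecast[name], *rule)
            for name, rule in rules.items() if name in forecast}


if __name__ == "__main__":
    rules = load_thresholds(THRESHOLDS_XML)
    print(assess({"wind_kt": 28.0, "visibility_nm": 0.5}, rules))
```

Because the thresholds live in data rather than code, a forecaster-facing tool could swap in a different threshold file per mission area, or let the user edit values, without changing the evaluation logic.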

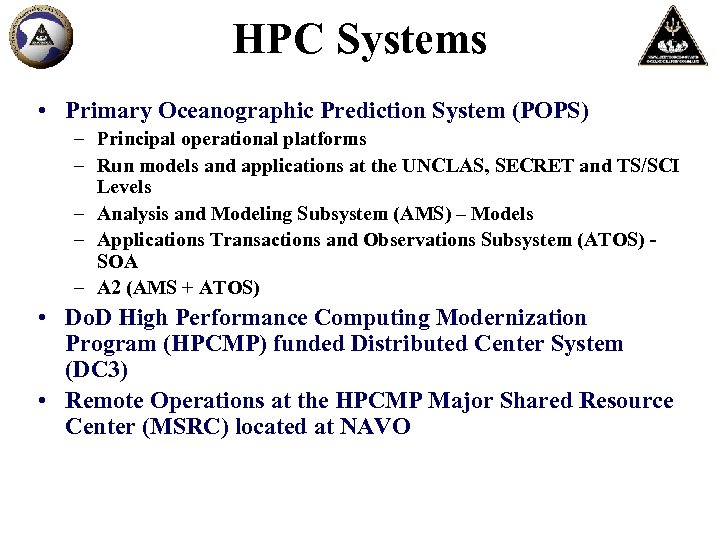

HPC Systems

- Primary Oceanographic Prediction System (POPS)
  - Principal operational platforms
  - Run models and applications at the UNCLAS, SECRET and TS/SCI levels
  - Analysis and Modeling Subsystem (AMS)
    - Models
  - Applications Transactions and Observations Subsystem (ATOS)
    - SOA
  - A2 (AMS + ATOS)
- DoD High Performance Computing Modernization Program (HPCMP) funded Distributed Center System (DC3)
- Remote Operations at the HPCMP Major Shared Resource Center (MSRC) located at NAVO

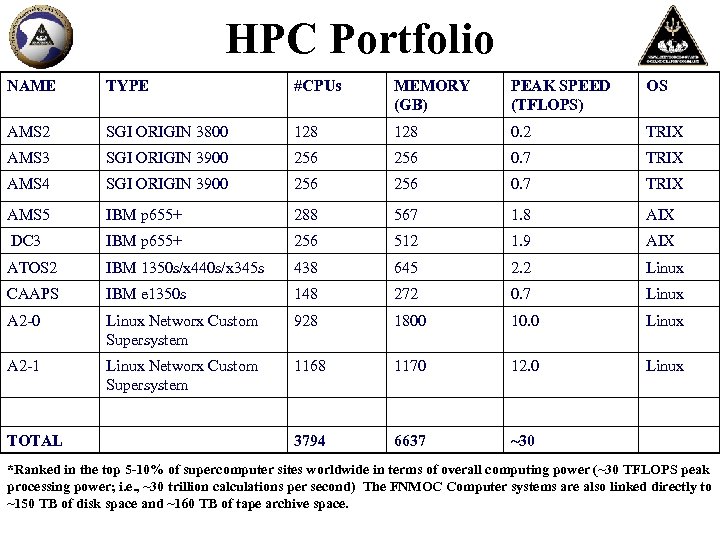

HPC Portfolio

| NAME | TYPE | #CPUs | MEMORY (GB) | PEAK SPEED (TFLOPS) | OS |
|---|---|---|---|---|---|
| AMS2 | SGI ORIGIN 3800 | 128 | | 0.2 | TRIX |
| AMS3 | SGI ORIGIN 3900 | 256 | | 0.7 | TRIX |
| AMS4 | SGI ORIGIN 3900 | 256 | | 0.7 | TRIX |
| AMS5 | IBM p655+ | 288 | 567 | 1.8 | AIX |
| DC3 | IBM p655+ | 256 | 512 | 1.9 | AIX |
| ATOS2 | IBM 1350s/x440s/x345s | 438 | 645 | 2.2 | Linux |
| CAAPS | IBM e1350s | 148 | 272 | 0.7 | Linux |
| A2-0 | Linux Networx Custom Supersystem | 928 | 1800 | 10.0 | Linux |
| A2-1 | Linux Networx Custom Supersystem | 1168 | 1170 | 12.0 | Linux |
| TOTAL | | 3794 | 6637 | ~30 | |

*Ranked in the top 5-10% of supercomputer sites worldwide in terms of overall computing power (~30 TFLOPS peak processing power; i.e., ~30 trillion calculations per second)

The FNMOC computer systems are also linked directly to ~150 TB of disk space and ~160 TB of tape archive space.

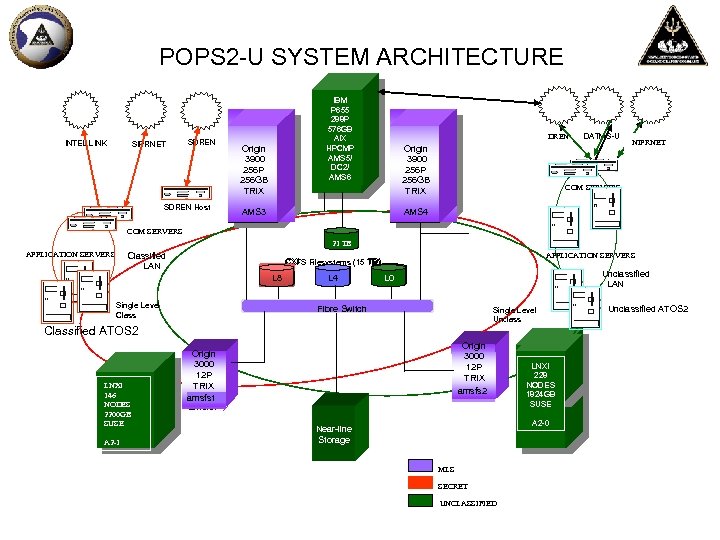

POPS2-U SYSTEM ARCHITECTURE

[Architecture diagram. Recoverable labels: AMS3, AMS4 and AMS5/DC2/AMS6 hosts (Origin 3900, IBM P655); LNXI clusters A2-0 and A2-1 (SUSE); classified and unclassified ATOS2; Origin 3000 file servers amsfs1 and amsfs2; application and COM servers; CXFS filesystems (15 TB); Fibre switch; near-line storage; classified and unclassified LANs; SIPRNET, NIPRNET, DREN, SDREN, DATMS-U and INTEL LINK connections.]

POPS Architecture Strategy

- Technology advances open the opportunity for combining AMS and ATOS functionality by utilizing specialized computing
  - Emergence of Linux-based HPC
  - Convergence of server and HPC processors
- Efficiency for reachback/on-demand modeling
  - Shared file system
  - Shared databases
  - Lower latency for on-demand model response
- Long-term cost efficiency of Linux vice proprietary operating systems
- Cost savings by converging to a single operating system across FNMOC

Specialized Computing

- Specialized computing, which complements high-capacity systems by tuning hardware to applications, will:
  - Enable transition to an easily scalable Linux environment
  - SELinux to replace TRIX and Trusted Solaris
    - Aligned with NSA investments for an MLS solution
  - Triple peak speed from 7.33 to ~29.56 TFLOPS
  - Triple memory from 2,645 to ~7,005 GB
  - Double number of CPUs from 2,026 to ~4,234
- Combining this with a CAAPS or EVIS interface provides full capability that combines all layers of Battlespace On Demand:
  - Environmental data
  - Sensor/weapon performance
  - Commander/operator decision
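
The growth factors implied by the before/after figures on this slide can be worked out directly. The short Python sketch below does only that arithmetic; the dictionary layout and the `growth_factor` helper are illustrative names, and the input numbers are taken from the slide as written.

```python
# Before/after capacity figures as stated on the slide.
FIGURES = {
    "peak speed (TFLOPS)": (7.33, 29.56),
    "memory (GB)": (2645, 7005),
    "CPUs": (2026, 4234),
}


def growth_factor(before, after):
    """Ratio of the planned capacity to the existing capacity."""
    return after / before


if __name__ == "__main__":
    for name, (before, after) in FIGURES.items():
        print(f"{name}: {before} -> {after} (x{growth_factor(before, after):.2f})")
```

On these figures the ratios come out near 4.0 for peak speed, 2.6 for memory and 2.1 for CPU count, so the slide's "triple" and "double" are round descriptions rather than exact multiples.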

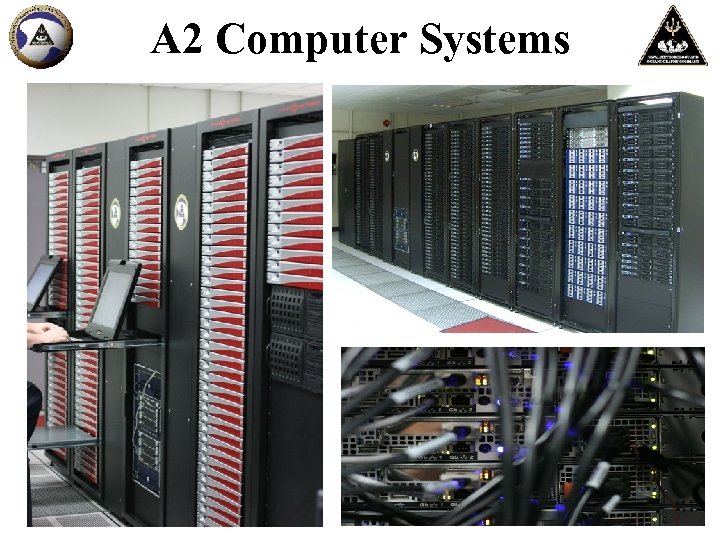

A2 Computer Systems

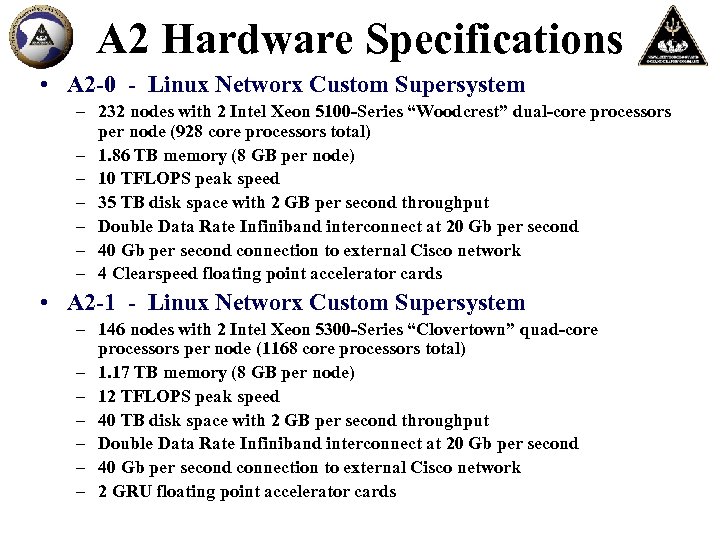

A2 Hardware Specifications

- A2-0 - Linux Networx Custom Supersystem
  - 232 nodes with 2 Intel Xeon 5100-Series “Woodcrest” dual-core processors per node (928 core processors total)
  - 1.86 TB memory (8 GB per node)
  - 10 TFLOPS peak speed
  - 35 TB disk space with 2 GB per second throughput
  - Double Data Rate Infiniband interconnect at 20 Gb per second
  - 40 Gb per second connection to external Cisco network
  - 4 Clearspeed floating point accelerator cards
- A2-1 - Linux Networx Custom Supersystem
  - 146 nodes with 2 Intel Xeon 5300-Series “Clovertown” quad-core processors per node (1168 core processors total)
  - 1.17 TB memory (8 GB per node)
  - 12 TFLOPS peak speed
  - 40 TB disk space with 2 GB per second throughput
  - Double Data Rate Infiniband interconnect at 20 Gb per second
  - 40 Gb per second connection to external Cisco network
  - 2 GRU floating point accelerator cards
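
The core and memory totals quoted for the two A2 clusters follow from the per-node figures. The Python sketch below reproduces that arithmetic; the `Cluster` class and its field names are illustrative, and memory is converted using decimal units (1 TB = 1000 GB), which is an assumption about how the slide rounds.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    """Per-node description of a cluster (illustrative type)."""
    name: str
    nodes: int
    sockets_per_node: int
    cores_per_socket: int
    mem_gb_per_node: int

    @property
    def cores(self) -> int:
        # nodes x processors per node x cores per processor
        return self.nodes * self.sockets_per_node * self.cores_per_socket

    @property
    def memory_gb(self) -> int:
        return self.nodes * self.mem_gb_per_node


# Per-node figures as given on the slide.
A2_0 = Cluster("A2-0", nodes=232, sockets_per_node=2, cores_per_socket=2, mem_gb_per_node=8)
A2_1 = Cluster("A2-1", nodes=146, sockets_per_node=2, cores_per_socket=4, mem_gb_per_node=8)

if __name__ == "__main__":
    for c in (A2_0, A2_1):
        print(f"{c.name}: {c.cores} cores, {c.memory_gb / 1000:.2f} TB memory")
```

With these inputs A2-0 works out to 928 cores and 1856 GB, and A2-1 to 1168 cores and 1168 GB, matching the slide's 1.86 TB and 1.17 TB after rounding.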

Satellite Products

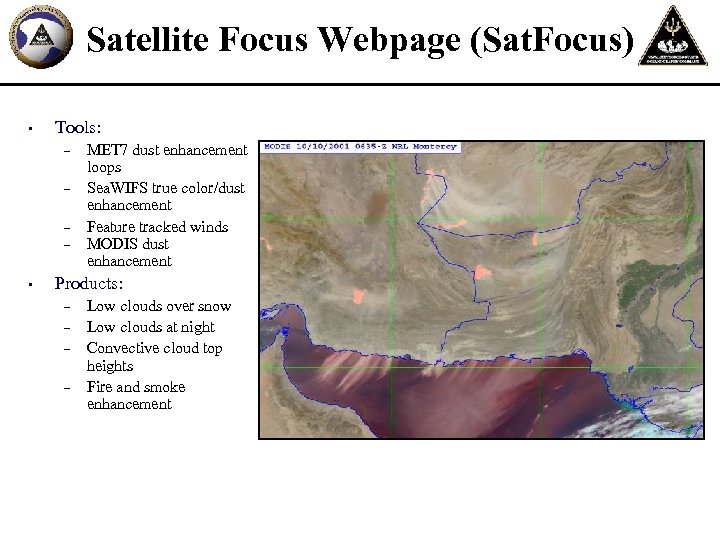

Satellite Focus Webpage (SatFocus)

- Tools:
  - MET7 dust enhancement loops
  - SeaWIFS true color/dust enhancement
  - Feature tracked winds
  - MODIS dust enhancement
- Products:
  - Low clouds over snow
  - Low clouds at night
  - Convective cloud top heights
  - Fire and smoke enhancement

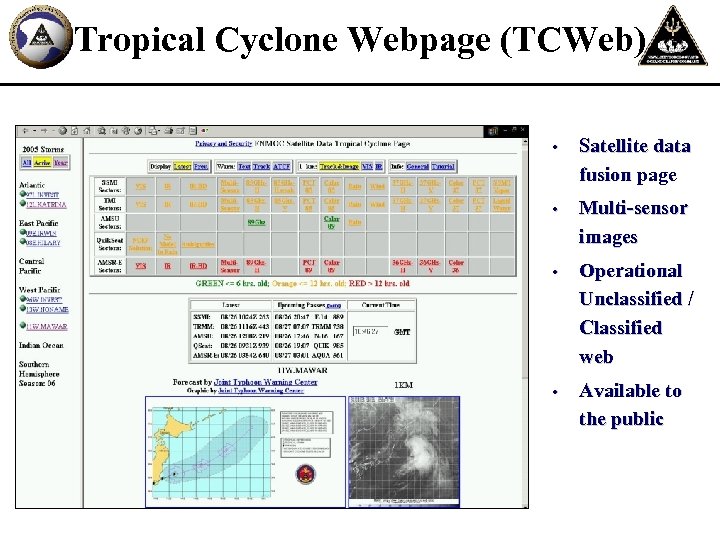

Tropical Cyclone Webpage (TCWeb)

- Satellite data fusion page
- Multi-sensor images
- Operational Unclassified / Classified web
- Available to the public

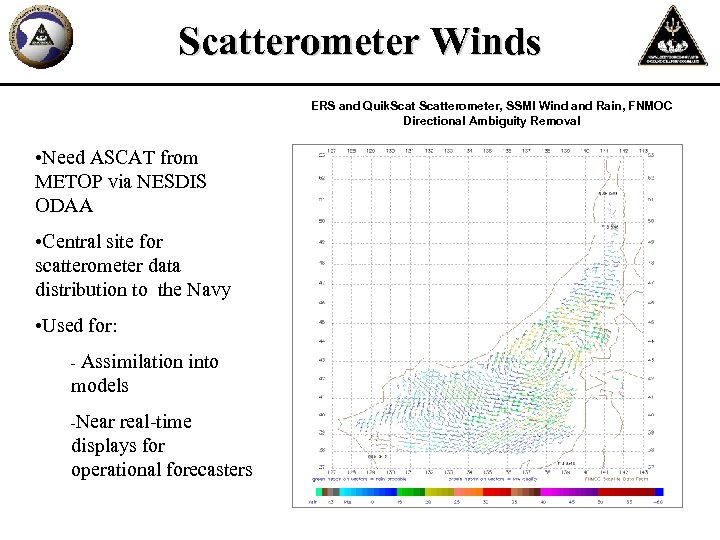

Scatterometer Winds

ERS and QuikScatterometer, SSMI Wind and Rain, FNMOC Directional Ambiguity Removal

- Need ASCAT from METOP via NESDIS ODAA
- Central site for scatterometer data distribution to the Navy
- Used for:
  - Assimilation into models
  - Near real-time displays for operational forecasters

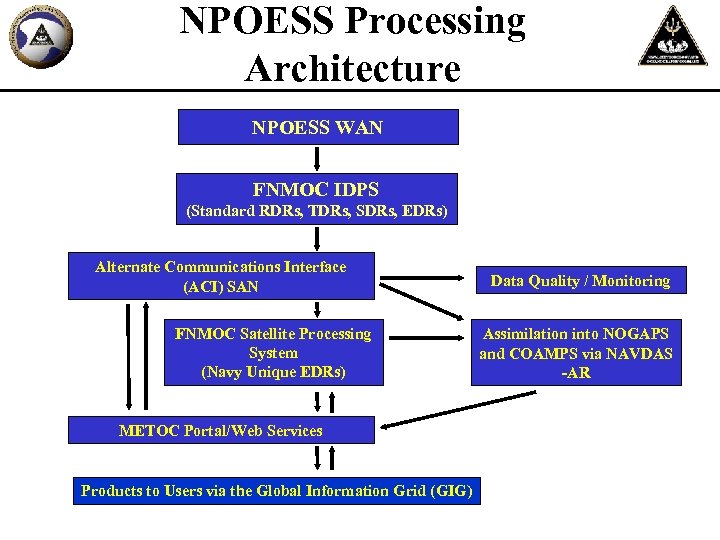

NPOESS Processing Architecture

[Data-flow diagram. Recoverable labels:]

- NPOESS WAN
- FNMOC IDPS (Standard RDRs, TDRs, SDRs, EDRs)
- Alternate Communications Interface (ACI)
- SAN
- FNMOC Satellite Processing System (Navy Unique EDRs)
- METOC Portal/Web Services
- Products to Users via the Global Information Grid (GIG)
- Data Quality / Monitoring
- Assimilation into NOGAPS and COAMPS via NAVDAS-AR

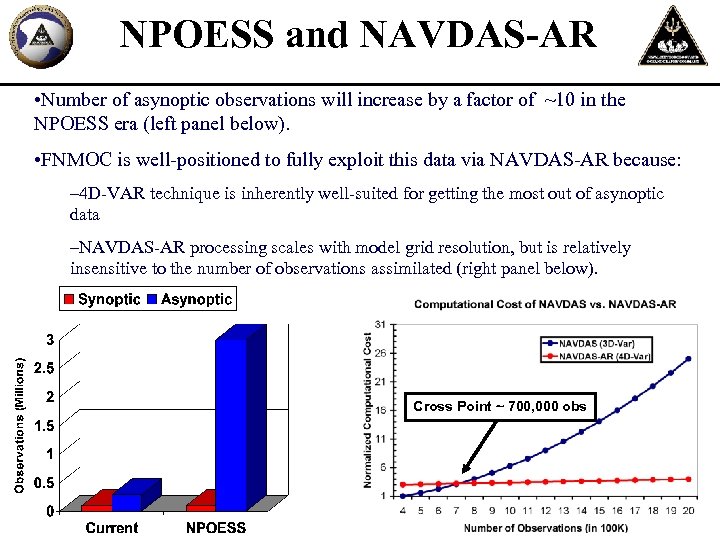

NPOESS and NAVDAS-AR

- Number of asynoptic observations will increase by a factor of ~10 in the NPOESS era (left panel below).
- FNMOC is well-positioned to fully exploit this data via NAVDAS-AR because:
  - 4D-VAR technique is inherently well-suited for getting the most out of asynoptic data
  - NAVDAS-AR processing scales with model grid resolution, but is relatively insensitive to the number of observations assimilated (right panel below).

Cross Point ~700,000 obs

MILCON

- $7.3M project with groundbreaking planned for July 2007 and completion by June 2008
- NPOESS IDPS - Additional Computer Room
- SCIF improvements / enhancements
- Watch Floor modernization
- New Operational Briefing Area adjacent to Watch Floor
- 9400 sq. ft. - new construction
- 4800 sq. ft. - rehabilitation of existing spaces

Summary

- Fleet Numerical is an operational command, with direct support relationships and connectivity to Fleet and Joint Forces
- Fleet Numerical is continually improving its core Numerical Modeling Capability and provides the only DoD Information-Assured Global Atmospheric Model
- “Team Monterey” is a unique partnership that focuses expertise to provide operational support like nowhere else

“Supercomputing Excellence for Fleet Safety And Information Superiority”

Questions?

FNMOC BACKUPS

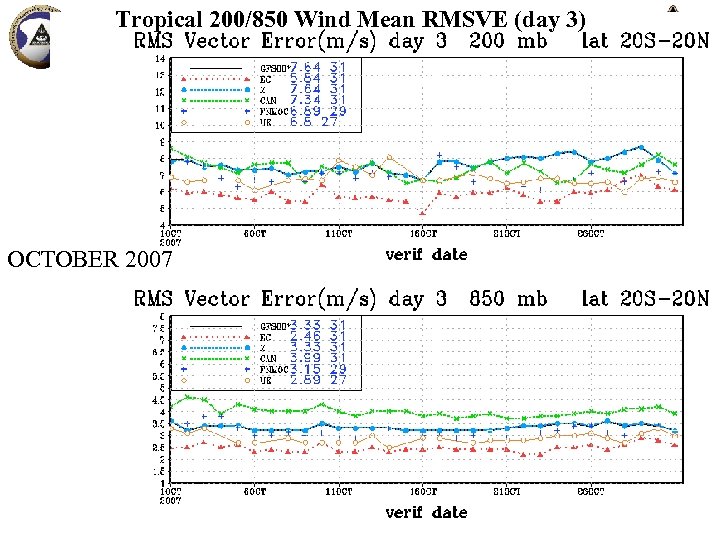

Tropical 200/850 Wind Mean RMSVE (day 3) OCTOBER 2007

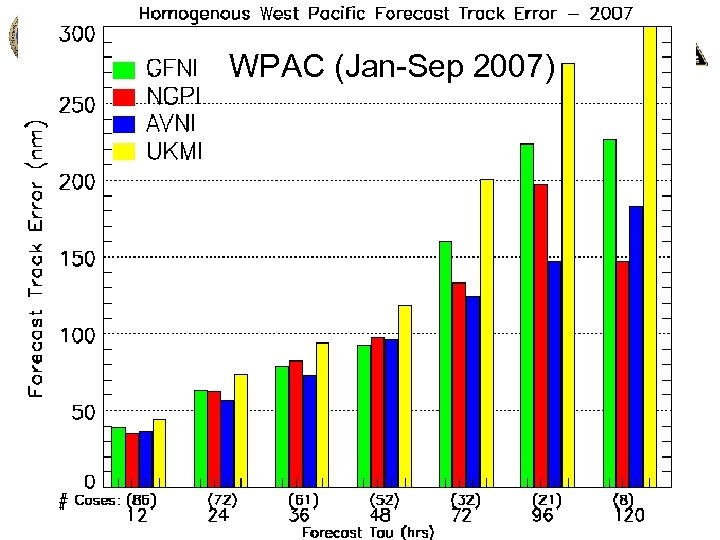

WPAC (Jan-Sep 2007)

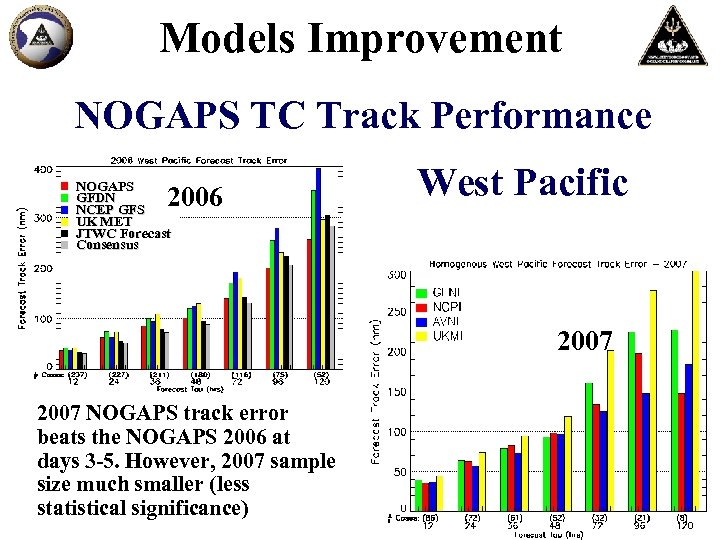

Models Improvement
NOGAPS TC Track Performance

[Chart: West Pacific track performance, 2006 and 2007. Legend: NOGAPS, GFDN, NCEP GFS, UK MET, JTWC Forecast, Consensus.]

The 2007 NOGAPS track error beats the 2006 NOGAPS track error at days 3-5. However, the 2007 sample size is much smaller (less statistical significance).
