- Number of slides: 176

First Joint Research Seminar of AI Departments of Ukraine and the Netherlands. A Review of Research Topics of the AI Department in Kharkov (MetaIntelligence Laboratory). Vagan Terziyan, Helen Kaikova. November 25 - December 5, 1999, Vrije Universiteit Amsterdam (Netherlands)

Authors

- Vagan Terziyan, vagan@kture.kharkov.ua
- Helen Kaikova, helen@jytko.jyu.fi

In cooperation with: Metaintelligence Laboratory, Department of Artificial Intelligence, Kharkov State Technical University of Radioelectronics, UKRAINE

Contents

- A Metasemantic Network
- Metasemantic Algebra of Contexts
- The Law of Semantic Balance
- Metapetrinets
- Multidatabase Mining and Ensemble of Classifiers
- Trends of Uncertainty, Expanding Context and Discovering Knowledge
- Recursive Arithmetic
- Similarity Evaluation in Multiagent Systems
- On-Line Learning

A Metasemantic Network

A semantic metanetwork is defined formally as a set of semantic networks stacked on one another in such a way that the links of each network are at the same time the nodes of the next network.

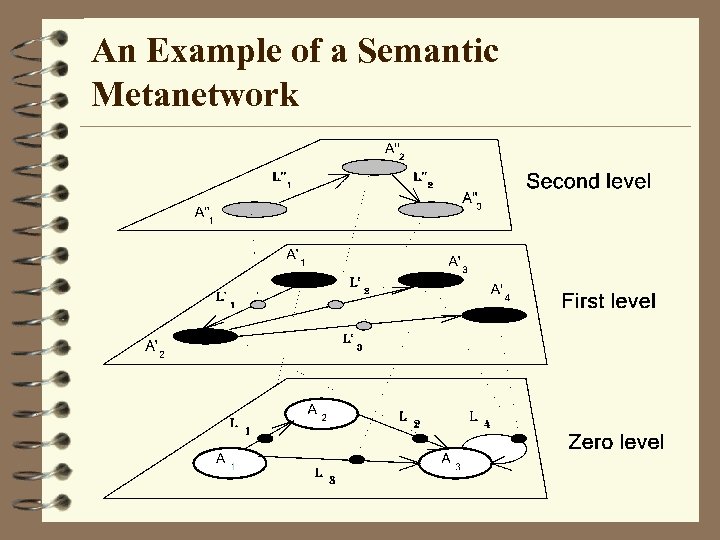

An Example of a Semantic Metanetwork

How it Works

- In a semantic metanetwork every higher level controls the semantic structure of the level below it.
- A simple controlling rule might state, for example, in which contexts a certain link of a semantic structure can exist and in which contexts it should be deleted from that structure.
- Such a multilevel network can be used in an adaptive control system whose structure changes automatically in response to changes in the context of the environment.
- An algebra for reasoning with a semantic metanetwork has also been developed.
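The two-level idea above can be sketched in a few lines of Python. This is only an illustrative sketch: the `Level` class, the `active_links` helper, and the rule table `rules` are hypothetical names that do not come from the slides, and a context is assumed to be a plain string label.

```python
from dataclasses import dataclass, field


@dataclass
class Level:
    """One semantic network: a set of nodes plus named links between them."""
    nodes: set
    links: dict = field(default_factory=dict)  # link name -> (source, target)


def active_links(base, allowed_in, context):
    """Return the base-level links that may exist in the given context.

    `allowed_in` plays the role of a (hypothetical) controlling rule from the
    higher level: it maps a link name to the contexts in which it may exist.
    Links without a rule are treated as not allowed.
    """
    return {name: ends for name, ends in base.links.items()
            if context in allowed_in.get(name, set())}


base = Level(nodes={"A1", "A2", "A3"},
             links={"L1": ("A1", "A2"), "L2": ("A2", "A3")})
# The links of the base level are at the same time the nodes of the next level.
meta = Level(nodes=set(base.links), links={"C1": ("L1", "L2")})
rules = {"L1": {"day", "night"}, "L2": {"day"}}

print(sorted(active_links(base, rules, "night")))  # ['L1']
```

When the context changes from "day" to "night", link `L2` drops out of the base-level structure, which is the kind of automatic reconfiguration the slide describes.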

Published and Further Developed in

- Puuronen S., Terziyan V., A Metasemantic Network, In: E. Hyvonen, J. Seppanen and M. Syrjanen (eds.), STeP-92 - New Directions in Artificial Intelligence, Publications of the Finnish AI Society, Otaniemi, Finland, 1992, Vol. 1, pp. 136-143.
- Terziyan V., Multilevel Models for Knowledge Bases Control and Their Applications to Automated Information Systems, Doctor of Technical Sciences Degree Thesis, Kharkov State Technical University of Radioelectronics, 1993.

A Metasemantic Algebra for Managing Contexts

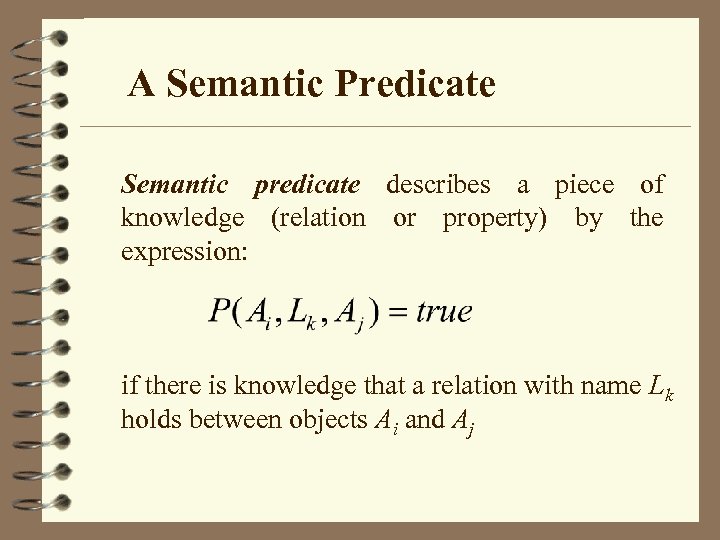

A Semantic Predicate

A semantic predicate describes a piece of knowledge (a relation or a property) by the following expression, which holds if there is knowledge that a relation named Lk holds between objects Ai and Aj:

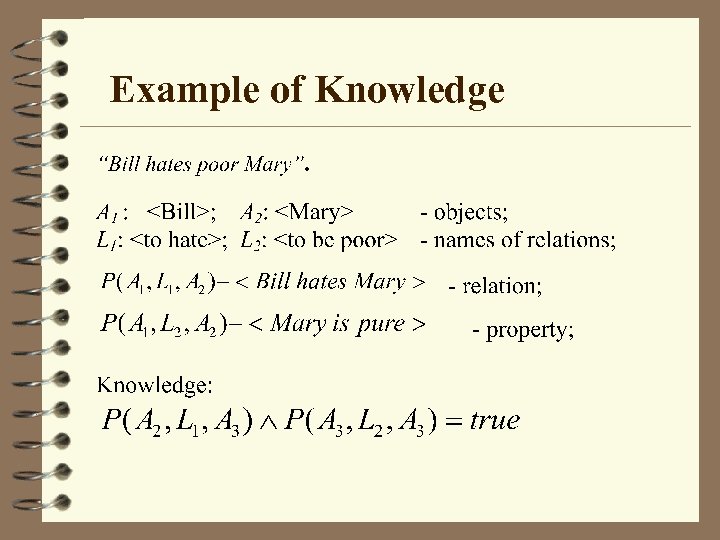

Example of Knowledge

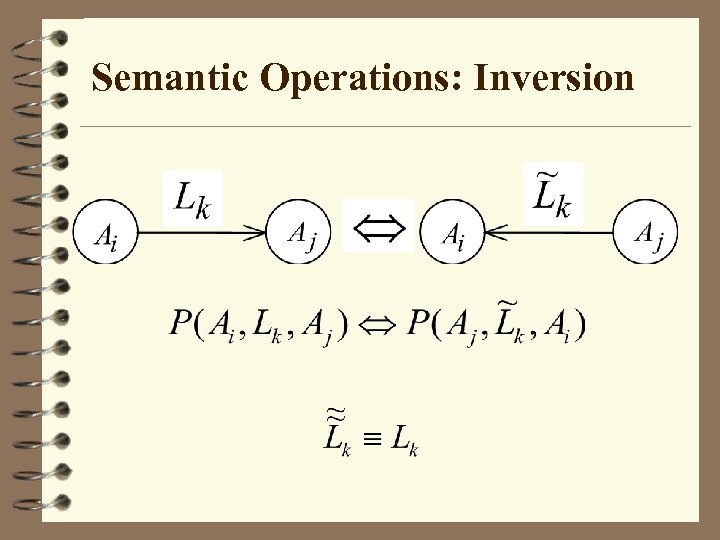

Semantic Operations: Inversion

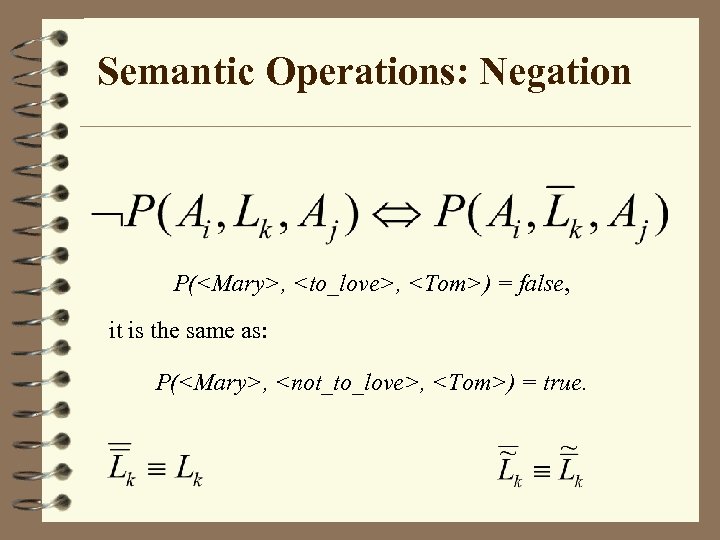

Semantic Operations: Negation P(

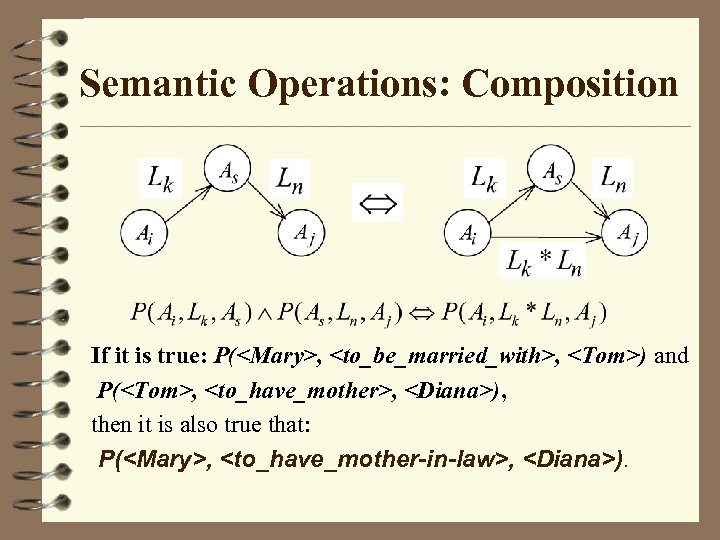

Semantic Operations: Composition If it is true: P(

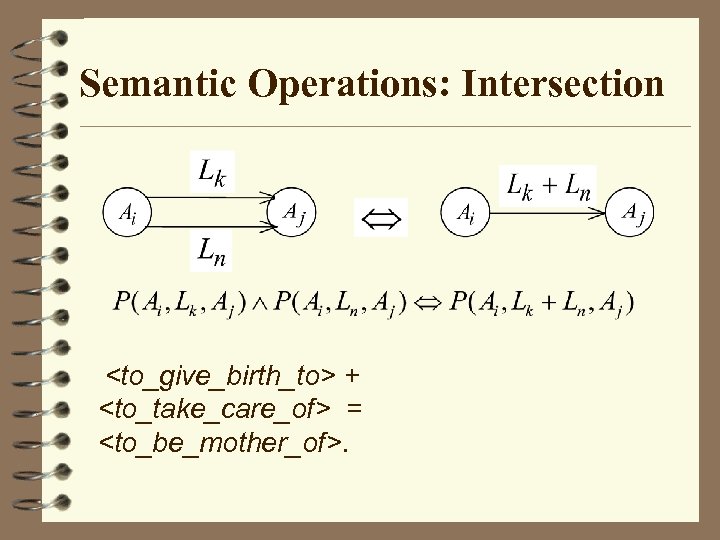

Semantic Operations: Intersection

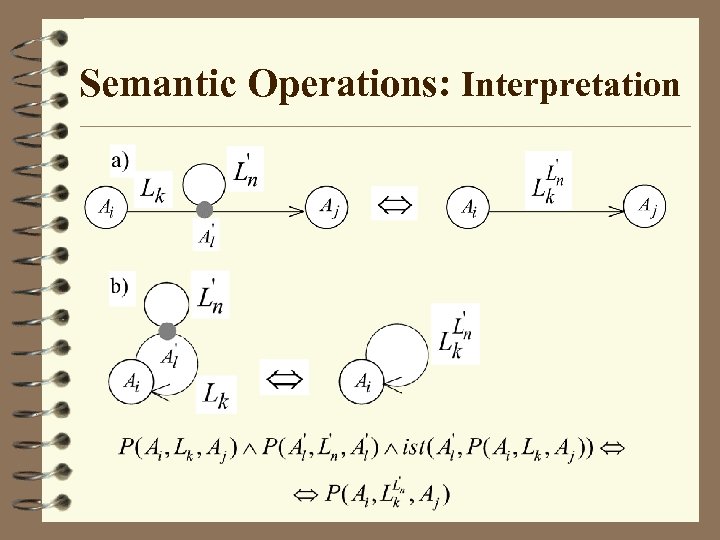

Semantic Operations: Interpretation

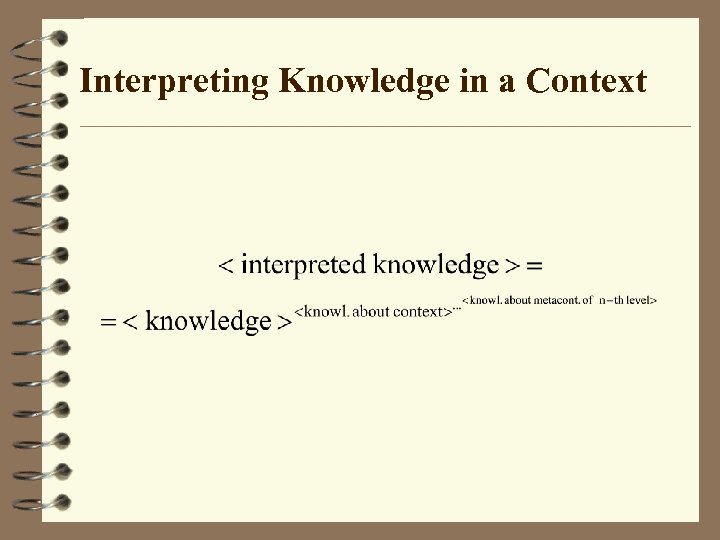

Interpreting Knowledge in a Context

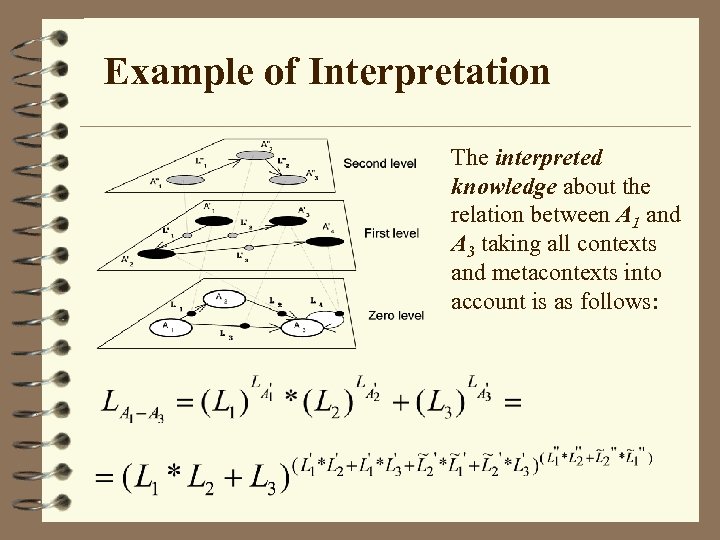

Example of Interpretation

The interpreted knowledge about the relation between A1 and A3, taking all contexts and metacontexts into account, is as follows:

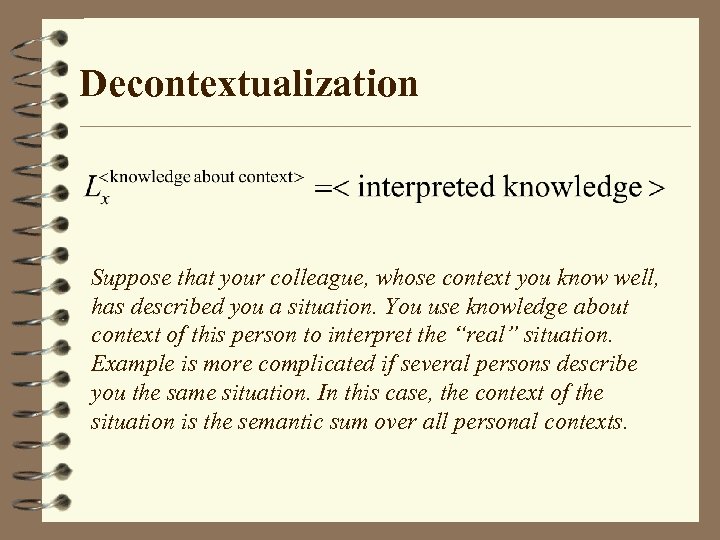

Decontextualization

Suppose that a colleague, whose context you know well, has described a situation to you. You use your knowledge of this person's context to interpret the "real" situation. The case is more complicated if several persons describe the same situation to you. Then the context of the situation is the semantic sum over all the personal contexts.

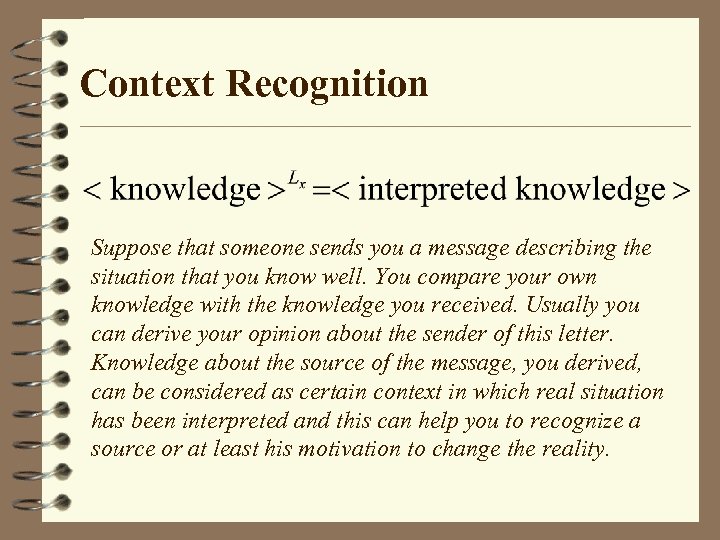

Context Recognition

Suppose that someone sends you a message describing a situation that you know well. You compare your own knowledge with the knowledge you received, and usually you can form an opinion about the sender of the message. The knowledge you derive about the source of the message can be considered as the context in which the real situation was interpreted, and it can help you to recognize the source, or at least the source's motivation for changing the reality.

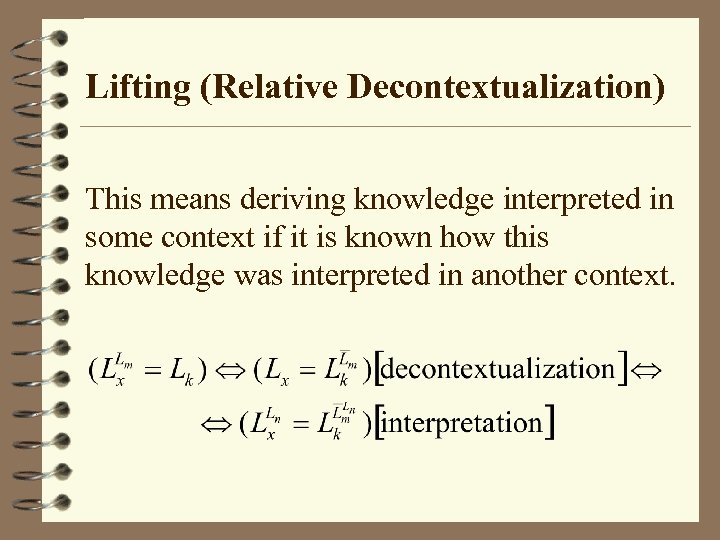

Lifting (Relative Decontextualization)

Lifting means deriving knowledge as interpreted in one context when it is known how that knowledge was interpreted in another context.

Published and Further Developed in

- Terziyan V., Puuronen S., Multilevel Context Representation Using Semantic Metanetwork, In: Context 97 - Proceedings of International and Interdisciplinary Conference on Modeling and Using Context, Rio de Janeiro, Brazil, February 4-6, 1997, pp. 21-32.
- Terziyan V., Puuronen S., Reasoning with Multilevel Contexts in Semantic Metanetworks, In: D. M. Gabbay (Ed.), Formal Aspects in Context, Kluwer Academic Publishers, 1999, pp. 173-190.

The Law of Semantic Balance

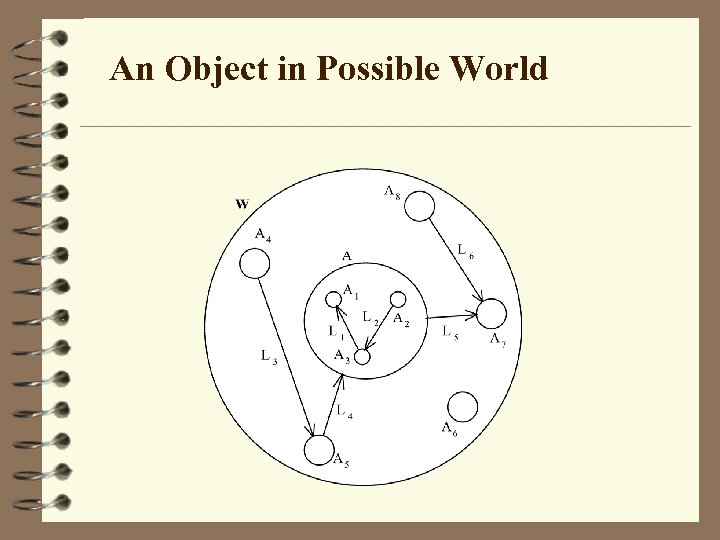

An Object in Possible World

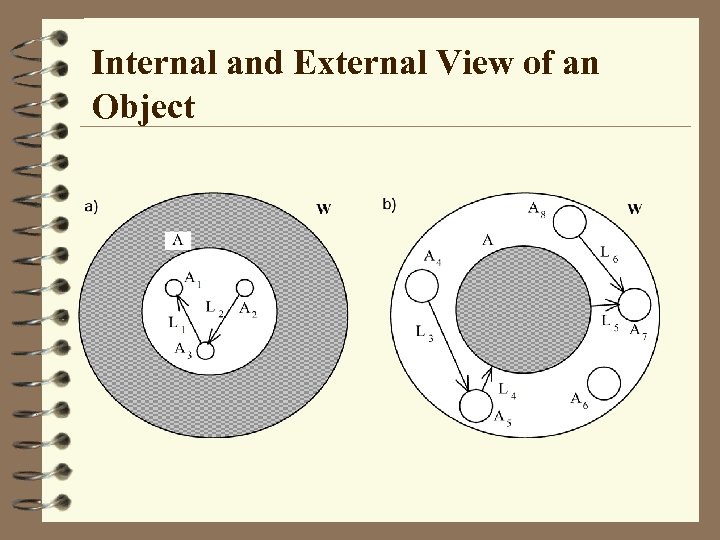

Internal and External View of an Object

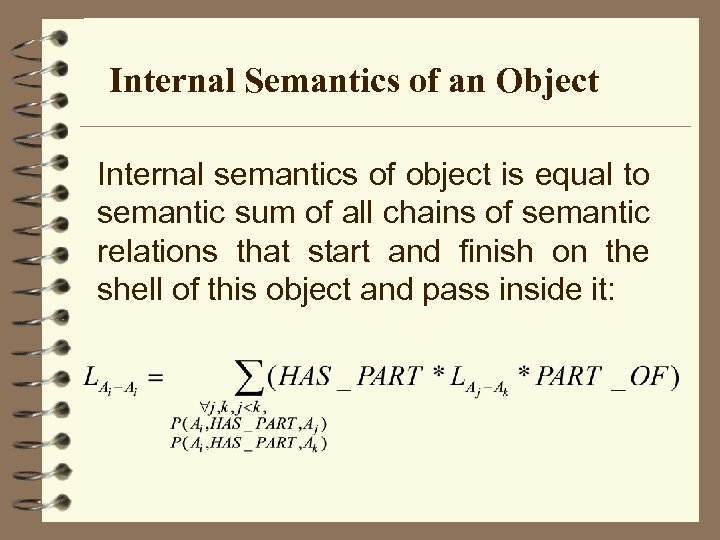

Internal Semantics of an Object

The internal semantics of an object is equal to the semantic sum of all chains of semantic relations that start and finish on the shell of this object and pass inside it:

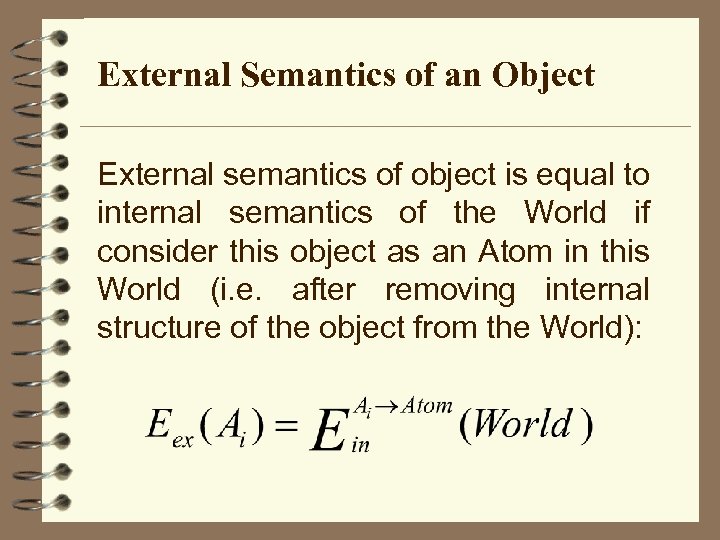

External Semantics of an Object

The external semantics of an object is equal to the internal semantics of the World when this object is considered as an atom in that World (i.e. after the internal structure of the object has been removed from the World):

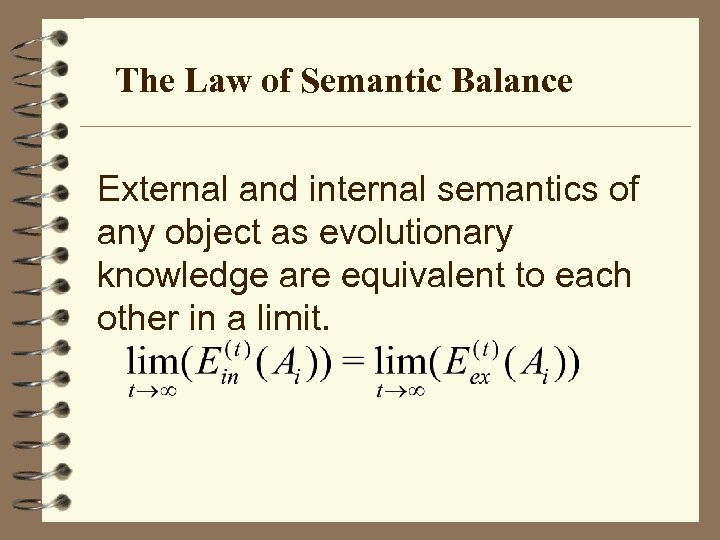

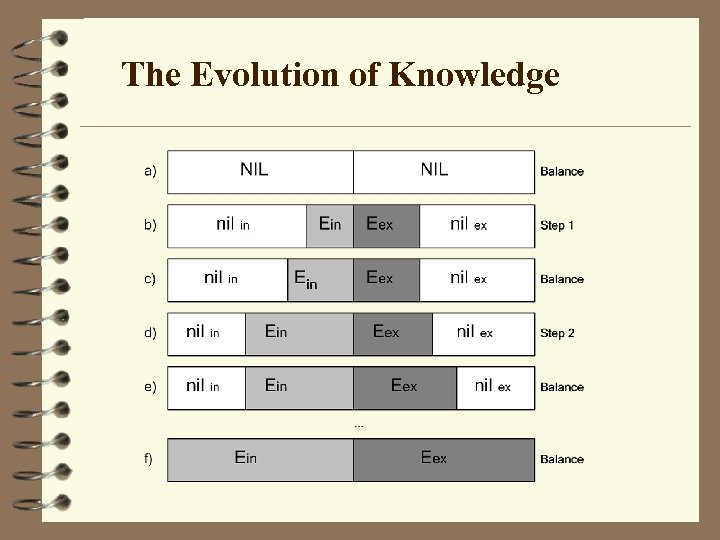

The Law of Semantic Balance

The external and internal semantics of any object, viewed as evolving knowledge, are equivalent to each other in the limit.

The Evolution of Knowledge

Published and Further Developed in

- Terziyan V., Multilevel Models for Knowledge Bases Control and Their Applications to Automated Information Systems, Doctor of Technical Sciences Degree Thesis, Kharkov State Technical University of Radioelectronics, 1993.
- Grebenyuk V., Kaikova H., Terziyan V., Puuronen S., The Law of Semantic Balance and its Use in Modeling Possible Worlds, In: STeP-96 Genes, Nets and Symbols, Publications of the Finnish AI Society, Vaasa, Finland, 1996, pp. 97-103.
- Terziyan V., Puuronen S., Knowledge Acquisition Based on Semantic Balance of Internal and External Knowledge, In: I. Imam, Y. Kondratoff, A. El-Dessouki and A. Moonis (Eds.), Multiple Approaches to Intelligent Systems, Lecture Notes in Artificial Intelligence, Springer-Verlag, V. 1611, 1999, pp. 353-361.

Metapetrinets for Flexible Modelling and Control of Complicated Dynamic Processes

A Metapetrinet

- A metapetrinet is able not only to change the marking of a petrinet but also to reconfigure its structure dynamically.
- Each level of the new structure is an ordinary petrinet of some traditional type.
- The basic level petrinet simulates the process of some application.
- The second level, i.e. the metapetrinet, is used to simulate and help control configuration changes at the basic level.

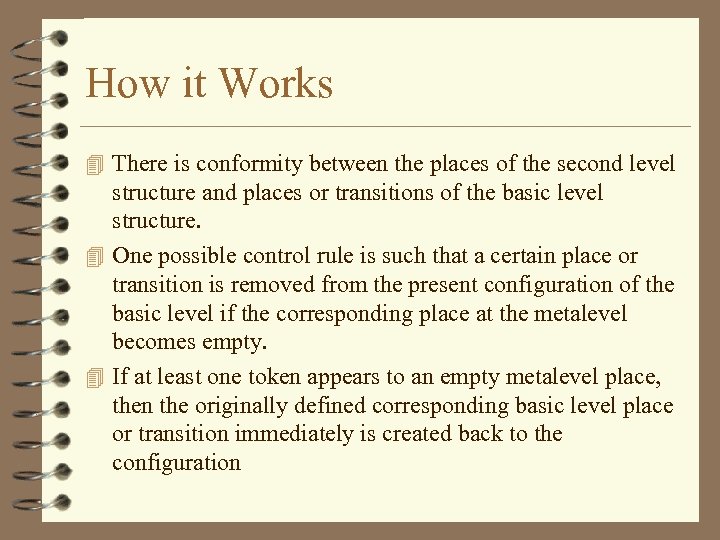

How it Works

- There is a correspondence between the places of the second level structure and the places or transitions of the basic level structure.
- One possible control rule is that a certain place or transition is removed from the present configuration of the basic level when the corresponding place at the metalevel becomes empty.
- If at least one token appears in an empty metalevel place, the originally defined corresponding basic level place or transition is immediately restored to the configuration.
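The control rule above can be sketched as a small function. This is a minimal sketch under assumptions: the dictionaries `correspondence` and `marking` and the element names (`m1`, `p1`, `t1`, ...) are hypothetical, and firing of transitions at either level is not modelled.

```python
def active_elements(correspondence, meta_marking):
    """Return the basic-level places/transitions present in the configuration.

    correspondence: metalevel place -> name of a basic-level place or transition.
    meta_marking:   metalevel place -> number of tokens it currently holds.
    An element belongs to the configuration only while its metalevel place
    holds at least one token.
    """
    return {elem for mplace, elem in correspondence.items()
            if meta_marking.get(mplace, 0) > 0}


correspondence = {"m1": "p1", "m2": "t1", "m3": "p2"}
marking = {"m1": 1, "m2": 0, "m3": 2}

print(sorted(active_elements(correspondence, marking)))  # ['p1', 'p2']
marking["m2"] = 1  # a token arrives in m2, so transition t1 is restored
print(sorted(active_elements(correspondence, marking)))  # ['p1', 'p2', 't1']
```

Recomputing the configuration from the metalevel marking, rather than mutating the basic-level net, keeps the originally defined structure available for restoration.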

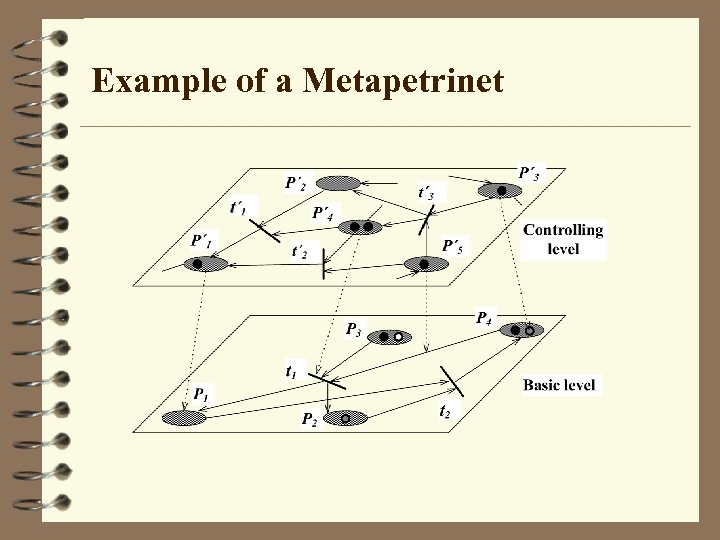

Example of a Metapetrinet

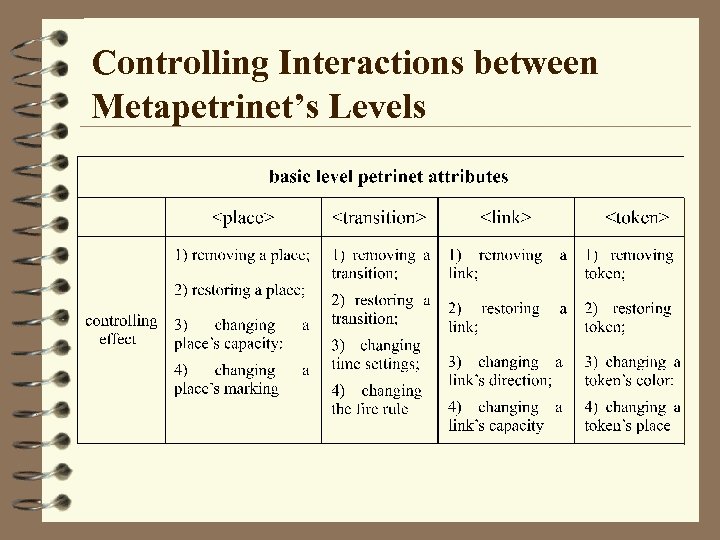

Controlling Interactions between Metapetrinet’s Levels

Published and Further Developed in

- Terziyan V., Multilevel Models for Knowledge Bases Control and Their Applications to Automated Information Systems, Doctor of Technical Sciences Degree Thesis, Kharkov State Technical University of Radioelectronics, 1993.
- Savolainen V., Terziyan V., Metapetrinets for Controlling Complex and Dynamic Processes, International Journal of Information and Management Sciences, V. 10, No. 1, March 1999, pp. 13-32.

Mining Several Databases with an Ensemble of Classifiers

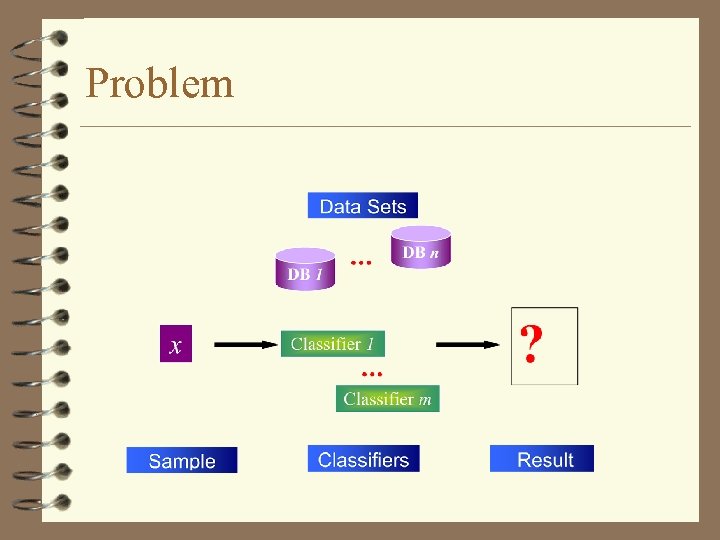

Problem

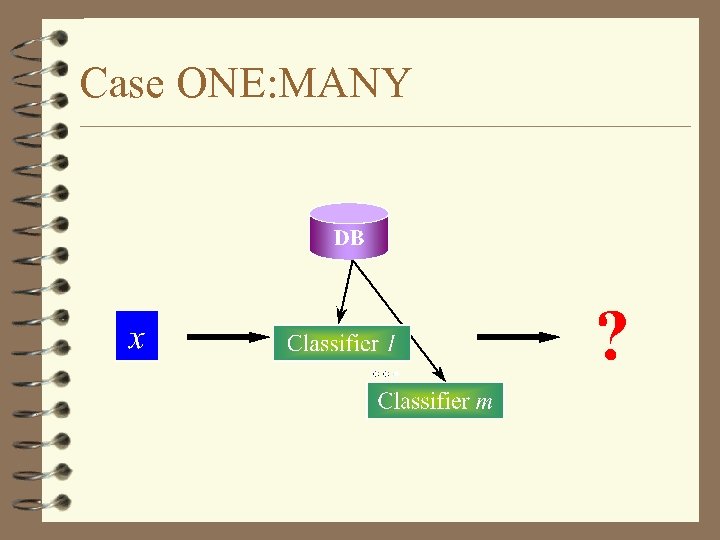

Case ONE: MANY

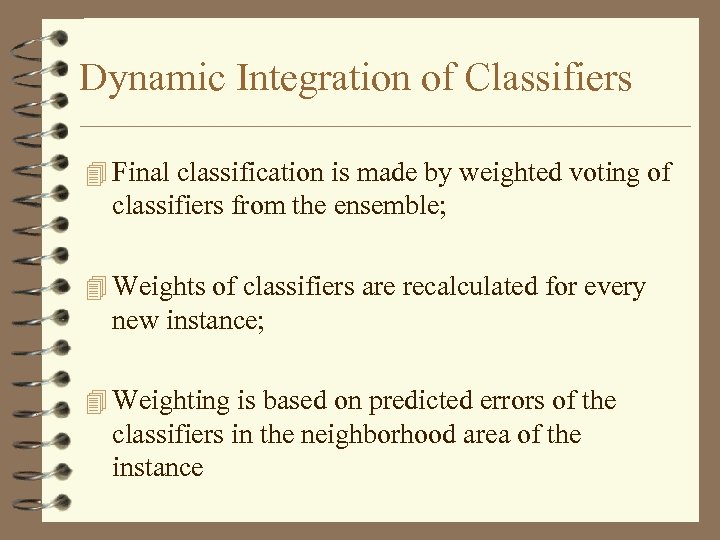

Dynamic Integration of Classifiers

- The final classification is made by weighted voting of the classifiers in the ensemble;
- the weights of the classifiers are recalculated for every new instance;
- the weighting is based on the predicted errors of the classifiers in the neighborhood of the instance.
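The three points above can be illustrated with a short sketch. It assumes, without confirmation from the slides, that the neighborhood is the k nearest training instances under Euclidean distance and that a classifier's weight is one minus its mean error there; the names `dynamic_vote` and `local_errors` are hypothetical.

```python
from collections import defaultdict


def dynamic_vote(x, classifiers, train_X, local_errors, k=3):
    """Classify x by weighted voting with instance-specific weights.

    classifiers:  list of callables mapping an instance to a class label.
    train_X:      list of training instances (tuples of numbers).
    local_errors: local_errors[j][i] is 1 if classifier j misclassified
                  training instance i (e.g. in cross-validation), else 0.
    """
    def sq_dist(a, b):
        return sum((p - q) ** 2 for p, q in zip(a, b))

    # Neighborhood of x: indices of the k nearest training instances.
    nearest = sorted(range(len(train_X)),
                     key=lambda i: sq_dist(x, train_X[i]))[:k]
    votes = defaultdict(float)
    for j, clf in enumerate(classifiers):
        # Predicted error of classifier j near x = its mean error on the neighbors.
        predicted_error = sum(local_errors[j][i] for i in nearest) / len(nearest)
        votes[clf(x)] += 1.0 - predicted_error
    return max(votes, key=votes.get)


train_X = [(0,), (1,), (2,), (8,), (9,), (10,)]
always_low = lambda inst: "low"     # toy classifier, right only for small values
always_high = lambda inst: "high"   # toy classifier, right only for large values
errors = [[0, 0, 0, 1, 1, 1],
          [1, 1, 1, 0, 0, 0]]

print(dynamic_vote((1.5,), [always_low, always_high], train_X, errors))  # low
print(dynamic_vote((9.2,), [always_low, always_high], train_X, errors))  # high
```

Because the weights are recomputed per instance, the same ensemble favors a different classifier in different regions of the instance space.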

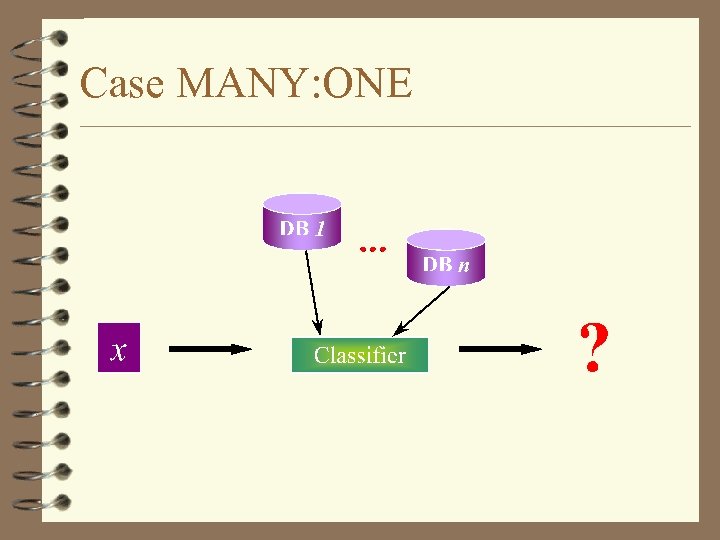

Case MANY: ONE

Integration of Databases

- The final classification of an instance is obtained by weighted voting of the predictions made by the classifier for every database separately;
- the weighting is based on the integral of the classifier's error function across each database.
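A possible reading of this scheme is sketched below. The assumptions are stated plainly: the "integral of the error function" is approximated by the mean 0/1 error of the classifier over a database, and the weight of a database is one minus that mean. The function name and the toy data are hypothetical.

```python
from collections import defaultdict


def integrate_databases(predictions, errors):
    """Combine per-database predictions by weighted voting.

    predictions[d]: label predicted by the classifier trained on database d.
    errors[d]:      per-instance 0/1 errors of that classifier on database d.
    """
    votes = defaultdict(float)
    for d, label in predictions.items():
        # Discrete stand-in for the integral of the error function over d.
        mean_error = sum(errors[d]) / len(errors[d])
        votes[label] += 1.0 - mean_error
    return max(votes, key=votes.get)


preds = {"db1": "sick", "db2": "healthy", "db3": "healthy"}
errs = {"db1": [0, 0, 0, 0], "db2": [1, 1, 0, 1], "db3": [1, 0, 1, 1]}

print(integrate_databases(preds, errs))  # sick
```

Here one accurate database (weight 1.0) outvotes two inaccurate ones (weight 0.25 each), unlike simple majority voting.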

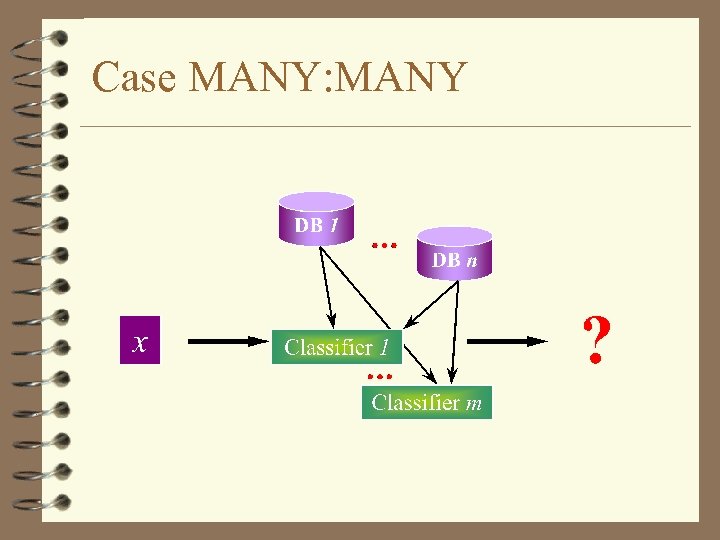

Case MANY: MANY

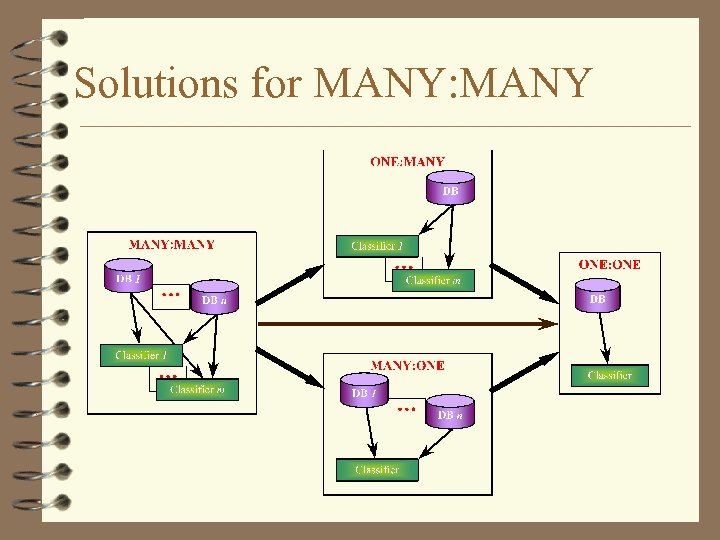

Solutions for MANY: MANY

Decontextualization of Predictions

- Sometimes the actual value cannot be predicted as a weighted mean of the individual predictions of the classifiers in the ensemble;
- this means that the actual value lies outside the range of the predictions;
- this happens when the classifiers are affected by the same type of context but with different strength;
- the result is a trend among the predictions, from the less powerful context to the most powerful one;
- in this case the actual value can be obtained as the result of "decontextualization" of the individual predictions.

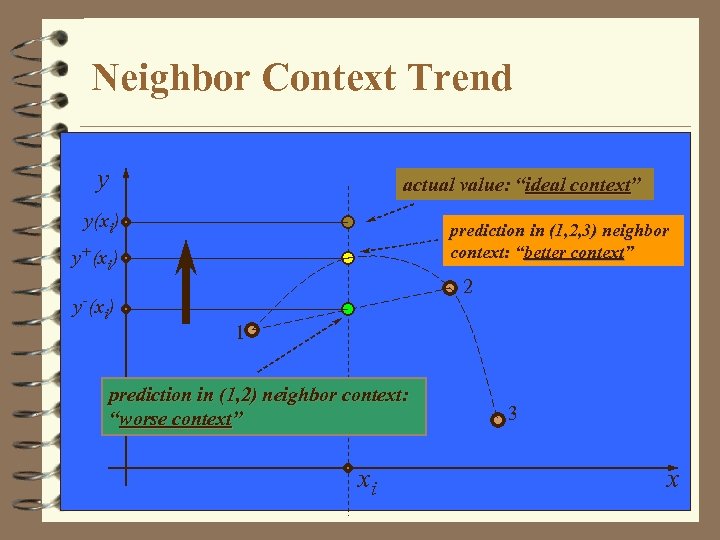

Neighbor Context Trend

[Figure: at point xi, the prediction y-(xi) made in the (1, 2) neighbor context ("worse context") and the prediction y+(xi) made in the (1, 2, 3) neighbor context ("better context") are compared with the actual value y(xi) ("ideal context").]

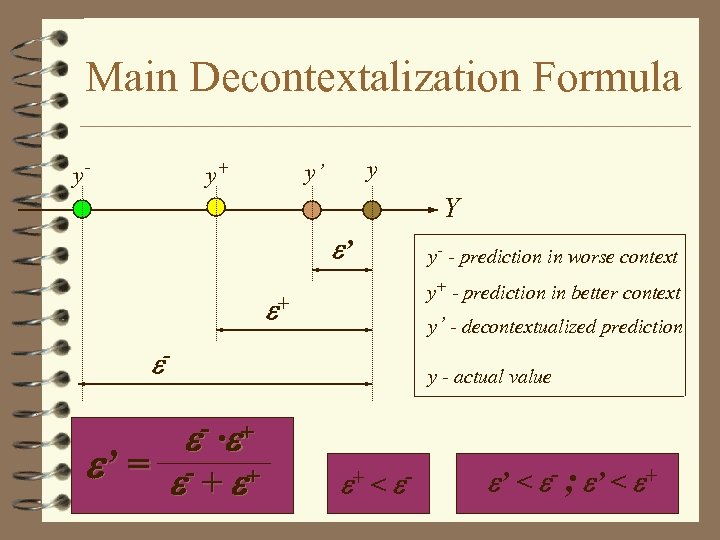

Main Decontextualization Formula

- y- : prediction in the worse context
- y+ : prediction in the better context
- y' : decontextualized prediction
- y : actual value

$$\varepsilon' = \frac{\varepsilon^- \cdot \varepsilon^+}{\varepsilon^- + \varepsilon^+}; \qquad \varepsilon^+ < \varepsilon^-; \quad \varepsilon' < \varepsilon^-; \quad \varepsilon' < \varepsilon^+$$

Here $\varepsilon^-$, $\varepsilon^+$ and $\varepsilon'$ denote the quantities attached to y-, y+ and y' respectively; the symbol itself is not legible in the slide text.

Some Notes

- Dynamic integration of classifiers, based on locally adaptive weights of the classifiers, handles the case «One Dataset - Many Classifiers»;
- integration of databases, based on their integral weights relative to classification accuracy, handles the case «One Classifier - Many Datasets»;
- successive or parallel application of the two algorithms above allows a variety of solutions for the case «Many Classifiers - Many Datasets»;
- decontextualization, as the opposite of weighted voting as a way of integrating classifiers, handles the context of classification when there is a trend.

Published in

- Puuronen S., Terziyan V., Logvinovsky A., Mining Several Data Bases with an Ensemble of Classifiers, In: T. Bench-Capon, G. Soda and M. Tjoa (Eds.), Database and Expert Systems Applications, Lecture Notes in Computer Science, Springer-Verlag, V. 1677, 1999, pp. 882-891.

Other Related Publications

- Terziyan V., Tsymbal A., Puuronen S., The Decision Support System for Telemedicine Based on Multiple Expertise, International Journal of Medical Informatics, Elsevier, V. 49, No. 2, 1998, pp. 217-229.
- Tsymbal A., Puuronen S., Terziyan V., Arbiter Meta-Learning with Dynamic Selection of Classifiers and its Experimental Investigation, In: J. Eder, I. Rozman, and T. Welzer (Eds.), Advances in Databases and Information Systems, Lecture Notes in Computer Science, Springer-Verlag, Vol. 1691, 1999, pp. 205-217.
- Skrypnik I., Terziyan V., Puuronen S., Tsymbal A., Learning Feature Selection for Medical Databases, In: Proceedings of the 12th IEEE Symposium on Computer-Based Medical Systems CBMS'99, Stamford, CT, USA, June 1999, IEEE CS Press, pp. 53-58.
- Puuronen S., Terziyan V., Tsymbal A., A Dynamic Integration Algorithm for an Ensemble of Classifiers, In: Zbigniew W. Ras, Andrzej Skowron (Eds.), Foundations of Intelligent Systems: 11th International Symposium ISMIS'99, Warsaw, Poland, June 1999, Lecture Notes in Artificial Intelligence, V. 1609, Springer-Verlag, pp. 592-600.

An Interval Approach to Discover Knowledge from Multiple Fuzzy Estimations

The Problem of Interval Estimation

- Measurements (as well as expert opinions) are not absolutely accurate.
- The measurement result is expected to lie in an interval around the actual value.
- This inaccuracy makes it necessary to estimate the resulting inaccuracy of data processing.
- When experts are asked to estimate the value of some parameter, intervals are commonly used to describe degrees of belief.

Noise of an Interval Estimation

- In many real-life cases there is also some noise that prevents direct measurement of parameters.
- The noise can be considered as an undesirable effect (context) on the evaluation of a parameter.
- Different measurement instruments, as well as different experts, have different resistance to the influence of noise.
- Using measurements from several different instruments, as well as estimations from multiple experts, we try to discover the effect caused by the noise and thus derive the decontextualized measurement result.

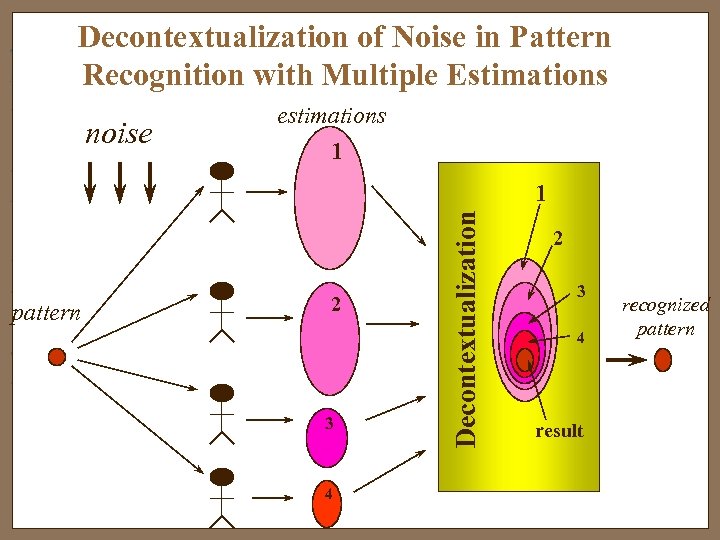

Decontextualization of Noise in Pattern Recognition with Multiple Estimations

[Figure: a pattern affected by noise yields estimations 1-4; decontextualization of estimations 1-4 gives the result, the recognized pattern.]

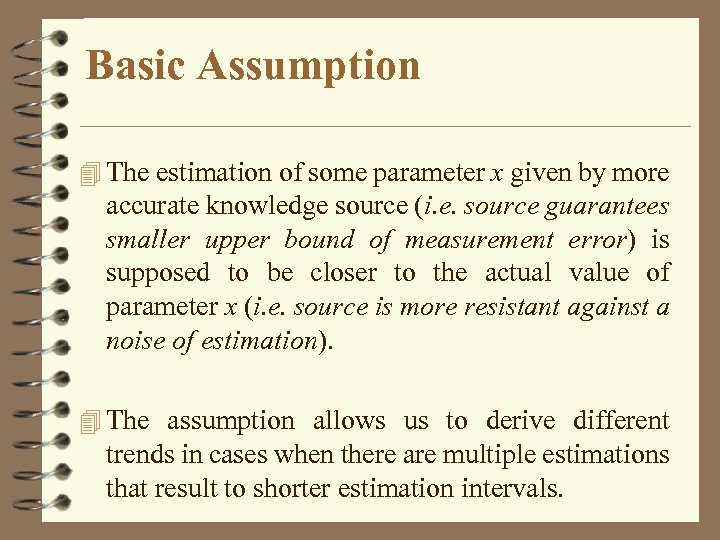

Basic Assumption

- The estimation of some parameter x given by a more accurate knowledge source (i.e. a source that guarantees a smaller upper bound on the measurement error) is supposed to be closer to the actual value of x (i.e. the source is more resistant to estimation noise).
- This assumption allows us to derive different trends in cases where multiple estimations lead to shorter estimation intervals.

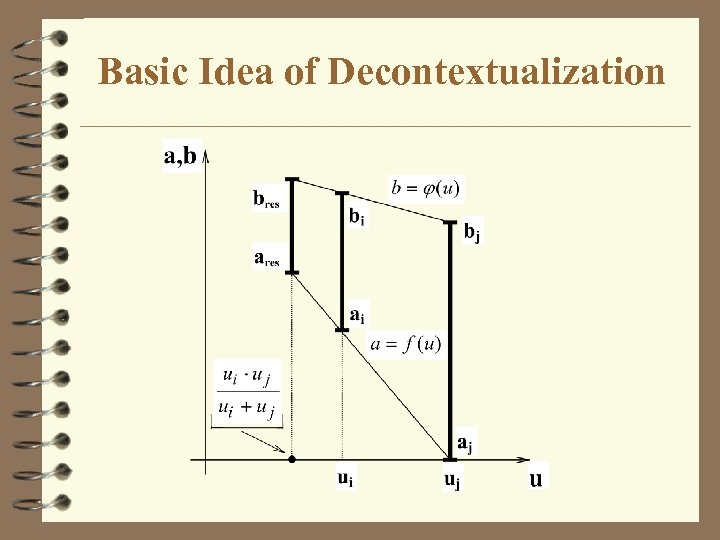

Basic Idea of Decontextualization

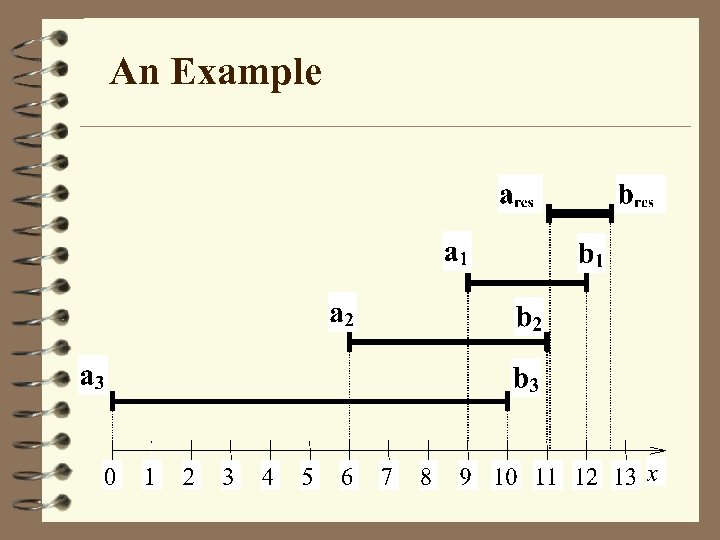

An Example

Some Notes

- If you have several opinions (estimations, recognition results, solutions, etc.) with different degrees of uncertainty, you can select the most precise one; however,
- it seems more reasonable to order the opinions from the worst to the best and try to recognize a trend of uncertainty, which helps you to derive an opinion more precise than the best one.
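One way to picture "following the trend beyond the best opinion" is the sketch below. It is not the formula from the slides or the cited papers: it simply assumes a linear trend and extrapolates each interval bound, in the direction it moved from the worse interval to the better one, until the interval width reaches zero. The function name `extrapolate_trend` is hypothetical.

```python
def extrapolate_trend(worse, better):
    """Extrapolate the trend from a wider interval to a narrower one.

    worse, better: (low, high) intervals for the same parameter, where
    `better` is strictly narrower. Each bound continues to move as it did
    between the two estimates, in proportion to the remaining width
    (ASSUMPTION: the trend is linear in the interval width).
    """
    (a1, b1), (a2, b2) = worse, better
    u1, u2 = b1 - a1, b2 - a2          # uncertainties (interval widths)
    if not 0 <= u2 < u1:
        raise ValueError("the better interval must be narrower than the worse one")
    step = u2 / (u1 - u2)              # how far to continue along the trend
    return a2 + (a2 - a1) * step, b2 + (b2 - b1) * step


print(extrapolate_trend((0, 8), (3, 7)))  # (6.0, 6.0)
```

In this toy case the lower bound rose by 3 and the upper bound fell by 1 between the two opinions, so continuing the same trend gives an estimate nearer the upper end of the better interval than its midpoint.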

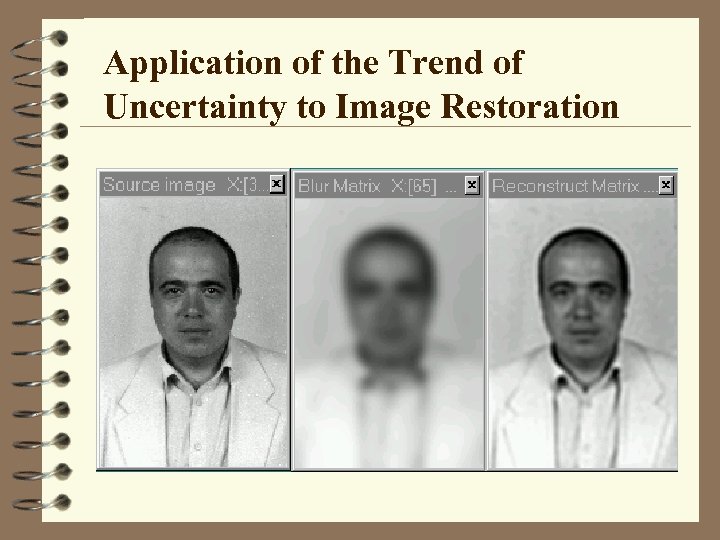

Application of the Trend of Uncertainty to Image Restoration

Published and Further Developed in

- Terziyan V., Puuronen S., Kaikova H., Handling Uncertainty by Decontextualizing Estimated Intervals, In: Proceedings of MISC'99 Workshop on Applications of Interval Analysis to Systems and Control with special emphasis on recent advances in Modal Interval Analysis, 24-26 February 1999, Universitat de Girona, Spain, pp. 111-121.
- Terziyan V., Puuronen S., Kaikova H., Interval-Based Parameter Recognition with the Trends in Multiple Estimations, Pattern Recognition and Image Analysis: Advances of Mathematical Theory and Applications, Interperiodica Publishing, V. 9, No. 4, August 1999.

Flexible Arithmetic for Huge Numbers with Recursive Series of Operations

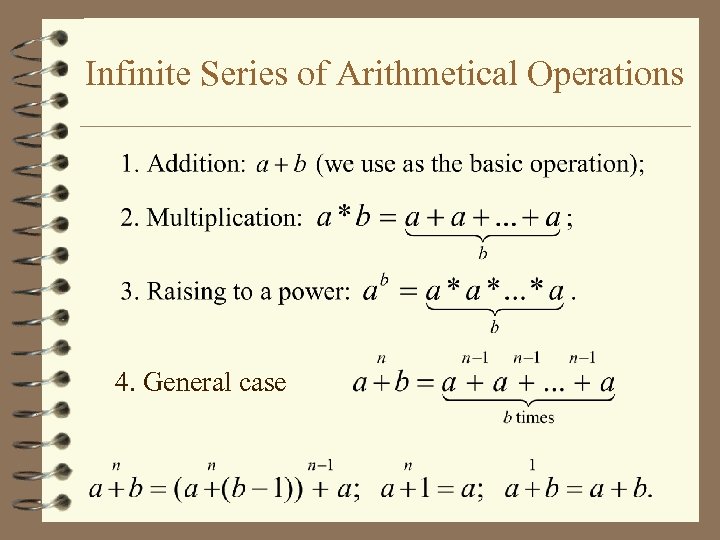

Infinite Series of Arithmetical Operations 4. General case

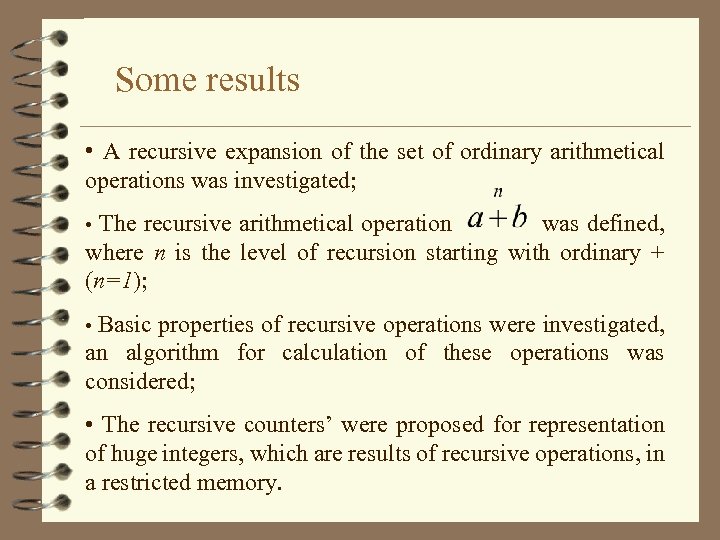

Some results

- A recursive expansion of the set of ordinary arithmetical operations was investigated;
- the recursive arithmetical operation was defined, where n is the level of recursion, starting with ordinary + (n=1);
- basic properties of recursive operations were investigated, and an algorithm for calculating these operations was considered;
- "recursive counters" were proposed for representing, in restricted memory, the huge integers that result from recursive operations.
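The exact definition of the recursive operation is given only in the slide image, so the sketch below assumes the standard hyperoperation sequence, indexed so that level n=1 is ordinary addition as stated above. The function name `recursive_op` is hypothetical, and the recursive counters for huge results are not modelled.

```python
def recursive_op(n, a, b):
    """Apply the level-n operation to positive integers a and b.

    Level 1 is ordinary addition; every higher level repeats the previous
    one: level 2 is multiplication, level 3 is exponentiation, level 4 is
    a power tower of height b, and so on (ASSUMED definition).
    """
    if n < 1 or a < 1 or b < 1:
        raise ValueError("n, a and b must be positive integers")
    if n == 1:
        return a + b
    result = a
    for _ in range(b - 1):
        # Fold the lower-level operation over b copies of a, right to left.
        result = recursive_op(n - 1, a, result)
    return result


print(recursive_op(1, 3, 4))   # 7
print(recursive_op(2, 3, 4))   # 12
print(recursive_op(3, 2, 10))  # 1024
print(recursive_op(4, 2, 3))   # 16
```

Results grow so quickly with n that direct evaluation is practical only for very small arguments, which is the motivation for a compact representation of huge integers.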

Published in

- Terziyan V., Tsymbal A., Puuronen S., Flexible Arithmetic For Huge Numbers with Recursive Series of Operations, In: 13th AAECC Symposium on Applied Algebra, Algebraic Algorithms, and Error-Correcting Codes, 15-19 November 1999, Hawaii, USA.

A Similarity Evaluation Technique for Cooperative Problem Solving with a Group of Agents

Goal

- The goal of this research is to develop a simple similarity evaluation technique for cooperative problem solving based on the opinions of several agents.
- Problem solving here means finding an appropriate solution to a problem among the available ones, based on the opinions of several agents.

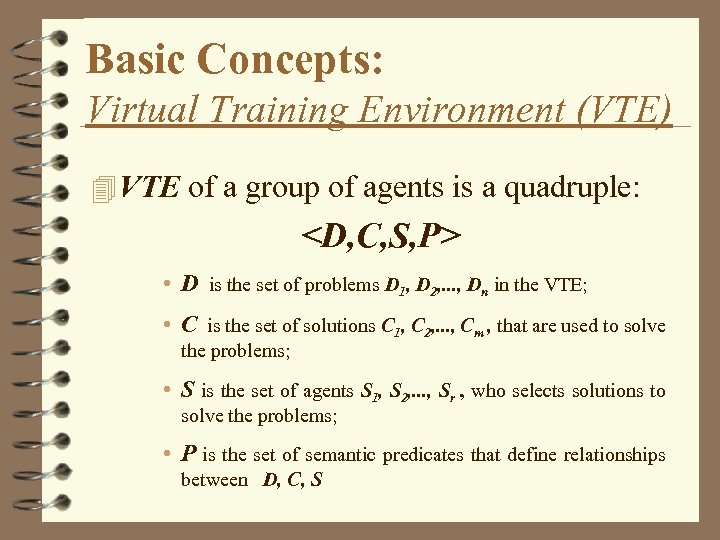

Basic Concepts: Virtual Training Environment (VTE)

- The VTE of a group of agents is a quadruple:

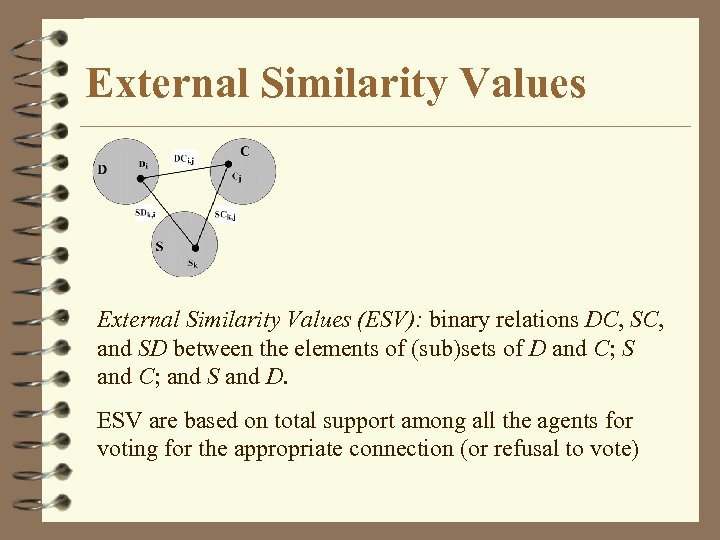

External Similarity Values (ESV): binary relations DC, SC, and SD between the elements of (sub)sets of D and C; S and C; and S and D. ESV are based on total support among all the agents for voting for the appropriate connection (or refusal to vote)

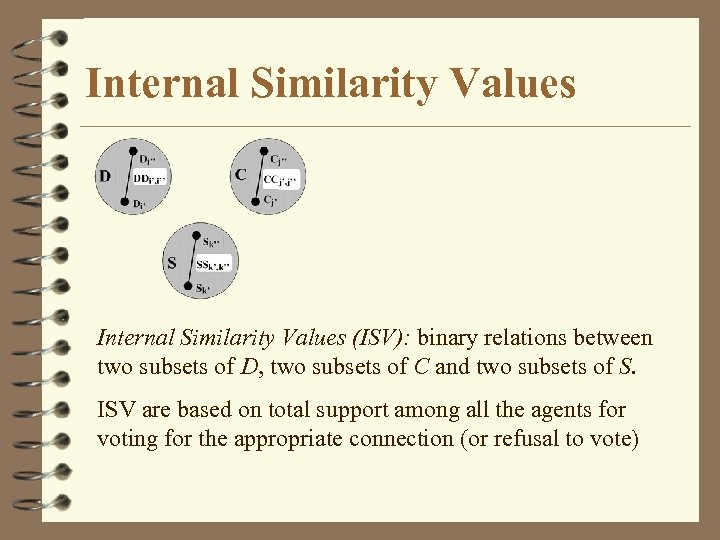

Internal Similarity Values (ISV): binary relations between two subsets of D, two subsets of C, and two subsets of S. ISV are based on the total support among all the agents for voting for the appropriate connection (or refusal to vote).

Why We Need Similarity Values (or a Distance Measure)

• The distance between problems is used by agents to recognize the nearest solved problems for any new problem.
• The distance between solutions is necessary to compare and evaluate solutions made by different agents.
• The distance between agents is useful for evaluating the weights of all agents so that their opinions can be integrated by weighted voting.

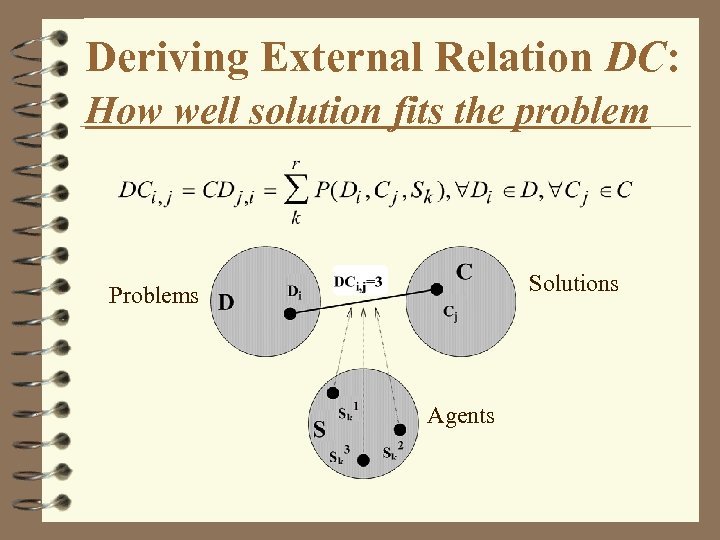

Deriving External Relation DC: How Well a Solution Fits the Problem

[Figure labels: Solutions, Problems, Agents]

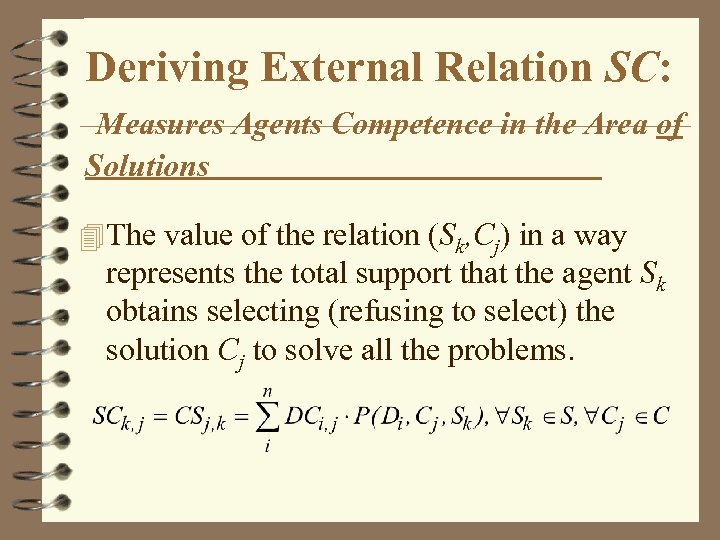

Deriving External Relation SC: Measures an Agent’s Competence in the Area of Solutions

• The value of the relation (Sk, Cj) represents the total support that agent Sk obtains by selecting (or refusing to select) solution Cj to solve all the problems.

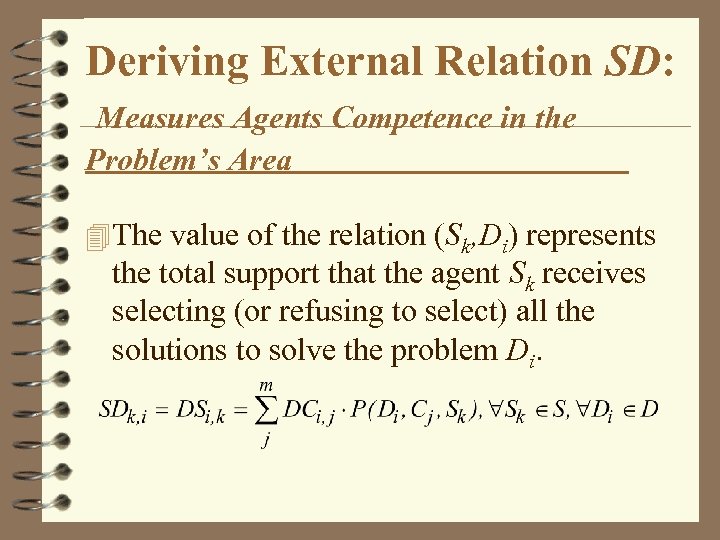

Deriving External Relation SD: Measures an Agent’s Competence in the Problems’ Area

• The value of the relation (Sk, Di) represents the total support that agent Sk receives by selecting (or refusing to select) all the solutions to solve problem Di.

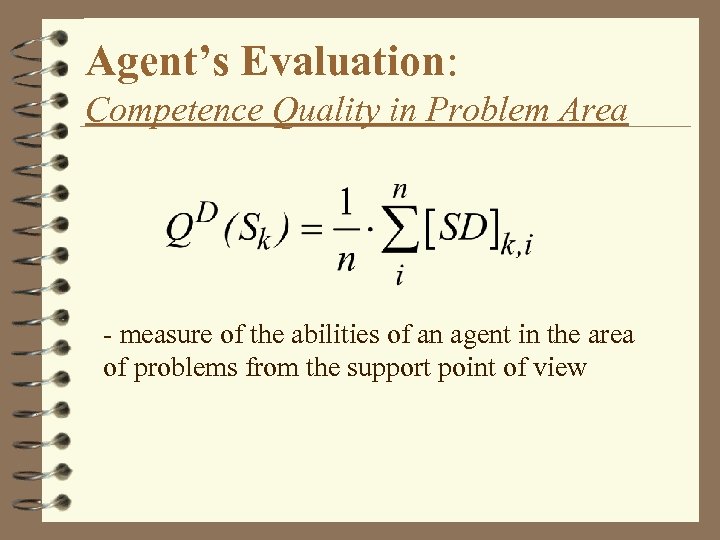

Agent’s Evaluation: Competence Quality in Problem Area - measure of the abilities of an agent in the area of problems from the support point of view

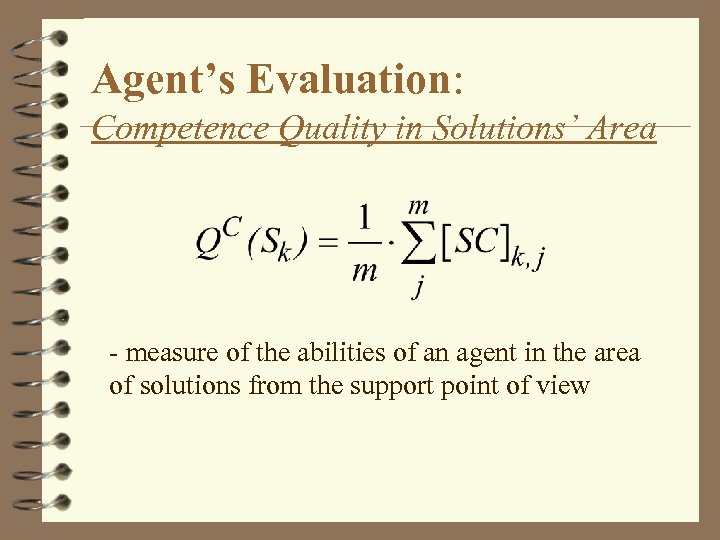

Agent’s Evaluation: Competence Quality in Solutions’ Area - measure of the abilities of an agent in the area of solutions from the support point of view

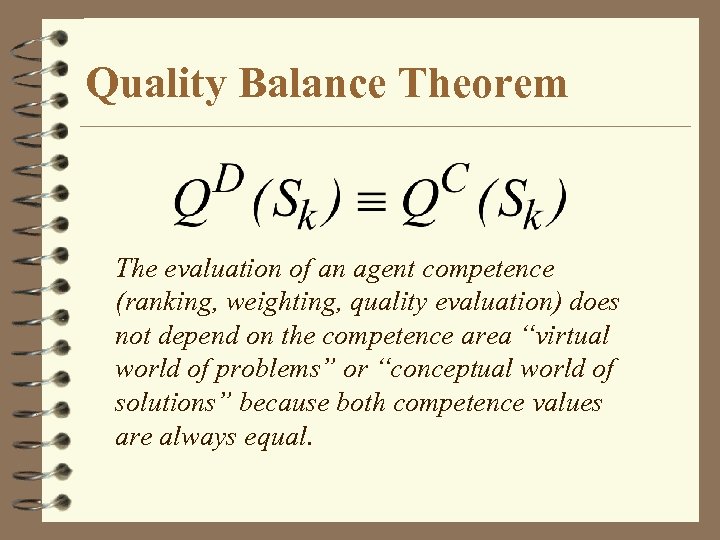

Quality Balance Theorem

The evaluation of an agent’s competence (ranking, weighting, quality evaluation) does not depend on the competence area (“virtual world of problems” or “conceptual world of solutions”), because both competence values are always equal.
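The formulas for DC, SC and SD are given in figures that are not reproduced in this text. As a rough illustration only, the sketch below assumes that each agent votes +1 (select), -1 (refuse) or 0 (no opinion) on every problem-solution pair, takes DC as the unweighted sum of the votes, and measures an agent's support as the agreement of its own votes with DC. The vote encoding, the normalisation by the number of pairs, and all names are assumptions, not the authors' definitions.

```python
import numpy as np

def external_relations(votes):
    """votes[k, i, j] in {+1, -1, 0} (assumed encoding):
    agent k selects / refuses / abstains on solution j for problem i."""
    votes = np.asarray(votes, dtype=float)
    # DC[i, j]: total support of all agents for "solution j fits problem i".
    dc = votes.sum(axis=0)
    # agreement[k, i, j]: support agent k receives for its own vote on (i, j).
    agreement = votes * dc
    sd = agreement.sum(axis=2)  # SD[k, i]: summed over solutions
    sc = agreement.sum(axis=1)  # SC[k, j]: summed over problems
    return dc, sc, sd

# Hypothetical votes: 3 agents, 3 problems, 2 solutions.
votes = [
    [[1, -1], [0, 1], [1, 0]],    # agent 0
    [[1, 0], [-1, 1], [1, -1]],   # agent 1
    [[-1, 1], [0, 1], [1, -1]],   # agent 2
]
dc, sc, sd = external_relations(votes)

n_pairs = dc.size  # number of problem-solution pairs
quality_in_problems = sd.sum(axis=1) / n_pairs
quality_in_solutions = sc.sum(axis=1) / n_pairs
print(quality_in_problems, quality_in_solutions)
```

Under these assumptions both competence values are the same double sum over problems and solutions, taken in a different order, which is why they coincide for every agent.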

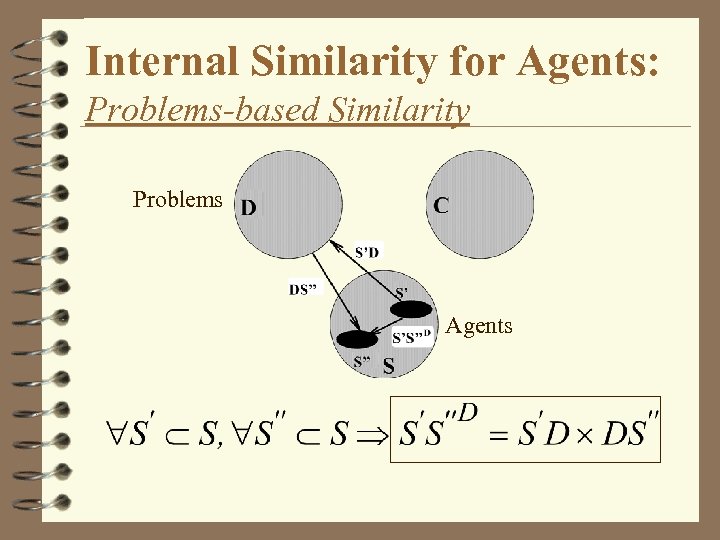

Internal Similarity for Agents: Problems-Based Similarity

[Figure labels: Problems, Agents]

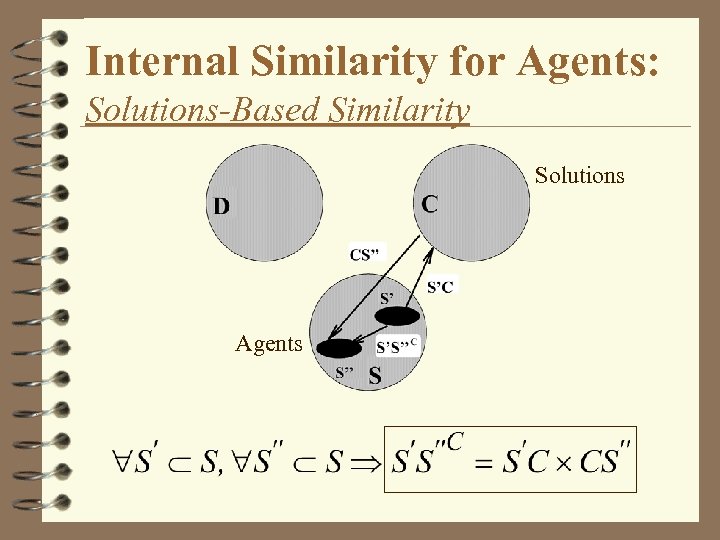

Internal Similarity for Agents: Solutions-Based Similarity

[Figure labels: Solutions, Agents]

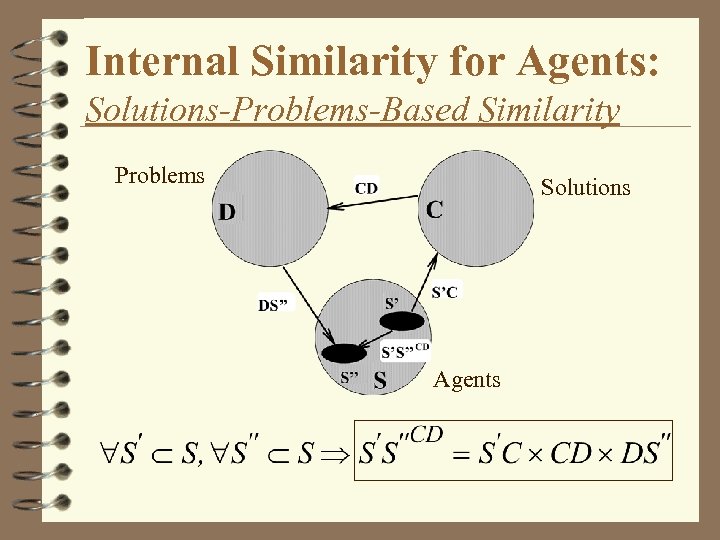

Internal Similarity for Agents: Solutions-Problems-Based Similarity

[Figure labels: Problems, Solutions, Agents]

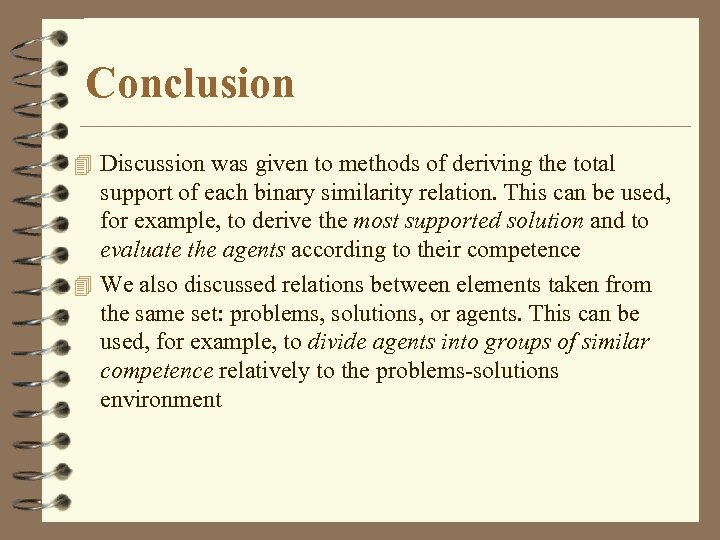

Conclusion

• We discussed methods of deriving the total support of each binary similarity relation. This can be used, for example, to derive the most supported solution and to evaluate the agents according to their competence.
• We also discussed relations between elements taken from the same set: problems, solutions, or agents. This can be used, for example, to divide agents into groups of similar competence relative to the problems-solutions environment.

Published in: Puuronen S., Terziyan V., A Similarity Evaluation Technique for Cooperative Problem Solving with a Group of Agents, In: M. Klusch, O. M. Shehory, G. Weiss (Eds.), Cooperative Information Agents III, Lecture Notes in Artificial Intelligence, Springer-Verlag, V. 1652, 1999, pp. 163-174.

On-Line Incremental Instance-Based Learning

The Problems Addressed

The following problems have been investigated for on-line learning with both human experts and artificial predictors:
• how to derive the most supported knowledge (on-line prediction or classification) from multiple experts (an ensemble of classifiers);
• how to evaluate the quality of the most supported opinion (of the ensemble prediction);
• how to make, evaluate, use and refine the ranks (weights) of all the experts (predictors) to improve the results of the on-line learning algorithm.
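The three questions above can be illustrated with a small on-line loop. The sketch below is not one of the rank refinement strategies from the cited papers; it swaps in the classic Weighted Majority update (Littlestone and Warmuth), in which the rank of every expert that erred is multiplied by a fixed penalty. The expert names, labels and penalty value are hypothetical.

```python
from collections import defaultdict

def weighted_vote(opinions, ranks):
    """Return the label with the largest total rank, plus the support per label."""
    support = defaultdict(float)
    for expert, label in opinions.items():
        support[label] += ranks[expert]
    return max(support, key=support.get), dict(support)

def refine_ranks(opinions, ranks, truth, penalty=0.5):
    """Weighted-Majority-style update: shrink the rank of each expert that erred."""
    return {
        expert: rank * (1.0 if opinions[expert] == truth else penalty)
        for expert, rank in ranks.items()
    }

ranks = {"e1": 1.0, "e2": 1.0, "e3": 1.0}
stream = [  # (opinions of the experts, true label revealed afterwards)
    ({"e1": "fault", "e2": "ok", "e3": "ok"}, "fault"),
    ({"e1": "fault", "e2": "fault", "e3": "ok"}, "fault"),
    ({"e1": "ok", "e2": "fault", "e3": "fault"}, "ok"),
]

predictions = []
for opinions, truth in stream:
    label, support = weighted_vote(opinions, ranks)
    predictions.append(label)
    ranks = refine_ranks(opinions, ranks, truth)

print(predictions)  # ['ok', 'fault', 'ok']
print(ranks)        # {'e1': 1.0, 'e2': 0.25, 'e3': 0.125}
```

The `support` dictionary returned by `weighted_vote` gives one simple handle on the quality of the ensemble opinion: the margin between the winning label and the runner-up.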

Results Published in

• Kaikova H., Terziyan V., Temporal Knowledge Acquisition From Multiple Experts, In: Shoval P. & Silberschatz A. (Eds.), Proceedings of NGITS’97, The Third International Workshop on Next Generation Information Technologies and Systems, Neve Ilan, Israel, June-July 1997, pp. 44-55.
• Puuronen S., Terziyan V., Omelayenko B., Experimental Investigation of Two Rank Refinement Strategies for Voting with Multiple Experts, Artificial Intelligence, Donetsk Institute of Artificial Intelligence, V. 2, 1988, pp. 25-41.
• Omelayenko B., Terziyan V., Puuronen S., Managing Training Examples for Fast Learning of Classifiers Ranks, In: CSIT’99, International Workshop on Computer Science and Information Technologies, January 1999, Moscow, Russia, pp. 139-148.
• Puuronen S., Terziyan V., Omelayenko B., Multiple Experts Voting: Two Rank Refinement Strategies, In: Integrating Technology & Human Decisions: Global Bridges into the 21st Century, Proceedings of the D.S.I.’99 5th International Conference, 4-7 July 1999, Athens, Greece, V. 1, pp. 634-636.

We Will Be Happy to Cooperate with You!

Advanced Diagnostics Algorithms in Online Field Device Monitoring

Vagan Terziyan (editor)
http://www.cs.jyu.fi/ai/Metso_Diagnostics.ppt

“Industrial Ontologies” Group: http://www.cs.jyu.fi/ai/OntoGroup/index.html
“Industrial Ontologies” Group, Agora Center, University of Jyväskylä, 2003

Contents

• Introduction: OntoServNet – Global “Health Care” Environment for Industrial Devices;
• Bayesian Metanetworks for Context-Sensitive Industrial Diagnostics;
• Temporal Industrial Diagnostics with Uncertainty;
• Dynamic Integration of Classification Algorithms for Industrial Diagnostics;
• Industrial Diagnostics with Real-Time Neuro-Fuzzy Systems;
• Conclusion.

Vagan Terziyan Andriy Zharko Oleksandr Kononenko Oleksiy Khriyenko

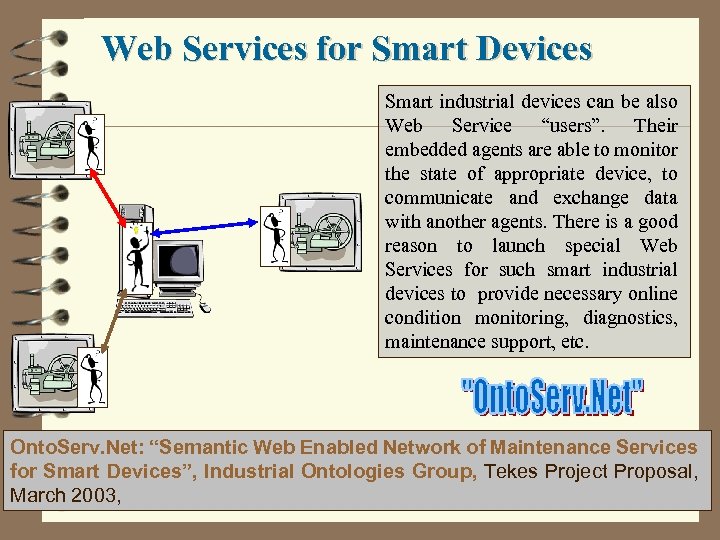

Web Services for Smart Devices

Smart industrial devices can also be Web Service “users”. Their embedded agents are able to monitor the state of the corresponding device and to communicate and exchange data with other agents. There is good reason to launch special Web Services for such smart industrial devices to provide the necessary online condition monitoring, diagnostics, maintenance support, etc.

OntoServNet: “Semantic Web Enabled Network of Maintenance Services for Smart Devices”, Industrial Ontologies Group, Tekes Project Proposal, March 2003.

Global Network of Maintenance Services

OntoServNet: “Semantic Web Enabled Network of Maintenance Services for Smart Devices”, Industrial Ontologies Group, Tekes Project Proposal, March 2003.

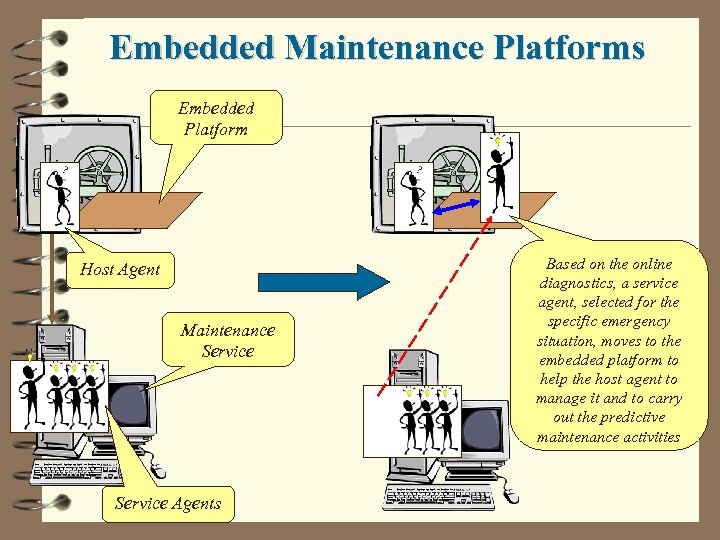

Embedded Maintenance Platforms

[Figure labels: Embedded Platform, Host Agent, Maintenance Service Agents]

Based on the online diagnostics, a service agent selected for the specific emergency situation moves to the embedded platform to help the host agent manage it and carry out the predictive maintenance activities.

OntoServNet Challenges

• A new group of Web service users: smart industrial devices.
• Internal (embedded) and external (Web-based) agent-enabled service platforms.
• The “Mobile Service Component” concept supposes that any service component can move, be executed and learn at any platform in the Service Network, including the service requestor side.
• The Semantic Peer-to-Peer concept for service network management assumes ontology-based decentralized service network management.

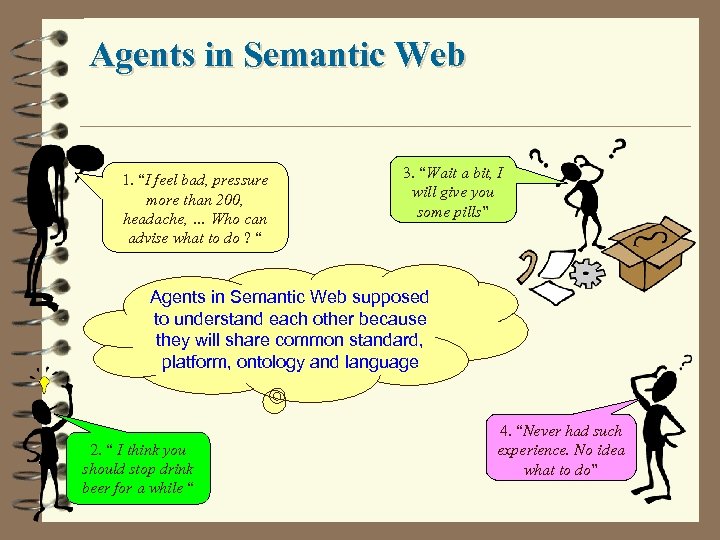

Agents in Semantic Web

1. “I feel bad, pressure more than 200, headache, … Who can advise what to do?”
2. “I think you should stop drinking beer for a while.”
3. “Wait a bit, I will give you some pills.”
4. “Never had such an experience. No idea what to do.”

Agents in the Semantic Web are supposed to understand each other because they will share a common standard, platform, ontology and language.

The Challenge: Global Understanding eNvironment (GUN)

How can we make entities from our physical world understand each other when necessary? … It’s elementary! But not easy!! Just make agents out of them!!!

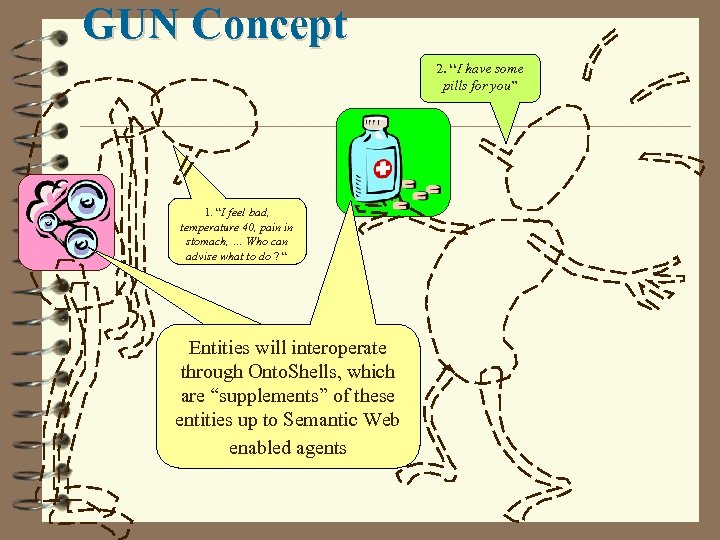

GUN Concept

1. “I feel bad, temperature 40, pain in stomach, … Who can advise what to do?”
2. “I have some pills for you.”

Entities will interoperate through OntoShells, which are “supplements” of these entities up to Semantic Web enabled agents.

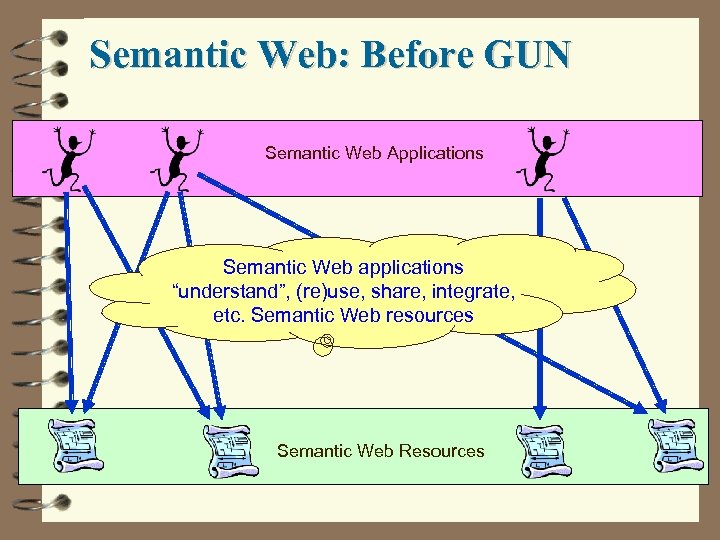

Semantic Web: Before GUN

Semantic Web applications “understand”, (re)use, share, integrate, etc. Semantic Web resources.

[Figure labels: Semantic Web Applications, Semantic Web Resources]

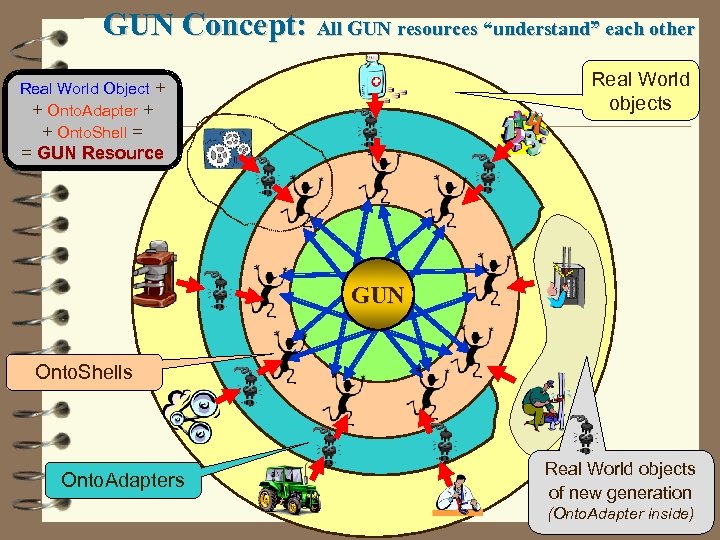

GUN Concept: All GUN resources “understand” each other

Real World Object + OntoAdapter + OntoShell = GUN Resource

[Figure labels: GUN, OntoShells, OntoAdapters, Real World objects, Real World objects of new generation (OntoAdapter inside)]

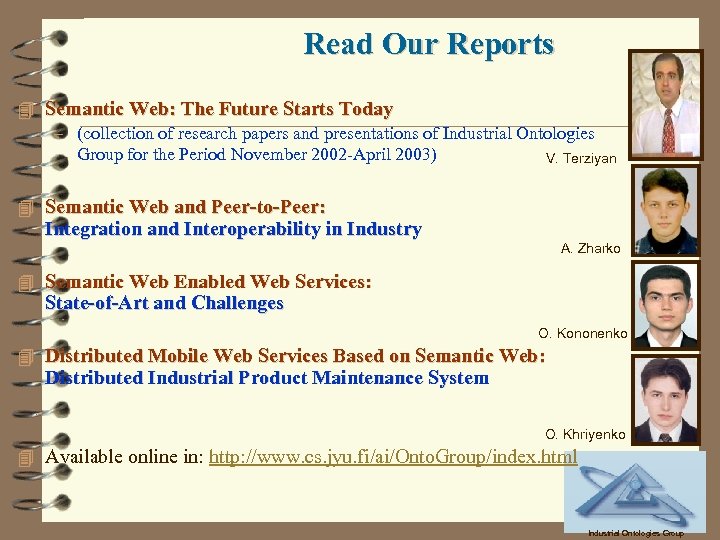

Read Our Reports

• Semantic Web: The Future Starts Today (collection of research papers and presentations of the Industrial Ontologies Group for the period November 2002-April 2003), V. Terziyan
• Semantic Web and Peer-to-Peer: Integration and Interoperability in Industry, A. Zharko
• Semantic Web Enabled Web Services: State-of-Art and Challenges, O. Kononenko
• Distributed Mobile Web Services Based on Semantic Web: Distributed Industrial Product Maintenance System, O. Khriyenko
• Available online at: http://www.cs.jyu.fi/ai/OntoGroup/index.html (Industrial Ontologies Group)

Vagan Terziyan Oleksandra Vitko

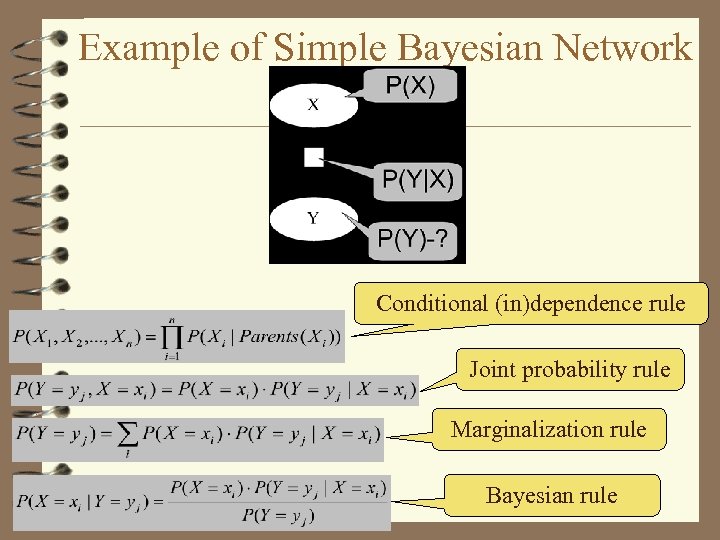

Example of Simple Bayesian Network

• Conditional (in)dependence rule
• Joint probability rule
• Marginalization rule
• Bayesian rule
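The slide's own formulas are in a figure that is not reproduced here. For reference, the four rules in their standard textbook form for discrete variables can be written as follows; the symbols $X$, $Y$, $x_i$, $y_j$ are generic and are not taken from the original figure.

```latex
\begin{align*}
% Conditional (in)dependence: each node depends only on its parents
P(X_1, \ldots, X_n) &= \prod_{i=1}^{n} P\bigl(X_i \mid \mathrm{Parents}(X_i)\bigr) \\
% Joint probability rule
P(Y = y_j, X = x_i) &= P(X = x_i)\, P(Y = y_j \mid X = x_i) \\
% Marginalization rule
P(Y = y_j) &= \sum_{i} P(X = x_i)\, P(Y = y_j \mid X = x_i) \\
% Bayesian rule
P(X = x_i \mid Y = y_j) &= \frac{P(X = x_i)\, P(Y = y_j \mid X = x_i)}{P(Y = y_j)}
\end{align*}
```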

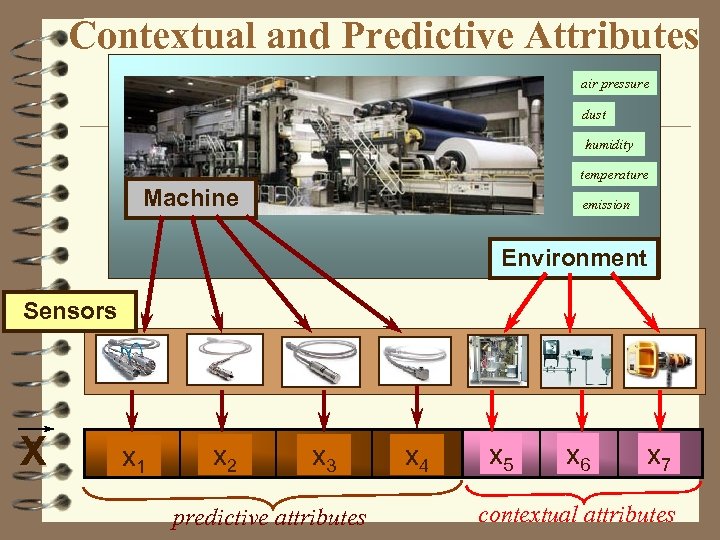

Contextual and Predictive Attributes

[Figure: a Machine and its Environment observed by Sensors; attribute vector X = (x1, …, x7) divided into predictive attributes and contextual attributes; quantities shown: air pressure, dust, humidity, temperature, emission]

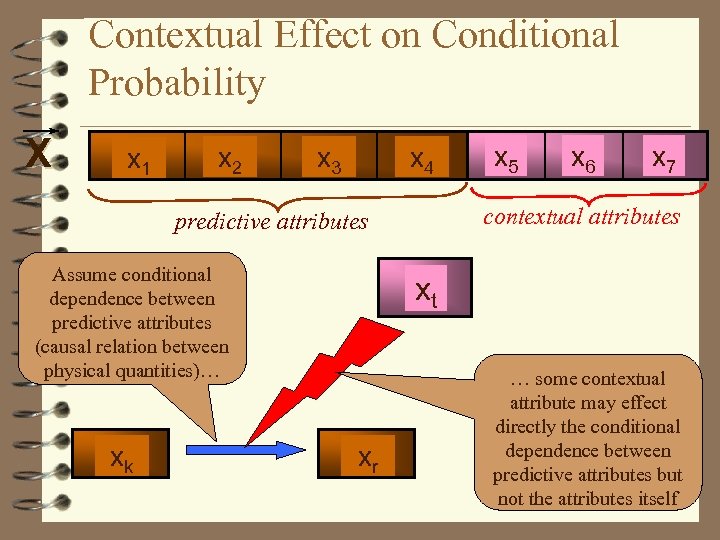

Contextual Effect on Conditional Probability

Assume a conditional dependence between predictive attributes (a causal relation between physical quantities). Some contextual attribute may directly affect the conditional dependence between predictive attributes, but not the attributes themselves.

[Figure labels: X = (x1, …, x7); predictive attributes; contextual attributes; xk, xt, xr]

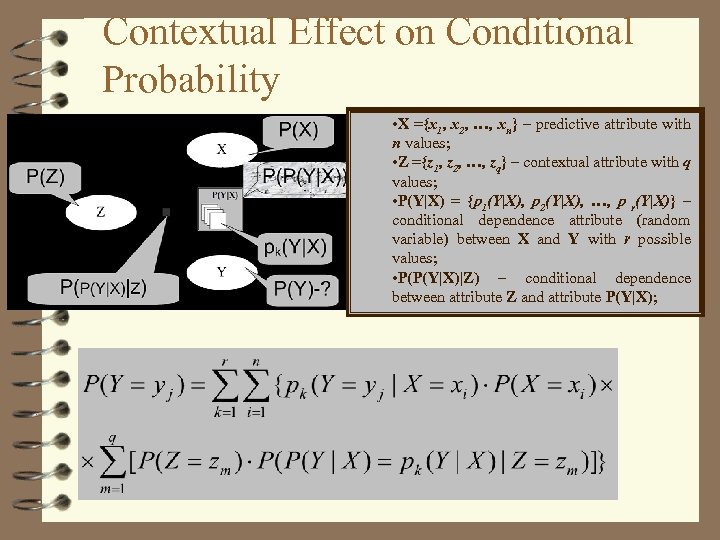

Contextual Effect on Conditional Probability

• $X = \{x_1, x_2, \ldots, x_n\}$: predictive attribute with $n$ values;
• $Z = \{z_1, z_2, \ldots, z_q\}$: contextual attribute with $q$ values;
• $P(Y|X) = \{p_1(Y|X), p_2(Y|X), \ldots, p_r(Y|X)\}$: conditional dependence attribute (random variable) between $X$ and $Y$ with $r$ possible values;
• $P(P(Y|X)|Z)$: conditional dependence between attribute $Z$ and attribute $P(Y|X)$.
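To make these definitions concrete, the sketch below computes the marginal distribution of Y when the context Z selects, probabilistically, which of the r candidate conditional tables is in force. It assumes that X and Z are independent and that their distributions P(X) and P(Z) are known; the function name, array layout and numbers are illustrative assumptions, not taken from the slides.

```python
import numpy as np

def marginal_y(p_x, candidates, p_choice_given_z, p_z):
    """P(Y) = sum_z P(z) sum_k P(p_k | z) sum_x p_k(Y | x) P(x).

    p_x:               shape (n,)       P(X = x_i)
    candidates:        shape (r, n, m)  candidates[k, i, j] = p_k(Y = y_j | X = x_i)
    p_choice_given_z:  shape (q, r)     P(P(Y|X) = p_k | Z = z)
    p_z:               shape (q,)       P(Z = z)
    """
    p_x = np.asarray(p_x, dtype=float)
    candidates = np.asarray(candidates, dtype=float)
    p_choice_given_z = np.asarray(p_choice_given_z, dtype=float)
    p_z = np.asarray(p_z, dtype=float)

    # Marginalize the context out: P(P(Y|X) = p_k), shape (r,).
    p_choice = p_z @ p_choice_given_z
    # Expected conditional table under the context, shape (n, m).
    effective_cpt = np.tensordot(p_choice, candidates, axes=1)
    # Ordinary marginalization over X, shape (m,).
    return p_x @ effective_cpt

p_y = marginal_y(
    p_x=[0.6, 0.4],
    candidates=[
        [[0.9, 0.1], [0.2, 0.8]],   # p_1(Y|X): strong dependence
        [[0.5, 0.5], [0.5, 0.5]],   # p_2(Y|X): no dependence
    ],
    p_choice_given_z=[[1.0, 0.0], [0.25, 0.75]],
    p_z=[0.5, 0.5],
)
print(p_y)  # approximately [0.575 0.425]
```

If the context is observed rather than uncertain, the same function applies with `p_z` set to a one-hot vector.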

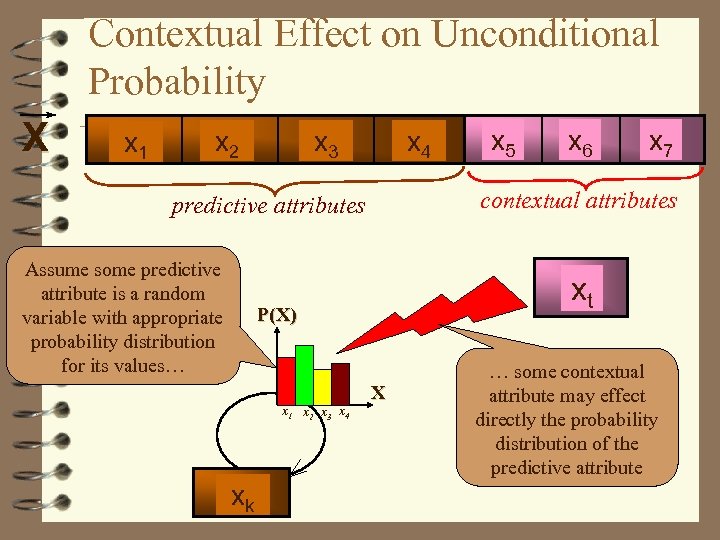

Contextual Effect on Unconditional Probability

Assume that some predictive attribute is a random variable with a probability distribution over its values. Some contextual attribute may directly affect the probability distribution of the predictive attribute.

[Figure labels: X = (x1, …, x7); predictive attributes; contextual attributes; P(X) over the values x1, x2, x3, x4]

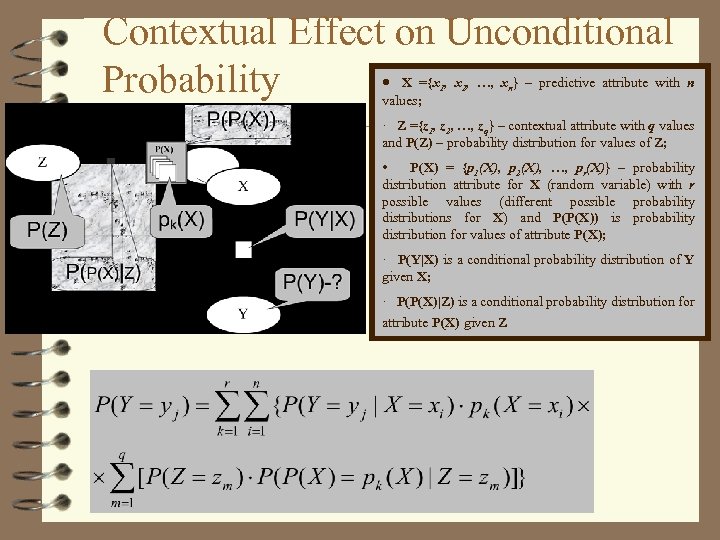

Contextual Effect on Unconditional Probability

• $X = \{x_1, x_2, \ldots, x_n\}$: predictive attribute with $n$ values;
• $Z = \{z_1, z_2, \ldots, z_q\}$: contextual attribute with $q$ values, and $P(Z)$: probability distribution for the values of $Z$;
• $P(X) = \{p_1(X), p_2(X), \ldots, p_r(X)\}$: probability distribution attribute for $X$ (random variable) with $r$ possible values (different possible probability distributions for $X$), and $P(P(X))$: probability distribution for the values of attribute $P(X)$;
• $P(Y|X)$: conditional probability distribution of $Y$ given $X$;
• $P(P(X)|Z)$: conditional probability distribution for attribute $P(X)$ given $Z$.
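One way to chain these quantities into an estimate of the target distribution is plain marginalization, first over the context and then over the candidate distributions. The derivation below is a sketch that uses only the symbols defined above; it is not copied from the original figure.

```latex
\begin{align*}
P\bigl(P(X) = p_k\bigr) &= \sum_{j=1}^{q} P\bigl(P(X) = p_k \mid Z = z_j\bigr)\, P(Z = z_j), \\
P(X = x_i) &= \sum_{k=1}^{r} p_k(X = x_i)\, P\bigl(P(X) = p_k\bigr), \\
P(Y = y) &= \sum_{i=1}^{n} P(Y = y \mid X = x_i)\, P(X = x_i).
\end{align*}
```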

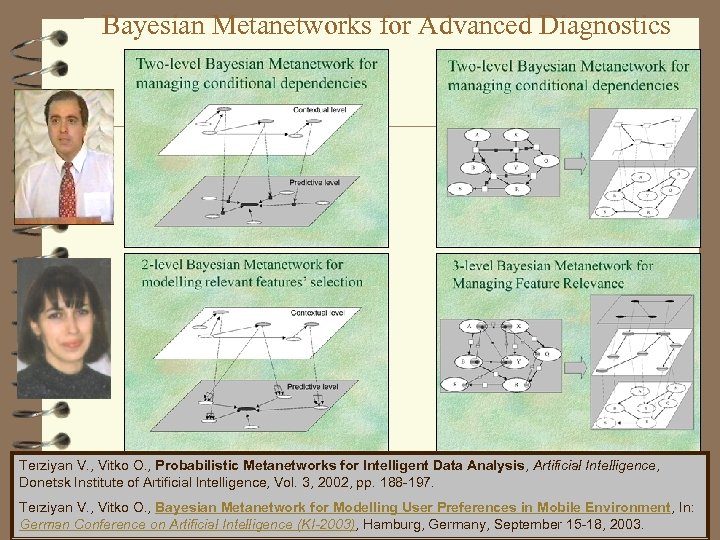

Bayesian Metanetworks for Advanced Diagnostics

• Terziyan V., Vitko O., Probabilistic Metanetworks for Intelligent Data Analysis, Artificial Intelligence, Donetsk Institute of Artificial Intelligence, Vol. 3, 2002, pp. 188-197.
• Terziyan V., Vitko O., Bayesian Metanetwork for Modelling User Preferences in Mobile Environment, In: German Conference on Artificial Intelligence (KI-2003), Hamburg, Germany, September 15-18, 2003.

Two-level Bayesian Metanetwork for managing conditional dependencies

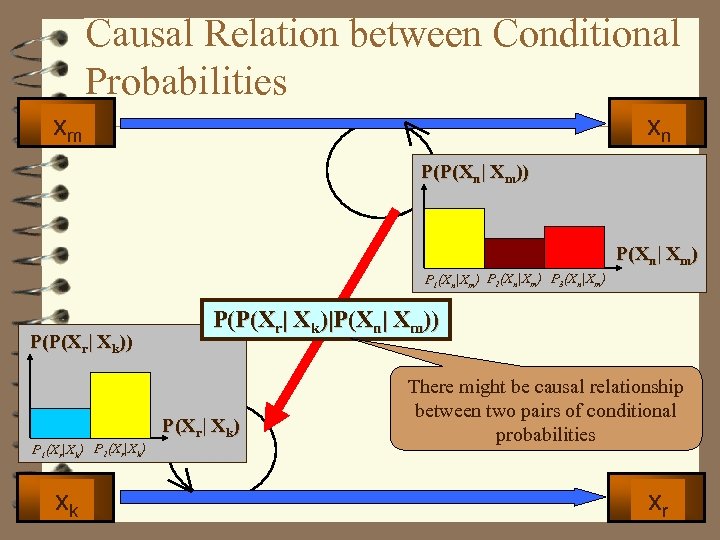

Causal Relation between Conditional Probabilities

There might be a causal relationship between two pairs of conditional probabilities.

[Figure: nodes $x_m$, $x_n$, $x_k$, $x_r$; the conditional dependence $P(X_n|X_m)$ takes the values $P_1(X_n|X_m)$, $P_2(X_n|X_m)$, $P_3(X_n|X_m)$ with distribution $P(P(X_n|X_m))$; the conditional dependence $P(X_r|X_k)$ takes the values $P_1(X_r|X_k)$, $P_2(X_r|X_k)$ and is linked to it by $P(P(X_r|X_k)|P(X_n|X_m))$]

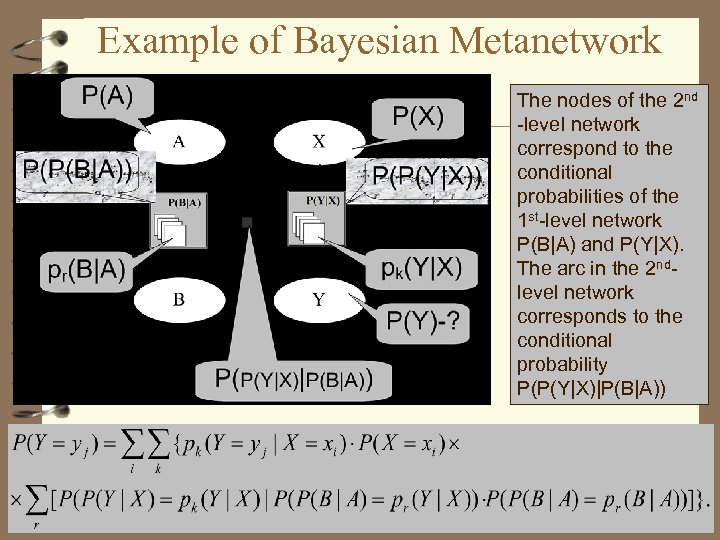

Example of Bayesian Metanetwork

The nodes of the 2nd-level network correspond to the conditional probabilities of the 1st-level network, P(B|A) and P(Y|X). The arc in the 2nd-level network corresponds to the conditional probability P(P(Y|X)|P(B|A)).

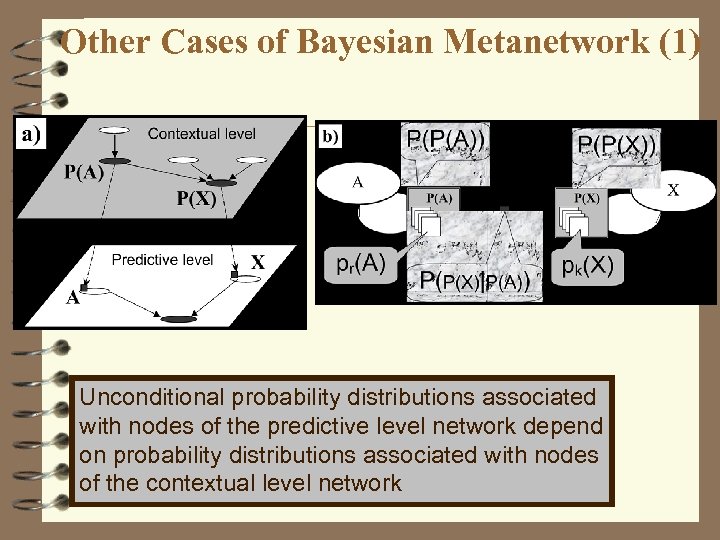

Other Cases of Bayesian Metanetwork (1) Unconditional probability distributions associated with nodes of the predictive level network depend on probability distributions associated with nodes of the contextual level network

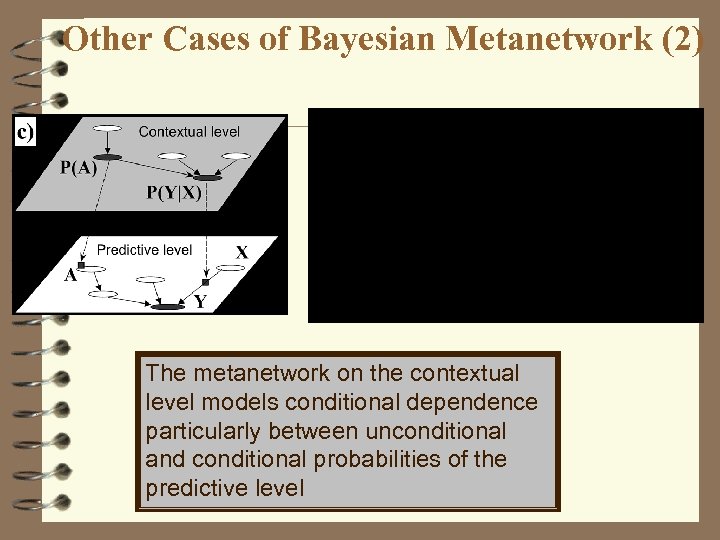

Other Cases of Bayesian Metanetwork (2) The metanetwork on the contextual level models conditional dependence particularly between unconditional and conditional probabilities of the predictive level

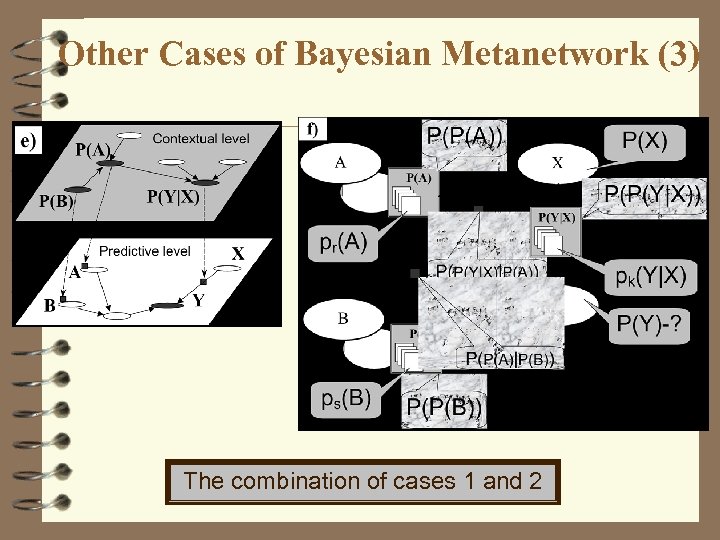

Other Cases of Bayesian Metanetwork (3) The combination of cases 1 and 2

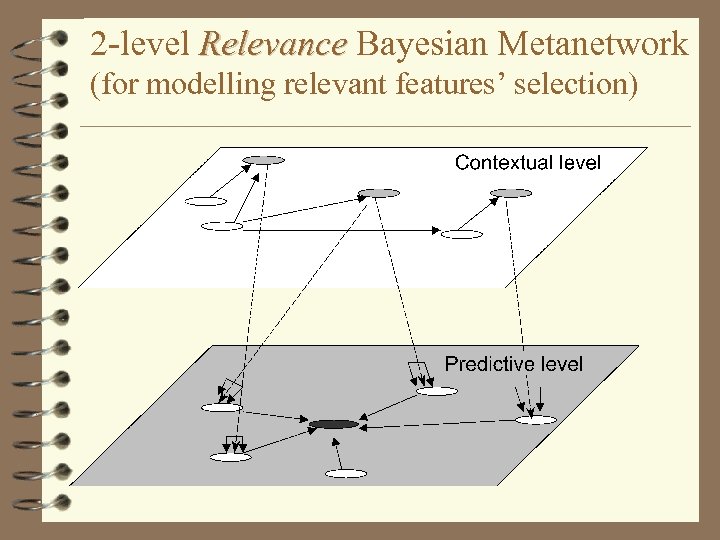

2-level Relevance Bayesian Metanetwork (for modelling relevant feature selection)

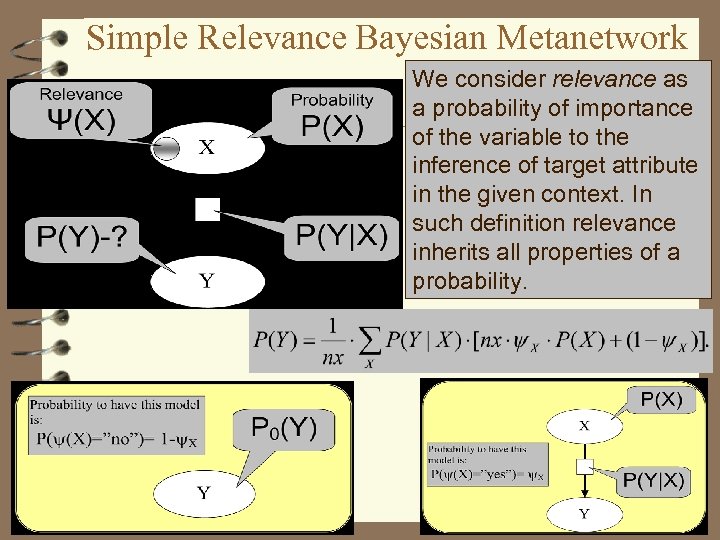

Simple Relevance Bayesian Metanetwork

We consider relevance as the probability that a variable is important to the inference of the target attribute in the given context. Under this definition, relevance inherits all the properties of a probability.
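The inference formula for this case is in a figure that is not reproduced here. The sketch below shows one plausible reading for a single predictive attribute X and target Y: with probability equal to the relevance, X is used with its own distribution P(X); otherwise X is treated as uninformative and P(Y|X) is averaged uniformly over the values of X. The uniform treatment of an irrelevant attribute, the function name and the numbers are assumptions.

```python
import numpy as np

def marginal_y_with_relevance(p_x, p_y_given_x, relevance):
    """Estimate P(Y) when X is relevant only with probability `relevance`.

    p_x:          shape (n_x,)      P(X = x_i)
    p_y_given_x:  shape (n_x, n_y)  P(Y = y_j | X = x_i)
    relevance:    float in [0, 1]   probability that X is relevant
    """
    p_x = np.asarray(p_x, dtype=float)
    cpt = np.asarray(p_y_given_x, dtype=float)
    if not 0.0 <= relevance <= 1.0:
        raise ValueError("relevance must be a probability")
    # Assumption: an irrelevant attribute contributes as if uniformly distributed.
    uniform = np.full(len(p_x), 1.0 / len(p_x))
    mixed = relevance * p_x + (1.0 - relevance) * uniform
    return mixed @ cpt

p_x = [0.8, 0.2]
cpt = [[0.9, 0.1], [0.3, 0.7]]
for relevance in (1.0, 0.5, 0.0):
    print(relevance, marginal_y_with_relevance(p_x, cpt, relevance))
```

With relevance 1 this reduces to the ordinary marginalization rule, and with relevance 0 the distribution P(X) no longer influences the result.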

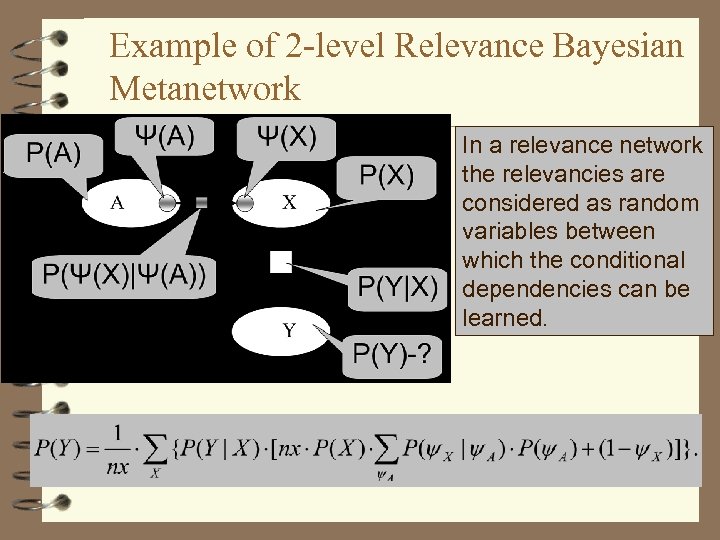

Example of 2-level Relevance Bayesian Metanetwork

In a relevance network, the relevancies are considered as random variables between which conditional dependencies can be learned.

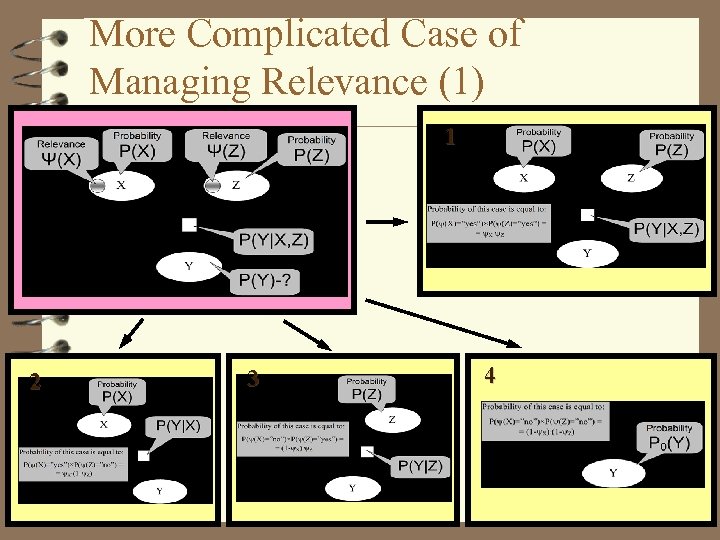

More Complicated Case of Managing Relevance (1)

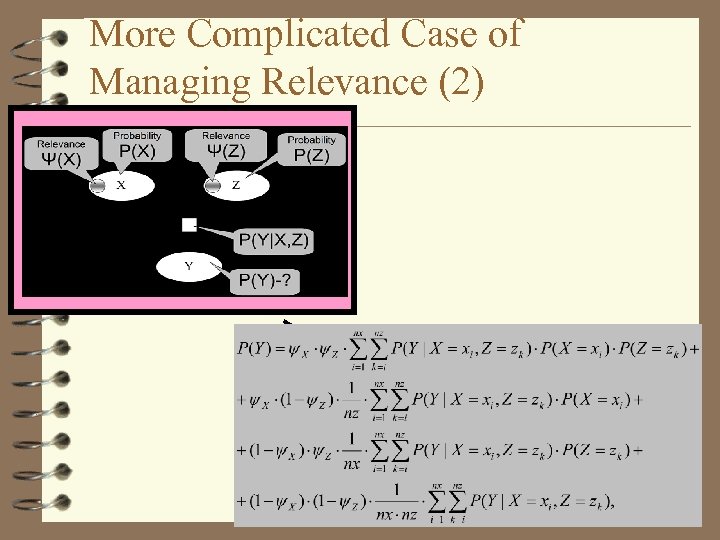

More Complicated Case of Managing Relevance (2)

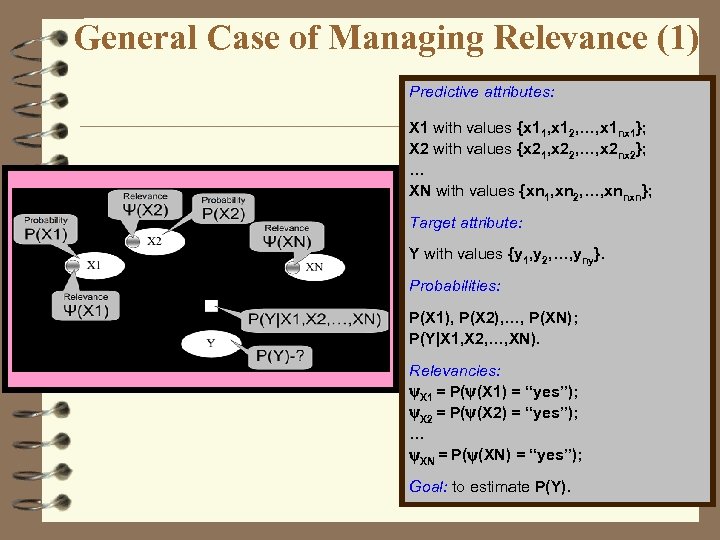

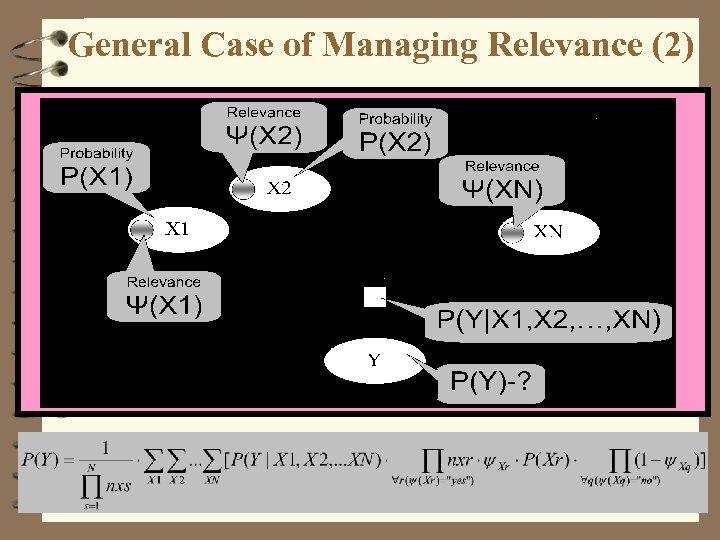

General Case of Managing Relevance (1)

Predictive attributes:
• $X_1$ with values $\{x_{11}, x_{12}, \ldots, x_{1 n_{x_1}}\}$;
• $X_2$ with values $\{x_{21}, x_{22}, \ldots, x_{2 n_{x_2}}\}$;
• …
• $X_N$ with values $\{x_{N1}, x_{N2}, \ldots, x_{N n_{x_N}}\}$.

Target attribute: $Y$ with values $\{y_1, y_2, \ldots, y_{n_y}\}$.

Probabilities: $P(X_1), P(X_2), \ldots, P(X_N)$; $P(Y|X_1, X_2, \ldots, X_N)$.

Relevancies: $\psi_{X_1} = P(\psi(X_1) = \text{“yes”})$; $\psi_{X_2} = P(\psi(X_2) = \text{“yes”})$; …; $\psi_{X_N} = P(\psi(X_N) = \text{“yes”})$.

Goal: to estimate $P(Y)$.

General Case of Managing Relevance (2)

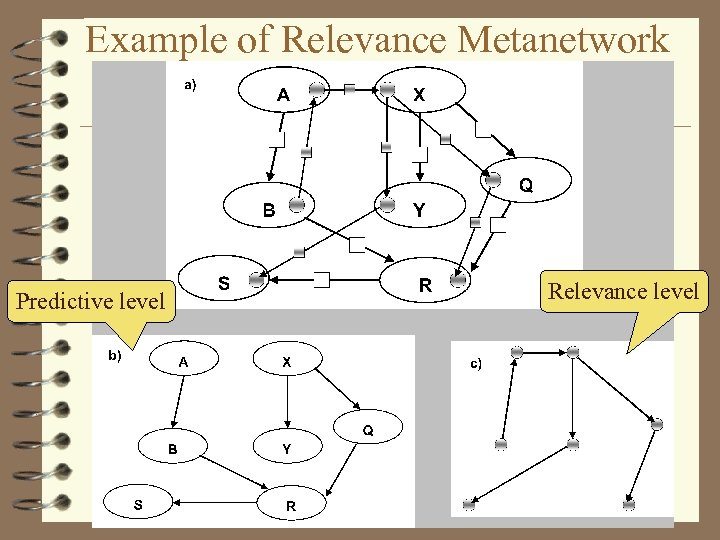

Example of Relevance Metanetwork

[Figure labels: Predictive level, Relevance level]

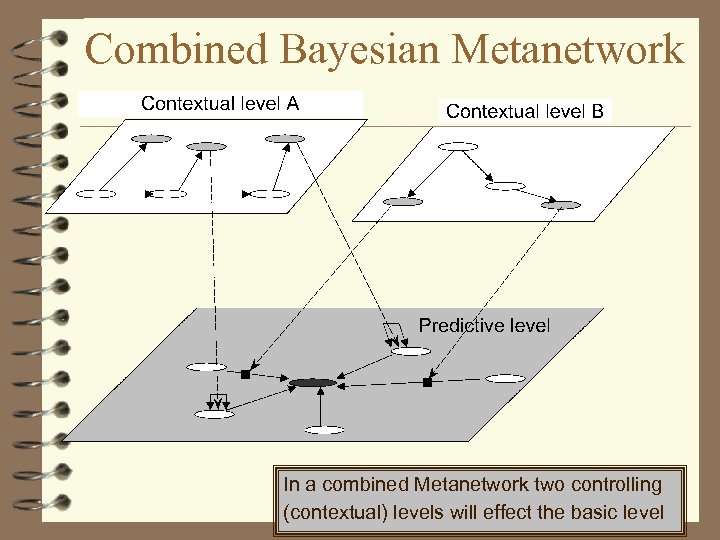

Combined Bayesian Metanetwork

In a combined Metanetwork, two controlling (contextual) levels affect the basic level.

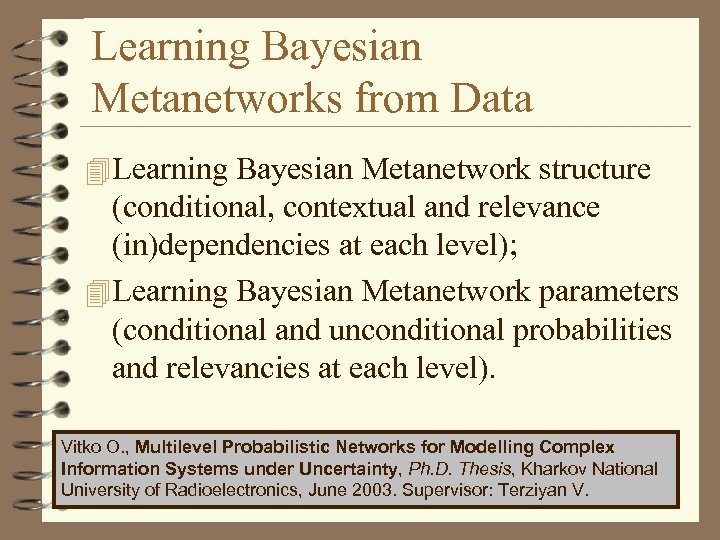

Learning Bayesian Metanetworks from Data

• Learning Bayesian Metanetwork structure (conditional, contextual and relevance (in)dependencies at each level);
• Learning Bayesian Metanetwork parameters (conditional and unconditional probabilities and relevancies at each level).

Vitko O., Multilevel Probabilistic Networks for Modelling Complex Information Systems under Uncertainty, Ph.D. Thesis, Kharkov National University of Radioelectronics, June 2003. Supervisor: Terziyan V.

When Bayesian Metanetworks?

1. A Bayesian Metanetwork can be considered a very powerful tool in cases where the structure (or strengths) of causal relationships between observed parameters of an object essentially depends on context (e.g. external environment parameters);
2. It can also be considered a useful model for an object whose diagnosis depends on a different set of observed parameters depending on the context.

Vagan Terziyan Vladimir Ryabov

Temporal Diagnostics of Field Devices

• The approach to temporal diagnostics uses the algebra of uncertain temporal relations*.
• Uncertain temporal relations are formalized using a probabilistic representation.
• Relational networks are composed of uncertain relations between some events (a set of symptoms).
• A number of relational networks can be combined into a temporal scenario describing some particular course of events (a diagnosis).
• Later, a newly composed relational network can be compared with existing temporal scenarios, and the probabilities of its belonging to each particular scenario are derived.

* Ryabov V., Puuronen S., Terziyan V., Representation and Reasoning with Uncertain Temporal Relations, In: A. Kumar and I. Russell (Eds.), Proceedings of the Twelfth International Florida AI Research Society Conference - FLAIRS-99, AAAI Press, California, 1999, pp. 449-453.

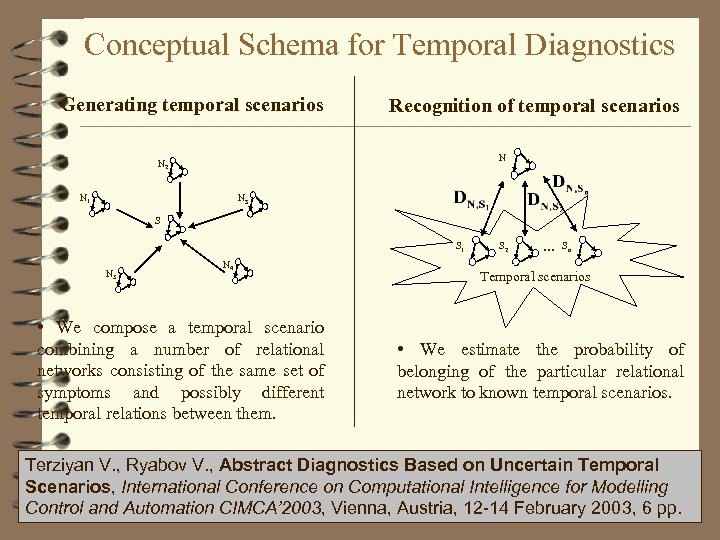

Conceptual Schema for Temporal Diagnostics Generating temporal scenarios Recognition of temporal scenarios N N 2 N 1 N 3 S S 1 N 5 N 4 • We compose a temporal scenario combining a number of relational networks consisting of the same set of symptoms and possibly different temporal relations between them. S 2 … Sn Temporal scenarios • We estimate the probability of belonging of the particular relational network to known temporal scenarios. Terziyan V. , Ryabov V. , Abstract Diagnostics Based on Uncertain Temporal Scenarios, International Conference on Computational Intelligence for Modelling Control and Automation CIMCA’ 2003, Vienna, Austria, 12 -14 February 2003, 6 pp.

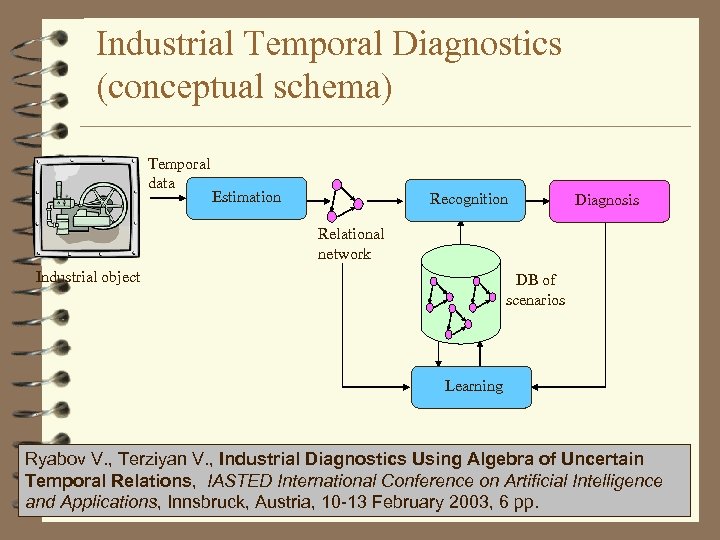

Industrial Temporal Diagnostics (conceptual schema)

Diagram labels: Temporal data, Estimation, Recognition, Diagnosis, Relational network, Industrial object, DB of scenarios, Learning.

Ryabov V., Terziyan V., Industrial Diagnostics Using Algebra of Uncertain Temporal Relations, IASTED International Conference on Artificial Intelligence and Applications, Innsbruck, Austria, 10-13 February 2003, 6 pp.

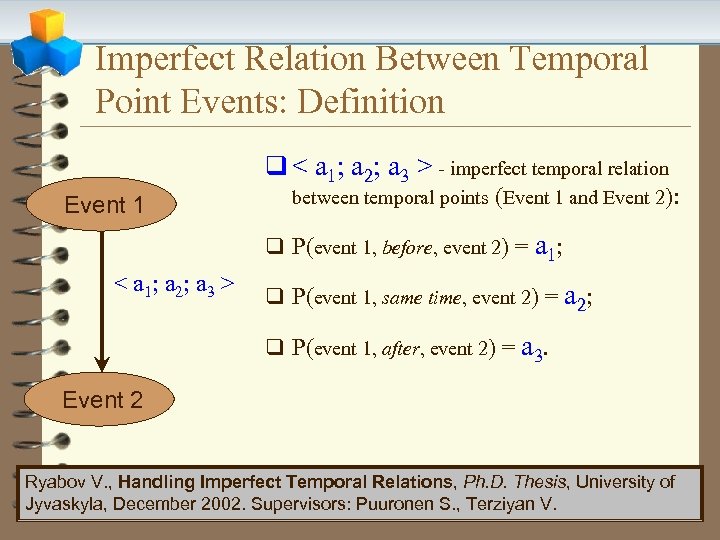

Imperfect Relation Between Temporal Point Events: Definition

$\langle a_1; a_2; a_3 \rangle$ is an imperfect temporal relation between two temporal points (Event 1 and Event 2):
• $P(\text{event 1, before, event 2}) = a_1$;
• $P(\text{event 1, same time, event 2}) = a_2$;
• $P(\text{event 1, after, event 2}) = a_3$.

Ryabov V., Handling Imperfect Temporal Relations, Ph.D. Thesis, University of Jyvaskyla, December 2002. Supervisors: Puuronen S., Terziyan V.

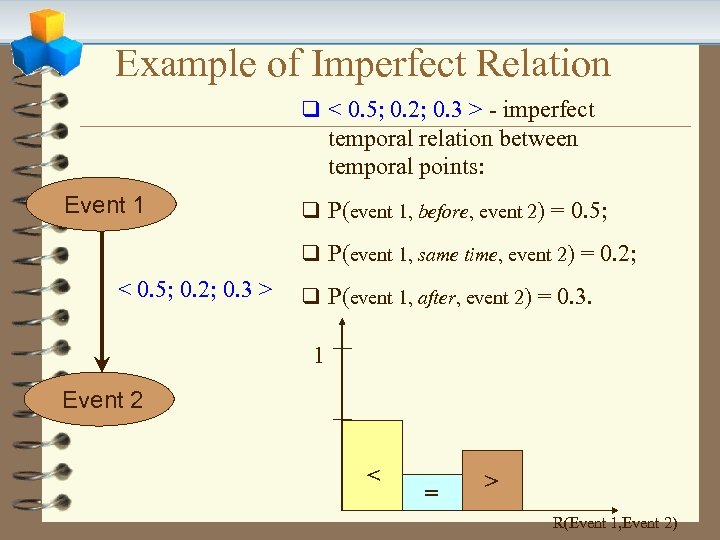

Example of Imperfect Relation

$\langle 0.5; 0.2; 0.3 \rangle$ is an imperfect temporal relation between two temporal points (Event 1 and Event 2):
• $P(\text{event 1, before, event 2}) = 0.5$;
• $P(\text{event 1, same time, event 2}) = 0.2$;
• $P(\text{event 1, after, event 2}) = 0.3$.

Figure labels: R(Event 1, Event 2) over the relations <, =, >; probability axis up to 1.

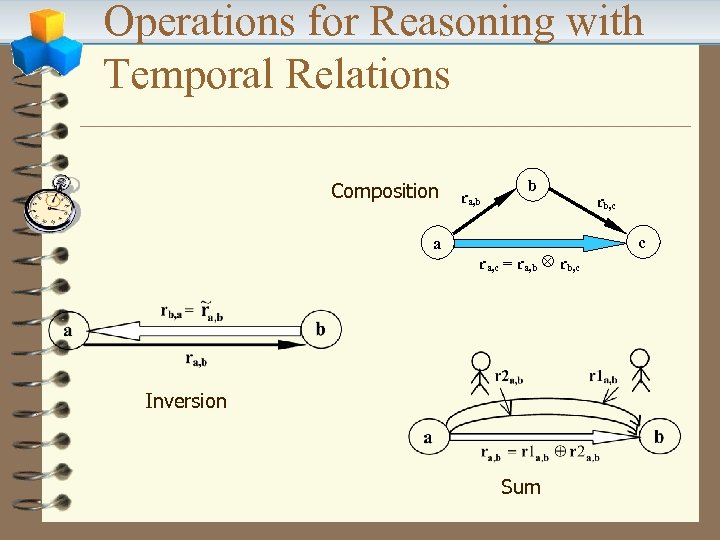
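
The definition and the example above can be sketched in Python. This is a minimal illustration, not the authors' implementation: the class name `PointRelation`, its field names, and the check that the three probabilities sum to 1 are assumptions (the slide's example, 0.5 + 0.2 + 0.3, is consistent with that check). The `inverse` method relies only on the fact that "event 1 before event 2" and "event 2 after event 1" are the same statement; the deck's own inversion formula is not reproduced here.

```python
from dataclasses import dataclass
import math


@dataclass(frozen=True)
class PointRelation:
    """Imperfect relation <a1; a2; a3> between two temporal points."""
    before: float     # a1 = P(event 1, before, event 2)
    same_time: float  # a2 = P(event 1, same time, event 2)
    after: float      # a3 = P(event 1, after, event 2)

    def __post_init__(self):
        values = (self.before, self.same_time, self.after)
        if any(v < 0 for v in values):
            raise ValueError("probabilities must be non-negative")
        # Assumption: the three basic relations are exhaustive and exclusive.
        if not math.isclose(sum(values), 1.0, abs_tol=1e-9):
            raise ValueError("probabilities must sum to 1")

    def inverse(self) -> "PointRelation":
        """Same relation seen from event 2: 'before' and 'after' swap."""
        return PointRelation(self.after, self.same_time, self.before)


r12 = PointRelation(0.5, 0.2, 0.3)  # the example relation R(Event 1, Event 2)
r21 = r12.inverse()                 # the relation R(Event 2, Event 1)
print(r21)
```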

Operations for Reasoning with Temporal Relations

• Composition: $r_{a,c} = r_{a,b} \otimes r_{b,c}$ (diagram: points a, b, c linked by $r_{a,b}$ and $r_{b,c}$)
• Inversion
• Sum

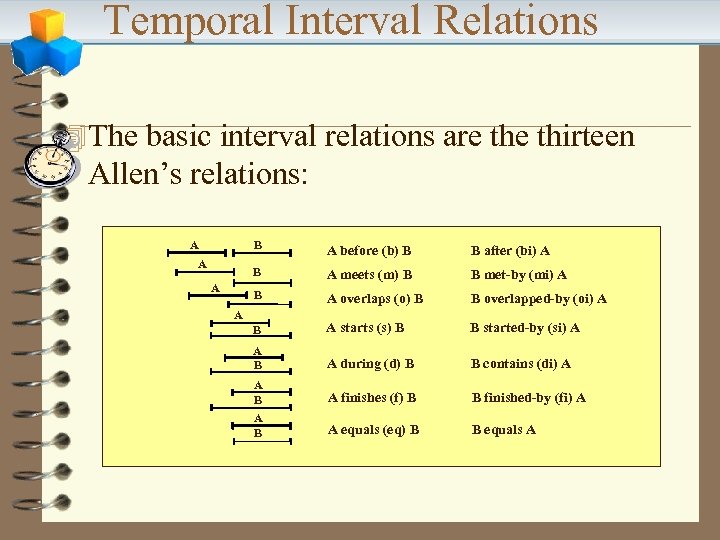
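
To make the composition operation concrete, here is a hedged sketch for point relations written as triples (before, same time, after). It is not the formula from the cited algebra. It assumes that the two input relations are independent and that the undetermined cases (before followed by after, or after followed by before) are spread over the three outcomes according to a hypothetical `prior`, uniform by default. The function name `compose` and the `prior` parameter are illustrative only.

```python
def compose(r_ab, r_bc, prior=(1 / 3, 1 / 3, 1 / 3)):
    """Sketch of deriving r_ac from r_ab and r_bc for temporal points.

    Each relation is a tuple (P(before), P(same time), P(after)).
    Assumptions: the inputs are independent, and contradictory pairs
    (before/after, after/before) carry no information, so their mass
    is distributed according to `prior`.
    """
    a1, a2, a3 = r_ab
    b1, b2, b3 = r_bc
    unknown = a1 * b3 + a3 * b1          # nothing can be concluded here
    before = a1 * b1 + a1 * b2 + a2 * b1 + unknown * prior[0]
    same = a2 * b2 + unknown * prior[1]
    after = a3 * b3 + a3 * b2 + a2 * b3 + unknown * prior[2]
    return (before, same, after)


r_ac = compose((0.5, 0.2, 0.3), (0.5, 0.2, 0.3))
print(r_ac)
```

With certain inputs the sketch reduces to the usual point algebra: "before" composed with "before" gives "before", and "same time" composed with any relation returns that relation unchanged.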

Temporal Interval Relations

The thirteen basic interval relations are Allen's relations:
• A before (b) B; B after (bi) A
• A meets (m) B; B met-by (mi) A
• A overlaps (o) B; B overlapped-by (oi) A
• A starts (s) B; B started-by (si) A
• A during (d) B; B contains (di) A
• A finishes (f) B; B finished-by (fi) A
• A equals (eq) B; B equals A

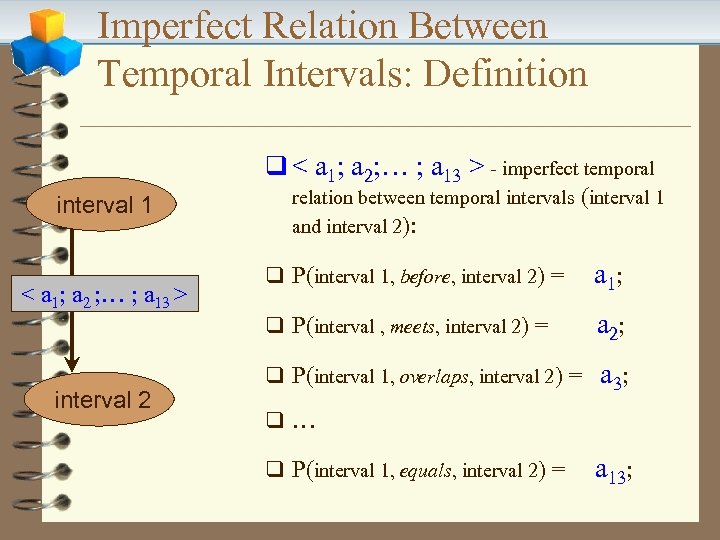
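
For intervals with known endpoints, exactly one of the thirteen relations holds. The sketch below determines it from the endpoints; the function name `allen_relation` and the argument layout are illustrative choices, and the returned strings are the abbreviations used on the slide.

```python
def allen_relation(a_start, a_end, b_start, b_end):
    """Return the abbreviation of Allen's relation of interval A to interval B."""
    if a_start >= a_end or b_start >= b_end:
        raise ValueError("intervals must have positive length")
    if a_end < b_start:
        return "b"    # A before B
    if a_end == b_start:
        return "m"    # A meets B
    if b_end < a_start:
        return "bi"   # A after B
    if b_end == a_start:
        return "mi"   # A met-by B
    if a_start == b_start and a_end == b_end:
        return "eq"   # A equals B
    if a_start == b_start:
        return "s" if a_end < b_end else "si"      # starts / started-by
    if a_end == b_end:
        return "f" if a_start > b_start else "fi"  # finishes / finished-by
    if b_start < a_start and a_end < b_end:
        return "d"    # A during B
    if a_start < b_start and b_end < a_end:
        return "di"   # A contains B
    # Only proper overlaps remain.
    return "o" if a_start < b_start else "oi"


print(allen_relation(0, 3, 2, 5))
```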

Imperfect Relation Between Temporal Intervals: Definition

$\langle a_1; a_2; \ldots; a_{13} \rangle$ is an imperfect temporal relation between two temporal intervals (interval 1 and interval 2):
• $P(\text{interval 1, before, interval 2}) = a_1$;
• $P(\text{interval 1, meets, interval 2}) = a_2$;
• $P(\text{interval 1, overlaps, interval 2}) = a_3$;
• …
• $P(\text{interval 1, equals, interval 2}) = a_{13}$.

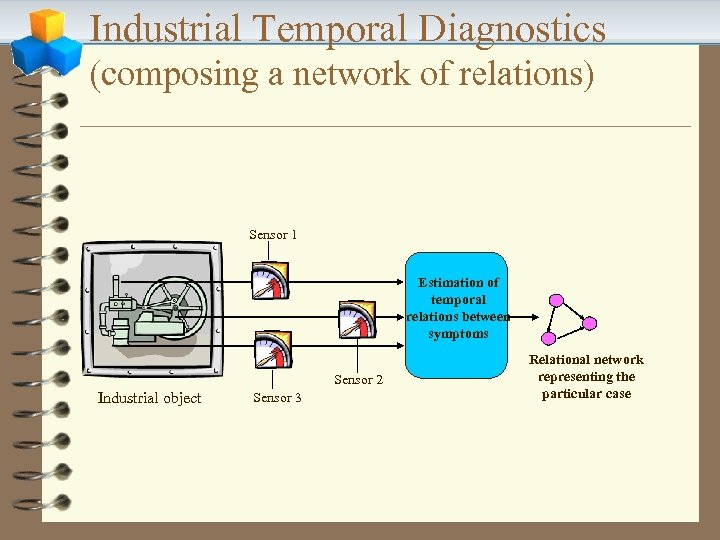

Industrial Temporal Diagnostics (composing a network of relations)

Diagram labels: Industrial object; Sensor 1, Sensor 2, Sensor 3; Estimation of temporal relations between symptoms; Relational network representing the particular case.

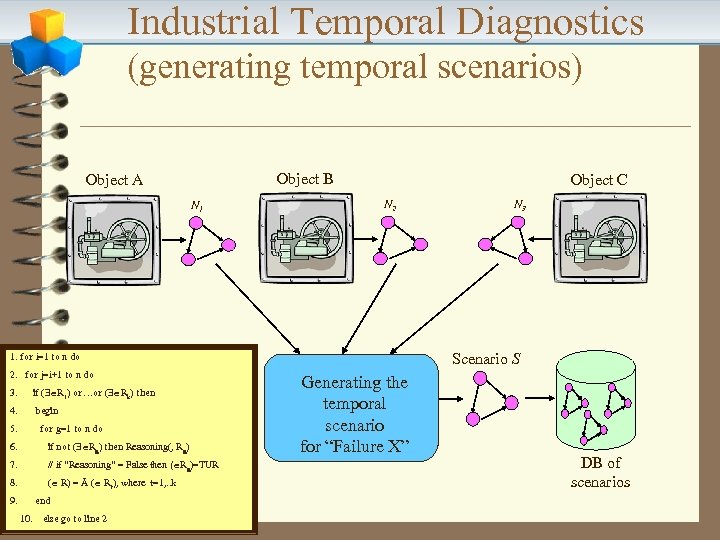

Industrial Temporal Diagnostics (generating temporal scenarios)

Diagram labels: Object A, Object B, Object C; relational networks N1, N2, N3; Scenario S; Generating the temporal scenario for "Failure X"; DB of scenarios.

1. for i=1 to n do
2.   for j=i+1 to n do
3.     if ( R1) or … or ( Rk) then
4.     begin
5.       for g=1 to n do
6.         if not ( Rg) then Reasoning(, Rg)
7.         // if "Reasoning" = False then ( Rg)=TUR
8.       ( R) = ⊕ ( Rt), where t=1, …, k
9.     end
10.    else go to line 2

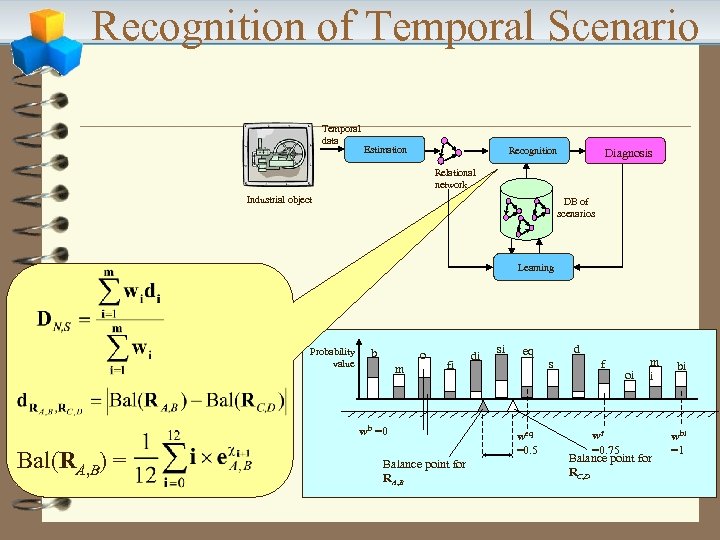

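Recognition of Temporal Scenario

Diagram labels: Temporal data, Estimation, Recognition, Diagnosis, Relational network, Industrial object, DB of scenarios, Learning.

Figure: probability value plotted over Allen's relations (b, o, m, fi, di, si, eq, d, s, f, oi, mi, bi) with the weights $w_b = 0$, $w_{eq} = 0.5$, $w_f = 0.75$, $w_{bi} = 1$; the balance point $\mathrm{Bal}(R_{A,B})$ for $R_{A,B}$ and the balance point for $R_{C,D}$ are marked.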
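
The formula for the balance point did not survive on this slide, so the following is only a guess at its shape. It assumes that the balance point is the probability-weighted mean of the relation weights (a centre of mass), and that the thirteen weights are equally spaced between 0 and 1. The equal spacing agrees with the four values shown on the slide (0, 0.5, 0.75, 1), but the exact ordering in `ORDER` and the name `balance_point` are assumptions made for illustration.

```python
# Assumed ordering of Allen's relations along the weight axis.
ORDER = ["b", "m", "o", "fi", "di", "si", "eq", "s", "d", "f", "oi", "mi", "bi"]

# Assumed equally spaced weights: w_b = 0, w_eq = 0.5, w_f = 0.75, w_bi = 1.
WEIGHTS = {name: i / (len(ORDER) - 1) for i, name in enumerate(ORDER)}


def balance_point(relation):
    """Probability-weighted mean weight of an imperfect interval relation.

    `relation` maps relation abbreviations to probabilities; relations
    that are missing from the mapping are treated as probability 0.
    """
    total = sum(relation.values())
    if total <= 0:
        raise ValueError("relation must carry positive probability mass")
    return sum(WEIGHTS[name] * p for name, p in relation.items()) / total


r_ab = {"b": 0.6, "m": 0.3, "o": 0.1}    # mostly "before": point near 0
r_cd = {"f": 0.5, "oi": 0.3, "bi": 0.2}  # mostly late relations: point near 1
print(balance_point(r_ab), balance_point(r_cd))
```

Under these assumptions, two relations can be compared by the distance between their balance points, which is one plausible way to score how well a new relational network matches a stored scenario.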

When Temporal Diagnostics?

1. Temporal diagnostics considers not only a static set of symptoms but also the time during which they were monitored. This often gives a broader view of the situation, and sometimes only the temporal relations between symptoms give a hint toward a precise diagnosis.
2. The approach may be useful, for example, when the causal relationships between events (symptoms) are not yet known and only temporal relationships are available for study.
3. A combination of Bayesian diagnostics (based on probabilistic causal knowledge) and temporal diagnostics would be quite a powerful diagnostic tool.

Vagan Terziyan

Terziyan V., Dynamic Integration of Virtual Predictors, In: L. I. Kuncheva, F. Steimann, C. Haefke, M. Aladjem, V. Novak (Eds.), Proceedings of the International ICSC Congress on Computational Intelligence: Methods and Applications - CIMA'2001, Bangor, Wales, UK, June 19-22, 2001, ICSC Academic Press, Canada/The Netherlands, pp. 463-469.

The Problem

During the past several years, in a variety of application domains, researchers in machine learning, computational learning theory, pattern recognition and statistics have tried to combine efforts to learn how to create and combine an ensemble of classifiers. The primary goal of combining several classifiers is to obtain a more accurate prediction than can be obtained from any single classifier alone.

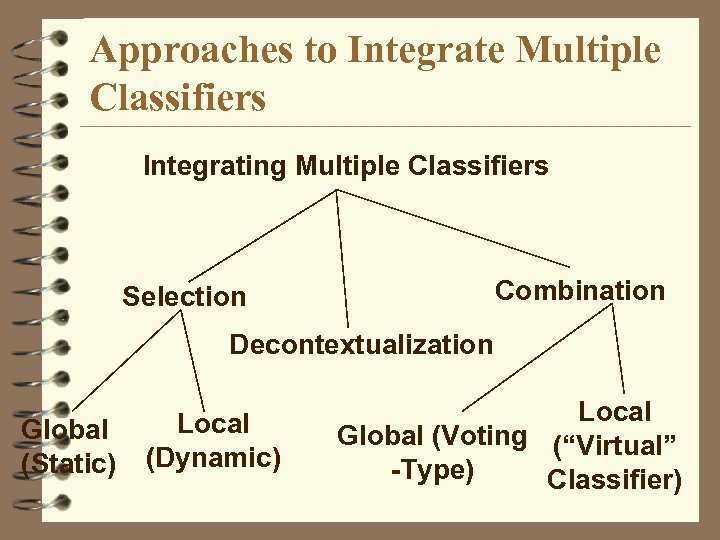

Approaches to Integrate Multiple Classifiers

Integrating Multiple Classifiers
• Combination
  - Global (Voting-Type)
  - Local ("Virtual" Classifier)
• Selection
  - Global (Static)
  - Local (Dynamic)
• Decontextualization

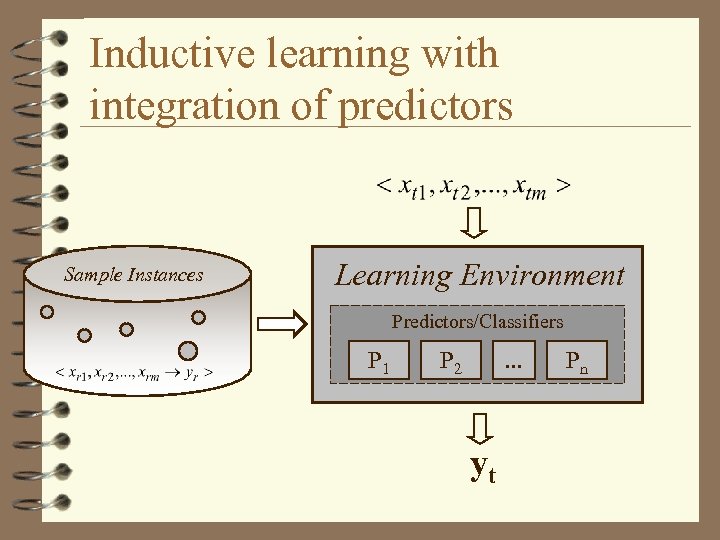

Inductive learning with integration of predictors

Diagram labels: Sample Instances; Learning Environment; Predictors/Classifiers P1, P2, ..., Pn; output yt.

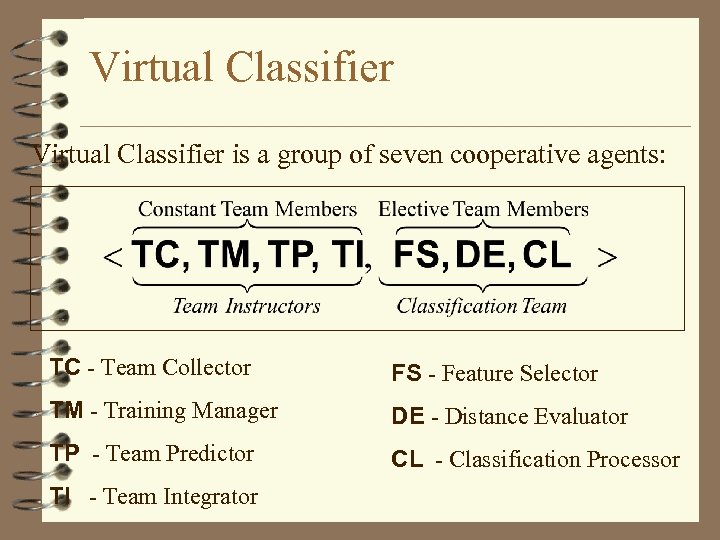

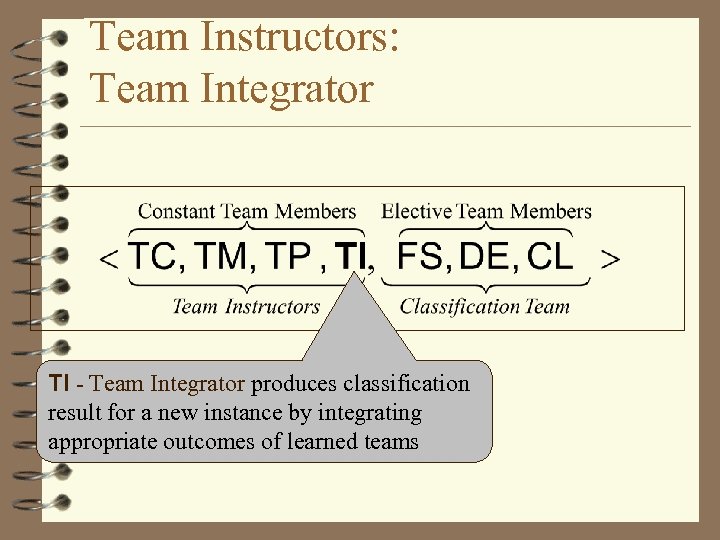

Virtual Classifier is a group of seven cooperative agents:
• TC - Team Collector
• TM - Training Manager
• TP - Team Predictor
• TI - Team Integrator
• FS - Feature Selector
• DE - Distance Evaluator
• CL - Classification Processor

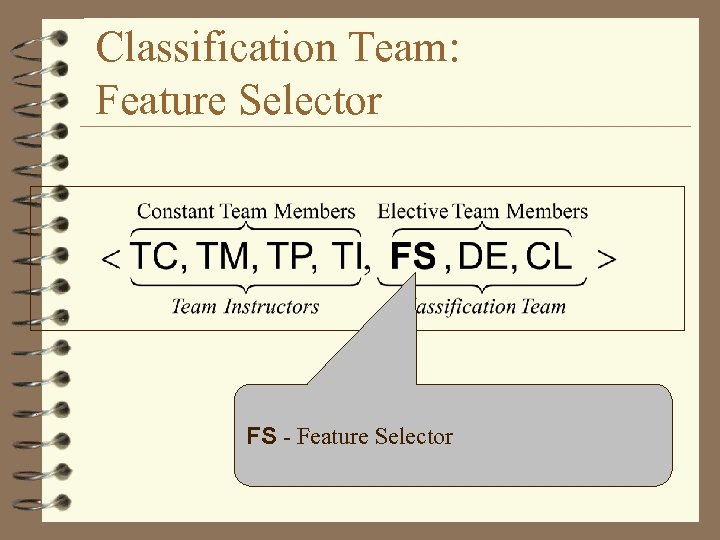

Classification Team: Feature Selector FS - Feature Selector

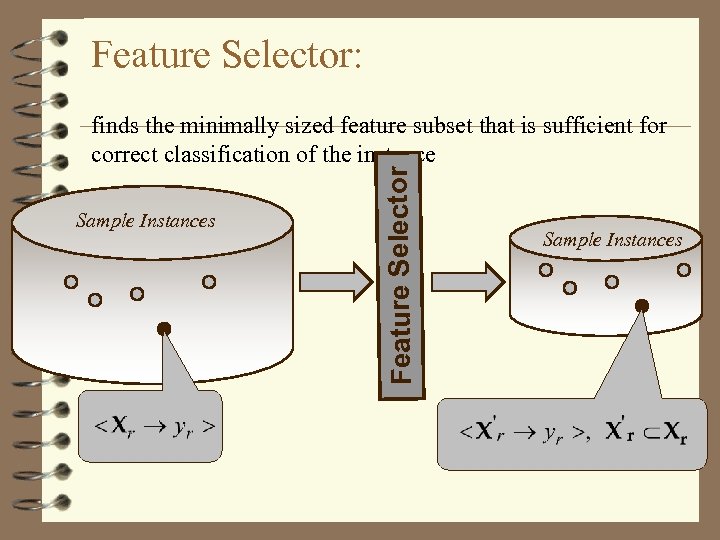

Feature Selector: finds the minimally sized feature subset that is sufficient for correct classification of the instance

Diagram labels: Sample Instances, Feature Selector.

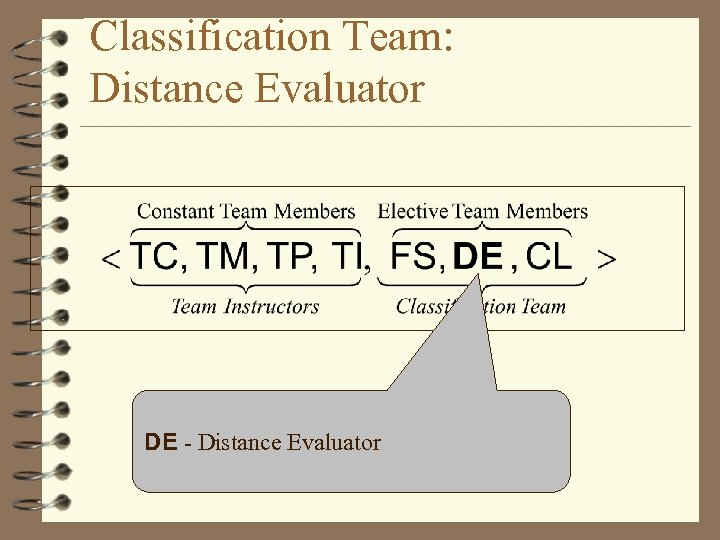

Classification Team: Distance Evaluator DE - Distance Evaluator

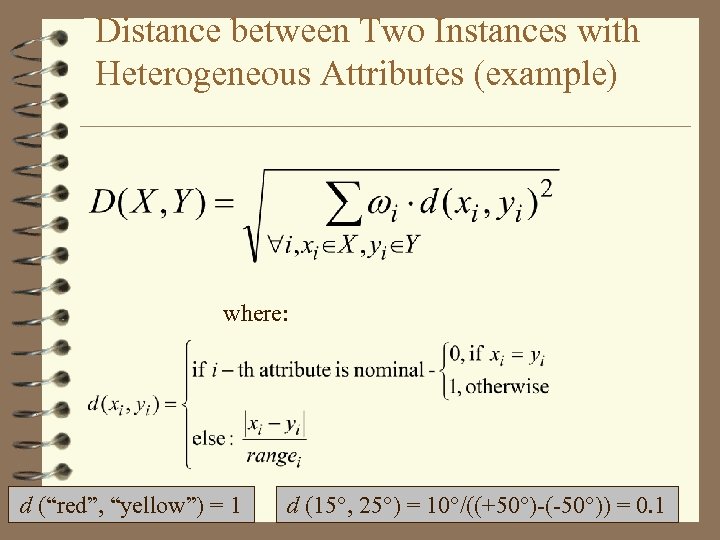

Distance between Two Instances with Heterogeneous Attributes (example)

where:
• $d(\text{"red"}, \text{"yellow"}) = 1$
• $d(15^\circ, 25^\circ) = \dfrac{10^\circ}{(+50^\circ) - (-50^\circ)} = 0.1$

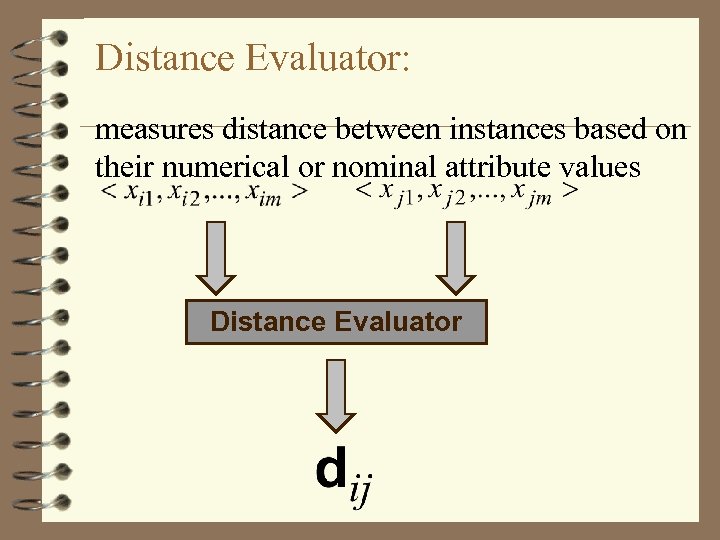
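
The two per-attribute distances in the example can be reproduced with a short sketch. The per-attribute rules come from the slide: nominal values are at distance 1 when they differ, and numerical values are at a distance equal to their difference divided by the attribute's range. How the per-attribute distances are aggregated is not recoverable from the text, so the Euclidean aggregation in `instance_distance` is an assumption, as are the function names and the convention of passing `None` as the range of a nominal attribute.

```python
import math


def attribute_distance(x, y, value_range=None):
    """Distance between two values of one attribute, in [0, 1]."""
    if value_range is None:
        # Nominal attribute: 0 if the values match, 1 otherwise.
        return 0.0 if x == y else 1.0
    low, high = value_range
    # Numerical attribute: absolute difference normalised by the range.
    return abs(x - y) / (high - low)


def instance_distance(a, b, ranges):
    """Assumed aggregation: Euclidean norm of the per-attribute distances."""
    return math.sqrt(
        sum(attribute_distance(x, y, r) ** 2 for x, y, r in zip(a, b, ranges))
    )


# Attributes: colour (nominal) and temperature in degrees (range -50..+50).
ranges = (None, (-50, 50))
first = ("red", 15)
second = ("yellow", 25)
print(attribute_distance("red", "yellow"))
print(attribute_distance(15, 25, (-50, 50)))
print(instance_distance(first, second, ranges))
```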

Distance Evaluator: measures distance between instances based on their numerical or nominal attribute values Distance Evaluator

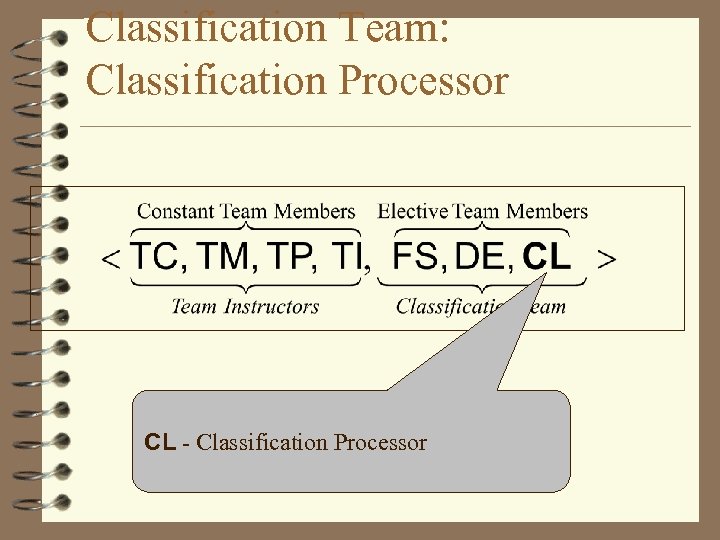

Classification Team: Classification Processor CL - Classification Processor

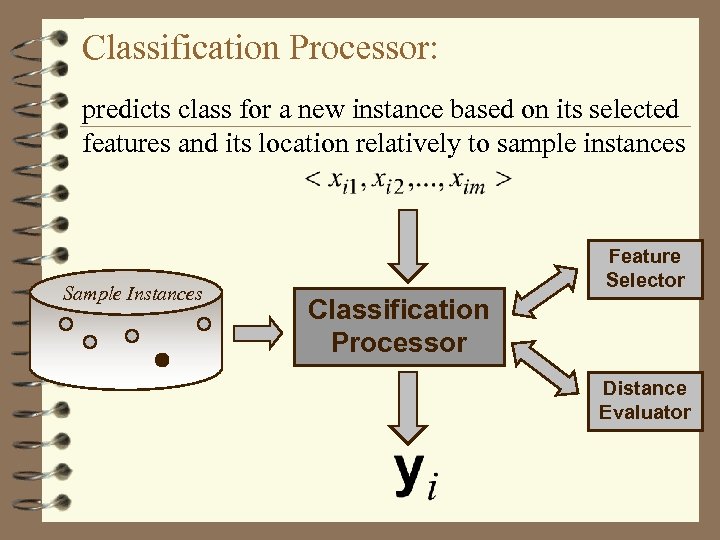

Classification Processor: predicts the class of a new instance based on its selected features and its location relative to the sample instances

Diagram labels: Sample Instances, Feature Selector, Distance Evaluator, Classification Processor.

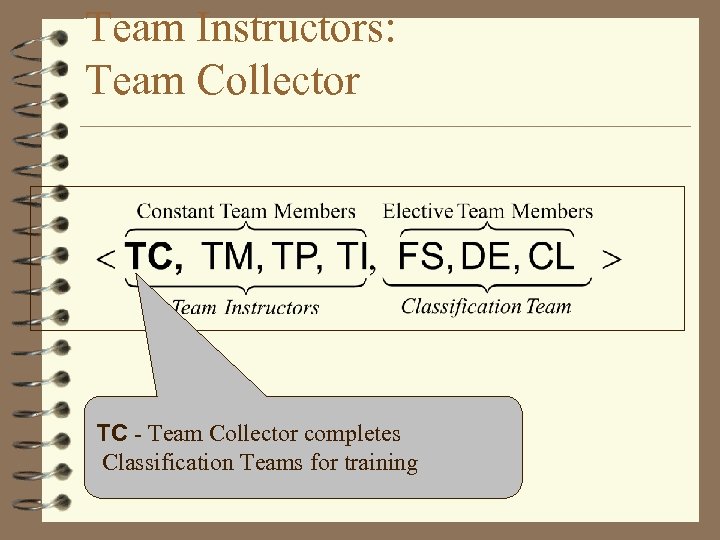

Team Instructors: Team Collector TC - Team Collector completes Classification Teams for training

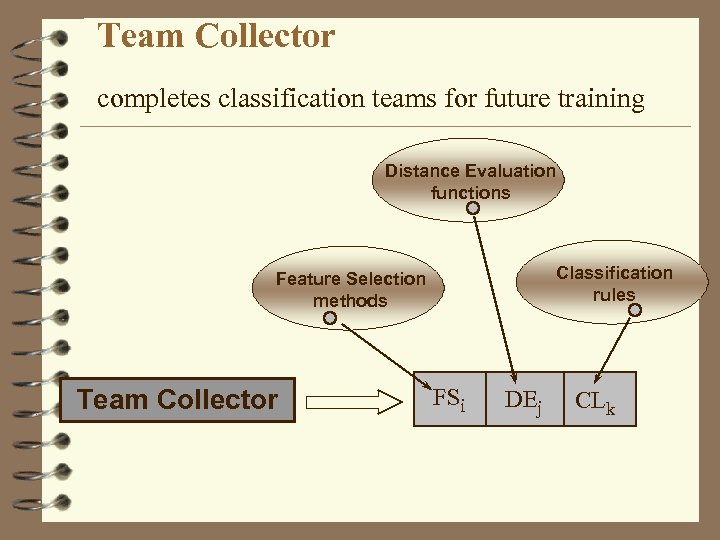

Team Collector completes classification teams for future training

Diagram labels: Feature Selection methods, Distance Evaluation functions, Classification rules; Team Collector; resulting team (FSi, DEj, CLk).

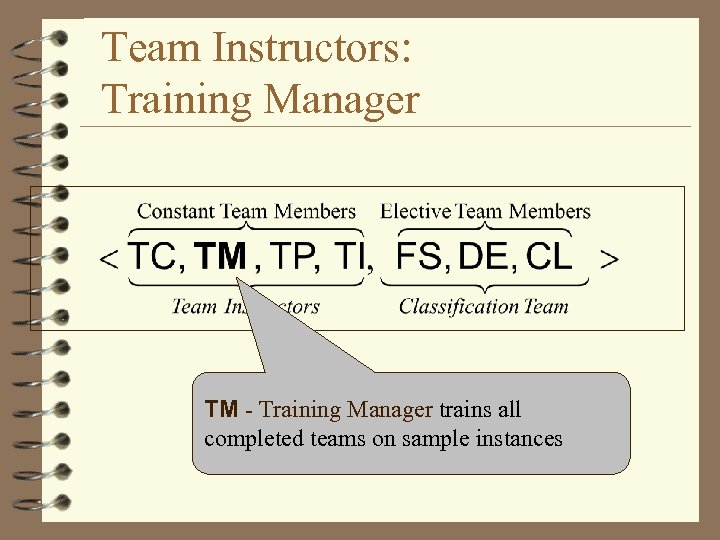
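
One simple reading of the Team Collector is that a team is a triple (FSi, DEj, CLk) drawn from the three pools of methods. The sketch below enumerates every such triple. Whether the collector really forms all combinations or only a chosen subset is not stated on the slide, so the exhaustive product is an assumption, and the method names are placeholders.

```python
from itertools import product


def collect_teams(feature_selectors, distance_evaluators, classification_rules):
    """Assemble every (FS, DE, CL) triple as a candidate classification team."""
    return list(product(feature_selectors, distance_evaluators, classification_rules))


# Placeholder method names, not taken from the source.
teams = collect_teams(["FS1", "FS2"], ["DE1", "DE2", "DE3"], ["CL1", "CL2"])
print(len(teams), teams[0])
```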

Team Instructors: Training Manager TM - Training Manager trains all completed teams on sample instances

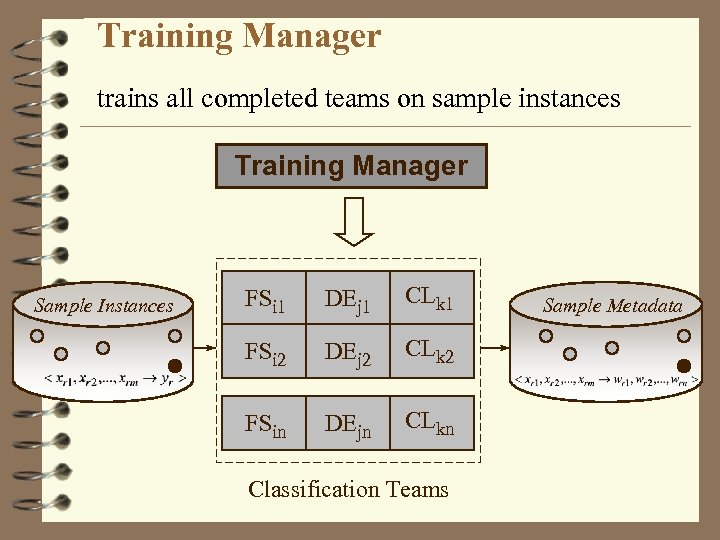

Training Manager trains all completed teams on sample instances

Diagram labels: Sample Instances; Training Manager; Classification Teams (FSi1, DEj1, CLk1), (FSi2, DEj2, CLk2), …, (FSin, DEjn, CLkn); Sample Metadata.

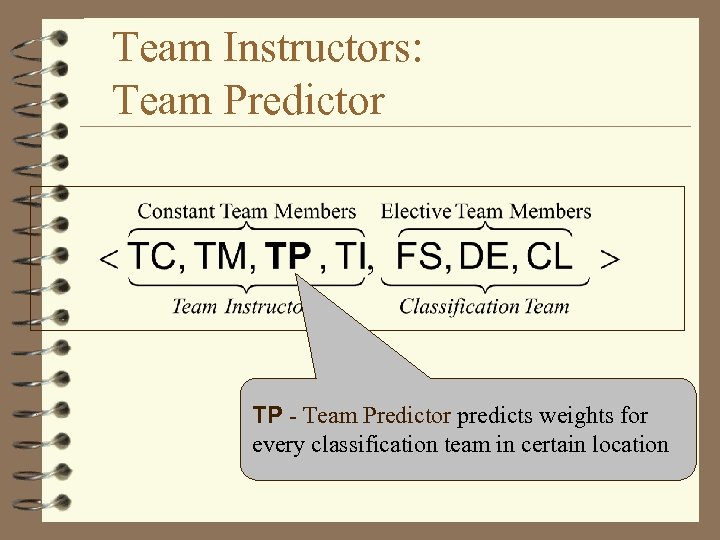

Team Instructors: Team Predictor TP - Team Predictor predicts weights for every classification team in certain location

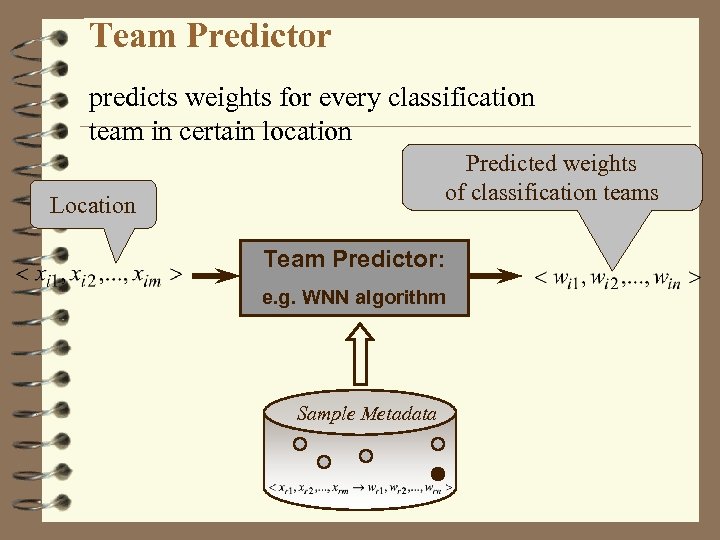

Team Predictor predicts weights for every classification team in a certain location

Diagram labels: Location; Sample Metadata; Team Predictor (e.g. WNN algorithm); Predicted weights of classification teams.

Team Prediction: Locality assumption

• Each team has certain subdomains in the space of instance attributes where it is more reliable than the others.
• This assumption is supported by the experience that classifiers usually work well not only at certain points of the domain space but in certain subareas of it [Quinlan, 1993].
• If a team does not work well with the instances near a new instance, then it is quite probable that it will not work well with the new instance either.
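
The locality assumption suggests estimating a team's weight at a new location from how it performed on nearby sample instances. The sketch below is a generic distance-weighted nearest-neighbour estimate. It is not necessarily the WNN algorithm used by the author, whose details are not given here. It assumes that the sample metadata records, for each sample instance and each team, 1.0 if the team classified that instance correctly and 0.0 otherwise; the function name, the parameter `k`, and plain Euclidean distance are all illustrative choices.

```python
import math


def predict_team_weights(new_point, sample_points, sample_metadata, k=3):
    """Estimate each team's local reliability around `new_point`.

    sample_metadata[i][t] is 1.0 if team t classified sample i correctly,
    else 0.0 (assumed layout). Returns one weight in [0, 1] per team.
    """
    distances = [math.dist(new_point, p) for p in sample_points]
    nearest = sorted(range(len(sample_points)), key=distances.__getitem__)[:k]
    # Closer neighbours get more influence; the small constant avoids
    # division by zero when the new point coincides with a sample.
    influence = [1.0 / (distances[i] + 1e-9) for i in nearest]
    total = sum(influence)
    n_teams = len(sample_metadata[0])
    return [
        sum(w * sample_metadata[i][t] for w, i in zip(influence, nearest)) / total
        for t in range(n_teams)
    ]


sample_points = [(0.0, 0.0), (0.0, 1.0), (5.0, 5.0), (5.0, 6.0)]
sample_metadata = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
weights = predict_team_weights((0.2, 0.3), sample_points, sample_metadata, k=2)
print(weights)
```

In this toy data the first team is correct near the origin and the second team is correct near (5, 5), so a new instance near the origin gives the first team the higher weight.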

Team Instructors: Team Integrator TI - Team Integrator produces classification result for a new instance by integrating appropriate outcomes of learned teams

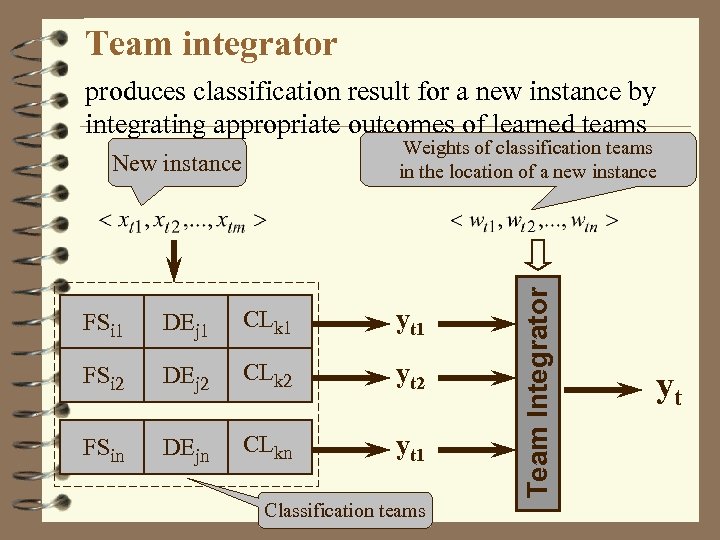

Team Integrator produces the classification result for a new instance by integrating the appropriate outcomes of the learned teams

Diagram labels: New instance; Classification teams (FSi1, DEj1, CLk1) with outcome yt1, (FSi2, DEj2, CLk2) with outcome yt2, (FSin, DEjn, CLkn) with outcome ytn; Weights of classification teams in the location of a new instance; Team Integrator; result yt.
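
One common way to integrate team outcomes with local weights is weighted voting, sketched below. The slide does not say which integration rule is used, so this is only one option; picking the single highest-weighted team (dynamic selection) would be another. The function name `integrate` is hypothetical.

```python
from collections import defaultdict


def integrate(predictions, weights):
    """Weighted vote: return the class label with the largest total weight.

    predictions[t] is the label proposed by team t for the new instance,
    weights[t] is that team's predicted weight at the instance's location.
    """
    if len(predictions) != len(weights):
        raise ValueError("one weight is required per prediction")
    scores = defaultdict(float)
    for label, weight in zip(predictions, weights):
        scores[label] += weight
    return max(scores, key=scores.get)


# Three teams: one strong vote for "A" against two weaker votes for "B".
print(integrate(["A", "B", "B"], [0.9, 0.3, 0.4]))
```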

Static Selection of a Classifier

• Static selection means that we try all teams on a sample set and, for further classification, select the one that achieved the best classification accuracy on the whole sample set. Thus we select a team only once and then use it to classify all new domain instances.
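
Static selection as described above can be sketched as follows. Teams are modelled here as plain callables that map an instance to a label, which is an assumption for illustration, as are the function name `select_static` and the toy data. In practice the accuracy would usually be estimated on held-out data or by cross-validation rather than on the training sample itself.

```python
def select_static(teams, samples, labels):
    """Return (name, accuracy) of the team that is most accurate on the sample set.

    `teams` maps a team name to a callable: instance -> predicted label.
    """
    def accuracy(predict):
        hits = sum(predict(x) == y for x, y in zip(samples, labels))
        return hits / len(samples)

    best = max(teams, key=lambda name: accuracy(teams[name]))
    return best, accuracy(teams[best])


# Toy sample set: classify integers as "odd" or "even".
samples = [1, 2, 3, 4]
labels = ["odd", "even", "odd", "even"]
teams = {
    "always_odd": lambda x: "odd",
    "parity": lambda x: "even" if x % 2 == 0 else "odd",
}
best_name, best_accuracy = select_static(teams, samples, labels)
print(best_name, best_accuracy)
```

The selected team is then applied unchanged to every new instance, in contrast to the dynamic approaches, where the choice depends on the location of the instance.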