7de75deb9b9bb37ac5c5fe50954026ca.ppt

- Number of slides: 22

Feature selection based on information theory, consistency and separability indices
Włodzisław Duch, Tomasz Winiarski, Krzysztof Grąbczewski, Jacek Biesiada, Adam Kachel
Dept. of Informatics, Nicholas Copernicus University, Toruń, Poland
http://www.phys.uni.torun.pl/~duch
ICONIP Singapore, 18-22.11.2002

What am I going to say
• Selection of information
• Information theory - filters
• Information theory - selection
• Consistency indices
• Separability indices
• Empirical comparison: artificial data
• Empirical comparison: real data
• Conclusions, or what have we learned?

Selection of information
• Attention: basic cognitive skill
• Find relevant information:
  – discard attributes that do not contain information,
  – use weights to express the relative importance,
  – create new, more informative attributes,
  – reduce dimensionality by aggregating information.
• Ranking: treat each feature as independent. Selection: search for subsets, remove redundant features.
• Filters: universal criteria, model-independent. Wrappers: criteria specific for data models are used.
• Here: filters for ranking and selection.

Information theory - filters
X – vectors, Xj – attributes, Xj=f attribute values, Ci – class i = 1..K, joint probability distribution p(C, Xj).
The amount of information contained in this joint distribution, summed over all classes, gives an estimate of feature importance (formula in the slide image).
For continuous attribute values integrals are approximated by sums. This implies discretization into rk(f) regions, an issue in itself.
Alternative: fitting the p(Ci, f) density using Gaussian or other kernels.
Which method is more accurate and what are the expected errors?

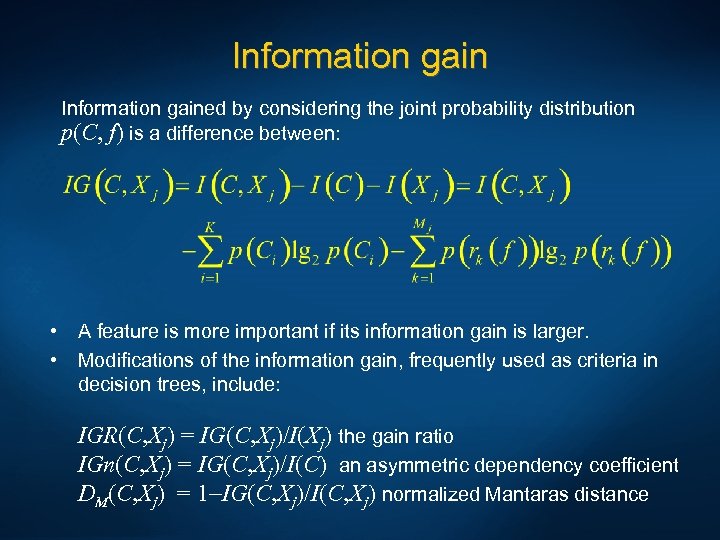
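The sum approximation described on this slide can be sketched in a few lines. This is only an illustration under stated assumptions: equal-width bins, base-2 logarithms, and the joint information taken as the Shannon information of the discretized p(C, r_k(f)) table. The names `joint_information` and `n_bins` are hypothetical and do not come from the slides.

```python
import numpy as np

def joint_information(classes, feature, n_bins=8):
    """Estimate I(C, Xj) = -sum_{i,k} p(Ci, rk) log2 p(Ci, rk) on equal-width bins.

    Assumption: equal-width discretization; the slides note that the choice
    of discretization is an issue in itself.
    """
    classes = np.asarray(classes)
    feature = np.asarray(feature, dtype=float)
    # Equal-width regions r_k(f); the inner edges map values to bins 0..n_bins-1.
    edges = np.linspace(feature.min(), feature.max(), n_bins + 1)
    bins = np.digitize(feature, edges[1:-1])
    labels, class_idx = np.unique(classes, return_inverse=True)
    # Contingency table of counts: rows are classes, columns are bins.
    counts = np.zeros((len(labels), n_bins))
    np.add.at(counts, (class_idx, bins), 1)
    p = counts / counts.sum()
    nz = p > 0  # empty cells contribute nothing to the sum
    return float(-(p[nz] * np.log2(p[nz])).sum())
```

A feature that splits two balanced classes perfectly with two bins gives 1 bit, while a feature independent of the class gives 2 bits of joint information.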

Information gained by considering the joint probability distribution p(C, f) is the difference between the summed information of the separate distributions and the joint information: IG(C, Xj) = I(C) + I(Xj) - I(C, Xj).
A feature is more important if its information gain is larger.
Modifications of the information gain, frequently used as criteria in decision trees, include:
• IGR(C, Xj) = IG(C, Xj)/I(Xj), the gain ratio
• IGn(C, Xj) = IG(C, Xj)/I(C), an asymmetric dependency coefficient
• DM(C, Xj) = 1 - IG(C, Xj)/I(C, Xj), the normalized Mantaras distance

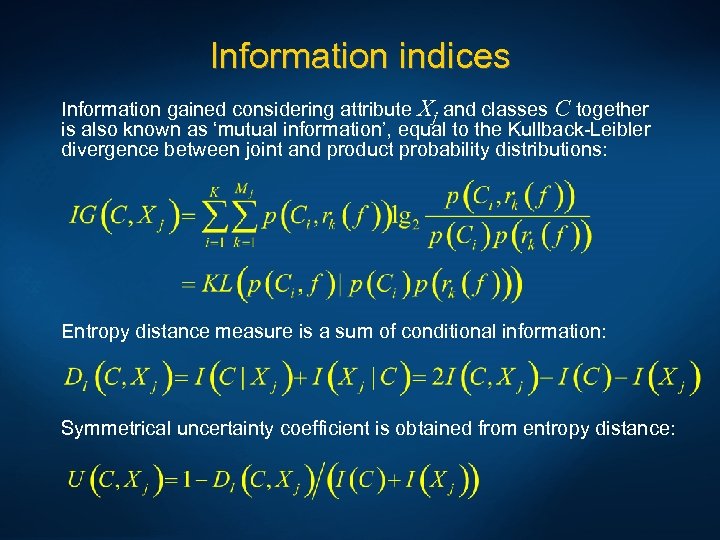
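The gain-based indices listed on this slide can be computed from a discretized joint probability table. The sketch below assumes the standard identity IG(C, Xj) = I(C) + I(Xj) - I(C, Xj) and base-2 logarithms; the helper names `entropy` and `gain_indices` are hypothetical.

```python
import numpy as np

def entropy(p):
    """Shannon information in bits of a probability array (zeros are skipped)."""
    p = np.asarray(p, dtype=float).ravel()
    p = p[p > 0]
    return float(-(p * np.log2(p)).sum())

def gain_indices(joint):
    """Compute IG, IGR, IGn and DM from joint[i, k] ~ p(Ci, rk).

    Assumes a non-degenerate table: I(C), I(Xj) and I(C, Xj) must be
    non-zero, otherwise the ratios below are undefined.
    """
    joint = np.asarray(joint, dtype=float)
    joint = joint / joint.sum()          # normalize counts to probabilities
    i_c = entropy(joint.sum(axis=1))     # I(C), class marginal
    i_x = entropy(joint.sum(axis=0))     # I(Xj), attribute marginal
    i_cx = entropy(joint)                # I(C, Xj), joint information
    ig = i_c + i_x - i_cx                # information gain
    return {
        "IG": ig,
        "IGR": ig / i_x,        # gain ratio
        "IGn": ig / i_c,        # asymmetric dependency coefficient
        "DM": 1.0 - ig / i_cx,  # normalized Mantaras distance
    }
```

For a perfectly predictive binary attribute the distance DM is 0, and for an attribute independent of the class it is 1.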

Information indices
Information gained by considering attribute Xj and classes C together is also known as ‘mutual information’, equal to the Kullback-Leibler divergence between the joint and product probability distributions.
The entropy distance measure is a sum of conditional information.
The symmetrical uncertainty coefficient is obtained from the entropy distance.

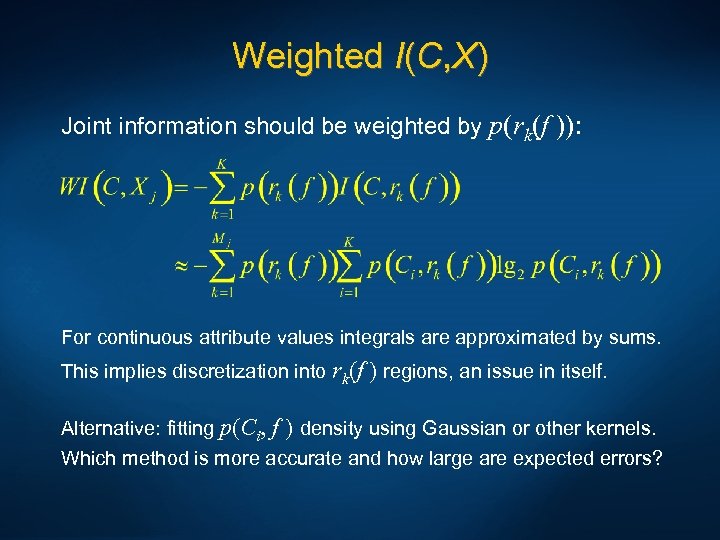
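For reference, the three quantities named on this slide have the following standard textbook forms. The notation here (MI, D_I, U, and r_k for the discretization regions) is an assumption and may differ from the formulas in the slide image.

```latex
\begin{align}
MI(C, X_j) &= \sum_{i,k} p(C_i, r_k)\,\log_2 \frac{p(C_i, r_k)}{p(C_i)\,p(r_k)}
            = D_{KL}\bigl(p(C, X_j)\,\|\,p(C)\,p(X_j)\bigr) \\
D_I(C, X_j) &= I(C \mid X_j) + I(X_j \mid C)
             = 2\,I(C, X_j) - I(C) - I(X_j) \\
U(C, X_j) &= 1 - \frac{D_I(C, X_j)}{I(C) + I(X_j)}
           = \frac{2\,MI(C, X_j)}{I(C) + I(X_j)}
\end{align}
```

The last equality follows by substituting MI(C, X_j) = I(C) + I(X_j) - I(C, X_j) into the entropy distance.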

Weighted I(C, X)
Joint information should be weighted by p(rk(f)) (formula in the slide image).
For continuous attribute values integrals are approximated by sums. This implies discretization into rk(f) regions, an issue in itself.
Alternative: fitting the p(Ci, f) density using Gaussian or other kernels.
Which method is more accurate and how large are the expected errors?

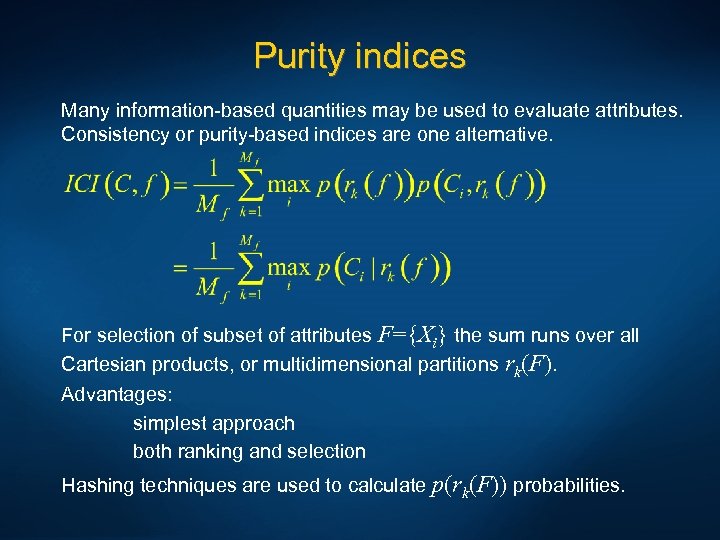
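One possible reading of the weighting on this slide is sketched below: each region's contribution to the joint information is multiplied by the region probability p(r_k(f)). The exact formula is in the slide image and is not reproduced in the text, so this form, and the name `weighted_information`, should be treated as assumptions.

```python
import numpy as np

def weighted_information(joint):
    """WI = -sum_k p(rk) * sum_i p(Ci, rk) log2 p(Ci, rk)  (assumed form).

    joint[i, k] holds counts or probabilities for class i in region k.
    """
    joint = np.asarray(joint, dtype=float)
    joint = joint / joint.sum()
    p_bin = joint.sum(axis=0)                 # p(rk), the region weights
    safe = np.where(joint > 0, joint, 1.0)    # avoid log2(0); log2(1) = 0
    cell = joint * np.log2(safe)              # zero for empty cells
    return float(-(p_bin * cell.sum(axis=0)).sum())
```

With this form, regions that hold few samples contribute less to the index than they do in the unweighted joint information.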

Purity indices
Many information-based quantities may be used to evaluate attributes. Consistency or purity-based indices are one alternative.
For selection of a subset of attributes F={Xi} the sum runs over all Cartesian products, or multidimensional partitions rk(F).
Advantages: the simplest approach, usable for both ranking and selection.
Hashing techniques are used to calculate the p(rk(F)) probabilities.

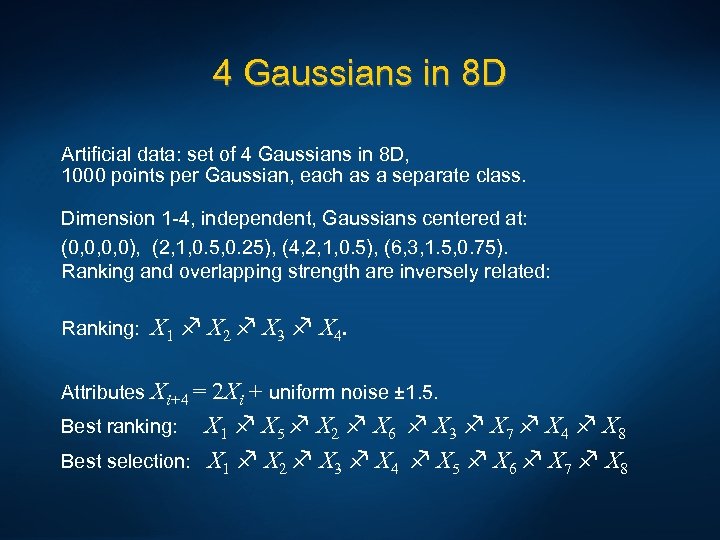
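The hashing idea can be illustrated with a dictionary keyed by the tuple of discretized attribute values, so only the occupied cells of the multidimensional partition are stored. The sketch assumes the purity index has the form IC(C|F) = Σ_k p(r_k(F)) max_i p(C_i | r_k(F)); the formula itself is in the slide image, and the name `purity_index` is hypothetical.

```python
from collections import Counter, defaultdict

def purity_index(rows, classes):
    """Assumed form: IC(C|F) = sum_k p(rk(F)) * max_i p(Ci | rk(F)).

    rows: iterable of already-discretized attribute tuples (one per sample).
    classes: class label for each sample.
    """
    cells = defaultdict(Counter)
    for row, label in zip(rows, classes):
        # The tuple of bin indices acts as the hash key for the cell rk(F).
        cells[tuple(row)][label] += 1
    n = len(classes)
    # p(rk) * max_i p(Ci|rk) equals (count of the majority class in the cell) / n.
    return sum(max(counter.values()) for counter in cells.values()) / n
```

A value of 1.0 means every occupied cell contains samples of a single class; memory use grows with the number of occupied cells rather than with the full Cartesian product.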

4 Gaussians in 8D
Artificial data: set of 4 Gaussians in 8D, 1000 points per Gaussian, each as a separate class.
Dimensions 1-4, independent, Gaussians centered at: (0, 0, 0, 0), (2, 1, 0.5, 0.25), (4, 2, 1, 0.5), (6, 3, 1.5, 0.75).
Ranking and overlapping strength are inversely related. Ranking: X1 X2 X3 X4.
Attributes Xi+4 = 2Xi + uniform noise ±1.5.
Best ranking: X1 X5 X2 X6 X3 X7 X4 X8
Best selection: X1 X2 X3 X4 X5 X6 X7 X8

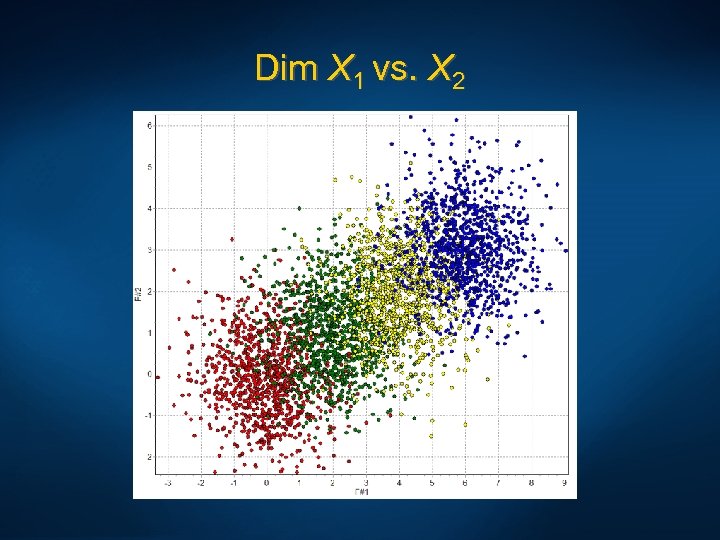
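A data set of this kind can be generated as follows. The slide does not state the variance of the Gaussians, so unit standard deviation is an assumption here, as are the function name `make_gaussians_8d`, the `sigma` parameter and the random seed.

```python
import numpy as np

def make_gaussians_8d(n_per_class=1000, sigma=1.0, seed=0):
    """4 Gaussian classes in 8D; sigma=1.0 is an assumption, not from the slides."""
    rng = np.random.default_rng(seed)
    centers = np.array([
        [0.0, 0.0, 0.0, 0.0],
        [2.0, 1.0, 0.5, 0.25],
        [4.0, 2.0, 1.0, 0.5],
        [6.0, 3.0, 1.5, 0.75],
    ])
    # Dimensions 1-4: independent Gaussians around each class center.
    x_base = np.vstack([rng.normal(c, sigma, size=(n_per_class, 4)) for c in centers])
    # Dimensions 5-8: X_{i+4} = 2 X_i + uniform noise in [-1.5, 1.5).
    noise = rng.uniform(-1.5, 1.5, size=x_base.shape)
    X = np.hstack([x_base, 2.0 * x_base + noise])
    y = np.repeat(np.arange(4), n_per_class)
    return X, y
```

Calling `X, y = make_gaussians_8d()` returns an array of shape (4000, 8) and the matching class labels 0..3.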

Dim X1 vs. X2

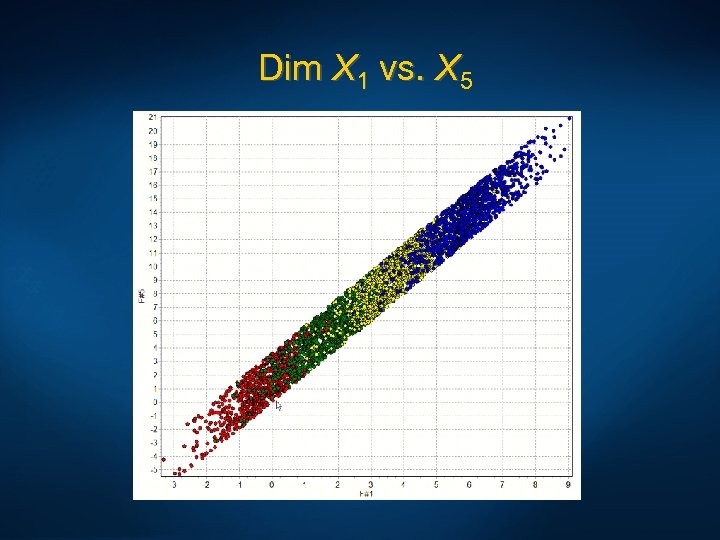

Dim X1 vs. X5

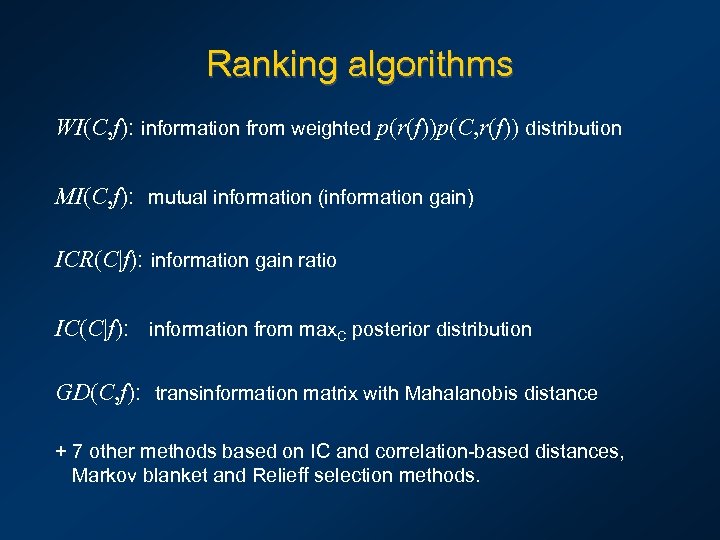

Ranking algorithms
• WI(C, f): information from the weighted p(r(f))p(C, r(f)) distribution
• MI(C, f): mutual information (information gain)
• IGR(C|f): information gain ratio
• IC(C|f): information from the max. C posterior distribution
• GD(C, f): transinformation matrix with Mahalanobis distance
• + 7 other methods based on IC and correlation-based distances, Markov blanket and ReliefF selection methods.

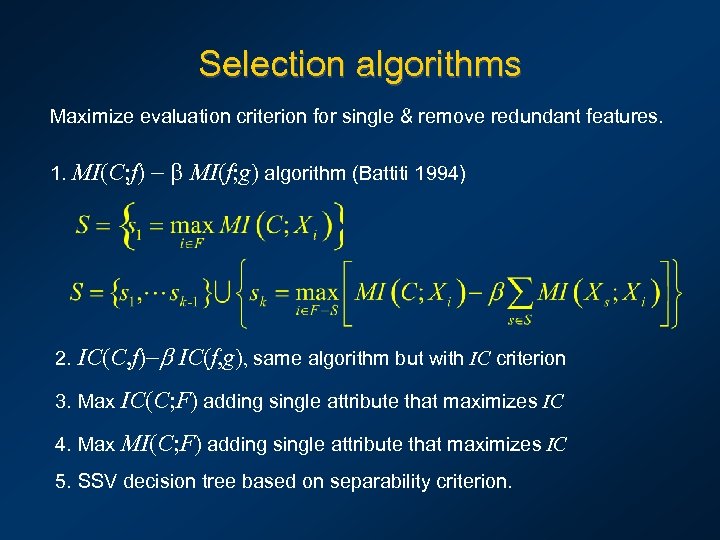

Selection algorithms
Maximize the evaluation criterion for single features & remove redundant features.
1. MI(C; f) - β MI(f; g) algorithm (Battiti 1994)
2. IC(C, f) - β IC(f, g), same algorithm but with the IC criterion
3. Max IC(C; F), adding the single attribute that maximizes IC
4. Max MI(C; F), adding the single attribute that maximizes MI
5. SSV decision tree based on the separability criterion.
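The first algorithm in this list can be sketched as a greedy loop: at each step, add the feature f with the largest MI(C; f) minus β times the summed MI(f; g) over the already selected features g. This is a minimal sketch on already-discretized features, not the authors' implementation; the names `mutual_information` and `mifs` and the default `beta=0.5` are assumptions for illustration.

```python
import numpy as np

def mutual_information(a, b):
    """MI in bits between two discrete sequences of equal length."""
    _, ai = np.unique(a, return_inverse=True)
    _, bi = np.unique(b, return_inverse=True)
    joint = np.zeros((ai.max() + 1, bi.max() + 1))
    np.add.at(joint, (ai, bi), 1)          # contingency counts
    joint /= joint.sum()
    pa = joint.sum(axis=1, keepdims=True)  # marginal of a, shape (n, 1)
    pb = joint.sum(axis=0, keepdims=True)  # marginal of b, shape (1, m)
    nz = joint > 0
    return float((joint[nz] * np.log2(joint[nz] / (pa @ pb)[nz])).sum())

def mifs(X, y, n_select, beta=0.5):
    """Greedy selection with score MI(C; f) - beta * sum_g MI(f; g)."""
    X = np.asarray(X)
    remaining = list(range(X.shape[1]))
    relevance = {j: mutual_information(X[:, j], y) for j in remaining}
    selected = []
    while remaining and len(selected) < n_select:
        def score(j):
            redundancy = sum(mutual_information(X[:, j], X[:, g]) for g in selected)
            return relevance[j] - beta * redundancy
        best = max(remaining, key=score)   # ties go to the lowest index
        selected.append(best)
        remaining.remove(best)
    return selected
```

With β = 0 the loop reduces to plain ranking by mutual information, while larger β penalizes features that duplicate those already chosen.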

Ranking for 8D Gaussians
Partitions of each attribute into 4, 8, 16, 24, 32 parts, with equal width.
• Methods that found perfect ranking: MI(C; f), IGR(C; f), WI(C, f), GD transinformation distance
• IC(f): correct, except for P8, where features 2-6 are reversed (6 is the noisy version of 2).
• Other, more sophisticated algorithms made more errors.
Selection for Gaussian distributions is rather easy using any evaluation measure. Simpler algorithms work better.

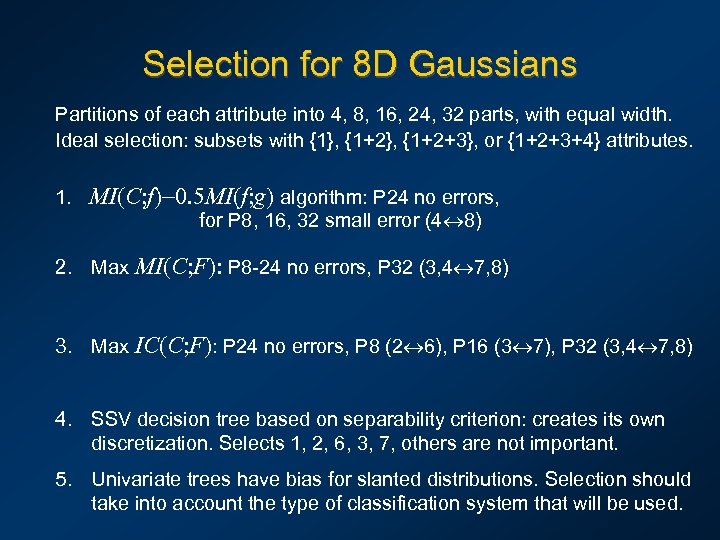

Selection for 8D Gaussians
Partitions of each attribute into 4, 8, 16, 24, 32 parts, with equal width.
Ideal selection: subsets with {1}, {1+2+3}, or {1+2+3+4} attributes.
1. MI(C; f) - 0.5 MI(f; g) algorithm: P24 no errors, for P8, P16, P32 small error (4 ↔ 8)
2. Max MI(C; F): P8-24 no errors, P32 (3, 4 ↔ 7, 8)
3. Max IC(C; F): P24 no errors, P8 (2 ↔ 6), P16 (3 ↔ 7), P32 (3, 4 ↔ 7, 8)
4. SSV decision tree based on the separability criterion: creates its own discretization. Selects 1, 2, 6, 3, 7; others are not important.
Univariate trees have a bias for slanted distributions.
Selection should take into account the type of classification system that will be used.

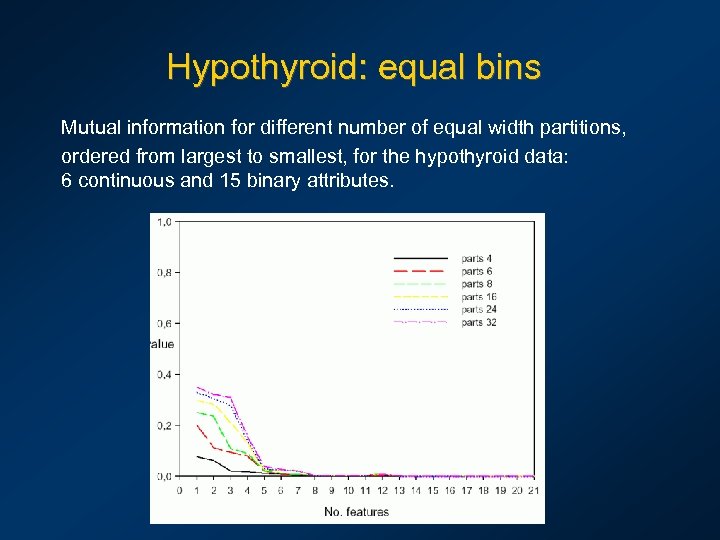

Hypothyroid: equal bins
Mutual information for different numbers of equal-width partitions, ordered from largest to smallest, for the hypothyroid data: 6 continuous and 15 binary attributes.

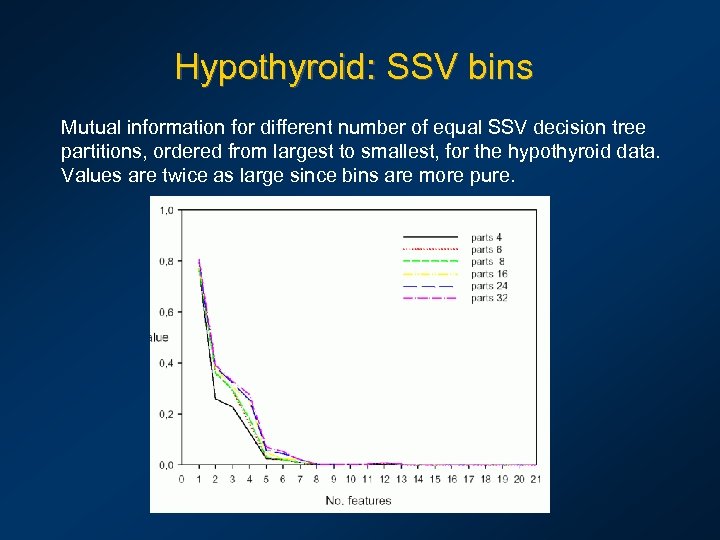

Hypothyroid: SSV bins
Mutual information for different numbers of SSV decision tree partitions, ordered from largest to smallest, for the hypothyroid data. Values are twice as large since the bins are purer.

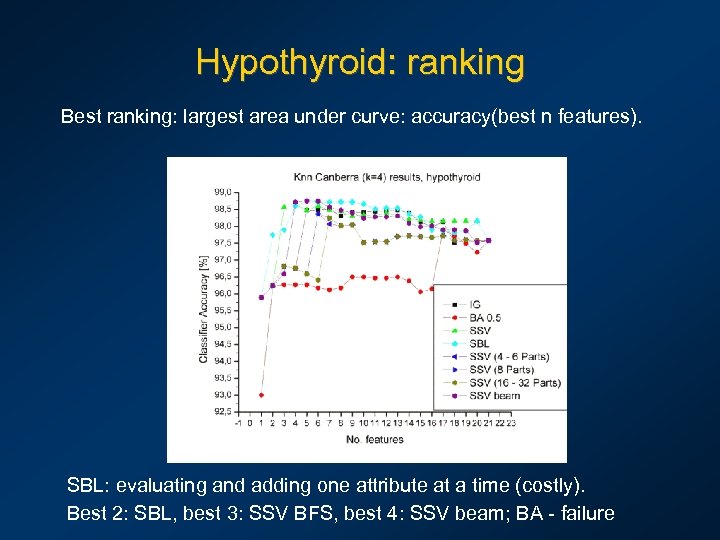

Hypothyroid: ranking
Best ranking: largest area under the curve accuracy(best n features).
SBL: evaluating and adding one attribute at a time (costly).
Best 2: SBL, best 3: SSV BFS, best 4: SSV beam; BA - failure

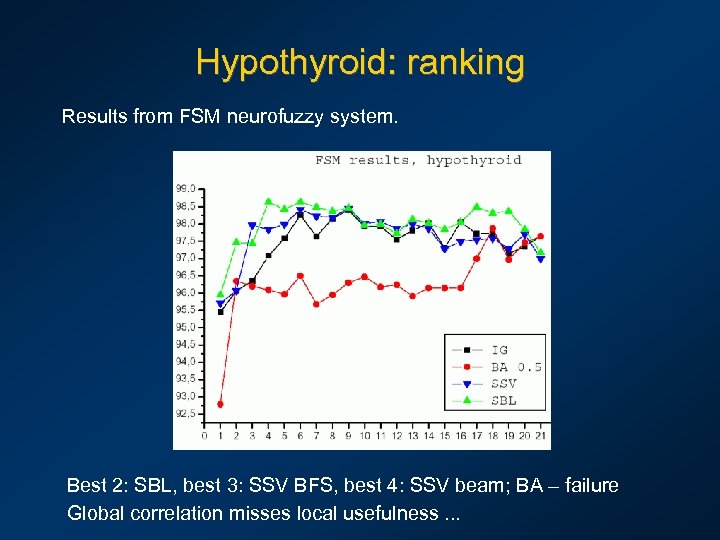

Hypothyroid: ranking
Results from the FSM neurofuzzy system.
Best 2: SBL, best 3: SSV BFS, best 4: SSV beam; BA - failure
Global correlation misses local usefulness...

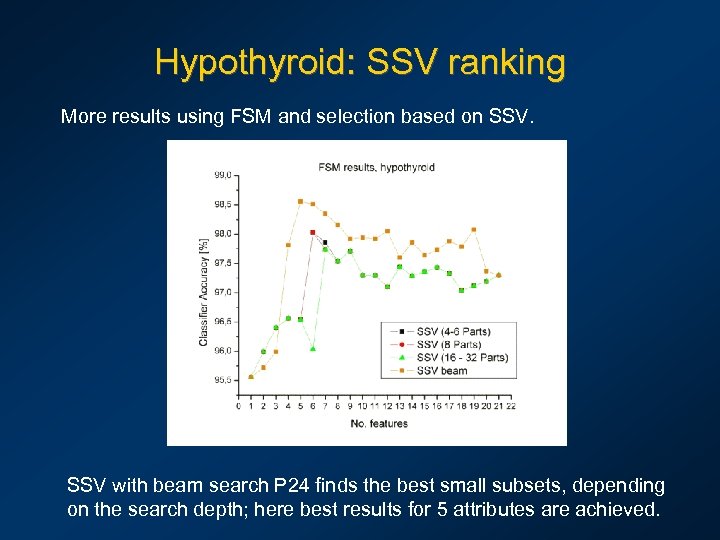

Hypothyroid: SSV ranking
More results using FSM and selection based on SSV with beam search. P24 finds the best small subsets, depending on the search depth; here the best results are achieved for 5 attributes.

Conclusions
About 20 ranking and selection methods have been checked.
• The actual feature evaluation index (information, consistency, correlation) is not so important.
• Discretization is very important; naive equi-width or equidistance discretization may give unpredictable results; entropy-based discretization is fine, but separability-based is less expensive.
• Continuous kernel-based approximations to the calculation of feature evaluation indices are a useful alternative.
• Ranking is easy if global evaluation is sufficient, but different sets of features may be important for separation of different classes, and some are important in small regions only (cf. decision trees).
• Selection requires calculation of multidimensional evaluation indices, done effectively using hashing techniques.
• Local selection and ranking is the most promising technique.

Open questions
Is the best selection method based on filters possible? Perhaps it depends on the ability of different methods to use the information contained in selected attributes.
• Discretization or kernel estimation?
• Best discretization: Vopt histograms, entropy, separability?
• How useful is fuzzy partitioning?
• Use of feature weighting from ranking/selection to scale input data.
• How to make an evaluation index that includes local information?
• How to use selection methods to find combinations of attributes?
These and other ranking/selection methods will be integrated into the GhostMiner data mining package.
Google: GhostMiner
