e5f221c01792e7f26e0aafee6cc1888f.ppt

- Number of slides: 36

Extracting More ILP (CS/EE 5810, CS/EE 6810; F 00)

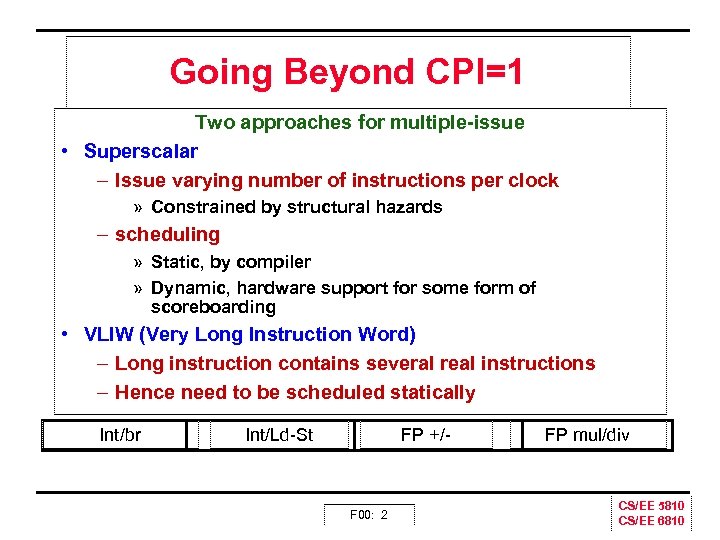

Going Beyond CPI=1

Two approaches for multiple issue:
- Superscalar
  - Issue a varying number of instructions per clock
    - Constrained by structural hazards
  - Scheduling
    - Static, by the compiler
    - Dynamic, with hardware support for some form of scoreboarding
- VLIW (Very Long Instruction Word)
  - A long instruction contains several real instructions
  - Hence they need to be scheduled statically

(Figure: VLIW instruction word with slots Int/br, Int/Ld-St, FP +/-, FP mul/div)

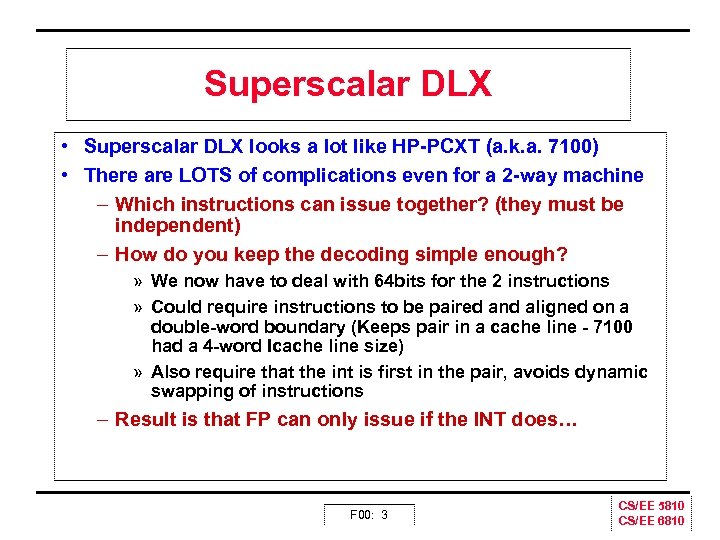

Superscalar DLX

- Superscalar DLX looks a lot like the HP PCX-T (a.k.a. the PA-7100)
- There are LOTS of complications even for a 2-way machine
  - Which instructions can issue together? (They must be independent.)
  - How do you keep the decoding simple enough?
    - We now have to deal with 64 bits for the 2 instructions
    - Could require instructions to be paired and aligned on a double-word boundary (this keeps the pair in one cache line; the 7100 had a 4-word I-cache line)
    - Also require that the integer instruction is first in the pair, which avoids dynamic swapping of instructions
  - As a result, the FP instruction can only issue if the integer instruction does…

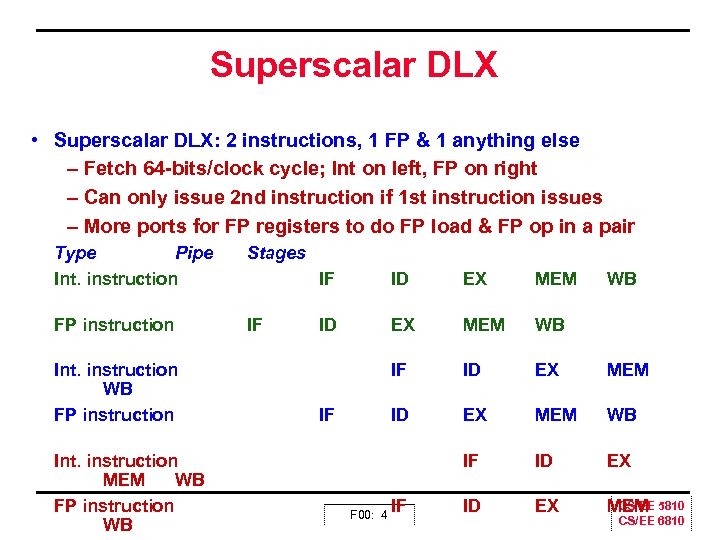

Superscalar DLX

- Superscalar DLX: 2 instructions, 1 FP & 1 anything else
  - Fetch 64 bits/clock cycle; Int on left, FP on right
  - Can only issue the 2nd instruction if the 1st instruction issues
  - More ports for FP registers to do an FP load & an FP op in a pair

Pipe stages by clock cycle:

| Type | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|
| Int. instruction | IF | ID | EX | MEM | WB | | |
| FP instruction | IF | ID | EX | MEM | WB | | |
| Int. instruction | | IF | ID | EX | MEM | WB | |
| FP instruction | | IF | ID | EX | MEM | WB | |
| Int. instruction | | | IF | ID | EX | MEM | WB |
| FP instruction | | | IF | ID | EX | MEM | WB |
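
The issue rule on this slide can be sketched as a check applied to each aligned 64-bit pair. This is a minimal sketch under stated assumptions, not the 7100's actual decode logic. The `Instr` record, its `is_fp` flag, and the register-name independence test are hypothetical. An FP load such as `LD F0` counts as an integer-slot instruction that writes an FP register.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass(frozen=True)
class Instr:
    op: str
    is_fp: bool                  # hypothetical flag: True if it goes to the FP pipe
    dest: Optional[str] = None   # destination register, if any
    srcs: Tuple[str, ...] = ()   # source registers

def issue_pair(left: Instr, right: Instr, left_can_issue: bool = True) -> int:
    """Return how many instructions of an aligned pair issue this cycle (0, 1 or 2).

    Assumed rules, following the slide: the integer slot is on the left and the
    FP slot is on the right. The right instruction issues only if the left one
    issues and the two are independent.
    """
    if not left_can_issue:
        return 0                 # the 2nd instruction can only issue if the 1st does
    if left.is_fp or not right.is_fp:
        return 1                 # not in int-left / FP-right form: issue the left one alone
    if left.dest is not None and left.dest in right.srcs:
        return 1                 # RAW dependence inside the pair
    if left.dest is not None and left.dest == right.dest:
        return 1                 # WAW inside the pair
    return 2

ld = Instr("LD", False, "F0", ("R1",))            # LD   F0, 0(R1)
add_dep = Instr("ADDD", True, "F4", ("F0", "F2"))  # ADDD F4, F0, F2 (needs F0)
add_ind = Instr("ADDD", True, "F8", ("F6", "F2"))  # ADDD F8, F6, F2 (independent)
print(issue_pair(ld, add_dep), issue_pair(ld, add_ind))
```

An FP load followed by an FP add that consumes it cannot dual-issue. Pairing the load with an independent add can.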

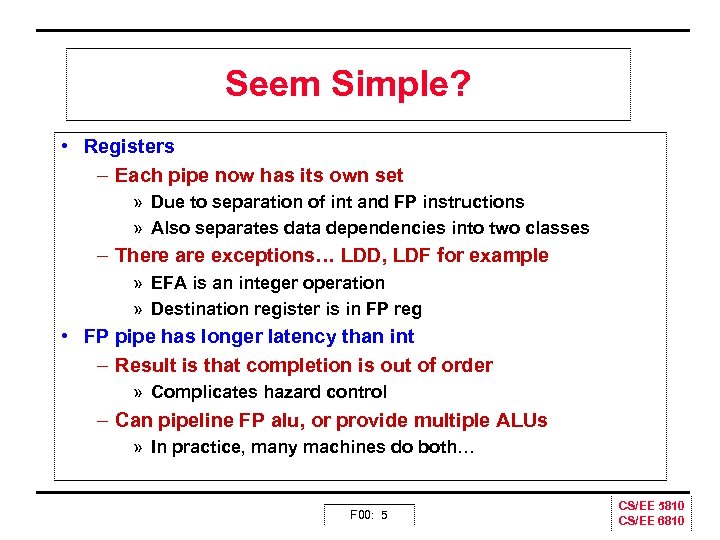

Seem Simple?

- Registers
  - Each pipe now has its own set
    - Due to the separation of int and FP instructions
    - Also separates data dependences into two classes
  - There are exceptions… LDD and LDF, for example
    - The effective-address (EFA) calculation is an integer operation
    - The destination register is an FP register
- The FP pipe has a longer latency than the int pipe
  - As a result, completion is out of order
    - This complicates hazard control
  - Can pipeline the FP ALU, or provide multiple ALUs
    - In practice, many machines do both…

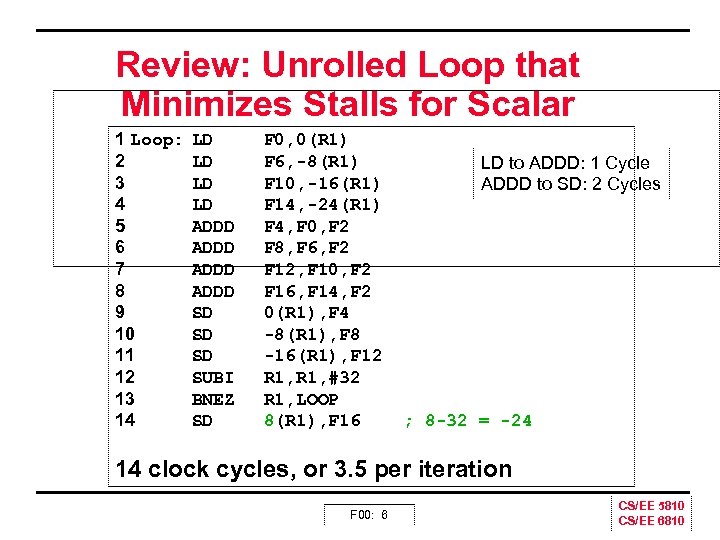

Review: Unrolled Loop that Minimizes Stalls for Scalar

| Clock | Instruction | Comment |
|---|---|---|
| 1 | Loop: LD F0, 0(R1) | |
| 2 | LD F6, -8(R1) | |
| 3 | LD F10, -16(R1) | |
| 4 | LD F14, -24(R1) | |
| 5 | ADDD F4, F0, F2 | |
| 6 | ADDD F8, F6, F2 | |
| 7 | ADDD F12, F10, F2 | |
| 8 | ADDD F16, F14, F2 | |
| 9 | SD 0(R1), F4 | |
| 10 | SD -8(R1), F8 | |
| 11 | SD -16(R1), F12 | |
| 12 | SUBI R1, #32 | |
| 13 | BNEZ R1, LOOP | |
| 14 | SD 8(R1), F16 | 8 - 32 = -24 |

- Latencies: LD to ADDD: 1 cycle; ADDD to SD: 2 cycles
- 14 clock cycles, or 3.5 per iteration
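
One way to confirm that the schedule above has no stalls is to check every producer/consumer pair against the two latencies on the slide. This is a sketch under stated assumptions. Each latency is read as the number of cycles that must separate producer and consumer, so a consumer issues at least latency + 1 cycles later. Only those two latencies are modeled, and memory offsets and the branch delay slot are ignored. The names `EXTRA_STALLS`, `SCHEDULE` and `respects_latencies` are hypothetical.

```python
# Required separation between a producer and its consumer, beyond 1 cycle.
EXTRA_STALLS = {("LD", "ADDD"): 1, ("ADDD", "SD"): 2}

# (clock, opcode, destination, sources) for the 4x unrolled loop above.
SCHEDULE = [
    (1, "LD", "F0", ["R1"]), (2, "LD", "F6", ["R1"]),
    (3, "LD", "F10", ["R1"]), (4, "LD", "F14", ["R1"]),
    (5, "ADDD", "F4", ["F0", "F2"]), (6, "ADDD", "F8", ["F6", "F2"]),
    (7, "ADDD", "F12", ["F10", "F2"]), (8, "ADDD", "F16", ["F14", "F2"]),
    (9, "SD", None, ["R1", "F4"]), (10, "SD", None, ["R1", "F8"]),
    (11, "SD", None, ["R1", "F12"]), (12, "SUBI", "R1", ["R1"]),
    (13, "BNEZ", None, ["R1"]), (14, "SD", None, ["R1", "F16"]),
]

def respects_latencies(schedule, extra_stalls) -> bool:
    """True if every instruction issues late enough after the producers of its sources."""
    producer = {}  # register -> (clock, opcode) of its most recent writer
    for clock, op, dest, srcs in sorted(schedule, key=lambda t: t[0]):
        for reg in srcs:
            if reg in producer:
                p_clock, p_op = producer[reg]
                if clock - p_clock < extra_stalls.get((p_op, op), 0) + 1:
                    return False
        if dest is not None:
            producer[dest] = (clock, op)
    return True

clocks = max(clock for clock, *_ in SCHEDULE)
print(respects_latencies(SCHEDULE, EXTRA_STALLS), clocks / 4)
```

Moving an ADDD right after the LD it depends on makes the check fail. That is the stall which unrolling and scheduling remove.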

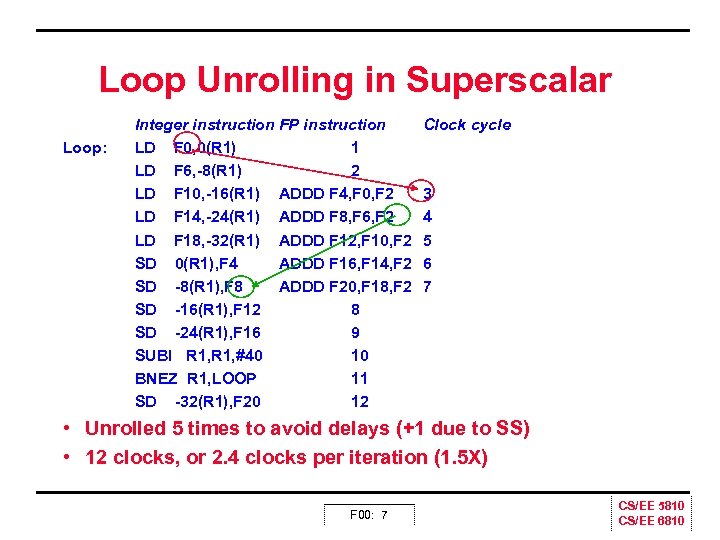

Loop Unrolling in Superscalar

| Clock | Integer instruction | FP instruction |
|---|---|---|
| 1 | Loop: LD F0, 0(R1) | |
| 2 | LD F6, 8(R1) | |
| 3 | LD F10, 16(R1) | ADDD F4, F0, F2 |
| 4 | LD F14, 24(R1) | ADDD F8, F6, F2 |
| 5 | LD F18, 32(R1) | ADDD F12, F10, F2 |
| 6 | SD 0(R1), F4 | ADDD F16, F14, F2 |
| 7 | SD 8(R1), F8 | ADDD F20, F18, F2 |
| 8 | SD 16(R1), F12 | |
| 9 | SD 24(R1), F16 | |
| 10 | SUBI R1, #40 | |
| 11 | BNEZ R1, LOOP | |
| 12 | SD 32(R1), F20 | |

- Unrolled 5 times to avoid delays (+1 due to SS)
- 12 clocks, or 2.4 clocks per iteration (1.5X)

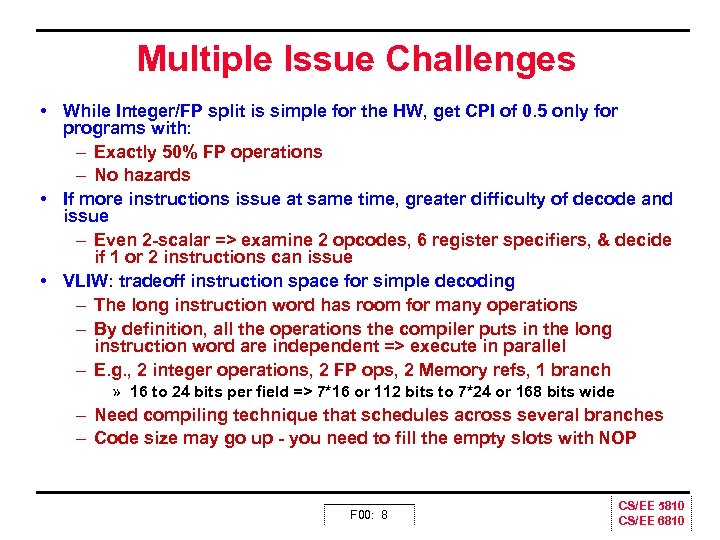

Multiple Issue Challenges

- While the Integer/FP split is simple for the HW, it gets a CPI of 0.5 only for programs with:
  - Exactly 50% FP operations
  - No hazards
- If more instructions issue at the same time, decode and issue become more difficult
  - Even a 2-way superscalar must examine 2 opcodes and 6 register specifiers, and decide whether 1 or 2 instructions can issue
- VLIW: trade off instruction space for simple decoding
  - The long instruction word has room for many operations
  - By definition, all the operations the compiler puts in the long instruction word are independent => they execute in parallel
  - E.g., 2 integer operations, 2 FP ops, 2 memory refs, 1 branch
    - 16 to 24 bits per field => 7*16 = 112 bits to 7*24 = 168 bits wide
  - Need a compiling technique that schedules across several branches
  - Code size may go up: empty slots must be filled with NOPs
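
The two pieces of arithmetic on this slide can be sketched directly. The first is why the Integer/FP split reaches CPI 0.5 only at an exact 50/50 mix. The second is the width of the 7-field VLIW word. The sketch idealizes pairing: it assumes every instruction of the smaller class finds a partner and no hazards occur. The function names are hypothetical.

```python
def dual_issue_cpi(n_int: int, n_fp: int) -> float:
    """Best-case CPI of a 1 integer + 1 FP dual-issue pipeline with no hazards.

    Assumes perfect pairing, so the cycle count is set by the larger class.
    """
    total = n_int + n_fp
    return max(n_int, n_fp) / total

def vliw_word_bits(fields: int, bits_per_field: int) -> int:
    """Width of a long instruction word with one fixed-size field per operation slot."""
    return fields * bits_per_field

# The slide's example: 2 integer ops, 2 FP ops, 2 memory refs, 1 branch = 7 fields.
FIELDS = 2 + 2 + 2 + 1

print(dual_issue_cpi(50, 50), dual_issue_cpi(80, 20))
print(vliw_word_bits(FIELDS, 16), vliw_word_bits(FIELDS, 24))
```

With only 20% FP instructions, the integer slot is busy every cycle and the FP slot sits idle most of the time. The best case is then CPI 0.8 rather than 0.5.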

Superscalar in Practice?

- Test example: scalar vector sum
  - Shows a 50% improvement over scheduled single issue
- HP 7100 experience
  - Getting simple 2-way issue right is not too hard
  - Most applications show a 50% to 70% speedup
  - No applications slow down
    - However, very branchy code doesn't speed up much
  - What did slow down?
    - The compiler! Increased compiler complexity…
- Still, basically a win
  - Got 1/2 to 3/4 of the ideal speedup
  - Too bad the benefits diminish with increased issue width…

VLIW History Snippet

Go back to the early '80s…
- Cydrome
  - Bob Rau, VLIW compiler guy from U of Illinois, is chief architect
- Multiflow
  - Josh Fisher, VLIW compiler guy from Yale, is chief architect
- Both went after the minisupercomputer market
  - Minisuper = Cray-like performance for a lot less money
  - The market segment disappeared before the machines were ready
- Rau and Fisher both went to work for HP
  - HP develops PA-WW and then forms a partnership with Intel
- The result is the VLIW Merced (Itanium, IA-64, EPIC)

Intel/HP “Explicitly Parallel Instruction Computing (EPIC)”

- 3 instructions in 128-bit “groups”; a field determines whether the instructions are dependent or independent
  - Smaller code size than old VLIW, larger than x86/RISC
  - Groups can be linked to show independence across > 3 instructions
- 64 integer registers + 64 floating-point registers
  - Not separate files per functional unit as in old VLIW
- Hardware checks dependences (interlocks => binary compatibility over time)
- Predicated execution (select 1 out of 64 1-bit flags) => 40% fewer mispredictions?
- IA-64: name of the instruction set architecture; EPIC is the type
- Merced is the internal name of the first implementation (1999)
- Itanium is the name of the first chip you can buy
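
Predicated execution, listed on this slide, removes a branch by guarding each instruction with a 1-bit flag. The sketch below models that idea. Every guarded operation executes, but it writes its destination only if its predicate is true. The `(predicate, destination, operation)` encoding and the register names are hypothetical, loosely modeled on IA-64, where predicate p0 is hardwired to true. This is not real IA-64 syntax.

```python
from typing import Callable, Dict, List, Tuple

Regs = Dict[str, object]
# (qualifying predicate, destination, operation): a hypothetical encoding.
Op = Tuple[str, str, Callable[[Regs], object]]

def run_predicated(ops: List[Op], regs: Regs) -> Regs:
    """Execute ops in order; an op whose predicate is False is squashed (no write-back)."""
    for pred, dest, fn in ops:
        value = fn(regs)      # every op executes...
        if regs[pred]:        # ...but only commits if its predicate is true
            regs[dest] = value
    return regs

# if (a > b) r = a - b; else r = b - a;   after if-conversion, with no branch:
ABS_DIFF: List[Op] = [
    ("p0", "p1", lambda r: r["a"] > r["b"]),        # compare sets p1...
    ("p0", "p2", lambda r: not (r["a"] > r["b"])),  # ...and its complement p2
    ("p1", "r", lambda r: r["a"] - r["b"]),         # then-arm, guarded by p1
    ("p2", "r", lambda r: r["b"] - r["a"]),         # else-arm, guarded by p2
]

def abs_diff(a: int, b: int) -> int:
    regs: Regs = {"p0": True, "p1": False, "p2": False, "a": a, "b": b, "r": 0}
    return run_predicated(ABS_DIFF, regs)["r"]

print(abs_diff(3, 7), abs_diff(9, 2))
```

The price is that both arms always occupy issue slots. The gain is that no branch remains to be mispredicted.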

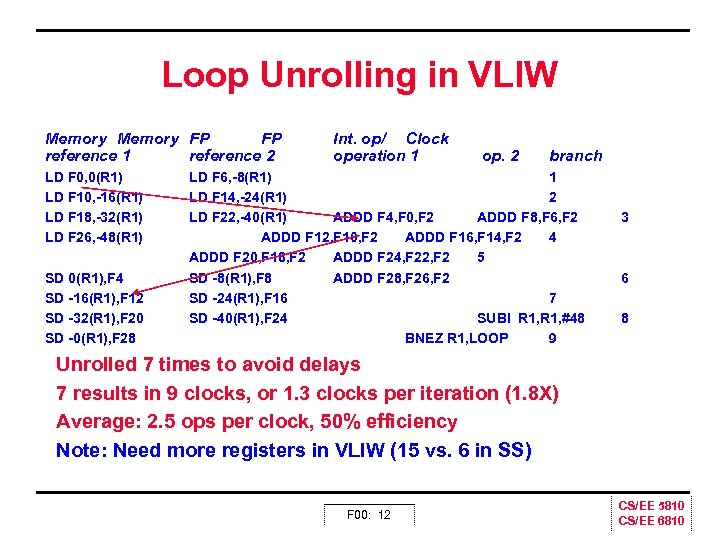

Loop Unrolling in VLIW

| Clock | Memory reference 1 | Memory reference 2 | FP operation 1 | FP op. 2 | Int. op/branch |
|---|---|---|---|---|---|
| 1 | LD F0, 0(R1) | LD F6, 8(R1) | | | |
| 2 | LD F10, 16(R1) | LD F14, 24(R1) | | | |
| 3 | LD F18, 32(R1) | LD F22, 40(R1) | ADDD F4, F0, F2 | ADDD F8, F6, F2 | |
| 4 | LD F26, 48(R1) | | ADDD F12, F10, F2 | ADDD F16, F14, F2 | |
| 5 | | | ADDD F20, F18, F2 | ADDD F24, F22, F2 | |
| 6 | SD 0(R1), F4 | SD 8(R1), F8 | ADDD F28, F26, F2 | | |
| 7 | SD 16(R1), F12 | SD 24(R1), F16 | | | |
| 8 | SD 32(R1), F20 | SD 40(R1), F24 | | | SUBI R1, #48 |
| 9 | SD 0(R1), F28 | | | | BNEZ R1, LOOP |

- Unrolled 7 times to avoid delays
- 7 results in 9 clocks, or 1.3 clocks per iteration (1.8X)
- Average: 2.5 ops per clock, 50% efficiency
- Note: Need more registers in VLIW (15 vs. 6 in SS)
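
The summary figures for the three schedules can be recomputed from the clock counts and the VLIW table. The slide quotes these values approximately. The per-row operation counts below were read off the table: memory refs plus FP ops plus int/branch ops in each long instruction. The slot count assumes the table's five columns. Exact fractions avoid rounding until the final print.

```python
from fractions import Fraction

# Operations issued in each long instruction (clocks 1-9), counted from the VLIW table.
VLIW_OPS_PER_CLOCK = [2, 2, 4, 3, 2, 3, 2, 3, 2]
SLOTS_PER_WORD = 5     # 2 memory refs + 2 FP ops + 1 int op/branch
VLIW_ITERATIONS = 7    # original iterations per unrolled loop body

scalar = Fraction(14, 4)        # scalar: 14 clocks for 4 iterations
superscalar = Fraction(12, 5)   # superscalar: 12 clocks for 5 iterations
vliw = Fraction(len(VLIW_OPS_PER_CLOCK), VLIW_ITERATIONS)   # 9 clocks for 7 iterations

ops = sum(VLIW_OPS_PER_CLOCK)   # 7 LD + 7 ADDD + 7 SD + SUBI + BNEZ
ops_per_clock = Fraction(ops, len(VLIW_OPS_PER_CLOCK))
slot_efficiency = Fraction(ops, SLOTS_PER_WORD * len(VLIW_OPS_PER_CLOCK))

print(f"clocks/iteration: scalar {float(scalar):.2f}, "
      f"SS {float(superscalar):.2f}, VLIW {float(vliw):.2f}")
print(f"speedup: SS over scalar {float(scalar / superscalar):.2f}, "
      f"VLIW over SS {float(superscalar / vliw):.2f}")
print(f"VLIW: {float(ops_per_clock):.2f} ops/clock, {float(slot_efficiency):.0%} of slots used")
```

The efficiency figure makes the cost of static scheduling explicit. About half of the 45 slots in the 9 long instructions hold NOPs, even in a loop that unrolls well.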

Advantages of HW (Tomasulo) vs. SW (VLIW) Speculation

- HW determines address conflicts
- HW has better branch prediction
- HW maintains a precise exception model
- HW does not execute bookkeeping instructions
- Works across multiple implementations
- SW speculation is much easier for HW design

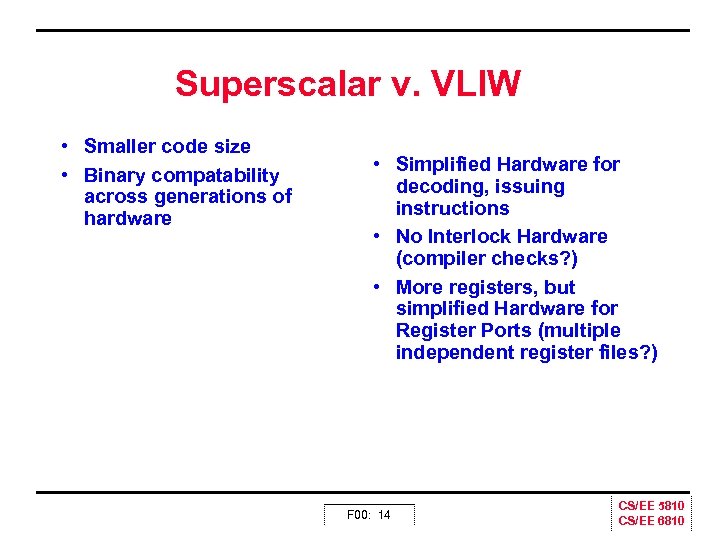

Superscalar v. VLIW

- Superscalar:
  - Smaller code size
  - Binary compatibility across generations of hardware
- VLIW:
  - Simplified hardware for decoding and issuing instructions
  - No interlock hardware (compiler checks?)
  - More registers, but simplified hardware for register ports (multiple independent register files?)

Multi-Issue Dynamic Scheduling

- Scoreboarding required
  - Let's pick the dataflow model = Tomasulo
  - Issue and let the reservation stations sort things out
  - But we still can't issue a dependent pair in the same cycle
- 2 options for fixing the dependent-pair problem
  - Pipeline the IF/ID stage and run it twice as fast as EX
    - Not too hard, since IF and ID are pretty simple
    - Not true for a CISC like x86
  - Decoupling
    - Provide queues for the destinations of loads, moves, stores
    - Sort of a virtual register/renaming approach
    - The scoreboard will become more complex
    - But performance will likely go up

Dynamic Scheduling in Superscalar

- How do we issue two instructions and keep in-order instruction issue for Tomasulo?
  - Assume 1 integer + 1 floating point
  - 1 Tomasulo control for integer, 1 for floating point
- Issue at 2X the clock rate, so that issue remains in order
- Only FP loads might cause a dependence between integer and FP issue:
  - Replace the load reservation station with a load queue; operands must be read in the order they are fetched
  - A load checks addresses in the Store Queue to avoid a RAW violation
  - A store checks addresses in the Load Queue to avoid WAR, WAW
  - Called a “decoupled architecture”
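
The load-side address check on this slide can be sketched as a search of the store queue before a load reads memory. This is a minimal sketch under stated assumptions. The class and method names are hypothetical. Forwarding the value from a matching store whose data is ready is an extra assumption: the slide only requires detecting the RAW case. The store-side check against the load queue is not shown.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

@dataclass
class StoreEntry:
    addr: int
    value: Optional[float]   # None until the store's data has been produced

@dataclass
class LoadStoreQueues:
    """Hypothetical model of the decoupled load/store queues described above."""
    memory: Dict[int, float] = field(default_factory=dict)
    store_queue: List[StoreEntry] = field(default_factory=list)  # program order, oldest first

    def load(self, addr: int) -> Tuple[str, Optional[float]]:
        """Return ("stall", None), ("forward", v) or ("memory", v).

        An earlier, not yet retired store to the same address is a RAW hazard
        through memory. The youngest matching store is the one the load must see.
        """
        for entry in reversed(self.store_queue):
            if entry.addr == addr:
                if entry.value is None:
                    return ("stall", None)      # wait for the store's data
                return ("forward", entry.value)  # assumption: store-to-load forwarding
        return ("memory", self.memory.get(addr, 0.0))

q = LoadStoreQueues(memory={0: 1.0, 8: 2.0})
q.store_queue.append(StoreEntry(addr=8, value=None))
print(q.load(0), q.load(8))
```

A load to an unrelated address proceeds immediately. A load to the address of a pending store must wait.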

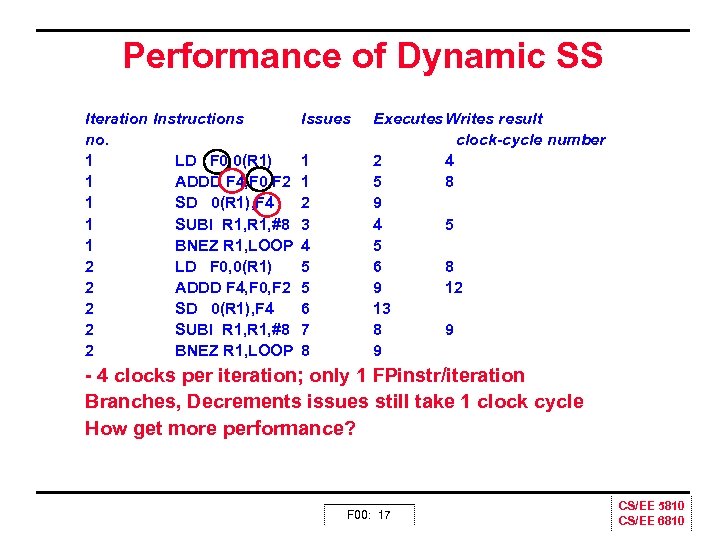

Performance of Dynamic SS

| Iteration no. | Instructions | Issues | Executes | Writes result |
|---|---|---|---|---|
| 1 | LD F0, 0(R1) | 1 | 2 | 4 |
| 1 | ADDD F4, F0, F2 | 1 | 5 | 8 |
| 1 | SD 0(R1), F4 | 2 | 9 | |
| 1 | SUBI R1, #8 | 3 | 4 | 5 |
| 1 | BNEZ R1, LOOP | 4 | 5 | |
| 2 | LD F0, 0(R1) | 5 | 6 | 8 |
| 2 | ADDD F4, F0, F2 | 5 | 9 | 12 |
| 2 | SD 0(R1), F4 | 6 | 13 | |
| 2 | SUBI R1, #8 | 7 | 8 | 9 |
| 2 | BNEZ R1, LOOP | 8 | 9 | |

(All times are clock-cycle numbers.)

- 4 clocks per iteration; only 1 FP instruction per iteration
- Branches and decrements still take 1 issue clock cycle each
- How do we get more performance?

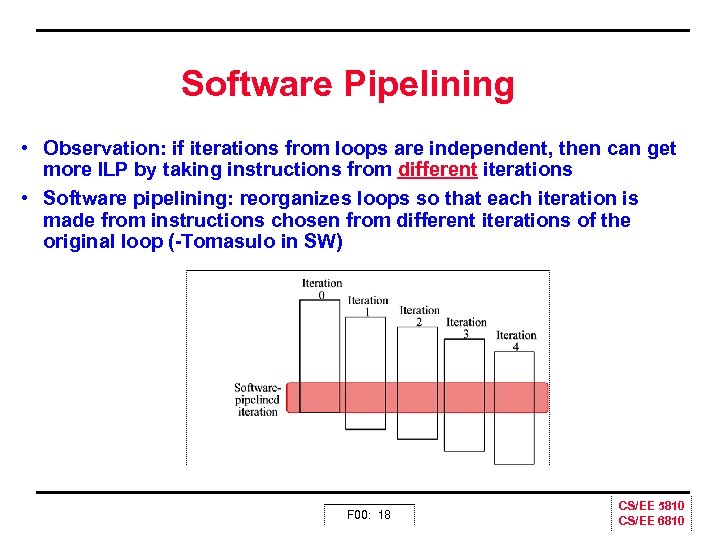

Software Pipelining

- Observation: if loop iterations are independent, we can get more ILP by taking instructions from different iterations
- Software pipelining: reorganizes loops so that each iteration is made from instructions chosen from different iterations of the original loop (Tomasulo in SW)

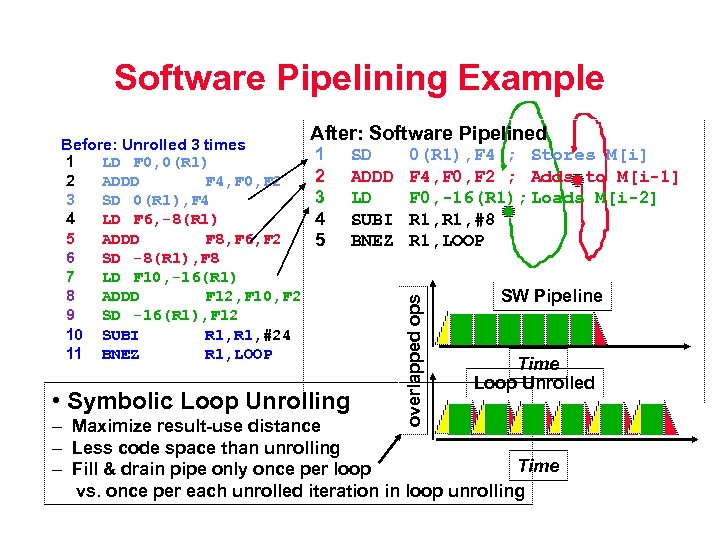

Software Pipelining Example

Before: unrolled 3 times

| # | Instruction |
|---|---|
| 1 | LD F0, 0(R1) |
| 2 | ADDD F4, F0, F2 |
| 3 | SD 0(R1), F4 |
| 4 | LD F6, -8(R1) |
| 5 | ADDD F8, F6, F2 |
| 6 | SD -8(R1), F8 |
| 7 | LD F10, -16(R1) |
| 8 | ADDD F12, F10, F2 |
| 9 | SD -16(R1), F12 |
| 10 | SUBI R1, #24 |
| 11 | BNEZ R1, LOOP |

After: software pipelined

| # | Instruction | Comment |
|---|---|---|
| 1 | SD 0(R1), F4 | Stores M[i] |
| 2 | ADDD F4, F0, F2 | Adds to M[i-1] |
| 3 | LD F0, -16(R1) | Loads M[i-2] |
| 4 | SUBI R1, #8 | |
| 5 | BNEZ R1, LOOP | |

- Symbolic loop unrolling
  - Maximizes result-use distance
  - Less code space than unrolling
  - Fill & drain the pipe only once per loop vs. once per unrolled iteration in loop unrolling

(Figure: overlapped ops over time, SW-pipelined loop vs. unrolled loop)
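
The software-pipelined loop body can be mirrored in a short sketch that adds a scalar to every array element. Each steady-state pass stores element i, adds element i-1 and loads element i-2, walking downward as R1 does. A prologue starts the first two iterations and an epilogue finishes the last two. A Python list stands in for memory, `f0` and `f4` play the roles of F0 and F4, and the function names are hypothetical. The sketch assumes at least two elements.

```python
def add_scalar_plain(x, s):
    """Reference version: x[i] = x[i] + s for every element."""
    return [v + s for v in x]

def add_scalar_sw_pipelined(x, s):
    """Software-pipelined x[i] = x[i] + s, mirroring the SD / ADDD / LD loop body.

    Assumes len(x) >= 2 so the prologue can start two iterations.
    """
    x = list(x)          # work on a copy ("memory")
    i = len(x) - 1
    # Prologue: LD and ADDD for element i, LD for element i-1.
    f0 = x[i]
    f4 = f0 + s
    f0 = x[i - 1]
    # Steady state: one store, one add and one load from three different iterations.
    while i - 2 >= 0:
        x[i] = f4        # SD   stores M[i]
        f4 = f0 + s      # ADDD adds to M[i-1] (reads F0 before the load overwrites it)
        f0 = x[i - 2]    # LD   loads M[i-2]
        i -= 1
    # Epilogue: drain the two iterations still in flight.
    x[i] = f4
    x[i - 1] = f0 + s
    return x

print(add_scalar_sw_pipelined([1.0, 2.0, 3.0, 4.0], 0.5))
```

As in the DLX version, the add reads F0 before the next load overwrites it, and the store writes F4 before the next add replaces it. No extra registers are needed beyond those of the original loop.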

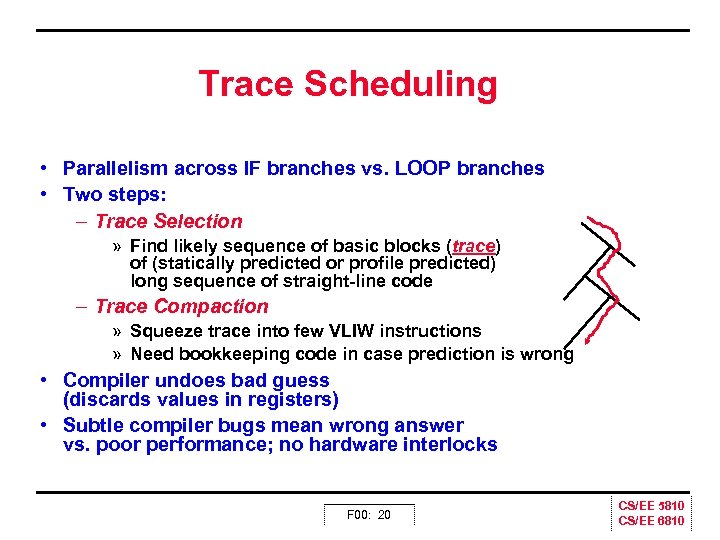

Trace Scheduling

- Parallelism across IF branches vs. LOOP branches
- Two steps:
  - Trace selection
    - Find a likely sequence of basic blocks (a trace) forming a long sequence of straight-line code, using static or profile-based prediction
  - Trace compaction
    - Squeeze the trace into few VLIW instructions
    - Need bookkeeping code in case the prediction is wrong
- The compiler undoes a bad guess (discards values in registers)
- Subtle compiler bugs mean wrong answers rather than just poor performance; there are no hardware interlocks
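
The trace-selection step can be sketched with a deliberately simple greedy heuristic. It starts at a block and keeps following the successor edge with the highest profile count until it reaches a block with no successors or one already on the trace. This is an assumed, simplified heuristic, not the selection rule of any particular compiler. The CFG and its profile counts are hypothetical. Compaction and bookkeeping code are not shown.

```python
from typing import Dict, List

def select_trace(succ: Dict[str, Dict[str, int]], start: str) -> List[str]:
    """Greedy trace selection over a CFG with profile edge counts.

    `succ` maps block -> {successor block: times the edge was taken}.
    Stops at a block with no successors or at a block already on the trace
    (for example, a loop back edge).
    """
    trace = [start]
    seen = {start}
    block = start
    while succ.get(block):
        nxt = max(succ[block], key=succ[block].get)   # most frequently taken edge
        if nxt in seen:
            break
        trace.append(nxt)
        seen.add(nxt)
        block = nxt
    return trace

# Hypothetical profile: an if-then-else (A -> B or C -> D) inside a loop (D -> A).
CFG = {
    "A": {"B": 90, "C": 10},
    "B": {"D": 90},
    "C": {"D": 10},
    "D": {"A": 95, "E": 5},
}
print(select_trace(CFG, "A"))
```

Block C falls off the trace. A compiler that compacts A, B and D into VLIW instructions must add bookkeeping code on the A-to-C and C-to-D paths for the 10% of executions that take them.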

Limitations on Multiple Issue

- How much ILP can be found in the application?
  - The most fundamental problem
  - Requires deep unrolling, hence the big focus on loops
    - Compiler complexity goes way up
    - Deep unrolling requires lots of registers (either real or renamed)
- Increased HW cost
  - More ports for the RF
  - Cost of scoreboarding and forwarding paths
  - Memory bandwidth goes way up: the biggie!
    - Most machines already have separate I and D ports
    - Some even have multiple D ports, a big expense!
  - Branch prediction HW is a MUST
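
The register cost of deep unrolling can be made concrete for the x[i] + s loop used throughout these slides. Each unrolled copy needs one register for the loaded value and one for the sum, plus one register shared by all copies for s (F2). That gives 2u + 1 FP registers for u copies. This matches the 9 registers of the 4x scalar listing and the 15 of the 7x VLIW listing. The limit of 16 double-precision registers assumes DLX's 32 FP registers are used as even/odd pairs, as the even-numbered names in the listings suggest. The function names are hypothetical.

```python
DOUBLE_FP_REGS = 16  # assumption: 32 DLX FP registers used as 16 double-precision pairs

def fp_registers_needed(unroll: int) -> int:
    """Double-precision FP registers for the x[i] + s loop unrolled `unroll` times
    with no register reuse: a load and a sum register per copy, plus one for s."""
    return 2 * unroll + 1

def max_unroll(available: int = DOUBLE_FP_REGS) -> int:
    """Largest unroll factor whose registers fit in `available` without renaming."""
    return (available - 1) // 2

for u in (4, 7, 8):
    print(u, fp_registers_needed(u))
print("max unroll:", max_unroll())
```

Under these assumptions, the 7x VLIW schedule already uses every double-precision register. Unrolling further would require reusing registers, which reintroduces name dependences, or hardware renaming.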

Limits to Multi-Issue Machines

- Inherent limitations of ILP
  - 1 branch in 5: how do you keep a 5-way VLIW busy?
  - Latencies of units: many operations must be scheduled
  - Need about (pipeline depth × number of functional units) independent operations
- Difficulties in building HW
  - Easy: more instruction bandwidth
  - Easy: duplicate FUs to get parallel execution
  - Hard: increase ports to the register file (bandwidth)
    - The VLIW example needs 7 read and 3 write ports for the integer registers & 5 read and 3 write ports for the FP registers
  - Harder: increase ports to memory (bandwidth)
  - Decoding superscalar instructions: impact on clock rate, pipeline depth?

Limits to Multi-Issue Machines

- Limitations specific to either the superscalar or the VLIW implementation
  - Decode/issue in superscalar: how wide is practical?
  - VLIW code size: unrolled loops + wasted fields in VLIW
    - IA-64 compresses dependent instructions, but code is still larger
  - VLIW lock step => 1 hazard & all instructions stall
    - IA-64 not lock step? Dynamic pipeline?
  - VLIW & binary compatibility: IA-64 promises binary compatibility

Limits to ILP

- Conflicting studies of the amount
  - Benchmarks (vectorized Fortran FP vs. integer C programs)
  - Hardware sophistication
  - Compiler sophistication
- How much ILP is available using existing mechanisms with increasing HW budgets?
- Do we need to invent new HW/SW mechanisms to stay on the processor performance curve?

Limits to ILP

Initial HW model here; MIPS compilers. Assumptions for an ideal/perfect machine to start:
1. Register renaming: infinite virtual registers, so all WAW & WAR hazards are avoided
2. Branch prediction: perfect; no mispredictions
3. Jump prediction: all jumps perfectly predicted => a machine with perfect speculation & an unbounded buffer of instructions available
4. Memory-address alias analysis: addresses are known & a store can be moved before a load provided the addresses are not equal

1-cycle latency for all instructions; unlimited number of instructions issued per clock cycle.
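
Under these assumptions, the IPC of the ideal machine for a given dynamic trace is simply the trace length divided by its longest chain of true (RAW) dependences. Renaming removes WAR/WAW, perfect prediction and alias analysis expose the whole trace, and each instruction issues one cycle after its latest producer. The sketch computes that bound. The trace encoding and the example loop trace are hypothetical illustrations, not the measurement tools behind the figures that follow.

```python
from typing import List, Optional, Sequence, Tuple

# One dynamic instruction: (destination register or None, source registers).
Instr = Tuple[Optional[str], Sequence[str]]

def ideal_ipc(trace: List[Instr]) -> float:
    """IPC of the ideal machine: only RAW dependences through registers limit issue,
    every instruction has a 1-cycle latency, and issue width is unlimited."""
    ready = {}       # register -> cycle in which its newest producer issued
    last_cycle = 0
    for dest, srcs in trace:
        cycle = 1 + max((ready.get(r, 0) for r in srcs), default=0)
        if dest is not None:
            ready[dest] = cycle      # renaming: later readers see only the newest value
        last_cycle = max(last_cycle, cycle)
    return len(trace) / last_cycle if trace else 0.0

def loop_trace(iterations: int) -> List[Instr]:
    """Dynamic trace of the non-unrolled x[i] = x[i] + s loop."""
    body: List[Instr] = [
        ("F0", ["R1"]),          # LD   F0, 0(R1)
        ("F4", ["F0", "F2"]),    # ADDD F4, F0, F2
        (None, ["R1", "F4"]),    # SD   0(R1), F4
        ("R1", ["R1"]),          # SUBI R1, #8
        (None, ["R1"]),          # BNEZ R1, LOOP
    ]
    return body * iterations

print(ideal_ipc(loop_trace(3)), ideal_ipc(loop_trace(100)))
```

For n iterations the critical path is n + 2 cycles, set by the SUBI chain plus the last LD/ADDD/SD. IPC is therefore 5n/(n + 2), which approaches 5 for long runs even though the loop was never unrolled.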

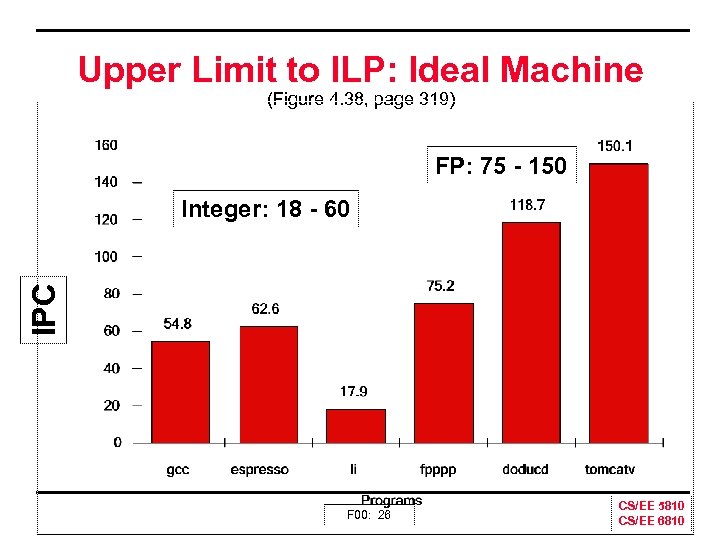

Upper Limit to ILP: Ideal Machine (Figure 4.38, page 319)

(Figure: IPC on the ideal machine; FP programs 75 to 150, integer programs 18 to 60)

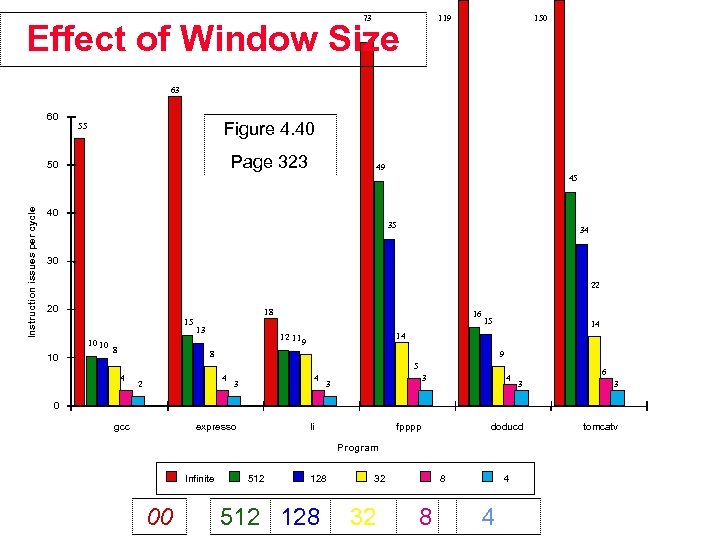

Effect of Window Size (Figure 4.40, page 323)

(Figure: instruction issues per cycle for gcc, espresso, li, fpppp, doduc and tomcatv, with window sizes ranging from infinite down to 4 instructions)

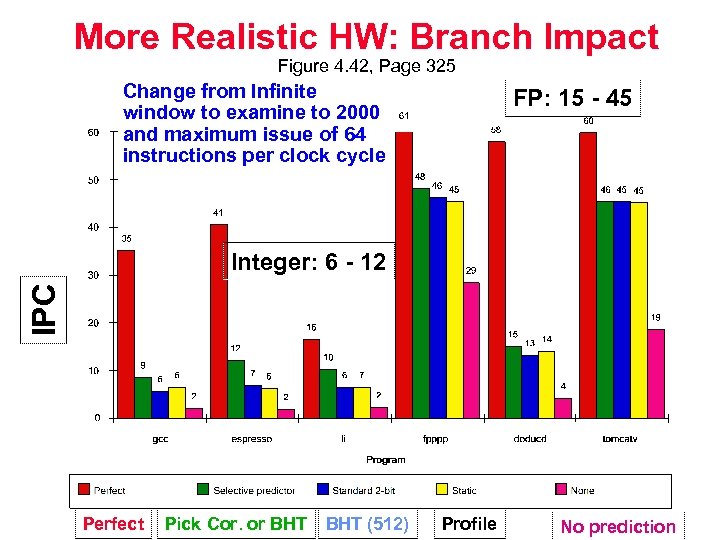

More Realistic HW: Branch Impact (Figure 4.42, page 325)

Change: from an infinite window to a 2000-instruction window to examine, and a maximum issue of 64 instructions per clock cycle.

(Figure: IPC per program for the branch predictors Perfect, Pick Cor. or BHT, BHT (512), Profile, and No prediction; FP: 15 to 45 IPC, Integer: 6 to 12 IPC)

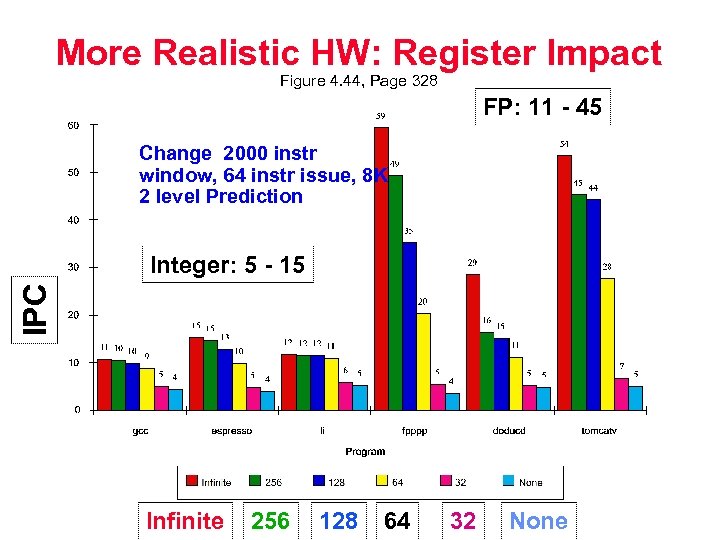

More Realistic HW: Register Impact (Figure 4.44, page 328)

Change: 2000-instruction window, 64-instruction issue, 8K 2-level prediction.

(Figure: IPC per program for Infinite, 256, 128, 64, 32, and no renaming registers; FP: 11 to 45 IPC, Integer: 5 to 15 IPC)

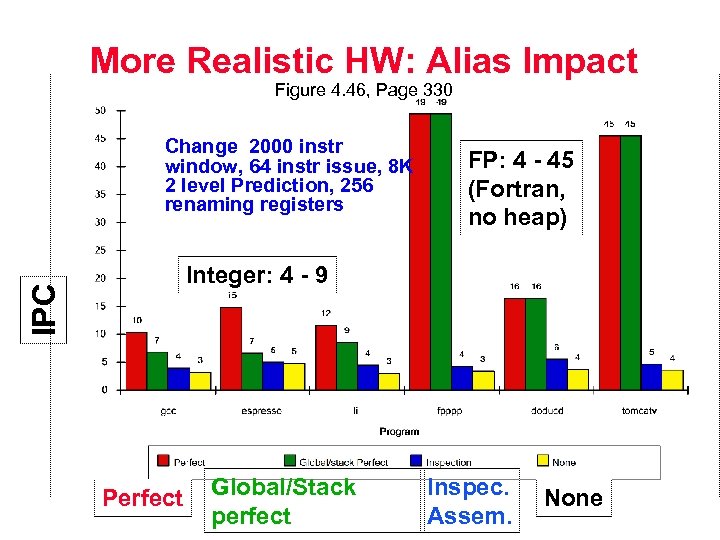

More Realistic HW: Alias Impact (Figure 4.46, page 330)

Change: 2000-instruction window, 64-instruction issue, 8K 2-level prediction, 256 renaming registers.

(Figure: IPC per program for alias analysis Perfect, Global/Stack perfect, Inspec. Assem., and None; FP: 4 to 45 IPC (Fortran, no heap), Integer: 4 to 9 IPC)

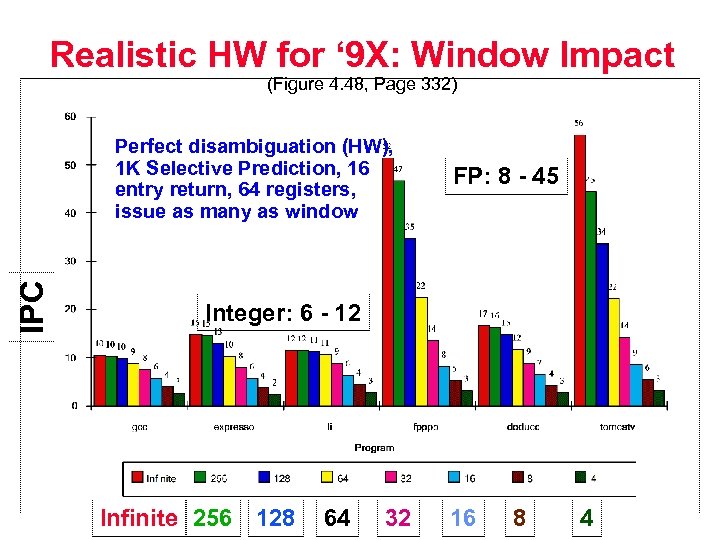

Realistic HW for ‘9X: Window Impact (Figure 4.48, page 332)

Perfect disambiguation (HW), 1K selective prediction, 16-entry return, 64 registers, issue as many as window.

(Figure: IPC per program for window sizes Infinite, 256, 128, 64, 32, 16, 8, 4; FP: 8 to 45 IPC, Integer: 6 to 12 IPC)

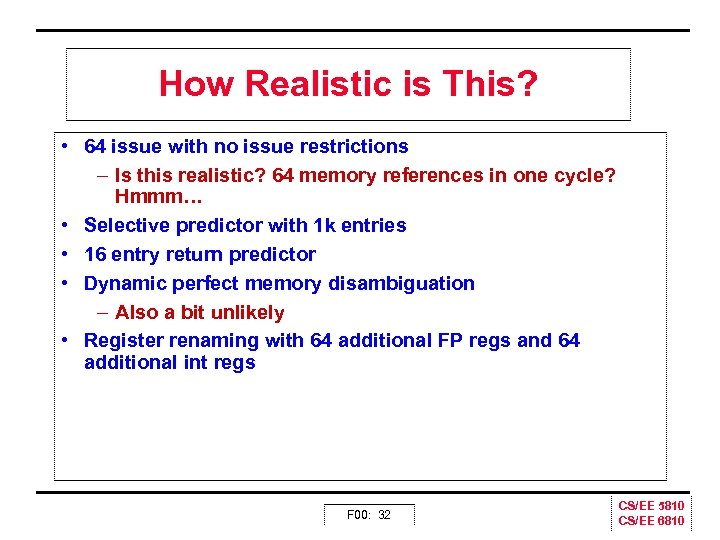

How Realistic is This?

- 64 issue with no issue restrictions
  - Is this realistic? 64 memory references in one cycle? Hmmm…
- Selective predictor with 1K entries
- 16-entry return predictor
- Dynamic perfect memory disambiguation
  - Also a bit unlikely
- Register renaming with 64 additional FP regs and 64 additional int regs

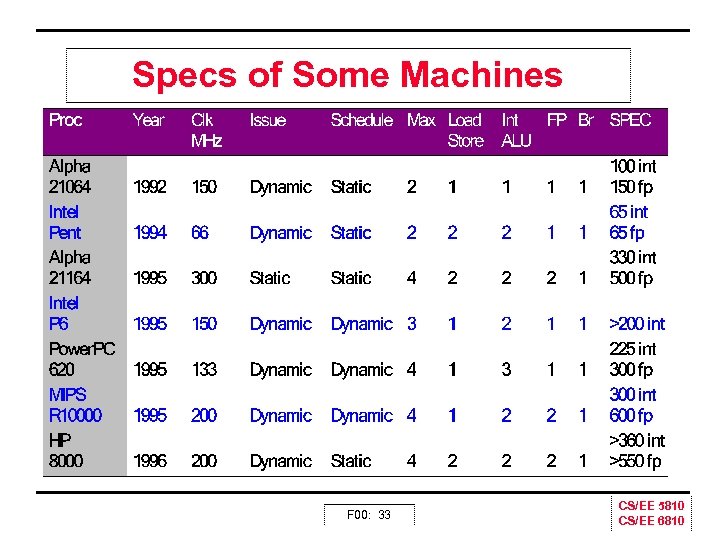

Specs of Some Machines

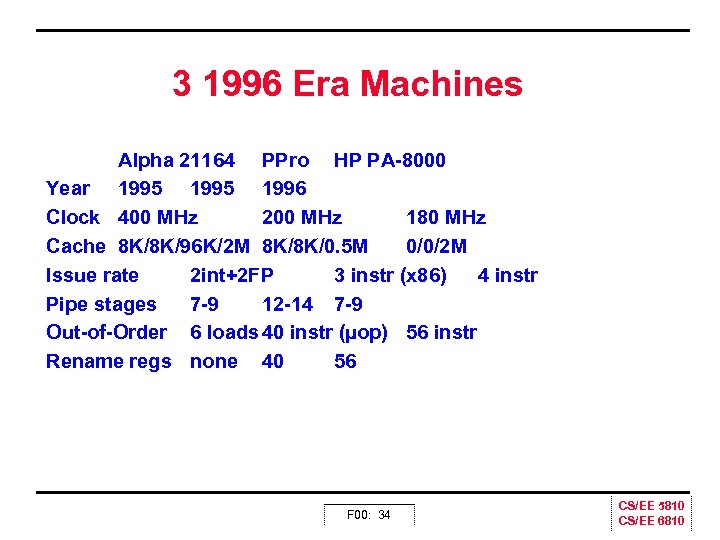

Three 1996-Era Machines

| | Alpha 21164 | PPro | HP PA-8000 |
|---|---|---|---|
| Year | 1995 | 1995 | 1996 |
| Clock | 400 MHz | 200 MHz | 180 MHz |
| Cache | 8K/8K/96K/2M | 8K/8K/0.5M | 0/0/2M |
| Issue rate | 2 int + 2 FP | 3 instr (x86) | 4 instr |
| Pipe stages | 7-9 | 12-14 | 7-9 |
| Out of order | 6 loads | 40 instr (µop) | 56 instr |
| Rename regs | none | 40 | 56 |

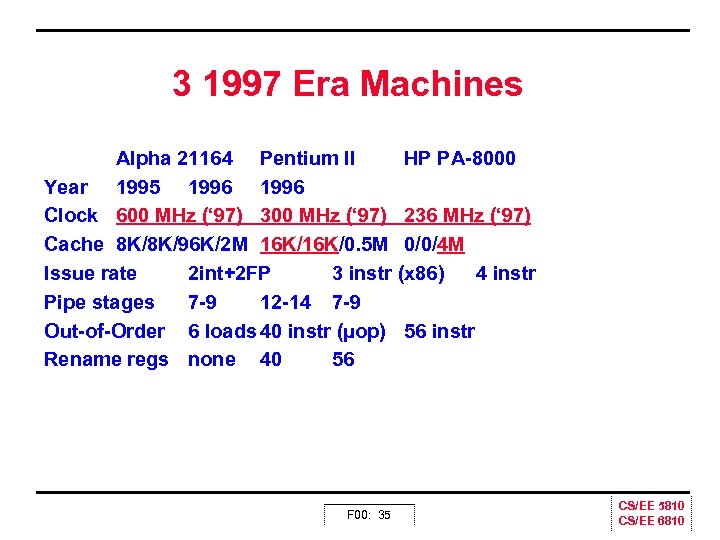

Three 1997-Era Machines

| | Alpha 21164 | Pentium II | HP PA-8000 |
|---|---|---|---|
| Year | 1995 | 1997 | 1996 |
| Clock | 600 MHz (‘97) | 300 MHz (‘97) | 236 MHz (‘97) |
| Cache | 8K/8K/96K/2M | 16K/0.5M | 0/0/4M |
| Issue rate | 2 int + 2 FP | 3 instr (x86) | 4 instr |
| Pipe stages | 7-9 | 12-14 | 7-9 |
| Out of order | 6 loads | 40 instr (µop) | 56 instr |
| Rename regs | none | 40 | 56 |

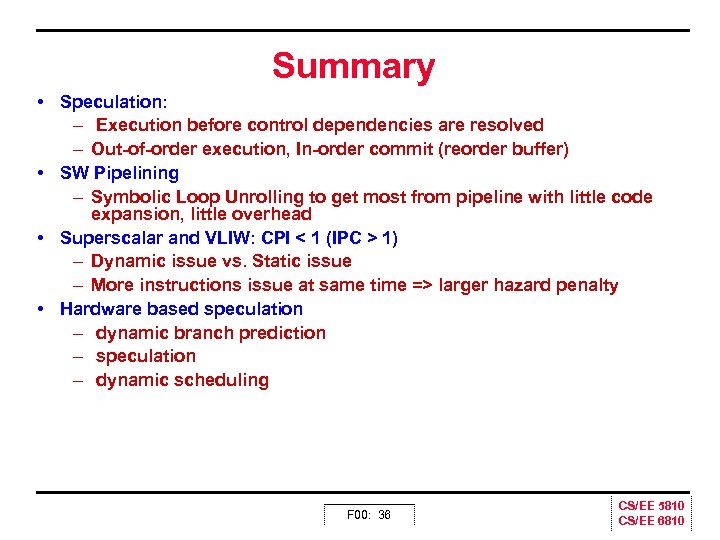

Summary

- Speculation:
  - Execution before control dependences are resolved
  - Out-of-order execution, in-order commit (reorder buffer)
- SW pipelining
  - Symbolic loop unrolling to get the most from the pipeline with little code expansion and little overhead
- Superscalar and VLIW: CPI < 1 (IPC > 1)
  - Dynamic issue vs. static issue
  - More instructions issuing at the same time => larger hazard penalty
- Hardware-based speculation
  - Dynamic branch prediction
  - Speculation
  - Dynamic scheduling
