
- Number of slides: 28

Evolution of the Italian Tier 1 (INFN-T1). Umeå, May 2009. Felice.Rosso@cnaf.infn.it

The Italian Tier 1 project was born in 2001 at CNAF in Bologna. The first computers were based on Intel Pentium III processors at 800 MHz, with 512 MB of RAM, 20 GB of local storage, and a 100 Mbit/s network interface.

The original infrastructure had no redundancy: 1 chiller, 1 electrical power line, 1 static UPS (based on chemical batteries), 1 diesel motor generator, and no remote control. The maximum usable electric power was 1200 kVA, which left at most 400 kVA for computers. This situation was not compatible with the requests of the HEP experiments, so we decided to rebuild everything.

Work on the new mainframe rooms and the new power station started in 2008. Today all work is finished and everything is up and running. We are on time, and the original schedule was fully respected. The new infrastructure has total redundancy and total remote control.

The new infrastructure is based on 4 rooms:

1) Chiller cluster (5 + 1)
2) 2 mainframe rooms + power panel room
3) 1 power station
4) 2 rotary UPS and 1 diesel motor generator

- Chiller cluster: 350 kW cooled per chiller. At best efficiency (12 °C in, 7 °C out) the ratio is 2:1, that is, 50 kW are needed to cool 100 kW.
- Mainframe rooms: more than 100 (48 + 70) APC NetShelter SX 42U racks, 600 mm wide x 1070 mm deep enclosures.
- 15 kV power station: 3 transformers (2 + 1 redundancy, 2500 kVA each).
- Rotary UPS: 2 x 1700 kVA.
- 2 electrical lines (red and green, 230 V per phase, 3 phases + ground).
- Diesel motor generator: 1250 kVA (60,000 cc).
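The cooling figures above can be checked with a few lines of arithmetic. This is only a sketch: the function and constant names are invented for illustration, and "(5 + 1)" is read here as 5 active chillers plus 1 spare, which is an assumption rather than something the slide states.

```python
# Figures quoted on the slide; all names below are illustrative only.
CHILLERS_ACTIVE = 5          # assumption: "(5 + 1)" means 5 active + 1 spare
KW_COOLED_PER_CHILLER = 350  # heat removed per chiller, in kW
COOLING_RATIO = 2.0          # 2:1 -> 1 kW of chiller power removes 2 kW of heat


def chiller_power_kw(heat_load_kw, ratio=COOLING_RATIO):
    """Electrical power needed to remove a given heat load at best efficiency."""
    return heat_load_kw / ratio


def cooling_capacity_kw(active=CHILLERS_ACTIVE, per_chiller=KW_COOLED_PER_CHILLER):
    """Total heat load the active chillers can remove."""
    return active * per_chiller


print(chiller_power_kw(100))   # the slide's example: 100 kW of load
print(cooling_capacity_kw())   # capacity with the spare chiller left idle
```

With these assumptions, cooling 100 kW costs 50 kW, matching the slide, and the five active chillers together remove 1750 kW.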

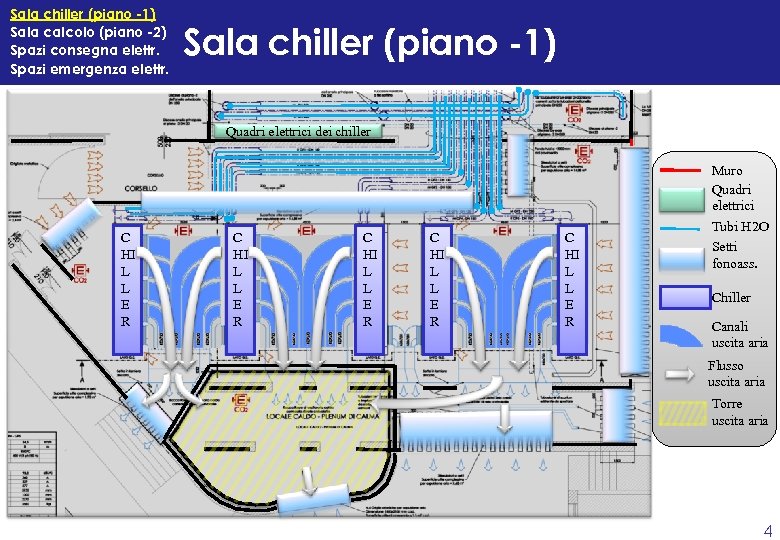

Site plan: chiller room (floor -1), computing room (floor -2), electrical delivery area, emergency power area.

Chiller room (floor -1). Diagram labels: chiller electrical panels, wall, electrical panels, chillers, water (H2O) pipes, sound-absorbing baffles, air outlet ducts, outgoing air flow, air outlet tower.

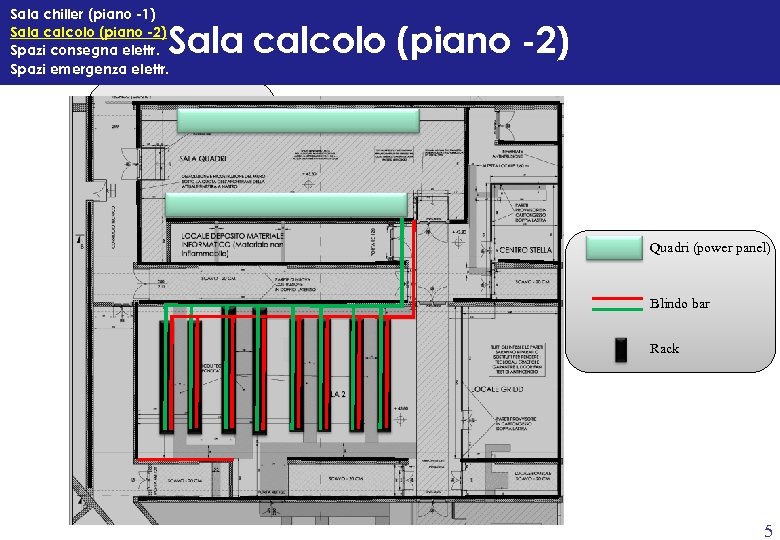

Computing room (floor -2). Diagram labels: power panels, busbar (Blindo bar), racks.

Electrical delivery area. Diagram labels: delivery cabin, 15 kV cable corridor, transformer room, electrical panels, ENEL, transformers 1 TR, 2 TR and 3 TR, electrical testing.

Emergency power area. Diagram labels: 2 diesel oil tanks, 2 rotary UPS units.

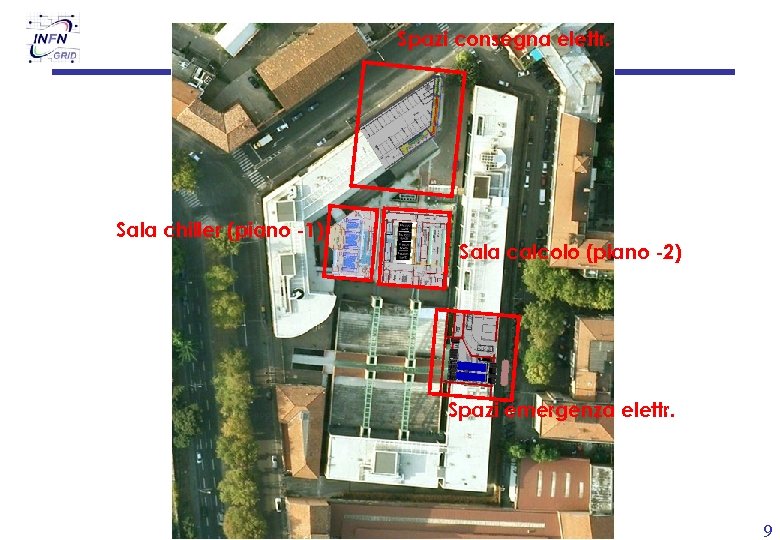

Site plan (two views): electrical delivery area, chiller room (floor -1), computing room (floor -2), emergency power area.

Photo: water (H2O) plant

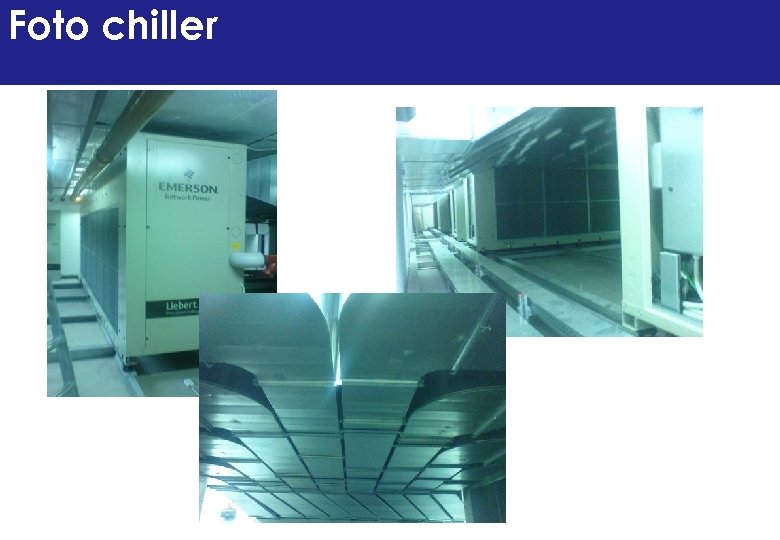

Photo: chillers

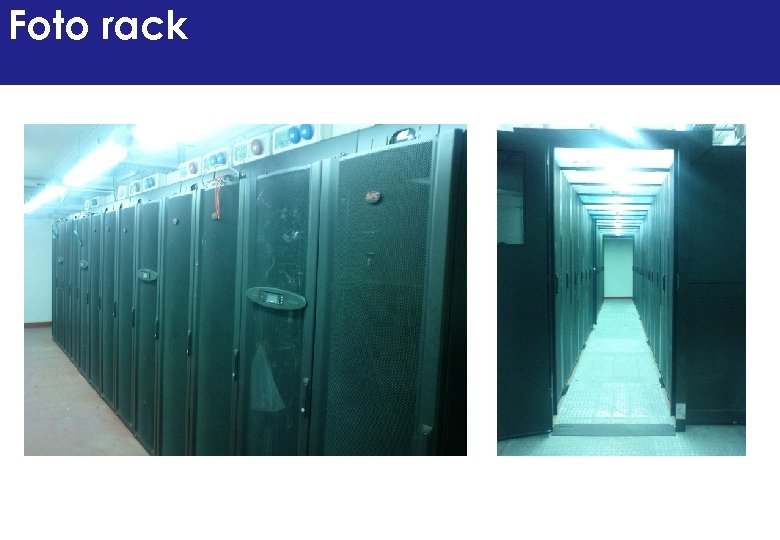

Photo: racks

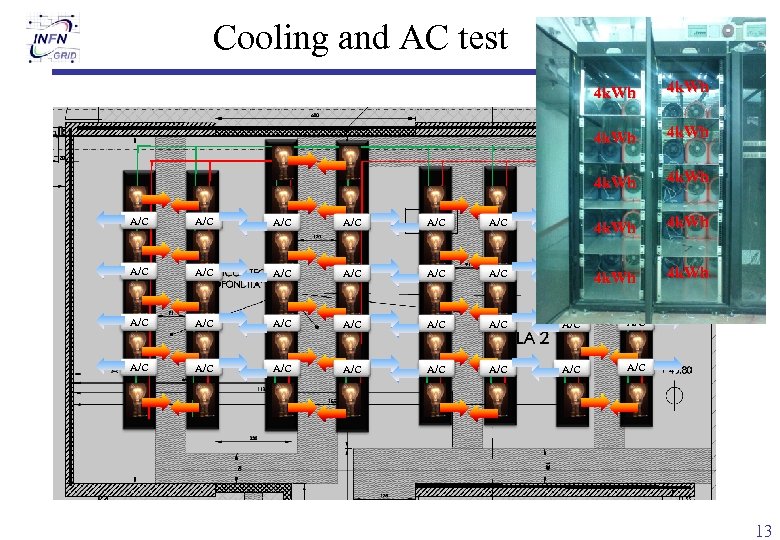

Cooling and A/C test. Diagram labels: 4 kWh (x2), A/C (x8).

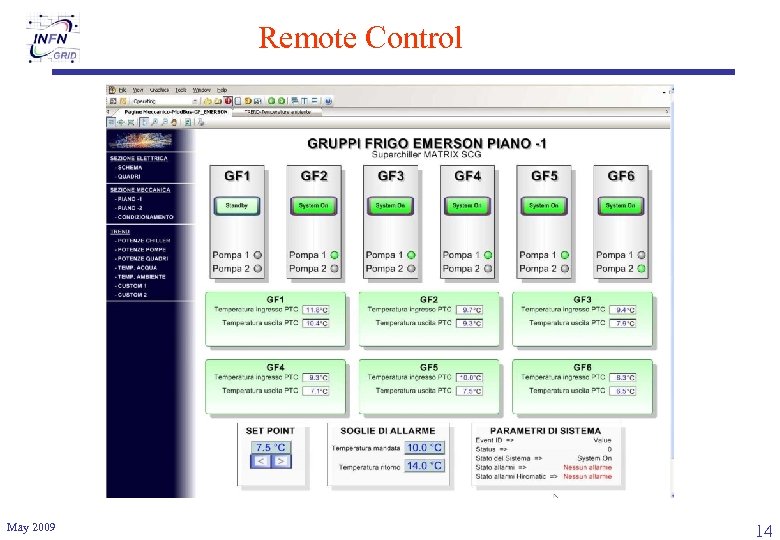

Remote Control

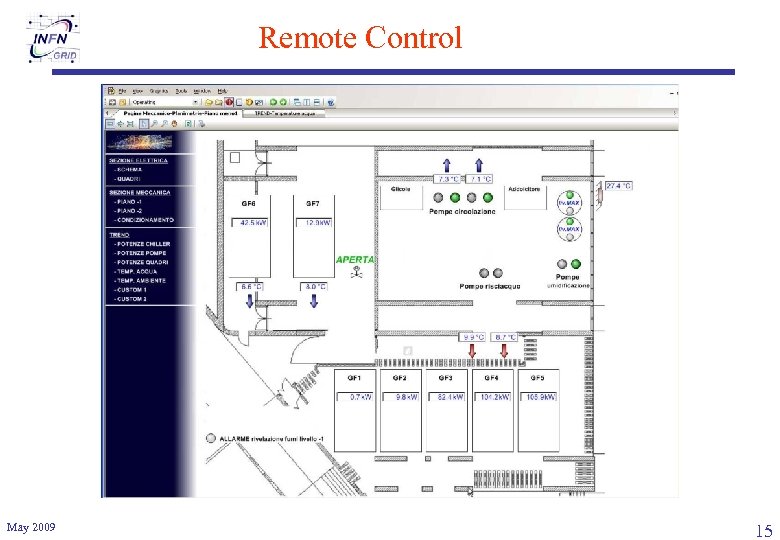

Remote Control

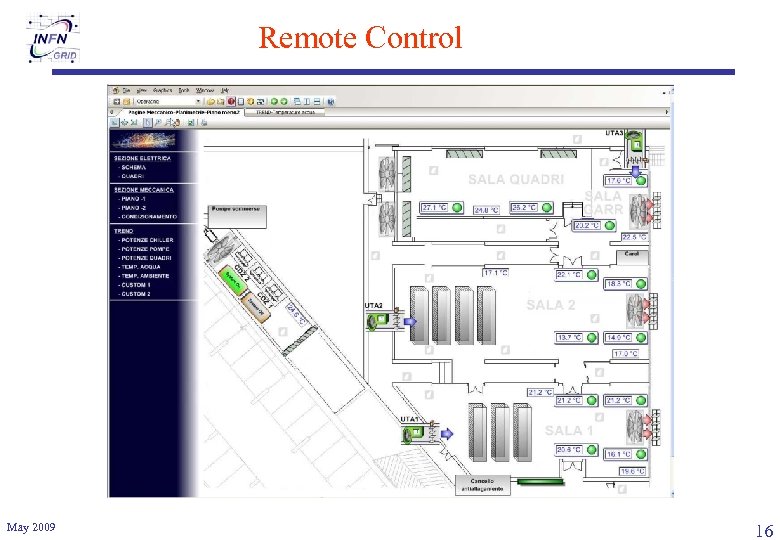

Remote Control

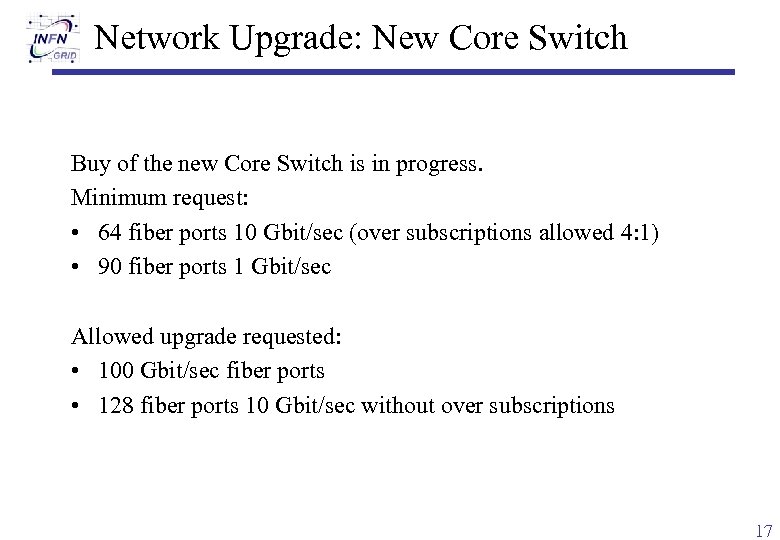

Network Upgrade: New Core Switch

The purchase of the new core switch is in progress.

Minimum requirements:
- 64 fiber ports at 10 Gbit/s (4:1 oversubscription allowed)
- 90 fiber ports at 1 Gbit/s

Upgrade options requested:
- 100 Gbit/s fiber ports
- 128 fiber ports at 10 Gbit/s without oversubscription
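To make the oversubscription figures concrete, the sketch below computes the switching capacity implied by each requirement. It assumes the usual reading of an N:1 oversubscription ratio (port-facing bandwidth divided by the capacity actually available behind the ports). The function names are illustrative and do not come from the tender.

```python
def aggregate_gbps(ports, speed_gbps):
    """Sum of the nominal port speeds."""
    return ports * speed_gbps


def guaranteed_gbps(ports, speed_gbps, oversubscription=1):
    """Capacity that must be available when an N:1 oversubscription is allowed."""
    return aggregate_gbps(ports, speed_gbps) / oversubscription


# Minimum request: 64 ports at 10 Gbit/s, 4:1 oversubscription allowed.
minimum = guaranteed_gbps(64, 10, oversubscription=4)

# Requested upgrade: 128 ports at 10 Gbit/s, no oversubscription.
upgrade = guaranteed_gbps(128, 10)

print(minimum, upgrade)
```

Under this reading the minimum configuration needs 160 Gbit/s behind its 640 Gbit/s of 10 Gbit/s ports, while the upgrade needs the full 1280 Gbit/s.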

INFN-T1 WAN connection today
- INFN-T1 to CERN (T0): 10 Gbit/s
- INFN-T1 to FZK (T1): 10 Gbit/s [backup of the INFN-T1 to CERN link] + IN2P3 + SARA link
- INFN-T1 general purpose: 10 Gbit/s

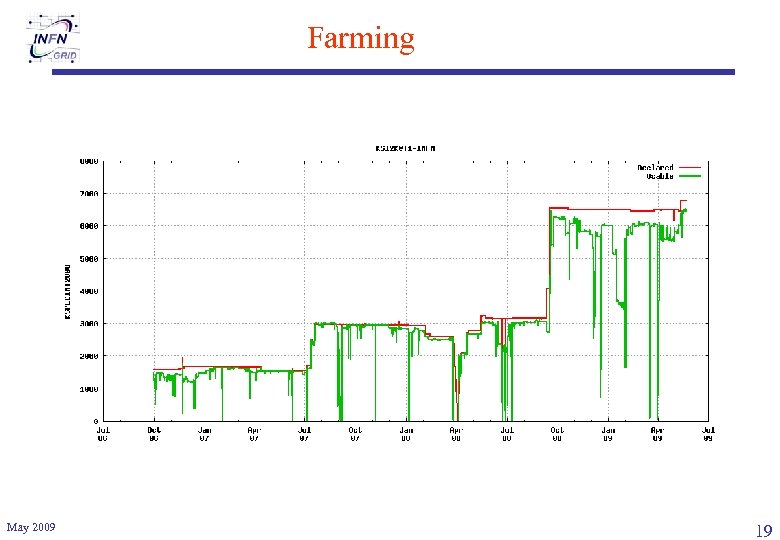

Farming

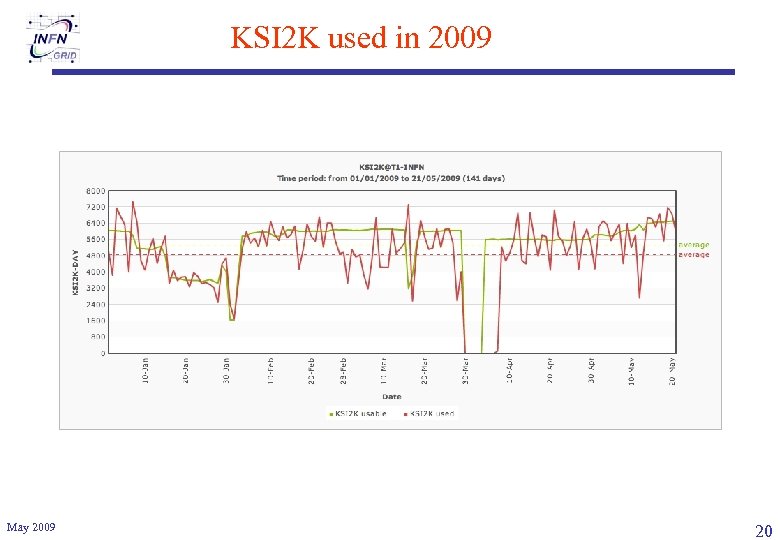

KSI2K used in 2009

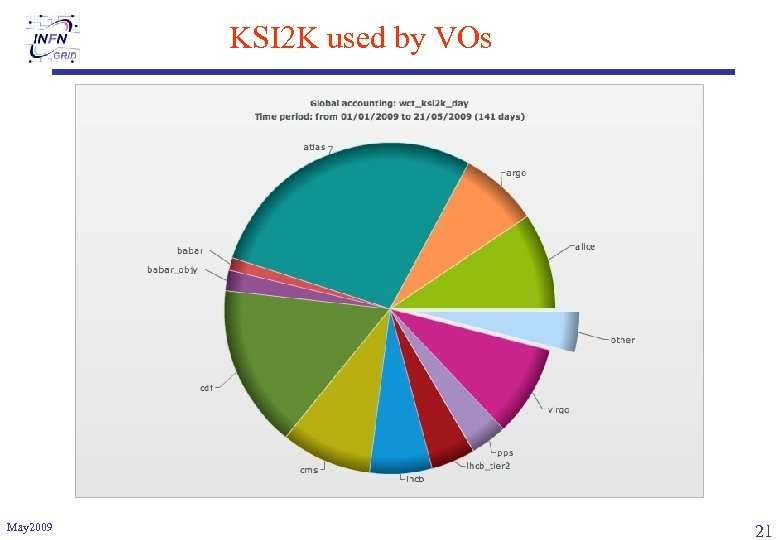

KSI2K used by VOs

Farming upgrade
- Q4/2009: +20,000 HEP-SPEC installed
- No more FSB technology (Nehalem and Shanghai only)
- Option to buy directly in 2010 (less bureaucracy)
- Remote console
- ~50% for LHC experiments
- We support more than 20 experiments/collaborations

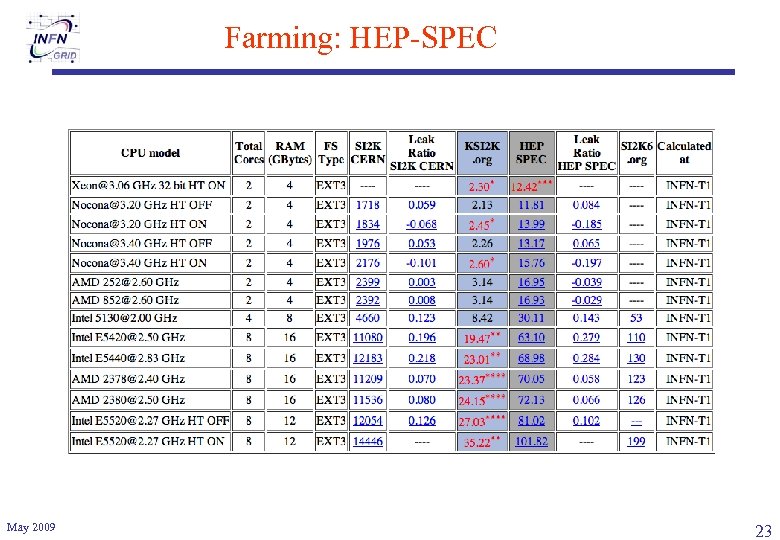

Farming: HEP-SPEC

Disk Storage Systems
- All systems interconnected in a SAN
  - 12 FC switches (2 core switches) with 2/4 Gbit/s connections
  - ~200 disk servers
  - ~2.6 PB raw (~2.1 PB net) of disk space
- 13 EMC/DELL CLARiiON CX3-80 systems (SATA disks) interconnected to the SAN
  - 1 dedicated to databases (FC disks)
  - ~0.8 GB/s bandwidth
  - 150-200 TB each
  - 12 disk servers (2 x 1 Gbit/s uplinks + 2 FC4 connections), part configured as GridFTP servers (if needed), 64-bit OS (see next slide)
- 1 CX4-960 (SATA disks)
  - ~2 GB/s bandwidth
  - 600 TB
- Other older hardware (FlexLine, FAStT, etc.) being progressively phased out
  - No support (part used as cold spares)
  - Not suitable for GPFS

Tape libraries
- New SUN SL8500 in production since July '08
  - 10000 slots
  - 20 T10KB drives (1 TB) in place
  - 8 T10KA (0.5 TB) drives still in place
  - 4000 tapes on line (4 PB)
- "Old" SUN SL5500
  - 1 PB on line, 9 9940B and 5 LTO drives
  - Nearly full
  - No longer used for writing
- Repack ongoing
  - ~5k 200 GB tapes to be repacked
  - ~500 GB tapes
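The tape figures are mutually consistent, as a quick calculation shows. The sketch below uses decimal units (1 PB = 1,000,000 GB). The "full library" figure assumes every slot holds a 1 TB cartridge, which is an illustrative upper bound and not a number from the slide.

```python
GB_PER_PB = 1_000_000  # decimal units


def capacity_pb(tapes, gb_per_tape):
    """Total capacity of a set of identical tapes, in PB."""
    return tapes * gb_per_tape / GB_PER_PB


online_sl8500 = capacity_pb(4000, 1000)    # 4000 tapes of 1 TB on line
full_sl8500 = capacity_pb(10000, 1000)     # assumption: all slots filled with 1 TB tapes
to_repack = capacity_pb(5000, 200)         # ~5k tapes of 200 GB awaiting repack

print(online_sl8500, full_sl8500, to_repack)
```

The 4000 on-line tapes give the 4 PB quoted on the slide, and the roughly 5000 tapes of 200 GB awaiting repack hold about 1 PB, in line with the 1 PB on line in the old SL5500.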

How experiments use the storage
- Experiments present at CNAF make different use of (storage) resources
  - Some use almost only the disk storage (e.g. CDF, BABAR)
  - Some also use the tape system as an archive for older data (e.g. VIRGO)
  - The LHC experiments exploit the functionalities of the HSM system, but in different ways
    - CMS and ALICE use primarily the disk with tape back-end, while ATLAS and LHCb concentrate their activity on the disk-only storage (see next slide for details)
- Standardization over few storage systems and protocols
  - SRM vs. direct access
  - file, rfio as LAN protocols
  - GridFTP as WAN protocol
  - Some other protocols used but not supported (xrootd, bbftp)

STORAGE CLASSES
- 3 classes of service/quality (aka Storage Classes) defined in WLCG
- Present implementation at CNAF of the 3 SCs
  - Disk1 Tape0 (D1T0 or online-replica): GPFS/StoRM
    - Space managed by VO
    - Mainly LHCb, ATLAS, some usage from CMS and ALICE
  - Disk1 Tape1 (D1T1 or online-custodial): GPFS/TSM/StoRM
    - Space managed by VO (i.e. if disk is full, copy fails)
    - Large disk buffer with tape back end and no garbage collector
    - LHCb only
  - Disk0 Tape1 (D0T1 or nearline-replica): CASTOR
    - Space managed by system
    - Data migrated to tapes and deleted from disk when staging area is full
    - CMS, LHCb, ATLAS, ALICE
    - Testing GPFS/TSM/StoRM

This setup satisfies nearly all WLCG requirements (so far), except for:
- Multiple copies in different Storage Areas for a SURL
- Name space orthogonality
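The three storage classes can be summarised as a small lookup table. The sketch below is purely illustrative: the `StorageClass` type and the helper functions are invented names, and the labels, back ends and experiment lists are copied from the slide as given.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StorageClass:
    name: str              # e.g. "D1T0": digits give disk and tape copies
    label: str             # label used on the slide
    backend: str           # software stack at CNAF
    space_managed_by: str  # "VO" or "system"
    experiments: tuple     # LHC experiments using the class


STORAGE_CLASSES = (
    StorageClass("D1T0", "online-replica", "GPFS/StoRM", "VO",
                 ("LHCb", "ATLAS", "CMS", "ALICE")),
    StorageClass("D1T1", "online-custodial", "GPFS/TSM/StoRM", "VO",
                 ("LHCb",)),
    StorageClass("D0T1", "nearline-replica", "CASTOR", "system",
                 ("CMS", "LHCb", "ATLAS", "ALICE")),
)


def disk_copies(sc):
    """Number of disk copies encoded in the class name (the digit after 'D')."""
    return int(sc.name[1])


def tape_copies(sc):
    """Number of tape copies encoded in the class name (the digit after 'T')."""
    return int(sc.name[3])


def classes_for(experiment):
    """Names of the storage classes an experiment uses, in slide order."""
    return [sc.name for sc in STORAGE_CLASSES if experiment in sc.experiments]


print(classes_for("LHCb"))
print(classes_for("CMS"))
```

Keeping the disk and tape copy counts derivable from the class name avoids storing the same fact twice in the table.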

Questions?